copying

- README.md +61 -0

- config.json +175 -0

- merges.txt +0 -0

- preprocessor_config.json +19 -0

- pytorch_model.bin +3 -0

- special_tokens_map.json +24 -0

- tokenizer.json +0 -0

- tokenizer_config.json +35 -0

- vocab.json +0 -0

README.md

ADDED

|

@@ -0,0 +1,61 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

language: en

|

| 3 |

+

license: mit

|

| 4 |

+

tags:

|

| 5 |

+

- vision

|

| 6 |

+

- video-classification

|

| 7 |

+

model-index:

|

| 8 |

+

- name: nielsr/xclip-base-patch32

|

| 9 |

+

results:

|

| 10 |

+

- task:

|

| 11 |

+

type: video-classification

|

| 12 |

+

dataset:

|

| 13 |

+

name: Kinetics 400

|

| 14 |

+

type: kinetics-400

|

| 15 |

+

metrics:

|

| 16 |

+

- type: top-1 accuracy

|

| 17 |

+

value: 80.4

|

| 18 |

+

- type: top-5 accuracy

|

| 19 |

+

value: 95.0

|

| 20 |

+

---

|

| 21 |

+

|

| 22 |

+

# X-CLIP (base-sized model)

|

| 23 |

+

|

| 24 |

+

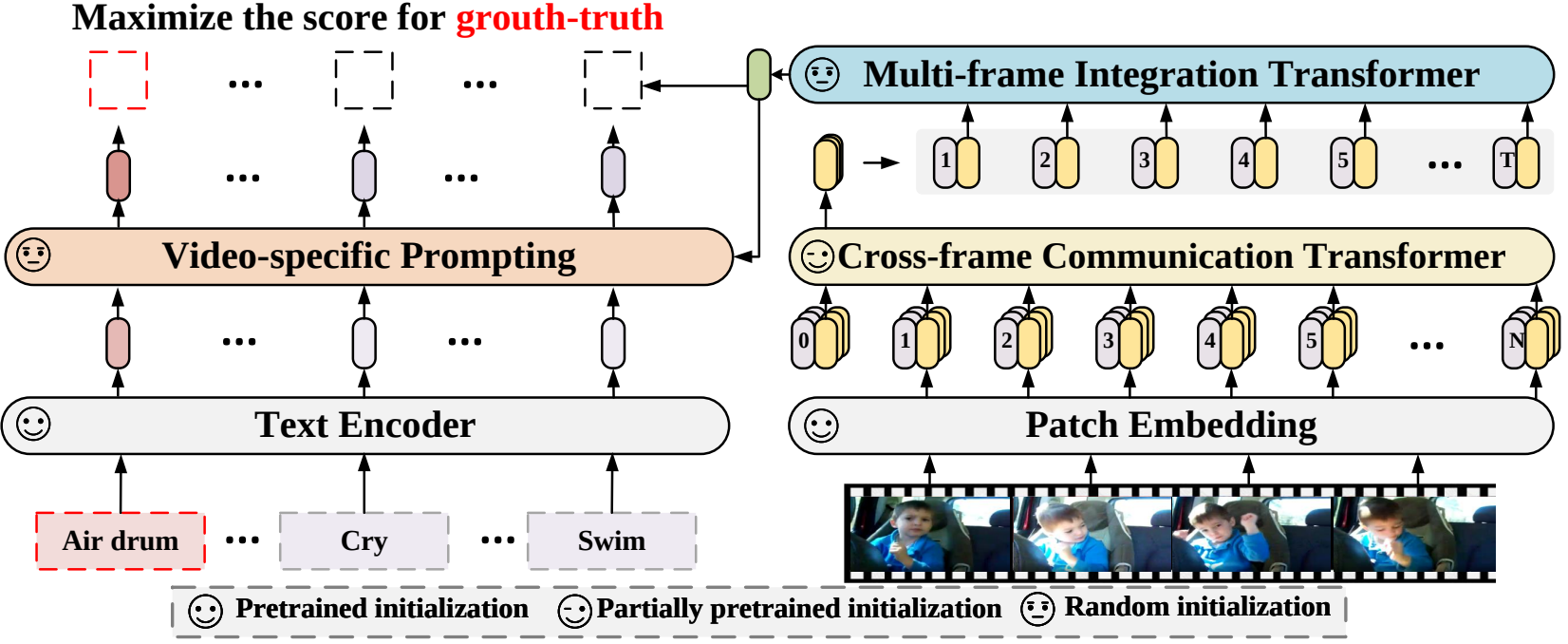

X-CLIP model (base-sized, patch resolution of 32) trained fully-supervised on [Kinetics-400](https://www.deepmind.com/open-source/kinetics). It was introduced in the paper [Expanding Language-Image Pretrained Models for General Video Recognition](https://arxiv.org/abs/2208.02816) by Ni et al. and first released in [this repository](https://github.com/microsoft/VideoX/tree/master/X-CLIP).

|

| 25 |

+

|

| 26 |

+

This model was trained using 8 frames per video, at a resolution of 224x224.

|

| 27 |

+

|

| 28 |

+

Disclaimer: The team releasing X-CLIP did not write a model card for this model so this model card has been written by the Hugging Face team.

|

| 29 |

+

|

| 30 |

+

## Model description

|

| 31 |

+

|

| 32 |

+

X-CLIP is a minimal extension of [CLIP](https://huggingface.co/docs/transformers/model_doc/clip) for general video-language understanding. The model is trained in a contrastive way on (video, text) pairs.

|

| 33 |

+

|

| 34 |

+

|

| 35 |

+

|

| 36 |

+

This allows the model to be used for tasks like zero-shot, few-shot or fully supervised video classification and video-text retrieval.

|

| 37 |

+

|

| 38 |

+

## Intended uses & limitations

|

| 39 |

+

|

| 40 |

+

You can use the raw model for determining how well text goes with a given video. See the [model hub](https://huggingface.co/models?search=microsoft/xclip) to look for

|

| 41 |

+

fine-tuned versions on a task that interests you.

|

| 42 |

+

|

| 43 |

+

### How to use

|

| 44 |

+

|

| 45 |

+

For code examples, we refer to the [documentation](https://huggingface.co/transformers/main/model_doc/xclip.html#).

|

| 46 |

+

|

| 47 |

+

## Training data

|

| 48 |

+

|

| 49 |

+

This model was trained on [Kinetics-400](https://www.deepmind.com/open-source/kinetics).

|

| 50 |

+

|

| 51 |

+

### Preprocessing

|

| 52 |

+

|

| 53 |

+

The exact details of preprocessing during training can be found [here](https://github.com/microsoft/VideoX/blob/40f6d177e0a057a50ac69ac1de6b5938fd268601/X-CLIP/datasets/build.py#L247).

|

| 54 |

+

|

| 55 |

+

The exact details of preprocessing during validation can be found [here](https://github.com/microsoft/VideoX/blob/40f6d177e0a057a50ac69ac1de6b5938fd268601/X-CLIP/datasets/build.py#L285).

|

| 56 |

+

|

| 57 |

+

During validation, one resizes the shorter edge of each frame, after which center cropping is performed to a fixed-size resolution (like 224x224). Next, frames are normalized across the RGB channels with the ImageNet mean and standard deviation.

|

| 58 |

+

|

| 59 |

+

## Evaluation results

|

| 60 |

+

|

| 61 |

+

This model achieves a top-1 accuracy of 80.4% and a top-5 accuracy of 95.0%.

|
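The README above says the model is trained contrastively on (video, text) pairs and can be used to judge how well a text goes with a video. The scoring step behind that idea can be sketched in plain Python. This is an illustration only, not the library's API: the function names `cosine` and `match_probabilities`, the toy 3-dimensional embeddings, and the labels are all hypothetical, and the temperature follows the CLIP convention of exponentiating the `logit_scale_init_value` found in this commit's `config.json` (a trained checkpoint will hold a different learned value).

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def match_probabilities(video_emb, text_embs, logit_scale=1.0):
    """Softmax over temperature-scaled cosine similarities."""
    logits = [logit_scale * cosine(video_emb, t) for t in text_embs]
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Toy embeddings (hypothetical); a real model would produce 512-dim vectors.
video = [0.9, 0.1, 0.0]
texts = {
    "playing guitar": [1.0, 0.0, 0.0],
    "swimming": [0.0, 1.0, 0.0],
    "cooking": [0.0, 0.0, 1.0],
}

# Assumption: CLIP-style temperature, exp(logit_scale_init_value).
scale = math.exp(2.6592)
probs = match_probabilities(video, list(texts.values()), logit_scale=scale)
best_label = max(zip(texts, probs), key=lambda pair: pair[1])[0]
print(best_label, [round(p, 4) for p in probs])
```

In zero-shot classification the candidate texts would be the class names, and the label with the highest probability is taken as the prediction.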

config.json

ADDED

@@ -0,0 +1,175 @@
+{
+  "_commit_hash": null,
+  "architectures": [
+    "XClipModel"
+  ],
+  "initializer_factor": 1.0,
+  "logit_scale_init_value": 2.6592,
+  "model_type": "xclip",
+  "projection_dim": 512,
+  "prompt_alpha": 0.1,
+  "prompt_attention_dropout": 0.0,
+  "prompt_hidden_act": "quick_gelu",
+  "prompt_layers": 2,
+  "prompt_num_attention_heads": 8,
+  "prompt_projection_dropout": 0.0,
+  "text_config": {
+    "_name_or_path": "",
+    "add_cross_attention": false,
+    "architectures": null,
+    "attention_dropout": 0.0,
+    "bad_words_ids": null,
+    "bos_token_id": 0,
+    "chunk_size_feed_forward": 0,
+    "cross_attention_hidden_size": null,
+    "decoder_start_token_id": null,
+    "diversity_penalty": 0.0,
+    "do_sample": false,
+    "dropout": 0.0,
+    "early_stopping": false,
+    "encoder_no_repeat_ngram_size": 0,
+    "eos_token_id": 2,
+    "exponential_decay_length_penalty": null,
+    "finetuning_task": null,
+    "forced_bos_token_id": null,
+    "forced_eos_token_id": null,
+    "hidden_act": "quick_gelu",
+    "hidden_size": 512,
+    "id2label": {
+      "0": "LABEL_0",
+      "1": "LABEL_1"
+    },
+    "initializer_factor": 1.0,
+    "initializer_range": 0.02,
+    "intermediate_size": 2048,
+    "is_decoder": false,
+    "is_encoder_decoder": false,
+    "label2id": {
+      "LABEL_0": 0,
+      "LABEL_1": 1
+    },
+    "layer_norm_eps": 1e-05,
+    "length_penalty": 1.0,
+    "max_length": 20,
+    "max_position_embeddings": 77,
+    "min_length": 0,
+    "model_type": "xclip_text_model",
+    "no_repeat_ngram_size": 0,
+    "num_attention_heads": 8,
+    "num_beam_groups": 1,
+    "num_beams": 1,
+    "num_hidden_layers": 12,
+    "num_return_sequences": 1,
+    "output_attentions": false,
+    "output_hidden_states": false,
+    "output_scores": false,
+    "pad_token_id": 1,
+    "prefix": null,
+    "problem_type": null,
+    "pruned_heads": {},
+    "remove_invalid_values": false,
+    "repetition_penalty": 1.0,
+    "return_dict": true,
+    "return_dict_in_generate": false,
+    "sep_token_id": null,
+    "task_specific_params": null,
+    "temperature": 1.0,
+    "tf_legacy_loss": false,
+    "tie_encoder_decoder": false,
+    "tie_word_embeddings": true,
+    "tokenizer_class": null,
+    "top_k": 50,
+    "top_p": 1.0,
+    "torch_dtype": null,
+    "torchscript": false,
+    "transformers_version": "4.22.0.dev0",
+    "typical_p": 1.0,
+    "use_bfloat16": false,
+    "vocab_size": 49408
+  },
+  "text_config_dict": null,
+  "torch_dtype": "float32",
+  "transformers_version": null,
+  "vision_config": {
+    "_name_or_path": "",
+    "add_cross_attention": false,
+    "architectures": null,
+    "attention_dropout": 0.0,
+    "bad_words_ids": null,
+    "bos_token_id": null,
+    "chunk_size_feed_forward": 0,
+    "cross_attention_hidden_size": null,
+    "decoder_start_token_id": null,
+    "diversity_penalty": 0.0,
+    "do_sample": false,
+    "drop_path_rate": 0.0,
+    "dropout": 0.0,
+    "early_stopping": false,
+    "encoder_no_repeat_ngram_size": 0,
+    "eos_token_id": null,
+    "exponential_decay_length_penalty": null,
+    "finetuning_task": null,
+    "forced_bos_token_id": null,
+    "forced_eos_token_id": null,
+    "hidden_act": "quick_gelu",
+    "hidden_size": 768,
+    "id2label": {
+      "0": "LABEL_0",
+      "1": "LABEL_1"
+    },
+    "image_size": 224,
+    "initializer_factor": 1.0,
+    "initializer_range": 0.02,
+    "intermediate_size": 3072,
+    "is_decoder": false,
+    "is_encoder_decoder": false,
+    "label2id": {
+      "LABEL_0": 0,
+      "LABEL_1": 1
+    },
+    "layer_norm_eps": 1e-05,
+    "length_penalty": 1.0,
+    "max_length": 20,
+    "min_length": 0,
+    "mit_hidden_size": 512,
+    "mit_intermediate_size": 2048,
+    "mit_num_attention_heads": 8,
+    "mit_num_hidden_layers": 1,
+    "model_type": "xclip_vision_model",
+    "no_repeat_ngram_size": 0,
+    "num_attention_heads": 12,
+    "num_beam_groups": 1,
+    "num_beams": 1,
+    "num_channels": 3,
+    "num_frames": 8,
+    "num_hidden_layers": 12,
+    "num_return_sequences": 1,
+    "output_attentions": false,
+    "output_hidden_states": false,
+    "output_scores": false,
+    "pad_token_id": null,
+    "patch_size": 32,
+    "prefix": null,
+    "problem_type": null,
+    "pruned_heads": {},
+    "remove_invalid_values": false,
+    "repetition_penalty": 1.0,
+    "return_dict": true,
+    "return_dict_in_generate": false,
+    "sep_token_id": null,
+    "task_specific_params": null,
+    "temperature": 1.0,
+    "tf_legacy_loss": false,
+    "tie_encoder_decoder": false,
+    "tie_word_embeddings": true,
+    "tokenizer_class": null,
+    "top_k": 50,
+    "top_p": 1.0,
+    "torch_dtype": null,
+    "torchscript": false,
+    "transformers_version": "4.22.0.dev0",
+    "typical_p": 1.0,
+    "use_bfloat16": false
+  },
+  "vision_config_dict": null
+}
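A few of the values in `config.json` determine shapes that are easier to see once worked out. The sketch below copies a handful of keys from the file into an inline JSON string (so it runs without the file) and derives the patch grid, the per-head width, and the starting temperature. The variable names are illustrative, and treating the temperature as `exp(logit_scale_init_value)` is an assumption carried over from CLIP.

```python
import json
import math

# Subset of keys copied from config.json above; everything else is omitted.
config = json.loads("""
{
  "logit_scale_init_value": 2.6592,
  "projection_dim": 512,
  "text_config": {
    "hidden_size": 512,
    "num_attention_heads": 8,
    "max_position_embeddings": 77
  },
  "vision_config": {
    "hidden_size": 768,
    "num_attention_heads": 12,
    "image_size": 224,
    "patch_size": 32,
    "num_frames": 8
  }
}
""")

vision = config["vision_config"]
text = config["text_config"]

# 224 / 32 = 7 patches along each side, so 7 * 7 = 49 patches per frame.
patches_per_side = vision["image_size"] // vision["patch_size"]
patches_per_frame = patches_per_side ** 2

# 8 frames per clip gives 49 * 8 = 392 patches per video.
patches_per_clip = patches_per_frame * vision["num_frames"]

# Width of each attention head in the two encoders.
vision_head_dim = vision["hidden_size"] // vision["num_attention_heads"]
text_head_dim = text["hidden_size"] // text["num_attention_heads"]

# Assumption: CLIP-style temperature at initialization (roughly 1 / 0.07).
initial_logit_scale = math.exp(config["logit_scale_init_value"])

print(patches_per_frame, patches_per_clip, vision_head_dim, text_head_dim)
print(round(initial_logit_scale, 2))
```

Both encoders end up with 64-dimensional attention heads even though their hidden sizes differ (768 for vision, 512 for text).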

merges.txt

ADDED

The diff for this file is too large to render.

preprocessor_config.json

ADDED

@@ -0,0 +1,19 @@
+{
+  "do_center_crop": true,
+  "do_normalize": true,
+  "do_resize": true,
+  "feature_extractor_type": "VideoMAEFeatureExtractor",
+  "image_mean": [
+    0.485,
+    0.456,
+    0.406
+  ],
+  "image_std": [
+    0.229,
+    0.224,
+    0.225
+  ],
+  "processor_class": "XCLIPProcessor",
+  "resample": 2,
+  "size": 224
+}
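The values in `preprocessor_config.json` correspond to the validation pipeline the README describes: resize the shorter edge, center crop, then normalize with the ImageNet mean and standard deviation. The following is a rough sketch of the geometry and the per-pixel arithmetic only, not the feature extractor's actual code. The helper names are hypothetical, the rounding rule for the longer edge is an assumption, and so is the rescaling of pixel values from 0..255 to 0..1 before normalization.

```python
# Values copied from preprocessor_config.json above.
IMAGE_MEAN = (0.485, 0.456, 0.406)
IMAGE_STD = (0.229, 0.224, 0.225)
SIZE = 224


def resized_dims(height, width, size=SIZE):
    """Scale a frame so its shorter edge equals `size`, keeping aspect ratio."""
    if height <= width:
        return size, max(size, round(width * size / height))
    return max(size, round(height * size / width)), size


def center_crop_box(height, width, size=SIZE):
    """Return (top, left, bottom, right) of a centered size x size crop."""
    top = (height - size) // 2
    left = (width - size) // 2
    return top, left, top + size, left + size


def normalize_pixel(rgb):
    """Map one 0..255 RGB pixel to normalized floats (assumes 1/255 rescale)."""
    return tuple(
        (channel / 255.0 - mean) / std
        for channel, mean, std in zip(rgb, IMAGE_MEAN, IMAGE_STD)
    )


# A 360x640 frame: shorter edge 360 -> 224, longer edge 640 -> 398.
height, width = resized_dims(360, 640)
box = center_crop_box(height, width)
white = normalize_pixel((255, 255, 255))
print((height, width), box, [round(c, 3) for c in white])
```

The `"resample": 2` entry matches Pillow's integer code for bilinear interpolation, which this sketch does not model since it only tracks dimensions.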

pytorch_model.bin

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:d2840b05bd4ed269688ff76a239e703dd930db4a160726c5b79b9ef26f173452
+size 786535711
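The three lines added for `pytorch_model.bin` are a Git LFS pointer rather than the weights themselves: each line is a key, a space, and a value. A small sketch of reading such a pointer follows; `parse_lfs_pointer` is a hypothetical helper written for this note, not part of any Git LFS tooling.

```python
# Pointer text copied from the diff above.
POINTER = """version https://git-lfs.github.com/spec/v1
oid sha256:d2840b05bd4ed269688ff76a239e703dd930db4a160726c5b79b9ef26f173452
size 786535711
"""


def parse_lfs_pointer(text):
    """Split a Git LFS pointer into its version, hash, and size fields."""
    fields = {}
    for line in text.splitlines():
        if not line.strip():
            continue
        key, _, value = line.partition(" ")
        fields[key] = value
    algo, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "hash_algo": algo,
        "digest": digest,
        "size_bytes": int(fields["size"]),
    }


pointer = parse_lfs_pointer(POINTER)
size_mib = pointer["size_bytes"] / 2**20
print(pointer["hash_algo"], pointer["digest"][:12], f"{size_mib:.1f} MiB")
```

The `size` field shows that the real checkpoint is about 750 MiB, which is why the repository stores only the pointer in Git.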

special_tokens_map.json

ADDED

@@ -0,0 +1,24 @@
+{
+  "bos_token": {
+    "content": "<|startoftext|>",
+    "lstrip": false,
+    "normalized": true,
+    "rstrip": false,
+    "single_word": false
+  },
+  "eos_token": {
+    "content": "<|endoftext|>",
+    "lstrip": false,
+    "normalized": true,
+    "rstrip": false,
+    "single_word": false
+  },
+  "pad_token": "<|endoftext|>",
+  "unk_token": {
+    "content": "<|endoftext|>",
+    "lstrip": false,
+    "normalized": true,
+    "rstrip": false,
+    "single_word": false
+  }
+}

tokenizer.json

ADDED

The diff for this file is too large to render.

tokenizer_config.json

ADDED

@@ -0,0 +1,35 @@
+{
+  "add_prefix_space": false,
+  "bos_token": {
+    "__type": "AddedToken",
+    "content": "<|startoftext|>",
+    "lstrip": false,
+    "normalized": true,
+    "rstrip": false,
+    "single_word": false
+  },
+  "do_lower_case": true,
+  "eos_token": {
+    "__type": "AddedToken",
+    "content": "<|endoftext|>",
+    "lstrip": false,
+    "normalized": true,
+    "rstrip": false,
+    "single_word": false
+  },
+  "errors": "replace",
+  "model_max_length": 77,
+  "name_or_path": "openai/clip-vit-base-patch32",
+  "pad_token": "<|endoftext|>",
+  "processor_class": "CLIPProcessor",
+  "special_tokens_map_file": "/home/niels/.cache/huggingface/hub/models--openai--clip-vit-base-patch32/snapshots/f4881ba48ee4d21b7ed5602603b9e3e92eb1b346/special_tokens_map.json",
+  "tokenizer_class": "CLIPTokenizer",
+  "unk_token": {
+    "__type": "AddedToken",
+    "content": "<|endoftext|>",
+    "lstrip": false,
+    "normalized": true,
+    "rstrip": false,
+    "single_word": false
+  }
+}
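Taken together, `special_tokens_map.json` and `tokenizer_config.json` say that text is lowercased, wrapped in `<|startoftext|>` and `<|endoftext|>`, and limited to 77 positions, with `<|endoftext|>` doubling as the padding token. A simplified sketch of that framing is below. It is not the `CLIPTokenizer` implementation: whitespace splitting stands in for byte-pair encoding, and `frame_tokens` is a hypothetical helper.

```python
# Special tokens and length limit copied from the two JSON files above.
BOS = "<|startoftext|>"
EOS = "<|endoftext|>"
PAD = "<|endoftext|>"  # same string as EOS, per special_tokens_map.json
MODEL_MAX_LENGTH = 77


def frame_tokens(text, max_length=MODEL_MAX_LENGTH):
    """Lowercase, wrap in BOS/EOS, truncate, and pad to max_length.

    Whitespace splitting is a stand-in for BPE (assumption for illustration).
    Returns (tokens, attention_mask).
    """
    words = text.lower().split()
    body = words[: max_length - 2]  # leave room for BOS and EOS
    tokens = [BOS] + body + [EOS]
    attention_mask = [1] * len(tokens)
    padding = max_length - len(tokens)
    return tokens + [PAD] * padding, attention_mask + [0] * padding


tokens, mask = frame_tokens("Playing Guitar")
print(tokens[:5], sum(mask), len(tokens))
```

Because the padding token is the same string as the end-of-text token, the attention mask is what distinguishes the real end of the sequence from the padding that follows it.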

vocab.json

ADDED

The diff for this file is too large to render.