Update README.md

README.md

CHANGED

|

@@ -1,3 +1,168 @@

|

|

| 1 |

-

|

| 2 |

-

|

| 3 |

-


| 1 |

+

# Global Context Vision Transformer (GC ViT)

|

| 2 |

+

|

| 3 |

+

This repository contains the official PyTorch implementation of **Global Context Vision Transformers** (ICML 2023) \

|

| 4 |

+

\

|

| 5 |

+

[Global Context Vision

|

| 6 |

+

Transformers](https://arxiv.org/pdf/2206.09959.pdf) \

|

| 7 |

+

[Ali Hatamizadeh](https://research.nvidia.com/person/ali-hatamizadeh),

|

| 8 |

+

[Hongxu (Danny) Yin](https://scholar.princeton.edu/hongxu),

|

| 9 |

+

[Greg Heinrich](https://developer.nvidia.com/blog/author/gheinrich/),

|

| 10 |

+

[Jan Kautz](https://jankautz.com/),

|

| 11 |

+

and [Pavlo Molchanov](https://www.pmolchanov.com/).

|

| 12 |

+

|

| 13 |

+

GC ViT achieves state-of-the-art results across image classification, object detection and semantic segmentation tasks. On the ImageNet-1K dataset for classification, GC ViT variants with `51M`, `90M` and `201M` parameters achieve `84.3`, `85.0` and `85.7` Top-1 accuracy, respectively, surpassing comparably sized prior art such as the CNN-based ConvNeXt and the ViT-based Swin Transformer.

|

| 14 |

+

|

| 15 |

+

<p align="center">

|

| 16 |

+

<img src="https://github.com/NVlabs/GCVit/assets/26806394/d1820d6d-3aef-470e-a1d3-af370f1c1f77" width=63% height=63%

|

| 17 |

+

class="center">

|

| 18 |

+

</p>

|

| 19 |

+

|

| 20 |

+

|

| 21 |

+

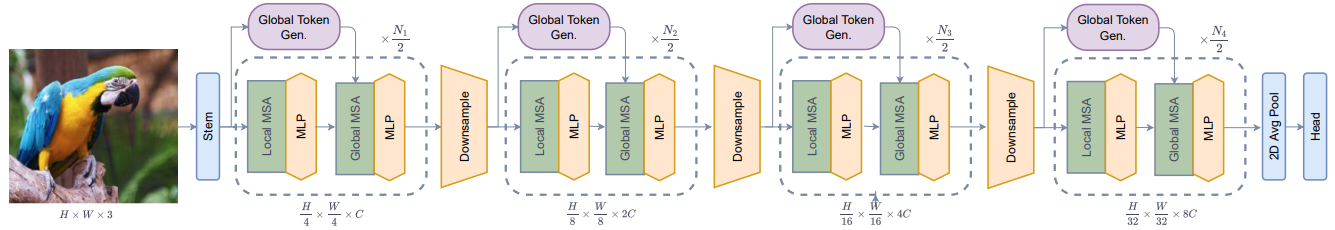

The architecture of GC ViT is demonstrated in the following:

|

| 22 |

+

|

| 23 |

+

|

| 24 |

+

|

| 25 |

+

|

| 26 |

+

## Introduction

|

| 27 |

+

|

| 28 |

+

**GC ViT** leverages global context self-attention modules, jointly with local self-attention, to effectively yet efficiently model both long- and short-range spatial interactions, without the need for expensive

|

| 29 |

+

operations such as computing attention masks or shifting local windows.
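
The following is a minimal, dependency-free sketch of the idea described above, not the official implementation. It assumes that local attention lets each window attend only to its own tokens, while global context attention reuses one shared set of query tokens for every window and keeps keys and values local. The function names (`attend`, `local_attention`, `global_context_attention`, `global_queries_from`) are hypothetical, learned projections and multiple heads are omitted, and the averaging in `global_queries_from` is only a stand-in for the learned global query generator.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attend(queries, keys, values):
    """Scaled dot-product attention on plain Python lists (no projections)."""
    scale = 1.0 / math.sqrt(len(keys[0]))
    out = []
    for q in queries:
        scores = [scale * sum(qi * ki for qi, ki in zip(q, k)) for k in keys]
        weights = softmax(scores)
        out.append([sum(w * v[d] for w, v in zip(weights, values))
                    for d in range(len(values[0]))])
    return out


def local_attention(windows):
    """Short-range: queries, keys and values all come from the same window."""
    return [attend(w, w, w) for w in windows]


def global_queries_from(windows):
    """Hypothetical stand-in for a learned query generator:
    average the token at each position across all windows."""
    n, tokens, dim = len(windows), len(windows[0]), len(windows[0][0])
    return [[sum(w[t][d] for w in windows) / n for d in range(dim)]
            for t in range(tokens)]


def global_context_attention(windows, global_queries):
    """Long-range: the same global queries are shared by every window,
    while keys and values stay local. No masks or window shifts are used."""
    return [attend(global_queries, w, w) for w in windows]


# Two windows, two tokens each, feature dimension 2 (toy values).
windows = [
    [[1.0, 0.0], [0.0, 1.0]],
    [[2.0, 0.0], [0.0, 2.0]],
]
gq = global_queries_from(windows)
local_out = local_attention(windows)
global_out = global_context_attention(windows, gq)
```

In this sketch the only difference between the two attention types is where the queries come from, which is why no masking or window shifting appears anywhere in the code.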

|

| 30 |

+

|

| 31 |

+

|

| 32 |

+

<p align="center">

|

| 33 |

+

<img src="https://github.com/NVlabs/GCVit/assets/26806394/da64f22a-e7af-4577-8884-b08ba4e24e49" width=72% height=72%

|

| 34 |

+

class="center">

|

| 35 |

+

</p>

|

| 36 |

+

|

| 37 |

+

|

| 38 |

+

## ImageNet Benchmarks

|

| 39 |

+

|

| 40 |

+

|

| 41 |

+

**ImageNet-1K Pretrained Models**

|

| 42 |

+

|

| 43 |

+

<table>

|

| 44 |

+

<tr>

|

| 45 |

+

<th>Model Variant</th>

|

| 46 |

+

<th>Acc@1</th>

|

| 47 |

+

<th>#Params(M)</th>

|

| 48 |

+

<th>FLOPs(G)</th>

|

| 49 |

+

<th>Download</th>

|

| 50 |

+

</tr>

|

| 51 |

+

<tr>

|

| 52 |

+

<td>GC ViT-XXT</td>

|

| 53 |

+

<th>79.9</th>

|

| 54 |

+

<td>12</td>

|

| 55 |

+

<td>2.1</td>

|

| 56 |

+

<td><a href="https://drive.google.com/uc?export=download&id=1apSIWQCa5VhWLJws8ugMTuyKzyayw4Eh">model</a></td>

|

| 57 |

+

</tr>

|

| 58 |

+

<tr>

|

| 59 |

+

<td>GC ViT-XT</td>

|

| 60 |

+

<th>82.0</th>

|

| 61 |

+

<td>20</td>

|

| 62 |

+

<td>2.6</td>

|

| 63 |

+

<td><a href="https://drive.google.com/uc?export=download&id=1OgSbX73AXmE0beStoJf2Jtda1yin9t9m">model</a></td>

|

| 64 |

+

</tr>

|

| 65 |

+

<tr>

|

| 66 |

+

<td>GC ViT-T</td>

|

| 67 |

+

<th>83.5</th>

|

| 68 |

+

<td>28</td>

|

| 69 |

+

<td>4.7</td>

|

| 70 |

+

<td><a href="https://drive.google.com/uc?export=download&id=11M6AsxKLhfOpD12Nm_c7lOvIIAn9cljy">model</a></td>

|

| 71 |

+

</tr>

|

| 72 |

+

<tr>

|

| 73 |

+

<td>GC ViT-T2</td>

|

| 74 |

+

<th>83.7</th>

|

| 75 |

+

<td>34</td>

|

| 76 |

+

<td>5.5</td>

|

| 77 |

+

<td><a href="https://drive.google.com/uc?export=download&id=1cTD8VemWFiwAx0FB9cRMT-P4vRuylvmQ">model</a></td>

|

| 78 |

+

</tr>

|

| 79 |

+

<tr>

|

| 80 |

+

<td>GC ViT-S</td>

|

| 81 |

+

<th>84.3</th>

|

| 82 |

+

<td>51</td>

|

| 83 |

+

<td>8.5</td>

|

| 84 |

+

<td><a href="https://drive.google.com/uc?export=download&id=1Nn6ABKmYjylyWC0I41Q3oExrn4fTzO9Y">model</a></td>

|

| 85 |

+

</tr>

|

| 86 |

+

<tr>

|

| 87 |

+

<td>GC ViT-S2</td>

|

| 88 |

+

<th>84.8</th>

|

| 89 |

+

<td>68</td>

|

| 90 |

+

<td>10.7</td>

|

| 91 |

+

<td><a href="https://drive.google.com/uc?export=download&id=1E5TtYpTqILznjBLLBTlO5CGq343RbEan">model</a></td>

|

| 92 |

+

</tr>

|

| 93 |

+

<tr>

|

| 94 |

+

<td>GC ViT-B</td>

|

| 95 |

+

<th>85.0</th>

|

| 96 |

+

<td>90</td>

|

| 97 |

+

<td>14.8</td>

|

| 98 |

+

<td><a href="https://drive.google.com/uc?export=download&id=1PF7qfxKLcv_ASOMetDP75n8lC50gaqyH">model</a></td>

|

| 99 |

+

</tr>

|

| 100 |

+

|

| 101 |

+

<tr>

|

| 102 |

+

<td>GC ViT-L</td>

|

| 103 |

+

<th>85.7</th>

|

| 104 |

+

<td>201</td>

|

| 105 |

+

<td>32.6</td>

|

| 106 |

+

<td><a href="https://drive.google.com/uc?export=download&id=1Lkz1nWKTwCCUR7yQJM6zu_xwN1TR0mxS">model</a></td>

|

| 107 |

+

</tr>

|

| 108 |

+

|

| 109 |

+

</table>
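
As a small illustration of how the table above might be used, the sketch below picks the most accurate ImageNet-1K variant that fits a compute or parameter budget. The numbers are copied from the table; the `Variant` type and the `best_under_budget` helper are hypothetical names introduced here and are not part of the GC ViT code base.

```python
from typing import NamedTuple, Optional, Sequence


class Variant(NamedTuple):
    name: str
    top1: float      # Acc@1 on ImageNet-1K
    params_m: float  # parameters, in millions
    flops_g: float   # FLOPs, in billions


# Values copied from the ImageNet-1K table above.
IMAGENET_1K = [
    Variant("GC ViT-XXT", 79.9, 12, 2.1),
    Variant("GC ViT-XT", 82.0, 20, 2.6),
    Variant("GC ViT-T", 83.5, 28, 4.7),
    Variant("GC ViT-T2", 83.7, 34, 5.5),
    Variant("GC ViT-S", 84.3, 51, 8.5),
    Variant("GC ViT-S2", 84.8, 68, 10.7),
    Variant("GC ViT-B", 85.0, 90, 14.8),
    Variant("GC ViT-L", 85.7, 201, 32.6),
]


def best_under_budget(
    variants: Sequence[Variant],
    max_flops_g: Optional[float] = None,
    max_params_m: Optional[float] = None,
) -> Optional[Variant]:
    """Return the highest-accuracy variant within the given limits,
    or None if no variant fits."""
    candidates = [
        v for v in variants
        if (max_flops_g is None or v.flops_g <= max_flops_g)
        and (max_params_m is None or v.params_m <= max_params_m)
    ]
    return max(candidates, key=lambda v: v.top1, default=None)


if __name__ == "__main__":
    choice = best_under_budget(IMAGENET_1K, max_flops_g=5.0)
    print(choice.name if choice else "no variant fits")
```

Accuracy is the only ranking criterion here; a real deployment would likely also weigh measured latency and memory on the target hardware.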

|

| 110 |

+

|

| 111 |

+

|

| 112 |

+

**ImageNet-21K Pretrained Models**

|

| 113 |

+

|

| 114 |

+

<table>

|

| 115 |

+

<tr>

|

| 116 |

+

<th>Model Variant</th>

|

| 117 |

+

<th>Resolution</th>

|

| 118 |

+

<th>Acc@1</th>

|

| 119 |

+

<th>#Params(M)</th>

|

| 120 |

+

<th>FLOPs(G)</th>

|

| 121 |

+

<th>Download</th>

|

| 122 |

+

</tr>

|

| 123 |

+

<tr>

|

| 124 |

+

<td>GC ViT-L</td>

|

| 125 |

+

<td>224 x 224</td>

|

| 126 |

+

<th>86.6</th>

|

| 127 |

+

<td>201</td>

|

| 128 |

+

<td>32.6</td>

|

| 129 |

+

<td><a href="https://drive.google.com/uc?export=download&id=1maGDr6mJkLyRTUkspMzCgSlhDzNRFGEf">model</a></td>

|

| 130 |

+

</tr>

|

| 131 |

+

<tr>

|

| 132 |

+

<td>GC ViT-L</td>

|

| 133 |

+

<td>384 x 384</td>

|

| 134 |

+

<th>87.4</th>

|

| 135 |

+

<td>201</td>

|

| 136 |

+

<td>120.4</td>

|

| 137 |

+

<td><a href="https://drive.google.com/uc?export=download&id=1P-IEhvQbJ3FjnunVkM1Z9dEpKw-tsuWv">model</a></td>

|

| 138 |

+

</tr>

|

| 139 |

+

|

| 140 |

+

</table>

|

| 141 |

+

|

| 142 |

+

|

| 143 |

+

## Citation

|

| 144 |

+

|

| 145 |

+

Please consider citing the GC ViT paper if it is useful for your work:

|

| 146 |

+

|

| 147 |

+

```

|

| 148 |

+

@inproceedings{hatamizadeh2023global,

|

| 149 |

+

title={Global context vision transformers},

|

| 150 |

+

author={Hatamizadeh, Ali and Yin, Hongxu and Heinrich, Greg and Kautz, Jan and Molchanov, Pavlo},

|

| 151 |

+

booktitle={International Conference on Machine Learning},

|

| 152 |

+

pages={12633--12646},

|

| 153 |

+

year={2023},

|

| 154 |

+

organization={PMLR}

|

| 155 |

+

}

|

| 156 |

+

```

|

| 157 |

+

|

| 158 |

+

## Licenses

|

| 159 |

+

|

| 160 |

+

Copyright © 2023, NVIDIA Corporation. All rights reserved.

|

| 161 |

+

|

| 162 |

+

This work is made available under the NVIDIA Source Code License-NC. Click [here](LICENSE) to view a copy of this license.

|

| 163 |

+

|

| 164 |

+

The pre-trained models are shared under [CC-BY-NC-SA-4.0](https://creativecommons.org/licenses/by-nc-sa/4.0/). If you remix, transform, or build upon the material, you must distribute your contributions under the same license as the original.

|

| 165 |

+

|

| 166 |

+

For license information regarding timm, please refer to its [repository](https://github.com/rwightman/pytorch-image-models).

|

| 167 |

+

|

| 168 |

+

For license information regarding the ImageNet dataset, please refer to the ImageNet [official website](https://www.image-net.org/).

|