restructure readme to match updated template

- README.md +29 -26

- configs/metadata.json +2 -1

- docs/README.md +29 -26

README.md

CHANGED

|

@@ -5,12 +5,10 @@ tags:

|

|

| 5 |

library_name: monai

|

| 6 |

license: apache-2.0

|

| 7 |

---

|

| 8 |

-

# Description

|

| 9 |

-

A pre-trained model for the endoscopic tool segmentation task.

|

| 10 |

-

|

| 11 |

# Model Overview

|

| 12 |

-

|

| 13 |

-

|

|

|

|

| 14 |

|

| 15 |

|

| 16 |

|

|

@@ -19,55 +17,60 @@ Datasets used in this work were provided by [Activ Surgical](https://www.activsu

|

|

| 19 |

|

| 20 |

Since datasets are private, existing public datasets like [EndoVis 2017](https://endovissub2017-roboticinstrumentsegmentation.grand-challenge.org/Data/) can be used to train a similar model.

|

| 21 |

|

|

|

|

|

|

|

| 22 |

When using EndoVis or any other dataset, divide it into "train", "valid" and "test" folders. Samples in each folder should preferably be images converted to jpg format. Otherwise, the "images", "labels", "val_images" and "val_labels" parameters in "configs/train.json" and "datalist" in "configs/inference.json" should be modified to fit the given dataset. After that, the "dataset_dir" parameter in "configs/train.json" and "configs/inference.json" should be changed to the root folder that contains the "train", "valid" and "test" folders.

|

| 23 |

|

| 24 |

Please note that the data loading operation in this bundle is adaptive. If images and labels are not in the same format, a mismatch may occur. For example, if images are in jpg format and labels are in npy format, the PIL and NumPy readers will be used separately to load images and labels. Since these two readers parse a file's shape differently, the loaded labels will be the transpose of the correct ones and cause a mismatch.

|

| 25 |

|

| 26 |

## Training configuration

|

| 27 |

-

The training

|

| 28 |

-

|

| 29 |

-

Actual Model Input: 736 x 480 x 3

|

| 30 |

-

|

| 31 |

-

|

| 32 |

-

Input: 3 channel video frames

|

| 33 |

|

| 34 |

-

|

|

|

|

| 35 |

|

| 36 |

-

|

| 37 |

-

|

|

|

|

|

|

|

| 38 |

|

| 39 |

-

|

|

|

|

| 40 |

|

| 41 |

-

|

| 42 |

-

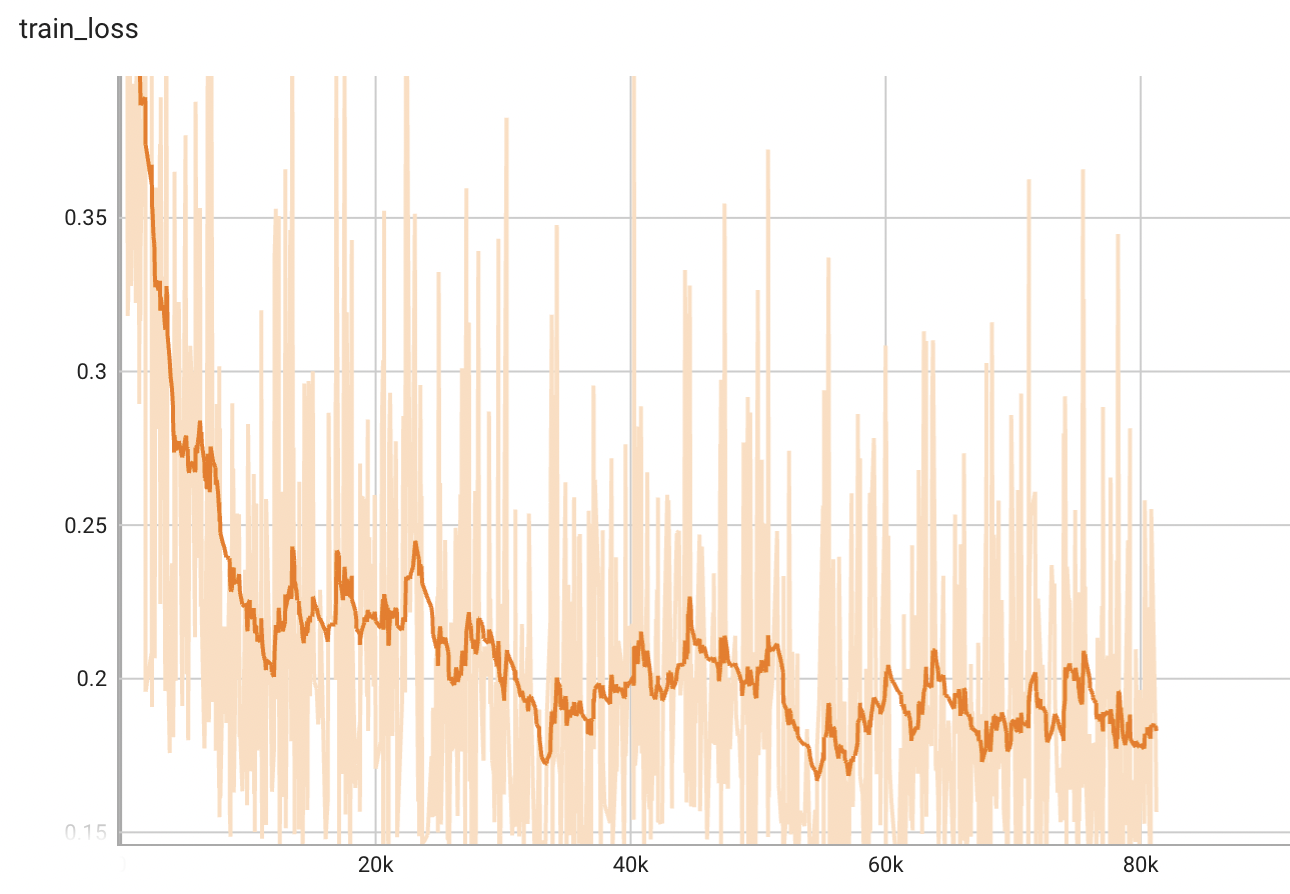

A graph showing the training loss over 100 epochs.

|

| 43 |

|

| 44 |

-

|

|

|

|

| 45 |

|

| 46 |

-

##

|

| 47 |

-

|

| 48 |

|

| 49 |

-

|

| 50 |

|

| 51 |

-

|

| 52 |

-

Execute training:

|

| 53 |

|

| 54 |

```

|

| 55 |

python -m monai.bundle run training --meta_file configs/metadata.json --config_file configs/train.json --logging_file configs/logging.conf

|

| 56 |

```

|

| 57 |

|

| 58 |

-

Override the `train` config to execute evaluation with the trained model:

|

| 59 |

|

| 60 |

```

|

| 61 |

python -m monai.bundle run evaluating --meta_file configs/metadata.json --config_file "['configs/train.json','configs/evaluate.json']" --logging_file configs/logging.conf

|

| 62 |

```

|

| 63 |

|

| 64 |

-

Execute inference:

|

| 65 |

|

| 66 |

```

|

| 67 |

python -m monai.bundle run evaluating --meta_file configs/metadata.json --config_file configs/inference.json --logging_file configs/logging.conf

|

| 68 |

```

|

| 69 |

|

| 70 |

-

Export checkpoint to TorchScript file:

|

| 71 |

|

| 72 |

```

|

| 73 |

python -m monai.bundle ckpt_export network_def --filepath models/model.ts --ckpt_file models/model.pt --meta_file configs/metadata.json --config_file configs/inference.json

|

|

|

|

| 5 |

library_name: monai

|

| 6 |

license: apache-2.0

|

| 7 |

---

|

|

|

|

|

|

|

|

|

|

| 8 |

# Model Overview

|

| 9 |

+

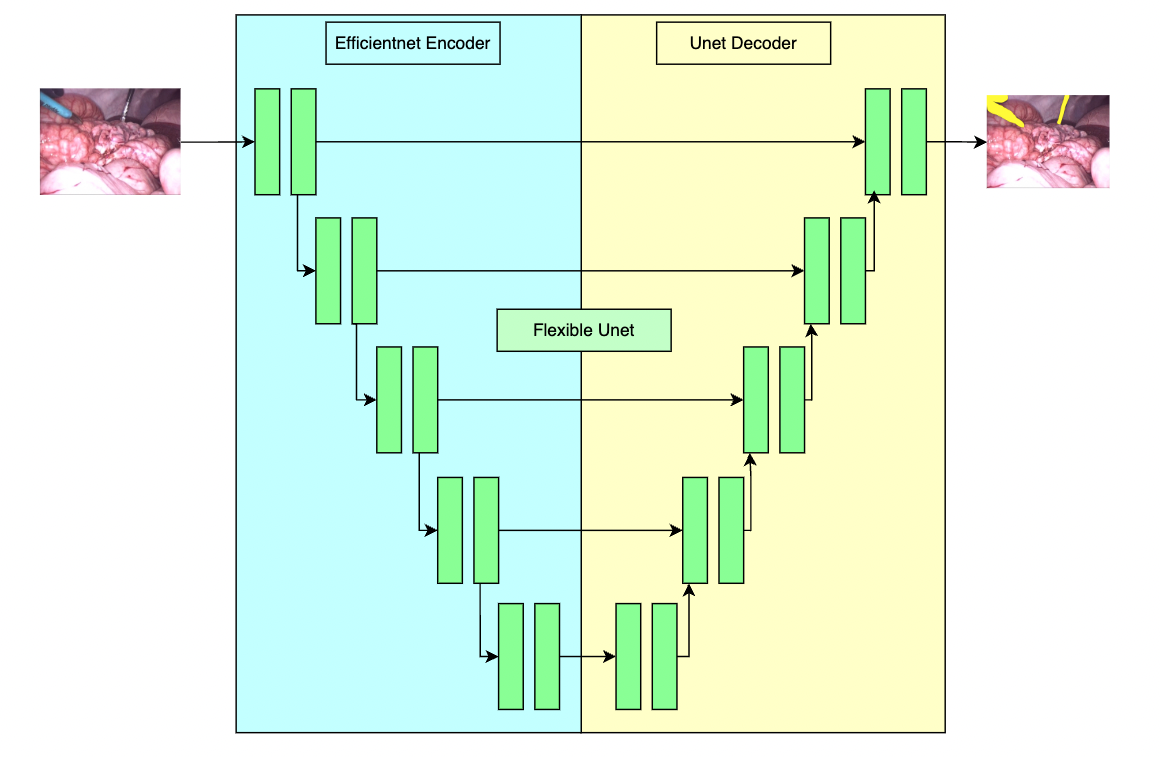

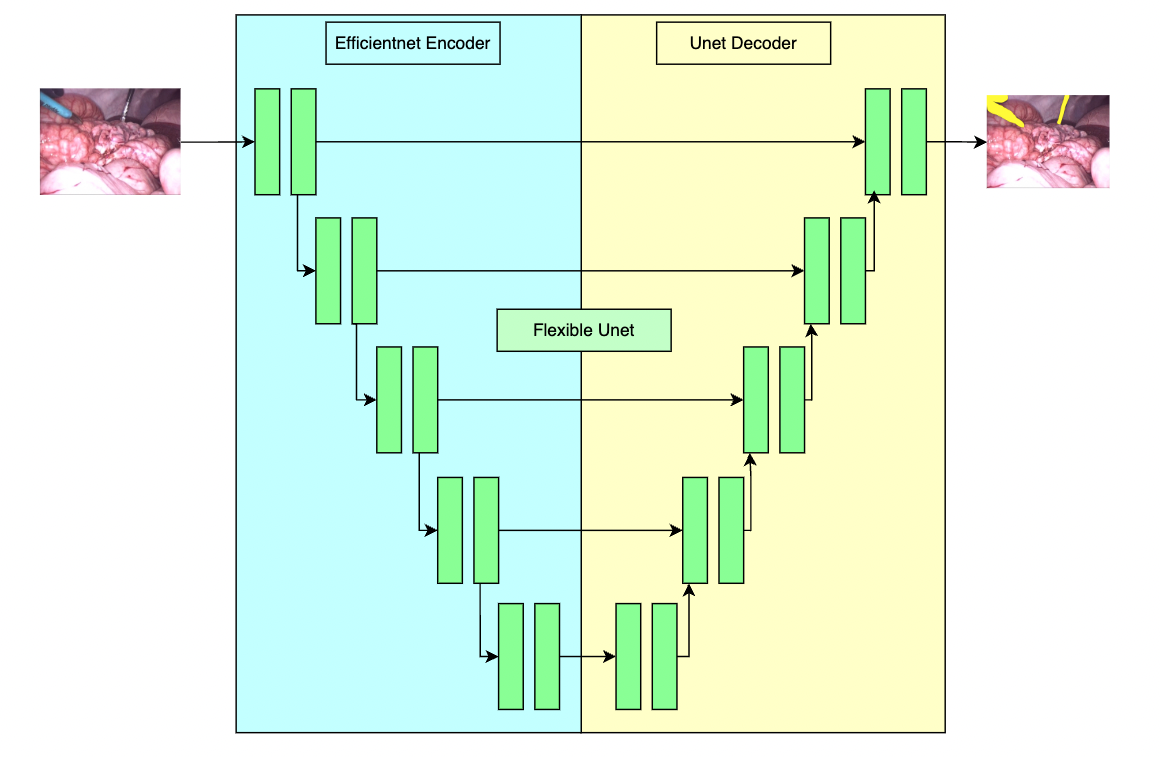

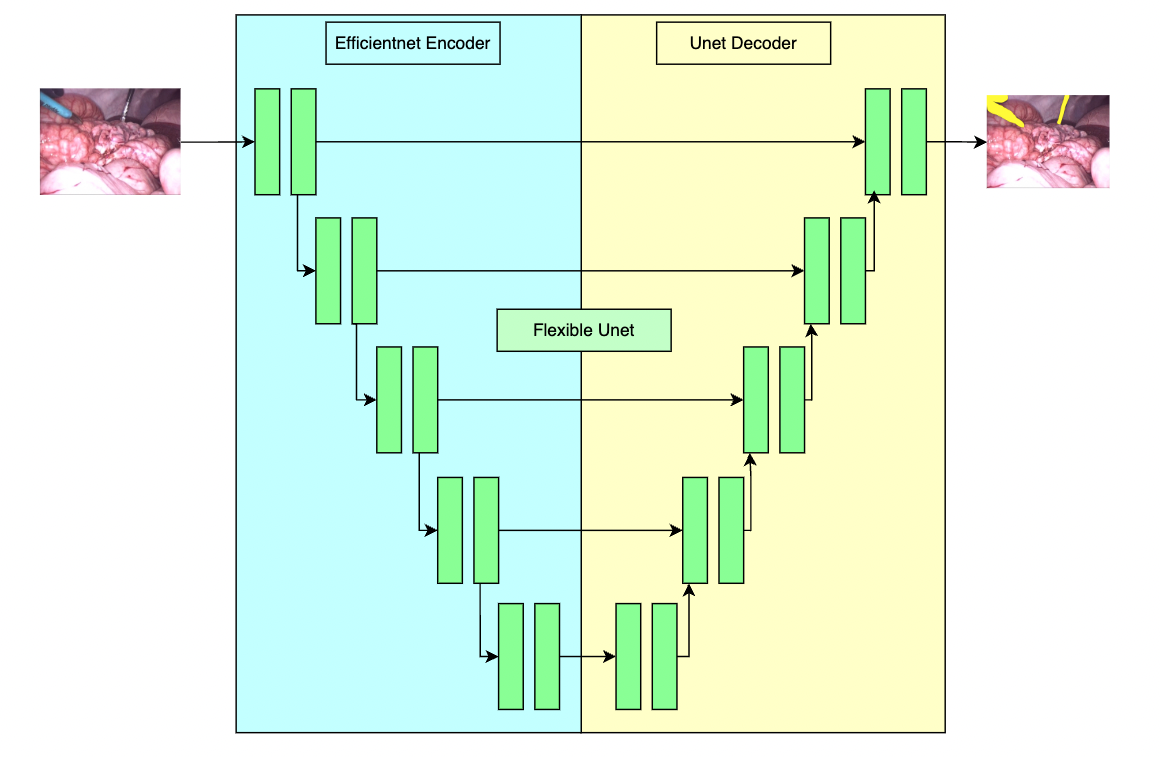

A pre-trained model for the endoscopic tool segmentation task, trained using a flexible unet structure with an efficientnet-b2 [1] as the backbone and a UNet architecture [2] as the decoder. The datasets use private samples from [Activ Surgical](https://www.activsurgical.com/).

|

| 10 |

+

|

| 11 |

+

The [PyTorch model](https://drive.google.com/file/d/19yS3t2oLBiB7wT-qeQ82da95VJs_vzRK/view?usp=share_link) and [torchscript model](https://drive.google.com/file/d/1cDZ3Jr7mhpzdzaFyz8yHNowH8k0T1VZz/view?usp=share_link) are shared on Google Drive. Details can be found in the large_files.yml file. Modify the "bundle_root" parameter specified in configs/train.json and configs/inference.json to reflect where the models are downloaded. The expected directory for the downloaded models is "models/" under "bundle_root".

|

| 12 |

|

| 13 |

|

| 14 |

|

|

|

|

| 17 |

|

| 18 |

Since datasets are private, existing public datasets like [EndoVis 2017](https://endovissub2017-roboticinstrumentsegmentation.grand-challenge.org/Data/) can be used to train a similar model.

|

| 19 |

|

| 20 |

+

### Preprocessing

|

| 21 |

+

|

| 22 |

When using EndoVis or any other dataset, divide it into "train", "valid" and "test" folders. Samples in each folder should preferably be images converted to jpg format. Otherwise, the "images", "labels", "val_images" and "val_labels" parameters in "configs/train.json" and "datalist" in "configs/inference.json" should be modified to fit the given dataset. After that, the "dataset_dir" parameter in "configs/train.json" and "configs/inference.json" should be changed to the root folder that contains the "train", "valid" and "test" folders.
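The configuration step described above could be scripted roughly as follows. This is only a sketch: it assumes the config files are plain JSON with a top-level "dataset_dir" key, and the helper names `set_dataset_dir` and `missing_folders` are hypothetical, not part of the bundle or of MONAI.

```python
import json
from pathlib import Path

# Folder names taken from the paragraph above.
EXPECTED_FOLDERS = ("train", "valid", "test")


def missing_folders(dataset_dir):
    """Return the expected sub-folders that do not exist under dataset_dir."""
    root = Path(dataset_dir)
    return [name for name in EXPECTED_FOLDERS if not (root / name).is_dir()]


def set_dataset_dir(config_path, dataset_dir):
    """Rewrite the top-level "dataset_dir" entry of a JSON config file.

    Assumes "dataset_dir" is a top-level key; all other entries are kept.
    """
    path = Path(config_path)
    config = json.loads(path.read_text())
    config["dataset_dir"] = str(dataset_dir)
    path.write_text(json.dumps(config, indent=4))
    return config
```

A call such as `set_dataset_dir("configs/train.json", "/data/endovis")` (the data path is a placeholder) would be repeated for "configs/inference.json", after checking that `missing_folders("/data/endovis")` returns an empty list.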

|

| 23 |

|

| 24 |

Please note that the data loading operation in this bundle is adaptive. If images and labels are not in the same format, a mismatch may occur. For example, if images are in jpg format and labels are in npy format, the PIL and NumPy readers will be used separately to load images and labels. Since these two readers parse a file's shape differently, the loaded labels will be the transpose of the correct ones and cause a mismatch.
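One way to catch this mismatch before training is to compare array shapes after loading. The sketch below uses NumPy only and assumes the image arrives as (width, height, channels) while the label was stored as (height, width). The helper `match_label_to_image` is hypothetical and not part of the bundle.

```python
import numpy as np


def match_label_to_image(image, label):
    """Return a 2D label whose shape equals the image's first two axes.

    The label is transposed if that makes it fit; a ValueError is raised
    when neither orientation matches.
    """
    spatial = image.shape[:2]
    if label.shape == spatial:
        return label
    if label.T.shape == spatial:
        return label.T
    raise ValueError(f"label shape {label.shape} does not fit image shape {spatial}")


# Image laid out as (width, height, channels), label stored as (height, width).
image = np.zeros((736, 480, 3), dtype=np.uint8)
label = np.zeros((480, 736), dtype=np.uint8)
fixed = match_label_to_image(image, label)
print(fixed.shape)  # (736, 480)
```

A shape check cannot detect a transposed label when the frames are square, so keeping images and labels in the same file format remains the safer option.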

|

| 25 |

|

| 26 |

## Training configuration

|

| 27 |

+

The training was performed with the following:

|

| 28 |

+

- GPU: At least 12GB of GPU memory

|

| 29 |

+

- Actual Model Input: 736 x 480 x 3

|

| 30 |

+

- Optimizer: Adam

|

| 31 |

+

- Learning Rate: 1e-4

|

|

|

|

| 32 |

|

| 33 |

+

### Input

|

| 34 |

+

A three channel video frame

|

| 35 |

|

| 36 |

+

### Output

|

| 37 |

+

Two channels:

|

| 38 |

+

- Label 1: tools

|

| 39 |

+

- Label 0: everything else
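To illustrate how a two-channel output maps to the labels listed above, the following sketch takes the per-pixel argmax over the channel axis. It assumes a channel-first array of shape (2, H, W) holding per-class scores. The bundle's own post-processing may differ, and `to_tool_mask` is a hypothetical helper.

```python
import numpy as np


def to_tool_mask(output):
    """Convert a (2, H, W) score map into an (H, W) mask of 0/1 labels."""
    if output.ndim != 3 or output.shape[0] != 2:
        raise ValueError(f"expected shape (2, H, W), got {output.shape}")
    # Index of the larger score per pixel: 0 = everything else, 1 = tools.
    return np.argmax(output, axis=0).astype(np.uint8)


scores = np.array([
    [[0.9, 0.2], [0.6, 0.1]],  # channel 0: everything else
    [[0.1, 0.8], [0.4, 0.9]],  # channel 1: tools
])
mask = to_tool_mask(scores)
print(mask.tolist())  # [[0, 1], [0, 1]]
```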

|

| 40 |

|

| 41 |

+

## Performance

|

| 42 |

+

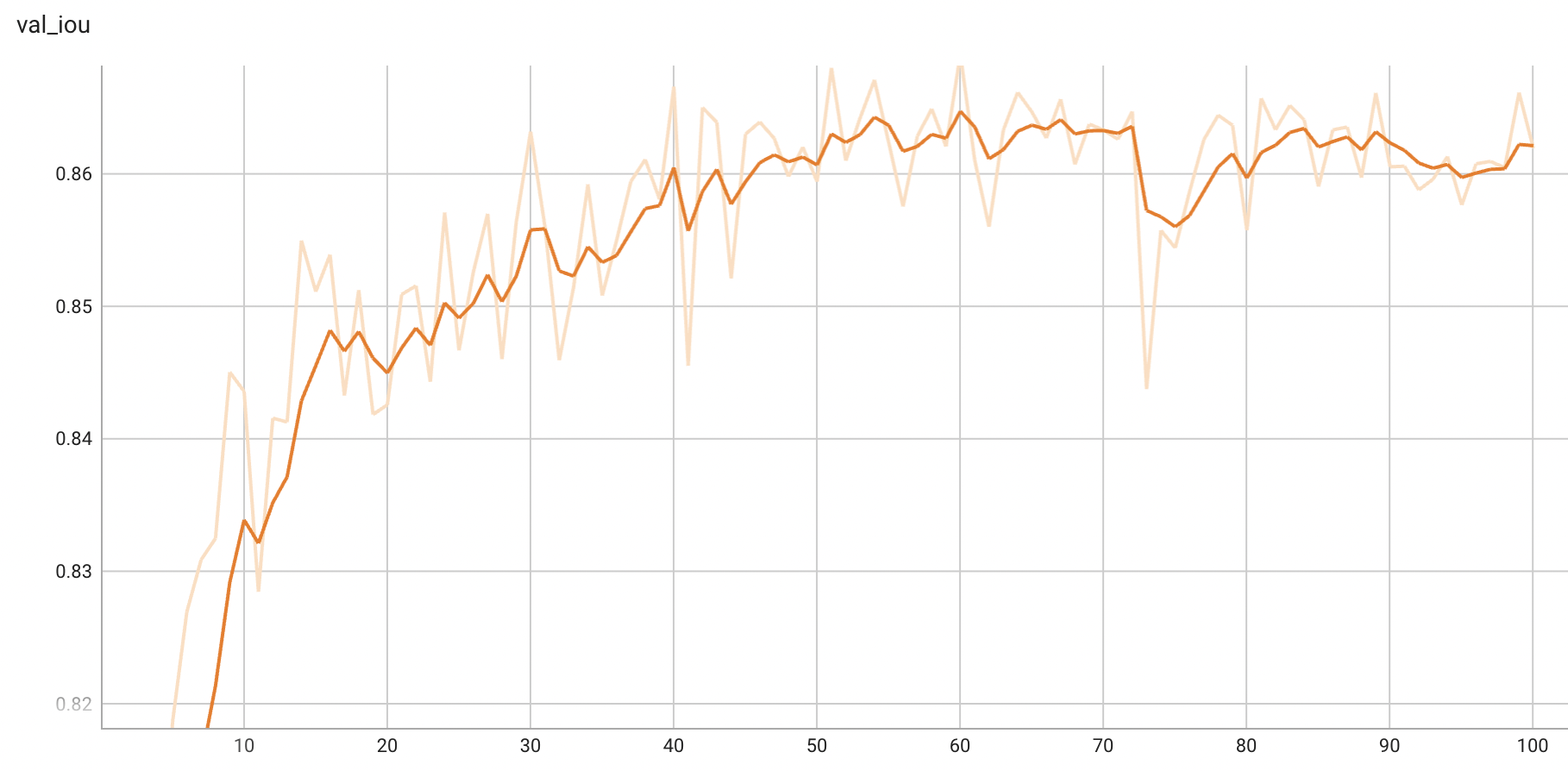

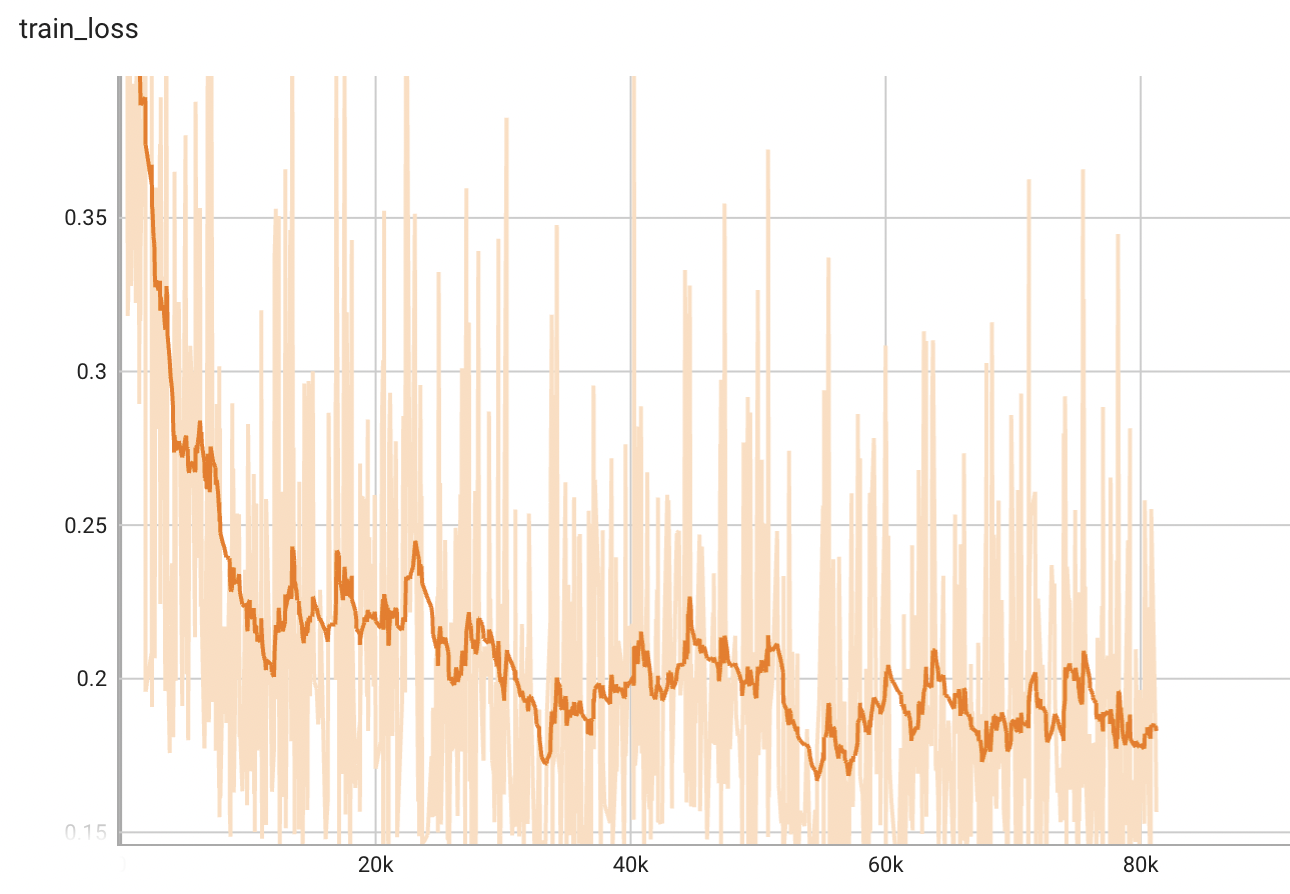

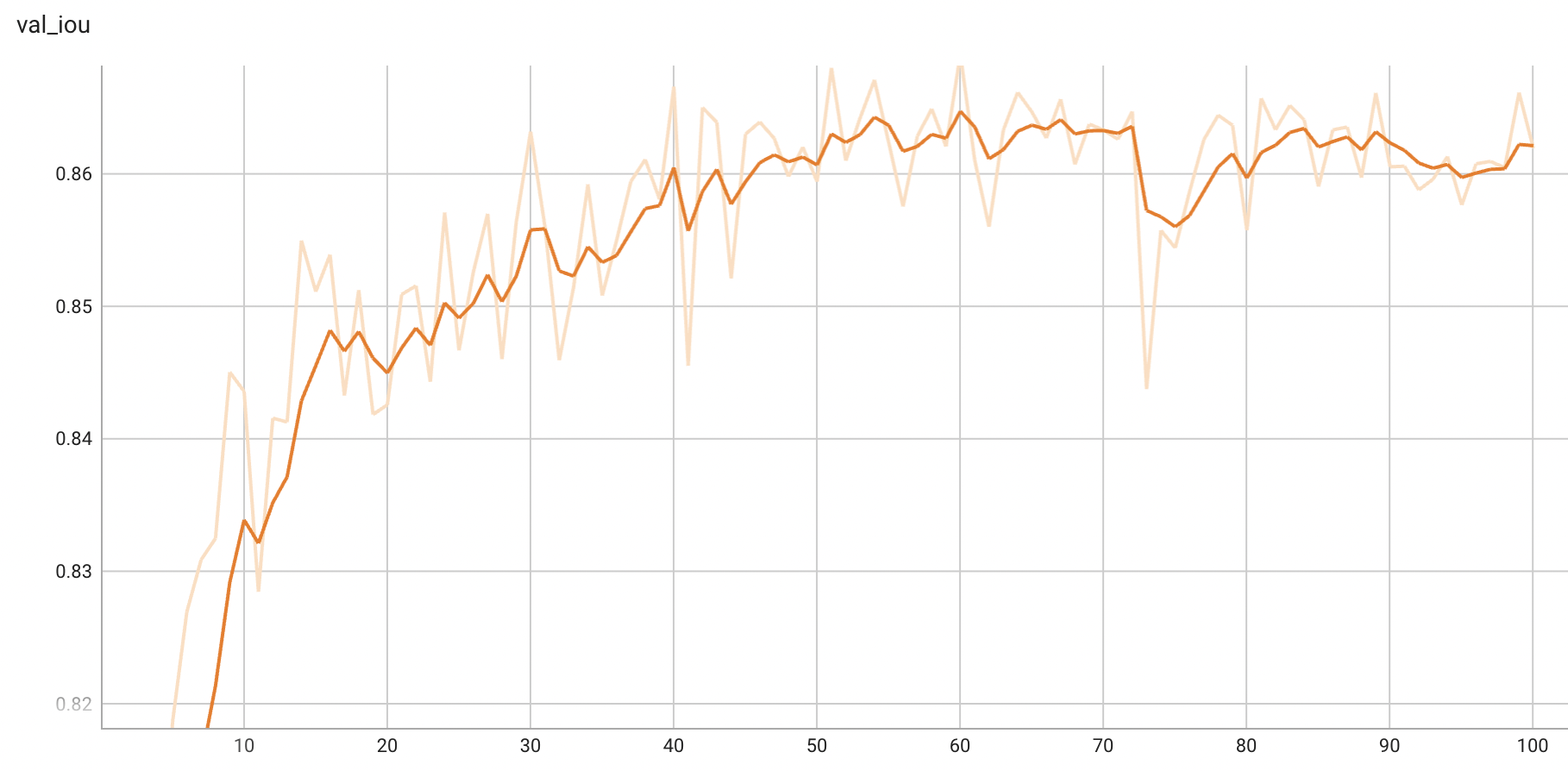

IoU was used for evaluating the performance of the model. This model achieves a mean IoU score of 0.87.
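For reference, IoU (intersection over union) for a binary mask is the number of pixels labeled as tool in both prediction and ground truth, divided by the number labeled as tool in either. The NumPy sketch below shows that computation. Averaging per image and scoring two empty masks as 1.0 are assumptions made here, not necessarily what the bundle's evaluation does.

```python
import numpy as np


def iou(pred, target):
    """Intersection over union of two binary masks with the same shape."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    union = np.logical_or(pred, target).sum()
    if union == 0:
        # Both masks are empty; treated as a perfect match (assumption).
        return 1.0
    return float(np.logical_and(pred, target).sum() / union)


def mean_iou(preds, targets):
    """Average the per-image IoU over paired predictions and targets."""
    return float(np.mean([iou(p, t) for p, t in zip(preds, targets)]))


pred = [[1, 1], [0, 0]]
target = [[1, 0], [0, 0]]
print(iou(pred, target))  # 0.5
print(mean_iou([pred, target], [target, target]))  # 0.75
```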

|

| 43 |

|

| 44 |

+

#### Training Loss

|

| 45 |

+

|

| 46 |

|

| 47 |

+

#### Validation IoU

|

| 48 |

+

|

| 49 |

|

| 50 |

+

## MONAI Bundle Commands

|

| 51 |

+

In addition to the Pythonic APIs, a few command line interfaces (CLI) are provided to interact with the bundle. The CLI supports flexible use cases, such as overriding configs at runtime and predefining arguments in a file.

|

| 52 |

|

| 53 |

+

For more detailed usage instructions, visit the [MONAI Bundle Configuration Page](https://docs.monai.io/en/latest/config_syntax.html).

|

| 54 |

|

| 55 |

+

#### Execute training:

|

|

|

|

| 56 |

|

| 57 |

```

|

| 58 |

python -m monai.bundle run training --meta_file configs/metadata.json --config_file configs/train.json --logging_file configs/logging.conf

|

| 59 |

```

|

| 60 |

|

| 61 |

+

#### Override the `train` config to execute evaluation with the trained model:

|

| 62 |

|

| 63 |

```

|

| 64 |

python -m monai.bundle run evaluating --meta_file configs/metadata.json --config_file "['configs/train.json','configs/evaluate.json']" --logging_file configs/logging.conf

|

| 65 |

```

|

| 66 |

|

| 67 |

+

#### Execute inference:

|

| 68 |

|

| 69 |

```

|

| 70 |

python -m monai.bundle run evaluating --meta_file configs/metadata.json --config_file configs/inference.json --logging_file configs/logging.conf

|

| 71 |

```

|

| 72 |

|

| 73 |

+

#### Export checkpoint to TorchScript file:

|

| 74 |

|

| 75 |

```

|

| 76 |

python -m monai.bundle ckpt_export network_def --filepath models/model.ts --ckpt_file models/model.pt --meta_file configs/metadata.json --config_file configs/inference.json

|

configs/metadata.json

CHANGED

|

@@ -1,7 +1,8 @@

|

|

| 1 |

{

|

| 2 |

"schema": "https://github.com/Project-MONAI/MONAI-extra-test-data/releases/download/0.8.1/meta_schema_20220324.json",

|

| 3 |

-

"version": "0.3.

|

| 4 |

"changelog": {

|

|

|

|

| 5 |

"0.3.1": "add figures of workflow and metrics, add invert transform",

|

| 6 |

"0.3.0": "update dataset processing",

|

| 7 |

"0.2.1": "update to use monai 1.0.1",

|

|

|

|

| 1 |

{

|

| 2 |

"schema": "https://github.com/Project-MONAI/MONAI-extra-test-data/releases/download/0.8.1/meta_schema_20220324.json",

|

| 3 |

+

"version": "0.3.2",

|

| 4 |

"changelog": {

|

| 5 |

+

"0.3.2": "restructure readme to match updated template",

|

| 6 |

"0.3.1": "add figures of workflow and metrics, add invert transform",

|

| 7 |

"0.3.0": "update dataset processing",

|

| 8 |

"0.2.1": "update to use monai 1.0.1",

|

docs/README.md

CHANGED

|

@@ -1,9 +1,7 @@

|

|

| 1 |

-

# Description

|

| 2 |

-

A pre-trained model for the endoscopic tool segmentation task.

|

| 3 |

-

|

| 4 |

# Model Overview

|

| 5 |

-

|

| 6 |

-

|

|

|

|

| 7 |

|

| 8 |

|

| 9 |

|

|

@@ -12,55 +10,60 @@ Datasets used in this work were provided by [Activ Surgical](https://www.activsu

|

|

| 12 |

|

| 13 |

Since datasets are private, existing public datasets like [EndoVis 2017](https://endovissub2017-roboticinstrumentsegmentation.grand-challenge.org/Data/) can be used to train a similar model.

|

| 14 |

|

|

|

|

|

|

|

| 15 |

When using EndoVis or any other dataset, divide it into "train", "valid" and "test" folders. Samples in each folder should preferably be images converted to jpg format. Otherwise, the "images", "labels", "val_images" and "val_labels" parameters in "configs/train.json" and "datalist" in "configs/inference.json" should be modified to fit the given dataset. After that, the "dataset_dir" parameter in "configs/train.json" and "configs/inference.json" should be changed to the root folder that contains the "train", "valid" and "test" folders.

|

| 16 |

|

| 17 |

Please note that the data loading operation in this bundle is adaptive. If images and labels are not in the same format, a mismatch may occur. For example, if images are in jpg format and labels are in npy format, the PIL and NumPy readers will be used separately to load images and labels. Since these two readers parse a file's shape differently, the loaded labels will be the transpose of the correct ones and cause a mismatch.

|

| 18 |

|

| 19 |

## Training configuration

|

| 20 |

-

The training

|

| 21 |

-

|

| 22 |

-

Actual Model Input: 736 x 480 x 3

|

| 23 |

-

|

| 24 |

-

|

| 25 |

-

Input: 3 channel video frames

|

| 26 |

|

| 27 |

-

|

|

|

|

| 28 |

|

| 29 |

-

|

| 30 |

-

|

|

|

|

|

|

|

| 31 |

|

| 32 |

-

|

|

|

|

| 33 |

|

| 34 |

-

|

| 35 |

-

A graph showing the training loss over 100 epochs.

|

| 36 |

|

| 37 |

-

|

|

|

|

| 38 |

|

| 39 |

-

##

|

| 40 |

-

|

| 41 |

|

| 42 |

-

|

| 43 |

|

| 44 |

-

|

| 45 |

-

Execute training:

|

| 46 |

|

| 47 |

```

|

| 48 |

python -m monai.bundle run training --meta_file configs/metadata.json --config_file configs/train.json --logging_file configs/logging.conf

|

| 49 |

```

|

| 50 |

|

| 51 |

-

Override the `train` config to execute evaluation with the trained model:

|

| 52 |

|

| 53 |

```

|

| 54 |

python -m monai.bundle run evaluating --meta_file configs/metadata.json --config_file "['configs/train.json','configs/evaluate.json']" --logging_file configs/logging.conf

|

| 55 |

```

|

| 56 |

|

| 57 |

-

Execute inference:

|

| 58 |

|

| 59 |

```

|

| 60 |

python -m monai.bundle run evaluating --meta_file configs/metadata.json --config_file configs/inference.json --logging_file configs/logging.conf

|

| 61 |

```

|

| 62 |

|

| 63 |

-

Export checkpoint to TorchScript file:

|

| 64 |

|

| 65 |

```

|

| 66 |

python -m monai.bundle ckpt_export network_def --filepath models/model.ts --ckpt_file models/model.pt --meta_file configs/metadata.json --config_file configs/inference.json

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

# Model Overview

|

| 2 |

+

A pre-trained model for the endoscopic tool segmentation task, trained using a flexible unet structure with an efficientnet-b2 [1] as the backbone and a UNet architecture [2] as the decoder. The datasets use private samples from [Activ Surgical](https://www.activsurgical.com/).

|

| 3 |

+

|

| 4 |

+

The [PyTorch model](https://drive.google.com/file/d/19yS3t2oLBiB7wT-qeQ82da95VJs_vzRK/view?usp=share_link) and [torchscript model](https://drive.google.com/file/d/1cDZ3Jr7mhpzdzaFyz8yHNowH8k0T1VZz/view?usp=share_link) are shared on Google Drive. Details can be found in the large_files.yml file. Modify the "bundle_root" parameter specified in configs/train.json and configs/inference.json to reflect where the models are downloaded. The expected directory for the downloaded models is "models/" under "bundle_root".

|

| 5 |

|

| 6 |

|

| 7 |

|

|

|

|

| 10 |

|

| 11 |

Since datasets are private, existing public datasets like [EndoVis 2017](https://endovissub2017-roboticinstrumentsegmentation.grand-challenge.org/Data/) can be used to train a similar model.

|

| 12 |

|

| 13 |

+

### Preprocessing

|

| 14 |

+

|

| 15 |

When using EndoVis or any other dataset, divide it into "train", "valid" and "test" folders. Samples in each folder should preferably be images converted to jpg format. Otherwise, the "images", "labels", "val_images" and "val_labels" parameters in "configs/train.json" and "datalist" in "configs/inference.json" should be modified to fit the given dataset. After that, the "dataset_dir" parameter in "configs/train.json" and "configs/inference.json" should be changed to the root folder that contains the "train", "valid" and "test" folders.

|

| 16 |

|

| 17 |

Please note that the data loading operation in this bundle is adaptive. If images and labels are not in the same format, a mismatch may occur. For example, if images are in jpg format and labels are in npy format, the PIL and NumPy readers will be used separately to load images and labels. Since these two readers parse a file's shape differently, the loaded labels will be the transpose of the correct ones and cause a mismatch.

|

| 18 |

|

| 19 |

## Training configuration

|

| 20 |

+

The training was performed with the following:

|

| 21 |

+

- GPU: At least 12GB of GPU memory

|

| 22 |

+

- Actual Model Input: 736 x 480 x 3

|

| 23 |

+

- Optimizer: Adam

|

| 24 |

+

- Learning Rate: 1e-4

|

|

|

|

| 25 |

|

| 26 |

+

### Input

|

| 27 |

+

A three channel video frame

|

| 28 |

|

| 29 |

+

### Output

|

| 30 |

+

Two channels:

|

| 31 |

+

- Label 1: tools

|

| 32 |

+

- Label 0: everything else

|

| 33 |

|

| 34 |

+

## Performance

|

| 35 |

+

IoU was used for evaluating the performance of the model. This model achieves a mean IoU score of 0.87.

|

| 36 |

|

| 37 |

+

#### Training Loss

|

| 38 |

+

|

| 39 |

|

| 40 |

+

#### Validation IoU

|

| 41 |

+

|

| 42 |

|

| 43 |

+

## MONAI Bundle Commands

|

| 44 |

+

In addition to the Pythonic APIs, a few command line interfaces (CLI) are provided to interact with the bundle. The CLI supports flexible use cases, such as overriding configs at runtime and predefining arguments in a file.

|

| 45 |

|

| 46 |

+

For more detailed usage instructions, visit the [MONAI Bundle Configuration Page](https://docs.monai.io/en/latest/config_syntax.html).

|

| 47 |

|

| 48 |

+

#### Execute training:

|

|

|

|

| 49 |

|

| 50 |

```

|

| 51 |

python -m monai.bundle run training --meta_file configs/metadata.json --config_file configs/train.json --logging_file configs/logging.conf

|

| 52 |

```

|

| 53 |

|

| 54 |

+

#### Override the `train` config to execute evaluation with the trained model:

|

| 55 |

|

| 56 |

```

|

| 57 |

python -m monai.bundle run evaluating --meta_file configs/metadata.json --config_file "['configs/train.json','configs/evaluate.json']" --logging_file configs/logging.conf

|

| 58 |

```

|

| 59 |

|

| 60 |

+

#### Execute inference:

|

| 61 |

|

| 62 |

```

|

| 63 |

python -m monai.bundle run evaluating --meta_file configs/metadata.json --config_file configs/inference.json --logging_file configs/logging.conf

|

| 64 |

```

|

| 65 |

|

| 66 |

+

#### Export checkpoint to TorchScript file:

|

| 67 |

|

| 68 |

```

|

| 69 |

python -m monai.bundle ckpt_export network_def --filepath models/model.ts --ckpt_file models/model.pt --meta_file configs/metadata.json --config_file configs/inference.json

|