complete the model package

- .gitattributes +1 -0

- README.md +49 -0

- configs/inference.json +116 -0

- configs/logging.conf +21 -0

- configs/metadata.json +75 -0

- docs/README.md +42 -0

- docs/license.txt +201 -0

- models/model.pt +3 -0

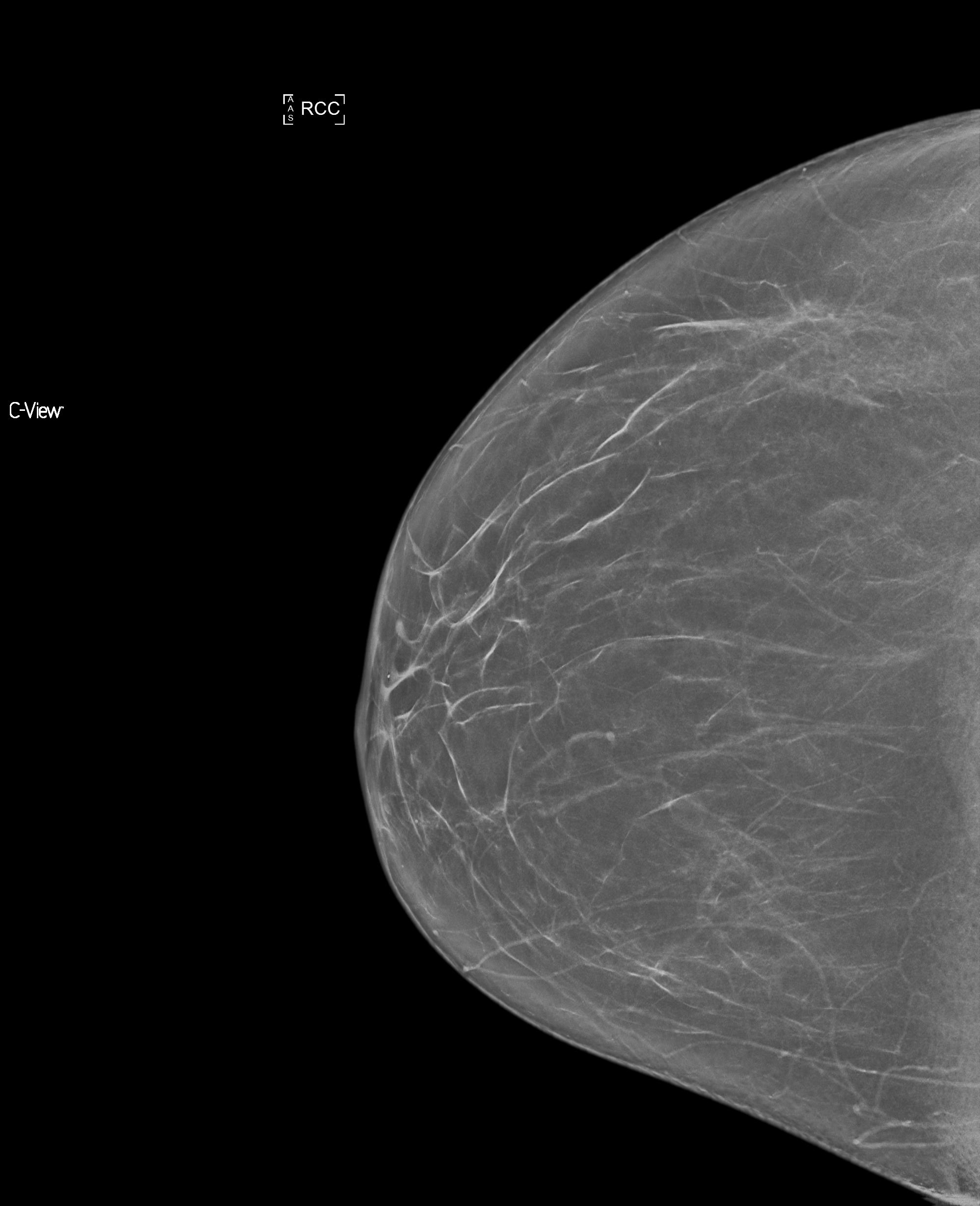

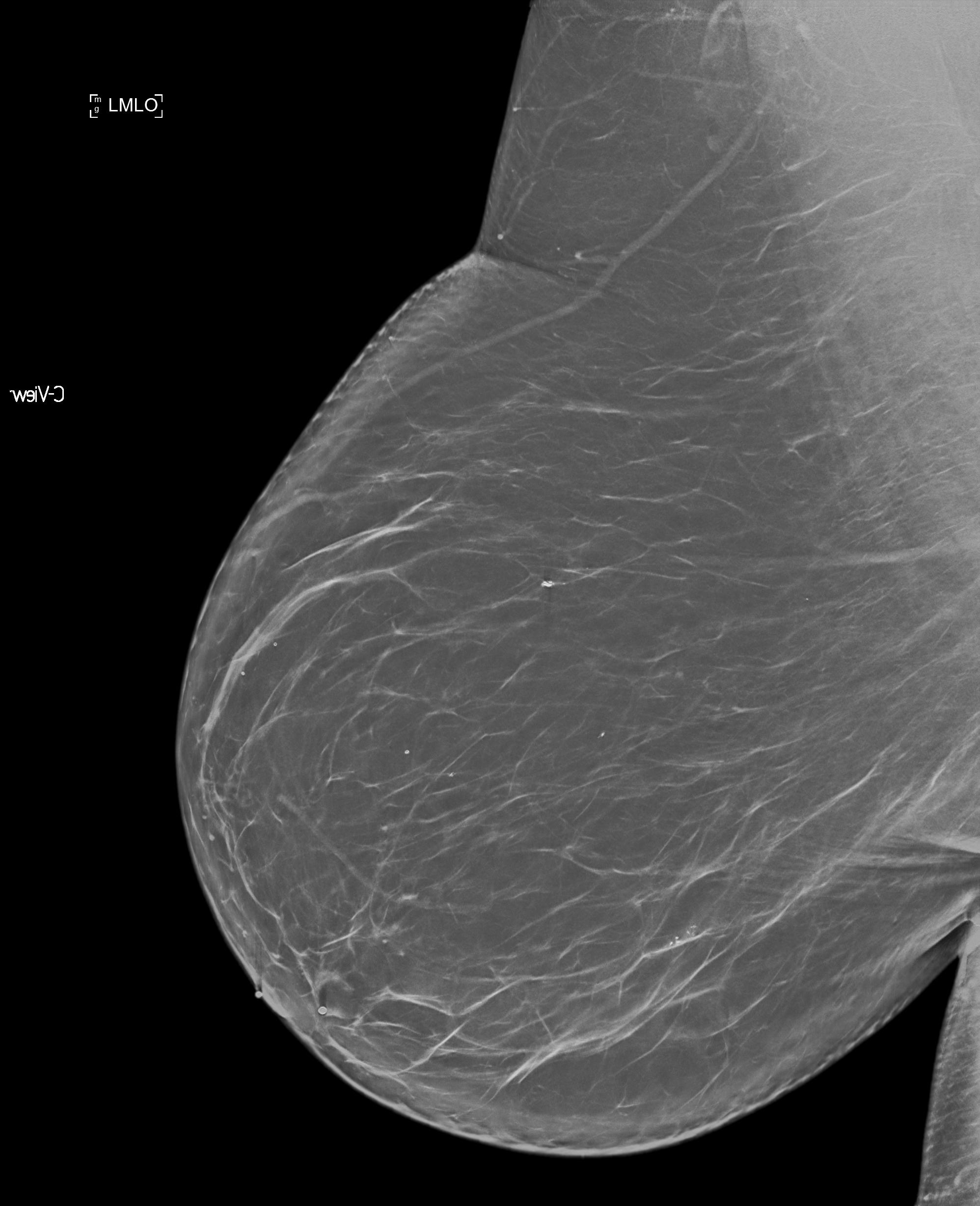

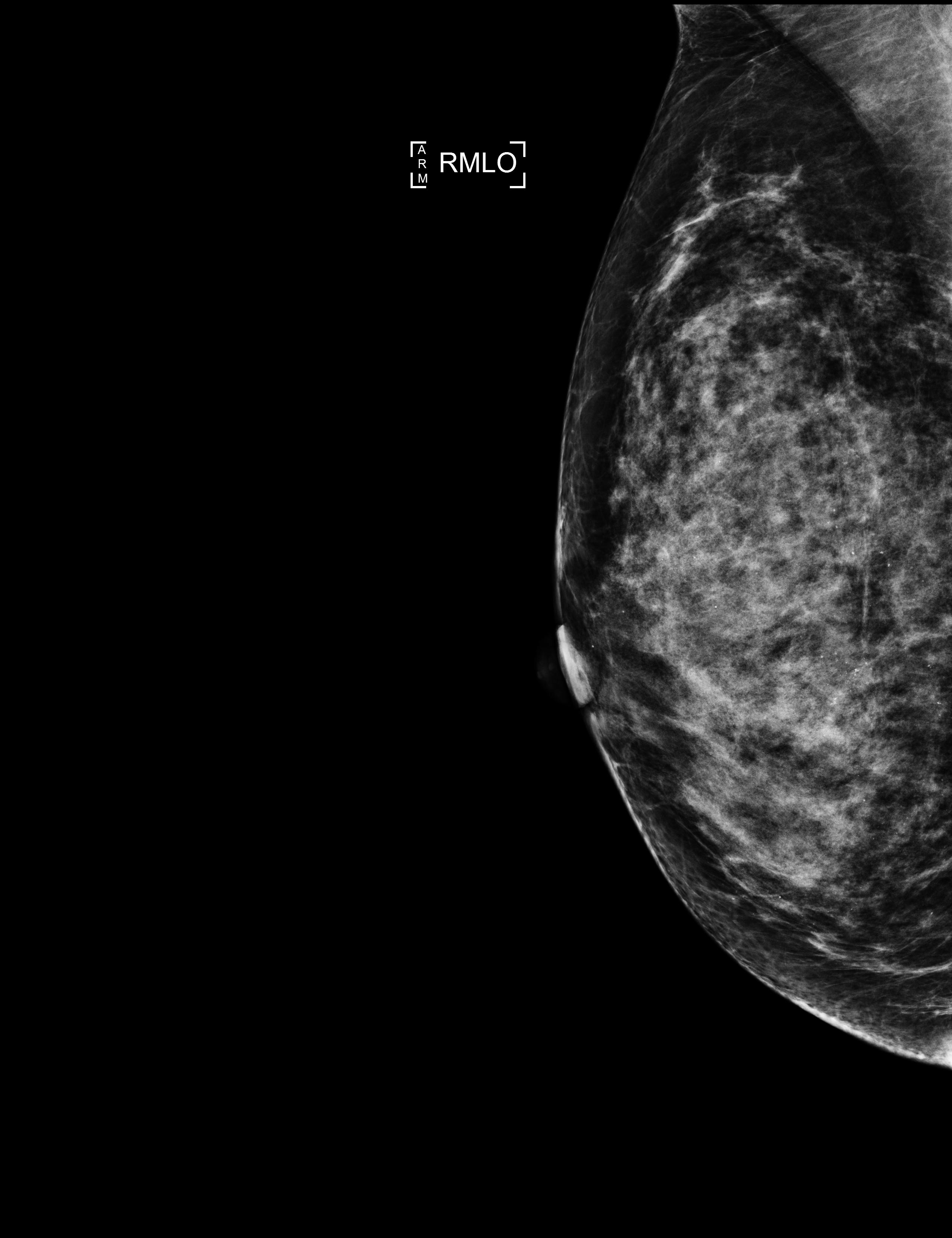

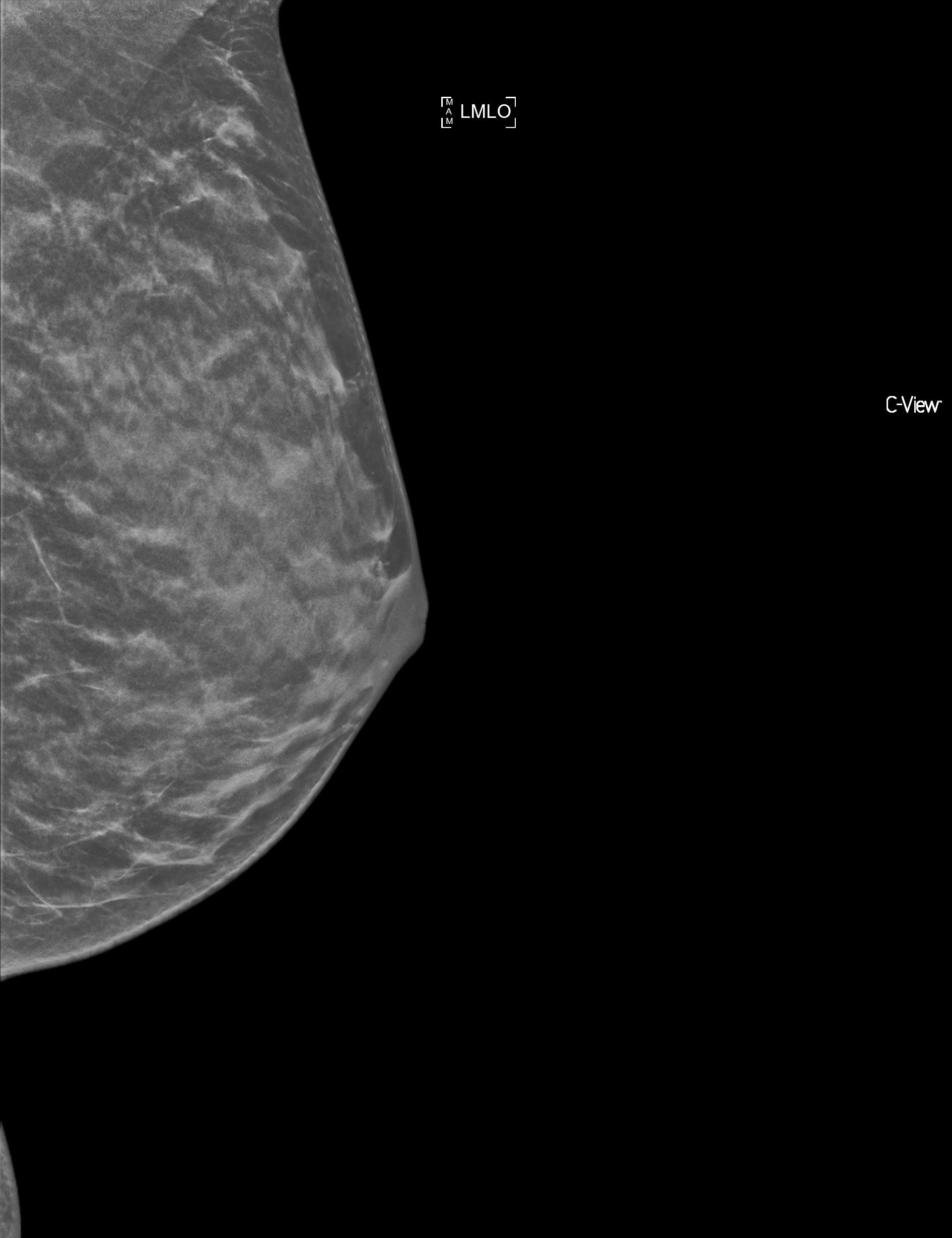

- sample_data/A/sample_A1.jpg +0 -0

- sample_data/A/sample_A2.jpg +0 -0

- sample_data/A/sample_A3.jpg +0 -0

- sample_data/A/sample_A4.jpg +3 -0

- sample_data/B/sample_B1.jpg +0 -0

- sample_data/B/sample_B2.jpg +0 -0

- sample_data/B/sample_B3.jpg +0 -0

- sample_data/B/sample_B4.jpg +0 -0

- sample_data/C/sample_C1.jpg +0 -0

- sample_data/C/sample_C2.jpg +0 -0

- sample_data/C/sample_C3.jpg +0 -0

- sample_data/C/sample_C4.jpg +0 -0

- sample_data/D/sample_D1.jpg +0 -0

- sample_data/D/sample_D2.jpg +0 -0

- sample_data/D/sample_D3.jpg +0 -0

- sample_data/D/sample_D4.jpg +0 -0

- scripts/__init__.py +1 -0

- scripts/createList.py +17 -0

- scripts/create_dataset.py +42 -0

- scripts/script.py +24 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

sample_data/A/sample_A4.jpg filter=lfs diff=lfs merge=lfs -text

|

README.md

ADDED

|

@@ -0,0 +1,49 @@

| 1 |

+

---

|

| 2 |

+

tags:

|

| 3 |

+

- monai

|

| 4 |

+

- medical

|

| 5 |

+

library_name: monai

|

| 6 |

+

license: unknown

|

| 7 |

+

---

|

| 8 |

+

# Description

|

| 9 |

+

A pre-trained model for breast-density classification.

|

| 10 |

+

|

| 11 |

+

# Model Overview

|

| 12 |

+

This model was trained using transfer learning on InceptionV3. The model weights were fine-tuned on Mayo Clinic data. The training procedure and data are described in https://arxiv.org/abs/2202.08238.

|

| 13 |

+

|

| 14 |

+

The bundle does not support TorchScript.

|

| 15 |

+

|

| 16 |

+

# Input and Output Formats

|

| 17 |

+

The input image should have the size [3, 299, 299]. The output is an array with probabilities for each of the four classes.

|

| 18 |

+

|

| 19 |

+

# Sample Data

|

| 20 |

+

The `sample_data` folder contains a few example input images for each category. These images are stored in JPEG format for ease of sharing.

|

| 21 |

+

|

| 22 |

+

# Input and Output Formats

|

| 23 |

+

The input image should have the size [299, 299, 3]. A DICOM image is single-channel, so its channel can be repeated three times.

|

| 24 |

+

The output is an array with probabilities for each of the four classes.

|

| 25 |

+

|

| 26 |

+

# Commands Example

|

| 27 |

+

Create a JSON file listing the names of all the input files by executing the following command:

|

| 28 |

+

```

|

| 29 |

+

python scripts/create_dataset.py -base_dir <path to the bundle root dir>/sample_data -output_file configs/sample_image_data.json

|

| 30 |

+

```

|

| 31 |

+

In `configs/inference.json`, set the `filename` of the `data` field to the absolute path of `sample_image_data.json`.

|

| 32 |

+

|

| 33 |

+

|

| 34 |

+

# Add scripts folder to your python path as follows

|

| 35 |

+

```

|

| 36 |

+

export PYTHONPATH=$PYTHONPATH:<path to the bundle root dir>/scripts

|

| 37 |

+

```

|

| 38 |

+

|

| 39 |

+

# Execute Inference

|

| 40 |

+

Inference can be executed as follows:

|

| 41 |

+

```

|

| 42 |

+

python -m monai.bundle run evaluating --meta_file configs/metadata.json --config_file configs/inference.json configs/logging.conf

|

| 43 |

+

```

|

| 44 |

+

|

| 45 |

+

# Execute training

|

| 46 |

+

Training is a work in progress and will be shared in the next version.

|

| 47 |

+

|

| 48 |

+

# Contributors

|

| 49 |

+

This model is made available by the Center for Augmented Intelligence in Imaging, Mayo Clinic Florida. For questions, email Vikash Gupta (gupta.vikash@mayo.edu).

|
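The dataset-list step described in the README can be sketched as follows. This is a minimal illustration, not the bundle's `scripts/create_dataset.py`: the function names `build_image_list` and `write_image_list`, the top-level `Test` key, and the `label` field are assumptions inferred from the `create_dataset('Test')` call in `configs/inference.json` and the one-hot `label_classes` description in `configs/metadata.json`.

```python
import json
from pathlib import Path

# The four density classes, in one-hot order (assumed from metadata.json).
CLASSES = ["A", "B", "C", "D"]


def build_image_list(base_dir, group="Test"):
    """Collect <base_dir>/<class>/*.jpg into {group: [{image, label}, ...]}."""
    entries = []
    for index, name in enumerate(CLASSES):
        label = [1 if i == index else 0 for i in range(len(CLASSES))]
        # sorted() keeps the output stable across runs and platforms.
        for path in sorted(Path(base_dir, name).glob("*.jpg")):
            entries.append({"image": str(path.resolve()), "label": label})
    return {group: entries}


def write_image_list(base_dir, output_file, group="Test"):
    """Write the list as JSON; a hypothetical stand-in for the bundle script."""
    data = build_image_list(base_dir, group)
    Path(output_file).write_text(json.dumps(data, indent=2))
    return data
```

Calling `write_image_list("sample_data", "configs/sample_image_data.json")` from the bundle root would, under these assumptions, produce a file with absolute image paths and one-hot labels.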

configs/inference.json

ADDED

|

@@ -0,0 +1,116 @@

| 1 |

+

{

|

| 2 |

+

"import": [

|

| 3 |

+

"$import glob",

|

| 4 |

+

"$import os",

|

| 5 |

+

"$import torchvision"

|

| 6 |

+

],

|

| 7 |

+

"bundle_root": ".",

|

| 8 |

+

"model_dir": "$@bundle_root + '/models'",

|

| 9 |

+

"output_dir": "$@bundle_root + '/output'",

|

| 10 |

+

"data": {

|

| 11 |

+

"_target_": "scripts.createList.CreateImageLabelList",

|

| 12 |

+

"filename": "./configs/sample_image_data.json"

|

| 13 |

+

},

|

| 14 |

+

"test_imagelist": "$@data.create_dataset('Test')[0]",

|

| 15 |

+

"test_labellist": "$@data.create_dataset('Test')[1]",

|

| 16 |

+

"dataset": {

|

| 17 |

+

"_target_": "CacheDataset",

|

| 18 |

+

"data": "$[{'image': i, 'label': l} for i, l in zip(@test_imagelist, @test_labellist)]",

|

| 19 |

+

"transform": "@preprocessing",

|

| 20 |

+

"cache_rate": 1,

|

| 21 |

+

"num_workers": 4

|

| 22 |

+

},

|

| 23 |

+

"dataloader": {

|

| 24 |

+

"_target_": "DataLoader",

|

| 25 |

+

"dataset": "@dataset",

|

| 26 |

+

"batch_size": 4,

|

| 27 |

+

"shuffle": false,

|

| 28 |

+

"num_workers": 4

|

| 29 |

+

},

|

| 30 |

+

"device": "$torch.device('cuda:0' if torch.cuda.is_available() else 'cpu')",

|

| 31 |

+

"network_def": {

|

| 32 |

+

"_target_": "TorchVisionFCModel",

|

| 33 |

+

"model_name": "inception_v3",

|

| 34 |

+

"num_classes": 4,

|

| 35 |

+

"pool": null,

|

| 36 |

+

"use_conv": false,

|

| 37 |

+

"bias": true,

|

| 38 |

+

"pretrained": true

|

| 39 |

+

},

|

| 40 |

+

"network": "$@network_def.to(@device)",

|

| 41 |

+

"preprocessing": {

|

| 42 |

+

"_target_": "Compose",

|

| 43 |

+

"transforms": [

|

| 44 |

+

{

|

| 45 |

+

"_target_": "LoadImaged",

|

| 46 |

+

"keys": "image"

|

| 47 |

+

},

|

| 48 |

+

{

|

| 49 |

+

"_target_": "EnsureChannelFirstd",

|

| 50 |

+

"keys": "image",

|

| 51 |

+

"channel_dim": 2

|

| 52 |

+

},

|

| 53 |

+

{

|

| 54 |

+

"_target_": "ScaleIntensityd",

|

| 55 |

+

"keys": "image",

|

| 56 |

+

"minv": 0.0,

|

| 57 |

+

"maxv": 1.0

|

| 58 |

+

},

|

| 59 |

+

{

|

| 60 |

+

"_target_": "Resized",

|

| 61 |

+

"keys": "image",

|

| 62 |

+

"spatial_size": [

|

| 63 |

+

299,

|

| 64 |

+

299

|

| 65 |

+

]

|

| 66 |

+

}

|

| 67 |

+

]

|

| 68 |

+

},

|

| 69 |

+

"inferer": {

|

| 70 |

+

"_target_": "SimpleInferer"

|

| 71 |

+

},

|

| 72 |

+

"postprocessing": {

|

| 73 |

+

"_target_": "Compose",

|

| 74 |

+

"transforms": [

|

| 75 |

+

{

|

| 76 |

+

"_target_": "Activationsd",

|

| 77 |

+

"keys": "pred",

|

| 78 |

+

"sigmoid": true

|

| 79 |

+

}

|

| 80 |

+

]

|

| 81 |

+

},

|

| 82 |

+

"handlers": [

|

| 83 |

+

{

|

| 84 |

+

"_target_": "CheckpointLoader",

|

| 85 |

+

"load_path": "$@model_dir + '/model.pt'",

|

| 86 |

+

"load_dict": {

|

| 87 |

+

"model": "@network"

|

| 88 |

+

}

|

| 89 |

+

},

|

| 90 |

+

{

|

| 91 |

+

"_target_": "StatsHandler",

|

| 92 |

+

"iteration_log": false,

|

| 93 |

+

"output_transform": "$lambda x: None"

|

| 94 |

+

},

|

| 95 |

+

{

|

| 96 |

+

"_target_": "ClassificationSaver",

|

| 97 |

+

"output_dir": "@output_dir",

|

| 98 |

+

"batch_transform": "$monai.handlers.from_engine(['image_meta_dict'])",

|

| 99 |

+

"output_transform": "$monai.handlers.from_engine(['pred'])"

|

| 100 |

+

}

|

| 101 |

+

],

|

| 102 |

+

"evaluator": {

|

| 103 |

+

"_target_": "SupervisedEvaluator",

|

| 104 |

+

"device": "@device",

|

| 105 |

+

"val_data_loader": "@dataloader",

|

| 106 |

+

"network": "@network",

|

| 107 |

+

"inferer": "@inferer",

|

| 108 |

+

"postprocessing": "@postprocessing",

|

| 109 |

+

"val_handlers": "@handlers",

|

| 110 |

+

"amp": true

|

| 111 |

+

},

|

| 112 |

+

"evaluating": [

|

| 113 |

+

"$setattr(torch.backends.cudnn, 'benchmark', True)",

|

| 114 |

+

"$@evaluator.run()"

|

| 115 |

+

]

|

| 116 |

+

}

|
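To illustrate how the `@name` references and `$` expressions in this config chain together, here is a toy resolver. It is not MONAI's `ConfigParser`, only a simplified sketch that handles top-level string keys; `resolve` is a hypothetical helper, and `load_path` is flattened to a top-level key for brevity.

```python
import re


def resolve(config, key, cache=None):
    """Resolve a top-level key, expanding '@name' references and '$' expressions."""
    cache = {} if cache is None else cache
    if key in cache:
        return cache[key]
    value = config[key]
    if isinstance(value, str) and value.startswith("$"):
        # Replace each @name with the repr of its resolved value, then evaluate.
        expr = re.sub(
            r"@(\w+)",
            lambda m: repr(resolve(config, m.group(1), cache)),
            value[1:],
        )
        value = eval(expr)  # acceptable only for a trusted toy config
    elif isinstance(value, str) and value.startswith("@"):
        value = resolve(config, value[1:], cache)
    cache[key] = value
    return value


config = {
    "bundle_root": ".",
    "model_dir": "$@bundle_root + '/models'",
    "output_dir": "$@bundle_root + '/output'",
    "load_path": "$@model_dir + '/model.pt'",
}

print(resolve(config, "load_path"))  # ./models/model.pt
```

Because every path derives from `bundle_root`, overriding that single key is enough to relocate the model and output directories.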

configs/logging.conf

ADDED

|

@@ -0,0 +1,21 @@

| 1 |

+

[loggers]

|

| 2 |

+

keys=root

|

| 3 |

+

|

| 4 |

+

[handlers]

|

| 5 |

+

keys=consoleHandler

|

| 6 |

+

|

| 7 |

+

[formatters]

|

| 8 |

+

keys=fullFormatter

|

| 9 |

+

|

| 10 |

+

[logger_root]

|

| 11 |

+

level=INFO

|

| 12 |

+

handlers=consoleHandler

|

| 13 |

+

|

| 14 |

+

[handler_consoleHandler]

|

| 15 |

+

class=StreamHandler

|

| 16 |

+

level=INFO

|

| 17 |

+

formatter=fullFormatter

|

| 18 |

+

args=(sys.stdout,)

|

| 19 |

+

|

| 20 |

+

[formatter_fullFormatter]

|

| 21 |

+

format=%(asctime)s - %(name)s - %(levelname)s - %(message)s

|
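A file in this format can be loaded with the standard library's `logging.config.fileConfig`. The sketch below embeds the same settings in a string so that it is self-contained (an assumption made for the example); the logger name `bundle_demo` is hypothetical.

```python
import io
import logging
import logging.config

LOGGING_CONF = """
[loggers]
keys=root

[handlers]
keys=consoleHandler

[formatters]
keys=fullFormatter

[logger_root]
level=INFO
handlers=consoleHandler

[handler_consoleHandler]
class=StreamHandler
level=INFO
formatter=fullFormatter
args=(sys.stdout,)

[formatter_fullFormatter]
format=%(asctime)s - %(name)s - %(levelname)s - %(message)s
"""

# fileConfig accepts a filename or a file-like object.
logging.config.fileConfig(io.StringIO(LOGGING_CONF), disable_existing_loggers=False)

logger = logging.getLogger("bundle_demo")
logger.info("logging configured")  # emitted: INFO meets the root level
logger.debug("not shown")          # dropped: DEBUG is below INFO
```

With this configuration every message at INFO or above goes to standard output with a timestamp, logger name, and level.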

configs/metadata.json

ADDED

|

@@ -0,0 +1,75 @@

| 1 |

+

{

|

| 2 |

+

"schema": "https://github.com/Project-MONAI/MONAI-extra-test-data/releases/download/0.8.1/meta_schema_20220324.json",

|

| 3 |

+

"changelog": {

|

| 4 |

+

"0.1.0": "complete the model package"

|

| 5 |

+

},

|

| 6 |

+

"version": "0.1.0",

|

| 7 |

+

"monai_version": "1.0.0",

|

| 8 |

+

"pytorch_version": "1.12.1",

|

| 9 |

+

"numpy_version": "1.21.2",

|

| 10 |

+

"optional_packages_version": {

|

| 11 |

+

"torchvision": "0.13.1"

|

| 12 |

+

},

|

| 13 |

+

"task": "Breast Density Classification",

|

| 14 |

+

"description": "A pre-trained model for classifying breast images (mammograms) ",

|

| 15 |

+

"authors": "Center for Augmented Intelligence in Imaging, Mayo Clinic Florida",

|

| 16 |

+

"copyright": "Copyright (c) Mayo Clinic",

|

| 17 |

+

"data_source": "Mayo Clinic ",

|

| 18 |

+

"data_type": "Jpeg",

|

| 19 |

+

"image_classes": "three channel data, intensity scaled to [0, 1]. A single grayscale is copied to 3 channels",

|

| 20 |

+

"label_classes": "four classes marked as [1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 1, 0] and [0, 0, 0, 1] for the classes A, B, C and D respectively.",

|

| 21 |

+

"pred_classes": "One hot data",

|

| 22 |

+

"eval_metrics": {

|

| 23 |

+

"accuracy": 0.96

|

| 24 |

+

},

|

| 25 |

+

"intended_use": "This is an example, not to be used for diagnostic purposes",

|

| 26 |

+

"references": [

|

| 27 |

+

"Gupta, Vikash, et al. A multi-reconstruction study of breast density estimation using Deep Learning. arXiv preprint arXiv:2202.08238 (2022)."

|

| 28 |

+

],

|

| 29 |

+

"network_data_format": {

|

| 30 |

+

"inputs": {

|

| 31 |

+

"image": {

|

| 32 |

+

"type": "image",

|

| 33 |

+

"format": "magnitude",

|

| 34 |

+

"modality": "Mammogram",

|

| 35 |

+

"num_channels": 3,

|

| 36 |

+

"spatial_shape": [

|

| 37 |

+

299,

|

| 38 |

+

299

|

| 39 |

+

],

|

| 40 |

+

"dtype": "float32",

|

| 41 |

+

"value_range": [

|

| 42 |

+

0,

|

| 43 |

+

1

|

| 44 |

+

],

|

| 45 |

+

"is_patch_data": false,

|

| 46 |

+

"channel_def": {

|

| 47 |

+

"0": "image"

|

| 48 |

+

}

|

| 49 |

+

}

|

| 50 |

+

},

|

| 51 |

+

"outputs": {

|

| 52 |

+

"pred": {

|

| 53 |

+

"type": "image",

|

| 54 |

+

"format": "labels",

|

| 55 |

+

"dtype": "float32",

|

| 56 |

+

"value_range": [

|

| 57 |

+

0,

|

| 58 |

+

1

|

| 59 |

+

],

|

| 60 |

+

"num_channels": 4,

|

| 61 |

+

"spatial_shape": [

|

| 62 |

+

1,

|

| 63 |

+

4

|

| 64 |

+

],

|

| 65 |

+

"is_patch_data": false,

|

| 66 |

+

"channel_def": {

|

| 67 |

+

"0": "A",

|

| 68 |

+

"1": "B",

|

| 69 |

+

"2": "C",

|

| 70 |

+

"3": "D"

|

| 71 |

+

}

|

| 72 |

+

}

|

| 73 |

+

}

|

| 74 |

+

}

|

| 75 |

+

}

|
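To connect the output `channel_def` with what the model returns: `configs/inference.json` applies a sigmoid to each of the four outputs, so the scores are independent values in [0, 1] and need not sum to 1. A minimal sketch of mapping such a score vector to a density class follows; the logits are made-up illustrative numbers and `predict_class` is a hypothetical helper, not part of the bundle.

```python
import math

# Channel index to density class, as listed in the metadata channel_def.
CHANNEL_DEF = {0: "A", 1: "B", 2: "C", 3: "D"}


def sigmoid(x):
    """Logistic function for a single float."""
    return 1.0 / (1.0 + math.exp(-x))


def predict_class(logits):
    """Apply a sigmoid per channel and return (class name, scores)."""
    scores = [sigmoid(v) for v in logits]
    best = max(range(len(scores)), key=scores.__getitem__)
    return CHANNEL_DEF[best], scores


label, scores = predict_class([-2.0, 0.5, 3.0, -1.0])  # illustrative logits
print(label, [round(s, 3) for s in scores])
```

Taking the index of the largest score is one simple way to pick a class; if calibrated probabilities across the four classes are needed, a softmax would be the usual alternative.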

docs/README.md

ADDED

|

@@ -0,0 +1,42 @@

| 1 |

+

# Description

|

| 2 |

+

A pre-trained model for breast-density classification.

|

| 3 |

+

|

| 4 |

+

# Model Overview

|

| 5 |

+

This model was trained using transfer learning on InceptionV3. The model weights were fine-tuned on Mayo Clinic data. The training procedure and data are described in https://arxiv.org/abs/2202.08238.

|

| 6 |

+

|

| 7 |

+

The bundle does not support TorchScript.

|

| 8 |

+

|

| 9 |

+

# Input and Output Formats

|

| 10 |

+

The input image should have the size [3, 299, 299]. The output is an array with probabilities for each of the four classes.

|

| 11 |

+

|

| 12 |

+

# Sample Data

|

| 13 |

+

The `sample_data` folder contains a few example input images for each category. These images are stored in JPEG format for ease of sharing.

|

| 14 |

+

|

| 15 |

+

# Input and Output Formats

|

| 16 |

+

The input image should have the size [299, 299, 3]. A DICOM image is single-channel, so its channel can be repeated three times.

|

| 17 |

+

The output is an array with probabilities for each of the four classes.

|

| 18 |

+

|

| 19 |

+

# Commands Example

|

| 20 |

+

Create a JSON file listing the names of all the input files by executing the following command:

|

| 21 |

+

```

|

| 22 |

+

python scripts/create_dataset.py -base_dir <path to the bundle root dir>/sample_data -output_file configs/sample_image_data.json

|

| 23 |

+

```

|

| 24 |

+

In `configs/inference.json`, set the `filename` of the `data` field to the absolute path of `sample_image_data.json`.

|

| 25 |

+

|

| 26 |

+

|

| 27 |

+

# Add scripts folder to your python path as follows

|

| 28 |

+

```

|

| 29 |

+

export PYTHONPATH=$PYTHONPATH:<path to the bundle root dir>/scripts

|

| 30 |

+

```

|

| 31 |

+

|

| 32 |

+

# Execute Inference

|

| 33 |

+

Inference can be executed as follows:

|

| 34 |

+

```

|

| 35 |

+

python -m monai.bundle run evaluating --meta_file configs/metadata.json --config_file configs/inference.json configs/logging.conf

|

| 36 |

+

```

|

| 37 |

+

|

| 38 |

+

# Execute training

|

| 39 |

+

Training is a work in progress and will be shared in the next version.

|

| 40 |

+

|

| 41 |

+

# Contributors

|

| 42 |

+

This model is made available by the Center for Augmented Intelligence in Imaging, Mayo Clinic Florida. For questions, email Vikash Gupta (gupta.vikash@mayo.edu).

|

docs/license.txt

ADDED

|

@@ -0,0 +1,201 @@

| 1 |

+

Apache License

|

| 2 |

+

Version 2.0, January 2004

|

| 3 |

+

http://www.apache.org/licenses/

|

| 4 |

+

|

| 5 |

+

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

|

| 6 |

+

|

| 7 |

+

1. Definitions.

|

| 8 |

+

|

| 9 |

+

"License" shall mean the terms and conditions for use, reproduction,

|

| 10 |

+

and distribution as defined by Sections 1 through 9 of this document.

|

| 11 |

+

|

| 12 |

+

"Licensor" shall mean the copyright owner or entity authorized by

|

| 13 |

+

the copyright owner that is granting the License.

|

| 14 |

+

|

| 15 |

+

"Legal Entity" shall mean the union of the acting entity and all

|

| 16 |

+

other entities that control, are controlled by, or are under common

|

| 17 |

+

control with that entity. For the purposes of this definition,

|

| 18 |

+

"control" means (i) the power, direct or indirect, to cause the

|

| 19 |

+

direction or management of such entity, whether by contract or

|

| 20 |

+

otherwise, or (ii) ownership of fifty percent (50%) or more of the

|

| 21 |

+

outstanding shares, or (iii) beneficial ownership of such entity.

|

| 22 |

+

|

| 23 |

+

"You" (or "Your") shall mean an individual or Legal Entity

|

| 24 |

+

exercising permissions granted by this License.

|

| 25 |

+

|

| 26 |

+

"Source" form shall mean the preferred form for making modifications,

|

| 27 |

+

including but not limited to software source code, documentation

|

| 28 |

+

source, and configuration files.

|

| 29 |

+

|

| 30 |

+

"Object" form shall mean any form resulting from mechanical

|

| 31 |

+

transformation or translation of a Source form, including but

|

| 32 |

+

not limited to compiled object code, generated documentation,

|

| 33 |

+

and conversions to other media types.

|

| 34 |

+

|

| 35 |

+

"Work" shall mean the work of authorship, whether in Source or

|

| 36 |

+

Object form, made available under the License, as indicated by a

|

| 37 |

+

copyright notice that is included in or attached to the work

|

| 38 |

+

(an example is provided in the Appendix below).

|

| 39 |

+

|

| 40 |

+

"Derivative Works" shall mean any work, whether in Source or Object

|

| 41 |

+

form, that is based on (or derived from) the Work and for which the

|

| 42 |

+

editorial revisions, annotations, elaborations, or other modifications

|

| 43 |

+

represent, as a whole, an original work of authorship. For the purposes

|

| 44 |

+

of this License, Derivative Works shall not include works that remain

|

| 45 |

+

separable from, or merely link (or bind by name) to the interfaces of,

|

| 46 |

+

the Work and Derivative Works thereof.

|

| 47 |

+

|

| 48 |

+

"Contribution" shall mean any work of authorship, including

|

| 49 |

+

the original version of the Work and any modifications or additions

|

| 50 |

+

to that Work or Derivative Works thereof, that is intentionally

|

| 51 |

+

submitted to Licensor for inclusion in the Work by the copyright owner

|

| 52 |

+

or by an individual or Legal Entity authorized to submit on behalf of

|

| 53 |

+

the copyright owner. For the purposes of this definition, "submitted"

|

| 54 |

+

means any form of electronic, verbal, or written communication sent

|

| 55 |

+

to the Licensor or its representatives, including but not limited to

|

| 56 |

+

communication on electronic mailing lists, source code control systems,

|

| 57 |

+

and issue tracking systems that are managed by, or on behalf of, the

|

| 58 |

+

Licensor for the purpose of discussing and improving the Work, but

|

| 59 |

+

excluding communication that is conspicuously marked or otherwise

|

| 60 |

+

designated in writing by the copyright owner as "Not a Contribution."

|

| 61 |

+

|

| 62 |

+

"Contributor" shall mean Licensor and any individual or Legal Entity

|

| 63 |

+

on behalf of whom a Contribution has been received by Licensor and

|

| 64 |

+

subsequently incorporated within the Work.

|

| 65 |

+

|

| 66 |

+

2. Grant of Copyright License. Subject to the terms and conditions of

|

| 67 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 68 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 69 |

+

copyright license to reproduce, prepare Derivative Works of,

|

| 70 |

+

publicly display, publicly perform, sublicense, and distribute the

|

| 71 |

+

Work and such Derivative Works in Source or Object form.

|

| 72 |

+

|

| 73 |

+

3. Grant of Patent License. Subject to the terms and conditions of

|

| 74 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 75 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 76 |

+

(except as stated in this section) patent license to make, have made,

|

| 77 |

+

use, offer to sell, sell, import, and otherwise transfer the Work,

|

| 78 |

+

where such license applies only to those patent claims licensable

|

| 79 |

+

by such Contributor that are necessarily infringed by their

|

| 80 |

+

Contribution(s) alone or by combination of their Contribution(s)

|

| 81 |

+

with the Work to which such Contribution(s) was submitted. If You

|

| 82 |

+

institute patent litigation against any entity (including a

|

| 83 |

+

cross-claim or counterclaim in a lawsuit) alleging that the Work

|

| 84 |

+

or a Contribution incorporated within the Work constitutes direct

|

| 85 |

+

or contributory patent infringement, then any patent licenses

|

| 86 |

+

granted to You under this License for that Work shall terminate

|

| 87 |

+

as of the date such litigation is filed.

|

| 88 |

+

|

| 89 |

+

4. Redistribution. You may reproduce and distribute copies of the

|

| 90 |

+

Work or Derivative Works thereof in any medium, with or without

|

| 91 |

+

modifications, and in Source or Object form, provided that You

|

| 92 |

+

meet the following conditions:

|

| 93 |

+

|

| 94 |

+

(a) You must give any other recipients of the Work or

|

| 95 |

+

Derivative Works a copy of this License; and

|

| 96 |

+

|

| 97 |

+

(b) You must cause any modified files to carry prominent notices

|

| 98 |

+

stating that You changed the files; and

|

| 99 |

+

|

| 100 |

+

(c) You must retain, in the Source form of any Derivative Works

|

| 101 |

+

that You distribute, all copyright, patent, trademark, and

|

| 102 |

+

attribution notices from the Source form of the Work,

|

| 103 |

+

excluding those notices that do not pertain to any part of

|

| 104 |

+

the Derivative Works; and

|

| 105 |

+

|

| 106 |

+

(d) If the Work includes a "NOTICE" text file as part of its

|

| 107 |

+

distribution, then any Derivative Works that You distribute must

|

| 108 |

+

include a readable copy of the attribution notices contained

|

| 109 |

+

within such NOTICE file, excluding those notices that do not

|

| 110 |

+

pertain to any part of the Derivative Works, in at least one

|

| 111 |

+

of the following places: within a NOTICE text file distributed

|

| 112 |

+

as part of the Derivative Works; within the Source form or

|

| 113 |

+

documentation, if provided along with the Derivative Works; or,

|

| 114 |

+

within a display generated by the Derivative Works, if and

|

| 115 |

+

wherever such third-party notices normally appear. The contents

|

| 116 |

+

of the NOTICE file are for informational purposes only and

|

| 117 |

+

do not modify the License. You may add Your own attribution

|

| 118 |

+

notices within Derivative Works that You distribute, alongside

|

| 119 |

+

or as an addendum to the NOTICE text from the Work, provided

|

| 120 |

+

that such additional attribution notices cannot be construed

|

| 121 |

+

as modifying the License.

|

| 122 |

+

|

| 123 |

+

You may add Your own copyright statement to Your modifications and

|

| 124 |

+

may provide additional or different license terms and conditions

|

| 125 |

+

for use, reproduction, or distribution of Your modifications, or

|

| 126 |

+

for any such Derivative Works as a whole, provided Your use,

|

| 127 |

+

reproduction, and distribution of the Work otherwise complies with

|

| 128 |

+

the conditions stated in this License.

|

| 129 |

+

|

| 130 |

+

5. Submission of Contributions. Unless You explicitly state otherwise,

|

| 131 |

+

any Contribution intentionally submitted for inclusion in the Work

|

| 132 |

+

by You to the Licensor shall be under the terms and conditions of

|

| 133 |

+

this License, without any additional terms or conditions.

|

| 134 |

+

Notwithstanding the above, nothing herein shall supersede or modify

|

| 135 |

+

the terms of any separate license agreement you may have executed

|

| 136 |

+

with Licensor regarding such Contributions.

|

| 137 |

+

|

| 138 |

+

6. Trademarks. This License does not grant permission to use the trade

|

| 139 |

+

names, trademarks, service marks, or product names of the Licensor,

|

| 140 |

+

except as required for reasonable and customary use in describing the

|

| 141 |

+

origin of the Work and reproducing the content of the NOTICE file.

|

| 142 |

+

|

| 143 |

+

7. Disclaimer of Warranty. Unless required by applicable law or

|

| 144 |

+

agreed to in writing, Licensor provides the Work (and each

|

| 145 |

+

Contributor provides its Contributions) on an "AS IS" BASIS,

|

| 146 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

|

| 147 |

+

implied, including, without limitation, any warranties or conditions

|

| 148 |

+

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

|

| 149 |

+

PARTICULAR PURPOSE. You are solely responsible for determining the

|

| 150 |

+

appropriateness of using or redistributing the Work and assume any

|

| 151 |

+

risks associated with Your exercise of permissions under this License.

|

| 152 |

+

|

| 153 |

+

8. Limitation of Liability. In no event and under no legal theory,

|

| 154 |

+

whether in tort (including negligence), contract, or otherwise,

|

| 155 |

+

unless required by applicable law (such as deliberate and grossly

|

| 156 |

+

negligent acts) or agreed to in writing, shall any Contributor be

|

| 157 |

+

liable to You for damages, including any direct, indirect, special,

|

| 158 |

+

incidental, or consequential damages of any character arising as a

|

| 159 |

+

result of this License or out of the use or inability to use the

|

| 160 |

+

Work (including but not limited to damages for loss of goodwill,

|

| 161 |

+

work stoppage, computer failure or malfunction, or any and all

|

| 162 |

+

other commercial damages or losses), even if such Contributor

|

| 163 |

+

has been advised of the possibility of such damages.

|

| 164 |

+

|

| 165 |

+

9. Accepting Warranty or Additional Liability. While redistributing

|

| 166 |

+

the Work or Derivative Works thereof, You may choose to offer,

+and charge a fee for, acceptance of support, warranty, indemnity,
+or other liability obligations and/or rights consistent with this
+License. However, in accepting such obligations, You may act only
+on Your own behalf and on Your sole responsibility, not on behalf
+of any other Contributor, and only if You agree to indemnify,
+defend, and hold each Contributor harmless for any liability
+incurred by, or claims asserted against, such Contributor by reason
+of your accepting any such warranty or additional liability.
+
+END OF TERMS AND CONDITIONS
+
+APPENDIX: How to apply the Apache License to your work.
+
+To apply the Apache License to your work, attach the following
+boilerplate notice, with the fields enclosed by brackets "[]"
+replaced with your own identifying information. (Don't include
+the brackets!) The text should be enclosed in the appropriate
+comment syntax for the file format. We also recommend that a
+file or class name and description of purpose be included on the
+same "printed page" as the copyright notice for easier
+identification within third-party archives.
+
+Copyright 2022 Vikash Gupta
+
+Licensed under the Apache License, Version 2.0 (the "License");
+you may not use this file except in compliance with the License.
+You may obtain a copy of the License at
+
+http://www.apache.org/licenses/LICENSE-2.0
+
+Unless required by applicable law or agreed to in writing, software
+distributed under the License is distributed on an "AS IS" BASIS,
+WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
+See the License for the specific language governing permissions and
+limitations under the License.

models/model.pt

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:710a49172243ea9972bec5dc717382c294ed77310596b6ad0df9bb83f7ceafce
+size 100826617

sample_data/A/sample_A1.jpg

ADDED

sample_data/A/sample_A2.jpg

ADDED

sample_data/A/sample_A3.jpg

ADDED

sample_data/A/sample_A4.jpg

ADDED (Git LFS)

sample_data/B/sample_B1.jpg

ADDED

sample_data/B/sample_B2.jpg

ADDED

sample_data/B/sample_B3.jpg

ADDED

sample_data/B/sample_B4.jpg

ADDED

sample_data/C/sample_C1.jpg

ADDED

sample_data/C/sample_C2.jpg

ADDED

sample_data/C/sample_C3.jpg

ADDED

sample_data/C/sample_C4.jpg

ADDED

sample_data/D/sample_D1.jpg

ADDED

sample_data/D/sample_D2.jpg

ADDED

sample_data/D/sample_D3.jpg

ADDED

sample_data/D/sample_D4.jpg

ADDED

scripts/__init__.py

ADDED

@@ -0,0 +1 @@
+from .createList import CreateImageLabelList

scripts/createList.py

ADDED

@@ -0,0 +1,17 @@
+import json
+
+
+class CreateImageLabelList:
+    def __init__(self, filename):
+        self.filename = filename
+        with open(self.filename, "r") as fid:  # close the file once parsed
+            self.json_dict = json.load(fid)
+
+    def create_dataset(self, grp):
+        image_list = []
+        label_list = []
+        image_label_list = self.json_dict[grp]  # records under the group key, e.g. "Test"
+        for entry in image_label_list:
+            image_list.append(entry["image"])
+            label_list.append(entry["label"])
+        return image_list, label_list
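A minimal usage sketch for the class above, assuming a datalist JSON of the shape that scripts/create_dataset.py writes (a top-level "Test" key holding image/label records). The class is re-declared here only so the snippet runs on its own; the temporary file and the two example records are hypothetical, not part of the commit.

```python
import json
import os
import tempfile


class CreateImageLabelList:
    """Stand-in copy of the class from scripts/createList.py."""

    def __init__(self, filename):
        self.filename = filename
        with open(self.filename, "r") as fid:
            self.json_dict = json.load(fid)

    def create_dataset(self, grp):
        entries = self.json_dict[grp]
        image_list = [entry["image"] for entry in entries]
        label_list = [entry["label"] for entry in entries]
        return image_list, label_list


# Hypothetical datalist with two records (assumption, for illustration only)
datalist = {
    "Test": [
        {"image": "sample_data/A/sample_A1.jpg", "label": [1, 0, 0, 0]},
        {"image": "sample_data/B/sample_B1.jpg", "label": [0, 1, 0, 0]},
    ]
}

with tempfile.TemporaryDirectory() as tmp_dir:
    json_path = os.path.join(tmp_dir, "sample_image_data.json")
    with open(json_path, "w") as fid:
        json.dump(datalist, fid, indent=1)

    # Split the records of the "Test" group into parallel lists
    images, labels = CreateImageLabelList(json_path).create_dataset("Test")

print(images)
print(labels)
```

The two returned lists stay index-aligned, so `images[i]` always pairs with `labels[i]`.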

scripts/create_dataset.py

ADDED

@@ -0,0 +1,42 @@
+import argparse
+import json
+import os
+import sys
+
+
+def main(base_dir: str, output_file: str):
+
+    list_classes = ["A", "B", "C", "D"]
+
+    output_list = []
+    for _class in list_classes:
+        data_dir = os.path.join(base_dir, _class)
+        list_files = os.listdir(data_dir)
+        if _class == "A":
+            _label = [1, 0, 0, 0]
+        elif _class == "B":
+            _label = [0, 1, 0, 0]
+        elif _class == "C":
+            _label = [0, 0, 1, 0]
+        elif _class == "D":
+            _label = [0, 0, 0, 1]
+
+        for _file in list_files:
+            _out = {"image": os.path.join(data_dir, _file), "label": _label}
+            output_list.append(_out)
+
+    data_dict = {"Test": output_list}
+
+    with open(output_file, "w") as fid:  # close the file once written
+        json.dump(data_dict, fid, indent=1)
+
+
+if __name__ == "__main__":
+    parser = argparse.ArgumentParser(description="")
+
+    parser.add_argument("-base_dir", "--base_dir", default="sample_data", help="dir of dataset")
+    parser.add_argument(
+        "-output_file", "--output_file", default="configs/sample_image_data.json", help="output file name"
+    )
+    parser_args, _ = parser.parse_known_args(sys.argv)
+    main(base_dir=parser_args.base_dir, output_file=parser_args.output_file)
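The if/elif chain in this script amounts to a one-hot encoding of the class position in `list_classes`. A compact sketch of the same mapping follows; the helper name `one_hot_label` and the `CLASSES` constant are hypothetical and do not appear in the commit.

```python
CLASSES = ["A", "B", "C", "D"]


def one_hot_label(class_name, classes=CLASSES):
    """Return a one-hot list with a 1 at the position of class_name."""
    index = classes.index(class_name)  # raises ValueError for unknown classes
    return [1 if i == index else 0 for i in range(len(classes))]


# Build the label for every class, matching the values hard-coded in the script
labels = {name: one_hot_label(name) for name in CLASSES}
print(labels)
```

Deriving the label from the list position means adding a class only requires extending `CLASSES`, and an unknown class name fails loudly instead of reusing the previous label.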

scripts/script.py

ADDED

@@ -0,0 +1,24 @@
+import os
+
+from monai.bundle.config_parser import ConfigParser
+
+os.environ["CUDA_DEVICE_ORDER"] = "PCI_BUS_ID"
+os.environ["CUDA_VISIBLE_DEVICES"] = "0"
+
+parser = ConfigParser()
+parser.read_config("../configs/inference.json")
+data = parser.get_parsed_content("data")
+device = parser.get_parsed_content("device")
+network = parser.get_parsed_content("network_def")
+
+inference = parser.get_parsed_content("evaluator")
+inference.run()
+
+print(type(network))
+
+
+datalist = parser.get_parsed_content("test_imagelist")
+print(datalist)
+
+inference = parser.get_parsed_content("evaluator")
+inference.run()