Commit d7893f9

Parent(s): 0d7cee1

Deploy the App

- app.py +34 -0
- images/UI input.png +0 -0
- images/UI.png +0 -0
- images/input.png +0 -0
- models/mobilenet.pt +3 -0

app.py

ADDED

```python
from pathlib import Path

import gradio as gr
import torch
from torchvision import transforms

# Resolve paths relative to this file; app.py sits at the repository root,
# next to the models/ directory.
BASE_DIR = Path(__file__).resolve().parent

# Load the TorchScript MobileNet on CPU and switch it to inference mode.
model = torch.jit.load(str(BASE_DIR / "models" / "mobilenet.pt"), map_location="cpu")
model.eval()

# Deterministic inference preprocessing: resize, then center-crop to the
# 224x224 input size. Random crops and flips are training-time augmentations
# and would make the prediction change between calls on the same image.
transform = transforms.Compose([
    transforms.Resize(256),
    transforms.CenterCrop(224),
    transforms.ToTensor(),
    # ImageNet per-channel means and standard deviations.
    transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225]),
])

CLASSES = ["Ak", "Ala_Idris", "Buzgulu", "Dimnit", "Nazli"]


def classify_image(inp):
    if inp is None:
        return "No image provided"
    # PNG uploads may be RGBA or grayscale; the model expects 3 channels.
    batch = transform(inp.convert("RGB")).unsqueeze(0)  # shape (1, 3, 224, 224)
    with torch.no_grad():  # gradients are not needed for inference
        out = model(batch)
    return CLASSES[out.argmax(dim=1).item()]


iface = gr.Interface(
    fn=classify_image,
    inputs=gr.Image(type="pil", label="Input Image"),
    outputs="text",
    # One inner list per example, one entry per input component.
    # The example images are expected under data/app_data/ next to app.py.
    examples=[
        [str(BASE_DIR / "data" / "app_data" / "ak.png")],
        [str(BASE_DIR / "data" / "app_data" / "idris.png")],
        [str(BASE_DIR / "data" / "app_data" / "buzgulu.png")],
        [str(BASE_DIR / "data" / "app_data" / "dimnit.png")],
        [str(BASE_DIR / "data" / "app_data" / "nazli.png")],
    ],
)

iface.launch()
```
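For reference, `ToTensor` followed by `Normalize` maps each 8-bit channel value `v` to `(v / 255 - mean) / std`, using the ImageNet statistics listed in app.py. The pure-Python sketch below reproduces that per-pixel arithmetic; the helper `normalize_pixel` is hypothetical and not part of the app.

```python
# ImageNet statistics, as passed to transforms.Normalize in app.py.
MEAN = (0.485, 0.456, 0.406)
STD = (0.229, 0.224, 0.225)


def normalize_pixel(rgb):
    """Normalize one RGB pixel the way ToTensor + Normalize would.

    `rgb` holds 8-bit channel values (0-255). ToTensor scales them to
    [0, 1]; Normalize then computes (x - mean) / std per channel.
    """
    return tuple((v / 255.0 - m) / s for v, m, s in zip(rgb, MEAN, STD))


# A pure white pixel ends up a little more than two standard deviations
# above the ImageNet mean in every channel.
print([round(c, 3) for c in normalize_pixel((255, 255, 255))])  # [2.249, 2.429, 2.64]
```

Because the statistics are fixed constants, the same image always produces the same tensor, which is why only the crop and flip steps needed to become deterministic for inference.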

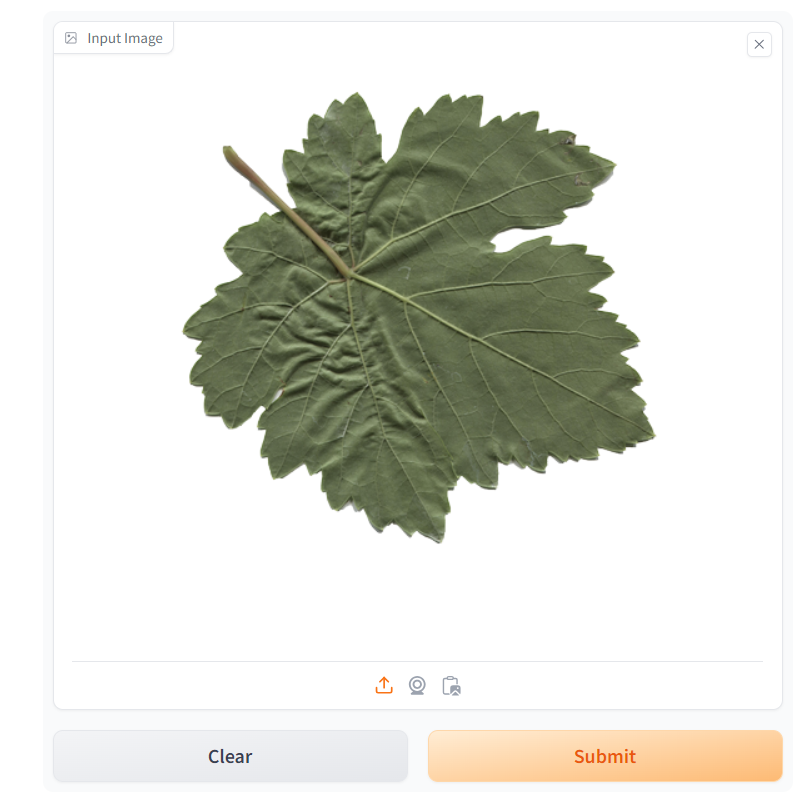
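`classify_image` returns only the top label via `argmax`. If per-class scores were wanted, one option is a softmax over the model's outputs, giving a `{class: probability}` dict of the kind Gradio's `gr.Label` output can display. The sketch below is pure Python and assumes the model emits raw logits (app.py does not show whether the MobileNet head already applies a softmax; `argmax` picks the same class either way). `logits_to_confidences`, `top_label`, and the example logits are hypothetical.

```python
import math

CLASSES = ["Ak", "Ala_Idris", "Buzgulu", "Dimnit", "Nazli"]


def logits_to_confidences(logits):
    """Convert one row of raw model outputs into {class: probability}.

    Subtracting the max logit before exponentiating keeps the softmax
    numerically stable without changing the result.
    """
    if len(logits) != len(CLASSES):
        raise ValueError("expected one logit per class")
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return {name: e / total for name, e in zip(CLASSES, exps)}


def top_label(logits):
    """Return the highest-scoring class, like CLASSES[out.argmax()]."""
    conf = logits_to_confidences(logits)
    return max(conf, key=conf.get)


example_logits = [0.2, 3.1, -1.0, 0.5, 1.4]  # made-up values for illustration
print(top_label(example_logits))  # Ala_Idris
```

Softmax is monotonic, so the top class is unchanged; the extra step only adds calibrated-looking scores for display.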

images/UI input.png

ADDED

images/UI.png

ADDED

images/input.png

ADDED

models/mobilenet.pt

ADDED

```
version https://git-lfs.github.com/spec/v1
oid sha256:ebfbd9067f789299f10506d0e19f41581821b90d469722e69373afe1a61377b6
size 9293289
```
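The three lines committed as models/mobilenet.pt are a Git LFS pointer, not the weights themselves: the repository records the SHA-256 and byte size (9,293,289 bytes, about 9.3 MB) of a blob stored in LFS. If the LFS content has not been fetched (for example with `git lfs pull`), the file on disk is this small text pointer and `torch.jit.load` will fail on it. A simplified sketch for reading such a pointer and checking a downloaded blob against it follows; `parse_lfs_pointer` and `blob_matches` are hypothetical helpers, and the parser ignores the optional extension keys the LFS spec allows.

```python
import hashlib


def parse_lfs_pointer(text):
    """Parse a Git LFS pointer ("key value" per line) into a dict."""
    lines = text.strip().splitlines()
    if not lines or not lines[0].startswith("version https://git-lfs.github.com/spec/"):
        raise ValueError("not a Git LFS pointer")
    fields = {}
    for line in lines:
        key, _, value = line.partition(" ")
        fields[key] = value
    algorithm, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "algorithm": algorithm,  # "sha256" for spec v1
        "digest": digest,
        "size": int(fields["size"]),
    }


def blob_matches(data, info):
    """True if `data` (bytes) has the size and SHA-256 the pointer records."""
    return len(data) == info["size"] and hashlib.sha256(data).hexdigest() == info["digest"]


POINTER = """\
version https://git-lfs.github.com/spec/v1
oid sha256:ebfbd9067f789299f10506d0e19f41581821b90d469722e69373afe1a61377b6
size 9293289
"""

info = parse_lfs_pointer(POINTER)
print(info["algorithm"], info["size"])  # sha256 9293289
```

In practice one would read models/mobilenet.pt as bytes after fetching LFS content and call `blob_matches` to confirm the deployed model is the committed one.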