Create README.md

Browse files

README.md

ADDED

|

@@ -0,0 +1,238 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

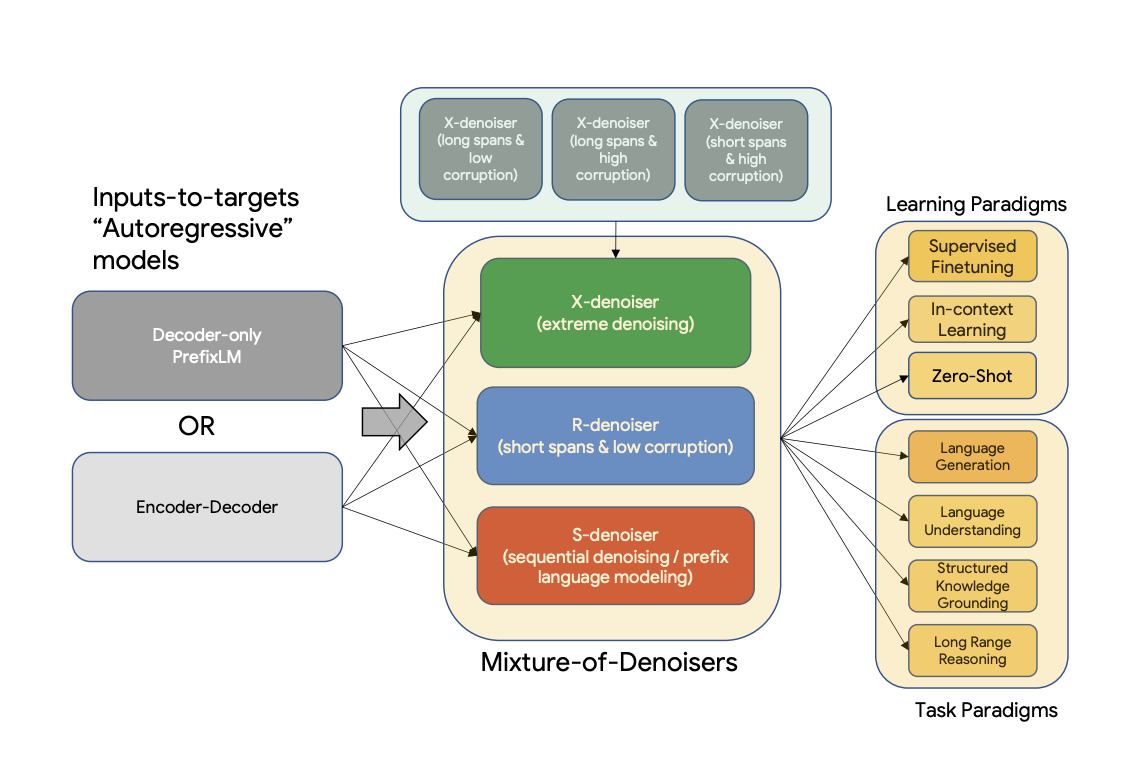

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

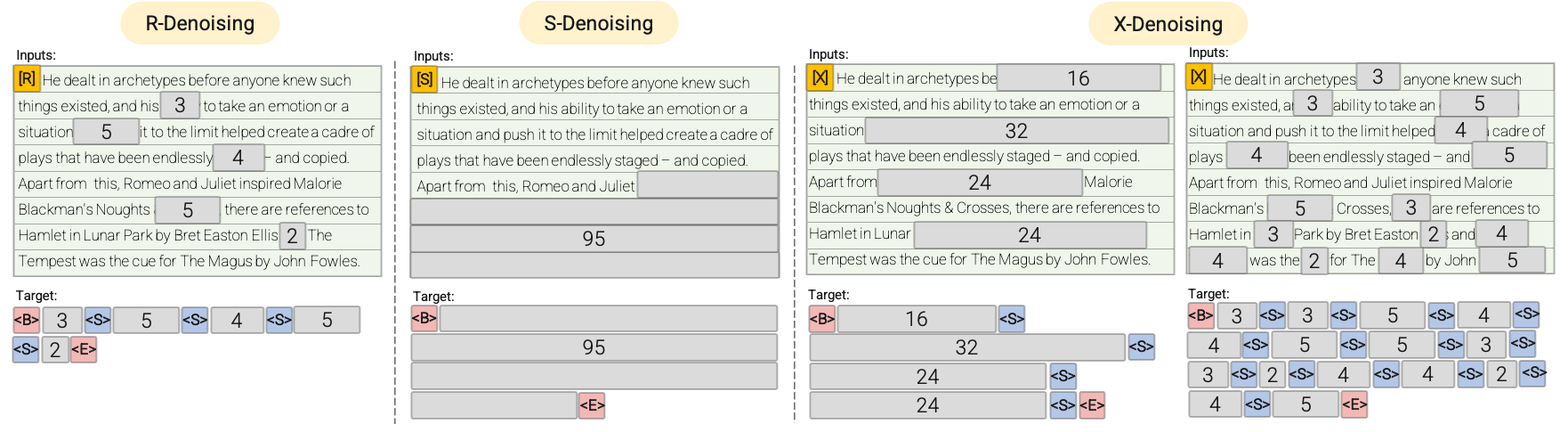

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

language:

|

| 3 |

+

- en

|

| 4 |

+

- fr

|

| 5 |

+

- ro

|

| 6 |

+

- de

|

| 7 |

+

- multilingual

|

| 8 |

+

widget:

|

| 9 |

+

- text: 'Translate to German: My name is Arthur'

|

| 10 |

+

example_title: Translation

|

| 11 |

+

- text: >-

|

| 12 |

+

Please answer to the following question. Who is going to be the next

|

| 13 |

+

Ballon d'or?

|

| 14 |

+

example_title: Question Answering

|

| 15 |

+

- text: >-

|

| 16 |

+

Q: Can Geoffrey Hinton have a conversation with George Washington? Give

|

| 17 |

+

the rationale before answering.

|

| 18 |

+

example_title: Logical reasoning

|

| 19 |

+

- text: >-

|

| 20 |

+

Please answer the following question. What is the boiling point of

|

| 21 |

+

Nitrogen?

|

| 22 |

+

example_title: Scientific knowledge

|

| 23 |

+

- text: >-

|

| 24 |

+

Answer the following yes/no question. Can you write a whole Haiku in a

|

| 25 |

+

single tweet?

|

| 26 |

+

example_title: Yes/no question

|

| 27 |

+

- text: >-

|

| 28 |

+

Answer the following yes/no question by reasoning step-by-step. Can you

|

| 29 |

+

write a whole Haiku in a single tweet?

|

| 30 |

+

example_title: Reasoning task

|

| 31 |

+

- text: 'Q: ( False or not False or False ) is? A: Let''s think step by step'

|

| 32 |

+

example_title: Boolean Expressions

|

| 33 |

+

- text: >-

|

| 34 |

+

The square root of x is the cube root of y. What is y to the power of 2,

|

| 35 |

+

if x = 4?

|

| 36 |

+

example_title: Math reasoning

|

| 37 |

+

- text: >-

|

| 38 |

+

Premise: At my age you will probably have learnt one lesson. Hypothesis:

|

| 39 |

+

It's not certain how many lessons you'll learn by your thirties. Does the

|

| 40 |

+

premise entail the hypothesis?

|

| 41 |

+

example_title: Premise and hypothesis

|

| 42 |

+

tags:

|

| 43 |

+

- text2text-generation

|

| 44 |

+

datasets:

|

| 45 |

+

- svakulenk0/qrecc

|

| 46 |

+

- taskmaster2

|

| 47 |

+

- djaym7/wiki_dialog

|

| 48 |

+

- deepmind/code_contests

|

| 49 |

+

- lambada

|

| 50 |

+

- gsm8k

|

| 51 |

+

- aqua_rat

|

| 52 |

+

- esnli

|

| 53 |

+

- quasc

|

| 54 |

+

- qed

|

| 55 |

+

- c4

|

| 56 |

+

license: apache-2.0

|

| 57 |

+

---

|

| 58 |

+

|

| 59 |

+

# TL;DR FLan-UL2 improvements over previous version

|

| 60 |

+

The original UL2 model was only trained with receptive field of 512, which made it non-ideal for N-shot prompting where N is large.

|

| 61 |

+

This Flan-UL2 checkpoint uses a receptive field of 2048 which makes it more usable for few-shot in-context learning.

|

| 62 |

+

|

| 63 |

+

The original UL2 model also had mode switch tokens that was rather mandatory to get good performance.

|

| 64 |

+

However, they were a little cumbersome as this requires often some changes during inference or finetuning. In this update/change, we continue training UL2 20B for an additional 100k steps (with small batch) to forget “mode tokens” before applying Flan instruction tuning. This Flan-UL2 checkpoint does not require mode tokens anymore.

|

| 65 |

+

|

| 66 |

+

# Converting from T5x to huggingface

|

| 67 |

+

You can use the [`convert_`]() and pass the argument `strict = False`. The final layer norm is missing from the original dictionnary, we used an identity layer.

|

| 68 |

+

|

| 69 |

+

# Performance improvment

|

| 70 |

+

|

| 71 |

+

The reported results are the following :

|

| 72 |

+

| | MMLU | BBH | MMLU-CoT | BBH-CoT | Avg |

|

| 73 |

+

| :--- | :---: | :---: | :---: | :---: | :---: |

|

| 74 |

+

| FLAN-PaLM 62B | 59.6 | 47.5 | 56.9 | 44.9 | 49.9 |

|

| 75 |

+

| FLAN-PaLM 540B | 73.5 | 57.9 | 70.9 | 66.3 | 67.2 |

|

| 76 |

+

| FLAN-T5-XXL 11B | 55.1 | 45.3 | 48.6 | 41.4 | 47.6 |

|

| 77 |

+

| FLAN-UL2 20B | 55.7(+1.1%) | 45.9(+1.3%) | 52.2(+7.4%) | 42.7(+3.1%) | 49.1(+3.2%) |

|

| 78 |

+

|

| 79 |

+

|

| 80 |

+

# Introduction

|

| 81 |

+

|

| 82 |

+

UL2 is a unified framework for pretraining models that are universally effective across datasets and setups. UL2 uses Mixture-of-Denoisers (MoD), apre-training objective that combines diverse pre-training paradigms together. UL2 introduces a notion of mode switching, wherein downstream fine-tuning is associated with specific pre-training schemes.

|

| 83 |

+

|

| 84 |

+

|

| 85 |

+

|

| 86 |

+

**Abstract**

|

| 87 |

+

|

| 88 |

+

Existing pre-trained models are generally geared towards a particular class of problems. To date, there seems to be still no consensus on what the right architecture and pre-training setup should be. This paper presents a unified framework for pre-training models that are universally effective across datasets and setups. We begin by disentangling architectural archetypes with pre-training objectives -- two concepts that are commonly conflated. Next, we present a generalized and unified perspective for self-supervision in NLP and show how different pre-training objectives can be cast as one another and how interpolating between different objectives can be effective. We then propose Mixture-of-Denoisers (MoD), a pre-training objective that combines diverse pre-training paradigms together. We furthermore introduce a notion of mode switching, wherein downstream fine-tuning is associated with specific pre-training schemes. We conduct extensive ablative experiments to compare multiple pre-training objectives and find that our method pushes the Pareto-frontier by outperforming T5 and/or GPT-like models across multiple diverse setups. Finally, by scaling our model up to 20B parameters, we achieve SOTA performance on 50 well-established supervised NLP tasks ranging from language generation (with automated and human evaluation), language understanding, text classification, question answering, commonsense reasoning, long text reasoning, structured knowledge grounding and information retrieval. Our model also achieve strong results at in-context learning, outperforming 175B GPT-3 on zero-shot SuperGLUE and tripling the performance of T5-XXL on one-shot summarization.

|

| 89 |

+

|

| 90 |

+

For more information, please take a look at the original paper.

|

| 91 |

+

|

| 92 |

+

Paper: [Unifying Language Learning Paradigms](https://arxiv.org/abs/2205.05131v1)

|

| 93 |

+

|

| 94 |

+

Authors: *Yi Tay, Mostafa Dehghani, Vinh Q. Tran, Xavier Garcia, Dara Bahri, Tal Schuster, Huaixiu Steven Zheng, Neil Houlsby, Donald Metzler*

|

| 95 |

+

|

| 96 |

+

# Training

|

| 97 |

+

|

| 98 |

+

## Flan UL2, a 20B Flan trained UL2 model

|

| 99 |

+

The Flan-UL2 model was initialized using the `UL2` checkpoints, and was then trained additionally using Flan Prompting. This means that the original training corpus is `C4`,

|

| 100 |

+

|

| 101 |

+

|

| 102 |

+

## UL2 PreTraining

|

| 103 |

+

|

| 104 |

+

The model is pretrained on the C4 corpus. For pretraining, the model is trained on a total of 1 trillion tokens on C4 (2 million steps)

|

| 105 |

+

with a batch size of 1024. The sequence length is set to 512/512 for inputs and targets.

|

| 106 |

+

Dropout is set to 0 during pretraining. Pre-training took slightly more than one month for about 1 trillion

|

| 107 |

+

tokens. The model has 32 encoder layers and 32 decoder layers, `dmodel` of 4096 and `df` of 16384.

|

| 108 |

+

The dimension of each head is 256 for a total of 16 heads. Our model uses a model parallelism of 8.

|

| 109 |

+

The same same sentencepiece tokenizer as T5 of vocab size 32000 is used (click [here](https://huggingface.co/docs/transformers/v4.20.0/en/model_doc/t5#transformers.T5Tokenizer) for more information about the T5 tokenizer).

|

| 110 |

+

|

| 111 |

+

UL-20B can be interpreted as a model that is quite similar to T5 but trained with a different objective and slightly different scaling knobs.

|

| 112 |

+

UL-20B was trained using the [Jax](https://github.com/google/jax) and [T5X](https://github.com/google-research/t5x) infrastructure.

|

| 113 |

+

|

| 114 |

+

The training objective during pretraining is a mixture of different denoising strategies that are explained in the following:

|

| 115 |

+

|

| 116 |

+

## Mixture of Denoisers

|

| 117 |

+

|

| 118 |

+

To quote the paper:

|

| 119 |

+

> We conjecture that a strong universal model has to be exposed to solving diverse set of problems

|

| 120 |

+

> during pre-training. Given that pre-training is done using self-supervision, we argue that such diversity

|

| 121 |

+

> should be injected to the objective of the model, otherwise the model might suffer from lack a certain

|

| 122 |

+

> ability, like long-coherent text generation.

|

| 123 |

+

> Motivated by this, as well as current class of objective functions, we define three main paradigms that

|

| 124 |

+

> are used during pre-training:

|

| 125 |

+

|

| 126 |

+

- **R-Denoiser**: The regular denoising is the standard span corruption introduced in [T5](https://huggingface.co/docs/transformers/v4.20.0/en/model_doc/t5)

|

| 127 |

+

that uses a range of 2 to 5 tokens as the span length, which masks about 15% of

|

| 128 |

+

input tokens. These spans are short and potentially useful to acquire knowledge instead of

|

| 129 |

+

learning to generate fluent text.

|

| 130 |

+

|

| 131 |

+

- **S-Denoiser**: A specific case of denoising where we observe a strict sequential order when

|

| 132 |

+

framing the inputs-to-targets task, i.e., prefix language modeling. To do so, we simply

|

| 133 |

+

partition the input sequence into two sub-sequences of tokens as context and target such that

|

| 134 |

+

the targets do not rely on future information. This is unlike standard span corruption where

|

| 135 |

+

there could be a target token with earlier position than a context token. Note that similar to

|

| 136 |

+

the Prefix-LM setup, the context (prefix) retains a bidirectional receptive field. We note that

|

| 137 |

+

S-Denoising with very short memory or no memory is in similar spirit to standard causal

|

| 138 |

+

language modeling.

|

| 139 |

+

|

| 140 |

+

- **X-Denoiser**: An extreme version of denoising where the model must recover a large part

|

| 141 |

+

of the input, given a small to moderate part of it. This simulates a situation where a model

|

| 142 |

+

needs to generate long target from a memory with relatively limited information. To do

|

| 143 |

+

so, we opt to include examples with aggressive denoising where approximately 50% of the

|

| 144 |

+

input sequence is masked. This is by increasing the span length and/or corruption rate. We

|

| 145 |

+

consider a pre-training task to be extreme if it has a long span (e.g., ≥ 12 tokens) or have

|

| 146 |

+

a large corruption rate (e.g., ≥ 30%). X-denoising is motivated by being an interpolation

|

| 147 |

+

between regular span corruption and language model like objectives.

|

| 148 |

+

|

| 149 |

+

See the following diagram for a more visual explanation:

|

| 150 |

+

|

| 151 |

+

|

| 152 |

+

|

| 153 |

+

**Important**: For more details, please see sections 3.1.2 of the [paper](https://arxiv.org/pdf/2205.05131v1.pdf).

|

| 154 |

+

|

| 155 |

+

## Fine-tuning

|

| 156 |

+

|

| 157 |

+

The model was continously fine-tuned after N pretraining steps where N is typically from 50k to 100k.

|

| 158 |

+

In other words, after each Nk steps of pretraining, the model is finetuned on each downstream task. See section 5.2.2 of [paper](https://arxiv.org/pdf/2205.05131v1.pdf) to get an overview of all datasets that were used for fine-tuning).

|

| 159 |

+

|

| 160 |

+

As the model is continuously finetuned, finetuning is stopped on a task once it has reached state-of-the-art to save compute.

|

| 161 |

+

In total, the model was trained for 2.65 million steps.

|

| 162 |

+

|

| 163 |

+

**Important**: For more details, please see sections 5.2.1 and 5.2.2 of the [paper](https://arxiv.org/pdf/2205.05131v1.pdf).

|

| 164 |

+

|

| 165 |

+

## Contribution

|

| 166 |

+

|

| 167 |

+

This model was contributed by [Younes Belkada](https://huggingface.co/Seledorn) & [Arthur Zucker]().

|

| 168 |

+

|

| 169 |

+

## Examples

|

| 170 |

+

|

| 171 |

+

The following shows how one can predict masked passages using the different denoising strategies.

|

| 172 |

+

Given the size of the model the following examples need to be run on at least a 40GB A100 GPU.

|

| 173 |

+

|

| 174 |

+

### S-Denoising

|

| 175 |

+

|

| 176 |

+

For *S-Denoising*, please make sure to prompt the text with the prefix `[S2S]` as shown below.

|

| 177 |

+

|

| 178 |

+

```python

|

| 179 |

+

from transformers import T5ForConditionalGeneration, AutoTokenizer

|

| 180 |

+

import torch

|

| 181 |

+

|

| 182 |

+

model = T5ForConditionalGeneration.from_pretrained("google/ul2", low_cpu_mem_usage=True, torch_dtype=torch.bfloat16).to("cuda")

|

| 183 |

+

tokenizer = AutoTokenizer.from_pretrained("google/ul2")

|

| 184 |

+

|

| 185 |

+

input_string = "[S2S] Mr. Dursley was the director of a firm called Grunnings, which made drills. He was a big, solid man with a bald head. Mrs. Dursley was thin and blonde and more than the usual amount of neck, which came in very useful as she spent so much of her time craning over garden fences, spying on the neighbours. The Dursleys had a small son called Dudley and in their opinion there was no finer boy anywhere <extra_id_0>"

|

| 186 |

+

|

| 187 |

+

inputs = tokenizer(input_string, return_tensors="pt").input_ids.to("cuda")

|

| 188 |

+

|

| 189 |

+

outputs = model.generate(inputs, max_length=200)

|

| 190 |

+

|

| 191 |

+

print(tokenizer.decode(outputs[0]))

|

| 192 |

+

# -> <pad>. Dudley was a very good boy, but he was also very stupid.</s>

|

| 193 |

+

```

|

| 194 |

+

|

| 195 |

+

### R-Denoising

|

| 196 |

+

|

| 197 |

+

For *R-Denoising*, please make sure to prompt the text with the prefix `[NLU]` as shown below.

|

| 198 |

+

|

| 199 |

+

```python

|

| 200 |

+

from transformers import T5ForConditionalGeneration, AutoTokenizer

|

| 201 |

+

import torch

|

| 202 |

+

|

| 203 |

+

model = T5ForConditionalGeneration.from_pretrained("google/ul2", low_cpu_mem_usage=True, torch_dtype=torch.bfloat16).to("cuda")

|

| 204 |

+

tokenizer = AutoTokenizer.from_pretrained("google/ul2")

|

| 205 |

+

|

| 206 |

+

input_string = "[NLU] Mr. Dursley was the director of a firm called <extra_id_0>, which made <extra_id_1>. He was a big, solid man with a bald head. Mrs. Dursley was thin and <extra_id_2> of neck, which came in very useful as she spent so much of her time <extra_id_3>. The Dursleys had a small son called Dudley and <extra_id_4>"

|

| 207 |

+

|

| 208 |

+

inputs = tokenizer(input_string, return_tensors="pt", add_special_tokens=False).input_ids.to("cuda")

|

| 209 |

+

|

| 210 |

+

outputs = model.generate(inputs, max_length=200)

|

| 211 |

+

|

| 212 |

+

print(tokenizer.decode(outputs[0]))

|

| 213 |

+

# -> "<pad><extra_id_0> Burrows<extra_id_1> brooms for witches and wizards<extra_id_2> had a lot<extra_id_3> scolding Dudley<extra_id_4> a daughter called Petunia. Dudley was a nasty, spoiled little boy who was always getting into trouble. He was very fond of his pet rat, Scabbers.<extra_id_5> Burrows<extra_id_3> screaming at him<extra_id_4> a daughter called Petunia</s>

|

| 214 |

+

"

|

| 215 |

+

```

|

| 216 |

+

|

| 217 |

+

### X-Denoising

|

| 218 |

+

|

| 219 |

+

For *X-Denoising*, please make sure to prompt the text with the prefix `[NLG]` as shown below.

|

| 220 |

+

|

| 221 |

+

```python

|

| 222 |

+

from transformers import T5ForConditionalGeneration, AutoTokenizer

|

| 223 |

+

import torch

|

| 224 |

+

|

| 225 |

+

model = T5ForConditionalGeneration.from_pretrained("google/ul2", low_cpu_mem_usage=True, torch_dtype=torch.bfloat16).to("cuda")

|

| 226 |

+

tokenizer = AutoTokenizer.from_pretrained("google/ul2")

|

| 227 |

+

|

| 228 |

+

input_string = "[NLG] Mr. Dursley was the director of a firm called Grunnings, which made drills. He was a big, solid man wiht a bald head. Mrs. Dursley was thin and blonde and more than the usual amount of neck, which came in very useful as she

|

| 229 |

+

spent so much of her time craning over garden fences, spying on the neighbours. The Dursleys had a small son called Dudley and in their opinion there was no finer boy anywhere. <extra_id_0>"

|

| 230 |

+

|

| 231 |

+

model.cuda()

|

| 232 |

+

inputs = tokenizer(input_string, return_tensors="pt", add_special_tokens=False).input_ids.to("cuda")

|

| 233 |

+

|

| 234 |

+

outputs = model.generate(inputs, max_length=200)

|

| 235 |

+

|

| 236 |

+

print(tokenizer.decode(outputs[0]))

|

| 237 |

+

# -> "<pad><extra_id_0> Burrows<extra_id_1> a lot of money from the manufacture of a product called '' Burrows'''s ''<extra_id_2> had a lot<extra_id_3> looking down people's throats<extra_id_4> a daughter called Petunia. Dudley was a very stupid boy who was always getting into trouble. He was a big, fat, ugly boy who was always getting into trouble. He was a big, fat, ugly boy who was always getting into trouble. He was a big, fat, ugly boy who was always getting into trouble. He was a big, fat, ugly boy who was always getting into trouble. He was a big, fat, ugly boy who was always getting into trouble. He was a big, fat, ugly boy who was always getting into trouble. He was a big, fat, ugly boy who was always getting into trouble. He was a big, fat,"

|

| 238 |

+

```

|