Commit f54d294

Parent(s): 47c41fa

Update README.md

README.md CHANGED

@@ -31,9 +31,44 @@ For more information, please refer to Section *5.1.2* of the [official XLS-R pap

|

|

| 31 |

|

| 32 |

## Usage

|

| 33 |

|

| 34 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 35 |

|

| 36 |

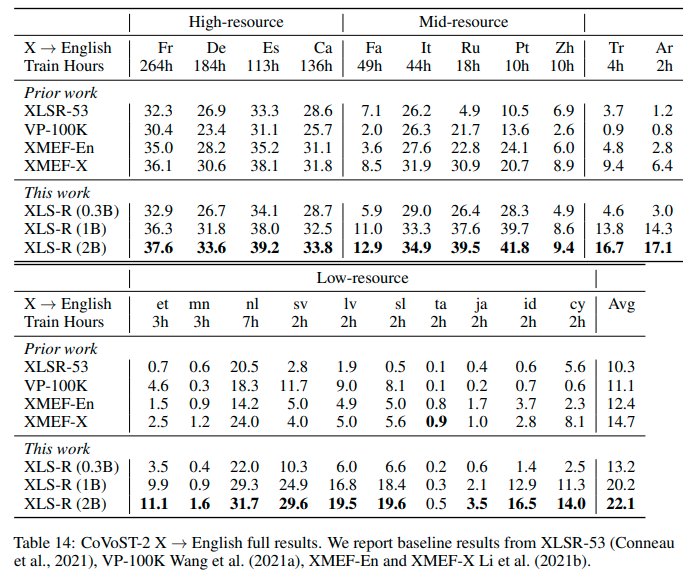

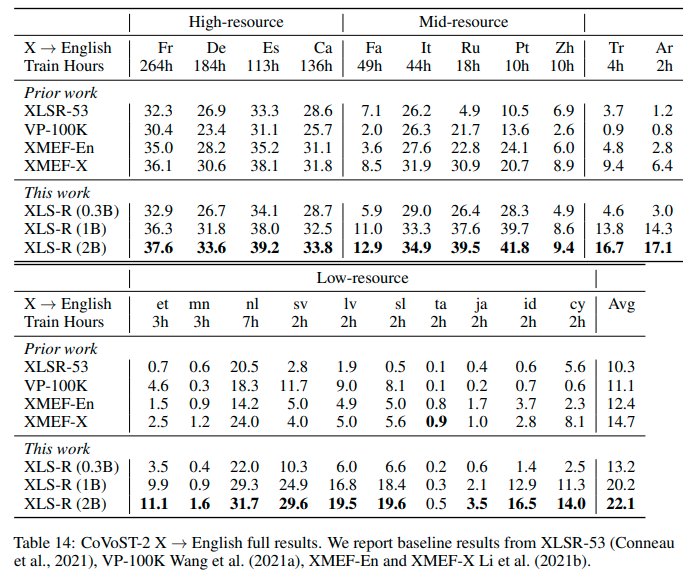

## Results `{lang}` -> `en`

|

| 37 |

|

|

|

|

|

|

|

| 38 |

|

| 39 |

|

|

|

|

| 31 |

|

| 32 |

## Usage

+

As this is a standard sequence-to-sequence transformer model, you can use the `generate` method to generate the transcripts by passing the speech features to the model.

+

You can use the model directly via the ASR pipeline:

+

```python
from datasets import load_dataset
from transformers import pipeline

# Replace the following lines to load an audio file of your choice.
librispeech_en = load_dataset("patrickvonplaten/librispeech_asr_dummy", "clean", split="validation")
audio_file = librispeech_en[0]["file"]

asr = pipeline(
    "automatic-speech-recognition",
    model="facebook/wav2vec2-xls-r-300m-21-to-en",
    feature_extractor="facebook/wav2vec2-xls-r-300m-21-to-en",
)

translation = asr(audio_file)
```

+

or step-by-step as follows:

+

```python
import torch
from transformers import Speech2Text2Processor, SpeechEncoderDecoderModel
from datasets import load_dataset

model = SpeechEncoderDecoderModel.from_pretrained("facebook/wav2vec2-xls-r-300m-21-to-en")
processor = Speech2Text2Processor.from_pretrained("facebook/wav2vec2-xls-r-300m-21-to-en")

ds = load_dataset("patrickvonplaten/librispeech_asr_dummy", "clean", split="validation")

# Assumes the checkpoint's Wav2Vec2-style feature extractor, which returns
# `input_values` (not `input_features`) for 16 kHz audio.
inputs = processor(ds[0]["audio"]["array"], sampling_rate=16_000, return_tensors="pt")
generated_ids = model.generate(inputs["input_values"], attention_mask=inputs["attention_mask"])
transcription = processor.batch_decode(generated_ids)
```
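The step-by-step example passes `sampling_rate=16_000`, so audio recorded at a different rate has to be resampled before it reaches the processor. The following is a minimal sketch of that step, assuming a mono waveform held as a plain list of floats. `resample_linear` is a hypothetical helper written for illustration and is not part of `transformers` or `datasets`. Linear interpolation applies no anti-aliasing filter, so a dedicated resampler is likely the better choice for real recordings.

```python
def resample_linear(samples, source_rate, target_rate=16_000):
    """Resample a mono waveform (sequence of floats) by linear interpolation.

    Hypothetical helper for illustration only; it does no low-pass filtering.
    """
    if source_rate == target_rate or len(samples) < 2:
        return list(samples)

    n_out = max(1, round(len(samples) * target_rate / source_rate))
    step = source_rate / target_rate  # source samples per output sample
    last = len(samples) - 1

    out = []
    for i in range(n_out):
        pos = min(i * step, last)   # fractional index into the source signal
        lo = int(pos)
        hi = min(lo + 1, last)
        frac = pos - lo
        out.append(samples[lo] * (1.0 - frac) + samples[hi] * frac)
    return out
```

Under these assumptions, a 48 kHz waveform `wave_48k` could be converted with `resample_linear(wave_48k, 48_000)` and the result passed to the processor in place of `ds[0]["audio"]["array"]`.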

## Results `{lang}` -> `en`

+

See the row of **XLS-R (0.3B)** for this model's performance on [CoVoST 2](https://huggingface.co/datasets/covost2).