Eugene Siow

commited on

Commit

·

a16c423

1

Parent(s):

f826388

Initial commit.

Browse files- README.md +146 -0

- config.json +10 -0

- images/msrn_2_4_compare.png +0 -0

- images/msrn_4_4_compare.png +0 -0

- pytorch_model_2x.pt +3 -0

- pytorch_model_3x.pt +3 -0

- pytorch_model_4x.pt +3 -0

README.md

ADDED

|

@@ -0,0 +1,146 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

license: apache-2.0

|

| 3 |

+

tags:

|

| 4 |

+

- image-super-resolution

|

| 5 |

+

datasets:

|

| 6 |

+

- div2k

|

| 7 |

+

metrics:

|

| 8 |

+

- pnsr

|

| 9 |

+

- ssim

|

| 10 |

+

---

|

| 11 |

+

# Multi-scale Residual Network for Image Super-Resolution (MSRN)

|

| 12 |

+

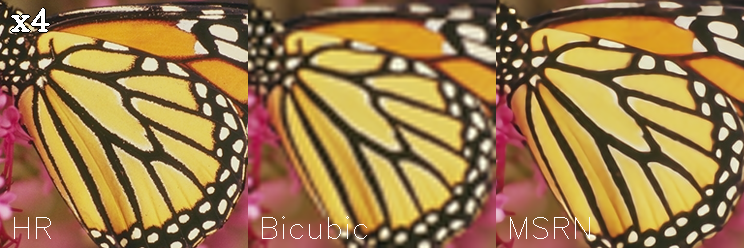

MSRN model pre-trained on DIV2K (800 images training, augmented to 4000 images, 100 images validation) for 2x, 3x and 4x image super resolution. It was introduced in the paper [Multi-scale Residual Network for Image Super-Resolution](https://openaccess.thecvf.com/content_ECCV_2018/html/Juncheng_Li_Multi-scale_Residual_Network_ECCV_2018_paper.html) by Li et al. (2018) and first released in [this repository](https://github.com/MIVRC/MSRN-PyTorch).

|

| 13 |

+

|

| 14 |

+

The goal of image super resolution is to restore a high resolution (HR) image from a single low resolution (LR) image. The image below shows the ground truth (HR), the bicubic upscaling x2 and model upscaling x2.

|

| 15 |

+

|

| 16 |

+

|

| 17 |

+

## Model description

|

| 18 |

+

The MSRN model proposes a feature extraction structure called the multi-scale residual block. This module can "adaptively detect image features at different scales" and "exploit the potential features of the image".

|

| 19 |

+

|

| 20 |

+

This model also applies the balanced attention (BAM) method invented by [Wang et al. (2021)](https://arxiv.org/abs/2104.07566) to further improve the results.

|

| 21 |

+

## Intended uses & limitations

|

| 22 |

+

You can use the pre-trained models for upscaling your images 2x, 3x and 4x. You can also use the trainer to train a model on your own dataset.

|

| 23 |

+

### How to use

|

| 24 |

+

The model can be used with the [super_image](https://github.com/eugenesiow/super-image) library:

|

| 25 |

+

```bash

|

| 26 |

+

pip install super-image

|

| 27 |

+

```

|

| 28 |

+

Here is how to use a pre-trained model to upscale your image:

|

| 29 |

+

```python

|

| 30 |

+

from super_image import MsrnModel, ImageLoader

|

| 31 |

+

from PIL import Image

|

| 32 |

+

import requests

|

| 33 |

+

|

| 34 |

+

url = 'https://paperswithcode.com/media/datasets/Set5-0000002728-07a9793f_zA3bDjj.jpg'

|

| 35 |

+

image = Image.open(requests.get(url, stream=True).raw)

|

| 36 |

+

|

| 37 |

+

model = MsrnModel.from_pretrained('eugenesiow/msrn-bam', scale=2) # scale 2, 3 and 4 models available

|

| 38 |

+

inputs = ImageLoader.load_image(image)

|

| 39 |

+

preds = model(inputs)

|

| 40 |

+

|

| 41 |

+

ImageLoader.save_image(preds, './scaled_2x.png') # save the output 2x scaled image to `./scaled_2x.png`

|

| 42 |

+

ImageLoader.save_compare(inputs, preds, './scaled_2x_compare.png') # save an output comparing the super-image with a bicubic scaling

|

| 43 |

+

```

|

| 44 |

+

## Training data

|

| 45 |

+

The models for 2x, 3x and 4x image super resolution were pretrained on [DIV2K](https://data.vision.ee.ethz.ch/cvl/DIV2K/), a dataset of 800 high-quality (2K resolution) images for training, augmented to 4000 images and uses a dev set of 100 validation images (images numbered 801 to 900).

|

| 46 |

+

## Training procedure

|

| 47 |

+

### Preprocessing

|

| 48 |

+

We follow the pre-processing and training method of [Wang et al.](https://arxiv.org/abs/2104.07566).

|

| 49 |

+

Low Resolution (LR) images are created by using bicubic interpolation as the resizing method to reduce the size of the High Resolution (HR) images by x2, x3 and x4 times.

|

| 50 |

+

During training, RGB patches with size of 64×64 from the LR input are used together with their corresponding HR patches.

|

| 51 |

+

Data augmentation is applied to the training set in the pre-processing stage where five images are created from the four corners and center of the original image.

|

| 52 |

+

|

| 53 |

+

The following code provides some helper functions to preprocess the data.

|

| 54 |

+

```python

|

| 55 |

+

from super_image.data import EvalDataset, TrainAugmentDataset, DatasetBuilder

|

| 56 |

+

|

| 57 |

+

DatasetBuilder.prepare(

|

| 58 |

+

base_path='./DIV2K/DIV2K_train_HR',

|

| 59 |

+

output_path='./div2k_4x_train.h5',

|

| 60 |

+

scale=4,

|

| 61 |

+

do_augmentation=True

|

| 62 |

+

)

|

| 63 |

+

DatasetBuilder.prepare(

|

| 64 |

+

base_path='./DIV2K/DIV2K_val_HR',

|

| 65 |

+

output_path='./div2k_4x_val.h5',

|

| 66 |

+

scale=4,

|

| 67 |

+

do_augmentation=False

|

| 68 |

+

)

|

| 69 |

+

train_dataset = TrainAugmentDataset('./div2k_4x_train.h5', scale=4)

|

| 70 |

+

val_dataset = EvalDataset('./div2k_4x_val.h5')

|

| 71 |

+

```

|

| 72 |

+

### Pretraining

|

| 73 |

+

The model was trained on GPU. The training code is provided below:

|

| 74 |

+

```python

|

| 75 |

+

from super_image import Trainer, TrainingArguments, MsrnModel, MsrnConfig

|

| 76 |

+

|

| 77 |

+

training_args = TrainingArguments(

|

| 78 |

+

output_dir='./results', # output directory

|

| 79 |

+

num_train_epochs=1000, # total number of training epochs

|

| 80 |

+

)

|

| 81 |

+

|

| 82 |

+

config = MsrnConfig(

|

| 83 |

+

scale=4, # train a model to upscale 4x

|

| 84 |

+

bam=True, # apply balanced attention to the network

|

| 85 |

+

supported_scales=[2, 3, 4],

|

| 86 |

+

)

|

| 87 |

+

model = MsrnModel(config)

|

| 88 |

+

|

| 89 |

+

trainer = Trainer(

|

| 90 |

+

model=model, # the instantiated model to be trained

|

| 91 |

+

args=training_args, # training arguments, defined above

|

| 92 |

+

train_dataset=train_dataset, # training dataset

|

| 93 |

+

eval_dataset=val_dataset # evaluation dataset

|

| 94 |

+

)

|

| 95 |

+

|

| 96 |

+

trainer.train()

|

| 97 |

+

```

|

| 98 |

+

## Evaluation results

|

| 99 |

+

The evaluation metrics include [PSNR](https://en.wikipedia.org/wiki/Peak_signal-to-noise_ratio#Quality_estimation_with_PSNR) and [SSIM](https://en.wikipedia.org/wiki/Structural_similarity#Algorithm).

|

| 100 |

+

|

| 101 |

+

Evaluation datasets include:

|

| 102 |

+

- Set5 - [Bevilacqua et al. (2012)](http://people.rennes.inria.fr/Aline.Roumy/results/SR_BMVC12.html)

|

| 103 |

+

- Set14 - [Zeyde et al. (2010)](https://sites.google.com/site/romanzeyde/research-interests)

|

| 104 |

+

- BSD100 - [Martin et al. (2001)](https://www.eecs.berkeley.edu/Research/Projects/CS/vision/bsds/)

|

| 105 |

+

- Urban100 - [Huang et al. (2015)](https://sites.google.com/site/jbhuang0604/publications/struct_sr)

|

| 106 |

+

|

| 107 |

+

The results columns below are represented below as `PSNR/SSIM`. They are compared against a Bicubic baseline.

|

| 108 |

+

|

| 109 |

+

|Dataset |Scale |Bicubic |msrn-bam |

|

| 110 |

+

|--- |--- |--- |--- |

|

| 111 |

+

|Set5 |2x |33.64/0.9292 |**38.023705/0.960794** |

|

| 112 |

+

|Set5 |3x |30.39/0.8678 |**35.155403/0.940999** |

|

| 113 |

+

|Set5 |4x |28.42/0.8101 |**32.263668/0.89554** |

|

| 114 |

+

|Set14 |2x |30.22/0.8683 |**33.635643/0.917744** |

|

| 115 |

+

|Set14 |3x |27.53/0.7737 |**30.974932/0.857354** |

|

| 116 |

+

|Set14 |4x |25.99/0.7023 |**28.660543/0.782889** |

|

| 117 |

+

|BSD100 |2x |29.55/0.8425 |**32.208752/0.899763** |

|

| 118 |

+

|BSD100 |3x |27.20/0.7382 |**29.668056/0.820912** |

|

| 119 |

+

|BSD100 |4x |25.96/0.6672 |**27.614033/0.736893** |

|

| 120 |

+

|Urban100 |2x |26.66/0.8408 |**32.084557/0.927621** |

|

| 121 |

+

|Urban100 |3x | |**29.314505/0.873682** |

|

| 122 |

+

|Urban100 |4x |23.14/0.6573 |**26.100685/0.785711** |

|

| 123 |

+

|

| 124 |

+

|

| 125 |

+

|

| 126 |

+

## BibTeX entry and citation info

|

| 127 |

+

```bibtex

|

| 128 |

+

@misc{wang2021bam,

|

| 129 |

+

title={BAM: A Lightweight and Efficient Balanced Attention Mechanism for Single Image Super Resolution},

|

| 130 |

+

author={Fanyi Wang and Haotian Hu and Cheng Shen},

|

| 131 |

+

year={2021},

|

| 132 |

+

eprint={2104.07566},

|

| 133 |

+

archivePrefix={arXiv},

|

| 134 |

+

primaryClass={eess.IV}

|

| 135 |

+

}

|

| 136 |

+

```

|

| 137 |

+

|

| 138 |

+

```bibtex

|

| 139 |

+

@InProceedings{Li_2018_ECCV,

|

| 140 |

+

author = {Li, Juncheng and Fang, Faming and Mei, Kangfu and Zhang, Guixu},

|

| 141 |

+

title = {Multi-scale Residual Network for Image Super-Resolution},

|

| 142 |

+

booktitle = {The European Conference on Computer Vision (ECCV)},

|

| 143 |

+

month = {September},

|

| 144 |

+

year = {2018}

|

| 145 |

+

}

|

| 146 |

+

```

|

config.json

ADDED

|

@@ -0,0 +1,10 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_name_or_path": "eugenesiow/msrn-bam",

|

| 3 |

+

"data_parallel": true,

|

| 4 |

+

"model_type": "MSRN",

|

| 5 |

+

"bam": true,

|

| 6 |

+

"n_feats": 64,

|

| 7 |

+

"n_blocks": 8,

|

| 8 |

+

"rgb_range": 255,

|

| 9 |

+

"supported_scales": [2,3,4]

|

| 10 |

+

}

|

images/msrn_2_4_compare.png

ADDED

|

images/msrn_4_4_compare.png

ADDED

|

pytorch_model_2x.pt

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:1cab3f5885433b1871da3e061af773a65cb59f1aa58ca5d14be3e5ef01dec8e5

|

| 3 |

+

size 23786629

|

pytorch_model_3x.pt

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:84a5c6b9993b0bfde05bba2aea52d45be7d6333e9ab2cd9e6fe64b452da0bdba

|

| 3 |

+

size 24525189

|

pytorch_model_4x.pt

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:1182755427e413d8914c9833b44d02e30653998fc01a7876f48f21c8247105e7

|

| 3 |

+

size 24378241

|