import from zenodo

- README.md +50 -0

- exp/tts_stats_raw_phn_jaconv_pyopenjtalk_accent_with_pause/train/feats_stats.npz +0 -0

- exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/config.yaml +256 -0

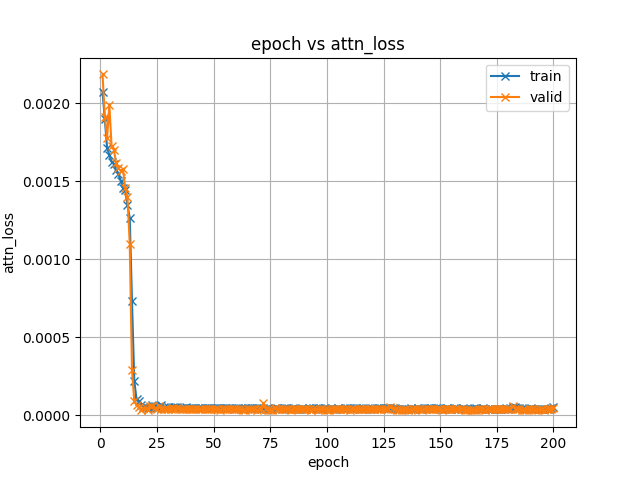

- exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/images/attn_loss.png +0 -0

- exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/images/backward_time.png +0 -0

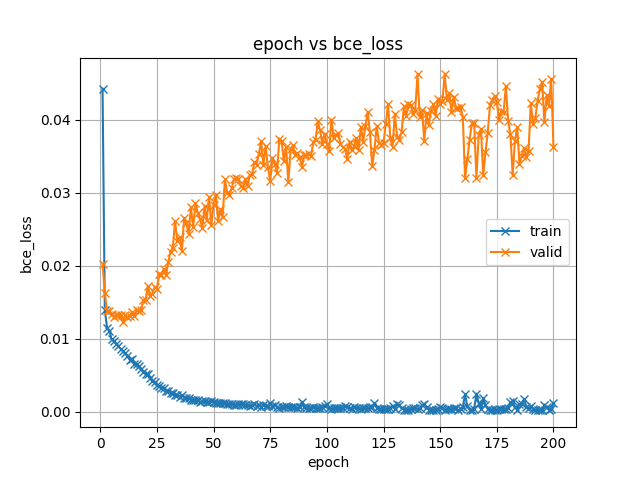

- exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/images/bce_loss.png +0 -0

- exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/images/forward_time.png +0 -0

- exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/images/iter_time.png +0 -0

- exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/images/l1_loss.png +0 -0

- exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/images/loss.png +0 -0

- exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/images/lr_0.png +0 -0

- exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/images/mse_loss.png +0 -0

- exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/images/optim_step_time.png +0 -0

- exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/images/train_time.png +0 -0

- exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/train.loss.ave_5best.pth +3 -0

- meta.yaml +8 -0

README.md

ADDED

@@ -0,0 +1,50 @@
+---
+tags:
+- espnet
+- audio
+- text-to-speech
+language: ja
+datasets:
+- jsut
+license: cc-by-4.0
+---
+## Example ESPnet2 TTS model
+### `kan-bayashi/jsut_tacotron2_accent_with_pause`
+♻️ Imported from https://zenodo.org/record/4433194/
+
+This model was trained by kan-bayashi using jsut/tts1 recipe in [espnet](https://github.com/espnet/espnet/).
+### Demo: How to use in ESPnet2
+```python
+# coming soon
+```
+### Citing ESPnet
+```BibTex
+@inproceedings{watanabe2018espnet,
+author={Shinji Watanabe and Takaaki Hori and Shigeki Karita and Tomoki Hayashi and Jiro Nishitoba and Yuya Unno and Nelson {Enrique Yalta Soplin} and Jahn Heymann and Matthew Wiesner and Nanxin Chen and Adithya Renduchintala and Tsubasa Ochiai},
+title={{ESPnet}: End-to-End Speech Processing Toolkit},
+year={2018},
+booktitle={Proceedings of Interspeech},
+pages={2207--2211},
+doi={10.21437/Interspeech.2018-1456},
+url={http://dx.doi.org/10.21437/Interspeech.2018-1456}
+}
+@inproceedings{hayashi2020espnet,
+title={{Espnet-TTS}: Unified, reproducible, and integratable open source end-to-end text-to-speech toolkit},
+author={Hayashi, Tomoki and Yamamoto, Ryuichi and Inoue, Katsuki and Yoshimura, Takenori and Watanabe, Shinji and Toda, Tomoki and Takeda, Kazuya and Zhang, Yu and Tan, Xu},
+booktitle={Proceedings of IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP)},
+pages={7654--7658},
+year={2020},
+organization={IEEE}
+}
+```
+or arXiv:
+```bibtex
+@misc{watanabe2018espnet,
+title={ESPnet: End-to-End Speech Processing Toolkit},
+author={Shinji Watanabe and Takaaki Hori and Shigeki Karita and Tomoki Hayashi and Jiro Nishitoba and Yuya Unno and Nelson Enrique Yalta Soplin and Jahn Heymann and Matthew Wiesner and Nanxin Chen and Adithya Renduchintala and Tsubasa Ochiai},
+year={2018},
+eprint={1804.00015},
+archivePrefix={arXiv},
+primaryClass={cs.CL}
+}
+```

exp/tts_stats_raw_phn_jaconv_pyopenjtalk_accent_with_pause/train/feats_stats.npz

ADDED

Binary file (1.4 kB)
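The statistics file above is binary, so its contents are not visible in this commit. The training config refers to it through `normalize: global_mvn`, which is global mean-variance normalization. The sketch below illustrates that technique in plain Python. The stored layout (a frame count, a per-dimension sum, and a per-dimension sum of squares) and the names `global_mvn`, `sum_v`, `sum_sq`, and `eps` are assumptions made for illustration; they are not read from the file.

```python
import math


def global_mvn(frames, count, sum_v, sum_sq, eps=1e-20):
    """Normalize frames with dataset-wide statistics (hypothetical layout)."""
    # Mean and variance per feature dimension, derived from accumulated sums.
    mean = [s / count for s in sum_v]
    var = [sq / count - m * m for sq, m in zip(sum_sq, mean)]
    # Floor the variance so a constant dimension does not divide by zero.
    std = [math.sqrt(max(v, eps)) for v in var]
    return [[(x - m) / s for x, m, s in zip(frame, mean, std)] for frame in frames]


# Toy data: 4 frames with 2 feature dimensions (the config uses n_mels: 80).
frames = [[1.0, 10.0], [3.0, 10.0], [5.0, 30.0], [7.0, 30.0]]
count = len(frames)
sum_v = [sum(f[d] for f in frames) for d in range(2)]
sum_sq = [sum(f[d] ** 2 for f in frames) for d in range(2)]

normalized = global_mvn(frames, count, sum_v, sum_sq)
for row in normalized:
    print(row)
```

After normalization each feature dimension of the toy data has zero mean and unit variance, which is the property the acoustic model relies on at training and inference time.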

exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/config.yaml

ADDED

@@ -0,0 +1,256 @@
+config: conf/tuning/train_tacotron2.yaml
+print_config: false
+log_level: INFO
+dry_run: false
+iterator_type: sequence
+output_dir: exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause
+ngpu: 1
+seed: 0
+num_workers: 1
+num_att_plot: 3
+dist_backend: nccl
+dist_init_method: env://
+dist_world_size: null
+dist_rank: null
+local_rank: 0
+dist_master_addr: null
+dist_master_port: null
+dist_launcher: null
+multiprocessing_distributed: false
+cudnn_enabled: true
+cudnn_benchmark: false
+cudnn_deterministic: true
+collect_stats: false
+write_collected_feats: false
+max_epoch: 200
+patience: null
+val_scheduler_criterion:
+- valid
+- loss
+early_stopping_criterion:
+- valid
+- loss
+- min
+best_model_criterion:
+- - valid
+  - loss
+  - min
+- - train
+  - loss
+  - min
+keep_nbest_models: 5
+grad_clip: 1.0
+grad_clip_type: 2.0
+grad_noise: false
+accum_grad: 1
+no_forward_run: false
+resume: true
+train_dtype: float32
+use_amp: false
+log_interval: null
+unused_parameters: false
+use_tensorboard: true
+use_wandb: false
+wandb_project: null
+wandb_id: null
+pretrain_path: null
+init_param: []
+freeze_param: []
+num_iters_per_epoch: 500
+batch_size: 20
+valid_batch_size: null
+batch_bins: 3750000
+valid_batch_bins: null
+train_shape_file:
+- exp/tts_stats_raw_phn_jaconv_pyopenjtalk_accent_with_pause/train/text_shape.phn
+- exp/tts_stats_raw_phn_jaconv_pyopenjtalk_accent_with_pause/train/speech_shape
+valid_shape_file:
+- exp/tts_stats_raw_phn_jaconv_pyopenjtalk_accent_with_pause/valid/text_shape.phn
+- exp/tts_stats_raw_phn_jaconv_pyopenjtalk_accent_with_pause/valid/speech_shape
+batch_type: numel
+valid_batch_type: null
+fold_length:
+- 150
+- 240000
+sort_in_batch: descending
+sort_batch: descending
+multiple_iterator: false
+chunk_length: 500
+chunk_shift_ratio: 0.5
+num_cache_chunks: 1024
+train_data_path_and_name_and_type:
+- - dump/raw/tr_no_dev/text
+  - text
+  - text
+- - dump/raw/tr_no_dev/wav.scp
+  - speech
+  - sound
+valid_data_path_and_name_and_type:
+- - dump/raw/dev/text
+  - text
+  - text
+- - dump/raw/dev/wav.scp
+  - speech
+  - sound
+allow_variable_data_keys: false
+max_cache_size: 0.0
+max_cache_fd: 32
+valid_max_cache_size: null
+optim: adam
+optim_conf:
+  lr: 0.001
+  eps: 1.0e-06
+  weight_decay: 0.0
+scheduler: null
+scheduler_conf: {}

+token_list:
+- <blank>
+- <unk>
+- '1'
+- '2'
+- '0'
+- '3'
+- '4'
+- '-1'
+- '5'
+- a
+- o
+- '-2'
+- i
+- '-3'
+- u
+- e
+- k
+- n
+- t
+- '6'
+- r
+- '-4'
+- s
+- N
+- m
+- pau
+- '7'
+- sh
+- d
+- g
+- w
+- '8'
+- U
+- '-5'
+- I
+- cl
+- h
+- y
+- b
+- '9'
+- j
+- ts
+- ch
+- '-6'
+- z
+- p
+- '-7'
+- f
+- ky
+- ry
+- '-8'
+- gy
+- '-9'
+- hy
+- ny
+- '-10'
+- by
+- my
+- '-11'
+- '-12'
+- '-13'
+- py
+- '-14'
+- '-15'
+- v
+- '10'
+- '-16'
+- '-17'
+- '11'
+- '-21'
+- '-20'
+- '12'
+- '-19'
+- '13'
+- '-18'
+- '14'
+- dy
+- '15'
+- ty
+- '-22'
+- '16'
+- '18'
+- '19'
+- '17'
+- <sos/eos>

+odim: null
+model_conf: {}
+use_preprocessor: true
+token_type: phn
+bpemodel: null
+non_linguistic_symbols: null
+cleaner: jaconv
+g2p: pyopenjtalk_accent_with_pause
+feats_extract: fbank
+feats_extract_conf:
+  fs: 24000
+  fmin: 80
+  fmax: 7600
+  n_mels: 80
+  hop_length: 300
+  n_fft: 2048
+  win_length: 1200
+normalize: global_mvn
+normalize_conf:
+  stats_file: exp/tts_stats_raw_phn_jaconv_pyopenjtalk_accent_with_pause/train/feats_stats.npz
+tts: tacotron2
+tts_conf:
+  embed_dim: 512
+  elayers: 1
+  eunits: 512
+  econv_layers: 3
+  econv_chans: 512
+  econv_filts: 5
+  atype: location
+  adim: 512
+  aconv_chans: 32
+  aconv_filts: 15
+  cumulate_att_w: true
+  dlayers: 2
+  dunits: 1024
+  prenet_layers: 2
+  prenet_units: 256
+  postnet_layers: 5
+  postnet_chans: 512
+  postnet_filts: 5
+  output_activation: null
+  use_batch_norm: true
+  use_concate: true
+  use_residual: false
+  dropout_rate: 0.5
+  zoneout_rate: 0.1
+  reduction_factor: 1
+  spk_embed_dim: null
+  use_masking: true
+  bce_pos_weight: 5.0
+  use_guided_attn_loss: true
+  guided_attn_loss_sigma: 0.4
+  guided_attn_loss_lambda: 1.0
+pitch_extract: null
+pitch_extract_conf: {}
+pitch_normalize: null
+pitch_normalize_conf: {}
+energy_extract: null
+energy_extract_conf: {}
+energy_normalize: null
+energy_normalize_conf: {}
+required:
+- output_dir
+- token_list
+distributed: false
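The `feats_extract_conf` values in config.yaml fix the time resolution of the mel-spectrogram the model predicts. As a rough sketch, the arithmetic below converts them into milliseconds and frames per second. The helper `num_frames` is hypothetical and assumes a centered, padded STFT that yields `1 + n // hop` frames; the config itself does not state the padding mode.

```python
# Values copied from feats_extract_conf in config.yaml.
fs = 24000          # sampling rate in Hz
hop_length = 300    # samples between successive frames
win_length = 1200   # analysis window length in samples
n_fft = 2048        # FFT size in samples

hop_ms = 1000.0 * hop_length / fs      # frame shift in milliseconds
win_ms = 1000.0 * win_length / fs      # window length in milliseconds
frames_per_second = fs / hop_length    # spectrogram frames per second of audio
fft_bin_hz = fs / n_fft                # width of one FFT bin in Hz


def num_frames(num_samples, hop=hop_length):
    """Frame count for a centered, padded STFT (assumed, not confirmed by the config)."""
    return 1 + num_samples // hop


print(f"frame shift: {hop_ms} ms, window: {win_ms} ms")
print(f"frames per second: {frames_per_second}, FFT bin width: {fft_bin_hz} Hz")
print(f"frames in 240000 samples: {num_frames(240000)}")
```

Under these assumptions, the second `fold_length` entry (240000 samples) corresponds to 10 seconds of audio at 24 kHz.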

exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/images/attn_loss.png

ADDED

exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/images/backward_time.png

ADDED

exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/images/bce_loss.png

ADDED

exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/images/forward_time.png

ADDED

exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/images/iter_time.png

ADDED

exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/images/l1_loss.png

ADDED

exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/images/loss.png

ADDED

exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/images/lr_0.png

ADDED

exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/images/mse_loss.png

ADDED

exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/images/optim_step_time.png

ADDED

exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/images/train_time.png

ADDED

exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/train.loss.ave_5best.pth

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:7507b55253266b016f726db2bdbf0e65bf56ce11988c035c563a3415802509df
+size 107024142
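The three lines added for `train.loss.ave_5best.pth` are a Git LFS pointer, not the checkpoint itself: each line is a key, a single space, and a value. The following sketch parses that text and reports the size of the real file. The function name `parse_lfs_pointer` and the returned dictionary keys are illustrative choices, not part of any Git LFS tooling.

```python
# Pointer text exactly as it appears in the diff above.
POINTER_TEXT = """\
version https://git-lfs.github.com/spec/v1
oid sha256:7507b55253266b016f726db2bdbf0e65bf56ce11988c035c563a3415802509df
size 107024142
"""


def parse_lfs_pointer(text):
    """Split a Git LFS pointer into its fields (hypothetical helper)."""
    fields = {}
    for line in text.splitlines():
        if not line.strip():
            continue
        # Each line is "<key> <value>", separated by the first space.
        key, _, value = line.partition(" ")
        fields[key] = value
    # The oid field has the form "<hash algorithm>:<hex digest>".
    algo, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "hash_algo": algo,
        "digest": digest,
        "size_bytes": int(fields["size"]),
    }


pointer = parse_lfs_pointer(POINTER_TEXT)
size_mib = pointer["size_bytes"] / 2**20
print(pointer["hash_algo"], pointer["digest"])
print(f"checkpoint size: {size_mib:.2f} MiB")
```

The digest can be compared against a SHA-256 hash of the downloaded checkpoint to confirm that the file was fetched intact.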

meta.yaml

ADDED

@@ -0,0 +1,8 @@
+espnet: 0.8.0
+files:
+  model_file: exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/train.loss.ave_5best.pth
+python: "3.7.3 (default, Mar 27 2019, 22:11:17) \n[GCC 7.3.0]"
+timestamp: 1610420633.790906
+torch: 1.5.1
+yaml_files:
+  train_config: exp/tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_accent_with_pause/config.yaml
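The `timestamp` field in meta.yaml looks like a Unix epoch value in seconds. Assuming that interpretation (the file does not say so explicitly), a short sketch can turn it into a readable UTC date for the packaging time of the model:

```python
from datetime import datetime, timezone

# Value copied from meta.yaml; assumed to be seconds since the Unix epoch.
timestamp = 1610420633.790906

packed_at = datetime.fromtimestamp(timestamp, tz=timezone.utc)
print(packed_at.isoformat())
```

Under that assumption the model was packed on 12 January 2021 (UTC).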