import from zenodo

- README.md +51 -0

- exp/enh_stats_8k/train/feats_stats.npz +0 -0

- exp/enh_train_raw/64epoch.pth +3 -0

- exp/enh_train_raw/RESULTS.TXT +20 -0

- exp/enh_train_raw/config.yaml +154 -0

- exp/enh_train_raw/images/backward_time.png +0 -0

- exp/enh_train_raw/images/forward_time.png +0 -0

- exp/enh_train_raw/images/iter_time.png +0 -0

- exp/enh_train_raw/images/loss.png +0 -0

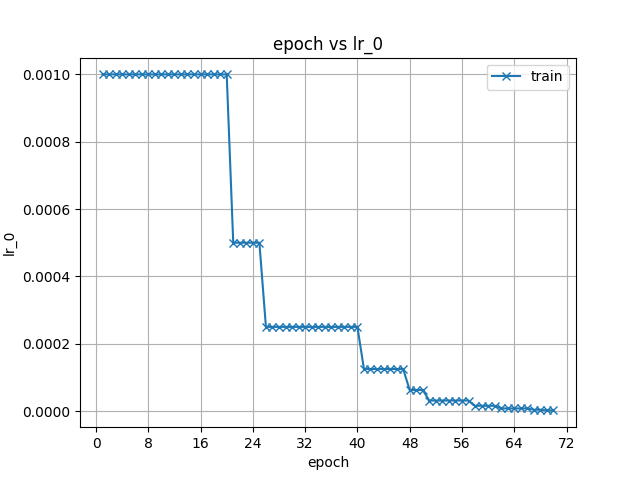

- exp/enh_train_raw/images/lr_0.png +0 -0

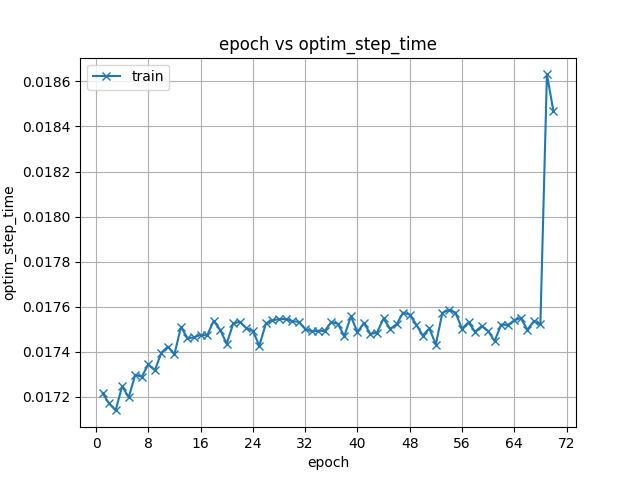

- exp/enh_train_raw/images/optim_step_time.png +0 -0

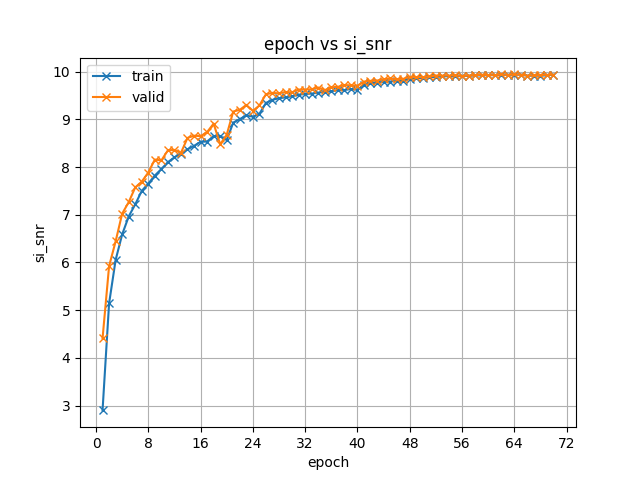

- exp/enh_train_raw/images/si_snr.png +0 -0

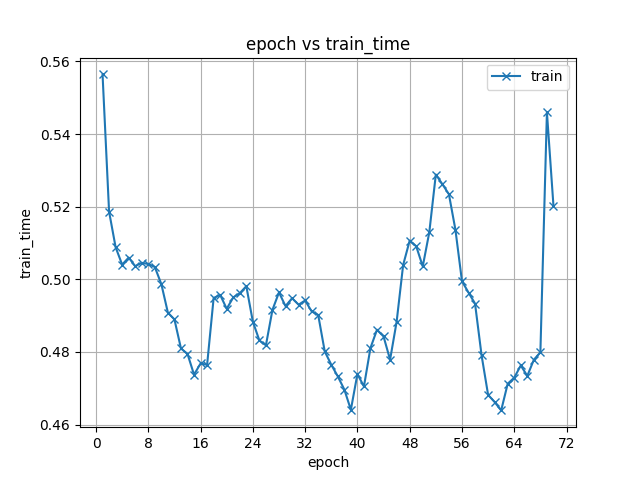

- exp/enh_train_raw/images/train_time.png +0 -0

- meta.yaml +8 -0

README.md

ADDED

@@ -0,0 +1,51 @@

---
tags:
- espnet
- audio
- speech-enhancement
- audio-to-audio
language: en
datasets:
- librimix
license: cc-by-4.0
---
## Example ESPnet2 ENH model
### `anogkongda/librimix_enh_train_raw_valid.si_snr.ave`
♻️ Imported from https://zenodo.org/record/4480771/

This model was trained by anogkongda using the librimix/enh1 recipe in [espnet](https://github.com/espnet/espnet/).

### Demo: How to use in ESPnet2
```python
# coming soon
```
### Citing ESPnet
```BibTex
@inproceedings{watanabe2018espnet,
  author={Shinji Watanabe and Takaaki Hori and Shigeki Karita and Tomoki Hayashi and Jiro Nishitoba and Yuya Unno and Nelson {Enrique Yalta Soplin} and Jahn Heymann and Matthew Wiesner and Nanxin Chen and Adithya Renduchintala and Tsubasa Ochiai},
  title={{ESPnet}: End-to-End Speech Processing Toolkit},
  year={2018},
  booktitle={Proceedings of Interspeech},
  pages={2207--2211},
  doi={10.21437/Interspeech.2018-1456},
  url={http://dx.doi.org/10.21437/Interspeech.2018-1456}
}
@inproceedings{hayashi2020espnet,
  title={{Espnet-TTS}: Unified, reproducible, and integratable open source end-to-end text-to-speech toolkit},
  author={Hayashi, Tomoki and Yamamoto, Ryuichi and Inoue, Katsuki and Yoshimura, Takenori and Watanabe, Shinji and Toda, Tomoki and Takeda, Kazuya and Zhang, Yu and Tan, Xu},
  booktitle={Proceedings of IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP)},
  pages={7654--7658},
  year={2020},
  organization={IEEE}
}
```
or arXiv:
```bibtex
@misc{watanabe2018espnet,
  title={ESPnet: End-to-End Speech Processing Toolkit},
  author={Shinji Watanabe and Takaaki Hori and Shigeki Karita and Tomoki Hayashi and Jiro Nishitoba and Yuya Unno and Nelson Enrique Yalta Soplin and Jahn Heymann and Matthew Wiesner and Nanxin Chen and Adithya Renduchintala and Tsubasa Ochiai},
  year={2018},
  eprint={1804.00015},
  archivePrefix={arXiv},
  primaryClass={cs.CL}
}
```

exp/enh_stats_8k/train/feats_stats.npz

ADDED

Binary file (778 Bytes).

exp/enh_train_raw/64epoch.pth

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:b5dc0e50e9ad1089cb8e5fa235e53d26b47e0177a0125abf2a275e6cb3b019f8
size 13878717
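The checkpoint is stored through Git LFS, so the diff shows only a three-line pointer: the spec version, the SHA-256 object id, and the size in bytes. The following is a minimal sketch for checking that a downloaded `64epoch.pth` matches this pointer. It assumes only the pointer layout shown above. The helper names and the local path are hypothetical and are not part of ESPnet or the Git LFS client.

```python
import hashlib
import os


def parse_lfs_pointer(text):
    """Split each 'key value' line of a Git LFS pointer into a dict."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    return fields


def make_lfs_pointer(data):
    """Build the pointer text Git LFS would store for the given bytes."""
    return (
        "version https://git-lfs.github.com/spec/v1\n"
        f"oid sha256:{hashlib.sha256(data).hexdigest()}\n"
        f"size {len(data)}\n"
    )


def _expected(pointer_text):
    """Return (size, hex digest) declared by a pointer; only sha256 oids are handled."""
    fields = parse_lfs_pointer(pointer_text)
    algo, _, hex_digest = fields["oid"].partition(":")
    if algo != "sha256":
        raise ValueError(f"unsupported oid algorithm: {algo}")
    return int(fields["size"]), hex_digest


def verify_bytes(data, pointer_text):
    """True if in-memory bytes match the pointer's size and SHA-256."""
    size, hex_digest = _expected(pointer_text)
    return len(data) == size and hashlib.sha256(data).hexdigest() == hex_digest


def verify_file(path, pointer_text, block_size=1 << 20):
    """Same check for a file on disk, hashing it in blocks to bound memory."""
    size, hex_digest = _expected(pointer_text)
    if os.path.getsize(path) != size:  # cheap check before hashing
        return False
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for block in iter(lambda: f.read(block_size), b""):
            digest.update(block)
    return digest.hexdigest() == hex_digest


# Pointer text committed for exp/enh_train_raw/64epoch.pth.
CHECKPOINT_POINTER = """version https://git-lfs.github.com/spec/v1
oid sha256:b5dc0e50e9ad1089cb8e5fa235e53d26b47e0177a0125abf2a275e6cb3b019f8
size 13878717
"""

if __name__ == "__main__":
    local_path = "exp/enh_train_raw/64epoch.pth"  # assumed download location
    if os.path.exists(local_path):
        print("match" if verify_file(local_path, CHECKPOINT_POINTER) else "mismatch")
```

The size comparison runs first because it is much cheaper than hashing. Hashing in 1 MiB blocks keeps memory use flat for the roughly 13.9 MB checkpoint.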

exp/enh_train_raw/RESULTS.TXT

ADDED

@@ -0,0 +1,20 @@

<!-- Generated by ./scripts/utils/show_enh_score.sh -->
# RESULTS
## Environments
- date: `Mon Jan 25 19:16:45 CST 2021`
- python version: `3.6.3 |Anaconda, Inc.| (default, Nov 20 2017, 20:41:42) [GCC 7.2.0]`
- espnet version: `espnet 0.9.7`
- pytorch version: `pytorch 1.6.0`
- Git hash: `dcaba2585e28b85c815807165ba9953565ee8694`
- Commit date: `Thu Jan 21 21:26:59 2021 +0800`


## enh_train_raw

config: ./conf/train.yaml

|dataset|STOI|SAR|SDR|SIR|
|---|---|---|---|---|
|enhanced_dev|0.845746|11.1029|10.6679|22.6471|
|enhanced_test|0.846766|10.9166|10.4193|22.0783|
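In the table, SAR, SDR, and SIR are in dB, and STOI is on a 0 to 1 scale. Two other settings use a different measure, scale-invariant SNR: the training objective (`loss_type: si_snr` in config.yaml) and the checkpoint-selection metric (`valid.si_snr` in the model name). The following is a plain-Python sketch of the usual SI-SNR definition: remove the mean from both signals, project the estimate onto the reference, and compare target and residual energies. It is an illustration rather than ESPnet's implementation. It omits the speaker-permutation search that a two-speaker model needs during training, and a loss to minimize would be its negative.

```python
import math


def si_snr(estimate, reference, eps=1e-8):
    """Scale-invariant SNR in dB between two equal-length 1-D signals."""
    if len(estimate) != len(reference):
        raise ValueError("signals must have the same length")
    # Remove the DC offset so a constant shift is not counted as signal.
    mean_est = sum(estimate) / len(estimate)
    mean_ref = sum(reference) / len(reference)
    est = [x - mean_est for x in estimate]
    ref = [x - mean_ref for x in reference]
    # Project the estimate onto the reference: s_target = (<est, ref> / ||ref||^2) * ref.
    dot = sum(e * r for e, r in zip(est, ref))
    ref_energy = sum(r * r for r in ref) + eps  # eps avoids division by zero
    target = [dot / ref_energy * r for r in ref]
    # Whatever the projection does not explain counts as error.
    residual = [e - t for e, t in zip(est, target)]
    target_energy = sum(t * t for t in target)
    residual_energy = sum(n * n for n in residual)
    return 10 * math.log10((target_energy + eps) / (residual_energy + eps))


if __name__ == "__main__":
    ref = [1.0, -1.0, 1.0, -1.0]
    est = [1.1, -0.9, 0.9, -1.1]  # reference plus an orthogonal perturbation
    print(f"{si_snr(est, ref):.2f} dB")
```

For the toy signals in the `__main__` block, the perturbation is orthogonal to the reference and carries 1% of its energy, so the result is about 20 dB. Rescaling or negating `est` leaves the value unchanged apart from the `eps` terms, which is the scale invariance the name refers to. Plain lists keep the sketch dependency-free. A real implementation would operate on arrays or tensors.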

exp/enh_train_raw/config.yaml

ADDED

@@ -0,0 +1,154 @@

config: ./conf/train.yaml
print_config: false
log_level: INFO
dry_run: false
iterator_type: chunk
output_dir: exp/enh_train_raw
ngpu: 1
seed: 0
num_workers: 4
num_att_plot: 3
dist_backend: nccl
dist_init_method: env://
dist_world_size: 4
dist_rank: 0
local_rank: 0
dist_master_addr: localhost
dist_master_port: 48369
dist_launcher: null
multiprocessing_distributed: true
cudnn_enabled: true
cudnn_benchmark: false
cudnn_deterministic: true
collect_stats: false
write_collected_feats: false
max_epoch: 200
patience: 5
val_scheduler_criterion:
- valid
- loss
early_stopping_criterion:
- valid
- loss
- min
best_model_criterion:
-   - valid
    - si_snr
    - max
-   - valid
    - loss
    - min
keep_nbest_models: 1
grad_clip: 5.0
grad_clip_type: 2.0
grad_noise: false
accum_grad: 1
no_forward_run: false
resume: true
train_dtype: float32
use_amp: false
log_interval: null
unused_parameters: false
use_tensorboard: true
use_wandb: false
wandb_project: null
wandb_id: null
pretrain_path: null
init_param: []
freeze_param: []
num_iters_per_epoch: null
batch_size: 16
valid_batch_size: null
batch_bins: 1000000
valid_batch_bins: null
train_shape_file:
- exp/enh_stats_8k/train/speech_mix_shape
- exp/enh_stats_8k/train/speech_ref1_shape
- exp/enh_stats_8k/train/speech_ref2_shape
- exp/enh_stats_8k/train/noise_ref1_shape
valid_shape_file:
- exp/enh_stats_8k/valid/speech_mix_shape
- exp/enh_stats_8k/valid/speech_ref1_shape
- exp/enh_stats_8k/valid/speech_ref2_shape
- exp/enh_stats_8k/valid/noise_ref1_shape
batch_type: folded
valid_batch_type: null
fold_length:
- 80000
- 80000
- 80000
- 80000
sort_in_batch: descending
sort_batch: descending
multiple_iterator: false
chunk_length: 24000
chunk_shift_ratio: 0.5
num_cache_chunks: 1024
train_data_path_and_name_and_type:
-   - dump/raw/train/wav.scp
    - speech_mix
    - sound
-   - dump/raw/train/spk1.scp
    - speech_ref1
    - sound
-   - dump/raw/train/spk2.scp
    - speech_ref2
    - sound
-   - dump/raw/train/noise1.scp
    - noise_ref1
    - sound
valid_data_path_and_name_and_type:
-   - dump/raw/dev/wav.scp
    - speech_mix
    - sound
-   - dump/raw/dev/spk1.scp
    - speech_ref1
    - sound
-   - dump/raw/dev/spk2.scp
    - speech_ref2
    - sound
-   - dump/raw/dev/noise1.scp
    - noise_ref1
    - sound
allow_variable_data_keys: false
max_cache_size: 0.0
max_cache_fd: 32
valid_max_cache_size: null
optim: adam
optim_conf:
    lr: 0.001
    weight_decay: 0
scheduler: reducelronplateau
scheduler_conf:
    mode: min
    factor: 0.5
    patience: 1
init: xavier_uniform
model_conf:
    loss_type: si_snr
use_preprocessor: false
encoder: conv
encoder_conf:
    channel: 512
    kernel_size: 16
    stride: 8
separator: tcn
separator_conf:
    num_spk: 2
    layer: 8
    stack: 3
    bottleneck_dim: 128
    hidden_dim: 512
    kernel: 3
    causal: false
    norm_type: gLN
    nonlinear: relu
decoder: conv
decoder_conf:
    channel: 512
    kernel_size: 16
    stride: 8
required:
- output_dir
version: 0.9.7
distributed: true
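Several of these settings are sample counts, so converting them to time helps. The sketch assumes an 8 kHz sampling rate, which the `enh_stats_8k` directory suggests but the config does not state. From that rate it derives three things: the chunk duration, the number of frames the conv encoder produces per chunk, and the temporal receptive field of the TCN separator. It makes these further assumptions:

- The encoder is an unpadded 1-D convolution with `kernel_size: 16` and `stride: 8`.
- The TCN uses dilations 1, 2, ..., 2^(layer-1) in each of its `stack` repeats, as in Conv-TasNet.
- The chunking helper is a simplified stand-in for ESPnet's chunk iterator, which may randomize the first offset and handle short utterances differently.

```python
# Values copied from config.yaml; SAMPLE_RATE is an assumption based on
# the "enh_stats_8k" directory name.
SAMPLE_RATE = 8000
CHUNK_LENGTH = 24000                          # chunk_length (samples)
CHUNK_SHIFT_RATIO = 0.5                       # chunk_shift_ratio
ENC_KERNEL, ENC_STRIDE = 16, 8                # encoder_conf kernel_size / stride
TCN_LAYERS, TCN_STACKS, TCN_KERNEL = 8, 3, 3  # separator_conf layer / stack / kernel


def encoder_frames(num_samples, kernel=ENC_KERNEL, stride=ENC_STRIDE):
    """Frames produced by an unpadded 1-D conv encoder (assumed, see lead-in)."""
    return (num_samples - kernel) // stride + 1


def tcn_receptive_field(layers=TCN_LAYERS, stacks=TCN_STACKS, kernel=TCN_KERNEL):
    """Receptive field in encoder frames, assuming dilation 2**i in layer i of each stack."""
    per_stack = sum((kernel - 1) * 2 ** i for i in range(layers))
    return 1 + stacks * per_stack


def frames_to_samples(frames, kernel=ENC_KERNEL, stride=ENC_STRIDE):
    """Input samples spanned by `frames` consecutive encoder frames."""
    return (frames - 1) * stride + kernel


def chunk_starts(num_samples, length=CHUNK_LENGTH, shift_ratio=CHUNK_SHIFT_RATIO):
    """Start offsets of fixed-length overlapping chunks; inputs shorter than one chunk yield none."""
    if num_samples < length:
        return []
    shift = int(length * shift_ratio)
    return list(range(0, num_samples - length + 1, shift))


if __name__ == "__main__":
    rf = tcn_receptive_field()
    print(f"chunk: {CHUNK_LENGTH / SAMPLE_RATE:.1f} s -> {encoder_frames(CHUNK_LENGTH)} frames")
    print(f"TCN receptive field: {rf} frames = {frames_to_samples(rf) / SAMPLE_RATE:.3f} s")
    print(f"chunk starts for a 5 s input: {chunk_starts(5 * SAMPLE_RATE)}")
```

Under these assumptions, a 3 s chunk yields 2999 encoder frames. Each separator output frame depends on 1531 encoder frames, or 12256 samples (about 1.53 s), so the receptive field covers roughly half a chunk. With `chunk_shift_ratio: 0.5`, consecutive chunks overlap by 12000 samples (1.5 s).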

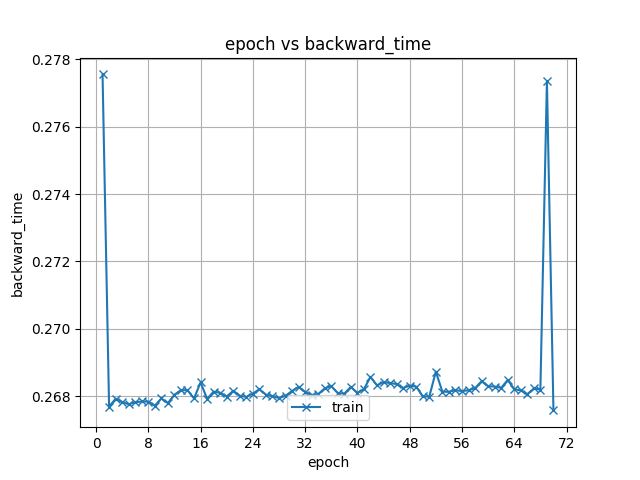

exp/enh_train_raw/images/backward_time.png

ADDED

|

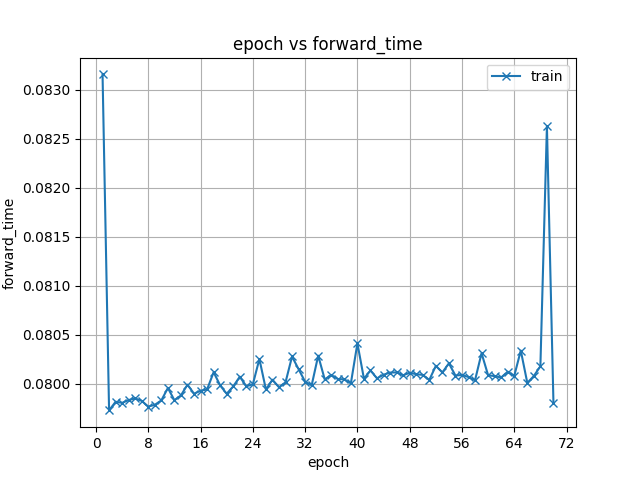

exp/enh_train_raw/images/forward_time.png

ADDED

|

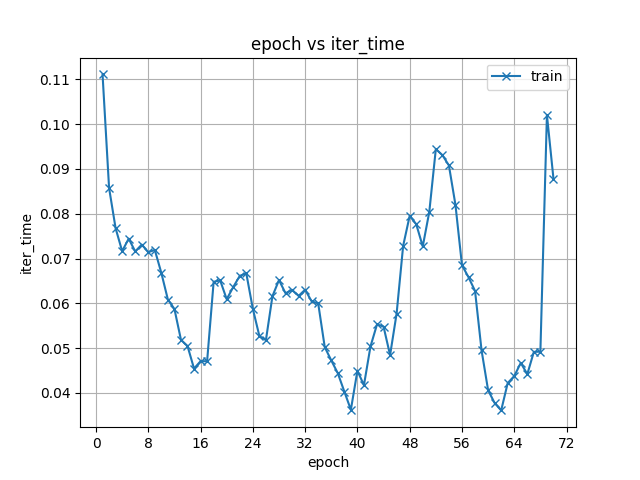

exp/enh_train_raw/images/iter_time.png

ADDED

|

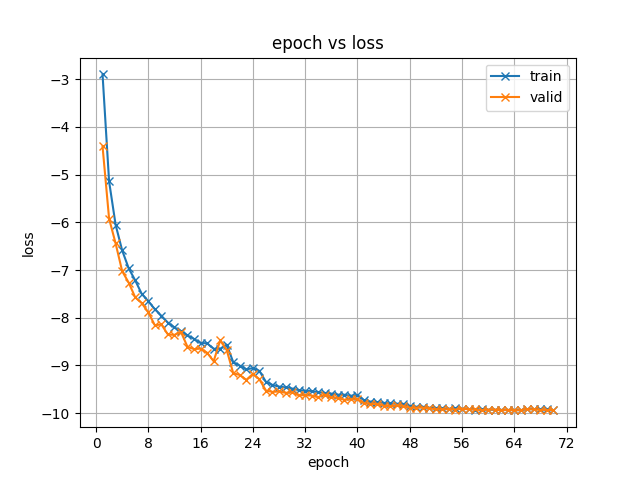

exp/enh_train_raw/images/loss.png

ADDED

|

exp/enh_train_raw/images/lr_0.png

ADDED

|

exp/enh_train_raw/images/optim_step_time.png

ADDED

|

exp/enh_train_raw/images/si_snr.png

ADDED

|

exp/enh_train_raw/images/train_time.png

ADDED

|
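The `lr_0.png` and `loss.png` curves come from a run with these settings:

- Adam with `lr: 0.001`.
- A `reducelronplateau` scheduler (`mode: min`, `factor: 0.5`, `patience: 1`) driven by the validation loss (`val_scheduler_criterion`).
- Early stopping on the validation loss with `patience: 5`.

The following sketch replays those rules on a list of per-epoch validation losses to show when the learning rate halves and when training would stop. It is a simplified, hypothetical re-implementation with two known gaps. First, PyTorch's `ReduceLROnPlateau` also applies a relative improvement threshold (1e-4 by default), a cooldown, and `min_lr`, none of which are modeled here. Second, the early-stopping rule ("stop once more than `patience` epochs have passed since the best epoch") is an assumption about ESPnet's trainer.

```python
def replay_schedule(valid_losses, lr=0.001, factor=0.5, lr_patience=1, stop_patience=5):
    """Return (LR used in each epoch, epoch index where training stops, or None)."""
    lrs = []
    best = float("inf")
    best_epoch = -1
    bad_epochs = 0  # consecutive non-improving epochs; reset after each LR cut
    for epoch, loss in enumerate(valid_losses):
        lrs.append(lr)  # LR in effect while this epoch was trained
        if loss < best:  # strict improvement, no threshold (simplification)
            best, best_epoch, bad_epochs = loss, epoch, 0
        else:
            bad_epochs += 1
            if bad_epochs > lr_patience:  # plateau: cut the LR by `factor`
                lr *= factor
                bad_epochs = 0
        # Assumed early-stopping rule on the same validation loss.
        if epoch - best_epoch > stop_patience:
            return lrs, epoch
    return lrs, None


if __name__ == "__main__":
    losses = [1.0, 0.9, 0.95, 0.96, 0.97, 0.98, 0.99, 1.0]
    lrs, stop = replay_schedule(losses)
    print(lrs, stop)
```

The example losses improve only up to epoch 1. After that, the rate halves after every second non-improving epoch (0.001, then 0.0005, then 0.00025), and training stops at epoch index 7, six epochs after the best one.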

meta.yaml

ADDED

@@ -0,0 +1,8 @@

espnet: 0.9.7
files:
    model_file: exp/enh_train_raw/64epoch.pth
python: "3.6.3 |Anaconda, Inc.| (default, Nov 20 2017, 20:41:42) \n[GCC 7.2.0]"
timestamp: 1611988263.681143
torch: 1.6.0
yaml_files:
    train_config: exp/enh_train_raw/config.yaml