add usage section


README.md

CHANGED

|

@@ -12,25 +12,13 @@ license: cc-by-4.0

|

|

| 12 |

- [Table of Contents](#table-of-contents)

|

| 13 |

- [Dataset Description](#dataset-description)

|

| 14 |

- [Dataset Summary](#dataset-summary)

|

| 15 |

-

|

| 16 |

-

- [Languages](#languages)

|

| 17 |

- [Dataset Structure](#dataset-structure)

|

| 18 |

- [Data Instances](#data-instances)

|

| 19 |

- [Data Fields](#data-fields)

|

| 20 |

- [Data Splits](#data-splits)

|

| 21 |

- [Dataset Creation](#dataset-creation)

|

| 22 |

- [Curation Rationale](#curation-rationale)

|

| 23 |

-

- [Source Data](#source-data)

|

| 24 |

-

- [Initial Data Collection and Normalization](#initial-data-collection-and-normalization)

|

| 25 |

-

- [Who are the source language producers?](#who-are-the-source-language-producers)

|

| 26 |

-

- [Annotations](#annotations)

|

| 27 |

-

- [Annotation process](#annotation-process)

|

| 28 |

-

- [Who are the annotators?](#who-are-the-annotators)

|

| 29 |

-

- [Personal and Sensitive Information](#personal-and-sensitive-information)

|

| 30 |

-

- [Considerations for Using the Data](#considerations-for-using-the-data)

|

| 31 |

-

- [Social Impact of Dataset](#social-impact-of-dataset)

|

| 32 |

-

- [Discussion of Biases](#discussion-of-biases)

|

| 33 |

-

- [Other Known Limitations](#other-known-limitations)

|

| 34 |

- [Additional Information](#additional-information)

|

| 35 |

- [Dataset Curators](#dataset-curators)

|

| 36 |

- [Licensing Information](#licensing-information)

|

|

@@ -38,7 +26,7 @@ license: cc-by-4.0

|

|

| 38 |

- [Contributions](#contributions)

|

| 39 |

## Dataset Description

|

| 40 |

- **Homepage:** https://google.github.io/cartoonset/

|

| 41 |

-

- **Repository:**

|

| 42 |

- **Paper:** XGAN: Unsupervised Image-to-Image Translation for Many-to-Many Mappings

|

| 43 |

- **Leaderboard:**

|

| 44 |

- **Point of Contact:**

|

|

@@ -46,58 +34,64 @@ license: cc-by-4.0

|

|

| 46 |

|

| 47 |

|

| 48 |

|

| 49 |

-

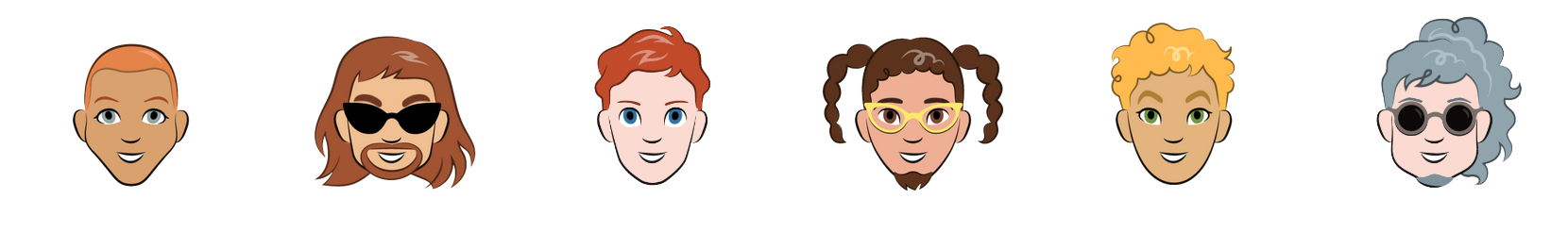

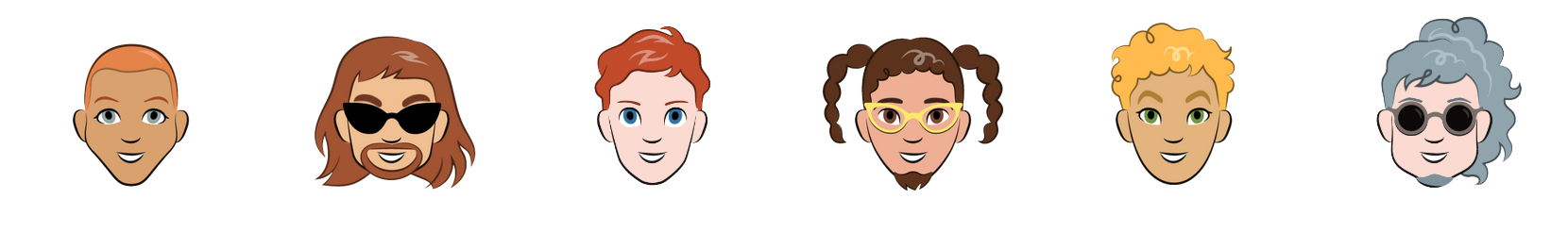

Cartoon Set is a collection of random, 2D cartoon avatar images. The cartoons vary in 10 artwork categories, 4 color categories, and 4 proportion categories, with a total of ~10^13 possible combinations. We provide sets of 10k and 100k randomly chosen cartoons and labeled attributes.

|


| 50 |

|

| 51 |

-

### Supported Tasks and Leaderboards

|

| 52 |

-

- `image-classification`: The goal of this task is to classify a given image into one of 10 classes. The leaderboard is available [here](https://paperswithcode.com/sota/image-classification-on-Cartoon Set).

|

| 53 |

-

### Languages

|

| 54 |

-

English

|

| 55 |

## Dataset Structure

|

| 56 |

### Data Instances

|

| 57 |

A sample from the training set is provided below:

|

| 58 |

```

|

| 59 |

{

|

| 60 |

-

'

|

| 61 |

-

'label': 0

|

| 62 |

}

|

| 63 |

```

|

| 64 |

### Data Fields

|

| 65 |

-

-

|

| 66 |

-

- label: 0-9 with the following correspondence

|

| 67 |

-

0 airplane

|

| 68 |

-

1 automobile

|

| 69 |

-

2 bird

|

| 70 |

-

3 cat

|

| 71 |

-

4 deer

|

| 72 |

-

5 dog

|

| 73 |

-

6 frog

|

| 74 |

-

7 horse

|

| 75 |

-

8 ship

|

| 76 |

-

9 truck

|

| 77 |

### Data Splits

|

| 78 |

-

Train

|

| 79 |

## Dataset Creation

|

| 80 |

### Curation Rationale

|

| 81 |

[More Information Needed]

|

| 82 |

-

### Source Data

|

| 83 |

-

#### Initial Data Collection and Normalization

|

| 84 |

-

[More Information Needed]

|

| 85 |

-

#### Who are the source language producers?

|

| 86 |

-

[More Information Needed]

|

| 87 |

-

### Annotations

|

| 88 |

-

#### Annotation process

|

| 89 |

-

[More Information Needed]

|

| 90 |

-

#### Who are the annotators?

|

| 91 |

-

[More Information Needed]

|

| 92 |

-

### Personal and Sensitive Information

|

| 93 |

-

[More Information Needed]

|

| 94 |

-

## Considerations for Using the Data

|

| 95 |

-

### Social Impact of Dataset

|

| 96 |

-

[More Information Needed]

|

| 97 |

-

### Discussion of Biases

|

| 98 |

-

[More Information Needed]

|

| 99 |

-

### Other Known Limitations

|

| 100 |

-

[More Information Needed]

|

| 101 |

## Additional Information

|

| 102 |

### Dataset Curators

|

| 103 |

[More Information Needed]

|

|

@@ -105,13 +99,24 @@ Train and Test

|

|

| 105 |

[More Information Needed]

|

| 106 |

### Citation Information

|

| 107 |

```

|

| 108 |

-

@

|

| 109 |

-

|

| 110 |

-

|

| 111 |

-

|

| 112 |

-

|


| 113 |

}

|

| 114 |

```

|

| 115 |

### Contributions

|

| 116 |

-

|

| 117 |

-

Thanks to [@czabo](https://github.com/czabo) for adding this dataset.

|

|

|

|

| 12 |

- [Table of Contents](#table-of-contents)

|

| 13 |

- [Dataset Description](#dataset-description)

|

| 14 |

- [Dataset Summary](#dataset-summary)

|

| 15 |

+

- [Usage](#usage)

|

|

|

|

| 16 |

- [Dataset Structure](#dataset-structure)

|

| 17 |

- [Data Instances](#data-instances)

|

| 18 |

- [Data Fields](#data-fields)

|

| 19 |

- [Data Splits](#data-splits)

|

| 20 |

- [Dataset Creation](#dataset-creation)

|

| 21 |

- [Curation Rationale](#curation-rationale)

|


| 22 |

- [Additional Information](#additional-information)

|

| 23 |

- [Dataset Curators](#dataset-curators)

|

| 24 |

- [Licensing Information](#licensing-information)

|

|

|

|

| 26 |

- [Contributions](#contributions)

|

| 27 |

## Dataset Description

|

| 28 |

- **Homepage:** https://google.github.io/cartoonset/

|

| 29 |

+

- **Repository:** https://github.com/google/cartoonset/

|

| 30 |

- **Paper:** XGAN: Unsupervised Image-to-Image Translation for Many-to-Many Mappings

|

| 31 |

- **Leaderboard:**

|

| 32 |

- **Point of Contact:**

|

|

|

|

| 34 |

|

| 35 |

|

| 36 |

|

| 37 |

+

[Cartoon Set](https://google.github.io/cartoonset/) is a collection of random, 2D cartoon avatar images. The cartoons vary in 10 artwork categories, 4 color categories, and 4 proportion categories, with a total of ~10^13 possible combinations. We provide sets of 10k and 100k randomly chosen cartoons and labeled attributes.

|

| 38 |

+

|

| 39 |

+

#### Usage

|

| 40 |

+

`cartoonset` provides the images as byte strings, which gives you more flexibility in how you load the data. Two approaches are shown here:

|

| 41 |

+

|

| 42 |

+

**Using PIL:**

```python
import datasets
from io import BytesIO
from PIL import Image

ds = datasets.load_dataset("cgarciae/cartoonset", "10k")  # or "100k"

def process_fn(sample):
    # Decode the raw PNG bytes into a PIL image.
    img = Image.open(BytesIO(sample["img_bytes"]))
    ...
    return {"img": img}

# Replace the raw byte column with the decoded image.
ds = ds.map(process_fn, remove_columns=["img_bytes"])
```

|

| 57 |

+

|

| 58 |

+

**Using TensorFlow:**

```python
import datasets
import tensorflow as tf

# Load the "train" split directly so that iterating yields sample dicts.
hfds = datasets.load_dataset("cgarciae/cartoonset", "10k", split="train")  # or "100k"

ds = tf.data.Dataset.from_generator(
    lambda: hfds,
    output_signature={
        "img_bytes": tf.TensorSpec(shape=(), dtype=tf.string),
    },
)

channels = 3  # 3 decodes to RGB; use 4 to keep an alpha channel

def process_fn(sample):
    # Decode the raw PNG bytes into a uint8 image tensor.
    img = tf.image.decode_png(sample["img_bytes"], channels=channels)
    ...
    return {"img": img}

ds = ds.map(process_fn)
```

|

| 79 |

|


| 80 |

## Dataset Structure

|

| 81 |

### Data Instances

|

| 82 |

A sample from the training set is provided below:

|

| 83 |

```

|

| 84 |

{

|

| 85 |

+

'img_bytes': b'0x...',

|

|

|

|

| 86 |

}

|

| 87 |

```

|

| 88 |

### Data Fields

|

| 89 |

+

- img_bytes: A byte string containing the raw data of a 500x500 PNG image.
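Because `img_bytes` holds a complete PNG file, its dimensions can be checked without decoding the pixels. The sketch below is a minimal illustration using only the standard library; `png_size` and `fake_header` are hypothetical names introduced here and are not part of the dataset or of `datasets`. It relies on the PNG layout, in which the 8-byte signature is followed by the `IHDR` chunk carrying width and height as big-endian 32-bit integers.

```python
import struct
from typing import Tuple

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"

def png_size(img_bytes: bytes) -> Tuple[int, int]:
    """Return (width, height) read from the IHDR chunk of a PNG byte string."""
    if len(img_bytes) < 24 or img_bytes[:8] != PNG_SIGNATURE:
        raise ValueError("not a PNG byte string")
    if img_bytes[12:16] != b"IHDR":
        raise ValueError("missing IHDR chunk")
    # Width and height are the first two fields of the IHDR data.
    width, height = struct.unpack(">II", img_bytes[16:24])
    return width, height

# Hypothetical stand-in for sample["img_bytes"]: only the first 24 bytes
# of a PNG header, which is all the helper needs to read.
fake_header = (
    PNG_SIGNATURE
    + struct.pack(">I", 13)         # IHDR data length
    + b"IHDR"
    + struct.pack(">II", 500, 500)  # width, height
)
print(png_size(fake_header))
```

With a real sample, the same call would be `png_size(sample["img_bytes"])`, which is a cheap way to confirm the documented 500x500 size before running a full decode.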

|


| 90 |

### Data Splits

|

| 91 |

+

Train

|

| 92 |

## Dataset Creation

|

| 93 |

### Curation Rationale

|

| 94 |

[More Information Needed]

|


| 95 |

## Additional Information

|

| 96 |

### Dataset Curators

|

| 97 |

[More Information Needed]

|

|

|

|

| 99 |

[More Information Needed]

|

| 100 |

### Citation Information

|

| 101 |

```

|

| 102 |

+

@article{DBLP:journals/corr/abs-1711-05139,

|

| 103 |

+

author = {Amelie Royer and

|

| 104 |

+

Konstantinos Bousmalis and

|

| 105 |

+

Stephan Gouws and

|

| 106 |

+

Fred Bertsch and

|

| 107 |

+

Inbar Mosseri and

|

| 108 |

+

Forrester Cole and

|

| 109 |

+

Kevin Murphy},

|

| 110 |

+

title = {{XGAN:} Unsupervised Image-to-Image Translation for many-to-many Mappings},

|

| 111 |

+

journal = {CoRR},

|

| 112 |

+

volume = {abs/1711.05139},

|

| 113 |

+

year = {2017},

|

| 114 |

+

url = {http://arxiv.org/abs/1711.05139},

|

| 115 |

+

eprinttype = {arXiv},

|

| 116 |

+

eprint = {1711.05139},

|

| 117 |

+

timestamp = {Mon, 13 Aug 2018 16:47:38 +0200},

|

| 118 |

+

biburl = {https://dblp.org/rec/journals/corr/abs-1711-05139.bib},

|

| 119 |

+

bibsource = {dblp computer science bibliography, https://dblp.org}

|

| 120 |

}

|

| 121 |

```

|

| 122 |

### Contributions

|
