
Librarian Bot: Add language metadata for dataset

This pull request aims to enrich the metadata of your dataset by adding language metadata to the `YAML` block of your dataset card `README.md`.

How did we find this information?

- The librarian-bot downloaded a sample of rows from your dataset using the [dataset-server](https://huggingface.co/docs/datasets-server/) library.

- The librarian-bot used a language detection model to predict the likely language of your dataset. This was done on columns likely to contain text data.

- Predictions for rows are aggregated by language, and a filter is applied to remove languages that are very infrequently predicted.

- A confidence threshold is applied to remove languages that are not confidently predicted.
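The aggregation and filtering steps above can be sketched roughly as follows. This is a minimal illustration, not Librarian Bot's actual code: the function name `aggregate_language_predictions` and the thresholds `min_frequency` and `min_mean_prob` are hypothetical, and the per-row predictions are assumed to arrive as `(language_code, probability)` pairs from some language detection model.

```python
from collections import defaultdict


def aggregate_language_predictions(predictions, min_frequency=0.1, min_mean_prob=0.8):
    """Aggregate per-row predictions and keep frequent, confident languages.

    predictions: list of (language_code, probability) pairs, one per sampled row.
    min_frequency and min_mean_prob are assumed values, chosen for illustration.
    Returns a dict mapping language code to its mean predicted probability.
    """
    if not predictions:
        return {}

    # Group the predicted probabilities by language code.
    probs_by_lang = defaultdict(list)
    for lang, prob in predictions:
        probs_by_lang[lang].append(prob)

    total = len(predictions)
    kept = {}
    for lang, probs in probs_by_lang.items():
        frequency = len(probs) / total      # share of rows predicted as this language
        mean_prob = sum(probs) / len(probs)  # mean confidence for this language
        # Drop languages that are rarely predicted or not confidently predicted.
        if frequency >= min_frequency and mean_prob >= min_mean_prob:
            kept[lang] = mean_prob
    return kept


# Toy input: mostly confident English rows plus one low-confidence French row.
sample = [("en", 0.99)] * 9 + [("fr", 0.6)]
detected = aggregate_language_predictions(sample)
```

With this toy input only `en` survives, because the single `fr` row falls below the assumed confidence threshold.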

The following languages were detected with the following mean probabilities:

- English (en): 99.74%

If this PR is merged, the language metadata will be added to your dataset card. This will allow users to filter datasets by language on the [Hub](https://huggingface.co/datasets).

If the language metadata is incorrect, please feel free to close this PR.

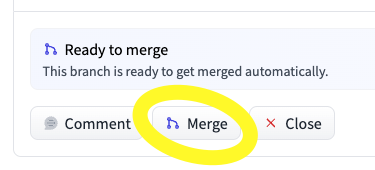

To merge this PR, you can use the merge button below the PR:

This PR comes courtesy of [Librarian Bot](https://huggingface.co/librarian-bots). If you have any feedback, queries, or need assistance, please don't hesitate to reach out to @davanstrien.

    @@ -1,32 +1,34 @@
    ----
    -[old lines 2-8: content not preserved in this capture]
    -  - name:
    -    dtype: string
    -[old lines 11-29: content not preserved in this capture]
    +---
    +language:
    +- en
    +task_categories:
    +- sentence-similarity
    +dataset_info:
    +  config_name: triplet
    +  features:
    +  - name: query
    +    dtype: string
    +  - name: positive
    +    dtype: string
    +  - name: negative
    +    dtype: string
    +  splits:
    +  - name: train
    +    num_bytes: 12581563.792427007
    +    num_examples: 42076
    +  - name: test
    +    num_bytes: 3149278.207572993
    +    num_examples: 10532
    +  download_size: 1254810
    +  dataset_size: 15730842
    +configs:
    +- config_name: triplet
    +  data_files:
    +  - split: train
    +    path: triplet/train-*
    +  - split: test
    +    path: triplet/test-*
    +---
     
     This dataset is the triplet subset of https://huggingface.co/datasets/sentence-transformers/sql-questions with a train and test split.
     
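For readers who want to apply the same kind of change by hand, here is a minimal sketch of inserting a `language` key at the top of a dataset card's YAML front matter. It is an illustration only, not how Librarian Bot edits cards: the helper `add_language_metadata` is hypothetical, uses plain string handling rather than a YAML parser, and assumes the front matter, if present, starts on the first line with `---`.

```python
def add_language_metadata(readme_text, languages):
    """Return readme_text with a `language:` list added to its YAML front matter.

    Hypothetical helper: if the card has no front matter, a new block is created;
    if a top-level `language:` key already exists, the text is returned unchanged.
    """
    lines = readme_text.splitlines(keepends=True)
    block = ["language:\n"] + [f"- {code}\n" for code in languages]

    if lines and lines[0].strip() == "---":
        # Scan the existing front matter for a top-level `language:` key.
        for line in lines[1:]:
            if line.strip() == "---":
                break  # reached the end of the front matter
            if line.startswith("language:"):
                return readme_text  # already present, leave the card as-is
        # Insert the new key directly after the opening `---`.
        return "".join(lines[:1] + block + lines[1:])

    # No front matter yet: prepend a new block containing only the language key.
    return "".join(["---\n"] + block + ["---\n"] + lines)


card = "---\ntask_categories:\n- sentence-similarity\n---\n\nBody text.\n"
updated = add_language_metadata(card, ["en"])
```

Placing the key first mirrors the ordering in the diff above. A real tool would more likely parse and re-serialize the YAML so that nested keys and comments are handled safely.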