Creating your own dataset

Sometimes the dataset that you need to build an NLP application doesn’t exist, so you’ll need to create it yourself. In this section we’ll show you how to create a corpus of GitHub issues, which are commonly used to track bugs or features in GitHub repositories. This corpus could be used for various purposes, including:

- Exploring how long it takes to close open issues or pull requests

- Training a multilabel classifier that can tag issues with metadata based on the issue’s description (e.g., “bug,” “enhancement,” or “question”)

- Creating a semantic search engine to find which issues match a user’s query

Here we’ll focus on creating the corpus, and in the next section we’ll tackle the semantic search application. To keep things meta, we’ll use the GitHub issues associated with a popular open source project: 🤗 Datasets! Let’s take a look at how to get the data and explore the information contained in these issues.

Getting the data

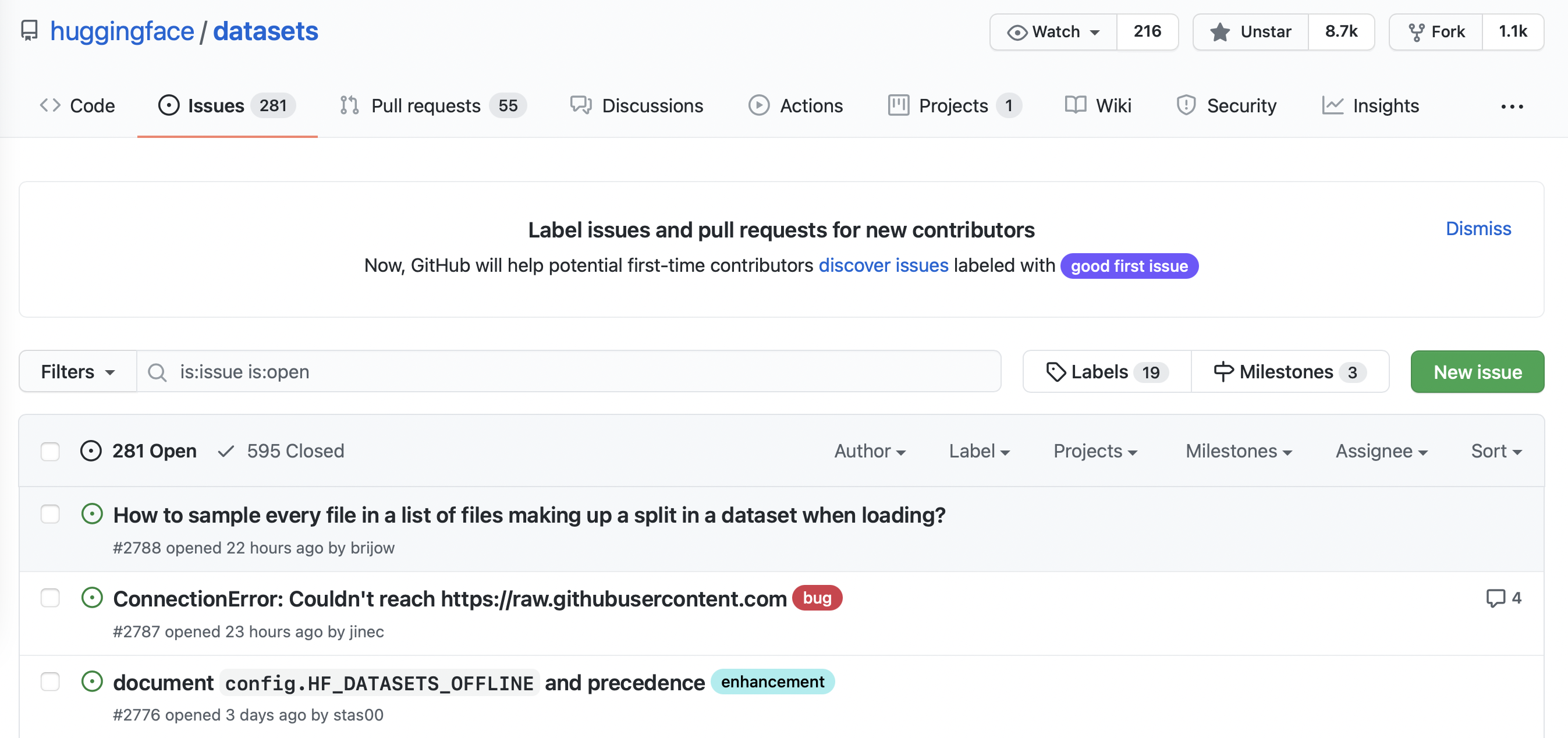

You can find all the issues in 🤗 Datasets by navigating to the repository’s Issues tab. As shown in the following screenshot, at the time of writing there were 331 open issues and 668 closed ones.

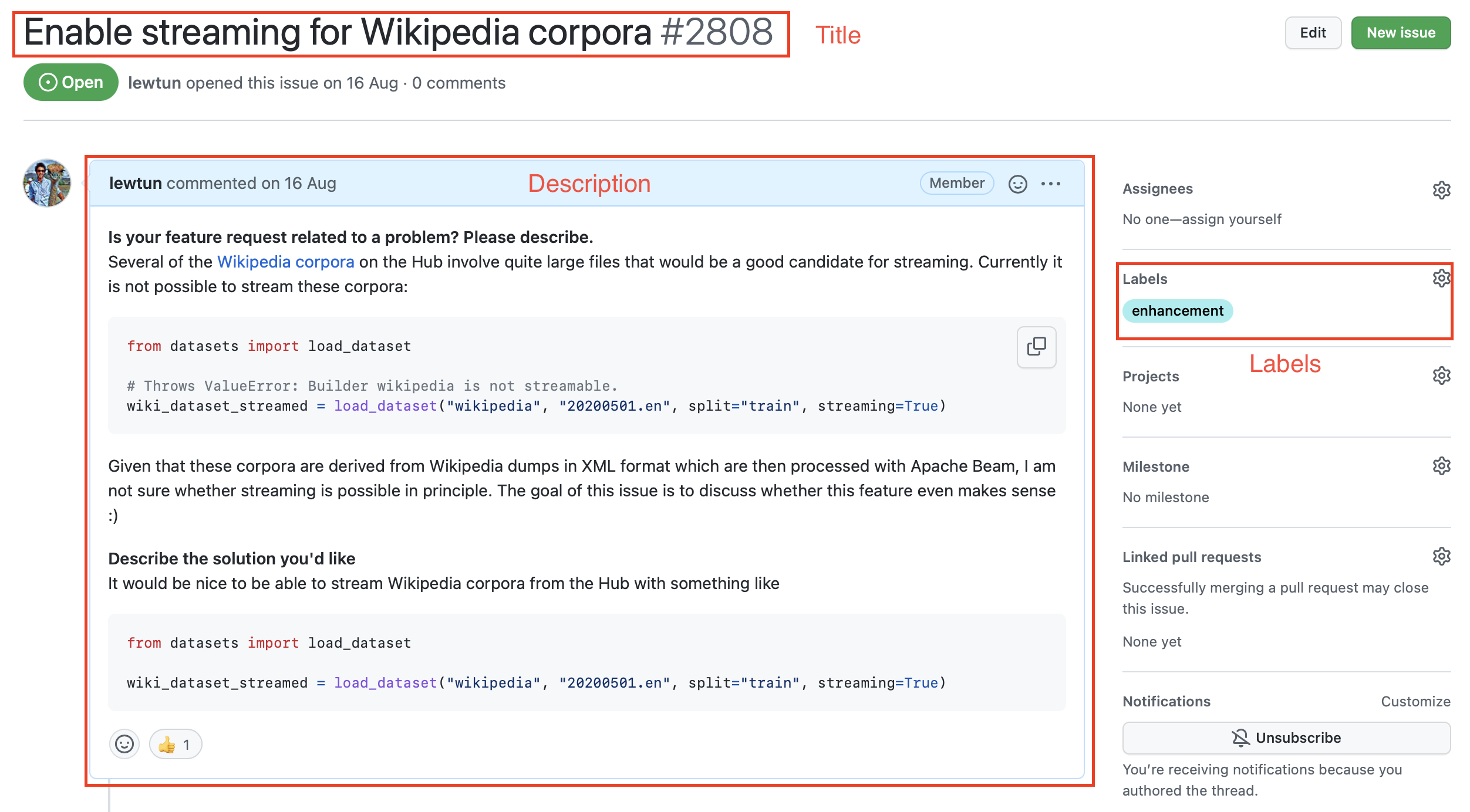

If you click on one of these issues you’ll find it contains a title, a description, and a set of labels that characterize the issue. An example is shown in the screenshot below.

To download all the repository’s issues, we’ll use the GitHub REST API to poll the Issues endpoint. This endpoint returns a list of JSON objects, with each object containing a large number of fields that include the title and description as well as metadata about the status of the issue and so on.

A convenient way to download the issues is via the requests library, which is the standard way for making HTTP requests in Python. You can install the library by running:

!pip install requests

Once the library is installed, you can make GET requests to the Issues endpoint by invoking the requests.get() function. For example, you can run the following command to retrieve the first issue on the first page:

import requests

url = "https://api.github.com/repos/huggingface/datasets/issues?page=1&per_page=1"
response = requests.get(url)

The response object contains a lot of useful information about the request, including the HTTP status code:

response.status_code

200

where a 200 status means the request was successful (other codes signal redirects, client errors, or server errors). What we are really interested in, though, is the payload, which can be accessed in various formats like bytes, strings, or JSON. Since we know our issues are in JSON format, let’s inspect the payload as follows:

response.json()

[{'url': 'https://api.github.com/repos/huggingface/datasets/issues/2792',

'repository_url': 'https://api.github.com/repos/huggingface/datasets',

'labels_url': 'https://api.github.com/repos/huggingface/datasets/issues/2792/labels{/name}',

'comments_url': 'https://api.github.com/repos/huggingface/datasets/issues/2792/comments',

'events_url': 'https://api.github.com/repos/huggingface/datasets/issues/2792/events',

'html_url': 'https://github.com/huggingface/datasets/pull/2792',

'id': 968650274,

'node_id': 'MDExOlB1bGxSZXF1ZXN0NzEwNzUyMjc0',

'number': 2792,

'title': 'Update GooAQ',

'user': {'login': 'bhavitvyamalik',

'id': 19718818,

'node_id': 'MDQ6VXNlcjE5NzE4ODE4',

'avatar_url': 'https://avatars.githubusercontent.com/u/19718818?v=4',

'gravatar_id': '',

'url': 'https://api.github.com/users/bhavitvyamalik',

'html_url': 'https://github.com/bhavitvyamalik',

'followers_url': 'https://api.github.com/users/bhavitvyamalik/followers',

'following_url': 'https://api.github.com/users/bhavitvyamalik/following{/other_user}',

'gists_url': 'https://api.github.com/users/bhavitvyamalik/gists{/gist_id}',

'starred_url': 'https://api.github.com/users/bhavitvyamalik/starred{/owner}{/repo}',

'subscriptions_url': 'https://api.github.com/users/bhavitvyamalik/subscriptions',

'organizations_url': 'https://api.github.com/users/bhavitvyamalik/orgs',

'repos_url': 'https://api.github.com/users/bhavitvyamalik/repos',

'events_url': 'https://api.github.com/users/bhavitvyamalik/events{/privacy}',

'received_events_url': 'https://api.github.com/users/bhavitvyamalik/received_events',

'type': 'User',

'site_admin': False},

'labels': [],

'state': 'open',

'locked': False,

'assignee': None,

'assignees': [],

'milestone': None,

'comments': 1,

'created_at': '2021-08-12T11:40:18Z',

'updated_at': '2021-08-12T12:31:17Z',

'closed_at': None,

'author_association': 'CONTRIBUTOR',

'active_lock_reason': None,

'pull_request': {'url': 'https://api.github.com/repos/huggingface/datasets/pulls/2792',

'html_url': 'https://github.com/huggingface/datasets/pull/2792',

'diff_url': 'https://github.com/huggingface/datasets/pull/2792.diff',

'patch_url': 'https://github.com/huggingface/datasets/pull/2792.patch'},

'body': '[GooAQ](https://github.com/allenai/gooaq) dataset was recently updated after splits were added for the same. This PR contains new updated GooAQ with train/val/test splits and updated README as well.',

'performed_via_github_app': None}]

Whoa, that’s a lot of information! We can see useful fields like title, body, and number that describe the issue, as well as information about the GitHub user who opened the issue.

✏️ Try it out! Click on a few of the URLs in the JSON payload above to get a feel for what type of information each GitHub issue is linked to.
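To make the structure of the payload concrete, the following sketch pulls a handful of fields out of a single issue record. It works on a hand-trimmed copy of the response shown above rather than on a live request, and the `summarize_issue` helper is a hypothetical name introduced here for illustration.

```python
# A trimmed copy of the issue returned above; only a few fields are kept.
sample_issue = {
    "number": 2792,
    "title": "Update GooAQ",
    "state": "open",
    "user": {"login": "bhavitvyamalik"},
    "labels": [],
    "comments": 1,
    "pull_request": {
        "html_url": "https://github.com/huggingface/datasets/pull/2792"
    },
}


def summarize_issue(issue):
    """Return a flat dict with the fields we are likely to care about."""
    return {
        "number": issue["number"],
        "title": issue["title"],
        "state": issue["state"],
        # The author's username is nested inside the "user" object
        "author": issue["user"]["login"],
        # Each label is itself a JSON object with a "name" field
        "labels": [label["name"] for label in issue.get("labels", [])],
        "num_comments": issue["comments"],
    }


summary = summarize_issue(sample_issue)
print(summary)
```

Flattening nested fields like this is optional at the download stage, but it shows where the author and label names live inside each record.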

As described in the GitHub documentation, unauthenticated requests are limited to 60 requests per hour. Although you can increase the per_page query parameter to reduce the number of requests you make, you will still hit the rate limit on any repository that has more than a few thousand issues. So instead, you should follow GitHub’s instructions on creating a personal access token so that you can boost the rate limit to 5,000 requests per hour. Once you have your token, you can include it as part of the request header:

GITHUB_TOKEN = "xxx"  # Copy your GitHub token here
headers = {"Authorization": f"token {GITHUB_TOKEN}"}

⚠️ Do not share a notebook with your GITHUB_TOKEN pasted in it. We recommend you delete the last cell once you have executed it to avoid leaking this information accidentally. Even better, store the token in a .env file and use the python-dotenv library to load it automatically for you as an environment variable.
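One way to keep the token out of the notebook is to read it from an environment variable. The sketch below uses only the standard library; the variable name `GITHUB_TOKEN` and the `build_headers` helper are assumptions made for illustration, and python-dotenv could populate the environment from a .env file before this code runs.

```python
import os


def build_headers(env=os.environ):
    """Build request headers from a token stored in the environment.

    `env` is any mapping; it defaults to the real process environment.
    """
    token = env.get("GITHUB_TOKEN")
    if not token:
        # Without a token, requests are unauthenticated and get the lower rate limit
        return {}
    return {"Authorization": f"token {token}"}


# Demonstration with a stand-in mapping instead of the real environment
demo_headers = build_headers({"GITHUB_TOKEN": "not-a-real-token"})
print(demo_headers)
```

Passing the mapping as an argument makes the helper easy to try out without touching your real environment.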

Now that we have our access token, let’s create a function that can download all the issues from a GitHub repository:

import time
import math
from pathlib import Path

import pandas as pd
from tqdm.notebook import tqdm


def fetch_issues(
    owner="huggingface",
    repo="datasets",
    num_issues=10_000,
    rate_limit=5_000,
    issues_path=Path("."),
):
    if not issues_path.is_dir():
        issues_path.mkdir(exist_ok=True)

    batch = []
    all_issues = []
    per_page = 100  # Number of issues to return per page
    num_pages = math.ceil(num_issues / per_page)
    base_url = "https://api.github.com/repos"

    # GitHub numbers pages from 1, so start the loop there
    for page in tqdm(range(1, num_pages + 1)):
        # Query with state=all to get both open and closed issues
        query = f"issues?page={page}&per_page={per_page}&state=all"
        issues = requests.get(f"{base_url}/{owner}/{repo}/{query}", headers=headers)
        batch.extend(issues.json())

        if len(batch) > rate_limit and len(all_issues) < num_issues:
            all_issues.extend(batch)
            batch = []  # Flush batch for next time period
            print("Reached GitHub rate limit. Sleeping for one hour ...")
            time.sleep(60 * 60 + 1)

    all_issues.extend(batch)
    df = pd.DataFrame.from_records(all_issues)
    df.to_json(f"{issues_path}/{repo}-issues.jsonl", orient="records", lines=True)
    print(
        f"Downloaded all the issues for {repo}! Dataset stored at {issues_path}/{repo}-issues.jsonl"
    )

Now when we call fetch_issues() it will download all the issues in batches to avoid exceeding GitHub’s limit on the number of requests per hour; the result will be stored in a repository_name-issues.jsonl file, where each line is a JSON object that represents an issue. Let’s use this function to grab all the issues from 🤗 Datasets:

# Depending on your internet connection, this can take several minutes to run...
fetch_issues()

Once the issues are downloaded we can load them locally using our newfound skills from section 2:

from datasets import load_dataset

issues_dataset = load_dataset("json", data_files="datasets-issues.jsonl", split="train")
issues_dataset

Dataset({
    features: ['url', 'repository_url', 'labels_url', 'comments_url', 'events_url', 'html_url', 'id', 'node_id', 'number', 'title', 'user', 'labels', 'state', 'locked', 'assignee', 'assignees', 'milestone', 'comments', 'created_at', 'updated_at', 'closed_at', 'author_association', 'active_lock_reason', 'pull_request', 'body', 'timeline_url', 'performed_via_github_app'],
    num_rows: 3019
})

Great, we’ve created our first dataset from scratch! But why are there several thousand issues when the Issues tab of the 🤗 Datasets repository only shows around 1,000 issues in total 🤔? As described in the GitHub documentation, that’s because we’ve downloaded all the pull requests as well:

GitHub’s REST API v3 considers every pull request an issue, but not every issue is a pull request. For this reason, “Issues” endpoints may return both issues and pull requests in the response. You can identify pull requests by the pull_request key. Be aware that the id of a pull request returned from “Issues” endpoints will be an issue id.
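The rule in this excerpt is easy to check on a small example. The following sketch parses a few JSON Lines records, the same one-object-per-line layout that fetch_issues() writes, and separates issues from pull requests by testing for the pull_request key. The three records are made up for illustration and are not real entries from the repository.

```python
import io
import json

# Three made-up records in JSON Lines form: one JSON object per line
jsonl_text = "\n".join(
    [
        json.dumps({"number": 1, "title": "Fix typo", "pull_request": {"url": "placeholder"}}),
        json.dumps({"number": 2, "title": "Loading fails", "pull_request": None}),
        json.dumps({"number": 3, "title": "Add docs"}),
    ]
)

# Parse each non-empty line as its own JSON object
records = [json.loads(line) for line in io.StringIO(jsonl_text) if line.strip()]

# A record is a pull request when the key is present and not null
pull_requests = [r for r in records if r.get("pull_request") is not None]
issues_only = [r for r in records if r.get("pull_request") is None]

print(len(pull_requests), len(issues_only))
```

Using `dict.get()` treats a missing key and an explicit null the same way, which covers both shapes a record might take.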

Since the contents of issues and pull requests are quite different, let’s do some minor preprocessing to enable us to distinguish between them.

Cleaning up the data

The above snippet from GitHub’s documentation tells us that the pull_request column can be used to differentiate between issues and pull requests. Let’s look at a random sample to see what the difference is. As we did in section 3, we’ll chain Dataset.shuffle() and Dataset.select() to create a random sample and then zip the html_url and pull_request columns so we can compare the various URLs:

sample = issues_dataset.shuffle(seed=666).select(range(3))

# Print out the URL and pull request entries
for url, pr in zip(sample["html_url"], sample["pull_request"]):
    print(f">> URL: {url}")
    print(f">> Pull request: {pr}\n")

>> URL: https://github.com/huggingface/datasets/pull/850
>> Pull request: {'url': 'https://api.github.com/repos/huggingface/datasets/pulls/850', 'html_url': 'https://github.com/huggingface/datasets/pull/850', 'diff_url': 'https://github.com/huggingface/datasets/pull/850.diff', 'patch_url': 'https://github.com/huggingface/datasets/pull/850.patch'}

>> URL: https://github.com/huggingface/datasets/issues/2773
>> Pull request: None

>> URL: https://github.com/huggingface/datasets/pull/783
>> Pull request: {'url': 'https://api.github.com/repos/huggingface/datasets/pulls/783', 'html_url': 'https://github.com/huggingface/datasets/pull/783', 'diff_url': 'https://github.com/huggingface/datasets/pull/783.diff', 'patch_url': 'https://github.com/huggingface/datasets/pull/783.patch'}

Here we can see that each pull request is associated with various URLs, while ordinary issues have a None entry. We can use this distinction to create a new is_pull_request column that checks whether the pull_request field is None or not:

issues_dataset = issues_dataset.map(
    lambda x: {"is_pull_request": x["pull_request"] is not None}
)

✏️ Try it out! Calculate the average time it takes to close issues in 🤗 Datasets. You may find the Dataset.filter() function useful to filter out the pull requests and open issues, and you can use the Dataset.set_format() function to convert the dataset to a DataFrame so you can easily manipulate the created_at and closed_at timestamps. For bonus points, calculate the average time it takes to close pull requests.
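As a starting point for the timestamp arithmetic in this exercise, here is a minimal sketch that uses only the standard library. It assumes timestamps in the same format as the created_at and closed_at fields shown earlier; the two records are invented, and a pandas-based approach would work just as well on the real dataset.

```python
from datetime import datetime, timedelta

# Format of GitHub timestamps such as '2021-08-12T11:40:18Z'
TIMESTAMP_FORMAT = "%Y-%m-%dT%H:%M:%SZ"

# Invented records for illustration; real values come from the dataset
closed_issues = [
    {"created_at": "2021-08-01T10:00:00Z", "closed_at": "2021-08-02T10:00:00Z"},
    {"created_at": "2021-08-03T08:00:00Z", "closed_at": "2021-08-06T08:00:00Z"},
]


def time_to_close(issue):
    """Return how long an issue stayed open as a timedelta."""
    created = datetime.strptime(issue["created_at"], TIMESTAMP_FORMAT)
    closed = datetime.strptime(issue["closed_at"], TIMESTAMP_FORMAT)
    return closed - created


durations = [time_to_close(issue) for issue in closed_issues]
# Summing timedeltas needs an explicit timedelta() start value
average = sum(durations, timedelta()) / len(durations)
print(average)
```

On the real dataset you would first drop open issues, since their closed_at field is None and cannot be parsed.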

Although we could proceed to further clean up the dataset by dropping or renaming some columns, it is generally a good practice to keep the dataset as “raw” as possible at this stage so that it can be easily used in multiple applications.

Before we push our dataset to the Hugging Face Hub, let’s deal with one thing that’s missing from it: the comments associated with each issue and pull request. We’ll add them next with — you guessed it — the GitHub REST API!

Augmenting the dataset

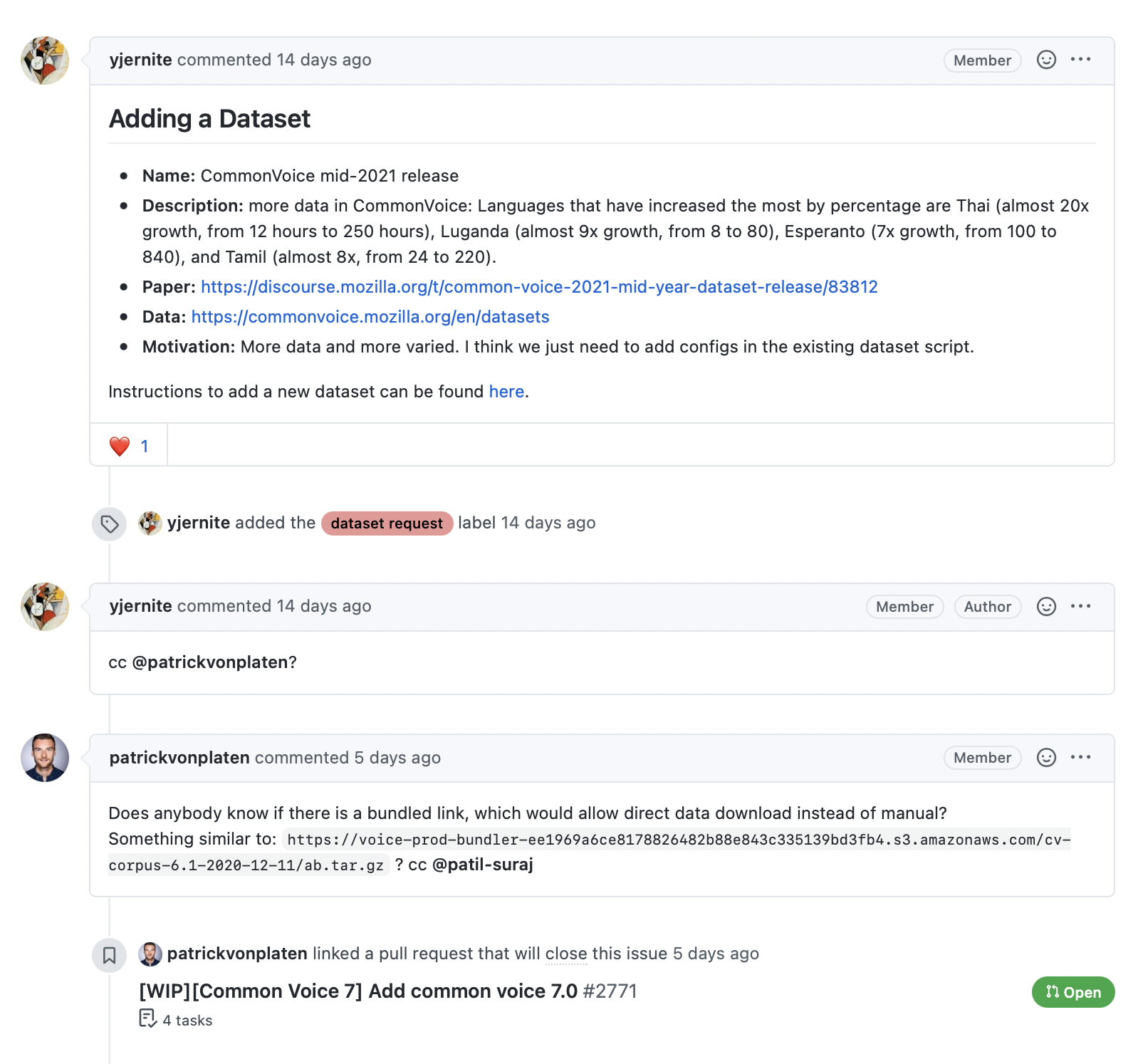

As shown in the following screenshot, the comments associated with an issue or pull request provide a rich source of information, especially if we’re interested in building a search engine to answer user queries about the library.

The GitHub REST API provides a Comments endpoint that returns all the comments associated with an issue number. Let’s test the endpoint to see what it returns:

issue_number = 2792

url = f"https://api.github.com/repos/huggingface/datasets/issues/{issue_number}/comments"

response = requests.get(url, headers=headers)

response.json()

[{'url': 'https://api.github.com/repos/huggingface/datasets/issues/comments/897594128',

'html_url': 'https://github.com/huggingface/datasets/pull/2792#issuecomment-897594128',

'issue_url': 'https://api.github.com/repos/huggingface/datasets/issues/2792',

'id': 897594128,

'node_id': 'IC_kwDODunzps41gDMQ',

'user': {'login': 'bhavitvyamalik',

'id': 19718818,

'node_id': 'MDQ6VXNlcjE5NzE4ODE4',

'avatar_url': 'https://avatars.githubusercontent.com/u/19718818?v=4',

'gravatar_id': '',

'url': 'https://api.github.com/users/bhavitvyamalik',

'html_url': 'https://github.com/bhavitvyamalik',

'followers_url': 'https://api.github.com/users/bhavitvyamalik/followers',

'following_url': 'https://api.github.com/users/bhavitvyamalik/following{/other_user}',

'gists_url': 'https://api.github.com/users/bhavitvyamalik/gists{/gist_id}',

'starred_url': 'https://api.github.com/users/bhavitvyamalik/starred{/owner}{/repo}',

'subscriptions_url': 'https://api.github.com/users/bhavitvyamalik/subscriptions',

'organizations_url': 'https://api.github.com/users/bhavitvyamalik/orgs',

'repos_url': 'https://api.github.com/users/bhavitvyamalik/repos',

'events_url': 'https://api.github.com/users/bhavitvyamalik/events{/privacy}',

'received_events_url': 'https://api.github.com/users/bhavitvyamalik/received_events',

'type': 'User',

'site_admin': False},

'created_at': '2021-08-12T12:21:52Z',

'updated_at': '2021-08-12T12:31:17Z',

'author_association': 'CONTRIBUTOR',

'body': "@albertvillanova my tests are failing here:\r\n```\r\ndataset_name = 'gooaq'\r\n\r\n def test_load_dataset(self, dataset_name):\r\n configs = self.dataset_tester.load_all_configs(dataset_name, is_local=True)[:1]\r\n> self.dataset_tester.check_load_dataset(dataset_name, configs, is_local=True, use_local_dummy_data=True)\r\n\r\ntests/test_dataset_common.py:234: \r\n_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ \r\ntests/test_dataset_common.py:187: in check_load_dataset\r\n self.parent.assertTrue(len(dataset[split]) > 0)\r\nE AssertionError: False is not true\r\n```\r\nWhen I try loading dataset on local machine it works fine. Any suggestions on how can I avoid this error?",

'performed_via_github_app': None}]

We can see that the comment is stored in the body field, so let’s write a simple function that returns all the comments associated with an issue by picking out the body contents for each element in response.json():

def get_comments(issue_number):
    url = f"https://api.github.com/repos/huggingface/datasets/issues/{issue_number}/comments"
    response = requests.get(url, headers=headers)
    return [r["body"] for r in response.json()]


# Test our function works as expected
get_comments(2792)

["@albertvillanova my tests are failing here:\r\n```\r\ndataset_name = 'gooaq'\r\n\r\n def test_load_dataset(self, dataset_name):\r\n configs = self.dataset_tester.load_all_configs(dataset_name, is_local=True)[:1]\r\n> self.dataset_tester.check_load_dataset(dataset_name, configs, is_local=True, use_local_dummy_data=True)\r\n\r\ntests/test_dataset_common.py:234: \r\n_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ \r\ntests/test_dataset_common.py:187: in check_load_dataset\r\n self.parent.assertTrue(len(dataset[split]) > 0)\r\nE AssertionError: False is not true\r\n```\r\nWhen I try loading dataset on local machine it works fine. Any suggestions on how can I avoid this error?"]

This looks good, so let’s use Dataset.map() to add a new comments column to each issue in our dataset:

# Depending on your internet connection, this can take a few minutes...
issues_with_comments_dataset = issues_dataset.map(
    lambda x: {"comments": get_comments(x["number"])}
)

The final step is to push our dataset to the Hub. Let’s take a look at how we can do that.
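One caveat: GitHub’s list endpoints are paginated and return 30 items per page unless per_page says otherwise, so a single request may miss comments on long threads. The sketch below shows one way to page through results. To keep it runnable offline, the HTTP call is abstracted behind a `fetch_page` callable, a hypothetical helper; in practice it would wrap requests.get() with page and per_page query parameters.

```python
def get_all_comments(issue_number, fetch_page, per_page=100):
    """Collect comment bodies across pages until a short page signals the end."""
    bodies = []
    page = 1  # GitHub numbers pages from 1
    while True:
        batch = fetch_page(issue_number, page, per_page)
        bodies.extend(comment["body"] for comment in batch)
        # A page with fewer items than requested must be the last one
        if len(batch) < per_page:
            break
        page += 1
    return bodies


# Stand-in for the network call: serves 5 fake comments
fake_comments = [{"body": f"comment {i}"} for i in range(5)]


def fake_fetch_page(issue_number, page, per_page):
    start = (page - 1) * per_page
    return fake_comments[start : start + per_page]


# Request 2 comments per page so the loop has to visit 3 pages
all_bodies = get_all_comments(2792, fake_fetch_page, per_page=2)
print(all_bodies)
```

Injecting the page-fetching function keeps the pagination logic separate from the HTTP details, which also makes it straightforward to test.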

Uploading the dataset to the Hugging Face Hub

Now that we have our augmented dataset, it’s time to push it to the Hub so we can share it with the community! Uploading a dataset is very simple: just like models and tokenizers from 🤗 Transformers, we can use a push_to_hub() method to push a dataset. To do that we need an authentication token, which can be obtained by first logging into the Hugging Face Hub with the notebook_login() function:

from huggingface_hub import notebook_login

notebook_login()

This will create a widget where you can enter your username and password, and an API token will be saved in ~/.huggingface/token. If you’re running the code in a terminal, you can log in via the CLI instead:

huggingface-cli login

Once we’ve done this, we can upload our dataset by running:

issues_with_comments_dataset.push_to_hub("github-issues")

From here, anyone can download the dataset by simply providing load_dataset() with the repository ID as the path argument:

remote_dataset = load_dataset("lewtun/github-issues", split="train")

remote_datasetDataset({

features: ['url', 'repository_url', 'labels_url', 'comments_url', 'events_url', 'html_url', 'id', 'node_id', 'number', 'title', 'user', 'labels', 'state', 'locked', 'assignee', 'assignees', 'milestone', 'comments', 'created_at', 'updated_at', 'closed_at', 'author_association', 'active_lock_reason', 'pull_request', 'body', 'performed_via_github_app', 'is_pull_request'],

num_rows: 2855

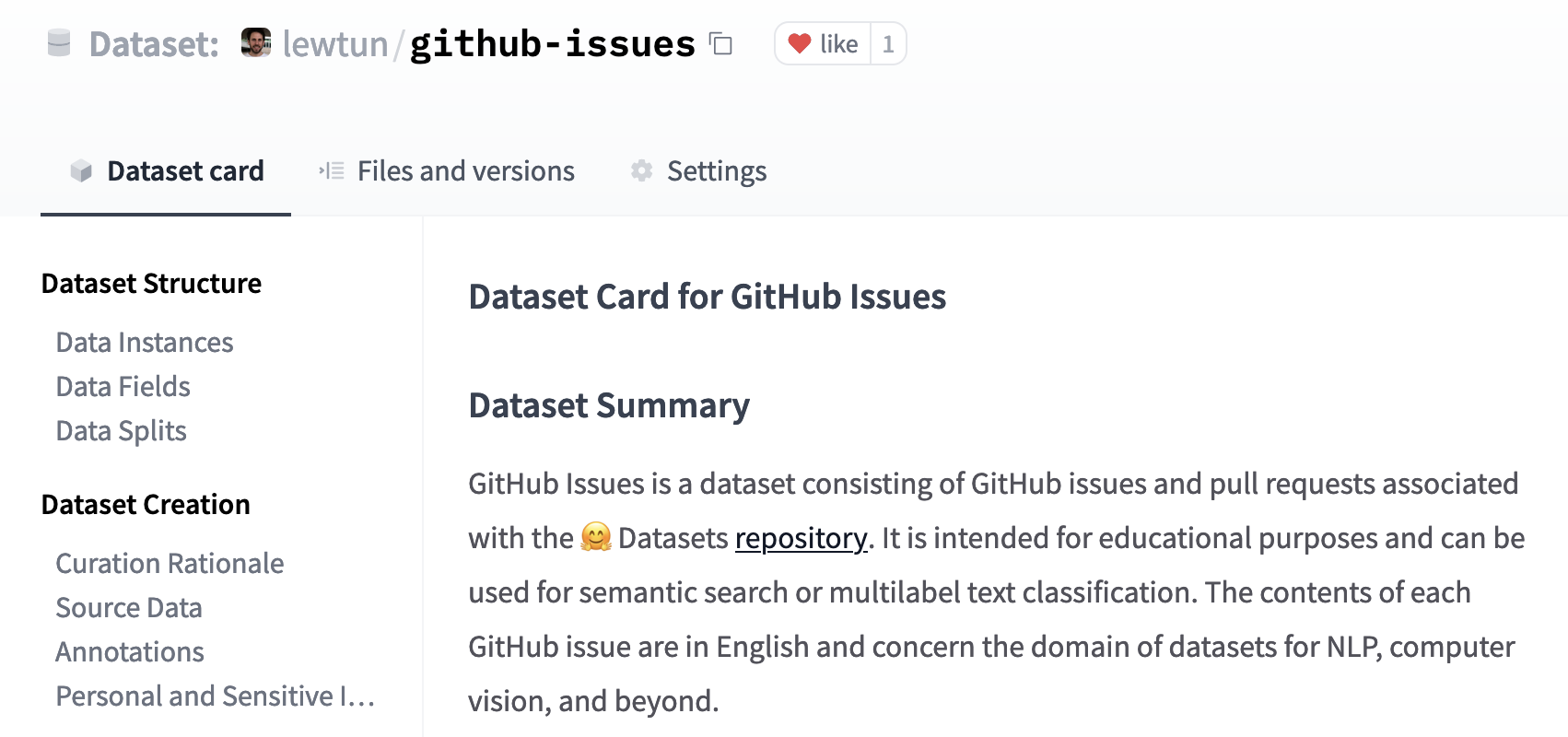

})Cool, we’ve pushed our dataset to the Hub and it’s available for others to use! There’s just one important thing left to do: adding a dataset card that explains how the corpus was created and provides other useful information for the community.

💡 You can also upload a dataset to the Hugging Face Hub directly from the terminal by using huggingface-cli and a bit of Git magic. See the 🤗 Datasets guide for details on how to do this.

Creating a dataset card

Well-documented datasets are more likely to be useful to others (including your future self!), as they provide the context to enable users to decide whether the dataset is relevant to their task and to evaluate any potential biases in or risks associated with using the dataset.

On the Hugging Face Hub, this information is stored in each dataset repository’s README.md file. There are two main steps you should take before creating this file:

- Use the datasets-tagging application to create metadata tags in YAML format. These tags are used for a variety of search features on the Hugging Face Hub and ensure your dataset can be easily found by members of the community. Since we have created a custom dataset here, you’ll need to clone the datasets-tagging repository and run the application locally. Here’s what the interface looks like:

- Read the 🤗 Datasets guide on creating informative dataset cards and use it as a template.

You can create the README.md file directly on the Hub, and you can find a template dataset card in the lewtun/github-issues dataset repository. A screenshot of the filled-out dataset card is shown below.

✏️ Try it out! Use the datasets-tagging application and 🤗 Datasets guide to complete the README.md file for your GitHub issues dataset.

That’s it! We’ve seen in this section that creating a good dataset can be quite involved, but fortunately uploading it and sharing it with the community is not. In the next section we’ll use our new dataset to create a semantic search engine with 🤗 Datasets that can match questions to the most relevant issues and comments.

✏️ Try it out! Go through the steps we took in this section to create a dataset of GitHub issues for your favorite open source library (pick something other than 🤗 Datasets, of course!). For bonus points, fine-tune a multilabel classifier to predict the tags present in the labels field.