Commit

•

68b0af9

1

Parent(s):

4c4a040

Upload folder using huggingface_hub

Browse files- README.md +42 -0

- adapter_config.json +27 -0

- adapter_model.safetensors +3 -0

- all_results.json +7 -0

- special_tokens_map.json +24 -0

- tokenizer.model +3 -0

- tokenizer_config.json +44 -0

- train_results.json +7 -0

- trainer_log.jsonl +21 -0

- trainer_state.json +150 -0

- training_args.bin +3 -0

- training_loss.png +0 -0

README.md

ADDED

|

@@ -0,0 +1,42 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

license: other

|

| 3 |

+

library_name: peft

|

| 4 |

+

tags:

|

| 5 |

+

- llama-factory

|

| 6 |

+

- lora

|

| 7 |

+

- generated_from_trainer

|

| 8 |

+

base_model: upstage/SOLAR-10.7B-v1.0

|

| 9 |

+

model-index:

|

| 10 |

+

- name: solar-10b-ocn-v1

|

| 11 |

+

results: []

|

| 12 |

+

---

|

| 13 |

+

|

| 14 |

+

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

|

| 15 |

+

should probably proofread and complete it, then remove this comment. -->

|

| 16 |

+

|

| 17 |

+

# solar-10b-ocn-v1

|

| 18 |

+

|

| 19 |

+

This model is a fine-tuned version of upstage/SOLAR-10.7B-v1.0 on the oncc_medqa_instruct dataset.

|

| 20 |

+

|

| 21 |

+

### Training hyperparameters

|

| 22 |

+

|

| 23 |

+

The following hyperparameters were used during training:

|

| 24 |

+

- learning_rate: 0.0005

|

| 25 |

+

- train_batch_size: 4

|

| 26 |

+

- eval_batch_size: 8

|

| 27 |

+

- seed: 42

|

| 28 |

+

- gradient_accumulation_steps: 4

|

| 29 |

+

- total_train_batch_size: 16

|

| 30 |

+

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

|

| 31 |

+

- lr_scheduler_type: cosine

|

| 32 |

+

- lr_scheduler_warmup_steps: 10

|

| 33 |

+

- num_epochs: 1.0

|

| 34 |

+

- mixed_precision_training: Native AMP

|

| 35 |

+

|

| 36 |

+

### Framework versions

|

| 37 |

+

|

| 38 |

+

- PEFT 0.8.2

|

| 39 |

+

- Transformers 4.37.2

|

| 40 |

+

- Pytorch 2.1.1+cu121

|

| 41 |

+

- Datasets 2.16.1

|

| 42 |

+

- Tokenizers 0.15.1

|

adapter_config.json

ADDED

|

@@ -0,0 +1,27 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"alpha_pattern": {},

|

| 3 |

+

"auto_mapping": null,

|

| 4 |

+

"base_model_name_or_path": "upstage/SOLAR-10.7B-v1.0",

|

| 5 |

+

"bias": "none",

|

| 6 |

+

"fan_in_fan_out": false,

|

| 7 |

+

"inference_mode": true,

|

| 8 |

+

"init_lora_weights": true,

|

| 9 |

+

"layers_pattern": null,

|

| 10 |

+

"layers_to_transform": null,

|

| 11 |

+

"loftq_config": {},

|

| 12 |

+

"lora_alpha": 16,

|

| 13 |

+

"lora_dropout": 0.2,

|

| 14 |

+

"megatron_config": null,

|

| 15 |

+

"megatron_core": "megatron.core",

|

| 16 |

+

"modules_to_save": null,

|

| 17 |

+

"peft_type": "LORA",

|

| 18 |

+

"r": 8,

|

| 19 |

+

"rank_pattern": {},

|

| 20 |

+

"revision": null,

|

| 21 |

+

"target_modules": [

|

| 22 |

+

"q_proj",

|

| 23 |

+

"v_proj"

|

| 24 |

+

],

|

| 25 |

+

"task_type": "CAUSAL_LM",

|

| 26 |

+

"use_rslora": false

|

| 27 |

+

}

|

adapter_model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:def74a3b68fb21040b5cd2091a7cfd7ad6547ea4c41d1e933b14dec5d778dad6

|

| 3 |

+

size 20472752

|

all_results.json

ADDED

|

@@ -0,0 +1,7 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"epoch": 1.0,

|

| 3 |

+

"train_loss": 0.16439640081574763,

|

| 4 |

+

"train_runtime": 1233.0121,

|

| 5 |

+

"train_samples_per_second": 2.638,

|

| 6 |

+

"train_steps_per_second": 0.165

|

| 7 |

+

}

|

special_tokens_map.json

ADDED

|

@@ -0,0 +1,24 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"bos_token": {

|

| 3 |

+

"content": "<s>",

|

| 4 |

+

"lstrip": false,

|

| 5 |

+

"normalized": false,

|

| 6 |

+

"rstrip": false,

|

| 7 |

+

"single_word": false

|

| 8 |

+

},

|

| 9 |

+

"eos_token": {

|

| 10 |

+

"content": "</s>",

|

| 11 |

+

"lstrip": false,

|

| 12 |

+

"normalized": false,

|

| 13 |

+

"rstrip": false,

|

| 14 |

+

"single_word": false

|

| 15 |

+

},

|

| 16 |

+

"pad_token": "</s>",

|

| 17 |

+

"unk_token": {

|

| 18 |

+

"content": "<unk>",

|

| 19 |

+

"lstrip": false,

|

| 20 |

+

"normalized": false,

|

| 21 |

+

"rstrip": false,

|

| 22 |

+

"single_word": false

|

| 23 |

+

}

|

| 24 |

+

}

|

tokenizer.model

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:dadfd56d766715c61d2ef780a525ab43b8e6da4de6865bda3d95fdef5e134055

|

| 3 |

+

size 493443

|

tokenizer_config.json

ADDED

|

@@ -0,0 +1,44 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"add_bos_token": true,

|

| 3 |

+

"add_eos_token": false,

|

| 4 |

+

"added_tokens_decoder": {

|

| 5 |

+

"0": {

|

| 6 |

+

"content": "<unk>",

|

| 7 |

+

"lstrip": false,

|

| 8 |

+

"normalized": false,

|

| 9 |

+

"rstrip": false,

|

| 10 |

+

"single_word": false,

|

| 11 |

+

"special": true

|

| 12 |

+

},

|

| 13 |

+

"1": {

|

| 14 |

+

"content": "<s>",

|

| 15 |

+

"lstrip": false,

|

| 16 |

+

"normalized": false,

|

| 17 |

+

"rstrip": false,

|

| 18 |

+

"single_word": false,

|

| 19 |

+

"special": true

|

| 20 |

+

},

|

| 21 |

+

"2": {

|

| 22 |

+

"content": "</s>",

|

| 23 |

+

"lstrip": false,

|

| 24 |

+

"normalized": false,

|

| 25 |

+

"rstrip": false,

|

| 26 |

+

"single_word": false,

|

| 27 |

+

"special": true

|

| 28 |

+

}

|

| 29 |

+

},

|

| 30 |

+

"additional_special_tokens": [],

|

| 31 |

+

"bos_token": "<s>",

|

| 32 |

+

"clean_up_tokenization_spaces": false,

|

| 33 |

+

"eos_token": "</s>",

|

| 34 |

+

"legacy": true,

|

| 35 |

+

"model_max_length": 1000000000000000019884624838656,

|

| 36 |

+

"pad_token": "</s>",

|

| 37 |

+

"padding_side": "right",

|

| 38 |

+

"sp_model_kwargs": {},

|

| 39 |

+

"spaces_between_special_tokens": false,

|

| 40 |

+

"split_special_tokens": false,

|

| 41 |

+

"tokenizer_class": "LlamaTokenizer",

|

| 42 |

+

"unk_token": "<unk>",

|

| 43 |

+

"use_default_system_prompt": true

|

| 44 |

+

}

|

train_results.json

ADDED

|

@@ -0,0 +1,7 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"epoch": 1.0,

|

| 3 |

+

"train_loss": 0.16439640081574763,

|

| 4 |

+

"train_runtime": 1233.0121,

|

| 5 |

+

"train_samples_per_second": 2.638,

|

| 6 |

+

"train_steps_per_second": 0.165

|

| 7 |

+

}

|

trainer_log.jsonl

ADDED

|

@@ -0,0 +1,21 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{"current_steps": 10, "total_steps": 203, "loss": 0.7976, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 0.0005, "epoch": 0.05, "percentage": 4.93, "elapsed_time": "0:01:01", "remaining_time": "0:19:41"}

|

| 2 |

+

{"current_steps": 20, "total_steps": 203, "loss": 0.165, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 0.0004966952699028185, "epoch": 0.1, "percentage": 9.85, "elapsed_time": "0:02:00", "remaining_time": "0:18:18"}

|

| 3 |

+

{"current_steps": 30, "total_steps": 203, "loss": 0.1474, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 0.0004868684495393958, "epoch": 0.15, "percentage": 14.78, "elapsed_time": "0:02:58", "remaining_time": "0:17:10"}

|

| 4 |

+

{"current_steps": 40, "total_steps": 203, "loss": 0.1534, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 0.00047077933882184867, "epoch": 0.2, "percentage": 19.7, "elapsed_time": "0:03:59", "remaining_time": "0:16:17"}

|

| 5 |

+

{"current_steps": 50, "total_steps": 203, "loss": 0.1286, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 0.00044885329909757834, "epoch": 0.25, "percentage": 24.63, "elapsed_time": "0:05:00", "remaining_time": "0:15:19"}

|

| 6 |

+

{"current_steps": 60, "total_steps": 203, "loss": 0.1416, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 0.0004216700075136953, "epoch": 0.29, "percentage": 29.56, "elapsed_time": "0:06:02", "remaining_time": "0:14:24"}

|

| 7 |

+

{"current_steps": 70, "total_steps": 203, "loss": 0.1198, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 0.00038994813160490117, "epoch": 0.34, "percentage": 34.48, "elapsed_time": "0:07:04", "remaining_time": "0:13:26"}

|

| 8 |

+

{"current_steps": 80, "total_steps": 203, "loss": 0.1522, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 0.0003545263292756348, "epoch": 0.39, "percentage": 39.41, "elapsed_time": "0:08:05", "remaining_time": "0:12:26"}

|

| 9 |

+

{"current_steps": 90, "total_steps": 203, "loss": 0.137, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 0.0003163410764959277, "epoch": 0.44, "percentage": 44.33, "elapsed_time": "0:09:04", "remaining_time": "0:11:23"}

|

| 10 |

+

{"current_steps": 100, "total_steps": 203, "loss": 0.124, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 0.0002764019088988165, "epoch": 0.49, "percentage": 49.26, "elapsed_time": "0:10:00", "remaining_time": "0:10:18"}

|

| 11 |

+

{"current_steps": 110, "total_steps": 203, "loss": 0.122, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 0.00023576473183801758, "epoch": 0.54, "percentage": 54.19, "elapsed_time": "0:11:00", "remaining_time": "0:09:18"}

|

| 12 |

+

{"current_steps": 120, "total_steps": 203, "loss": 0.1249, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 0.00019550390453030946, "epoch": 0.59, "percentage": 59.11, "elapsed_time": "0:12:07", "remaining_time": "0:08:22"}

|

| 13 |

+

{"current_steps": 130, "total_steps": 203, "loss": 0.1287, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 0.0001566838363176219, "epoch": 0.64, "percentage": 64.04, "elapsed_time": "0:13:07", "remaining_time": "0:07:22"}

|

| 14 |

+

{"current_steps": 140, "total_steps": 203, "loss": 0.1395, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 0.00012033084598233163, "epoch": 0.69, "percentage": 68.97, "elapsed_time": "0:14:08", "remaining_time": "0:06:21"}

|

| 15 |

+

{"current_steps": 150, "total_steps": 203, "loss": 0.124, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 8.740602809470736e-05, "epoch": 0.74, "percentage": 73.89, "elapsed_time": "0:15:09", "remaining_time": "0:05:21"}

|

| 16 |

+

{"current_steps": 160, "total_steps": 203, "loss": 0.1315, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 5.877984374768877e-05, "epoch": 0.79, "percentage": 78.82, "elapsed_time": "0:16:11", "remaining_time": "0:04:21"}

|

| 17 |

+

{"current_steps": 170, "total_steps": 203, "loss": 0.1109, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 3.520910744510819e-05, "epoch": 0.84, "percentage": 83.74, "elapsed_time": "0:17:13", "remaining_time": "0:03:20"}

|

| 18 |

+

{"current_steps": 180, "total_steps": 203, "loss": 0.1236, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 1.7316978560340647e-05, "epoch": 0.88, "percentage": 88.67, "elapsed_time": "0:18:15", "remaining_time": "0:02:19"}

|

| 19 |

+

{"current_steps": 190, "total_steps": 203, "loss": 0.1205, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 5.576486348011222e-06, "epoch": 0.93, "percentage": 93.6, "elapsed_time": "0:19:13", "remaining_time": "0:01:18"}

|

| 20 |

+

{"current_steps": 200, "total_steps": 203, "loss": 0.1078, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 2.980240718784277e-07, "epoch": 0.98, "percentage": 98.52, "elapsed_time": "0:20:13", "remaining_time": "0:00:18"}

|

| 21 |

+

{"current_steps": 203, "total_steps": 203, "loss": null, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": null, "epoch": 1.0, "percentage": 100.0, "elapsed_time": "0:20:33", "remaining_time": "0:00:00"}

|

trainer_state.json

ADDED

|

@@ -0,0 +1,150 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"best_metric": null,

|

| 3 |

+

"best_model_checkpoint": null,

|

| 4 |

+

"epoch": 0.9975429975429976,

|

| 5 |

+

"eval_steps": 500,

|

| 6 |

+

"global_step": 203,

|

| 7 |

+

"is_hyper_param_search": false,

|

| 8 |

+

"is_local_process_zero": true,

|

| 9 |

+

"is_world_process_zero": true,

|

| 10 |

+

"log_history": [

|

| 11 |

+

{

|

| 12 |

+

"epoch": 0.05,

|

| 13 |

+

"learning_rate": 0.0005,

|

| 14 |

+

"loss": 0.7976,

|

| 15 |

+

"step": 10

|

| 16 |

+

},

|

| 17 |

+

{

|

| 18 |

+

"epoch": 0.1,

|

| 19 |

+

"learning_rate": 0.0004966952699028185,

|

| 20 |

+

"loss": 0.165,

|

| 21 |

+

"step": 20

|

| 22 |

+

},

|

| 23 |

+

{

|

| 24 |

+

"epoch": 0.15,

|

| 25 |

+

"learning_rate": 0.0004868684495393958,

|

| 26 |

+

"loss": 0.1474,

|

| 27 |

+

"step": 30

|

| 28 |

+

},

|

| 29 |

+

{

|

| 30 |

+

"epoch": 0.2,

|

| 31 |

+

"learning_rate": 0.00047077933882184867,

|

| 32 |

+

"loss": 0.1534,

|

| 33 |

+

"step": 40

|

| 34 |

+

},

|

| 35 |

+

{

|

| 36 |

+

"epoch": 0.25,

|

| 37 |

+

"learning_rate": 0.00044885329909757834,

|

| 38 |

+

"loss": 0.1286,

|

| 39 |

+

"step": 50

|

| 40 |

+

},

|

| 41 |

+

{

|

| 42 |

+

"epoch": 0.29,

|

| 43 |

+

"learning_rate": 0.0004216700075136953,

|

| 44 |

+

"loss": 0.1416,

|

| 45 |

+

"step": 60

|

| 46 |

+

},

|

| 47 |

+

{

|

| 48 |

+

"epoch": 0.34,

|

| 49 |

+

"learning_rate": 0.00038994813160490117,

|

| 50 |

+

"loss": 0.1198,

|

| 51 |

+

"step": 70

|

| 52 |

+

},

|

| 53 |

+

{

|

| 54 |

+

"epoch": 0.39,

|

| 55 |

+

"learning_rate": 0.0003545263292756348,

|

| 56 |

+

"loss": 0.1522,

|

| 57 |

+

"step": 80

|

| 58 |

+

},

|

| 59 |

+

{

|

| 60 |

+

"epoch": 0.44,

|

| 61 |

+

"learning_rate": 0.0003163410764959277,

|

| 62 |

+

"loss": 0.137,

|

| 63 |

+

"step": 90

|

| 64 |

+

},

|

| 65 |

+

{

|

| 66 |

+

"epoch": 0.49,

|

| 67 |

+

"learning_rate": 0.0002764019088988165,

|

| 68 |

+

"loss": 0.124,

|

| 69 |

+

"step": 100

|

| 70 |

+

},

|

| 71 |

+

{

|

| 72 |

+

"epoch": 0.54,

|

| 73 |

+

"learning_rate": 0.00023576473183801758,

|

| 74 |

+

"loss": 0.122,

|

| 75 |

+

"step": 110

|

| 76 |

+

},

|

| 77 |

+

{

|

| 78 |

+

"epoch": 0.59,

|

| 79 |

+

"learning_rate": 0.00019550390453030946,

|

| 80 |

+

"loss": 0.1249,

|

| 81 |

+

"step": 120

|

| 82 |

+

},

|

| 83 |

+

{

|

| 84 |

+

"epoch": 0.64,

|

| 85 |

+

"learning_rate": 0.0001566838363176219,

|

| 86 |

+

"loss": 0.1287,

|

| 87 |

+

"step": 130

|

| 88 |

+

},

|

| 89 |

+

{

|

| 90 |

+

"epoch": 0.69,

|

| 91 |

+

"learning_rate": 0.00012033084598233163,

|

| 92 |

+

"loss": 0.1395,

|

| 93 |

+

"step": 140

|

| 94 |

+

},

|

| 95 |

+

{

|

| 96 |

+

"epoch": 0.74,

|

| 97 |

+

"learning_rate": 8.740602809470736e-05,

|

| 98 |

+

"loss": 0.124,

|

| 99 |

+

"step": 150

|

| 100 |

+

},

|

| 101 |

+

{

|

| 102 |

+

"epoch": 0.79,

|

| 103 |

+

"learning_rate": 5.877984374768877e-05,

|

| 104 |

+

"loss": 0.1315,

|

| 105 |

+

"step": 160

|

| 106 |

+

},

|

| 107 |

+

{

|

| 108 |

+

"epoch": 0.84,

|

| 109 |

+

"learning_rate": 3.520910744510819e-05,

|

| 110 |

+

"loss": 0.1109,

|

| 111 |

+

"step": 170

|

| 112 |

+

},

|

| 113 |

+

{

|

| 114 |

+

"epoch": 0.88,

|

| 115 |

+

"learning_rate": 1.7316978560340647e-05,

|

| 116 |

+

"loss": 0.1236,

|

| 117 |

+

"step": 180

|

| 118 |

+

},

|

| 119 |

+

{

|

| 120 |

+

"epoch": 0.93,

|

| 121 |

+

"learning_rate": 5.576486348011222e-06,

|

| 122 |

+

"loss": 0.1205,

|

| 123 |

+

"step": 190

|

| 124 |

+

},

|

| 125 |

+

{

|

| 126 |

+

"epoch": 0.98,

|

| 127 |

+

"learning_rate": 2.980240718784277e-07,

|

| 128 |

+

"loss": 0.1078,

|

| 129 |

+

"step": 200

|

| 130 |

+

},

|

| 131 |

+

{

|

| 132 |

+

"epoch": 1.0,

|

| 133 |

+

"step": 203,

|

| 134 |

+

"total_flos": 8.131633707692851e+16,

|

| 135 |

+

"train_loss": 0.16439640081574763,

|

| 136 |

+

"train_runtime": 1233.0121,

|

| 137 |

+

"train_samples_per_second": 2.638,

|

| 138 |

+

"train_steps_per_second": 0.165

|

| 139 |

+

}

|

| 140 |

+

],

|

| 141 |

+

"logging_steps": 10,

|

| 142 |

+

"max_steps": 203,

|

| 143 |

+

"num_input_tokens_seen": 0,

|

| 144 |

+

"num_train_epochs": 1,

|

| 145 |

+

"save_steps": 100,

|

| 146 |

+

"total_flos": 8.131633707692851e+16,

|

| 147 |

+

"train_batch_size": 4,

|

| 148 |

+

"trial_name": null,

|

| 149 |

+

"trial_params": null

|

| 150 |

+

}

|

training_args.bin

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:2b029cca67f8d67aa3886ace88170b2de2064903ed1f10bddd5e088fb20f5d1f

|

| 3 |

+

size 4856

|

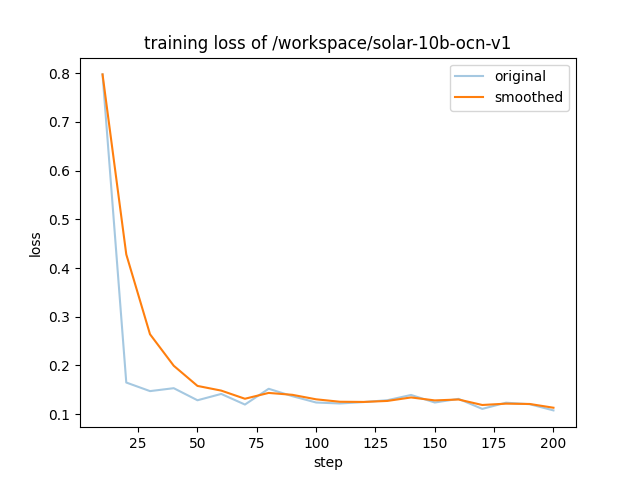

training_loss.png

ADDED

|