End of training

Browse files- README.md +3 -2

- all_results.json +22 -0

- eval_results.json +17 -0

- train_results.json +8 -0

- trainer_state.json +0 -0

- training_eval_loss.png +0 -0

- training_loss.png +0 -0

- training_rewards_accuracies.png +0 -0

- training_sft_loss.png +0 -0

README.md

CHANGED

|

@@ -2,9 +2,10 @@

|

|

| 2 |

license: apache-2.0

|

| 3 |

library_name: peft

|

| 4 |

tags:

|

|

|

|

|

|

|

| 5 |

- trl

|

| 6 |

- dpo

|

| 7 |

-

- llama-factory

|

| 8 |

- generated_from_trainer

|

| 9 |

base_model: mistralai/Mistral-7B-Instruct-v0.2

|

| 10 |

model-index:

|

|

@@ -17,7 +18,7 @@ should probably proofread and complete it, then remove this comment. -->

|

|

| 17 |

|

| 18 |

# Mistral-7B-Instruct-v0.2-ORPO-SALT

|

| 19 |

|

| 20 |

-

This model is a fine-tuned version of [mistralai/Mistral-7B-Instruct-v0.2](https://huggingface.co/mistralai/Mistral-7B-Instruct-v0.2) on the

|

| 21 |

It achieves the following results on the evaluation set:

|

| 22 |

- Loss: 0.8630

|

| 23 |

- Rewards/chosen: -0.0798

|

|

|

|

| 2 |

license: apache-2.0

|

| 3 |

library_name: peft

|

| 4 |

tags:

|

| 5 |

+

- llama-factory

|

| 6 |

+

- lora

|

| 7 |

- trl

|

| 8 |

- dpo

|

|

|

|

| 9 |

- generated_from_trainer

|

| 10 |

base_model: mistralai/Mistral-7B-Instruct-v0.2

|

| 11 |

model-index:

|

|

|

|

| 18 |

|

| 19 |

# Mistral-7B-Instruct-v0.2-ORPO-SALT

|

| 20 |

|

| 21 |

+

This model is a fine-tuned version of [mistralai/Mistral-7B-Instruct-v0.2](https://huggingface.co/mistralai/Mistral-7B-Instruct-v0.2) on the dpo_mix_en and the bct_non_cot_dpo_1000 datasets.

|

| 22 |

It achieves the following results on the evaluation set:

|

| 23 |

- Loss: 0.8630

|

| 24 |

- Rewards/chosen: -0.0798

|

all_results.json

ADDED

|

@@ -0,0 +1,22 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"epoch": 2.9969690846635686,

|

| 3 |

+

"eval_logits/chosen": -2.8472585678100586,

|

| 4 |

+

"eval_logits/rejected": -2.8558220863342285,

|

| 5 |

+

"eval_logps/chosen": -0.7975095510482788,

|

| 6 |

+

"eval_logps/rejected": -1.0328320264816284,

|

| 7 |

+

"eval_loss": 0.8629826903343201,

|

| 8 |

+

"eval_odds_ratio_loss": 0.6547309160232544,

|

| 9 |

+

"eval_rewards/accuracies": 0.5618181824684143,

|

| 10 |

+

"eval_rewards/chosen": -0.07975095510482788,

|

| 11 |

+

"eval_rewards/margins": 0.02353225089609623,

|

| 12 |

+

"eval_rewards/rejected": -0.10328320413827896,

|

| 13 |

+

"eval_runtime": 194.6972,

|

| 14 |

+

"eval_samples_per_second": 5.65,

|

| 15 |

+

"eval_sft_loss": 0.7975095510482788,

|

| 16 |

+

"eval_steps_per_second": 2.825,

|

| 17 |

+

"total_flos": 2.1013894560546816e+18,

|

| 18 |

+

"train_loss": 0.9013287582572352,

|

| 19 |

+

"train_runtime": 18144.1457,

|

| 20 |

+

"train_samples_per_second": 1.637,

|

| 21 |

+

"train_steps_per_second": 0.102

|

| 22 |

+

}

|

eval_results.json

ADDED

|

@@ -0,0 +1,17 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"epoch": 2.9969690846635686,

|

| 3 |

+

"eval_logits/chosen": -2.8472585678100586,

|

| 4 |

+

"eval_logits/rejected": -2.8558220863342285,

|

| 5 |

+

"eval_logps/chosen": -0.7975095510482788,

|

| 6 |

+

"eval_logps/rejected": -1.0328320264816284,

|

| 7 |

+

"eval_loss": 0.8629826903343201,

|

| 8 |

+

"eval_odds_ratio_loss": 0.6547309160232544,

|

| 9 |

+

"eval_rewards/accuracies": 0.5618181824684143,

|

| 10 |

+

"eval_rewards/chosen": -0.07975095510482788,

|

| 11 |

+

"eval_rewards/margins": 0.02353225089609623,

|

| 12 |

+

"eval_rewards/rejected": -0.10328320413827896,

|

| 13 |

+

"eval_runtime": 194.6972,

|

| 14 |

+

"eval_samples_per_second": 5.65,

|

| 15 |

+

"eval_sft_loss": 0.7975095510482788,

|

| 16 |

+

"eval_steps_per_second": 2.825

|

| 17 |

+

}

|

train_results.json

ADDED

|

@@ -0,0 +1,8 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"epoch": 2.9969690846635686,

|

| 3 |

+

"total_flos": 2.1013894560546816e+18,

|

| 4 |

+

"train_loss": 0.9013287582572352,

|

| 5 |

+

"train_runtime": 18144.1457,

|

| 6 |

+

"train_samples_per_second": 1.637,

|

| 7 |

+

"train_steps_per_second": 0.102

|

| 8 |

+

}

|

trainer_state.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

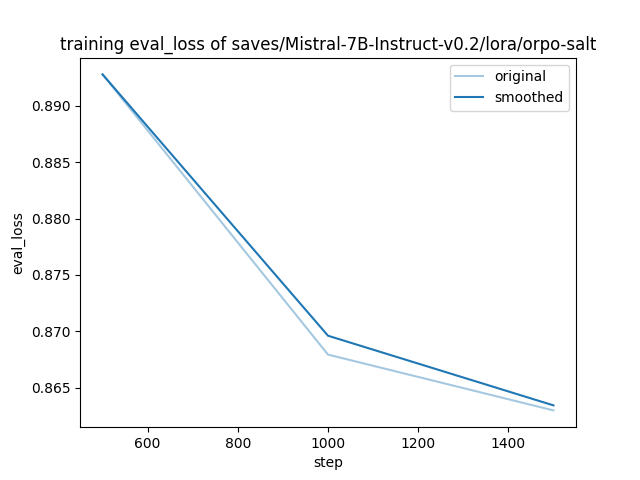

training_eval_loss.png

ADDED

|

training_loss.png

ADDED

|

training_rewards_accuracies.png

ADDED

|

training_sft_loss.png

ADDED

|