Replace with clean markdown card

Browse files

README.md

CHANGED

|

@@ -10,18 +10,16 @@ tags:

|

|

| 10 |

- braindecode

|

| 11 |

- foundation-model

|

| 12 |

- convolutional

|

| 13 |

-

- transformer

|

| 14 |

---

|

| 15 |

|

| 16 |

# Labram

|

| 17 |

|

| 18 |

-

Labram from Jiang, W B et al (2024) .

|

| 19 |

|

| 20 |

-

> **Architecture-only repository.**

|

| 21 |

> `braindecode.models.Labram` class. **No pretrained weights are

|

| 22 |

-

> distributed here**

|

| 23 |

-

> data

|

| 24 |

-

> separately.

|

| 25 |

|

| 26 |

## Quick start

|

| 27 |

|

|

@@ -40,241 +38,59 @@ model = Labram(

|

|

| 40 |

)

|

| 41 |

```

|

| 42 |

|

| 43 |

-

The signal-shape arguments above are

|

| 44 |

-

|

| 45 |

|

| 46 |

## Documentation

|

| 47 |

-

|

| 48 |

-

-

|

| 49 |

-

<https://braindecode.org/stable/generated/braindecode.models.Labram.html>

|

| 50 |

-

- Interactive browser with live instantiation:

|

| 51 |

<https://huggingface.co/spaces/braindecode/model-explorer>

|

| 52 |

- Source on GitHub: <https://github.com/braindecode/braindecode/blob/master/braindecode/models/labram.py#L196>

|

| 53 |

|

| 54 |

-

## Architecture description

|

| 55 |

-

|

| 56 |

-

The block below is the rendered class docstring (parameters,

|

| 57 |

-

references, architecture figure where available).

|

| 58 |

-

|

| 59 |

-

<div class='bd-doc'><main>

|

| 60 |

-

<p>Labram from Jiang, W B et al (2024) [Jiang2024]_.</p>

|

| 61 |

-

<span style="display:inline-block;padding:2px 8px;border-radius:4px;background:#5cb85c;color:white;font-size:11px;font-weight:600;margin-right:4px;">Convolution</span><span style="display:inline-block;padding:2px 8px;border-radius:4px;background:#d9534f;color:white;font-size:11px;font-weight:600;margin-right:4px;">Foundation Model</span>

|

| 62 |

-

|

| 63 |

-

|

| 64 |

-

|

| 65 |

-

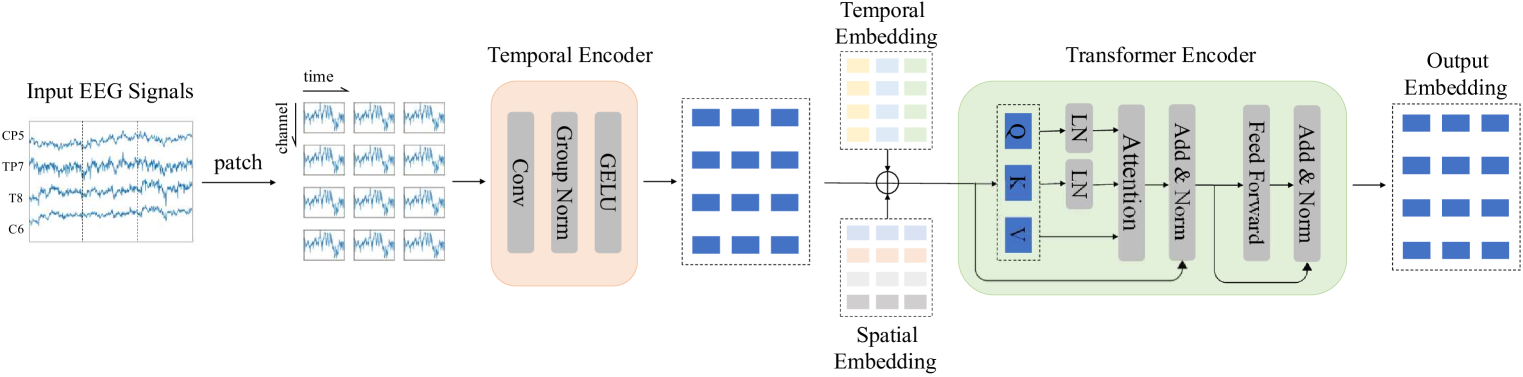

.. figure:: https://arxiv.org/html/2405.18765v1/x1.png

|

| 66 |

-

:align: center

|

| 67 |

-

:alt: Labram Architecture.

|

| 68 |

-

|

| 69 |

-

Large Brain Model for Learning Generic Representations with Tremendous

|

| 70 |

-

EEG Data in BCI from [Jiang2024]_.

|

| 71 |

-

|

| 72 |

-

This is an **adaptation** of the code [Code2024]_ from the Labram model.

|

| 73 |

-

|

| 74 |

-

The model is transformer architecture with **strong** inspiration from

|

| 75 |

-

BEiTv2 [BeiTv2]_.

|

| 76 |

-

|

| 77 |

-

The models can be used in two modes:

|

| 78 |

-

|

| 79 |

-

- Neural Tokenizer: Design to get an embedding layers (e.g. classification).

|

| 80 |

-

- Neural Decoder: To extract the ampliture and phase outputs with a VQSNP.

|

| 81 |

-

|

| 82 |

-

The braindecode's modification is to allow the model to be used in

|

| 83 |

-

with an input shape of (batch, n_chans, n_times), if neural tokenizer

|

| 84 |

-

equals True. The original implementation uses (batch, n_chans, n_patches,

|

| 85 |

-

patch_size) as input with static segmentation of the input data.

|

| 86 |

-

|

| 87 |

-

The models have the following sequence of steps::

|

| 88 |

-

|

| 89 |

-

if neural tokenizer:

|

| 90 |

-

- SegmentPatch: Segment the input data in patches;

|

| 91 |

-

- TemporalConv: Apply a temporal convolution to the segmented data;

|

| 92 |

-

- Residual adding cls, temporal and position embeddings (optional);

|

| 93 |

-

- WindowsAttentionBlock: Apply a windows attention block to the data;

|

| 94 |

-

- LayerNorm: Apply layer normalization to the data;

|

| 95 |

-

- Linear: An head linear layer to transformer the data into classes.

|

| 96 |

-

|

| 97 |

-

else:

|

| 98 |

-

- PatchEmbed: Apply a patch embedding to the input data;

|

| 99 |

-

- Residual adding cls, temporal and position embeddings (optional);

|

| 100 |

-

- WindowsAttentionBlock: Apply a windows attention block to the data;

|

| 101 |

-

- LayerNorm: Apply layer normalization to the data;

|

| 102 |

-

- Linear: An head linear layer to transformer the data into classes.

|

| 103 |

-

|

| 104 |

-

.. important::

|

| 105 |

-

**Pre-trained Weights Available**

|

| 106 |

-

|

| 107 |

-

This model has pre-trained weights available on the Hugging Face Hub.

|

| 108 |

-

You can load them using:

|

| 109 |

-

|

| 110 |

-

.. code:: python

|

| 111 |

-

from braindecode.models import Labram

|

| 112 |

-

|

| 113 |

-

# Load pre-trained model from Hugging Face Hub

|

| 114 |

-

model = Labram.from_pretrained("braindecode/labram-pretrained")

|

| 115 |

-

|

| 116 |

-

To push your own trained model to the Hub:

|

| 117 |

-

|

| 118 |

-

.. code:: python

|

| 119 |

-

# After training your model

|

| 120 |

-

model.push_to_hub(

|

| 121 |

-

repo_id="username/my-labram-model", commit_message="Upload trained Labram model"

|

| 122 |

-

)

|

| 123 |

-

|

| 124 |

-

Requires installing ``braindecode[hug]`` for Hub integration.

|

| 125 |

-

|

| 126 |

-

.. versionadded:: 0.9

|

| 127 |

-

|

| 128 |

-

|

| 129 |

-

Examples

|

| 130 |

-

--------

|

| 131 |

-

Load pre-trained weights::

|

| 132 |

-

|

| 133 |

-

>>> import torch

|

| 134 |

-

>>> from braindecode.models import Labram

|

| 135 |

-

>>> model = Labram(n_times=1600, n_chans=64, n_outputs=4)

|

| 136 |

-

>>> url = "https://huggingface.co/braindecode/Labram-Braindecode/blob/main/braindecode_labram_base.pt"

|

| 137 |

-

>>> state = torch.hub.load_state_dict_from_url(url, progress=True)

|

| 138 |

-

>>> model.load_state_dict(state)

|

| 139 |

-

|

| 140 |

-

|

| 141 |

-

Parameters

|

| 142 |

-

----------

|

| 143 |

-

patch_size : int

|

| 144 |

-

The size of the patch to be used in the patch embedding.

|

| 145 |

-

learned_patcher : bool

|

| 146 |

-

Whether to use a learned patch embedding (via a convolutional layer) or a fixed patch embedding (via rearrangement).

|

| 147 |

-

embed_dim : int

|

| 148 |

-

The dimension of the embedding.

|

| 149 |

-

conv_in_channels : int

|

| 150 |

-

The number of convolutional input channels.

|

| 151 |

-

conv_out_channels : int

|

| 152 |

-

The number of convolutional output channels.

|

| 153 |

-

num_layers : int (default=12)

|

| 154 |

-

The number of attention layers of the model.

|

| 155 |

-

num_heads : int (default=10)

|

| 156 |

-

The number of attention heads.

|

| 157 |

-

mlp_ratio : float (default=4.0)

|

| 158 |

-

The expansion ratio of the mlp layer

|

| 159 |

-

qkv_bias : bool (default=False)

|

| 160 |

-

If True, add a learnable bias to the query, key, and value tensors.

|

| 161 |

-

qk_norm : Pytorch Normalize layer (default=nn.LayerNorm)

|

| 162 |

-

If not None, apply LayerNorm to the query and key tensors.

|

| 163 |

-

Default is nn.LayerNorm for better weight transfer from original LaBraM.

|

| 164 |

-

Set to None to disable Q,K normalization.

|

| 165 |

-

qk_scale : float (default=None)

|

| 166 |

-

If not None, use this value as the scale factor. If None,

|

| 167 |

-

use head_dim**-0.5, where head_dim = dim // num_heads.

|

| 168 |

-

drop_prob : float (default=0.0)

|

| 169 |

-

Dropout rate for the attention weights.

|

| 170 |

-

attn_drop_prob : float (default=0.0)

|

| 171 |

-

Dropout rate for the attention weights.

|

| 172 |

-

drop_path_prob : float (default=0.0)

|

| 173 |

-

Dropout rate for the attention weights used on DropPath.

|

| 174 |

-

norm_layer : Pytorch Normalize layer (default=nn.LayerNorm)

|

| 175 |

-

The normalization layer to be used.

|

| 176 |

-

init_values : float (default=0.1)

|

| 177 |

-

If not None, use this value to initialize the gamma_1 and gamma_2

|

| 178 |

-

parameters for residual scaling. Default is 0.1 for better weight

|

| 179 |

-

transfer from original LaBraM. Set to None to disable.

|

| 180 |

-

use_abs_pos_emb : bool (default=True)

|

| 181 |

-

If True, use absolute position embedding.

|

| 182 |

-

use_mean_pooling : bool (default=True)

|

| 183 |

-

If True, use mean pooling.

|

| 184 |

-

init_scale : float (default=0.001)

|

| 185 |

-

The initial scale to be used in the parameters of the model.

|

| 186 |

-

neural_tokenizer : bool (default=True)

|

| 187 |

-

The model can be used in two modes: Neural Tokenizer or Neural Decoder.

|

| 188 |

-

attn_head_dim : bool (default=None)

|

| 189 |

-

The head dimension to be used in the attention layer, to be used only

|

| 190 |

-

during pre-training.

|

| 191 |

-

activation: nn.Module, default=nn.GELU

|

| 192 |

-

Activation function class to apply. Should be a PyTorch activation

|

| 193 |

-

module class like ``nn.ReLU`` or ``nn.ELU``. Default is ``nn.GELU``.

|

| 194 |

-

|

| 195 |

-

References

|

| 196 |

-

----------

|

| 197 |

-

.. [Jiang2024] Wei-Bang Jiang, Li-Ming Zhao, Bao-Liang Lu. 2024, May.

|

| 198 |

-

Large Brain Model for Learning Generic Representations with Tremendous

|

| 199 |

-

EEG Data in BCI. The Twelfth International Conference on Learning

|

| 200 |

-

Representations, ICLR.

|

| 201 |

-

.. [Code2024] Wei-Bang Jiang, Li-Ming Zhao, Bao-Liang Lu. 2024. Labram

|

| 202 |

-

Large Brain Model for Learning Generic Representations with Tremendous

|

| 203 |

-

EEG Data in BCI. GitHub https://github.com/935963004/LaBraM

|

| 204 |

-

(accessed 2024-03-02)

|

| 205 |

-

.. [BeiTv2] Zhiliang Peng, Li Dong, Hangbo Bao, Qixiang Ye, Furu Wei. 2024.

|

| 206 |

-

BEiT v2: Masked Image Modeling with Vector-Quantized Visual Tokenizers.

|

| 207 |

-

arXiv:2208.06366 [cs.CV]

|

| 208 |

-

|

| 209 |

-

.. rubric:: Hugging Face Hub integration

|

| 210 |

-

|

| 211 |

-

When the optional ``huggingface_hub`` package is installed, all models

|

| 212 |

-

automatically gain the ability to be pushed to and loaded from the

|

| 213 |

-

Hugging Face Hub. Install with::

|

| 214 |

-

|

| 215 |

-

pip install braindecode[hub]

|

| 216 |

-

|

| 217 |

-

**Pushing a model to the Hub:**

|

| 218 |

-

|

| 219 |

-

.. code::

|

| 220 |

-

from braindecode.models import Labram

|

| 221 |

-

|

| 222 |

-

# Train your model

|

| 223 |

-

model = Labram(n_chans=22, n_outputs=4, n_times=1000)

|

| 224 |

-

# ... training code ...

|

| 225 |

-

|

| 226 |

-

# Push to the Hub

|

| 227 |

-

model.push_to_hub(

|

| 228 |

-

repo_id="username/my-labram-model",

|

| 229 |

-

commit_message="Initial model upload",

|

| 230 |

-

)

|

| 231 |

-

|

| 232 |

-

**Loading a model from the Hub:**

|

| 233 |

-

|

| 234 |

-

.. code::

|

| 235 |

-

from braindecode.models import Labram

|

| 236 |

-

|

| 237 |

-

# Load pretrained model

|

| 238 |

-

model = Labram.from_pretrained("username/my-labram-model")

|

| 239 |

-

|

| 240 |

-

# Load with a different number of outputs (head is rebuilt automatically)

|

| 241 |

-

model = Labram.from_pretrained("username/my-labram-model", n_outputs=4)

|

| 242 |

-

|

| 243 |

-

**Extracting features and replacing the head:**

|

| 244 |

|

| 245 |

-

|

| 246 |

-

import torch

|

| 247 |

|

| 248 |

-

|

| 249 |

-

# Extract encoder features (consistent dict across all models)

|

| 250 |

-

out = model(x, return_features=True)

|

| 251 |

-

features = out["features"]

|

| 252 |

|

| 253 |

-

# Replace the classification head

|

| 254 |

-

model.reset_head(n_outputs=10)

|

| 255 |

|

| 256 |

-

|

| 257 |

|

| 258 |

-

|

| 259 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 260 |

|

| 261 |

-

config = model.get_config() # all __init__ params

|

| 262 |

-

with open("config.json", "w") as f:

|

| 263 |

-

json.dump(config, f)

|

| 264 |

|

| 265 |

-

|

| 266 |

|

| 267 |

-

|

| 268 |

-

|

| 269 |

-

|

| 270 |

|

| 271 |

-

See :ref:`load-pretrained-models` for a complete tutorial.</main>

|

| 272 |

-

</div>

|

| 273 |

|

| 274 |

## Citation

|

| 275 |

|

| 276 |

-

|

| 277 |

-

*References* section above) and braindecode:

|

| 278 |

|

| 279 |

```bibtex

|

| 280 |

@article{aristimunha2025braindecode,

|

|

|

|

| 10 |

- braindecode

|

| 11 |

- foundation-model

|

| 12 |

- convolutional

|

|

|

|

| 13 |

---

|

| 14 |

|

| 15 |

# Labram

|

| 16 |

|

| 17 |

+

Labram from Jiang, W B et al (2024) [Jiang2024].

|

| 18 |

|

| 19 |

+

> **Architecture-only repository.** Documents the

|

| 20 |

> `braindecode.models.Labram` class. **No pretrained weights are

|

| 21 |

+

> distributed here.** Instantiate the model and train it on your own

|

| 22 |

+

> data.

|

|

|

|

| 23 |

|

| 24 |

## Quick start

|

| 25 |

|

|

|

|

| 38 |

)

|

| 39 |

```

|

| 40 |

|

| 41 |

+

The signal-shape arguments above are illustrative defaults — adjust to

|

| 42 |

+

match your recording.

|

| 43 |

|

| 44 |

## Documentation

|

| 45 |

+

- Full API reference: <https://braindecode.org/stable/generated/braindecode.models.Labram.html>

|

| 46 |

+

- Interactive browser (live instantiation, parameter counts):

|

|

|

|

|

|

|

| 47 |

<https://huggingface.co/spaces/braindecode/model-explorer>

|

| 48 |

- Source on GitHub: <https://github.com/braindecode/braindecode/blob/master/braindecode/models/labram.py#L196>

|

| 49 |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 50 |

|

| 51 |

+

## Architecture

|

|

|

|

| 52 |

|

| 53 |

+

|

|

|

|

|

|

|

|

|

|

| 54 |

|

|

|

|

|

|

|

| 55 |

|

| 56 |

+

## Parameters

|

| 57 |

|

| 58 |

+

| Parameter | Type | Description |

|

| 59 |

+

|---|---|---|

|

| 60 |

+

| `patch_size` | int | The size of the patch to be used in the patch embedding. |

|

| 61 |

+

| `learned_patcher` | bool | Whether to use a learned patch embedding (via a convolutional layer) or a fixed patch embedding (via rearrangement). |

|

| 62 |

+

| `embed_dim` | int | The dimension of the embedding. |

|

| 63 |

+

| `conv_in_channels` | int | The number of convolutional input channels. |

|

| 64 |

+

| `conv_out_channels` | int | The number of convolutional output channels. |

|

| 65 |

+

| `num_layers` | int (default=12) | The number of attention layers of the model. |

|

| 66 |

+

| `num_heads` | int (default=10) | The number of attention heads. |

|

| 67 |

+

| `mlp_ratio` | float (default=4.0) | The expansion ratio of the mlp layer |

|

| 68 |

+

| `qkv_bias` | bool (default=False) | If True, add a learnable bias to the query, key, and value tensors. |

|

| 69 |

+

| `qk_norm` | Pytorch Normalize layer (default=nn.LayerNorm) | If not None, apply LayerNorm to the query and key tensors. Default is nn.LayerNorm for better weight transfer from original LaBraM. Set to None to disable Q,K normalization. |

|

| 70 |

+

| `qk_scale` | float (default=None) | If not None, use this value as the scale factor. If None, use head_dim**-0.5, where head_dim = dim // num_heads. |

|

| 71 |

+

| `drop_prob` | float (default=0.0) | Dropout rate for the attention weights. |

|

| 72 |

+

| `attn_drop_prob` | float (default=0.0) | Dropout rate for the attention weights. |

|

| 73 |

+

| `drop_path_prob` | float (default=0.0) | Dropout rate for the attention weights used on DropPath. |

|

| 74 |

+

| `norm_layer` | Pytorch Normalize layer (default=nn.LayerNorm) | The normalization layer to be used. |

|

| 75 |

+

| `init_values` | float (default=0.1) | If not None, use this value to initialize the gamma_1 and gamma_2 parameters for residual scaling. Default is 0.1 for better weight transfer from original LaBraM. Set to None to disable. |

|

| 76 |

+

| `use_abs_pos_emb` | bool (default=True) | If True, use absolute position embedding. |

|

| 77 |

+

| `use_mean_pooling` | bool (default=True) | If True, use mean pooling. |

|

| 78 |

+

| `init_scale` | float (default=0.001) | The initial scale to be used in the parameters of the model. |

|

| 79 |

+

| `neural_tokenizer` | bool (default=True) | The model can be used in two modes: Neural Tokenizer or Neural Decoder. |

|

| 80 |

+

| `attn_head_dim` | bool (default=None) | The head dimension to be used in the attention layer, to be used only during pre-training. |

|

| 81 |

+

| `activation: nn.Module, default=nn.GELU` | — | Activation function class to apply. Should be a PyTorch activation module class like `nn.ReLU` or `nn.ELU`. Default is `nn.GELU`. |

|

| 82 |

|

|

|

|

|

|

|

|

|

|

| 83 |

|

| 84 |

+

## References

|

| 85 |

|

| 86 |

+

1. Wei-Bang Jiang, Li-Ming Zhao, Bao-Liang Lu. 2024, May. Large Brain Model for Learning Generic Representations with Tremendous EEG Data in BCI. The Twelfth International Conference on Learning Representations, ICLR.

|

| 87 |

+

2. Wei-Bang Jiang, Li-Ming Zhao, Bao-Liang Lu. 2024. Labram Large Brain Model for Learning Generic Representations with Tremendous EEG Data in BCI. GitHub https://github.com/935963004/LaBraM (accessed 2024-03-02)

|

| 88 |

+

3. Zhiliang Peng, Li Dong, Hangbo Bao, Qixiang Ye, Furu Wei. 2024. BEiT v2: Masked Image Modeling with Vector-Quantized Visual Tokenizers. arXiv:2208.06366 [cs.CV]

|

| 89 |

|

|

|

|

|

|

|

| 90 |

|

| 91 |

## Citation

|

| 92 |

|

| 93 |

+

Cite the original architecture paper (see *References* above) and braindecode:

|

|

|

|

| 94 |

|

| 95 |

```bibtex

|

| 96 |

@article{aristimunha2025braindecode,

|