Replace with clean markdown card


README.md

CHANGED

|

@@ -13,13 +13,12 @@ tags:

|

|

| 13 |

|

| 14 |

# EEGInceptionERP

|

| 15 |

|

| 16 |

-

EEG Inception for ERP-based from Santamaria-Vazquez et al (2020) .

|

| 17 |

|

| 18 |

-

> **Architecture-only repository.**

|

| 19 |

> `braindecode.models.EEGInceptionERP` class. **No pretrained weights are

|

| 20 |

-

> distributed here**

|

| 21 |

-

> data

|

| 22 |

-

> separately.

|

| 23 |

|

| 24 |

## Quick start

|

| 25 |

|

|

@@ -38,245 +37,46 @@ model = EEGInceptionERP(

|

|

| 38 |

)

|

| 39 |

```

|

| 40 |

|

| 41 |

-

The signal-shape arguments above are

|

| 42 |

-

|

| 43 |

|

| 44 |

## Documentation

|

| 45 |

-

|

| 46 |

-

-

|

| 47 |

-

<https://braindecode.org/stable/generated/braindecode.models.EEGInceptionERP.html>

|

| 48 |

-

- Interactive browser with live instantiation:

|

| 49 |

<https://huggingface.co/spaces/braindecode/model-explorer>

|

| 50 |

- Source on GitHub: <https://github.com/braindecode/braindecode/blob/master/braindecode/models/eeginception_erp.py#L15>

|

| 51 |

|

| 52 |

-

## Architecture description

|

| 53 |

-

|

| 54 |

-

The block below is the rendered class docstring (parameters,

|

| 55 |

-

references, architecture figure where available).

|

| 56 |

-

|

| 57 |

-

<div class='bd-doc'><main>

|

| 58 |

-

<p>EEG Inception for ERP-based from Santamaria-Vazquez et al (2020) [santamaria2020]_.</p>

|

| 59 |

-

<span style="display:inline-block;padding:2px 8px;border-radius:4px;background:#5cb85c;color:white;font-size:11px;font-weight:600;margin-right:4px;">Convolution</span>

|

| 60 |

-

|

| 61 |

-

|

| 62 |

-

|

| 63 |

-

.. figure:: https://braindecode.org/dev/_static/model/eeginceptionerp.jpg

|

| 64 |

-

:align: center

|

| 65 |

-

:alt: EEGInceptionERP Architecture

|

| 66 |

-

|

| 67 |

-

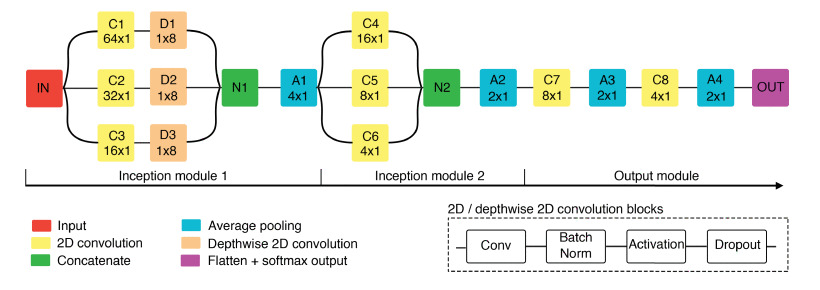

Figure: Overview of EEG-Inception architecture. 2D convolution blocks and depthwise 2D convolution blocks include batch normalization, activation and dropout regularization. The kernel size is displayed for convolutional and average pooling layers.

|

| 68 |

-

|

| 69 |

-

.. rubric:: Architectural Overview

|

| 70 |

-

|

| 71 |

-

A two-stage, multi-scale CNN tailored to ERP detection from short (0-1000 ms) single-trial epochs. Signals are mapped through

|

| 72 |

-

* (i) :class:`_InceptionModule1` multi-scale temporal feature extraction plus per-branch spatial mixing;

|

| 73 |

-

* (ii) :class:`_InceptionModule2` deeper multi-scale refinement at a reduced temporal resolution; and

|

| 74 |

-

* (iii) :class:`_OutputModule` compact aggregation and linear readout.

|

| 75 |

-

|

| 76 |

-

.. rubric:: Macro Components

|

| 77 |

-

|

| 78 |

-

- :class:`_InceptionModule1` **(multi-scale temporal + spatial mixing)**

|

| 79 |

-

|

| 80 |

-

- *Operations.*

|

| 81 |

-

|

| 82 |

-

- `EEGInceptionERP.c1`: :class:`torch.nn.Conv2d` ``k=(64,1)``, stride ``(1,1)``, *same* pad on input reshaped to ``(B,1,128,8)`` → BN → activation → dropout.

|

| 83 |

-

- `EEGInceptionERP.d1`: :class:`torch.nn.Conv2d` (depthwise) ``k=(1,8)``, *valid* pad over channels → BN → activation → dropout.

|

| 84 |

-

- `EEGInceptionERP.c2`: :class:`torch.nn.Conv2d` ``k=(32,1)`` → BN → activation → dropout; then `EEGInceptionERP.d2` depthwise ``k=(1,8)`` → BN → activation → dropout.

|

| 85 |

-

- `EEGInceptionERP.c3`: :class:`torch.nn.Conv2d` ``k=(16,1)`` → BN → activation → dropout; then `EEGInceptionERP.d3` depthwise ``k=(1,8)`` → BN → activation → dropout.

|

| 86 |

-

- `EEGInceptionERP.n1`: :class:`torch.nn.Concat` over branch features.

|

| 87 |

-

- `EEGInceptionERP.a1`: :class:`torch.nn.AvgPool2d` ``pool=(4,1)``, stride ``(4,1)`` for temporal downsampling.

|

| 88 |

-

|

| 89 |

-

*Interpretability/robustness.* Depthwise `1 x n_chans` layers act as learnable montage-wide spatial filters per temporal scale; pooling stabilizes against jitter.

|

| 90 |

-

|

| 91 |

-

- :class:`_InceptionModule2` **(refinement at coarser timebase)**

|

| 92 |

-

|

| 93 |

-

- *Operations.*

|

| 94 |

-

|

| 95 |

-

- `EEGInceptionERP.c4`: :class:`torch.nn.Conv2d` ``k=(16,1)`` → BN → activation → dropout.

|

| 96 |

-

- `EEGInceptionERP.c5`: :class:`torch.nn.Conv2d` ``k=(8,1)`` → BN → activation → dropout.

|

| 97 |

-

- `EEGInceptionERP.c6`: :class:`torch.nn.Conv2d` ``k=(4,1)`` → BN → activation → dropout.

|

| 98 |

-

- `EEGInceptionERP.n2`: :class:`torch.nn.Concat` (merge C4-C6 outputs).

|

| 99 |

-

- `EEGInceptionERP.a2`: :class:`torch.nn.AvgPool2d` ``pool=(2,1)``, stride ``(2,1)``.

|

| 100 |

-

- `EEGInceptionERP.c7`: :class:`torch.nn.Conv2d` ``k=(8,1)`` → BN → activation → dropout; then `EEGInceptionERP.a3`: :class:`torch.nn.AvgPool2d` ``pool=(2,1)``.

|

| 101 |

-

- `EEGInceptionERP.c8`: :class:`torch.nn.Conv2d` ``k=(4,1)`` → BN → activation → dropout; then `EEGInceptionERP.a4`: :class:`torch.nn.AvgPool2d` ``pool=(2,1)``.

|

| 102 |

-

|

| 103 |

-

*Role.* Adds higher-level, shorter-window evidence while progressively compressing temporal dimension.

|

| 104 |

-

|

| 105 |

-

- :class:`_OutputModule` **(aggregation + readout)**

|

| 106 |

-

|

| 107 |

-

- *Operations.*

|

| 108 |

-

|

| 109 |

-

- :class:`torch.nn.Flatten`

|

| 110 |

-

- :class:`torch.nn.Linear` ``(features → 2)``

|

| 111 |

-

|

| 112 |

-

.. rubric:: Convolutional Details

|

| 113 |

-

|

| 114 |

-

- **Temporal (where time-domain patterns are learned).**

|

| 115 |

-

|

| 116 |

-

First module uses 1D temporal kernels along the 128-sample axis: ``64``, ``32``, ``16``

|

| 117 |

-

(≈500, 250, 125 ms at 128 Hz). After ``pool=(4,1)``, the second module applies ``16``,

|

| 118 |

-

``8``, ``4`` (≈125, 62.5, 31.25 ms at the pooled rate). All strides are ``1`` in convs;

|

| 119 |

-

temporal resolution changes only via average pooling.

|

| 120 |

-

|

| 121 |

-

- **Spatial (how electrodes are processed).**

|

| 122 |

-

|

| 123 |

-

Depthwise convs with ``k=(1,8)`` span all channels and are applied **per temporal branch**,

|

| 124 |

-

yielding scale-specific channel projections (no cross-branch mixing until concatenation).

|

| 125 |

-

There is no full 2D mixing kernel; spatial mixing is factorized and lightweight.

|

| 126 |

-

|

| 127 |

-

- **Spectral (how frequency information is captured).**

|

| 128 |

-

|

| 129 |

-

No explicit transform; multiple temporal kernels form a *learned filter bank* over

|

| 130 |

-

ERP-relevant bands. Successive pooling acts as low-pass integration to emphasize sustained

|

| 131 |

-

post-stimulus components.

|

| 132 |

-

|

| 133 |

-

.. rubric:: Additional Mechanisms

|

| 134 |

-

|

| 135 |

-

- Every conv/depthwise block includes **BatchNorm**, nonlinearity (paper used grid-searched activation), and **dropout**.

|

| 136 |

-

- Two Inception stages followed by short convs and pooling keep parameters small (≈15k reported) while preserving multi-scale evidence.

|

| 137 |

-

- Expected input: epochs of shape ``(B,1,128,8)`` (time x channels as a 2D map) or reshaped from ``(B,8,128)`` with an added singleton feature dimension.

|

| 138 |

-

|

| 139 |

-

.. rubric:: Usage and Configuration

|

| 140 |

-

|

| 141 |

-

- **Key knobs.** Number of filters per branch; kernel lengths in both Inception modules; depthwise kernel over channels (typically ``n_chans``); pooling lengths/strides; dropout rate; choice of activation.

|

| 142 |

-

- **Training tips.** Use 0-1000 ms windows at 128 Hz with CAR; tune activation and dropout (they strongly affect performance); early-stop on validation loss when overfitting emerges.

|

| 143 |

-

|

| 144 |

-

.. rubric:: Implementation Details

|

| 145 |

-

|

| 146 |

-

The model is strongly based on the original InceptionNet for an image. The main goal is

|

| 147 |

-

to extract features in parallel with different scales. The authors extracted three scales

|

| 148 |

-

proportional to the window sample size. The network had three parts:

|

| 149 |

-

1-larger inception block largest, 2-smaller inception block followed by 3-bottleneck

|

| 150 |

-

for classification.

|

| 151 |

-

|

| 152 |

-

One advantage of the EEG-Inception block is that it allows a network

|

| 153 |

-

to learn simultaneous components of low and high frequency associated with the signal.

|

| 154 |

-

The winners of BEETL Competition/NeurIps 2021 used parts of the

|

| 155 |

-

model [beetl]_.

|

| 156 |

-

|

| 157 |

-

The code for the paper and this model is also available at [santamaria2020]_

|

| 158 |

-

and an adaptation for PyTorch [2]_.

|

| 159 |

-

|

| 160 |

-

|

| 161 |

-

Parameters

|

| 162 |

-

----------

|

| 163 |

-

n_times : int, optional

|

| 164 |

-

Size of the input, in number of samples. Set to 128 (1s) as in

|

| 165 |

-

[santamaria2020]_.

|

| 166 |

-

sfreq : float, optional

|

| 167 |

-

EEG sampling frequency. Defaults to 128 as in [santamaria2020]_.

|

| 168 |

-

drop_prob : float, optional

|

| 169 |

-

Dropout rate inside all the network. Defaults to 0.5 as in

|

| 170 |

-

[santamaria2020]_.

|

| 171 |

-

scales_samples_s: list(float), optional

|

| 172 |

-

Windows for inception block. Temporal scale (s) of the convolutions on

|

| 173 |

-

each Inception module. This parameter determines the kernel sizes of

|

| 174 |

-

the filters. Defaults to 0.5, 0.25, 0.125 seconds, as in

|

| 175 |

-

[santamaria2020]_.

|

| 176 |

-

n_filters : int, optional

|

| 177 |

-

Initial number of convolutional filters. Defaults to 8 as in

|

| 178 |

-

[santamaria2020]_.

|

| 179 |

-

activation: nn.Module, optional

|

| 180 |

-

Activation function. Defaults to ELU activation as in

|

| 181 |

-

[santamaria2020]_.

|

| 182 |

-

batch_norm_alpha: float, optional

|

| 183 |

-

Momentum for BatchNorm2d. Defaults to 0.01.

|

| 184 |

-

depth_multiplier: int, optional

|

| 185 |

-

Depth multiplier for the depthwise convolution. Defaults to 2 as in

|

| 186 |

-

[santamaria2020]_.

|

| 187 |

-

pooling_sizes: list(int), optional

|

| 188 |

-

Pooling sizes for the inception blocks. Defaults to 4, 2, 2 and 2, as

|

| 189 |

-

in [santamaria2020]_.

|

| 190 |

-

|

| 191 |

-

|

| 192 |

-

References

|

| 193 |

-

----------

|

| 194 |

-

.. [santamaria2020] Santamaria-Vazquez, E., Martinez-Cagigal, V.,

|

| 195 |

-

Vaquerizo-Villar, F., & Hornero, R. (2020).

|

| 196 |

-

EEG-inception: A novel deep convolutional neural network for assistive

|

| 197 |

-

ERP-based brain-computer interfaces.

|

| 198 |

-

IEEE Transactions on Neural Systems and Rehabilitation Engineering , v. 28.

|

| 199 |

-

Online: http://dx.doi.org/10.1109/TNSRE.2020.3048106

|

| 200 |

-

.. [2] Grifcc. Implementation of the EEGInception in torch (2022).

|

| 201 |

-

Online: https://github.com/Grifcc/EEG/

|

| 202 |

-

.. [beetl] Wei, X., Faisal, A.A., Grosse-Wentrup, M., Gramfort, A., Chevallier, S.,

|

| 203 |

-

Jayaram, V., Jeunet, C., Bakas, S., Ludwig, S., Barmpas, K., Bahri, M., Panagakis,

|

| 204 |

-

Y., Laskaris, N., Adamos, D.A., Zafeiriou, S., Duong, W.C., Gordon, S.M.,

|

| 205 |

-

Lawhern, V.J., Śliwowski, M., Rouanne, V. & Tempczyk, P. (2022).

|

| 206 |

-

2021 BEETL Competition: Advancing Transfer Learning for Subject Independence &

|

| 207 |

-

Heterogeneous EEG Data Sets. Proceedings of the NeurIPS 2021 Competitions and

|

| 208 |

-

Demonstrations Track, in Proceedings of Machine Learning Research

|

| 209 |

-

176:205-219 Available from https://proceedings.mlr.press/v176/wei22a.html.

|

| 210 |

-

|

| 211 |

-

.. rubric:: Hugging Face Hub integration

|

| 212 |

-

|

| 213 |

-

When the optional ``huggingface_hub`` package is installed, all models

|

| 214 |

-

automatically gain the ability to be pushed to and loaded from the

|

| 215 |

-

Hugging Face Hub. Install with::

|

| 216 |

-

|

| 217 |

-

pip install braindecode[hub]

|

| 218 |

-

|

| 219 |

-

**Pushing a model to the Hub:**

|

| 220 |

-

|

| 221 |

-

.. code::

|

| 222 |

-

from braindecode.models import EEGInceptionERP

|

| 223 |

-

|

| 224 |

-

# Train your model

|

| 225 |

-

model = EEGInceptionERP(n_chans=22, n_outputs=4, n_times=1000)

|

| 226 |

-

# ... training code ...

|

| 227 |

-

|

| 228 |

-

# Push to the Hub

|

| 229 |

-

model.push_to_hub(

|

| 230 |

-

repo_id="username/my-eeginceptionerp-model",

|

| 231 |

-

commit_message="Initial model upload",

|

| 232 |

-

)

|

| 233 |

-

|

| 234 |

-

**Loading a model from the Hub:**

|

| 235 |

-

|

| 236 |

-

.. code::

|

| 237 |

-

from braindecode.models import EEGInceptionERP

|

| 238 |

-

|

| 239 |

-

# Load pretrained model

|

| 240 |

-

model = EEGInceptionERP.from_pretrained("username/my-eeginceptionerp-model")

|

| 241 |

-

|

| 242 |

-

# Load with a different number of outputs (head is rebuilt automatically)

|

| 243 |

-

model = EEGInceptionERP.from_pretrained("username/my-eeginceptionerp-model", n_outputs=4)

|

| 244 |

-

|

| 245 |

-

**Extracting features and replacing the head:**

|

| 246 |

|

| 247 |

-

|

| 248 |

-

import torch

|

| 249 |

|

| 250 |

-

|

| 251 |

-

# Extract encoder features (consistent dict across all models)

|

| 252 |

-

out = model(x, return_features=True)

|

| 253 |

-

features = out["features"]

|

| 254 |

|

| 255 |

-

# Replace the classification head

|

| 256 |

-

model.reset_head(n_outputs=10)

|

| 257 |

|

| 258 |

-

|

| 259 |

|

| 260 |

-

|

| 261 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 262 |

|

| 263 |

-

config = model.get_config() # all __init__ params

|

| 264 |

-

with open("config.json", "w") as f:

|

| 265 |

-

json.dump(config, f)

|

| 266 |

|

| 267 |

-

|

| 268 |

|

| 269 |

-

|

| 270 |

-

|

| 271 |

-

|

| 272 |

|

| 273 |

-

See :ref:`load-pretrained-models` for a complete tutorial.</main>

|

| 274 |

-

</div>

|

| 275 |

|

| 276 |

## Citation

|

| 277 |

|

| 278 |

-

|

| 279 |

-

*References* section above) and braindecode:

|

| 280 |

|

| 281 |

```bibtex

|

| 282 |

@article{aristimunha2025braindecode,

|

|

|

|

| 13 |

|

| 14 |

# EEGInceptionERP

|

| 15 |

|

| 16 |

+

EEG Inception for ERP-based from Santamaria-Vazquez et al (2020) [santamaria2020].

|

| 17 |

|

| 18 |

+

> **Architecture-only repository.** Documents the

|

| 19 |

> `braindecode.models.EEGInceptionERP` class. **No pretrained weights are

|

| 20 |

+

> distributed here.** Instantiate the model and train it on your own

|

| 21 |

+

> data.

|

|

|

|

| 22 |

|

| 23 |

## Quick start

|

| 24 |

|

|

|

|

| 37 |

)

|

| 38 |

```

|

| 39 |

|

| 40 |

+

The signal-shape arguments above are illustrative defaults — adjust to

|

| 41 |

+

match your recording.

|

| 42 |

|

| 43 |

## Documentation

|

| 44 |

+

- Full API reference: <https://braindecode.org/stable/generated/braindecode.models.EEGInceptionERP.html>

|

| 45 |

+

- Interactive browser (live instantiation, parameter counts):

|

|

|

|

|

|

|

| 46 |

<https://huggingface.co/spaces/braindecode/model-explorer>

|

| 47 |

- Source on GitHub: <https://github.com/braindecode/braindecode/blob/master/braindecode/models/eeginception_erp.py#L15>

|

| 48 |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 49 |

|

| 50 |

+

## Architecture

|

|

|

|

| 51 |

|

| 52 |

+

|

|

|

|

|

|

|

|

|

|

| 53 |

|

|

|

|

|

|

|

| 54 |

|

| 55 |

+

## Parameters

|

| 56 |

|

| 57 |

+

| Parameter | Type | Description |

|

| 58 |

+

|---|---|---|

|

| 59 |

+

| `n_times` | int, optional | Size of the input, in number of samples. Set to 128 (1s) as in [santamaria2020]. |

|

| 60 |

+

| `sfreq` | float, optional | EEG sampling frequency. Defaults to 128 as in [santamaria2020]. |

|

| 61 |

+

| `drop_prob` | float, optional | Dropout rate inside all the network. Defaults to 0.5 as in [santamaria2020]. |

|

| 62 |

+

| `scales_samples_s: list(float), optional` | — | Windows for inception block. Temporal scale (s) of the convolutions on each Inception module. This parameter determines the kernel sizes of the filters. Defaults to 0.5, 0.25, 0.125 seconds, as in [santamaria2020]. |

|

| 63 |

+

| `n_filters` | int, optional | Initial number of convolutional filters. Defaults to 8 as in [santamaria2020]. |

|

| 64 |

+

| `activation: nn.Module, optional` | — | Activation function. Defaults to ELU activation as in [santamaria2020]. |

|

| 65 |

+

| `batch_norm_alpha: float, optional` | — | Momentum for BatchNorm2d. Defaults to 0.01. |

|

| 66 |

+

| `depth_multiplier: int, optional` | — | Depth multiplier for the depthwise convolution. Defaults to 2 as in [santamaria2020]. |

|

| 67 |

+

| `pooling_sizes: list(int), optional` | — | Pooling sizes for the inception blocks. Defaults to 4, 2, 2 and 2, as in [santamaria2020]. |

|

| 68 |

|

|

|

|

|

|

|

|

|

|

| 69 |

|

| 70 |

+

## References

|

| 71 |

|

| 72 |

+

1. Santamaria-Vazquez, E., Martinez-Cagigal, V., Vaquerizo-Villar, F., & Hornero, R. (2020). EEG-inception: A novel deep convolutional neural network for assistive ERP-based brain-computer interfaces. IEEE Transactions on Neural Systems and Rehabilitation Engineering , v. 28. Online: http://dx.doi.org/10.1109/TNSRE.2020.3048106

|

| 73 |

+

2. Grifcc. Implementation of the EEGInception in torch (2022). Online: https://github.com/Grifcc/EEG/

|

| 74 |

+

3. Wei, X., Faisal, A.A., Grosse-Wentrup, M., Gramfort, A., Chevallier, S., Jayaram, V., Jeunet, C., Bakas, S., Ludwig, S., Barmpas, K., Bahri, M., Panagakis, Y., Laskaris, N., Adamos, D.A., Zafeiriou, S., Duong, W.C., Gordon, S.M., Lawhern, V.J., Śliwowski, M., Rouanne, V. & Tempczyk, P. (2022). 2021 BEETL Competition: Advancing Transfer Learning for Subject Independence & Heterogeneous EEG Data Sets. Proceedings of the NeurIPS 2021 Competitions and Demonstrations Track, in Proceedings of Machine Learning Research 176:205-219 Available from https://proceedings.mlr.press/v176/wei22a.html.

|

| 75 |

|

|

|

|

|

|

|

| 76 |

|

| 77 |

## Citation

|

| 78 |

|

| 79 |

+

Cite the original architecture paper (see *References* above) and braindecode:

|

|

|

|

| 80 |

|

| 81 |

```bibtex

|

| 82 |

@article{aristimunha2025braindecode,

|
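The removed docstring ties the temporal kernel sizes to `scales_samples_s` and `sfreq`, and shrinks the time axis with `pooling_sizes`. The sketch below reproduces that shape arithmetic in plain Python under stated assumptions: first-module kernels are `round(scale * sfreq)` samples, second-module kernels are those values divided by the first pooling size, temporal convolutions use *same* padding, the depthwise kernel spans all channels with *valid* padding, and each average pool has a stride equal to its size. `trace_shapes` is a hypothetical helper, not a braindecode function, and it does not inspect the real `EEGInceptionERP` module.

```python
from typing import Dict, List, Sequence


def trace_shapes(
    n_times: int = 128,
    n_chans: int = 8,
    sfreq: float = 128.0,
    scales_samples_s: Sequence[float] = (0.5, 0.25, 0.125),
    pooling_sizes: Sequence[int] = (4, 2, 2, 2),
) -> Dict[str, list]:
    """Hypothetical helper: shape arithmetic for an EEG-Inception-style model.

    Assumptions (not read from braindecode): 'same'-padded temporal convs keep
    the time length, the depthwise kernel spans all channels with 'valid'
    padding, and every average pool uses stride == pool size.
    """
    # Inception module 1: temporal kernels in samples at the input rate.
    k1: List[int] = [int(round(s * sfreq)) for s in scales_samples_s]

    # Inception module 2: the same time windows, expressed at the rate
    # left after the first pooling stage.
    rate2 = sfreq / pooling_sizes[0]
    k2: List[int] = [k // pooling_sizes[0] for k in k1]

    # Depthwise (1, n_chans) kernel with 'valid' padding collapses channels to 1.
    depthwise_kernel = n_chans
    spatial_width = n_chans - depthwise_kernel + 1

    # Time axis after each non-overlapping average pool (floor division).
    time_lengths = [n_times]
    for p in pooling_sizes:
        time_lengths.append(time_lengths[-1] // p)

    return {
        "k1": k1,
        "k1_ms": [1000.0 * k / sfreq for k in k1],
        "k2": k2,
        "k2_ms": [1000.0 * k / rate2 for k in k2],
        "spatial_width": [spatial_width],
        "time_lengths": time_lengths,
    }


if __name__ == "__main__":
    for name, values in trace_shapes().items():
        print(name, values)
```

With the defaults, this sketch yields kernels of 64, 32, 16 and 16, 8, 4 samples, a channel axis of width 1 after the depthwise step, and a time axis of 128 → 32 → 16 → 8 → 4 samples. Because the second module runs at 128 / 4 = 32 Hz, its 16-, 8- and 4-sample kernels span 500, 250 and 125 ms, the same windows as the first module. Calling `trace_shapes(n_times=256, sfreq=256)` doubles every kernel in this sketch (128, 64, 32 and 32, 16, 8).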