Commit · ddb7519

1 Parent(s): e72f6f2

Upload folder using huggingface_hub

This view is limited to 50 files because it contains too many changes.

- .gitattributes +5 -0

- __pycache__/train.cpython-310.pyc +0 -0

- animatediff/data/__pycache__/dataset.cpython-310.pyc +0 -0

- animatediff/models/__pycache__/attention.cpython-310.pyc +0 -0

- animatediff/models/__pycache__/motion_module.cpython-310.pyc +0 -0

- animatediff/models/__pycache__/resnet.cpython-310.pyc +0 -0

- animatediff/models/__pycache__/unet.cpython-310.pyc +0 -0

- animatediff/models/__pycache__/unet_blocks.cpython-310.pyc +0 -0

- animatediff/pipelines/__pycache__/pipeline_animation.cpython-310.pyc +0 -0

- animatediff/utils/__pycache__/convert_from_ckpt.cpython-310.pyc +0 -0

- animatediff/utils/__pycache__/convert_lora_safetensor_to_diffusers.cpython-310.pyc +0 -0

- animatediff/utils/__pycache__/util.cpython-310.pyc +0 -0

- data/.cache/model_base_caption_capfilt_large.pth +3 -0

- data/EveryDream/'1.3.5' +6 -0

- data/EveryDream/.cache/model_base_caption_capfilt_large.pth +3 -0

- data/EveryDream/.github/FUNDING.yml +5 -0

- data/EveryDream/.gitignore +139 -0

- data/EveryDream/AutoCaption.ipynb +1 -0

- data/EveryDream/EveryDream_Tools.ipynb +387 -0

- data/EveryDream/LICENSE +661 -0

- data/EveryDream/README.MD +63 -0

- data/EveryDream/activate_venv.bat +1 -0

- data/EveryDream/clip_rename.bat +6 -0

- data/EveryDream/create_venv.bat +15 -0

- data/EveryDream/deactivate_venv.bat +1 -0

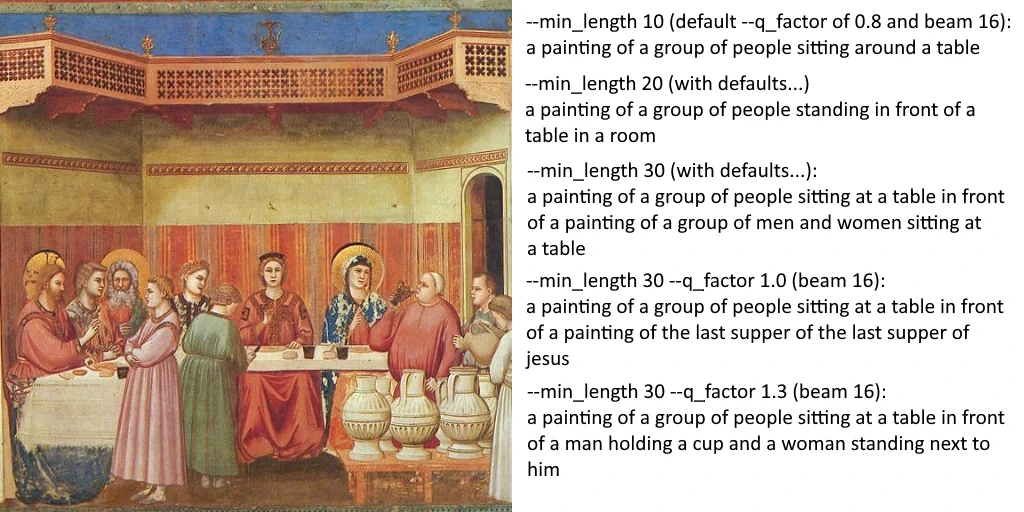

- data/EveryDream/demo/beam_min_vs_q.webp +0 -0

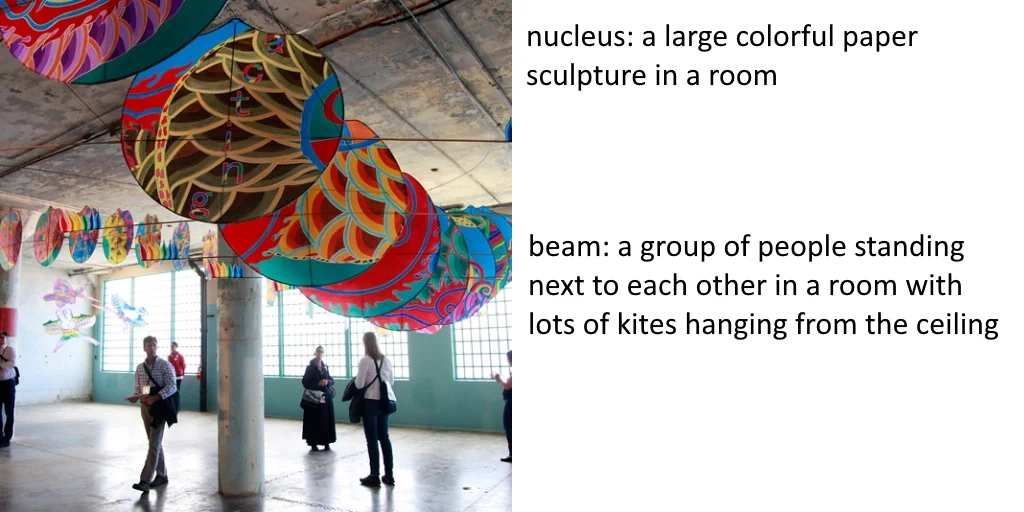

- data/EveryDream/demo/beam_vs_nucleus.webp +0 -0

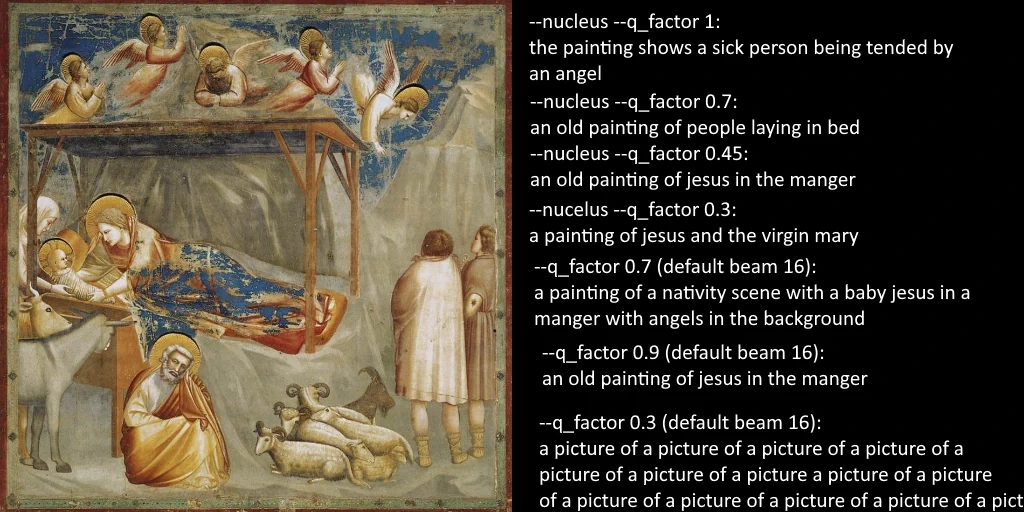

- data/EveryDream/demo/beam_vs_nucleus_2.webp +0 -0

- data/EveryDream/demo/demo01.png +0 -0

- data/EveryDream/demo/demo02.png +0 -0

- data/EveryDream/demo/demo03.png +0 -0

- data/EveryDream/demo/output_zip.png +0 -0

- data/EveryDream/demo/upload_images_caption.png +0 -0

- data/EveryDream/doc/AUTO_CAPTION.md +115 -0

- data/EveryDream/doc/CAPTION_GUI.md +20 -0

- data/EveryDream/doc/COMPRESS_IMG.md +69 -0

- data/EveryDream/doc/FILE_RENAME.md +36 -0

- data/EveryDream/doc/LAION_SCRAPE.md +51 -0

- data/EveryDream/doc/VIDEO_EXTRACTOR.md +27 -0

- data/EveryDream/environment.yaml +9 -0

- data/EveryDream/input/.gitkeep +0 -0

- data/EveryDream/laion/Put LAION parquets here.txt +2 -0

- data/EveryDream/output/.gitkeep +0 -0

- data/EveryDream/requirements.txt +10 -0

- data/EveryDream/scripts/BLIP/BLIP.gif +3 -0

- data/EveryDream/scripts/BLIP/CODEOWNERS +2 -0

- data/EveryDream/scripts/BLIP/CODE_OF_CONDUCT.md +105 -0

- data/EveryDream/scripts/BLIP/LICENSE.txt +12 -0

- data/EveryDream/scripts/BLIP/README.md +116 -0

- data/EveryDream/scripts/BLIP/SECURITY.md +7 -0

.gitattributes

CHANGED

|

@@ -217,3 +217,8 @@ data/raw/3dDS9/2.png filter=lfs diff=lfs merge=lfs -text

|

|

| 217 |

data/raw/3dDS9/3.png filter=lfs diff=lfs merge=lfs -text

|

| 218 |

data/raw/3dDS9/4.png filter=lfs diff=lfs merge=lfs -text

|

| 219 |

triton-2.0.0-cp310-cp310-win_amd64.whl filter=lfs diff=lfs merge=lfs -text

|

| 220 |

+

data/EveryDream/scripts/BLIP/BLIP.gif filter=lfs diff=lfs merge=lfs -text

|

| 221 |

+

models/Motion_Module/2fbkcvmxtmp filter=lfs diff=lfs merge=lfs -text

|

| 222 |

+

models/Motion_Module/gqdawx6utmp filter=lfs diff=lfs merge=lfs -text

|

| 223 |

+

models/Motion_Module/impovhmrtmp filter=lfs diff=lfs merge=lfs -text

|

| 224 |

+

models/Motion_Module/oz5u8b9jtmp filter=lfs diff=lfs merge=lfs -text

|

__pycache__/train.cpython-310.pyc

ADDED

|

Binary file (12.2 kB). View file

|

|

|

animatediff/data/__pycache__/dataset.cpython-310.pyc

CHANGED

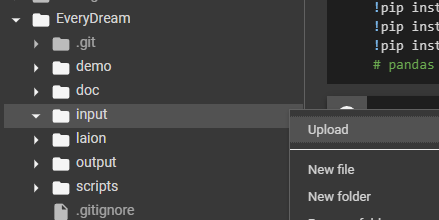

|

Binary files a/animatediff/data/__pycache__/dataset.cpython-310.pyc and b/animatediff/data/__pycache__/dataset.cpython-310.pyc differ

|

|

|

animatediff/models/__pycache__/attention.cpython-310.pyc

CHANGED

|

Binary files a/animatediff/models/__pycache__/attention.cpython-310.pyc and b/animatediff/models/__pycache__/attention.cpython-310.pyc differ

|

|

|

animatediff/models/__pycache__/motion_module.cpython-310.pyc

CHANGED

|

Binary files a/animatediff/models/__pycache__/motion_module.cpython-310.pyc and b/animatediff/models/__pycache__/motion_module.cpython-310.pyc differ

|

|

|

animatediff/models/__pycache__/resnet.cpython-310.pyc

CHANGED

|

Binary files a/animatediff/models/__pycache__/resnet.cpython-310.pyc and b/animatediff/models/__pycache__/resnet.cpython-310.pyc differ

|

|

|

animatediff/models/__pycache__/unet.cpython-310.pyc

CHANGED

|

Binary files a/animatediff/models/__pycache__/unet.cpython-310.pyc and b/animatediff/models/__pycache__/unet.cpython-310.pyc differ

|

|

|

animatediff/models/__pycache__/unet_blocks.cpython-310.pyc

CHANGED

|

Binary files a/animatediff/models/__pycache__/unet_blocks.cpython-310.pyc and b/animatediff/models/__pycache__/unet_blocks.cpython-310.pyc differ

|

|

|

animatediff/pipelines/__pycache__/pipeline_animation.cpython-310.pyc

CHANGED

|

Binary files a/animatediff/pipelines/__pycache__/pipeline_animation.cpython-310.pyc and b/animatediff/pipelines/__pycache__/pipeline_animation.cpython-310.pyc differ

|

|

|

animatediff/utils/__pycache__/convert_from_ckpt.cpython-310.pyc

CHANGED

|

Binary files a/animatediff/utils/__pycache__/convert_from_ckpt.cpython-310.pyc and b/animatediff/utils/__pycache__/convert_from_ckpt.cpython-310.pyc differ

|

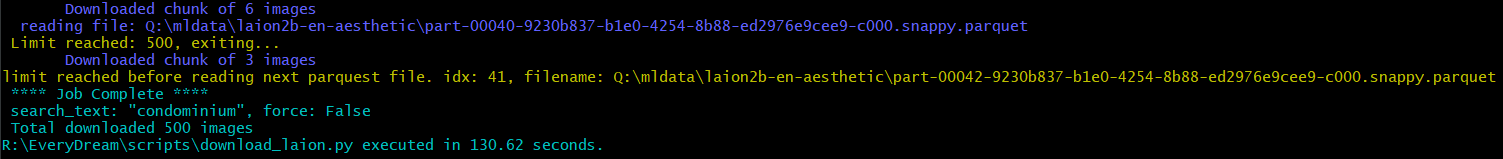

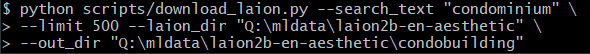

|

|

animatediff/utils/__pycache__/convert_lora_safetensor_to_diffusers.cpython-310.pyc

CHANGED

|

Binary files a/animatediff/utils/__pycache__/convert_lora_safetensor_to_diffusers.cpython-310.pyc and b/animatediff/utils/__pycache__/convert_lora_safetensor_to_diffusers.cpython-310.pyc differ

|

|

|

animatediff/utils/__pycache__/util.cpython-310.pyc

CHANGED

|

Binary files a/animatediff/utils/__pycache__/util.cpython-310.pyc and b/animatediff/utils/__pycache__/util.cpython-310.pyc differ

|

|

|

data/.cache/model_base_caption_capfilt_large.pth

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:96ac8749bd0a568c274ebe302b3a3748ab9be614c737f3d8c529697139174086

|

| 3 |

+

size 896081425

|
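For context, the three added lines above are a Git LFS pointer: the repository stores this small text stub, and the 896,081,425-byte checkpoint itself lives in LFS storage. The following is a minimal sketch of reading such a pointer, assuming only the `key value` layout visible in the diff. The function name `parse_lfs_pointer` is hypothetical and not part of this commit or of Git LFS.

```python
def parse_lfs_pointer(text: str) -> dict[str, str]:
    """Parse the 'key value' lines of a Git LFS pointer into a dict."""
    fields = {}
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        # Each pointer line is a key, one space, then the value.
        key, _, value = line.partition(" ")
        fields[key] = value
    return fields


# Pointer text copied from the diff above.
pointer = """\
version https://git-lfs.github.com/spec/v1
oid sha256:96ac8749bd0a568c274ebe302b3a3748ab9be614c737f3d8c529697139174086
size 896081425
"""

info = parse_lfs_pointer(pointer)
algorithm, _, digest = info["oid"].partition(":")
size_bytes = int(info["size"])
print(algorithm, digest[:12], f"{size_bytes / 1e6:.1f} MB")
```

The same pointer (identical `oid` and `size`) is added again under `data/EveryDream/.cache/`, so both paths refer to one stored object.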

data/EveryDream/'1.3.5'

ADDED

|

@@ -0,0 +1,6 @@

|

| 1 |

+

Requirement already satisfied: pandas in c:\users\texas\anaconda3\envs\animatediff\lib\site-packages (2.1.3)

|

| 2 |

+

Requirement already satisfied: numpy<2,>=1.22.4 in c:\users\texas\anaconda3\envs\animatediff\lib\site-packages (from pandas) (1.26.0)

|

| 3 |

+

Requirement already satisfied: python-dateutil>=2.8.2 in c:\users\texas\anaconda3\envs\animatediff\lib\site-packages (from pandas) (2.8.2)

|

| 4 |

+

Requirement already satisfied: pytz>=2020.1 in c:\users\texas\anaconda3\envs\animatediff\lib\site-packages (from pandas) (2023.3.post1)

|

| 5 |

+

Requirement already satisfied: tzdata>=2022.1 in c:\users\texas\anaconda3\envs\animatediff\lib\site-packages (from pandas) (2023.3)

|

| 6 |

+

Requirement already satisfied: six>=1.5 in c:\users\texas\anaconda3\envs\animatediff\lib\site-packages (from python-dateutil>=2.8.2->pandas) (1.16.0)

|
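A note on the `'1.3.5'` file above: its contents are pip console output, which suggests it was created by accident. This is an inference and the commit does not state it. A likely cause is an unquoted requirement such as `pip install pandas>='1.3.5'`, where the shell treats `>` as output redirection. The sketch below uses Python's standard `shlex` module to show how a POSIX-style shell would split that command, and how quoting the whole requirement keeps it as one argument. The helper name `shell_tokens` is made up for illustration. The exact redirect filename depends on the shell, and the Windows paths in the output suggest cmd.exe, which tokenizes differently from POSIX shells.

```python
import shlex


def shell_tokens(command: str) -> list[str]:
    """Split a command line roughly the way a POSIX shell would."""
    # punctuation_chars=True makes redirection operators such as '>' separate tokens.
    lexer = shlex.shlex(command, posix=True, punctuation_chars=True)
    return list(lexer)


unquoted = "pip install pandas>='1.3.5'"
quoted = "pip install 'pandas>=1.3.5'"

# Unquoted: '>' is split off, so the version bound is no longer part of the requirement.
print(shell_tokens(unquoted))
# Quoted: the whole requirement survives as a single argument.
print(shell_tokens(quoted))
```

Quoting the full specifier (double quotes also work, and are the safer choice on Windows) passes `pandas>=1.3.5` to pip intact and avoids creating a stray file.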

data/EveryDream/.cache/model_base_caption_capfilt_large.pth

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:96ac8749bd0a568c274ebe302b3a3748ab9be614c737f3d8c529697139174086

|

| 3 |

+

size 896081425

|

data/EveryDream/.github/FUNDING.yml

ADDED

|

@@ -0,0 +1,5 @@

|

| 1 |

+

# These are supported funding model platforms

|

| 2 |

+

|

| 3 |

+

github: victorchall

|

| 4 |

+

patreon: everydream

|

| 5 |

+

ko_fi: everydream

|

data/EveryDream/.gitignore

ADDED

|

@@ -0,0 +1,139 @@

|

| 1 |

+

# EveryDream

|

| 2 |

+

/everydream-venv/**

|

| 3 |

+

/laion/*.parquet

|

| 4 |

+

/output/**

|

| 5 |

+

/.cache/**

|

| 6 |

+

/.venv/**

|

| 7 |

+

/input/**

|

| 8 |

+

/scripts/BLIP

|

| 9 |

+

/.vscode/**

|

| 10 |

+

|

| 11 |

+

# Byte-compiled / optimized / DLL files

|

| 12 |

+

__pycache__/

|

| 13 |

+

*.py[cod]

|

| 14 |

+

*$py.class

|

| 15 |

+

|

| 16 |

+

# C extensions

|

| 17 |

+

*.so

|

| 18 |

+

|

| 19 |

+

# Distribution / packaging

|

| 20 |

+

.Python

|

| 21 |

+

build/

|

| 22 |

+

develop-eggs/

|

| 23 |

+

dist/

|

| 24 |

+

downloads/

|

| 25 |

+

eggs/

|

| 26 |

+

.eggs/

|

| 27 |

+

lib/

|

| 28 |

+

lib64/

|

| 29 |

+

parts/

|

| 30 |

+

sdist/

|

| 31 |

+

var/

|

| 32 |

+

wheels/

|

| 33 |

+

pip-wheel-metadata/

|

| 34 |

+

share/python-wheels/

|

| 35 |

+

*.egg-info/

|

| 36 |

+

.installed.cfg

|

| 37 |

+

*.egg

|

| 38 |

+

MANIFEST

|

| 39 |

+

|

| 40 |

+

# PyInstaller

|

| 41 |

+

# Usually these files are written by a python script from a template

|

| 42 |

+

# before PyInstaller builds the exe, so as to inject date/other infos into it.

|

| 43 |

+

*.manifest

|

| 44 |

+

*.spec

|

| 45 |

+

|

| 46 |

+

# Installer logs

|

| 47 |

+

pip-log.txt

|

| 48 |

+

pip-delete-this-directory.txt

|

| 49 |

+

|

| 50 |

+

# Unit test / coverage reports

|

| 51 |

+

htmlcov/

|

| 52 |

+

.tox/

|

| 53 |

+

.nox/

|

| 54 |

+

.coverage

|

| 55 |

+

.coverage.*

|

| 56 |

+

.cache

|

| 57 |

+

nosetests.xml

|

| 58 |

+

coverage.xml

|

| 59 |

+

*.cover

|

| 60 |

+

*.py,cover

|

| 61 |

+

.hypothesis/

|

| 62 |

+

.pytest_cache/

|

| 63 |

+

|

| 64 |

+

# Translations

|

| 65 |

+

*.mo

|

| 66 |

+

*.pot

|

| 67 |

+

|

| 68 |

+

# Django stuff:

|

| 69 |

+

*.log

|

| 70 |

+

local_settings.py

|

| 71 |

+

db.sqlite3

|

| 72 |

+

db.sqlite3-journal

|

| 73 |

+

|

| 74 |

+

# Flask stuff:

|

| 75 |

+

instance/

|

| 76 |

+

.webassets-cache

|

| 77 |

+

|

| 78 |

+

# Scrapy stuff:

|

| 79 |

+

.scrapy

|

| 80 |

+

|

| 81 |

+

# Sphinx documentation

|

| 82 |

+

docs/_build/

|

| 83 |

+

|

| 84 |

+

# PyBuilder

|

| 85 |

+

target/

|

| 86 |

+

|

| 87 |

+

# Jupyter Notebook

|

| 88 |

+

.ipynb_checkpoints

|

| 89 |

+

|

| 90 |

+

# IPython

|

| 91 |

+

profile_default/

|

| 92 |

+

ipython_config.py

|

| 93 |

+

|

| 94 |

+

# pyenv

|

| 95 |

+

.python-version

|

| 96 |

+

|

| 97 |

+

# pipenv

|

| 98 |

+

# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

|

| 99 |

+

# However, in case of collaboration, if having platform-specific dependencies or dependencies

|

| 100 |

+

# having no cross-platform support, pipenv may install dependencies that don't work, or not

|

| 101 |

+

# install all needed dependencies.

|

| 102 |

+

#Pipfile.lock

|

| 103 |

+

|

| 104 |

+

# PEP 582; used by e.g. github.com/David-OConnor/pyflow

|

| 105 |

+

__pypackages__/

|

| 106 |

+

|

| 107 |

+

# Celery stuff

|

| 108 |

+

celerybeat-schedule

|

| 109 |

+

celerybeat.pid

|

| 110 |

+

|

| 111 |

+

# SageMath parsed files

|

| 112 |

+

*.sage.py

|

| 113 |

+

|

| 114 |

+

# Environments

|

| 115 |

+

.env

|

| 116 |

+

.venv

|

| 117 |

+

env/

|

| 118 |

+

venv/

|

| 119 |

+

ENV/

|

| 120 |

+

env.bak/

|

| 121 |

+

venv.bak/

|

| 122 |

+

|

| 123 |

+

# Spyder project settings

|

| 124 |

+

.spyderproject

|

| 125 |

+

.spyproject

|

| 126 |

+

|

| 127 |

+

# Rope project settings

|

| 128 |

+

.ropeproject

|

| 129 |

+

|

| 130 |

+

# mkdocs documentation

|

| 131 |

+

/site

|

| 132 |

+

|

| 133 |

+

# mypy

|

| 134 |

+

.mypy_cache/

|

| 135 |

+

.dmypy.json

|

| 136 |

+

dmypy.json

|

| 137 |

+

|

| 138 |

+

# Pyre type checker

|

| 139 |

+

.pyre/

|

data/EveryDream/AutoCaption.ipynb

ADDED

|

@@ -0,0 +1 @@

|

| 1 |

+

{"cells":[{"cell_type":"markdown","metadata":{},"source":["# Please read the documentation here before you start.\n","\n","I suggest reading this doc before you connect to your runtime to avoid using credits or being charged while you figure it out.\n","\n","[Auto Captioning Readme](doc/AUTO_CAPTION.md)\n","\n","This notebook requires an Nvidia GPU instance. Any will do, you don't need anything power. As low as 4GB should be fine.\n","\n","Only colab has automatic file transfers at this time. If you are using another platform, you will need to manually download your output files."]},{"cell_type":"code","execution_count":null,"metadata":{"colab":{"base_uri":"https://localhost:8080/"},"executionInfo":{"elapsed":929,"status":"ok","timestamp":1667184580032,"user":{"displayName":"Victor Hall","userId":"00029068894644207946"},"user_tz":240},"id":"lWGx2LuU8Q_I","outputId":"d0eb4d03-f16d-460b-981d-d5f88447e85e"},"outputs":[],"source":["#download repo\n","!git clone https://github.com/victorchall/EveryDream.git\n","# Set working directory\n","%cd EveryDream"]},{"cell_type":"code","execution_count":null,"metadata":{"colab":{"base_uri":"https://localhost:8080/"},"executionInfo":{"elapsed":4944,"status":"ok","timestamp":1667184754992,"user":{"displayName":"Victor Hall","userId":"00029068894644207946"},"user_tz":240},"id":"RJxfSai-8pkD","outputId":"0ac1b805-62a0-48aa-e0da-ee19503bb3f1"},"outputs":[],"source":["# install requirements\n","!pip install torch=='1.12.1+cu113' 'torchvision==0.13.1+cu113' --extra-index-url https://download.pytorch.org/whl/cu113\n","!pip install pandas>='1.3.5'\n","!git clone https://github.com/salesforce/BLIP scripts/BLIP\n","!pip install timm\n","!pip install fairscale=='0.4.4'\n","!pip install transformers=='4.19.2'\n","!pip install timm\n","!pip install aiofiles"]},{"cell_type":"markdown","metadata":{"id":"sbeUIVXJ-EVf"},"source":["# Upload your input images into the EveryDream/input 
folder\n","\n",""]},{"cell_type":"markdown","metadata":{},"source":["## Please read the documentation for information on the parameters\n","\n","[Auto Captioning](doc/AUTO_CAPTION.md)\n","\n","*You cannot have commented lines between uncommented lines. If you uncomment a line below, move it above any other commented lines.*\n","\n","*!python must remain the first line.*\n","\n","Default params should work fairly well."]},{"cell_type":"code","execution_count":null,"metadata":{"colab":{"base_uri":"https://localhost:8080/"},"executionInfo":{"elapsed":18221,"status":"ok","timestamp":1667185808005,"user":{"displayName":"Victor Hall","userId":"00029068894644207946"},"user_tz":240},"id":"4TAICahl-RPn","outputId":"da7fa1a8-0855-403a-c295-4da31658d1f6"},"outputs":[],"source":["!python scripts/auto_caption.py \\\n","--img_dir input \\\n","--out_dir output \\\n","#--format mrwho \\\n","#--min_length 34 \\\n","#--q_factor 1.3 \\\n","#--nucleus \\"]},{"cell_type":"markdown","metadata":{"id":"HBrWnu1C_lN9"},"source":["## Download your captioned images from EveryDream/output\n","\n","If you're on a colab you can use the cell below to push your output to your Gdrive."]},{"cell_type":"code","execution_count":null,"metadata":{},"outputs":[],"source":["from google.colab import drive\n","drive.mount('/content/drive')\n","\n","!mkdir /content/drive/MyDrive/AutoCaption\n","!cp output/*.* /content/drive/MyDrive/AutoCaption"]},{"cell_type":"markdown","metadata":{},"source":["## If not on colab/gdrive, the following will zip up your files for extraction\n","\n","You'll still need to use your runtime's own download feature to download the zip.\n","\n",""]},{"cell_type":"code","execution_count":null,"metadata":{},"outputs":[],"source":["!pip install patool\n","\n","import patoolib\n","\n","!mkdir output/zip\n","\n","!zip -r output/zip/output.zip 
output"]}],"metadata":{"colab":{"authorship_tag":"ABX9TyN9ZSr0RyOQKdfeVsl2uOiE","collapsed_sections":[],"provenance":[{"file_id":"16QrivRfoDFvE7fAa7eLeVlxj78Q573E0","timestamp":1667185879409}]},"kernelspec":{"display_name":"Python 3.10.5 ('.venv': venv)","language":"python","name":"python3"},"language_info":{"name":"python","version":"3.10.5"},"vscode":{"interpreter":{"hash":"faf4a6abb601e3a9195ce3e9620411ceec233a951446de834cdf28542d2d93b4"}}},"nbformat":4,"nbformat_minor":0}

|

data/EveryDream/EveryDream_Tools.ipynb

ADDED

|

@@ -0,0 +1,387 @@

|

| 1 |

+

{

|

| 2 |

+

"cells": [

|

| 3 |

+

{

|

| 4 |

+

"cell_type": "markdown",

|

| 5 |

+

"metadata": {

|

| 6 |

+

"id": "view-in-github",

|

| 7 |

+

"colab_type": "text"

|

| 8 |

+

},

|

| 9 |

+

"source": [

|

| 10 |

+

"<a href=\"https://colab.research.google.com/github/victorchall/EveryDream/blob/main/EveryDream_Tools.ipynb\" target=\"_parent\"><img src=\"https://colab.research.google.com/assets/colab-badge.svg\" alt=\"Open In Colab\"/></a>"

|

| 11 |

+

]

|

| 12 |

+

},

|

| 13 |

+

{

|

| 14 |

+

"cell_type": "code",

|

| 15 |

+

"source": [

|

| 16 |

+

"#@title #Connect to Google Drive\n",

|

| 17 |

+

"from google.colab import drive\n",

|

| 18 |

+

"drive.mount('/content/drive')\n"

|

| 19 |

+

],

|

| 20 |

+

"metadata": {

|

| 21 |

+

"id": "Z_ZHfnQ52dg9"

|

| 22 |

+

},

|

| 23 |

+

"execution_count": null,

|

| 24 |

+

"outputs": []

|

| 25 |

+

},

|

| 26 |

+

{

|

| 27 |

+

"cell_type": "markdown",

|

| 28 |

+

"metadata": {

|

| 29 |

+

"id": "uJfwih4wAVgw"

|

| 30 |

+

},

|

| 31 |

+

"source": [

|

| 32 |

+

"# Please read the documentation here before you start.\n",

|

| 33 |

+

"\n",

|

| 34 |

+

"I suggest reading this doc before you connect to your runtime to avoid using credits or being charged while you figure it out.\n",

|

| 35 |

+

"\n",

|

| 36 |

+

"[Auto Captioning Readme](doc/AUTO_CAPTION.md)\n",

|

| 37 |

+

"\n",

|

| 38 |

+

"This notebook requires an Nvidia GPU instance. Any will do, you don't need anything power. As low as 4GB should be fine.\n",

|

| 39 |

+

"\n",

|

| 40 |

+

"Only colab has automatic file transfers at this time. If you are using another platform, you will need to manually download your output files."

|

| 41 |

+

]

|

| 42 |

+

},

|

| 43 |

+

{

|

| 44 |

+

"cell_type": "code",

|

| 45 |

+

"execution_count": null,

|

| 46 |

+

"metadata": {

|

| 47 |

+

"id": "lWGx2LuU8Q_I"

|

| 48 |

+

},

|

| 49 |

+

"outputs": [],

|

| 50 |

+

"source": [

|

| 51 |

+

"#download repo\n",

|

| 52 |

+

"!git clone https://github.com/victorchall/EveryDream.git\n",

|

| 53 |

+

"# Set working directory\n",

|

| 54 |

+

"%cd EveryDream"

|

| 55 |

+

]

|

| 56 |

+

},

|

| 57 |

+

{

|

| 58 |

+

"cell_type": "code",

|

| 59 |

+

"execution_count": null,

|

| 60 |

+

"metadata": {

|

| 61 |

+

"id": "RJxfSai-8pkD"

|

| 62 |

+

},

|

| 63 |

+

"outputs": [],

|

| 64 |

+

"source": [

|

| 65 |

+

"# install requirements\n",

|

| 66 |

+

"!pip install torch=='1.12.1+cu113' 'torchvision==0.13.1+cu113' --extra-index-url https://download.pytorch.org/whl/cu113\n",

|

| 67 |

+

"!pip install pandas>='1.3.5'\n",

|

| 68 |

+

"!git clone https://github.com/salesforce/BLIP scripts/BLIP\n",

|

| 69 |

+

"!pip install timm\n",

|

| 70 |

+

"!pip install fairscale=='0.4.4'\n",

|

| 71 |

+

"!pip install transformers=='4.19.2'\n",

|

| 72 |

+

"!pip install timm\n",

|

| 73 |

+

"!pip install aiofiles\n",

|

| 74 |

+

"!pip install colorama"

|

| 75 |

+

]

|

| 76 |

+

},

|

| 77 |

+

{

|

| 78 |

+

"cell_type": "markdown",

|

| 79 |

+

"source": [

|

| 80 |

+

"#Extract Frames from video\n",

|

| 81 |

+

"\n",

|

| 82 |

+

"Here we will use the folder input_vid and upload in the same way we did our images"

|

| 83 |

+

],

|

| 84 |

+

"metadata": {

|

| 85 |

+

"id": "huQSI8Y-Bboz"

|

| 86 |

+

}

|

| 87 |

+

},

|

| 88 |

+

{

|

| 89 |

+

"cell_type": "code",

|

| 90 |

+

"source": [

|

| 91 |

+

"!python /scripts/extract_video_frames.py \\\n",

|

| 92 |

+

"--vid_dir input_vid \\\n",

|

| 93 |

+

"--out_dir output/vid \\\n",

|

| 94 |

+

"--format png \\\n",

|

| 95 |

+

"--interval 10 "

|

| 96 |

+

],

|

| 97 |

+

"metadata": {

|

| 98 |

+

"id": "RDuBL4k8Avz-"

|

| 99 |

+

},

|

| 100 |

+

"execution_count": null,

|

| 101 |

+

"outputs": []

|

| 102 |

+

},

|

| 103 |

+

{

|

| 104 |

+

"cell_type": "markdown",

|

| 105 |

+

"source": [

|

| 106 |

+

"Move the extracted frames to the input directory for captions"

|

| 107 |

+

],

|

| 108 |

+

"metadata": {

|

| 109 |

+

"id": "iqcUzcRuCTLR"

|

| 110 |

+

}

|

| 111 |

+

},

|

| 112 |

+

{

|

| 113 |

+

"cell_type": "code",

|

| 114 |

+

"source": [

|

| 115 |

+

"!cp -r output/vid input"

|

| 116 |

+

],

|

| 117 |

+

"metadata": {

|

| 118 |

+

"id": "Uv8wAHSQAvrm"

|

| 119 |

+

},

|

| 120 |

+

"execution_count": null,

|

| 121 |

+

"outputs": []

|

| 122 |

+

},

|

| 123 |

+

{

|

| 124 |

+

"cell_type": "markdown",

|

| 125 |

+

"metadata": {

|

| 126 |

+

"id": "sbeUIVXJ-EVf"

|

| 127 |

+

},

|

| 128 |

+

"source": [

|

| 129 |

+

"# Upload your input images into the EveryDream/input folder\n",

|

| 130 |

+

"\n",

|

| 131 |

+

""

|

| 132 |

+

]

|

| 133 |

+

},

|

| 134 |

+

{

|

| 135 |

+

"cell_type": "markdown",

|

| 136 |

+

"metadata": {

|

| 137 |

+

"id": "bscWH13SAVgz"

|

| 138 |

+

},

|

| 139 |

+

"source": [

|

| 140 |

+

"## Please read the documentation for information on the parameters\n",

|

| 141 |

+

"\n",

|

| 142 |

+

"[Auto Captioning](doc/AUTO_CAPTION.md)\n",

|

| 143 |

+

"\n",

|

| 144 |

+

"*You cannot have commented lines between uncommented lines. If you uncomment a line below, move it above any other commented lines.*\n",

|

| 145 |

+

"\n",

|

| 146 |

+

"*!python must remain the first line.*\n",

|

| 147 |

+

"\n",

|

| 148 |

+

"Default params should work fairly well."

|

| 149 |

+

]

|

| 150 |

+

},

|

| 151 |

+

{

|

| 152 |

+

"cell_type": "code",

|

| 153 |

+

"execution_count": null,

|

| 154 |

+

"metadata": {

|

| 155 |

+

"id": "4TAICahl-RPn"

|

| 156 |

+

},

|

| 157 |

+

"outputs": [],

|

| 158 |

+

"source": [

|

| 159 |

+

"!python scripts/auto_caption.py \\\n",

|

| 160 |

+

"--img_dir input \\\n",

|

| 161 |

+

"--out_dir output \\\n",

|

| 162 |

+

"#--format mrwho \\\n",

|

| 163 |

+

"#--min_length 34 \\\n",

|

| 164 |

+

"#--q_factor 1.3 \\\n",

|

| 165 |

+

"#--nucleus \\\n",

|

| 166 |

+

"\n",

|

| 167 |

+

"## mutiple files can be targeted in succession\n",

|

| 168 |

+

"\n",

|

| 169 |

+

"#!python scripts/auto_caption.py \\\n",

|

| 170 |

+

"#--img_dir input/subfolder \\\n",

|

| 171 |

+

"#--out_dir output/subfolder \\\n",

|

| 172 |

+

"#--format mrwho \\\n",

|

| 173 |

+

"#--min_length 34 \\\n",

|

| 174 |

+

"#--q_factor 1.3 \\\n",

|

| 175 |

+

"#--nucleus \\"

|

| 176 |

+

]

|

| 177 |

+

},

|

| 178 |

+

{

|

| 179 |

+

"cell_type": "markdown",

|

| 180 |

+

"source": [

|

| 181 |

+

"# Laion Downloader\n",

|

| 182 |

+

"\n",

|

| 183 |

+

"* --laion_dir: directory with laion parquet files, default is ./laion\n",

|

| 184 |

+

"\n",

|

| 185 |

+

"* --search_text: csv of words with AND logic, ex \\\"photo,man,dog\\\"\n",

|

| 186 |

+

"\n",

|

| 187 |

+

"* --out_dir: directory to download files to, ive defaulted this to inputs so they can be captioned \n",

|

| 188 |

+

"\n",

|

| 189 |

+

"* --log_dir: directory for logs, if ommitted will not log, logs may be large!\n",

|

| 190 |

+

"\n",

|

| 191 |

+

"* --column:column to search for matches, defaults is 'TEXT', but you could use 'URL' if you wanted\",\n",

|

| 192 |

+

"\n",

|

| 193 |

+

"* --limit: max number of matching images to download, warning: may be slightly imprecise due to concurrency and http errors, defaults is 100\n",

|

| 194 |

+

"\n",

|

| 195 |

+

"* --min_hw: min height AND width of image to download, default is 512\n",

|

| 196 |

+

" \n",

|

| 197 |

+

"* --force: forces a full download of all images, even if no search is provided, USE CAUTION!\n",

|

| 198 |

+

"\n",

|

| 199 |

+

"* --parquet_skip: skips the first n parquet files on disk, useful to resume\n",

|

| 200 |

+

" \n",

|

| 201 |

+

"* --verbose: additional logging of URL and TEXT \n",

|

| 202 |

+

" \n",

|

| 203 |

+

"* --test: skips downloading, for checking filters, use with \"--verbose\"\n"

|

| 204 |

+

],

|

| 205 |

+

"metadata": {

|

| 206 |

+

"id": "wY2f2LkPGSVa"

|

| 207 |

+

}

|

| 208 |

+

},

|

| 209 |

+

{

|

| 210 |

+

"cell_type": "code",

|

| 211 |

+

"source": [

|

| 212 |

+

"!python scripts/download_laion.py \\\n",

|

| 213 |

+

"--laion_dir ./laion \\\n",

|

| 214 |

+

"--search_text \"photo,man,dog\" \\\n",

|

| 215 |

+

"#--out_dir input \\\n",

|

| 216 |

+

"#--log_dir logs \\\n",

|

| 217 |

+

"#--column TEXT \\\n",

|

| 218 |

+

"#--limit 100 \\\n",

|

| 219 |

+

"#--min_hw 512 \\\n",

|

| 220 |

+

"#--force False \\\n",

|

| 221 |

+

"#--parquet_skip 0 \\\n",

|

| 222 |

+

"#--Verbose False \\\n",

|

| 223 |

+

"#--test not \\\n"

|

| 224 |

+

],

|

| 225 |

+

"metadata": {

|

| 226 |

+

"id": "cxw60TTmEy2C"

|

| 227 |

+

},

|

| 228 |

+

"execution_count": null,

|

| 229 |

+

"outputs": []

|

| 230 |

+

},

|

| 231 |

+

{

|

| 232 |

+

"cell_type": "markdown",

|

| 233 |

+

"source": [

|

| 234 |

+

"Here we can take our now captioned images and replace generic terms with our subjects\n",

|

| 235 |

+

"\n",

|

| 236 |

+

"* --find: will search for a word in this case man\n",

|

| 237 |

+

"\n",

|

| 238 |

+

"* --replace: will replace our found word with in this case bob smith\n",

|

| 239 |

+

"\n",

|

| 240 |

+

"* --append_only: this will allow us to add a tag at he end "

|

| 241 |

+

],

|

| 242 |

+

"metadata": {

|

| 243 |

+

"id": "EBdLelNpDjYc"

|

| 244 |

+

}

|

| 245 |

+

},

|

| 246 |

+

{

|

| 247 |

+

"cell_type": "code",

|

| 248 |

+

"source": [

|

| 249 |

+

"!python scripts/filename_replace.py \\\n",

|

| 250 |

+

"--img_dir output \\\n",

|

| 251 |

+

"--find \"man\" \\\n",

|

| 252 |

+

"--replace \"bob smith\""

|

| 253 |

+

],

|

| 254 |

+

"metadata": {

|

| 255 |

+

"id": "6Y1md3OHAvhw"

|

| 256 |

+

},

|

| 257 |

+

"execution_count": null,

|

| 258 |

+

"outputs": []

|

| 259 |

+

},

|

| 260 |

+

{

|

| 261 |

+

"cell_type": "markdown",

|

| 262 |

+

"source": [

|

| 263 |

+

"Now we can chose to create text files based on our file names, this is usefull for images with very long discriptions or tag list, windows has a limit of 256 characters, and files will not transfer correctly to a windows program if they are longer, moving these files in a zip is fine however and causes no issues\n"

|

| 264 |

+

],

|

| 265 |

+

"metadata": {

|

| 266 |

+

"id": "W0MspWmXJQuc"

|

| 267 |

+

}

|

| 268 |

+

},

|

| 269 |

+

{

|

| 270 |

+

"cell_type": "code",

|

| 271 |

+

"source": [

|

| 272 |

+

"!python scripts/createtxtfromfilename.py"

|

| 273 |

+

],

|

| 274 |

+

"metadata": {

|

| 275 |

+

"id": "BpvenvyQJr9b"

|

| 276 |

+

},

|

| 277 |

+

"execution_count": null,

|

| 278 |

+

"outputs": []

|

| 279 |

+

},

|

| 280 |

+

{

|

| 281 |

+

"cell_type": "markdown",

|

| 282 |

+

"source": [

|

| 283 |

+

"Compress our images "

|

| 284 |

+

],

|

| 285 |

+

"metadata": {

|

| 286 |

+

"id": "boVkDsiWJ_-P"

|

| 287 |

+

}

|

| 288 |

+

},

|

| 289 |

+

{

|

| 290 |

+

"cell_type": "code",

|

| 291 |

+

"source": [

|

| 292 |

+

"!python scripts/compress_img.py \\\n",

|

| 293 |

+

"--img_dir output \\\n",

|

| 294 |

+

"--out_dir output/compressed_images \\\n",

|

| 295 |

+

"--max_mp 1.5 \n",

|

| 296 |

+

"#--overwrite False \\\n",

|

| 297 |

+

"#--Quality 95 \\\n",

|

| 298 |

+

"#--noresize False \\\n",

|

| 299 |

+

"#--delete \\"

|

| 300 |

+

],

|

| 301 |

+

"metadata": {

|

| 302 |

+

"id": "F6QYfylhKAII"

|

| 303 |

+

},

|

| 304 |

+

"execution_count": null,

|

| 305 |

+

"outputs": []

|

| 306 |

+

},

|

| 307 |

+

{

|

| 308 |

+

"cell_type": "markdown",

|

| 309 |

+

"metadata": {

|

| 310 |

+

"id": "HBrWnu1C_lN9"

|

| 311 |

+

},

|

| 312 |

+

"source": [

|

| 313 |

+

"## Download your DataSet from EveryDream/output\n",

|

| 314 |

+

"\n",

|

| 315 |

+

"If you're on a colab you can use the cell below to push your output to your Gdrive."

|

| 316 |

+

]

|

| 317 |

+

},

|

| 318 |

+

{

|

| 319 |

+

"cell_type": "code",

|

| 320 |

+

"execution_count": null,

|

| 321 |

+

"metadata": {

|

| 322 |

+

"id": "ldW2sDLcAVgz"

|

| 323 |

+

},

|

| 324 |

+

"outputs": [],

|

| 325 |

+

"source": [

|

| 326 |

+

"\n",

|

| 327 |

+

"!mkdir /content/drive/MyDrive/Auto_Data_sets\n",

|

| 328 |

+

"!cp -r output/ /content/drive/MyDrive/Auto_Data_sets"

|

| 329 |

+

]

|

| 330 |

+

},

|

| 331 |

+

{

|

| 332 |

+

"cell_type": "markdown",

|

| 333 |

+

"metadata": {

|

| 334 |

+

"id": "B-HFqbP4AVgz"

|

| 335 |

+

},

|

| 336 |

+

"source": [

|

| 337 |

+

"## If not on colab/gdrive, the following will zip up your files for extraction\n",

|

| 338 |

+

"\n",

|

| 339 |

+

"You'll still need to use your runtime's own download feature to download the zip.\n",

|

| 340 |

+

"\n",

|

| 341 |

+

""

|

| 342 |

+

]

|

| 343 |

+

},

|

| 344 |

+

{

|

| 345 |

+

"cell_type": "code",

|

| 346 |

+

"execution_count": null,

|

| 347 |

+

"metadata": {

|

| 348 |

+

"id": "SVa80mrKAVg0"

|

| 349 |

+

},

|

| 350 |

+

"outputs": [],

|

| 351 |

+

"source": [

|

| 352 |

+

"!pip install patool\n",

|

| 353 |

+

"\n",

|

| 354 |

+

"import patoolib\n",

|

| 355 |

+

"\n",

|

| 356 |

+

"!mkdir output/zip\n",

|

| 357 |

+

"\n",

|

| 358 |

+

"!zip -r output/zip/output.zip output"

|

| 359 |

+

]

|

| 360 |

+

}

|

| 361 |

+

],

|

| 362 |

+

"metadata": {

|

| 363 |

+

"colab": {

|

| 364 |

+

"provenance": [],

|

| 365 |

+

"machine_shape": "hm",

|

| 366 |

+

"include_colab_link": true

|

| 367 |

+

},

|

| 368 |

+

"kernelspec": {

|

| 369 |

+

"display_name": "Python 3.10.5 ('.venv': venv)",

|

| 370 |

+

"language": "python",

|

| 371 |

+

"name": "python3"

|

| 372 |

+

},

|

| 373 |

+

"language_info": {

|

| 374 |

+

"name": "python",

|

| 375 |

+

"version": "3.10.5"

|

| 376 |

+

},

|

| 377 |

+

"vscode": {

|

| 378 |

+

"interpreter": {

|

| 379 |

+

"hash": "faf4a6abb601e3a9195ce3e9620411ceec233a951446de834cdf28542d2d93b4"

|

| 380 |

+

}

|

| 381 |

+

},

|

| 382 |

+

"accelerator": "GPU",

|

| 383 |

+

"gpuClass": "standard"

|

| 384 |

+

},

|

| 385 |

+

"nbformat": 4,

|

| 386 |

+

"nbformat_minor": 0

|

| 387 |

+

}

|

data/EveryDream/LICENSE

ADDED

|

@@ -0,0 +1,661 @@

|

| 1 |

+

GNU AFFERO GENERAL PUBLIC LICENSE

|

| 2 |

+

Version 3, 19 November 2007

|

| 3 |

+

|

| 4 |

+

Copyright (C) 2007 Free Software Foundation, Inc. <https://fsf.org/>

|

| 5 |

+

Everyone is permitted to copy and distribute verbatim copies

|

| 6 |

+

of this license document, but changing it is not allowed.

|

| 7 |

+

|

| 8 |

+

Preamble

|

| 9 |

+

|

| 10 |

+

The GNU Affero General Public License is a free, copyleft license for

|

| 11 |

+

software and other kinds of works, specifically designed to ensure

|

| 12 |

+

cooperation with the community in the case of network server software.

|

| 13 |

+

|

| 14 |

+

The licenses for most software and other practical works are designed

|

| 15 |

+

to take away your freedom to share and change the works. By contrast,

|

| 16 |

+

our General Public Licenses are intended to guarantee your freedom to

|

| 17 |

+

share and change all versions of a program--to make sure it remains free

|

| 18 |

+

software for all its users.

|

| 19 |

+

|

| 20 |

+

When we speak of free software, we are referring to freedom, not

|

| 21 |

+

price. Our General Public Licenses are designed to make sure that you

|

| 22 |

+

have the freedom to distribute copies of free software (and charge for

|

| 23 |

+

them if you wish), that you receive source code or can get it if you

|

| 24 |

+

want it, that you can change the software or use pieces of it in new

|

| 25 |

+

free programs, and that you know you can do these things.

|

| 26 |

+

|

| 27 |

+

Developers that use our General Public Licenses protect your rights

|

| 28 |

+

with two steps: (1) assert copyright on the software, and (2) offer

|

| 29 |

+

you this License which gives you legal permission to copy, distribute

|

| 30 |

+

and/or modify the software.

|

| 31 |

+

|

| 32 |

+

A secondary benefit of defending all users' freedom is that

|

| 33 |

+

improvements made in alternate versions of the program, if they

|

| 34 |

+

receive widespread use, become available for other developers to

|

| 35 |

+

incorporate. Many developers of free software are heartened and

|

| 36 |

+