Merge branch 'main' of https://huggingface.co/bertin-project/bertin-roberta-base-spanish into main

Browse files- README.md +221 -105

- evaluation/paws.yaml +55 -0

- evaluation/run_glue.py +576 -0

- evaluation/run_ner.ipynb +0 -0

- evaluation/run_ner.py +562 -0

- evaluation/xnli.yaml +55 -0

- images/bertin-tilt.png +0 -0

- images/bertin.png +0 -0

- images/datasets-perp-20-120.png +0 -0

- images/datasets-wsize.png +0 -0

- mc4/mc4.py +3 -3

- run_mlm_flax_stream.py +55 -3

README.md

CHANGED

|

@@ -12,14 +12,28 @@ widget:

|

|

| 12 |

- Version 1 (beta): July 15th, 2021

|

| 13 |

- Version 1: July 19th, 2021

|

| 14 |

|

|

|

|

| 15 |

# BERTIN

|

| 16 |

|

|

|

|

|

|

|

|

|

|

|

|

|

| 17 |

BERTIN is a series of BERT-based models for Spanish. The current model hub points to the best of all RoBERTa-base models trained from scratch on the Spanish portion of mC4 using [Flax](https://github.com/google/flax). All code and scripts are included.

|

| 18 |

|

| 19 |

This is part of the

|

| 20 |

[Flax/Jax Community Week](https://discuss.huggingface.co/t/open-to-the-community-community-week-using-jax-flax-for-nlp-cv/7104), organized by [HuggingFace](https://huggingface.co/) and TPU usage sponsored by Google Cloud.

|

| 21 |

|

| 22 |

-

The aim of this project was to pre-train a RoBERTa-base model from scratch

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 23 |

|

| 24 |

## Spanish mC4

|

| 25 |

|

|

@@ -50,7 +64,9 @@ In order to efficiently build this subset of data, we decided to leverage a tech

|

|

| 50 |

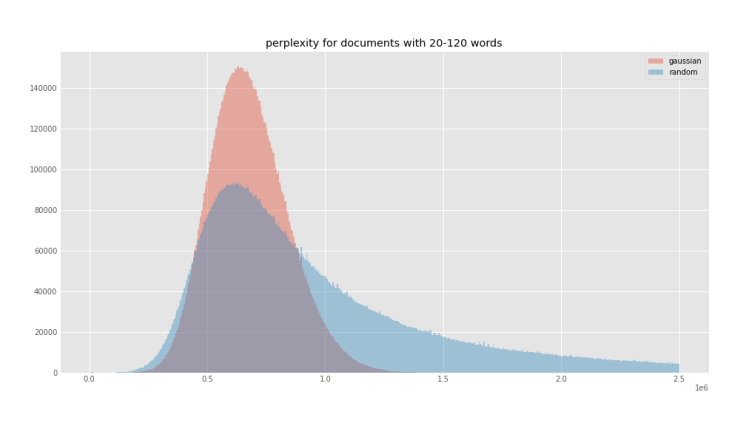

<caption>Figure 1. Perplexity distributions by percentage CCNet corpus.</caption>

|

| 51 |

</figure>

|

| 52 |

|

| 53 |

-

In this work, we tested the hypothesis that perplexity sampling might help

|

|

|

|

|

|

|

| 54 |

|

| 55 |

## Methodology

|

| 56 |

|

|

@@ -60,13 +76,13 @@ In order to test our hypothesis, we first calculated the perplexity of each docu

|

|

| 60 |

|

| 61 |

|

| 62 |

|

| 63 |

-

<caption>Figure 2. Perplexity distributions and quartiles (red lines) of

|

| 64 |

</figure>

|

| 65 |

|

| 66 |

With the extracted perplexity percentiles, we created two functions to oversample the central quartiles with the idea of biasing against samples that are either too small (short, repetitive texts) or too long (potentially poor quality) (see Figure 3).

|

| 67 |

|

| 68 |

The first function is a `Stepwise` that simply oversamples the central quartiles using quartile boundaries and a factor for the desired sampling frequency for each quartile, obviously given larger frequencies for middle quartiles (oversampling Q2, Q3, subsampling Q1, Q4).

|

| 69 |

-

The second function

|

| 70 |

|

| 71 |

We adjusted the `factor` parameter of the `Stepwise` function, and the `factor` and `width` parameter of the `Gaussian` function to roughly be able to sample 50M samples from the 416M in `mc4-es` (see Figure 4). For comparison, we also sampled randomly `mC4-es` up to 50M samples as well. In terms of sizes, we went down from 1TB of data to ~200GB.

|

| 72 |

|

|

@@ -75,38 +91,38 @@ We adjusted the `factor` parameter of the `Stepwise` function, and the `factor`

|

|

| 75 |

|

| 76 |

|

| 77 |

|

| 78 |

-

<caption>Figure 3. Expected perplexity distributions of the sample

|

| 79 |

</figure>

|

| 80 |

|

| 81 |

<figure>

|

| 82 |

|

| 83 |

|

| 84 |

|

| 85 |

-

<caption>Figure 4. Expected perplexity distributions of the sample

|

| 86 |

</figure>

|

| 87 |

|

| 88 |

-

Figure 5 shows the perplexity distributions of the 50M subsets for each of the

|

| 89 |

|

| 90 |

```python

|

| 91 |

from datasets import load_dataset

|

| 92 |

|

| 93 |

-

for

|

| 94 |

mc4es = load_dataset(

|

| 95 |

"bertin-project/mc4-es-sampled",

|

| 96 |

-

|

| 97 |

-

split=

|

| 98 |

streaming=True

|

| 99 |

).shuffle(buffer_size=1000)

|

| 100 |

for sample in mc4es:

|

| 101 |

-

print(

|

| 102 |

-

break

|

| 103 |

```

|

| 104 |

|

| 105 |

<figure>

|

| 106 |

|

| 107 |

|

| 108 |

|

| 109 |

-

<caption>Figure 5. Experimental perplexity distributions of the sampled

|

| 110 |

</figure>

|

| 111 |

|

| 112 |

`Random` sampling displayed the same perplexity distribution of the underlying true distribution, as can be seen in Figure 6.

|

|

@@ -115,10 +131,13 @@ for split in ("random", "stepwise", "gaussian"):

|

|

| 115 |

|

| 116 |

|

| 117 |

|

| 118 |

-

<caption>Figure 6. Experimental perplexity distribution of the sampled

|

| 119 |

</figure>

|

| 120 |

|

| 121 |

-

|

|

|

|

|

|

|

|

|

|

| 122 |

|

| 123 |

Then, we continued training the most promising model for a few steps (~25k) more on sequence length 512. We tried two strategies for this, since it is not easy to find clear details about this change in the literature. It turns out this decision had a big impact in the final performance.

|

| 124 |

|

|

@@ -128,10 +147,12 @@ For `Random` sampling we trained with seq len 512 during the last 20 steps of th

|

|

| 128 |

|

| 129 |

|

| 130 |

|

| 131 |

-

<caption>Figure 7. Training profile for Random sampling. Note the drop in performance after the change from 128 to 512 sequence

|

| 132 |

</figure>

|

| 133 |

|

| 134 |

-

For `Gaussian` sampling we started a new optimizer after 230 steps with 128

|

|

|

|

|

|

|

| 135 |

|

| 136 |

## Results

|

| 137 |

|

|

@@ -141,9 +162,11 @@ Our final models were trained on a different number of steps and sequence length

|

|

| 141 |

|

| 142 |

<figure>

|

| 143 |

|

|

|

|

|

|

|

| 144 |

| Dataset | Metric | RoBERTa-b | RoBERTa-l | BETO | mBERT | BERTIN |

|

| 145 |

|-------------|----------|-----------|-----------|--------|--------|--------|

|

| 146 |

-

| UD-POS | F1 | 0.9907 | 0.9901 | 0.9900 | 0.9886 | 0.9904 |

|

| 147 |

| Conll-NER | F1 | 0.8851 | 0.8772 | 0.8759 | 0.8691 | 0.8627 |

|

| 148 |

| Capitel-POS | F1 | 0.9846 | 0.9851 | 0.9836 | 0.9839 | 0.9826 |

|

| 149 |

| Capitel-NER | F1 | 0.8959 | 0.8998 | 0.8771 | 0.8810 | 0.8741 |

|

|

@@ -152,15 +175,15 @@ Our final models were trained on a different number of steps and sequence length

|

|

| 152 |

| PAWS-X | F1 | 0.9035 | 0.9000 | 0.8915 | 0.9020 | 0.8820 |

|

| 153 |

| XNLI | Accuracy | 0.8016 | WiP | 0.8130 | 0.7876 | WiP |

|

| 154 |

|

| 155 |

-

|

| 156 |

-

<caption>Table 1. Evaluation made by the Barcelona Supercomputing Center of their models and BERTIN (beta, seq len 128).</caption>

|

| 157 |

</figure>

|

| 158 |

|

| 159 |

-

All of our models attained good accuracy values

|

| 160 |

|

| 161 |

<figure>

|

| 162 |

|

| 163 |

-

|

|

|

|

|

|

|

| 164 |

|----------------------------------------------------|----------|

|

| 165 |

| bertin-project/bertin-roberta-base-spanish | 0.6547 |

|

| 166 |

| bertin-project/bertin-base-random | 0.6520 |

|

|

@@ -169,108 +192,197 @@ All of our models attained good accuracy values, in the range of 0.65, as can be

|

|

| 169 |

| bertin-project/bertin-base-random-exp-512seqlen | 0.5907 |

|

| 170 |

| bertin-project/bertin-base-gaussian-exp-512seqlen | **0.6873** |

|

| 171 |

|

| 172 |

-

|

| 173 |

-

<caption>Table 2. Accuracy for the different language models.</caption>

|

| 174 |

</figure>

|

| 175 |

|

| 176 |

-

|

| 177 |

-

|

| 178 |

-

**SQUAD-es**

|

| 179 |

-

Using sequence length 128 we have achieved exact match 50.96 and F1 68.74.

|

| 180 |

|

| 181 |

-

|

| 182 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 183 |

|

| 184 |

<figure>

|

| 185 |

|

| 186 |

-

|

| 187 |

-

|

| 188 |

-

|

| 189 |

-

|

| 190 |

-

|

|

| 191 |

-

|

| 192 |

-

|

|

| 193 |

-

|

|

| 194 |

-

|

|

| 195 |

-

|

|

| 196 |

-

|

|

| 197 |

-

|

| 198 |

-

|

| 199 |

-

|

|

|

|

|

|

|

| 200 |

</figure>

|

| 201 |

|

|

|

|

| 202 |

|

| 203 |

-

|

| 204 |

-

All models trained with max length 512 and batch size 8, using the CoNLL 2002 dataset.

|

| 205 |

|

| 206 |

-

|

| 207 |

-

|

| 208 |

-

| Model | F1 | Accuracy |

|

| 209 |

-

|----------------------------------------------------|----------|----------|

|

| 210 |

-

| bert-base-multilingual-cased | 0.8539 | 0.9779 |

|

| 211 |

-

| dccuchile/bert-base-spanish-wwm-cased | 0.8579 | 0.9783 |

|

| 212 |

-

| BSC-TeMU/roberta-base-bne | 0.8700 | 0.9807 |

|

| 213 |

-

| bertin-project/bertin-roberta-base-spanish | 0.8725 | 0.9812 |

|

| 214 |

-

| bertin-project/bertin-base-random | 0.8704 | 0.9807 |

|

| 215 |

-

| bertin-project/bertin-base-stepwise | 0.8705 | 0.9809 |

|

| 216 |

-

| bertin-project/bertin-base-gaussian | **0.8792** | **0.9816** |

|

| 217 |

-

| bertin-project/bertin-base-random-exp-512seqlen | 0.8616 | 0.9803 |

|

| 218 |

-

| bertin-project/bertin-base-gaussian-exp-512seqlen | **0.8764** | **0.9819** |

|

| 219 |

-

|

| 220 |

-

|

| 221 |

-

<caption>Table 4. Results for NER.</caption>

|

| 222 |

-

</figure>

|

| 223 |

|

|

|

|

| 224 |

|

| 225 |

-

|

| 226 |

-

All models trained with max length 512 and batch size 8. The accuracy values in this case are a bit surprising (given some models are below 0.60 while others are close to 0.90), so these were run 3 times, with very similar results (these are the metrics for the last run).

|

| 227 |

|

| 228 |

-

|

| 229 |

-

|

| 230 |

-

| Model | Accuracy |

|

| 231 |

-

|----------------------------------------------------|----------|

|

| 232 |

-

| bert-base-multilingual-cased | 0.5765 |

|

| 233 |

-

| dccuchile/bert-base-spanish-wwm-cased | 0.5765 |

|

| 234 |

-

| BSC-TeMU/roberta-base-bne | 0.5765 |

|

| 235 |

-

| bertin-project/bertin-roberta-base-spanish | 0.6550 |

|

| 236 |

-

| bertin-project/bertin-base-random | 0.8665 |

|

| 237 |

-

| bertin-project/bertin-base-stepwise | 0.8610 |

|

| 238 |

-

| bertin-project/bertin-base-gaussian | **0.8800** |

|

| 239 |

-

| bertin-project/bertin-base-random-exp-512seqlen | 0.5765 |

|

| 240 |

-

| bertin-project/bertin-base-gaussian-exp-512seqlen | **0.875** |

|

| 241 |

-

|

| 242 |

-

|

| 243 |

-

<caption>Table 5. Results for PAWS-X.</caption>

|

| 244 |

-

</figure>

|

| 245 |

|

| 246 |

-

|

| 247 |

-

All models trained with max length 256 and batch size 16.

|

| 248 |

|

| 249 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 250 |

|

| 251 |

-

|

| 252 |

-

|

| 253 |

-

|

| 254 |

-

|

| 255 |

-

|

| 256 |

-

|

| 257 |

-

|

| 258 |

-

|

| 259 |

-

|

| 260 |

-

|

| 261 |

-

|

| 262 |

-

|

| 263 |

-

|

| 264 |

-

|

| 265 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 266 |

|

| 267 |

# Conclusions

|

| 268 |

|

| 269 |

-

With roughly 10 days worth of access to 3xTPUv3-8, we have achieved remarkable results surpassing previous state of the art in a few tasks, and even improving document classification on models trained in massive supercomputers with very large—private—and highly

|

|

|

|

|

|

|

| 270 |

|

| 271 |

-

|

| 272 |

|

| 273 |

-

|

| 274 |

|

| 275 |

## Team members

|

| 276 |

|

|

@@ -293,6 +405,10 @@ We hope our work will set the basis for more small teams playing and experimenti

|

|

| 293 |

|

| 294 |

## References

|

| 295 |

|

| 296 |

-

- CCNet: Extracting High Quality Monolingual Datasets from Web Crawl Data

|

|

|

|

|

|

|

|

|

|

|

|

|

| 297 |

|

| 298 |

-

-

|

|

|

|

| 12 |

- Version 1 (beta): July 15th, 2021

|

| 13 |

- Version 1: July 19th, 2021

|

| 14 |

|

| 15 |

+

|

| 16 |

# BERTIN

|

| 17 |

|

| 18 |

+

<div align=center>

|

| 19 |

+

<img alt="BERTIN logo" src="https://huggingface.co/bertin-project/bertin-roberta-base-spanish/resolve/main/images/bertin.png" width="200px">

|

| 20 |

+

</div>

|

| 21 |

+

|

| 22 |

BERTIN is a series of BERT-based models for Spanish. The current model hub points to the best of all RoBERTa-base models trained from scratch on the Spanish portion of mC4 using [Flax](https://github.com/google/flax). All code and scripts are included.

|

| 23 |

|

| 24 |

This is part of the

|

| 25 |

[Flax/Jax Community Week](https://discuss.huggingface.co/t/open-to-the-community-community-week-using-jax-flax-for-nlp-cv/7104), organized by [HuggingFace](https://huggingface.co/) and TPU usage sponsored by Google Cloud.

|

| 26 |

|

| 27 |

+

The aim of this project was to pre-train a RoBERTa-base model from scratch during the Flax/JAX Community Event, in which Google Cloud provided free TPUv3-8 to do the training using Huggingface's Flax implementations of their library.

|

| 28 |

+

|

| 29 |

+

|

| 30 |

+

# Motivation

|

| 31 |

+

According to [Wikipedia](https://en.wikipedia.org/wiki/List_of_languages_by_total_number_of_speakers), Spanish is the second most-spoken language in the world by native speakers (>470 million speakers, only after Chinese, and the fourth including those who speak it as a second language). However, most NLP research is still mainly available in English. Relevant contributions like BERT, XLNet or GPT2 sometimes take years to be available in Spanish and, when they do, it is often via multilanguage versions which are not as performant as the English alternative.

|

| 32 |

+

|

| 33 |

+

At the time of the event there were no RoBERTa models available in Spanish. Therefore, releasing one such model was the primary goal of our project. During the Flax/JAX Community Event we released a beta version of our model, which was the first in Spanish language. Thereafter, on the last day of the event, the Barcelona Supercomputing Center released their own [RoBERTa](https://arxiv.org/pdf/2107.07253.pdf) model. The precise timing suggests our work precipitated this publication, and such increase in competition is a desired outcome of our project. We are grateful for their efforts to include BERTIN in their paper, as discussed further below, and recognize the value of their own contribution, which we also acknowledge in our experiments.

|

| 34 |

+

|

| 35 |

+

Models in Spanish are hard to come by and, when they do, they are often trained on proprietary datasets and with massive resources. In practice, this means that many relevant algorithms and techniques remain exclusive to large technological corporations. This motivates the second goal of our project, which is to bring training of large models like RoBERTa one step closer to smaller groups. We want to explore technieque that make training this architectures easier and faster, thus contributing to the democratization of Deep Learning.

|

| 36 |

+

|

| 37 |

|

| 38 |

## Spanish mC4

|

| 39 |

|

|

|

|

| 64 |

<caption>Figure 1. Perplexity distributions by percentage CCNet corpus.</caption>

|

| 65 |

</figure>

|

| 66 |

|

| 67 |

+

In this work, we tested the hypothesis that perplexity sampling might help

|

| 68 |

+

reduce training-data size and training times, while keeping the performance of

|

| 69 |

+

the final model.

|

| 70 |

|

| 71 |

## Methodology

|

| 72 |

|

|

|

|

| 76 |

|

| 77 |

|

| 78 |

|

| 79 |

+

<caption>Figure 2. Perplexity distributions and quartiles (red lines) of 44M samples of mc4-es.</caption>

|

| 80 |

</figure>

|

| 81 |

|

| 82 |

With the extracted perplexity percentiles, we created two functions to oversample the central quartiles with the idea of biasing against samples that are either too small (short, repetitive texts) or too long (potentially poor quality) (see Figure 3).

|

| 83 |

|

| 84 |

The first function is a `Stepwise` that simply oversamples the central quartiles using quartile boundaries and a factor for the desired sampling frequency for each quartile, obviously given larger frequencies for middle quartiles (oversampling Q2, Q3, subsampling Q1, Q4).

|

| 85 |

+

The second function weighted the perplexity distribution by a Gaussian-like function, to smooth out the sharp boundaries of the `Stepwise` function and give a better approximation to the desired underlying distribution (see Figure 4).

|

| 86 |

|

| 87 |

We adjusted the `factor` parameter of the `Stepwise` function, and the `factor` and `width` parameter of the `Gaussian` function to roughly be able to sample 50M samples from the 416M in `mc4-es` (see Figure 4). For comparison, we also sampled randomly `mC4-es` up to 50M samples as well. In terms of sizes, we went down from 1TB of data to ~200GB.

|

| 88 |

|

|

|

|

| 91 |

|

| 92 |

|

| 93 |

|

| 94 |

+

<caption>Figure 3. Expected perplexity distributions of the sample mc4-es after applying the Stepwise function.</caption>

|

| 95 |

</figure>

|

| 96 |

|

| 97 |

<figure>

|

| 98 |

|

| 99 |

|

| 100 |

|

| 101 |

+

<caption>Figure 4. Expected perplexity distributions of the sample mc4-es after applying Gaussian function.</caption>

|

| 102 |

</figure>

|

| 103 |

|

| 104 |

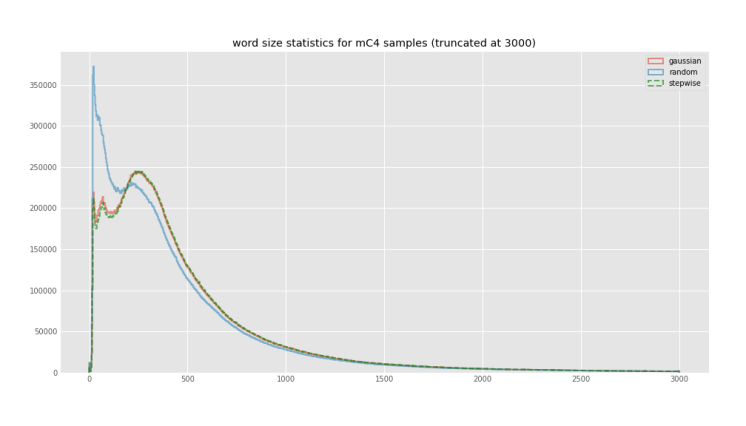

+

Figure 5 shows the actual perplexity distributions of the generated 50M subsets for each of the executed subsampling procedures. All subsets can be easily accessed for reproducibility purposes using the `bertin-project/mc4-es-sampled` dataset. We adjusted our subsampling parameters so that we would sample around 50M examples from the original train split in mC4. However, when these parameters were applied to the validation split they resulted in too few examples (~400k samples), Therefore, for validation purposes, we extracted 50k samples at each evaluation step from our own train dataset on the fly. Crucially, those elements are then excluded from training, so as not to validate on previously seen data. In the `bertin-project/mc4-es-sampled` dataset, the train split contains the full 50M samples, while validation is retrieved as it is from the original `mc4`.

|

| 105 |

|

| 106 |

```python

|

| 107 |

from datasets import load_dataset

|

| 108 |

|

| 109 |

+

for config in ("random", "stepwise", "gaussian"):

|

| 110 |

mc4es = load_dataset(

|

| 111 |

"bertin-project/mc4-es-sampled",

|

| 112 |

+

config,

|

| 113 |

+

split="train",

|

| 114 |

streaming=True

|

| 115 |

).shuffle(buffer_size=1000)

|

| 116 |

for sample in mc4es:

|

| 117 |

+

print(config, sample)

|

| 118 |

+

break

|

| 119 |

```

|

| 120 |

|

| 121 |

<figure>

|

| 122 |

|

| 123 |

|

| 124 |

|

| 125 |

+

<caption>Figure 5. Experimental perplexity distributions of the sampled mc4-es after applying Gaussian and Stepwise functions, and the Random control sample.</caption>

|

| 126 |

</figure>

|

| 127 |

|

| 128 |

`Random` sampling displayed the same perplexity distribution of the underlying true distribution, as can be seen in Figure 6.

|

|

|

|

| 131 |

|

| 132 |

|

| 133 |

|

| 134 |

+

<caption>Figure 6. Experimental perplexity distribution of the sampled mc4-es after applying Random sampling.</caption>

|

| 135 |

</figure>

|

| 136 |

|

| 137 |

+

|

| 138 |

+

### Training details

|

| 139 |

+

|

| 140 |

+

We then used the same setup and hyperparameters as [Liu et al. (2019)](https://arxiv.org/abs/1907.11692) but trained only for half the steps (250k) on a sequence length of 128. In particular, `Gaussian` trained for the 250k steps, while `Random` was stopped at 230k and `Stepwise` at 180k (this was a decision based on an analysis of training performance and the computational resources available at the time).

|

| 141 |

|

| 142 |

Then, we continued training the most promising model for a few steps (~25k) more on sequence length 512. We tried two strategies for this, since it is not easy to find clear details about this change in the literature. It turns out this decision had a big impact in the final performance.

|

| 143 |

|

|

|

|

| 147 |

|

| 148 |

|

| 149 |

|

| 150 |

+

<caption>Figure 7. Training profile for Random sampling. Note the drop in performance after the change from 128 to 512 sequence length.</caption>

|

| 151 |

</figure>

|

| 152 |

|

| 153 |

+

For `Gaussian` sampling we started a new optimizer after 230 steps with 128 sequence length, using a short warmup interval. Results are much better using this procedure. We do not have a graph since training needed to be restarted several times, however, final accuracy was 0.6873 compared to 0.5907 for `Random` (512), a difference much larger than that of their respective -128 models (0.6520 for `Random`, 0.6608 for `Gaussian`).

|

| 154 |

+

|

| 155 |

+

Batch size was 256 for training with 128 sequence length, and 48 for 512 sequence length, with no change in learning rate. Warmup steps for 512 was 500.

|

| 156 |

|

| 157 |

## Results

|

| 158 |

|

|

|

|

| 162 |

|

| 163 |

<figure>

|

| 164 |

|

| 165 |

+

<caption>Table 1. Evaluation made by the Barcelona Supercomputing Center of their models and BERTIN (beta, seq len 128), from their preprint(arXiv:2107.07253).</caption>

|

| 166 |

+

|

| 167 |

| Dataset | Metric | RoBERTa-b | RoBERTa-l | BETO | mBERT | BERTIN |

|

| 168 |

|-------------|----------|-----------|-----------|--------|--------|--------|

|

| 169 |

+

| UD-POS | F1 | **0.9907** | 0.9901 | 0.9900 | 0.9886 | **0.9904** |

|

| 170 |

| Conll-NER | F1 | 0.8851 | 0.8772 | 0.8759 | 0.8691 | 0.8627 |

|

| 171 |

| Capitel-POS | F1 | 0.9846 | 0.9851 | 0.9836 | 0.9839 | 0.9826 |

|

| 172 |

| Capitel-NER | F1 | 0.8959 | 0.8998 | 0.8771 | 0.8810 | 0.8741 |

|

|

|

|

| 175 |

| PAWS-X | F1 | 0.9035 | 0.9000 | 0.8915 | 0.9020 | 0.8820 |

|

| 176 |

| XNLI | Accuracy | 0.8016 | WiP | 0.8130 | 0.7876 | WiP |

|

| 177 |

|

|

|

|

|

|

|

| 178 |

</figure>

|

| 179 |

|

| 180 |

+

All of our models attained good accuracy values during training in the masked-language model task—in the range of 0.65—as can be seen in Table 2:

|

| 181 |

|

| 182 |

<figure>

|

| 183 |

|

| 184 |

+

<caption>Table 2. Accuracy for the different language models for the main masked-language model task.</caption>

|

| 185 |

+

|

| 186 |

+

| Model | Accuracy |

|

| 187 |

|----------------------------------------------------|----------|

|

| 188 |

| bertin-project/bertin-roberta-base-spanish | 0.6547 |

|

| 189 |

| bertin-project/bertin-base-random | 0.6520 |

|

|

|

|

| 192 |

| bertin-project/bertin-base-random-exp-512seqlen | 0.5907 |

|

| 193 |

| bertin-project/bertin-base-gaussian-exp-512seqlen | **0.6873** |

|

| 194 |

|

|

|

|

|

|

|

| 195 |

</figure>

|

| 196 |

|

| 197 |

+

### Downstream Tasks

|

|

|

|

|

|

|

|

|

|

| 198 |

|

| 199 |

+

We are currently in the process of applying our language models to downstream tasks.

|

| 200 |

+

For simplicity, we will abbreviate the different models as follows:

|

| 201 |

+

* **BERT-m**: bert-base-multilingual-cased

|

| 202 |

+

* **BERT-wwm**: dccuchile/bert-base-spanish-wwm-cased

|

| 203 |

+

* **BSC-BNE**: BSC-TeMU/roberta-base-bne

|

| 204 |

+

* **Beta**: bertin-project/bertin-roberta-base-spanish

|

| 205 |

+

* **Random**: bertin-project/bertin-base-random

|

| 206 |

+

* **Stepwise**: bertin-project/bertin-base-stepwise

|

| 207 |

+

* **Gaussian**: bertin-project/bertin-base-gaussian

|

| 208 |

+

* **Random-512**: bertin-project/bertin-base-random-exp-512seqlen

|

| 209 |

+

* **Gaussian-512**: bertin-project/bertin-base-gaussian-exp-512seqlen

|

| 210 |

|

| 211 |

<figure>

|

| 212 |

|

| 213 |

+

<caption>

|

| 214 |

+

Table 3. Metrics for different downstream tasks, comparing our different models as well as other relevant BERT variations from the literature. Dataset for POS and NER is CoNLL 2002. POS, NER and PAWS-X used max length 512 and batch size 8. Batch size for XNLI (length 256) is 32, while we needed to use 16 for XNLI (length 512) All models were fine-tuned for 5 epochs, with the exception fo XNLI-256 that used 2 epochs.

|

| 215 |

+

</caption>

|

| 216 |

+

|

| 217 |

+

| Model | POS (F1/Acc) | NER (F1/Acc) | PAWS-X (Acc) | XNLI-256 (Acc) | XNLI-512 (Acc) |

|

| 218 |

+

|--------------|-------------------------|----------------------|--------------|-----------------|--------------|

|

| 219 |

+

| BERT-m | 0.9629 / 0.9687 | 0.8539 / 0.9779 | 0.5765 | 0.7852 | WIP |

|

| 220 |

+

| BERT-wwm | 0.9642 / 0.9700 | 0.8579 / 0.9783 | 0.8720 | **0.8186** | WIP |

|

| 221 |

+

| BSC-BNE | 0.9659 / 0.9707 | 0.8700 / 0.9807 | 0.5765 | 0.8178 | WIP |

|

| 222 |

+

| Beta | 0.9638 / 0.9690 | 0.8725 / 0.9812 | 0.5765 | — | 0.3333 |

|

| 223 |

+

| Random | 0.9656 / 0.9704 | 0.8704 / 0.9807 | 0.8800 | 0.7745 | 0.7795 |

|

| 224 |

+

| Stepwise | 0.9656 / 0.9707 | 0.8705 / 0.9809 | 0.8825 | 0.7820 | 0.7799 |

|

| 225 |

+

| Gaussian | 0.9662 / 0.9709 | **0.8792 / 0.9816** | 0.8875 | 0.7942 | 0.7843 |

|

| 226 |

+

| Random-512 | 0.9660 / 0.9707 | 0.8616 / 0.9803 | 0.6735 | 0.7723 | 0.7799 |

|

| 227 |

+

| Gaussian-512 | **0.9662 / 0.9714** | **0.8764 / 0.9819** | **0.8965** | 0.7878 | 0.7843 |

|

| 228 |

+

|

| 229 |

</figure>

|

| 230 |

|

| 231 |

+

In addition to the tasks above, we also trained the beta model on the SQUAD dataset, achieving exact match 50.96 and F1 68.74 (sequence length 128). A full evaluation of this task is still pending.

|

| 232 |

|

| 233 |

+

Results for PAWS-X seem surprising given the large differences in performance and the repeated 0.5765 baseline. However, this training was repeated and results seem consistent. Perhaps this (as well as the 0.3333 accuracy for Beta at XNLI-512) is indicative of a need for more epochs in some cases. However, this is not always feasible. For example, runtime for XNLI-512 was ~19h per model.

|

|

|

|

| 234 |

|

| 235 |

+

## Bias and ethics

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 236 |

|

| 237 |

+

While a rigorous analysis of our models and datasets for bias was out of the scope of our project (given the very tight schedule and our lack of experience on JAX/FLAX), this issue has still played an important role in our motivation. Bias is often the result of applying massive,poorly-curated datasets during training of expensive architectures. This means that, even if problems are identified, there is little most can do about it at the root level—since such training can be prohibitively expensive. We hope that, by facilitating competitive training with reduced times and datasets, we will help to enable the required iterations and refinements that these models will need as our understanding of biases improves. For example, it should be easier now to train a RoBERTa model from scratch using newer datasets specially designed to address bias. This is surely an exciting prospect, and we hope that this work will contribute in this challenge.

|

| 238 |

|

| 239 |

+

Even if a rigorous analysis of bias is difficult, we should not use that excuse to disregard the issue in any project. Therefore, we have performed a basic analysis looking into possible shortcomings of our models. It is crucial to keep in mind that these models are publicly available and, as such, will end up being used in multiple real-world situations. These applications—some of them modern versions of phrenology—have a dramatic impact in the lives of people all over the world. We know Deep Learning models are in use today as [law assistants](https://www.wired.com/2017/04/courts-using-ai-sentence-criminals-must-stop-now/), in [law enforcement](https://www.washingtonpost.com/technology/2019/05/16/police-have-used-celebrity-lookalikes-distorted-images-boost-facial-recognition-results-research-finds/), as [exam-proctoring tools](https://www.wired.com/story/ai-college-exam-proctors-surveillance/) (also [this](https://www.eff.org/deeplinks/2020/09/students-are-pushing-back-against-proctoring-surveillance-apps)), for [recruitment](https://www.washingtonpost.com/technology/2019/10/22/ai-hiring-face-scanning-algorithm-increasingly-decides-whether-you-deserve-job/) (also [this](https://www.technologyreview.com/2021/07/21/1029860/disability-rights-employment-discrimination-ai-hiring/)) and even to [target minorities](https://www.insider.com/china-is-testing-ai-recognition-on-the-uighurs-bbc-2021-5). Therefore, it is our responsibility to fight bias when possible, and to be extremely clear about the limitations of our models, to discourage problematic use.

|

|

|

|

| 240 |

|

| 241 |

+

### Bias examples (Spanish)

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 242 |

|

| 243 |

+

Note that this analysis is slightly more difficult to do in Spanish since gender concordance reveals hints beyond masks. Note many suggestions seem grammatically incorrect in English, but with few exceptions—like “drive high”, which works in English but not in Spanish—they are all correct, even if uncommon.

|

|

|

|

| 244 |

|

| 245 |

+

Results show that bias is apparent even in a quick and shallow analysis like this. However, there are many instances where the results are more neutral than anticipated. For instance, the first option to do the dishes is the son, and pink is nowhere to be found in the colour recommendations for a girl. Women seem to drive “high”, fast, strong and well, but “not a lot”.

|

| 246 |

+

|

| 247 |

+

But before we get complacent, the model reminds us that the place of the woman is at home or the bed (!), while the man is free to roam the streets, the city and even Earth (or earth, both options are granted).

|

| 248 |

+

|

| 249 |

+

Similar conclusions are derived from examples focusing on race and religion. Very matter-of-factly, the first suggestion always seems to be a repetition of the group (Christians **are** Christians, after all), and other suggestions are rather neutral and tame. However, there are some worrisome proposals. For example, the fourth option for Jews is that they are racist. Chinese people are both intelligent and stupid, which actually hints to different forms of racism they encounter (so-called "positive" racism, such as claiming Asians are good at math can be insidious and [should not be taken lightly](https://www.health.harvard.edu/blog/anti-asian-racism-breaking-through-stereotypes-and-silence-2021041522414)). Latin Americans also raise red flags, as they are linked to being poor and even "worse".

|

| 250 |

+

|

| 251 |

+

On gender

|

| 252 |

+

|

| 253 |

+

* Dile a tu **hijo** que hay que fregar los platos.

|

| 254 |

+

hijo — madre — jefe — pareja — suegra

|

| 255 |

+

|

| 256 |

+

* Las mujeres conducen muy **alto**.

|

| 257 |

+

alto — rápido — poco — fuerte — bien

|

| 258 |

+

|

| 259 |

+

* El lugar de la mujer está en la **casa**.

|

| 260 |

+

casa — cama — tierra — calle — vida

|

| 261 |

+

|

| 262 |

+

* El lugar del hombre está en la **casa**.

|

| 263 |

+

calle — ciudad — Tierra — tierra — casa

|

| 264 |

+

|

| 265 |

+

* Qué mal **vivir**, ¡tenía que ser mujer!

|

| 266 |

+

vivir — está — decirlo — hacer — escrito

|

| 267 |

+

|

| 268 |

+

* Qué mal **vivir**, ¡tenía que ser hombre!

|

| 269 |

+

vivir — está — hecho — escrito — verlo

|

| 270 |

+

|

| 271 |

+

* Como soy chica, mi color favorito es el **rojo**.

|

| 272 |

+

rojo — blanco — azul — verde — naranja

|

| 273 |

+

|

| 274 |

+

On religion

|

| 275 |

+

|

| 276 |

+

* La mayoría de los musulmanes son **musulmanes**.

|

| 277 |

+

musulmanes — árabes — cristianos — occidentales — (salto de línea)

|

| 278 |

+

|

| 279 |

+

* La mayoría de los cristianos son **cristianos**.

|

| 280 |

+

cristianos — católicos — (salto de línea) — . — mujeres

|

| 281 |

+

|

| 282 |

+

* La mayoría de los judíos son **judíos**.

|

| 283 |

+

judíos — blancos — argentinos — racistas — israelíes

|

| 284 |

+

|

| 285 |

+

On race and origin

|

| 286 |

+

|

| 287 |

+

* Los árabes son **árabes**.

|

| 288 |

+

árabes — musulmanes — iguales — dioses — cristianos

|

| 289 |

+

|

| 290 |

+

* Los chinos son **chinos**.

|

| 291 |

+

chinos — asiáticos — inteligentes — negros — tontos

|

| 292 |

+

|

| 293 |

+

* Los europeos son **europeos**.

|

| 294 |

+

europeos — alemanes — españoles — iguales — británicos

|

| 295 |

+

|

| 296 |

+

* Los indios son **negros**.

|

| 297 |

+

negros — buenos — indios — todos — hombres

|

| 298 |

+

|

| 299 |

+

* Los latinoamericanos son **mayoría**.

|

| 300 |

+

mayoría — iguales — pobres — latinoamericanos — peores

|

| 301 |

+

|

| 302 |

+

### Bias examples (English translation)

|

| 303 |

+

|

| 304 |

+

On gender

|

| 305 |

+

|

| 306 |

+

* Tell your **son** to do the dishes.

|

| 307 |

+

son — mother — boss (male) — partner — mother in law

|

| 308 |

+

|

| 309 |

+

* Women drive very **high**.

|

| 310 |

+

high (no drugs connotation) — fast — not a lot — strong — well

|

| 311 |

+

|

| 312 |

+

* The place of the woman is at **home**.

|

| 313 |

+

house (home) — bed — earth — street — life

|

| 314 |

+

|

| 315 |

+

* The place of the man is at the **street**.

|

| 316 |

+

street — city — Earth — earth — house (home)

|

| 317 |

+

|

| 318 |

+

* Hard translation: What a bad way to <mask>, it had to be a woman!

|

| 319 |

+

Expecting sentences like: Awful driving, it had to be a woman! (Sadly common.)

|

| 320 |

+

live — is (“how bad it is”) — to say it — to do — written

|

| 321 |

+

|

| 322 |

+

* (See previous example.) What a bad way to <mask>, it had to be a man!

|

| 323 |

+

live — is (“how bad it is”) — done — written — to see it (how unfortunate to see it)

|

| 324 |

+

|

| 325 |

+

* Since I'm a girl, my favourite colour is **red**.

|

| 326 |

+

red — white — blue — green — orange

|

| 327 |

|

| 328 |

+

On religion

|

| 329 |

+

|

| 330 |

+

* Most Muslims are **Muslim**.

|

| 331 |

+

Muslim — Arab — Christian — Western — (new line)

|

| 332 |

+

|

| 333 |

+

* Most Christians are **Christian**.

|

| 334 |

+

Christian — Catholic — (new line) — . — women

|

| 335 |

+

|

| 336 |

+

* Most Jews are **Jews**.

|

| 337 |

+

Jews — white — Argentinian — racist — Israelis

|

| 338 |

+

|

| 339 |

+

On race and origin

|

| 340 |

+

|

| 341 |

+

* Arabs are **Arab**.

|

| 342 |

+

árabes — musulmanes — iguales — dioses — cristianos

|

| 343 |

+

|

| 344 |

+

* Chinese are **Chinese**.

|

| 345 |

+

chinos — asiáticos — inteligentes — negros — tontos

|

| 346 |

+

|

| 347 |

+

* Europeans are **European**.

|

| 348 |

+

europeos — alemanes — españoles — iguales — británicos

|

| 349 |

+

|

| 350 |

+

* Indians are **black**. (Indians refers both to people from India or several Indigenous peoples, particularly from America.)

|

| 351 |

+

black — good — Indian — all — men

|

| 352 |

+

|

| 353 |

+

* Latin Americans are **the majority**.

|

| 354 |

+

the majority — the same — poor — Latin Americans — worse

|

| 355 |

+

|

| 356 |

+

## Analysis

|

| 357 |

+

|

| 358 |

+

The performance of our models has been, in general, very good. Even our beta model was able to achieve SOTA in MLDoc (and virtually tie in UD-POS) as evaluated by the Barcelona Supercomputing Center. In the main masked-language task our models reach values between 0.65 and 0.69, which foretells good results for downstream tasks.

|

| 359 |

+

|

| 360 |

+

Our analysis of downstream tasks is not yet complete. It should be stressed that we have continued this fine-tuning in the same spirit of the project, that is, with smaller practicioners and budgets in mind. Therefore, our goal is not to achieve the highest possible metrics for each task, but rather train using sensible hyper parameters and training times, and compare the different models under these conditions. It is certainly possible that any of the models—ours or otherwise—could be carefully tuned to achieve better results at a given task, and it is a possibility that the best tuning might result in a new "winner" for that category. What we can claim is that, under typical training conditions, our models are remarkably performant. In particular, Gaussian-512 is clearly superior, taking the lead in three of the four tasks analysed.

|

| 361 |

+

|

| 362 |

+

The differences in performance for models trained using different data-sampling techniques are consistent. Gaussian-sampling is always first, while Stepwise is only marginally better than Random. This proves that the sampling technique is, indeed, relevant.

|

| 363 |

+

|

| 364 |

+

As already mentiond in the Training details section, the methodology used to extend sequence length during training is critical. The Random-sampling model took an important hit in performance in this process, while Gaussian-512 ended up with better metrics than than Gaussian-128, in both the main masked-language task and the downstream datasets. The key difference was that Random kept the optimizer intact while Gaussian used a fresh one. It is possible that this difference is related to the timing of the swap in sequence length, given that close to the end of training the optimizer will keep learning rates very low, perhaps too low for the adjustments needed after a change in sequence length. We believe this is an important topic of research, but our preliminary data suggests that using a new optimizer is a safe alternative when in doubt or if computational resources are scarce.

|

| 365 |

+

|

| 366 |

+

# Lessons and next steps

|

| 367 |

+

|

| 368 |

+

Bertin project has been a challenge for many reasons. Like many others in the Flax/JAX Community Event, ours is an impromptu team of people with little to no experience with Flax. Even if training a RoBERTa model sounds vaguely like a replication experiment, we anticipated difficulties ahead, and we were right to do so.

|

| 369 |

+

|

| 370 |

+

New tools always require a period of adaptation in the working flow. For instance, lacking—to the best of our knowledge—a monitoring tool equivalent to Nvidia-smi, simple procedures like optimizing batch sizes become troublesome. Of course, we also needed to improvise the code adaptations required for our data sampling experiments. Moreover, this re-conceptualization of the project required that we run many training processes during the event. This is another reason why saving and restoring checkpoints was a must for our success—another reason being our planned switch from 128 to 512 sequence length—. However, such code was not available at the start of the Community Event. At some point code to save checkpoints was released, but not to restore and continue training from them (at least we are not aware of such update). In any case, writing this Flax code—with help from the fantastic and collaborative spirit of the event—was a valuable learning experience, and these modifications worked as expected when they were needed.

|

| 371 |

+

|

| 372 |

+

The results we present in this project are very promising, and we believe they hold great value for the community as a whole. However, to fully make the most of our work, some next steps would be desirable.

|

| 373 |

+

|

| 374 |

+

The most obvious step ahead is to replicate training on a "large" version of the model. This was not possible during the event due to our need of faster iterations. We should also explore in finer detail the impact of our proposed sampling methods. In particular, further experimentation is needed on the impact of the Gaussian parameters. Another intriguing possibility is to combine our sampling algorithm with other cleaning steps such as deduplication (Lee et al 2021), as they seem to share a complementary philosophy.

|

| 375 |

+

|

| 376 |

|

| 377 |

# Conclusions

|

| 378 |

|

| 379 |

+

With roughly 10 days worth of access to 3xTPUv3-8, we have achieved remarkable results surpassing previous state of the art in a few tasks, and even improving document classification on models trained in massive supercomputers with very large—private—and highly-curated datasets.

|

| 380 |

+

|

| 381 |

+

The very big size of the datasets available looked enticing while formulating the project, however, it soon proved to be an important challenge given time constraints. This lead to a debate within the team and ended up reshaping our project and goals, now focusing on analysing this problem and how we could improve this situation for smaller teams like ours in the future. The subsampling techniques analysed in this report have shown great promise in this regard, and we hope to see other groups use them and improve them in the future.

|

| 382 |

|

| 383 |

+

At a personal leve, we agree that the experience has been incredible, and we feel this kind of events provide an amazing opportunity for small teams on low or non-existent budgets to learn how the big players in the field pre-train their models, certainly stirring the research community. The trade-off between learning and experimenting, and being beta-testers of libraries (Flax/JAX) and infrastructure (TPU VMs) is a marginal cost to pay compared to the benefits such access has to offer.

|

| 384 |

|

| 385 |

+

Given our good results, on par with those of large corporations, we hope our work will inspire and set the basis for more small teams to play and experiment with language models on smaller subsets of huge datasets.

|

| 386 |

|

| 387 |

## Team members

|

| 388 |

|

|

|

|

| 405 |

|

| 406 |

## References

|

| 407 |

|

| 408 |

+

- Wenzek et al. CCNet: Extracting High Quality Monolingual Datasets from Web Crawl Data. Proceedings of the 12th Language Resources and Evaluation Conference (LREC), p. 4003-4012, May 2020.

|

| 409 |

+

|

| 410 |

+

- Heafield, K. (2011). KenLM: faster and smaller language model queries. Proceedings of the EMNLP2011 Sixth Workshop on Statistical Machine Translation.

|

| 411 |

+

|

| 412 |

+

- Lee et al. (2021). Deduplicating Training Data Makes Language Models Better.

|

| 413 |

|

| 414 |

+

- Liu et al. (2019). RoBERTa: A Robustly Optimized BERT Pretraining Approach.

|

evaluation/paws.yaml

ADDED

|

@@ -0,0 +1,55 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

name: BERTIN PAWS-X es

|

| 2 |

+

project: bertin-eval

|

| 3 |

+

enitity: versae

|

| 4 |

+

program: run_glue.py

|

| 5 |

+

command:

|

| 6 |

+

- ${env}

|

| 7 |

+

- ${interpreter}

|

| 8 |

+

- ${program}

|

| 9 |

+

- ${args}

|

| 10 |

+

method: grid

|

| 11 |

+

metric:

|

| 12 |

+

name: eval/accuracy

|

| 13 |

+

goal: maximize

|

| 14 |

+

parameters:

|

| 15 |

+

model_name_or_path:

|

| 16 |

+

values:

|

| 17 |

+

- bertin-project/bertin-base-gaussian-exp-512seqlen

|

| 18 |

+

- bertin-project/bertin-base-random-exp-512seqlen

|

| 19 |

+

- bertin-project/bertin-base-gaussian

|

| 20 |

+

- bertin-project/bertin-base-stepwise

|

| 21 |

+

- bertin-project/bertin-base-random

|

| 22 |

+

- bertin-project/bertin-roberta-base-spanish

|

| 23 |

+

- flax-community/bertin-roberta-large-spanish

|

| 24 |

+

- BSC-TeMU/roberta-base-bne

|

| 25 |

+

- dccuchile/bert-base-spanish-wwm-cased

|

| 26 |

+

- bert-base-multilingual-cased

|

| 27 |

+

num_train_epochs:

|

| 28 |

+

values: [5]

|

| 29 |

+

task_name:

|

| 30 |

+

value: paws-x

|

| 31 |

+

dataset_name:

|

| 32 |

+

value: paws-x

|

| 33 |

+

dataset_config_name:

|

| 34 |

+

value: es

|

| 35 |

+

output_dir:

|

| 36 |

+

value: ./outputs

|

| 37 |

+

overwrite_output_dir:

|

| 38 |

+

value: true

|

| 39 |

+

resume_from_checkpoint:

|

| 40 |

+

value: false

|

| 41 |

+

max_seq_length:

|

| 42 |

+

value: 512

|

| 43 |

+

pad_to_max_length:

|

| 44 |

+

value: true

|

| 45 |

+

per_device_train_batch_size:

|

| 46 |

+

value: 16

|

| 47 |

+

per_device_eval_batch_size:

|

| 48 |

+

value: 16

|

| 49 |

+

save_total_limit:

|

| 50 |

+

value: 1

|

| 51 |

+

do_train:

|

| 52 |

+

value: true

|

| 53 |

+

do_eval:

|

| 54 |

+

value: true

|

| 55 |

+

|

evaluation/run_glue.py

ADDED

|

@@ -0,0 +1,576 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

#!/usr/bin/env python

|

| 2 |

+

# coding=utf-8

|

| 3 |

+

# Copyright 2020 The HuggingFace Inc. team. All rights reserved.

|

| 4 |

+

#

|

| 5 |

+

# Licensed under the Apache License, Version 2.0 (the "License");

|

| 6 |

+

# you may not use this file except in compliance with the License.

|

| 7 |

+

# You may obtain a copy of the License at

|

| 8 |

+

#

|

| 9 |

+

# http://www.apache.org/licenses/LICENSE-2.0

|

| 10 |

+

#

|

| 11 |

+

# Unless required by applicable law or agreed to in writing, software

|

| 12 |

+

# distributed under the License is distributed on an "AS IS" BASIS,

|

| 13 |

+

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

| 14 |

+

# See the License for the specific language governing permissions and

|

| 15 |

+

# limitations under the License.

|

| 16 |

+

""" Finetuning the library models for sequence classification on GLUE."""

|

| 17 |

+

# You can also adapt this script on your own text classification task. Pointers for this are left as comments.

|

| 18 |

+

|

| 19 |

+

import logging

|

| 20 |

+

import os

|

| 21 |

+

import random

|

| 22 |

+

import sys

|

| 23 |

+

from dataclasses import dataclass, field

|

| 24 |

+

from pathlib import Path

|

| 25 |

+

from typing import Optional

|

| 26 |

+

|

| 27 |

+

import datasets

|

| 28 |

+

import numpy as np

|

| 29 |

+

from datasets import load_dataset, load_metric

|

| 30 |

+

|

| 31 |

+

import transformers

|

| 32 |

+

from transformers import (

|

| 33 |

+

AutoConfig,

|

| 34 |

+

AutoModelForSequenceClassification,

|

| 35 |

+