First commit

- LICENSE.txt +97 -0

- README.md +63 -0

- demo.ipynb +610 -0

- dnnlib/__init__.py +24 -0

- dnnlib/submission/__init__.py +8 -0

- dnnlib/submission/internal/__init__.py +7 -0

- dnnlib/submission/internal/local.py +22 -0

- dnnlib/submission/run_context.py +110 -0

- dnnlib/submission/submit.py +369 -0

- dnnlib/tflib/__init__.py +20 -0

- dnnlib/tflib/autosummary.py +193 -0

- dnnlib/tflib/custom_ops.py +181 -0

- dnnlib/tflib/network.py +825 -0

- dnnlib/tflib/ops/__init__.py +9 -0

- dnnlib/tflib/ops/fused_bias_act.cu +220 -0

- dnnlib/tflib/ops/fused_bias_act.py +211 -0

- dnnlib/tflib/ops/upfirdn_2d.cu +359 -0

- dnnlib/tflib/ops/upfirdn_2d.py +418 -0

- dnnlib/tflib/optimizer.py +372 -0

- dnnlib/tflib/tfutil.py +264 -0

- dnnlib/util.py +472 -0

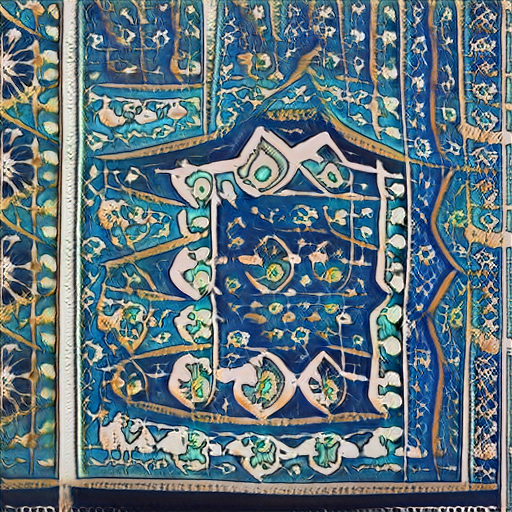

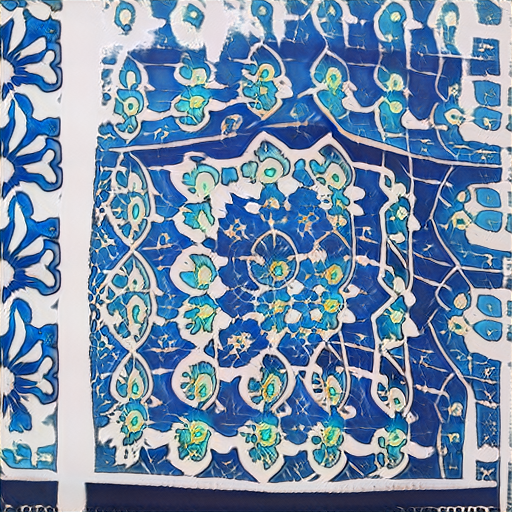

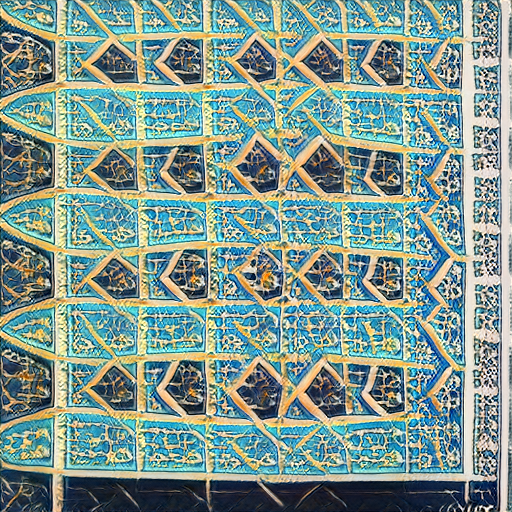

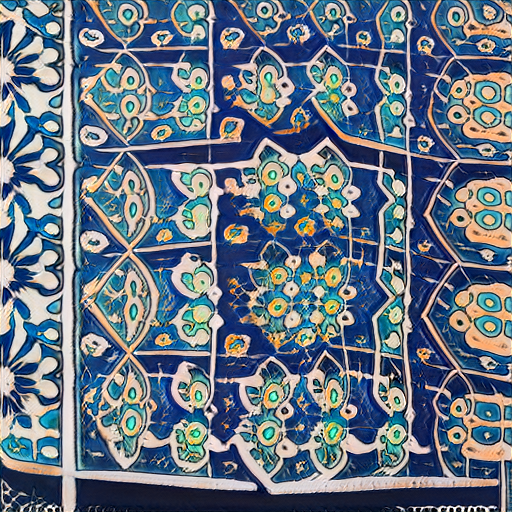

- gallery/gallery.md +15 -0

- gallery/gl-mosaics1.png +3 -0

- gallery/gl-mosaics10.png +3 -0

- gallery/gl-mosaics2.png +3 -0

- gallery/gl-mosaics3.png +3 -0

- gallery/gl-mosaics4.png +3 -0

- gallery/gl-mosaics5.png +3 -0

- gallery/gl-mosaics6.png +3 -0

- gallery/gl-mosaics7.png +3 -0

- gallery/gl-mosaics8.png +3 -0

- gallery/gl-mosaics9.png +3 -0

- generate.py +700 -0

- imgs/calligraphyv2.PNG +3 -0

- imgs/calligraphyv3.png +3 -0

- imgs/calligraphyv4.png +3 -0

- imgs/calligraphyv5.png +3 -0

- imgs/mosaic.png +3 -0

- imgs/mosaicsv2.png +3 -0

- imgs/mosaicsv3.png +3 -0

- imgs/mosaicsv4.png +3 -0

- models.py +142 -0

- rasm.py +146 -0

- requirements.txt +32 -0

- utils.py +165 -0

- video.gif +3 -0

LICENSE.txt

ADDED

@@ -0,0 +1,97 @@

Copyright (c) 2020, NVIDIA Corporation. All rights reserved.

NVIDIA Source Code License for StyleGAN2 with Adaptive Discriminator Augmentation (ADA)

=======================================================================

1. Definitions

"Licensor" means any person or entity that distributes its Work.

"Software" means the original work of authorship made available under
this License.

"Work" means the Software and any additions to or derivative works of
the Software that are made available under this License.

The terms "reproduce," "reproduction," "derivative works," and
"distribution" have the meaning as provided under U.S. copyright law;
provided, however, that for the purposes of this License, derivative
works shall not include works that remain separable from, or merely
link (or bind by name) to the interfaces of, the Work.

Works, including the Software, are "made available" under this License
by including in or with the Work either (a) a copyright notice
referencing the applicability of this License to the Work, or (b) a
copy of this License.

2. License Grants

2.1 Copyright Grant. Subject to the terms and conditions of this
License, each Licensor grants to you a perpetual, worldwide,
non-exclusive, royalty-free, copyright license to reproduce,
prepare derivative works of, publicly display, publicly perform,
sublicense and distribute its Work and any resulting derivative
works in any form.

3. Limitations

3.1 Redistribution. You may reproduce or distribute the Work only
if (a) you do so under this License, (b) you include a complete
copy of this License with your distribution, and (c) you retain
without modification any copyright, patent, trademark, or
attribution notices that are present in the Work.

3.2 Derivative Works. You may specify that additional or different
terms apply to the use, reproduction, and distribution of your
derivative works of the Work ("Your Terms") only if (a) Your Terms
provide that the use limitation in Section 3.3 applies to your
derivative works, and (b) you identify the specific derivative
works that are subject to Your Terms. Notwithstanding Your Terms,
this License (including the redistribution requirements in Section
3.1) will continue to apply to the Work itself.

3.3 Use Limitation. The Work and any derivative works thereof only
may be used or intended for use non-commercially. Notwithstanding
the foregoing, NVIDIA and its affiliates may use the Work and any
derivative works commercially. As used herein, "non-commercially"
means for research or evaluation purposes only.

3.4 Patent Claims. If you bring or threaten to bring a patent claim
against any Licensor (including any claim, cross-claim or
counterclaim in a lawsuit) to enforce any patents that you allege
are infringed by any Work, then your rights under this License from
such Licensor (including the grant in Section 2.1) will terminate
immediately.

3.5 Trademarks. This License does not grant any rights to use any
Licensor’s or its affiliates’ names, logos, or trademarks, except
as necessary to reproduce the notices described in this License.

3.6 Termination. If you violate any term of this License, then your
rights under this License (including the grant in Section 2.1) will
terminate immediately.

4. Disclaimer of Warranty.

THE WORK IS PROVIDED "AS IS" WITHOUT WARRANTIES OR CONDITIONS OF ANY
KIND, EITHER EXPRESS OR IMPLIED, INCLUDING WARRANTIES OR CONDITIONS OF
MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE, TITLE OR
NON-INFRINGEMENT. YOU BEAR THE RISK OF UNDERTAKING ANY ACTIVITIES UNDER
THIS LICENSE.

5. Limitation of Liability.

EXCEPT AS PROHIBITED BY APPLICABLE LAW, IN NO EVENT AND UNDER NO LEGAL
THEORY, WHETHER IN TORT (INCLUDING NEGLIGENCE), CONTRACT, OR OTHERWISE
SHALL ANY LICENSOR BE LIABLE TO YOU FOR DAMAGES, INCLUDING ANY DIRECT,
INDIRECT, SPECIAL, INCIDENTAL, OR CONSEQUENTIAL DAMAGES ARISING OUT OF
OR RELATED TO THIS LICENSE, THE USE OR INABILITY TO USE THE WORK
(INCLUDING BUT NOT LIMITED TO LOSS OF GOODWILL, BUSINESS INTERRUPTION,
LOST PROFITS OR DATA, COMPUTER FAILURE OR MALFUNCTION, OR ANY OTHER
COMMERCIAL DAMAGES OR LOSSES), EVEN IF THE LICENSOR HAS BEEN ADVISED OF
THE POSSIBILITY OF SUCH DAMAGES.

=======================================================================

README.md

ADDED

@@ -0,0 +1,63 @@

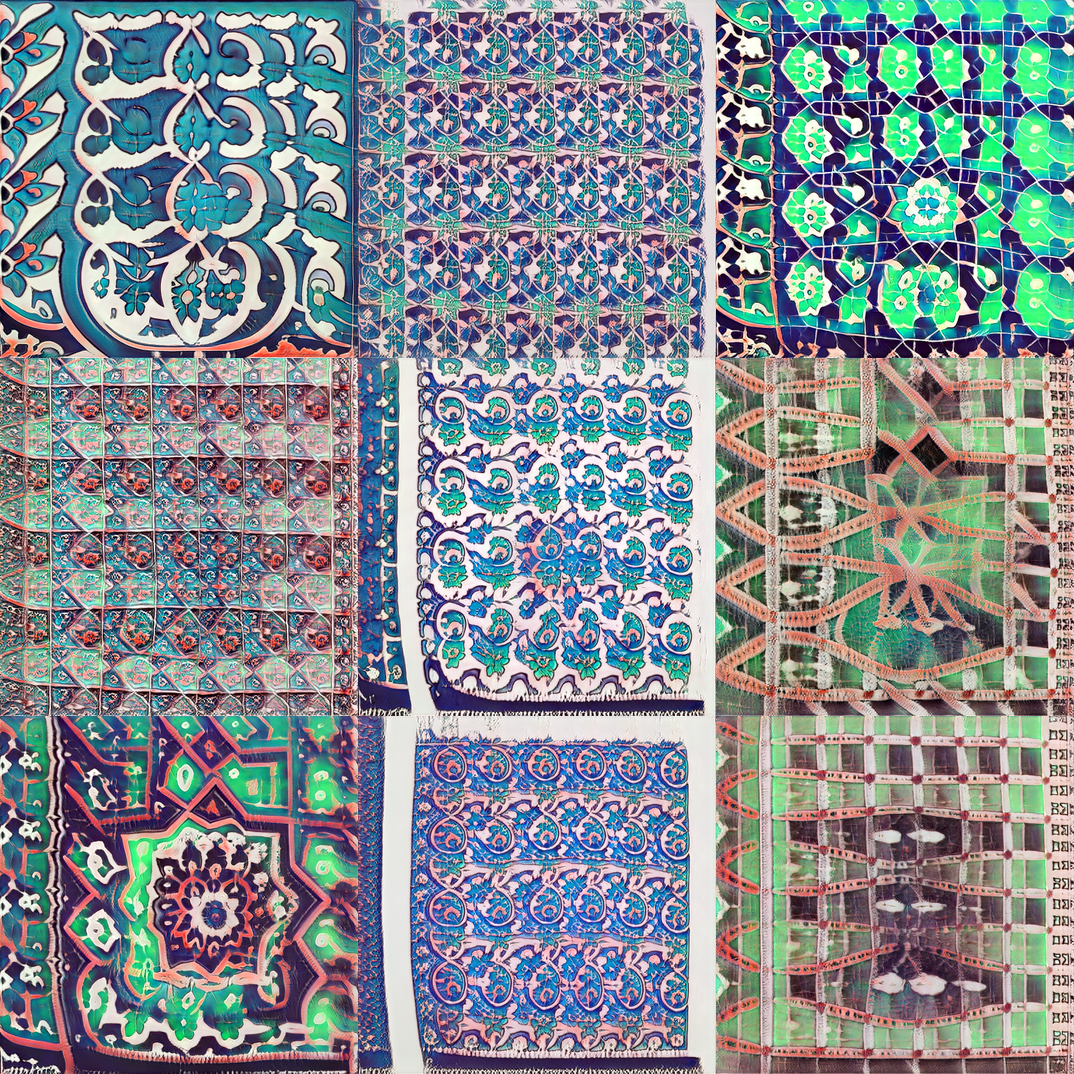

## rasm
Arabic art using GANs. We currently have two models for generating calligraphy and mosaics.

## Notebooks

<table class="tg">
<tr>
<th class="tg-yw4l"><b>Name</b></th>
<th class="tg-yw4l"><b>Notebook</b></th>
</tr>
<tr>
<td class="tg-yw4l">Visualization</td>
<td class="tg-yw4l"><a href="https://colab.research.google.com/github/ARBML/rasm/blob/master/demo.ipynb">
<img src="https://colab.research.google.com/assets/colab-badge.svg" width = '100px' >
</a></td>
</tr>
</table>

## Visualization
A set of functions for vis, interpolation and animation. Mostly tested in colab notebooks.

### Load Model
```python
from rasm import Rasm
model = Rasm(mode = 'calligraphy')
model = Rasm(mode = 'mosaics')
```

### Generate random
```python
model.generate_randomly()
```

### Generate grid
```python
model.generate_grid()
```

### Generate animation
```python
model.generate_animation(size = 2, steps = 20)
```
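The README mentions interpolation and animation without showing how intermediate frames might be derived. As a rough sketch only, and not the actual `generate_animation` implementation, the idea of stepping between two latent vectors can be illustrated with plain linear interpolation. The helper name `interpolate_latents`, the 512-dimensional latent size, and the use of Python's `random` module are assumptions made for illustration; none of them come from the repository.

```python
import random


def interpolate_latents(z_start, z_end, steps):
    """Return `steps` vectors evenly spaced on the line from z_start to z_end.

    Hypothetical helper: it only illustrates linear interpolation and is
    not taken from rasm.py.
    """
    if steps < 2:
        raise ValueError("steps must be at least 2")
    if len(z_start) != len(z_end):
        raise ValueError("latent vectors must have the same length")
    frames = []
    for i in range(steps):
        t = i / (steps - 1)  # t runs from 0.0 (start) to 1.0 (end)
        frames.append([(1 - t) * a + t * b for a, b in zip(z_start, z_end)])
    return frames


# Assumed latent size of 512; the real models may use a different size.
random.seed(0)
z_a = [random.gauss(0.0, 1.0) for _ in range(512)]
z_b = [random.gauss(0.0, 1.0) for _ in range(512)]

frames = interpolate_latents(z_a, z_b, steps=20)
print(len(frames), len(frames[0]))  # 20 512
```

In a setup like this, each interpolated vector would be passed to the generator to render one frame, so `steps = 20` would produce 20 frames between the two endpoints.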

## Sample Models

### Mosaics

### Calligraphy

## References
- Gan-surgery: https://github.com/aydao/stylegan2-surgery
- WikiArt model: https://github.com/pbaylies/stylegan2
- Starter-Notebook: https://github.com/Hephyrius/Stylegan2-Ada-Google-Colab-Starter-Notebook/

demo.ipynb

ADDED

@@ -0,0 +1,610 @@

| 1 |

+

{

|

| 2 |

+

"nbformat": 4,

|

| 3 |

+

"nbformat_minor": 0,

|

| 4 |

+

"metadata": {

|

| 5 |

+

"colab": {

|

| 6 |

+

"name": "SGAN Vis.ipynb",

|

| 7 |

+

"provenance": [],

|

| 8 |

+

"machine_shape": "hm"

|

| 9 |

+

},

|

| 10 |

+

"kernelspec": {

|

| 11 |

+

"name": "python3",

|

| 12 |

+

"display_name": "Python 3"

|

| 13 |

+

},

|

| 14 |

+

"accelerator": "GPU",

|

| 15 |

+

"widgets": {

|

| 16 |

+

"application/vnd.jupyter.widget-state+json": {

|

| 17 |

+

"edfc5ae9a3924ee6a04811ab3dec1656": {

|

| 18 |

+

"model_module": "@jupyter-widgets/controls",

|

| 19 |

+

"model_name": "VBoxModel",

|

| 20 |

+

"state": {

|

| 21 |

+

"_view_name": "VBoxView",

|

| 22 |

+

"_dom_classes": [],

|

| 23 |

+

"_model_name": "VBoxModel",

|

| 24 |

+

"_view_module": "@jupyter-widgets/controls",

|

| 25 |

+

"_model_module_version": "1.5.0",

|

| 26 |

+

"_view_count": null,

|

| 27 |

+

"_view_module_version": "1.5.0",

|

| 28 |

+

"box_style": "",

|

| 29 |

+

"layout": "IPY_MODEL_86ea189295a54dd0aa2a771a95b12f00",

|

| 30 |

+

"_model_module": "@jupyter-widgets/controls",

|

| 31 |

+

"children": [

|

| 32 |

+

"IPY_MODEL_61e1782fb995412fbef8e59c570e8823",

|

| 33 |

+

"IPY_MODEL_a03932d2929c4225b7735d0831a18566"

|

| 34 |

+

]

|

| 35 |

+

}

|

| 36 |

+

},

|

| 37 |

+

"86ea189295a54dd0aa2a771a95b12f00": {

|

| 38 |

+

"model_module": "@jupyter-widgets/base",

|

| 39 |

+

"model_name": "LayoutModel",

|

| 40 |

+

"state": {

|

| 41 |

+

"_view_name": "LayoutView",

|

| 42 |

+

"grid_template_rows": null,

|

| 43 |

+

"right": null,

|

| 44 |

+

"justify_content": null,

|

| 45 |

+

"_view_module": "@jupyter-widgets/base",

|

| 46 |

+

"overflow": null,

|

| 47 |

+

"_model_module_version": "1.2.0",

|

| 48 |

+

"_view_count": null,

|

| 49 |

+

"flex_flow": null,

|

| 50 |

+

"width": null,

|

| 51 |

+

"min_width": null,

|

| 52 |

+

"border": null,

|

| 53 |

+

"align_items": null,

|

| 54 |

+

"bottom": null,

|

| 55 |

+

"_model_module": "@jupyter-widgets/base",

|

| 56 |

+

"top": null,

|

| 57 |

+

"grid_column": null,

|

| 58 |

+

"overflow_y": null,

|

| 59 |

+

"overflow_x": null,

|

| 60 |

+

"grid_auto_flow": null,

|

| 61 |

+

"grid_area": null,

|

| 62 |

+

"grid_template_columns": null,

|

| 63 |

+

"flex": null,

|

| 64 |

+

"_model_name": "LayoutModel",

|

| 65 |

+

"justify_items": null,

|

| 66 |

+

"grid_row": null,

|

| 67 |

+

"max_height": null,

|

| 68 |

+

"align_content": null,

|

| 69 |

+

"visibility": null,

|

| 70 |

+

"align_self": null,

|

| 71 |

+

"height": null,

|

| 72 |

+

"min_height": null,

|

| 73 |

+

"padding": null,

|

| 74 |

+

"grid_auto_rows": null,

|

| 75 |

+

"grid_gap": null,

|

| 76 |

+

"max_width": null,

|

| 77 |

+

"order": null,

|

| 78 |

+

"_view_module_version": "1.2.0",

|

| 79 |

+

"grid_template_areas": null,

|

| 80 |

+

"object_position": null,

|

| 81 |

+

"object_fit": null,

|

| 82 |

+

"grid_auto_columns": null,

|

| 83 |

+

"margin": null,

|

| 84 |

+

"display": null,

|

| 85 |

+

"left": null

|

| 86 |

+

}

|

| 87 |

+

},

|

| 88 |

+

"61e1782fb995412fbef8e59c570e8823": {

|

| 89 |

+

"model_module": "@jupyter-widgets/controls",

|

| 90 |

+

"model_name": "HTMLModel",

|

| 91 |

+

"state": {

|

| 92 |

+

"_view_name": "HTMLView",

|

| 93 |

+

"style": "IPY_MODEL_c5b6fad0fd114c90ac0f23d91546846f",

|

| 94 |

+

"_dom_classes": [],

|

| 95 |

+

"description": "",

|

| 96 |

+

"_model_name": "HTMLModel",

|

| 97 |

+

"placeholder": "",

|

| 98 |

+

"_view_module": "@jupyter-widgets/controls",

|

| 99 |

+

"_model_module_version": "1.5.0",

|

| 100 |

+

"value": "Generating images: 1",

|

| 101 |

+

"_view_count": null,

|

| 102 |

+

"_view_module_version": "1.5.0",

|

| 103 |

+

"description_tooltip": null,

|

| 104 |

+

"_model_module": "@jupyter-widgets/controls",

|

| 105 |

+

"layout": "IPY_MODEL_7dea244035a34d0f8658e75a12b5cd89"

|

| 106 |

+

}

|

| 107 |

+

},

|

| 108 |

+

"a03932d2929c4225b7735d0831a18566": {

|

| 109 |

+

"model_module": "@jupyter-widgets/controls",

|

| 110 |

+

"model_name": "IntProgressModel",

|

| 111 |

+

"state": {

|

| 112 |

+

"_view_name": "ProgressView",

|

| 113 |

+

"style": "IPY_MODEL_13d6a5cf5103499ebfb40e2e9c520d27",

|

| 114 |

+

"_dom_classes": [],

|

| 115 |

+

"description": "",

|

| 116 |

+

"_model_name": "IntProgressModel",

|

| 117 |

+

"bar_style": "success",

|

| 118 |

+

"max": 1,

|

| 119 |

+

"_view_module": "@jupyter-widgets/controls",

|

| 120 |

+

"_model_module_version": "1.5.0",

|

| 121 |

+

"value": 1,

|

| 122 |

+

"_view_count": null,

|

| 123 |

+

"_view_module_version": "1.5.0",

|

| 124 |

+

"orientation": "horizontal",

|

| 125 |

+

"min": 0,

|

| 126 |

+

"description_tooltip": null,

|

| 127 |

+

"_model_module": "@jupyter-widgets/controls",

|

| 128 |

+

"layout": "IPY_MODEL_653de804a191499ca46a8eb6b91e7a6b"

|

| 129 |

+

}

|

| 130 |

+

},

|

| 131 |

+

"c5b6fad0fd114c90ac0f23d91546846f": {

|

| 132 |

+

"model_module": "@jupyter-widgets/controls",

|

| 133 |

+

"model_name": "DescriptionStyleModel",

|

| 134 |

+

"state": {

|

| 135 |

+

"_view_name": "StyleView",

|

| 136 |

+

"_model_name": "DescriptionStyleModel",

|

| 137 |

+

"description_width": "",

|

| 138 |

+

"_view_module": "@jupyter-widgets/base",

|

| 139 |

+

"_model_module_version": "1.5.0",

|

| 140 |

+

"_view_count": null,

|

| 141 |

+

"_view_module_version": "1.2.0",

|

| 142 |

+

"_model_module": "@jupyter-widgets/controls"

|

| 143 |

+

}

|

| 144 |

+

},

|

| 145 |

+

"7dea244035a34d0f8658e75a12b5cd89": {

|

| 146 |

+

"model_module": "@jupyter-widgets/base",

|

| 147 |

+

"model_name": "LayoutModel",

|

| 148 |

+

"state": {

|

| 149 |

+

"_view_name": "LayoutView",

|

| 150 |

+

"grid_template_rows": null,

|

| 151 |

+

"right": null,

|

| 152 |

+

"justify_content": null,

|

| 153 |

+

"_view_module": "@jupyter-widgets/base",

|

| 154 |

+

"overflow": null,

|

| 155 |

+

"_model_module_version": "1.2.0",

|

| 156 |

+

"_view_count": null,

|

| 157 |

+

"flex_flow": null,

|

| 158 |

+

"width": null,

|

| 159 |

+

"min_width": null,

|

| 160 |

+

"border": null,

|

| 161 |

+

"align_items": null,

|

| 162 |

+

"bottom": null,

|

| 163 |

+

"_model_module": "@jupyter-widgets/base",

|

| 164 |

+

"top": null,

|

| 165 |

+

"grid_column": null,

|

| 166 |

+

"overflow_y": null,

|

| 167 |

+

"overflow_x": null,

|

| 168 |

+

"grid_auto_flow": null,

|

| 169 |

+

"grid_area": null,

|

| 170 |

+

"grid_template_columns": null,

|

| 171 |

+

"flex": null,

|

| 172 |

+

"_model_name": "LayoutModel",

|

| 173 |

+

"justify_items": null,

|

| 174 |

+

"grid_row": null,

|

| 175 |

+

"max_height": null,

|

| 176 |

+

"align_content": null,

|

| 177 |

+

"visibility": null,

|

| 178 |

+

"align_self": null,

|

| 179 |

+

"height": null,

|

| 180 |

+

"min_height": null,

|

| 181 |

+

"padding": null,

|

| 182 |

+

"grid_auto_rows": null,

|

| 183 |

+

"grid_gap": null,

|

| 184 |

+

"max_width": null,

|

| 185 |

+

"order": null,

|

| 186 |

+

"_view_module_version": "1.2.0",

|

| 187 |

+

"grid_template_areas": null,

|

| 188 |

+

"object_position": null,

|

| 189 |

+

"object_fit": null,

|

| 190 |

+

"grid_auto_columns": null,

|

| 191 |

+

"margin": null,

|

| 192 |

+

"display": null,

|

| 193 |

+

"left": null

|

| 194 |

+

}

|

| 195 |

+

},

|

| 196 |

+

"13d6a5cf5103499ebfb40e2e9c520d27": {

|

| 197 |

+

"model_module": "@jupyter-widgets/controls",

|

| 198 |

+

"model_name": "ProgressStyleModel",

|

| 199 |

+

"state": {

|

| 200 |

+

"_view_name": "StyleView",

|

| 201 |

+

"_model_name": "ProgressStyleModel",

|

| 202 |

+

"description_width": "",

|

| 203 |

+

"_view_module": "@jupyter-widgets/base",

|

| 204 |

+

"_model_module_version": "1.5.0",

|

| 205 |

+

"_view_count": null,

|

| 206 |

+

"_view_module_version": "1.2.0",

|

| 207 |

+

"bar_color": null,

|

| 208 |

+

"_model_module": "@jupyter-widgets/controls"

|

| 209 |

+

}

|

| 210 |

+

},

|

| 211 |

+

"653de804a191499ca46a8eb6b91e7a6b": {

|

| 212 |

+

"model_module": "@jupyter-widgets/base",

|

| 213 |

+

"model_name": "LayoutModel",

|

| 214 |

+

"state": {

|

| 215 |

+

"_view_name": "LayoutView",

|

| 216 |

+

"grid_template_rows": null,

|

| 217 |

+

"right": null,

|

| 218 |

+

"justify_content": null,

|

| 219 |

+

"_view_module": "@jupyter-widgets/base",

|

| 220 |

+

"overflow": null,

|

| 221 |

+

"_model_module_version": "1.2.0",

|

| 222 |

+

"_view_count": null,

|

| 223 |

+

"flex_flow": null,

|

| 224 |

+

"width": null,

|

| 225 |

+

"min_width": null,

|

| 226 |

+

"border": null,

|

| 227 |

+

"align_items": null,

|

| 228 |

+

"bottom": null,

|

| 229 |

+

"_model_module": "@jupyter-widgets/base",

|

| 230 |

+

"top": null,

|

| 231 |

+

"grid_column": null,

|

| 232 |

+

"overflow_y": null,

|

| 233 |

+

"overflow_x": null,

|

| 234 |

+

"grid_auto_flow": null,

|

| 235 |

+

"grid_area": null,

|

| 236 |

+

"grid_template_columns": null,

|

| 237 |

+

"flex": null,

|

| 238 |

+

"_model_name": "LayoutModel",

|

| 239 |

+

"justify_items": null,

|

| 240 |

+

"grid_row": null,

|

| 241 |

+

"max_height": null,

|

| 242 |

+

"align_content": null,

|

| 243 |

+

"visibility": null,

|

| 244 |

+

"align_self": null,

|

| 245 |

+

"height": null,

|

| 246 |

+

"min_height": null,

|

| 247 |

+

"padding": null,

|

| 248 |

+

"grid_auto_rows": null,

|

| 249 |

+

"grid_gap": null,

|

| 250 |

+

"max_width": null,

|

| 251 |

+

"order": null,

|

| 252 |

+

"_view_module_version": "1.2.0",

|

| 253 |

+

"grid_template_areas": null,

|

| 254 |

+

"object_position": null,

|

| 255 |

+

"object_fit": null,

|

| 256 |

+

"grid_auto_columns": null,

|

| 257 |

+

"margin": null,

|

| 258 |

+

"display": null,

|

| 259 |

+

"left": null

|

| 260 |

+

}

|

| 261 |

+

},

|

| 262 |

+

"ee14e110bec24331816d2243cdde5e37": {

|

| 263 |

+

"model_module": "@jupyter-widgets/controls",

|

| 264 |

+

"model_name": "VBoxModel",

|

| 265 |

+

"state": {

|

| 266 |

+

"_view_name": "VBoxView",

|

| 267 |

+

"_dom_classes": [],

|

| 268 |

+

"_model_name": "VBoxModel",

|

| 269 |

+

"_view_module": "@jupyter-widgets/controls",

|

| 270 |

+

"_model_module_version": "1.5.0",

|

| 271 |

+

"_view_count": null,

|

| 272 |

+

"_view_module_version": "1.5.0",

|

| 273 |

+

"box_style": "",

|

| 274 |

+

"layout": "IPY_MODEL_c3fbec3f54b54293a667dfe43c5aed56",

|

| 275 |

+

"_model_module": "@jupyter-widgets/controls",

|

| 276 |

+

"children": [

|

| 277 |

+

"IPY_MODEL_93630fd11e7f47fa9ef23b1057ec1dd9",

|

| 278 |

+

"IPY_MODEL_aa9410600ce04b5592f4d2a7b4531656"

|

| 279 |

+

]

|

| 280 |

+

}

|

| 281 |

+

},

|

| 282 |

+

"c3fbec3f54b54293a667dfe43c5aed56": {

|

| 283 |

+

"model_module": "@jupyter-widgets/base",

|

| 284 |

+

"model_name": "LayoutModel",

|

| 285 |

+

"state": {

|

| 286 |

+

"_view_name": "LayoutView",

|

| 287 |

+

"grid_template_rows": null,

|

| 288 |

+

"right": null,

|

| 289 |

+

"justify_content": null,

|

| 290 |

+

"_view_module": "@jupyter-widgets/base",

|

| 291 |

+

"overflow": null,

|

| 292 |

+

"_model_module_version": "1.2.0",

|

| 293 |

+

"_view_count": null,

|

| 294 |

+

"flex_flow": null,

|

| 295 |

+

"width": null,

|

| 296 |

+

"min_width": null,

|

| 297 |

+

"border": null,

|

| 298 |

+

"align_items": null,

|

| 299 |

+

"bottom": null,

|

| 300 |

+

"_model_module": "@jupyter-widgets/base",

|

| 301 |

+

"top": null,

|

| 302 |

+

"grid_column": null,

|

| 303 |

+

"overflow_y": null,

|

| 304 |

+

"overflow_x": null,

|

| 305 |

+

"grid_auto_flow": null,

|

| 306 |

+

"grid_area": null,

|

| 307 |

+

"grid_template_columns": null,

|

| 308 |

+

"flex": null,

|

| 309 |

+

"_model_name": "LayoutModel",

|

| 310 |

+

"justify_items": null,

|

| 311 |

+

"grid_row": null,

|

| 312 |

+

"max_height": null,

|

| 313 |

+

"align_content": null,

|

| 314 |

+

"visibility": null,

|

| 315 |

+

"align_self": null,

|

| 316 |

+

"height": null,

|

| 317 |

+

"min_height": null,

|

| 318 |

+

"padding": null,

|

| 319 |

+

"grid_auto_rows": null,

|

| 320 |

+

"grid_gap": null,

|

| 321 |

+

"max_width": null,

|

| 322 |

+

"order": null,

|

| 323 |

+

"_view_module_version": "1.2.0",

|

| 324 |

+

"grid_template_areas": null,

|

| 325 |

+

"object_position": null,

|

| 326 |

+

"object_fit": null,

|

| 327 |

+

"grid_auto_columns": null,

|

| 328 |

+

"margin": null,

|

| 329 |

+

"display": null,

|

| 330 |

+

"left": null

|

| 331 |

+

}

|

| 332 |

+

},

|

| 333 |

+

"93630fd11e7f47fa9ef23b1057ec1dd9": {

|

| 334 |

+

"model_module": "@jupyter-widgets/controls",

|

| 335 |

+

"model_name": "HTMLModel",

|

| 336 |

+

"state": {

|

| 337 |

+

"_view_name": "HTMLView",

|

| 338 |

+

"style": "IPY_MODEL_af65853e39c04163a6173f438cc0ae71",

|

| 339 |

+

"_dom_classes": [],

|

| 340 |

+

"description": "",

|

| 341 |

+

"_model_name": "HTMLModel",

|

| 342 |

+

"placeholder": "",

|

| 343 |

+

"_view_module": "@jupyter-widgets/controls",

|

| 344 |

+

"_model_module_version": "1.5.0",

|

| 345 |

+

"value": "Generating images: 9",

|

| 346 |

+

"_view_count": null,

|

| 347 |

+

"_view_module_version": "1.5.0",

|

| 348 |

+

"description_tooltip": null,

|

| 349 |

+

"_model_module": "@jupyter-widgets/controls",

|

| 350 |

+

"layout": "IPY_MODEL_2bb901e39be5406bb1f495030180732b"

|

| 351 |

+

}

|

| 352 |

+

},

|

| 353 |

+

"aa9410600ce04b5592f4d2a7b4531656": {

|

| 354 |

+

"model_module": "@jupyter-widgets/controls",

|

| 355 |

+

"model_name": "IntProgressModel",

|

| 356 |

+

"state": {

|

| 357 |

+

"_view_name": "ProgressView",

|

| 358 |

+

"style": "IPY_MODEL_f2b4a35ed75343aab26390a8c26b6e0f",

|

| 359 |

+

"_dom_classes": [],

|

| 360 |

+

"description": "",

|

| 361 |

+

"_model_name": "IntProgressModel",

|

| 362 |

+

"bar_style": "success",

|

| 363 |

+

"max": 9,

|

| 364 |

+

"_view_module": "@jupyter-widgets/controls",

|

| 365 |

+

"_model_module_version": "1.5.0",

|

| 366 |

+

"value": 9,

|

| 367 |

+

"_view_count": null,

|

| 368 |

+

"_view_module_version": "1.5.0",

|

| 369 |

+

"orientation": "horizontal",

|

| 370 |

+

"min": 0,

|

| 371 |

+

"description_tooltip": null,

|

| 372 |

+

"_model_module": "@jupyter-widgets/controls",

|

| 373 |

+

"layout": "IPY_MODEL_df1e1c500f9c42e09fd96d7cb6cca316"

|

| 374 |

+

}

|

| 375 |

+

},

|

+        "af65853e39c04163a6173f438cc0ae71": {
+          "model_module": "@jupyter-widgets/controls",
+          "model_name": "DescriptionStyleModel",
+          "state": {
+            "_view_name": "StyleView",
+            "_model_name": "DescriptionStyleModel",
+            "description_width": "",
+            "_view_module": "@jupyter-widgets/base",
+            "_model_module_version": "1.5.0",
+            "_view_count": null,
+            "_view_module_version": "1.2.0",
+            "_model_module": "@jupyter-widgets/controls"
+          }
+        },
+        "2bb901e39be5406bb1f495030180732b": {
+          "model_module": "@jupyter-widgets/base",
+          "model_name": "LayoutModel",
+          "state": {
+            "_view_name": "LayoutView",
+            "grid_template_rows": null,
+            "right": null,
+            "justify_content": null,
+            "_view_module": "@jupyter-widgets/base",
+            "overflow": null,
+            "_model_module_version": "1.2.0",
+            "_view_count": null,
+            "flex_flow": null,
+            "width": null,
+            "min_width": null,
+            "border": null,
+            "align_items": null,
+            "bottom": null,
+            "_model_module": "@jupyter-widgets/base",
+            "top": null,
+            "grid_column": null,
+            "overflow_y": null,
+            "overflow_x": null,
+            "grid_auto_flow": null,
+            "grid_area": null,
+            "grid_template_columns": null,
+            "flex": null,
+            "_model_name": "LayoutModel",
+            "justify_items": null,
+            "grid_row": null,
+            "max_height": null,
+            "align_content": null,
+            "visibility": null,
+            "align_self": null,
+            "height": null,
+            "min_height": null,
+            "padding": null,
+            "grid_auto_rows": null,
+            "grid_gap": null,
+            "max_width": null,
+            "order": null,
+            "_view_module_version": "1.2.0",
+            "grid_template_areas": null,
+            "object_position": null,
+            "object_fit": null,
+            "grid_auto_columns": null,
+            "margin": null,
+            "display": null,
+            "left": null
+          }
+        },
+        "f2b4a35ed75343aab26390a8c26b6e0f": {
+          "model_module": "@jupyter-widgets/controls",
+          "model_name": "ProgressStyleModel",
+          "state": {
+            "_view_name": "StyleView",
+            "_model_name": "ProgressStyleModel",
+            "description_width": "",
+            "_view_module": "@jupyter-widgets/base",
+            "_model_module_version": "1.5.0",
+            "_view_count": null,
+            "_view_module_version": "1.2.0",
+            "bar_color": null,
+            "_model_module": "@jupyter-widgets/controls"
+          }
+        },
+        "df1e1c500f9c42e09fd96d7cb6cca316": {
+          "model_module": "@jupyter-widgets/base",
+          "model_name": "LayoutModel",
+          "state": {
+            "_view_name": "LayoutView",
+            "grid_template_rows": null,
+            "right": null,
+            "justify_content": null,
+            "_view_module": "@jupyter-widgets/base",
+            "overflow": null,
+            "_model_module_version": "1.2.0",
+            "_view_count": null,
+            "flex_flow": null,
+            "width": null,
+            "min_width": null,
+            "border": null,
+            "align_items": null,
+            "bottom": null,
+            "_model_module": "@jupyter-widgets/base",
+            "top": null,
+            "grid_column": null,
+            "overflow_y": null,
+            "overflow_x": null,
+            "grid_auto_flow": null,
+            "grid_area": null,
+            "grid_template_columns": null,
+            "flex": null,
+            "_model_name": "LayoutModel",
+            "justify_items": null,
+            "grid_row": null,
+            "max_height": null,
+            "align_content": null,
+            "visibility": null,
+            "align_self": null,
+            "height": null,
+            "min_height": null,
+            "padding": null,
+            "grid_auto_rows": null,
+            "grid_gap": null,
+            "max_width": null,
+            "order": null,
+            "_view_module_version": "1.2.0",
+            "grid_template_areas": null,
+            "object_position": null,
+            "object_fit": null,
+            "grid_auto_columns": null,
+            "margin": null,
+            "display": null,
+            "left": null
+          }
+        }
+      }
+    }
+  },
+  "cells": [
+    {
+      "cell_type": "code",
+      "metadata": {
+        "id": "QvYDzQccgMg_"
+      },
+      "source": [
+        "%tensorflow_version 1.x\r\n",
+        "import tensorflow as tf"
+      ],
+      "execution_count": null,
+      "outputs": []
+    },
+    {
+      "cell_type": "code",
+      "metadata": {
+        "colab": {
+          "base_uri": "https://localhost:8080/"
+        },
+        "id": "y8VaukPJgclY",
+        "outputId": "56ac601b-2cba-427e-c9bb-860d583c1cf6"
+      },
+      "source": [
+        "%cd /content\n",
+        "!rm -rf /content/rasm\n",
+        "!git clone https://github.com/ARBML/rasm\n",
+        "%cd rasm"
+      ],
+      "execution_count": null,
+      "outputs": []
+    },
+    {
+      "cell_type": "code",
+      "metadata": {
+        "colab": {
+          "base_uri": "https://localhost:8080/"
+        },
+        "id": "BPyug4mhnEEz",
+        "outputId": "ad0b269c-23f8-4376-8830-9f9d0541b6c8"
+      },
+      "source": [
+        "from rasm import Rasm\n",
+        "model = Rasm(mode = 'calligraphy')"
+      ],
+      "execution_count": null,
+      "outputs": []
+    },
+    {
+      "cell_type": "code",
+      "metadata": {
+        "colab": {
+          "base_uri": "https://localhost:8080/",
+          "height": 919,
+          "referenced_widgets": [
+            "edfc5ae9a3924ee6a04811ab3dec1656",
+            "86ea189295a54dd0aa2a771a95b12f00",
+            "61e1782fb995412fbef8e59c570e8823",
+            "a03932d2929c4225b7735d0831a18566",
+            "c5b6fad0fd114c90ac0f23d91546846f",
+            "7dea244035a34d0f8658e75a12b5cd89",
+            "13d6a5cf5103499ebfb40e2e9c520d27",
+            "653de804a191499ca46a8eb6b91e7a6b"
+          ]
+        },
+        "id": "e5mniebmwJiy",
+        "outputId": "cf409647-73e5-479e-d711-c3f914721ee5"
+      },
+      "source": [
+        "model.generate_randomly()"
+      ],
+      "execution_count": null,
+      "outputs": []
+    },
+    {
+      "cell_type": "code",
+      "metadata": {
+        "colab": {
+          "base_uri": "https://localhost:8080/",
+          "height": 919,
+          "referenced_widgets": [
+            "ee14e110bec24331816d2243cdde5e37",
+            "c3fbec3f54b54293a667dfe43c5aed56",
+            "93630fd11e7f47fa9ef23b1057ec1dd9",
+            "aa9410600ce04b5592f4d2a7b4531656",
+            "af65853e39c04163a6173f438cc0ae71",
+            "2bb901e39be5406bb1f495030180732b",
+            "f2b4a35ed75343aab26390a8c26b6e0f",
+            "df1e1c500f9c42e09fd96d7cb6cca316"
+          ]
+        },
+        "id": "F2RGfy_9wRFS",
+        "outputId": "2b5fa5af-401f-44b0-f2a6-13da08507396"
+      },
+      "source": [
+        "model.generate_grid()"
+      ],
+      "execution_count": null,
+      "outputs": []
+    }
+  ]
+}

dnnlib/__init__.py

ADDED

@@ -0,0 +1,24 @@
+# Copyright (c) 2020, NVIDIA CORPORATION. All rights reserved.
+#
+# NVIDIA CORPORATION and its licensors retain all intellectual property
+# and proprietary rights in and to this software, related documentation
+# and any modifications thereto. Any use, reproduction, disclosure or
+# distribution of this software and related documentation without an express
+# license agreement from NVIDIA CORPORATION is strictly prohibited.
+
+from . import submission
+
+from .submission.run_context import RunContext
+
+from .submission.submit import SubmitTarget
+from .submission.submit import PathType
+from .submission.submit import SubmitConfig
+from .submission.submit import submit_run
+from .submission.submit import submit_diagnostic
+from .submission.submit import get_path_from_template
+from .submission.submit import convert_path
+from .submission.submit import make_run_dir_path
+
+from .util import EasyDict
+
+submit_config: SubmitConfig = None # Package level variable for SubmitConfig which is only valid when inside the run function.
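
The comment on `submit_config` describes a package-level variable that is only meaningful while a run function executes. A minimal sketch of that pattern, assuming a hypothetical helper `run_with_config` and `SimpleNamespace` objects standing in for the package and for `SubmitConfig`; none of these names come from dnnlib, and the actual logic in `submit.py` may differ:

```python
from types import SimpleNamespace

# Hypothetical stand-in for the dnnlib package namespace.
package = SimpleNamespace(submit_config=None)

def run_with_config(config, run_func):
    """Expose `config` package-wide only while `run_func` executes."""
    package.submit_config = config
    try:
        return run_func()
    finally:
        # Outside the run function the variable is no longer valid.
        package.submit_config = None

def example_run():
    # Inside the run function the package-level variable is populated.
    return package.submit_config.run_dir

config = SimpleNamespace(run_dir="results/00000-example")
result = run_with_config(config, example_run)
print(result)
print(package.submit_config)
```

Resetting the variable in a `finally` clause keeps stale configuration from leaking into later code even if the run function raises.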

dnnlib/submission/__init__.py

ADDED

@@ -0,0 +1,8 @@
+# Copyright (c) 2019, NVIDIA Corporation. All rights reserved.
+#
+# This work is made available under the Nvidia Source Code License-NC.
+# To view a copy of this license, visit
+# https://nvlabs.github.io/stylegan2/license.html
+
+from . import run_context
+from . import submit

dnnlib/submission/internal/__init__.py

ADDED

@@ -0,0 +1,7 @@
+# Copyright (c) 2019, NVIDIA Corporation. All rights reserved.
+#
+# This work is made available under the Nvidia Source Code License-NC.
+# To view a copy of this license, visit
+# https://nvlabs.github.io/stylegan2/license.html
+
+from . import local

dnnlib/submission/internal/local.py

ADDED

@@ -0,0 +1,22 @@
+# Copyright (c) 2019, NVIDIA Corporation. All rights reserved.
+#
+# This work is made available under the Nvidia Source Code License-NC.
+# To view a copy of this license, visit
+# https://nvlabs.github.io/stylegan2/license.html
+
+class TargetOptions():
+    def __init__(self):
+        self.do_not_copy_source_files = False
+
+class Target():
+    def __init__(self):
+        pass
+
+    def finalize_submit_config(self, submit_config, host_run_dir):
+        # print ('Local submit ', end='', flush=True)
+        submit_config.run_dir = host_run_dir
+
+    def submit(self, submit_config, host_run_dir):
+        from ..submit import run_wrapper, convert_path
+        # print('- run_dir: %s' % convert_path(submit_config.run_dir), flush=True)
+        return run_wrapper(submit_config)
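
The local `Target` does two things: it records the host run directory on the config and then hands the config to `run_wrapper`. A minimal sketch of that hand-off, assuming a stubbed `run_wrapper` and a `SimpleNamespace` in place of `SubmitConfig` (both hypothetical stand-ins), and assuming the caller invokes `finalize_submit_config` before `submit`, which this file alone does not confirm:

```python
from types import SimpleNamespace

def run_wrapper(submit_config):
    # Hypothetical stub: the real run_wrapper lives in dnnlib/submission/submit.py.
    return "ran in " + submit_config.run_dir

class Target:
    def finalize_submit_config(self, submit_config, host_run_dir):
        # A local run uses the host directory directly as its run directory.
        submit_config.run_dir = host_run_dir

    def submit(self, submit_config, host_run_dir):
        # Nothing is shipped elsewhere; the run executes in the current process.
        return run_wrapper(submit_config)

config = SimpleNamespace(run_dir=None)
target = Target()
target.finalize_submit_config(config, "results/00001-demo")
outcome = target.submit(config, "results/00001-demo")
print(outcome)
```

Keeping both steps behind one `Target` interface would let other submission back ends supply their own versions of the same two methods; whether the library does so is not visible from this file.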

dnnlib/submission/run_context.py

ADDED

@@ -0,0 +1,110 @@