Upload folder using huggingface_hub

- LICENSE +46 -0

- README.md +65 -0

- config.json +18 -0

- fig_accuracy_latency.png +0 -0

- mobileclip_blt.pt +3 -0

LICENSE

ADDED

@@ -0,0 +1,46 @@

Copyright (C) 2024 Apple Inc. All Rights Reserved.

IMPORTANT: This Apple software is supplied to you by Apple
Inc. ("Apple") in consideration of your agreement to the following
terms, and your use, installation, modification or redistribution of
this Apple software constitutes acceptance of these terms. If you do
not agree with these terms, please do not use, install, modify or
redistribute this Apple software.

In consideration of your agreement to abide by the following terms, and
subject to these terms, Apple grants you a personal, non-exclusive
license, under Apple's copyrights in this original Apple software (the
"Apple Software"), to use, reproduce, modify and redistribute the Apple
Software, with or without modifications, in source and/or binary forms;
provided that if you redistribute the Apple Software in its entirety and
without modifications, you must retain this notice and the following
text and disclaimers in all such redistributions of the Apple Software.
Neither the name, trademarks, service marks or logos of Apple Inc. may
be used to endorse or promote products derived from the Apple Software
without specific prior written permission from Apple. Except as
expressly stated in this notice, no other rights or licenses, express or
implied, are granted by Apple herein, including but not limited to any
patent rights that may be infringed by your derivative works or by other
works in which the Apple Software may be incorporated.

The Apple Software is provided by Apple on an "AS IS" basis. APPLE
MAKES NO WARRANTIES, EXPRESS OR IMPLIED, INCLUDING WITHOUT LIMITATION
THE IMPLIED WARRANTIES OF NON-INFRINGEMENT, MERCHANTABILITY AND FITNESS
FOR A PARTICULAR PURPOSE, REGARDING THE APPLE SOFTWARE OR ITS USE AND
OPERATION ALONE OR IN COMBINATION WITH YOUR PRODUCTS.

IN NO EVENT SHALL APPLE BE LIABLE FOR ANY SPECIAL, INDIRECT, INCIDENTAL
OR CONSEQUENTIAL DAMAGES (INCLUDING, BUT NOT LIMITED TO, PROCUREMENT OF
SUBSTITUTE GOODS OR SERVICES; LOSS OF USE, DATA, OR PROFITS; OR BUSINESS
INTERRUPTION) ARISING IN ANY WAY OUT OF THE USE, REPRODUCTION,
MODIFICATION AND/OR DISTRIBUTION OF THE APPLE SOFTWARE, HOWEVER CAUSED
AND WHETHER UNDER THEORY OF CONTRACT, TORT (INCLUDING NEGLIGENCE),
STRICT LIABILITY OR OTHERWISE, EVEN IF APPLE HAS BEEN ADVISED OF THE
POSSIBILITY OF SUCH DAMAGE.

-------------------------------------------------------------------------------
SOFTWARE DISTRIBUTED WITH ML-MobileCLIP:

The ML-MobileCLIP software includes a number of subcomponents with separate
copyright notices and license terms - please see the file ACKNOWLEDGEMENTS.
-------------------------------------------------------------------------------

README.md

ADDED

@@ -0,0 +1,65 @@

---
license: other
license_name: apple-ascl
license_link: LICENSE
library_name: mobileclip
---

# MobileCLIP: Fast Image-Text Models through Multi-Modal Reinforced Training

MobileCLIP was introduced in [MobileCLIP: Fast Image-Text Models through Multi-Modal Reinforced Training](https://arxiv.org/pdf/2311.17049.pdf) (CVPR 2024), by Pavan Kumar Anasosalu Vasu, Hadi Pouransari, Fartash Faghri, Raviteja Vemulapalli, Oncel Tuzel.

This repository contains the **MobileCLIP-B (LT)** checkpoint.

### Highlights

| 19 |

+

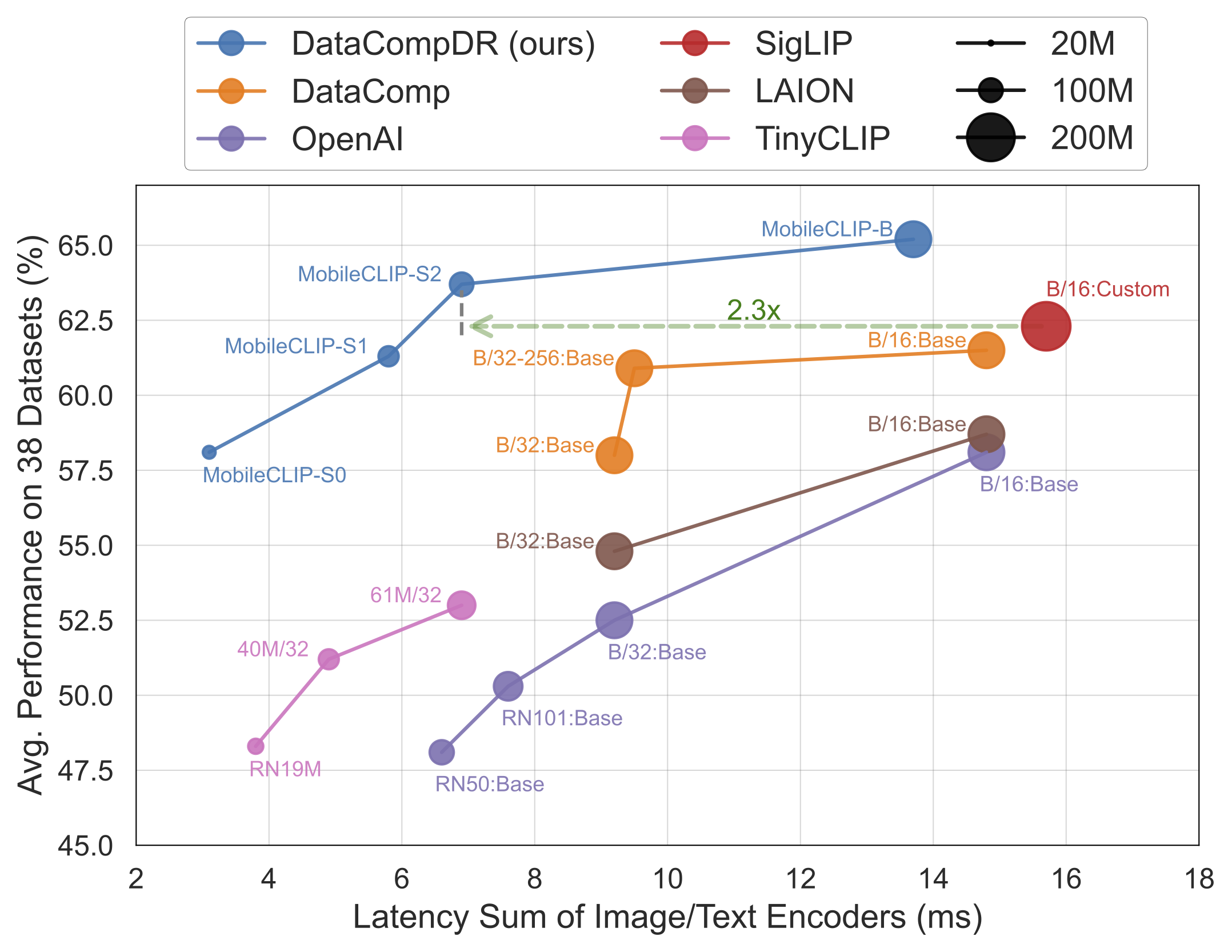

* Our smallest variant `MobileCLIP-S0` obtains similar zero-shot performance as [OpenAI](https://arxiv.org/abs/2103.00020)'s ViT-B/16 model while being 4.8x faster and 2.8x smaller.

|

| 20 |

+

* `MobileCLIP-S2` obtains better avg zero-shot performance than [SigLIP](https://arxiv.org/abs/2303.15343)'s ViT-B/16 model while being 2.3x faster and 2.1x smaller, and trained with 3x less seen samples.

|

| 21 |

+

* `MobileCLIP-B`(LT) attains zero-shot ImageNet performance of **77.2%** which is significantly better than recent works like [DFN](https://arxiv.org/abs/2309.17425) and [SigLIP](https://arxiv.org/abs/2303.15343) with similar architectures or even [OpenAI's ViT-L/14@336](https://arxiv.org/abs/2103.00020).

|

| 22 |

+

|

## Checkpoints

| Model | # Seen <BR>Samples (B) | # Params (M) <BR> (img + txt) | Latency (ms) <BR> (img + txt) | IN-1k Zero-Shot <BR> Top-1 Acc. (%) | Avg. Perf. (%) <BR> on 38 datasets |
|:----------------------------------------------------------|:----------------------:|:-----------------------------:|:-----------------------------:|:-----------------------------------:|:----------------------------------:|
| [MobileCLIP-S0](https://hf.co/pcuenq/MobileCLIP-S0) | 13 | 11.4 + 42.4 | 1.5 + 1.6 | 67.8 | 58.1 |
| [MobileCLIP-S1](https://hf.co/pcuenq/MobileCLIP-S1) | 13 | 21.5 + 63.4 | 2.5 + 3.3 | 72.6 | 61.3 |
| [MobileCLIP-S2](https://hf.co/pcuenq/MobileCLIP-S2) | 13 | 35.7 + 63.4 | 3.6 + 3.3 | 74.4 | 63.7 |
| [MobileCLIP-B](https://hf.co/pcuenq/MobileCLIP-B) | 13 | 86.3 + 63.4 | 10.4 + 3.3 | 76.8 | 65.2 |
| [MobileCLIP-B (LT)](https://hf.co/pcuenq/MobileCLIP-B-LT) | 36 | 86.3 + 63.4 | 10.4 + 3.3 | 77.2 | 65.8 |

## How to Use

First, download the desired checkpoint: visit one of the links in the table above, click the `Files and versions` tab, and download the PyTorch checkpoint.
For programmatic downloading, if you have `huggingface_hub` installed, you can also run:

```
huggingface-cli download pcuenq/MobileCLIP-B-LT
```
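
The same download can also be sketched from Python. This is a minimal example, assuming the checkpoint file is named `mobileclip_blt.pt` as in the file list of this commit; the helper name `download_checkpoint` is hypothetical and not part of any library.

```python
REPO_ID = "pcuenq/MobileCLIP-B-LT"   # repository named in the CLI command above
FILENAME = "mobileclip_blt.pt"       # checkpoint file added in this commit


def download_checkpoint(repo_id: str = REPO_ID, filename: str = FILENAME) -> str:
    """Download a single file from the Hub and return its local cache path.

    Hypothetical helper for illustration; it only wraps `hf_hub_download`.
    """
    # Imported lazily so this module loads even without huggingface_hub installed.
    from huggingface_hub import hf_hub_download

    return hf_hub_download(repo_id=repo_id, filename=filename)


# Usage (performs a network download of roughly 600 MB):
#   checkpoint_path = download_checkpoint()
```

The returned path can be passed as the `pretrained` argument when creating the model.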

Then, install [`ml-mobileclip`](https://github.com/apple/ml-mobileclip) by following the instructions in the repo. It uses an API similar to [`open_clip`'s](https://github.com/mlfoundations/open_clip).
You can run inference with a code snippet like the following:

```py
import torch
from PIL import Image
import mobileclip

# Build the model and its preprocessing transform from the downloaded checkpoint.
model, _, preprocess = mobileclip.create_model_and_transforms('mobileclip_blt', pretrained='/path/to/mobileclip_blt.pt')
tokenizer = mobileclip.get_tokenizer('mobileclip_blt')

image = preprocess(Image.open("docs/fig_accuracy_latency.png").convert('RGB')).unsqueeze(0)
text = tokenizer(["a diagram", "a dog", "a cat"])

with torch.no_grad(), torch.cuda.amp.autocast():
    image_features = model.encode_image(image)
    text_features = model.encode_text(text)
    # L2-normalize so the dot product below is a cosine similarity.
    image_features /= image_features.norm(dim=-1, keepdim=True)
    text_features /= text_features.norm(dim=-1, keepdim=True)

    # Scale the similarities by 100 and apply softmax over the candidate captions.
    text_probs = (100.0 * image_features @ text_features.T).softmax(dim=-1)

print("Label probs:", text_probs)
```
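
The final scoring step of the snippet (normalize, scale by 100, softmax) can be illustrated without a model. The sketch below uses plain Python and made-up 3-dimensional vectors in place of real embeddings; the function names are hypothetical and only mirror the arithmetic, not the `mobileclip` API.

```python
import math


def l2_normalize(vec):
    """Return vec divided by its Euclidean norm."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def label_probs(image_vec, text_vecs, scale=100.0):
    """Softmax over scaled cosine similarities between one image and several texts."""
    img = l2_normalize(image_vec)
    logits = [
        scale * sum(a * b for a, b in zip(img, l2_normalize(t)))
        for t in text_vecs
    ]
    # Subtract the max logit before exponentiating for numerical stability.
    top = max(logits)
    exps = [math.exp(z - top) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Toy embeddings (invented for illustration, not model outputs).
image_vec = [0.9, 0.1, 0.0]
text_vecs = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
probs = label_probs(image_vec, text_vecs)
print(probs)
```

Because of the factor of 100, even modest differences in cosine similarity produce a sharply peaked distribution, which is why the best-matching caption usually receives nearly all of the probability mass.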

config.json

ADDED

@@ -0,0 +1,18 @@

{
    "embed_dim": 512,
    "image_cfg": {
        "image_size": 224,
        "model_name": "vit_b16"
    },
    "text_cfg": {
        "context_length": 77,
        "vocab_size": 49408,
        "dim": 512,
        "ffn_multiplier_per_layer": 4.0,
        "n_heads_per_layer": 8,
        "n_transformer_layers": 12,
        "norm_layer": "layer_norm_fp32",
        "causal_masking": true,
        "model_name": "base"
    }
}
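
As a rough sanity check on `text_cfg`, the sketch below estimates the text encoder's parameter count from these values. It assumes a standard Transformer layout (learned token and positional embeddings, biased linear layers, two LayerNorms per block, a final LayerNorm, and a bias-free projection to `embed_dim`); that layout is an assumption, not something the config states, and the function name is hypothetical.

```python
import json

CONFIG = json.loads("""
{
    "embed_dim": 512,
    "text_cfg": {
        "context_length": 77,
        "vocab_size": 49408,
        "dim": 512,
        "ffn_multiplier_per_layer": 4.0,
        "n_heads_per_layer": 8,
        "n_transformer_layers": 12
    }
}
""")


def estimate_text_params(cfg):
    """Estimate text-encoder parameters under an assumed standard Transformer layout."""
    t = cfg["text_cfg"]
    d = t["dim"]
    hidden = int(d * t["ffn_multiplier_per_layer"])

    # Token embedding table plus learned positional embeddings (assumed).
    embeddings = t["vocab_size"] * d + t["context_length"] * d
    # Q, K, V and output projections, each d x d with a bias.
    attention = 4 * (d * d + d)
    # Two-layer feed-forward network with biases.
    ffn = (d * hidden + hidden) + (hidden * d + d)
    # Two LayerNorms per block, each with a weight and a bias vector.
    norms = 2 * (2 * d)
    per_layer = attention + ffn + norms

    final_norm = 2 * d
    projection = d * cfg["embed_dim"]  # assumed bias-free projection
    return embeddings + t["n_transformer_layers"] * per_layer + final_norm + projection


total = estimate_text_params(CONFIG)
print(f"{total:,} parameters (~{total / 1e6:.1f}M)")
```

Under these assumptions the estimate comes to about 63.4M, in line with the text-encoder figure listed in the README's checkpoint table, though the real implementation may allocate parameters differently.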

fig_accuracy_latency.png

ADDED


mobileclip_blt.pt

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:670844f7a886dd6eff7a9285adfc53f3d3c889c03bfc8354010cb5c6bf27441a
size 599214572
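
A Git LFS pointer records the SHA-256 digest and byte size of the real file, so a downloaded checkpoint can be checked against it. The following is a minimal sketch using only the standard library; the function names are hypothetical, and the constants are copied from the pointer above.

```python
import hashlib
import os

EXPECTED_OID = "670844f7a886dd6eff7a9285adfc53f3d3c889c03bfc8354010cb5c6bf27441a"
EXPECTED_SIZE = 599214572  # bytes, from the `size` line of the pointer


def sha256_of_file(path, chunk_size=1 << 20):
    """Hash a file in 1 MiB chunks so large checkpoints need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_pointer(path, expected_oid=EXPECTED_OID, expected_size=EXPECTED_SIZE):
    """Return True if the file's size and SHA-256 digest match the pointer values."""
    # Compare the cheap size check first; hash only if the size agrees.
    if os.path.getsize(path) != expected_size:
        return False
    return sha256_of_file(path) == expected_oid


# Usage, with a placeholder path:
#   matches_pointer("/path/to/mobileclip_blt.pt")
```

A mismatch usually indicates a truncated or corrupted download, or that the pointer file itself was fetched instead of the checkpoint.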