End of training

- README.md +26 -0

- checkpoint-1000/optimizer.bin +3 -0

- checkpoint-1000/random_states_0.pkl +3 -0

- checkpoint-1000/scheduler.bin +3 -0

- checkpoint-1000/text_encoder/config.json +25 -0

- checkpoint-1000/text_encoder/pytorch_model.bin +3 -0

- checkpoint-1000/unet/config.json +66 -0

- checkpoint-1000/unet/diffusion_pytorch_model.bin +3 -0

- checkpoint-500/optimizer.bin +3 -0

- checkpoint-500/random_states_0.pkl +3 -0

- checkpoint-500/scheduler.bin +3 -0

- checkpoint-500/text_encoder/config.json +25 -0

- checkpoint-500/text_encoder/pytorch_model.bin +3 -0

- checkpoint-500/unet/config.json +66 -0

- checkpoint-500/unet/diffusion_pytorch_model.bin +3 -0

- feature_extractor/preprocessor_config.json +28 -0

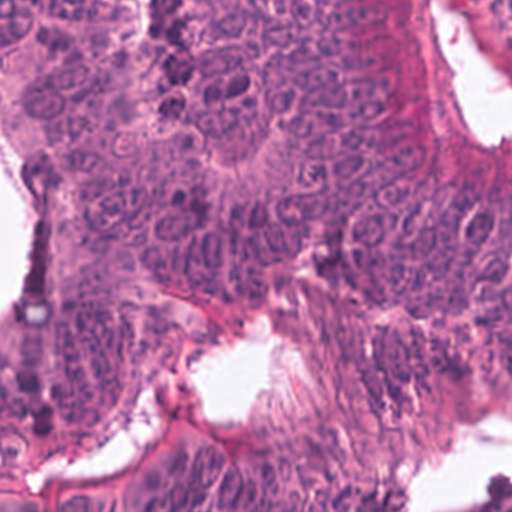

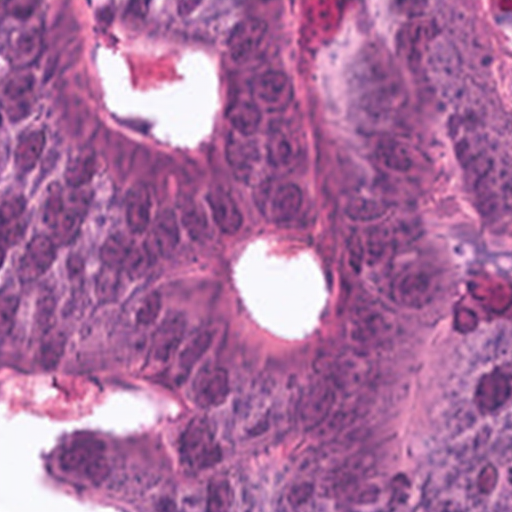

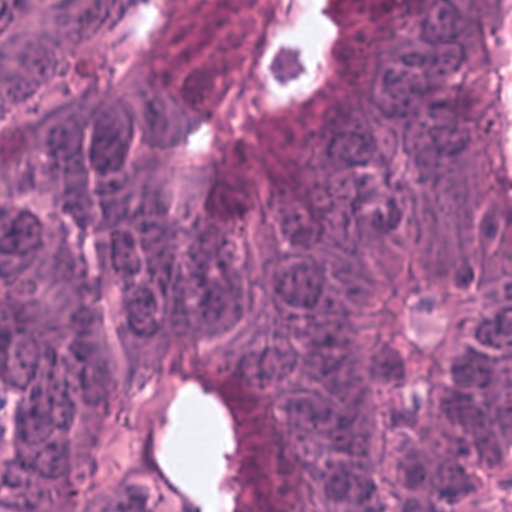

- image_0.png +0 -0

- image_1.png +0 -0

- image_2.png +0 -0

- image_3.png +0 -0

- model_index.json +33 -0

- scheduler/scheduler_config.json +14 -0

- text_encoder/config.json +25 -0

- text_encoder/pytorch_model.bin +3 -0

- tokenizer/merges.txt +0 -0

- tokenizer/special_tokens_map.json +24 -0

- tokenizer/tokenizer_config.json +34 -0

- tokenizer/vocab.json +0 -0

- unet/config.json +66 -0

- unet/diffusion_pytorch_model.bin +3 -0

- vae/config.json +31 -0

- vae/diffusion_pytorch_model.bin +3 -0

README.md

ADDED

@@ -0,0 +1,26 @@
+
+---
+license: creativeml-openrail-m
+base_model: stabilityai/stable-diffusion-2-1-base
+instance_prompt: a photo of sks tumor-tissue-histology
+tags:
+- stable-diffusion
+- stable-diffusion-diffusers
+- text-to-image
+- diffusers
+- dreambooth
+inference: true
+---
+
+# DreamBooth - anic87/crc-tumor-text
+
+This is a dreambooth model derived from stabilityai/stable-diffusion-2-1-base. The weights were trained on a photo of sks tumor-tissue-histology using [DreamBooth](https://dreambooth.github.io/).
+You can find some example images in the following.
+
+
+
+
+
+
+
+DreamBooth for the text encoder was enabled: True.
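The README names the repository (`anic87/crc-tumor-text`) and the instance prompt but gives no usage snippet. A minimal sketch of how such a DreamBooth checkpoint is typically loaded with `diffusers` follows. The helper name `generate`, the step count, and the fp16-on-GPU choice are assumptions for illustration, not part of this commit.

```python
# Values taken from the README front matter in this commit.
REPO_ID = "anic87/crc-tumor-text"
INSTANCE_PROMPT = "a photo of sks tumor-tissue-histology"


def generate(prompt: str = INSTANCE_PROMPT, steps: int = 50, device: str = "cuda"):
    """Load the fine-tuned pipeline and return one PIL image (hypothetical helper)."""
    # Imported lazily so that defining this function does not require
    # torch/diffusers to be installed or any weights to be downloaded.
    import torch
    from diffusers import StableDiffusionPipeline

    # Assumption: half precision on GPU, full precision elsewhere.
    dtype = torch.float16 if device == "cuda" else torch.float32
    pipe = StableDiffusionPipeline.from_pretrained(REPO_ID, torch_dtype=dtype)
    pipe = pipe.to(device)
    return pipe(prompt, num_inference_steps=steps).images[0]


# Example call (downloads several GB of weights):
# generate().save("sample.png")
```

Because the text encoder was also fine-tuned, the prompt should probably contain the full instance phrase, including the `sks` token, for the learned concept to appear.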

checkpoint-1000/optimizer.bin

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:1b51dd432b13ae414c83b1523c0e6f681936c9001074783fb71a1e70b0fccf69
+size 9651291999
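The three added lines are a Git LFS pointer, not the binary itself: a spec version, a SHA-256 object id, and the size in bytes. As a rough illustration, a pointer like this can be parsed as below; `parse_lfs_pointer` is a hypothetical helper written for this note, not part of any library.

```python
def parse_lfs_pointer(text: str) -> dict:
    """Parse the key/value lines of a Git LFS pointer file (illustrative helper)."""
    fields = {}
    for line in text.strip().splitlines():
        # Each pointer line is "<key> <value>".
        key, _, value = line.partition(" ")
        fields[key] = value
    # The oid has the form "<hash algorithm>:<hex digest>".
    algo, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "hash_algo": algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


# Pointer content copied from checkpoint-1000/optimizer.bin in this commit.
POINTER = """version https://git-lfs.github.com/spec/v1
oid sha256:1b51dd432b13ae414c83b1523c0e6f681936c9001074783fb71a1e70b0fccf69
size 9651291999
"""

info = parse_lfs_pointer(POINTER)
size_gib = info["size"] / 2**30  # bytes to GiB
print(info["hash_algo"], info["digest"][:12], f"{size_gib:.2f} GiB")
```

The same helper applies to every `.bin` and `.pkl` entry in this commit, since each is stored as a pointer of the same shape.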

checkpoint-1000/random_states_0.pkl

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:35b98ad06289c92fad7961e271b295946aad9641d9ed52ce56ab70c2247f9858
+size 14567

checkpoint-1000/scheduler.bin

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:327b757db9352c2a219e7dda4accca19e0b04040256987f03e61f50669775b12
+size 559

checkpoint-1000/text_encoder/config.json

ADDED

@@ -0,0 +1,25 @@
+{
+  "_name_or_path": "stabilityai/stable-diffusion-2-1-base",
+  "architectures": [
+    "CLIPTextModel"
+  ],
+  "attention_dropout": 0.0,
+  "bos_token_id": 0,
+  "dropout": 0.0,
+  "eos_token_id": 2,
+  "hidden_act": "gelu",
+  "hidden_size": 1024,
+  "initializer_factor": 1.0,
+  "initializer_range": 0.02,
+  "intermediate_size": 4096,
+  "layer_norm_eps": 1e-05,
+  "max_position_embeddings": 77,
+  "model_type": "clip_text_model",
+  "num_attention_heads": 16,
+  "num_hidden_layers": 23,
+  "pad_token_id": 1,
+  "projection_dim": 512,
+  "torch_dtype": "float32",
+  "transformers_version": "4.26.1",
+  "vocab_size": 49408
+}
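A few shape relationships can be read off this text encoder config. The sketch below derives the per-head width and the prompt embedding shape, and checks it against the `cross_attention_dim` of 1024 that appears in the UNet configs of this commit. It is plain arithmetic on the config values; the variable names are illustrative.

```python
# Values copied from checkpoint-1000/text_encoder/config.json.
TEXT_ENCODER = {
    "hidden_size": 1024,
    "num_attention_heads": 16,
    "max_position_embeddings": 77,
    "num_hidden_layers": 23,
}
# Value copied from the unet/config.json files in this commit.
UNET_CROSS_ATTENTION_DIM = 1024

# Each attention head works on an equal slice of the hidden vector.
assert TEXT_ENCODER["hidden_size"] % TEXT_ENCODER["num_attention_heads"] == 0
head_dim = TEXT_ENCODER["hidden_size"] // TEXT_ENCODER["num_attention_heads"]

# One prompt becomes a (tokens, hidden_size) matrix of hidden states.
prompt_embedding_shape = (
    TEXT_ENCODER["max_position_embeddings"],
    TEXT_ENCODER["hidden_size"],
)

# The UNet cross-attention layers must accept vectors of this width.
compatible = prompt_embedding_shape[1] == UNET_CROSS_ATTENTION_DIM
print(head_dim, prompt_embedding_shape, compatible)
```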

checkpoint-1000/text_encoder/pytorch_model.bin

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:f3135442e90483a0b8bcf65e10cac6dd789cc27145a65c441bb18a6149210897
+size 1361677143

checkpoint-1000/unet/config.json

ADDED

@@ -0,0 +1,66 @@
+{
+  "_class_name": "UNet2DConditionModel",
+  "_diffusers_version": "0.17.0.dev0",
+  "_name_or_path": "stabilityai/stable-diffusion-2-1-base",
+  "act_fn": "silu",
+  "addition_embed_type": null,
+  "addition_embed_type_num_heads": 64,
+  "attention_head_dim": [
+    5,
+    10,
+    20,
+    20
+  ],
+  "block_out_channels": [
+    320,
+    640,
+    1280,
+    1280
+  ],
+  "center_input_sample": false,
+  "class_embed_type": null,
+  "class_embeddings_concat": false,
+  "conv_in_kernel": 3,
+  "conv_out_kernel": 3,
+  "cross_attention_dim": 1024,
+  "cross_attention_norm": null,
+  "down_block_types": [
+    "CrossAttnDownBlock2D",
+    "CrossAttnDownBlock2D",
+    "CrossAttnDownBlock2D",
+    "DownBlock2D"
+  ],
+  "downsample_padding": 1,
+  "dual_cross_attention": false,
+  "encoder_hid_dim": null,
+  "flip_sin_to_cos": true,
+  "freq_shift": 0,
+  "in_channels": 4,
+  "layers_per_block": 2,
+  "mid_block_only_cross_attention": null,
+  "mid_block_scale_factor": 1,
+  "mid_block_type": "UNetMidBlock2DCrossAttn",
+  "norm_eps": 1e-05,
+  "norm_num_groups": 32,
+  "num_class_embeds": null,
+  "only_cross_attention": false,
+  "out_channels": 4,
+  "projection_class_embeddings_input_dim": null,
+  "resnet_out_scale_factor": 1.0,
+  "resnet_skip_time_act": false,
+  "resnet_time_scale_shift": "default",
+  "sample_size": 64,
+  "time_cond_proj_dim": null,
+  "time_embedding_act_fn": null,
+  "time_embedding_dim": null,
+  "time_embedding_type": "positional",
+  "timestep_post_act": null,
+  "up_block_types": [
+    "UpBlock2D",
+    "CrossAttnUpBlock2D",
+    "CrossAttnUpBlock2D",
+    "CrossAttnUpBlock2D"
+  ],
+  "upcast_attention": false,
+  "use_linear_projection": true
+}

checkpoint-1000/unet/diffusion_pytorch_model.bin

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:509d0b26fec6e94fabb945cea4314129e047793a9110458975e2a3277439b025
+size 3463923045
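The LFS sizes in `checkpoint-1000/` allow a rough sanity check. The sketch below assumes the weights are stored as fp32 (the text encoder config says `"torch_dtype": "float32"`; the UNet config does not state a dtype, so that part is an assumption) and ignores serialization overhead. It also compares `optimizer.bin` to the combined weight files. A ratio near 2 would be consistent with an Adam-style optimizer keeping two fp32 buffers per trained parameter, but the commit does not name the optimizer, so treat that reading as a guess.

```python
# Sizes in bytes, copied from the Git LFS pointers in checkpoint-1000/.
UNET_BYTES = 3463923045
TEXT_ENCODER_BYTES = 1361677143
OPTIMIZER_BYTES = 9651291999

BYTES_PER_FP32 = 4  # assumption: weights serialized as 32-bit floats


def approx_param_count(file_bytes: int) -> int:
    """Rough parameter count, ignoring pickle/metadata overhead."""
    return file_bytes // BYTES_PER_FP32


unet_params = approx_param_count(UNET_BYTES)
text_encoder_params = approx_param_count(TEXT_ENCODER_BYTES)

# Optimizer state size relative to all weights trained in this run.
state_ratio = OPTIMIZER_BYTES / (UNET_BYTES + TEXT_ENCODER_BYTES)

print(f"UNet ~{unet_params / 1e6:.0f}M params")
print(f"text encoder ~{text_encoder_params / 1e6:.0f}M params")
print(f"optimizer / weights = {state_ratio:.4f}")
```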

checkpoint-500/optimizer.bin

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:b72a9101418d6fa6ab8782a2ab731cf5d79ed3341d015ca5df645ed5a491ddd8
+size 9651291999

checkpoint-500/random_states_0.pkl

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:855f0b728412479651dfae88f73a5d7ccc9c18859b97a9cfc45484715f65d3eb
+size 14567

checkpoint-500/scheduler.bin

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:c78bdf9fb4e265639c0fa7f35aee3409d84a5fad00aa5616450c323e0f2948e6
+size 559

checkpoint-500/text_encoder/config.json

ADDED

@@ -0,0 +1,25 @@
+{
+  "_name_or_path": "stabilityai/stable-diffusion-2-1-base",
+  "architectures": [
+    "CLIPTextModel"
+  ],
+  "attention_dropout": 0.0,
+  "bos_token_id": 0,
+  "dropout": 0.0,
+  "eos_token_id": 2,
+  "hidden_act": "gelu",
+  "hidden_size": 1024,
+  "initializer_factor": 1.0,
+  "initializer_range": 0.02,
+  "intermediate_size": 4096,
+  "layer_norm_eps": 1e-05,
+  "max_position_embeddings": 77,
+  "model_type": "clip_text_model",
+  "num_attention_heads": 16,
+  "num_hidden_layers": 23,
+  "pad_token_id": 1,
+  "projection_dim": 512,
+  "torch_dtype": "float32",
+  "transformers_version": "4.26.1",
+  "vocab_size": 49408
+}

checkpoint-500/text_encoder/pytorch_model.bin

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:6d51e2d1825228cf05a284d91dbcb858fee273cd2e4222a88dc93f905dc22a72
+size 1361677143

checkpoint-500/unet/config.json

ADDED

@@ -0,0 +1,66 @@
+{
+  "_class_name": "UNet2DConditionModel",
+  "_diffusers_version": "0.17.0.dev0",
+  "_name_or_path": "stabilityai/stable-diffusion-2-1-base",
+  "act_fn": "silu",
+  "addition_embed_type": null,
+  "addition_embed_type_num_heads": 64,
+  "attention_head_dim": [
+    5,
+    10,
+    20,
+    20
+  ],
+  "block_out_channels": [
+    320,
+    640,
+    1280,
+    1280
+  ],
+  "center_input_sample": false,
+  "class_embed_type": null,
+  "class_embeddings_concat": false,
+  "conv_in_kernel": 3,
+  "conv_out_kernel": 3,
+  "cross_attention_dim": 1024,
+  "cross_attention_norm": null,
+  "down_block_types": [
+    "CrossAttnDownBlock2D",
+    "CrossAttnDownBlock2D",
+    "CrossAttnDownBlock2D",
+    "DownBlock2D"
+  ],
+  "downsample_padding": 1,
+  "dual_cross_attention": false,
+  "encoder_hid_dim": null,
+  "flip_sin_to_cos": true,
+  "freq_shift": 0,
+  "in_channels": 4,
+  "layers_per_block": 2,
+  "mid_block_only_cross_attention": null,
+  "mid_block_scale_factor": 1,
+  "mid_block_type": "UNetMidBlock2DCrossAttn",
+  "norm_eps": 1e-05,
+  "norm_num_groups": 32,
+  "num_class_embeds": null,
+  "only_cross_attention": false,
+  "out_channels": 4,
+  "projection_class_embeddings_input_dim": null,
+  "resnet_out_scale_factor": 1.0,
+  "resnet_skip_time_act": false,
+  "resnet_time_scale_shift": "default",
+  "sample_size": 64,
+  "time_cond_proj_dim": null,
+  "time_embedding_act_fn": null,
+  "time_embedding_dim": null,
+  "time_embedding_type": "positional",
+  "timestep_post_act": null,
+  "up_block_types": [
+    "UpBlock2D",
+    "CrossAttnUpBlock2D",
+    "CrossAttnUpBlock2D",
+    "CrossAttnUpBlock2D"
+  ],
+  "upcast_attention": false,
+  "use_linear_projection": true
+}

checkpoint-500/unet/diffusion_pytorch_model.bin

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:2a5c538c461730d76d2567fc104279e671504f8357031c3ba579c2064a0515d5
+size 3463923045

feature_extractor/preprocessor_config.json

ADDED

@@ -0,0 +1,28 @@
+{
+  "crop_size": {
+    "height": 224,
+    "width": 224
+  },
+  "do_center_crop": true,
+  "do_convert_rgb": true,
+  "do_normalize": true,
+  "do_rescale": true,
+  "do_resize": true,
+  "feature_extractor_type": "CLIPFeatureExtractor",
+  "image_mean": [
+    0.48145466,
+    0.4578275,
+    0.40821073
+  ],
+  "image_processor_type": "CLIPFeatureExtractor",
+  "image_std": [
+    0.26862954,
+    0.26130258,
+    0.27577711
+  ],
+  "resample": 3,
+  "rescale_factor": 0.00392156862745098,
+  "size": {
+    "shortest_edge": 224
+  }
+}
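The `do_rescale` and `do_normalize` flags, together with `rescale_factor`, `image_mean`, and `image_std`, describe a per-channel transform: scale 0..255 values to 0..1, then subtract the mean and divide by the standard deviation. A minimal sketch of that arithmetic for a single RGB pixel follows. Resizing and center cropping are left out, and `preprocess_pixel` is an illustrative name, not a library function.

```python
# Values copied from feature_extractor/preprocessor_config.json.
IMAGE_MEAN = (0.48145466, 0.4578275, 0.40821073)
IMAGE_STD = (0.26862954, 0.26130258, 0.27577711)
RESCALE_FACTOR = 0.00392156862745098  # equal to 1 / 255


def preprocess_pixel(rgb):
    """Rescale an 8-bit RGB pixel to [0, 1], then normalize each channel."""
    return tuple(
        (channel * RESCALE_FACTOR - mean) / std
        for channel, mean, std in zip(rgb, IMAGE_MEAN, IMAGE_STD)
    )


white = preprocess_pixel((255, 255, 255))
black = preprocess_pixel((0, 0, 0))
print(white, black)
```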

image_0.png

ADDED

image_1.png

ADDED

image_2.png

ADDED

image_3.png

ADDED

model_index.json

ADDED

@@ -0,0 +1,33 @@
+{
+  "_class_name": "StableDiffusionPipeline",
+  "_diffusers_version": "0.17.0.dev0",
+  "feature_extractor": [
+    "transformers",
+    "CLIPFeatureExtractor"
+  ],
+  "requires_safety_checker": false,
+  "safety_checker": [
+    null,
+    null
+  ],
+  "scheduler": [
+    "diffusers",
+    "PNDMScheduler"
+  ],
+  "text_encoder": [
+    "transformers",
+    "CLIPTextModel"
+  ],
+  "tokenizer": [
+    "transformers",
+    "CLIPTokenizer"
+  ],
+  "unet": [
+    "diffusers",
+    "UNet2DConditionModel"
+  ],
+  "vae": [
+    "diffusers",
+    "AutoencoderKL"
+  ]
+}
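`model_index.json` maps each pipeline component (one subfolder per component in this commit) to a `[library, class]` pair, with `[null, null]` marking a component that is not shipped. A small sketch of reading that mapping with the standard library follows; `component_table` is a hypothetical helper for illustration.

```python
import json

# Content copied from model_index.json in this commit.
MODEL_INDEX = json.loads("""
{
  "_class_name": "StableDiffusionPipeline",
  "_diffusers_version": "0.17.0.dev0",
  "feature_extractor": ["transformers", "CLIPFeatureExtractor"],
  "requires_safety_checker": false,
  "safety_checker": [null, null],
  "scheduler": ["diffusers", "PNDMScheduler"],
  "text_encoder": ["transformers", "CLIPTextModel"],
  "tokenizer": ["transformers", "CLIPTokenizer"],
  "unet": ["diffusers", "UNet2DConditionModel"],
  "vae": ["diffusers", "AutoencoderKL"]
}
""")


def component_table(index: dict) -> dict:
    """Return {component: (library, class_name)}, skipping metadata and flags."""
    table = {}
    for name, value in index.items():
        # Keys starting with "_" are metadata; non-list values are plain flags.
        if name.startswith("_") or not isinstance(value, list):
            continue
        library, class_name = value
        table[name] = (library, class_name)
    return table


components = component_table(MODEL_INDEX)
missing = [name for name, (lib, cls) in components.items() if cls is None]
print(sorted(components), "not shipped:", missing)
```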

scheduler/scheduler_config.json

ADDED

@@ -0,0 +1,14 @@
+{
+  "_class_name": "PNDMScheduler",
+  "_diffusers_version": "0.17.0.dev0",
+  "beta_end": 0.012,
+  "beta_schedule": "scaled_linear",
+  "beta_start": 0.00085,
+  "clip_sample": false,
+  "num_train_timesteps": 1000,
+  "prediction_type": "epsilon",
+  "set_alpha_to_one": false,
+  "skip_prk_steps": true,
+  "steps_offset": 1,
+  "trained_betas": null
+}
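The `scaled_linear` schedule with `beta_start`, `beta_end`, and `num_train_timesteps` defines the per-step noise variances: the betas are linear in the square root and then squared. The sketch below reproduces that arithmetic in pure Python as an illustration. It is not the `diffusers` implementation, which uses float32 tensors, so values may differ in the last digits.

```python
import math


def scaled_linear_betas(beta_start=0.00085, beta_end=0.012, num_train_timesteps=1000):
    """Betas spaced linearly in sqrt-space, then squared ("scaled_linear")."""
    lo, hi = math.sqrt(beta_start), math.sqrt(beta_end)
    step = (hi - lo) / (num_train_timesteps - 1)
    return [(lo + i * step) ** 2 for i in range(num_train_timesteps)]


def alphas_cumprod(betas):
    """Running product of (1 - beta_t): the fraction of signal kept after t steps."""
    products, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        products.append(running)
    return products


betas = scaled_linear_betas()
signal = alphas_cumprod(betas)
print(betas[0], betas[-1], signal[0], signal[-1])
```

With `prediction_type` set to `epsilon`, the UNet is trained to predict the noise added under this schedule.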

text_encoder/config.json

ADDED

@@ -0,0 +1,25 @@
+{
+  "_name_or_path": "stabilityai/stable-diffusion-2-1-base",
+  "architectures": [
+    "CLIPTextModel"
+  ],
+  "attention_dropout": 0.0,
+  "bos_token_id": 0,
+  "dropout": 0.0,
+  "eos_token_id": 2,
+  "hidden_act": "gelu",
+  "hidden_size": 1024,
+  "initializer_factor": 1.0,
+  "initializer_range": 0.02,
+  "intermediate_size": 4096,
+  "layer_norm_eps": 1e-05,
+  "max_position_embeddings": 77,
+  "model_type": "clip_text_model",
+  "num_attention_heads": 16,
+  "num_hidden_layers": 23,
+  "pad_token_id": 1,
+  "projection_dim": 512,
+  "torch_dtype": "float32",
+  "transformers_version": "4.26.1",
+  "vocab_size": 49408
+}

text_encoder/pytorch_model.bin

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:f3135442e90483a0b8bcf65e10cac6dd789cc27145a65c441bb18a6149210897
+size 1361677143

tokenizer/merges.txt

ADDED

The diff for this file is too large to render.

tokenizer/special_tokens_map.json

ADDED

@@ -0,0 +1,24 @@
+{
+  "bos_token": {
+    "content": "<|startoftext|>",
+    "lstrip": false,
+    "normalized": true,
+    "rstrip": false,
+    "single_word": false
+  },
+  "eos_token": {
+    "content": "<|endoftext|>",
+    "lstrip": false,
+    "normalized": true,
+    "rstrip": false,
+    "single_word": false
+  },
+  "pad_token": "!",
+  "unk_token": {
+    "content": "<|endoftext|>",
+    "lstrip": false,
+    "normalized": true,
+    "rstrip": false,
+    "single_word": false
+  }
+}

tokenizer/tokenizer_config.json

ADDED

@@ -0,0 +1,34 @@
+{
+  "add_prefix_space": false,
+  "bos_token": {
+    "__type": "AddedToken",
+    "content": "<|startoftext|>",
+    "lstrip": false,
+    "normalized": true,
+    "rstrip": false,
+    "single_word": false
+  },
+  "do_lower_case": true,
+  "eos_token": {
+    "__type": "AddedToken",
+    "content": "<|endoftext|>",
+    "lstrip": false,
+    "normalized": true,
+    "rstrip": false,
+    "single_word": false
+  },
+  "errors": "replace",
+  "model_max_length": 77,
+  "name_or_path": "/projects/ac67/projects/textual_inversion/hub/models--stabilityai--stable-diffusion-2-1-base/snapshots/88bb1a46821197d1ac0cb54d1d09fb6e70b171bc/tokenizer",
+  "pad_token": "<|endoftext|>",
+  "special_tokens_map_file": "./special_tokens_map.json",
+  "tokenizer_class": "CLIPTokenizer",
+  "unk_token": {
+    "__type": "AddedToken",
+    "content": "<|endoftext|>",
+    "lstrip": false,
+    "normalized": true,
+    "rstrip": false,
+    "single_word": false
+  }
+}

tokenizer/vocab.json

ADDED

The diff for this file is too large to render.

unet/config.json

ADDED

@@ -0,0 +1,66 @@
+{
+  "_class_name": "UNet2DConditionModel",
+  "_diffusers_version": "0.17.0.dev0",
+  "_name_or_path": "stabilityai/stable-diffusion-2-1-base",
+  "act_fn": "silu",
+  "addition_embed_type": null,
+  "addition_embed_type_num_heads": 64,
+  "attention_head_dim": [
+    5,
+    10,
+    20,
+    20
+  ],
+  "block_out_channels": [
+    320,
+    640,
+    1280,
+    1280
+  ],
+  "center_input_sample": false,
+  "class_embed_type": null,
+  "class_embeddings_concat": false,
+  "conv_in_kernel": 3,
+  "conv_out_kernel": 3,
+  "cross_attention_dim": 1024,
+  "cross_attention_norm": null,
+  "down_block_types": [
+    "CrossAttnDownBlock2D",
+    "CrossAttnDownBlock2D",
+    "CrossAttnDownBlock2D",
+    "DownBlock2D"
+  ],
+  "downsample_padding": 1,
+  "dual_cross_attention": false,
+  "encoder_hid_dim": null,
+  "flip_sin_to_cos": true,
+  "freq_shift": 0,
+  "in_channels": 4,
+  "layers_per_block": 2,
+  "mid_block_only_cross_attention": null,
+  "mid_block_scale_factor": 1,
+  "mid_block_type": "UNetMidBlock2DCrossAttn",
+  "norm_eps": 1e-05,
+  "norm_num_groups": 32,
+  "num_class_embeds": null,
+  "only_cross_attention": false,
+  "out_channels": 4,
+  "projection_class_embeddings_input_dim": null,
+  "resnet_out_scale_factor": 1.0,
+  "resnet_skip_time_act": false,
+  "resnet_time_scale_shift": "default",
+  "sample_size": 64,
+  "time_cond_proj_dim": null,
+  "time_embedding_act_fn": null,
+  "time_embedding_dim": null,
+  "time_embedding_type": "positional",
+  "timestep_post_act": null,
+  "up_block_types": [
+    "UpBlock2D",
+    "CrossAttnUpBlock2D",
+    "CrossAttnUpBlock2D",
+    "CrossAttnUpBlock2D"
+  ],
+  "upcast_attention": false,
+  "use_linear_projection": true
+}

unet/diffusion_pytorch_model.bin

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:509d0b26fec6e94fabb945cea4314129e047793a9110458975e2a3277439b025
+size 3463923045

vae/config.json

ADDED

@@ -0,0 +1,31 @@
+{
+  "_class_name": "AutoencoderKL",
+  "_diffusers_version": "0.17.0.dev0",
+  "_name_or_path": "/projects/ac67/projects/textual_inversion/hub/models--stabilityai--stable-diffusion-2-1-base/snapshots/88bb1a46821197d1ac0cb54d1d09fb6e70b171bc/vae",
+  "act_fn": "silu",
+  "block_out_channels": [
+    128,
+    256,
+    512,
+    512
+  ],
+  "down_block_types": [
+    "DownEncoderBlock2D",
+    "DownEncoderBlock2D",
+    "DownEncoderBlock2D",
+    "DownEncoderBlock2D"
+  ],
+  "in_channels": 3,
+  "latent_channels": 4,
+  "layers_per_block": 2,
+  "norm_num_groups": 32,
+  "out_channels": 3,
+  "sample_size": 768,
+  "scaling_factor": 0.18215,
+  "up_block_types": [
+    "UpDecoderBlock2D",
+    "UpDecoderBlock2D",
+    "UpDecoderBlock2D",
+    "UpDecoderBlock2D"
+  ]
+}
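The VAE and UNet configs together determine the default output resolution. With four encoder blocks the VAE downsamples by a factor of 2 per block transition, and the UNet operates on a 64 x 64 latent grid. A minimal sketch of that arithmetic follows; it mirrors how Stable Diffusion pipelines in `diffusers` usually derive the default size, but the function names here are illustrative.

```python
# Values copied from vae/config.json and unet/config.json in this commit.
VAE_BLOCK_OUT_CHANNELS = [128, 256, 512, 512]
UNET_SAMPLE_SIZE = 64
LATENT_CHANNELS = 4


def vae_scale_factor(block_out_channels) -> int:
    """Spatial downsampling factor: one halving between consecutive blocks."""
    return 2 ** (len(block_out_channels) - 1)


def default_image_size(unet_sample_size: int, block_out_channels) -> int:
    """Pixel width/height that corresponds to the UNet's latent grid."""
    return unet_sample_size * vae_scale_factor(block_out_channels)


factor = vae_scale_factor(VAE_BLOCK_OUT_CHANNELS)
pixels = default_image_size(UNET_SAMPLE_SIZE, VAE_BLOCK_OUT_CHANNELS)
latent_shape = (LATENT_CHANNELS, UNET_SAMPLE_SIZE, UNET_SAMPLE_SIZE)
print(factor, pixels, latent_shape)
```

The `sample_size` of 768 in the VAE config does not enter this calculation.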

vae/diffusion_pytorch_model.bin

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:1b4889b6b1d4ce7ae320a02dedaeff1780ad77d415ea0d744b476155c6377ddc
+size 334707217