bubbliiiing

committed on

Commit

·

b860ca0

1

Parent(s):

ab7a211

Create Weights

- LICENSE +71 -0

- README.md +492 -5

- README_en.md +463 -0

- configuration.json +1 -0

- model_index.json +24 -0

- scheduler/scheduler_config.json +18 -0

- text_encoder/config.json +32 -0

- text_encoder/model-00001-of-00002.safetensors +3 -0

- text_encoder/model-00002-of-00002.safetensors +3 -0

- text_encoder/model.safetensors.index.json +226 -0

- tokenizer/added_tokens.json +102 -0

- tokenizer/special_tokens_map.json +125 -0

- tokenizer/spiece.model +3 -0

- tokenizer/tokenizer_config.json +940 -0

- transformer/config.json +32 -0

- transformer/diffusion_pytorch_model.safetensors +3 -0

- vae/config.json +39 -0

- vae/diffusion_pytorch_model.safetensors +3 -0

LICENSE

CHANGED

|

@@ -0,0 +1,71 @@

|

| 1 |

+

The CogVideoX License

|

| 2 |

+

|

| 3 |

+

1. Definitions

|

| 4 |

+

|

| 5 |

+

“Licensor” means the CogVideoX Model Team that distributes its Software.

|

| 6 |

+

|

| 7 |

+

“Software” means the CogVideoX model parameters made available under this license.

|

| 8 |

+

|

| 9 |

+

2. License Grant

|

| 10 |

+

|

| 11 |

+

Under the terms and conditions of this license, the licensor hereby grants you a non-exclusive, worldwide, non-transferable, non-sublicensable, revocable, royalty-free copyright license. The intellectual property rights of the generated content belong to the user to the extent permitted by applicable local laws.

|

| 12 |

+

This license allows you to freely use all open-source models in this repository for academic research. Users who wish to use the models for commercial purposes must register and obtain a basic commercial license in https://open.bigmodel.cn/mla/form .

|

| 13 |

+

Users who have registered and obtained the basic commercial license can use the models for commercial activities for free, but must comply with all terms and conditions of this license. Additionally, the number of service users (visits) for your commercial activities must not exceed 1 million visits per month.

|

| 14 |

+

If the number of service users (visits) for your commercial activities exceeds 1 million visits per month, you need to contact our business team to obtain more commercial licenses.

|

| 15 |

+

The above copyright statement and this license statement should be included in all copies or significant portions of this software.

|

| 16 |

+

|

| 17 |

+

3. Restriction

|

| 18 |

+

|

| 19 |

+

You will not use, copy, modify, merge, publish, distribute, reproduce, or create derivative works of the Software, in whole or in part, for any military, or illegal purposes.

|

| 20 |

+

|

| 21 |

+

You will not use the Software for any act that may undermine China's national security and national unity, harm the public interest of society, or infringe upon the rights and interests of human beings.

|

| 22 |

+

|

| 23 |

+

4. Disclaimer

|

| 24 |

+

|

| 25 |

+

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE.

|

| 26 |

+

|

| 27 |

+

5. Limitation of Liability

|

| 28 |

+

|

| 29 |

+

EXCEPT TO THE EXTENT PROHIBITED BY APPLICABLE LAW, IN NO EVENT AND UNDER NO LEGAL THEORY, WHETHER BASED IN TORT, NEGLIGENCE, CONTRACT, LIABILITY, OR OTHERWISE WILL ANY LICENSOR BE LIABLE TO YOU FOR ANY DIRECT, INDIRECT, SPECIAL, INCIDENTAL, EXEMPLARY, OR CONSEQUENTIAL DAMAGES, OR ANY OTHER COMMERCIAL LOSSES, EVEN IF THE LICENSOR HAS BEEN ADVISED OF THE POSSIBILITY OF SUCH DAMAGES.

|

| 30 |

+

|

| 31 |

+

6. Dispute Resolution

|

| 32 |

+

|

| 33 |

+

This license shall be governed and construed in accordance with the laws of People’s Republic of China. Any dispute arising from or in connection with this License shall be submitted to Haidian District People's Court in Beijing.

|

| 34 |

+

|

| 35 |

+

Note that the license is subject to update to a more comprehensive version. For any questions related to the license and copyright, please contact us at license@zhipuai.cn.

|

| 36 |

+

|

| 37 |

+

1. 定义

|

| 38 |

+

|

| 39 |

+

“许可方”是指分发其软件的 CogVideoX 模型团队。

|

| 40 |

+

|

| 41 |

+

“软件”是指根据本许可提供的 CogVideoX 模型参数。

|

| 42 |

+

|

| 43 |

+

2. 许可授予

|

| 44 |

+

|

| 45 |

+

根据本许可的条款和条件,许可方特此授予您非排他性、全球性、不可转让、不可再许可、可撤销、免版税的版权许可。生成内容的知识产权所属,可根据适用当地法律的规定,在法律允许的范围内由用户享有生成内容的知识产权或其他权利。

|

| 46 |

+

本许可允许您免费使用本仓库中的所有开源模型进行学术研究。对于希望将模型用于商业目的的用户,需在 https://open.bigmodel.cn/mla/form 完成登记并获得基础商用授权。

|

| 47 |

+

|

| 48 |

+

经过登记并获得基础商用授权的用户可以免费使用本模型进行商业活动,但必须遵守本许可的所有条款和条件。

|

| 49 |

+

在本许可证下,您的商业活动的服务用户数量(访问量)不得超过100万人次访问 / 每月。如果超过,您需要与我们的商业团队联系以获得更多的商业许可。

|

| 50 |

+

上述版权声明和本许可声明应包含在本软件的所有副本或重要部分中。

|

| 51 |

+

|

| 52 |

+

3.限制

|

| 53 |

+

|

| 54 |

+

您不得出于任何军事或非法目的使用、复制、修改、合并、发布、分发、复制或创建本软件的全部或部分衍生作品。

|

| 55 |

+

|

| 56 |

+

您不得利用本软件从事任何危害国家安全和国家统一、危害社会公共利益、侵犯人身权益的行为。

|

| 57 |

+

|

| 58 |

+

4.免责声明

|

| 59 |

+

|

| 60 |

+

本软件“按原样”提供,不提供任何明示或暗示的保证,包括但不限于对适销性、特定用途的适用性和非侵权性的保证。

|

| 61 |

+

在任何情况下,作者或版权持有人均不对任何索赔、损害或其他责任负责,无论是在合同诉讼、侵权行为还是其他方面,由软件或软件的使用或其他交易引起、由软件引起或与之相关 软件。

|

| 62 |

+

|

| 63 |

+

5. 责任限制

|

| 64 |

+

|

| 65 |

+

除适用法律禁止的范围外,在任何情况下且根据任何法律理论,无论是基于侵权行为、疏忽、合同、责任或其他原因,任何许可方均不对您承担任何直接、间接、特殊、偶然、示范性、 或间接损害,或任何其他商业损失,即使许可人已被告知此类损害的可能性。

|

| 66 |

+

|

| 67 |

+

6.争议解决

|

| 68 |

+

|

| 69 |

+

本许可受中华人民共和国法律管辖并按其解释。 因本许可引起的或与本许可有关的任何争议应提交北京市海淀区人民法院。

|

| 70 |

+

|

| 71 |

+

请注意,许可证可能会更新到更全面的版本。 有关许可和版权的任何问题,请通过 license@zhipuai.cn 与我们联系。

|

README.md

CHANGED

|

@@ -1,5 +1,492 @@

|

|

| 1 |

-

---

|

| 2 |

-

|

| 3 |

-

|

| 4 |

-

|

| 5 |

-

|

| 1 |

+

---

|

| 2 |

+

frameworks:

|

| 3 |

+

- Pytorch

|

| 4 |

+

license: other

|

| 5 |

+

tasks:

|

| 6 |

+

- text-to-video-synthesis

|

| 7 |

+

|

| 8 |

+

#model-type:

|

| 9 |

+

## e.g. gpt, phi, llama, chatglm, baichuan

|

| 10 |

+

#- gpt

|

| 11 |

+

|

| 12 |

+

#domain:

|

| 13 |

+

## e.g. nlp, cv, audio, multi-modal

|

| 14 |

+

#- nlp

|

| 15 |

+

|

| 16 |

+

#language:

|

| 17 |

+

## language code list: https://help.aliyun.com/document_detail/215387.html?spm=a2c4g.11186623.0.0.9f8d7467kni6Aa

|

| 18 |

+

#- cn

|

| 19 |

+

|

| 20 |

+

#metrics:

|

| 21 |

+

## e.g. CIDEr, BLEU, ROUGE

|

| 22 |

+

#- CIDEr

|

| 23 |

+

|

| 24 |

+

#tags:

|

| 25 |

+

## custom tags, including training methods such as pretrained, fine-tuned, instruction-tuned, RL-tuned, and others

|

| 26 |

+

#- pretrained

|

| 27 |

+

|

| 28 |

+

#tools:

|

| 29 |

+

## e.g. vllm, fastchat, llamacpp, AdaSeq

|

| 30 |

+

#- vllm

|

| 31 |

+

---

|

| 32 |

+

# CogVideoX-Fun

|

| 33 |

+

|

| 34 |

+

😊 Welcome!

|

| 35 |

+

|

| 36 |

+

[English](./README_en.md) | 简体中文

|

| 37 |

+

|

| 38 |

+

# Table of Contents

|

| 39 |

+

- [Table of Contents](#table-of-contents)

|

| 40 |

+

- [Introduction](#introduction)

|

| 41 |

+

- [Quick Start](#quick-start)

|

| 42 |

+

- [Video Results](#video-results)

|

| 43 |

+

- [How to Use](#how-to-use)

|

| 44 |

+

- [Model Zoo](#model-zoo)

|

| 45 |

+

- [Future Plans](#future-plans)

|

| 46 |

+

- [References](#references)

|

| 47 |

+

- [License](#license)

|

| 48 |

+

|

| 49 |

+

# Introduction

|

| 50 |

+

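CogVideoX-Fun is a pipeline modified from the CogVideoX architecture: a CogVideoX with more flexible generation conditions. It can be used to generate AI images and videos and to train Diffusion Transformer baseline models and LoRA models. We support direct prediction from pretrained CogVideoX-Fun models, generating videos at different resolutions, roughly 6 seconds long at 8 fps (1 to 49 frames). Users can also train their own baseline and LoRA models to apply style changes.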
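
The frame count and frame rate quoted above fix the clip length: 49 frames at 8 fps is a little over 6 seconds. A minimal Python sketch of that arithmetic follows; the helper name `clip_seconds` is made up for illustration and is not part of CogVideoX-Fun.

```python
def clip_seconds(num_frames: int, fps: int = 8) -> float:
    """Return the playback length in seconds of a clip with num_frames frames."""
    if num_frames < 1 or fps < 1:
        raise ValueError("num_frames and fps must be positive")
    return num_frames / fps


# 49 frames at 8 fps is the longest clip mentioned above.
print(clip_seconds(49))  # 6.125
```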

|

| 51 |

+

|

| 52 |

+

We will gradually add quick-start support for different platforms; see [Quick Start](#quick-start).

|

| 53 |

+

|

| 54 |

+

What's new:

|

| 55 |

+

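- Train LoRA with reward backpropagation to optimize generated videos so that they align better with human preferences; [more information](scripts/README_TRAIN_REWARD.md). A new version of the control model supports different control conditions such as Canny, Depth, Pose, and MLSD. [2024.11.21]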

|

| 56 |

+

- CogVideoX-Fun Control is now supported in diffusers. Thanks to [a-r-r-o-w](https://github.com/a-r-r-o-w) for contributing support in this [PR](https://github.com/huggingface/diffusers/pull/9671). See the [documentation](https://huggingface.co/docs/diffusers/main/en/api/pipelines/cogvideox) for more information. [2024.10.16]

|

| 57 |

+

- Retrained the i2v model with added noise so that videos have a larger range of motion. Uploaded the control model training code and the Control model. [2024.09.29]

|

| 58 |

+

- Created the code! Windows and Linux are now supported. The 2b and 5b models support video generation at any resolution from 256x256x49 up to 1024x1024x49. [2024.09.18]

|

| 59 |

+

|

| 60 |

+

Function overview:

|

| 61 |

+

- [Data Preprocessing](#data-preprocess)

|

| 62 |

+

- [Train DiT](#dit-train)

|

| 63 |

+

- [Video Generation](#video-gen)

|

| 64 |

+

|

| 65 |

+

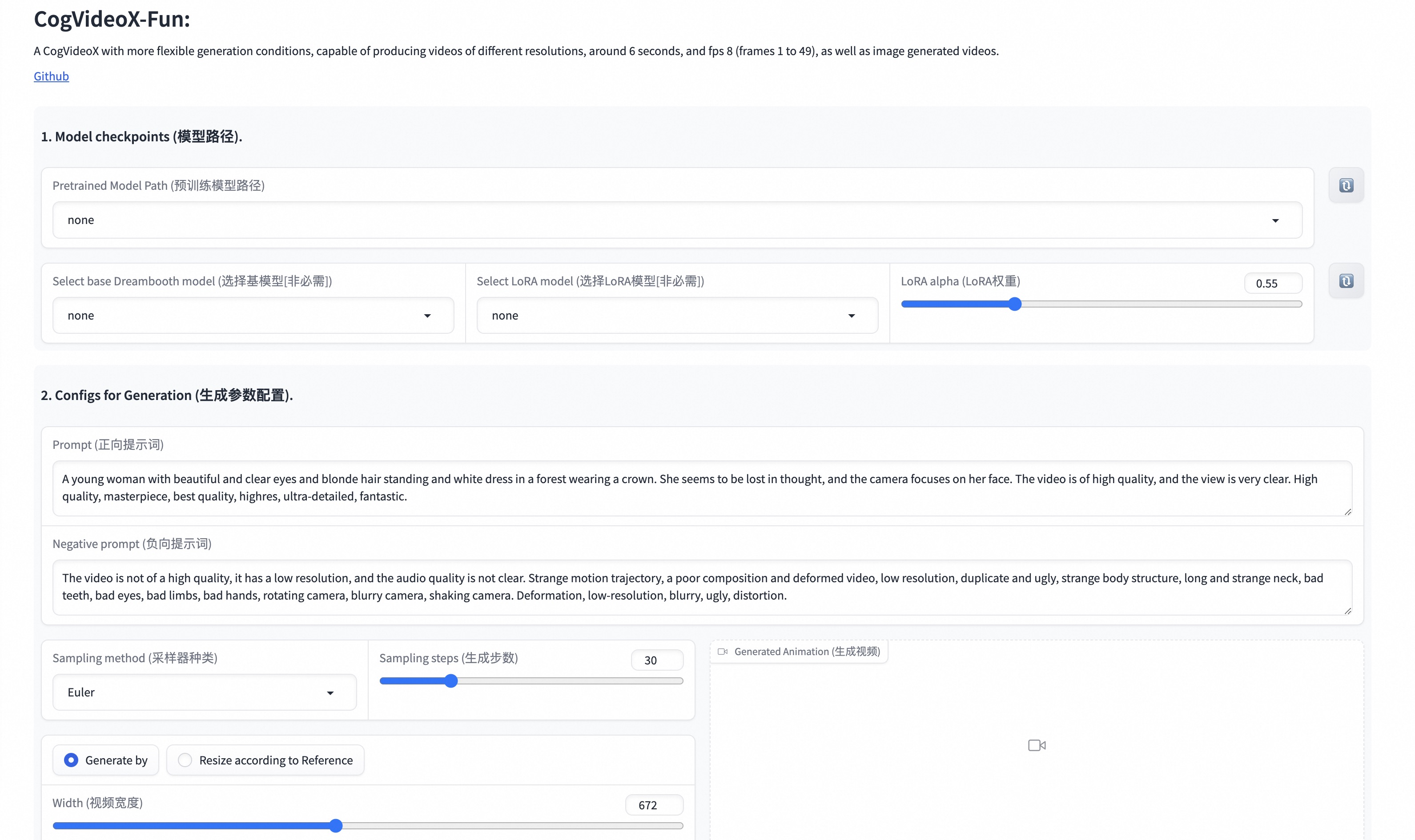

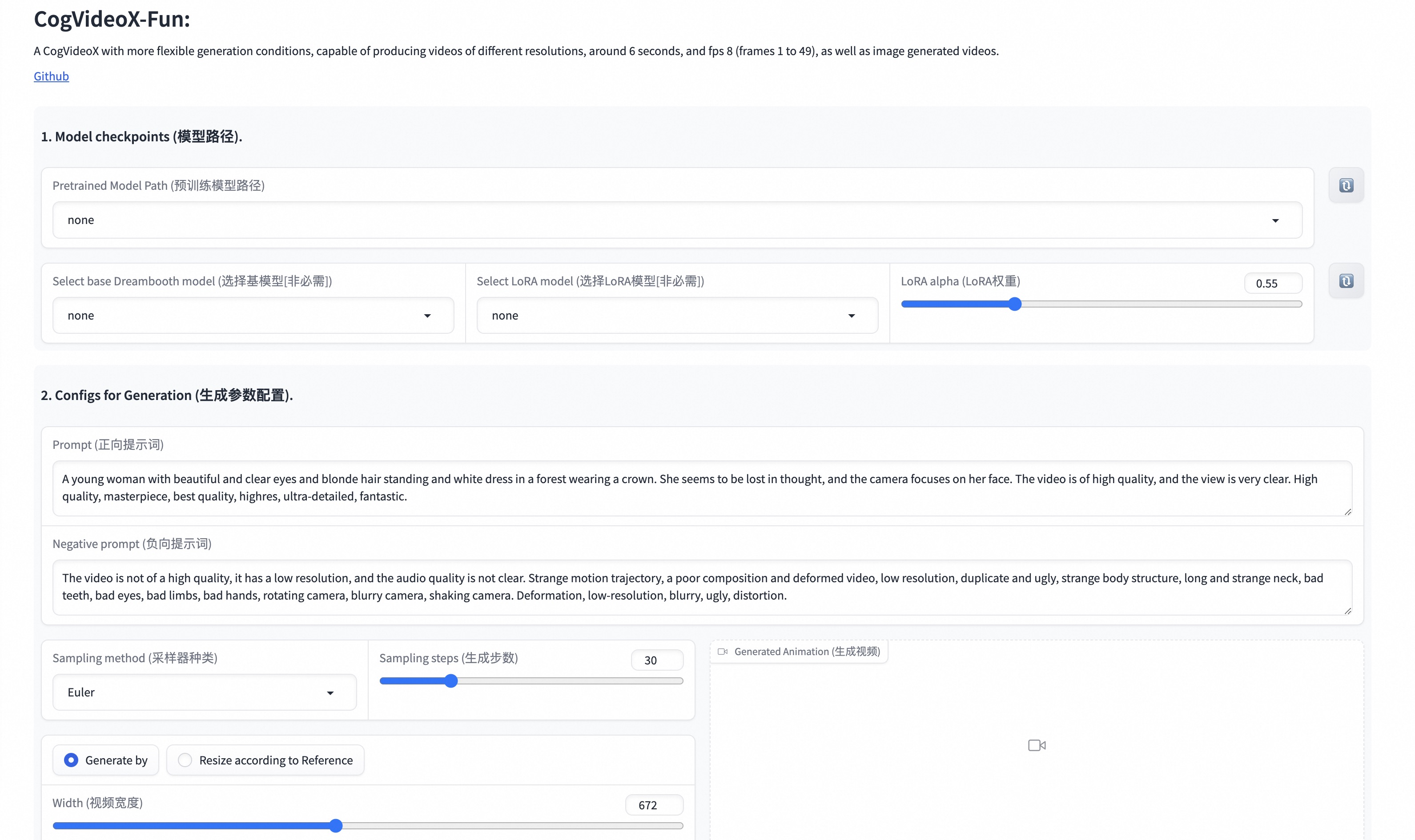

Our UI looks like this:

|

| 66 |

+

|

| 67 |

+

|

| 68 |

+

# Quick Start

|

| 69 |

+

### 1. Cloud usage: AliyunDSW/Docker

|

| 70 |

+

#### a. From Alibaba Cloud DSW

|

| 71 |

+

DSW offers free GPU time. Each user can apply for it once, and it is valid for 3 months after the application.

|

| 72 |

+

|

| 73 |

+

Alibaba Cloud provides free GPU time through [Freetier](https://free.aliyun.com/?product=9602825&crowd=enterprise&spm=5176.28055625.J_5831864660.1.e939154aRgha4e&scm=20140722.M_9974135.P_110.MO_1806-ID_9974135-MID_9974135-CID_30683-ST_8512-V_1). Claim it and use it in Alibaba Cloud PAI-DSW to start CogVideoX-Fun within 5 minutes.

|

| 74 |

+

|

| 75 |

+

[DSW Notebook](https://gallery.pai-ml.com/#/preview/deepLearning/cv/cogvideox_fun)

|

| 76 |

+

|

| 77 |

+

#### b. From ComfyUI

|

| 78 |

+

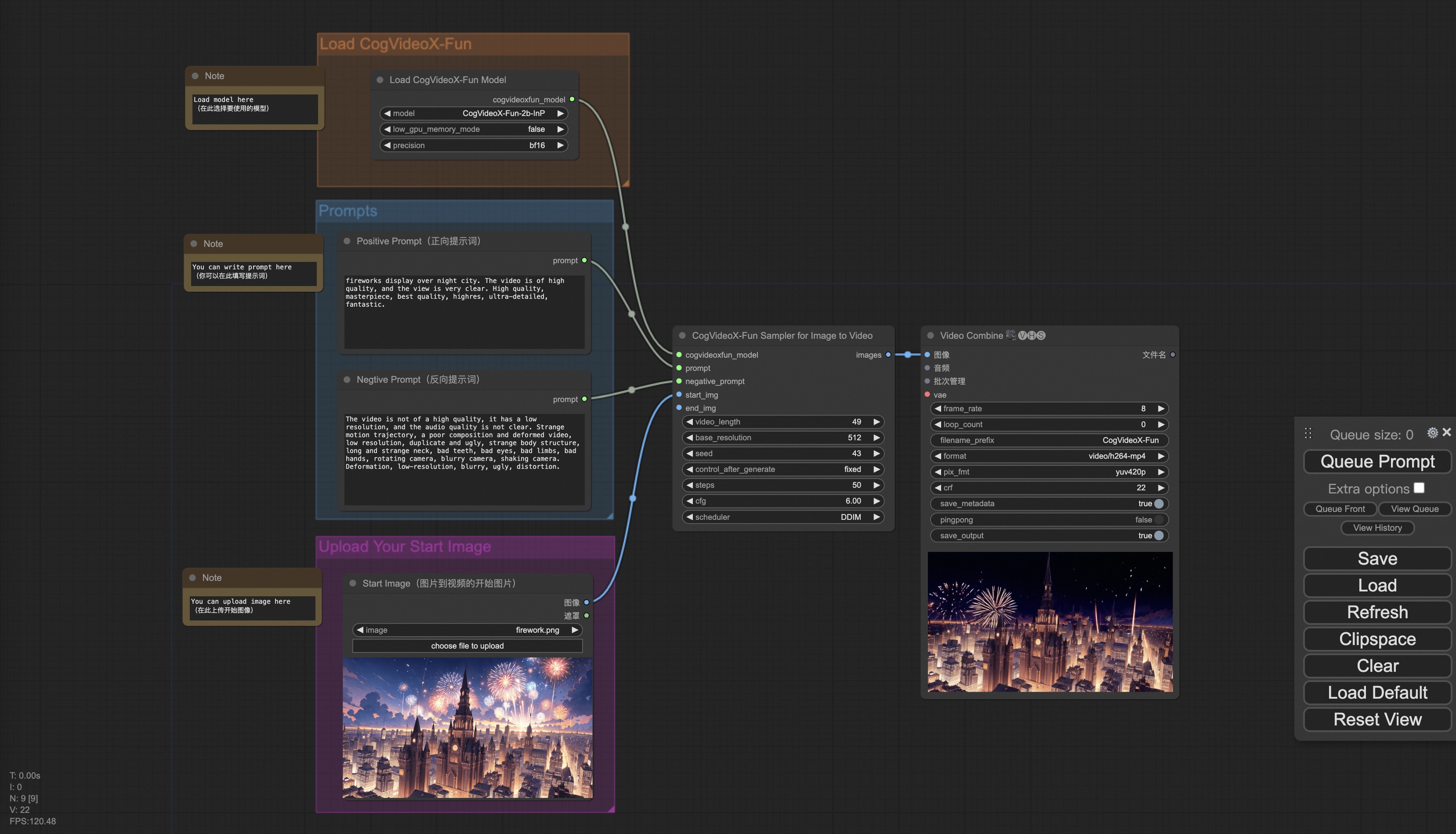

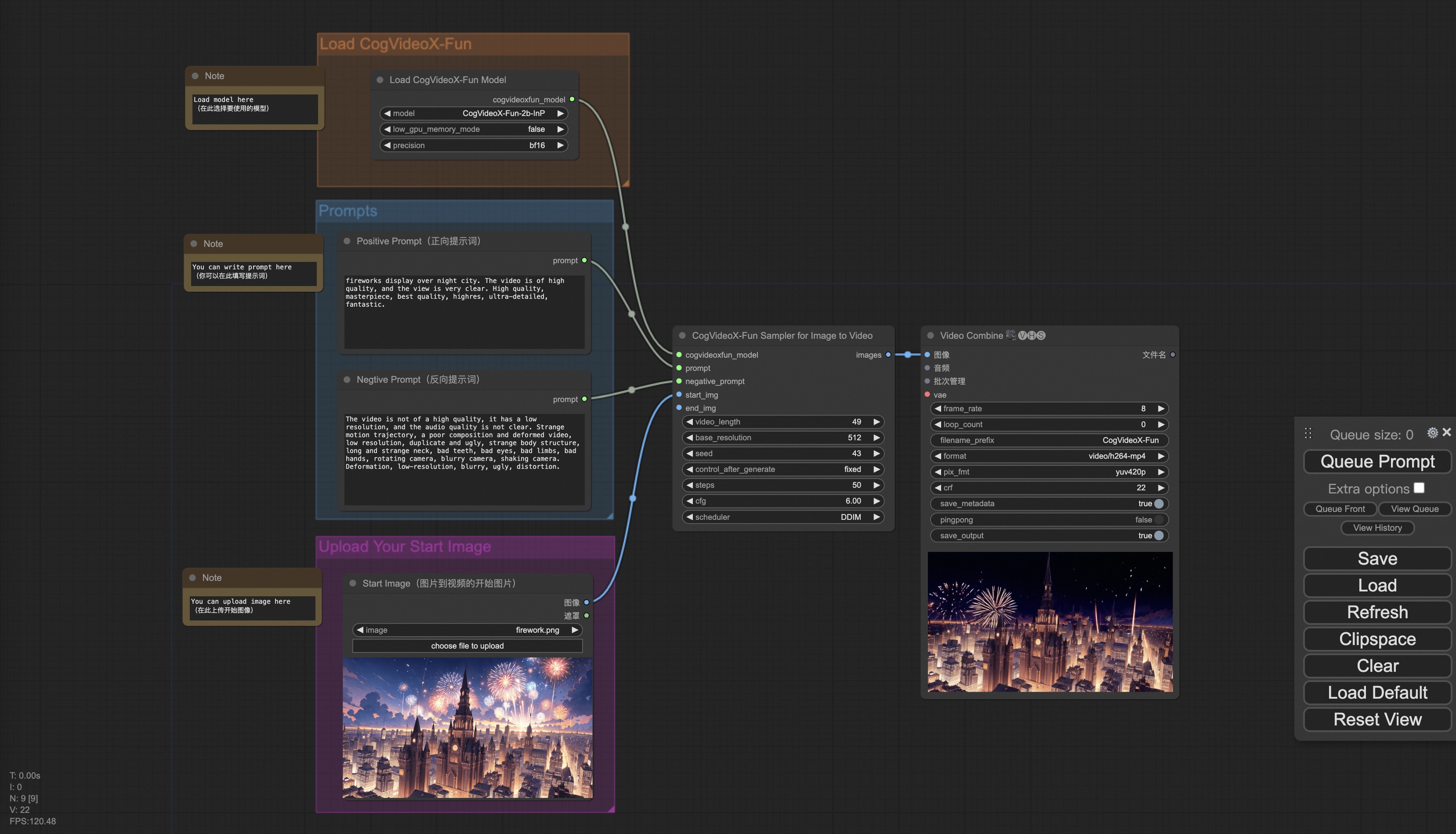

Our ComfyUI interface looks like this; see the [ComfyUI README](comfyui/README.md) for details.

|

| 79 |

+

|

| 80 |

+

|

| 81 |

+

#### c. From Docker

|

| 82 |

+

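When using Docker, make sure the GPU driver and the CUDA environment are correctly installed on the machine, then run the following commands in order: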

|

| 83 |

+

|

| 84 |

+

```

|

| 85 |

+

# pull image

|

| 86 |

+

docker pull mybigpai-public-registry.cn-beijing.cr.aliyuncs.com/easycv/torch_cuda:cogvideox_fun

|

| 87 |

+

|

| 88 |

+

# enter image

|

| 89 |

+

docker run -it -p 7860:7860 --network host --gpus all --security-opt seccomp:unconfined --shm-size 200g mybigpai-public-registry.cn-beijing.cr.aliyuncs.com/easycv/torch_cuda:cogvideox_fun

|

| 90 |

+

|

| 91 |

+

# clone code

|

| 92 |

+

git clone https://github.com/aigc-apps/CogVideoX-Fun.git

|

| 93 |

+

|

| 94 |

+

# enter CogVideoX-Fun's dir

|

| 95 |

+

cd CogVideoX-Fun

|

| 96 |

+

|

| 97 |

+

# download weights

|

| 98 |

+

mkdir -p models/Diffusion_Transformer

|

| 99 |

+

mkdir -p models/Personalized_Model

|

| 100 |

+

|

| 101 |

+

wget https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/cogvideox_fun/Diffusion_Transformer/CogVideoX-Fun-V1.1-2b-InP.tar.gz -O models/Diffusion_Transformer/CogVideoX-Fun-V1.1-2b-InP.tar.gz

|

| 102 |

+

|

| 103 |

+

cd models/Diffusion_Transformer/

|

| 104 |

+

tar -xvf CogVideoX-Fun-V1.1-2b-InP.tar.gz

|

| 105 |

+

cd ../../

|

| 106 |

+

```

|

| 107 |

+

|

| 108 |

+

### 2. Local installation: environment check/download/install

|

| 109 |

+

#### a. Environment check

|

| 110 |

+

We have verified that CogVideoX-Fun runs in the following environments:

|

| 111 |

+

|

| 112 |

+

Details for Windows:

|

| 113 |

+

- OS: Windows 10

|

| 114 |

+

- python: python3.10 & python3.11

|

| 115 |

+

- pytorch: torch2.2.0

|

| 116 |

+

- CUDA: 11.8 & 12.1

|

| 117 |

+

- CUDNN: 8+

|

| 118 |

+

- GPU: Nvidia-3060 12G & Nvidia-3090 24G

|

| 119 |

+

|

| 120 |

+

Details for Linux:

|

| 121 |

+

- OS: Ubuntu 20.04, CentOS

|

| 122 |

+

- python: python3.10 & python3.11

|

| 123 |

+

- pytorch: torch2.2.0

|

| 124 |

+

- CUDA: 11.8 & 12.1

|

| 125 |

+

- CUDNN: 8+

|

| 126 |

+

- GPU: Nvidia-V100 16G & Nvidia-A10 24G & Nvidia-A100 40G & Nvidia-A100 80G

|

| 127 |

+

|

| 128 |

+

About 60 GB of free disk space is required; please check!

|

| 129 |

+

|

| 130 |

+

#### b. Weight placement

|

| 131 |

+

It is best to place the [weights](#model-zoo) along the following paths:

|

| 132 |

+

|

| 133 |

+

```

|

| 134 |

+

📦 models/

|

| 135 |

+

├── 📂 Diffusion_Transformer/

|

| 136 |

+

│ ├── 📂 CogVideoX-Fun-V1.1-2b-InP/

|

| 137 |

+

│ └── 📂 CogVideoX-Fun-V1.1-5b-InP/

|

| 138 |

+

├── 📂 Personalized_Model/

|

| 139 |

+

│ └── your trained transformer model / your trained lora model (for UI load)

|

| 140 |

+

```
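
Before launching the UI or a prediction script, it can help to confirm that the layout above is in place. The sketch below is only an illustration: the function `missing_model_dirs` and the `EXPECTED_DIRS` list are hypothetical helpers, not part of the repository, and the list should be trimmed to the weights you actually downloaded.

```python
from pathlib import Path

# Directory names copied from the layout above (assumed; adjust to your download).
EXPECTED_DIRS = [
    "Diffusion_Transformer/CogVideoX-Fun-V1.1-2b-InP",
    "Diffusion_Transformer/CogVideoX-Fun-V1.1-5b-InP",
    "Personalized_Model",
]


def missing_model_dirs(models_root: str, expected=EXPECTED_DIRS) -> list[str]:
    """Return the expected sub-directories that do not exist under models_root."""
    root = Path(models_root)
    return [rel for rel in expected if not (root / rel).is_dir()]


if __name__ == "__main__":
    # Report anything that still needs to be downloaded or unpacked.
    for rel in missing_model_dirs("models"):
        print(f"missing: models/{rel}")
```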

|

| 141 |

+

|

| 142 |

+

# Video Results

|

| 143 |

+

All results shown here were produced with image-to-video generation.

|

| 144 |

+

|

| 145 |

+

### CogVideoX-Fun-V1.1-5B

|

| 146 |

+

|

| 147 |

+

Resolution-1024

|

| 148 |

+

|

| 149 |

+

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 150 |

+

<tr>

|

| 151 |

+

<td>

|

| 152 |

+

<video src="https://github.com/user-attachments/assets/34e7ec8f-293e-4655-bb14-5e1ee476f788" width="100%" controls autoplay loop></video>

|

| 153 |

+

</td>

|

| 154 |

+

<td>

|

| 155 |

+

<video src="https://github.com/user-attachments/assets/7809c64f-eb8c-48a9-8bdc-ca9261fd5434" width="100%" controls autoplay loop></video>

|

| 156 |

+

</td>

|

| 157 |

+

<td>

|

| 158 |

+

<video src="https://github.com/user-attachments/assets/8e76aaa4-c602-44ac-bcb4-8b24b72c386c" width="100%" controls autoplay loop></video>

|

| 159 |

+

</td>

|

| 160 |

+

<td>

|

| 161 |

+

<video src="https://github.com/user-attachments/assets/19dba894-7c35-4f25-b15c-384167ab3b03" width="100%" controls autoplay loop></video>

|

| 162 |

+

</td>

|

| 163 |

+

</tr>

|

| 164 |

+

</table>

|

| 165 |

+

|

| 166 |

+

|

| 167 |

+

Resolution-768

|

| 168 |

+

|

| 169 |

+

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 170 |

+

<tr>

|

| 171 |

+

<td>

|

| 172 |

+

<video src="https://github.com/user-attachments/assets/0bc339b9-455b-44fd-8917-80272d702737" width="100%" controls autoplay loop></video>

|

| 173 |

+

</td>

|

| 174 |

+

<td>

|

| 175 |

+

<video src="https://github.com/user-attachments/assets/70a043b9-6721-4bd9-be47-78b7ec5c27e9" width="100%" controls autoplay loop></video>

|

| 176 |

+

</td>

|

| 177 |

+

<td>

|

| 178 |

+

<video src="https://github.com/user-attachments/assets/d5dd6c09-14f3-40f8-8b6d-91e26519b8ac" width="100%" controls autoplay loop></video>

|

| 179 |

+

</td>

|

| 180 |

+

<td>

|

| 181 |

+

<video src="https://github.com/user-attachments/assets/9327e8bc-4f17-46b0-b50d-38c250a9483a" width="100%" controls autoplay loop></video>

|

| 182 |

+

</td>

|

| 183 |

+

</tr>

|

| 184 |

+

</table>

|

| 185 |

+

|

| 186 |

+

Resolution-512

|

| 187 |

+

|

| 188 |

+

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 189 |

+

<tr>

|

| 190 |

+

<td>

|

| 191 |

+

<video src="https://github.com/user-attachments/assets/ef407030-8062-454d-aba3-131c21e6b58c" width="100%" controls autoplay loop></video>

|

| 192 |

+

</td>

|

| 193 |

+

<td>

|

| 194 |

+

<video src="https://github.com/user-attachments/assets/7610f49e-38b6-4214-aa48-723ae4d1b07e" width="100%" controls autoplay loop></video>

|

| 195 |

+

</td>

|

| 196 |

+

<td>

|

| 197 |

+

<video src="https://github.com/user-attachments/assets/1fff0567-1e15-415c-941e-53ee8ae2c841" width="100%" controls autoplay loop></video>

|

| 198 |

+

</td>

|

| 199 |

+

<td>

|

| 200 |

+

<video src="https://github.com/user-attachments/assets/bcec48da-b91b-43a0-9d50-cf026e00fa4f" width="100%" controls autoplay loop></video>

|

| 201 |

+

</td>

|

| 202 |

+

</tr>

|

| 203 |

+

</table>

|

| 204 |

+

|

| 205 |

+

### CogVideoX-Fun-V1.1-5B with Reward Backpropagation

|

| 206 |

+

|

| 207 |

+

<table border="0" style="width: 100%; text-align: center; margin-top: 20px;">

|

| 208 |

+

<thead>

|

| 209 |

+

<tr>

|

| 210 |

+

<th style="text-align: center;" width="10%">Prompt</th>

|

| 211 |

+

<th style="text-align: center;" width="30%">CogVideoX-Fun-V1.1-5B</th>

|

| 212 |

+

<th style="text-align: center;" width="30%">CogVideoX-Fun-V1.1-5B <br> HPSv2.1 Reward LoRA</th>

|

| 213 |

+

<th style="text-align: center;" width="30%">CogVideoX-Fun-V1.1-5B <br> MPS Reward LoRA</th>

|

| 214 |

+

</tr>

|

| 215 |

+

</thead>

|

| 216 |

+

<tr>

|

| 217 |

+

<td>

|

| 218 |

+

Pig with wings flying above a diamond mountain

|

| 219 |

+

</td>

|

| 220 |

+

<td>

|

| 221 |

+

<video src="https://github.com/user-attachments/assets/6682f507-4ca2-45e9-9d76-86e2d709efb3" width="100%" controls autoplay loop></video>

|

| 222 |

+

</td>

|

| 223 |

+

<td>

|

| 224 |

+

<video src="https://github.com/user-attachments/assets/ec9219a2-96b3-44dd-b918-8176b2beb3b0" width="100%" controls autoplay loop></video>

|

| 225 |

+

</td>

|

| 226 |

+

<td>

|

| 227 |

+

<video src="https://github.com/user-attachments/assets/a75c6a6a-0b69-4448-afc0-fda3c7955ba0" width="100%" controls autoplay loop></video>

|

| 228 |

+

</td>

|

| 229 |

+

</tr>

|

| 230 |

+

<tr>

|

| 231 |

+

<td>

|

| 232 |

+

A dog runs through a field while a cat climbs a tree

|

| 233 |

+

</td>

|

| 234 |

+

<td>

|

| 235 |

+

<video src="https://github.com/user-attachments/assets/0392d632-2ec3-46b4-8867-0da1db577b6d" width="100%" controls autoplay loop></video>

|

| 236 |

+

</td>

|

| 237 |

+

<td>

|

| 238 |

+

<video src="https://github.com/user-attachments/assets/7d8c729d-6afb-408e-b812-67c40c3aaa96" width="100%" controls autoplay loop></video>

|

| 239 |

+

</td>

|

| 240 |

+

<td>

|

| 241 |

+

<video src="https://github.com/user-attachments/assets/dcd1343c-7435-4558-b602-9c0fa08cbd59" width="100%" controls autoplay loop></video>

|

| 242 |

+

</td>

|

| 243 |

+

</tr>

|

| 244 |

+

</table>

|

| 245 |

+

|

| 246 |

+

### CogVideoX-Fun-V1.1-5B-Control

|

| 247 |

+

|

| 248 |

+

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 249 |

+

<tr>

|

| 250 |

+

<td>

|

| 251 |

+

<video src="https://github.com/user-attachments/assets/53002ce2-dd18-4d4f-8135-b6f68364cabd" width="100%" controls autoplay loop></video>

|

| 252 |

+

</td>

|

| 253 |

+

<td>

|

| 254 |

+

<video src="https://github.com/user-attachments/assets/fce43c0b-81fa-4ab2-9ca7-78d786f520e6" width="100%" controls autoplay loop></video>

|

| 255 |

+

</td>

|

| 256 |

+

<td>

|

| 257 |

+

<video src="https://github.com/user-attachments/assets/b208b92c-5add-4ece-a200-3dbbe47b93c3" width="100%" controls autoplay loop></video>

|

| 258 |

+

</td>

|

| 259 |

+

<tr>

|

| 260 |

+

<td>

|

| 261 |

+

A young woman with beautiful clear eyes and blonde hair, wearing white clothes and twisting her body, with the camera focused on her face. High quality, masterpiece, best quality, high resolution, ultra-fine, dreamlike.

|

| 262 |

+

</td>

|

| 263 |

+

<td>

|

| 264 |

+

A young woman with beautiful clear eyes and blonde hair, wearing white clothes and twisting her body, with the camera focused on her face. High quality, masterpiece, best quality, high resolution, ultra-fine, dreamlike.

|

| 265 |

+

</td>

|

| 266 |

+

<td>

|

| 267 |

+

A young bear.

|

| 268 |

+

</td>

|

| 269 |

+

</tr>

|

| 270 |

+

<tr>

|

| 271 |

+

<td>

|

| 272 |

+

<video src="https://github.com/user-attachments/assets/ea908454-684b-4d60-b562-3db229a250a9" width="100%" controls autoplay loop></video>

|

| 273 |

+

</td>

|

| 274 |

+

<td>

|

| 275 |

+

<video src="https://github.com/user-attachments/assets/ffb7c6fc-8b69-453b-8aad-70dfae3899b9" width="100%" controls autoplay loop></video>

|

| 276 |

+

</td>

|

| 277 |

+

<td>

|

| 278 |

+

<video src="https://github.com/user-attachments/assets/d3f757a3-3551-4dcb-9372-7a61469813f5" width="100%" controls autoplay loop></video>

|

| 279 |

+

</td>

|

| 280 |

+

</tr>

|

| 281 |

+

</table>

|

| 282 |

+

|

| 283 |

+

### CogVideoX-Fun-V1.1-5B-Pose

|

| 284 |

+

|

| 285 |

+

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 286 |

+

<tr>

|

| 287 |

+

<td>

|

| 288 |

+

Resolution-512

|

| 289 |

+

</td>

|

| 290 |

+

<td>

|

| 291 |

+

Resolution-768

|

| 292 |

+

</td>

|

| 293 |

+

<td>

|

| 294 |

+

Resolution-1024

|

| 295 |

+

</td>

|

| 296 |

+

<tr>

|

| 297 |

+

<td>

|

| 298 |

+

<video src="https://github.com/user-attachments/assets/a746df51-9eb7-4446-bee5-2ee30285c143" width="100%" controls autoplay loop></video>

|

| 299 |

+

</td>

|

| 300 |

+

<td>

|

| 301 |

+

<video src="https://github.com/user-attachments/assets/db295245-e6aa-43be-8c81-32cb411f1473" width="100%" controls autoplay loop></video>

|

| 302 |

+

</td>

|

| 303 |

+

<td>

|

| 304 |

+

<video src="https://github.com/user-attachments/assets/ec9875b2-fde0-48e1-ab7e-490cee51ef40" width="100%" controls autoplay loop></video>

|

| 305 |

+

</td>

|

| 306 |

+

</tr>

|

| 307 |

+

</table>

|

| 308 |

+

|

| 309 |

+

### CogVideoX-Fun-V1.1-2B

|

| 310 |

+

|

| 311 |

+

Resolution-768

|

| 312 |

+

|

| 313 |

+

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 314 |

+

<tr>

|

| 315 |

+

<td>

|

| 316 |

+

<video src="https://github.com/user-attachments/assets/03235dea-980e-4fc5-9c41-e40a5bc1b6d0" width="100%" controls autoplay loop></video>

|

| 317 |

+

</td>

|

| 318 |

+

<td>

|

| 319 |

+

<video src="https://github.com/user-attachments/assets/f7302648-5017-47db-bdeb-4d893e620b37" width="100%" controls autoplay loop></video>

|

| 320 |

+

</td>

|

| 321 |

+

<td>

|

| 322 |

+

<video src="https://github.com/user-attachments/assets/cbadf411-28fa-4b87-813d-da63ff481904" width="100%" controls autoplay loop></video>

|

| 323 |

+

</td>

|

| 324 |

+

<td>

|

| 325 |

+

<video src="https://github.com/user-attachments/assets/87cc9d0b-b6fe-4d2d-b447-174513d169ab" width="100%" controls autoplay loop></video>

|

| 326 |

+

</td>

|

| 327 |

+

</tr>

|

| 328 |

+

</table>

|

| 329 |

+

|

| 330 |

+

### CogVideoX-Fun-V1.1-2B-Pose

|

| 331 |

+

|

| 332 |

+

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 333 |

+

<tr>

|

| 334 |

+

<td>

|

| 335 |

+

Resolution-512

|

| 336 |

+

</td>

|

| 337 |

+

<td>

|

| 338 |

+

Resolution-768

|

| 339 |

+

</td>

|

| 340 |

+

<td>

|

| 341 |

+

Resolution-1024

|

| 342 |

+

</td>

|

| 343 |

+

<tr>

|

| 344 |

+

<td>

|

| 345 |

+

<video src="https://github.com/user-attachments/assets/487bcd7b-1b7f-4bb4-95b5-96a6b6548b3e" width="100%" controls autoplay loop></video>

|

| 346 |

+

</td>

|

| 347 |

+

<td>

|

| 348 |

+

<video src="https://github.com/user-attachments/assets/2710fd18-8489-46e4-8086-c237309ae7f6" width="100%" controls autoplay loop></video>

|

| 349 |

+

</td>

|

| 350 |

+

<td>

|

| 351 |

+

<video src="https://github.com/user-attachments/assets/b79513db-7747-4512-b86c-94f9ca447fe2" width="100%" controls autoplay loop></video>

|

| 352 |

+

</td>

|

| 353 |

+

</tr>

|

| 354 |

+

</table>

|

| 355 |

+

|

| 356 |

+

# How to Use

|

| 357 |

+

|

| 358 |

+

<h3 id="video-gen">1. Generation </h3>

|

| 359 |

+

|

| 360 |

+

#### a. Video generation

|

| 361 |

+

##### i. Run the Python file

|

| 362 |

+

- Step 1: Download the corresponding [weights](#model-zoo) and place them in the models folder.

|

| 363 |

+

- Step 2: Edit prompt, neg_prompt, guidance_scale, and seed in predict_t2v.py.

|

| 364 |

+

- Step 3: Run predict_t2v.py and wait for the result; outputs are saved in the samples/cogvideox-fun-videos-t2v folder.

|

| 365 |

+

- Step 4: To combine another backbone or a LoRA that you trained yourself, adjust the backbone path and lora_path in predict_t2v.py as needed.

|

| 366 |

+

|

| 367 |

+

##### ii. Through the UI

|

| 368 |

+

- Step 1: Download the corresponding [weights](#model-zoo) and place them in the models folder.

|

| 369 |

+

- Step 2: Run app.py to open the Gradio page.

|

| 370 |

+

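- Step 3: Select the generation model on the page, fill in prompt, neg_prompt, guidance_scale, seed, and so on, click Generate, and wait for the result; outputs are saved in the sample folder.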

|

| 371 |

+

|

| 372 |

+

##### iii. Through ComfyUI

|

| 373 |

+

See the [ComfyUI README](comfyui/README.md) for details.

|

| 374 |

+

|

| 375 |

+

### 2. Model training

|

| 376 |

+

A complete CogVideoX-Fun training pipeline should include data preprocessing and Video DiT training.

|

| 377 |

+

|

| 378 |

+

<h4 id="data-preprocess">a. Data preprocessing</h4>

|

| 379 |

+

We provide a simple demo that trains a LoRA model from image data; see the [wiki](https://github.com/aigc-apps/CogVideoX-Fun/wiki/Training-Lora) for details.

|

| 380 |

+

|

| 381 |

+

For a complete data preprocessing pipeline that covers long-video splitting, cleaning, and captioning, refer to the [README](cogvideox/video_caption/README.md) in the video caption section.

|

| 382 |

+

|

| 383 |

+

If you want to train a text-to-image and text-to-video generation model, arrange the dataset in this format.

|

| 384 |

+

```

|

| 385 |

+

📦 project/

|

| 386 |

+

├── 📂 datasets/

|

| 387 |

+

│ ├── 📂 internal_datasets/

|

| 388 |

+

│ ├── 📂 train/

|

| 389 |

+

│ │ ├── 📄 00000001.mp4

|

| 390 |

+

│ │ ├── 📄 00000002.jpg

|

| 391 |

+

│ │ └── 📄 .....

|

| 392 |

+

│ └── 📄 json_of_internal_datasets.json

|

| 393 |

+

```

|

| 394 |

+

|

| 395 |

+

json_of_internal_datasets.json is a standard JSON file. The file_path field in the JSON can be set to a relative path, as shown below:

|

| 396 |

+

```json

|

| 397 |

+

[

|

| 398 |

+

{

|

| 399 |

+

"file_path": "train/00000001.mp4",

|

| 400 |

+

"text": "A group of young men in suits and sunglasses are walking down a city street.",

|

| 401 |

+

"type": "video"

|

| 402 |

+

},

|

| 403 |

+

{

|

| 404 |

+

"file_path": "train/00000002.jpg",

|

| 405 |

+

"text": "A group of young men in suits and sunglasses are walking down a city street.",

|

| 406 |

+

"type": "image"

|

| 407 |

+

},

|

| 408 |

+

.....

|

| 409 |

+

]

|

| 410 |

+

```

|

| 411 |

+

|

| 412 |

+

You can also set the path to an absolute path:

|

| 413 |

+

```json

|

| 414 |

+

[

|

| 415 |

+

{

|

| 416 |

+

"file_path": "/mnt/data/videos/00000001.mp4",

|

| 417 |

+

"text": "A group of young men in suits and sunglasses are walking down a city street.",

|

| 418 |

+

"type": "video"

|

| 419 |

+

},

|

| 420 |

+

{

|

| 421 |

+

"file_path": "/mnt/data/train/00000001.jpg",

|

| 422 |

+

"text": "A group of young men in suits and sunglasses are walking down a city street.",

|

| 423 |

+

"type": "image"

|

| 424 |

+

},

|

| 425 |

+

.....

|

| 426 |

+

]

|

| 427 |

+

```
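
The metadata format above can be generated with a short script. The following is a minimal sketch, assuming captions are already available as a mapping from relative file path to text; the helper names `build_metadata` and `write_metadata` and the extension sets beyond `.mp4` and `.jpg` are illustrative assumptions, not part of the repository.

```python
import json
from pathlib import Path

# Extensions treated as video or image (assumed; only .mp4 and .jpg appear above).
VIDEO_EXTS = {".mp4"}
IMAGE_EXTS = {".jpg", ".jpeg", ".png"}


def build_metadata(dataset_root: str, captions: dict[str, str]) -> list[dict]:
    """Build entries with file_path, text and type for every captioned media file.

    captions maps a path relative to dataset_root (e.g. "train/00000001.mp4")
    to its text description; files without a caption are skipped.
    """
    root = Path(dataset_root)
    entries = []
    for path in sorted(root.rglob("*")):
        suffix = path.suffix.lower()
        if suffix in VIDEO_EXTS:
            media_type = "video"
        elif suffix in IMAGE_EXTS:
            media_type = "image"
        else:
            continue  # directories and unrelated files
        rel = path.relative_to(root).as_posix()
        if rel in captions:
            entries.append({"file_path": rel, "text": captions[rel], "type": media_type})
    return entries


def write_metadata(entries: list[dict], out_path: str) -> None:
    """Write the entries as a JSON list, keeping non-ASCII captions readable."""
    with open(out_path, "w", encoding="utf-8") as f:
        json.dump(entries, f, ensure_ascii=False, indent=2)
```

For absolute paths, the same entries can be written with `file_path` set to `str(path.resolve())` instead of the relative form.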

|

| 428 |

+

<h4 id="dit-train">b. Video DiT training </h4>

|

| 429 |

+

|

| 430 |

+

If the dataset metadata uses relative paths, edit scripts/train.sh as follows.

|

| 431 |

+

```

|

| 432 |

+

export DATASET_NAME="datasets/internal_datasets/"

|

| 433 |

+

export DATASET_META_NAME="datasets/internal_datasets/json_of_internal_datasets.json"

|

| 434 |

+

|

| 435 |

+

...

|

| 436 |

+

|

| 437 |

+

train_data_format="normal"

|

| 438 |

+

```

|

| 439 |

+

|

| 440 |

+

If the dataset metadata uses absolute paths, edit scripts/train.sh as follows.

|

| 441 |

+

```

|

| 442 |

+

export DATASET_NAME=""

|

| 443 |

+

export DATASET_META_NAME="/mnt/data/json_of_internal_datasets.json"

|

| 444 |

+

```
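
One way to read the two configurations above: if the data loader joins DATASET_NAME with each file_path (an assumption about the training code, which is not shown here), then an empty DATASET_NAME leaves absolute paths untouched, while a non-empty one acts as the root for relative paths. The sketch below demonstrates that behavior with a hypothetical helper, `resolve_media_path`, using `posixpath` so the result does not depend on the host platform.

```python
import posixpath


def resolve_media_path(dataset_name: str, file_path: str) -> str:
    """Combine the dataset root with a metadata file_path.

    posixpath.join discards earlier components when a later one is absolute,
    and joining "" with a path returns that path unchanged.
    """
    return posixpath.join(dataset_name, file_path)


# Relative metadata paths are resolved against DATASET_NAME.
print(resolve_media_path("datasets/internal_datasets/", "train/00000001.mp4"))
# datasets/internal_datasets/train/00000001.mp4

# With DATASET_NAME="" an absolute path passes through as-is.
print(resolve_media_path("", "/mnt/data/videos/00000001.mp4"))
# /mnt/data/videos/00000001.mp4
```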

|

| 445 |

+

|

| 446 |

+

Finally, run scripts/train.sh.

|

| 447 |

+

```sh

|

| 448 |

+

sh scripts/train.sh

|

| 449 |

+

```

|

| 450 |

+

|

| 451 |

+

For details on some of the parameter settings, see [Readme Train](scripts/README_TRAIN.md) and [Readme Lora](scripts/README_TRAIN_LORA.md).

|

| 452 |

+

|

| 453 |

+

# Model Zoo

|

| 454 |

+

|

| 455 |

+

V1.5:

|

| 456 |

+

|

| 457 |

+

| Name | Storage Space | Hugging Face | Model Scope | Description |

|

| 458 |

+

|--|--|--|--|--|

|

| 459 |

+

| CogVideoX-Fun-V1.5-5b-InP | 20.0 GB | [🤗Link](https://huggingface.co/alibaba-pai/CogVideoX-Fun-V1.5-5b-InP) | [😄Link](https://modelscope.cn/models/PAI/CogVideoX-Fun-V1.5-5b-InP) | Official image-to-video weights. Supports video prediction at multiple resolutions (512, 768, 1024); trained with 85 frames at 8 frames per second. |

|

| 460 |

+

|

| 461 |

+

|

| 462 |

+

V1.1:

|

| 463 |

+

|

| 464 |

+

| Name | Storage Space | Hugging Face | Model Scope | Description |

|

| 465 |

+

|--|--|--|--|--|

|

| 466 |

+

| CogVideoX-Fun-V1.1-2b-InP | 13.0 GB | [🤗Link](https://huggingface.co/alibaba-pai/CogVideoX-Fun-V1.1-2b-InP) | [😄Link](https://modelscope.cn/models/PAI/CogVideoX-Fun-V1.1-2b-InP) | Official image-to-video weights. Supports video prediction at multiple resolutions (512, 768, 1024, 1280), trained with 49 frames at 8 frames per second. |

|

| 467 |

+

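| CogVideoX-Fun-V1.1-5b-InP | 20.0 GB | [🤗Link](https://huggingface.co/alibaba-pai/CogVideoX-Fun-V1.1-5b-InP) | [😄Link](https://modelscope.cn/models/PAI/CogVideoX-Fun-V1.1-5b-InP) | Official image-to-video weights. Noise has been added, and the motion amplitude is larger than in V1.0. Supports video prediction at multiple resolutions (512, 768, 1024, 1280), trained with 49 frames at 8 frames per second. |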

|

| 468 |

+

| CogVideoX-Fun-V1.1-2b-Pose | 13.0 GB | [🤗Link](https://huggingface.co/alibaba-pai/CogVideoX-Fun-V1.1-2b-Pose) | [😄Link](https://modelscope.cn/models/PAI/CogVideoX-Fun-V1.1-2b-Pose) | Official pose-controlled video generation weights. Supports video prediction at multiple resolutions (512, 768, 1024, 1280), trained with 49 frames at 8 frames per second. |

|

| 469 |

+

| CogVideoX-Fun-V1.1-5b-Pose | 20.0 GB | [🤗Link](https://huggingface.co/alibaba-pai/CogVideoX-Fun-V1.1-5b-Pose) | [😄Link](https://modelscope.cn/models/PAI/CogVideoX-Fun-V1.1-5b-Pose) | Official pose-controlled video generation weights. Supports video prediction at multiple resolutions (512, 768, 1024, 1280), trained with 49 frames at 8 frames per second. |

|

| 470 |

+

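| CogVideoX-Fun-V1.1-5b-Control | 20.0 GB | [🤗Link](https://huggingface.co/alibaba-pai/CogVideoX-Fun-V1.1-5b-Control) | [😄Link](https://modelscope.cn/models/PAI/CogVideoX-Fun-V1.1-5b-Control) | Official controlled video generation weights. Supports video prediction at multiple resolutions (512, 768, 1024, 1280), trained with 49 frames at 8 frames per second. Supports various control conditions such as Canny, Depth, Pose, and MLSD. |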

|

| 471 |

+

| CogVideoX-Fun-V1.1-Reward-LoRAs | - | [🤗Link](https://huggingface.co/alibaba-pai/CogVideoX-Fun-V1.1-Reward-LoRAs) | [😄Link](https://modelscope.cn/models/PAI/CogVideoX-Fun-V1.1-Reward-LoRAs) | Official reward backpropagation models, which optimize the videos generated by CogVideoX-Fun-V1.1 to better match human preferences. |

|

| 472 |

+

|

| 473 |

+

V1.0:

|

| 474 |

+

|

| 475 |

+

| Name | Storage Space | Hugging Face | Model Scope | Description |

|

| 476 |

+

|--|--|--|--|--|

|

| 477 |

+

| CogVideoX-Fun-2b-InP | 13.0 GB | [🤗Link](https://huggingface.co/alibaba-pai/CogVideoX-Fun-2b-InP) | [😄Link](https://modelscope.cn/models/PAI/CogVideoX-Fun-2b-InP) | Official image-to-video weights. Supports video prediction at multiple resolutions (512, 768, 1024, 1280), trained with 49 frames at 8 frames per second. |

|

| 478 |

+

| CogVideoX-Fun-5b-InP | 20.0 GB | [🤗Link](https://huggingface.co/alibaba-pai/CogVideoX-Fun-5b-InP) | [😄Link](https://modelscope.cn/models/PAI/CogVideoX-Fun-5b-InP) | Official image-to-video weights. Supports video prediction at multiple resolutions (512, 768, 1024, 1280), trained with 49 frames at 8 frames per second. |

|

| 479 |

+

|

| 480 |

+

# TODO List

|

| 481 |

+

- Support Chinese.

|

| 482 |

+

|

| 483 |

+

# Reference

|

| 484 |

+

- CogVideo: https://github.com/THUDM/CogVideo/

|

| 485 |

+

- EasyAnimate: https://github.com/aigc-apps/EasyAnimate

|

| 486 |

+

|

| 487 |

+

# License

|

| 488 |

+

This project is licensed under the [Apache License (Version 2.0)](https://github.com/modelscope/modelscope/blob/master/LICENSE).

|

| 489 |

+

|

| 490 |

+

The CogVideoX-2B model (including its corresponding Transformers module and VAE module) is released under the [Apache 2.0 License](LICENSE).

|

| 491 |

+

|

| 492 |

+

The CogVideoX-5B model (Transformer module) is released under the [CogVideoX License](https://huggingface.co/THUDM/CogVideoX-5b/blob/main/LICENSE).

|

README_en.md

ADDED

|

@@ -0,0 +1,463 @@

|


| 1 |

+

# CogVideoX-Fun

|

| 2 |

+

|

| 3 |

+

😊 Welcome!

|

| 4 |

+

|

| 5 |

+

[Hugging Face Space](https://huggingface.co/spaces/alibaba-pai/CogVideoX-Fun-5b)

|

| 6 |

+

|

| 7 |

+

English | [简体中文](./README.md)

|

| 8 |

+

|

| 9 |

+

# Table of Contents

|

| 10 |

+

- [Table of Contents](#table-of-contents)

|

| 11 |

+

- [Introduction](#introduction)

|

| 12 |

+

- [Quick Start](#quick-start)

|

| 13 |

+

- [Video Result](#video-result)

|

| 14 |

+

- [How to use](#how-to-use)

|

| 15 |

+

- [Model zoo](#model-zoo)

|

| 16 |

+

- [TODO List](#todo-list)

|

| 17 |

+

- [Reference](#reference)

|

| 18 |

+

- [License](#license)

|

| 19 |

+

|

| 20 |

+

# Introduction

|

| 21 |

+

CogVideoX-Fun is a modified pipeline based on the CogVideoX structure, designed to provide more flexibility in generation. It can be used to create AI images and videos, as well as to train baseline models and LoRA models for the Diffusion Transformer. We support prediction directly from the already trained CogVideoX-Fun model, allowing the generation of videos at different resolutions, approximately 6 seconds long at 8 fps (1 to 49 frames). Users can also train their own baseline models and LoRA models to achieve particular style transformations.
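The "approximately 6 seconds" figure follows from dividing the frame count by the frame rate. The small sketch below works through that arithmetic; the function name `clip_duration_seconds` is made up for illustration.

```python
def clip_duration_seconds(num_frames: int, fps: int = 8) -> float:
    """Length of a clip in seconds, given its frame count and frame rate."""
    return num_frames / fps


print(clip_duration_seconds(49))  # 6.125
```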

|

| 22 |

+

|

| 23 |

+

We will support quick launch from different platforms; refer to [Quick Start](#quick-start).

|

| 24 |

+

|

| 25 |

+

What's New:

|

| 26 |

+

- Use reward backpropagation to train LoRA and optimize the video, aligning it better with human preferences; details are [here](scripts/README_TRAIN_REWARD.md). A new version of the control model supports various conditions (e.g., Canny, Depth, Pose, MLSD, etc.). [ 2024.11.21 ]

|

| 27 |

+

- CogVideoX-Fun Control is now supported in diffusers. Thanks to [a-r-r-o-w](https://github.com/a-r-r-o-w) who contributed the support in this [PR](https://github.com/huggingface/diffusers/pull/9671). Check out the [docs](https://huggingface.co/docs/diffusers/main/en/api/pipelines/cogvideox) to know more. [ 2024.10.16 ]

|

| 28 |

+

- Retrain the i2v model and add noise to increase the motion amplitude of the video. Upload the control model training code and control model. [ 2024.09.29 ]

|

| 29 |

+

- Initial code release! Now supporting Windows and Linux. Supports 2b and 5b models. Supports video generation at any resolution from 256x256x49 to 1024x1024x49. [ 2024.09.18 ]

|

| 30 |

+

|

| 31 |

+

Function:

|

| 32 |

+

- [Data Preprocessing](#data-preprocess)

|

| 33 |

+

- [Train DiT](#dit-train)

|

| 34 |

+

- [Video Generation](#video-gen)

|

| 35 |

+

|

| 36 |

+

Our UI interface is as follows:

|

| 37 |

+

|

| 38 |

+

|

| 39 |

+

# Quick Start

|

| 40 |

+

### 1. Cloud usage: AliyunDSW/Docker

|

| 41 |

+

#### a. From AliyunDSW

|

| 42 |

+

DSW offers free GPU time, which each user can apply for once; it is valid for 3 months after applying.

|

| 43 |

+

|

| 44 |

+

Aliyun provides free GPU time through [Freetier](https://free.aliyun.com/?product=9602825&crowd=enterprise&spm=5176.28055625.J_5831864660.1.e939154aRgha4e&scm=20140722.M_9974135.P_110.MO_1806-ID_9974135-MID_9974135-CID_30683-ST_8512-V_1); claim it and use it in Aliyun PAI-DSW to start CogVideoX-Fun within 5 minutes!

|

| 45 |

+

|

| 46 |

+

[DSW Notebook](https://gallery.pai-ml.com/#/preview/deepLearning/cv/cogvideox_fun)

|

| 47 |

+

|

| 48 |

+

#### b. From ComfyUI

|

| 49 |

+

Our ComfyUI is as follows; please refer to [ComfyUI README](comfyui/README.md) for details.

|

| 50 |

+

|

| 51 |

+

|

| 52 |

+

#### c. From docker

|

| 53 |

+

If you are using docker, please make sure that the graphics card driver and CUDA environment have been installed correctly on your machine.

|

| 54 |

+

|

| 55 |

+

Then execute the following commands:

|

| 56 |

+

|

| 57 |

+

```

|

| 58 |

+

# pull image

|

| 59 |

+

docker pull mybigpai-public-registry.cn-beijing.cr.aliyuncs.com/easycv/torch_cuda:cogvideox_fun

|

| 60 |

+

|

| 61 |

+

# enter image

|

| 62 |

+

docker run -it -p 7860:7860 --network host --gpus all --security-opt seccomp:unconfined --shm-size 200g mybigpai-public-registry.cn-beijing.cr.aliyuncs.com/easycv/torch_cuda:cogvideox_fun

|

| 63 |

+

|

| 64 |

+

# clone code

|

| 65 |

+

git clone https://github.com/aigc-apps/CogVideoX-Fun.git

|

| 66 |

+

|

| 67 |

+

# enter CogVideoX-Fun's dir

|

| 68 |

+

cd CogVideoX-Fun

|

| 69 |

+

|

| 70 |

+

# download weights

|

| 71 |

+

mkdir -p models/Diffusion_Transformer

|

| 72 |

+

mkdir -p models/Personalized_Model

|

| 73 |

+

|

| 74 |

+

wget https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/cogvideox_fun/Diffusion_Transformer/CogVideoX-Fun-V1.1-2b-InP.tar.gz -O models/Diffusion_Transformer/CogVideoX-Fun-V1.1-2b-InP.tar.gz

|

| 75 |

+

|

| 76 |

+

cd models/Diffusion_Transformer/

|

| 77 |

+

tar -xvf CogVideoX-Fun-V1.1-2b-InP.tar.gz

|

| 78 |

+

cd ../../

|

| 79 |

+

```
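On systems without `wget` and `tar` (Windows is listed as supported), the weight download step can be approximated with the Python standard library. This is a minimal sketch, not part of the repository: the helper names `archive_path_for` and `download_and_extract` are hypothetical, and the URL is the one used in the commands above.

```python
import tarfile
import urllib.request
from pathlib import Path

WEIGHTS_URL = (
    "https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/cogvideox_fun/"
    "Diffusion_Transformer/CogVideoX-Fun-V1.1-2b-InP.tar.gz"
)


def archive_path_for(url: str, target_dir: str) -> Path:
    """Return where the archive named in the URL will be stored locally."""
    return Path(target_dir) / url.rsplit("/", 1)[-1]


def download_and_extract(url: str, target_dir: str) -> Path:
    """Download a tar archive into target_dir (if absent) and unpack it there."""
    target = Path(target_dir)
    target.mkdir(parents=True, exist_ok=True)  # like `mkdir -p`
    archive = archive_path_for(url, target_dir)
    if not archive.exists():
        urllib.request.urlretrieve(url, archive)  # like `wget ... -O`
    with tarfile.open(archive, "r:*") as tar:
        tar.extractall(target)  # like `tar -xvf`; only use with trusted archives
    return target


# Example call (starts a multi-gigabyte download):
# download_and_extract(WEIGHTS_URL, "models/Diffusion_Transformer")
```

The archive is kept after extraction, so rerunning the function skips the download and only unpacks again.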

|

| 80 |

+

|

| 81 |

+

### 2. Local install: Environment Check/Downloading/Installation

|

| 82 |

+

#### a. Environment Check

|

| 83 |

+

We have verified CogVideoX-Fun execution on the following environment:

|

| 84 |

+

|

| 85 |

+

Details for Windows:

|

| 86 |

+

- OS: Windows 10

|

| 87 |

+

- python: python3.10 & python3.11

|

| 88 |

+

- pytorch: torch2.2.0

|

| 89 |

+

- CUDA: 11.8 & 12.1

|

| 90 |

+

- CUDNN: 8+

|

| 91 |

+

- GPU: Nvidia-3060 12G & Nvidia-3090 24G

|

| 92 |

+

|

| 93 |

+

Details for Linux:

|

| 94 |

+

- OS: Ubuntu 20.04, CentOS

|

| 95 |

+

- python: python3.10 & python3.11

|

| 96 |

+

- pytorch: torch2.2.0

|

| 97 |

+

- CUDA: 11.8 & 12.1

|

| 98 |

+

- CUDNN: 8+

|

| 99 |

+

- GPU: Nvidia-V100 16G & Nvidia-A10 24G & Nvidia-A100 40G & Nvidia-A100 80G

|

| 100 |

+

|

| 101 |

+

About 60GB of free disk space is needed (for saving weights); please check!
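The checks above can be partly automated. The sketch below reports the Python version, free disk space, and, when PyTorch is installed, the torch and CUDA versions. The function names and the 60 GB default are assumptions taken from the list above; this is not an official verification script.

```python
import shutil
import sys


def python_ok(version=sys.version_info) -> bool:
    """True for the Python versions listed above (3.10 and 3.11)."""
    return (version[0], version[1]) in {(3, 10), (3, 11)}


def free_disk_gb(path: str = ".") -> float:
    """Free space on the filesystem holding `path`, in GB (10**9 bytes)."""
    return shutil.disk_usage(path).free / 1e9


def report(path: str = ".", required_gb: float = 60.0) -> None:
    """Print a short summary of the local environment."""
    major, minor = sys.version_info[0], sys.version_info[1]
    print(f"Python {major}.{minor}, among verified versions: {python_ok()}")
    print(f"Free disk: {free_disk_gb(path):.1f} GB (about {required_gb:.0f} GB needed)")
    try:
        import torch  # only inspected when installed
    except ImportError:
        print("torch is not installed")
        return
    print(f"torch {torch.__version__}, CUDA available: {torch.cuda.is_available()}")
    if torch.cuda.is_available():
        print(f"CUDA {torch.version.cuda}, GPU: {torch.cuda.get_device_name(0)}")
```

Running `report()` from the repository root prints one line per check; a version outside the verified list may still work, it just has not been tested here.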

|

| 102 |

+

|

| 103 |

+

#### b. Weights

|

| 104 |

+

It is best to place the [weights](#model-zoo) in the specified paths:

|

| 105 |

+

|

| 106 |

+

```

|

| 107 |

+

📦 models/

|

| 108 |

+

├── 📂 Diffusion_Transformer/

|

| 109 |

+

│ ├── 📂 CogVideoX-Fun-V1.1-2b-InP/

|

| 110 |

+

│ └── 📂 CogVideoX-Fun-V1.1-5b-InP/

|

| 111 |

+

├── 📂 Personalized_Model/

|

| 112 |

+

│ └── your trained transformer model / your trained lora model (for UI load)

|

| 113 |

+

```
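A quick way to confirm that the layout matches the tree above is to list the directories that are still missing. The sketch below does that and also shows how one model could be fetched with `huggingface_hub.snapshot_download`. The helper names and the `EXPECTED_DIRS` list are illustrative assumptions; only the models you actually plan to use need to be present.

```python
from pathlib import Path

# Directories from the tree above, relative to the models/ root.
EXPECTED_DIRS = [
    "Diffusion_Transformer/CogVideoX-Fun-V1.1-2b-InP",
    "Diffusion_Transformer/CogVideoX-Fun-V1.1-5b-InP",
    "Personalized_Model",
]


def missing_model_dirs(models_root: str = "models") -> list[str]:
    """Return the expected directories that do not exist under models_root."""
    root = Path(models_root)
    return [d for d in EXPECTED_DIRS if not (root / d).is_dir()]


def fetch_from_hub(repo_id: str, models_root: str = "models") -> str:
    """Download one model repo into <models_root>/Diffusion_Transformer/<name>."""
    # Imported lazily; requires `pip install huggingface_hub`.
    from huggingface_hub import snapshot_download

    local_dir = Path(models_root) / "Diffusion_Transformer" / repo_id.split("/")[-1]
    return snapshot_download(repo_id=repo_id, local_dir=str(local_dir))
```

For example, `fetch_from_hub("alibaba-pai/CogVideoX-Fun-V1.1-2b-InP")` would place the 2b weights where the tree expects them, after which `missing_model_dirs()` should no longer list that directory.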

|

| 114 |

+

|

| 115 |

+

# Video Result

|

| 116 |

+

All results shown are generated from an input image (image-to-video).

|

| 117 |

+

|

| 118 |

+

### CogVideoX-Fun-V1.1-5B

|

| 119 |

+

|

| 120 |

+

Resolution-1024

|

| 121 |

+

|

| 122 |

+

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 123 |

+

<tr>

|

| 124 |

+

<td>

|

| 125 |

+

<video src="https://github.com/user-attachments/assets/34e7ec8f-293e-4655-bb14-5e1ee476f788" width="100%" controls autoplay loop></video>

|

| 126 |

+

</td>

|

| 127 |

+

<td>

|

| 128 |

+

<video src="https://github.com/user-attachments/assets/7809c64f-eb8c-48a9-8bdc-ca9261fd5434" width="100%" controls autoplay loop></video>

|

| 129 |

+

</td>

|

| 130 |

+

<td>

|

| 131 |

+

<video src="https://github.com/user-attachments/assets/8e76aaa4-c602-44ac-bcb4-8b24b72c386c" width="100%" controls autoplay loop></video>

|

| 132 |

+

</td>

|

| 133 |

+

<td>

|

| 134 |

+