Commit

•

2bbaaba

1

Parent(s):

55e3bc2

End of training

Browse files- README.md +48 -0

- logs/text2image-fine-tune/1709666306.451813/events.out.tfevents.1709666306.9a0a6aa6c700.4367.1 +3 -0

- logs/text2image-fine-tune/1709666306.4535224/hparams.yml +42 -0

- logs/text2image-fine-tune/1709667217.0104744/events.out.tfevents.1709667217.9a0a6aa6c700.8410.1 +3 -0

- logs/text2image-fine-tune/1709667217.0121987/hparams.yml +42 -0

- logs/text2image-fine-tune/events.out.tfevents.1709666306.9a0a6aa6c700.4367.0 +3 -0

- logs/text2image-fine-tune/events.out.tfevents.1709667217.9a0a6aa6c700.8410.0 +3 -0

- model_index.json +16 -0

- movq/config.json +37 -0

- movq/diffusion_pytorch_model.safetensors +3 -0

- scheduler/scheduler_config.json +20 -0

- unet/config.json +68 -0

- unet/diffusion_pytorch_model.safetensors +3 -0

- val_imgs_grid.png +0 -0

README.md

ADDED

|

@@ -0,0 +1,48 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

|

| 2 |

+

---

|

| 3 |

+

license: creativeml-openrail-m

|

| 4 |

+

base_model: kandinsky-community/kandinsky-2-2-decoder

|

| 5 |

+

datasets:

|

| 6 |

+

- kbharat7/DogChestXrayDatasetNew

|

| 7 |

+

prior:

|

| 8 |

+

- kandinsky-community/kandinsky-2-2-prior

|

| 9 |

+

tags:

|

| 10 |

+

- kandinsky

|

| 11 |

+

- text-to-image

|

| 12 |

+

- diffusers

|

| 13 |

+

- diffusers-training

|

| 14 |

+

inference: true

|

| 15 |

+

---

|

| 16 |

+

|

| 17 |

+

# Finetuning - aditya11997/kandi2-decoder-3.1

|

| 18 |

+

|

| 19 |

+

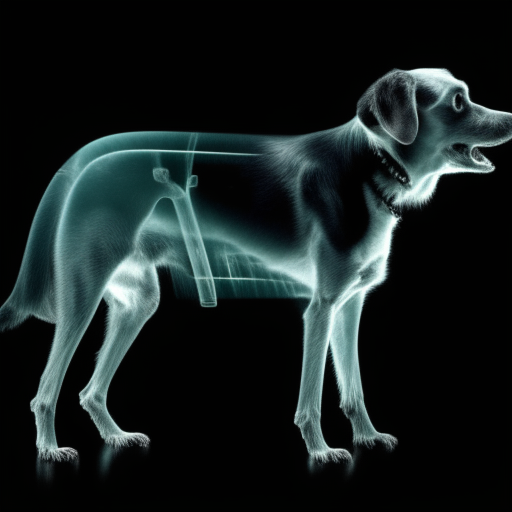

This pipeline was finetuned from **kandinsky-community/kandinsky-2-2-decoder** on the **kbharat7/DogChestXrayDatasetNew** dataset. Below are some example images generated with the finetuned pipeline using the following prompts: ['photo of dogxraysmall']:

|

| 20 |

+

|

| 21 |

+

|

| 22 |

+

|

| 23 |

+

|

| 24 |

+

## Pipeline usage

|

| 25 |

+

|

| 26 |

+

You can use the pipeline like so:

|

| 27 |

+

|

| 28 |

+

```python

|

| 29 |

+

from diffusers import DiffusionPipeline

|

| 30 |

+

import torch

|

| 31 |

+

|

| 32 |

+

pipeline = AutoPipelineForText2Image.from_pretrained("aditya11997/kandi2-decoder-3.1", torch_dtype=torch.float16)

|

| 33 |

+

prompt = "photo of dogxraysmall"

|

| 34 |

+

image = pipeline(prompt).images[0]

|

| 35 |

+

image.save("my_image.png")

|

| 36 |

+

```

|

| 37 |

+

|

| 38 |

+

## Training info

|

| 39 |

+

|

| 40 |

+

These are the key hyperparameters used during training:

|

| 41 |

+

|

| 42 |

+

* Epochs: 1

|

| 43 |

+

* Learning rate: 1e-05

|

| 44 |

+

* Batch size: 1

|

| 45 |

+

* Gradient accumulation steps: 4

|

| 46 |

+

* Image resolution: 768

|

| 47 |

+

* Mixed-precision: None

|

| 48 |

+

|

logs/text2image-fine-tune/1709666306.451813/events.out.tfevents.1709666306.9a0a6aa6c700.4367.1

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:537f8d7179e73153771ad0acbd8d5f2fca04b1f74b9224bd95c7f9d06c633e65

|

| 3 |

+

size 2162

|

logs/text2image-fine-tune/1709666306.4535224/hparams.yml

ADDED

|

@@ -0,0 +1,42 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

adam_beta1: 0.9

|

| 2 |

+

adam_beta2: 0.999

|

| 3 |

+

adam_epsilon: 1.0e-08

|

| 4 |

+

adam_weight_decay: 0.0

|

| 5 |

+

allow_tf32: false

|

| 6 |

+

cache_dir: null

|

| 7 |

+

checkpointing_steps: 500

|

| 8 |

+

checkpoints_total_limit: 3

|

| 9 |

+

dataloader_num_workers: 0

|

| 10 |

+

dataset_config_name: null

|

| 11 |

+

dataset_name: kbharat7/DogChestXrayDatasetNew

|

| 12 |

+

enable_xformers_memory_efficient_attention: false

|

| 13 |

+

gradient_accumulation_steps: 4

|

| 14 |

+

gradient_checkpointing: true

|

| 15 |

+

hub_model_id: null

|

| 16 |

+

hub_token: null

|

| 17 |

+

image_column: image

|

| 18 |

+

learning_rate: 1.0e-05

|

| 19 |

+

local_rank: -1

|

| 20 |

+

logging_dir: logs

|

| 21 |

+

lr_scheduler: constant

|

| 22 |

+

lr_warmup_steps: 0

|

| 23 |

+

max_grad_norm: 1.0

|

| 24 |

+

max_train_samples: null

|

| 25 |

+

max_train_steps: 5

|

| 26 |

+

mixed_precision: null

|

| 27 |

+

num_train_epochs: 1

|

| 28 |

+

output_dir: kandi2-decoder-3.1

|

| 29 |

+

pretrained_decoder_model_name_or_path: kandinsky-community/kandinsky-2-2-decoder

|

| 30 |

+

pretrained_prior_model_name_or_path: kandinsky-community/kandinsky-2-2-prior

|

| 31 |

+

push_to_hub: true

|

| 32 |

+

report_to: tensorboard

|

| 33 |

+

resolution: 768

|

| 34 |

+

resume_from_checkpoint: null

|

| 35 |

+

seed: null

|

| 36 |

+

snr_gamma: null

|

| 37 |

+

tracker_project_name: text2image-fine-tune

|

| 38 |

+

train_batch_size: 1

|

| 39 |

+

train_data_dir: null

|

| 40 |

+

use_8bit_adam: false

|

| 41 |

+

use_ema: false

|

| 42 |

+

validation_epochs: 5

|

logs/text2image-fine-tune/1709667217.0104744/events.out.tfevents.1709667217.9a0a6aa6c700.8410.1

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:6a8020dc5a3940b0763b12a608ca9e93542e64ede07dafe8d2ccec27b8e90cc6

|

| 3 |

+

size 2162

|

logs/text2image-fine-tune/1709667217.0121987/hparams.yml

ADDED

|

@@ -0,0 +1,42 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

adam_beta1: 0.9

|

| 2 |

+

adam_beta2: 0.999

|

| 3 |

+

adam_epsilon: 1.0e-08

|

| 4 |

+

adam_weight_decay: 0.0

|

| 5 |

+

allow_tf32: false

|

| 6 |

+

cache_dir: null

|

| 7 |

+

checkpointing_steps: 500

|

| 8 |

+

checkpoints_total_limit: 3

|

| 9 |

+

dataloader_num_workers: 0

|

| 10 |

+

dataset_config_name: null

|

| 11 |

+

dataset_name: kbharat7/DogChestXrayDatasetNew

|

| 12 |

+

enable_xformers_memory_efficient_attention: false

|

| 13 |

+

gradient_accumulation_steps: 4

|

| 14 |

+

gradient_checkpointing: true

|

| 15 |

+

hub_model_id: null

|

| 16 |

+

hub_token: null

|

| 17 |

+

image_column: image

|

| 18 |

+

learning_rate: 1.0e-05

|

| 19 |

+

local_rank: -1

|

| 20 |

+

logging_dir: logs

|

| 21 |

+

lr_scheduler: constant

|

| 22 |

+

lr_warmup_steps: 0

|

| 23 |

+

max_grad_norm: 1.0

|

| 24 |

+

max_train_samples: null

|

| 25 |

+

max_train_steps: 5

|

| 26 |

+

mixed_precision: null

|

| 27 |

+

num_train_epochs: 1

|

| 28 |

+

output_dir: kandi2-decoder-3.1

|

| 29 |

+

pretrained_decoder_model_name_or_path: kandinsky-community/kandinsky-2-2-decoder

|

| 30 |

+

pretrained_prior_model_name_or_path: kandinsky-community/kandinsky-2-2-prior

|

| 31 |

+

push_to_hub: true

|

| 32 |

+

report_to: tensorboard

|

| 33 |

+

resolution: 768

|

| 34 |

+

resume_from_checkpoint: null

|

| 35 |

+

seed: null

|

| 36 |

+

snr_gamma: null

|

| 37 |

+

tracker_project_name: text2image-fine-tune

|

| 38 |

+

train_batch_size: 1

|

| 39 |

+

train_data_dir: null

|

| 40 |

+

use_8bit_adam: false

|

| 41 |

+

use_ema: false

|

| 42 |

+

validation_epochs: 5

|

logs/text2image-fine-tune/events.out.tfevents.1709666306.9a0a6aa6c700.4367.0

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:84006203c9e56bec8fd36d55031f43ad6811ab2ccf411d4df30323a3ff8bbfa3

|

| 3 |

+

size 207463

|

logs/text2image-fine-tune/events.out.tfevents.1709667217.9a0a6aa6c700.8410.0

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:3bec078df4c7986b68c59a7bdb3749733c1ca839b68166c34b1a6509d2a3e364

|

| 3 |

+

size 213320

|

model_index.json

ADDED

|

@@ -0,0 +1,16 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "KandinskyV22Pipeline",

|

| 3 |

+

"_diffusers_version": "0.27.0.dev0",

|

| 4 |

+

"movq": [

|

| 5 |

+

"diffusers",

|

| 6 |

+

"VQModel"

|

| 7 |

+

],

|

| 8 |

+

"scheduler": [

|

| 9 |

+

"diffusers",

|

| 10 |

+

"DDPMScheduler"

|

| 11 |

+

],

|

| 12 |

+

"unet": [

|

| 13 |

+

"diffusers",

|

| 14 |

+

"UNet2DConditionModel"

|

| 15 |

+

]

|

| 16 |

+

}

|

movq/config.json

ADDED

|

@@ -0,0 +1,37 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "VQModel",

|

| 3 |

+

"_diffusers_version": "0.27.0.dev0",

|

| 4 |

+

"_name_or_path": "/root/.cache/huggingface/hub/models--kandinsky-community--kandinsky-2-2-decoder/snapshots/9ae140d347fed8ce6e8bb3005dcc1f48543bb8e3/movq",

|

| 5 |

+

"act_fn": "silu",

|

| 6 |

+

"block_out_channels": [

|

| 7 |

+

128,

|

| 8 |

+

256,

|

| 9 |

+

256,

|

| 10 |

+

512

|

| 11 |

+

],

|

| 12 |

+

"down_block_types": [

|

| 13 |

+

"DownEncoderBlock2D",

|

| 14 |

+

"DownEncoderBlock2D",

|

| 15 |

+

"DownEncoderBlock2D",

|

| 16 |

+

"AttnDownEncoderBlock2D"

|

| 17 |

+

],

|

| 18 |

+

"force_upcast": false,

|

| 19 |

+

"in_channels": 3,

|

| 20 |

+

"latent_channels": 4,

|

| 21 |

+

"layers_per_block": 2,

|

| 22 |

+

"lookup_from_codebook": false,

|

| 23 |

+

"mid_block_add_attention": true,

|

| 24 |

+

"norm_num_groups": 32,

|

| 25 |

+

"norm_type": "spatial",

|

| 26 |

+

"num_vq_embeddings": 16384,

|

| 27 |

+

"out_channels": 3,

|

| 28 |

+

"sample_size": 32,

|

| 29 |

+

"scaling_factor": 0.18215,

|

| 30 |

+

"up_block_types": [

|

| 31 |

+

"AttnUpDecoderBlock2D",

|

| 32 |

+

"UpDecoderBlock2D",

|

| 33 |

+

"UpDecoderBlock2D",

|

| 34 |

+

"UpDecoderBlock2D"

|

| 35 |

+

],

|

| 36 |

+

"vq_embed_dim": 4

|

| 37 |

+

}

|

movq/diffusion_pytorch_model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:43a5860fea195a7116f2471396c5cc9535fade9b63c4857d8a192ffd924b7002

|

| 3 |

+

size 271380364

|

scheduler/scheduler_config.json

ADDED

|

@@ -0,0 +1,20 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "DDPMScheduler",

|

| 3 |

+

"_diffusers_version": "0.27.0.dev0",

|

| 4 |

+

"beta_end": 0.012,

|

| 5 |

+

"beta_schedule": "linear",

|

| 6 |

+

"beta_start": 0.00085,

|

| 7 |

+

"clip_sample": true,

|

| 8 |

+

"clip_sample_range": 1.0,

|

| 9 |

+

"dynamic_thresholding_ratio": 0.995,

|

| 10 |

+

"num_train_timesteps": 1000,

|

| 11 |

+

"prediction_type": "epsilon",

|

| 12 |

+

"rescale_betas_zero_snr": false,

|

| 13 |

+

"sample_max_value": 1.0,

|

| 14 |

+

"set_alpha_to_one": false,

|

| 15 |

+

"steps_offset": 1,

|

| 16 |

+

"thresholding": false,

|

| 17 |

+

"timestep_spacing": "leading",

|

| 18 |

+

"trained_betas": null,

|

| 19 |

+

"variance_type": "fixed_small"

|

| 20 |

+

}

|

unet/config.json

ADDED

|

@@ -0,0 +1,68 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "UNet2DConditionModel",

|

| 3 |

+

"_diffusers_version": "0.27.0.dev0",

|

| 4 |

+

"_name_or_path": "kandinsky-community/kandinsky-2-2-decoder",

|

| 5 |

+

"act_fn": "silu",

|

| 6 |

+

"addition_embed_type": "image",

|

| 7 |

+

"addition_embed_type_num_heads": 64,

|

| 8 |

+

"addition_time_embed_dim": null,

|

| 9 |

+

"attention_head_dim": 64,

|

| 10 |

+

"attention_type": "default",

|

| 11 |

+

"block_out_channels": [

|

| 12 |

+

384,

|

| 13 |

+

768,

|

| 14 |

+

1152,

|

| 15 |

+

1536

|

| 16 |

+

],

|

| 17 |

+

"center_input_sample": false,

|

| 18 |

+

"class_embed_type": null,

|

| 19 |

+

"class_embeddings_concat": false,

|

| 20 |

+

"conv_in_kernel": 3,

|

| 21 |

+

"conv_out_kernel": 3,

|

| 22 |

+

"cross_attention_dim": 768,

|

| 23 |

+

"cross_attention_norm": null,

|

| 24 |

+

"down_block_types": [

|

| 25 |

+

"ResnetDownsampleBlock2D",

|

| 26 |

+

"SimpleCrossAttnDownBlock2D",

|

| 27 |

+

"SimpleCrossAttnDownBlock2D",

|

| 28 |

+

"SimpleCrossAttnDownBlock2D"

|

| 29 |

+

],

|

| 30 |

+

"downsample_padding": 1,

|

| 31 |

+

"dropout": 0.0,

|

| 32 |

+

"dual_cross_attention": false,

|

| 33 |

+

"encoder_hid_dim": 1280,

|

| 34 |

+

"encoder_hid_dim_type": "image_proj",

|

| 35 |

+

"flip_sin_to_cos": true,

|

| 36 |

+

"freq_shift": 0,

|

| 37 |

+

"in_channels": 4,

|

| 38 |

+

"layers_per_block": 3,

|

| 39 |

+

"mid_block_only_cross_attention": null,

|

| 40 |

+

"mid_block_scale_factor": 1,

|

| 41 |

+

"mid_block_type": "UNetMidBlock2DSimpleCrossAttn",

|

| 42 |

+

"norm_eps": 1e-05,

|

| 43 |

+

"norm_num_groups": 32,

|

| 44 |

+

"num_attention_heads": null,

|

| 45 |

+

"num_class_embeds": null,

|

| 46 |

+

"only_cross_attention": false,

|

| 47 |

+

"out_channels": 8,

|

| 48 |

+

"projection_class_embeddings_input_dim": null,

|

| 49 |

+

"resnet_out_scale_factor": 1.0,

|

| 50 |

+

"resnet_skip_time_act": false,

|

| 51 |

+

"resnet_time_scale_shift": "scale_shift",

|

| 52 |

+

"reverse_transformer_layers_per_block": null,

|

| 53 |

+

"sample_size": 64,

|

| 54 |

+

"time_cond_proj_dim": null,

|

| 55 |

+

"time_embedding_act_fn": null,

|

| 56 |

+

"time_embedding_dim": null,

|

| 57 |

+

"time_embedding_type": "positional",

|

| 58 |

+

"timestep_post_act": null,

|

| 59 |

+

"transformer_layers_per_block": 1,

|

| 60 |

+

"up_block_types": [

|

| 61 |

+

"SimpleCrossAttnUpBlock2D",

|

| 62 |

+

"SimpleCrossAttnUpBlock2D",

|

| 63 |

+

"SimpleCrossAttnUpBlock2D",

|

| 64 |

+

"ResnetUpsampleBlock2D"

|

| 65 |

+

],

|

| 66 |

+

"upcast_attention": false,

|

| 67 |

+

"use_linear_projection": false

|

| 68 |

+

}

|

unet/diffusion_pytorch_model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:139d4dff44d0732c5cc11b4d589e44c1cdc778541cf652f4f513870737b37a09

|

| 3 |

+

size 5012309584

|

val_imgs_grid.png

ADDED

|