First Model Version

- .gitattributes +0 -0
- README.md +25 -0
- TabPedia/pretrained.pth +3 -0
- assets/framework.png +0 -0
- donut-base/.DS_Store +0 -0
- donut-base/README.md +3 -0
- donut-base/added_tokens.json +1 -0
- donut-base/config.json +23 -0
- donut-base/gitattributes.txt +31 -0
- donut-base/pytorch_model.bin +3 -0
- donut-base/sentencepiece.bpe.model +3 -0
- donut-base/special_tokens_map.json +1 -0
- donut-base/tokenizer_config.json +1 -0

.gitattributes

CHANGED

File without changes

README.md

CHANGED

|

@@ -1,3 +1,28 @@

|

|

| 1 |

---

|

| 2 |

license: cc-by-nc-4.0

|

| 3 |

---

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

---

|

| 2 |

license: cc-by-nc-4.0

|

| 3 |

---

|

| 4 |

+

|

| 5 |

+

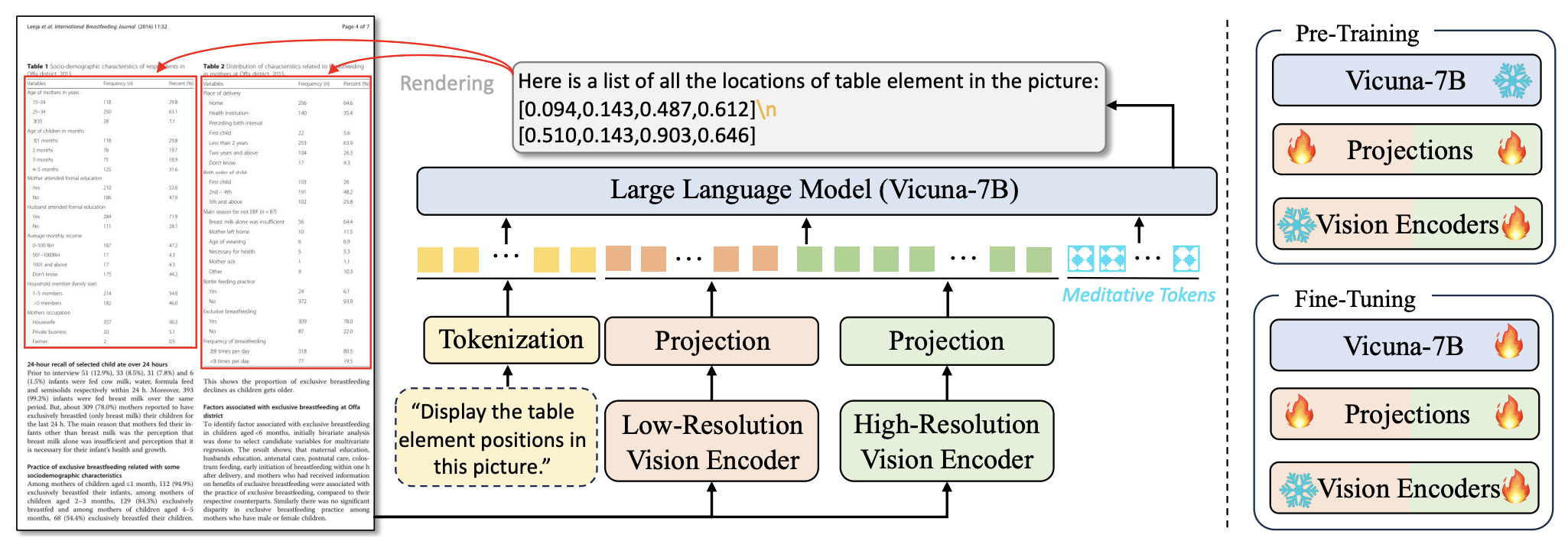

# TabPedia: Towards Comprehensive Visual Table Understanding with Concept Synergy

|

| 6 |

+

<p align="center">

|

| 7 |

+

<img src="assets/framework.png" width="800">

|

| 8 |

+

</p>

|

| 9 |

+

|

| 10 |

+

<p align="center">

|

| 11 |

+

📃 <a href="https://arxiv.org/pdf/2406.01326" target="_blank">Paper</a> | 🤗 <a href="https://huggingface.co/datasets/ByteDance/ComTQA" target="_blank">Hugging Face</a> | 🚀 <a href="https://github.com/zhaowc-ustc/TabPedia-Towards-Comprehensive-Visual-Table-Understanding-with-Concept-Synergy" target="_blank">Github</a>

|

| 12 |

+

|

| 13 |

+

</p>

|

| 14 |

+

|

| 15 |

+

This repository includes our pretrained model and dount model for better evaluation.

|

| 16 |

+

|

| 17 |

+

|

| 18 |

+

## Citation

|

| 19 |

+

|

| 20 |

+

If you find this work useful, please consider citing our paper:

|

| 21 |

+

```

|

| 22 |

+

@article{zhao2024tabpedia,

|

| 23 |

+

title={TabPedia: Towards Comprehensive Visual Table Understanding with Concept Synergy},

|

| 24 |

+

author={Zhao, Weichao and Feng, Hao and Liu, Qi and Tang, Jingqun and Wei, Shu and Wu, Binghong and Liao, Lei and Ye, Yongjie and Liu, Hao and Li, Houqiang and others},

|

| 25 |

+

journal={arXiv preprint arXiv:2406.01326},

|

| 26 |

+

year={2024}

|

| 27 |

+

}

|

| 28 |

+

```

|

TabPedia/pretrained.pth

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:595cfa0e019b40f2bf9080db78a0ca820f940aa71f407f31b0fc1e20216a0980
+size 31634109147
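The three lines added for `TabPedia/pretrained.pth` are a Git LFS pointer, not the checkpoint itself: they record the spec version, a SHA-256 object id, and the size in bytes. As a rough sketch (the helper name `parse_lfs_pointer` and the returned dictionary layout are illustrative assumptions, not part of this repository), the pointer can be read like this to see how large the real download would be:

```python
def parse_lfs_pointer(text: str) -> dict:
    """Parse the `key value` lines of a Git LFS pointer file (hypothetical helper)."""
    fields = {}
    for line in text.strip().splitlines():
        # Each pointer line is a key, a single space, then the value.
        key, _, value = line.partition(" ")
        fields[key] = value
    # The oid has the form "<hash-algorithm>:<hex digest>".
    algo, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "hash_algo": algo,
        "digest": digest,
        "size_bytes": int(fields["size"]),
    }


# Pointer content copied from the diff above.
POINTER = """\
version https://git-lfs.github.com/spec/v1
oid sha256:595cfa0e019b40f2bf9080db78a0ca820f940aa71f407f31b0fc1e20216a0980
size 31634109147
"""

info = parse_lfs_pointer(POINTER)
size_gib = info["size_bytes"] / 2**30
print(f"{info['hash_algo']} digest, {size_gib:.2f} GiB")
```

With the values above, the checkpoint behind the pointer comes to roughly 29.5 GiB, so cloning without LFS smudging (or downloading only `donut-base/`) may be preferable when the full weights are not needed.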

assets/framework.png

ADDED


donut-base/.DS_Store

ADDED

Binary file (6.15 kB)

donut-base/README.md

ADDED

@@ -0,0 +1,3 @@
+---
+license: mit
+---

donut-base/added_tokens.json

ADDED

@@ -0,0 +1 @@
+{"<sep/>": 57522, "<s_iitcdip>": 57523, "<s_synthdog>": 57524}

donut-base/config.json

ADDED

@@ -0,0 +1,23 @@
+{
+  "align_long_axis": true,
+  "architectures": [
+    "DonutModel"
+  ],
+  "decoder_layer": 4,
+  "encoder_layer": [
+    2,
+    2,
+    14,
+    2
+  ],
+  "input_size": [
+    2560,
+    1920
+  ],
+  "max_length": 1536,
+  "max_position_embeddings": 1536,
+  "model_type": "donut",
+  "torch_dtype": "float32",
+  "transformers_version": "4.11.3",
+  "window_size": 10
+}
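For a quick sanity check of the `donut-base/config.json` values, the snippet below parses an inline copy of the file with the standard `json` module and derives a few summary numbers. It is only a sketch: reading `encoder_layer` as a list of per-stage block counts is an assumption based on the field's shape, and the variable names are illustrative.

```python
import json

# Inline copy of donut-base/config.json as added in this commit.
CONFIG_JSON = """
{
  "align_long_axis": true,
  "architectures": ["DonutModel"],
  "decoder_layer": 4,
  "encoder_layer": [2, 2, 14, 2],
  "input_size": [2560, 1920],
  "max_length": 1536,
  "max_position_embeddings": 1536,
  "model_type": "donut",
  "torch_dtype": "float32",
  "transformers_version": "4.11.3",
  "window_size": 10
}
"""

config = json.loads(CONFIG_JSON)

# Assumption: each entry of encoder_layer is the number of blocks in one encoder stage.
total_encoder_blocks = sum(config["encoder_layer"])

# Number of pixels in one input image at the configured resolution.
side_a, side_b = config["input_size"]
pixels_per_image = side_a * side_b

# The decoding length and the position-embedding table should agree.
lengths_match = config["max_length"] == config["max_position_embeddings"]

print(total_encoder_blocks, pixels_per_image, lengths_match)
```

Checks like `lengths_match` are cheap to run before loading the roughly 1 GB `pytorch_model.bin`, since a mismatch between the two length fields would likely surface only as a shape error much later.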

donut-base/gitattributes.txt

ADDED

@@ -0,0 +1,31 @@
+*.7z filter=lfs diff=lfs merge=lfs -text
+*.arrow filter=lfs diff=lfs merge=lfs -text
+*.bin filter=lfs diff=lfs merge=lfs -text
+*.bz2 filter=lfs diff=lfs merge=lfs -text
+*.ftz filter=lfs diff=lfs merge=lfs -text
+*.gz filter=lfs diff=lfs merge=lfs -text
+*.h5 filter=lfs diff=lfs merge=lfs -text
+*.joblib filter=lfs diff=lfs merge=lfs -text
+*.lfs.* filter=lfs diff=lfs merge=lfs -text
+*.model filter=lfs diff=lfs merge=lfs -text
+*.msgpack filter=lfs diff=lfs merge=lfs -text
+*.npy filter=lfs diff=lfs merge=lfs -text
+*.npz filter=lfs diff=lfs merge=lfs -text
+*.onnx filter=lfs diff=lfs merge=lfs -text
+*.ot filter=lfs diff=lfs merge=lfs -text
+*.parquet filter=lfs diff=lfs merge=lfs -text
+*.pb filter=lfs diff=lfs merge=lfs -text
+*.pickle filter=lfs diff=lfs merge=lfs -text
+*.pkl filter=lfs diff=lfs merge=lfs -text
+*.pt filter=lfs diff=lfs merge=lfs -text
+*.pth filter=lfs diff=lfs merge=lfs -text
+*.rar filter=lfs diff=lfs merge=lfs -text
+saved_model/**/* filter=lfs diff=lfs merge=lfs -text
+*.tar.* filter=lfs diff=lfs merge=lfs -text
+*.tflite filter=lfs diff=lfs merge=lfs -text
+*.tgz filter=lfs diff=lfs merge=lfs -text
+*.wasm filter=lfs diff=lfs merge=lfs -text
+*.xz filter=lfs diff=lfs merge=lfs -text
+*.zip filter=lfs diff=lfs merge=lfs -text
+*.zstandard filter=lfs diff=lfs merge=lfs -text
+*tfevents* filter=lfs diff=lfs merge=lfs -text
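Each rule in `donut-base/gitattributes.txt` maps a filename pattern to the LFS filter. The sketch below approximates that matching with Python's `fnmatch` to predict which files in this commit such rules would route through LFS. Two caveats: `fnmatch` is only an approximation of Git's own pattern semantics (it does not handle `saved_model/**/*`-style path rules the way Git does), and the file here is named `gitattributes.txt`, so whether Git actually applies it is not something this diff confirms. The function name `is_lfs_tracked` and the pattern subset are illustrative.

```python
from fnmatch import fnmatchcase
from pathlib import PurePosixPath

# A subset of the basename patterns from donut-base/gitattributes.txt.
LFS_PATTERNS = ["*.bin", "*.model", "*.pt", "*.pth", "*.zip", "*tfevents*"]


def is_lfs_tracked(path: str, patterns=LFS_PATTERNS) -> bool:
    """Approximate Git's rule that slash-free patterns match the file's basename."""
    name = PurePosixPath(path).name
    return any(fnmatchcase(name, pattern) for pattern in patterns)


# Paths taken from the file list of this commit.
for path in [
    "TabPedia/pretrained.pth",
    "donut-base/pytorch_model.bin",
    "donut-base/sentencepiece.bpe.model",
    "donut-base/config.json",
]:
    print(path, is_lfs_tracked(path))
```

The prediction is consistent with the diff itself: the three files that match a pattern appear here as LFS pointers, while `config.json` is stored as plain text.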

donut-base/pytorch_model.bin

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:d21e8b5e708168f4f9885d18f8bc95ad6950439e7ac518161828ff0b27b984e8
+size 1018458179

donut-base/sentencepiece.bpe.model

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:cb9e3dce4c326195d08fc3dd0f7e2eee1da8595c847bf4c1a9c78b7a82d47e2d
+size 1296245

donut-base/special_tokens_map.json

ADDED

@@ -0,0 +1 @@
+{"bos_token": "<s>", "eos_token": "</s>", "unk_token": "<unk>", "sep_token": "</s>", "pad_token": "<pad>", "cls_token": "<s>", "mask_token": {"content": "<mask>", "single_word": false, "lstrip": true, "rstrip": false, "normalized": true}, "additional_special_tokens": ["<s_iitcdip>", "<s_synthdog>"]}
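`added_tokens.json` and `special_tokens_map.json` describe overlapping sets of tokens, so a small cross-check can catch mismatches before the tokenizer is loaded. The sketch below uses inline copies of the two files (the special-tokens map is trimmed to the one key used here) and plain `json`. The variable names are illustrative, and no tokenizer library is involved.

```python
import json

# Inline copy of donut-base/added_tokens.json from this commit.
added_tokens = json.loads(
    '{"<sep/>": 57522, "<s_iitcdip>": 57523, "<s_synthdog>": 57524}'
)

# Trimmed copy of donut-base/special_tokens_map.json (only the key used below).
special_tokens_map = json.loads(
    '{"additional_special_tokens": ["<s_iitcdip>", "<s_synthdog>"]}'
)

extra_special = special_tokens_map["additional_special_tokens"]

# Special tokens that were declared but never assigned an id.
missing_ids = [tok for tok in extra_special if tok not in added_tokens]

# Added tokens that are not listed as additional special tokens.
not_listed_as_special = [tok for tok in added_tokens if tok not in extra_special]

# Added ids are expected to form one contiguous block appended to the base vocabulary.
ids = sorted(added_tokens.values())
ids_contiguous = ids == list(range(ids[0], ids[0] + len(ids)))

print(missing_ids, not_listed_as_special, ids_contiguous)
```

On these two files, every additional special token has an id, the ids 57522 to 57524 are contiguous, and `<sep/>` is the only added token that is not also listed under `additional_special_tokens`.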

donut-base/tokenizer_config.json

ADDED

@@ -0,0 +1 @@
+{"bos_token": "<s>", "eos_token": "</s>", "unk_token": "<unk>", "sep_token": "</s>", "cls_token": "<s>", "pad_token": "<pad>", "mask_token": {"content": "<mask>", "single_word": false, "lstrip": true, "rstrip": false, "normalized": true, "__type": "AddedToken"}, "sp_model_kwargs": {}, "special_tokens_map_file": null, "tokenizer_file": "/root/.cache/huggingface/transformers/213c2041358e63047b407f94cde1ae23904d31a3bceb57eab291028c1e949437.7135a4b25ac726e19641f0d68803ff02bad960d6319064f55fa9c536929b86fc", "name_or_path": "hyunwoongko/asian-bart-ecjk", "tokenizer_class": "XLMRobertaTokenizer"}