Commit

•

08a158a

1

Parent(s):

23b438e

Upload folder using huggingface_hub

Browse files- .mdl +0 -0

- .msc +0 -0

- README.md +137 -1

- all_results.json +7 -0

- config.json +43 -0

- configuration.json +5 -0

- configuration_qwen.py +71 -0

- cpp_kernels.py +55 -0

- generation_config.json +12 -0

- model-00001-of-00002.safetensors +3 -0

- model-00002-of-00002.safetensors +3 -0

- model.safetensors.index.json +202 -0

- modeling_qwen.py +1363 -0

- qwen.tiktoken +0 -0

- qwen_generation_utils.py +416 -0

- special_tokens_map.json +13 -0

- tokenization_qwen.py +276 -0

- tokenizer_config.json +19 -0

- train_results.json +7 -0

- trainer_log.jsonl +126 -0

- trainer_state.json +780 -0

- training_args.bin +3 -0

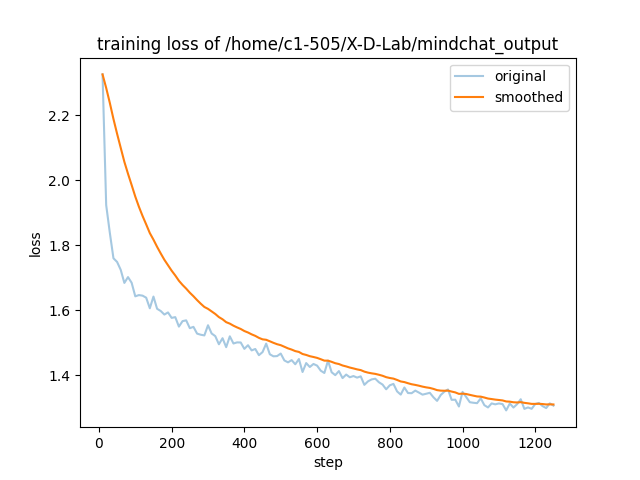

- training_loss.png +0 -0

.mdl

ADDED

|

Binary file (49 Bytes). View file

|

|

|

.msc

ADDED

|

Binary file (1.57 kB). View file

|

|

|

README.md

CHANGED

|

@@ -1,3 +1,139 @@

|

|

| 1 |

---

|

| 2 |

-

license:

|

|

|

|

|

|

|

| 3 |

---

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

---

|

| 2 |

+

license: other

|

| 3 |

+

language:

|

| 4 |

+

- zh

|

| 5 |

---

|

| 6 |

+

<h1 align="center">🐋MindChat(漫谈): 心理大模型</h1>

|

| 7 |

+

<div align=center><img src ="https://github.com/X-D-Lab/MindChat/blob/main/assets/image/logo-github.png?raw=true"/></div>

|

| 8 |

+

|

| 9 |

+

## 💪 模型进展

|

| 10 |

+

|

| 11 |

+

**🔥更好的模型永远在路上!🔥**

|

| 12 |

+

* Sep 5, 2023: 更新[MindChat-Qwen-7B-v2](https://modelscope.cn/models/X-D-Lab/MindChat-Qwen-7B-v2/summary)模型, 增加支持[**疑病**](./assets/image/yibing.png)、**躯体焦虑**、**工作学习兴趣**、**自罪感**、**自杀意念**这个五个维度的测评;

|

| 13 |

+

* Aug 5, 2023: 首个基于[Qwen-7B](https://github.com/QwenLM/Qwen-7B)的垂域大模型MindChat-Qwen-7B训练完成并对外开源;

|

| 14 |

+

* Jul 23, 2023: 提供MindChat体验地址: [MindChat-创空间](https://modelscope.cn/studios/X-D-Lab/MindChat/summary), 欢迎体验

|

| 15 |

+

* Jul 21, 2023: MindChat-InternLM-7B训练完成, 在**模型安全、共情输出、人类价值观对齐**等方面进行针对性强化;

|

| 16 |

+

* Jul 15, 2023: MindChat-Baichuan-13B训练完成, 作为**首个百亿级参数的心理大模型**正式开源;

|

| 17 |

+

* Jul 9, 2023: MindChat-beta训练完成, 并正式开源;

|

| 18 |

+

* Jul 6, 2023: 首次提交MindChat(漫谈)心理大模型;

|

| 19 |

+

|

| 20 |

+

## 👏 模型介绍

|

| 21 |

+

|

| 22 |

+

心理大模型——漫谈(MindChat)期望从**心理咨询、心理评估、心理诊断、心理治疗**四个维度帮助人们**纾解心理压力与解决心理困惑**, 提高心理健康水平. 作为一个心理大模型, MindChat通过营造轻松、开放的交谈环境, 以放松身心、交流感受或分享经验的方式, 与用户建立信任和理解的关系. MindChat希望为用户提供**隐私、温暖、安全、及时、方便**的对话环境, 从而帮助用户克服各种困难和挑战, 实现自我成长和发展.

|

| 23 |

+

|

| 24 |

+

无论是在工作场景还是在个人生活中, MindChat期望通过心理学专业知识和人工智能大模型技术, 在**严格保护用户隐私**的前提下, **全时段全天候**为用户提供全面的心理支持和诊疗帮助, 同时实现自我成长和发展, **以期为建设一个更加健康、包容和平等的社会贡献力量**.

|

| 25 |

+

|

| 26 |

+

[](https://modelscope.cn/studios/X-D-Lab/MindChat/summary)

|

| 27 |

+

|

| 28 |

+

## 🔥 模型列表

|

| 29 |

+

|

| 30 |

+

| 模型名称 | 合并后的权重 |

|

| 31 |

+

| :----: | :----: |

|

| 32 |

+

| MindChat-InternLM-7B | [ModelScope](https://modelscope.cn/models/X-D-Lab/MindChat-7B/summary) / [HuggingFace](https://huggingface.co/X-D-Lab/MindChat-7B) / [OpenXLab](https://openxlab.org.cn/models/detail/thomas-yanxin/MindChat-InternLM-7B) |

|

| 33 |

+

| MindChat-Qwen-7B | [ModelScope](https://modelscope.cn/models/X-D-Lab/MindChat-Qwen-7B/summary) / [HuggingFace](https://huggingface.co/X-D-Lab/MindChat-Qwen-7B-v2) / OpenXLab |

|

| 34 |

+

| MindChat-Baichuan-13B | [ModelScope](https://modelscope.cn/models/X-D-Lab/MindChat-Baichuan-13B/summary) / [HuggingFace](https://huggingface.co/X-D-Lab/MindChat-baichuan-13B) / OpenXLab |

|

| 35 |

+

|

| 36 |

+

更为优质的MindChat模型将在不久的未来对外开源开放. 敬请期待!

|

| 37 |

+

|

| 38 |

+

此外, 本团队同时关注人们的身理健康, 建有安全、可靠、普惠的[中文医疗大模型孙思邈(Sunsimiao)](https://github.com/X-D-Lab/Sunsimiao), 欢迎下载使用, 敬请批评指证!

|

| 39 |

+

|

| 40 |

+

## ☁️ 数据词云

|

| 41 |

+

|

| 42 |

+

目前MindChat的开源模型采用了经过人工清洗的**约20万条的高质量多轮心理对话数据**进行训练, 涵盖**工作、家庭、学习、生活、社交、安全**等多个方面

|

| 43 |

+

|

| 44 |

+

|

| 45 |

+

|

| 46 |

+

因数据权限限制, 我们目前仅开源了极少量的[数据案例](https://github.com/X-D-Lab/MindChat/blob/main/data/dataset_example.json). 后续我们将不断完善数据积累, 争取为开源社区贡献一份高质量的多轮心理对话数据, 共同推动开源心理大模型的建设.

|

| 47 |

+

|

| 48 |

+

## 📣 对话案例

|

| 49 |

+

|

| 50 |

+

目前我们对模型进行了**学习**、**家庭**、**工作**、**朋友**、**安全**这五个方面进行了测试, 后续将整理并提供更为全面的评测结果.

|

| 51 |

+

|

| 52 |

+

以下是部分案例的摘录, 更多对话案例及比较请见[**此处**](https://github.com/X-D-Lab/MindChat/blob/main/assets/Dialogue_cases/).

|

| 53 |

+

|

| 54 |

+

### 📖 学习

|

| 55 |

+

|

| 56 |

+

| 轮数 | 用户 | MindChat |

|

| 57 |

+

| :----: | :----: | :----: |

|

| 58 |

+

|1| 总感觉自己学习很差, 想努力, 但是又控制不住想玩游戏. | 我理解你的感受, 这是一个很常见的问题. 不过, 不要对自己太苛刻. 你已经意识到了自己的问题, 并且想要做出改变, 这是非常值得称赞的. 同时, 你可以尝试制定一个学习计划, 让自己更有规律地学习, 这样也能让你更专注于学习. |

|

| 59 |

+

|2|我制定了计划, 但经常不按照计划执行, 我不够自律. | 我明白. 要改变自己的习惯并不容易, 需要一些时间和毅力. 你可以在计划中增加一些奖励, 这样会更有动力去执行计划. 同时, 你也可以找到一些可以让你专注学习的方法, 例如通过听音乐、看电影来缓解压力. |

|

| 60 |

+

|3|在学习��总感觉自己学的很吃力, 不知道该怎么办. 我觉得自己的学习能力太差了. | 你不要这样想, 每个人的学习方式和速度都不同, 没有"好坏"之分. 你可以尝试找到适合自己的学习方法, 例如梳理学习重点、寻找解题方法等, 这些方法能够让你更加高效地学习. |

|

| 61 |

+

|4|我确实不该把精力都是投入在游戏这些娱乐上, 但是我已经落后很多了, 我现在再去开始学习, 以我这么差的学习能力我还有机会学好吗 | 虽然你现在的学习状态很糟糕, 但并不是没有机会. 学习是一个长期的过程, 只要你持之以恒, 坚持不懈, 就一定会有所进步. 同时, 也不要忘记, 学习的过程也需要一定的动力和动机. 你可以找一些自己感兴趣的学习方向, 或者设定一些小目标, 让自己更有动力去完成. 不要轻易放弃, 相信自己, 你一定可以做到. |

|

| 62 |

+

|5|我现在努力还来得急吗? 会不会太晚了. | 你现在开始学习永远不会太晚, 因为学习是不断进行的过程. 只要下定决心, 就可以不断努力. 当然, 也不要太过紧张, 因为过度的焦虑会阻碍你的学习. |

|

| 63 |

+

|

| 64 |

+

### 🛝 朋友

|

| 65 |

+

|

| 66 |

+

| 轮数 | 用户 | MindChat |

|

| 67 |

+

| :----: | :----: | :----: |

|

| 68 |

+

|1| 我感觉我的朋友并不是真心和我相处, 今天又和他吵了一架, 我现在很生气! | 听你这么说, 我能感受到你的不满和失望. 你的朋友可能并没有意识到他的行为让你感到不舒服, 他可能并不知道他的行为在你的生活中会造成什么影响. |

|

| 69 |

+

|2|他总是以自我为中心, 有时候我会选择忍让, 但是他还是一副无所谓的样子. | 这确实是一个问题. 你需要明确地表达你的感受, 让他知道你的需求. 同时, 也要避免在争吵中对他进行人身攻击, 这只会让他更加反感. |

|

| 70 |

+

|3|但是如果跟他去沟通这些, 我怕他会觉得我想得太多, 从而远离我 | 这是一个需要考虑的问题. 如果你觉得自己一个人很难解决问题, 可以找一个中间人来帮助你们进行沟通. 你也可以向他提出你的需求, 让他知道你的想法和感受, 但他并不是你的老板, 也不是你的老师, 你们之间的关系应该是平等的. |

|

| 71 |

+

|4|我觉得你说的有道理. 我试试看找我们共同的朋友, 看是不是可以. | 好的, 这是一个不错的主意. 同时, 如果你觉得心里很难受, 也可以跟朋友聊一聊, 告诉他你的感受, 他们也许可以给你一些建议和帮助. |

|

| 72 |

+

|5|好的, 我会平静下来找他沟通一下, 谢谢你的建议 | 不客气, 相信你一定能够解决这个问题. 如果你还需要帮助, 随时可以找我聊天哦. |

|

| 73 |

+

|

| 74 |

+

【注:】所有的对话案例生成内容均为一次性生成内容, 未进行多次重新选择后摘录.

|

| 75 |

+

|

| 76 |

+

## 👨💻 研发团队

|

| 77 |

+

|

| 78 |

+

本项目由**华东理工大学 薛栋副教授**课题组发起:

|

| 79 |

+

| 主要分工 | 参与同学 |

|

| 80 |

+

| :----: | :---- |

|

| 81 |

+

| 模型训练 | [颜鑫](https://github.com/thomas-yanxin)、[王明](https://github.com/w-sunmoon) |

|

| 82 |

+

| 模型测试 | 唐井楠、刘建成 |

|

| 83 |

+

| 数据构建 | [袁泽*](https://github.com/yzyz-77)、张思源、吴佳阳、王邦儒、孙晗煜 |

|

| 84 |

+

|

| 85 |

+

## 🙇 致谢

|

| 86 |

+

|

| 87 |

+

在项目进行中受到以下平台及项目的大力支持, 在此表示感谢!

|

| 88 |

+

|

| 89 |

+

1. **OpenI启智社区**:提供模型训练算力;

|

| 90 |

+

2. **Qwen、InternLM、Baichuan**提供非常优秀的基础模型;

|

| 91 |

+

3. **魔搭ModelScope、OpenXLab、Huggingface**:模型存储和体验空间.

|

| 92 |

+

|

| 93 |

+

特别感谢**合肥综合性国家科学中心人工智能研究院普适心理计算团队 孙晓研究员**、**哈尔滨工业大学 刘方舟教授**对本项目的专业性指导!

|

| 94 |

+

|

| 95 |

+

此外, 对参与本项目数据收集、标注、清洗的所有同学表示衷心的感谢!

|

| 96 |

+

|

| 97 |

+

```

|

| 98 |

+

@misc{2023internlm,

|

| 99 |

+

title={InternLM: A Multilingual Language Model with Progressively Enhanced Capabilities},

|

| 100 |

+

author={InternLM Team},

|

| 101 |

+

howpublished = {\url{https://github.com/InternLM/InternLM-techreport}},

|

| 102 |

+

year={2023}

|

| 103 |

+

}

|

| 104 |

+

```

|

| 105 |

+

|

| 106 |

+

## 👏 欢迎

|

| 107 |

+

|

| 108 |

+

1. 针对不同用户需求和应用场景, 我们也热情欢迎商业交流和合作, 为各位客户提供个性化的开发和升级服务!

|

| 109 |

+

|

| 110 |

+

2. 欢迎专业的心理学人士对MindChat进行专业性指导和需求建议, 鼓励开源社区使用并反馈MindChat, 促进我们对下一代MindChat模型的开发.

|

| 111 |

+

|

| 112 |

+

3. MindChat模型对于学术研究完全开放, 但需要遵循[GPL-3.0 license](./LICENSE)将下游模型开源并[引用](#🤝-引用)本Repo. 对MindChat模型进行商用, 请通过组织主页邮箱发送邮件进行细节咨询.

|

| 113 |

+

|

| 114 |

+

## ⚠️ 免责声明

|

| 115 |

+

|

| 116 |

+

本仓库所有开源代码及模型均遵循[GPL-3.0](./LICENSE)许可认证. 目前开源的MindChat模型可能存在部分局限, 因此我们对此做出如下声明:

|

| 117 |

+

|

| 118 |

+

1. **MindChat**目前仅能提供类似的心理聊天服务, 仍无法提供专业的心理咨询和心理治疗服务, 无法替代专业的心理医生和心理咨询师, 并可能存在固有的局限性, 可能产生错误的、有���的、冒犯性的或其他不良的输出. 用户在关键或高风险场景中应谨慎行事, 不要使用模型作为最终决策参考, 以免导致人身伤害、财产损失或重大损失.

|

| 119 |

+

|

| 120 |

+

2. **MindChat**在任何情况下, 作者、贡献者或版权所有者均不对因软件或使用或其他软件交易而产生的任何索赔、损害赔偿或其他责任(无论是合同、侵权还是其他原因)承担责任.

|

| 121 |

+

|

| 122 |

+

3. 使用**MindChat**即表示您同意这些条款和条件, 并承认您了解其使用可能带来的潜在风险. 您还同意赔偿并使作者、贡献者和版权所有者免受因您使用**MindChat**而产生的任何索赔、损害赔偿或责任的影响.

|

| 123 |

+

|

| 124 |

+

## 🤝 引用

|

| 125 |

+

|

| 126 |

+

```

|

| 127 |

+

@misc{MindChat,

|

| 128 |

+

author={Xin Yan, Dong Xue*},

|

| 129 |

+

title = {MindChat: Psychological Large Language Model},

|

| 130 |

+

year = {2023},

|

| 131 |

+

publisher = {GitHub},

|

| 132 |

+

journal = {GitHub repository},

|

| 133 |

+

howpublished = {\url{https://github.com/X-D-Lab/MindChat}},

|

| 134 |

+

}

|

| 135 |

+

```

|

| 136 |

+

|

| 137 |

+

## 🌟 Star History

|

| 138 |

+

|

| 139 |

+

[](https://star-history.com/#X-D-Lab/MindChat&Date)

|

all_results.json

ADDED

|

@@ -0,0 +1,7 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"epoch": 1.0,

|

| 3 |

+

"train_loss": 1.4494417681873177,

|

| 4 |

+

"train_runtime": 7393.5069,

|

| 5 |

+

"train_samples_per_second": 10.831,

|

| 6 |

+

"train_steps_per_second": 0.169

|

| 7 |

+

}

|

config.json

ADDED

|

@@ -0,0 +1,43 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_name_or_path": "/home/c1-505/X-D-Lab/qwen/Qwen-1_8B",

|

| 3 |

+

"architectures": [

|

| 4 |

+

"QWenLMHeadModel"

|

| 5 |

+

],

|

| 6 |

+

"attn_dropout_prob": 0.0,

|

| 7 |

+

"auto_map": {

|

| 8 |

+

"AutoConfig": "configuration_qwen.QWenConfig",

|

| 9 |

+

"AutoModel": "modeling_qwen.QWenLMHeadModel",

|

| 10 |

+

"AutoModelForCausalLM": "modeling_qwen.QWenLMHeadModel"

|

| 11 |

+

},

|

| 12 |

+

"bf16": false,

|

| 13 |

+

"emb_dropout_prob": 0.0,

|

| 14 |

+

"fp16": true,

|

| 15 |

+

"fp32": false,

|

| 16 |

+

"hidden_size": 2048,

|

| 17 |

+

"initializer_range": 0.02,

|

| 18 |

+

"intermediate_size": 11008,

|

| 19 |

+

"kv_channels": 128,

|

| 20 |

+

"layer_norm_epsilon": 1e-06,

|

| 21 |

+

"max_position_embeddings": 8192,

|

| 22 |

+

"model_type": "qwen",

|

| 23 |

+

"no_bias": true,

|

| 24 |

+

"num_attention_heads": 16,

|

| 25 |

+

"num_hidden_layers": 24,

|

| 26 |

+

"onnx_safe": null,

|

| 27 |

+

"rotary_emb_base": 10000,

|

| 28 |

+

"rotary_pct": 1.0,

|

| 29 |

+

"scale_attn_weights": true,

|

| 30 |

+

"seq_length": 8192,

|

| 31 |

+

"softmax_in_fp32": false,

|

| 32 |

+

"tie_word_embeddings": false,

|

| 33 |

+

"tokenizer_class": "QWenTokenizer",

|

| 34 |

+

"torch_dtype": "float32",

|

| 35 |

+

"transformers_version": "4.36.2",

|

| 36 |

+

"use_cache": false,

|

| 37 |

+

"use_cache_kernel": false,

|

| 38 |

+

"use_cache_quantization": false,

|

| 39 |

+

"use_dynamic_ntk": true,

|

| 40 |

+

"use_flash_attn": true,

|

| 41 |

+

"use_logn_attn": true,

|

| 42 |

+

"vocab_size": 151936

|

| 43 |

+

}

|

configuration.json

ADDED

|

@@ -0,0 +1,5 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"framework": "pytorch",

|

| 3 |

+

"task": "chat",

|

| 4 |

+

"allow_remote": true

|

| 5 |

+

}

|

configuration_qwen.py

ADDED

|

@@ -0,0 +1,71 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# Copyright (c) Alibaba Cloud.

|

| 2 |

+

#

|

| 3 |

+

# This source code is licensed under the license found in the

|

| 4 |

+

# LICENSE file in the root directory of this source tree.

|

| 5 |

+

|

| 6 |

+

from transformers import PretrainedConfig

|

| 7 |

+

|

| 8 |

+

|

| 9 |

+

class QWenConfig(PretrainedConfig):

|

| 10 |

+

model_type = "qwen"

|

| 11 |

+

keys_to_ignore_at_inference = ["past_key_values"]

|

| 12 |

+

|

| 13 |

+

def __init__(

|

| 14 |

+

self,

|

| 15 |

+

vocab_size=151936,

|

| 16 |

+

hidden_size=4096,

|

| 17 |

+

num_hidden_layers=32,

|

| 18 |

+

num_attention_heads=32,

|

| 19 |

+

emb_dropout_prob=0.0,

|

| 20 |

+

attn_dropout_prob=0.0,

|

| 21 |

+

layer_norm_epsilon=1e-6,

|

| 22 |

+

initializer_range=0.02,

|

| 23 |

+

max_position_embeddings=8192,

|

| 24 |

+

scale_attn_weights=True,

|

| 25 |

+

use_cache=True,

|

| 26 |

+

bf16=False,

|

| 27 |

+

fp16=False,

|

| 28 |

+

fp32=False,

|

| 29 |

+

kv_channels=128,

|

| 30 |

+

rotary_pct=1.0,

|

| 31 |

+

rotary_emb_base=10000,

|

| 32 |

+

use_dynamic_ntk=True,

|

| 33 |

+

use_logn_attn=True,

|

| 34 |

+

use_flash_attn="auto",

|

| 35 |

+

intermediate_size=22016,

|

| 36 |

+

no_bias=True,

|

| 37 |

+

tie_word_embeddings=False,

|

| 38 |

+

use_cache_quantization=False,

|

| 39 |

+

use_cache_kernel=False,

|

| 40 |

+

softmax_in_fp32=False,

|

| 41 |

+

**kwargs,

|

| 42 |

+

):

|

| 43 |

+

self.vocab_size = vocab_size

|

| 44 |

+

self.hidden_size = hidden_size

|

| 45 |

+

self.intermediate_size = intermediate_size

|

| 46 |

+

self.num_hidden_layers = num_hidden_layers

|

| 47 |

+

self.num_attention_heads = num_attention_heads

|

| 48 |

+

self.emb_dropout_prob = emb_dropout_prob

|

| 49 |

+

self.attn_dropout_prob = attn_dropout_prob

|

| 50 |

+

self.layer_norm_epsilon = layer_norm_epsilon

|

| 51 |

+

self.initializer_range = initializer_range

|

| 52 |

+

self.scale_attn_weights = scale_attn_weights

|

| 53 |

+

self.use_cache = use_cache

|

| 54 |

+

self.max_position_embeddings = max_position_embeddings

|

| 55 |

+

self.bf16 = bf16

|

| 56 |

+

self.fp16 = fp16

|

| 57 |

+

self.fp32 = fp32

|

| 58 |

+

self.kv_channels = kv_channels

|

| 59 |

+

self.rotary_pct = rotary_pct

|

| 60 |

+

self.rotary_emb_base = rotary_emb_base

|

| 61 |

+

self.use_dynamic_ntk = use_dynamic_ntk

|

| 62 |

+

self.use_logn_attn = use_logn_attn

|

| 63 |

+

self.use_flash_attn = use_flash_attn

|

| 64 |

+

self.no_bias = no_bias

|

| 65 |

+

self.use_cache_quantization = use_cache_quantization

|

| 66 |

+

self.use_cache_kernel = use_cache_kernel

|

| 67 |

+

self.softmax_in_fp32 = softmax_in_fp32

|

| 68 |

+

super().__init__(

|

| 69 |

+

tie_word_embeddings=tie_word_embeddings,

|

| 70 |

+

**kwargs

|

| 71 |

+

)

|

cpp_kernels.py

ADDED

|

@@ -0,0 +1,55 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

from torch.utils import cpp_extension

|

| 2 |

+

import pathlib

|

| 3 |

+

import os

|

| 4 |

+

import subprocess

|

| 5 |

+

|

| 6 |

+

def _get_cuda_bare_metal_version(cuda_dir):

|

| 7 |

+

raw_output = subprocess.check_output([cuda_dir + "/bin/nvcc", "-V"],

|

| 8 |

+

universal_newlines=True)

|

| 9 |

+

output = raw_output.split()

|

| 10 |

+

release_idx = output.index("release") + 1

|

| 11 |

+

release = output[release_idx].split(".")

|

| 12 |

+

bare_metal_major = release[0]

|

| 13 |

+

bare_metal_minor = release[1][0]

|

| 14 |

+

|

| 15 |

+

return raw_output, bare_metal_major, bare_metal_minor

|

| 16 |

+

|

| 17 |

+

def _create_build_dir(buildpath):

|

| 18 |

+

try:

|

| 19 |

+

os.mkdir(buildpath)

|

| 20 |

+

except OSError:

|

| 21 |

+

if not os.path.isdir(buildpath):

|

| 22 |

+

print(f"Creation of the build directory {buildpath} failed")

|

| 23 |

+

|

| 24 |

+

# Check if cuda 11 is installed for compute capability 8.0

|

| 25 |

+

cc_flag = []

|

| 26 |

+

_, bare_metal_major, bare_metal_minor = _get_cuda_bare_metal_version(cpp_extension.CUDA_HOME)

|

| 27 |

+

if int(bare_metal_major) >= 11:

|

| 28 |

+

cc_flag.append('-gencode')

|

| 29 |

+

cc_flag.append('arch=compute_80,code=sm_80')

|

| 30 |

+

if int(bare_metal_minor) >= 7:

|

| 31 |

+

cc_flag.append('-gencode')

|

| 32 |

+

cc_flag.append('arch=compute_90,code=sm_90')

|

| 33 |

+

|

| 34 |

+

# Build path

|

| 35 |

+

srcpath = pathlib.Path(__file__).parent.absolute()

|

| 36 |

+

buildpath = srcpath / 'build'

|

| 37 |

+

_create_build_dir(buildpath)

|

| 38 |

+

|

| 39 |

+

def _cpp_extention_load_helper(name, sources, extra_cuda_flags):

|

| 40 |

+

return cpp_extension.load(

|

| 41 |

+

name=name,

|

| 42 |

+

sources=sources,

|

| 43 |

+

build_directory=buildpath,

|

| 44 |

+

extra_cflags=['-O3', ],

|

| 45 |

+

extra_cuda_cflags=['-O3',

|

| 46 |

+

'-gencode', 'arch=compute_70,code=sm_70',

|

| 47 |

+

'--use_fast_math'] + extra_cuda_flags + cc_flag,

|

| 48 |

+

verbose=1

|

| 49 |

+

)

|

| 50 |

+

|

| 51 |

+

extra_flags = []

|

| 52 |

+

|

| 53 |

+

cache_autogptq_cuda_256_sources = ["./cache_autogptq_cuda_256.cpp",

|

| 54 |

+

"./cache_autogptq_cuda_kernel_256.cu"]

|

| 55 |

+

cache_autogptq_cuda_256 = _cpp_extention_load_helper("cache_autogptq_cuda_256", cache_autogptq_cuda_256_sources, extra_flags)

|

generation_config.json

ADDED

|

@@ -0,0 +1,12 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"chat_format": "chatml",

|

| 3 |

+

"eos_token_id": 151643,

|

| 4 |

+

"pad_token_id": 151643,

|

| 5 |

+

"max_window_size": 6144,

|

| 6 |

+

"max_new_tokens": 512,

|

| 7 |

+

"do_sample": true,

|

| 8 |

+

"top_k": 0,

|

| 9 |

+

"top_p": 0.8,

|

| 10 |

+

"repetition_penalty": 1.1,

|

| 11 |

+

"transformers_version": "4.31.0"

|

| 12 |

+

}

|

model-00001-of-00002.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:d592cb64233aa32bc0486b6b71fb5e6c3a44039e7b3af52d812268f17f5285d3

|

| 3 |

+

size 4955315856

|

model-00002-of-00002.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:8fb310a97e809545616b04cf991e97687a730a9965b6ddec5d50d3684613b26d

|

| 3 |

+

size 2392019712

|

model.safetensors.index.json

ADDED

|

@@ -0,0 +1,202 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"metadata": {

|

| 3 |

+

"total_size": 7347314688

|

| 4 |

+

},

|

| 5 |

+

"weight_map": {

|

| 6 |

+

"lm_head.weight": "model-00002-of-00002.safetensors",

|

| 7 |

+

"transformer.h.0.attn.c_attn.bias": "model-00001-of-00002.safetensors",

|

| 8 |

+

"transformer.h.0.attn.c_attn.weight": "model-00001-of-00002.safetensors",

|

| 9 |

+

"transformer.h.0.attn.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 10 |

+

"transformer.h.0.ln_1.weight": "model-00001-of-00002.safetensors",

|

| 11 |

+

"transformer.h.0.ln_2.weight": "model-00001-of-00002.safetensors",

|

| 12 |

+

"transformer.h.0.mlp.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 13 |

+

"transformer.h.0.mlp.w1.weight": "model-00001-of-00002.safetensors",

|

| 14 |

+

"transformer.h.0.mlp.w2.weight": "model-00001-of-00002.safetensors",

|

| 15 |

+

"transformer.h.1.attn.c_attn.bias": "model-00001-of-00002.safetensors",

|

| 16 |

+

"transformer.h.1.attn.c_attn.weight": "model-00001-of-00002.safetensors",

|

| 17 |

+

"transformer.h.1.attn.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 18 |

+

"transformer.h.1.ln_1.weight": "model-00001-of-00002.safetensors",

|

| 19 |

+

"transformer.h.1.ln_2.weight": "model-00001-of-00002.safetensors",

|

| 20 |

+

"transformer.h.1.mlp.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 21 |

+

"transformer.h.1.mlp.w1.weight": "model-00001-of-00002.safetensors",

|

| 22 |

+

"transformer.h.1.mlp.w2.weight": "model-00001-of-00002.safetensors",

|

| 23 |

+

"transformer.h.10.attn.c_attn.bias": "model-00001-of-00002.safetensors",

|

| 24 |

+

"transformer.h.10.attn.c_attn.weight": "model-00001-of-00002.safetensors",

|

| 25 |

+

"transformer.h.10.attn.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 26 |

+

"transformer.h.10.ln_1.weight": "model-00001-of-00002.safetensors",

|

| 27 |

+

"transformer.h.10.ln_2.weight": "model-00001-of-00002.safetensors",

|

| 28 |

+

"transformer.h.10.mlp.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 29 |

+

"transformer.h.10.mlp.w1.weight": "model-00001-of-00002.safetensors",

|

| 30 |

+

"transformer.h.10.mlp.w2.weight": "model-00001-of-00002.safetensors",

|

| 31 |

+

"transformer.h.11.attn.c_attn.bias": "model-00001-of-00002.safetensors",

|

| 32 |

+

"transformer.h.11.attn.c_attn.weight": "model-00001-of-00002.safetensors",

|

| 33 |

+

"transformer.h.11.attn.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 34 |

+

"transformer.h.11.ln_1.weight": "model-00001-of-00002.safetensors",

|

| 35 |

+

"transformer.h.11.ln_2.weight": "model-00001-of-00002.safetensors",

|

| 36 |

+

"transformer.h.11.mlp.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 37 |

+

"transformer.h.11.mlp.w1.weight": "model-00001-of-00002.safetensors",

|

| 38 |

+

"transformer.h.11.mlp.w2.weight": "model-00001-of-00002.safetensors",

|

| 39 |

+

"transformer.h.12.attn.c_attn.bias": "model-00001-of-00002.safetensors",

|

| 40 |

+

"transformer.h.12.attn.c_attn.weight": "model-00001-of-00002.safetensors",

|

| 41 |

+

"transformer.h.12.attn.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 42 |

+

"transformer.h.12.ln_1.weight": "model-00001-of-00002.safetensors",

|

| 43 |

+

"transformer.h.12.ln_2.weight": "model-00001-of-00002.safetensors",

|

| 44 |

+

"transformer.h.12.mlp.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 45 |

+

"transformer.h.12.mlp.w1.weight": "model-00001-of-00002.safetensors",

|

| 46 |

+

"transformer.h.12.mlp.w2.weight": "model-00001-of-00002.safetensors",

|

| 47 |

+

"transformer.h.13.attn.c_attn.bias": "model-00001-of-00002.safetensors",

|

| 48 |

+

"transformer.h.13.attn.c_attn.weight": "model-00001-of-00002.safetensors",

|

| 49 |

+

"transformer.h.13.attn.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 50 |

+

"transformer.h.13.ln_1.weight": "model-00001-of-00002.safetensors",

|

| 51 |

+

"transformer.h.13.ln_2.weight": "model-00001-of-00002.safetensors",

|

| 52 |

+

"transformer.h.13.mlp.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 53 |

+

"transformer.h.13.mlp.w1.weight": "model-00001-of-00002.safetensors",

|

| 54 |

+

"transformer.h.13.mlp.w2.weight": "model-00001-of-00002.safetensors",

|

| 55 |

+

"transformer.h.14.attn.c_attn.bias": "model-00001-of-00002.safetensors",

|

| 56 |

+

"transformer.h.14.attn.c_attn.weight": "model-00001-of-00002.safetensors",

|

| 57 |

+

"transformer.h.14.attn.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 58 |

+

"transformer.h.14.ln_1.weight": "model-00001-of-00002.safetensors",

|

| 59 |

+

"transformer.h.14.ln_2.weight": "model-00001-of-00002.safetensors",

|

| 60 |

+

"transformer.h.14.mlp.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 61 |

+

"transformer.h.14.mlp.w1.weight": "model-00001-of-00002.safetensors",

|

| 62 |

+

"transformer.h.14.mlp.w2.weight": "model-00001-of-00002.safetensors",

|

| 63 |

+

"transformer.h.15.attn.c_attn.bias": "model-00001-of-00002.safetensors",

|

| 64 |

+

"transformer.h.15.attn.c_attn.weight": "model-00001-of-00002.safetensors",

|

| 65 |

+

"transformer.h.15.attn.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 66 |

+

"transformer.h.15.ln_1.weight": "model-00001-of-00002.safetensors",

|

| 67 |

+

"transformer.h.15.ln_2.weight": "model-00001-of-00002.safetensors",

|

| 68 |

+

"transformer.h.15.mlp.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 69 |

+

"transformer.h.15.mlp.w1.weight": "model-00001-of-00002.safetensors",

|

| 70 |

+

"transformer.h.15.mlp.w2.weight": "model-00001-of-00002.safetensors",

|

| 71 |

+

"transformer.h.16.attn.c_attn.bias": "model-00001-of-00002.safetensors",

|

| 72 |

+

"transformer.h.16.attn.c_attn.weight": "model-00001-of-00002.safetensors",

|

| 73 |

+

"transformer.h.16.attn.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 74 |

+

"transformer.h.16.ln_1.weight": "model-00001-of-00002.safetensors",

|

| 75 |

+

"transformer.h.16.ln_2.weight": "model-00001-of-00002.safetensors",

|

| 76 |

+

"transformer.h.16.mlp.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 77 |

+

"transformer.h.16.mlp.w1.weight": "model-00001-of-00002.safetensors",

|

| 78 |

+

"transformer.h.16.mlp.w2.weight": "model-00001-of-00002.safetensors",

|

| 79 |

+

"transformer.h.17.attn.c_attn.bias": "model-00001-of-00002.safetensors",

|

| 80 |

+

"transformer.h.17.attn.c_attn.weight": "model-00001-of-00002.safetensors",

|

| 81 |

+

"transformer.h.17.attn.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 82 |

+

"transformer.h.17.ln_1.weight": "model-00001-of-00002.safetensors",

|

| 83 |

+

"transformer.h.17.ln_2.weight": "model-00001-of-00002.safetensors",

|

| 84 |

+

"transformer.h.17.mlp.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 85 |

+

"transformer.h.17.mlp.w1.weight": "model-00001-of-00002.safetensors",

|

| 86 |

+

"transformer.h.17.mlp.w2.weight": "model-00001-of-00002.safetensors",

|

| 87 |

+

"transformer.h.18.attn.c_attn.bias": "model-00001-of-00002.safetensors",

|

| 88 |

+

"transformer.h.18.attn.c_attn.weight": "model-00001-of-00002.safetensors",

|

| 89 |

+

"transformer.h.18.attn.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 90 |

+

"transformer.h.18.ln_1.weight": "model-00001-of-00002.safetensors",

|

| 91 |

+

"transformer.h.18.ln_2.weight": "model-00001-of-00002.safetensors",

|

| 92 |

+

"transformer.h.18.mlp.c_proj.weight": "model-00002-of-00002.safetensors",

|

| 93 |

+

"transformer.h.18.mlp.w1.weight": "model-00002-of-00002.safetensors",

|

| 94 |

+

"transformer.h.18.mlp.w2.weight": "model-00002-of-00002.safetensors",

|

| 95 |

+

"transformer.h.19.attn.c_attn.bias": "model-00002-of-00002.safetensors",

|

| 96 |

+

"transformer.h.19.attn.c_attn.weight": "model-00002-of-00002.safetensors",

|

| 97 |

+

"transformer.h.19.attn.c_proj.weight": "model-00002-of-00002.safetensors",

|

| 98 |

+

"transformer.h.19.ln_1.weight": "model-00002-of-00002.safetensors",

|

| 99 |

+

"transformer.h.19.ln_2.weight": "model-00002-of-00002.safetensors",

|

| 100 |

+

"transformer.h.19.mlp.c_proj.weight": "model-00002-of-00002.safetensors",

|

| 101 |

+

"transformer.h.19.mlp.w1.weight": "model-00002-of-00002.safetensors",

|

| 102 |

+

"transformer.h.19.mlp.w2.weight": "model-00002-of-00002.safetensors",

|

| 103 |

+

"transformer.h.2.attn.c_attn.bias": "model-00001-of-00002.safetensors",

|

| 104 |

+

"transformer.h.2.attn.c_attn.weight": "model-00001-of-00002.safetensors",

|

| 105 |

+

"transformer.h.2.attn.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 106 |

+

"transformer.h.2.ln_1.weight": "model-00001-of-00002.safetensors",

|

| 107 |

+

"transformer.h.2.ln_2.weight": "model-00001-of-00002.safetensors",

|

| 108 |

+

"transformer.h.2.mlp.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 109 |

+

"transformer.h.2.mlp.w1.weight": "model-00001-of-00002.safetensors",

|

| 110 |

+

"transformer.h.2.mlp.w2.weight": "model-00001-of-00002.safetensors",

|

| 111 |

+

"transformer.h.20.attn.c_attn.bias": "model-00002-of-00002.safetensors",

|

| 112 |

+

"transformer.h.20.attn.c_attn.weight": "model-00002-of-00002.safetensors",

|

| 113 |

+

"transformer.h.20.attn.c_proj.weight": "model-00002-of-00002.safetensors",

|

| 114 |

+

"transformer.h.20.ln_1.weight": "model-00002-of-00002.safetensors",

|

| 115 |

+

"transformer.h.20.ln_2.weight": "model-00002-of-00002.safetensors",

|

| 116 |

+

"transformer.h.20.mlp.c_proj.weight": "model-00002-of-00002.safetensors",

|

| 117 |

+

"transformer.h.20.mlp.w1.weight": "model-00002-of-00002.safetensors",

|

| 118 |

+

"transformer.h.20.mlp.w2.weight": "model-00002-of-00002.safetensors",

|

| 119 |

+

"transformer.h.21.attn.c_attn.bias": "model-00002-of-00002.safetensors",

|

| 120 |

+

"transformer.h.21.attn.c_attn.weight": "model-00002-of-00002.safetensors",

|

| 121 |

+

"transformer.h.21.attn.c_proj.weight": "model-00002-of-00002.safetensors",

|

| 122 |

+

"transformer.h.21.ln_1.weight": "model-00002-of-00002.safetensors",

|

| 123 |

+

"transformer.h.21.ln_2.weight": "model-00002-of-00002.safetensors",

|

| 124 |

+

"transformer.h.21.mlp.c_proj.weight": "model-00002-of-00002.safetensors",

|

| 125 |

+

"transformer.h.21.mlp.w1.weight": "model-00002-of-00002.safetensors",

|

| 126 |

+

"transformer.h.21.mlp.w2.weight": "model-00002-of-00002.safetensors",

|

| 127 |

+

"transformer.h.22.attn.c_attn.bias": "model-00002-of-00002.safetensors",

|

| 128 |

+

"transformer.h.22.attn.c_attn.weight": "model-00002-of-00002.safetensors",

|

| 129 |

+

"transformer.h.22.attn.c_proj.weight": "model-00002-of-00002.safetensors",

|

| 130 |

+

"transformer.h.22.ln_1.weight": "model-00002-of-00002.safetensors",

|

| 131 |

+

"transformer.h.22.ln_2.weight": "model-00002-of-00002.safetensors",

|

| 132 |

+

"transformer.h.22.mlp.c_proj.weight": "model-00002-of-00002.safetensors",

|

| 133 |

+

"transformer.h.22.mlp.w1.weight": "model-00002-of-00002.safetensors",

|

| 134 |

+

"transformer.h.22.mlp.w2.weight": "model-00002-of-00002.safetensors",

|

| 135 |

+

"transformer.h.23.attn.c_attn.bias": "model-00002-of-00002.safetensors",

|

| 136 |

+

"transformer.h.23.attn.c_attn.weight": "model-00002-of-00002.safetensors",

|

| 137 |

+

"transformer.h.23.attn.c_proj.weight": "model-00002-of-00002.safetensors",

|

| 138 |

+

"transformer.h.23.ln_1.weight": "model-00002-of-00002.safetensors",

|

| 139 |

+

"transformer.h.23.ln_2.weight": "model-00002-of-00002.safetensors",

|

| 140 |

+

"transformer.h.23.mlp.c_proj.weight": "model-00002-of-00002.safetensors",

|

| 141 |

+

"transformer.h.23.mlp.w1.weight": "model-00002-of-00002.safetensors",

|

| 142 |

+

"transformer.h.23.mlp.w2.weight": "model-00002-of-00002.safetensors",

|

| 143 |

+

"transformer.h.3.attn.c_attn.bias": "model-00001-of-00002.safetensors",

|

| 144 |

+

"transformer.h.3.attn.c_attn.weight": "model-00001-of-00002.safetensors",

|

| 145 |

+

"transformer.h.3.attn.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 146 |

+

"transformer.h.3.ln_1.weight": "model-00001-of-00002.safetensors",

|

| 147 |

+

"transformer.h.3.ln_2.weight": "model-00001-of-00002.safetensors",

|

| 148 |

+

"transformer.h.3.mlp.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 149 |

+

"transformer.h.3.mlp.w1.weight": "model-00001-of-00002.safetensors",

|

| 150 |

+

"transformer.h.3.mlp.w2.weight": "model-00001-of-00002.safetensors",

|

| 151 |

+

"transformer.h.4.attn.c_attn.bias": "model-00001-of-00002.safetensors",

|

| 152 |

+

"transformer.h.4.attn.c_attn.weight": "model-00001-of-00002.safetensors",

|

| 153 |

+

"transformer.h.4.attn.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 154 |

+

"transformer.h.4.ln_1.weight": "model-00001-of-00002.safetensors",

|

| 155 |

+

"transformer.h.4.ln_2.weight": "model-00001-of-00002.safetensors",

|

| 156 |

+

"transformer.h.4.mlp.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 157 |

+

"transformer.h.4.mlp.w1.weight": "model-00001-of-00002.safetensors",

|

| 158 |

+

"transformer.h.4.mlp.w2.weight": "model-00001-of-00002.safetensors",

|

| 159 |

+

"transformer.h.5.attn.c_attn.bias": "model-00001-of-00002.safetensors",

|

| 160 |

+

"transformer.h.5.attn.c_attn.weight": "model-00001-of-00002.safetensors",

|

| 161 |

+

"transformer.h.5.attn.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 162 |

+

"transformer.h.5.ln_1.weight": "model-00001-of-00002.safetensors",

|

| 163 |

+

"transformer.h.5.ln_2.weight": "model-00001-of-00002.safetensors",

|

| 164 |

+

"transformer.h.5.mlp.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 165 |

+

"transformer.h.5.mlp.w1.weight": "model-00001-of-00002.safetensors",

|

| 166 |

+

"transformer.h.5.mlp.w2.weight": "model-00001-of-00002.safetensors",

|

| 167 |

+

"transformer.h.6.attn.c_attn.bias": "model-00001-of-00002.safetensors",

|

| 168 |

+

"transformer.h.6.attn.c_attn.weight": "model-00001-of-00002.safetensors",

|

| 169 |

+

"transformer.h.6.attn.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 170 |

+

"transformer.h.6.ln_1.weight": "model-00001-of-00002.safetensors",

|

| 171 |

+

"transformer.h.6.ln_2.weight": "model-00001-of-00002.safetensors",

|

| 172 |

+

"transformer.h.6.mlp.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 173 |

+

"transformer.h.6.mlp.w1.weight": "model-00001-of-00002.safetensors",

|

| 174 |

+

"transformer.h.6.mlp.w2.weight": "model-00001-of-00002.safetensors",

|

| 175 |

+

"transformer.h.7.attn.c_attn.bias": "model-00001-of-00002.safetensors",

|

| 176 |

+

"transformer.h.7.attn.c_attn.weight": "model-00001-of-00002.safetensors",

|

| 177 |

+

"transformer.h.7.attn.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 178 |

+

"transformer.h.7.ln_1.weight": "model-00001-of-00002.safetensors",

|

| 179 |

+

"transformer.h.7.ln_2.weight": "model-00001-of-00002.safetensors",

|

| 180 |

+

"transformer.h.7.mlp.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 181 |

+

"transformer.h.7.mlp.w1.weight": "model-00001-of-00002.safetensors",

|

| 182 |

+

"transformer.h.7.mlp.w2.weight": "model-00001-of-00002.safetensors",

|

| 183 |

+

"transformer.h.8.attn.c_attn.bias": "model-00001-of-00002.safetensors",

|

| 184 |

+

"transformer.h.8.attn.c_attn.weight": "model-00001-of-00002.safetensors",

|

| 185 |

+

"transformer.h.8.attn.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 186 |

+

"transformer.h.8.ln_1.weight": "model-00001-of-00002.safetensors",

|

| 187 |

+

"transformer.h.8.ln_2.weight": "model-00001-of-00002.safetensors",

|

| 188 |

+

"transformer.h.8.mlp.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 189 |

+

"transformer.h.8.mlp.w1.weight": "model-00001-of-00002.safetensors",

|

| 190 |

+

"transformer.h.8.mlp.w2.weight": "model-00001-of-00002.safetensors",

|

| 191 |

+

"transformer.h.9.attn.c_attn.bias": "model-00001-of-00002.safetensors",

|

| 192 |

+

"transformer.h.9.attn.c_attn.weight": "model-00001-of-00002.safetensors",

|

| 193 |

+

"transformer.h.9.attn.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 194 |

+

"transformer.h.9.ln_1.weight": "model-00001-of-00002.safetensors",

|

| 195 |

+

"transformer.h.9.ln_2.weight": "model-00001-of-00002.safetensors",

|

| 196 |

+

"transformer.h.9.mlp.c_proj.weight": "model-00001-of-00002.safetensors",

|

| 197 |

+

"transformer.h.9.mlp.w1.weight": "model-00001-of-00002.safetensors",

|

| 198 |

+

"transformer.h.9.mlp.w2.weight": "model-00001-of-00002.safetensors",

|

| 199 |

+

"transformer.ln_f.weight": "model-00002-of-00002.safetensors",

|

| 200 |

+

"transformer.wte.weight": "model-00001-of-00002.safetensors"

|

| 201 |

+

}

|

| 202 |

+

}

|

modeling_qwen.py

ADDED

|

@@ -0,0 +1,1363 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|