Upload folder using huggingface_hub

Browse files- .gitattributes +3 -0

- README.md +157 -5

- asset/input/canny.jpg +0 -0

- asset/input/depth.jpg +0 -0

- asset/input/pose.jpg +0 -0

- asset/output/canny.jpg +3 -0

- asset/output/depth.jpg +3 -0

- asset/output/pose.jpg +3 -0

- pytorch_model_canny_distill.pt +3 -0

- pytorch_model_depth_distill.pt +3 -0

- pytorch_model_pose_distill.pt +3 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,6 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

|

|

|

|

|

|

|

|

|

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

asset/output/canny.jpg filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

asset/output/depth.jpg filter=lfs diff=lfs merge=lfs -text

|

| 38 |

+

asset/output/pose.jpg filter=lfs diff=lfs merge=lfs -text

|

README.md

CHANGED

|

@@ -1,5 +1,157 @@

|

|

| 1 |

-

|

| 2 |

-

|

| 3 |

-

|

| 4 |

-

|

| 5 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

|

| 2 |

+

## Using HunyuanDiT ControlNet

|

| 3 |

+

|

| 4 |

+

|

| 5 |

+

### Instructions

|

| 6 |

+

|

| 7 |

+

The dependencies and installation are basically the same as the [**base model**](https://huggingface.co/Tencent-Hunyuan/HunyuanDiT-v1.1).

|

| 8 |

+

|

| 9 |

+

We provide three types of ControlNet weights for you to test: canny, depth and pose ControlNet.

|

| 10 |

+

|

| 11 |

+

Download the model using the following commands:

|

| 12 |

+

|

| 13 |

+

```bash

|

| 14 |

+

cd HunyuanDiT

|

| 15 |

+

# Use the huggingface-cli tool to download the model.

|

| 16 |

+

huggingface-cli download Tencent-Hunyuan/HYDiT-ControlNet --local-dir ./ckpts/t2i/controlnet

|

| 17 |

+

|

| 18 |

+

# Quick start

|

| 19 |

+

python3 sample_controlnet.py --no-enhance --load-key distill --infer-steps 50 --control_type canny --prompt "在夜晚的酒店门前,一座古老的中国风格的狮子雕像矗立着,它的眼睛闪烁着光芒,仿佛在守护着这座建筑。背景是夜晚的酒店前,构图方式是特写,平视,居中构图。这张照片呈现了真实摄影风格,蕴含了中国雕塑文化,同时展现了神秘氛围" --condition_image_path controlnet/asset/input/canny.jpg --control_weight 1.0

|

| 20 |

+

```

|

| 21 |

+

|

| 22 |

+

Examples of condition input and ControlNet results are as follows:

|

| 23 |

+

<table>

|

| 24 |

+

<tr>

|

| 25 |

+

<td colspan="3" align="center">Condition Input</td>

|

| 26 |

+

</tr>

|

| 27 |

+

|

| 28 |

+

<tr>

|

| 29 |

+

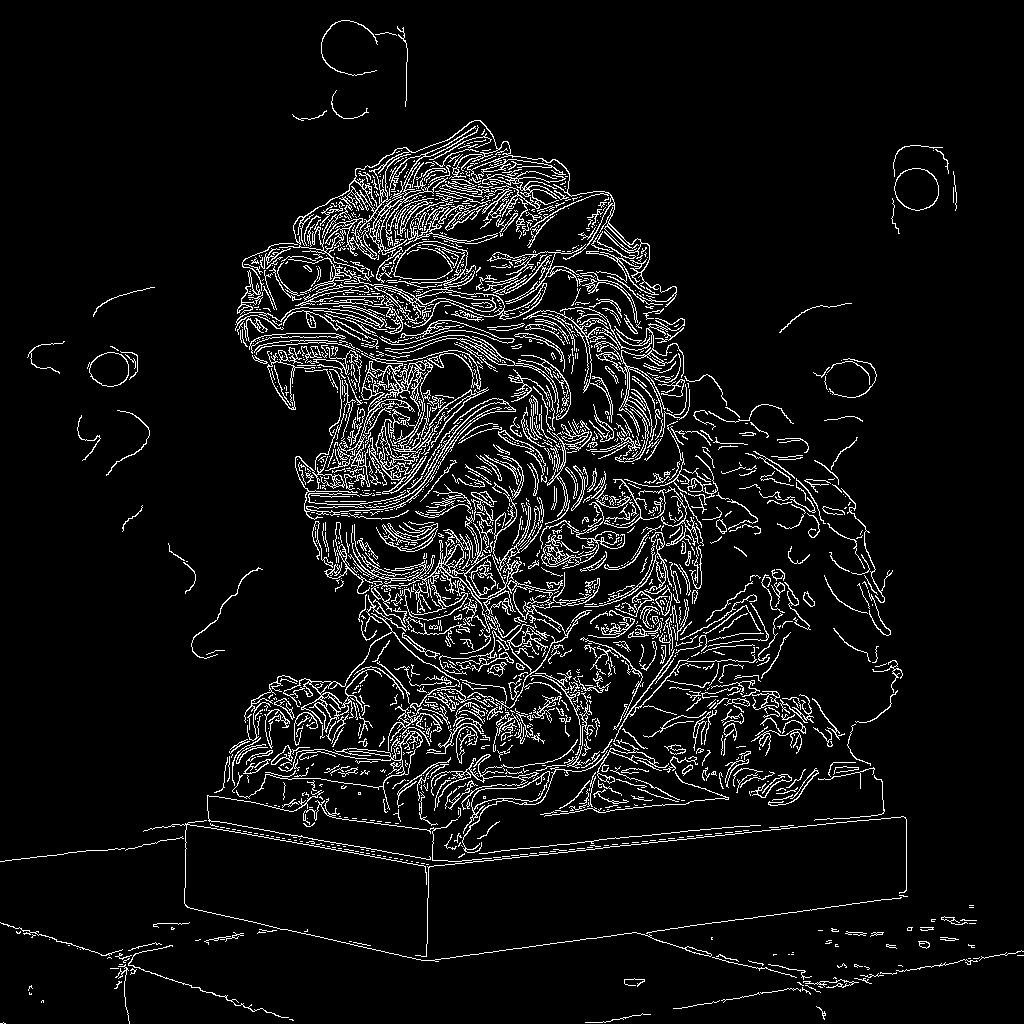

<td align="center">Canny ControlNet </td>

|

| 30 |

+

<td align="center">Depth ControlNet </td>

|

| 31 |

+

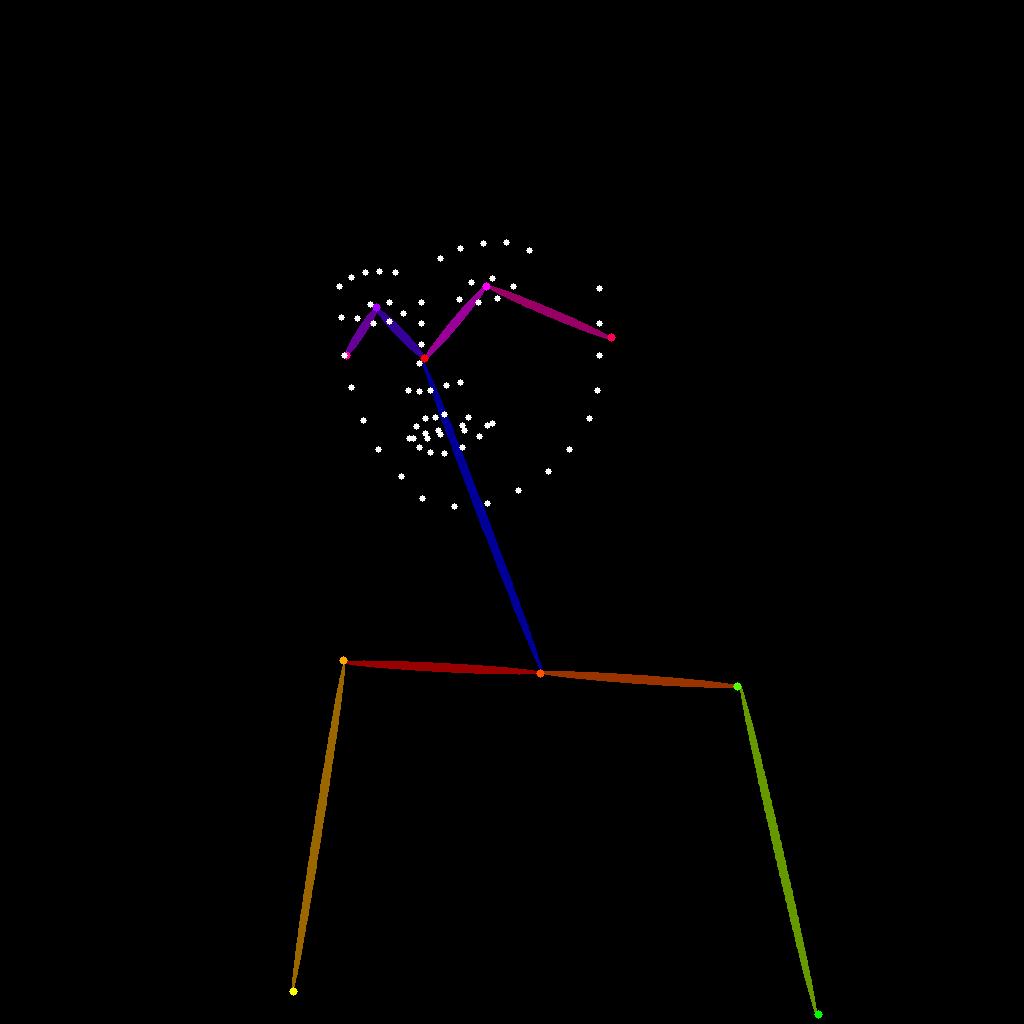

<td align="center">Pose ControlNet </td>

|

| 32 |

+

</tr>

|

| 33 |

+

|

| 34 |

+

<tr>

|

| 35 |

+

<td align="center">在夜晚的酒店门前,一座古老的中国风格的狮子雕像矗立着,它的眼睛闪烁着光芒,仿佛在守护着这座建筑。背景是夜晚的酒店前,构图方式是特写,平视,居中构图。这张照片呈现了真实摄影风格,蕴含了中国雕塑文化,同时展现了神秘氛围<br>(At night, an ancient Chinese-style lion statue stands in front of the hotel, its eyes gleaming as if guarding the building. The background is the hotel entrance at night, with a close-up, eye-level, and centered composition. This photo presents a realistic photographic style, embodies Chinese sculpture culture, and reveals a mysterious atmosphere.) </td>

|

| 36 |

+

<td align="center">在茂密的森林中,一只黑白相间的熊猫静静地坐在绿树红花中,周围是山川和海洋。背景是白天的森林,光线充足<br>(In the dense forest, a black and white panda sits quietly in green trees and red flowers, surrounded by mountains, rivers, and the ocean. The background is the forest in a bright environment.) </td>

|

| 37 |

+

<td align="center">一位亚洲女性,身穿绿色上衣,戴着紫色头巾和紫色围巾,站在黑板前。背景是黑板。照片采用近景、平视和居中构图的方式呈现真实摄影风格<br>(An Asian woman, dressed in a green top, wearing a purple headscarf and a purple scarf, stands in front of a blackboard. The background is the blackboard. The photo is presented in a close-up, eye-level, and centered composition, adopting a realistic photographic style) </td>

|

| 38 |

+

</tr>

|

| 39 |

+

|

| 40 |

+

<tr>

|

| 41 |

+

<td align="center"><img src="asset/input/canny.jpg" alt="Image 0" width="200"/></td>

|

| 42 |

+

<td align="center"><img src="asset/input/depth.jpg" alt="Image 1" width="200"/></td>

|

| 43 |

+

<td align="center"><img src="asset/input/pose.jpg" alt="Image 2" width="200"/></td>

|

| 44 |

+

|

| 45 |

+

</tr>

|

| 46 |

+

|

| 47 |

+

<tr>

|

| 48 |

+

<td colspan="3" align="center">ControlNet Output</td>

|

| 49 |

+

</tr>

|

| 50 |

+

|

| 51 |

+

<tr>

|

| 52 |

+

<td align="center"><img src="asset/output/canny.jpg" alt="Image 3" width="200"/></td>

|

| 53 |

+

<td align="center"><img src="asset/output/depth.jpg" alt="Image 4" width="200"/></td>

|

| 54 |

+

<td align="center"><img src="asset/output/pose.jpg" alt="Image 5" width="200"/></td>

|

| 55 |

+

</tr>

|

| 56 |

+

|

| 57 |

+

|

| 58 |

+

</table>

|

| 59 |

+

|

| 60 |

+

|

| 61 |

+

### Training

|

| 62 |

+

|

| 63 |

+

We utilize [**DWPose**](https://github.com/IDEA-Research/DWPose) for pose extraction. Please follow their guidelines to download the checkpoints and save them to `hydit/annotator/ckpts` directory. Additionally, ensure that you install the related dependencies.

|

| 64 |

+

```bash

|

| 65 |

+

pip install matplotlib

|

| 66 |

+

pip install onnxruntime_gpu

|

| 67 |

+

```

|

| 68 |

+

|

| 69 |

+

|

| 70 |

+

We provide three types of weights for ControlNet training, `ema`, `module` and `distill`, and you can choose according to the actual effects. By default, we use `distill` weights.

|

| 71 |

+

|

| 72 |

+

Here is an example, we load the `distill` weights into the main model and conduct ControlNet training.

|

| 73 |

+

|

| 74 |

+

If you want to load the `module` weights into the main model, just remove the `--ema-to-module` parameter.

|

| 75 |

+

|

| 76 |

+

If apply multiple resolution training, you need to add the `--multireso` and `--reso-step 64` parameter.

|

| 77 |

+

|

| 78 |

+

```bash

|

| 79 |

+

task_flag="canny_controlnet" # task flag is used to identify folders.

|

| 80 |

+

control_type=canny

|

| 81 |

+

resume=./ckpts/t2i/model/ # checkpoint root for resume

|

| 82 |

+

index_file=path/to/your/index_file

|

| 83 |

+

results_dir=./log_EXP # save root for results

|

| 84 |

+

batch_size=1 # training batch size

|

| 85 |

+

image_size=1024 # training image resolution

|

| 86 |

+

grad_accu_steps=2 # gradient accumulation

|

| 87 |

+

warmup_num_steps=0 # warm-up steps

|

| 88 |

+

lr=0.0001 # learning rate

|

| 89 |

+

ckpt_every=10000 # create a ckpt every a few steps.

|

| 90 |

+

ckpt_latest_every=5000 # create a ckpt named `latest.pt` every a few steps.

|

| 91 |

+

|

| 92 |

+

|

| 93 |

+

sh $(dirname "$0")/run_g_controlnet.sh \

|

| 94 |

+

--task-flag ${task_flag} \

|

| 95 |

+

--control_type ${control_type} \

|

| 96 |

+

--noise-schedule scaled_linear --beta-start 0.00085 --beta-end 0.03 \

|

| 97 |

+

--predict-type v_prediction \

|

| 98 |

+

--multireso \

|

| 99 |

+

--reso-step 64 \

|

| 100 |

+

--ema-to-module \

|

| 101 |

+

--uncond-p 0.44 \

|

| 102 |

+

--uncond-p-t5 0.44 \

|

| 103 |

+

--index-file ${index_file} \

|

| 104 |

+

--random-flip \

|

| 105 |

+

--lr ${lr} \

|

| 106 |

+

--batch-size ${batch_size} \

|

| 107 |

+

--image-size ${image_size} \

|

| 108 |

+

--global-seed 999 \

|

| 109 |

+

--grad-accu-steps ${grad_accu_steps} \

|

| 110 |

+

--warmup-num-steps ${warmup_num_steps} \

|

| 111 |

+

--use-flash-attn \

|

| 112 |

+

--use-fp16 \

|

| 113 |

+

--use-ema \

|

| 114 |

+

--ema-dtype fp32 \

|

| 115 |

+

--results-dir ${results_dir} \

|

| 116 |

+

--resume-split \

|

| 117 |

+

--resume ${resume} \

|

| 118 |

+

--ckpt-every ${ckpt_every} \

|

| 119 |

+

--ckpt-latest-every ${ckpt_latest_every} \

|

| 120 |

+

--log-every 10 \

|

| 121 |

+

--deepspeed \

|

| 122 |

+

--deepspeed-optimizer \

|

| 123 |

+

--use-zero-stage 2 \

|

| 124 |

+

"$@"

|

| 125 |

+

```

|

| 126 |

+

|

| 127 |

+

Recommended parameter settings

|

| 128 |

+

|

| 129 |

+

| Parameter | Description | Recommended Parameter Value | Note|

|

| 130 |

+

|:---------------:|:---------:|:---------------------------------------------------:|:--:|

|

| 131 |

+

| `--batch_size` | Training batch size | 1 | Depends on GPU memory|

|

| 132 |

+

| `--grad-accu-steps` | Size of gradient accumulation | 2 | - |

|

| 133 |

+

| `--lr` | Learning rate | 0.0001 | - |

|

| 134 |

+

| `--control_type` | ControlNet condition type, support 3 types now (canny, depth and pose) | / | - |

|

| 135 |

+

|

| 136 |

+

|

| 137 |

+

### Inference

|

| 138 |

+

You can use the following command line for inference.

|

| 139 |

+

|

| 140 |

+

a. Using canny ControlNet during inference

|

| 141 |

+

|

| 142 |

+

```bash

|

| 143 |

+

python3 sample_controlnet.py --no-enhance --load-key distill --infer-steps 50 --control_type canny --prompt "在夜晚的酒店门前,一座古老的中国风格的狮子雕像矗立着,它的眼睛闪烁着光芒,仿佛在守护着这座建筑。背景是夜晚的酒店前,构图方式是特写,平视,居中构图。这张照片呈现了真实摄影风格,蕴含了中国雕塑文化,同时展现了神秘氛围" --condition_image_path controlnet/asset/input/canny.jpg --control_weight 1.0

|

| 144 |

+

```

|

| 145 |

+

|

| 146 |

+

b. Using pose ControlNet during inference

|

| 147 |

+

|

| 148 |

+

```bash

|

| 149 |

+

python3 sample_controlnet.py --no-enhance --load-key distill --infer-steps 50 --control_type depth --prompt "在茂密的森林中,一只黑白相间的熊猫静静地坐在绿树红花中,周围是山川和海洋。背景是白天的森林,光线充足" --condition_image_path controlnet/asset/input/depth.jpg --control_weight 1.0

|

| 150 |

+

```

|

| 151 |

+

|

| 152 |

+

c. Using depth ControlNet during inference

|

| 153 |

+

|

| 154 |

+

```bash

|

| 155 |

+

python3 sample_controlnet.py --no-enhance --load-key distill --infer-steps 50 --control_type pose --prompt "一位亚洲女性,身穿绿色上衣,戴着紫色头巾和紫色围巾,站在黑板前。背景是黑板。照片采用近景、平视和居中构图的方式呈现真实摄影风格" --condition_image_path controlnet/asset/input/pose.jpg --control_weight 1.0

|

| 156 |

+

```

|

| 157 |

+

|

asset/input/canny.jpg

ADDED

|

asset/input/depth.jpg

ADDED

|

asset/input/pose.jpg

ADDED

|

asset/output/canny.jpg

ADDED

|

Git LFS Details

|

asset/output/depth.jpg

ADDED

|

Git LFS Details

|

asset/output/pose.jpg

ADDED

|

Git LFS Details

|

pytorch_model_canny_distill.pt

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:8fb880bde25340eeaa81ddbf712592130a13f5871c652c82cc5498ec33636c04

|

| 3 |

+

size 1668467911

|

pytorch_model_depth_distill.pt

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:aadb71b7dd3e4d2bf8444713a67975a8129cc8f034d6085c4da5fc00b9ced22a

|

| 3 |

+

size 1668467911

|

pytorch_model_pose_distill.pt

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:4baea7edb7004f841ac2347aff9b44190460d61dfd79b984c6e7195cdfa7ff23

|

| 3 |

+

size 1668467883

|