Commit 38f54d5

Parent(s): 59d4f98

Upload 13 files

- README.md +62 -0

- adapter_config.json +34 -0

- adapter_model.safetensors +3 -0

- added_tokens.json +4 -0

- all_results.json +7 -0

- special_tokens_map.json +35 -0

- tokenizer.model +3 -0

- tokenizer_config.json +66 -0

- train_results.json +7 -0

- trainer_log.jsonl +13 -0

- trainer_state.json +114 -0

- training_args.bin +3 -0

- training_loss.png +0 -0

README.md

ADDED

@@ -0,0 +1,62 @@

+---
+license: other
+library_name: peft
+tags:
+- llama-factory
+- lora
+- generated_from_trainer
+base_model: IlyaGusev/saiga_mistral_7b_merged
+model-index:
+- name: sft
+  results: []
+---
+
+<!-- This model card has been generated automatically according to the information the Trainer had access to. You
+should probably proofread and complete it, then remove this comment. -->
+
+# sft
+
+This model is a fine-tuned version of [IlyaGusev/saiga_mistral_7b_merged](https://huggingface.co/IlyaGusev/saiga_mistral_7b_merged) on the gazeta dataset.
+
+## Model description
+
+More information needed
+
+## Intended uses & limitations
+
+More information needed
+
+## Training and evaluation data
+
+More information needed
+
+## Training procedure
+
+### Training hyperparameters
+
+The following hyperparameters were used during training:
+- learning_rate: 5e-05
+- train_batch_size: 4
+- eval_batch_size: 2
+- seed: 42
+- distributed_type: multi-GPU
+- num_devices: 2
+- gradient_accumulation_steps: 2
+- total_train_batch_size: 16
+- total_eval_batch_size: 4
+- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08
+- lr_scheduler_type: cosine
+- num_epochs: 1.0
+- mixed_precision_training: Native AMP
+
+### Training results
+
+
+
+### Framework versions
+
+- PEFT 0.10.1.dev0
+- Transformers 4.39.1
+- Pytorch 2.1.0+cu121
+- Datasets 2.18.0
+- Tokenizers 0.15.2
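Note on the batch-size figures in the README above: the totals follow from the per-device values by simple arithmetic (per-device batch size × number of devices × gradient-accumulation steps). A minimal sketch of that check, using only values from the model card; the function name is illustrative and not part of any library, and it assumes evaluation uses no gradient accumulation:

```python
def effective_batch_size(per_device: int, num_devices: int, grad_accum_steps: int = 1) -> int:
    """Number of samples that contribute to one optimizer (or evaluation) step."""
    return per_device * num_devices * grad_accum_steps


# Values copied from the hyperparameter list in README.md.
total_train = effective_batch_size(per_device=4, num_devices=2, grad_accum_steps=2)
total_eval = effective_batch_size(per_device=2, num_devices=2)

print(total_train, total_eval)
```

Both results agree with the `total_train_batch_size` and `total_eval_batch_size` entries in the card.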

adapter_config.json

ADDED

@@ -0,0 +1,34 @@

+{
+  "alpha_pattern": {},
+  "auto_mapping": null,
+  "base_model_name_or_path": "IlyaGusev/saiga_mistral_7b_merged",
+  "bias": "none",
+  "fan_in_fan_out": false,
+  "inference_mode": true,
+  "init_lora_weights": true,
+  "layer_replication": null,
+  "layers_pattern": null,
+  "layers_to_transform": null,
+  "loftq_config": {},
+  "lora_alpha": 16,
+  "lora_dropout": 0.0,
+  "megatron_config": null,
+  "megatron_core": "megatron.core",
+  "modules_to_save": null,
+  "peft_type": "LORA",
+  "r": 8,
+  "rank_pattern": {},
+  "revision": "unsloth",
+  "target_modules": [
+    "q_proj",
+    "k_proj",
+    "gate_proj",
+    "up_proj",
+    "v_proj",
+    "o_proj",
+    "down_proj"
+  ],
+  "task_type": "CAUSAL_LM",
+  "use_dora": false,
+  "use_rslora": false
+}
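Note on the adapter configuration above: with `r = 8` and `lora_alpha = 16` (and `use_rslora` false), the LoRA update is scaled by `lora_alpha / r`, and each targeted linear layer adds `r * (in_features + out_features)` trainable parameters. The sketch below estimates the adapter size under loudly stated assumptions: the layer shapes and the layer count are the usual Mistral-7B values and are not stated anywhere in this commit, and float32 storage is also assumed.

```python
# ASSUMPTION: typical Mistral-7B projection shapes as (in_features, out_features).
# These numbers do not appear in this commit.
ASSUMED_SHAPES = {
    "q_proj": (4096, 4096),
    "k_proj": (4096, 1024),
    "v_proj": (4096, 1024),
    "o_proj": (4096, 4096),
    "gate_proj": (4096, 14336),
    "up_proj": (4096, 14336),
    "down_proj": (14336, 4096),
}
ASSUMED_NUM_LAYERS = 32  # ASSUMPTION: decoder layer count of Mistral-7B


def lora_param_count(r, target_modules, shapes=ASSUMED_SHAPES, num_layers=ASSUMED_NUM_LAYERS):
    """Each adapted layer holds A (r x in) and B (out x r), i.e. r * (in + out) parameters."""
    per_layer = sum(r * (shapes[name][0] + shapes[name][1]) for name in target_modules)
    return per_layer * num_layers


def lora_scaling(lora_alpha, r):
    """Standard LoRA scaling factor (applies when use_rslora is false)."""
    return lora_alpha / r


# Values taken from adapter_config.json.
targets = ["q_proj", "k_proj", "gate_proj", "up_proj", "v_proj", "o_proj", "down_proj"]
params = lora_param_count(r=8, target_modules=targets)
approx_bytes_fp32 = params * 4  # ASSUMPTION: weights stored as float32

print(params, approx_bytes_fp32, lora_scaling(lora_alpha=16, r=8))
```

Under these assumptions the estimate is about 83.9 MB, close to the 83945296-byte `adapter_model.safetensors` pointer in this commit; the small remainder would be consistent with file metadata, though that is not confirmed here.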

adapter_model.safetensors

ADDED

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1
+oid sha256:b33ed03035a4d2bc0cefca53845e8440c0982a61b01f0d9cd18bdcad97c6ae31
+size 83945296
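Note on the pointer above: the three added lines are a Git LFS pointer rather than the weights themselves. Each line is a key and a value separated by a single space, and the `oid` value carries the hash algorithm and digest separated by a colon. A small sketch of reading such a pointer; the function name and the returned dictionary keys are illustrative choices, not part of any Git LFS tooling:

```python
def parse_lfs_pointer(text: str) -> dict:
    """Split a Git LFS pointer file into its version, hash algorithm, digest and size."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    algo, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "hash_algo": algo,
        "digest": digest,
        "size": int(fields["size"]),  # size of the real file in bytes
    }


# Pointer content copied from adapter_model.safetensors in this commit.
pointer = """version https://git-lfs.github.com/spec/v1
oid sha256:b33ed03035a4d2bc0cefca53845e8440c0982a61b01f0d9cd18bdcad97c6ae31
size 83945296
"""
info = parse_lfs_pointer(pointer)
print(info["hash_algo"], info["size"])
```

The same layout applies to the `tokenizer.model` and `training_args.bin` pointers in this commit.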

added_tokens.json

ADDED

@@ -0,0 +1,4 @@

+{
+  "<|im_end|>": 32000,
+  "<|im_start|>": 32001
+}

all_results.json

ADDED

@@ -0,0 +1,7 @@

+{
+  "epoch": 1.0,
+  "train_loss": 1.0112789268493652,
+  "train_runtime": 5845.5599,
+  "train_samples_per_second": 0.342,
+  "train_steps_per_second": 0.021
+}
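Note on the results above: the throughput figures can be cross-checked against values found elsewhere in this commit (`global_step` 125 in `trainer_state.json`, `total_train_batch_size` 16 in `README.md`). The sketch below is only a consistency check; the variable names are illustrative, and it assumes every step processed a full batch.

```python
# Values copied from all_results.json.
train_runtime = 5845.5599          # seconds
samples_per_second = 0.342
steps_per_second = 0.021

# Values copied from trainer_state.json and README.md.
global_step = 125
total_train_batch_size = 16

# ASSUMPTION: every optimizer step saw a full batch of 16 samples.
samples_from_steps = global_step * total_train_batch_size
samples_from_rate = samples_per_second * train_runtime

print(samples_from_steps, round(samples_from_rate))
print(round(global_step / train_runtime, 3), round(samples_from_steps / train_runtime, 3))
```

Both estimates land at roughly 2000 training samples, and the recomputed rates round to the reported 0.021 steps/s and 0.342 samples/s.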

special_tokens_map.json

ADDED

@@ -0,0 +1,35 @@

+{
+  "additional_special_tokens": [
+    "<unk>",
+    "<s>",
+    "</s>"
+  ],
+  "bos_token": {
+    "content": "<s>",
+    "lstrip": false,
+    "normalized": true,
+    "rstrip": false,
+    "single_word": false
+  },
+  "eos_token": {
+    "content": "</s>",
+    "lstrip": false,
+    "normalized": true,
+    "rstrip": false,
+    "single_word": false
+  },
+  "pad_token": {
+    "content": "<unk>",
+    "lstrip": false,
+    "normalized": true,
+    "rstrip": false,
+    "single_word": false
+  },
+  "unk_token": {
+    "content": "<unk>",
+    "lstrip": false,
+    "normalized": true,
+    "rstrip": false,
+    "single_word": false
+  }
+}

tokenizer.model

ADDED

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1
+oid sha256:dadfd56d766715c61d2ef780a525ab43b8e6da4de6865bda3d95fdef5e134055
+size 493443

tokenizer_config.json

ADDED

@@ -0,0 +1,66 @@

+{
+  "add_bos_token": false,
+  "add_eos_token": false,
+  "add_prefix_space": true,
+  "added_tokens_decoder": {
+    "0": {
+      "content": "<unk>",
+      "lstrip": false,
+      "normalized": true,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "1": {
+      "content": "<s>",
+      "lstrip": false,
+      "normalized": true,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "2": {
+      "content": "</s>",
+      "lstrip": false,
+      "normalized": true,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "32000": {
+      "content": "<|im_end|>",
+      "lstrip": true,
+      "normalized": true,
+      "rstrip": true,
+      "single_word": false,
+      "special": false
+    },
+    "32001": {
+      "content": "<|im_start|>",
+      "lstrip": true,
+      "normalized": true,
+      "rstrip": true,
+      "single_word": false,
+      "special": false
+    }
+  },
+  "additional_special_tokens": [
+    "<unk>",
+    "<s>",
+    "</s>"
+  ],
+  "bos_token": "<s>",
+  "chat_template": "{% if messages[0]['role'] == 'system' %}{% set system_message = messages[0]['content'] %}{% endif %}{% if system_message is defined %}{{ system_message + '\\n' }}{% endif %}{% for message in messages %}{% set content = message['content'] %}{% if message['role'] == 'user' %}{{ 'Human: ' + content + '\\nAssistant: ' }}{% elif message['role'] == 'assistant' %}{{ content + '</s>' + '\\n' }}{% endif %}{% endfor %}",
+  "clean_up_tokenization_spaces": false,
+  "eos_token": "</s>",
+  "legacy": true,
+  "model_max_length": 32768,
+  "pad_token": "<unk>",
+  "padding_side": "right",
+  "sp_model_kwargs": {},
+  "spaces_between_special_tokens": false,
+  "split_special_tokens": false,
+  "tokenizer_class": "LlamaTokenizer",
+  "unk_token": "<unk>",
+  "use_default_system_prompt": true
+}
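Note on the `chat_template` above: it emits an optional system message followed by a newline, then renders each user turn as `Human: ...` followed by `Assistant: `, and closes each assistant turn with `</s>` and a newline. The template does not use the `<|im_start|>` / `<|im_end|>` tokens. The sketch below is a plain-Python re-implementation of that logic for illustration only; it is not the Jinja renderer that Transformers uses, the function name is hypothetical, and the guard for an empty message list is an addition.

```python
def render_chat(messages):
    """Mimic the chat_template from tokenizer_config.json in plain Python."""
    parts = []
    # The template only treats the first message as a system prompt.
    if messages and messages[0]["role"] == "system":
        parts.append(messages[0]["content"] + "\n")
    for message in messages:
        content = message["content"]
        if message["role"] == "user":
            parts.append("Human: " + content + "\nAssistant: ")
        elif message["role"] == "assistant":
            parts.append(content + "</s>" + "\n")
        # Any other role (including a system message) is skipped inside the loop.
    return "".join(parts)


example = [
    {"role": "system", "content": "Be brief."},
    {"role": "user", "content": "Hi"},
    {"role": "assistant", "content": "Hello"},
]
print(render_chat(example))
```

A prompt that ends with a user turn therefore ends with `Assistant: `, which is where generation would continue.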

train_results.json

ADDED

@@ -0,0 +1,7 @@

+{
+  "epoch": 1.0,
+  "train_loss": 1.0112789268493652,
+  "train_runtime": 5845.5599,
+  "train_samples_per_second": 0.342,
+  "train_steps_per_second": 0.021
+}

trainer_log.jsonl

ADDED

@@ -0,0 +1,13 @@

+{"current_steps": 10, "total_steps": 125, "loss": 1.1006, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 4.9214579028215776e-05, "epoch": 0.08, "percentage": 8.0, "elapsed_time": "0:07:39", "remaining_time": "1:28:05"}
+{"current_steps": 20, "total_steps": 125, "loss": 1.049, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 4.690766700109659e-05, "epoch": 0.16, "percentage": 16.0, "elapsed_time": "0:15:35", "remaining_time": "1:21:52"}
+{"current_steps": 30, "total_steps": 125, "loss": 1.0269, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 4.3224215685535294e-05, "epoch": 0.24, "percentage": 24.0, "elapsed_time": "0:23:16", "remaining_time": "1:13:41"}
+{"current_steps": 40, "total_steps": 125, "loss": 0.9896, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 3.8395669874474915e-05, "epoch": 0.32, "percentage": 32.0, "elapsed_time": "0:30:51", "remaining_time": "1:05:35"}
+{"current_steps": 50, "total_steps": 125, "loss": 1.0179, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 3.272542485937369e-05, "epoch": 0.4, "percentage": 40.0, "elapsed_time": "0:38:41", "remaining_time": "0:58:01"}
+{"current_steps": 60, "total_steps": 125, "loss": 0.9636, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 2.656976298823284e-05, "epoch": 0.48, "percentage": 48.0, "elapsed_time": "0:46:22", "remaining_time": "0:50:14"}
+{"current_steps": 70, "total_steps": 125, "loss": 1.0151, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 2.031546713535688e-05, "epoch": 0.56, "percentage": 56.0, "elapsed_time": "0:53:53", "remaining_time": "0:42:20"}
+{"current_steps": 80, "total_steps": 125, "loss": 1.0039, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 1.4355517710873184e-05, "epoch": 0.64, "percentage": 64.0, "elapsed_time": "1:01:41", "remaining_time": "0:34:42"}
+{"current_steps": 90, "total_steps": 125, "loss": 0.9773, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 9.064400256282757e-06, "epoch": 0.72, "percentage": 72.0, "elapsed_time": "1:09:26", "remaining_time": "0:27:00"}
+{"current_steps": 100, "total_steps": 125, "loss": 0.9578, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 4.7745751406263165e-06, "epoch": 0.8, "percentage": 80.0, "elapsed_time": "1:17:13", "remaining_time": "0:19:18"}
+{"current_steps": 110, "total_steps": 125, "loss": 0.9773, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 1.7555878527937164e-06, "epoch": 0.88, "percentage": 88.0, "elapsed_time": "1:25:03", "remaining_time": "0:11:35"}
+{"current_steps": 120, "total_steps": 125, "loss": 1.0422, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": 1.9713246713805588e-07, "epoch": 0.96, "percentage": 96.0, "elapsed_time": "1:32:57", "remaining_time": "0:03:52"}
+{"current_steps": 125, "total_steps": 125, "loss": null, "eval_loss": null, "predict_loss": null, "reward": null, "learning_rate": null, "epoch": 1.0, "percentage": 100.0, "elapsed_time": "1:36:53", "remaining_time": "0:00:00"}
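Note on the learning rates logged above: they are consistent with a plain cosine decay from the README's `learning_rate` of 5e-05 over 125 steps. The sketch below reproduces the logged values with the textbook cosine formula; that the run used no warmup is an inference from the numbers matching, not something the commit states, and the function name is illustrative.

```python
import math


def cosine_lr(step: int, total_steps: int, base_lr: float) -> float:
    """Cosine decay from base_lr to 0. ASSUMPTION: no warmup phase."""
    return 0.5 * base_lr * (1.0 + math.cos(math.pi * step / total_steps))


# (step, learning_rate) pairs copied from trainer_log.jsonl.
logged = {
    10: 4.9214579028215776e-05,
    50: 3.272542485937369e-05,
    100: 4.7745751406263165e-06,
    120: 1.9713246713805588e-07,
}

for step, lr in logged.items():
    predicted = cosine_lr(step, total_steps=125, base_lr=5e-05)
    print(step, lr, predicted)
```

The predicted values agree with the logged ones to within floating-point precision.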

trainer_state.json

ADDED

@@ -0,0 +1,114 @@

+{
+  "best_metric": null,
+  "best_model_checkpoint": null,
+  "epoch": 1.0,
+  "eval_steps": 500,
+  "global_step": 125,
+  "is_hyper_param_search": false,
+  "is_local_process_zero": true,
+  "is_world_process_zero": true,
+  "log_history": [
+    {
+      "epoch": 0.08,
+      "grad_norm": 1.7232568264007568,
+      "learning_rate": 4.9214579028215776e-05,
+      "loss": 1.1006,
+      "step": 10
+    },
+    {
+      "epoch": 0.16,
+      "grad_norm": 2.3090097904205322,
+      "learning_rate": 4.690766700109659e-05,
+      "loss": 1.049,
+      "step": 20
+    },
+    {
+      "epoch": 0.24,
+      "grad_norm": 1.6444264650344849,
+      "learning_rate": 4.3224215685535294e-05,
+      "loss": 1.0269,
+      "step": 30
+    },
+    {
+      "epoch": 0.32,
+      "grad_norm": 1.920586109161377,
+      "learning_rate": 3.8395669874474915e-05,
+      "loss": 0.9896,
+      "step": 40
+    },
+    {
+      "epoch": 0.4,
+      "grad_norm": 1.7457690238952637,
+      "learning_rate": 3.272542485937369e-05,
+      "loss": 1.0179,
+      "step": 50
+    },
+    {
+      "epoch": 0.48,
+      "grad_norm": 1.9631178379058838,
+      "learning_rate": 2.656976298823284e-05,
+      "loss": 0.9636,
+      "step": 60
+    },
+    {
+      "epoch": 0.56,
+      "grad_norm": 2.050771951675415,
+      "learning_rate": 2.031546713535688e-05,
+      "loss": 1.0151,
+      "step": 70
+    },
+    {
+      "epoch": 0.64,
+      "grad_norm": 1.9484338760375977,
+      "learning_rate": 1.4355517710873184e-05,
+      "loss": 1.0039,
+      "step": 80
+    },
+    {
+      "epoch": 0.72,
+      "grad_norm": 1.7718967199325562,
+      "learning_rate": 9.064400256282757e-06,
+      "loss": 0.9773,
+      "step": 90
+    },
+    {
+      "epoch": 0.8,
+      "grad_norm": 2.00250506401062,
+      "learning_rate": 4.7745751406263165e-06,
+      "loss": 0.9578,
+      "step": 100
+    },
+    {
+      "epoch": 0.88,
+      "grad_norm": 2.0536956787109375,
+      "learning_rate": 1.7555878527937164e-06,
+      "loss": 0.9773,
+      "step": 110
+    },
+    {
+      "epoch": 0.96,
+      "grad_norm": 2.105520248413086,
+      "learning_rate": 1.9713246713805588e-07,
+      "loss": 1.0422,
+      "step": 120
+    },
+    {
+      "epoch": 1.0,
+      "step": 125,
+      "total_flos": 1.7032957304347034e+17,
+      "train_loss": 1.0112789268493652,
+      "train_runtime": 5845.5599,
+      "train_samples_per_second": 0.342,
+      "train_steps_per_second": 0.021
+    }
+  ],
+  "logging_steps": 10,
+  "max_steps": 125,
+  "num_input_tokens_seen": 0,
+  "num_train_epochs": 1,
+  "save_steps": 1000,
+  "total_flos": 1.7032957304347034e+17,
+  "train_batch_size": 4,
+  "trial_name": null,
+  "trial_params": null
+}

training_args.bin

ADDED

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1
+oid sha256:0a5374e5016f75dd7641625eb384725fff3af38fc71a5b14d2d6cbd148d8f66a
+size 5112

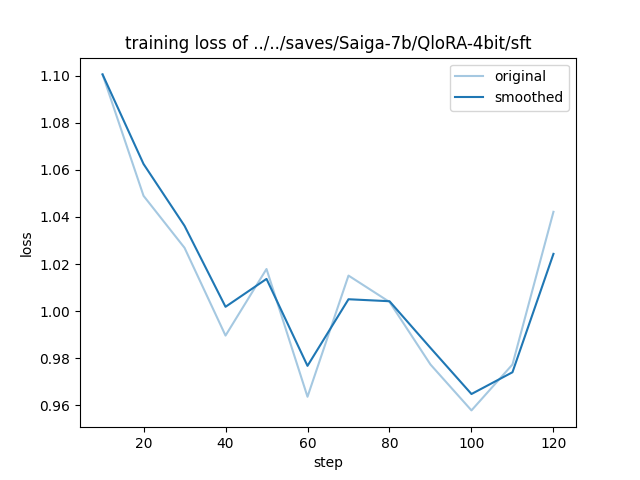

training_loss.png

ADDED

|