Commit

•

c85f333

1

Parent(s):

4858383

Upload folder using huggingface_hub

Browse filesThis view is limited to 50 files because it contains too many changes.

See raw diff

- .gitattributes +1 -0

- .gitignore +8 -0

- License +470 -0

- README.md +199 -0

- assets/i2v/blackswan.gif +0 -0

- assets/i2v/chair.gif +0 -0

- assets/i2v/horse.gif +0 -0

- assets/i2v/input/blackswan.png +0 -0

- assets/i2v/input/chair.png +0 -0

- assets/i2v/input/horse.png +0 -0

- assets/i2v/input/sunset.png +0 -0

- assets/i2v/sunset.gif +0 -0

- assets/t2v/child.gif +0 -0

- assets/t2v/couple.gif +3 -0

- assets/t2v/duck.gif +0 -0

- assets/t2v/girl_moose.jpg +0 -0

- assets/t2v/rabbit.gif +0 -0

- assets/t2v/tom.gif +0 -0

- assets/t2v/woman.gif +0 -0

- cog.yaml +25 -0

- configs/inference_i2v_512_v1.0.yaml +83 -0

- configs/inference_t2v_1024_v1.0.yaml +77 -0

- configs/inference_t2v_512_v1.0.yaml +74 -0

- configs/inference_t2v_512_v2.0.yaml +77 -0

- gradio_app.py +58 -0

- lvdm/basics.py +100 -0

- lvdm/common.py +95 -0

- lvdm/distributions.py +95 -0

- lvdm/ema.py +76 -0

- lvdm/models/autoencoder.py +219 -0

- lvdm/models/ddpm3d.py +763 -0

- lvdm/models/samplers/ddim.py +336 -0

- lvdm/models/utils_diffusion.py +104 -0

- lvdm/modules/attention.py +475 -0

- lvdm/modules/encoders/condition.py +392 -0

- lvdm/modules/encoders/ip_resampler.py +136 -0

- lvdm/modules/networks/ae_modules.py +845 -0

- lvdm/modules/networks/openaimodel3d.py +577 -0

- lvdm/modules/x_transformer.py +640 -0

- predict.py +155 -0

- prompts/i2v_prompts/horse.png +0 -0

- prompts/i2v_prompts/seashore.png +0 -0

- prompts/i2v_prompts/test_prompts.txt +2 -0

- prompts/test_prompts.txt +2 -0

- requirements.txt +23 -0

- scripts/evaluation/ddp_wrapper.py +46 -0

- scripts/evaluation/funcs.py +194 -0

- scripts/evaluation/inference.py +137 -0

- scripts/gradio/i2v_test.py +83 -0

- scripts/gradio/t2v_test.py +77 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

|

|

|

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

assets/t2v/couple.gif filter=lfs diff=lfs merge=lfs -text

|

.gitignore

ADDED

|

@@ -0,0 +1,8 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

.DS_Store

|

| 2 |

+

*pyc

|

| 3 |

+

.vscode

|

| 4 |

+

__pycache__

|

| 5 |

+

*.egg-info

|

| 6 |

+

|

| 7 |

+

checkpoints

|

| 8 |

+

results

|

License

ADDED

|

@@ -0,0 +1,470 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

This license applies to the source codes that are open sourced in connection with the VideoCrafter1.

|

| 2 |

+

|

| 3 |

+

Copyright (C) 2023 THL A29 Limited, a Tencent company.

|

| 4 |

+

|

| 5 |

+

Apache License

|

| 6 |

+

Version 2.0, January 2004

|

| 7 |

+

http://www.apache.org/licenses/

|

| 8 |

+

|

| 9 |

+

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

|

| 10 |

+

|

| 11 |

+

1. Definitions.

|

| 12 |

+

|

| 13 |

+

"License" shall mean the terms and conditions for use, reproduction,

|

| 14 |

+

and distribution as defined by Sections 1 through 9 of this document.

|

| 15 |

+

|

| 16 |

+

"Licensor" shall mean the copyright owner or entity authorized by

|

| 17 |

+

the copyright owner that is granting the License.

|

| 18 |

+

|

| 19 |

+

"Legal Entity" shall mean the union of the acting entity and all

|

| 20 |

+

other entities that control, are controlled by, or are under common

|

| 21 |

+

control with that entity. For the purposes of this definition,

|

| 22 |

+

"control" means (i) the power, direct or indirect, to cause the

|

| 23 |

+

direction or management of such entity, whether by contract or

|

| 24 |

+

otherwise, or (ii) ownership of fifty percent (50%) or more of the

|

| 25 |

+

outstanding shares, or (iii) beneficial ownership of such entity.

|

| 26 |

+

|

| 27 |

+

"You" (or "Your") shall mean an individual or Legal Entity

|

| 28 |

+

exercising permissions granted by this License.

|

| 29 |

+

|

| 30 |

+

"Source" form shall mean the preferred form for making modifications,

|

| 31 |

+

including but not limited to software source code, documentation

|

| 32 |

+

source, and configuration files.

|

| 33 |

+

|

| 34 |

+

"Object" form shall mean any form resulting from mechanical

|

| 35 |

+

transformation or translation of a Source form, including but

|

| 36 |

+

not limited to compiled object code, generated documentation,

|

| 37 |

+

and conversions to other media types.

|

| 38 |

+

|

| 39 |

+

"Work" shall mean the work of authorship, whether in Source or

|

| 40 |

+

Object form, made available under the License, as indicated by a

|

| 41 |

+

copyright notice that is included in or attached to the work

|

| 42 |

+

(an example is provided in the Appendix below).

|

| 43 |

+

|

| 44 |

+

"Derivative Works" shall mean any work, whether in Source or Object

|

| 45 |

+

form, that is based on (or derived from) the Work and for which the

|

| 46 |

+

editorial revisions, annotations, elaborations, or other modifications

|

| 47 |

+

represent, as a whole, an original work of authorship. For the purposes

|

| 48 |

+

of this License, Derivative Works shall not include works that remain

|

| 49 |

+

separable from, or merely link (or bind by name) to the interfaces of,

|

| 50 |

+

the Work and Derivative Works thereof.

|

| 51 |

+

|

| 52 |

+

"Contribution" shall mean any work of authorship, including

|

| 53 |

+

the original version of the Work and any modifications or additions

|

| 54 |

+

to that Work or Derivative Works thereof, that is intentionally

|

| 55 |

+

submitted to Licensor for inclusion in the Work by the copyright owner

|

| 56 |

+

or by an individual or Legal Entity authorized to submit on behalf of

|

| 57 |

+

the copyright owner. For the purposes of this definition, "submitted"

|

| 58 |

+

means any form of electronic, verbal, or written communication sent

|

| 59 |

+

to the Licensor or its representatives, including but not limited to

|

| 60 |

+

communication on electronic mailing lists, source code control systems,

|

| 61 |

+

and issue tracking systems that are managed by, or on behalf of, the

|

| 62 |

+

Licensor for the purpose of discussing and improving the Work, but

|

| 63 |

+

excluding communication that is conspicuously marked or otherwise

|

| 64 |

+

designated in writing by the copyright owner as "Not a Contribution."

|

| 65 |

+

|

| 66 |

+

"Contributor" shall mean Licensor and any individual or Legal Entity

|

| 67 |

+

on behalf of whom a Contribution has been received by Licensor and

|

| 68 |

+

subsequently incorporated within the Work.

|

| 69 |

+

|

| 70 |

+

2. Grant of Copyright License. Subject to the terms and conditions of

|

| 71 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 72 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 73 |

+

copyright license to reproduce, prepare Derivative Works of,

|

| 74 |

+

publicly display, publicly perform, sublicense, and distribute the

|

| 75 |

+

Work and such Derivative Works in Source or Object form.

|

| 76 |

+

|

| 77 |

+

3. Grant of Patent License. Subject to the terms and conditions of

|

| 78 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 79 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 80 |

+

(except as stated in this section) patent license to make, have made,

|

| 81 |

+

use, offer to sell, sell, import, and otherwise transfer the Work,

|

| 82 |

+

where such license applies only to those patent claims licensable

|

| 83 |

+

by such Contributor that are necessarily infringed by their

|

| 84 |

+

Contribution(s) alone or by combination of their Contribution(s)

|

| 85 |

+

with the Work to which such Contribution(s) was submitted. If You

|

| 86 |

+

institute patent litigation against any entity (including a

|

| 87 |

+

cross-claim or counterclaim in a lawsuit) alleging that the Work

|

| 88 |

+

or a Contribution incorporated within the Work constitutes direct

|

| 89 |

+

or contributory patent infringement, then any patent licenses

|

| 90 |

+

granted to You under this License for that Work shall terminate

|

| 91 |

+

as of the date such litigation is filed.

|

| 92 |

+

|

| 93 |

+

4. Redistribution. You may reproduce and distribute copies of the

|

| 94 |

+

Work or Derivative Works thereof in any medium, with or without

|

| 95 |

+

modifications, and in Source or Object form, provided that You

|

| 96 |

+

meet the following conditions:

|

| 97 |

+

|

| 98 |

+

(a) You must give any other recipients of the Work or

|

| 99 |

+

Derivative Works a copy of this License; and

|

| 100 |

+

|

| 101 |

+

(b) You must cause any modified files to carry prominent notices

|

| 102 |

+

stating that You changed the files; and

|

| 103 |

+

|

| 104 |

+

(c) You must retain, in the Source form of any Derivative Works

|

| 105 |

+

that You distribute, all copyright, patent, trademark, and

|

| 106 |

+

attribution notices from the Source form of the Work,

|

| 107 |

+

excluding those notices that do not pertain to any part of

|

| 108 |

+

the Derivative Works; and

|

| 109 |

+

|

| 110 |

+

(d) If the Work includes a "NOTICE" text file as part of its

|

| 111 |

+

distribution, then any Derivative Works that You distribute must

|

| 112 |

+

include a readable copy of the attribution notices contained

|

| 113 |

+

within such NOTICE file, excluding those notices that do not

|

| 114 |

+

pertain to any part of the Derivative Works, in at least one

|

| 115 |

+

of the following places: within a NOTICE text file distributed

|

| 116 |

+

as part of the Derivative Works; within the Source form or

|

| 117 |

+

documentation, if provided along with the Derivative Works; or,

|

| 118 |

+

within a display generated by the Derivative Works, if and

|

| 119 |

+

wherever such third-party notices normally appear. The contents

|

| 120 |

+

of the NOTICE file are for informational purposes only and

|

| 121 |

+

do not modify the License. You may add Your own attribution

|

| 122 |

+

notices within Derivative Works that You distribute, alongside

|

| 123 |

+

or as an addendum to the NOTICE text from the Work, provided

|

| 124 |

+

that such additional attribution notices cannot be construed

|

| 125 |

+

as modifying the License.

|

| 126 |

+

|

| 127 |

+

You may add Your own copyright statement to Your modifications and

|

| 128 |

+

may provide additional or different license terms and conditions

|

| 129 |

+

for use, reproduction, or distribution of Your modifications, or

|

| 130 |

+

for any such Derivative Works as a whole, provided Your use,

|

| 131 |

+

reproduction, and distribution of the Work otherwise complies with

|

| 132 |

+

the conditions stated in this License.

|

| 133 |

+

|

| 134 |

+

5. Submission of Contributions. Unless You explicitly state otherwise,

|

| 135 |

+

any Contribution intentionally submitted for inclusion in the Work

|

| 136 |

+

by You to the Licensor shall be under the terms and conditions of

|

| 137 |

+

this License, without any additional terms or conditions.

|

| 138 |

+

Notwithstanding the above, nothing herein shall supersede or modify

|

| 139 |

+

the terms of any separate license agreement you may have executed

|

| 140 |

+

with Licensor regarding such Contributions.

|

| 141 |

+

|

| 142 |

+

6. Trademarks. This License does not grant permission to use the trade

|

| 143 |

+

names, trademarks, service marks, or product names of the Licensor,

|

| 144 |

+

except as required for reasonable and customary use in describing the

|

| 145 |

+

origin of the Work and reproducing the content of the NOTICE file.

|

| 146 |

+

|

| 147 |

+

7. Disclaimer of Warranty. Unless required by applicable law or

|

| 148 |

+

agreed to in writing, Licensor provides the Work (and each

|

| 149 |

+

Contributor provides its Contributions) on an "AS IS" BASIS,

|

| 150 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

|

| 151 |

+

implied, including, without limitation, any warranties or conditions

|

| 152 |

+

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

|

| 153 |

+

PARTICULAR PURPOSE. You are solely responsible for determining the

|

| 154 |

+

appropriateness of using or redistributing the Work and assume any

|

| 155 |

+

risks associated with Your exercise of permissions under this License.

|

| 156 |

+

|

| 157 |

+

8. Limitation of Liability. In no event and under no legal theory,

|

| 158 |

+

whether in tort (including negligence), contract, or otherwise,

|

| 159 |

+

unless required by applicable law (such as deliberate and grossly

|

| 160 |

+

negligent acts) or agreed to in writing, shall any Contributor be

|

| 161 |

+

liable to You for damages, including any direct, indirect, special,

|

| 162 |

+

incidental, or consequential damages of any character arising as a

|

| 163 |

+

result of this License or out of the use or inability to use the

|

| 164 |

+

Work (including but not limited to damages for loss of goodwill,

|

| 165 |

+

work stoppage, computer failure or malfunction, or any and all

|

| 166 |

+

other commercial damages or losses), even if such Contributor

|

| 167 |

+

has been advised of the possibility of such damages.

|

| 168 |

+

|

| 169 |

+

9. Accepting Warranty or Additional Liability. While redistributing

|

| 170 |

+

the Work or Derivative Works thereof, You may choose to offer,

|

| 171 |

+

and charge a fee for, acceptance of support, warranty, indemnity,

|

| 172 |

+

or other liability obligations and/or rights consistent with this

|

| 173 |

+

License. However, in accepting such obligations, You may act only

|

| 174 |

+

on Your own behalf and on Your sole responsibility, not on behalf

|

| 175 |

+

of any other Contributor, and only if You agree to indemnify,

|

| 176 |

+

defend, and hold each Contributor harmless for any liability

|

| 177 |

+

incurred by, or claims asserted against, such Contributor by reason

|

| 178 |

+

of your accepting any such warranty or additional liability.

|

| 179 |

+

|

| 180 |

+

10. This code is provided for research purposes only and is

|

| 181 |

+

not to be used for any commercial purposes. By using this code,

|

| 182 |

+

you agree that it will be used solely for academic research, scholarly work,

|

| 183 |

+

and non-commercial activities. Any use of this code for commercial purposes,

|

| 184 |

+

including but not limited to, selling, distributing, or incorporating it into

|

| 185 |

+

commercial products or services, is strictly prohibited. Violation of this

|

| 186 |

+

clause may result in legal actions and penalties.

|

| 187 |

+

|

| 188 |

+

END OF TERMS AND CONDITIONS

|

| 189 |

+

|

| 190 |

+

APPENDIX: How to apply the Apache License to your work.

|

| 191 |

+

|

| 192 |

+

To apply the Apache License to your work, attach the following

|

| 193 |

+

boilerplate notice, with the fields enclosed by brackets "[]"

|

| 194 |

+

replaced with your own identifying information. (Don't include

|

| 195 |

+

the brackets!) The text should be enclosed in the appropriate

|

| 196 |

+

comment syntax for the file format. We also recommend that a

|

| 197 |

+

file or class name and description of purpose be included on the

|

| 198 |

+

same "printed page" as the copyright notice for easier

|

| 199 |

+

identification within third-party archives.

|

| 200 |

+

|

| 201 |

+

Copyright [yyyy] [name of copyright owner]

|

| 202 |

+

|

| 203 |

+

Licensed under the Apache License, Version 2.0 (the "License");

|

| 204 |

+

you may not use this file except in compliance with the License.

|

| 205 |

+

You may obtain a copy of the License at

|

| 206 |

+

|

| 207 |

+

http://www.apache.org/licenses/LICENSE-2.0

|

| 208 |

+

|

| 209 |

+

Unless required by applicable law or agreed to in writing, software

|

| 210 |

+

distributed under the License is distributed on an "AS IS" BASIS,

|

| 211 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

| 212 |

+

See the License for the specific language governing permissions and

|

| 213 |

+

limitations under the License.

|

| 214 |

+

|

| 215 |

+

|

| 216 |

+

Other dependencies and licenses (if such optional components are used):

|

| 217 |

+

|

| 218 |

+

|

| 219 |

+

Components under BSD 3-Clause License:

|

| 220 |

+

------------------------------------------------

|

| 221 |

+

1. numpy

|

| 222 |

+

Copyright (c) 2005-2022, NumPy Developers.

|

| 223 |

+

All rights reserved.

|

| 224 |

+

|

| 225 |

+

2. pytorch

|

| 226 |

+

Copyright (c) 2016- Facebook, Inc (Adam Paszke)

|

| 227 |

+

Copyright (c) 2014- Facebook, Inc (Soumith Chintala)

|

| 228 |

+

Copyright (c) 2011-2014 Idiap Research Institute (Ronan Collobert)

|

| 229 |

+

Copyright (c) 2012-2014 Deepmind Technologies (Koray Kavukcuoglu)

|

| 230 |

+

Copyright (c) 2011-2012 NEC Laboratories America (Koray Kavukcuoglu)

|

| 231 |

+

Copyright (c) 2011-2013 NYU (Clement Farabet)

|

| 232 |

+

Copyright (c) 2006-2010 NEC Laboratories America (Ronan Collobert, Leon Bottou, Iain Melvin, Jason Weston)

|

| 233 |

+

Copyright (c) 2006 Idiap Research Institute (Samy Bengio)

|

| 234 |

+

Copyright (c) 2001-2004 Idiap Research Institute (Ronan Collobert, Samy Bengio, Johnny Mariethoz)

|

| 235 |

+

|

| 236 |

+

3. torchvision

|

| 237 |

+

Copyright (c) Soumith Chintala 2016,

|

| 238 |

+

All rights reserved.

|

| 239 |

+

|

| 240 |

+

Redistribution and use in source and binary forms, with or without

|

| 241 |

+

modification, are permitted provided that the following conditions are met:

|

| 242 |

+

|

| 243 |

+

* Redistributions of source code must retain the above copyright notice, this

|

| 244 |

+

list of conditions and the following disclaimer.

|

| 245 |

+

|

| 246 |

+

* Redistributions in binary form must reproduce the above copyright notice,

|

| 247 |

+

this list of conditions and the following disclaimer in the documentation

|

| 248 |

+

and/or other materials provided with the distribution.

|

| 249 |

+

|

| 250 |

+

* Neither the name of the copyright holder nor the names of its

|

| 251 |

+

contributors may be used to endorse or promote products derived from

|

| 252 |

+

this software without specific prior written permission.

|

| 253 |

+

|

| 254 |

+

THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS "AS IS"

|

| 255 |

+

AND ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT LIMITED TO, THE

|

| 256 |

+

IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE ARE

|

| 257 |

+

DISCLAIMED. IN NO EVENT SHALL THE COPYRIGHT HOLDER OR CONTRIBUTORS BE LIABLE

|

| 258 |

+

FOR ANY DIRECT, INDIRECT, INCIDENTAL, SPECIAL, EXEMPLARY, OR CONSEQUENTIAL

|

| 259 |

+

DAMAGES (INCLUDING, BUT NOT LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR

|

| 260 |

+

SERVICES; LOSS OF USE, DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER

|

| 261 |

+

CAUSED AND ON ANY THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT LIABILITY,

|

| 262 |

+

OR TORT (INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE

|

| 263 |

+

OF THIS SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.

|

| 264 |

+

|

| 265 |

+

Component under Apache v2 License:

|

| 266 |

+

-----------------------------------------------------

|

| 267 |

+

1. timm

|

| 268 |

+

Copyright 2019 Ross Wightman

|

| 269 |

+

|

| 270 |

+

Apache License

|

| 271 |

+

Version 2.0, January 2004

|

| 272 |

+

http://www.apache.org/licenses/

|

| 273 |

+

|

| 274 |

+

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

|

| 275 |

+

|

| 276 |

+

1. Definitions.

|

| 277 |

+

|

| 278 |

+

"License" shall mean the terms and conditions for use, reproduction,

|

| 279 |

+

and distribution as defined by Sections 1 through 9 of this document.

|

| 280 |

+

|

| 281 |

+

"Licensor" shall mean the copyright owner or entity authorized by

|

| 282 |

+

the copyright owner that is granting the License.

|

| 283 |

+

|

| 284 |

+

"Legal Entity" shall mean the union of the acting entity and all

|

| 285 |

+

other entities that control, are controlled by, or are under common

|

| 286 |

+

control with that entity. For the purposes of this definition,

|

| 287 |

+

"control" means (i) the power, direct or indirect, to cause the

|

| 288 |

+

direction or management of such entity, whether by contract or

|

| 289 |

+

otherwise, or (ii) ownership of fifty percent (50%) or more of the

|

| 290 |

+

outstanding shares, or (iii) beneficial ownership of such entity.

|

| 291 |

+

|

| 292 |

+

"You" (or "Your") shall mean an individual or Legal Entity

|

| 293 |

+

exercising permissions granted by this License.

|

| 294 |

+

|

| 295 |

+

"Source" form shall mean the preferred form for making modifications,

|

| 296 |

+

including but not limited to software source code, documentation

|

| 297 |

+

source, and configuration files.

|

| 298 |

+

|

| 299 |

+

"Object" form shall mean any form resulting from mechanical

|

| 300 |

+

transformation or translation of a Source form, including but

|

| 301 |

+

not limited to compiled object code, generated documentation,

|

| 302 |

+

and conversions to other media types.

|

| 303 |

+

|

| 304 |

+

"Work" shall mean the work of authorship, whether in Source or

|

| 305 |

+

Object form, made available under the License, as indicated by a

|

| 306 |

+

copyright notice that is included in or attached to the work

|

| 307 |

+

(an example is provided in the Appendix below).

|

| 308 |

+

|

| 309 |

+

"Derivative Works" shall mean any work, whether in Source or Object

|

| 310 |

+

form, that is based on (or derived from) the Work and for which the

|

| 311 |

+

editorial revisions, annotations, elaborations, or other modifications

|

| 312 |

+

represent, as a whole, an original work of authorship. For the purposes

|

| 313 |

+

of this License, Derivative Works shall not include works that remain

|

| 314 |

+

separable from, or merely link (or bind by name) to the interfaces of,

|

| 315 |

+

the Work and Derivative Works thereof.

|

| 316 |

+

|

| 317 |

+

"Contribution" shall mean any work of authorship, including

|

| 318 |

+

the original version of the Work and any modifications or additions

|

| 319 |

+

to that Work or Derivative Works thereof, that is intentionally

|

| 320 |

+

submitted to Licensor for inclusion in the Work by the copyright owner

|

| 321 |

+

or by an individual or Legal Entity authorized to submit on behalf of

|

| 322 |

+

the copyright owner. For the purposes of this definition, "submitted"

|

| 323 |

+

means any form of electronic, verbal, or written communication sent

|

| 324 |

+

to the Licensor or its representatives, including but not limited to

|

| 325 |

+

communication on electronic mailing lists, source code control systems,

|

| 326 |

+

and issue tracking systems that are managed by, or on behalf of, the

|

| 327 |

+

Licensor for the purpose of discussing and improving the Work, but

|

| 328 |

+

excluding communication that is conspicuously marked or otherwise

|

| 329 |

+

designated in writing by the copyright owner as "Not a Contribution."

|

| 330 |

+

|

| 331 |

+

"Contributor" shall mean Licensor and any individual or Legal Entity

|

| 332 |

+

on behalf of whom a Contribution has been received by Licensor and

|

| 333 |

+

subsequently incorporated within the Work.

|

| 334 |

+

|

| 335 |

+

2. Grant of Copyright License. Subject to the terms and conditions of

|

| 336 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 337 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 338 |

+

copyright license to reproduce, prepare Derivative Works of,

|

| 339 |

+

publicly display, publicly perform, sublicense, and distribute the

|

| 340 |

+

Work and such Derivative Works in Source or Object form.

|

| 341 |

+

|

| 342 |

+

3. Grant of Patent License. Subject to the terms and conditions of

|

| 343 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 344 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 345 |

+

(except as stated in this section) patent license to make, have made,

|

| 346 |

+

use, offer to sell, sell, import, and otherwise transfer the Work,

|

| 347 |

+

where such license applies only to those patent claims licensable

|

| 348 |

+

by such Contributor that are necessarily infringed by their

|

| 349 |

+

Contribution(s) alone or by combination of their Contribution(s)

|

| 350 |

+

with the Work to which such Contribution(s) was submitted. If You

|

| 351 |

+

institute patent litigation against any entity (including a

|

| 352 |

+

cross-claim or counterclaim in a lawsuit) alleging that the Work

|

| 353 |

+

or a Contribution incorporated within the Work constitutes direct

|

| 354 |

+

or contributory patent infringement, then any patent licenses

|

| 355 |

+

granted to You under this License for that Work shall terminate

|

| 356 |

+

as of the date such litigation is filed.

|

| 357 |

+

|

| 358 |

+

4. Redistribution. You may reproduce and distribute copies of the

|

| 359 |

+

Work or Derivative Works thereof in any medium, with or without

|

| 360 |

+

modifications, and in Source or Object form, provided that You

|

| 361 |

+

meet the following conditions:

|

| 362 |

+

|

| 363 |

+

(a) You must give any other recipients of the Work or

|

| 364 |

+

Derivative Works a copy of this License; and

|

| 365 |

+

|

| 366 |

+

(b) You must cause any modified files to carry prominent notices

|

| 367 |

+

stating that You changed the files; and

|

| 368 |

+

|

| 369 |

+

(c) You must retain, in the Source form of any Derivative Works

|

| 370 |

+

that You distribute, all copyright, patent, trademark, and

|

| 371 |

+

attribution notices from the Source form of the Work,

|

| 372 |

+

excluding those notices that do not pertain to any part of

|

| 373 |

+

the Derivative Works; and

|

| 374 |

+

|

| 375 |

+

(d) If the Work includes a "NOTICE" text file as part of its

|

| 376 |

+

distribution, then any Derivative Works that You distribute must

|

| 377 |

+

include a readable copy of the attribution notices contained

|

| 378 |

+

within such NOTICE file, excluding those notices that do not

|

| 379 |

+

pertain to any part of the Derivative Works, in at least one

|

| 380 |

+

of the following places: within a NOTICE text file distributed

|

| 381 |

+

as part of the Derivative Works; within the Source form or

|

| 382 |

+

documentation, if provided along with the Derivative Works; or,

|

| 383 |

+

within a display generated by the Derivative Works, if and

|

| 384 |

+

wherever such third-party notices normally appear. The contents

|

| 385 |

+

of the NOTICE file are for informational purposes only and

|

| 386 |

+

do not modify the License. You may add Your own attribution

|

| 387 |

+

notices within Derivative Works that You distribute, alongside

|

| 388 |

+

or as an addendum to the NOTICE text from the Work, provided

|

| 389 |

+

that such additional attribution notices cannot be construed

|

| 390 |

+

as modifying the License.

|

| 391 |

+

|

| 392 |

+

You may add Your own copyright statement to Your modifications and

|

| 393 |

+

may provide additional or different license terms and conditions

|

| 394 |

+

for use, reproduction, or distribution of Your modifications, or

|

| 395 |

+

for any such Derivative Works as a whole, provided Your use,

|

| 396 |

+

reproduction, and distribution of the Work otherwise complies with

|

| 397 |

+

the conditions stated in this License.

|

| 398 |

+

|

| 399 |

+

5. Submission of Contributions. Unless You explicitly state otherwise,

|

| 400 |

+

any Contribution intentionally submitted for inclusion in the Work

|

| 401 |

+

by You to the Licensor shall be under the terms and conditions of

|

| 402 |

+

this License, without any additional terms or conditions.

|

| 403 |

+

Notwithstanding the above, nothing herein shall supersede or modify

|

| 404 |

+

the terms of any separate license agreement you may have executed

|

| 405 |

+

with Licensor regarding such Contributions.

|

| 406 |

+

|

| 407 |

+

6. Trademarks. This License does not grant permission to use the trade

|

| 408 |

+

names, trademarks, service marks, or product names of the Licensor,

|

| 409 |

+

except as required for reasonable and customary use in describing the

|

| 410 |

+

origin of the Work and reproducing the content of the NOTICE file.

|

| 411 |

+

|

| 412 |

+

7. Disclaimer of Warranty. Unless required by applicable law or

|

| 413 |

+

agreed to in writing, Licensor provides the Work (and each

|

| 414 |

+

Contributor provides its Contributions) on an "AS IS" BASIS,

|

| 415 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

|

| 416 |

+

implied, including, without limitation, any warranties or conditions

|

| 417 |

+

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

|

| 418 |

+

PARTICULAR PURPOSE. You are solely responsible for determining the

|

| 419 |

+

appropriateness of using or redistributing the Work and assume any

|

| 420 |

+

risks associated with Your exercise of permissions under this License.

|

| 421 |

+

|

| 422 |

+

8. Limitation of Liability. In no event and under no legal theory,

|

| 423 |

+

whether in tort (including negligence), contract, or otherwise,

|

| 424 |

+

unless required by applicable law (such as deliberate and grossly

|

| 425 |

+

negligent acts) or agreed to in writing, shall any Contributor be

|

| 426 |

+

liable to You for damages, including any direct, indirect, special,

|

| 427 |

+

incidental, or consequential damages of any character arising as a

|

| 428 |

+

result of this License or out of the use or inability to use the

|

| 429 |

+

Work (including but not limited to damages for loss of goodwill,

|

| 430 |

+

work stoppage, computer failure or malfunction, or any and all

|

| 431 |

+

other commercial damages or losses), even if such Contributor

|

| 432 |

+

has been advised of the possibility of such damages.

|

| 433 |

+

|

| 434 |

+

9. Accepting Warranty or Additional Liability. While redistributing

|

| 435 |

+

the Work or Derivative Works thereof, You may choose to offer,

|

| 436 |

+

and charge a fee for, acceptance of support, warranty, indemnity,

|

| 437 |

+

or other liability obligations and/or rights consistent with this

|

| 438 |

+

License. However, in accepting such obligations, You may act only

|

| 439 |

+

on Your own behalf and on Your sole responsibility, not on behalf

|

| 440 |

+

of any other Contributor, and only if You agree to indemnify,

|

| 441 |

+

defend, and hold each Contributor harmless for any liability

|

| 442 |

+

incurred by, or claims asserted against, such Contributor by reason

|

| 443 |

+

of your accepting any such warranty or additional liability.

|

| 444 |

+

|

| 445 |

+

END OF TERMS AND CONDITIONS

|

| 446 |

+

|

| 447 |

+

APPENDIX: How to apply the Apache License to your work.

|

| 448 |

+

|

| 449 |

+

To apply the Apache License to your work, attach the following

|

| 450 |

+

boilerplate notice, with the fields enclosed by brackets "[]"

|

| 451 |

+

replaced with your own identifying information. (Don't include

|

| 452 |

+

the brackets!) The text should be enclosed in the appropriate

|

| 453 |

+

comment syntax for the file format. We also recommend that a

|

| 454 |

+

file or class name and description of purpose be included on the

|

| 455 |

+

same "printed page" as the copyright notice for easier

|

| 456 |

+

identification within third-party archives.

|

| 457 |

+

|

| 458 |

+

Copyright [yyyy] [name of copyright owner]

|

| 459 |

+

|

| 460 |

+

Licensed under the Apache License, Version 2.0 (the "License");

|

| 461 |

+

you may not use this file except in compliance with the License.

|

| 462 |

+

You may obtain a copy of the License at

|

| 463 |

+

|

| 464 |

+

http://www.apache.org/licenses/LICENSE-2.0

|

| 465 |

+

|

| 466 |

+

Unless required by applicable law or agreed to in writing, software

|

| 467 |

+

distributed under the License is distributed on an "AS IS" BASIS,

|

| 468 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

| 469 |

+

See the License for the specific language governing permissions and

|

| 470 |

+

limitations under the License.

|

README.md

ADDED

|

@@ -0,0 +1,199 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

|

| 2 |

+

## ___***VideoCrafter2: Overcoming Data Limitations for High-Quality Video Diffusion Models***___

|

| 3 |

+

|

| 4 |

+

<a href='https://ailab-cvc.github.io/videocrafter2/'><img src='https://img.shields.io/badge/Project-Page-green'></a>

|

| 5 |

+

<a href='https://arxiv.org/abs/2401.09047'><img src='https://img.shields.io/badge/Technique-Report-red'></a>

|

| 6 |

+

[](https://discord.gg/rrayYqZ4tf)

|

| 7 |

+

<a href='https://huggingface.co/spaces/VideoCrafter/VideoCrafter'><img src='https://img.shields.io/badge/%F0%9F%A4%97%20Hugging%20Face-Model-blue'></a>

|

| 8 |

+

[](https://github.com/VideoCrafter/VideoCrafter)

|

| 9 |

+

|

| 10 |

+

### 🔥🔥 Our dedicated high-resolution I2V model is released at: :point_right:[DynamiCrafter](https://github.com/Doubiiu/DynamiCrafter)!!!

|

| 11 |

+

|

| 12 |

+

[](https://www.youtube.com/watch?v=0NfmIsNAg-g)

|

| 13 |

+

|

| 14 |

+

### 🔥The VideoCrafter2 Large improvements over VideoCrafter1 with limited data. Better Motion, Better Concept Combination!!!

|

| 15 |

+

|

| 16 |

+

Please Join us and create your own film on [Discord/Floor33](https://discord.gg/rrayYqZ4tf).

|

| 17 |

+

|

| 18 |

+

##### 🎥 Exquisite film, produced by VideoCrafter2, directed by Human

|

| 19 |

+

[](https://www.youtube.com/watch?v=TUsFkW0tK-s)

|

| 20 |

+

|

| 21 |

+

## 🔆 Introduction

|

| 22 |

+

|

| 23 |

+

🤗🤗🤗 VideoCrafter is an open-source video generation and editing toolbox for crafting video content.

|

| 24 |

+

It currently includes the Text2Video and Image2Video models:

|

| 25 |

+

|

| 26 |

+

### 1. Generic Text-to-video Generation

|

| 27 |

+

Click the GIF to access the high-resolution video.

|

| 28 |

+

|

| 29 |

+

<table class="center">

|

| 30 |

+

<td><a href="https://github.com/AILab-CVC/VideoCrafter/assets/18735168/d20ee09d-fc32-44a8-9e9a-f12f44b30411"><img src=assets/t2v/tom.gif width="320"></td>

|

| 31 |

+

<td><a href="https://github.com/AILab-CVC/VideoCrafter/assets/18735168/f1d9f434-28e8-44f6-a9b8-cffd67e4574d"><img src=assets/t2v/child.gif width="320"></td>

|

| 32 |

+

<td><a href="https://github.com/AILab-CVC/VideoCrafter/assets/18735168/bbcfef0e-d8fb-4850-adc0-d8f937c2fa36"><img src=assets/t2v/woman.gif width="320"></td>

|

| 33 |

+

<tr>

|

| 34 |

+

<td style="text-align:center;" width="320">"Tom Cruise's face reflects focus, his eyes filled with purpose and drive."</td>

|

| 35 |

+

<td style="text-align:center;" width="320">"A child excitedly swings on a rusty swing set, laughter filling the air."</td>

|

| 36 |

+

<td style="text-align:center;" width="320">"A young woman with glasses is jogging in the park wearing a pink headband."</td>

|

| 37 |

+

<tr>

|

| 38 |

+

</table >

|

| 39 |

+

|

| 40 |

+

<table class="center">

|

| 41 |

+

<td><a href="https://github.com/AILab-CVC/VideoCrafter/assets/18735168/7edafc5a-750e-45f3-a46e-b593751a4b12"><img src=assets/t2v/couple.gif width="320"></td>

|

| 42 |

+

<td><a href="https://github.com/AILab-CVC/VideoCrafter/assets/18735168/37fe41c8-31fb-4e77-bcf9-fa159baa6d86"><img src=assets/t2v/rabbit.gif width="320"></td>

|

| 43 |

+

<td><a href="https://github.com/AILab-CVC/VideoCrafter/assets/18735168/09791a46-a243-41b8-a6bb-892cdd3a83a2"><img src=assets/t2v/duck.gif width="320"></td>

|

| 44 |

+

<tr>

|

| 45 |

+

<td style="text-align:center;" width="320">"With the style of van gogh, A young couple dances under the moonlight by the lake."</td>

|

| 46 |

+

<td style="text-align:center;" width="320">"A rabbit, low-poly game art style"</td>

|

| 47 |

+

<td style="text-align:center;" width="320">"Impressionist style, a yellow rubber duck floating on the wave on the sunset"</td>

|

| 48 |

+

<tr>

|

| 49 |

+

</table >

|

| 50 |

+

|

| 51 |

+

### 2. Generic Image-to-video Generation

|

| 52 |

+

|

| 53 |

+

<table class="center">

|

| 54 |

+

<td><img src=assets/i2v/input/blackswan.png width="170"></td>

|

| 55 |

+

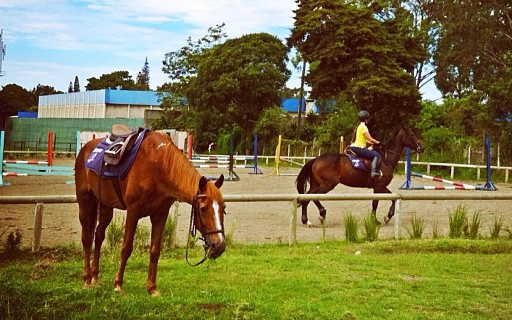

<td><img src=assets/i2v/input/horse.png width="170"></td>

|

| 56 |

+

<td><img src=assets/i2v/input/chair.png width="170"></td>

|

| 57 |

+

<td><img src=assets/i2v/input/sunset.png width="170"></td>

|

| 58 |

+

<tr>

|

| 59 |

+

<td><a href="https://github.com/AILab-CVC/VideoCrafter/assets/18735168/1a57edd9-3fd2-4ce9-8313-89aca95b6ec7"><img src=assets/i2v/blackswan.gif width="170"></td>

|

| 60 |

+

<td><a href="https://github.com/AILab-CVC/VideoCrafter/assets/18735168/d671419d-ae49-4889-807e-b841aef60e8a"><img src=assets/i2v/horse.gif width="170"></td>

|

| 61 |

+

<td><a href="https://github.com/AILab-CVC/VideoCrafter/assets/18735168/39d730d9-7b47-4132-bdae-4d18f3e651ee"><img src=assets/i2v/chair.gif width="170"></td>

|

| 62 |

+

<td><a href="https://github.com/AILab-CVC/VideoCrafter/assets/18735168/dc8dd0d5-a80d-4f31-94db-f9ea0b13172b"><img src=assets/i2v/sunset.gif width="170"></td>

|

| 63 |

+

<tr>

|

| 64 |

+

<td style="text-align:center;" width="170">"a black swan swims on the pond"</td>

|

| 65 |

+

<td style="text-align:center;" width="170">"a girl is riding a horse fast on grassland"</td>

|

| 66 |

+

<td style="text-align:center;" width="170">"a boy sits on a chair facing the sea"</td>

|

| 67 |

+

<td style="text-align:center;" width="170">"two galleons moving in the wind at sunset"</td>

|

| 68 |

+

|

| 69 |

+

</table >

|

| 70 |

+

|

| 71 |

+

:boom: **You are highly recommended to try our dedicated I2V model [DynamiCrafter](https://github.com/Doubiiu/DynamiCrafter): Higher resolution, Better Dynamics, More Coherence!!!**

|

| 72 |

+

|

| 73 |

+

---

|

| 74 |

+

|

| 75 |

+

## 📝 Changelog

|

| 76 |

+

- __[2024.02.05]__: 🔥🔥 Release new I2V model with the resolution of 640x1024 of VideoCrafter1/DynamiCrafter.

|

| 77 |

+

|

| 78 |

+

- __[2024.01.26]__: Release the 512x320 checkpoint of VideoCrafter2.

|

| 79 |

+

|

| 80 |

+

- __[2024.01.18]__: Release the [VideoCrafter2](https://ailab-cvc.github.io/videocrafter2/) and [Tech Report](https://arxiv.org/abs/2401.09047)!

|

| 81 |

+

|

| 82 |

+

- __[2023.10.30]__: Release [VideoCrafter1](https://arxiv.org/abs/2310.19512) Technical Report!

|

| 83 |

+

|

| 84 |

+

- __[2023.10.13]__: Release the VideoCrafter1, High Quality Video Generation!

|

| 85 |

+

|

| 86 |

+

- __[2023.08.14]__: Release a new version of VideoCrafter on [Discord/Floor33](https://discord.gg/uHaQuThT). Please join us to create your own film!

|

| 87 |

+

|

| 88 |

+

- __[2023.04.18]__: Release a VideoControl model with most of the watermarks removed!

|

| 89 |

+

|

| 90 |

+

- __[2023.04.05]__: Release pretrained Text-to-Video models, VideoLora models, and inference code.

|

| 91 |

+

<br>

|

| 92 |

+

|

| 93 |

+

|

| 94 |

+

## ⏳ Models

|

| 95 |

+

|

| 96 |

+

|T2V-Models|Resolution|Checkpoints|

|

| 97 |

+

|:---------|:---------|:--------|

|

| 98 |

+

|VideoCrafter2|320x512|[Hugging Face](https://huggingface.co/VideoCrafter/VideoCrafter2/blob/main/model.ckpt)

|

| 99 |

+

|VideoCrafter1|576x1024|[Hugging Face](https://huggingface.co/VideoCrafter/Text2Video-1024/blob/main/model.ckpt)

|

| 100 |

+

|VideoCrafter1|320x512|[Hugging Face](https://huggingface.co/VideoCrafter/Text2Video-512/blob/main/model.ckpt)

|

| 101 |

+

|

| 102 |

+

|I2V-Models|Resolution|Checkpoints|

|

| 103 |

+

|:---------|:---------|:--------|

|

| 104 |

+

|VideoCrafter1|640x1024|[Hugging Face](https://huggingface.co/Doubiiu/DynamiCrafter_1024/blob/main/model.ckpt)

|

| 105 |

+

|VideoCrafter1|320x512|[Hugging Face](https://huggingface.co/VideoCrafter/Image2Video-512/blob/main/model.ckpt)

|

| 106 |

+

|

| 107 |

+

|

| 108 |

+

|

| 109 |

+

## ⚙️ Setup

|

| 110 |

+

|

| 111 |

+

### 1. Install Environment via Anaconda (Recommended)

|

| 112 |

+

```bash

|

| 113 |

+

conda create -n videocrafter python=3.8.5

|

| 114 |

+

conda activate videocrafter

|

| 115 |

+

pip install -r requirements.txt

|

| 116 |

+

```

|

| 117 |

+

|

| 118 |

+

|

| 119 |

+

## 💫 Inference

|

| 120 |

+

### 1. Text-to-Video

|

| 121 |

+

|

| 122 |

+

1) Download pretrained T2V models via [Hugging Face](https://huggingface.co/VideoCrafter/VideoCrafter2/blob/main/model.ckpt), and put the `model.ckpt` in `checkpoints/base_512_v2/model.ckpt`.

|

| 123 |

+

2) Input the following commands in terminal.

|

| 124 |

+

```bash

|

| 125 |

+

sh scripts/run_text2video.sh

|

| 126 |

+

```

|

| 127 |

+

|

| 128 |

+

### 2. Image-to-Video

|

| 129 |

+

|

| 130 |

+

1) Download pretrained I2V models via [Hugging Face](https://huggingface.co/VideoCrafter/Image2Video-512-v1.0/blob/main/model.ckpt), and put the `model.ckpt` in `checkpoints/i2v_512_v1/model.ckpt`.

|

| 131 |

+

2) Input the following commands in terminal.

|

| 132 |

+

```bash

|

| 133 |

+

sh scripts/run_image2video.sh

|

| 134 |

+

```

|

| 135 |

+

|

| 136 |

+

### 3. Local Gradio demo

|

| 137 |

+

|

| 138 |

+

1. Download the pretrained T2V and I2V models and put them in the corresponding directory according to the previous guidelines.

|

| 139 |

+

2. Input the following commands in terminal.

|

| 140 |

+

```bash

|

| 141 |

+

python gradio_app.py

|

| 142 |

+

```

|

| 143 |

+

|

| 144 |

+

---

|

| 145 |

+

## 📋 Techinical Report

|

| 146 |

+

😉 VideoCrafter2 Tech report: [VideoCrafter2: Overcoming Data Limitations for High-Quality Video Diffusion Models](https://arxiv.org/abs/2401.09047)

|

| 147 |

+

|

| 148 |

+

😉 VideoCrafter1 Tech report: [VideoCrafter1: Open Diffusion Models for High-Quality Video Generation](https://arxiv.org/abs/2310.19512)

|

| 149 |

+

<br>

|

| 150 |

+

|

| 151 |

+

## 😉 Citation

|

| 152 |

+

The technical report is currently unavailable as it is still in preparation. You can cite the paper of our image-to-video model and related base model.

|

| 153 |

+

```

|

| 154 |

+

@misc{chen2024videocrafter2,

|

| 155 |

+

title={VideoCrafter2: Overcoming Data Limitations for High-Quality Video Diffusion Models},

|

| 156 |

+

author={Haoxin Chen and Yong Zhang and Xiaodong Cun and Menghan Xia and Xintao Wang and Chao Weng and Ying Shan},

|

| 157 |

+

year={2024},

|

| 158 |

+

eprint={2401.09047},

|

| 159 |

+

archivePrefix={arXiv},

|

| 160 |

+

primaryClass={cs.CV}

|

| 161 |

+

}

|

| 162 |

+

|

| 163 |

+

@misc{chen2023videocrafter1,

|

| 164 |

+

title={VideoCrafter1: Open Diffusion Models for High-Quality Video Generation},

|

| 165 |

+

author={Haoxin Chen and Menghan Xia and Yingqing He and Yong Zhang and Xiaodong Cun and Shaoshu Yang and Jinbo Xing and Yaofang Liu and Qifeng Chen and Xintao Wang and Chao Weng and Ying Shan},

|

| 166 |

+

year={2023},

|

| 167 |

+

eprint={2310.19512},

|

| 168 |

+

archivePrefix={arXiv},

|

| 169 |

+

primaryClass={cs.CV}

|

| 170 |

+

}

|

| 171 |

+

|

| 172 |

+

@article{xing2023dynamicrafter,

|

| 173 |

+

title={DynamiCrafter: Animating Open-domain Images with Video Diffusion Priors},

|

| 174 |

+

author={Jinbo Xing and Menghan Xia and Yong Zhang and Haoxin Chen and Xintao Wang and Tien-Tsin Wong and Ying Shan},

|

| 175 |

+

year={2023},

|

| 176 |

+

eprint={2310.12190},

|

| 177 |

+

archivePrefix={arXiv},

|

| 178 |

+

primaryClass={cs.CV}

|

| 179 |

+

}

|

| 180 |

+

|

| 181 |

+

@article{he2022lvdm,

|

| 182 |

+

title={Latent Video Diffusion Models for High-Fidelity Long Video Generation},

|

| 183 |

+

author={Yingqing He and Tianyu Yang and Yong Zhang and Ying Shan and Qifeng Chen},

|

| 184 |

+

year={2022},

|

| 185 |

+

eprint={2211.13221},

|

| 186 |

+

archivePrefix={arXiv},

|

| 187 |

+

primaryClass={cs.CV}

|

| 188 |

+

}

|

| 189 |

+

```

|

| 190 |

+

|

| 191 |

+

|

| 192 |

+

## 🤗 Acknowledgements

|

| 193 |

+

Our codebase builds on [Stable Diffusion](https://github.com/Stability-AI/stablediffusion).

|

| 194 |

+

Thanks the authors for sharing their awesome codebases!

|

| 195 |

+

|

| 196 |

+

|

| 197 |

+

## 📢 Disclaimer

|

| 198 |

+

We develop this repository for RESEARCH purposes, so it can only be used for personal/research/non-commercial purposes.

|

| 199 |

+

****

|

assets/i2v/blackswan.gif

ADDED

|

assets/i2v/chair.gif

ADDED

|

assets/i2v/horse.gif

ADDED

|

assets/i2v/input/blackswan.png

ADDED

|

assets/i2v/input/chair.png

ADDED

|

assets/i2v/input/horse.png

ADDED

|

assets/i2v/input/sunset.png

ADDED

|

assets/i2v/sunset.gif

ADDED

|

assets/t2v/child.gif

ADDED

|

assets/t2v/couple.gif

ADDED

|

Git LFS Details

|

assets/t2v/duck.gif

ADDED

|

assets/t2v/girl_moose.jpg

ADDED

|

assets/t2v/rabbit.gif

ADDED

|