taochenxin

commited on

Commit

•

d4495bc

1

Parent(s):

b9aec36

release model

Browse files- README.md +212 -0

- added_tokens.json +11 -0

- assets/arch_comparison.png +0 -0

- assets/intro.png +0 -0

- assets/overview.png +0 -0

- assets/performance1.png +0 -0

- assets/performance2.png +0 -0

- assets/radar.png +0 -0

- config.json +216 -0

- configuration_holistic_embedding.py +114 -0

- configuration_internlm2.py +150 -0

- configuration_internvl_chat.py +106 -0

- conversation.py +1368 -0

- examples_image.jpg +0 -0

- generation_config.json +4 -0

- model-00001-of-00003.safetensors +3 -0

- model-00002-of-00003.safetensors +3 -0

- model-00003-of-00003.safetensors +3 -0

- model.safetensors.index.json +243 -0

- modeling_holistic_embedding.py +954 -0

- modeling_internlm2.py +1392 -0

- modeling_internvl_chat.py +450 -0

- special_tokens_map.json +47 -0

- tokenization_internlm2.py +235 -0

- tokenizer.model +3 -0

- tokenizer_config.json +179 -0

README.md

CHANGED

|

@@ -1,3 +1,215 @@

|

|

| 1 |

---

|

| 2 |

license: mit

|

| 3 |

---

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

---

|

| 2 |

license: mit

|

| 3 |

---

|

| 4 |

+

---

|

| 5 |

+

license: mit

|

| 6 |

+

pipeline_tag: image-text-to-text

|

| 7 |

+

library_name: transformers

|

| 8 |

+

base_model:

|

| 9 |

+

- internlm/internlm2-chat-1_8b

|

| 10 |

+

base_model_relation: merge

|

| 11 |

+

language:

|

| 12 |

+

- multilingual

|

| 13 |

+

tags:

|

| 14 |

+

- internvl

|

| 15 |

+

- vision-language model

|

| 16 |

+

- monolithic

|

| 17 |

+

---

|

| 18 |

+

# HoVLE

|

| 19 |

+

|

| 20 |

+

[\[📜 HoVLE Paper\]]() [\[🚀 Quick Start\]](#quick-start)

|

| 21 |

+

|

| 22 |

+

<a id="radar"></a>

|

| 23 |

+

|

| 24 |

+

|

| 25 |

+

## Introduction

|

| 26 |

+

|

| 27 |

+

<p align="middle">

|

| 28 |

+

<img src="assets/intro.png" width="95%" />

|

| 29 |

+

</p>

|

| 30 |

+

|

| 31 |

+

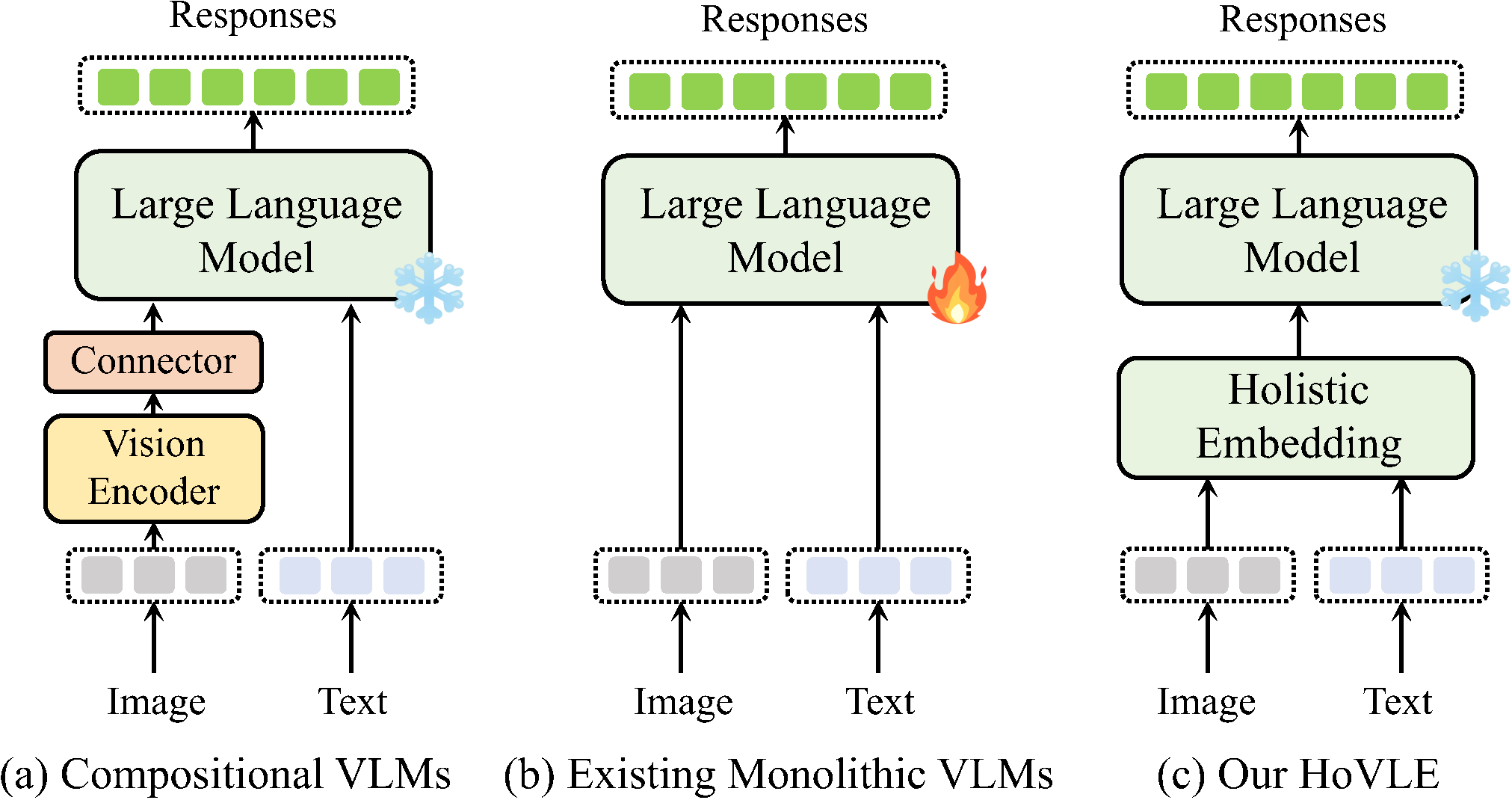

We introduce **HoVLE**, a novel monolithic vision-language model (VLM) that processes images and texts in a unified manner. HoVLE introduces a holistic embedding module that projects image and text inputs into a shared embedding space, allowing the Large Language Model (LLM) to interpret images in the same way as texts.

|

| 32 |

+

|

| 33 |

+

HoVLE significantly surpasses previous monolithic VLMs and demonstrates competitive performance with compositional VLMs. This work narrows the gap between monolithic and compositional VLMs, providing a promising direction for the development of monolithic VLMs.

|

| 34 |

+

|

| 35 |

+

This repository releases the HoVLE model with 2.6B parameters. It is built upon [internlm2-chat-1_8b](https://huggingface.co/internlm/internlm2-chat-1_8b). Please refer to [HoVLE (HD)](https://huggingface.co/taochenxin/HoVLE-HD) for the high-definition version. For more details, please refer to our [paper]().

|

| 36 |

+

|

| 37 |

+

|

| 38 |

+

## Model Details

|

| 39 |

+

<p align="middle">

|

| 40 |

+

<img src="assets/overview.png" width="90%" />

|

| 41 |

+

</p>

|

| 42 |

+

|

| 43 |

+

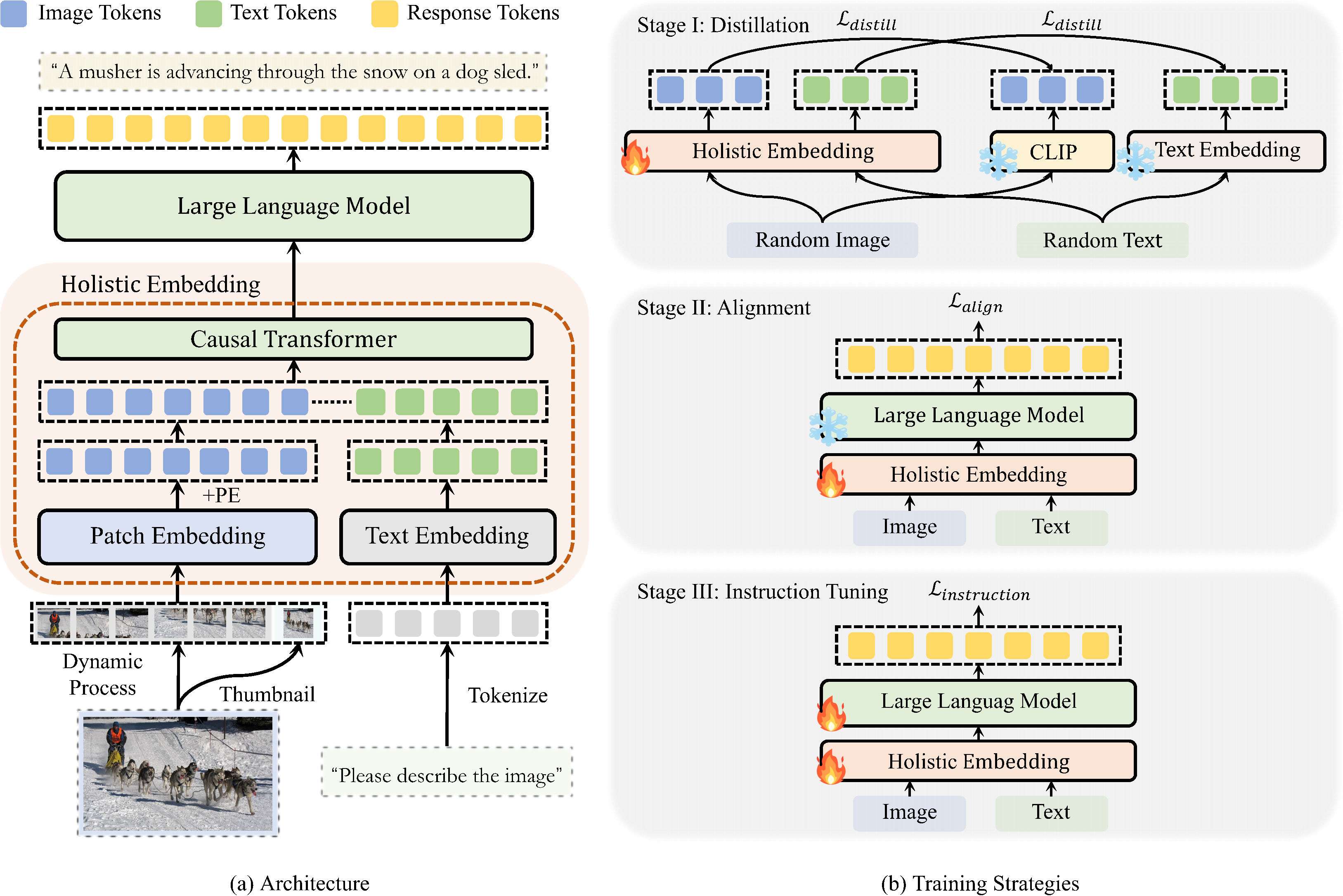

| | Details |

|

| 44 |

+

| :---------------------------: | :---------- |

|

| 45 |

+

| Architecture | The whole model consists of a holistic embedding module and an LLM. The holistic embedding module consists of the same causal Transformer layers as the LLM. It accepts both images and texts as input, and projects them into a unified embedding space. These embeddings are then forwarded into the LLM, constituting a monolithic VLM. |

|

| 46 |

+

| Stage I (Distillation) | The first stage trains the holistic embedding module to distill the image feature from a pre-trained visual encoder and the text embeddings from an LLM, providing general encoding abilities. Only the holistic embedding module is trainable. |

|

| 47 |

+

| Stage II (Alignment) | The second stage combines the holistic embedding module with the LLM to perform auto-regressive training, aligning different modalities to a shared embedding space. Only the holistic embedding module is trainable. |

|

| 48 |

+

| Stage III (Instruction Tuning) | A visual instruction tuning stage is incorporated to further strengthen the whole VLM to follow instructions. The whole model is trainable. |

|

| 49 |

+

|

| 50 |

+

|

| 51 |

+

|

| 52 |

+

## Performance

|

| 53 |

+

<p align="middle">

|

| 54 |

+

<img src="assets/performance1.png" width="90%" />

|

| 55 |

+

</p>

|

| 56 |

+

<p align="middle">

|

| 57 |

+

<img src="assets/performance2.png" width="90%" />

|

| 58 |

+

</p>

|

| 59 |

+

|

| 60 |

+

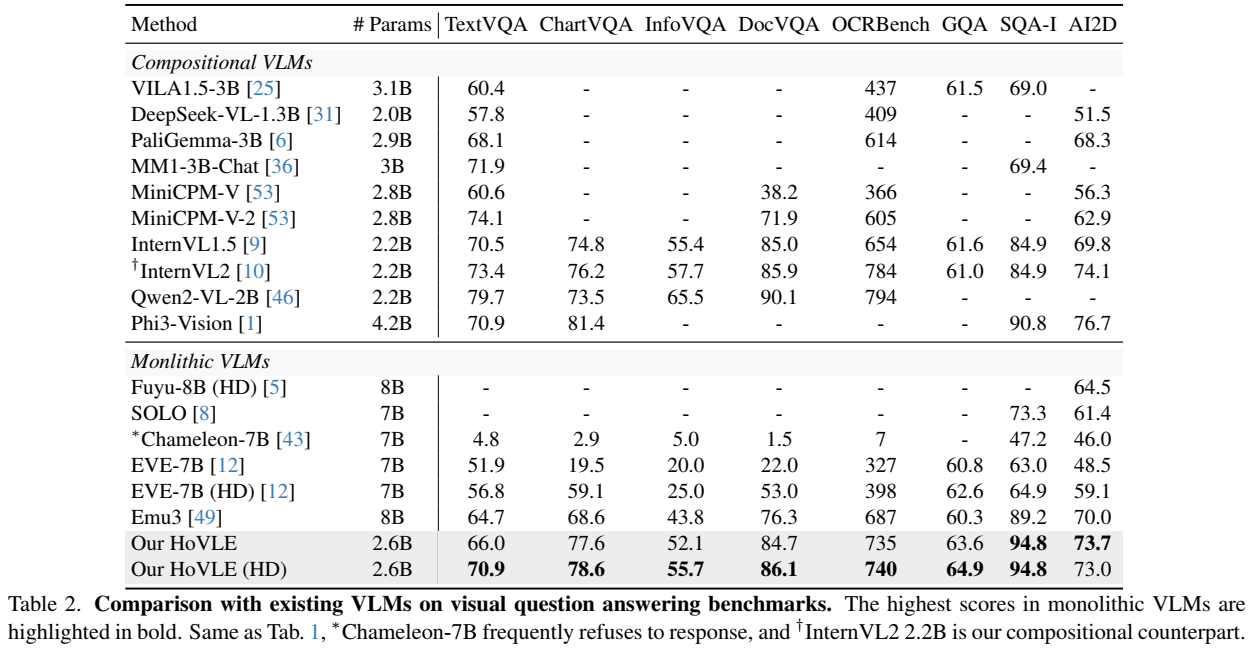

- Sources of the results include the original papers, our evaluation with [VLMEvalKit](https://github.com/open-compass/VLMEvalKit), and [OpenCompass](https://rank.opencompass.org.cn/leaderboard-multimodal/?m=REALTIME).

|

| 61 |

+

- Please note that evaluating the same model using different testing toolkits can result in slight differences, which is normal. Updates to code versions and variations in environment and hardware can also cause minor discrepancies in results.

|

| 62 |

+

|

| 63 |

+

|

| 64 |

+

|

| 65 |

+

Limitations: Although we have made efforts to ensure the safety of the model during the training process and to encourage the model to generate text that complies with ethical and legal requirements, the model may still produce unexpected outputs due to its size and probabilistic generation paradigm. For example, the generated responses may contain biases, discrimination, or other harmful content. Please do not propagate such content. We are not responsible for any consequences resulting from the dissemination of harmful information.

|

| 66 |

+

|

| 67 |

+

|

| 68 |

+

|

| 69 |

+

## Quick Start

|

| 70 |

+

|

| 71 |

+

We provide an example code to run HoVLE inference using `transformers`.

|

| 72 |

+

|

| 73 |

+

> Please use transformers==4.37.2 to ensure the model works normally.

|

| 74 |

+

|

| 75 |

+

|

| 76 |

+

### Inference with Transformers

|

| 77 |

+

|

| 78 |

+

```python

|

| 79 |

+

import numpy as np

|

| 80 |

+

import torch

|

| 81 |

+

import torchvision.transforms as T

|

| 82 |

+

from decord import VideoReader, cpu

|

| 83 |

+

from PIL import Image

|

| 84 |

+

from torchvision.transforms.functional import InterpolationMode

|

| 85 |

+

from transformers import AutoModel, AutoTokenizer

|

| 86 |

+

|

| 87 |

+

IMAGENET_MEAN = (0.485, 0.456, 0.406)

|

| 88 |

+

IMAGENET_STD = (0.229, 0.224, 0.225)

|

| 89 |

+

|

| 90 |

+

def build_transform(input_size):

|

| 91 |

+

MEAN, STD = IMAGENET_MEAN, IMAGENET_STD

|

| 92 |

+

transform = T.Compose([

|

| 93 |

+

T.Lambda(lambda img: img.convert('RGB') if img.mode != 'RGB' else img),

|

| 94 |

+

T.Resize((input_size, input_size), interpolation=InterpolationMode.BICUBIC),

|

| 95 |

+

T.ToTensor(),

|

| 96 |

+

T.Normalize(mean=MEAN, std=STD)

|

| 97 |

+

])

|

| 98 |

+

return transform

|

| 99 |

+

|

| 100 |

+

def find_closest_aspect_ratio(aspect_ratio, target_ratios, width, height, image_size):

|

| 101 |

+

best_ratio_diff = float('inf')

|

| 102 |

+

best_ratio = (1, 1)

|

| 103 |

+

area = width * height

|

| 104 |

+

for ratio in target_ratios:

|

| 105 |

+

target_aspect_ratio = ratio[0] / ratio[1]

|

| 106 |

+

ratio_diff = abs(aspect_ratio - target_aspect_ratio)

|

| 107 |

+

if ratio_diff < best_ratio_diff:

|

| 108 |

+

best_ratio_diff = ratio_diff

|

| 109 |

+

best_ratio = ratio

|

| 110 |

+

elif ratio_diff == best_ratio_diff:

|

| 111 |

+

if area > 0.5 * image_size * image_size * ratio[0] * ratio[1]:

|

| 112 |

+

best_ratio = ratio

|

| 113 |

+

return best_ratio

|

| 114 |

+

|

| 115 |

+

def dynamic_preprocess(image, min_num=1, max_num=12, image_size=448, use_thumbnail=False):

|

| 116 |

+

orig_width, orig_height = image.size

|

| 117 |

+

aspect_ratio = orig_width / orig_height

|

| 118 |

+

|

| 119 |

+

# calculate the existing image aspect ratio

|

| 120 |

+

target_ratios = set(

|

| 121 |

+

(i, j) for n in range(min_num, max_num + 1) for i in range(1, n + 1) for j in range(1, n + 1) if

|

| 122 |

+

i * j <= max_num and i * j >= min_num)

|

| 123 |

+

target_ratios = sorted(target_ratios, key=lambda x: x[0] * x[1])

|

| 124 |

+

|

| 125 |

+

# find the closest aspect ratio to the target

|

| 126 |

+

target_aspect_ratio = find_closest_aspect_ratio(

|

| 127 |

+

aspect_ratio, target_ratios, orig_width, orig_height, image_size)

|

| 128 |

+

|

| 129 |

+

# calculate the target width and height

|

| 130 |

+

target_width = image_size * target_aspect_ratio[0]

|

| 131 |

+

target_height = image_size * target_aspect_ratio[1]

|

| 132 |

+

blocks = target_aspect_ratio[0] * target_aspect_ratio[1]

|

| 133 |

+

|

| 134 |

+

# resize the image

|

| 135 |

+

resized_img = image.resize((target_width, target_height))

|

| 136 |

+

processed_images = []

|

| 137 |

+

for i in range(blocks):

|

| 138 |

+

box = (

|

| 139 |

+

(i % (target_width // image_size)) * image_size,

|

| 140 |

+

(i // (target_width // image_size)) * image_size,

|

| 141 |

+

((i % (target_width // image_size)) + 1) * image_size,

|

| 142 |

+

((i // (target_width // image_size)) + 1) * image_size

|

| 143 |

+

)

|

| 144 |

+

# split the image

|

| 145 |

+

split_img = resized_img.crop(box)

|

| 146 |

+

processed_images.append(split_img)

|

| 147 |

+

assert len(processed_images) == blocks

|

| 148 |

+

if use_thumbnail and len(processed_images) != 1:

|

| 149 |

+

thumbnail_img = image.resize((image_size, image_size))

|

| 150 |

+

processed_images.append(thumbnail_img)

|

| 151 |

+

return processed_images

|

| 152 |

+

|

| 153 |

+

def load_image(image_file, input_size=448, max_num=12):

|

| 154 |

+

image = Image.open(image_file).convert('RGB')

|

| 155 |

+

transform = build_transform(input_size=input_size)

|

| 156 |

+

images = dynamic_preprocess(image, image_size=input_size, use_thumbnail=True, max_num=max_num)

|

| 157 |

+

pixel_values = [transform(image) for image in images]

|

| 158 |

+

pixel_values = torch.stack(pixel_values)

|

| 159 |

+

return pixel_values

|

| 160 |

+

|

| 161 |

+

|

| 162 |

+

path = 'taochenxin/HoVLE/'

|

| 163 |

+

model = AutoModel.from_pretrained(

|

| 164 |

+

path,

|

| 165 |

+

torch_dtype=torch.bfloat16,

|

| 166 |

+

low_cpu_mem_usage=True,

|

| 167 |

+

trust_remote_code=True).eval().cuda()

|

| 168 |

+

tokenizer = AutoTokenizer.from_pretrained(path, trust_remote_code=True, use_fast=False)

|

| 169 |

+

|

| 170 |

+

# set the max number of tiles in `max_num`

|

| 171 |

+

pixel_values = load_image('./examples_image.jpg', max_num=12).to(torch.bfloat16).cuda()

|

| 172 |

+

generation_config = dict(max_new_tokens=1024, do_sample=True)

|

| 173 |

+

|

| 174 |

+

# pure-text conversation (纯文本对话)

|

| 175 |

+

question = 'Hello, who are you?'

|

| 176 |

+

response, history = model.chat(tokenizer, None, question, generation_config, history=None, return_history=True)

|

| 177 |

+

print(f'User: {question}\nAssistant: {response}')

|

| 178 |

+

|

| 179 |

+

question = 'Can you tell me a story?'

|

| 180 |

+

response, history = model.chat(tokenizer, None, question, generation_config, history=history, return_history=True)

|

| 181 |

+

print(f'User: {question}\nAssistant: {response}')

|

| 182 |

+

|

| 183 |

+

# single-image single-round conversation (单图单轮对话)

|

| 184 |

+

question = '<image>\nPlease describe the image shortly.'

|

| 185 |

+

response = model.chat(tokenizer, pixel_values, question, generation_config)

|

| 186 |

+

print(f'User: {question}\nAssistant: {response}')

|

| 187 |

+

|

| 188 |

+

# single-image multi-round conversation (单图多轮对话)

|

| 189 |

+

question = '<image>\nPlease describe the image in detail.'

|

| 190 |

+

response, history = model.chat(tokenizer, pixel_values, question, generation_config, history=None, return_history=True)

|

| 191 |

+

print(f'User: {question}\nAssistant: {response}')

|

| 192 |

+

|

| 193 |

+

question = 'Please write a poem according to the image.'

|

| 194 |

+

response, history = model.chat(tokenizer, pixel_values, question, generation_config, history=history, return_history=True)

|

| 195 |

+

print(f'User: {question}\nAssistant: {response}')

|

| 196 |

+

|

| 197 |

+

```

|

| 198 |

+

|

| 199 |

+

|

| 200 |

+

## License

|

| 201 |

+

|

| 202 |

+

This project is released under the MIT license, while InternLM2 is licensed under the Apache-2.0 license.

|

| 203 |

+

|

| 204 |

+

## Citation

|

| 205 |

+

|

| 206 |

+

If you find this project useful in your research, please consider citing:

|

| 207 |

+

|

| 208 |

+

```BibTeX

|

| 209 |

+

@article{tao2024hovle,

|

| 210 |

+

title={HoVLE: Unleashing the Power of Monolithic Vision-Language Models with Holistic Vision-Language Embedding},

|

| 211 |

+

author={Tao, Chenxin and Su, Shiqian and Zhu, Xizhou and Zhang, Chenyu and Chen, Zhe and Liu, Jiawen and Wang, Wenhai and Lu, Lewei and Huang, Gao and Qiao, Yu and Dai, Jifeng},

|

| 212 |

+

journal={},

|

| 213 |

+

year={2024}

|

| 214 |

+

}

|

| 215 |

+

```

|

added_tokens.json

ADDED

|

@@ -0,0 +1,11 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"</box>": 92552,

|

| 3 |

+

"</img>": 92545,

|

| 4 |

+

"</quad>": 92548,

|

| 5 |

+

"</ref>": 92550,

|

| 6 |

+

"<IMG_CONTEXT>": 92546,

|

| 7 |

+

"<box>": 92551,

|

| 8 |

+

"<img>": 92544,

|

| 9 |

+

"<quad>": 92547,

|

| 10 |

+

"<ref>": 92549

|

| 11 |

+

}

|

assets/arch_comparison.png

ADDED

|

assets/intro.png

ADDED

|

assets/overview.png

ADDED

|

assets/performance1.png

ADDED

|

assets/performance2.png

ADDED

|

assets/radar.png

ADDED

|

config.json

ADDED

|

@@ -0,0 +1,216 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_commit_hash": null,

|

| 3 |

+

"architectures": [

|

| 4 |

+

"InternVLChatModel"

|

| 5 |

+

],

|

| 6 |

+

"auto_map": {

|

| 7 |

+

"AutoConfig": "configuration_internvl_chat.InternVLChatConfig",

|

| 8 |

+

"AutoModel": "modeling_internvl_chat.InternVLChatModel",

|

| 9 |

+

"AutoModelForCausalLM": "modeling_internvl_chat.InternVLChatModel"

|

| 10 |

+

},

|

| 11 |

+

"downsample_ratio": 0.5,

|

| 12 |

+

"dynamic_image_size": true,

|

| 13 |

+

"embedding_config": {

|

| 14 |

+

"add_cross_attention": false,

|

| 15 |

+

"architectures": null,

|

| 16 |

+

"attention_bias": false,

|

| 17 |

+

"attention_dropout": 0.0,

|

| 18 |

+

"attn_implementation": "flash_attention_2",

|

| 19 |

+

"bad_words_ids": null,

|

| 20 |

+

"begin_suppress_tokens": null,

|

| 21 |

+

"bos_token_id": null,

|

| 22 |

+

"chunk_size_feed_forward": 0,

|

| 23 |

+

"cross_attention_hidden_size": null,

|

| 24 |

+

"decoder_start_token_id": null,

|

| 25 |

+

"diversity_penalty": 0.0,

|

| 26 |

+

"do_sample": false,

|

| 27 |

+

"downsample_ratio": 0.5,

|

| 28 |

+

"drop_path_rate": 0.0,

|

| 29 |

+

"dropout": 0.0,

|

| 30 |

+

"early_stopping": false,

|

| 31 |

+

"encoder_no_repeat_ngram_size": 0,

|

| 32 |

+

"eos_token_id": null,

|

| 33 |

+

"exponential_decay_length_penalty": null,

|

| 34 |

+

"finetuning_task": null,

|

| 35 |

+

"forced_bos_token_id": null,

|

| 36 |

+

"forced_eos_token_id": null,

|

| 37 |

+

"hidden_act": "silu",

|

| 38 |

+

"hidden_size": 2048,

|

| 39 |

+

"id2label": {

|

| 40 |

+

"0": "LABEL_0",

|

| 41 |

+

"1": "LABEL_1"

|

| 42 |

+

},

|

| 43 |

+

"image_size": 448,

|

| 44 |

+

"img_context_token_id": 92546,

|

| 45 |

+

"initializer_factor": 1e-05,

|

| 46 |

+

"initializer_range": 0.02,

|

| 47 |

+

"intermediate_size": 8192,

|

| 48 |

+

"is_decoder": false,

|

| 49 |

+

"is_encoder_decoder": false,

|

| 50 |

+

"label2id": {

|

| 51 |

+

"LABEL_0": 0,

|

| 52 |

+

"LABEL_1": 1

|

| 53 |

+

},

|

| 54 |

+

"layer_norm_eps": 1e-06,

|

| 55 |

+

"length_penalty": 1.0,

|

| 56 |

+

"llm_hidden_size": 2048,

|

| 57 |

+

"llm_vocab_size": 92553,

|

| 58 |

+

"max_length": 20,

|

| 59 |

+

"max_position_embeddings": 32768,

|

| 60 |

+

"min_length": 0,

|

| 61 |

+

"mlp_bias": false,

|

| 62 |

+

"no_repeat_ngram_size": 0,

|

| 63 |

+

"norm_type": "rms_norm",

|

| 64 |

+

"num_attention_heads": 16,

|

| 65 |

+

"num_beam_groups": 1,

|

| 66 |

+

"num_beams": 1,

|

| 67 |

+

"num_channels": 3,

|

| 68 |

+

"num_hidden_layers": 8,

|

| 69 |

+

"num_key_value_heads": 8,

|

| 70 |

+

"num_return_sequences": 1,

|

| 71 |

+

"output_attentions": false,

|

| 72 |

+

"output_hidden_states": false,

|

| 73 |

+

"output_scores": false,

|

| 74 |

+

"pad_token_id": null,

|

| 75 |

+

"patch_size": 14,

|

| 76 |

+

"pixel_shuffle_loc": "pre",

|

| 77 |

+

"prefix": null,

|

| 78 |

+

"pretraining_tp": 1,

|

| 79 |

+

"problem_type": null,

|

| 80 |

+

"pruned_heads": {},

|

| 81 |

+

"qk_normalization": true,

|

| 82 |

+

"qkv_bias": false,

|

| 83 |

+

"remove_invalid_values": false,

|

| 84 |

+

"repetition_penalty": 1.0,

|

| 85 |

+

"return_dict": true,

|

| 86 |

+

"return_dict_in_generate": false,

|

| 87 |

+

"rms_norm_eps": 1e-05,

|

| 88 |

+

"rope_scaling": null,

|

| 89 |

+

"rope_theta": 1000000.0,

|

| 90 |

+

"sep_token_id": null,

|

| 91 |

+

"special_token_maps": {},

|

| 92 |

+

"suppress_tokens": null,

|

| 93 |

+

"target_hidden_size": 2048,

|

| 94 |

+

"task_specific_params": null,

|

| 95 |

+

"temperature": 1.0,

|

| 96 |

+

"tf_legacy_loss": false,

|

| 97 |

+

"tie_encoder_decoder": false,

|

| 98 |

+

"tie_word_embeddings": true,

|

| 99 |

+

"tokenizer_class": null,

|

| 100 |

+

"top_k": 50,

|

| 101 |

+

"top_p": 1.0,

|

| 102 |

+

"torch_dtype": null,

|

| 103 |

+

"torchscript": false,

|

| 104 |

+

"transformers_version": "4.42.4",

|

| 105 |

+

"typical_p": 1.0,

|

| 106 |

+

"use_autoregressive_loss": false,

|

| 107 |

+

"use_bfloat16": false,

|

| 108 |

+

"use_flash_attn": true,

|

| 109 |

+

"use_img_start_end_tokens": true,

|

| 110 |

+

"use_ls": false,

|

| 111 |

+

"use_pixel_shuffle_proj": true

|

| 112 |

+

},

|

| 113 |

+

"force_image_size": 448,

|

| 114 |

+

"llm_config": {

|

| 115 |

+

"add_cross_attention": false,

|

| 116 |

+

"architectures": [

|

| 117 |

+

"InternLM2ForCausalLM"

|

| 118 |

+

],

|

| 119 |

+

"attn_implementation": "flash_attention_2",

|

| 120 |

+

"auto_map": {

|

| 121 |

+

"AutoConfig": "configuration_internlm2.InternLM2Config",

|

| 122 |

+

"AutoModel": "modeling_internlm2.InternLM2ForCausalLM",

|

| 123 |

+

"AutoModelForCausalLM": "modeling_internlm2.InternLM2ForCausalLM"

|

| 124 |

+

},

|

| 125 |

+

"bad_words_ids": null,

|

| 126 |

+

"begin_suppress_tokens": null,

|

| 127 |

+

"bias": false,

|

| 128 |

+

"bos_token_id": 1,

|

| 129 |

+

"chunk_size_feed_forward": 0,

|

| 130 |

+

"cross_attention_hidden_size": null,

|

| 131 |

+

"decoder_start_token_id": null,

|

| 132 |

+

"diversity_penalty": 0.0,

|

| 133 |

+

"do_sample": false,

|

| 134 |

+

"early_stopping": false,

|

| 135 |

+

"encoder_no_repeat_ngram_size": 0,

|

| 136 |

+

"eos_token_id": 2,

|

| 137 |

+

"exponential_decay_length_penalty": null,

|

| 138 |

+

"finetuning_task": null,

|

| 139 |

+

"forced_bos_token_id": null,

|

| 140 |

+

"forced_eos_token_id": null,

|

| 141 |

+

"hidden_act": "silu",

|

| 142 |

+

"hidden_size": 2048,

|

| 143 |

+

"id2label": {

|

| 144 |

+

"0": "LABEL_0",

|

| 145 |

+

"1": "LABEL_1"

|

| 146 |

+

},

|

| 147 |

+

"initializer_range": 0.02,

|

| 148 |

+

"intermediate_size": 8192,

|

| 149 |

+

"is_decoder": false,

|

| 150 |

+

"is_encoder_decoder": false,

|

| 151 |

+

"label2id": {

|

| 152 |

+

"LABEL_0": 0,

|

| 153 |

+

"LABEL_1": 1

|

| 154 |

+

},

|

| 155 |

+

"length_penalty": 1.0,

|

| 156 |

+

"max_length": 20,

|

| 157 |

+

"max_position_embeddings": 32768,

|

| 158 |

+

"min_length": 0,

|

| 159 |

+

"model_type": "internlm2",

|

| 160 |

+

"no_repeat_ngram_size": 0,

|

| 161 |

+

"num_attention_heads": 16,

|

| 162 |

+

"num_beam_groups": 1,

|

| 163 |

+

"num_beams": 1,

|

| 164 |

+

"num_hidden_layers": 24,

|

| 165 |

+

"num_key_value_heads": 8,

|

| 166 |

+

"num_return_sequences": 1,

|

| 167 |

+

"output_attentions": false,

|

| 168 |

+

"output_hidden_states": false,

|

| 169 |

+

"output_scores": false,

|

| 170 |

+

"pad_token_id": 2,

|

| 171 |

+

"prefix": null,

|

| 172 |

+

"problem_type": null,

|

| 173 |

+

"pruned_heads": {},

|

| 174 |

+

"remove_invalid_values": false,

|

| 175 |

+

"repetition_penalty": 1.0,

|

| 176 |

+

"return_dict": true,

|

| 177 |

+

"return_dict_in_generate": false,

|

| 178 |

+

"rms_norm_eps": 1e-05,

|

| 179 |

+

"rope_scaling": {

|

| 180 |

+

"factor": 2.0,

|

| 181 |

+

"type": "dynamic"

|

| 182 |

+

},

|

| 183 |

+

"rope_theta": 1000000,

|

| 184 |

+

"sep_token_id": null,

|

| 185 |

+

"suppress_tokens": null,

|

| 186 |

+

"task_specific_params": null,

|

| 187 |

+

"temperature": 1.0,

|

| 188 |

+

"tf_legacy_loss": false,

|

| 189 |

+

"tie_encoder_decoder": false,

|

| 190 |

+

"tie_word_embeddings": false,

|

| 191 |

+

"tokenizer_class": null,

|

| 192 |

+

"top_k": 50,

|

| 193 |

+

"top_p": 1.0,

|

| 194 |

+

"torch_dtype": "bfloat16",

|

| 195 |

+

"torchscript": false,

|

| 196 |

+

"transformers_version": "4.42.4",

|

| 197 |

+

"typical_p": 1.0,

|

| 198 |

+

"use_bfloat16": true,

|

| 199 |

+

"use_cache": false,

|

| 200 |

+

"vocab_size": 92553

|

| 201 |

+

},

|

| 202 |

+

"max_dynamic_patch": 12,

|

| 203 |

+

"min_dynamic_patch": 1,

|

| 204 |

+

"model_type": "internvl_chat",

|

| 205 |

+

"normalize_encoder_output": true,

|

| 206 |

+

"pad2square": false,

|

| 207 |

+

"ps_version": "v2",

|

| 208 |

+

"select_layer": -1,

|

| 209 |

+

"template": "internlm2-chat",

|

| 210 |

+

"torch_dtype": "bfloat16",

|

| 211 |

+

"transformers_version": null,

|

| 212 |

+

"use_backbone_lora": 0,

|

| 213 |

+

"use_llm_lora": 0,

|

| 214 |

+

"use_mlp": false,

|

| 215 |

+

"use_thumbnail": true

|

| 216 |

+

}

|

configuration_holistic_embedding.py

ADDED

|

@@ -0,0 +1,114 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# --------------------------------------------------------

|

| 2 |

+

# InternVL

|

| 3 |

+

# Copyright (c) 2023 OpenGVLab

|

| 4 |

+

# Licensed under The MIT License [see LICENSE for details]

|

| 5 |

+

# --------------------------------------------------------

|

| 6 |

+

import os

|

| 7 |

+

from typing import Union

|

| 8 |

+

import json

|

| 9 |

+

|

| 10 |

+

from transformers.configuration_utils import PretrainedConfig

|

| 11 |

+

from transformers.utils import logging

|

| 12 |

+

|

| 13 |

+

logger = logging.get_logger(__name__)

|

| 14 |

+

|

| 15 |

+

|

| 16 |

+

class HolisticEmbeddingConfig(PretrainedConfig):

|

| 17 |

+

|

| 18 |

+

model_type = 'holistic_embedding'

|

| 19 |

+

|

| 20 |

+

def __init__(

|

| 21 |

+

self,

|

| 22 |

+

num_hidden_layers=32,

|

| 23 |

+

initializer_factor=1e-5,

|

| 24 |

+

use_autoregressive_loss=False,

|

| 25 |

+

# vision embedding

|

| 26 |

+

num_channels=3,

|

| 27 |

+

patch_size=14,

|

| 28 |

+

image_size=224,

|

| 29 |

+

# attention layer

|

| 30 |

+

hidden_size=4096,

|

| 31 |

+

num_attention_heads=32,

|

| 32 |

+

num_key_value_heads=32,

|

| 33 |

+

attention_bias=False,

|

| 34 |

+

attention_dropout=0.0,

|

| 35 |

+

max_position_embeddings=4096,

|

| 36 |

+

rope_theta=10000.0,

|

| 37 |

+

rope_scaling=None,

|

| 38 |

+

# mlp layer

|

| 39 |

+

intermediate_size=11008,

|

| 40 |

+

mlp_bias=False,

|

| 41 |

+

hidden_act='silu',

|

| 42 |

+

# rms norm

|

| 43 |

+

rms_norm_eps=1e-5,

|

| 44 |

+

# pretraining

|

| 45 |

+

pretraining_tp=1,

|

| 46 |

+

use_ls=True,

|

| 47 |

+

use_img_start_end_tokens=True,

|

| 48 |

+

special_token_maps={},

|

| 49 |

+

llm_vocab_size=92553,

|

| 50 |

+

llm_hidden_size=2048,

|

| 51 |

+

attn_implementation='flash_attention_2',

|

| 52 |

+

downsample_ratio=0.5,

|

| 53 |

+

img_context_token_id=92546,

|

| 54 |

+

pixel_shuffle_loc="pre",

|

| 55 |

+

**kwargs,

|

| 56 |

+

):

|

| 57 |

+

super().__init__(**kwargs)

|

| 58 |

+

|

| 59 |

+

self.num_hidden_layers = num_hidden_layers

|

| 60 |

+

self.initializer_factor = initializer_factor

|

| 61 |

+

self.use_autoregressive_loss = use_autoregressive_loss

|

| 62 |

+

|

| 63 |

+

self.num_channels = num_channels

|

| 64 |

+

self.patch_size = patch_size

|

| 65 |

+

self.image_size = image_size

|

| 66 |

+

|

| 67 |

+

self.hidden_size = hidden_size

|

| 68 |

+

self.num_attention_heads = num_attention_heads

|

| 69 |

+

self.num_key_value_heads = num_key_value_heads

|

| 70 |

+

self.attention_bias = attention_bias

|

| 71 |

+

self.attention_dropout = attention_dropout

|

| 72 |

+

self.max_position_embeddings = max_position_embeddings

|

| 73 |

+

self.rope_theta = rope_theta

|

| 74 |

+

self.rope_scaling = rope_scaling

|

| 75 |

+

|

| 76 |

+

self.intermediate_size = intermediate_size

|

| 77 |

+

self.mlp_bias = mlp_bias

|

| 78 |

+

self.hidden_act = hidden_act

|

| 79 |

+

|

| 80 |

+

self.rms_norm_eps = rms_norm_eps

|

| 81 |

+

|

| 82 |

+

self.pretraining_tp = pretraining_tp

|

| 83 |

+

self.use_ls = use_ls

|

| 84 |

+

self.use_img_start_end_tokens = use_img_start_end_tokens

|

| 85 |

+

|

| 86 |

+

self.special_token_maps = special_token_maps

|

| 87 |

+

self.llm_vocab_size = llm_vocab_size

|

| 88 |

+

self.llm_hidden_size = llm_hidden_size

|

| 89 |

+

self.attn_implementation = attn_implementation

|

| 90 |

+

self.downsample_ratio = downsample_ratio

|

| 91 |

+

self.img_context_token_id = img_context_token_id

|

| 92 |

+

self.pixel_shuffle_loc = pixel_shuffle_loc

|

| 93 |

+

|

| 94 |

+

@classmethod

|

| 95 |

+

def from_pretrained(cls, pretrained_model_name_or_path: Union[str, os.PathLike], **kwargs) -> 'PretrainedConfig':

|

| 96 |

+

config_dict, kwargs = cls.get_config_dict(pretrained_model_name_or_path, **kwargs)

|

| 97 |

+

|

| 98 |

+

if 'vision_config' in config_dict:

|

| 99 |

+

config_dict = config_dict['vision_config']

|

| 100 |

+

|

| 101 |

+

if 'model_type' in config_dict and hasattr(cls, 'model_type') and config_dict['model_type'] != cls.model_type:

|

| 102 |

+

logger.warning(

|

| 103 |

+

f"You are using a model of type {config_dict['model_type']} to instantiate a model of type "

|

| 104 |

+

f'{cls.model_type}. This is not supported for all configurations of models and can yield errors.'

|

| 105 |

+

)

|

| 106 |

+

|

| 107 |

+

return cls.from_dict(config_dict, **kwargs)

|

| 108 |

+

|

| 109 |

+

@classmethod

|

| 110 |

+

def from_dict_path(cls, config_path):

|

| 111 |

+

with open(config_path, 'r') as f:

|

| 112 |

+

config_dict = json.load(f)

|

| 113 |

+

|

| 114 |

+

return cls.from_dict(config_dict)

|

configuration_internlm2.py

ADDED

|

@@ -0,0 +1,150 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# Copyright (c) The InternLM team and The HuggingFace Inc. team. All rights reserved.

|

| 2 |

+

#

|

| 3 |

+

# This code is based on transformers/src/transformers/models/llama/configuration_llama.py

|

| 4 |

+

#

|

| 5 |

+

# Licensed under the Apache License, Version 2.0 (the "License");

|

| 6 |

+

# you may not use this file except in compliance with the License.

|

| 7 |

+

# You may obtain a copy of the License at

|

| 8 |

+

#

|

| 9 |

+

# http://www.apache.org/licenses/LICENSE-2.0

|

| 10 |

+

#

|

| 11 |

+

# Unless required by applicable law or agreed to in writing, software

|

| 12 |

+

# distributed under the License is distributed on an "AS IS" BASIS,

|

| 13 |

+

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

| 14 |

+

# See the License for the specific language governing permissions and

|

| 15 |

+

# limitations under the License.

|

| 16 |

+

""" InternLM2 model configuration"""

|

| 17 |

+

|

| 18 |

+

from transformers.configuration_utils import PretrainedConfig

|

| 19 |

+

from transformers.utils import logging

|

| 20 |

+

|

| 21 |

+

logger = logging.get_logger(__name__)

|

| 22 |

+

|

| 23 |

+

INTERNLM2_PRETRAINED_CONFIG_ARCHIVE_MAP = {}

|

| 24 |

+

|

| 25 |

+

|

| 26 |

+

# Modified from transformers.model.llama.configuration_llama.LlamaConfig

|

| 27 |

+

class InternLM2Config(PretrainedConfig):

|

| 28 |

+

r"""

|

| 29 |

+

This is the configuration class to store the configuration of a [`InternLM2Model`]. It is used to instantiate

|

| 30 |

+

an InternLM2 model according to the specified arguments, defining the model architecture. Instantiating a

|

| 31 |

+

configuration with the defaults will yield a similar configuration to that of the InternLM2-7B.

|

| 32 |

+

|

| 33 |

+

Configuration objects inherit from [`PretrainedConfig`] and can be used to control the model outputs. Read the

|

| 34 |

+

documentation from [`PretrainedConfig`] for more information.

|

| 35 |

+

|

| 36 |

+

|

| 37 |

+

Args:

|

| 38 |

+

vocab_size (`int`, *optional*, defaults to 32000):

|

| 39 |

+

Vocabulary size of the InternLM2 model. Defines the number of different tokens that can be represented by the

|

| 40 |

+

`inputs_ids` passed when calling [`InternLM2Model`]

|

| 41 |

+

hidden_size (`int`, *optional*, defaults to 4096):

|

| 42 |

+

Dimension of the hidden representations.

|

| 43 |

+

intermediate_size (`int`, *optional*, defaults to 11008):

|

| 44 |

+

Dimension of the MLP representations.

|

| 45 |

+

num_hidden_layers (`int`, *optional*, defaults to 32):

|

| 46 |

+

Number of hidden layers in the Transformer encoder.

|

| 47 |

+

num_attention_heads (`int`, *optional*, defaults to 32):

|

| 48 |

+

Number of attention heads for each attention layer in the Transformer encoder.

|

| 49 |

+

num_key_value_heads (`int`, *optional*):

|

| 50 |

+

This is the number of key_value heads that should be used to implement Grouped Query Attention. If

|

| 51 |

+

`num_key_value_heads=num_attention_heads`, the model will use Multi Head Attention (MHA), if

|

| 52 |

+

`num_key_value_heads=1 the model will use Multi Query Attention (MQA) otherwise GQA is used. When

|

| 53 |

+

converting a multi-head checkpoint to a GQA checkpoint, each group key and value head should be constructed

|

| 54 |

+

by meanpooling all the original heads within that group. For more details checkout [this

|

| 55 |

+

paper](https://arxiv.org/pdf/2305.13245.pdf). If it is not specified, will default to

|

| 56 |

+

`num_attention_heads`.

|

| 57 |

+

hidden_act (`str` or `function`, *optional*, defaults to `"silu"`):

|

| 58 |

+

The non-linear activation function (function or string) in the decoder.

|

| 59 |

+

max_position_embeddings (`int`, *optional*, defaults to 2048):

|

| 60 |

+

The maximum sequence length that this model might ever be used with. Typically set this to something large

|

| 61 |

+

just in case (e.g., 512 or 1024 or 2048).

|

| 62 |

+

initializer_range (`float`, *optional*, defaults to 0.02):

|

| 63 |

+

The standard deviation of the truncated_normal_initializer for initializing all weight matrices.

|

| 64 |

+

rms_norm_eps (`float`, *optional*, defaults to 1e-12):

|

| 65 |

+

The epsilon used by the rms normalization layers.

|

| 66 |

+

use_cache (`bool`, *optional*, defaults to `True`):

|

| 67 |

+

Whether or not the model should return the last key/values attentions (not used by all models). Only

|

| 68 |

+

relevant if `config.is_decoder=True`.

|

| 69 |

+

tie_word_embeddings(`bool`, *optional*, defaults to `False`):

|

| 70 |

+

Whether to tie weight embeddings

|

| 71 |

+

Example:

|

| 72 |

+

|

| 73 |

+

"""

|

| 74 |

+

model_type = 'internlm2'

|

| 75 |

+

_auto_class = 'AutoConfig'

|

| 76 |

+

|

| 77 |

+

def __init__( # pylint: disable=W0102

|

| 78 |

+

self,

|

| 79 |

+

vocab_size=103168,

|

| 80 |

+

hidden_size=4096,

|

| 81 |

+

intermediate_size=11008,

|

| 82 |

+

num_hidden_layers=32,

|

| 83 |

+

num_attention_heads=32,

|

| 84 |

+

num_key_value_heads=None,

|

| 85 |

+

hidden_act='silu',

|

| 86 |

+

max_position_embeddings=2048,

|

| 87 |

+

initializer_range=0.02,

|

| 88 |

+

rms_norm_eps=1e-6,

|

| 89 |

+

use_cache=True,

|

| 90 |

+

pad_token_id=0,

|

| 91 |

+

bos_token_id=1,

|

| 92 |

+

eos_token_id=2,

|

| 93 |

+

tie_word_embeddings=False,

|

| 94 |

+

bias=True,

|

| 95 |

+

rope_theta=10000,

|

| 96 |

+

rope_scaling=None,

|

| 97 |

+

attn_implementation='eager',

|

| 98 |

+

**kwargs,

|

| 99 |

+

):

|

| 100 |

+

self.vocab_size = vocab_size

|

| 101 |

+

self.max_position_embeddings = max_position_embeddings

|

| 102 |

+

self.hidden_size = hidden_size

|

| 103 |

+

self.intermediate_size = intermediate_size

|

| 104 |

+

self.num_hidden_layers = num_hidden_layers

|

| 105 |

+

self.num_attention_heads = num_attention_heads

|

| 106 |

+

self.bias = bias

|

| 107 |

+

|

| 108 |

+

if num_key_value_heads is None:

|

| 109 |

+

num_key_value_heads = num_attention_heads

|

| 110 |

+

self.num_key_value_heads = num_key_value_heads

|

| 111 |

+

|

| 112 |

+

self.hidden_act = hidden_act

|

| 113 |

+

self.initializer_range = initializer_range

|

| 114 |

+

self.rms_norm_eps = rms_norm_eps

|

| 115 |

+

self.use_cache = use_cache

|

| 116 |

+

self.rope_theta = rope_theta

|

| 117 |

+

self.rope_scaling = rope_scaling

|

| 118 |

+

self._rope_scaling_validation()

|

| 119 |

+

|

| 120 |

+

self.attn_implementation = attn_implementation

|

| 121 |

+

if self.attn_implementation is None:

|

| 122 |

+

self.attn_implementation = 'eager'

|

| 123 |

+

super().__init__(

|

| 124 |

+

pad_token_id=pad_token_id,

|

| 125 |

+

bos_token_id=bos_token_id,

|

| 126 |

+

eos_token_id=eos_token_id,

|

| 127 |

+

tie_word_embeddings=tie_word_embeddings,

|

| 128 |

+

**kwargs,

|

| 129 |

+

)

|

| 130 |

+

|

| 131 |

+

def _rope_scaling_validation(self):

|

| 132 |

+

"""

|

| 133 |

+

Validate the `rope_scaling` configuration.

|

| 134 |

+

"""

|

| 135 |

+

if self.rope_scaling is None:

|

| 136 |

+

return

|

| 137 |

+

|

| 138 |

+

if not isinstance(self.rope_scaling, dict) or len(self.rope_scaling) != 2:

|

| 139 |

+

raise ValueError(

|

| 140 |

+

'`rope_scaling` must be a dictionary with with two fields, `type` and `factor`, '

|

| 141 |

+

f'got {self.rope_scaling}'

|

| 142 |

+

)

|

| 143 |

+

rope_scaling_type = self.rope_scaling.get('type', None)

|

| 144 |

+

rope_scaling_factor = self.rope_scaling.get('factor', None)

|

| 145 |

+

if rope_scaling_type is None or rope_scaling_type not in ['linear', 'dynamic']:

|

| 146 |

+

raise ValueError(

|

| 147 |

+

f"`rope_scaling`'s type field must be one of ['linear', 'dynamic'], got {rope_scaling_type}"

|

| 148 |

+

)

|

| 149 |

+

if rope_scaling_factor is None or not isinstance(rope_scaling_factor, float) or rope_scaling_factor < 1.0:

|

| 150 |

+

raise ValueError(f"`rope_scaling`'s factor field must be a float >= 1, got {rope_scaling_factor}")

|

configuration_internvl_chat.py

ADDED

|

@@ -0,0 +1,106 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# --------------------------------------------------------

|

| 2 |

+

# InternVL

|

| 3 |

+

# Copyright (c) 2023 OpenGVLab

|

| 4 |

+

# Licensed under The MIT License [see LICENSE for details]

|

| 5 |

+

# --------------------------------------------------------

|

| 6 |

+

|

| 7 |

+

import copy

|

| 8 |

+

|

| 9 |

+

from .configuration_internlm2 import InternLM2Config

|

| 10 |

+

from transformers import AutoConfig, LlamaConfig, Qwen2Config

|

| 11 |

+

from transformers.configuration_utils import PretrainedConfig

|

| 12 |

+

from transformers.utils import logging

|

| 13 |

+

|

| 14 |

+

from .configuration_holistic_embedding import HolisticEmbeddingConfig

|

| 15 |

+

|

| 16 |

+

logger = logging.get_logger(__name__)

|

| 17 |

+

|

| 18 |

+

|

| 19 |

+

class InternVLChatConfig(PretrainedConfig):

|

| 20 |

+

model_type = 'internvl_chat'

|

| 21 |

+

is_composition = True

|

| 22 |

+

|

| 23 |

+

def __init__(

|

| 24 |

+

self,

|

| 25 |

+

embedding_config=None,

|

| 26 |

+

llm_config=None,

|

| 27 |

+

use_backbone_lora=0,

|

| 28 |

+

use_llm_lora=0,

|

| 29 |

+

pad2square=False,

|

| 30 |

+

select_layer=-1,

|

| 31 |

+

force_image_size=None,

|

| 32 |

+

downsample_ratio=0.5,

|

| 33 |

+

template=None,

|

| 34 |

+

dynamic_image_size=False,

|

| 35 |

+

use_thumbnail=False,

|

| 36 |

+

ps_version='v1',

|

| 37 |

+

min_dynamic_patch=1,

|

| 38 |

+

max_dynamic_patch=6,

|

| 39 |

+

normalize_encoder_output=False,

|

| 40 |

+

**kwargs):

|

| 41 |

+

super().__init__(**kwargs)

|

| 42 |

+

|

| 43 |

+

if embedding_config is None:

|

| 44 |

+

embedding_config = {}

|

| 45 |

+

logger.info('embedding_config is None. Initializing the InternVisionConfig with default values.')

|

| 46 |

+

|

| 47 |

+

if llm_config is None:

|

| 48 |

+

llm_config = {}

|

| 49 |

+

logger.info('llm_config is None. Initializing the LlamaConfig config with default values (`LlamaConfig`).')

|

| 50 |

+

|

| 51 |

+

self.embedding_config = HolisticEmbeddingConfig(**embedding_config)

|

| 52 |

+

if llm_config['architectures'][0] == 'LlamaForCausalLM':

|

| 53 |

+

self.llm_config = LlamaConfig(**llm_config)

|

| 54 |

+

elif llm_config['architectures'][0] == 'InternLM2ForCausalLM':

|

| 55 |

+

self.llm_config = InternLM2Config(**llm_config)

|

| 56 |

+

elif llm_config['architectures'][0] == 'Phi3ForCausalLM':

|

| 57 |

+

self.llm_config = Phi3Config(**llm_config)

|

| 58 |

+

elif llm_config['architectures'][0] == 'Qwen2ForCausalLM':

|

| 59 |

+

self.llm_config = Qwen2Config(**llm_config)

|

| 60 |

+

else:

|

| 61 |

+

raise ValueError('Unsupported architecture: {}'.format(llm_config['architectures'][0]))

|

| 62 |

+

self.use_backbone_lora = use_backbone_lora

|

| 63 |

+

self.use_llm_lora = use_llm_lora

|

| 64 |

+

self.pad2square = pad2square

|

| 65 |

+

self.select_layer = select_layer

|

| 66 |

+

self.force_image_size = force_image_size

|

| 67 |

+

self.downsample_ratio = downsample_ratio

|

| 68 |

+

self.template = template

|

| 69 |

+

self.dynamic_image_size = dynamic_image_size

|

| 70 |

+

self.use_thumbnail = use_thumbnail

|

| 71 |

+

self.ps_version = ps_version # pixel shuffle version

|

| 72 |

+

self.min_dynamic_patch = min_dynamic_patch

|

| 73 |

+

self.max_dynamic_patch = max_dynamic_patch

|

| 74 |

+

self.normalize_encoder_output = normalize_encoder_output

|

| 75 |

+

|

| 76 |

+

logger.info(f'vision_select_layer: {self.select_layer}')

|

| 77 |

+

logger.info(f'ps_version: {self.ps_version}')

|

| 78 |

+

logger.info(f'min_dynamic_patch: {self.min_dynamic_patch}')

|

| 79 |

+

logger.info(f'max_dynamic_patch: {self.max_dynamic_patch}')

|

| 80 |

+

|

| 81 |

+

def to_dict(self):

|

| 82 |

+

"""

|

| 83 |

+

Serializes this instance to a Python dictionary. Override the default [`~PretrainedConfig.to_dict`].

|

| 84 |

+

|

| 85 |

+

Returns:

|

| 86 |

+

`Dict[str, any]`: Dictionary of all the attributes that make up this configuration instance,

|

| 87 |

+

"""

|

| 88 |

+

output = copy.deepcopy(self.__dict__)

|

| 89 |

+

output['embedding_config'] = self.embedding_config.to_dict()

|

| 90 |

+

output['llm_config'] = self.llm_config.to_dict()

|