add checkpoints

- GPT_SoVITS/pretrained_models/.gitignore +2 -0

- Qwen/Qwen-1_8B-Chat/LICENSE +55 -0

- Qwen/Qwen-1_8B-Chat/NOTICE +280 -0

- Qwen/Qwen-1_8B-Chat/README.md +418 -0

- Qwen/Qwen-1_8B-Chat/assets/logo.jpg +0 -0

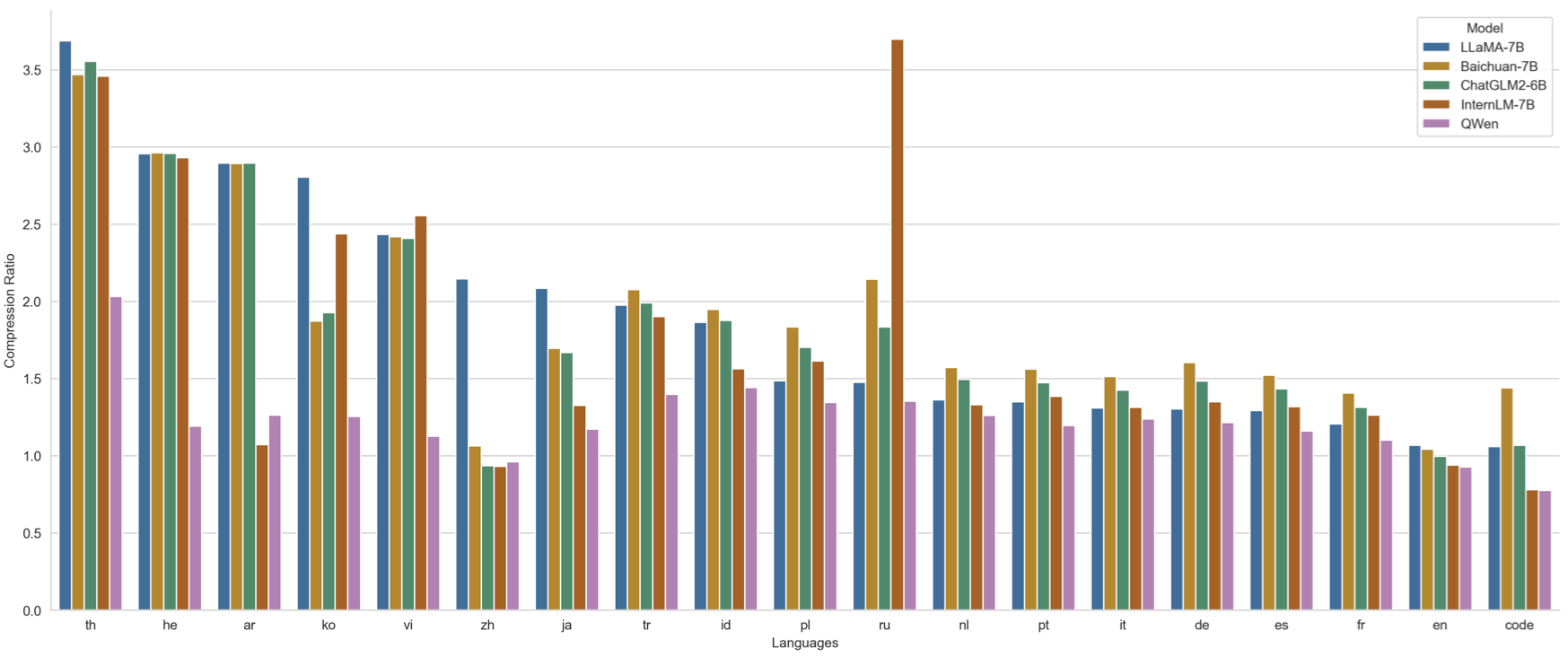

- Qwen/Qwen-1_8B-Chat/assets/qwen_tokenizer.png +0 -0

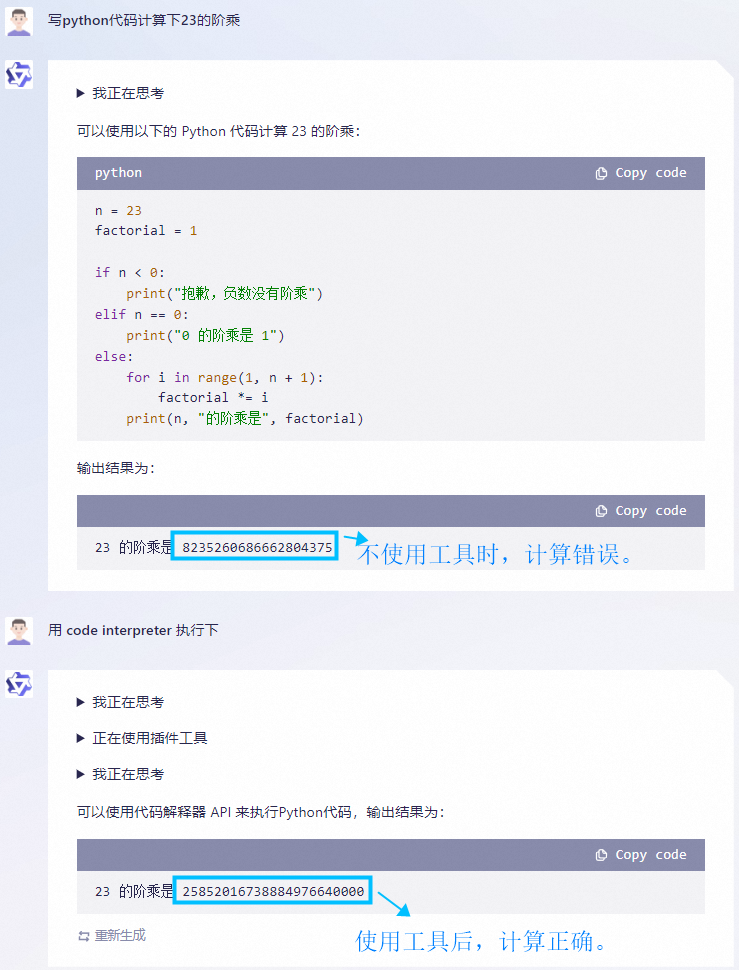

- Qwen/Qwen-1_8B-Chat/assets/react_showcase_001.png +0 -0

- Qwen/Qwen-1_8B-Chat/assets/react_showcase_002.png +0 -0

- Qwen/Qwen-1_8B-Chat/assets/wechat.png +0 -0

- Qwen/Qwen-1_8B-Chat/cache_autogptq_cuda_256.cpp +198 -0

- Qwen/Qwen-1_8B-Chat/cache_autogptq_cuda_kernel_256.cu +1708 -0

- Qwen/Qwen-1_8B-Chat/config.json +37 -0

- Qwen/Qwen-1_8B-Chat/configuration_qwen.py +71 -0

- Qwen/Qwen-1_8B-Chat/cpp_kernels.py +55 -0

- Qwen/Qwen-1_8B-Chat/examples/react_prompt.md +249 -0

- Qwen/Qwen-1_8B-Chat/generation_config.json +12 -0

- Qwen/Qwen-1_8B-Chat/model-00001-of-00002.safetensors +3 -0

- Qwen/Qwen-1_8B-Chat/model-00002-of-00002.safetensors +3 -0

- Qwen/Qwen-1_8B-Chat/model.safetensors.index.json +202 -0

- Qwen/Qwen-1_8B-Chat/modeling_qwen.py +1363 -0

- Qwen/Qwen-1_8B-Chat/qwen.tiktoken +0 -0

- Qwen/Qwen-1_8B-Chat/qwen_generation_utils.py +416 -0

- Qwen/Qwen-1_8B-Chat/tokenization_qwen.py +276 -0

- Qwen/Qwen-1_8B-Chat/tokenizer_config.json +10 -0

- checkpoints/SadTalker_V0.0.2_256.safetensors +3 -0

- checkpoints/hub/checkpoints/s3fd-619a316812.pth +3 -0

- checkpoints/lipsync_expert.pth +3 -0

- checkpoints/mapping_00109-model.pth.tar +3 -0

- checkpoints/mapping_00229-model.pth.tar +3 -0

- checkpoints/visual_quality_disc.pth +3 -0

- checkpoints/wav2lip.pth +3 -0

- checkpoints/wav2lip_gan.pth +3 -0

- gfpgan/weights/alignment_WFLW_4HG.pth +3 -0

- gfpgan/weights/detection_Resnet50_Final.pth +3 -0

GPT_SoVITS/pretrained_models/.gitignore

ADDED

@@ -0,0 +1,2 @@

+*
+!.gitignore

Qwen/Qwen-1_8B-Chat/LICENSE

ADDED

@@ -0,0 +1,55 @@

+Tongyi Qianwen RESEARCH LICENSE AGREEMENT
+
+Tongyi Qianwen Release Date: November 30, 2023
+
+By clicking to agree or by using or distributing any portion or element of the Tongyi Qianwen Materials, you will be deemed to have recognized and accepted the content of this Agreement, which is effective immediately.
+
+1. Definitions
+a. This Tongyi Qianwen RESEARCH LICENSE AGREEMENT (this "Agreement") shall mean the terms and conditions for use, reproduction, distribution and modification of the Materials as defined by this Agreement.
+b. "We"(or "Us") shall mean Alibaba Cloud.
+c. "You" (or "Your") shall mean a natural person or legal entity exercising the rights granted by this Agreement and/or using the Materials for any purpose and in any field of use.
+d. "Third Parties" shall mean individuals or legal entities that are not under common control with Us or You.
+e. "Tongyi Qianwen" shall mean the large language models, and software and algorithms, consisting of trained model weights, parameters (including optimizer states), machine-learning model code, inference-enabling code, training-enabling code, fine-tuning enabling code and other elements of the foregoing distributed by Us.
+f. "Materials" shall mean, collectively, Alibaba Cloud's proprietary Tongyi Qianwen and Documentation (and any portion thereof) made available under this Agreement.
+g. "Source" form shall mean the preferred form for making modifications, including but not limited to model source code, documentation source, and configuration files.
+h. "Object" form shall mean any form resulting from mechanical transformation or translation of a Source form, including but not limited to compiled object code, generated documentation,
+and conversions to other media types.
+i. "Non-Commercial" shall mean for research or evaluation purposes only.
+
+2. Grant of Rights
+a. You are granted a non-exclusive, worldwide, non-transferable and royalty-free limited license under Alibaba Cloud's intellectual property or other rights owned by Us embodied in the Materials to use, reproduce, distribute, copy, create derivative works of, and make modifications to the Materials FOR NON-COMMERCIAL PURPOSES ONLY.
+b. If you are commercially using the Materials, You shall request a license from Us.
+
+3. Redistribution
+You may reproduce and distribute copies of the Materials or derivative works thereof in any medium, with or without modifications, and in Source or Object form, provided that You meet the following conditions:
+a. You shall give any other recipients of the Materials or derivative works a copy of this Agreement;
+b. You shall cause any modified files to carry prominent notices stating that You changed the files;
+c. You shall retain in all copies of the Materials that You distribute the following attribution notices within a "Notice" text file distributed as a part of such copies: "Tongyi Qianwen is licensed under the Tongyi Qianwen RESEARCH LICENSE AGREEMENT, Copyright (c) Alibaba Cloud. All Rights Reserved."; and
+d. You may add Your own copyright statement to Your modifications and may provide additional or different license terms and conditions for use, reproduction, or distribution of Your modifications, or for any such derivative works as a whole, provided Your use, reproduction, and distribution of the work otherwise complies with the terms and conditions of this Agreement.
+
+4. Rules of use
+a. The Materials may be subject to export controls or restrictions in China, the United States or other countries or regions. You shall comply with applicable laws and regulations in your use of the Materials.
+b. You can not use the Materials or any output therefrom to improve any other large language model (excluding Tongyi Qianwen or derivative works thereof).
+
+5. Intellectual Property
+a. We retain ownership of all intellectual property rights in and to the Materials and derivatives made by or for Us. Conditioned upon compliance with the terms and conditions of this Agreement, with respect to any derivative works and modifications of the Materials that are made by you, you are and will be the owner of such derivative works and modifications.
+b. No trademark license is granted to use the trade names, trademarks, service marks, or product names of Us, except as required to fulfill notice requirements under this Agreement or as required for reasonable and customary use in describing and redistributing the Materials.
+c. If you commence a lawsuit or other proceedings (including a cross-claim or counterclaim in a lawsuit) against Us or any entity alleging that the Materials or any output therefrom, or any part of the foregoing, infringe any intellectual property or other right owned or licensable by you, then all licences granted to you under this Agreement shall terminate as of the date such lawsuit or other proceeding is commenced or brought.
+
+6. Disclaimer of Warranty and Limitation of Liability
+a. We are not obligated to support, update, provide training for, or develop any further version of the Tongyi Qianwen Materials or to grant any license thereto.
+b. THE MATERIALS ARE PROVIDED "AS IS" WITHOUT ANY EXPRESS OR IMPLIED WARRANTY OF ANY KIND INCLUDING WARRANTIES OF MERCHANTABILITY, NONINFRINGEMENT, OR FITNESS FOR A PARTICULAR PURPOSE. WE MAKE NO WARRANTY AND ASSUME NO RESPONSIBILITY FOR THE SAFETY OR STABILITY OF THE MATERIALS AND ANY OUTPUT THEREFROM.
+c. IN NO EVENT SHALL WE BE LIABLE TO YOU FOR ANY DAMAGES, INCLUDING, BUT NOT LIMITED TO ANY DIRECT, OR INDIRECT, SPECIAL OR CONSEQUENTIAL DAMAGES ARISING FROM YOUR USE OR INABILITY TO USE THE MATERIALS OR ANY OUTPUT OF IT, NO MATTER HOW IT’S CAUSED.
+d. You will defend, indemnify and hold harmless Us from and against any claim by any third party arising out of or related to your use or distribution of the Materials.
+
+7. Survival and Termination.
+a. The term of this Agreement shall commence upon your acceptance of this Agreement or access to the Materials and will continue in full force and effect until terminated in accordance with the terms and conditions herein.
+b. We may terminate this Agreement if you breach any of the terms or conditions of this Agreement. Upon termination of this Agreement, you must delete and cease use of the Materials. Sections 6 and 8 shall survive the termination of this Agreement.
+
+8. Governing Law and Jurisdiction.
+a. This Agreement and any dispute arising out of or relating to it will be governed by the laws of China, without regard to conflict of law principles, and the UN Convention on Contracts for the International Sale of Goods does not apply to this Agreement.
+b. The People's Courts in Hangzhou City shall have exclusive jurisdiction over any dispute arising out of this Agreement.
+
+9. Other Terms and Conditions.
+a. Any arrangements, understandings, or agreements regarding the Material not stated herein are separate from and independent of the terms and conditions of this Agreement. You shall request a seperate license from Us, if You use the Materials in ways not expressly agreed to in this Agreement.
+b. We shall not be bound by any additional or different terms or conditions communicated by You unless expressly agreed.

Qwen/Qwen-1_8B-Chat/NOTICE

ADDED

@@ -0,0 +1,280 @@

+------------- LICENSE FOR NVIDIA Megatron-LM code --------------
+
+Copyright (c) 2022, NVIDIA CORPORATION. All rights reserved.
+
+Redistribution and use in source and binary forms, with or without
+modification, are permitted provided that the following conditions
+are met:
+* Redistributions of source code must retain the above copyright
+notice, this list of conditions and the following disclaimer.
+* Redistributions in binary form must reproduce the above copyright
+notice, this list of conditions and the following disclaimer in the
+documentation and/or other materials provided with the distribution.
+* Neither the name of NVIDIA CORPORATION nor the names of its
+contributors may be used to endorse or promote products derived
+from this software without specific prior written permission.
+
+THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS ``AS IS'' AND ANY
+EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT LIMITED TO, THE
+IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR
+PURPOSE ARE DISCLAIMED. IN NO EVENT SHALL THE COPYRIGHT OWNER OR
+CONTRIBUTORS BE LIABLE FOR ANY DIRECT, INDIRECT, INCIDENTAL, SPECIAL,
+EXEMPLARY, OR CONSEQUENTIAL DAMAGES (INCLUDING, BUT NOT LIMITED TO,
+PROCUREMENT OF SUBSTITUTE GOODS OR SERVICES; LOSS OF USE, DATA, OR
+PROFITS; OR BUSINESS INTERRUPTION) HOWEVER CAUSED AND ON ANY THEORY
+OF LIABILITY, WHETHER IN CONTRACT, STRICT LIABILITY, OR TORT
+(INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE
+OF THIS SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.
+
+

+------------- LICENSE FOR OpenAI tiktoken code --------------
+
+MIT License
+
+Copyright (c) 2022 OpenAI, Shantanu Jain
+
+Permission is hereby granted, free of charge, to any person obtaining a copy
+of this software and associated documentation files (the "Software"), to deal
+in the Software without restriction, including without limitation the rights
+to use, copy, modify, merge, publish, distribute, sublicense, and/or sell
+copies of the Software, and to permit persons to whom the Software is
+furnished to do so, subject to the following conditions:
+
+The above copyright notice and this permission notice shall be included in all
+copies or substantial portions of the Software.
+
+THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR
+IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,
+FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE
+AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER
+LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,
+OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE
+SOFTWARE.
+

+------------- LICENSE FOR stanford_alpaca code --------------
+
+Apache License
+Version 2.0, January 2004
+http://www.apache.org/licenses/
+
+TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION
+
+1. Definitions.
+
+"License" shall mean the terms and conditions for use, reproduction,
+and distribution as defined by Sections 1 through 9 of this document.
+
+"Licensor" shall mean the copyright owner or entity authorized by
+the copyright owner that is granting the License.
+
+"Legal Entity" shall mean the union of the acting entity and all
+other entities that control, are controlled by, or are under common
+control with that entity. For the purposes of this definition,
+"control" means (i) the power, direct or indirect, to cause the
+direction or management of such entity, whether by contract or
+otherwise, or (ii) ownership of fifty percent (50%) or more of the
+outstanding shares, or (iii) beneficial ownership of such entity.
+
+"You" (or "Your") shall mean an individual or Legal Entity
+exercising permissions granted by this License.
+
+"Source" form shall mean the preferred form for making modifications,
+including but not limited to software source code, documentation
+source, and configuration files.
+
+"Object" form shall mean any form resulting from mechanical
+transformation or translation of a Source form, including but
+not limited to compiled object code, generated documentation,
+and conversions to other media types.
+
+"Work" shall mean the work of authorship, whether in Source or
+Object form, made available under the License, as indicated by a
+copyright notice that is included in or attached to the work
+(an example is provided in the Appendix below).
+
+"Derivative Works" shall mean any work, whether in Source or Object
+form, that is based on (or derived from) the Work and for which the
+editorial revisions, annotations, elaborations, or other modifications
+represent, as a whole, an original work of authorship. For the purposes
+of this License, Derivative Works shall not include works that remain
+separable from, or merely link (or bind by name) to the interfaces of,
+the Work and Derivative Works thereof.
+
+"Contribution" shall mean any work of authorship, including
+the original version of the Work and any modifications or additions
+to that Work or Derivative Works thereof, that is intentionally
+submitted to Licensor for inclusion in the Work by the copyright owner
+or by an individual or Legal Entity authorized to submit on behalf of
+the copyright owner. For the purposes of this definition, "submitted"
+means any form of electronic, verbal, or written communication sent
+to the Licensor or its representatives, including but not limited to
+communication on electronic mailing lists, source code control systems,
+and issue tracking systems that are managed by, or on behalf of, the
+Licensor for the purpose of discussing and improving the Work, but
+excluding communication that is conspicuously marked or otherwise
+designated in writing by the copyright owner as "Not a Contribution."
+
+"Contributor" shall mean Licensor and any individual or Legal Entity
+on behalf of whom a Contribution has been received by Licensor and
+subsequently incorporated within the Work.
+

+2. Grant of Copyright License. Subject to the terms and conditions of
+this License, each Contributor hereby grants to You a perpetual,
+worldwide, non-exclusive, no-charge, royalty-free, irrevocable
+copyright license to reproduce, prepare Derivative Works of,
+publicly display, publicly perform, sublicense, and distribute the
+Work and such Derivative Works in Source or Object form.
+
+3. Grant of Patent License. Subject to the terms and conditions of
+this License, each Contributor hereby grants to You a perpetual,
+worldwide, non-exclusive, no-charge, royalty-free, irrevocable
+(except as stated in this section) patent license to make, have made,
+use, offer to sell, sell, import, and otherwise transfer the Work,
+where such license applies only to those patent claims licensable
+by such Contributor that are necessarily infringed by their
+Contribution(s) alone or by combination of their Contribution(s)
+with the Work to which such Contribution(s) was submitted. If You
+institute patent litigation against any entity (including a
+cross-claim or counterclaim in a lawsuit) alleging that the Work
+or a Contribution incorporated within the Work constitutes direct
+or contributory patent infringement, then any patent licenses
+granted to You under this License for that Work shall terminate
+as of the date such litigation is filed.
+
+4. Redistribution. You may reproduce and distribute copies of the
+Work or Derivative Works thereof in any medium, with or without
+modifications, and in Source or Object form, provided that You
+meet the following conditions:
+
+(a) You must give any other recipients of the Work or
+Derivative Works a copy of this License; and
+
+(b) You must cause any modified files to carry prominent notices
+stating that You changed the files; and
+
+(c) You must retain, in the Source form of any Derivative Works
+that You distribute, all copyright, patent, trademark, and
+attribution notices from the Source form of the Work,
+excluding those notices that do not pertain to any part of
+the Derivative Works; and
+
+(d) If the Work includes a "NOTICE" text file as part of its
+distribution, then any Derivative Works that You distribute must
+include a readable copy of the attribution notices contained
+within such NOTICE file, excluding those notices that do not
+pertain to any part of the Derivative Works, in at least one
+of the following places: within a NOTICE text file distributed
+as part of the Derivative Works; within the Source form or
+documentation, if provided along with the Derivative Works; or,
+within a display generated by the Derivative Works, if and
+wherever such third-party notices normally appear. The contents
+of the NOTICE file are for informational purposes only and
+do not modify the License. You may add Your own attribution
+notices within Derivative Works that You distribute, alongside
+or as an addendum to the NOTICE text from the Work, provided
+that such additional attribution notices cannot be construed
+as modifying the License.
+
+You may add Your own copyright statement to Your modifications and
+may provide additional or different license terms and conditions
+for use, reproduction, or distribution of Your modifications, or
+for any such Derivative Works as a whole, provided Your use,
+reproduction, and distribution of the Work otherwise complies with
+the conditions stated in this License.
+
+5. Submission of Contributions. Unless You explicitly state otherwise,
+any Contribution intentionally submitted for inclusion in the Work
+by You to the Licensor shall be under the terms and conditions of
+this License, without any additional terms or conditions.
+Notwithstanding the above, nothing herein shall supersede or modify
+the terms of any separate license agreement you may have executed
+with Licensor regarding such Contributions.
+
+6. Trademarks. This License does not grant permission to use the trade
+names, trademarks, service marks, or product names of the Licensor,
+except as required for reasonable and customary use in describing the
+origin of the Work and reproducing the content of the NOTICE file.
+
+7. Disclaimer of Warranty. Unless required by applicable law or
+agreed to in writing, Licensor provides the Work (and each
+Contributor provides its Contributions) on an "AS IS" BASIS,
+WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or
+implied, including, without limitation, any warranties or conditions
+of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A
+PARTICULAR PURPOSE. You are solely responsible for determining the
+appropriateness of using or redistributing the Work and assume any
+risks associated with Your exercise of permissions under this License.
+
+8. Limitation of Liability. In no event and under no legal theory,
+whether in tort (including negligence), contract, or otherwise,
+unless required by applicable law (such as deliberate and grossly
+negligent acts) or agreed to in writing, shall any Contributor be
+liable to You for damages, including any direct, indirect, special,
+incidental, or consequential damages of any character arising as a
+result of this License or out of the use or inability to use the
+Work (including but not limited to damages for loss of goodwill,
+work stoppage, computer failure or malfunction, or any and all
+other commercial damages or losses), even if such Contributor
+has been advised of the possibility of such damages.
+
+9. Accepting Warranty or Additional Liability. While redistributing
+the Work or Derivative Works thereof, You may choose to offer,
+and charge a fee for, acceptance of support, warranty, indemnity,
+or other liability obligations and/or rights consistent with this
+License. However, in accepting such obligations, You may act only
+on Your own behalf and on Your sole responsibility, not on behalf
+of any other Contributor, and only if You agree to indemnify,
+defend, and hold each Contributor harmless for any liability
+incurred by, or claims asserted against, such Contributor by reason
+of your accepting any such warranty or additional liability.
+
+END OF TERMS AND CONDITIONS

+
+APPENDIX: How to apply the Apache License to your work.
+
+To apply the Apache License to your work, attach the following
+boilerplate notice, with the fields enclosed by brackets "[]"
+replaced with your own identifying information. (Don't include
+the brackets!) The text should be enclosed in the appropriate
+comment syntax for the file format. We also recommend that a
+file or class name and description of purpose be included on the
+same "printed page" as the copyright notice for easier
+identification within third-party archives.
+
+Copyright 2023 Rohan Taori, Ishaan Gulrajani, Tianyi Zhang, Yann Dubois, Xuechen Li
+
+Licensed under the Apache License, Version 2.0 (the "License");
+you may not use this file except in compliance with the License.
+You may obtain a copy of the License at
+
+http://www.apache.org/licenses/LICENSE-2.0
+
+Unless required by applicable law or agreed to in writing, software
+distributed under the License is distributed on an "AS IS" BASIS,
+WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
+See the License for the specific language governing permissions and
+limitations under the License.
+

+------------- LICENSE FOR PanQiWei AutoGPTQ code --------------
+
+MIT License
+
+Copyright (c) 2023 潘其威(William)
+
+Permission is hereby granted, free of charge, to any person obtaining a copy
+of this software and associated documentation files (the "Software"), to deal
+in the Software without restriction, including without limitation the rights
+to use, copy, modify, merge, publish, distribute, sublicense, and/or sell
+copies of the Software, and to permit persons to whom the Software is
+furnished to do so, subject to the following conditions:
+
+The above copyright notice and this permission notice shall be included in all
+copies or substantial portions of the Software.
+
+THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR
+IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,
+FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE
+AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER
+LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,
+OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE
+SOFTWARE.

Qwen/Qwen-1_8B-Chat/README.md

ADDED

@@ -0,0 +1,418 @@


| 1 |

+

---
language:
- zh
- en
tags:
- qwen
pipeline_tag: text-generation
inference: false
---

|

| 10 |

+

|

| 11 |

+

# Qwen-1.8B-Chat

|

| 12 |

+

|

| 13 |

+

<p align="center">

|

| 14 |

+

<img src="https://qianwen-res.oss-cn-beijing.aliyuncs.com/logo_qwen.jpg" width="400"/>

|

| 15 |

+

<p>

|

| 16 |

+

<br>

|

| 17 |

+

|

| 18 |

+

<p align="center">

|

| 19 |

+

🤗 <a href="https://huggingface.co/Qwen">Hugging Face</a>   |   🤖 <a href="https://modelscope.cn/organization/qwen">ModelScope</a>   |    📑 <a href="https://arxiv.org/abs/2309.16609">Paper</a>    |   🖥️ <a href="https://www.modelscope.cn/studios/qwen/Qwen-1_8B-Chat-Demo/summary">Demo</a>

|

| 20 |

+

<br>

|

| 21 |

+

<a href="https://github.com/QwenLM/Qwen/blob/main/assets/wechat.png">WeChat (微信)</a>   |   <a href="https://discord.gg/z3GAxXZ9Ce">Discord</a>   |   <a href="https://dashscope.aliyun.com">API</a>

|

| 22 |

+

</p>

|

| 23 |

+

<br>

|

| 24 |

+

|

| 25 |

+

## 介绍(Introduction)

|

| 26 |

+

**通义千问-1.8B(Qwen-1.8B)**是阿里云研发的通义千问大模型系列的18亿参数规模的模型。Qwen-1.8B是基于Transformer的大语言模型, 在超大规模的预训练数据上进行训练得到。预训练数据类型多样,覆盖广泛,包括大量网络文本、专业书籍、代码等。同时,在Qwen-1.8B的基础上,我们使用对齐机制打造了基于大语言模型的AI助手Qwen-1.8B-Chat。本仓库为Qwen-1.8B-Chat的仓库。

|

| 27 |

+

|

| 28 |

+

通义千问-1.8B(Qwen-1.8B)主要有以下特点:

|

| 29 |

+

1. **低成本部署**:提供int8和int4量化版本,推理最低仅需不到2GB显存,生成2048 tokens仅需3GB显存占用。微调最低仅需6GB。

|

| 30 |

+

2. **大规模高质量训练语料**:使用超过2.2万亿tokens的数据进行预训练,包含高质量中、英、多语言、代码、数学等数据,涵盖通用及专业领域的训练语料。通过大量对比实验对预训练语料分布进行了优化。

|

| 31 |

+

3. **优秀的性能**:Qwen-1.8B支持8192上下文长度,在多个中英文下游评测任务上(涵盖常识推理、代码、数学、翻译等),效果显著超越现有的相近规模开源模型,具体评测结果请详见下文。

|

| 32 |

+

4. **覆盖更全面的词表**:相比目前以中英词表为主的开源模型,Qwen-1.8B使用了约15万大小的词表。该词表对多语言更加友好,方便用户在不扩展词表的情况下对部分语种进行能力增强和扩展。

|

| 33 |

+

5. **系统指令跟随**:Qwen-1.8B-Chat可以通过调整系统指令,实现**角色扮演**,**语言风格迁移**,**任务设定**,和**行为设定**等能力。

|

| 34 |

+

|

| 35 |

+

|

| 36 |

+

如果您想了解更多关于通义千问1.8B开源模型的细节,我们建议您参阅[GitHub代码库](https://github.com/QwenLM/Qwen)。

|

| 37 |

+

|

| 38 |

+

**Qwen-1.8B** is the 1.8B-parameter version of the large language model series, Qwen (abbr. Tongyi Qianwen), proposed by Alibaba Cloud. Qwen-1.8B is a Transformer-based large language model, which is pretrained on a large volume of data, including web texts, books, code, etc. Additionally, based on the pretrained Qwen-1.8B, we release Qwen-1.8B-Chat, a large-model-based AI assistant, which is trained with alignment techniques. This repository is the one for Qwen-1.8B-Chat.

|

| 39 |

+

|

| 40 |

+

The features of Qwen-1.8B include:

|

| 41 |

+

1. **Low-cost deployment**: We provide int4 and int8 quantized versions. The minimum memory requirement for inference is less than 2GB, and generating 2048 tokens takes only 3GB of memory. The minimum memory requirement for finetuning is only 6GB.

|

| 42 |

+

|

| 43 |

+

2. **Large-scale high-quality training corpora**: It is pretrained on over 2.2 trillion tokens, including Chinese, English, multilingual texts, code, and mathematics, covering general and professional fields. The distribution of the pre-training corpus has been optimized through a large number of ablation experiments.

|

| 44 |

+

3. **Good performance**: It supports a context length of 8192 and significantly surpasses existing open-source models of similar scale on multiple Chinese and English downstream evaluation tasks (including commonsense reasoning, code, mathematics, translation, etc.), and even surpasses some larger-scale models in several benchmarks. See below for specific evaluation results.

|

| 45 |

+

4. **More comprehensive vocabulary coverage**: Compared with other open-source models based on Chinese and English vocabularies, Qwen-1.8B uses a vocabulary of over 150K tokens. This vocabulary is friendlier to multiple languages, enabling users to enhance the capability for certain languages directly, without expanding the vocabulary.

|

| 46 |

+

5. **System prompt**: Qwen-1.8B-Chat can perform role playing, language style transfer, task setting, and behavior setting through the system prompt.

|

| 47 |

+

|

| 48 |

+

For more details about the open-source model of Qwen-1.8B-Chat, please refer to the [GitHub](https://github.com/QwenLM/Qwen) code repository.

|

| 49 |

+

|

| 50 |

+

|

| 51 |

+

<br>

|

| 52 |

+

|

| 53 |

+

## 要求(Requirements)

|

| 54 |

+

|

| 55 |

+

* python 3.8及以上版本

|

| 56 |

+

* pytorch 1.12及以上版本,推荐2.0及以上版本

|

| 57 |

+

* 建议使用CUDA 11.4及以上(GPU用户、flash-attention用户等需考虑此选项)

|

| 58 |

+

* python 3.8 and above
* pytorch 1.12 and above (2.0 and above is recommended)
* CUDA 11.4 and above is recommended (for GPU users, flash-attention users, etc.)
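The minimum versions listed above can be checked programmatically before installing anything heavy. The following is a minimal sketch, not part of the Qwen codebase: `parse_version` and `meets_minimum` are hypothetical helper names, and the version strings for pytorch and CUDA are assumed to be supplied by the caller (for example from `torch.__version__`).

```python
import re
import sys


def parse_version(text: str) -> tuple:
    """Return the leading numeric components of a version string as ints.

    Suffixes such as "+cu117" are ignored. Hypothetical helper for illustration.
    """
    match = re.match(r"(\d+(?:\.\d+)*)", text)
    if match is None:
        raise ValueError(f"no version number found in {text!r}")
    return tuple(int(part) for part in match.group(1).split("."))


def meets_minimum(found: str, minimum: str) -> bool:
    """True when `found` is at least `minimum`, compared component by component."""
    return parse_version(found) >= parse_version(minimum)


# Check the running interpreter against the python 3.8 requirement.
python_version = ".".join(str(n) for n in sys.version_info[:3])
print("python >= 3.8:", meets_minimum(python_version, "3.8"))

# Example strings in the style reported by pytorch; pass your own values here.
print("pytorch >= 1.12:", meets_minimum("2.0.1+cu117", "1.12"))
print("CUDA >= 11.4:", meets_minimum("11.4", "11.4"))
```

Comparing integer tuples avoids the string-comparison pitfall where `"1.9"` would sort after `"1.12"`.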

|

| 61 |

+

|

| 62 |

+

## 依赖项(Dependency)

|

| 63 |

+

|

| 64 |

+

运行Qwen-1.8B-Chat,请确保满足上述要求,再执行以下pip命令安装依赖库

|

| 65 |

+

|

| 66 |

+

To run Qwen-1.8B-Chat, please make sure you meet the above requirements, and then execute the following pip commands to install the dependent libraries.

|

| 67 |

+

|

| 68 |

+

```bash
pip install transformers==4.32.0 accelerate tiktoken einops scipy transformers_stream_generator==0.0.4 peft deepspeed
```

|

| 71 |

+

|

| 72 |

+

另外,推荐安装`flash-attention`库(**当前已支持flash attention 2**),以实现更高的效率和更低的显存占用。

|

| 73 |

+

|

| 74 |

+

In addition, it is recommended to install the `flash-attention` library (**we support flash attention 2 now.**) for higher efficiency and lower memory usage.

|

| 75 |

+

|

| 76 |

+

```bash
git clone https://github.com/Dao-AILab/flash-attention
cd flash-attention && pip install .
# The installs below are optional and may be slow.
# pip install csrc/layer_norm
# pip install csrc/rotary
```

|

| 83 |

+

<br>

|

| 84 |

+

|

| 85 |

+

## 快速使用(Quickstart)

|

| 86 |

+

|

| 87 |

+

下面我们展示了一个使用Qwen-1.8B-Chat模型,进行多轮对话交互的样例:

|

| 88 |

+

|

| 89 |

+

We show an example of multi-turn interaction with Qwen-1.8B-Chat in the following code:

|

| 90 |

+

|

| 91 |

+

```python
from transformers import AutoModelForCausalLM, AutoTokenizer
from transformers.generation import GenerationConfig

# Note: The default behavior now has injection attack prevention off.
tokenizer = AutoTokenizer.from_pretrained("Qwen/Qwen-1_8B-Chat", trust_remote_code=True)

# use bf16
# model = AutoModelForCausalLM.from_pretrained("Qwen/Qwen-1_8B-Chat", device_map="auto", trust_remote_code=True, bf16=True).eval()
# use fp16
# model = AutoModelForCausalLM.from_pretrained("Qwen/Qwen-1_8B-Chat", device_map="auto", trust_remote_code=True, fp16=True).eval()
# use cpu only
# model = AutoModelForCausalLM.from_pretrained("Qwen/Qwen-1_8B-Chat", device_map="cpu", trust_remote_code=True).eval()
# use auto mode, automatically select precision based on the device.
model = AutoModelForCausalLM.from_pretrained("Qwen/Qwen-1_8B-Chat", device_map="auto", trust_remote_code=True).eval()

# Specify hyperparameters for generation. But if you use transformers>=4.32.0, there is no need to do this.
# model.generation_config = GenerationConfig.from_pretrained("Qwen/Qwen-1_8B-Chat", trust_remote_code=True) # lets you set generation length, top_p, and other hyperparameters

# 1st dialogue turn
response, history = model.chat(tokenizer, "你好", history=None)
print(response)
# 你好!很高兴为你提供帮助。

# 2nd dialogue turn
response, history = model.chat(tokenizer, "给我讲一个年轻人奋斗创业最终取得成功的故事。", history=history)
print(response)
# 这是一个关于一个年轻人奋斗创业最终取得成功的故事。
# 故事的主人公叫李明,他来自一个普通的家庭,父母都是普通的工人。从小,李明就立下了一个目标:要成为一名成功的企业家。
# 为了实现这个目标,李明勤奋学习,考上了大学。在大学期间,他积极参加各种创业比赛,获得了不少奖项。他还利用课余时间去实习,积累了宝贵的经验。
# 毕业后,李明决定开始自己的创业之路。他开始寻找投资机会,但多次都被拒绝了。然而,他并没有放弃。他继续努力,不断改进自己的创业计划,并寻找新的投资机会。
# 最终,李明成功地获得了一笔投资,开始了自己的创业之路。他成立了一家科技公司,专注于开发新型软件。在他的领导下,公司迅速发展起来,成为了一家成功的科技企业。
# 李明的成功并不是偶然的。他勤奋、坚韧、勇于冒险,不断学习和改进自己。他的成功也证明了,只要努力奋斗,任何人都有可能取得成功。

# 3rd dialogue turn
response, history = model.chat(tokenizer, "给这个故事起一个标题", history=history)
print(response)
# 《奋斗创业:一个年轻人的成功之路》

# Qwen-1.8B-Chat can perform role playing, language style transfer, task setting, and behavior setting through the system prompt.
response, _ = model.chat(tokenizer, "你好呀", history=None, system="请用二次元可爱语气和我说话")
print(response)
# 你好啊!我是一只可爱的二次元猫咪哦,不知道你有什么问题需要我帮忙解答吗?

response, _ = model.chat(tokenizer, "My colleague works diligently", history=None, system="You will write beautiful compliments according to needs")
print(response)
# Your colleague is an outstanding worker! Their dedication and hard work are truly inspiring. They always go above and beyond to ensure that
# their tasks are completed on time and to the highest standard. I am lucky to have them as a colleague, and I know I can count on them to handle any challenge that comes their way.
```

|

| 141 |

+

|

| 142 |

+

关于更多的使用说明,请参考我们的[GitHub repo](https://github.com/QwenLM/Qwen)获取更多信息。

|

| 143 |

+

|

| 144 |

+

For more usage instructions, please refer to our [GitHub repo](https://github.com/QwenLM/Qwen).
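To make the role of `history` and `system` in the quickstart more concrete, here is a rough sketch of how a multi-turn prompt could be assembled before tokenization. It assumes a ChatML-style layout with `<|im_start|>` and `<|im_end|>` delimiters and a `history` made of `(query, response)` pairs; `build_prompt` is a hypothetical name, and the authoritative logic (including history truncation to fit the context window) lives in `qwen_generation_utils.py` in this repository.

```python
from typing import List, Optional, Tuple

# Assumed ChatML-style delimiters; check qwen_generation_utils.py for the real ones.
IM_START = "<|im_start|>"
IM_END = "<|im_end|>"


def build_prompt(
    query: str,
    history: Optional[List[Tuple[str, str]]] = None,
    system: str = "You are a helpful assistant.",
) -> str:
    """Lay out the system prompt, past turns, and the new query as one string.

    Illustrative only: no truncation and no tokenization is performed.
    """
    parts = [f"{IM_START}system\n{system}{IM_END}"]
    for past_query, past_response in history or []:
        parts.append(f"{IM_START}user\n{past_query}{IM_END}")
        parts.append(f"{IM_START}assistant\n{past_response}{IM_END}")
    parts.append(f"{IM_START}user\n{query}{IM_END}")
    # The prompt ends with an open assistant turn for the model to complete.
    parts.append(f"{IM_START}assistant\n")
    return "\n".join(parts)


if __name__ == "__main__":
    past_turns = [("Hello", "Hello! Glad to help.")]
    print(build_prompt("Give the story a title", history=past_turns))
```

Under this layout, changing `system` only rewrites the first block, which is why a single call can switch persona or task without touching earlier turns.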

|

| 145 |

+

|

| 146 |

+

## Tokenizer

|

| 147 |

+

|

| 148 |

+

> 注:作为术语的“tokenization”在中文中尚无共识的概念对应,本文档采用英文表达以利说明。

|

| 149 |

+

|

| 150 |

+

基于tiktoken的分词器有别于其他分词器,比如sentencepiece分词器。尤其在微调阶段,需要特别注意特殊token的使用。关于tokenizer的更多信息,以及微调时涉及的相关使用,请参阅[文档](https://github.com/QwenLM/Qwen/blob/main/tokenization_note_zh.md)。

|

| 151 |

+

|

| 152 |

+

Our tokenizer based on tiktoken is different from other tokenizers, e.g., sentencepiece tokenizer. You need to pay attention to special tokens, especially in finetuning. For more detailed information on the tokenizer and related use in fine-tuning, please refer to the [documentation](https://github.com/QwenLM/Qwen/blob/main/tokenization_note.md).

|

| 153 |

+

|

| 154 |

+

## 量化 (Quantization)

|

| 155 |

+

|

| 156 |

+

### 用法 (Usage)

|

| 157 |

+

|

| 158 |

+

**请注意:我们更新量化方案为基于[AutoGPTQ](https://github.com/PanQiWei/AutoGPTQ)的量化,提供Qwen-1.8B-Chat的Int4量化模型[点击这里](https://huggingface.co/Qwen/Qwen-1_8B-Chat-Int4)。相比此前方案,该方案在模型评测效果几乎无损,且存储需求更低,推理速度更优。**

|

| 159 |

+

|

| 160 |

+

**Note: we provide a new solution based on [AutoGPTQ](https://github.com/PanQiWei/AutoGPTQ), and release an Int4 quantized model for Qwen-1.8B-Chat [Click here](https://huggingface.co/Qwen/Qwen-1_8B-Chat-Int4), which achieves nearly lossless model effects but improved performance on both memory costs and inference speed, in comparison with the previous solution.**

|

| 161 |

+

|

| 162 |

+

以下我们提供示例说明如何使用Int4量化模型。在开始使用前,请先保证满足要求(如torch 2.0及以上,transformers版本为4.32.0及以上,等等),并安装所需安装包:

|

| 163 |

+

|

| 164 |

+

Here we demonstrate how to use our provided quantized models for inference. Before you start, make sure you meet the requirements of auto-gptq (e.g., torch 2.0 and above, transformers 4.32.0 and above, etc.) and install the required packages:

|

| 165 |

+

|

| 166 |

+

```bash
pip install auto-gptq optimum
```

|

| 169 |

+

|

| 170 |

+

如安装`auto-gptq`遇到问题,我们建议您到官方[repo](https://github.com/PanQiWei/AutoGPTQ)搜索合适的预编译wheel。

|

| 171 |

+

|

| 172 |

+

随后即可使用和上述一致的用法调用量化模型:

|

| 173 |

+

|

| 174 |

+

If you run into problems installing `auto-gptq`, we advise you to check the official [repo](https://github.com/PanQiWei/AutoGPTQ) to find a pre-built wheel.

|

| 175 |

+

|

| 176 |

+

Then you can load the quantized model easily and run inference the same way as usual:

|

| 177 |

+

|

| 178 |

+

```python
from transformers import AutoModelForCausalLM, AutoTokenizer

# Load the tokenizer that ships with the Int4 checkpoint.
tokenizer = AutoTokenizer.from_pretrained("Qwen/Qwen-1_8B-Chat-Int4", trust_remote_code=True)

model = AutoModelForCausalLM.from_pretrained(
    "Qwen/Qwen-1_8B-Chat-Int4",
    device_map="auto",
    trust_remote_code=True
).eval()
response, history = model.chat(tokenizer, "你好", history=None)
```

|

| 186 |

+

|

| 187 |

+

### 效果评测 (Performance)

|

| 188 |

+

|

| 189 |

+

我们使用原始模型的FP32和BF16精度,以及量化过的Int8和Int4模型在基准评测上做了测试,结果如下所示:

|

| 190 |

+

|

| 191 |

+

We report the performance of the FP32, BF16, Int8, and Int4 models on the benchmarks. Results are shown below:

|

| 192 |

+

|

| 193 |

+

| Quantization | MMLU | CEval (val) | GSM8K | HumanEval |
|--------------|:----:|:-----------:|:-----:|:---------:|
| FP32 | 43.4 | 57.0 | 33.0 | 26.8 |
| BF16 | 43.3 | 55.6 | 33.7 | 26.2 |
| Int8 | 43.1 | 55.8 | 33.0 | 27.4 |
| Int4 | 42.9 | 52.8 | 31.2 | 25.0 |

|

| 199 |

+

|

| 200 |

+

### 推理速度 (Inference Speed)

|

| 201 |

+

|

| 202 |

+

我们测算了FP32、BF16精度和Int8、Int4量化模型生成2048和8192个token的平均推理速度。如图所示:

|

| 203 |

+

|

| 204 |

+

We measured the average inference speed of generating 2048 and 8192 tokens with the FP32 and BF16 models and with the Int8 and Int4 quantized models. Results are shown below:

|

| 205 |

+

|

| 206 |

+

| Quantization | FlashAttn | Speed (2048 tokens) | Speed (8192 tokens) |
|--------------| :-------: |:-------------------:|:-------------------:|
| FP32 | v2 | 52.96 | 47.35 |
| BF16 | v2 | 54.09 | 54.04 |
| Int8 | v2 | 55.56 | 55.62 |
| Int4 | v2 | 71.07 | 76.45 |
| FP32 | v1 | 52.00 | 45.80 |
| BF16 | v1 | 51.70 | 55.04 |
| Int8 | v1 | 53.16 | 53.33 |
| Int4 | v1 | 69.82 | 67.44 |
| FP32 | Disabled | 52.28 | 44.95 |
| BF16 | Disabled | 48.17 | 45.01 |
| Int8 | Disabled | 52.16 | 52.99 |
| Int4 | Disabled | 68.37 | 65.94 |

|

| 220 |

+

|

| 221 |

+

具体而言,我们记录在长度为1的上下文的条件下生成8192个token的性能。评测运行于单张A100-SXM4-80G GPU,使用PyTorch 2.0.1和CUDA 11.4。推理速度是生成8192个token的速度均值。

|

| 222 |

+

|

| 223 |

+

Specifically, the profiling setup generates 8192 new tokens from 1 context token. The profiling runs on a single A100-SXM4-80G GPU with PyTorch 2.0.1 and CUDA 11.4. The inference speed is averaged over the 8192 generated tokens.
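The speed table is easier to compare as ratios against one baseline. The sketch below copies the FlashAttn v2 rows from the table and divides each by the BF16 figure; the choice of BF16 as baseline and the helper name `speedup` are assumptions made for illustration.

```python
# Speeds copied from the FlashAttn v2 rows of the table:
# (speed when generating 2048 tokens, speed when generating 8192 tokens).
SPEED_V2 = {
    "FP32": (52.96, 47.35),
    "BF16": (54.09, 54.04),
    "Int8": (55.56, 55.62),
    "Int4": (71.07, 76.45),
}


def speedup(setting: str, baseline: str = "BF16", column: int = 0) -> float:
    """Ratio of `setting` speed to `baseline` speed for one table column.

    column 0 is the 2048-token column, column 1 the 8192-token column.
    """
    return SPEED_V2[setting][column] / SPEED_V2[baseline][column]


for name in SPEED_V2:
    print(f"{name}: {speedup(name):.2f}x (2048 tokens), "
          f"{speedup(name, column=1):.2f}x (8192 tokens)")
```

On these figures Int4 is roughly 1.3x to 1.4x the BF16 speed, while Int8 stays within a few percent of BF16.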

|

| 224 |

+

|

| 225 |

+

### 显存使用 (GPU Memory Usage)

|

| 226 |

+

|

| 227 |

+

我们测算了FP32、BF16精度和Int8、Int4量化模型生成2048个及8192个token(单个token作为输入)的峰值显存占用情况。结果如下所示:

|

| 228 |

+

|

| 229 |

+

We also profile the peak GPU memory usage for generating 2048 tokens and 8192 tokens (with a single token as context) with the FP32 and BF16 models and with the Int8 and Int4 quantized models. The results are shown below.

|

| 230 |

+

|

| 231 |

+

| Quantization Level | Peak Usage for Encoding 2048 Tokens | Peak Usage for Generating 8192 Tokens |
|--------------------|:-----------------------------------:|:-------------------------------------:|
| FP32 | 8.45GB | 13.06GB |
| BF16 | 4.23GB | 6.48GB |
| Int8 | 3.48GB | 5.34GB |
| Int4 | 2.91GB | 4.80GB |

|

| 237 |

+

|

| 238 |

+

上述性能测算使用[此脚本](https://qianwen-res.oss-cn-beijing.aliyuncs.com/profile.py)完成。

|

| 239 |

+

|

| 240 |

+

The speed and memory profiling above was conducted using [this script](https://qianwen-res.oss-cn-beijing.aliyuncs.com/profile.py).
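For intuition about the memory table, a back-of-the-envelope estimate of weight storage can be set against the measured peaks. This is only a sketch under stated assumptions: the parameter count is taken as a nominal 1.8 billion from the model name, 1GB is taken as 10^9 bytes, and every weight is assumed to be stored at the listed bit width, which is not necessarily true for the quantized checkpoints.

```python
NOMINAL_PARAMS = 1.8e9  # assumption: nominal count from the model name, not an exact figure

BITS_PER_WEIGHT = {"FP32": 32, "BF16": 16, "Int8": 8, "Int4": 4}

# Measured peaks copied from the 2048-token column of the table, in GB.
MEASURED_PEAK_GB = {"FP32": 8.45, "BF16": 4.23, "Int8": 3.48, "Int4": 2.91}


def weight_gb(num_params: float, bits: int) -> float:
    """Storage for the weights alone, in GB (10**9 bytes), at a uniform bit width."""
    return num_params * bits / 8 / 1e9


for name, bits in BITS_PER_WEIGHT.items():
    estimate = weight_gb(NOMINAL_PARAMS, bits)
    overhead = MEASURED_PEAK_GB[name] - estimate
    print(f"{name}: weights ~{estimate:.2f}GB, measured peak {MEASURED_PEAK_GB[name]:.2f}GB, "
          f"difference ~{overhead:.2f}GB")
```

The measured peaks sit above the weight-only estimate in every row, and the gap is proportionally largest for Int8 and Int4. Plausible contributors are the key-value cache, activations, and layers kept at higher precision, though the profiling script is the place to confirm this.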

|

| 241 |

+

<br>

|

| 242 |

+

|

| 243 |

+

## 模型细节(Model)

|

| 244 |

+

|

| 245 |

+

与Qwen-1.8B预训练模型相同,Qwen-1.8B-Chat模型规模基本情况如下所示

|

| 246 |

+

|

| 247 |

+

The details of the model architecture of Qwen-1.8B-Chat, which are the same as those of the pretrained Qwen-1.8B, are listed as follows:

|

| 248 |

+

|

| 249 |

+

| Hyperparameter | Value |
|:----------------|:------:|
| n_layers | 24 |
| n_heads | 16 |
| d_model | 2048 |
| vocab size | 151851 |
| sequence length | 8192 |

|

| 256 |

+

|

| 257 |

+

在位置编码、FFN激活函数和normalization的实现方式上,我们也采用了目前最流行的做法,

|

| 258 |

+

即RoPE相对位置编码、SwiGLU激活函数、RMSNorm(可选安装flash-attention加速)。

|

| 259 |

+

|

| 260 |

+

在分词器方面,相比目前主流开源模型以中英词表为主,Qwen-1.8B-Chat使用了约15万token大小的词表。

|

| 261 |

+

该词表在GPT-4使用的BPE词表`cl100k_base`基础上,对中文、多语言进行了优化,在对中、英、代码数据的高效编解码的基础上,对部分多语言更加友好,方便用户在不扩展词表的情况下对部分语种进行能力增强。

|

| 262 |

+

词表对数字按单个数字位切分。调用较为高效的[tiktoken分词库](https://github.com/openai/tiktoken)进行分词。

|

| 263 |

+

|

| 264 |

+

For position encoding, FFN activation function, and normalization calculation methods, we adopt the prevalent practices, i.e., RoPE relative position encoding, SwiGLU for activation function, and RMSNorm for normalization (optional installation of flash-attention for acceleration).

|

| 265 |

+

|

| 266 |

+

For tokenization, compared to the current mainstream open-source models based on Chinese and English vocabularies, Qwen-1.8B-Chat uses a vocabulary of over 150K tokens.

|

| 267 |

+

Built on the `cl100k_base` BPE vocabulary used by GPT-4 and optimized for Chinese and other languages, it prioritizes efficient encoding of Chinese, English, and code data, and is also friendlier to other languages, enabling users to enhance the capability for some languages directly, without expanding the vocabulary.

|

| 268 |

+

It segments numbers by single digit, and calls the [tiktoken](https://github.com/openai/tiktoken) tokenizer library for efficient tokenization.
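The single-digit rule can be illustrated in isolation. The sketch below is not the Qwen tokenizer: it only shows a pre-split in which each decimal digit becomes its own piece, using a hypothetical helper `split_digits`; the real tokenizer applies BPE through tiktoken with its own pre-tokenization pattern, which is not reproduced here.

```python
import re
from typing import List


def split_digits(text: str) -> List[str]:
    """Split text so that every decimal digit is a separate piece.

    Runs of non-digit characters are kept together. Illustrative only.
    """
    return re.findall(r"\d|\D+", text)


if __name__ == "__main__":
    print(split_digits("price 1234 yuan"))
    # ['price ', '1', '2', '3', '4', ' yuan']
```

Splitting digits one by one means a number such as 1234 is never merged into a single opaque token, so numbers of any length are built from the same ten digit pieces.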

|

| 269 |

+

|

| 270 |

+

## 评测效果(Evaluation)

|

| 271 |

+

|

| 272 |

+

对于Qwen-1.8B-Chat模型,我们同样评测了常规的中文理解(C-Eval)、英文理解(MMLU)、代码(HumanEval)和数学(GSM8K)等权威任务,同时包含了长序列任务的评测结果。由于Qwen-1.8B-Chat模型经过对齐后,激发了较强的外部系统调用能力,我们还进行了工具使用能力方面的评测。

|

| 273 |

+

|

| 274 |