Commit 1e84265

Parent(s): 7399fc1

Upload 11 files

- .gitattributes +1 -1

- README.md +183 -1

- ddpm_scheduler/scheduler_config.json +16 -0

- model_index.json +24 -0

- movq/config.json +33 -0

- scheduler/scheduler_config.json +13 -0

- text_encoder/config.json +27 -0

- tokenizer/special_tokens_map.json +15 -0

- tokenizer/tokenizer.json +3 -0

- tokenizer/tokenizer_config.json +19 -0

- unet/config.json +61 -0

.gitattributes

CHANGED

@@ -25,7 +25,6 @@
 *.safetensors filter=lfs diff=lfs merge=lfs -text
 saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *.tar.* filter=lfs diff=lfs merge=lfs -text
-*.tar filter=lfs diff=lfs merge=lfs -text
 *.tflite filter=lfs diff=lfs merge=lfs -text
 *.tgz filter=lfs diff=lfs merge=lfs -text
 *.wasm filter=lfs diff=lfs merge=lfs -text
@@ -33,3 +32,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
+tokenizer/tokenizer.json filter=lfs diff=lfs merge=lfs -text
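
The rule added at the end of `.gitattributes` routes `tokenizer/tokenizer.json` through Git LFS. As a rough illustration, the sketch below checks paths against a few of the patterns from this hunk using Python's `fnmatch`. It only approximates Git's own matching rules (Git has special handling for `**` and directory patterns that is not reproduced here), and the helper name `is_lfs_tracked` is hypothetical.

```python
from fnmatch import fnmatch
from pathlib import PurePosixPath

# A few patterns copied from the .gitattributes hunk.
LFS_PATTERNS = [
    "*.safetensors",
    "*.tar.*",
    "*.zip",
    "*tfevents*",
    "tokenizer/tokenizer.json",
]


def is_lfs_tracked(path: str) -> bool:
    """Approximate check: does `path` match any of the LFS patterns?"""
    name = PurePosixPath(path).name
    for pattern in LFS_PATTERNS:
        if "/" in pattern:
            # Patterns containing a slash are compared against the full path.
            if fnmatch(path, pattern):
                return True
        elif fnmatch(name, pattern):
            # Slash-free patterns are compared against the file name only.
            return True
    return False


print(is_lfs_tracked("tokenizer/tokenizer.json"))
print(is_lfs_tracked("tokenizer/tokenizer_config.json"))
```

Under this approximation the large `tokenizer.json` is treated as an LFS file while the small `tokenizer_config.json` next to it stays a regular Git blob.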

README.md

CHANGED

@@ -1,3 +1,185 @@
 ---
-license:
+license: apache-2.0
+prior: kandinsky-community/kandinsky-2-1-prior
+tags:
+- text-to-image
+- kandinsky
 ---
+
+# Kandinsky 2.1
+
+Kandinsky 2.1 inherits best practices from DALL-E 2 and latent diffusion while introducing some new ideas.
+
+It uses the CLIP model as a text and image encoder, and a diffusion image prior (mapping) between the latent spaces of the CLIP modalities. This approach improves the visual quality of generated images and opens up new possibilities for image blending and text-guided image manipulation.
+
+The Kandinsky model was created by [Arseniy Shakhmatov](https://github.com/cene555), [Anton Razzhigaev](https://github.com/razzant), [Aleksandr Nikolich](https://github.com/AlexWortega), [Igor Pavlov](https://github.com/boomb0om), [Andrey Kuznetsov](https://github.com/kuznetsoffandrey) and [Denis Dimitrov](https://github.com/denndimitrov).
+
+## Usage
+
+Kandinsky 2.1 is available in diffusers!
+
+```bash
+pip install diffusers transformers accelerate
+```

| 24 |

+

### Text to image

|

| 25 |

+

|

| 26 |

+

```python

|

| 27 |

+

from diffusers import DiffusionPipeline

|

| 28 |

+

import torch

|

| 29 |

+

|

| 30 |

+

pipe_prior = DiffusionPipeline.from_pretrained("kandinsky-community/kandinsky-2-1-prior", torch_dtype=torch.float16)

|

| 31 |

+

pipe_prior.to("cuda")

|

| 32 |

+

|

| 33 |

+

t2i_pipe = DiffusionPipeline.from_pretrained("kandinsky-community/kandinsky-2-1", torch_dtype=torch.float16)

|

| 34 |

+

t2i_pipe.to("cuda")

|

| 35 |

+

|

| 36 |

+

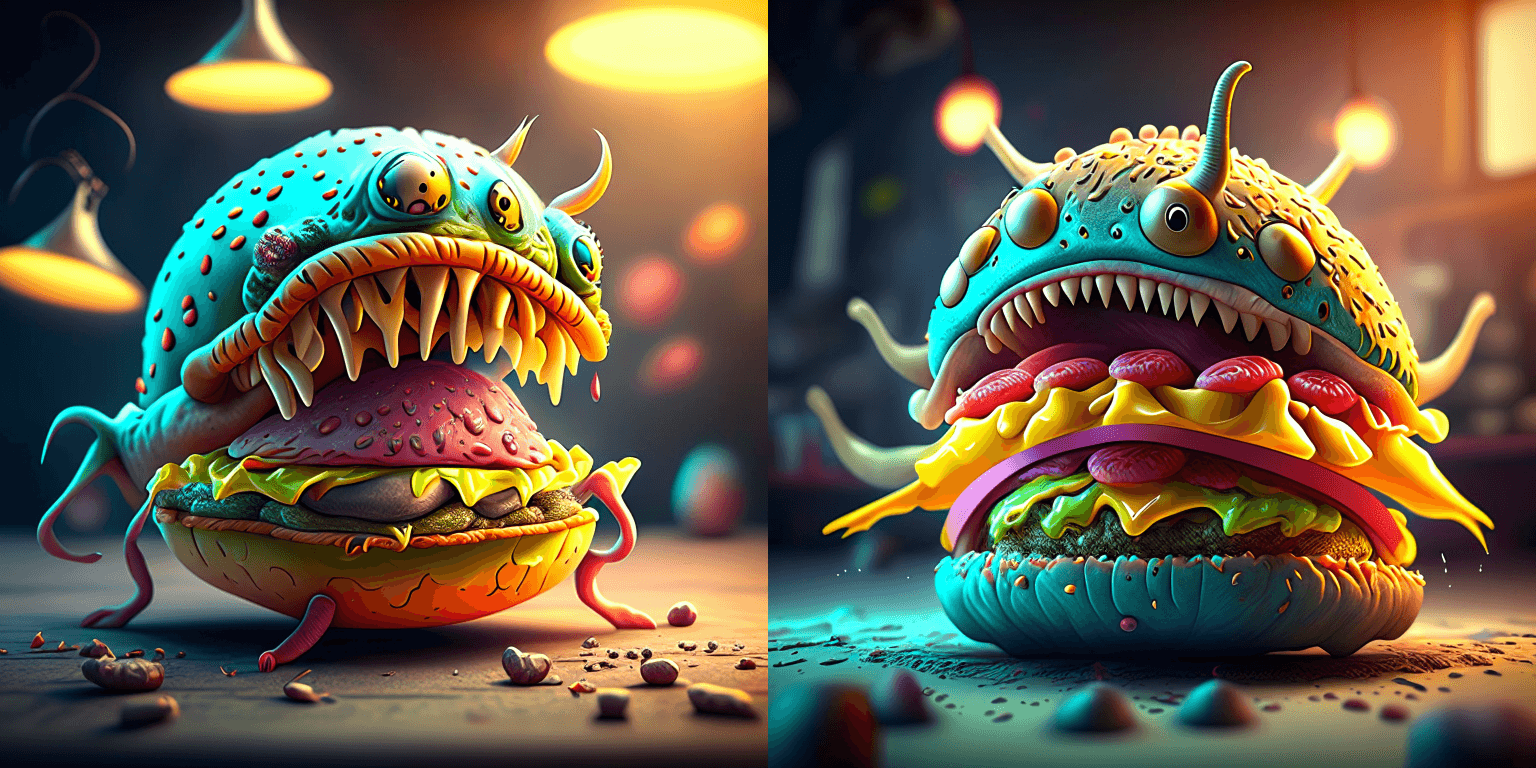

prompt = "A alien cheeseburger creature eating itself, claymation, cinematic, moody lighting"

|

| 37 |

+

negative_prompt = "low quality, bad quality"

|

| 38 |

+

|

| 39 |

+

image_embeds, negative_image_embeds = pipe_prior(prompt, negative_prompt, guidance_scale=1.0).to_tuple()

|

| 40 |

+

|

| 41 |

+

image = t2i_pipe(prompt, negative_prompt=negative_prompt, image_embeds=image_embeds, negative_image_embeds=negative_image_embeds, height=768, width=768).images[0]

|

| 42 |

+

image.save("cheeseburger_monster.png")

|

| 43 |

+

```

|
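
The prior call in the text-to-image example passes `guidance_scale=1.0`. In the usual classifier-free guidance formulation, the guided prediction is the unconditional prediction plus the scale times the difference between the conditional and unconditional predictions, so a scale of 1.0 reduces to the plain conditional prediction. The sketch below shows that arithmetic on toy lists. It is not the diffusers implementation, and `apply_guidance` is a hypothetical helper name.

```python
def apply_guidance(uncond, cond, guidance_scale):
    """Blend unconditional and conditional predictions element-wise."""
    return [u + guidance_scale * (c - u) for u, c in zip(uncond, cond)]


# Toy predictions standing in for model outputs.
uncond = [0.0, 1.0, -2.0]
cond = [1.0, 3.0, 0.0]

no_guidance = apply_guidance(uncond, cond, 1.0)  # equals the conditional prediction
strong = apply_guidance(uncond, cond, 4.0)       # pushed further away from uncond

print(no_guidance)
print(strong)
```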

+
+
+
+
+### Text Guided Image-to-Image Generation
+
+```python
+from diffusers import KandinskyImg2ImgPipeline, KandinskyPriorPipeline
+import torch
+
+from PIL import Image
+import requests
+from io import BytesIO
+
+url = "https://raw.githubusercontent.com/CompVis/stable-diffusion/main/assets/stable-samples/img2img/sketch-mountains-input.jpg"
+response = requests.get(url)
+original_image = Image.open(BytesIO(response.content)).convert("RGB")
+original_image = original_image.resize((768, 512))
+
+# create prior
+pipe_prior = KandinskyPriorPipeline.from_pretrained(
+    "kandinsky-community/kandinsky-2-1-prior", torch_dtype=torch.float16
+)
+pipe_prior.to("cuda")
+
+# create img2img pipeline
+pipe = KandinskyImg2ImgPipeline.from_pretrained("kandinsky-community/kandinsky-2-1", torch_dtype=torch.float16)
+pipe.to("cuda")
+
+prompt = "A fantasy landscape, Cinematic lighting"
+negative_prompt = "low quality, bad quality"
+
+image_embeds, negative_image_embeds = pipe_prior(prompt, negative_prompt).to_tuple()
+
+out = pipe(
+    prompt,
+    image=original_image,
+    image_embeds=image_embeds,
+    negative_image_embeds=negative_image_embeds,
+    height=768,
+    width=768,
+    strength=0.3,
+)
+
+out.images[0].save("fantasy_land.png")
+```
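
The `strength=0.3` argument in the image-to-image call controls how much of the denoising schedule is actually run on the noised input image. The sketch below reproduces the step-count arithmetic commonly used by diffusers image-to-image pipelines. The function name `steps_to_run` and the value of 100 inference steps are assumptions for illustration, since the call above does not set `num_inference_steps`.

```python
def steps_to_run(num_inference_steps: int, strength: float) -> int:
    """Number of denoising steps executed for a given img2img strength."""
    # The input image is noised up to this many steps from the end of the schedule.
    init_timestep = min(int(num_inference_steps * strength), num_inference_steps)
    # Earlier steps are skipped because the image already provides that structure.
    t_start = max(num_inference_steps - init_timestep, 0)
    return num_inference_steps - t_start


print(steps_to_run(100, 0.3))
print(steps_to_run(100, 1.0))
```

A low strength keeps the output close to the input sketch, while a strength of 1.0 runs the full schedule and largely ignores it.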

| 90 |

+

|

| 91 |

+

|

| 92 |

+

|

| 93 |

+

|

| 94 |

+

### Interpolate

|

| 95 |

+

|

| 96 |

+

```python

|

| 97 |

+

from diffusers import KandinskyPriorPipeline, KandinskyPipeline

|

| 98 |

+

from diffusers.utils import load_image

|

| 99 |

+

import PIL

|

| 100 |

+

|

| 101 |

+

import torch

|

| 102 |

+

|

| 103 |

+

pipe_prior = KandinskyPriorPipeline.from_pretrained(

|

| 104 |

+

"kandinsky-community/kandinsky-2-1-prior", torch_dtype=torch.float16

|

| 105 |

+

)

|

| 106 |

+

pipe_prior.to("cuda")

|

| 107 |

+

|

| 108 |

+

img1 = load_image(

|

| 109 |

+

"https://huggingface.co/datasets/hf-internal-testing/diffusers-images/resolve/main" "/kandinsky/cat.png"

|

| 110 |

+

)

|

| 111 |

+

|

| 112 |

+

img2 = load_image(

|

| 113 |

+

"https://huggingface.co/datasets/hf-internal-testing/diffusers-images/resolve/main" "/kandinsky/starry_night.jpeg"

|

| 114 |

+

)

|

| 115 |

+

|

| 116 |

+

# add all the conditions we want to interpolate, can be either text or image

|

| 117 |

+

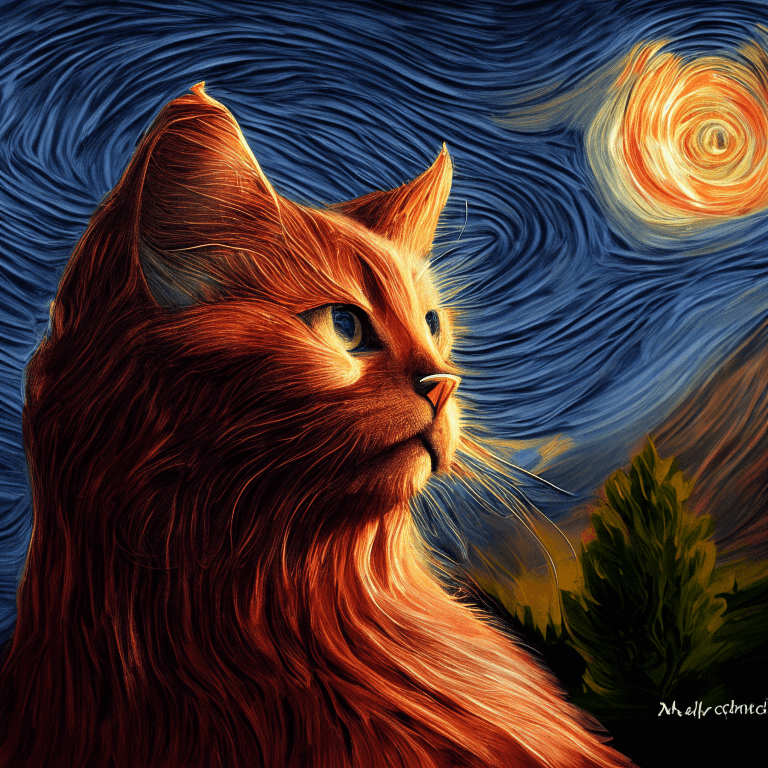

images_texts = ["a cat", img1, img2]

|

| 118 |

+

|

| 119 |

+

# specify the weights for each condition in images_texts

|

| 120 |

+

weights = [0.3, 0.3, 0.4]

|

| 121 |

+

|

| 122 |

+

# We can leave the prompt empty

|

| 123 |

+

prompt = ""

|

| 124 |

+

prior_out = pipe_prior.interpolate(images_texts, weights)

|

| 125 |

+

|

| 126 |

+

pipe = KandinskyPipeline.from_pretrained("kandinsky-community/kandinsky-2-1", torch_dtype=torch.float16)

|

| 127 |

+

pipe.to("cuda")

|

| 128 |

+

|

| 129 |

+

image = pipe(prompt, **prior_out, height=768, width=768).images[0]

|

| 130 |

+

|

| 131 |

+

image.save("starry_cat.png")

|

| 132 |

+

```

|
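
The interpolation example pairs each condition with a weight. A minimal sketch of the underlying idea, assuming the conditions are first mapped to embedding vectors and then combined as a weighted sum, is shown below. The two-dimensional vectors are made-up stand-ins for real CLIP embeddings, and `weighted_sum` is a hypothetical helper rather than part of diffusers.

```python
def weighted_sum(embeddings, weights):
    """Combine equally sized embedding vectors using one weight per vector."""
    if len(embeddings) != len(weights):
        raise ValueError("need exactly one weight per embedding")
    dim = len(embeddings[0])
    return [
        sum(w * emb[i] for emb, w in zip(embeddings, weights))
        for i in range(dim)
    ]


# Toy embeddings for the three conditions ("a cat", img1, img2).
toy_embeddings = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
mixed = weighted_sum(toy_embeddings, [0.3, 0.3, 0.4])
print(mixed)
```

With weights that sum to 1, the result stays on the same scale as the individual embeddings, and raising one weight pulls the mix toward that condition.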

+
+
+
+## Model Architecture
+
+### Overview
+Kandinsky 2.1 is a text-conditional diffusion model based on unCLIP and latent diffusion, composed of a transformer-based image prior model, a UNet diffusion model, and a decoder.
+
+The model architecture is illustrated in the figure below: the chart on the left describes the process of training the image prior model, the chart in the center shows the text-to-image generation process, and the chart on the right shows image interpolation.
+
+<p float="left">
+  <img src="https://raw.githubusercontent.com/ai-forever/Kandinsky-2/main/content/kandinsky21.png"/>
+</p>
+
+Specifically, the image prior model was trained on CLIP text and image embeddings generated with a pre-trained [mCLIP model](https://huggingface.co/M-CLIP/XLM-Roberta-Large-Vit-L-14). The trained image prior model is then used to generate mCLIP image embeddings for input text prompts. Both the input text prompt and its mCLIP image embedding are used in the diffusion process. A [MoVQGAN](https://openreview.net/forum?id=Qb-AoSw4Jnm) model acts as the final block of the model, decoding the latent representation into an actual image.
+
+
+### Details
+The image prior was trained on the [LAION Improved Aesthetics dataset](https://huggingface.co/datasets/bhargavsdesai/laion_improved_aesthetics_6.5plus_with_images) and then fine-tuned on the [LAION HighRes data](https://huggingface.co/datasets/laion/laion-high-resolution).
+
+The main Text2Image diffusion model was trained on 170M text-image pairs from the [LAION HighRes dataset](https://huggingface.co/datasets/laion/laion-high-resolution) (an important condition was the presence of images with a resolution of at least 768x768). We used 170M pairs because we kept the UNet diffusion block from Kandinsky 2.0, which allowed us to avoid training it from scratch. At the fine-tuning stage, we then used a separately collected dataset of 2M very high-quality, high-resolution images with descriptions, gathered from open sources (COYO, anime, landmarks_russia, and a number of others).
+
+

+### Evaluation
+We quantitatively measure the performance of Kandinsky 2.1 on the COCO_30k dataset in zero-shot mode. The table below reports FID (lower is better).
+
+FID metric values for generative models on COCO_30k
+| Model | FID (30k) |
+|:------|----:|
+| eDiff-I (2022) | 6.95 |
+| Imagen (2022) | 7.27 |
+| Kandinsky 2.1 (2023) | 8.21 |
+| Stable Diffusion 2.1 (2022) | 8.59 |
+| GigaGAN, 512x512 (2023) | 9.09 |
+| DALL-E 2 (2022) | 10.39 |
+| GLIDE (2022) | 12.24 |
+| Kandinsky 1.0 (2022) | 15.40 |
+| DALL-E (2021) | 17.89 |
+| Kandinsky 2.0 (2022) | 20.00 |
+| GLIGEN (2022) | 21.04 |
+
+For more information, please refer to the upcoming technical report.
+
+## BibTex
+If you find this repository useful in your research, please cite:
+```
+@misc{kandinsky 2.1,
+  title         = {kandinsky 2.1},
+  author        = {Arseniy Shakhmatov, Anton Razzhigaev, Aleksandr Nikolich, Vladimir Arkhipkin, Igor Pavlov, Andrey Kuznetsov, Denis Dimitrov},
+  year          = {2023},
+  howpublished  = {},
+}
+```

ddpm_scheduler/scheduler_config.json

ADDED

@@ -0,0 +1,16 @@
+{
+  "_class_name": "DDPMScheduler",
+  "_diffusers_version": "0.18.0.dev0",
+  "beta_end": 0.012,
+  "beta_schedule": "linear",
+  "beta_start": 0.00085,
+  "clip_sample": false,
+  "clip_sample_range": 2.0,
+  "dynamic_thresholding_ratio": 0.995,
+  "num_train_timesteps": 1000,
+  "prediction_type": "epsilon",
+  "sample_max_value": 2.0,
+  "thresholding": true,
+  "trained_betas": null,
+  "variance_type": "learned_range"
+}
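
The `beta_start`, `beta_end`, `beta_schedule` and `num_train_timesteps` values above define the noise schedule. A minimal sketch of what a `"linear"` schedule amounts to, assuming evenly spaced betas between the two endpoints, is shown below in plain Python. The helper names are hypothetical and this is not the scheduler's own code.

```python
def linear_betas(beta_start: float, beta_end: float, num_steps: int):
    """Evenly spaced noise variances from beta_start to beta_end."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def alphas_cumprod(betas):
    """Running product of (1 - beta): the fraction of signal kept at each step."""
    out, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        out.append(running)
    return out


# Values taken from the scheduler config.
betas = linear_betas(0.00085, 0.012, 1000)
acp = alphas_cumprod(betas)
print(acp[0], acp[-1])
```

The cumulative product starts close to 1 (almost no noise) and decays toward 0 at the last training timestep, which is what lets sampling start from nearly pure noise.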

model_index.json

ADDED

@@ -0,0 +1,24 @@
+{
+  "_class_name": "KandinskyPipeline",
+  "_diffusers_version": "0.17.0.dev0",
+  "text_encoder": [
+    "kandinsky",
+    "MultilingualCLIP"
+  ],
+  "tokenizer": [
+    "transformers",
+    "XLMRobertaTokenizerFast"
+  ],
+  "scheduler": [
+    "diffusers",
+    "DDIMScheduler"
+  ],
+  "unet": [
+    "diffusers",
+    "UNet2DConditionModel"
+  ],
+  "movq": [
+    "diffusers",
+    "VQModel"
+  ]
+}
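
`model_index.json` maps each pipeline component to a pair of library (or module) name and class name, plus a few underscore-prefixed metadata keys. The sketch below parses a copy of the file with the standard `json` module and lists the components. `list_components` is a hypothetical helper, not a diffusers function.

```python
import json

# Contents of model_index.json as added in this commit.
MODEL_INDEX = """
{
  "_class_name": "KandinskyPipeline",
  "_diffusers_version": "0.17.0.dev0",
  "text_encoder": ["kandinsky", "MultilingualCLIP"],
  "tokenizer": ["transformers", "XLMRobertaTokenizerFast"],
  "scheduler": ["diffusers", "DDIMScheduler"],
  "unet": ["diffusers", "UNet2DConditionModel"],
  "movq": ["diffusers", "VQModel"]
}
"""


def list_components(index_text: str) -> dict:
    """Return {component_name: (library, class_name)}, skipping metadata keys."""
    index = json.loads(index_text)
    return {
        name: tuple(value)
        for name, value in index.items()
        if not name.startswith("_")
    }


components = list_components(MODEL_INDEX)
for name, (library, class_name) in components.items():
    print(f"{name}: {library}.{class_name}")
```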

movq/config.json

ADDED

@@ -0,0 +1,33 @@
+{
+  "_class_name": "VQModel",
+  "_diffusers_version": "0.17.0.dev0",
+  "act_fn": "silu",
+  "block_out_channels": [
+    128,
+    256,
+    256,
+    512
+  ],
+  "down_block_types": [
+    "DownEncoderBlock2D",
+    "DownEncoderBlock2D",
+    "DownEncoderBlock2D",
+    "AttnDownEncoderBlock2D"
+  ],
+  "in_channels": 3,
+  "latent_channels": 4,
+  "layers_per_block": 2,
+  "norm_num_groups": 32,
+  "norm_type": "spatial",
+  "num_vq_embeddings": 16384,
+  "out_channels": 3,
+  "sample_size": 32,
+  "scaling_factor": 0.18215,
+  "up_block_types": [
+    "AttnUpDecoderBlock2D",
+    "UpDecoderBlock2D",
+    "UpDecoderBlock2D",
+    "UpDecoderBlock2D"
+  ],
+  "vq_embed_dim": 4
+}
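
The MoVQ config lists four encoder stages and four latent channels. Assuming every stage after the first halves the spatial resolution (so the overall factor is `2 ** (4 - 1) = 8`), the latent shape for a given image size can be sketched as follows. `latent_shape` is a hypothetical helper and the divisibility check is a simplification.

```python
# The two fields of movq/config.json that matter for this calculation.
MOVQ_CONFIG = {
    "block_out_channels": [128, 256, 256, 512],
    "latent_channels": 4,
}


def latent_shape(height: int, width: int, config: dict = MOVQ_CONFIG):
    """Return (channels, latent_height, latent_width) for an image size."""
    # Assumption: each stage after the first downsamples by a factor of 2.
    scale = 2 ** (len(config["block_out_channels"]) - 1)
    if height % scale or width % scale:
        raise ValueError(f"height and width must be multiples of {scale}")
    return (config["latent_channels"], height // scale, width // scale)


print(latent_shape(768, 768))
```

Under that assumption, the 768x768 images requested in the README examples correspond to 4-channel latents of 96x96, which is the tensor the UNet denoises before MoVQ decodes it.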

scheduler/scheduler_config.json

ADDED

@@ -0,0 +1,13 @@
+{
+  "_class_name": "DDIMScheduler",
+  "_diffusers_version": "0.17.0.dev0",
+  "num_train_timesteps": 1000,
+  "beta_schedule": "linear",
+  "beta_start": 0.00085,
+  "beta_end": 0.012,
+  "clip_sample": false,
+  "set_alpha_to_one": false,
+  "steps_offset": 1,
+  "prediction_type": "epsilon",
+  "thresholding": false
+}
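
The `steps_offset` value shifts the timesteps that are visited at inference time. The sketch below assumes evenly spaced timesteps counted from zero ("leading" spacing, which this config does not state explicitly) and a hypothetical choice of 50 inference steps. `leading_timesteps` is an illustrative helper, not the scheduler's API.

```python
def leading_timesteps(num_train_timesteps: int, num_inference_steps: int, steps_offset: int):
    """Evenly spaced timesteps in descending order, shifted by steps_offset."""
    step_ratio = num_train_timesteps // num_inference_steps
    return [
        i * step_ratio + steps_offset
        for i in reversed(range(num_inference_steps))
    ]


timesteps = leading_timesteps(1000, 50, steps_offset=1)
print(timesteps[:3], "...", timesteps[-3:])
```

With an offset of 1, sampling under these assumptions ends at timestep 1 rather than 0.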

text_encoder/config.json

ADDED

@@ -0,0 +1,27 @@
+{
+  "architectures": [
+    "MultilingualCLIP"
+  ],
+  "attention_probs_dropout_prob": 0.1,
+  "bos_token_id": 0,
+  "eos_token_id": 2,
+  "hidden_act": "gelu",
+  "hidden_dropout_prob": 0.1,
+  "hidden_size": 1024,
+  "initializer_range": 0.02,
+  "intermediate_size": 4096,
+  "layer_norm_eps": 1e-05,
+  "max_position_embeddings": 514,
+  "model_type": "xlm-roberta",
+  "num_attention_heads": 16,
+  "num_hidden_layers": 24,
+  "output_past": true,
+  "pad_token_id": 1,
+  "position_embedding_type": "absolute",
+  "transformers_version": "4.17.0.dev0",
+  "type_vocab_size": 1,
+  "use_cache": true,
+  "vocab_size": 250002,
+  "numDims": 768,
+  "transformerDimensions": 1024
+}
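
A few dimensions can be read straight off the text encoder config. The sketch below derives the per-head attention width from `hidden_size` and `num_attention_heads`, and reads `transformerDimensions` and `numDims` as the input and output sizes of a final linear projection. That reading is an assumption based on the key names, and both helper functions are hypothetical.

```python
# Selected fields from text_encoder/config.json.
TEXT_ENCODER_CONFIG = {
    "hidden_size": 1024,
    "num_attention_heads": 16,
    "numDims": 768,
    "transformerDimensions": 1024,
}


def head_dim(config: dict) -> int:
    """Width of each attention head in the transformer."""
    hidden, heads = config["hidden_size"], config["num_attention_heads"]
    if hidden % heads:
        raise ValueError("hidden_size must be divisible by num_attention_heads")
    return hidden // heads


def projection_shape(config: dict) -> tuple:
    """(input, output) size of the assumed final linear projection."""
    return (config["transformerDimensions"], config["numDims"])


print(head_dim(TEXT_ENCODER_CONFIG))
print(projection_shape(TEXT_ENCODER_CONFIG))
```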

tokenizer/special_tokens_map.json

ADDED

@@ -0,0 +1,15 @@
+{
+  "bos_token": "<s>",
+  "cls_token": "<s>",
+  "eos_token": "</s>",
+  "mask_token": {
+    "content": "<mask>",
+    "lstrip": true,
+    "normalized": false,
+    "rstrip": false,
+    "single_word": false
+  },
+  "pad_token": "<pad>",
+  "sep_token": "</s>",
+  "unk_token": "<unk>"
+}

tokenizer/tokenizer.json

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:11f3aa31ab2b0fa69e0e7f941f6badc4efd31066fb5221669ba55c48f5e6752a
+size 18082828
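
The three lines added for `tokenizer/tokenizer.json` are a Git LFS pointer, not the tokenizer itself: they record the spec version, the SHA-256 of the real file, and its size in bytes. A small sketch of parsing such a pointer is shown below. `parse_lfs_pointer` is a hypothetical helper written for this illustration.

```python
# The pointer text as it appears in this commit.
POINTER = """version https://git-lfs.github.com/spec/v1
oid sha256:11f3aa31ab2b0fa69e0e7f941f6badc4efd31066fb5221669ba55c48f5e6752a
size 18082828
"""


def parse_lfs_pointer(text: str) -> dict:
    """Split a Git LFS pointer file into its key/value fields."""
    fields = dict(line.split(" ", 1) for line in text.splitlines() if line)
    hash_algo, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "hash_algo": hash_algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


info = parse_lfs_pointer(POINTER)
print(f"{info['size'] / 1e6:.1f} MB, {info['hash_algo']}")
```

The size field shows the actual tokenizer file is roughly 18 MB, which is consistent with the `.gitattributes` change that moves it into LFS.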

tokenizer/tokenizer_config.json

ADDED

@@ -0,0 +1,19 @@
+{
+  "bos_token": "<s>",
+  "clean_up_tokenization_spaces": true,
+  "cls_token": "<s>",
+  "eos_token": "</s>",
+  "mask_token": {
+    "__type": "AddedToken",
+    "content": "<mask>",
+    "lstrip": true,
+    "normalized": true,
+    "rstrip": false,
+    "single_word": false
+  },
+  "model_max_length": 512,
+  "pad_token": "<pad>",
+  "sep_token": "</s>",
+  "tokenizer_class": "XLMRobertaTokenizer",
+  "unk_token": "<unk>"
+}

unet/config.json

ADDED

@@ -0,0 +1,61 @@
+{
+  "_class_name": "UNet2DConditionModel",
+  "_diffusers_version": "0.17.0.dev0",
+  "act_fn": "silu",
+  "addition_embed_type": "text_image",
+  "addition_embed_type_num_heads": 64,
+  "attention_head_dim": 64,
+  "block_out_channels": [
+    384,
+    768,
+    1152,
+    1536
+  ],
+  "center_input_sample": false,
+  "class_embed_type": null,
+  "class_embeddings_concat": false,
+  "conv_in_kernel": 3,
+  "conv_out_kernel": 3,
+  "cross_attention_dim": 768,
+  "cross_attention_norm": null,
+  "down_block_types": [
+    "ResnetDownsampleBlock2D",
+    "SimpleCrossAttnDownBlock2D",
+    "SimpleCrossAttnDownBlock2D",
+    "SimpleCrossAttnDownBlock2D"
+  ],
+  "downsample_padding": 1,
+  "dual_cross_attention": false,
+  "encoder_hid_dim": 1024,
+  "encoder_hid_dim_type": "text_image_proj",
+  "flip_sin_to_cos": true,
+  "freq_shift": 0,
+  "in_channels": 4,
+  "layers_per_block": 3,
+  "mid_block_only_cross_attention": null,
+  "mid_block_scale_factor": 1,
+  "mid_block_type": "UNetMidBlock2DSimpleCrossAttn",
+  "norm_eps": 1e-05,
+  "norm_num_groups": 32,
+  "num_class_embeds": null,
+  "only_cross_attention": false,
+  "out_channels": 8,
+  "projection_class_embeddings_input_dim": null,
+  "resnet_out_scale_factor": 1.0,
+  "resnet_skip_time_act": false,
+  "resnet_time_scale_shift": "scale_shift",
+  "sample_size": 64,
+  "time_cond_proj_dim": null,
+  "time_embedding_act_fn": null,
+  "time_embedding_dim": null,
+  "time_embedding_type": "positional",
+  "timestep_post_act": null,
+  "up_block_types": [
+    "SimpleCrossAttnUpBlock2D",
+    "SimpleCrossAttnUpBlock2D",
+    "SimpleCrossAttnUpBlock2D",
+    "ResnetUpsampleBlock2D"
+  ],
+  "upcast_attention": false,
+  "use_linear_projection": false
+}
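
The UNet config has `in_channels: 4` but `out_channels: 8`. Together with `variance_type: "learned_range"` in the DDPM scheduler config, this suggests that the network emits a noise prediction and a variance prediction side by side. The sketch below shows the corresponding split along the channel axis, using a plain list of channel labels in place of a tensor. The interpretation and the helper name `split_prediction` are assumptions, not something this commit states.

```python
def split_prediction(channels: list, latent_channels: int):
    """Split a UNet output into (noise prediction, variance prediction)."""
    if len(channels) != 2 * latent_channels:
        raise ValueError("expected twice as many output channels as latent channels")
    return channels[:latent_channels], channels[latent_channels:]


# Stand-in for the 8 output channels of a single prediction.
unet_output = [f"ch{i}" for i in range(8)]
noise_pred, variance_pred = split_prediction(unet_output, latent_channels=4)
print(noise_pred)
print(variance_pred)
```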