先坤

committed on

Commit

•

9ee1a60

1

Parent(s):

ee686c1

update

README.md

CHANGED

|

@@ -7,13 +7,48 @@ tags:

|

|

| 7 |

- Reinforcement Learning

|

| 8 |

- Vehicle Routing Problem

|

| 9 |

---

|

| 10 |

-

with reinforcement learning (RL).

|

|

@@ -34,13 +69,16 @@ pip install torch==1.12.1+cu113 torchvision==0.13.1+cu113 torchaudio==0.12.1 --e

|

|

| 34 |

|

| 35 |

1. Training data

|

| 36 |


| 37 |

2. Start training

|

| 38 |

```bash

|

| 39 |

python train.py

|

| 40 |

```

|

| 41 |

|

| 42 |

-

Here is how to use this model to get the features of a given text in PyTorch:

|

| 43 |

-

|

| 44 |

### Evaluation

|

| 45 |

|

| 46 |

```python

|

|

|

|

| 7 |

- Reinforcement Learning

|

| 8 |

- Vehicle Routing Problem

|

| 9 |

---

|

| 10 |

+

|

| 11 |

+

|

| 12 |

+

# GreedRL

|

| 13 |

+

|

| 14 |

+

# Introduction

|

| 15 |

+

|

| 16 |

+

|

| 17 |

+

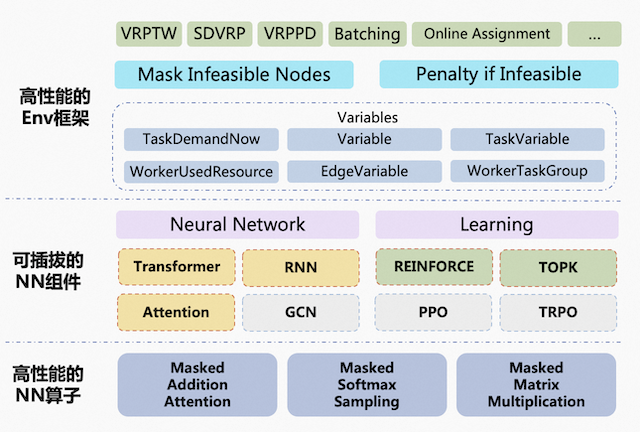

## Architecture design

|

| 18 |

+

The entire architecture is divided into three layers:

|

| 19 |

+

|

| 20 |

+

* High-performance Env framework

|

| 21 |

+

|

| 22 |

+

The constraints and optimization objectives for the problems to be solved are defined in the RL Env.

|

| 23 |

+

For both performance and ease of use, the Env framework provides two implementations: one based on PyTorch and one based on CUDA C++.

|

| 24 |

+

To make problems easier to define, the framework abstracts the environment's state into variables; once the user declares a variable, the framework generates it automatically. Constraints and optimization objectives can then refer directly to the declared variables.

|

| 25 |

+

Currently, various VRP variants such as CVRP, VRPTW and PDPTW, as well as problems such as Batching and Online Assignment, are supported.

|

| 26 |

+

|

| 27 |

+

* Pluggable NN components

|

| 28 |

+

|

| 29 |

+

The framework provides a set of neural network components, and developers can also implement their own custom components.

|

| 30 |

+

|

| 31 |

+

* High-performance NN operators

|

| 32 |

+

|

| 33 |

+

To maximize performance, the framework implements some high-performance operators specifically for operations research (OR) scenarios, such as masked addition attention and masked softmax sampling, to replace the corresponding PyTorch operators.
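To make the idea of masked softmax sampling concrete, here is a minimal pure-Python sketch. It is not the framework's CUDA operator; the function names (`masked_softmax`, `sample_masked`, `capacity_mask`) are hypothetical and chosen only for illustration. The mask marks which actions are feasible, for example customers whose demand still fits in the vehicle, and infeasible actions receive probability zero before sampling.

```python
import math
import random


def masked_softmax(scores, mask):
    """Softmax over entries where mask is True; masked-out entries get probability 0."""
    allowed = [s for s, m in zip(scores, mask) if m]
    if not allowed:
        raise ValueError("at least one action must be feasible")
    peak = max(allowed)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) if m else 0.0 for s, m in zip(scores, mask)]
    total = sum(exps)
    return [e / total for e in exps]


def sample_masked(scores, mask, rng=random):
    """Draw one action index according to the masked softmax distribution."""
    probs = masked_softmax(scores, mask)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


def capacity_mask(demands, visited, remaining):
    """Hypothetical CVRP-style mask: unvisited nodes whose demand fits the remaining capacity."""
    return [(not v) and d <= remaining for d, v in zip(demands, visited)]
```

A typical decoding step would build the mask from the current state, then call `sample_masked(scores, mask)` to pick the next node. A fused GPU operator would do the same work in one kernel instead of separate masking, softmax, and sampling passes.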

|

| 34 |

+

|

| 35 |

+

|

| 36 |

+

|

| 37 |

+

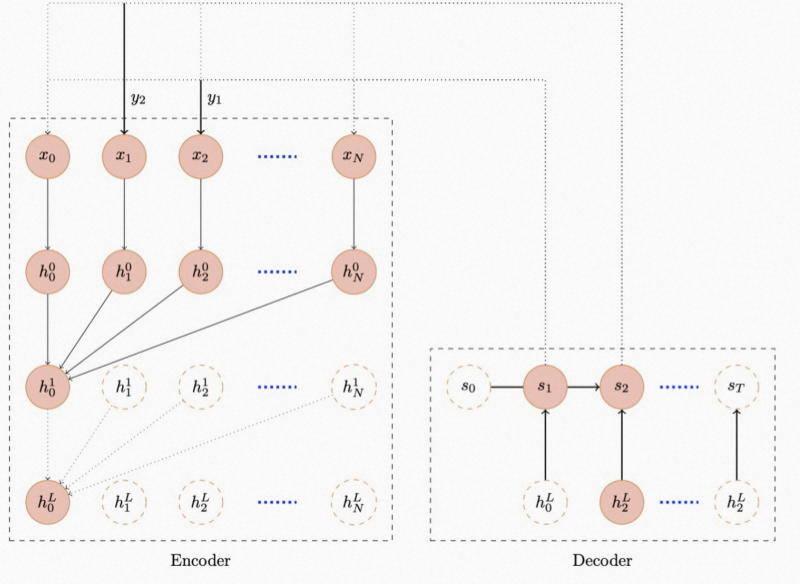

## Network design

|

| 38 |

+

The network adopts the seq2seq architecture commonly used in NLP, with a Transformer as the encoder and an RNN as the decoder, as shown in the diagram below.
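As a rough illustration of this encoder/decoder split, the sketch below pairs a standard PyTorch `nn.TransformerEncoder` with an `nn.GRUCell` decoder that scores nodes by dot product. It is an assumption-laden toy, not GreedRL's actual network: the class name `Seq2SeqRouter`, the layer sizes, the 3-feature node input, and the pointer-style scoring are all hypothetical.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class Seq2SeqRouter(nn.Module):
    """Hypothetical sketch: Transformer encoder + GRU decoder with pointer-style scoring."""

    def __init__(self, node_dim=3, hidden=64, heads=4, layers=2):
        super().__init__()
        self.embed = nn.Linear(node_dim, hidden)
        enc_layer = nn.TransformerEncoderLayer(
            d_model=hidden, nhead=heads, dim_feedforward=4 * hidden, batch_first=True
        )
        self.encoder = nn.TransformerEncoder(enc_layer, num_layers=layers)
        self.decoder = nn.GRUCell(hidden, hidden)

    def forward(self, nodes, steps):
        # nodes: (batch, n_nodes, node_dim); node 0 is treated as the depot.
        # steps must not exceed n_nodes - 1, or no unvisited node would remain.
        batch, n_nodes, _ = nodes.shape
        enc = self.encoder(self.embed(nodes))        # (batch, n_nodes, hidden)
        state = enc.mean(dim=1)                      # initial decoder state
        current = enc[:, 0]                          # start from the depot embedding
        visited = torch.zeros(batch, n_nodes, dtype=torch.bool, device=nodes.device)
        visited[:, 0] = True
        rows = torch.arange(batch, device=nodes.device)
        route = []
        for _ in range(steps):
            state = self.decoder(current, state)     # one RNN step
            scores = torch.einsum("bnh,bh->bn", enc, state)
            scores = scores.masked_fill(visited, float("-inf"))
            probs = torch.softmax(scores, dim=-1)
            choice = torch.multinomial(probs, 1).squeeze(1)
            visited = visited | F.one_hot(choice, n_nodes).bool()
            current = enc[rows, choice]
            route.append(choice)
        return torch.stack(route, dim=1)             # (batch, steps)
```

For example, `Seq2SeqRouter()(torch.rand(2, 6, 3), steps=5)` returns a `(2, 5)` tensor in which each row visits the five non-depot nodes exactly once.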

|

| 39 |

+

|

| 40 |

+

|

| 41 |

+

|

| 42 |

+

## 🏆Award

|

| 43 |

+

|

| 44 |

|

| 45 |

# GreedRL-VRP-pretrained model

|

| 46 |

|

| 47 |

## Model description

|

| 48 |

|

| 49 |

|

| 50 |

+

|

| 51 |

+

|

| 52 |

## Intended uses & limitations

|

| 53 |

|

| 54 |

You can use this model to solve vehicle routing problems (VRPs) with reinforcement learning (RL).

|

|

|

|

| 69 |

|

| 70 |

1. Training data

|

| 71 |

|

| 72 |

+

We use generated data for the training phase: the customer and depot locations are sampled uniformly at random from the unit square [0,1] × [0,1].

|

| 73 |

+

|

| 74 |

+

For the Capacitated VRP (CVRP), we assume that the demand of each node is a discrete number in {1,...,9}, chosen uniformly at random.

|

| 75 |

+

|

| 76 |

+

|

| 77 |

2. Start training

|

| 78 |

```bash

|

| 79 |

python train.py

|

| 80 |

```
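The training data described in step 1 can be sketched with the standard library alone. This is an illustrative generator under stated assumptions, not the data pipeline used by `train.py`: the function name `generate_cvrp_instance` and the returned dictionary layout are hypothetical.

```python
import random


def generate_cvrp_instance(num_customers, rng=None):
    """Sample one random CVRP instance as described in the training-data step.

    Locations are drawn uniformly from the unit square (random() yields values
    in [0, 1)), and each customer demand is a uniform integer in {1, ..., 9}.
    """
    rng = rng or random.Random()
    depot = (rng.random(), rng.random())
    customers = [(rng.random(), rng.random()) for _ in range(num_customers)]
    demands = [rng.randint(1, 9) for _ in range(num_customers)]  # inclusive bounds
    return {"depot": depot, "customers": customers, "demands": demands}
```

Passing a seeded `random.Random` makes instances reproducible, which helps when comparing runs on the same data.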

|

| 81 |

|


| 82 |

### Evaluation

|

| 83 |

|

| 84 |

```python

|

images/GREEDRL-Framwork.png

ADDED

|

images/GREEDRL-Logo-Original-320x320.png

ADDED

|

images/GREEDRL-Logo-Original-640x640.png

DELETED

|

Binary file (41.2 kB)

|

|

|

images/GREEDRL-Network.png

ADDED

|