Upload folder using huggingface_hub

- .gitattributes +1 -0

- README.md +259 -0

- labse.Q2_K.gguf +3 -0

- labse.Q3_K_L.gguf +3 -0

- labse.Q3_K_M.gguf +3 -0

- labse.Q3_K_S.gguf +3 -0

- labse.Q4_0.gguf +3 -0

- labse.Q4_K_M.gguf +3 -0

- labse.Q4_K_S.gguf +3 -0

- labse.Q5_0.gguf +3 -0

- labse.Q5_K_M.gguf +3 -0

- labse.Q5_K_S.gguf +3 -0

- labse.Q6_K.gguf +3 -0

- labse.Q8_0.gguf +3 -0

- labse_fp16.gguf +3 -0

- labse_fp32.gguf +3 -0

.gitattributes

CHANGED

```diff
@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
+*.gguf filter=lfs diff=lfs merge=lfs -text
```
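The added `*.gguf` rule is the line `git lfs track "*.gguf"` would write, and it is what routes the model files through Git LFS. To get a rough idea of which paths a set of attribute lines would catch, the sketch below matches slash-free patterns against a path's basename with Python's `fnmatch`. This only approximates Git's own gitattributes matching: it ignores slash-containing patterns such as `saved_model/**/*`, negation, and macro attributes. The helper names are illustrative, not part of any Git or Hugging Face tool.

```python
import fnmatch
import posixpath
from typing import Iterable, List

# The three context lines and the added rule, copied from the diff above.
GITATTRIBUTES = """\
*.zip filter=lfs diff=lfs merge=lfs -text
*.zst filter=lfs diff=lfs merge=lfs -text
*tfevents* filter=lfs diff=lfs merge=lfs -text
*.gguf filter=lfs diff=lfs merge=lfs -text
"""


def lfs_patterns(lines: Iterable[str]) -> List[str]:
    """Return the patterns whose attribute list contains filter=lfs."""
    patterns = []
    for line in lines:
        parts = line.split()
        if not parts or parts[0].startswith("#"):
            continue  # skip blank lines and comments
        if "filter=lfs" in parts[1:]:
            patterns.append(parts[0])
    return patterns


def is_lfs_tracked(path: str, patterns: Iterable[str]) -> bool:
    """Rough check: only slash-free patterns, matched against the basename."""
    name = posixpath.basename(path)
    return any("/" not in p and fnmatch.fnmatchcase(name, p) for p in patterns)


if __name__ == "__main__":
    pats = lfs_patterns(GITATTRIBUTES.splitlines())
    for path in ["labse.Q4_K_M.gguf", "README.md", "runs/events.out.tfevents.1"]:
        print(path, is_lfs_tracked(path, pats))
```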

README.md

ADDED

|

@@ -0,0 +1,259 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

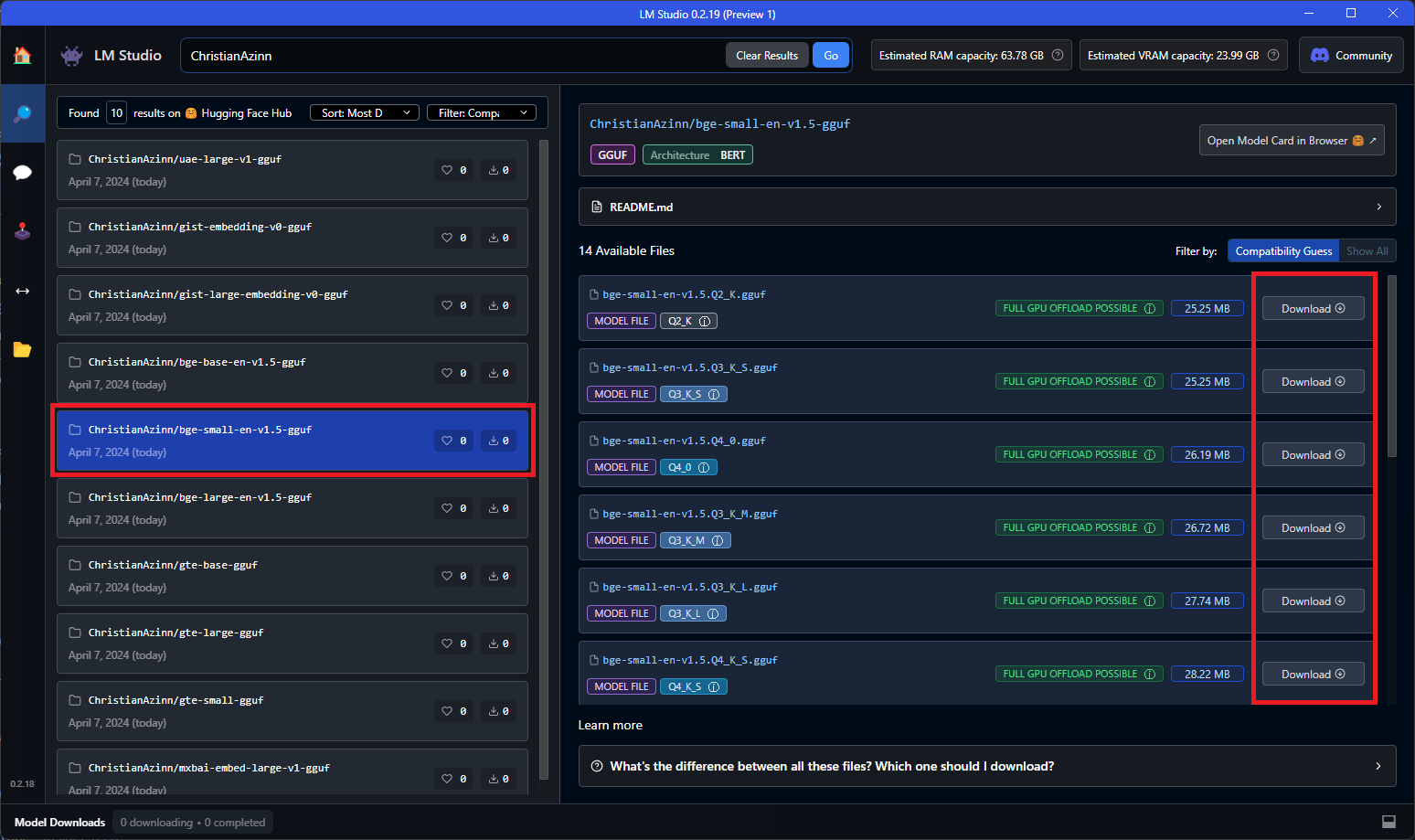

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

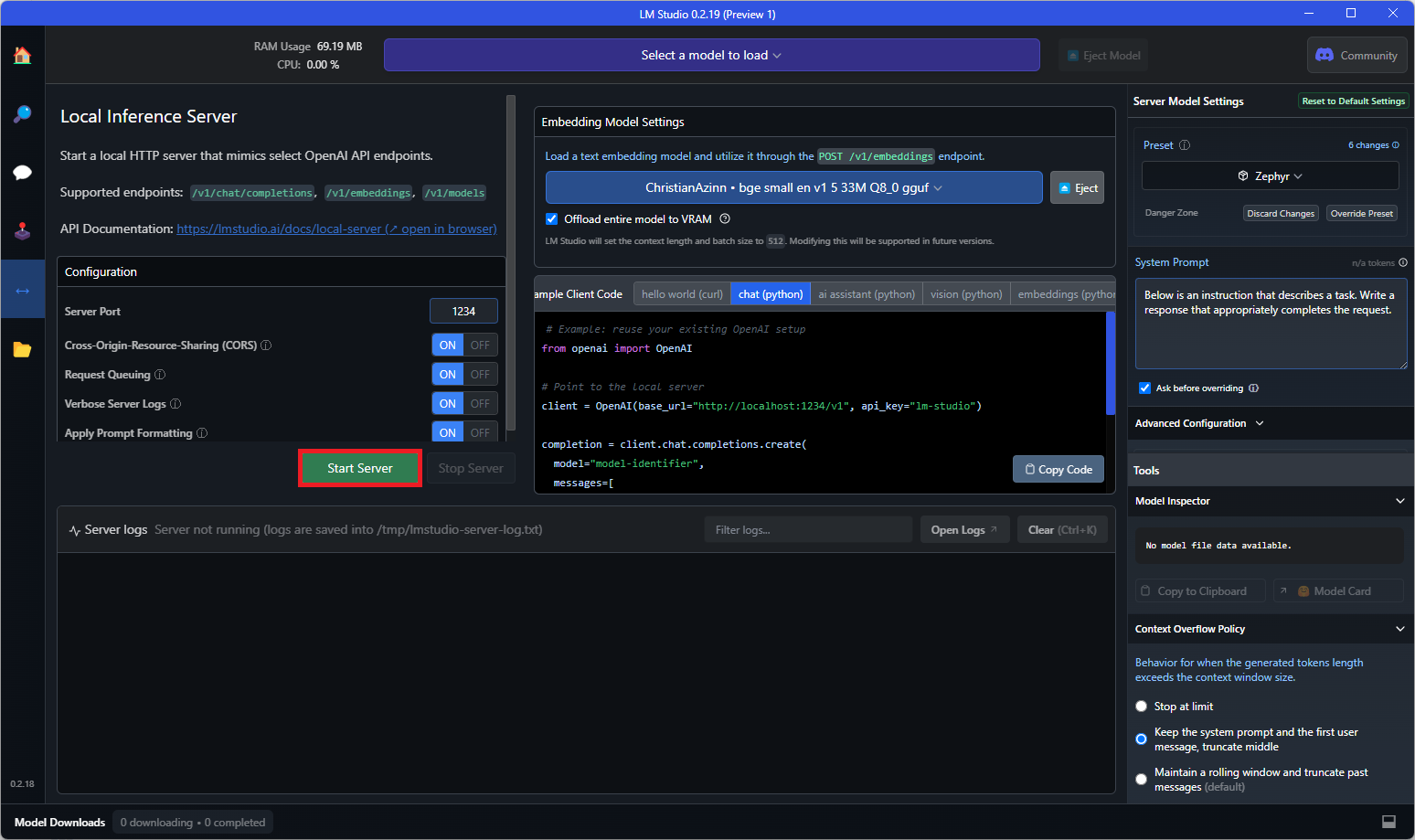

|

|

|

|

|

|

|

|

|

|

|

|

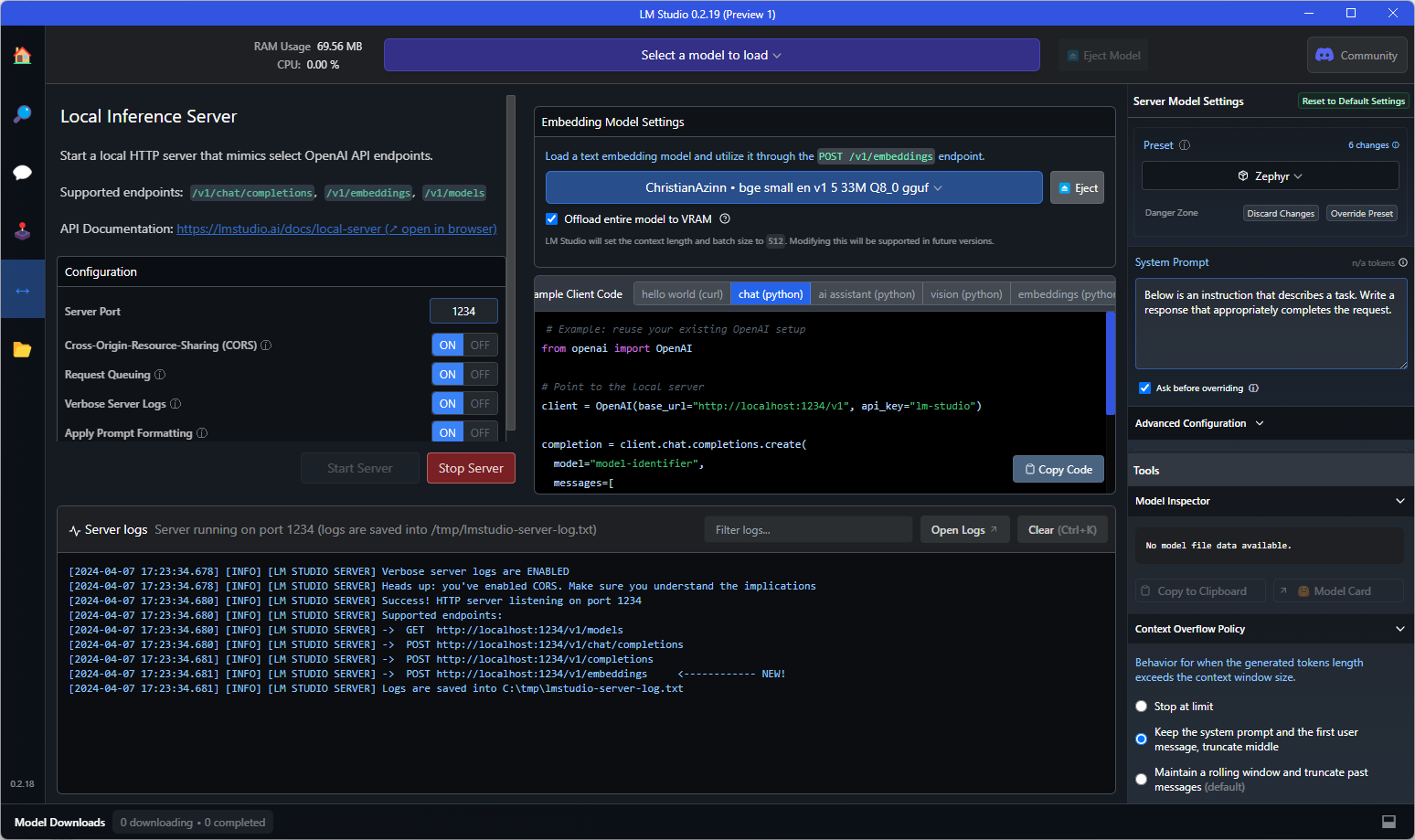

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

---
base_model: sentence-transformers/labse
inference: false
language:
- multilingual
- af
- sq
- am
- ar
- hy
- as
- az
- eu
- be
- bn
- bs
- bg
- my
- ca
- ceb
- zh
- co
- hr
- cs
- da
- nl
- en
- eo
- et
- fi
- fr
- fy
- gl
- ka
- de
- el
- gu
- ht
- ha
- haw
- he
- hi
- hmn
- hu
- is
- ig
- id
- ga
- it
- ja
- jv
- kn
- kk
- km
- rw
- ko
- ku
- ky
- lo
- la
- lv
- lt
- lb
- mk
- mg
- ms
- ml
- mt
- mi
- mr
- mn
- ne
- no
- ny
- or
- fa
- pl
- pt
- pa
- ro
- ru
- sm
- gd
- sr
- st
- sn
- si
- sk
- sl
- so
- es
- su
- sw
- sv
- tl
- tg
- ta
- tt
- te
- th
- bo
- tr
- tk
- ug
- uk
- ur
- uz
- vi
- cy
- wo
- xh
- yi
- yo
- zu
license: apache-2.0
model_creator: sentence-transformers
model_name: labse
model_type: bert
quantized_by: ChristianAzinn
library_name: sentence-transformers
pipeline_tag: sentence-similarity
tags:
- gguf
- mteb
- Sentence Transformers
- sentence-similarity
- sentence-transformers
- feature-extraction
---

# labse-gguf

Model creator: [sentence-transformers](https://huggingface.co/sentence-transformers)

Original model: [labse](https://huggingface.co/sentence-transformers/labse)

## Original Description

The language-agnostic BERT sentence embedding (LaBSE) encodes text into high-dimensional vectors. The model is trained and optimized to produce similar representations exclusively for bilingual sentence pairs that are translations of each other, so it can be used to mine translations of a sentence from a larger corpus.
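As a rough illustration of translation mining, the sketch below pairs each source sentence with its most similar target sentence by cosine similarity. It assumes you already have one embedding vector per sentence (for example, produced by one of the GGUF files in this repo). The toy 3-dimensional vectors stand in for real 768-dimensional LaBSE embeddings, and the `min_score` threshold is a made-up value for illustration. Production mining pipelines often use margin-based scoring rather than raw cosine similarity, so treat this as the simplest possible baseline.

```python
import math
from typing import List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def mine_translations(
    src: List[Sequence[float]],
    tgt: List[Sequence[float]],
    min_score: float = 0.5,  # hypothetical threshold; tune on your own data
) -> List[Tuple[int, int, float]]:
    """For each source embedding, return (src_idx, best_tgt_idx, score)
    whenever the best cosine similarity reaches min_score."""
    if not tgt:
        return []
    pairs = []
    for i, s in enumerate(src):
        scores = [cosine(s, t) for t in tgt]
        j = max(range(len(scores)), key=scores.__getitem__)
        if scores[j] >= min_score:
            pairs.append((i, j, scores[j]))
    return pairs


if __name__ == "__main__":
    # Toy vectors standing in for real sentence embeddings.
    english = [[1.0, 0.1, 0.0], [0.0, 1.0, 0.2]]
    german = [[0.1, 0.9, 0.3], [0.9, 0.2, 0.1], [0.0, 0.0, 1.0]]
    for i, j, score in mine_translations(english, german):
        print(f"src {i} -> tgt {j} (cosine {score:.3f})")
```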

## Description

This repo contains GGUF format files for the labse embedding model.

These files were converted and quantized with llama.cpp [PR 5500](https://github.com/ggerganov/llama.cpp/pull/5500), commit [34aa045de](https://github.com/ggerganov/llama.cpp/pull/5500/commits/34aa045de44271ff7ad42858c75739303b8dc6eb), on a consumer RTX 4090.

This model supports up to CONTEXTLENGTH tokens of context.
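Since every model file here is in GGUF format, one quick sanity check after downloading is to read the fixed-size header at the start of the file. The sketch below assumes the little-endian GGUF layout used from format version 2 onward: the 4-byte magic `GGUF`, a `uint32` version, then two `uint64` counts (tensors and metadata key/value pairs). It does not parse the metadata itself. `read_gguf_header` and `GGUFHeader` are illustrative names, not part of llama.cpp or any GGUF library.

```python
import struct
from typing import NamedTuple


class GGUFHeader(NamedTuple):
    version: int
    tensor_count: int
    metadata_kv_count: int


def read_gguf_header(path: str) -> GGUFHeader:
    """Read the fixed-size GGUF header; assumes a little-endian file, version >= 2."""
    with open(path, "rb") as f:
        magic = f.read(4)
        if magic != b"GGUF":
            raise ValueError(f"{path}: not a GGUF file (magic bytes {magic!r})")
        # "<I" = little-endian uint32; "<QQ" = two little-endian uint64 values
        (version,) = struct.unpack("<I", f.read(4))
        if version < 2:
            raise ValueError(f"{path}: GGUF v{version} stores 32-bit counts; not handled here")
        tensor_count, metadata_kv_count = struct.unpack("<QQ", f.read(16))
    return GGUFHeader(version, tensor_count, metadata_kv_count)
```

For example, `read_gguf_header("labse.Q4_K_M.gguf")` on a downloaded file should return the header fields, or raise `ValueError` if the file is not GGUF (for instance, if only the LFS pointer text was fetched).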

## Compatibility

These files are compatible with [llama.cpp](https://github.com/ggerganov/llama.cpp) as of commit [4524290e8](https://github.com/ggerganov/llama.cpp/commit/4524290e87b8e107cc2b56e1251751546f4b9051), as well as [LM Studio](https://lmstudio.ai/) as of version 0.2.19.

# Meta-information

## Explanation of quantisation methods

<details>
<summary>Click to see details</summary>

The methods available are:

* GGML_TYPE_Q2_K - "type-1" 2-bit quantization in super-blocks containing 16 blocks, each block having 16 weights. Block scales and mins are quantized with 4 bits. This ends up effectively using 2.5625 bits per weight (bpw).
* GGML_TYPE_Q3_K - "type-0" 3-bit quantization in super-blocks containing 16 blocks, each block having 16 weights. Scales are quantized with 6 bits. This ends up using 3.4375 bpw.
* GGML_TYPE_Q4_K - "type-1" 4-bit quantization in super-blocks containing 8 blocks, each block having 32 weights. Scales and mins are quantized with 6 bits. This ends up using 4.5 bpw.
* GGML_TYPE_Q5_K - "type-1" 5-bit quantization. Same super-block structure as GGML_TYPE_Q4_K, resulting in 5.5 bpw.
* GGML_TYPE_Q6_K - "type-0" 6-bit quantization. Super-blocks with 16 blocks, each block having 16 weights. Scales are quantized with 8 bits. This ends up using 6.5625 bpw.

Refer to the Provided Files table below to see what files use which methods, and how.

</details>
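The bpw figures above follow from the super-block layout. Each super-block holds 256 weights (16 blocks of 16, or 8 blocks of 32), plus one quantized scale per block (and one min per block for the "type-1" formats), plus scale (and min) values stored once per super-block. The sketch below assumes those super-block values are fp16, which reproduces the quoted figures for Q3_K through Q6_K. Under the same accounting, Q2_K (which also stores an fp16 super-block scale and min) comes out at 2.625 bpw rather than the 2.5625 quoted above. The quoted figure appears to leave out one of the fp16 super-block values, so treat the per-format numbers as approximate.

```python
from fractions import Fraction


def bits_per_weight(
    weight_bits: int,
    n_blocks: int,
    block_size: int,
    scale_bits: int,
    has_min: bool,
    super_fp16_values: int,
) -> Fraction:
    """Effective bits per weight for one k-quant super-block.

    weight_bits       -- bits per quantized weight
    n_blocks          -- blocks per super-block
    block_size        -- weights per block
    scale_bits        -- bits for each block scale (and each block min, if present)
    has_min           -- True for "type-1" formats, which also store a min per block
    super_fp16_values -- fp16 values stored once per super-block (assumption)
    """
    n_weights = n_blocks * block_size
    per_block_meta = scale_bits * (2 if has_min else 1)
    total_bits = (
        n_weights * weight_bits
        + n_blocks * per_block_meta
        + super_fp16_values * 16
    )
    return Fraction(total_bits, n_weights)


# Layouts as described in the list above; the fp16 super-block counts are assumptions.
LAYOUTS = {
    "Q2_K": dict(weight_bits=2, n_blocks=16, block_size=16, scale_bits=4, has_min=True, super_fp16_values=2),
    "Q3_K": dict(weight_bits=3, n_blocks=16, block_size=16, scale_bits=6, has_min=False, super_fp16_values=1),
    "Q4_K": dict(weight_bits=4, n_blocks=8, block_size=32, scale_bits=6, has_min=True, super_fp16_values=2),
    "Q5_K": dict(weight_bits=5, n_blocks=8, block_size=32, scale_bits=6, has_min=True, super_fp16_values=2),
    "Q6_K": dict(weight_bits=6, n_blocks=16, block_size=16, scale_bits=8, has_min=False, super_fp16_values=1),
}

if __name__ == "__main__":
    for name, layout in LAYOUTS.items():
        print(f"{name}: {float(bits_per_weight(**layout))} bpw")
```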

## Provided Files

| Name | Quant method | Bits | Size | Use case |
| ---- | ---- | ---- | ---- | ----- |
| [labse.Q2_K.gguf](https://huggingface.co/ChristianAzinn/labse-gguf/blob/main/labse.Q2_K.gguf) | Q2_K | 2 | 364 MB | smallest, significant quality loss - not recommended for most purposes |
| [labse.Q3_K_S.gguf](https://huggingface.co/ChristianAzinn/labse-gguf/blob/main/labse.Q3_K_S.gguf) | Q3_K_S | 3 | 368 MB | very small, high quality loss |
| [labse.Q3_K_M.gguf](https://huggingface.co/ChristianAzinn/labse-gguf/blob/main/labse.Q3_K_M.gguf) | Q3_K_M | 3 | 374 MB | very small, high quality loss |
| [labse.Q3_K_L.gguf](https://huggingface.co/ChristianAzinn/labse-gguf/blob/main/labse.Q3_K_L.gguf) | Q3_K_L | 3 | 379 MB | small, substantial quality loss |
| [labse.Q4_0.gguf](https://huggingface.co/ChristianAzinn/labse-gguf/blob/main/labse.Q4_0.gguf) | Q4_0 | 4 | 379 MB | legacy; small, very high quality loss - prefer using Q3_K_M |
| [labse.Q4_K_S.gguf](https://huggingface.co/ChristianAzinn/labse-gguf/blob/main/labse.Q4_K_S.gguf) | Q4_K_S | 4 | 380 MB | small, greater quality loss |
| [labse.Q4_K_M.gguf](https://huggingface.co/ChristianAzinn/labse-gguf/blob/main/labse.Q4_K_M.gguf) | Q4_K_M | 4 | 384 MB | medium, balanced quality - recommended |
| [labse.Q5_0.gguf](https://huggingface.co/ChristianAzinn/labse-gguf/blob/main/labse.Q5_0.gguf) | Q5_0 | 5 | 390 MB | legacy; medium, balanced quality - prefer using Q4_K_M |
| [labse.Q5_K_S.gguf](https://huggingface.co/ChristianAzinn/labse-gguf/blob/main/labse.Q5_K_S.gguf) | Q5_K_S | 5 | 390 MB | large, low quality loss - recommended |
| [labse.Q5_K_M.gguf](https://huggingface.co/ChristianAzinn/labse-gguf/blob/main/labse.Q5_K_M.gguf) | Q5_K_M | 5 | 392 MB | large, very low quality loss - recommended |
| [labse.Q6_K.gguf](https://huggingface.co/ChristianAzinn/labse-gguf/blob/main/labse.Q6_K.gguf) | Q6_K | 6 | 401 MB | very large, extremely low quality loss |
| [labse.Q8_0.gguf](https://huggingface.co/ChristianAzinn/labse-gguf/blob/main/labse.Q8_0.gguf) | Q8_0 | 8 | 515 MB | very large, extremely low quality loss - recommended |
| [labse_fp16.gguf](https://huggingface.co/ChristianAzinn/labse-gguf/blob/main/labse_fp16.gguf) | fp16 | 16 | 955 MB | enormous, pretty much the original model - not recommended |
| [labse_fp32.gguf](https://huggingface.co/ChristianAzinn/labse-gguf/blob/main/labse_fp32.gguf) | fp32 | 32 | 1.89 GB | enormous, pretty much the original model - not recommended |
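If you are choosing a file under a fixed disk or memory budget, the table can be turned into a small lookup. The sketch below uses the exact byte sizes recorded in the Git LFS pointers of this commit, skips the two files the table marks as legacy, and returns the largest file that fits. "Largest that fits" is only a heuristic based on the table's note that larger files generally lose less quality, not a measured ranking. File size is also only a lower bound on the memory needed at run time. `largest_file_within` is a hypothetical helper, not part of any tool.

```python
from typing import Optional

# Byte sizes from the Git LFS pointers in this commit.
# labse.Q4_0.gguf and labse.Q5_0.gguf are omitted because the table marks them as legacy.
FILE_SIZES = {
    "labse.Q2_K.gguf": 363_606_656,
    "labse.Q3_K_S.gguf": 367_919_744,
    "labse.Q3_K_M.gguf": 374_002_304,
    "labse.Q3_K_L.gguf": 378_868_352,
    "labse.Q4_K_S.gguf": 380_379_776,
    "labse.Q4_K_M.gguf": 383_762_048,
    "labse.Q5_K_S.gguf": 389_816_960,
    "labse.Q5_K_M.gguf": 392_167_040,
    "labse.Q6_K.gguf": 401_097_344,
    "labse.Q8_0.gguf": 514_881_920,
    "labse_fp16.gguf": 954_551_808,
    "labse_fp32.gguf": 1_894_978_560,
}


def largest_file_within(budget_bytes: int) -> Optional[str]:
    """Return the largest listed file whose size is <= budget_bytes, or None if none fit."""
    fitting = [(size, name) for name, size in FILE_SIZES.items() if size <= budget_bytes]
    return max(fitting)[1] if fitting else None


if __name__ == "__main__":
    print(largest_file_within(600 * 1024**2))  # a 600 MiB budget
```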

# Examples

## Example Usage with `llama.cpp`

To compute a single embedding, build llama.cpp and run:

```shell
./embedding -ngl 99 -m [filepath-to-gguf].gguf -p 'search_query: What is TSNE?'
```

You can also submit a batch of texts to embed, as long as the total number of tokens does not exceed the context length. Only the first three embeddings are shown by the `embedding` example.

`texts.txt`:

```
search_query: What is TSNE?
search_query: Who is Laurens Van der Maaten?
```

Compute multiple embeddings:

```shell
./embedding -ngl 99 -m [filepath-to-gguf].gguf -f texts.txt
```
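To generate `texts.txt` from a Python list and run the `embedding` example on it, a small wrapper like the sketch below may help. It assumes the `./embedding` binary built above is in the current directory and that `-f` treats each line as one text, as the `texts.txt` example implies. `MODEL_PATH` is a placeholder that you must point at your downloaded file. The script does not check the context-length limit mentioned above, and the helper names are hypothetical.

```python
import subprocess
from pathlib import Path
from typing import List

MODEL_PATH = "labse.Q8_0.gguf"  # placeholder: point this at your downloaded GGUF file


def write_texts_file(texts: List[str], path: str = "texts.txt") -> Path:
    """Write one text per line; a text containing a newline would be split in two."""
    for t in texts:
        if "\n" in t:
            raise ValueError(f"text contains a newline: {t!r}")
    out = Path(path)
    out.write_text("\n".join(texts), encoding="utf-8")
    return out


def embedding_command(model_path: str, texts_path: str, ngl: int = 99) -> List[str]:
    """Build the same command line as the shell example above."""
    return ["./embedding", "-ngl", str(ngl), "-m", model_path, "-f", texts_path]


if __name__ == "__main__":
    path = write_texts_file([
        "search_query: What is TSNE?",
        "search_query: Who is Laurens Van der Maaten?",
    ])
    cmd = embedding_command(MODEL_PATH, str(path))
    if Path("./embedding").exists():
        subprocess.run(cmd, check=True)
    else:
        print("embedding binary not found; would run:", " ".join(cmd))
```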

## Example Usage with LM Studio

Download the 0.2.19 beta build: [Windows](https://releases.lmstudio.ai/windows/0.2.19/beta/LM-Studio-0.2.19-Setup-Preview-1.exe), [macOS (arm64)](https://releases.lmstudio.ai/mac/arm64/0.2.19/beta/LM-Studio-darwin-arm64-0.2.19-Preview-1.zip), [Linux](https://releases.lmstudio.ai/linux/0.2.19/beta/LM_Studio-0.2.19-Preview-1.AppImage).

Once installed, open the app. The home screen should look like this:

Search for "ChristianAzinn" in the main search bar, or go to the "Search" tab on the left menu and search for the name there.

Select your model from those that appear (this example uses `bge-small-en-v1.5-gguf`) and select which quantization you want to download. Since this model is fairly small, I recommend Q8_0, if not fp16/fp32. Generally, the lower you go in the list (or the bigger the number gets), the larger the file and the better the quality.

You will see a green checkmark and the word "Downloaded" once the model has successfully downloaded, which can take some time depending on your network speed.

Once the model has finished downloading, navigate to the "Local Server" tab on the left menu and open the loader for text embedding models. This loader does not appear before version 0.2.19, so make sure you downloaded the correct version.

Select the model you just downloaded from the dropdown to load it. You may need to adjust the configuration in the right-side menu, such as GPU offload, if the model doesn't fit entirely into VRAM.

All that's left to do is to hit the "Start Server" button:

If you see text like that shown below in the console, you're good to go! You can use this as a drop-in replacement for the OpenAI embeddings API in any application that requires it, or you can query the endpoint directly to test it out.

Example curl request to the API endpoint:

```shell
curl http://localhost:1234/v1/embeddings \
  -H "Content-Type: application/json" \
  -d '{
    "input": "Your text string goes here",
    "model": "model-identifier-here"
  }'
```
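The same request can be sent from Python using only the standard library. This is a sketch: it assumes the LM Studio server is running at `http://localhost:1234` as in the curl example and returns an OpenAI-style body in which `data[0]["embedding"]` holds the vector. `model-identifier-here` is the same placeholder as above. Comparing two embeddings with cosine similarity (here an English sentence and its German translation) is a natural next step for a model like LaBSE. The helper names are illustrative.

```python
import json
import math
import urllib.request
from typing import List, Sequence

ENDPOINT = "http://localhost:1234/v1/embeddings"  # as in the curl example
MODEL = "model-identifier-here"  # placeholder, exactly as in the curl example


def build_request(text: str, model: str = MODEL, url: str = ENDPOINT) -> urllib.request.Request:
    """Build the same POST request as the curl example."""
    body = json.dumps({"input": text, "model": model}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def embed(text: str, timeout: float = 30.0) -> List[float]:
    """Send one text and return its embedding (assumes an OpenAI-style response body)."""
    with urllib.request.urlopen(build_request(text), timeout=timeout) as resp:
        payload = json.load(resp)
    return payload["data"][0]["embedding"]


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


if __name__ == "__main__":
    try:
        en = embed("The cat sits on the mat.")
        de = embed("Die Katze sitzt auf der Matte.")
    except OSError as err:  # urllib.error.URLError is a subclass of OSError
        print(f"Could not reach {ENDPOINT}: {err}")
    else:
        print(f"cosine similarity: {cosine(en, de):.3f}")
```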

For more information, see the LM Studio [text embedding documentation](https://lmstudio.ai/docs/text-embeddings).

## Acknowledgements

Thanks to the LM Studio team and everyone else working on open-source AI.

This README is inspired by that of [nomic-embed-text-v1.5-gguf](https://huggingface.co/nomic-ai/nomic-embed-text-v1.5-gguf), another excellent embedding model, and by those of the legendary [TheBloke](https://huggingface.co/TheBloke).

labse.Q2_K.gguf

ADDED

```diff
@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:0df86ed0be2120d4148ed1764c4664ff20f5959d3d0ca50d8afcf1bc8cc0b007
+size 363606656
```

labse.Q3_K_L.gguf

ADDED

```diff
@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:7acfd8ec5303b7f22d7eb5e24d782a05f8e0d65f7b4d633de9eca1ede4d88395
+size 378868352
```

labse.Q3_K_M.gguf

ADDED

```diff
@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:2324e634f1c1bffe20d6b2df97153102111c76e6353f2015a40bb8018ccbc245
+size 374002304
```

labse.Q3_K_S.gguf

ADDED

```diff
@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:4f684c6f802d6607b7c0555839ad39540888c9bd117e147e2e19327d08f49cbb
+size 367919744
```

labse.Q4_0.gguf

ADDED

```diff
@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:e76ebacebfe2303086e8a52a912426dac2730f28bed14c3748bfc01a9e0d396b
+size 379200128
```

labse.Q4_K_M.gguf

ADDED

```diff
@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:3869330197b5a583afc572104bf93393e384c72473a15c2dae43cab43e194b3e
+size 383762048
```

labse.Q4_K_S.gguf

ADDED

```diff
@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:d6bd87998a6ac0341e270ef968c4ebc96e6f2987f01f8ab2fb57406cba5d44d2
+size 380379776
```

labse.Q5_0.gguf

ADDED

```diff
@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:909f8a47cb23c1a5df7249b75fa95cb23cef696447fb249e79009126685a27bf
+size 389816960
```

labse.Q5_K_M.gguf

ADDED

```diff
@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:a088de97f05277c7d91bfa41d8a59c170258dcebf6f5ade62b31a4d3fbc1c2b9
+size 392167040
```

labse.Q5_K_S.gguf

ADDED

```diff
@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:a9bc257f57b5fe9be6dd94ba7a2cb39c8f00f202cb85dc333f6d1a9a6d1b9b40
+size 389816960
```

labse.Q6_K.gguf

ADDED

```diff
@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:ab7f65804a71bd2721a2086a1f11c41f70336ad7e6da2c0804429430575ba0b4
+size 401097344
```

labse.Q8_0.gguf

ADDED

```diff
@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:89ec00269f2d30015194e49f0b6b1a2b9affe6d14c46762c4a0edd425f41a171
+size 514881920
```

labse_fp16.gguf

ADDED

```diff
@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:e971688d438ce7651513a7eaed3d3a7f60b6d7abde8ee9772fdaae102c6bb43e
+size 954551808
```

labse_fp32.gguf

ADDED

```diff
@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:38491b0cef5ce3fac2f851b72d0440a4ba8914d6e50064337340f994aee77040
+size 1894978560
```
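Each `.gguf` entry above is a Git LFS pointer rather than the model file itself. The `oid` is the SHA-256 digest of the real file and `size` is its length in bytes. A downloaded file can be checked against its pointer with a sketch like the one below. The pointer text is copied from the `labse.Q2_K.gguf` entry, and `parse_lfs_pointer` and `matches_pointer` are illustrative helpers, not part of Git LFS or `huggingface_hub`.

```python
import hashlib
import os
from typing import Dict


def parse_lfs_pointer(text: str) -> Dict[str, str]:
    """Parse the 'key value' lines of a Git LFS pointer into a dict."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    return fields


def matches_pointer(path: str, pointer_text: str, chunk_size: int = 1 << 20) -> bool:
    """True if the file's size and SHA-256 digest match the pointer's size and oid."""
    fields = parse_lfs_pointer(pointer_text)
    algo, _, expected_hex = fields["oid"].partition(":")
    if algo != "sha256":
        raise ValueError(f"unsupported oid algorithm: {algo}")
    if os.path.getsize(path) != int(fields["size"]):
        return False  # cheap check first; avoids hashing a truncated download
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)  # hash in chunks so large files are not read into memory at once
    return digest.hexdigest() == expected_hex


Q2_K_POINTER = """\
version https://git-lfs.github.com/spec/v1
oid sha256:0df86ed0be2120d4148ed1764c4664ff20f5959d3d0ca50d8afcf1bc8cc0b007
size 363606656
"""
```

For example, `matches_pointer("labse.Q2_K.gguf", Q2_K_POINTER)` should return `True` for an intact download of that file.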