Upload folder using huggingface_hub

- .gitignore +5 -0

- README.md +59 -0

- all_results.json +7 -0

- config.json +36 -0

- configuration_internlm2.py +151 -0

- generation_config.json +7 -0

- logs.txt +981 -0

- model-00001-of-00004.safetensors +3 -0

- model-00002-of-00004.safetensors +3 -0

- model-00003-of-00004.safetensors +3 -0

- model-00004-of-00004.safetensors +3 -0

- model.safetensors.index.json +234 -0

- modeling_internlm2.py +1391 -0

- special_tokens_map.json +39 -0

- tokenization_internlm2.py +236 -0

- tokenizer.model +3 -0

- tokenizer_config.json +95 -0

- train_results.json +7 -0

- trainer_log.jsonl +7 -0

- trainer_state.json +66 -0

- training_args.bin +3 -0

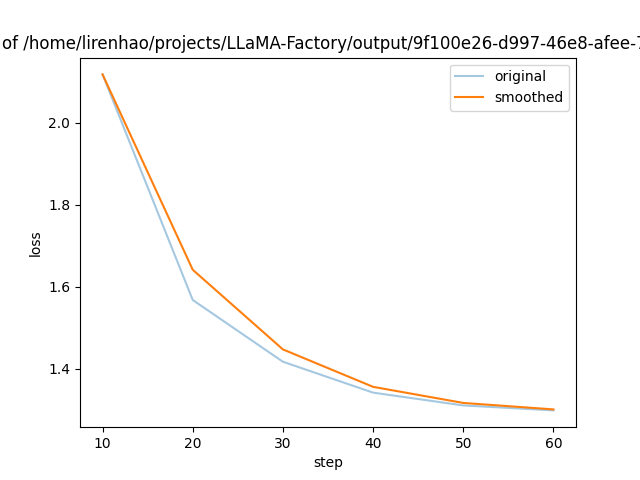

- training_loss.png +0 -0

.gitignore

ADDED

@@ -0,0 +1,5 @@
+all_results.json
+logs.txt
+trainer_log.jsonl
+training_loss.png
+train_results.json

README.md

ADDED

@@ -0,0 +1,59 @@
+---
+license: other
+base_model: internlm/internlm2-chat-7b
+tags:
+- llama-factory
+- generated_from_trainer
+model-index:
+- name: 9f100e26-d997-46e8-afee-721977a16ca9
+  results: []
+---
+
+<!-- This model card has been generated automatically according to the information the Trainer had access to. You
+should probably proofread and complete it, then remove this comment. -->
+
+# 9f100e26-d997-46e8-afee-721977a16ca9
+
+This model is a fine-tuned version of [internlm/internlm2-chat-7b](https://huggingface.co/internlm/internlm2-chat-7b) on the cpsycoun dataset.
+
+## Model description
+
+More information needed
+
+## Intended uses & limitations
+
+More information needed
+
+## Training and evaluation data
+
+More information needed
+
+## Training procedure
+
+### Training hyperparameters
+
+The following hyperparameters were used during training:
+- learning_rate: 1e-06
+- train_batch_size: 4
+- eval_batch_size: 8
+- seed: 42
+- distributed_type: multi-GPU
+- num_devices: 4
+- gradient_accumulation_steps: 28
+- total_train_batch_size: 448
+- total_eval_batch_size: 32
+- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08
+- lr_scheduler_type: cosine
+- num_epochs: 9.0
+- mixed_precision_training: Native AMP
+
+### Training results
+
+
+
+### Framework versions
+
+- Transformers 4.37.1
+- Pytorch 2.1.2+cu121
+- Datasets 2.16.1
+- Tokenizers 0.15.1
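The `total_train_batch_size` of 448 and `total_eval_batch_size` of 32 in the model card follow from the per-device batch size, the device count, and the gradient accumulation steps. A minimal sketch of that arithmetic, assuming the usual data-parallel relationship; the function name `effective_batch_size` is illustrative and not part of any library:

```python
def effective_batch_size(per_device: int, num_devices: int, grad_accum_steps: int = 1) -> int:
    """Number of samples that contribute to one optimizer step under data parallelism."""
    return per_device * num_devices * grad_accum_steps


# Values taken from the hyperparameter list in the model card.
train_total = effective_batch_size(per_device=4, num_devices=4, grad_accum_steps=28)
eval_total = effective_batch_size(per_device=8, num_devices=4)

print(train_total)  # 448
print(eval_total)   # 32
```

Evaluation does not accumulate gradients, which is why only the training figure includes the factor of 28.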

all_results.json

ADDED

@@ -0,0 +1,7 @@
+{
+    "epoch": 9.0,
+    "train_loss": 1.4981852107577853,
+    "train_runtime": 2910.9748,
+    "train_samples_per_second": 9.69,
+    "train_steps_per_second": 0.022
+}
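The throughput figures in `all_results.json` can be cross-checked against each other. The sketch below is only a rough sanity check: the rates in the file are rounded, so the derived totals are approximate, and the variable names are illustrative.

```python
import json

# Contents of all_results.json as shown in the diff above.
results = json.loads("""
{
    "epoch": 9.0,
    "train_loss": 1.4981852107577853,
    "train_runtime": 2910.9748,
    "train_samples_per_second": 9.69,
    "train_steps_per_second": 0.022
}
""")

# The per-second rates are rounded in the file, so these totals are estimates.
approx_samples = results["train_runtime"] * results["train_samples_per_second"]
approx_steps = results["train_runtime"] * results["train_steps_per_second"]
approx_samples_per_epoch = approx_samples / results["epoch"]

print(f"samples processed ~ {approx_samples:.0f}")
print(f"optimizer steps   ~ {approx_steps:.0f}")
print(f"samples per epoch ~ {approx_samples_per_epoch:.0f}")
```

The step estimate lands close to the 63 total steps shown in the progress output of `logs.txt`, which is about what the rounding of `train_steps_per_second` allows.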

config.json

ADDED

@@ -0,0 +1,36 @@
+{
+  "_name_or_path": "/home/lirenhao/pretrained_models/internlm2-chat-7b/",
+  "architectures": [
+    "InternLM2ForCausalLM"
+  ],
+  "attn_implementation": "eager",
+  "auto_map": {
+    "AutoConfig": "configuration_internlm2.InternLM2Config",
+    "AutoModel": "modeling_internlm2.InternLM2ForCausalLM",
+    "AutoModelForCausalLM": "modeling_internlm2.InternLM2ForCausalLM"
+  },
+  "bias": false,
+  "bos_token_id": 1,
+  "eos_token_id": 2,
+  "hidden_act": "silu",
+  "hidden_size": 4096,
+  "initializer_range": 0.02,
+  "intermediate_size": 14336,
+  "max_position_embeddings": 32768,
+  "model_type": "internlm2",
+  "num_attention_heads": 32,
+  "num_hidden_layers": 32,
+  "num_key_value_heads": 8,
+  "pad_token_id": 2,
+  "rms_norm_eps": 1e-05,
+  "rope_scaling": {
+    "factor": 2.0,
+    "type": "dynamic"
+  },
+  "rope_theta": 1000000,
+  "tie_word_embeddings": false,
+  "torch_dtype": "float16",
+  "transformers_version": "4.37.1",
+  "use_cache": false,
+  "vocab_size": 92544
+}
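The attention geometry implied by `config.json` can be worked out directly from its fields. The sketch below derives the per-head dimension and the Grouped Query Attention group size, then estimates the parameter count. The estimate assumes a Llama-style block (query, key, value, and output projections, a three-matrix gated MLP, two RMSNorm weights per layer) with no bias terms, as `"bias": false` suggests. That layout is an assumption, because `modeling_internlm2.py` is not shown here.

```python
# Values copied from config.json above.
cfg = {
    "hidden_size": 4096,
    "intermediate_size": 14336,
    "num_attention_heads": 32,
    "num_hidden_layers": 32,
    "num_key_value_heads": 8,
    "vocab_size": 92544,
    "tie_word_embeddings": False,
}

head_dim = cfg["hidden_size"] // cfg["num_attention_heads"]
# Grouped Query Attention: several query heads share one key/value head.
queries_per_kv_head = cfg["num_attention_heads"] // cfg["num_key_value_heads"]

# Assumed layout (not confirmed by the files shown): Llama-style block, no biases.
kv_dim = cfg["num_key_value_heads"] * head_dim
attn = cfg["hidden_size"] * (cfg["hidden_size"] + 2 * kv_dim)  # q, k, v projections
attn += cfg["hidden_size"] * cfg["hidden_size"]                # output projection
mlp = 3 * cfg["hidden_size"] * cfg["intermediate_size"]        # gate, up, down
norms = 2 * cfg["hidden_size"]                                 # two RMSNorm weights
per_layer = attn + mlp + norms

embeddings = cfg["vocab_size"] * cfg["hidden_size"]
if not cfg["tie_word_embeddings"]:
    embeddings *= 2  # separate input embedding and output head
# The trailing hidden_size term is the final RMSNorm weight.
total = cfg["num_hidden_layers"] * per_layer + embeddings + cfg["hidden_size"]

print(head_dim, queries_per_kv_head)
print(f"~{total / 1e9:.2f}B parameters")
```

With 32 query heads and 8 key/value heads, every key/value head serves four query heads, which shrinks the key/value cache by the same factor relative to full multi-head attention.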

configuration_internlm2.py

ADDED

@@ -0,0 +1,151 @@
+# coding=utf-8
+# Copyright (c) The InternLM team and The HuggingFace Inc. team. All rights reserved.
+#
+# This code is based on transformers/src/transformers/models/llama/configuration_llama.py
+#
+# Licensed under the Apache License, Version 2.0 (the "License");
+# you may not use this file except in compliance with the License.
+# You may obtain a copy of the License at
+#
+#     http://www.apache.org/licenses/LICENSE-2.0
+#
+# Unless required by applicable law or agreed to in writing, software
+# distributed under the License is distributed on an "AS IS" BASIS,
+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
+# See the License for the specific language governing permissions and
+# limitations under the License.
+""" InternLM2 model configuration"""
+
+from transformers.configuration_utils import PretrainedConfig
+from transformers.utils import logging
+
+logger = logging.get_logger(__name__)
+
+INTERNLM2_PRETRAINED_CONFIG_ARCHIVE_MAP = {}
+
+
+# Modified from transformers.model.llama.configuration_llama.LlamaConfig
+class InternLM2Config(PretrainedConfig):
+    r"""
+    This is the configuration class to store the configuration of a [`InternLM2Model`]. It is used to instantiate
+    an InternLM2 model according to the specified arguments, defining the model architecture. Instantiating a
+    configuration with the defaults will yield a similar configuration to that of the InternLM2-7B.
+
+    Configuration objects inherit from [`PretrainedConfig`] and can be used to control the model outputs. Read the
+    documentation from [`PretrainedConfig`] for more information.
+
+
+    Args:
+        vocab_size (`int`, *optional*, defaults to 103168):
+            Vocabulary size of the InternLM2 model. Defines the number of different tokens that can be represented by the
+            `inputs_ids` passed when calling [`InternLM2Model`]
+        hidden_size (`int`, *optional*, defaults to 4096):
+            Dimension of the hidden representations.
+        intermediate_size (`int`, *optional*, defaults to 11008):
+            Dimension of the MLP representations.
+        num_hidden_layers (`int`, *optional*, defaults to 32):
+            Number of hidden layers in the Transformer decoder.
+        num_attention_heads (`int`, *optional*, defaults to 32):
+            Number of attention heads for each attention layer in the Transformer decoder.
+        num_key_value_heads (`int`, *optional*):
+            This is the number of key_value heads that should be used to implement Grouped Query Attention. If
+            `num_key_value_heads=num_attention_heads`, the model will use Multi Head Attention (MHA), if
+            `num_key_value_heads=1`, the model will use Multi Query Attention (MQA), otherwise GQA is used. When
+            converting a multi-head checkpoint to a GQA checkpoint, each group key and value head should be constructed
+            by mean-pooling all the original heads within that group. For more details, check out [this
+            paper](https://arxiv.org/pdf/2305.13245.pdf). If it is not specified, will default to
+            `num_attention_heads`.
+        hidden_act (`str` or `function`, *optional*, defaults to `"silu"`):
+            The non-linear activation function (function or string) in the decoder.
+        max_position_embeddings (`int`, *optional*, defaults to 2048):
+            The maximum sequence length that this model might ever be used with. Typically set this to something large
+            just in case (e.g., 512 or 1024 or 2048).
+        initializer_range (`float`, *optional*, defaults to 0.02):
+            The standard deviation of the truncated_normal_initializer for initializing all weight matrices.
+        rms_norm_eps (`float`, *optional*, defaults to 1e-6):
+            The epsilon used by the rms normalization layers.
+        use_cache (`bool`, *optional*, defaults to `True`):
+            Whether or not the model should return the last key/values attentions (not used by all models). Only
+            relevant if `config.is_decoder=True`.
+        tie_word_embeddings (`bool`, *optional*, defaults to `False`):
+            Whether to tie weight embeddings
+        Example:
+
+    """
+    model_type = "internlm2"
+    _auto_class = "AutoConfig"
+
+    def __init__(  # pylint: disable=W0102
+        self,
+        vocab_size=103168,
+        hidden_size=4096,
+        intermediate_size=11008,
+        num_hidden_layers=32,
+        num_attention_heads=32,
+        num_key_value_heads=None,
+        hidden_act="silu",
+        max_position_embeddings=2048,
+        initializer_range=0.02,
+        rms_norm_eps=1e-6,
+        use_cache=True,
+        pad_token_id=0,
+        bos_token_id=1,
+        eos_token_id=2,
+        tie_word_embeddings=False,
+        bias=True,
+        rope_theta=10000,
+        rope_scaling=None,
+        attn_implementation="eager",
+        **kwargs,
+    ):
+        self.vocab_size = vocab_size
+        self.max_position_embeddings = max_position_embeddings
+        self.hidden_size = hidden_size
+        self.intermediate_size = intermediate_size
+        self.num_hidden_layers = num_hidden_layers
+        self.num_attention_heads = num_attention_heads
+        self.bias = bias
+
+        if num_key_value_heads is None:
+            num_key_value_heads = num_attention_heads
+        self.num_key_value_heads = num_key_value_heads
+
+        self.hidden_act = hidden_act
+        self.initializer_range = initializer_range
+        self.rms_norm_eps = rms_norm_eps
+        self.use_cache = use_cache
+        self.rope_theta = rope_theta
+        self.rope_scaling = rope_scaling
+        self._rope_scaling_validation()
+
+        self.attn_implementation = attn_implementation
+        if self.attn_implementation is None:
+            self.attn_implementation = "eager"
+        super().__init__(
+            pad_token_id=pad_token_id,
+            bos_token_id=bos_token_id,
+            eos_token_id=eos_token_id,
+            tie_word_embeddings=tie_word_embeddings,
+            **kwargs,
+        )
+
+    def _rope_scaling_validation(self):
+        """
+        Validate the `rope_scaling` configuration.
+        """
+        if self.rope_scaling is None:
+            return
+
+        if not isinstance(self.rope_scaling, dict) or len(self.rope_scaling) != 2:
+            raise ValueError(
+                "`rope_scaling` must be a dictionary with two fields, `type` and `factor`, "
+                f"got {self.rope_scaling}"
+            )
+        rope_scaling_type = self.rope_scaling.get("type", None)
+        rope_scaling_factor = self.rope_scaling.get("factor", None)
+        if rope_scaling_type is None or rope_scaling_type not in ["linear", "dynamic"]:
+            raise ValueError(
+                f"`rope_scaling`'s type field must be one of ['linear', 'dynamic'], got {rope_scaling_type}"
+            )
+        if rope_scaling_factor is None or not isinstance(rope_scaling_factor, float) or rope_scaling_factor < 1.0:
+            raise ValueError(f"`rope_scaling`'s factor field must be a float >= 1, got {rope_scaling_factor}")
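The checks in `_rope_scaling_validation` can be exercised without installing `transformers`. The sketch below is a standalone restatement of the same conditions, not the class itself; the helper names `validate_rope_scaling` and `is_valid` are illustrative. It shows which `rope_scaling` values pass, including the slightly surprising rejection of an integer factor.

```python
def validate_rope_scaling(rope_scaling):
    """Standalone restatement of the checks in `_rope_scaling_validation` (illustrative)."""
    if rope_scaling is None:
        return
    if not isinstance(rope_scaling, dict) or len(rope_scaling) != 2:
        raise ValueError(f"expected a dict with `type` and `factor`, got {rope_scaling}")
    scaling_type = rope_scaling.get("type")
    factor = rope_scaling.get("factor")
    if scaling_type not in ("linear", "dynamic"):
        raise ValueError(f"type must be 'linear' or 'dynamic', got {scaling_type}")
    if not isinstance(factor, float) or factor < 1.0:
        raise ValueError(f"factor must be a float >= 1, got {factor}")


def is_valid(rope_scaling) -> bool:
    """Return True when the value passes validation, False when it raises ValueError."""
    try:
        validate_rope_scaling(rope_scaling)
        return True
    except ValueError:
        return False


print(is_valid({"type": "dynamic", "factor": 2.0}))  # True  (the value in config.json)
print(is_valid({"type": "dynamic", "factor": 2}))    # False (int, not float)
print(is_valid({"type": "ntk", "factor": 2.0}))      # False (unsupported type)
print(is_valid(None))                                # True  (scaling disabled)
```

Because the factor check uses `isinstance(..., float)`, a hand-edited config that writes `"factor": 2` instead of `2.0` would be rejected when the configuration is loaded.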

generation_config.json

ADDED

@@ -0,0 +1,7 @@
+{
+  "_from_model_config": true,
+  "bos_token_id": 1,
+  "eos_token_id": 2,
+  "pad_token_id": 2,
+  "transformers_version": "4.37.1"
+}

logs.txt

ADDED

@@ -0,0 +1,981 @@

0%| | 0/63 [00:00<?, ?it/s]
/home/lirenhao/anaconda3/envs/llama_factory/lib/python3.10/site-packages/torch/utils/checkpoint.py:429: UserWarning: torch.utils.checkpoint: please pass in use_reentrant=True or use_reentrant=False explicitly. The default value of use_reentrant will be updated to be False in the future. To maintain current behavior, pass use_reentrant=True. It is recommended that you use use_reentrant=False. Refer to docs for more details on the differences between the two variants.
2%|▏ | 1/63 [00:44<45:32, 44.06s/it]
3%|▎ | 2/63 [01:23<42:01, 41.33s/it]
5%|▍ | 3/63 [02:04<41:04, 41.08s/it]
6%|▋ | 4/63 [02:43<39:37, 40.30s/it]
8%|▊ | 5/63 [03:22<38:23, 39.72s/it]
[2024-02-01 14:26:04,941] [INFO] [loss_scaler.py:190:update_scale] [deepspeed] OVERFLOW! Rank 0 Skipping step. Attempted loss scale: 65536, but hysteresis is 2. Reducing hysteresis to 1
10%|▉ | 6/63 [04:03<38:08, 40.15s/it]
[2024-02-01 14:26:44,502] [INFO] [loss_scaler.py:183:update_scale] [deepspeed] OVERFLOW! Rank 0 Skipping step. Attempted loss scale: 65536, reducing to 32768
11%|█ | 7/63 [04:42<37:17, 39.96s/it]
13%|█▎ | 8/63 [05:21<36:23, 39.71s/it]
14%|█▍ | 9/63 [06:01<35:39, 39.62s/it]
16%|█▌ | 10/63 [06:41<35:16, 39.93s/it]
17%|█▋ | 11/63 [07:20<34:22, 39.65s/it]
19%|█▉ | 12/63 [08:00<33:47, 39.76s/it]
21%|██ | 13/63 [08:39<32:56, 39.53s/it]
22%|██▏ | 14/63 [09:20<32:32, 39.85s/it]
24%|██▍ | 15/63 [09:59<31:45, 39.69s/it]
25%|██▌ | 16/63 [10:38<30:47, 39.31s/it]
27%|██▋ | 17/63 [11:19<30:31, 39.82s/it]
29%|██▊ | 18/63 [11:58<29:51, 39.81s/it]
30%|███ | 19/63 [12:39<29:15, 39.89s/it]
32%|███▏ | 20/63 [13:19<28:42, 40.06s/it]
33%|███▎ | 21/63 [13:59<27:59, 39.99s/it]
[INFO|trainer.py:2926] 2024-02-01 14:36:12,897 >> Saving model checkpoint to /home/lirenhao/projects/LLaMA-Factory/output/9f100e26-d997-46e8-afee-721977a16ca9/tmp-checkpoint-21
35%|███▍ | 22/63 [16:52<54:35, 79.88s/it]
37%|███▋ | 23/63 [17:32<45:23, 68.08s/it]
38%|███▊ | 24/63 [18:15<39:17, 60.44s/it]
40%|███▉ | 25/63 [18:54<34:12, 54.02s/it]
41%|████▏ | 26/63 [19:33<30:33, 49.54s/it]
43%|████▎ | 27/63 [20:12<27:49, 46.38s/it]
44%|████▍ | 28/63 [20:51<25:45, 44.17s/it]
46%|████▌ | 29/63 [21:31<24:19, 42.92s/it]
48%|████▊ | 30/63 [22:11<23:07, 42.06s/it]
49%|████▉ | 31/63 [22:52<22:17, 41.80s/it]
51%|█████ | 32/63 [23:32<21:11, 41.02s/it]
52%|█████▏ | 33/63 [24:10<20:06, 40.20s/it]
54%|█████▍ | 34/63 [24:49<19:18, 39.96s/it]
56%|█████▌ | 35/63 [25:30<18:43, 40.11s/it]
57%|█████▋ | 36/63 [26:10<18:03, 40.13s/it]
59%|█████▊ | 37/63 [26:49<17:12, 39.70s/it]
60%|██████ | 38/63 [27:29<16:36, 39.88s/it]
62%|██████▏ | 39/63 [28:08<15:48, 39.51s/it]
63%|██████▎ | 40/63 [28:46<15:04, 39.34s/it]
65%|██████▌ | 41/63 [29:27<14:36, 39.84s/it]
67%|██████▋ | 42/63 [30:07<13:57, 39.87s/it]
[INFO|trainer.py:2926] 2024-02-01 14:52:21,426 >> Saving model checkpoint to /home/lirenhao/projects/LLaMA-Factory/output/9f100e26-d997-46e8-afee-721977a16ca9/tmp-checkpoint-42
68%|██████▊ | 43/63 [33:01<26:37, 79.86s/it]
70%|██████▉ | 44/63 [33:41<21:31, 67.96s/it]
71%|███████▏ | 45/63 [34:20<17:47, 59.29s/it]
73%|███████▎ | 46/63 [35:01<15:13, 53.75s/it]
75%|███████▍ | 47/63 [35:41<13:13, 49.58s/it]
76%|███████▌ | 48/63 [36:21<11:40, 46.71s/it]
78%|███████▊ | 49/63 [37:00<10:25, 44.69s/it]
79%|███████▉ | 50/63 [37:42<09:26, 43.60s/it]
81%|████████ | 51/63 [38:20<08:24, 42.07s/it]
83%|████████▎ | 52/63 [39:00<07:34, 41.29s/it]
84%|████████▍ | 53/63 [39:41<06:52, 41.22s/it]
86%|████████▌ | 54/63 [40:21<06:07, 40.87s/it]
87%|████████▋ | 55/63 [41:00<05:22, 40.28s/it]
89%|████████▉ | 56/63 [41:38<04:39, 39.88s/it]
90%|█████████ | 57/63 [42:18<03:58, 39.78s/it]
92%|█████████▏| 58/63 [42:59<03:20, 40.10s/it]
94%|█████████▎| 59/63 [43:39<02:40, 40.24s/it]
95%|█████████▌| 60/63 [44:19<02:00, 40.04s/it]
97%|█████████▋| 61/63 [44:58<01:19, 39.67s/it]
98%|█████████▊| 62/63 [45:38<00:39, 39.80s/it]
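The two `OVERFLOW!` messages in the progress output describe dynamic loss scaling for fp16 training. The first overflow only lowers the hysteresis counter from 2 to 1, and the second one halves the loss scale from 65536 to 32768. The sketch below is a simplified model of that behavior, written to match the two log messages. It is not DeepSpeed's implementation, the class and parameter names are illustrative, and it omits the periodic scale increase that real scalers apply after a run of overflow-free steps.

```python
class DynamicLossScaler:
    """Simplified fp16 loss scaler with hysteresis (illustrative, not DeepSpeed's code)."""

    def __init__(self, init_scale=65536.0, hysteresis=2, scale_factor=2.0, min_scale=1.0):
        self.scale = init_scale
        self.hysteresis = hysteresis
        self.scale_factor = scale_factor
        self.min_scale = min_scale

    def update(self, overflow: bool) -> bool:
        """Record one step's overflow status; return True if the step must be skipped."""
        if not overflow:
            return False
        if self.hysteresis > 1:
            # Tolerate this overflow: spend one unit of hysteresis, keep the scale.
            self.hysteresis -= 1
        else:
            # Hysteresis exhausted: shrink the scale, but never below the minimum.
            self.scale = max(self.scale / self.scale_factor, self.min_scale)
        return True


scaler = DynamicLossScaler()
for overflow in [True, True, False]:
    skipped = scaler.update(overflow)
    print("skipped" if skipped else "applied", int(scaler.scale), scaler.hysteresis)
```

Skipped steps apply no weight update, so a run that logs many overflows performs fewer effective optimizer steps than the progress bar suggests.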

| 1 |

+

[2024-02-01 14:20:07,768] [INFO] [real_accelerator.py:191:get_accelerator] Setting ds_accelerator to cuda (auto detect)
[2024-02-01 14:20:09,368] [WARNING] [runner.py:202:fetch_hostfile] Unable to find hostfile, will proceed with training with local resources only.
[2024-02-01 14:20:09,369] [INFO] [runner.py:568:main] cmd = /home/lirenhao/anaconda3/envs/llama_factory/bin/python -u -m deepspeed.launcher.launch --world_info=eyJsb2NhbGhvc3QiOiBbMCwgMSwgMiwgM119 --master_addr=127.0.0.1 --master_port=2345 --enable_each_rank_log=None /home/lirenhao/projects/LLaMA-Factory/src/train_bash.py --deepspeed ds_config.json --stage sft --model_name_or_path /home/lirenhao/pretrained_models/internlm2-chat-7b/ --do_train --dataset cpsycoun --template intern2 --finetuning_type full --lora_target wqkv --output_dir /home/lirenhao/projects/LLaMA-Factory/output/9f100e26-d997-46e8-afee-721977a16ca9 --overwrite_cache --overwrite_output_dir --per_device_train_batch_size 4 --gradient_accumulation_steps 28 --lr_scheduler_type cosine --logging_steps 10 --save_steps 21 --learning_rate 1e-6 --num_train_epochs 9.0 --plot_loss --fp16
[2024-02-01 14:20:12,819] [INFO] [real_accelerator.py:191:get_accelerator] Setting ds_accelerator to cuda (auto detect)
[2024-02-01 14:20:14,435] [INFO] [launch.py:145:main] WORLD INFO DICT: {'localhost': [0, 1, 2, 3]}
[2024-02-01 14:20:14,436] [INFO] [launch.py:151:main] nnodes=1, num_local_procs=4, node_rank=0
[2024-02-01 14:20:14,436] [INFO] [launch.py:162:main] global_rank_mapping=defaultdict(<class 'list'>, {'localhost': [0, 1, 2, 3]})
[2024-02-01 14:20:14,436] [INFO] [launch.py:163:main] dist_world_size=4
[2024-02-01 14:20:14,436] [INFO] [launch.py:165:main] Setting CUDA_VISIBLE_DEVICES=0,1,2,3
[2024-02-01 14:20:19,797] [INFO] [real_accelerator.py:191:get_accelerator] Setting ds_accelerator to cuda (auto detect)
[2024-02-01 14:20:20,069] [INFO] [real_accelerator.py:191:get_accelerator] Setting ds_accelerator to cuda (auto detect)
[2024-02-01 14:20:20,128] [INFO] [real_accelerator.py:191:get_accelerator] Setting ds_accelerator to cuda (auto detect)
[2024-02-01 14:20:20,157] [INFO] [real_accelerator.py:191:get_accelerator] Setting ds_accelerator to cuda (auto detect)
[2024-02-01 14:20:22,839] [INFO] [comm.py:637:init_distributed] cdb=None
[2024-02-01 14:20:23,347] [INFO] [comm.py:637:init_distributed] cdb=None
[2024-02-01 14:20:23,364] [INFO] [comm.py:637:init_distributed] cdb=None
[2024-02-01 14:20:23,375] [INFO] [comm.py:637:init_distributed] cdb=None
[2024-02-01 14:20:23,376] [INFO] [comm.py:668:init_distributed] Initializing TorchBackend in DeepSpeed with backend nccl
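The `--world_info` value in the launcher command above appears to be base64-encoded JSON describing which GPUs each host contributes; the `WORLD INFO DICT` line a few entries later shows the decoded form. A minimal sketch of decoding it by hand follows. The helper name `decode_world_info` is hypothetical and not part of DeepSpeed, and the use of the URL-safe base64 alphabet is an assumption (for this particular string the standard alphabet gives the same result).

```python
import base64
import json

# Value copied verbatim from the --world_info flag in the logged command.
WORLD_INFO_B64 = "eyJsb2NhbGhvc3QiOiBbMCwgMSwgMiwgM119"


def decode_world_info(encoded: str) -> dict:
    """Hypothetical helper: decode a base64-encoded JSON host-to-GPU mapping."""
    raw = base64.urlsafe_b64decode(encoded)
    return json.loads(raw.decode("utf-8"))


world_info = decode_world_info(WORLD_INFO_B64)
# Total number of processes is the number of GPU slots across all hosts.
world_size = sum(len(gpus) for gpus in world_info.values())

print(world_info)  # {'localhost': [0, 1, 2, 3]}
print(world_size)  # 4
```

The decoded mapping matches the logged `dist_world_size=4` and `CUDA_VISIBLE_DEVICES=0,1,2,3`.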

02/01/2024 14:20:24 - INFO - llmtuner.hparams.parser - Process rank: 2, device: cuda:2, n_gpu: 1
distributed training: True, compute dtype: torch.float16
02/01/2024 14:20:24 - INFO - llmtuner.hparams.parser - Training/evaluation parameters Seq2SeqTrainingArguments(
_n_gpu=1,
adafactor=False,
adam_beta1=0.9,
adam_beta2=0.999,
adam_epsilon=1e-08,
auto_find_batch_size=False,
bf16=False,
bf16_full_eval=False,
data_seed=None,
dataloader_drop_last=False,
dataloader_num_workers=0,
dataloader_persistent_workers=False,
dataloader_pin_memory=True,
ddp_backend=None,
ddp_broadcast_buffers=None,
ddp_bucket_cap_mb=None,
ddp_find_unused_parameters=None,
ddp_timeout=1800,
debug=[],
deepspeed=ds_config.json,
disable_tqdm=False,
dispatch_batches=None,
do_eval=False,
do_predict=False,
do_train=True,
eval_accumulation_steps=None,
eval_delay=0,
eval_steps=None,
evaluation_strategy=no,
fp16=True,
fp16_backend=auto,
fp16_full_eval=False,
fp16_opt_level=O1,
fsdp=[],
fsdp_config={'min_num_params': 0, 'xla': False, 'xla_fsdp_grad_ckpt': False},
fsdp_min_num_params=0,
fsdp_transformer_layer_cls_to_wrap=None,
full_determinism=False,
generation_config=None,
generation_max_length=None,
generation_num_beams=None,
gradient_accumulation_steps=28,
gradient_checkpointing=False,
gradient_checkpointing_kwargs=None,
greater_is_better=None,
group_by_length=False,
half_precision_backend=auto,
hub_always_push=False,
hub_model_id=None,
hub_private_repo=False,
hub_strategy=every_save,
hub_token=<HUB_TOKEN>,
ignore_data_skip=False,
include_inputs_for_metrics=False,
include_num_input_tokens_seen=False,
include_tokens_per_second=False,
jit_mode_eval=False,
label_names=None,
label_smoothing_factor=0.0,
learning_rate=1e-06,
length_column_name=length,
load_best_model_at_end=False,
local_rank=2,
log_level=passive,
log_level_replica=warning,
log_on_each_node=True,
logging_dir=/home/lirenhao/projects/LLaMA-Factory/output/9f100e26-d997-46e8-afee-721977a16ca9/runs/Feb01_14-20-22_siat-a100-4-02,
logging_first_step=False,
logging_nan_inf_filter=True,
logging_steps=10,
logging_strategy=steps,
lr_scheduler_kwargs={},
lr_scheduler_type=cosine,
max_grad_norm=1.0,
max_steps=-1,
metric_for_best_model=None,
mp_parameters=,
neftune_noise_alpha=None,
no_cuda=False,
num_train_epochs=9.0,
optim=adamw_torch,
optim_args=None,
output_dir=/home/lirenhao/projects/LLaMA-Factory/output/9f100e26-d997-46e8-afee-721977a16ca9,
overwrite_output_dir=True,
past_index=-1,
per_device_eval_batch_size=8,
per_device_train_batch_size=4,
predict_with_generate=False,
prediction_loss_only=False,
push_to_hub=False,
push_to_hub_model_id=None,
push_to_hub_organization=None,
push_to_hub_token=<PUSH_TO_HUB_TOKEN>,
ray_scope=last,
remove_unused_columns=True,
report_to=[],
resume_from_checkpoint=None,
run_name=/home/lirenhao/projects/LLaMA-Factory/output/9f100e26-d997-46e8-afee-721977a16ca9,
save_on_each_node=False,
save_only_model=False,
save_safetensors=True,
save_steps=21,
save_strategy=steps,
save_total_limit=None,
seed=42,
skip_memory_metrics=True,
sortish_sampler=False,
split_batches=False,
tf32=None,
torch_compile=False,
torch_compile_backend=None,
torch_compile_mode=None,
torchdynamo=None,
tpu_metrics_debug=False,
tpu_num_cores=None,
use_cpu=False,
use_ipex=False,
use_legacy_prediction_loop=False,
use_mps_device=False,
warmup_ratio=0.0,
warmup_steps=0,
weight_decay=0.0,
)
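The dump above records `lr_scheduler_type=cosine`, `learning_rate=1e-06`, and `warmup_steps=0`. As a rough illustration of what that combination implies, the sketch below evaluates a half-cosine decay from the peak rate down to zero. This is the usual formula for a cosine schedule without warmup, not code taken from the trainer; the function name `cosine_lr` and the `total_steps` value are hypothetical, since the log excerpt does not state the total number of optimizer steps.

```python
import math

PEAK_LR = 1e-6  # learning_rate from the logged arguments


def cosine_lr(step: int, total_steps: int, peak_lr: float = PEAK_LR) -> float:
    """Half-cosine decay from peak_lr to 0 with no warmup (illustrative only)."""
    progress = min(max(step / total_steps, 0.0), 1.0)
    return peak_lr * 0.5 * (1.0 + math.cos(math.pi * progress))


# total_steps is an assumed placeholder, not a value from the log.
total_steps = 100
for step in (0, 25, 50, 75, 100):
    print(step, cosine_lr(step, total_steps))
```

With no warmup, the first optimizer step already runs at the full peak rate, and the rate reaches half the peak at the midpoint of training.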

02/01/2024 14:20:24 - INFO - llmtuner.hparams.parser - Process rank: 0, device: cuda:0, n_gpu: 1
distributed training: True, compute dtype: torch.float16
02/01/2024 14:20:24 - INFO - llmtuner.hparams.parser - Training/evaluation parameters Seq2SeqTrainingArguments(
_n_gpu=1,
adafactor=False,
adam_beta1=0.9,
adam_beta2=0.999,
adam_epsilon=1e-08,
auto_find_batch_size=False,
bf16=False,
bf16_full_eval=False,
data_seed=None,
dataloader_drop_last=False,
dataloader_num_workers=0,
dataloader_persistent_workers=False,
dataloader_pin_memory=True,
ddp_backend=None,
ddp_broadcast_buffers=None,
ddp_bucket_cap_mb=None,
ddp_find_unused_parameters=None,
ddp_timeout=1800,
debug=[],
deepspeed=ds_config.json,
disable_tqdm=False,
dispatch_batches=None,
do_eval=False,
do_predict=False,
do_train=True,
eval_accumulation_steps=None,
eval_delay=0,
eval_steps=None,
evaluation_strategy=no,
fp16=True,
fp16_backend=auto,
fp16_full_eval=False,
fp16_opt_level=O1,
fsdp=[],
fsdp_config={'min_num_params': 0, 'xla': False, 'xla_fsdp_grad_ckpt': False},
fsdp_min_num_params=0,
fsdp_transformer_layer_cls_to_wrap=None,
full_determinism=False,
generation_config=None,
generation_max_length=None,
generation_num_beams=None,
gradient_accumulation_steps=28,
gradient_checkpointing=False,
gradient_checkpointing_kwargs=None,
greater_is_better=None,
group_by_length=False,
half_precision_backend=auto,
hub_always_push=False,
hub_model_id=None,
hub_private_repo=False,
hub_strategy=every_save,
hub_token=<HUB_TOKEN>,
ignore_data_skip=False,
include_inputs_for_metrics=False,
include_num_input_tokens_seen=False,
include_tokens_per_second=False,
jit_mode_eval=False,
label_names=None,
label_smoothing_factor=0.0,
learning_rate=1e-06,
length_column_name=length,
load_best_model_at_end=False,
local_rank=0,
log_level=passive,
log_level_replica=warning,
log_on_each_node=True,
logging_dir=/home/lirenhao/projects/LLaMA-Factory/output/9f100e26-d997-46e8-afee-721977a16ca9/runs/Feb01_14-20-23_siat-a100-4-02,
logging_first_step=False,
logging_nan_inf_filter=True,
logging_steps=10,
logging_strategy=steps,
lr_scheduler_kwargs={},
lr_scheduler_type=cosine,
max_grad_norm=1.0,
max_steps=-1,
metric_for_best_model=None,
mp_parameters=,
neftune_noise_alpha=None,
no_cuda=False,
num_train_epochs=9.0,
optim=adamw_torch,
optim_args=None,
output_dir=/home/lirenhao/projects/LLaMA-Factory/output/9f100e26-d997-46e8-afee-721977a16ca9,
overwrite_output_dir=True,
past_index=-1,
per_device_eval_batch_size=8,
per_device_train_batch_size=4,
predict_with_generate=False,
prediction_loss_only=False,
push_to_hub=False,
push_to_hub_model_id=None,
push_to_hub_organization=None,
push_to_hub_token=<PUSH_TO_HUB_TOKEN>,
ray_scope=last,
remove_unused_columns=True,
report_to=[],
resume_from_checkpoint=None,
run_name=/home/lirenhao/projects/LLaMA-Factory/output/9f100e26-d997-46e8-afee-721977a16ca9,
save_on_each_node=False,
save_only_model=False,
save_safetensors=True,
save_steps=21,
save_strategy=steps,
save_total_limit=None,
seed=42,
skip_memory_metrics=True,
sortish_sampler=False,
split_batches=False,
tf32=None,
torch_compile=False,
torch_compile_backend=None,
torch_compile_mode=None,
torchdynamo=None,
tpu_metrics_debug=False,
tpu_num_cores=None,
use_cpu=False,
use_ipex=False,
use_legacy_prediction_loop=False,
use_mps_device=False,
warmup_ratio=0.0,
warmup_steps=0,
weight_decay=0.0,
)
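The logged arguments give `per_device_train_batch_size=4` and `gradient_accumulation_steps=28`, and the launcher reports `dist_world_size=4`. Under plain data parallelism these multiply into the number of samples consumed per optimizer step. The sketch below works that arithmetic out; the function name `effective_batch_size` is hypothetical, and the calculation assumes every rank processes a full micro-batch on every accumulation step.

```python
# Values taken from the logged launcher output and training arguments.
PER_DEVICE_TRAIN_BATCH_SIZE = 4
GRADIENT_ACCUMULATION_STEPS = 28
WORLD_SIZE = 4
SAVE_STEPS = 21


def effective_batch_size(per_device: int, accumulation: int, world_size: int) -> int:
    """Samples contributing to one optimizer step across all ranks."""
    return per_device * accumulation * world_size


global_batch = effective_batch_size(
    PER_DEVICE_TRAIN_BATCH_SIZE, GRADIENT_ACCUMULATION_STEPS, WORLD_SIZE
)
# Upper bound on samples seen between two checkpoints (save_steps=21).
samples_per_checkpoint = global_batch * SAVE_STEPS

print(global_batch)            # 448
print(samples_per_checkpoint)  # 9408
```

A global batch of 448 combined with `learning_rate=1e-06` makes for very small per-sample updates, which is worth keeping in mind when reading the loss values later in the log.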

[INFO|tokenization_utils_base.py:2025] 2024-02-01 14:20:24,513 >> loading file ./tokenizer.model
[INFO|tokenization_utils_base.py:2025] 2024-02-01 14:20:24,513 >> loading file added_tokens.json
[INFO|tokenization_utils_base.py:2025] 2024-02-01 14:20:24,513 >> loading file special_tokens_map.json
[INFO|tokenization_utils_base.py:2025] 2024-02-01 14:20:24,513 >> loading file tokenizer_config.json
[INFO|tokenization_utils_base.py:2025] 2024-02-01 14:20:24,513 >> loading file tokenizer.json