Upload folder using huggingface_hub

- .gitattributes +1 -1

- BiCodec/README.md +29 -0

- BiCodec/config.json +83 -0

- BiCodec/config.yaml +66 -0

- BiCodec/model.safetensors +3 -0

- BiCodec/preprocessor_config.json +9 -0

- BiCodec/wav2vec2-large-xlsr-53/README.md +29 -0

- BiCodec/wav2vec2-large-xlsr-53/config.json +83 -0

- BiCodec/wav2vec2-large-xlsr-53/preprocessor_config.json +9 -0

- BiCodec/wav2vec2-large-xlsr-53/pytorch_model.bin +3 -0

- added_tokens.json +0 -0

- chat_template.jinja +85 -0

- config.json +64 -0

- config.json.bak +64 -0

- configuration_qwen3.py +226 -0

- generation_config.json +8 -0

- glm-4-voice-tokenizer/.mdl +0 -0

- glm-4-voice-tokenizer/.msc +0 -0

- glm-4-voice-tokenizer/.mv +1 -0

- glm-4-voice-tokenizer/LICENSE +70 -0

- glm-4-voice-tokenizer/README.md +13 -0

- glm-4-voice-tokenizer/config.json +65 -0

- glm-4-voice-tokenizer/configuration.json +1 -0

- glm-4-voice-tokenizer/model.safetensors +3 -0

- glm-4-voice-tokenizer/preprocessor_config.json +14 -0

- merges.txt +0 -0

- model.safetensors +3 -0

- modeling_qwen3.py +650 -0

- special_tokens_map.json +31 -0

- tokenizer.json +3 -0

- tokenizer_config.json +0 -0

- vocab.json +0 -0

.gitattributes

CHANGED

```diff
@@ -35,4 +35,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
 figures/GPA.png filter=lfs diff=lfs merge=lfs -text
 figures/GPA_intro.png filter=lfs diff=lfs merge=lfs -text
-
+tokenizer.json filter=lfs diff=lfs merge=lfs -text
```
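The new rule routes `tokenizer.json` through Git LFS. This fits the file list, which shows `tokenizer.json +3 -0`: a three-line pointer rather than the JSON itself.

As a rough sketch, the code below approximates how a repository path is tested against the four patterns visible in this hunk. Some caveats apply:
- It uses Python's `fnmatch`, which only approximates Git's own matching. For example, it ignores `**` and leading-`/` anchoring.
- It deliberately leaves out lines 1 to 34 of the real `.gitattributes`, which the hunk does not show.
- `matches_lfs_pattern` is an illustrative helper.

```python
from fnmatch import fnmatchcase
from pathlib import PurePosixPath

# Only the patterns visible in the hunk above; the earlier lines of the
# real .gitattributes are not shown and are intentionally omitted.
LFS_PATTERNS = (
    "*tfevents*",
    "figures/GPA.png",
    "figures/GPA_intro.png",
    "tokenizer.json",
)


def matches_lfs_pattern(path: str, patterns=LFS_PATTERNS) -> bool:
    """Approximate gitattributes matching for simple patterns.

    A pattern without '/' is compared with the file's basename at any
    depth; a pattern containing '/' is compared with the whole
    repo-relative path. Git's real rules are more involved than this.
    """
    p = PurePosixPath(path)
    for pattern in patterns:
        target = str(p) if "/" in pattern else p.name
        if fnmatchcase(target, pattern):
            return True
    return False


if __name__ == "__main__":
    for f in ["tokenizer.json", "vocab.json", "figures/GPA.png", "runs/events.out.tfevents.123"]:
        print(f, matches_lfs_pattern(f))
```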

|

BiCodec/README.md

ADDED

|

@@ -0,0 +1,29 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

language: multilingual

|

| 3 |

+

datasets:

|

| 4 |

+

- common_voice

|

| 5 |

+

tags:

|

| 6 |

+

- speech

|

| 7 |

+

license: apache-2.0

|

| 8 |

+

---

|

| 9 |

+

|

| 10 |

+

# Wav2Vec2-XLSR-53

|

| 11 |

+

|

| 12 |

+

[Facebook's XLSR-Wav2Vec2](https://ai.facebook.com/blog/wav2vec-20-learning-the-structure-of-speech-from-raw-audio/)

|

| 13 |

+

|

| 14 |

+

The base model pretrained on 16kHz sampled speech audio. When using the model make sure that your speech input is also sampled at 16Khz. Note that this model should be fine-tuned on a downstream task, like Automatic Speech Recognition. Check out [this blog](https://huggingface.co/blog/fine-tune-wav2vec2-english) for more information.

|

| 15 |

+

|

| 16 |

+

[Paper](https://arxiv.org/abs/2006.13979)

|

| 17 |

+

|

| 18 |

+

Authors: Alexis Conneau, Alexei Baevski, Ronan Collobert, Abdelrahman Mohamed, Michael Auli

|

| 19 |

+

|

| 20 |

+

**Abstract**

|

| 21 |

+

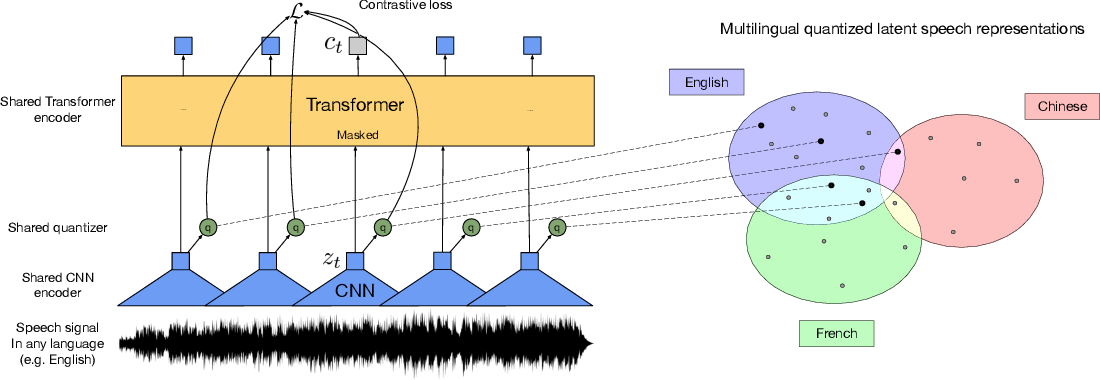

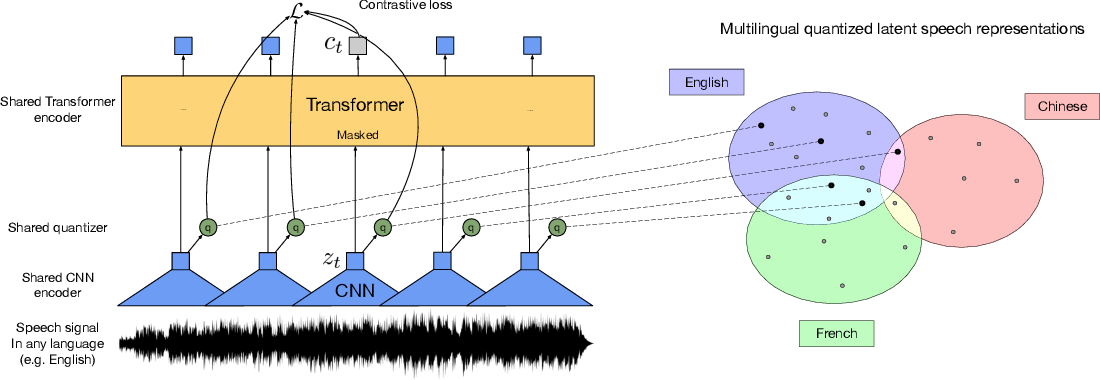

This paper presents XLSR which learns cross-lingual speech representations by pretraining a single model from the raw waveform of speech in multiple languages. We build on wav2vec 2.0 which is trained by solving a contrastive task over masked latent speech representations and jointly learns a quantization of the latents shared across languages. The resulting model is fine-tuned on labeled data and experiments show that cross-lingual pretraining significantly outperforms monolingual pretraining. On the CommonVoice benchmark, XLSR shows a relative phoneme error rate reduction of 72% compared to the best known results. On BABEL, our approach improves word error rate by 16% relative compared to a comparable system. Our approach enables a single multilingual speech recognition model which is competitive to strong individual models. Analysis shows that the latent discrete speech representations are shared across languages with increased sharing for related languages. We hope to catalyze research in low-resource speech understanding by releasing XLSR-53, a large model pretrained in 53 languages.

|

| 22 |

+

|

| 23 |

+

The original model can be found under https://github.com/pytorch/fairseq/tree/master/examples/wav2vec#wav2vec-20.

|

| 24 |

+

|

| 25 |

+

# Usage

|

| 26 |

+

|

| 27 |

+

See [this notebook](https://colab.research.google.com/github/patrickvonplaten/notebooks/blob/master/Fine_Tune_XLSR_Wav2Vec2_on_Turkish_ASR_with_%F0%9F%A4%97_Transformers.ipynb) for more information on how to fine-tune the model.

|

| 28 |

+

|

| 29 |

+

BiCodec/config.json

ADDED

```diff
@@ -0,0 +1,83 @@
+{
+  "activation_dropout": 0.0,
+  "apply_spec_augment": true,
+  "architectures": [
+    "Wav2Vec2ForPreTraining"
+  ],
+  "attention_dropout": 0.1,
+  "bos_token_id": 1,
+  "codevector_dim": 768,
+  "contrastive_logits_temperature": 0.1,
+  "conv_bias": true,
+  "conv_dim": [
+    512,
+    512,
+    512,
+    512,
+    512,
+    512,
+    512
+  ],
+  "conv_kernel": [
+    10,
+    3,
+    3,
+    3,
+    3,
+    2,
+    2
+  ],
+  "conv_stride": [
+    5,
+    2,
+    2,
+    2,
+    2,
+    2,
+    2
+  ],
+  "ctc_loss_reduction": "sum",
+  "ctc_zero_infinity": false,
+  "diversity_loss_weight": 0.1,
+  "do_stable_layer_norm": true,
+  "eos_token_id": 2,
+  "feat_extract_activation": "gelu",
+  "feat_extract_dropout": 0.0,
+  "feat_extract_norm": "layer",
+  "feat_proj_dropout": 0.1,
+  "feat_quantizer_dropout": 0.0,
+  "final_dropout": 0.0,
+  "gradient_checkpointing": false,
+  "hidden_act": "gelu",
+  "hidden_dropout": 0.1,
+  "hidden_size": 1024,
+  "initializer_range": 0.02,
+  "intermediate_size": 4096,
+  "layer_norm_eps": 1e-05,
+  "layerdrop": 0.1,
+  "mask_channel_length": 10,
+  "mask_channel_min_space": 1,
+  "mask_channel_other": 0.0,
+  "mask_channel_prob": 0.0,
+  "mask_channel_selection": "static",
+  "mask_feature_length": 10,
+  "mask_feature_prob": 0.0,
+  "mask_time_length": 10,
+  "mask_time_min_space": 1,
+  "mask_time_other": 0.0,
+  "mask_time_prob": 0.075,
+  "mask_time_selection": "static",
+  "model_type": "wav2vec2",
+  "num_attention_heads": 16,
+  "num_codevector_groups": 2,
+  "num_codevectors_per_group": 320,
+  "num_conv_pos_embedding_groups": 16,
+  "num_conv_pos_embeddings": 128,
+  "num_feat_extract_layers": 7,
+  "num_hidden_layers": 24,
+  "num_negatives": 100,
+  "pad_token_id": 0,
+  "proj_codevector_dim": 768,
+  "transformers_version": "4.7.0.dev0",
+  "vocab_size": 32
+}
```
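The `conv_kernel` and `conv_stride` lists describe the seven-layer convolutional feature encoder that turns 16 kHz samples into frames.

A short calculation helps here. It assumes unpadded 1-D convolutions, so each layer maps a length `L` to `floor((L - k) / s) + 1`. Under that assumption:
- the overall hop is 320 samples (20 ms);
- the receptive field is 400 samples (25 ms);
- one second of audio yields 49 frames of `hidden_size` 1024.

The helper names are illustrative.

```python
from math import prod

# Values copied from the config above.
CONV_KERNEL = [10, 3, 3, 3, 3, 2, 2]
CONV_STRIDE = [5, 2, 2, 2, 2, 2, 2]
SAMPLE_RATE = 16_000


def num_frames(num_samples: int, kernels=CONV_KERNEL, strides=CONV_STRIDE) -> int:
    """Output length after stacking unpadded 1-D convolutions."""
    length = num_samples
    for k, s in zip(kernels, strides):
        length = (length - k) // s + 1
    return length


def receptive_field(kernels=CONV_KERNEL, strides=CONV_STRIDE) -> int:
    """Number of input samples that influence one output frame."""
    field, jump = 1, 1
    for k, s in zip(kernels, strides):
        field += (k - 1) * jump  # each layer widens the view by (k-1) input hops
        jump *= s                # distance between neighbouring outputs, in samples
    return field


hop = prod(CONV_STRIDE)  # total downsampling factor
print("hop:", hop, "samples =", 1000 * hop / SAMPLE_RATE, "ms")
print("receptive field:", receptive_field(), "samples")
print("frames in 1 s:", num_frames(SAMPLE_RATE))
```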

BiCodec/config.yaml

ADDED

```diff
@@ -0,0 +1,66 @@
+audio_tokenizer:
+  mel_params:
+    sample_rate: 16000
+    n_fft: 1024
+    win_length: 640
+    hop_length: 320
+    mel_fmin: 10
+    mel_fmax: null
+    num_mels: 128
+
+  encoder:
+    input_channels: 1024
+    vocos_dim: 384
+    vocos_intermediate_dim: 2048
+    vocos_num_layers: 12
+    out_channels: 1024
+    sample_ratios: [1,1]
+
+  decoder:
+    input_channel: 1024
+    channels: 1536
+    rates: [8, 5, 4, 2]
+    kernel_sizes: [16,11,8,4]
+
+  quantizer:
+    input_dim: 1024
+    codebook_size: 8192
+    codebook_dim: 8
+    commitment: 0.25
+    codebook_loss_weight: 2.0
+    use_l2_normlize: True
+    threshold_ema_dead_code: 0.2
+
+  speaker_encoder:
+    input_dim: 128
+    out_dim: 1024
+    latent_dim: 128
+    token_num: 32
+    fsq_levels: [4, 4, 4, 4, 4, 4]
+    fsq_num_quantizers: 1
+
+  prenet:
+    input_channels: 1024
+    vocos_dim: 384
+    vocos_intermediate_dim: 2048
+    vocos_num_layers: 12
+    out_channels: 1024
+    condition_dim: 1024
+    sample_ratios: [1,1]
+    use_tanh_at_final: False
+
+  postnet:
+    input_channels: 1024
+    vocos_dim: 384
+    vocos_intermediate_dim: 2048
+    vocos_num_layers: 6
+    out_channels: 1024
+    use_tanh_at_final: False
+highpass_cutoff_freq: 40
+sample_rate: 16000
+segment_duration: 2.4 # (s)
+max_val_duration: 12 # (s)
+latent_hop_length: 320
+ref_segment_duration: 6
+volume_normalize: true
+
```
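Several numbers in this config line up:
- The mel `hop_length` and `latent_hop_length` are both 320 samples.
- The decoder's upsampling `rates` multiply to the same 320.
- So does the stride product (5 × 2⁶) of the wav2vec2 feature encoder in `BiCodec/config.json`.

Assume the decoder upsamples each latent frame by the product of its `rates`. Then one quantized token corresponds to 20 ms of 16 kHz audio.

The back-of-envelope sketch below derives four further quantities:
- the token rate;
- a nominal bitrate from `codebook_size`;
- the code space of each speaker-encoder token from `fsq_levels`, assuming the single FSQ quantizer combines all six levels into one code;
- the frame-aligned sample counts implied by the duration fields.

These are derived figures, not measurements reported by the repository.

```python
from math import log2, prod

# Values copied from BiCodec/config.yaml above.
SAMPLE_RATE = 16_000
LATENT_HOP_LENGTH = 320
MEL_HOP_LENGTH = 320
DECODER_RATES = [8, 5, 4, 2]
CODEBOOK_SIZE = 8192
FSQ_LEVELS = [4, 4, 4, 4, 4, 4]
GLOBAL_TOKEN_NUM = 32
SEGMENT_DURATION_S = 2.4
REF_SEGMENT_DURATION_S = 6

upsampling = prod(DECODER_RATES)                    # 8 * 5 * 4 * 2
tokens_per_second = SAMPLE_RATE / LATENT_HOP_LENGTH
bits_per_token = log2(CODEBOOK_SIZE)                # one index into the codebook
fsq_codes = prod(FSQ_LEVELS)                        # assumed values per global token


def frames_aligned_samples(seconds: float) -> int:
    """Nearest whole sample count, rounded down to whole latent frames."""
    return round(SAMPLE_RATE * seconds) // LATENT_HOP_LENGTH * LATENT_HOP_LENGTH


print("decoder upsampling:", upsampling)
print("tokens/s:", tokens_per_second)
print("nominal token bitrate:", tokens_per_second * bits_per_token, "bit/s")
print("FSQ codes per global token:", fsq_codes, "x", GLOBAL_TOKEN_NUM, "tokens")
print("segment:", frames_aligned_samples(SEGMENT_DURATION_S), "samples")
print("reference clip:", frames_aligned_samples(REF_SEGMENT_DURATION_S), "samples")
```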

BiCodec/model.safetensors

ADDED

```diff
@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:e9940cd48d4446e4340ced82d234bf5618350dd9f5db900ebe47a4fdb03867ec
+size 625518756
```
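This file, like `BiCodec/wav2vec2-large-xlsr-53/pytorch_model.bin` further down, is committed as a Git LFS pointer. A pointer is three `key value` lines:
- the spec version;
- the SHA-256 of the real object;
- its size in bytes (625,518,756 bytes, about 625.5 MB, here).

Below is a minimal sketch for checking a downloaded copy against such a pointer. The function names are illustrative. The file is hashed in chunks, so large weights are never read into memory at once.

```python
import hashlib
from pathlib import Path


def parse_lfs_pointer(text: str) -> dict:
    """Parse a Git LFS pointer ('key value' per line) into a dict."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    algo, _, digest = fields["oid"].partition(":")
    if algo != "sha256":
        raise ValueError(f"unexpected oid algorithm: {algo}")
    return {"version": fields["version"], "sha256": digest, "size": int(fields["size"])}


def verify_against_pointer(path: Path, pointer: dict, chunk_size: int = 1 << 20) -> bool:
    """Check the size first (cheap), then the streamed SHA-256."""
    if path.stat().st_size != pointer["size"]:
        return False
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest() == pointer["sha256"]


POINTER = """version https://git-lfs.github.com/spec/v1
oid sha256:e9940cd48d4446e4340ced82d234bf5618350dd9f5db900ebe47a4fdb03867ec
size 625518756
"""

if __name__ == "__main__":
    info = parse_lfs_pointer(POINTER)
    print(info["size"] / 1e6, "MB")
    # After downloading: verify_against_pointer(Path("BiCodec/model.safetensors"), info)
```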

BiCodec/preprocessor_config.json

ADDED

```diff
@@ -0,0 +1,9 @@
+{
+  "do_normalize": true,
+  "feature_extractor_type": "Wav2Vec2FeatureExtractor",
+  "feature_size": 1,
+  "padding_side": "right",
+  "padding_value": 0,
+  "return_attention_mask": true,
+  "sampling_rate": 16000
+}
```
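With `do_normalize: true`, `Wav2Vec2FeatureExtractor` standardizes each utterance to zero mean and unit variance before it reaches the model. With `return_attention_mask: true`, it also returns a mask, so right padding (`padding_side: right`, `padding_value: 0`) can be ignored. Input is expected at `sampling_rate` 16000.

The pure-Python sketch below mimics that behaviour for a batch of mono waveforms. Two caveats:
- The `1e-7` variance floor reflects our understanding of the library and should be treated as an assumption.
- `normalize_batch` is an illustrative name, not part of `transformers`.

```python
from math import sqrt
from typing import List, Tuple

EPS = 1e-7  # assumed variance floor


def zero_mean_unit_var(samples: List[float]) -> List[float]:
    """Standardize one utterance with its own mean and population variance."""
    n = len(samples)
    mean = sum(samples) / n
    var = sum((x - mean) ** 2 for x in samples) / n
    scale = sqrt(var + EPS)
    return [(x - mean) / scale for x in samples]


def normalize_batch(
    batch: List[List[float]], padding_value: float = 0.0
) -> Tuple[List[List[float]], List[List[int]]]:
    """Normalize each utterance over its true length, then right-pad.

    Statistics are computed before padding, so padded zeros never skew
    the mean or variance. Returns (input_values, attention_mask).
    """
    max_len = max(len(x) for x in batch)
    values, mask = [], []
    for x in batch:
        pad = max_len - len(x)
        values.append(zero_mean_unit_var(x) + [padding_value] * pad)
        mask.append([1] * len(x) + [0] * pad)
    return values, mask


values, mask = normalize_batch([[0.1, 0.3, -0.2, 0.4], [1.0, 2.0]])
```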

BiCodec/wav2vec2-large-xlsr-53/README.md

ADDED

```diff
@@ -0,0 +1,29 @@
+---
+language: multilingual
+datasets:
+- common_voice
+tags:
+- speech
+license: apache-2.0
+---
+
+# Wav2Vec2-XLSR-53
+
+[Facebook's XLSR-Wav2Vec2](https://ai.facebook.com/blog/wav2vec-20-learning-the-structure-of-speech-from-raw-audio/)
+
+The base model pretrained on 16kHz sampled speech audio. When using the model make sure that your speech input is also sampled at 16Khz. Note that this model should be fine-tuned on a downstream task, like Automatic Speech Recognition. Check out [this blog](https://huggingface.co/blog/fine-tune-wav2vec2-english) for more information.
+
+[Paper](https://arxiv.org/abs/2006.13979)
+
+Authors: Alexis Conneau, Alexei Baevski, Ronan Collobert, Abdelrahman Mohamed, Michael Auli
+
+**Abstract**
+
+This paper presents XLSR which learns cross-lingual speech representations by pretraining a single model from the raw waveform of speech in multiple languages. We build on wav2vec 2.0 which is trained by solving a contrastive task over masked latent speech representations and jointly learns a quantization of the latents shared across languages. The resulting model is fine-tuned on labeled data and experiments show that cross-lingual pretraining significantly outperforms monolingual pretraining. On the CommonVoice benchmark, XLSR shows a relative phoneme error rate reduction of 72% compared to the best known results. On BABEL, our approach improves word error rate by 16% relative compared to a comparable system. Our approach enables a single multilingual speech recognition model which is competitive to strong individual models. Analysis shows that the latent discrete speech representations are shared across languages with increased sharing for related languages. We hope to catalyze research in low-resource speech understanding by releasing XLSR-53, a large model pretrained in 53 languages.
+
+The original model can be found under https://github.com/pytorch/fairseq/tree/master/examples/wav2vec#wav2vec-20.
+
+# Usage
+
+See [this notebook](https://colab.research.google.com/github/patrickvonplaten/notebooks/blob/master/Fine_Tune_XLSR_Wav2Vec2_on_Turkish_ASR_with_%F0%9F%A4%97_Transformers.ipynb) for more information on how to fine-tune the model.
+
+
```

BiCodec/wav2vec2-large-xlsr-53/config.json

ADDED

```diff
@@ -0,0 +1,83 @@
+{
+  "activation_dropout": 0.0,
+  "apply_spec_augment": true,
+  "architectures": [
+    "Wav2Vec2ForPreTraining"
+  ],
+  "attention_dropout": 0.1,
+  "bos_token_id": 1,
+  "codevector_dim": 768,
+  "contrastive_logits_temperature": 0.1,
+  "conv_bias": true,
+  "conv_dim": [
+    512,
+    512,
+    512,
+    512,
+    512,
+    512,
+    512
+  ],
+  "conv_kernel": [
+    10,
+    3,
+    3,
+    3,
+    3,
+    2,
+    2
+  ],
+  "conv_stride": [
+    5,
+    2,
+    2,
+    2,
+    2,
+    2,
+    2
+  ],
+  "ctc_loss_reduction": "sum",
+  "ctc_zero_infinity": false,
+  "diversity_loss_weight": 0.1,
+  "do_stable_layer_norm": true,
+  "eos_token_id": 2,
+  "feat_extract_activation": "gelu",
+  "feat_extract_dropout": 0.0,
+  "feat_extract_norm": "layer",
+  "feat_proj_dropout": 0.1,
+  "feat_quantizer_dropout": 0.0,
+  "final_dropout": 0.0,
+  "gradient_checkpointing": false,
+  "hidden_act": "gelu",
+  "hidden_dropout": 0.1,
+  "hidden_size": 1024,
+  "initializer_range": 0.02,
+  "intermediate_size": 4096,
+  "layer_norm_eps": 1e-05,
+  "layerdrop": 0.1,
+  "mask_channel_length": 10,
+  "mask_channel_min_space": 1,
+  "mask_channel_other": 0.0,
+  "mask_channel_prob": 0.0,
+  "mask_channel_selection": "static",
+  "mask_feature_length": 10,
+  "mask_feature_prob": 0.0,
+  "mask_time_length": 10,
+  "mask_time_min_space": 1,
+  "mask_time_other": 0.0,
+  "mask_time_prob": 0.075,
+  "mask_time_selection": "static",
+  "model_type": "wav2vec2",
+  "num_attention_heads": 16,
+  "num_codevector_groups": 2,
+  "num_codevectors_per_group": 320,
+  "num_conv_pos_embedding_groups": 16,
+  "num_conv_pos_embeddings": 128,
+  "num_feat_extract_layers": 7,
+  "num_hidden_layers": 24,
+  "num_negatives": 100,
+  "pad_token_id": 0,
+  "proj_codevector_dim": 768,
+  "transformers_version": "4.7.0.dev0",
+  "vocab_size": 32
+}
```

BiCodec/wav2vec2-large-xlsr-53/preprocessor_config.json

ADDED

```diff
@@ -0,0 +1,9 @@
+{
+  "do_normalize": true,
+  "feature_extractor_type": "Wav2Vec2FeatureExtractor",
+  "feature_size": 1,
+  "padding_side": "right",
+  "padding_value": 0,
+  "return_attention_mask": true,
+  "sampling_rate": 16000
+}
```

BiCodec/wav2vec2-large-xlsr-53/pytorch_model.bin

ADDED

```diff
@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:314340227371a608f71adcd5f0de5933824fe77e55822aa4b24dba9c1c364dcb
+size 1269737156
```

added_tokens.json

ADDED

The diff for this file is too large to render.

chat_template.jinja

ADDED

```diff
@@ -0,0 +1,85 @@
+{%- if tools %}
+{{- '<|im_start|>system\n' }}
+{%- if messages[0].role == 'system' %}
+{{- messages[0].content + '\n\n' }}
+{%- endif %}
+{{- "# Tools\n\nYou may call one or more functions to assist with the user query.\n\nYou are provided with function signatures within <tools></tools> XML tags:\n<tools>" }}
+{%- for tool in tools %}
+{{- "\n" }}
+{{- tool | tojson }}
+{%- endfor %}
+{{- "\n</tools>\n\nFor each function call, return a json object with function name and arguments within <tool_call></tool_call> XML tags:\n<tool_call>\n{\"name\": <function-name>, \"arguments\": <args-json-object>}\n</tool_call><|im_end|>\n" }}
+{%- else %}
+{%- if messages[0].role == 'system' %}
+{{- '<|im_start|>system\n' + messages[0].content + '<|im_end|>\n' }}
+{%- endif %}
+{%- endif %}
+{%- set ns = namespace(multi_step_tool=true, last_query_index=messages|length - 1) %}
+{%- for message in messages[::-1] %}
+{%- set index = (messages|length - 1) - loop.index0 %}
+{%- if ns.multi_step_tool and message.role == "user" and not(message.content.startswith('<tool_response>') and message.content.endswith('</tool_response>')) %}
+{%- set ns.multi_step_tool = false %}
+{%- set ns.last_query_index = index %}
+{%- endif %}
+{%- endfor %}
+{%- for message in messages %}
+{%- if (message.role == "user") or (message.role == "system" and not loop.first) %}
+{{- '<|im_start|>' + message.role + '\n' + message.content + '<|im_end|>' + '\n' }}
+{%- elif message.role == "assistant" %}
+{%- set content = message.content %}
+{%- set reasoning_content = '' %}
+{%- if message.reasoning_content is defined and message.reasoning_content is not none %}
+{%- set reasoning_content = message.reasoning_content %}
+{%- else %}
+{%- if '</think>' in message.content %}
+{%- set content = message.content.split('</think>')[-1].lstrip('\n') %}
+{%- set reasoning_content = message.content.split('</think>')[0].rstrip('\n').split('<think>')[-1].lstrip('\n') %}
+{%- endif %}
+{%- endif %}
+{%- if loop.index0 > ns.last_query_index %}
+{%- if loop.last or (not loop.last and reasoning_content) %}
+{{- '<|im_start|>' + message.role + '\n<think>\n' + reasoning_content.strip('\n') + '\n</think>\n\n' + content.lstrip('\n') }}
+{%- else %}
+{{- '<|im_start|>' + message.role + '\n' + content }}
+{%- endif %}
+{%- else %}
+{{- '<|im_start|>' + message.role + '\n' + content }}
+{%- endif %}
+{%- if message.tool_calls %}
+{%- for tool_call in message.tool_calls %}
+{%- if (loop.first and content) or (not loop.first) %}
+{{- '\n' }}
+{%- endif %}
+{%- if tool_call.function %}
+{%- set tool_call = tool_call.function %}
+{%- endif %}
+{{- '<tool_call>\n{"name": "' }}
+{{- tool_call.name }}
+{{- '", "arguments": ' }}
+{%- if tool_call.arguments is string %}
+{{- tool_call.arguments }}
+{%- else %}
+{{- tool_call.arguments | tojson }}
+{%- endif %}
+{{- '}\n</tool_call>' }}
+{%- endfor %}
+{%- endif %}
+{{- '<|im_end|>\n' }}
+{%- elif message.role == "tool" %}
+{%- if loop.first or (messages[loop.index0 - 1].role != "tool") %}
+{{- '<|im_start|>user' }}
+{%- endif %}
+{{- '\n<tool_response>\n' }}
+{{- message.content }}
+{{- '\n</tool_response>' }}
+{%- if loop.last or (messages[loop.index0 + 1].role != "tool") %}
+{{- '<|im_end|>\n' }}
+{%- endif %}
+{%- endif %}
+{%- endfor %}
+{%- if add_generation_prompt %}
+{{- '<|im_start|>assistant\n' }}
+{%- if enable_thinking is defined and enable_thinking is false %}
+{{- '<think>\n\n</think>\n\n' }}
+{%- endif %}
+{%- endif %}
```
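The template is ChatML-style: each turn is wrapped in `<|im_start|>{role}\n ... <|im_end|>\n`. A few details are easy to miss:
- For assistant turns, it splits any inline `<think>...</think>` block out of `content`.
- It re-emits that reasoning only for assistant turns after the last genuine user query (`ns.last_query_index`).
- When `add_generation_prompt` is set and `enable_thinking` is `false`, it pre-fills an empty think block.

The Python sketch below mirrors only the no-tools path: no `tools`, no `tool_calls`, no `tool` messages. Its purpose is to make the string logic easy to inspect without Jinja. It is a hand translation for illustration, not a substitute for rendering the actual template, and the function names are hypothetical.

```python
from typing import Any, Dict, List, Optional, Tuple


def split_reasoning(content: str) -> Tuple[str, str]:
    """Mirror the template's '</think>' split; returns (reasoning, answer)."""
    if "</think>" not in content:
        return "", content
    answer = content.split("</think>")[-1].lstrip("\n")
    reasoning = content.split("</think>")[0].rstrip("\n").split("<think>")[-1].lstrip("\n")
    return reasoning, answer


def last_query_index(messages: List[Dict[str, Any]]) -> int:
    """Index of the last user turn that is not a wrapped <tool_response>."""
    for i in range(len(messages) - 1, -1, -1):
        c = messages[i]["content"]
        is_tool_echo = c.startswith("<tool_response>") and c.endswith("</tool_response>")
        if messages[i]["role"] == "user" and not is_tool_echo:
            return i
    return len(messages) - 1


def render_chatml(
    messages: List[Dict[str, Any]],
    add_generation_prompt: bool = False,
    enable_thinking: Optional[bool] = None,
) -> str:
    """Hand translation of the template's no-tools branch (illustrative only)."""
    out: List[str] = []
    if messages and messages[0]["role"] == "system":
        out.append("<|im_start|>system\n" + messages[0]["content"] + "<|im_end|>\n")
    cutoff = last_query_index(messages)
    for i, m in enumerate(messages):
        role, content = m["role"], m["content"]
        if role == "user" or (role == "system" and i > 0):
            out.append(f"<|im_start|>{role}\n{content}<|im_end|>\n")
        elif role == "assistant":
            if m.get("reasoning_content") is not None:
                reasoning = m["reasoning_content"]
            else:
                reasoning, content = split_reasoning(content)
            is_last = i == len(messages) - 1
            # Reasoning is kept only for turns after the last real user query.
            if i > cutoff and (is_last or reasoning):
                out.append(
                    "<|im_start|>assistant\n<think>\n" + reasoning.strip("\n")
                    + "\n</think>\n\n" + content.lstrip("\n")
                )
            else:
                out.append("<|im_start|>assistant\n" + content)
            out.append("<|im_end|>\n")
        elif role == "tool":
            raise NotImplementedError("tool turns are outside this sketch")
    if add_generation_prompt:
        out.append("<|im_start|>assistant\n")
        if enable_thinking is False:
            out.append("<think>\n\n</think>\n\n")
    return "".join(out)
```

For example, `render_chatml([{"role": "user", "content": "Hi"}], add_generation_prompt=True, enable_thinking=False)` ends with the pre-filled empty `<think>` block. That block is how this template turns thinking off for the next reply.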

config.json

ADDED

```diff
@@ -0,0 +1,64 @@
+{
+  "architectures": [
+    "Qwen3ForCausalLM"
+  ],
+  "auto_map": {
+    "AutoConfig": "configuration_qwen3.Qwen3Config",
+    "AutoModelForCausalLM": "modeling_qwen3.Qwen3ForCausalLM"
+  },
+  "attention_bias": false,
+  "attention_dropout": 0.0,
+  "dtype": "bfloat16",
+  "eos_token_id": 151643,
+  "head_dim": 128,
+  "hidden_act": "silu",
+  "hidden_size": 512,
+  "initializer_range": 0.02,
+  "intermediate_size": 3072,
+  "layer_types": [
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention"
+  ],
+  "max_position_embeddings": 32768,
+  "max_window_layers": 28,
+  "model_type": "qwen3",
+  "num_attention_heads": 16,
+  "num_hidden_layers": 28,
+  "num_key_value_heads": 8,
+  "pad_token_id": 151643,
+  "rms_norm_eps": 1e-06,
+  "rope_scaling": null,
+  "rope_theta": 1000000,
+  "sliding_window": null,
+  "tie_word_embeddings": true,
+  "transformers_version": "4.57.3",
+  "use_cache": false,
+  "use_sliding_window": false,
+  "vocab_size": 180445
+}
```
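For a sense of scale, the sketch below estimates the parameter count and per-token KV-cache size implied by this config.

It assumes the standard Hugging Face Qwen3 decoder layout:
- bias-free q/k/v/o projections (`attention_bias: false`);
- per-head RMSNorm on queries and keys;
- a gated MLP with gate/up/down projections;
- two RMSNorms per layer plus a final norm;
- an output head tied to the embeddings (`tie_word_embeddings: true`).

The repository ships its own `modeling_qwen3.py`. If that file departs from the standard layout, these estimates will be off too.

Two further points about the shape:
- Attention runs at `num_attention_heads * head_dim` = 2048 dimensions, wider than `hidden_size` 512.
- The 16 query heads share 8 key/value heads (grouped-query attention).

The helper names are illustrative.

```python
# Values copied from config.json above.
cfg = {
    "hidden_size": 512,
    "intermediate_size": 3072,
    "num_hidden_layers": 28,
    "num_attention_heads": 16,
    "num_key_value_heads": 8,
    "head_dim": 128,
    "vocab_size": 180445,
    "tie_word_embeddings": True,
    "layer_types": ["full_attention"] * 28,
}


def estimate_params(c: dict) -> int:
    """Parameter count under the assumed standard Qwen3 layout."""
    h, d = c["hidden_size"], c["head_dim"]
    q_dim = c["num_attention_heads"] * d
    kv_dim = c["num_key_value_heads"] * d
    attn = h * q_dim + 2 * h * kv_dim + q_dim * h + 2 * d  # q, k, v, o + q_norm, k_norm
    mlp = 3 * h * c["intermediate_size"]                   # gate, up, down
    norms = 2 * h                                          # input + post-attention RMSNorm
    per_layer = attn + mlp + norms
    embed = c["vocab_size"] * h
    lm_head = 0 if c["tie_word_embeddings"] else embed
    return embed + c["num_hidden_layers"] * per_layer + h + lm_head  # + final norm


def kv_cache_bytes_per_token(c: dict, bytes_per_value: int = 2) -> int:
    """Keys and values for every layer and KV head; 2 bytes for bfloat16."""
    return 2 * c["num_hidden_layers"] * c["num_key_value_heads"] * c["head_dim"] * bytes_per_value


assert len(cfg["layer_types"]) == cfg["num_hidden_layers"]
n = estimate_params(cfg)
print(f"~{n / 1e6:.1f}M parameters, ~{2 * n / 1e6:.0f} MB in bfloat16")
print("KV cache per token:", kv_cache_bytes_per_token(cfg), "bytes")
print("query heads per KV head:", cfg["num_attention_heads"] // cfg["num_key_value_heads"])
```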

config.json.bak

ADDED

```diff
@@ -0,0 +1,64 @@
+{
+  "architectures": [
+    "Qwen3ForCausalLM"
+  ],
+  "auto_map": {
+    "AutoConfig": "configuration_qwen3.Qwen3Config",
+    "AutoModelForCausalLM": "modeling_qwen3.Qwen3ForCausalLM"
+  },
+  "attention_bias": false,
+  "attention_dropout": 0.0,
+  "dtype": "bfloat16",
+  "eos_token_id": 151643,
+  "head_dim": 128,
+  "hidden_act": "silu",
+  "hidden_size": 512,
+  "initializer_range": 0.02,
+  "intermediate_size": 3072,
+  "layer_types": [
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention"
+  ],
+  "max_position_embeddings": 32768,
+  "max_window_layers": 28,
+  "model_type": "qwen3",
+  "num_attention_heads": 16,
+  "num_hidden_layers": 28,
+  "num_key_value_heads": 8,
+  "pad_token_id": 151643,
+  "rms_norm_eps": 1e-06,
+  "rope_scaling": null,
+  "rope_theta": 1000000,
+  "sliding_window": null,
+  "tie_word_embeddings": true,
+  "transformers_version": "4.57.3",
+  "use_cache": false,
+  "use_sliding_window": false,
+  "vocab_size": 180445
+}
```
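As rendered in this commit, `config.json.bak` is identical to `config.json`. If the backup is meant to preserve an earlier state, it is worth confirming whether anything actually differs.

A minimal sketch follows: load both files and compare them key by key. The file names come from this commit. `diff_configs` and `diff_config_files` are illustrative helpers.

```python
import json
from pathlib import Path
from typing import Any, Dict, Tuple

MISSING = "<missing>"  # placeholder shown when a key exists on one side only


def diff_configs(a: Dict[str, Any], b: Dict[str, Any]) -> Dict[str, Tuple[Any, Any]]:
    """Return {key: (value_in_a, value_in_b)} for every key whose values differ."""
    return {
        k: (a.get(k, MISSING), b.get(k, MISSING))
        for k in sorted(a.keys() | b.keys())
        if a.get(k, MISSING) != b.get(k, MISSING)
    }


def diff_config_files(path_a: str = "config.json", path_b: str = "config.json.bak") -> Dict[str, Tuple[Any, Any]]:
    """Load two JSON config files and diff their top-level keys."""
    a = json.loads(Path(path_a).read_text())
    b = json.loads(Path(path_b).read_text())
    return diff_configs(a, b)
```

An empty result from `diff_config_files()` means the backup carries no extra information.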

|

configuration_qwen3.py

ADDED

|

@@ -0,0 +1,226 @@

# coding=utf-8
# Copyright 2024 The Qwen team, Alibaba Group and the HuggingFace Inc. team. All rights reserved.
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
#     http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.
"""Qwen3 model configuration"""

from transformers.configuration_utils import PretrainedConfig, layer_type_validation
from transformers.modeling_rope_utils import rope_config_validation
from transformers.utils import logging


logger = logging.get_logger(__name__)


class Qwen3Config(PretrainedConfig):
    r"""
    This is the configuration class to store the configuration of a [`Qwen3Model`]. It is used to instantiate a
    Qwen3 model according to the specified arguments, defining the model architecture. Instantiating a configuration
    with the defaults will yield a similar configuration to that of
    Qwen3-8B [Qwen/Qwen3-8B](https://huggingface.co/Qwen/Qwen3-8B).

    Configuration objects inherit from [`PretrainedConfig`] and can be used to control the model outputs. Read the
    documentation from [`PretrainedConfig`] for more information.


    Args:
        vocab_size (`int`, *optional*, defaults to 151936):
            Vocabulary size of the Qwen3 model. Defines the number of different tokens that can be represented by the
            `inputs_ids` passed when calling [`Qwen3Model`]
        hidden_size (`int`, *optional*, defaults to 4096):
            Dimension of the hidden representations.
        intermediate_size (`int`, *optional*, defaults to 22016):
            Dimension of the MLP representations.
        num_hidden_layers (`int`, *optional*, defaults to 32):
            Number of hidden layers in the Transformer encoder.
        num_attention_heads (`int`, *optional*, defaults to 32):
            Number of attention heads for each attention layer in the Transformer encoder.
        num_key_value_heads (`int`, *optional*, defaults to 32):
            This is the number of key_value heads that should be used to implement Grouped Query Attention. If
            `num_key_value_heads=num_attention_heads`, the model will use Multi Head Attention (MHA), if
            `num_key_value_heads=1` the model will use Multi Query Attention (MQA) otherwise GQA is used. When
            converting a multi-head checkpoint to a GQA checkpoint, each group key and value head should be constructed
            by meanpooling all the original heads within that group. For more details, check out [this
            paper](https://huggingface.co/papers/2305.13245). If it is not specified, will default to `32`.
        head_dim (`int`, *optional*, defaults to 128):
            The attention head dimension.
        hidden_act (`str` or `function`, *optional*, defaults to `"silu"`):
            The non-linear activation function (function or string) in the decoder.
        max_position_embeddings (`int`, *optional*, defaults to 32768):
            The maximum sequence length that this model might ever be used with.
        initializer_range (`float`, *optional*, defaults to 0.02):
            The standard deviation of the truncated_normal_initializer for initializing all weight matrices.
        rms_norm_eps (`float`, *optional*, defaults to 1e-06):
            The epsilon used by the rms normalization layers.
        use_cache (`bool`, *optional*, defaults to `True`):
            Whether or not the model should return the last key/values attentions (not used by all models). Only
            relevant if `config.is_decoder=True`.
        tie_word_embeddings (`bool`, *optional*, defaults to `False`):
            Whether the model's input and output word embeddings should be tied.
        rope_theta (`float`, *optional*, defaults to 10000.0):
            The base period of the RoPE embeddings.
        rope_scaling (`Dict`, *optional*):
            Dictionary containing the scaling configuration for the RoPE embeddings. NOTE: if you apply new rope type
            and you expect the model to work on longer `max_position_embeddings`, we recommend you to update this value
            accordingly.
            Expected contents:
                `rope_type` (`str`):
                    The sub-variant of RoPE to use. Can be one of ['default', 'linear', 'dynamic', 'yarn', 'longrope',
                    'llama3'], with 'default' being the original RoPE implementation.
                `factor` (`float`, *optional*):
                    Used with all rope types except 'default'. The scaling factor to apply to the RoPE embeddings. In
                    most scaling types, a `factor` of x will enable the model to handle sequences of length x *
                    original maximum pre-trained length.
                `original_max_position_embeddings` (`int`, *optional*):
                    Used with 'dynamic', 'longrope' and 'llama3'. The original max position embeddings used during
                    pretraining.
                `attention_factor` (`float`, *optional*):
                    Used with 'yarn' and 'longrope'. The scaling factor to be applied on the attention
                    computation. If unspecified, it defaults to value recommended by the implementation, using the
                    `factor` field to infer the suggested value.
                `beta_fast` (`float`, *optional*):
                    Only used with 'yarn'. Parameter to set the boundary for extrapolation (only) in the linear
                    ramp function. If unspecified, it defaults to 32.
                `beta_slow` (`float`, *optional*):
                    Only used with 'yarn'. Parameter to set the boundary for interpolation (only) in the linear
                    ramp function. If unspecified, it defaults to 1.
                `short_factor` (`list[float]`, *optional*):
                    Only used with 'longrope'. The scaling factor to be applied to short contexts (<
                    `original_max_position_embeddings`). Must be a list of numbers with the same length as the hidden
                    size divided by the number of attention heads divided by 2
                `long_factor` (`list[float]`, *optional*):
                    Only used with 'longrope'. The scaling factor to be applied to long contexts (<
                    `original_max_position_embeddings`). Must be a list of numbers with the same length as the hidden
                    size divided by the number of attention heads divided by 2
                `low_freq_factor` (`float`, *optional*):
                    Only used with 'llama3'. Scaling factor applied to low frequency components of the RoPE
                `high_freq_factor` (`float`, *optional*):
                    Only used with 'llama3'. Scaling factor applied to high frequency components of the RoPE
        attention_bias (`bool`, defaults to `False`, *optional*, defaults to `False`):
            Whether to use a bias in the query, key, value and output projection layers during self-attention.
        use_sliding_window (`bool`, *optional*, defaults to `False`):
            Whether to use sliding window attention.
        sliding_window (`int`, *optional*, defaults to 4096):
            Sliding window attention (SWA) window size. If not specified, will default to `4096`.
        max_window_layers (`int`, *optional*, defaults to 28):
            The number of layers using full attention. The first `max_window_layers` layers will use full attention, while any
            additional layer afterwards will use SWA (Sliding Window Attention).
        layer_types (`list`, *optional*):
            Attention pattern for each layer.
        attention_dropout (`float`, *optional*, defaults to 0.0):
            The dropout ratio for the attention probabilities.

    ```python
    >>> from transformers import Qwen3Model, Qwen3Config

    >>> # Initializing a Qwen3 style configuration
    >>> configuration = Qwen3Config()

    >>> # Initializing a model from the Qwen3-8B style configuration
    >>> model = Qwen3Model(configuration)

    >>> # Accessing the model configuration
    >>> configuration = model.config
    ```"""

    model_type = "qwen3"
    keys_to_ignore_at_inference = ["past_key_values"]

    # Default tensor parallel plan for base model `Qwen3`
    base_model_tp_plan = {
        "layers.*.self_attn.q_proj": "colwise",
        "layers.*.self_attn.k_proj": "colwise",
        "layers.*.self_attn.v_proj": "colwise",
        "layers.*.self_attn.o_proj": "rowwise",
        "layers.*.mlp.gate_proj": "colwise",
        "layers.*.mlp.up_proj": "colwise",
        "layers.*.mlp.down_proj": "rowwise",
    }
    base_model_pp_plan = {
        "embed_tokens": (["input_ids"], ["inputs_embeds"]),
        "layers": (["hidden_states", "attention_mask"], ["hidden_states"]),
        "norm": (["hidden_states"], ["hidden_states"]),
    }

    def __init__(
        self,
        vocab_size=151936,
        hidden_size=4096,
        intermediate_size=22016,
        num_hidden_layers=32,
        num_attention_heads=32,
        num_key_value_heads=32,
        head_dim=128,
        hidden_act="silu",
        max_position_embeddings=32768,
        initializer_range=0.02,
        rms_norm_eps=1e-6,
        use_cache=True,
        tie_word_embeddings=False,
        rope_theta=10000.0,
        rope_scaling=None,
        attention_bias=False,
        use_sliding_window=False,
        sliding_window=4096,
        max_window_layers=28,
        layer_types=None,
        attention_dropout=0.0,
        **kwargs,
    ):
        self.vocab_size = vocab_size
        self.max_position_embeddings = max_position_embeddings
        self.hidden_size = hidden_size
        self.intermediate_size = intermediate_size
        self.num_hidden_layers = num_hidden_layers
        self.num_attention_heads = num_attention_heads
        self.use_sliding_window = use_sliding_window
        self.sliding_window = sliding_window if self.use_sliding_window else None
        self.max_window_layers = max_window_layers

        # for backward compatibility
        if num_key_value_heads is None:
            num_key_value_heads = num_attention_heads

        self.num_key_value_heads = num_key_value_heads
        self.head_dim = head_dim
        self.hidden_act = hidden_act
        self.initializer_range = initializer_range
        self.rms_norm_eps = rms_norm_eps
        self.use_cache = use_cache
        self.rope_theta = rope_theta
        self.rope_scaling = rope_scaling
        self.attention_bias = attention_bias
        self.attention_dropout = attention_dropout
        # Validate the correctness of rotary position embeddings parameters
        # BC: if there is a 'type' field, move it to 'rope_type'.
        if self.rope_scaling is not None and "type" in self.rope_scaling:
            self.rope_scaling["rope_type"] = self.rope_scaling["type"]
        rope_config_validation(self)

        self.layer_types = layer_types
        if self.layer_types is None:
            self.layer_types = [
                "sliding_attention"
                if self.sliding_window is not None and i >= self.max_window_layers
                else "full_attention"
                for i in range(self.num_hidden_layers)
            ]
        layer_type_validation(self.layer_types, self.num_hidden_layers)

        super().__init__(
            tie_word_embeddings=tie_word_embeddings,
            **kwargs,
        )


__all__ = ["Qwen3Config"]
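The `__init__` in configuration_qwen3.py derives two values that are easy to misread from config.json alone: the default `layer_types` list and the legacy `type` key in `rope_scaling`. The following sketch re-implements just those two steps in plain Python so they can be checked without installing transformers. The function names are hypothetical. Unlike `Qwen3Config`, the sketch returns a new dict instead of mutating `rope_scaling` in place. It also skips `rope_config_validation` and `layer_type_validation`.

```python
from typing import Optional


def default_layer_types(num_hidden_layers: int,
                        use_sliding_window: bool,
                        sliding_window: Optional[int],
                        max_window_layers: int) -> list:
    """Re-derive Qwen3Config's default layer_types without importing transformers."""
    # Qwen3Config sets sliding_window to None unless use_sliding_window is True.
    window = sliding_window if use_sliding_window else None
    return [
        "sliding_attention" if window is not None and i >= max_window_layers
        else "full_attention"
        for i in range(num_hidden_layers)
    ]


def normalize_rope_scaling(rope_scaling: Optional[dict]) -> Optional[dict]:
    """Copy a legacy 'type' key into 'rope_type' (returns a new dict)."""
    if rope_scaling is not None and "type" in rope_scaling:
        return {**rope_scaling, "rope_type": rope_scaling["type"]}
    return rope_scaling


# This commit's config.json: 28 layers, use_sliding_window=false, sliding_window=null.
# max_window_layers is not in the visible excerpt; 28 is the Qwen3Config default.
print(set(default_layer_types(28, False, None, 28)))  # {'full_attention'}

# Hypothetical 4-layer model with sliding windows after the first 2 layers.
print(default_layer_types(4, True, 4096, 2))
# ['full_attention', 'full_attention', 'sliding_attention', 'sliding_attention']

# Hypothetical legacy rope_scaling dict that still uses "type".
print(normalize_rope_scaling({"type": "yarn", "factor": 4.0}))
# {'type': 'yarn', 'factor': 4.0, 'rope_type': 'yarn'}
```

For this checkpoint, every layer comes out as `full_attention`. Because `use_sliding_window` is false, the window is discarded, and `max_window_layers` has no effect.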

generation_config.json

ADDED


@@ -0,0 +1,8 @@

{
  "_from_model_config": true,
  "eos_token_id": [
    151643
  ],
  "pad_token_id": 151643,
  "transformers_version": "4.57.3"
}
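generation_config.json sets both `eos_token_id` and `pad_token_id` to 151643, which matches the `pad_token_id` in config.json. The toy loop below illustrates how such values are typically consumed. Generation stops once a token in the EOS list is produced, and finished sequences are then right-padded. This is a hypothetical sketch, not the transformers `generate` implementation. `toy_generate`, `right_pad`, and the scripted token stream are illustrative only.

```python
from typing import Callable, List, Sequence

# Values from this commit's generation_config.json.
EOS_TOKEN_IDS = [151643]
PAD_TOKEN_ID = 151643


def toy_generate(step: Callable[[List[int]], int],
                 prompt: List[int],
                 max_new_tokens: int,
                 eos_token_ids: Sequence[int] = EOS_TOKEN_IDS) -> List[int]:
    """Append tokens from `step` until one is in eos_token_ids or the budget is spent."""
    ids = list(prompt)
    for _ in range(max_new_tokens):
        token = step(ids)
        ids.append(token)
        if token in eos_token_ids:
            break
    return ids


def right_pad(batch: List[List[int]], pad_token_id: int = PAD_TOKEN_ID) -> List[List[int]]:
    """Pad sequences on the right to a common length with pad_token_id."""
    width = max(len(seq) for seq in batch)
    return [seq + [pad_token_id] * (width - len(seq)) for seq in batch]


# Hypothetical stand-in for a model: emits 7, 8, then the EOS id.
scripted = iter([7, 8, 151643, 9])
seq = toy_generate(lambda ids: next(scripted), prompt=[1, 2], max_new_tokens=10)
print(seq)                      # [1, 2, 7, 8, 151643]
print(right_pad([seq, [1, 2]]))
# [[1, 2, 7, 8, 151643], [1, 2, 151643, 151643, 151643]]
```

Here the pad id equals the EOS id, so padding cannot be told apart from a genuine end-of-sequence token by id alone. An attention mask or the sequence lengths must carry that information.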

glm-4-voice-tokenizer/.mdl

ADDED

Binary file (52 Bytes).

glm-4-voice-tokenizer/.msc

ADDED

Binary file (440 Bytes).

glm-4-voice-tokenizer/.mv

ADDED


@@ -0,0 +1 @@

Revision:master,CreatedAt:1729826962

glm-4-voice-tokenizer/LICENSE

ADDED


@@ -0,0 +1,70 @@
