text1 stringlengths 2 269k | text2 stringlengths 2 242k | label int64 0 1 |

|---|---|---|

Why is this margin needed?

bootstrap.css (line 5648)

body.modal-open,

.modal-open .navbar-fixed-top,

.modal-open .navbar-fixed-bottom {

margin-right: 15px;

}

|

When launching the modal component

(http://getbootstrap.com/javascript/#modals) the entire content moves slightly

to the left on macOS (I haven't tried it on Windows yet). With the modal

active, the scrollbar seems to disappear, while the content width still changes.

You can observe the problem on the Bootstrap page.

| 1 |

Maybe I'm missing something in the debug UI experience but I see no way to add

a condition to a function breakpoint even though the debug protocol supports

it.

|

* VSCode Version: 1.1.1

* OS Version: El Capitan (10.11.5)

How can I remove those ugly brackets in the menu? Are they shortcut hints? They

are of no use to me at all.

| 0 |

Cast renders an anonymous alias contrary to the documentation

The example used in the documentation

from sqlalchemy import cast, Numeric, select

stmt = select([

cast(product_table.c.unit_price, Numeric(10, 4))

])

renders as

SELECT CAST(product_table.unit_price AS NUMERIC(10, 4)) AS anon_1 FROM product_table

instead of

SELECT CAST(unit_price AS NUMERIC(10, 4)) FROM product

I've tried sqlalchemy version 1.2.7 and 1.2.16 and both return the same result

|

We're having some issues with `back_populates` of relationships referring to

other relationships with `viewonly=True`. We get duplicates in the

relationship list that the `back_populates` refers to. Test case:

import sqlalchemy

from sqlalchemy import Column

from sqlalchemy import ForeignKey

from sqlalchemy import Integer

from sqlalchemy import String

from sqlalchemy.ext.declarative import declarative_base

from sqlalchemy.orm import attributes

from sqlalchemy.orm import relationship

Base = declarative_base()

class User(Base):

__tablename__ = 'user'

id = Column(Integer, primary_key=True)

name = Column(String)

boston_addresses = relationship(

"Address",

# primaryjoin="and_(User.id==Address.user_id, Address.city=='Boston')", # Not necessary but shows the use case

viewonly=True) # Setting viewonly = False prevents the issue

class Address(Base):

__tablename__ = 'address'

id = Column(Integer, primary_key=True)

user_id = Column(Integer, ForeignKey('user.id'))

user = relationship('User', back_populates='boston_addresses')

city = Column(String)

def main():

session = setup_database_and_session()

user = User()

session.add(user)

session.commit()

# user.boston_addresses # Reading the value here prevents the issue

address = Address(user=user, city='Boston')

actual = user.boston_addresses

expected = [address]

assert actual == expected, f"{actual} != {expected}"

def setup_database_and_session():

engine = sqlalchemy.create_engine("sqlite://")

session_maker = sqlalchemy.orm.sessionmaker(bind=engine)

session = session_maker()

Base.metadata.create_all(engine)

return session

if __name__ == "__main__":

main()

Result:

AssertionError: [<__main__.Address object at 0x10d95aeb8>, <__main__.Address object at 0x10d95aeb8>] != [<__main__.Address object at 0x10d95aeb8>]

| 0 |

This bug-tracker is monitored by Windows Console development team and other

technical types. **We like detail!**

If you have a feature request, please post to the UserVoice.

> **Important: When reporting BSODs or security issues, DO NOT attach memory

> dumps, logs, or traces to Github issues**. Instead, send dumps/traces to

> secure@microsoft.com, referencing this GitHub issue.

Please use this form and describe your issue, concisely but precisely, with as

much detail as possible

* Your Windows build number: 10.0.18362.30

* What you're doing and what's happening: Resize the terminal window to minimum, then resize the window to normal, the text will disappear.

### The normal window size

### Resize it to minimum

### Then resize to normal, the text disappears

### Scroll up the window

* What's wrong / what should be happening instead: I don't know. Is this a bug?

|

When we paste anything into an Ubuntu instance in Windows Terminal while running

a command such as **vim abc.txt**, it automatically inserts a new line after each

line and eats some characters from the beginning of the pasted text.

Here is the screenshot: https://i.imgur.com/pJ0Fsbw.png

The text copied to my clipboard was:

Line 1

Line 2

Line 3

Line 4

Line 5

Line 6

Line 7

I ran command: **vim abc.txt**

And after paste, it became

ne 1

Line 2

Line 3

Line 4

Line 5

Line 6

Line 7

| 0 |

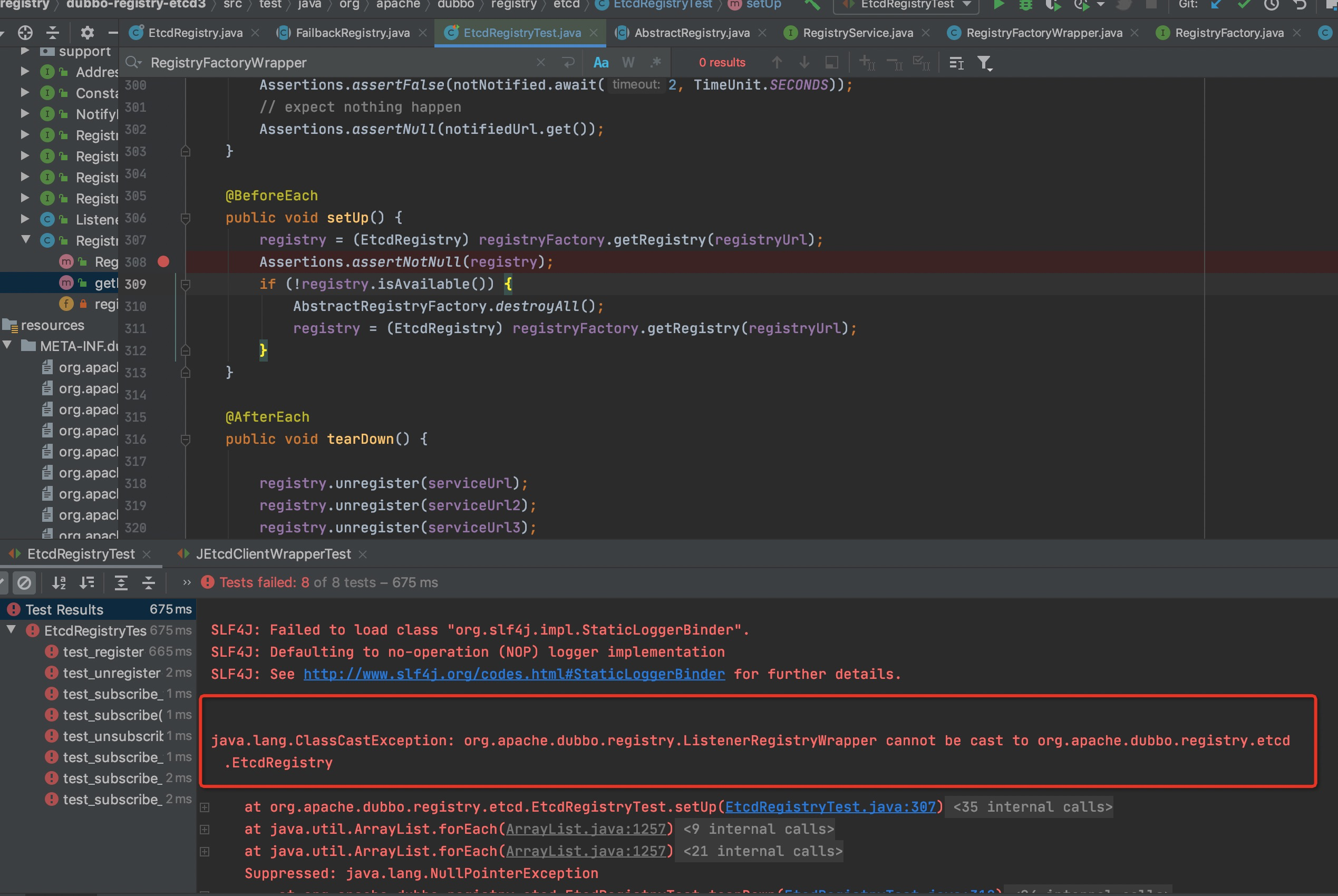

* I have searched the issues of this repository and believe that this is not a duplicate.

* I have checked the FAQ of this repository and believe that this is not a duplicate.

### Environment

* Dubbo version: 2.5.10

* Operating System version: windows7

* Java version: jdk1.8

When I use dubbo-2.5.10 to start my application, the log shows:

[DUBBO] Using select timeout of 500, dubbo version: 2.0.1, current host: 192.168.1.18

That is, the boot log of dubbo-2.5.10 reports version 2.0.1,

but with Dubbo 2.6.x there is no problem.

When I compared the MANIFEST.MF files of 2.5.10 and 2.6.2, I found the following:

dubbo-2.5.10 MANIFEST.MF file

Manifest-Version: 1.0

Implementation-Vendor: The Dubbo Project

Implementation-Title: Dubbo

Implementation-Version: 2.0.1

Implementation-Vendor-Id: com.alibaba

Built-By: ken.lj

Build-Jdk: 1.7.0_80

Specification-Vendor: The Dubbo Project

Specification-Title: Dubbo

Created-By: Apache Maven 3.1.1

Specification-Version: 2.0.0

Archiver-Version: Plexus Archiver

whereas the dubbo-2.6.2 MANIFEST.MF file contains

Manifest-Version: 1.0

Implementation-Vendor: The Apache Software Foundation

Implementation-Title: dubbo-all

Implementation-Version: 2.6.2

Implementation-Vendor-Id: com.alibaba

Built-By: ken.lj

Build-Jdk: 1.7.0_80

Specification-Vendor: The Apache Software Foundation

Specification-Title: dubbo-all

Created-By: Apache Maven 3.5.0

Implementation-URL: https://github.com/apache/incubator-dubbo/dubbo

Specification-Version: 2.6

and the source code of

com.alibaba.dubbo.common.logger.support.FailsafeLogger

package com.alibaba.dubbo.common.logger.support;

import com.alibaba.dubbo.common.Version;

import com.alibaba.dubbo.common.logger.Logger;

import com.alibaba.dubbo.common.utils.NetUtils;

public class FailsafeLogger implements Logger {

private Logger logger;

public FailsafeLogger(Logger logger) {

this.logger = logger;

}

public Logger getLogger() {

return logger;

}

public void setLogger(Logger logger) {

this.logger = logger;

}

private String appendContextMessage(String msg) {

return " [DUBBO] " + msg + ", dubbo version: " + Version.getVersion() + ", current host: " + NetUtils.getLogHost();

}

.... other code

source code of com.alibaba.dubbo.common.Version

public static String getVersion(Class<?> cls, String defaultVersion) {

try {

// First look up the version number in the MANIFEST.MF specification

String version = cls.getPackage().getImplementationVersion();

if (version == null || version.length() == 0) {

version = cls.getPackage().getSpecificationVersion();

}

if (version == null || version.length() == 0) {

// If the manifest has no version number, derive it from the jar file name

CodeSource codeSource = cls.getProtectionDomain().getCodeSource();

if(codeSource == null) {

logger.info("No codeSource for class " + cls.getName() + " when getVersion, use default version " + defaultVersion);

}

else {

String file = codeSource.getLocation().getFile();

if (file != null && file.length() > 0 && file.endsWith(".jar")) {

file = file.substring(0, file.length() - 4);

int i = file.lastIndexOf('/');

if (i >= 0) {

file = file.substring(i + 1);

}

i = file.indexOf("-");

if (i >= 0) {

file = file.substring(i + 1);

}

while (file.length() > 0 && ! Character.isDigit(file.charAt(0))) {

i = file.indexOf("-");

if (i >= 0) {

file = file.substring(i + 1);

} else {

break;

}

}

version = file;

}

}

}

// Return the version number; if it is empty, return the default version

return version == null || version.length() == 0 ? defaultVersion : version;

} catch (Throwable e) { // defensive fault tolerance

// Ignore the exception and return the default version

logger.error("return default version, ignore exception " + e.getMessage(), e);

return defaultVersion;

}

}

Why does the dubbo-2.5.x boot log output 2.0.1? Can this be fixed?
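The lookup order in the quoted `Version.getVersion` can be sketched in Python to show why the manifest value wins over the jar name. This is only an illustrative port, not Dubbo code: the function names `version_from_jar_path` and `resolve_version`, the dictionary standing in for the manifest, and the `/libs/...` paths are all assumptions made for the example.

```python
def version_from_jar_path(path):
    """File-name fallback: strip '.jar', the directory, and leading non-numeric name parts."""
    if not path.endswith(".jar"):
        return None
    name = path[:-4].rsplit("/", 1)[-1]
    # Drop everything up to and including the first '-'.
    if "-" in name:
        name = name.split("-", 1)[1]
    # Keep dropping '-'-separated parts until the remainder starts with a digit.
    while name and not name[0].isdigit():
        if "-" not in name:
            break
        name = name.split("-", 1)[1]
    return name


def resolve_version(manifest, jar_path, default_version):
    """Prefer Implementation-Version, then Specification-Version, then the jar name."""
    version = manifest.get("Implementation-Version") or manifest.get("Specification-Version")
    if not version:
        version = version_from_jar_path(jar_path)
    return version or default_version


# Manifest values taken from the dubbo-2.5.10 MANIFEST.MF quoted above.
manifest_2_5_10 = {"Implementation-Version": "2.0.1", "Specification-Version": "2.0.0"}
print(resolve_version(manifest_2_5_10, "/libs/dubbo-2.5.10.jar", "2.0.0"))  # 2.0.1
print(resolve_version({}, "/libs/dubbo-2.5.10.jar", "2.0.0"))               # 2.5.10
```

Under these assumptions, the jar name is consulted only when the manifest carries no version at all, which would be consistent with the 2.5.10 jar reporting the `Implementation-Version: 2.0.1` from its manifest.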

|

# Weekly Report of Dubbo

This is a weekly report of Dubbo. It summarizes what has changed in the

project during the past week, including merged PRs, new contributors, and

more things to come.

It is all done by @dubbo-bot, which is a collaboration robot.

## Repo Overview

### Basic data

Basic data shows how the watch, star, fork and contributor counts changed in

the past week.

Watch | Star | Fork | Contributors
---|---|---|---
3200 | 24432 (↑79) | 13832 (↑61) | 175 (↑2)

### Issues & PRs

Issues & PRs shows the number of new/closed issues and pull requests in the past

week.

New Issues | Closed Issues | New PR | Merged PR
---|---|---|---
17 | 31 | 23 | 15

## PR Overview

Thanks to contributions from community, Dubbo team merged **15** pull requests

in the repository last week. They are:

* Apache parent pom version is updated to 21. (#3470)

* possibly bug fix (#3460)

* extract method to cache default extension name (#3456)

* [Dubbo-3237]fix connectionMonitor in RestProtocol seems not work #3237 (#3455)

* extract 2 methods: isSetter and getSetterProperty (#3453)

* Bugfix/timeout queue full (#3451)

* fix #2619: is there a problem in NettyBackedChannelBuffer.setBytes(...)? (#3448)

* Add delay export test case (#3447)

* remove duplicated unused method and move unit test (#3446)

* Add checkstyle rule for redundant import (#3444)

* Update junit to 5.4.0 release version (#3441)

* remove duplicated import (#3440)

* [Dubbo-Container] Fix Enhance the java doc of dubbo-container module (#3437)

* refactor javassist compiler: extract class CtClassBuilder (#3424)

* refactor adaptive extension class code creation: extract class AdaptiveClassCodeGenerator (#3419)

## Code Review Statistics

Dubbo encourages everyone to participate in code review in order to improve

software quality. Every week @dubbo-bot automatically counts the pull

request reviews of each GitHub user, as shown below. So, try to help review

code in this project.

Contributor ID | Pull Request Reviews
---|---
@lixiaojiee | 6
@kezhenxu94 | 4
@LiZhenNet | 3
@CrazyHZM | 2
@beiwei30 | 2
@carryxyh | 2
@kexianjun | 2
@scxwhite | 2
@chickenlj | 1
@wanghbxxxx | 1
@khanimteyaz | 1
@htynkn | 1

## Contributors Overview

It is Dubbo team's great honor to have new contributors from community. We

really appreciate your contributions. Feel free to tell us if you have any

opinion and please share this open source project with more people if you

could. If you hope to be a contributor as well, please start from

https://github.com/apache/incubator-dubbo/blob/master/CONTRIBUTING.md .

Here is the list of new contributors:

@kamaci

@dreamer-nitj

Thanks to you all.

_Note: This robot is supported by Collabobot._

| 0 |

Use case: for classifiers with predict_proba I like to see precision/recall

data across different probability values. This would be really easy to return

from current cross_validation.py master if not for

if not isinstance(score, numbers.Number):

raise ValueError

in _cross_val_score.

With this changed to a warning, cross_val_score could do the check before

converting scores to np.array, and return a plain list for incompatible types.
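The proposed behaviour could look roughly like the sketch below. It is not scikit-learn code: `collect_scores` is a hypothetical helper, it uses only the standard library, and the place where the real function would build an `np.array` is marked with a comment rather than implemented.

```python
import numbers
import warnings


def collect_scores(scores):
    """Return per-fold scores, warning (not raising) when a score is not a number."""
    scores = list(scores)
    if all(isinstance(score, numbers.Number) for score in scores):
        # All scores are numeric: the real function could convert to np.array here.
        return scores
    warnings.warn(
        "scorer returned non-numeric values; returning a plain list",
        UserWarning,
    )
    return scores


# Numeric scores pass through silently.
print(collect_scores([0.91, 0.88, 0.93]))
# Tuple scores (e.g. precision/recall pairs) trigger a warning instead of ValueError.
print(collect_scores([(0.9, 0.8), (0.7, 0.6)]))
```

The design choice here is that the caller always gets the scores back, and the warning signals that downstream code expecting an array of floats may need adjusting.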

|

I'd like to use cross_validation.cross_val_score with

metrics.precision_recall_fscore_support so that I can get all relevant cross-

validation metrics without having to run my cross-validation once for

accuracy, once for precision, once for recall, and once for f1. But when I try

this I get a ValueError:

from sklearn.datasets import fetch_20newsgroups

from sklearn.svm import LinearSVC

from sklearn.feature_extraction.text import TfidfVectorizer

from sklearn import metrics

from sklearn import cross_validation

import numpy as np

data_train = fetch_20newsgroups(subset='train', #categories=categories,

shuffle=True, random_state=42)

clf = LinearSVC(loss='l1', penalty='l2')

vectorizer = TfidfVectorizer(

sublinear_tf=False,

max_df=0.5,

min_df=2,

ngram_range = (1,1),

use_idf=False,

stop_words='english')

X_train = vectorizer.fit_transform(data_train.data)

# Cross-validate:

scores = cross_validation.cross_val_score(

clf, X_train, data_train.target, cv=5,

scoring=metrics.precision_recall_fscore_support)

| 1 |

According to the Playwright documentation, it seems that hooks

(for example, afterAll / beforeAll) can be used only inside a spec/test file, as

below:

// example.spec.ts

import { test, expect } from '@playwright/test';

test.beforeAll(async () => {

console.log('Before tests');

});

test.afterAll(async () => {

console.log('After tests');

});

test('my test', async ({ page }) => {

// ...

});

My question: is there any support for defining a single afterAll() or

beforeAll() hook in one file that is called for every test file?

The code that I want inside afterAll and beforeAll is

common to all the test/spec files, and I don't want to duplicate

it in every spec/test file.

Any suggestion or thoughts on this?

TIA

Allen

|

### System info

* Playwright Version: [v1.32.3]

* Operating System: [All, Windows] I only have Windows

* Browser: [Chromium, Firefox]

Page Visibility API: https://developer.mozilla.org/en-US/docs/Web/API/Page_Visibility_API

**Steps**

* Start a test and wait for the browser to open (let the test wait long enough, since the following steps must be done manually)

* Open the inspector/dev tools in a tab

* In the console panel, run the following script

document.addEventListener("visibilitychange", () => {

console.log('visibilitychange work', document.visibilityState === 'visible')

});

* Open another new tab (just open it manually)

* Switch back to the previous tab and watch the console log

**Expected**

We can see the console log:

"visibilitychange work false"

"visibilitychange work true"

**Actual**

There is no such log. It looks like the 'visibilitychange' event is never triggered.

Note: when the browser (Chromium) is opened manually, the Page Visibility API works fine.

But when running in the test environment, the Page Visibility API does not work.

| 0 |

##### ISSUE TYPE

* Bug Report

##### COMPONENT NAME

???

##### ANSIBLE VERSION

ansible 2.6.0 (skipped_false 39251fc27b) last updated 2018/02/10 00:32:41 (GMT +200)

config file = None

configured module search path = ['/home/nikos/.ansible/plugins/modules', '/usr/share/ansible/plugins/modules']

ansible python module location = /home/nikos/Projects/ansible/lib/ansible

executable location = /home/nikos/Projects/ansible/bin/ansible

python version = 3.6.4 (default, Jan 5 2018, 02:35:40) [GCC 7.2.1 20171224]

##### CONFIGURATION

Default

##### OS / ENVIRONMENT

Arch linux latest, tasks run against localhost

##### SUMMARY

It is not possible to define task vars from a dict variable

##### STEPS TO REPRODUCE

- hosts: localhost

tasks:

- set_fact:

dict: {'key': 'value'}

- debug:

msg: Test

vars: "{{ dict }}"

##### EXPECTED RESULTS

The second task should be executed successfully and the dict's contents should

become available as variables in the context of the task.

##### ACTUAL RESULTS

ERROR! Vars in a Task must be specified as a dictionary, or a list of dictionaries

The error appears to have been in '/home/nikos/Projects/playbook.yml': line 8, column 9, but may

be elsewhere in the file depending on the exact syntax problem.

The offending line appears to be:

vars:

- dict

^ here

|

##### ISSUE TYPE

* Bug Report

##### COMPONENT NAME

lib/ansible/playbook/base.py

##### ANSIBLE VERSION

ansible 2.2.0.0

config file =

configured module search path = Default w/o overrides

##### CONFIGURATION

##### OS / ENVIRONMENT

N/A

##### SUMMARY

I am trying to set the vars for either a block: or an include_role: from a

dictionary.

I get one of these messages (depending on the case):

ERROR! Vars in a Block must be specified as a dictionary, or a list of

dictionaries

ERROR! Vars in a IncludeRole must be specified as a dictionary, or a list of

dictionaries

##### STEPS TO REPRODUCE

- set_fact:

_T_m3_inv_vars:

one_setting: "{{ some_other_var1 |mandatory }}"

two_setting: "{{ some_other_var2 |mandatory }}"

- block:

- debug: msg="YYYYYYYY"

vars: "{{ _T_m3_inv_vars }}"

- include_role:

name: "some_nice_role"

static: yes

private: yes

vars: "{{ _T_m3_inv_vars }}"

##### EXPECTED RESULTS

I would expect a similar result as having done the following

- block:

- debug: msg="YYYYYYYY"

vars:

one_setting: "{{ some_other_var1 |mandatory }}"

two_setting: "{{ some_other_var2 |mandatory }}"

- include_role:

name: "some_nice_role"

static: yes

private: yes

vars:

one_setting: "{{ some_other_var1 |mandatory }}"

two_setting: "{{ some_other_var2 |mandatory }}"

This is because I am indeed passing a dictionary to the vars: setting.

##### ACTUAL RESULTS

One of these messages (obtained by running the previous snippets separately):

ERROR! Vars in a Block must be specified as a dictionary, or a list of

dictionaries

ERROR! Vars in a IncludeRole must be specified as a dictionary, or a list of

dictionaries

| 1 |

##### System information (version)

* OpenCV => 3.4.1 (master seems to be same)

* Operating System / Platform => cygwin 64 bit

* Compiler => g++ (gcc 7.3.0)

##### Detailed description

In some (very rare) condition, "Error: Assertion failed" happens at:

opencv/modules/core/src/types.cpp

Line 154 in da7e1cf

`CV_Assert( abs(vecs[0].dot(vecs[1])) / (norm(vecs[0]) * norm(vecs[1])) <= FLT_EPSILON );`

because `FLT_EPSILON` is too small to compare.
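To make the quoted check concrete, here is a small Python sketch of the same quantity, the normalized dot product of the two edge vectors, compared against `FLT_EPSILON`. It is not OpenCV code: `orthogonality_error` and `passes_assert` are hypothetical helper names, and the sample vectors are made up for illustration rather than taken from the reproducer.

```python
import math

# FLT_EPSILON for IEEE 754 single precision (about 1.19e-07).
FLT_EPSILON = 2.0 ** -23


def orthogonality_error(v0, v1):
    """|v0 . v1| / (|v0| * |v1|), i.e. |cos| of the angle between the vectors."""
    dot = v0[0] * v1[0] + v0[1] * v1[1]
    return abs(dot) / (math.hypot(*v0) * math.hypot(*v1))


def passes_assert(v0, v1, tolerance=FLT_EPSILON):
    """Mirror the shape of the CV_Assert condition with a configurable tolerance."""
    return orthogonality_error(v0, v1) <= tolerance


# Exactly perpendicular vectors pass.
print(passes_assert((3.0, 4.0), (-4.0, 3.0)))
# A tiny perturbation in one coordinate already exceeds FLT_EPSILON.
print(passes_assert((3.0, 4.0), (-4.0, 3.00001)))
# A looser tolerance accepts the same pair.
print(passes_assert((3.0, 4.0), (-4.0, 3.00001), tolerance=1e-5))
```

The `tolerance` parameter is only there to show how sensitive the check is to the chosen bound; which bound is appropriate for `RotatedRect` is exactly the open question of this issue.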

##### Steps to reproduce

I made a reproducible example:

https://github.com/takotakot/opencv_debug/tree/0a5d37dc2cc4aef22a33012bdcbb54597ae852a1

.

If we have a `Point2d` rectangle, using `RotatedRect_2d` can eliminate the

problem.

Part of the code:

cv::Mat points = (cv::Mat_<double>(10, 2) <<

1357., 1337.,

1362., 1407.,

1367., 1474.,

1372., 1543.,

1375., 1625.,

1375., 1696.,

1377., 1734.,

1378., 1742.,

1382., 1801.,

1372., 1990.);

cv::PCA pca_points(points, cv::Mat(), CV_PCA_DATA_AS_ROW, 2);

cv::Point2d p1(564.45, 339.8819), p2, p3;

p2 = p1 - 1999 * cv::Point2d(pca_points.eigenvectors.row(0));

p3 = p2 - 1498.5295 * cv::Point2d(pca_points.eigenvectors.row(1));

cv::RotatedRect(p1, p2, p3);

##### Plans

I have some plans:

1. Multiply FLT_EPSILON by 2, 4, or some other value

2. Make another constructor using `Point2d` instead of `Point2f` (and `Vec2d` instead of `Vec2f` etc. inside)

Note 1: If we use `DBL_EPSILON`, the same problem may occur.

Note 2: If we only have a `Point2f` rectangle, we cannot avoid the assertion.

3. Calculate the angle between the two vectors and introduce a different assertion.

I want to create a PR to solve this issue, but I would like some direction first.

|

##### System information (version)

* OpenCV => 4.1.0

* Operating System / Platform => Ubuntu 18.04 LTS

* Compiler => clang-7

##### Detailed description

An issue was discovered in OpenCV 4.1.0: there is an out-of-bounds read in function cv::predictOrdered<cv::HaarEvaluator> in cascadedetect.hpp, which leads to denial of service.

source

511 double val = featureEvaluator(node.featureIdx);

512 idx = val < node.threshold ? node.left : node.right;

513 }

514 while( idx > 0 );

> 515 sum += \*bug=>*\ cascadeLeaves[leafOfs - idx];

516 nodeOfs += weak.nodeCount;

517 leafOfs += weak.nodeCount + 1;

518 }

519 if( sum < stage.threshold )

520 return -si;

debug

In file: /home/pwd/SofterWare/opencv-4.1.0/modules/objdetect/src/cascadedetect.hpp

510 CascadeClassifierImpl::Data::DTreeNode& node = cascadeNodes[root + idx];

511 double val = featureEvaluator(node.featureIdx);

512 idx = val < node.threshold ? node.left : node.right;

513 }

514 while( idx > 0 );

► 515 sum += cascadeLeaves[leafOfs - idx];

516 nodeOfs += weak.nodeCount;

517 leafOfs += weak.nodeCount + 1;

518 }

519 if( sum < stage.threshold )

520 return -si;

─────────────────────────────────────────────────────────────────────────────────────────────────────[ STACK ]─────────────────────────────────────────────────────────────────────────────────────────────────────

00:0000│ rsp 0x7fffc7ffe300 ◂— 0x8d80169006580d8

01:0008│ 0x7fffc7ffe308 ◂— 0xbba5787f80000000

02:0010│ 0x7fffc7ffe310 —▸ 0x7fffd53a5de0 ◂— 0xb1088000af4cb

03:0018│ 0x7fffc7ffe318 ◂— 0xffedb5a100000003

04:0020│ 0x7fffc7ffe320 ◂— 0xbf74af0fe0000000

05:0028│ 0x7fffc7ffe328 —▸ 0x6b7b70 ◂— 0x0

06:0030│ 0x7fffc7ffe330 ◂— 0x800000000000005d /* ']' */

07:0038│ 0x7fffc7ffe338 —▸ 0x66f4a4 ◂— 0x100000000

───────────────────────────────────────────────────────────────────────────────────────────────────[ BACKTRACE ]───────────────────────────────────────────────────────────────────────────────────────────────────

► f 0 7ffff5e2c500

f 1 7ffff5e2bb21

f 2 7ffff5e3bd74

f 3 7fffef87dc59

f 4 7fffef87ea3b cv::ParallelJob::execute(bool)+603

f 5 7fffef87e21a cv::WorkerThread::thread_body()+890

f 6 7fffef880e05 cv::WorkerThread::thread_loop_wrapper(void*)+21

f 7 7fffee3d46db start_thread+219

Program received signal SIGSEGV (fault address 0xfffffffe006630f8)

pwndbg> p cascadeLeaves

$1 = (float *) 0x662e10

pwndbg> p leafOfs

$2 = 186

pwndbg> p idx

$3 = -2147483648
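The debugger values above are enough to reproduce the fault address by hand. The Python sketch below assumes that `leafOfs - idx` wraps around as a 32-bit signed integer (signed overflow is formally undefined in C++, so this is only what is typically observed on x86-64) and that each leaf is a 4-byte `float`. The helper names `wrap_int32` and `fault_address` are made up for the example.

```python
def wrap_int32(value):
    """Reduce an integer to the range of a 32-bit two's-complement int."""
    value &= 0xFFFFFFFF
    return value - 0x100000000 if value >= 0x80000000 else value


def fault_address(base, leaf_ofs, idx, element_size=4):
    """Address read by base[leaf_ofs - idx], as a 64-bit unsigned value."""
    index = wrap_int32(leaf_ofs - idx)
    return (base + element_size * index) & 0xFFFFFFFFFFFFFFFF


cascade_leaves = 0x662E10   # p cascadeLeaves
leaf_ofs = 186              # p leafOfs
idx = -2147483648           # p idx (INT_MIN)

print(wrap_int32(leaf_ofs - idx))                         # -2147483462
print(hex(fault_address(cascade_leaves, leaf_ofs, idx)))  # 0xfffffffe006630f8
```

Under these assumptions the computed address matches the reported SIGSEGV fault address, which suggests that a `node.left`/`node.right` value of `INT_MIN` read from the cascade file is what drives the index far outside `cascadeLeaves`.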

bug report

AddressSanitizer:DEADLYSIGNAL

=================================================================

==9176==ERROR: AddressSanitizer: SEGV on unknown address 0x623e000443e8 (pc 0x7fc9fc661bfa bp 0x7fc9daee70b0 sp 0x7fc9daee6f80 T1)

==9176==The signal is caused by a READ memory access.

AddressSanitizer:DEADLYSIGNAL

AddressSanitizer:DEADLYSIGNAL

AddressSanitizer:DEADLYSIGNAL

AddressSanitizer:DEADLYSIGNAL

AddressSanitizer:DEADLYSIGNAL

AddressSanitizer:DEADLYSIGNAL

AddressSanitizer:DEADLYSIGNAL

AddressSanitizer:DEADLYSIGNAL

#0 0x7fc9fc661bf9 in int cv::predictOrdered<cv::HaarEvaluator>(cv::CascadeClassifierImpl&, cv::Ptr<cv::FeatureEvaluator>&, double&) /src/opencv/modules/objdetect/src/cascadedetect.hpp:515:17

#1 0x7fc9fc65f736 in cv::CascadeClassifierImpl::runAt(cv::Ptr<cv::FeatureEvaluator>&, cv::Point_<int>, int, double&) /src/opencv/modules/objdetect/src/cascadedetect.cpp:962:20

#2 0x7fc9fc692083 in cv::CascadeClassifierInvoker::operator()(cv::Range const&) const /src/opencv/modules/objdetect/src/cascadedetect.cpp:1029:46

#3 0x7fc9f294b0c3 in (anonymous namespace)::ParallelLoopBodyWrapper::operator()(cv::Range const&) const /src/opencv/modules/core/src/parallel.cpp:343:17

#4 0x7fc9f2d737e7 in cv::ParallelJob::execute(bool) /src/opencv/modules/core/src/parallel_impl.cpp:315:22

#5 0x7fc9f2d7125b in cv::WorkerThread::thread_body() /src/opencv/modules/core/src/parallel_impl.cpp:415:24

#6 0x7fc9f2d7f719 in cv::WorkerThread::thread_loop_wrapper(void*) /src/opencv/modules/core/src/parallel_impl.cpp:265:41

#7 0x7fc9f15e46b9 in start_thread (/lib/x86_64-linux-gnu/libpthread.so.0+0x76b9)

#8 0x7fc9f0cf841c in clone (/lib/x86_64-linux-gnu/libc.so.6+0x10741c)

AddressSanitizer can not provide additional info.

SUMMARY: AddressSanitizer: SEGV /src/opencv/modules/objdetect/src/cascadedetect.hpp:515:17 in int cv::predictOrdered<cv::HaarEvaluator>(cv::CascadeClassifierImpl&, cv::Ptr<cv::FeatureEvaluator>&, double&)

Thread T1 created by T0 here:

#0 0x43428d in __interceptor_pthread_create /work/llvm/projects/compiler-rt/lib/asan/asan_interceptors.cc:204

#1 0x7fc9f2d79d58 in cv::WorkerThread::WorkerThread(cv::ThreadPool&, unsigned int) /src/opencv/modules/core/src/parallel_impl.cpp:227:15

#2 0x7fc9f2d76240 in cv::ThreadPool::reconfigure_(unsigned int) /src/opencv/modules/core/src/parallel_impl.cpp:510:53

#3 0x7fc9f2d7bb07 in cv::ThreadPool::run(cv::Range const&, cv::ParallelLoopBody const&, double) /src/opencv/modules/core/src/parallel_impl.cpp:548:9

#4 0x7fc9f2949a99 in parallel_for_impl(cv::Range const&, cv::ParallelLoopBody const&, double) /src/opencv/modules/core/src/parallel.cpp:590:9

#5 0x7fc9f2949a99 in cv::parallel_for_(cv::Range const&, cv::ParallelLoopBody const&, double) /src/opencv/modules/core/src/parallel.cpp:518

#6 0x7fc9fc673269 in cv::CascadeClassifierImpl::detectMultiScaleNoGrouping(cv::_InputArray const&, std::vector<cv::Rect_<int>, std::allocator<cv::Rect_<int> > >&, std::vector<int, std::allocator<int> >&, std::vector<double, std::allocator<double> >&, double, cv::Size_<int>, cv::Size_<int>, bool) /src/opencv/modules/objdetect/src/cascadedetect.cpp:1346:9

#7 0x7fc9fc677cb8 in cv::CascadeClassifierImpl::detectMultiScale(cv::_InputArray const&, std::vector<cv::Rect_<int>, std::allocator<cv::Rect_<int> > >&, std::vector<int, std::allocator<int> >&, std::vector<double, std::allocator<double> >&, double, int, int, cv::Size_<int>, cv::Size_<int>, bool) /src/opencv/modules/objdetect/src/cascadedetect.cpp:1365:5

#8 0x7fc9fc6786ee in cv::CascadeClassifierImpl::detectMultiScale(cv::_InputArray const&, std::vector<cv::Rect_<int>, std::allocator<cv::Rect_<int> > >&, double, int, int, cv::Size_<int>, cv::Size_<int>) /src/opencv/modules/objdetect/src/cascadedetect.cpp:1386:5

#9 0x7fc9fc686370 in cv::CascadeClassifier::detectMultiScale(cv::_InputArray const&, std::vector<cv::Rect_<int>, std::allocator<cv::Rect_<int> > >&, double, int, int, cv::Size_<int>, cv::Size_<int>) /src/opencv/modules/objdetect/src/cascadedetect.cpp:1659:9

#10 0x51d4bc in main /work/funcs/classifier.cc:34:24

#11 0x7fc9f0c1182f in __libc_start_main (/lib/x86_64-linux-gnu/libc.so.6+0x2082f)

==9176==ABORTING

others

from fuzz project pwd-opencv-classifier-00

crash name pwd-opencv-classifier-00-00000253-20190703.xml

Auto-generated by pyspider at 2019-07-03 07:57:31

please send email to teamseri0us360@gmail.com if you have any questions.

##### Steps to reproduce

commandline

classifier /work/funcs/appname.bmp @@

poc2.tar.gz

| 0 |

##### Description of the problem

I don't know if this is expected or if I'm missing something, but it looks like the

envmap textures generated by PMREMGenerator with the renderer specified at that

time cannot be re-used with another renderer.

In this example, the envmap is generated using **renderer**.

If the scene is rendered with **renderer2** , it doesn't work.

* jsfiddle

Replace `var pmremGenerator = new THREE.PMREMGenerator( renderer );`

by `var pmremGenerator = new THREE.PMREMGenerator( renderer2 );`

on line 49 to check the difference.

##### Three.js version

* Dev

* r115

##### Browser

* All of them

##### OS

* All of them

|

##### Description of the problem

Using `PMREMGenerator.fromEquirectangular` in multiple simultaneous three.js

renderers causes only the last renderer to have a correctly functioning

texture (at least for use as an envMap)

This happens even when each renderer has its own instance of `pmremGenerator`

and calls `dispose` after use.

Here's a fiddle: https://jsfiddle.net/h37k2ztv/10/

I would expect that initializing a different `pmremGenerator` for each

renderer should be sufficient, but I had to work around it by reinstantiating

and recompiling the `pmremGenerator` immediately before each time it is used

(uncomment lines 63 and 64 in the fiddle to do this)

Issue #18842 reports this same issue, but perhaps even without multiple

renderers.

This merge request may have introduced the unexpected behavior.

##### Three.js version

* Dev

* r115

* ...

##### Browser

* All of them

* Chrome

* Firefox

* Internet Explorer

##### OS

* All of them

* Windows

* macOS

* Linux

* Android

* iOS

| 1 |

## Bug Report

### Which version of ShardingSphere did you use?

5.0.0

### Which project did you use? ShardingSphere-JDBC or ShardingSphere-Proxy?

ShardingSphere-JDBC

### Expected behavior

execute well

### Actual behavior

11-19 17:53:11.212 INFO 59884 --- [nio-8088-exec-1] ShardingSphere-SQL : Logic SQL: insert into coupon_limit(id, fk_coupon_id, type, sold_qty, sold_date) values (null, ?, ?, 1, str_to_date('9999-12-31','%Y-%m-%d') ) on DUPLICATE key update sold_qty = IF(sold_qty + 1 <= ?,sold_qty + 1, -1)

2021-11-19 17:53:11.213 INFO 59884 --- [nio-8088-exec-1] ShardingSphere-SQL : SQLStatement: MySQLInsertStatement(setAssignment=Optional.empty, onDuplicateKeyColumns=Optional[org.apache.shardingsphere.sql.parser.sql.common.segment.dml.column.OnDuplicateKeyColumnsSegment@5ee2c53c])

2021-11-19 17:53:11.213 INFO 59884 --- [nio-8088-exec-1] ShardingSphere-SQL : Actual SQL: ds-0 ::: insert into coupon_limit(id, fk_coupon_id, type, sold_qty, sold_date) values (null, ?, ?, 1, str_to_date('9999-12-31','%Y-%m-%d')) on DUPLICATE key update sold_qty = IF(sold_qty + 1 <= ?,sold_qty + 1, -1) ::: [1, 2]

2021-11-19 17:53:11.259 ERROR 59884 --- [nio-8088-exec-1] o.a.c.c.C.[.[.[/].[dispatcherServlet] : Servlet.service() for servlet [dispatcherServlet] in context with path [] threw exception [Request processing failed; nested exception is java.sql.SQLException: No value specified for parameter 3] with root cause

java.sql.SQLException: No value specified for parameter 3

at com.mysql.cj.jdbc.exceptions.SQLError.createSQLException(SQLError.java:129) ~[mysql-connector-java-8.0.26.jar:8.0.26]

at com.mysql.cj.jdbc.exceptions.SQLExceptionsMapping.translateException(SQLExceptionsMapping.java:122) ~[mysql-connector-java-8.0.26.jar:8.0.26]

at com.mysql.cj.jdbc.ClientPreparedStatement.execute(ClientPreparedStatement.java:396) ~[mysql-connector-java-8.0.26.jar:8.0.26]

at com.zaxxer.hikari.pool.ProxyPreparedStatement.execute(ProxyPreparedStatement.java:44) ~[HikariCP-3.4.2.jar:na]

at com.zaxxer.hikari.pool.HikariProxyPreparedStatement.execute(HikariProxyPreparedStatement.java) ~[HikariCP-3.4.2.jar:na]

at org.apache.shardingsphere.driver.jdbc.core.statement.ShardingSpherePreparedStatement$2.executeSQL(ShardingSpherePreparedStatement.java:322) ~[shardingsphere-jdbc-core-5.0.0.jar:5.0.0]

at org.apache.shardingsphere.driver.jdbc.core.statement.ShardingSpherePreparedStatement$2.executeSQL(ShardingSpherePreparedStatement.java:318) ~[shardingsphere-jdbc-core-5.0.0.jar:5.0.0]

at org.apache.shardingsphere.infra.executor.sql.execute.engine.driver.jdbc.JDBCExecutorCallback.execute(JDBCExecutorCallback.java:85) ~[shardingsphere-infra-executor-5.0.0.jar:5.0.0]

at org.apache.shardingsphere.infra.executor.sql.execute.engine.driver.jdbc.JDBCExecutorCallback.execute(JDBCExecutorCallback.java:64) ~[shardingsphere-infra-executor-5.0.0.jar:5.0.0]

at org.apache.shardingsphere.infra.executor.kernel.ExecutorEngine.syncExecute(ExecutorEngine.java:101) ~[shardingsphere-infra-executor-5.0.0.jar:5.0.0]

at org.apache.shardingsphere.infra.executor.kernel.ExecutorEngine.parallelExecute(ExecutorEngine.java:97) ~[shardingsphere-infra-executor-5.0.0.jar:5.0.0]

at org.apache.shardingsphere.infra.executor.kernel.ExecutorEngine.execute(ExecutorEngine.java:82) ~[shardingsphere-infra-executor-5.0.0.jar:5.0.0]

at org.apache.shardingsphere.infra.executor.sql.execute.engine.driver.jdbc.JDBCExecutor.execute(JDBCExecutor.java:65) ~[shardingsphere-infra-executor-5.0.0.jar:5.0.0]

at org.apache.shardingsphere.infra.executor.sql.execute.engine.driver.jdbc.JDBCExecutor.execute(JDBCExecutor.java:49) ~[shardingsphere-infra-executor-5.0.0.jar:5.0.0]

at org.apache.shardingsphere.driver.executor.JDBCLockEngine.doExecute(JDBCLockEngine.java:116) ~[shardingsphere-jdbc-core-5.0.0.jar:5.0.0]

at org.apache.shardingsphere.driver.executor.JDBCLockEngine.execute(JDBCLockEngine.java:93) ~[shardingsphere-jdbc-core-5.0.0.jar:5.0.0]

at org.apache.shardingsphere.driver.executor.DriverJDBCExecutor.execute(DriverJDBCExecutor.java:127) ~[shardingsphere-jdbc-core-5.0.0.jar:5.0.0]

at org.apache.shardingsphere.driver.jdbc.core.statement.ShardingSpherePreparedStatement.execute(ShardingSpherePreparedStatement.java:298) ~[shardingsphere-jdbc-core-5.0.0.jar:5.0.0]

at com.example.org.shardingjdbcmetadatatest.ShardingJdbcMetaDataTestApplication.simpleExecute(ShardingJdbcMetaDataTestApplication.java:82) ~[classes/:na]

at com.example.org.shardingjdbcmetadatatest.ShardingJdbcMetaDataTestApplication.sql(ShardingJdbcMetaDataTestApplication.java:46) ~[classes/:na]

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method) ~[na:1.8.0_292]

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62) ~[na:1.8.0_292]

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) ~[na:1.8.0_292]

at java.lang.reflect.Method.invoke(Method.java:498) ~[na:1.8.0_292]

at org.springframework.web.method.support.InvocableHandlerMethod.doInvoke(InvocableHandlerMethod.java:205) ~[spring-web-5.3.10.jar:5.3.10]

at org.springframework.web.method.support.InvocableHandlerMethod.invokeForRequest(InvocableHandlerMethod.java:150) ~[spring-web-5.3.10.jar:5.3.10]

at org.springframework.web.servlet.mvc.method.annotation.ServletInvocableHandlerMethod.invokeAndHandle(ServletInvocableHandlerMethod.java:117) ~[spring-webmvc-5.3.10.jar:5.3.10]

at org.springframework.web.servlet.mvc.method.annotation.RequestMappingHandlerAdapter.invokeHandlerMethod(RequestMappingHandlerAdapter.java:895) ~[spring-webmvc-5.3.10.jar:5.3.10]

at org.springframework.web.servlet.mvc.method.annotation.RequestMappingHandlerAdapter.handleInternal(RequestMappingHandlerAdapter.java:808) ~[spring-webmvc-5.3.10.jar:5.3.10]

at org.springframework.web.servlet.mvc.method.AbstractHandlerMethodAdapter.handle(AbstractHandlerMethodAdapter.java:87) ~[spring-webmvc-5.3.10.jar:5.3.10]

at org.springframework.web.servlet.DispatcherServlet.doDispatch(DispatcherServlet.java:1067) ~[spring-webmvc-5.3.10.jar:5.3.10]

at org.springframework.web.servlet.DispatcherServlet.doService(DispatcherServlet.java:963) ~[spring-webmvc-5.3.10.jar:5.3.10]

at org.springframework.web.servlet.FrameworkServlet.processRequest(FrameworkServlet.java:1006) ~[spring-webmvc-5.3.10.jar:5.3.10]

at org.springframework.web.servlet.FrameworkServlet.doPost(FrameworkServlet.java:909) ~[spring-webmvc-5.3.10.jar:5.3.10]

at javax.servlet.http.HttpServlet.service(HttpServlet.java:681) ~[tomcat-embed-core-9.0.53.jar:4.0.FR]

at org.springframework.web.servlet.FrameworkServlet.service(FrameworkServlet.java:883) ~[spring-webmvc-5.3.10.jar:5.3.10]

at javax.servlet.http.HttpServlet.service(HttpServlet.java:764) ~[tomcat-embed-core-9.0.53.jar:4.0.FR]

at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:227) ~[tomcat-embed-core-9.0.53.jar:9.0.53]

at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:162) ~[tomcat-embed-core-9.0.53.jar:9.0.53]

at org.apache.tomcat.websocket.server.WsFilter.doFilter(WsFilter.java:53) ~[tomcat-embed-websocket-9.0.53.jar:9.0.53]

at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:189) ~[tomcat-embed-core-9.0.53.jar:9.0.53]

at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:162) ~[tomcat-embed-core-9.0.53.jar:9.0.53]

at org.springframework.web.filter.RequestContextFilter.doFilterInternal(RequestContextFilter.java:100) ~[spring-web-5.3.10.jar:5.3.10]

at org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:119) ~[spring-web-5.3.10.jar:5.3.10]

at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:189) ~[tomcat-embed-core-9.0.53.jar:9.0.53]

at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:162) ~[tomcat-embed-core-9.0.53.jar:9.0.53]

at org.springframework.web.filter.FormContentFilter.doFilterInternal(FormContentFilter.java:93) ~[spring-web-5.3.10.jar:5.3.10]

at org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:119) ~[spring-web-5.3.10.jar:5.3.10]

at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:189) ~[tomcat-embed-core-9.0.53.jar:9.0.53]

at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:162) ~[tomcat-embed-core-9.0.53.jar:9.0.53]

at org.springframework.web.filter.CharacterEncodingFilter.doFilterInternal(CharacterEncodingFilter.java:201) ~[spring-web-5.3.10.jar:5.3.10]

at org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:119) ~[spring-web-5.3.10.jar:5.3.10]

at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:189) ~[tomcat-embed-core-9.0.53.jar:9.0.53]

at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:162) ~[tomcat-embed-core-9.0.53.jar:9.0.53]

at org.apache.catalina.core.StandardWrapperValve.invoke(StandardWrapperValve.java:197) ~[tomcat-embed-core-9.0.53.jar:9.0.53]

at org.apache.catalina.core.StandardContextValve.invoke(StandardContextValve.java:97) [tomcat-embed-core-9.0.53.jar:9.0.53]

at org.apache.catalina.authenticator.AuthenticatorBase.invoke(AuthenticatorBase.java:540) [tomcat-embed-core-9.0.53.jar:9.0.53]

at org.apache.catalina.core.StandardHostValve.invoke(StandardHostValve.java:135) [tomcat-embed-core-9.0.53.jar:9.0.53]

at org.apache.catalina.valves.ErrorReportValve.invoke(ErrorReportValve.java:92) [tomcat-embed-core-9.0.53.jar:9.0.53]

at org.apache.catalina.core.StandardEngineValve.invoke(StandardEngineValve.java:78) [tomcat-embed-core-9.0.53.jar:9.0.53]

at org.apache.catalina.connector.CoyoteAdapter.service(CoyoteAdapter.java:357) [tomcat-embed-core-9.0.53.jar:9.0.53]

at org.apache.coyote.http11.Http11Processor.service(Http11Processor.java:382) [tomcat-embed-core-9.0.53.jar:9.0.53]

at org.apache.coyote.AbstractProcessorLight.process(AbstractProcessorLight.java:65) [tomcat-embed-core-9.0.53.jar:9.0.53]

at org.apache.coyote.AbstractProtocol$ConnectionHandler.process(AbstractProtocol.java:893) [tomcat-embed-core-9.0.53.jar:9.0.53]

at org.apache.tomcat.util.net.NioEndpoint$SocketProcessor.doRun(NioEndpoint.java:1726) [tomcat-embed-core-9.0.53.jar:9.0.53]

at org.apache.tomcat.util.net.SocketProcessorBase.run(SocketProcessorBase.java:49) [tomcat-embed-core-9.0.53.jar:9.0.53]

at org.apache.tomcat.util.threads.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1191) [tomcat-embed-core-9.0.53.jar:9.0.53]

at org.apache.tomcat.util.threads.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:659) [tomcat-embed-core-9.0.53.jar:9.0.53]

at org.apache.tomcat.util.threads.TaskThread$WrappingRunnable.run(TaskThread.java:61) [tomcat-embed-core-9.0.53.jar:9.0.53]

at java.lang.Thread.run(Thread.java:748) [na:1.8.0_292]

### Actual behavior

|

For example:

insert into the_biz(id,ser_id,biz_time) values (1,1,now())

NoneShardingStrategy is the default for non-sharded tables, but an error

still occurs. Is it because of SQL checking?

For non-sharded tables, could we make ShardingSphere behave as if it

weren't there?

| 0 |

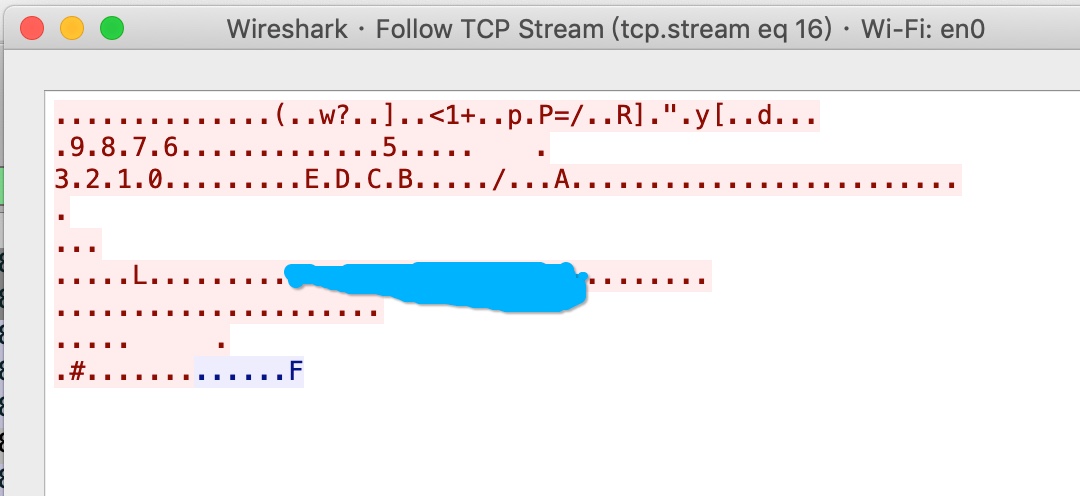

[ yes ] I've searched for any related issues and avoided creating a duplicate

issue.

Description

Note: this is probably not a bug.

First, I ran the standard wss (WebSocket over HTTPS) server code from a single

JS file, and it worked.

Then I copied the same code into the Electron main.js, and it did not work.

So I opened Wireshark to capture the packets and found that the Electron

server sends a few bytes and then nothing more; I never saw any part of the

upgrade handshake being handled. :(

PS: I use a self-signed certificate.

Reproducible in:

version: ws ^7.1.2

Node.js version(s): v10.16.1

OS version(s): osx 10.14

Steps to reproduce:

1. Server code:

const fs = require('fs');

const https = require('https');

const WebSocket = require('ws');

const path = require('path');

const hostname = 'aaa.abc.com';

const server = https.createServer({

cert: fs.readFileSync(path.resolve(`certs/${hostname}.crt`)),

key: fs.readFileSync(path.resolve(`certs/${hostname}.key`)),

// rejectUnauthorized: false

});

const wss = new WebSocket.Server({ server });

wss.on('connection', function connection(ws) {

ws.on('message', function incoming(message) {

console.log('received: %s', message);

});

ws.send('something');

});

server.listen(443,()=>{

console.log('start svr002')

});

Client code:

const WebSocket = require('ws');

let connectFlag = false;

let ws = new WebSocket('wss://aaa.abc.com/echo', {

rejectUnauthorized: false

});

ws.on('error',function (e) {

connectFlag=false;

console.error('error',e);

ws=null;

});

ws.on('close',function (e) {

connectFlag=false;

console.warn('close',e)

});

ws.on('open', function open() {

connectFlag=true;

console.log('connected');

ws.send('something');

ws.on('message', function incoming(data) {

console.log(data);

});

});

Run as a single JS file: works

node svr.js

node cli001.js or open in IE, Firefox, Chrome

Run embedded in Electron: does not work

electron .

node cli001.js or open in IE, Firefox, Chrome

|

https://i.imgur.com/mYtRTmJ.png

https://electronjs.org/docs/api/browser-window

| 0 |

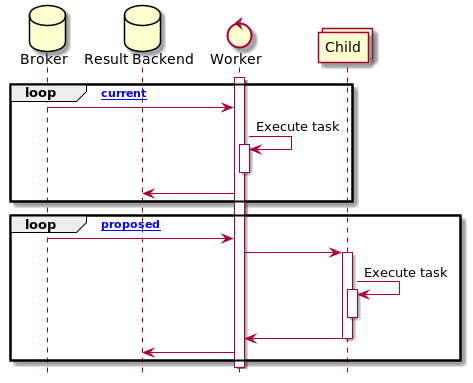

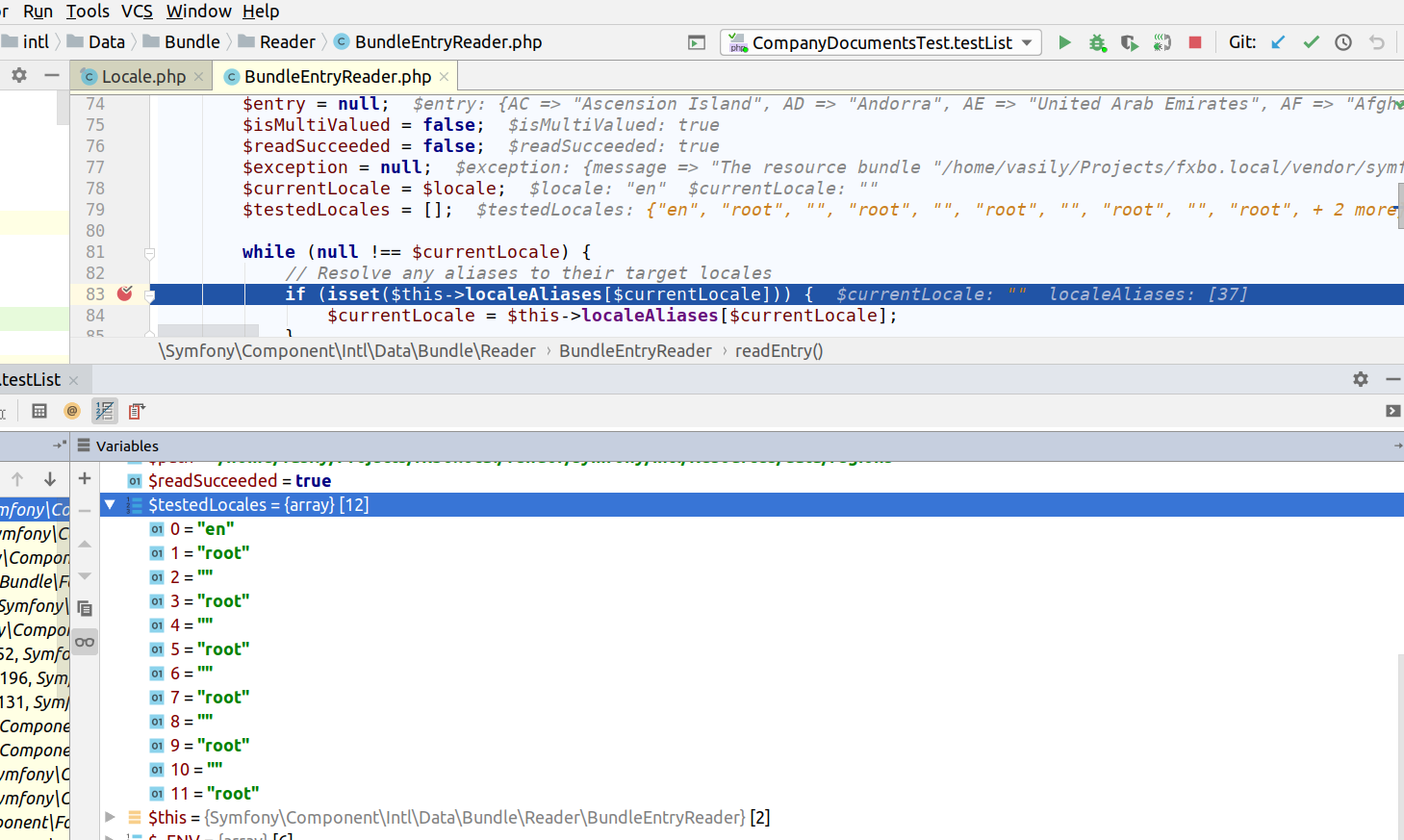

# Checklist

* I have verified that the issue exists against the `master` branch of Celery.

* This has already been asked to the discussions forum first.

* I have read the relevant section in the

contribution guide

on reporting bugs.

* I have checked the issues list

for similar or identical bug reports.

* I have checked the pull requests list

for existing proposed fixes.

* I have checked the commit log

to find out if the bug was already fixed in the master branch.

* I have included all related issues and possible duplicate issues

in this issue (If there are none, check this box anyway).

## Mandatory Debugging Information

* I have included the output of `celery -A proj report` in the issue.

(if you are not able to do this, then at least specify the Celery

version affected).

* I have verified that the issue exists against the `master` branch of Celery.

* I have included the contents of `pip freeze` in the issue.

* I have included all the versions of all the external dependencies required

to reproduce this bug.

## Optional Debugging Information

* I have tried reproducing the issue on more than one Python version

and/or implementation.

* I have tried reproducing the issue on more than one message broker and/or

result backend.

* I have tried reproducing the issue on more than one version of the message

broker and/or result backend.

* I have tried reproducing the issue on more than one operating system.

* I have tried reproducing the issue on more than one workers pool.

* I have tried reproducing the issue with autoscaling, retries,

ETA/Countdown & rate limits disabled.

* I have tried reproducing the issue after downgrading

and/or upgrading Celery and its dependencies.

## Related Issues and Possible Duplicates

#### Related Issues

Little bit related -

#6672

#5890

#### Possible Duplicates

* None

## Environment & Settings

**Celery version** : 5.2.7 (dawn-chorus)

**`celery report` Output:**

$ celery report

software -> celery:5.2.7 (dawn-chorus) kombu:5.2.4 py:3.9.9

billiard:3.6.4.0 py-amqp:5.1.1

platform -> system:Darwin arch:64bit

kernel version:21.4.0 imp:CPython

loader -> celery.loaders.default.Loader

settings -> transport:amqp results:disabled

deprecated_settings: None

# Steps to Reproduce

1. Here the global serializer is `json` which is the default setting in celery. Now we will declare a task with serialization set to `pickle`.

# In tasks.py

from time import sleep

from celery import shared_task

@shared_task(serializer='pickle')

def pickle_serialized_task(nice_set):

    print('Starting Task')

    # This delay simulates a long-running task,

    # so that there is enough time to request the task stats from the shell

    sleep(200)

    print('Task Finished')

2. Open a django-manage shell and run

In [1]: from myapp.apps.niceapp.tasks import pickle_serialized_task

In [2]: task = pickle_serialized_task.delay(set([1, 2, 3]))

In [3]: celery_hostname = 'celery@work' # update hostname as per your config

In [4]: celery_inspect = task.app.control.inspect([celery_hostname])

In [5]: celery_inspect.active()

Running `celery_inspect.active()` throws the following error.

[2022-07-01 15:07:50,791: INFO/MainProcess] Task myapp.apps.niceapp.tasks.pickle_serialized_task[e61f2103-1e19-4593-b2bd-6d69bd273a3c] received

[2022-07-01 15:07:50,815: WARNING/ForkPoolWorker-6] Starting Task

[2022-07-01 15:09:09,906: ERROR/MainProcess] Control command error: EncodeError(TypeError('Object of type set is not JSON serializable'))

Traceback (most recent call last):

File "/Users/amitphulera/.pyenv/versions/3.9.9/envs/hq_celery/lib/python3.9/site-packages/kombu/serialization.py", line 39, in _reraise_errors

yield

File "/Users/amitphulera/.pyenv/versions/3.9.9/envs/hq_celery/lib/python3.9/site-packages/kombu/serialization.py", line 210, in dumps

payload = encoder(data)

File "/Users/amitphulera/.pyenv/versions/3.9.9/envs/hq_celery/lib/python3.9/site-packages/kombu/utils/json.py", line 68, in dumps

return _dumps(s, cls=cls or _default_encoder,

File "/Users/amitphulera/.pyenv/versions/3.9.9/lib/python3.9/json/__init__.py", line 234, in dumps

return cls(

File "/Users/amitphulera/.pyenv/versions/3.9.9/lib/python3.9/json/encoder.py", line 199, in encode

chunks = self.iterencode(o, _one_shot=True)

File "/Users/amitphulera/.pyenv/versions/3.9.9/lib/python3.9/json/encoder.py", line 257, in iterencode

return _iterencode(o, 0)

File "/Users/amitphulera/.pyenv/versions/3.9.9/envs/hq_celery/lib/python3.9/site-packages/kombu/utils/json.py", line 58, in default

return super().default(o)

File "/Users/amitphulera/.pyenv/versions/3.9.9/lib/python3.9/json/encoder.py", line 179, in default

raise TypeError(f'Object of type {o.__class__.__name__} '

TypeError: Object of type set is not JSON serializable

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/Users/amitphulera/.pyenv/versions/3.9.9/envs/hq_celery/lib/python3.9/site-packages/celery/worker/pidbox.py", line 44, in on_message

self.node.handle_message(body, message)

File "/Users/amitphulera/.pyenv/versions/3.9.9/envs/hq_celery/lib/python3.9/site-packages/kombu/pidbox.py", line 141, in handle_message

return self.dispatch(**body)

File "/Users/amitphulera/.pyenv/versions/3.9.9/envs/hq_celery/lib/python3.9/site-packages/kombu/pidbox.py", line 108, in dispatch

self.reply({self.hostname: reply},

File "/Users/amitphulera/.pyenv/versions/3.9.9/envs/hq_celery/lib/python3.9/site-packages/kombu/pidbox.py", line 145, in reply

self.mailbox._publish_reply(data, exchange, routing_key, ticket,

File "/Users/amitphulera/.pyenv/versions/3.9.9/envs/hq_celery/lib/python3.9/site-packages/kombu/pidbox.py", line 275, in _publish_reply

producer.publish(

File "/Users/amitphulera/.pyenv/versions/3.9.9/envs/hq_celery/lib/python3.9/site-packages/kombu/messaging.py", line 166, in publish

body, content_type, content_encoding = self._prepare(

File "/Users/amitphulera/.pyenv/versions/3.9.9/envs/hq_celery/lib/python3.9/site-packages/kombu/messaging.py", line 254, in _prepare

body) = dumps(body, serializer=serializer)

File "/Users/amitphulera/.pyenv/versions/3.9.9/envs/hq_celery/lib/python3.9/site-packages/kombu/serialization.py", line 210, in dumps

payload = encoder(data)

File "/Users/amitphulera/.pyenv/versions/3.9.9/lib/python3.9/contextlib.py", line 137, in __exit__

self.gen.throw(typ, value, traceback)

File "/Users/amitphulera/.pyenv/versions/3.9.9/envs/hq_celery/lib/python3.9/site-packages/kombu/serialization.py", line 43, in _reraise_errors

reraise(wrapper, wrapper(exc), sys.exc_info()[2])

File "/Users/amitphulera/.pyenv/versions/3.9.9/envs/hq_celery/lib/python3.9/site-packages/kombu/exceptions.py", line 21, in reraise

raise value.with_traceback(tb)

File "/Users/amitphulera/.pyenv/versions/3.9.9/envs/hq_celery/lib/python3.9/site-packages/kombu/serialization.py", line 39, in _reraise_errors

yield

File "/Users/amitphulera/.pyenv/versions/3.9.9/envs/hq_celery/lib/python3.9/site-packages/kombu/serialization.py", line 210, in dumps

payload = encoder(data)

File "/Users/amitphulera/.pyenv/versions/3.9.9/envs/hq_celery/lib/python3.9/site-packages/kombu/utils/json.py", line 68, in dumps

return _dumps(s, cls=cls or _default_encoder,

File "/Users/amitphulera/.pyenv/versions/3.9.9/lib/python3.9/json/__init__.py", line 234, in dumps

return cls(

File "/Users/amitphulera/.pyenv/versions/3.9.9/lib/python3.9/json/encoder.py", line 199, in encode

chunks = self.iterencode(o, _one_shot=True)

File "/Users/amitphulera/.pyenv/versions/3.9.9/lib/python3.9/json/encoder.py", line 257, in iterencode

return _iterencode(o, 0)

File "/Users/amitphulera/.pyenv/versions/3.9.9/envs/hq_celery/lib/python3.9/site-packages/kombu/utils/json.py", line 58, in default

return super().default(o)

File "/Users/amitphulera/.pyenv/versions/3.9.9/lib/python3.9/json/encoder.py", line 179, in default

raise TypeError(f'Object of type {o.__class__.__name__} '

kombu.exceptions.EncodeError: Object of type set is not JSON serializable

## Required Dependencies

* **Minimal Python Version** : N/A or Unknown

* **Minimal Celery Version** : N/A or Unknown

* **Minimal Kombu Version** : N/A or Unknown

* **Minimal Broker Version** : N/A or Unknown

* **Minimal Result Backend Version** : N/A or Unknown

* **Minimal OS and/or Kernel Version** : N/A or Unknown

* **Minimal Broker Client Version** : N/A or Unknown

* **Minimal Result Backend Client Version** : N/A or Unknown

### Python Packages

**`pip freeze` Output:**

alabaster==0.7.12

alembic==1.7.7

amqp==5.1.1

appnope==0.1.3

architect==0.6.0

asgiref==3.5.0

asttokens==2.0.5

attrs==21.4.0

Babel==2.9.1

backcall==0.2.0

Beaker==1.11.0

beautifulsoup4==4.10.0

billiard==3.6.4.0

black==22.1.0

boto3==1.17.85

botocore==1.20.85

cachetools==5.0.0

case==1.5.3

celery==5.2.7

certifi==2020.6.20

cffi==1.14.3

chardet==3.0.4

click==8.1.3

click-didyoumean==0.3.0

click-plugins==1.1.1

click-repl==0.2.0

cloudant==2.14.0

colorama==0.4.3

contextlib2==21.6.0

coverage==5.5

cryptography==3.4.8

csiphash==0.0.5

datadog==0.39.0

ddtrace==0.44.0

debugpy==1.6.0

decorator==4.0.11

defusedxml==0.7.1

Deprecated==1.2.10

diff-match-patch==20200713

dimagi-memoized==1.1.3

Django==3.2.13

django-appconf==1.0.5

django-autoslug==1.9.8

django-braces==1.14.0

django-bulk-update==2.2.0

django-celery-results==2.4.0

django-compressor==2.4

django-countries==7.3.2

django-crispy-forms==1.10.0

django-cte==1.2.0

django-extensions==3.1.3

django-formtools==2.3

django-logentry-admin==1.0.6

django-oauth-toolkit==1.5.0

django-otp==0.9.4

django-phonenumber-field==5.2.0

django-prbac==1.0.1

django-recaptcha==2.0.6

django-redis==4.12.1

django-redis-sessions==0.6.2

django-statici18n==1.9.0

django-tastypie==0.14.4

django-transfer==0.4

django-two-factor-auth==1.13.2

django-user-agents==0.4.0

djangorestframework==3.12.2

dnspython==1.15.0

docutils==0.16

dropbox==9.3.0

elasticsearch2==2.5.1

elasticsearch5==5.5.6

email-validator==1.1.3

et-xmlfile==1.0.1

ethiopian-date-converter==0.1.5

eulxml==1.1.3

executing==0.8.3

fakecouch==0.0.15

Faker==5.0.2

fixture==1.5.11

flake8==3.9.2

flaky==3.7.0

flower==1.0.0

freezegun==1.1.0

future==0.18.2

gevent==21.8.0

ghdiff==0.4

git-build-branch==0.1.13

gnureadline==8.0.0

google-api-core==2.5.0

google-api-python-client==2.32.0

google-auth==2.6.0

google-auth-httplib2==0.1.0

google-auth-oauthlib==0.4.6

googleapis-common-protos==1.54.0

greenlet==1.1.2

gunicorn==20.0.4

hiredis==2.0.0

httpagentparser==1.9.0

httplib2==0.20.4

humanize==4.1.0

idna==2.10

imagesize==1.2.0

importlib-metadata==4.11.3

iniconfig==1.1.1

intervaltree==3.1.0

ipython==8.2.0

iso8601==0.1.13

isodate==0.6.1

jedi==0.18.1

Jinja2==2.11.3

jmespath==0.10.0

json-delta==2.0

jsonfield==2.1.1

jsonobject==2.0.0

jsonobject-couchdbkit==1.0.1

jsonschema==3.2.0

jwcrypto==0.8

kafka-python==1.4.7

kombu==5.2.4

laboratory==0.2.0

linecache2==1.0.0

lxml==4.7.1

Mako==1.1.3

mando==0.6.4

Markdown==3.3.6

MarkupSafe==1.1.1

matplotlib-inline==0.1.3

mccabe==0.6.1

mock==4.0.3

mypy-extensions==0.4.3

ndg-httpsclient==0.5.1

nose==1.3.7

nose-exclude==0.5.0

oauthlib==3.1.0

oic==1.3.0

openpyxl==3.0.9

packaging==20.4

parso==0.8.3

pathspec==0.9.0

pep517==0.10.0

pexpect==4.8.0

phonenumberslite==8.12.48

pickle5==0.0.11

pickleshare==0.7.5

Pillow==9.0.1

pip-tools==6.6.0

platformdirs==2.4.1

pluggy==1.0.0

ply==3.11

polib==1.1.1

prometheus-client==0.14.1

prompt-toolkit==3.0.26

protobuf==3.15.0

psutil==5.8.0

psycogreen==1.0.2

psycopg2==2.8.6

psycopg2cffi==2.9.0

ptyprocess==0.7.0

pure-eval==0.2.2

py==1.11.0

py-cpuinfo==8.0.0

py-KISSmetrics==1.1.0

pyasn1==0.4.8

pyasn1-modules==0.2.8

pycodestyle==2.7.0

pycparser==2.20

pycryptodome==3.10.1

pycryptodomex==3.14.1

pyflakes==2.3.1

PyGithub==1.54.1

Pygments==2.11.2

pygooglechart==0.4.0

pyjwkest==1.4.2

PyJWT==1.7.1

pyOpenSSL==20.0.1

pyparsing==3.0.7

pyphonetics==0.5.3

pyrsistent==0.17.3

PySocks==1.7.1

pytest==7.1.2

pytest-benchmark==3.4.1

pytest-django==4.5.2

python-dateutil==2.8.2

python-editor==1.0.4

python-imap==1.0.0

python-magic==0.4.22

python-mimeparse==1.6.0

python-termstyle==0.1.10

python3-saml==1.12.0

pytz==2022.1

PyYAML==5.4.1

pyzxcvbn==0.8.0

qrcode==4.0.4

quickcache==0.5.4

radon==5.1.0

rcssmin==1.0.6

redis==3.5.3

reportlab==3.6.9

requests==2.25.1

requests-mock==1.9.3

requests-oauthlib==1.3.1

requests-toolbelt==0.9.1

rjsmin==1.1.0

rsa==4.8

s3transfer==0.4.2

schema==0.7.5

sentry-sdk==0.19.5

setproctitle==1.2.2

sh==1.14.2

simpleeval==0.9.10

simplejson==3.17.2

six==1.16.0

sniffer==0.4.1

snowballstemmer==2.0.0

socketpool==0.5.3

sortedcontainers==2.3.0

soupsieve==2.0.1

Sphinx==4.1.2

sphinx-rtd-theme==0.5.2

sphinxcontrib-applehelp==1.0.2

sphinxcontrib-devhelp==1.0.2

sphinxcontrib-django==0.5.1

sphinxcontrib-htmlhelp==2.0.0

sphinxcontrib-jsmath==1.0.1

sphinxcontrib-qthelp==1.0.3

sphinxcontrib-serializinghtml==1.1.5

sqlagg==0.17.2

SQLAlchemy==1.3.19

sqlalchemy-postgres-copy==0.5.0

sqlparse==0.3.1

stack-data==0.1.4

stripe==2.54.0

suds-py3==1.4.5.0

tenacity==6.2.0

testil==1.1

text-unidecode==1.3

tinys3==0.1.12

toml==0.10.2

tomli==2.0.1

toposort==1.7

tornado==6.1

traceback2==1.4.0

traitlets==5.1.1

tropo-webapi-python==0.1.3

turn-python==0.0.1

twilio==6.5.1

typing_extensions==4.1.1

ua-parser==0.10.0

Unidecode==1.2.0

unittest2==1.1.0

uritemplate==4.1.1

urllib3==1.26.5

user-agents==2.2.0

uWSGI==2.0.19.1

vine==5.0.0

wcwidth==0.2.5

Werkzeug==1.0.1

wrapt==1.12.1

xlrd==2.0.1

xlwt==1.3.0

xmlsec==1.3.12

yapf==0.31.0

zipp==3.7.0

zope.event==4.5.0

zope.interface==5.4.0

### Other Dependencies

N/A

## Minimally Reproducible Test Case

# run celery with `pickle_serialized_task` as defined in reproduction steps and run the snippet below

from myapp.apps.niceapp.tasks import pickle_serialized_task

task = pickle_serialized_task.delay(set([1, 2, 3]))

celery_hostname = 'celery@work'

celery_inspect = task.app.control.inspect([celery_hostname])

celery_inspect.active()

# Expected Behavior

This should list the details about the task.

# Actual Behavior

It is erroring out with the stack trace shared above.
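
The innermost error in the traceback can be reproduced without Celery or kombu. The sketch below is only an illustration using the standard library: it shows that a `set` argument survives a `pickle` round trip but is rejected by `json.dumps`, which is what the control reply runs into when it is encoded as JSON. The helper name `try_json` is hypothetical and not part of any library.

```python
import json
import pickle

# Same kind of argument as in the report: a tuple of args containing a set.
task_args = ({1, 2, 3},)


def try_json(value):
    """Return the JSON text, or the TypeError message if encoding fails."""
    try:
        return json.dumps(value)
    except TypeError as exc:
        return f"TypeError: {exc}"


# JSON has no set type, so the stdlib encoder raises TypeError.
json_result = try_json(list(task_args))

# pickle can represent the set, so the task message itself is fine.
pickle_roundtrip = pickle.loads(pickle.dumps(task_args))

print(json_result)
print(pickle_roundtrip)
```

This matches the shape of the report: publishing the task with `pickle` works, and the failure only appears when the task's arguments are later encoded with the JSON serializer.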

|

## Related Issues and Possible Duplicates

#### Related Issues

* #6672, #5890

#### Related PRs

* #6757

# Steps to Reproduce

Here is the script that reproduces the issue:

import time

from celery import Celery

app = Celery('example')

app.conf.update(

    backend_url='redis://localhost:6379',

    broker_url='redis://localhost:6379',

    result_backend='redis://localhost:6379',

    task_serializer='json',

    accept_content=['pickle', 'json'],

)

@app.task(name='task1', serializer='pickle')

def task1(*args, **kwargs):

    print('Start', args, kwargs)

    time.sleep(30)

    print('Finish', args, kwargs)

def main():

    task1.delay({1, 2, 3})  # set is not JSON serializable.

    # inspect queue items

    inspected = app.control.inspect()

    active_tasks = inspected.active()

    print(active_tasks)

if __name__ == '__main__':

    main()

See also the following discussion: #6757 (comment)

# Expected Behavior

Control/Inspect serialization should support a custom per-task serializer.

# Actual Behavior

Control/Inspect serialization is driven only by `task_serializer`

configuration.
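
One way to picture the expected behavior is a reply encoder that prefers the serializer the task was declared with and falls back to the global default only when none is given. The sketch below is purely illustrative and is not how Celery or kombu implement this: `ENCODERS`, `encode_reply`, and the worker name `worker1` are hypothetical names, and only the standard library is used.

```python
import json
import pickle

# Hypothetical registry of encoders; kombu maintains its own registry,
# this dictionary only mirrors the idea for illustration.
ENCODERS = {
    "json": lambda obj: json.dumps(obj).encode("utf-8"),
    "pickle": pickle.dumps,
}


def encode_reply(reply, task_serializer, default_serializer="json"):
    """Encode an inspect-style reply, preferring the task's own serializer."""
    name = task_serializer or default_serializer
    return name, ENCODERS[name](reply)


# An inspect-style reply whose task arguments contain a set.
reply = {"worker1": [{"name": "task1", "args": [{1, 2, 3}]}]}

# With the per-task serializer ('pickle'), the reply can be encoded.
used, body = encode_reply(reply, task_serializer="pickle")
decoded = pickle.loads(body)
print(used, decoded)
```

Calling `encode_reply(reply, task_serializer=None)` in this sketch falls back to JSON and raises `TypeError`, which corresponds to the actual behavior described in this report.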

| 1 |

At the moment, an index signature parameter type must be string or number.

Now that TypeScript supports string literal types, it would be great if they

could be used as the index signature parameter type as well.

For example:

type MyString = "a" | "b" | "c";

var MyMap:{[id:MyString] : number} = {};

MyMap["a"] = 1; // valid

MyMap["asd"] = 2; //invalid

* * *

It would also be useful if this worked with enums. For example:

enum MyEnum {

a = <any>"a",

b = <any>"b",

c = <any>"c",

d = <any>"d",

}

var MyMap:{[id:MyEnum] : number} = {};

MyMap[MyEnum.a] = 1; // valid

MyMap["asd"] = 2; //invalid

var MyMap:{[id:MyEnum] : number} = {

[MyEnum.a]:1, // valid

"asdas":2, //invalid

}

|

TypeScript requires that enums have number value types (hopefully this will

soon also include string value types).

Attempting to use an enum as a key type for a hash results in this error:

"Index signature parameter type must be 'string' or 'number'". An enum is

actually a number type, so this shouldn't be an error.

Enums are a convenient way of defining the domain of number and string value

types, in cases such as

export interface UserInterfaceColors {

[index: UserInterfaceElement]: ColorInfo;

}

export interface ColorInfo {

r: number;

g: number;

b: number;

a: number;

}

export enum UserInterfaceElement {

ActiveTitleBar = 0,

InactiveTitleBar = 1,

}

| 1 |

Hello community and devs of PowerToys,

this is my first time here. I was happy with these tools until it stopped

working; now an error message appears when I try to start it.

I followed the instructions and copied the message below.

You can find the log here

Error Message:

Version: 1.0.0

OS Version: Microsoft Windows NT 10.0.19041.0

IntPtr Length: 8

x64: True

Date: 08/05/2020 11:06:02

Exception:

System.ObjectDisposedException: Cannot access a disposed object.

Object name: 'Timer'.

at System.Timers.Timer.set_Enabled(Boolean value)

at System.Timers.Timer.Start()

at PowerLauncher.MainWindow.OnVisibilityChanged(Object sender,

DependencyPropertyChangedEventArgs e)

at System.Windows.UIElement.RaiseDependencyPropertyChanged(EventPrivateKey

key, DependencyPropertyChangedEventArgs args)

at System.Windows.UIElement.OnIsVisibleChanged(DependencyObject d,

DependencyPropertyChangedEventArgs e)

at

System.Windows.DependencyObject.OnPropertyChanged(DependencyPropertyChangedEventArgs

e)

at

System.Windows.FrameworkElement.OnPropertyChanged(DependencyPropertyChangedEventArgs

e)

at

System.Windows.DependencyObject.NotifyPropertyChange(DependencyPropertyChangedEventArgs

args)

at System.Windows.UIElement.UpdateIsVisibleCache()

at System.Windows.PresentationSource.RootChanged(Visual oldRoot, Visual

newRoot)

at System.Windows.Interop.HwndSource.set_RootVisualInternal(Visual value)

at System.Windows.Interop.HwndSource.set_RootVisual(Visual value)

at System.Windows.Window.SetRootVisual()

at System.Windows.Window.SetRootVisualAndUpdateSTC()

at System.Windows.Window.SetupInitialState(Double requestedTop, Double

requestedLeft, Double requestedWidth, Double requestedHeight)

at System.Windows.Window.CreateSourceWindow(Boolean duringShow)

at System.Windows.Window.CreateSourceWindowDuringShow()

at System.Windows.Window.SafeCreateWindowDuringShow()

at System.Windows.Window.ShowHelper(Object booleanBox)

at System.Windows.Threading.ExceptionWrapper.InternalRealCall(Delegate

callback, Object args, Int32 numArgs)

at System.Windows.Threading.ExceptionWrapper.TryCatchWhen(Object source,

Delegate callback, Object args, Int32 numArgs, Delegate catchHandler)

|

Popup tells me to give y'all this.

2020-07-31.txt

Version: 1.0.0

OS Version: Microsoft Windows NT 10.0.19041.0

IntPtr Length: 8

x64: True

Date: 07/31/2020 17:29:59

Exception:

System.ObjectDisposedException: Cannot access a disposed object.

Object name: 'Timer'.

at System.Timers.Timer.set_Enabled(Boolean value)

at System.Timers.Timer.Start()

at PowerLauncher.MainWindow.OnVisibilityChanged(Object sender,

DependencyPropertyChangedEventArgs e)

at System.Windows.UIElement.RaiseDependencyPropertyChanged(EventPrivateKey

key, DependencyPropertyChangedEventArgs args)

at System.Windows.UIElement.OnIsVisibleChanged(DependencyObject d,

DependencyPropertyChangedEventArgs e)

at

System.Windows.DependencyObject.OnPropertyChanged(DependencyPropertyChangedEventArgs

e)

at

System.Windows.FrameworkElement.OnPropertyChanged(DependencyPropertyChangedEventArgs

e)

at

System.Windows.DependencyObject.NotifyPropertyChange(DependencyPropertyChangedEventArgs

args)

at System.Windows.UIElement.UpdateIsVisibleCache()

at System.Windows.PresentationSource.RootChanged(Visual oldRoot, Visual

newRoot)

at System.Windows.Interop.HwndSource.set_RootVisualInternal(Visual value)

at System.Windows.Interop.HwndSource.set_RootVisual(Visual value)

at System.Windows.Window.SetRootVisual()

at System.Windows.Window.SetRootVisualAndUpdateSTC()

at System.Windows.Window.SetupInitialState(Double requestedTop, Double

requestedLeft, Double requestedWidth, Double requestedHeight)

at System.Windows.Window.CreateSourceWindow(Boolean duringShow)

at System.Windows.Window.CreateSourceWindowDuringShow()

at System.Windows.Window.SafeCreateWindowDuringShow()

at System.Windows.Window.ShowHelper(Object booleanBox)

at System.Windows.Threading.ExceptionWrapper.InternalRealCall(Delegate

callback, Object args, Int32 numArgs)

at System.Windows.Threading.ExceptionWrapper.TryCatchWhen(Object source,

Delegate callback, Object args, Int32 numArgs, Delegate catchHandler)

| 1 |

So far I really like Atom 1.0 - it's got a long way to go, but it will get

there. We needed an editor like this.

Anyway: the only thing bothering me was how quickly it installed itself

(Windows 8.1, 64 bit). Even though that might sound like a _good_ thing, it

isn't. When I double-click the installer I get no options, no information

whatsoever. Only a screen "Atom is installing and will launch when ready." For

some people this might be the best approach, but for a whole lot of others it

isn't.

Some options would be great. I am thinking: file association, installation

directory, packages to include with installation (I for one don't need all

packages that are included on default install) and so on. I could understand

that file association and package control aren't included (though I'd really

like that) but why not the ability to choose your own installation directory?

|

So far I really like Atom 1.0 - it's got a long way to go, but it will get

there. We needed an editor like this.

Anyway: the only thing bothering me was how quickly it installed itself

(Windows 8.1, 64 bit). Even though that might sound like a _good_ thing, it

isn't. When I double-click the installer I get no options, no information

whatsoever. Only a screen "Atom is installing and will launch when ready." For

some people this might be the best approach, but for a whole lot of others it

isn't.

Some options would be great. I am thinking: file association, installation

directory, packages to include with installation (I for one don't need all

packages that are included on default install) and so on. I could understand

that file association and package control aren't included (though I'd really

like that) but why not the ability to choose your own installation directory?

| 1 |

> Issue originally made by @xfix

### Bug information

* **Babel version:** 6.2.1

* **Node version:** 5.1.0

* **npm version:** 3.5.0

### Options

--presets es2015

### Input code

let results = []

for (let i = 0; i < 3; i++) {

switch ('x') {

case 'x':

const x = i

results.push(() => x)

}

}

for (const result of results) {

console.log(result())

}

### Description

When I declare a block scoped variable in a switch block, it's not recognized

as a block scoped variable for purpose of functions, even when it's declared

inside a for loop. This example prints 2, 2, 2, when it should print 0, 1, 2.

(I outright apologize if this is a duplicate, finding duplicates with that bug

tracker is annoying)
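For comparison, here is a minimal hand-written ES5 sketch of the behavior the report expects. It is not Babel's actual output, and the helper name `loopBody` and the `printed` array are made up for illustration. The idea is that each iteration runs inside its own function scope, so every closure captures a separate `x`.

```javascript
// Hand-written ES5 sketch (an assumption, not real Babel output).
var results = [];

// Hypothetical helper: called once per iteration, so `x` is a fresh
// binding on every call even though `var` is function-scoped.
function loopBody(i) {
  switch ('x') {
    case 'x':
      var x = i;
      results.push(function () {
        return x;
      });
  }
}

for (var i = 0; i < 3; i++) {
  loopBody(i);
}

// Collect what each stored closure returns.
var printed = results.map(function (result) {
  return result();
});
console.log(printed); // [ 0, 1, 2 ]
```

If the `var x` declaration were hoisted into the enclosing scope without such a wrapper, all three closures would share a single `x` and return 2, which matches the output described above.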

|

## Bug Report

**Current Behavior**

I am getting the following error and can't figure out a solution. I found many

posts here that look like duplicates, but nothing works.

node_modules\@babel\helper-plugin-utils\lib\index.js

throw Object.assign(err, {

Error: Requires Babel "^7.0.0-0", but was loaded with "6.26.3". If you are sure you have a compatible version of @babel/core, it is likely that something in your build process is loading the wrong version. Inspect the stack trace of this error to look for the first entry that doesn't mention "@babel/core" or "babel-core" to see what is calling Babel.

**Babel Configuration (.babelrc, package.json, cli command)**

"dependencies": {

"express": "^4.16.4",

"isomorphic-fetch": "^2.2.1",

"react": "^16.6.3",

"react-dom": "^16.6.3",

"react-redux": "^5.1.1",

"react-router": "^4.3.1",

"react-router-config": "^1.0.0-beta.4",

"react-router-dom": "^4.3.1",

"redux": "^4.0.1",

"redux-thunk": "^2.3.0"

},

"devDependencies": {

"@babel/cli": "^7.2.3",

"@babel/core": "^7.2.2",

"@babel/plugin-proposal-class-properties": "^7.2.0",

"@babel/plugin-transform-runtime": "^7.2.0",

"@babel/preset-env": "^7.3.1",

"@babel/preset-react": "^7.0.0",

"babel-core": "^7.0.0-bridge.0",

"babel-jest": "^24.0.0",

"babel-loader": "^7.1.5",

"css-loader": "^1.0.1",

"cypress": "^3.1.3",

"enzyme": "^3.8.0",

"enzyme-adapter-react-16": "^1.7.1",

"enzyme-to-json": "^3.3.5",

"extract-text-webpack-plugin": "^4.0.0-beta.0",

"html-webpack-plugin": "^3.2.0",

"jest": "^24.0.0",

"jest-fetch-mock": "^2.0.1",

"json-loader": "^0.5.7",

"nodemon": "^1.18.9",

"npm-run-all": "^4.1.5",

"open": "0.0.5",

"redux-devtools": "^3.4.2",

"redux-mock-store": "^1.5.3",

"regenerator-runtime": "^0.13.1",

"style-loader": "^0.23.1",

"uglifyjs-webpack-plugin": "^2.0.1",

"webpack": "^4.26.1",

"webpack-cli": "^3.1.2",

"webpack-dev-server": "^3.1.14",

"webpack-node-externals": "^1.7.2"

},

"babel": {

"presets": [

"@babel/preset-env",

"@babel/preset-react"

],

"plugins": [

"@babel/plugin-transform-runtime",

"@babel/plugin-proposal-class-properties"

]

}

**Environment**

* Babel version(s): [> v7]

* Node/npm version: [Node 8.12.0/npm 6.4.1]

* OS: [Windows 10]

Stackoverflow

| 0 |

### Vue.js version

2.0.0-rc.4

### Reproduction Link

https://jsbin.com/rifuxuxuxa/1/edit?js,console,output

### Steps to reproduce

1. After hitting 'Run with JS', click the 'click here' button

2. Wait until `this.show` is `true` (8 seconds), then click the 'click here' button again

### What is Expected?

In step 1, prints '2333'

In step 2, prints '2333'

### What is actually happening?

In step 1, prints '2333'

In step 2, an error occurs 'o.fn is not a function'

This can only be reproduced when there is a `v-show` element around. I have

tried to put the `v-show` element (in this case, the 'balabalabala' span)

before the `slot`, after the `slot`, outside the `div`, and they all report

the same error after `this.show` is set to `true`.

This may have something to do with this.

|

I reported the same issue for `v-if` which got fixed in `vue@2.0.0-rc.4`.

However, with this version the same code using `v-show` – which previously

worked perfectly – does not work anymore.

### Vue.js version

2.0.0-rc.4

### Reproduction Link

http://codepen.io/analog-nico/pen/KgPKRq

### Steps to reproduce

1. Click the link "Open popup using v-show"

2. A badly designed popup opens

3. Click the "Close" link

### What is Expected?

* The popup closes successfully

### What is actually happening?

* Vue fails to call an internal function and throws: `TypeError: o.fn is not a function. (In 'o.fn(ev)', 'o.fn' is an instance of Object)`

* The `closePopupUsingVShow` function attached to the "Close" link's click event never gets called.

* The popup does not close.

For reference the codepen contains the exact same implementation of the popup

with the only difference that it uses `v-if` instead of `v-show` to show/hide

the popup. `v-if` works perfectly.

| 1 |

**Description**

I'd like to propose a few steps to improve the validation constraints:

* Deprecate empty strings (`""`) currently happily passing in string constraints (e.g. `Email`)

* https://docs.jboss.org/hibernate/stable/beanvalidation/api/javax/validation/constraints/Email.html

* Deprecate non string values in `NotBlank` / `Blank` and 'whitespaced' strings passing (`" "`)

* https://docs.jboss.org/hibernate/stable/beanvalidation/api/javax/validation/constraints/NotBlank.html

* allow for null (#27876)

* Consider `NotEmpty` / `Empty` as the current `NotBlank` / `Blank` constraints

* https://docs.jboss.org/hibernate/stable/beanvalidation/api/javax/validation/constraints/NotEmpty.html

* I don't think we should add these (but favor specific constraints), as `empty` in PHP behaves differently, as described above (ints, bools, etc.). I'm not sure we should follow either one :) hence the possible confusion

If this happens we'd do simply

* `@Email`

* `@NotNull @Email`

* `@NotNull @Length(min=3) @NotBlank`

* `@NotNull @NotBlank`

* `@Type(array) @Count(3)`

Thoughts?

|

**Description**

In most validators, there's a failsafe mechanism that prevents the validator

from validating if it's a `null` value, as there's a validator for that

(`NotNull`). I think `NotBlank` shouldn't bother on `null` values, especially

as `NotBlank` and `NotNull` are used together to prevent `null` values too.

Not really a feature request, but not really a bug either... more like an RFC, but

for all versions. I guess this would be a BC break, though. :/ So maybe add

a deprecation to the validator when the value is `null`, and remove

the `null` invalidation from `NotBlank` in 5.0?

Currently, we need to reimplement the validator just to skip `null` values...

**Possible Solution**

Either deprecate the validation on `null` value in `NotBlank`, or do as in

most validators (if the value is `null`, then skip). But as I mentioned, this would

probably be a BC break.

| 1 |

_Original ticket http://projects.scipy.org/numpy/ticket/1880 on 2011-06-26 by

@nilswagner01, assigned to unknown._

======================================================================

FAIL: test_timedelta_scalar_construction (test_datetime.TestDateTime)

----------------------------------------------------------------------

Traceback (most recent call last):

File "/home/nwagner/local/lib64/python2.6/site-packages/numpy/core/tests/test_datetime.py", line 189, in test_timedelta_scalar_construction

assert_equal(str(np.timedelta64(3, 's')), '3 seconds')

File "/home/nwagner/local/lib64/python2.6/site-packages/numpy/testing/utils.py", line 313, in assert_equal

raise AssertionError(msg)

AssertionError:

Items are not equal:

ACTUAL: '%lld seconds'

DESIRED: '3 seconds'

|

_Original ticket http://projects.scipy.org/numpy/ticket/1887 on 2011-06-29 by

@dhomeier, assigned to unknown._