text1 | text2 | label |

|---|---|---|

There's no e2e test for dynamic volume provisioning AFAICT.

Also, on Ubernetes-Lite, we should verify that a dynamically provisioned

volume gets zone labels.

|

I'm excited to see that auto-provisioned volumes are going to be in 1.2 (even

if in alpha), but AFAICT there's no e2e coverage yet.

| 1 |

#### Describe the bug

There is an issue when using `SimpleImputer` with a new Pandas dataframe,

specifically if it has a column that is of type `Int64` and has a `NA` value

in the training data.

#### Code to Reproduce

import numpy as np
import pandas as pd
from sklearn.impute import SimpleImputer

def test_simple_imputer_with_Int64_column():
    index = pd.Index(['A', 'B', 'C'], name='group')
    df = pd.DataFrame({
        'att-1': [10, 20, np.nan],
        'att-2': [30, 40, 30]
    }, index=index)

    # TODO: This line breaks the test! Comment out and it works
    df = df.astype('Int64')

    imputer = SimpleImputer()
    imputer.fit(df)
    imputed = imputer.transform(df)
    df_imputed = pd.DataFrame(imputed, columns=['att-1', 'att-2'], index=index)

    assert df_imputed.loc['C', 'att-1'] == 15

#### Expected Results

Correct value is imputed

#### Actual Results

Exception raised:

TypeError: float() argument must be a string or a number, not 'NAType'

#### Versions

System:

python: 3.7.4 (default, Aug 13 2019, 15:17:50) [Clang 4.0.1 (tags/RELEASE_401/final)]

executable: <path-to-my-project>/.venv/bin/python

machine: Darwin-19.3.0-x86_64-i386-64bit

Python dependencies:

pip: 19.3.1

setuptools: 42.0.2

sklearn: 0.22.1

numpy: 1.18.1

scipy: 1.4.1

Cython: None

pandas: 1.0.1

matplotlib: None

joblib: 0.14.1

Built with OpenMP: True
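A possible workaround, sketched under the assumption that casting the nullable `Int64` columns to a plain NumPy float dtype is acceptable for the data: after the cast, `pd.NA` becomes `np.nan`, which float-based code can work with. The `fillna` call below is only a pandas stand-in for mean imputation (the default `SimpleImputer` strategy), used here so the snippet needs nothing beyond pandas and NumPy; the names `df_float` and `df_imputed` are illustrative.

```python
import numpy as np
import pandas as pd

index = pd.Index(["A", "B", "C"], name="group")
df = pd.DataFrame(
    {"att-1": [10, 20, np.nan], "att-2": [30, 40, 30]},
    index=index,
).astype("Int64")  # the missing entry is now pd.NA

# Cast to a NumPy float dtype; pd.NA is converted to np.nan.
df_float = df.astype("float64")

# Stand-in for mean imputation: fill each column's gaps with its mean.
df_imputed = df_float.fillna(df_float.mean())

print(df_imputed)
```

The float frame `df_float` could presumably be passed to `SimpleImputer` in place of `df`; that step is left out so the sketch does not depend on scikit-learn.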

|

#### Describe the bug

Starting from pandas 1.0, an experimental pd.NA value (singleton) is available

to represent scalar missing values. At this moment, it is used in the nullable

integer, boolean and dedicated string data types as the missing value

indicator.

I get the error `TypeError: float() argument must be a string or a number, not 'NAType'` when passing integer data containing missing values, in the form of a pandas dataframe, to the preprocessing module (in particular QuantileTransformer and StandardScaler) after updating pandas to the current version.

#### Steps/Code to Reproduce

Example:

import pandas as pd
import numpy as np
from sklearn.preprocessing import StandardScaler

df = pd.DataFrame({'a': [1, 2, 3, np.nan, np.nan],
                   'b': [np.nan, np.nan, 8, 4, 6]},
                  dtype=pd.Int64Dtype())

scaler = StandardScaler()
scaler.fit_transform(df)

#### Expected Results

array([[-1.22474487, nan],

[ 0. , nan],

[ 1.22474487, 1.22474487],

[ nan, -1.22474487],

[ nan, 0. ]])

#### Actual Results

TypeError Traceback (most recent call last)

<ipython-input-42-2104609ef9c0> in <module>

7 print(df)

8 scaler = StandardScaler()

----> 9 scaler.fit_transform(df)

/anaconda3/lib/python3.6/site-packages/sklearn/base.py in fit_transform(self, X, y, **fit_params)

569 if y is None:

570 # fit method of arity 1 (unsupervised transformation)

--> 571 return self.fit(X, **fit_params).transform(X)

572 else:

573 # fit method of arity 2 (supervised transformation)

/anaconda3/lib/python3.6/site-packages/sklearn/preprocessing/_data.py in fit(self, X, y)

667 # Reset internal state before fitting

668 self._reset()

--> 669 return self.partial_fit(X, y)

670

671 def partial_fit(self, X, y=None):

/anaconda3/lib/python3.6/site-packages/sklearn/preprocessing/_data.py in partial_fit(self, X, y)

698 X = check_array(X, accept_sparse=('csr', 'csc'),

699 estimator=self, dtype=FLOAT_DTYPES,

--> 700 force_all_finite='allow-nan')

701

702 # Even in the case of `with_mean=False`, we update the mean anyway

/anaconda3/lib/python3.6/site-packages/sklearn/utils/validation.py in check_array(array, accept_sparse, accept_large_sparse, dtype, order, copy, force_all_finite, ensure_2d, allow_nd, ensure_min_samples, ensure_min_features, warn_on_dtype, estimator)

529 array = array.astype(dtype, casting="unsafe", copy=False)

530 else:

--> 531 array = np.asarray(array, order=order, dtype=dtype)

532 except ComplexWarning:

533 raise ValueError("Complex data not supported\n"

/anaconda3/lib/python3.6/site-packages/numpy/core/_asarray.py in asarray(a, dtype, order)

83

84 """

---> 85 return array(a, dtype, copy=False, order=order)

86

87

TypeError: float() argument must be a string or a number, not 'NAType'

#### Versions

System:

python: 3.6.10 |Anaconda, Inc.| (default, Jan 7 2020, 15:01:53) [GCC 4.2.1 Compatible Clang 4.0.1 (tags/RELEASE_401/final)]

executable: /anaconda3/bin/python

machine: Darwin-17.7.0-x86_64-i386-64bit

Python dependencies:

pip: 20.0.2

setuptools: 45.2.0.post20200210

sklearn: 0.22.1

numpy: 1.18.1

scipy: 1.4.1

Cython: None

pandas: 1.0.1

matplotlib: 3.1.3

joblib: 0.14.1

Built with OpenMP: True

| 1 |

### Describe the issue:

numpy 1.20 encouraged specifying plain `bool` as a dtype as an equivalent to

`np.bool_`, but these aliases don't behave the same as the explicit numpy

versions. mypy infers the dtype as "Any" instead. See the example below, where

I expected both lines to output the same type.

### Reproduce the code example:

import numpy as np

def what_the() -> None:
    reveal_type(np.arange(10, dtype=bool))
    reveal_type(np.arange(10, dtype=np.bool_))

### Error message:

No error, but output from mypy 0.961:

show_type2.py:4: note: Revealed type is "numpy.ndarray[Any, numpy.dtype[Any]]"

show_type2.py:5: note: Revealed type is "numpy.ndarray[Any, numpy.dtype[numpy.bool_]]"

### NumPy/Python version information:

1.23.0 3.10.5 (main, Jun 11 2022, 16:53:24) [GCC 9.4.0]
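For comparison, a minimal sketch of the runtime behaviour and of one way to keep the static type precise, assuming `numpy.typing.NDArray` is available (NumPy 1.21 or later). It uses `np.zeros` instead of `np.arange`, because the snippet is meant to be executed rather than only type-checked, and the variable names are illustrative.

```python
import numpy as np
import numpy.typing as npt

# At runtime the builtin `bool` and `np.bool_` select the same dtype.
a = np.zeros(10, dtype=bool)
b = np.zeros(10, dtype=np.bool_)
print(a.dtype == b.dtype)

# An explicit annotation gives the type checker the dtype that it
# cannot infer from `dtype=bool` alone.
c: npt.NDArray[np.bool_] = np.zeros(10, dtype=bool)
```

The annotation only affects static analysis; the array created at runtime is the same either way.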

|

Back in #17719 the first steps were taken into introducing static typing

support for array dtypes.

Since the dtype has a substantial effect on the semantics of an array, there

is a lot of type-safety

to be gained if the various function-annotations in numpy can actually utilize

this information.

Examples of this would be the rejection of string-arrays for arithmetic

operations, or inferring the

output dtype of mixed float/integer operations.

## The Plan

With this in mind I'd ideally like to implement some basic dtype support

throughout the main numpy

namespace (xref #16546) before the release of 1.22.

Now, what does "basic" mean in this context? Namely, any array-/dtype-like

that can be parametrized

w.r.t. `np.generic`. Notably this excludes builtin scalar types and character

codes (literal strings), as the

only way of implementing the latter two is via excessive use of overloads.

With this in mind, I realistically only expect dtype-support for builtin

scalar types ( _e.g._ `func(..., dtype=float)`)

to-be added with the help of a mypy plugin, _e.g._ via injecting a type-check-

only method into the likes of

`builtins.int` that holds some sort of explicit reference to `np.int_`.

## Examples

Two examples wherein the dtype can be automatically inferred:

from typing import TYPE_CHECKING

import numpy as np

AR_1 = np.array(np.float64(1))

AR_2 = np.array(1, dtype=np.float64)

if TYPE_CHECKING:

reveal_type(AR_1) # note: Revealed type is "numpy.ndarray[Any, numpy.dtype[numpy.floating*[numpy.typing._64Bit*]]]"

reveal_type(AR_2) # note: Revealed type is "numpy.ndarray[Any, numpy.dtype[numpy.floating*[numpy.typing._64Bit*]]]"

Three examples wherein dtype-support is substantially more difficult to

implement.

AR_3 = np.array(1.0)

AR_4 = np.array(1, dtype=float)

AR_5 = np.array(1, dtype="f8")

if TYPE_CHECKING:

reveal_type(AR_3) # note: Revealed type is "numpy.ndarray[Any, numpy.dtype[Any]]"

reveal_type(AR_4) # note: Revealed type is "numpy.ndarray[Any, numpy.dtype[Any]]"

reveal_type(AR_5) # note: Revealed type is "numpy.ndarray[Any, numpy.dtype[Any]]"

In the latter three cases one can always manually declare the dtype of the

array:

import numpy.typing as npt

AR_6: npt.NDArray[np.float64] = np.array(1.0)

if TYPE_CHECKING:

reveal_type(AR_6) # note: Revealed type is "numpy.ndarray[Any, numpy.dtype[numpy.floating*[numpy.typing._64Bit*]]]"

| 1 |

When I run `flutter test --coverage` on

`https://github.com/dnfield/flutter_svg`, I eventually get this output over

and over again:

unhandled error during test:

/Users/dnfield/src/flutter_svg/test/xml_svg_test.dart

Bad state: Couldn't find line and column for token 2529 in file:///b/build/slave/Linux_Engine/build/src/third_party/dart/sdk/lib/collection/list.dart.

#0 VMScript._lineAndColumn (package:vm_service_client/src/script.dart:243:5)

#1 _ScriptLocation._ensureLineAndColumn (package:vm_service_client/src/script.dart:314:26)

#2 _ScriptLocation.line (package:vm_service_client/src/script.dart:295:5)

#3 _getCoverageJson (package:coverage/src/collect.dart:103:46)

<asynchronous suspension>

#4 _getAllCoverage (package:coverage/src/collect.dart:51:26)

<asynchronous suspension>

#5 collect (package:coverage/src/collect.dart:35:18)

<asynchronous suspension>

#6 CoverageCollector.collectCoverage (package:flutter_tools/src/test/coverage_collector.dart:55:45)

<asynchronous suspension>

#7 CoverageCollector.handleFinishedTest (package:flutter_tools/src/test/coverage_collector.dart:27:11)

<asynchronous suspension>

#8 _FlutterPlatform._startTest (package:flutter_tools/src/test/flutter_platform.dart:650:30)

<asynchronous suspension>

#9 _FlutterPlatform.loadChannel (package:flutter_tools/src/test/flutter_platform.dart:408:36)

#10 PlatformPlugin.load (package:test/src/runner/plugin/platform.dart:65:19)

<asynchronous suspension>

#11 Loader.loadFile.<anonymous closure> (package:test/src/runner/loader.dart:248:36)

<asynchronous suspension>

#12 new LoadSuite.<anonymous closure>.<anonymous closure> (package:test/src/runner/load_suite.dart:92:31)

<asynchronous suspension>

#13 invoke (package:test/src/utils.dart:241:5)

#14 new LoadSuite.<anonymous closure> (package:test/src/runner/load_suite.dart:91:7)

#15 Invoker._onRun.<anonymous closure>.<anonymous closure>.<anonymous closure>.<anonymous closure> (package:test/src/backend/invoker.dart:404:25)

<asynchronous suspension>

#16 new Future.<anonymous closure> (dart:async/future.dart:176:37)

#17 StackZoneSpecification._run (package:stack_trace/src/stack_zone_specification.dart:209:15)

#18 StackZoneSpecification._registerCallback.<anonymous closure> (package:stack_trace/src/stack_zone_specification.dart:119:48)

#19 _rootRun (dart:async/zone.dart:1120:38)

#20 _CustomZone.run (dart:async/zone.dart:1021:19)

#21 _CustomZone.runGuarded (dart:async/zone.dart:923:7)

#22 _CustomZone.bindCallbackGuarded.<anonymous closure> (dart:async/zone.dart:963:23)

#23 StackZoneSpecification._run (package:stack_trace/src/stack_zone_specification.dart:209:15)

#24 StackZoneSpecification._registerCallback.<anonymous closure> (package:stack_trace/src/stack_zone_specification.dart:119:48)

#25 _rootRun (dart:async/zone.dart:1124:13)

#26 _CustomZone.run (dart:async/zone.dart:1021:19)

#27 _CustomZone.bindCallback.<anonymous closure> (dart:async/zone.dart:947:23)

#28 Timer._createTimer.<anonymous closure> (dart:async/runtime/libtimer_patch.dart:21:15)

#29 _Timer._runTimers (dart:isolate/runtime/libtimer_impl.dart:382:19)

#30 _Timer._handleMessage (dart:isolate/runtime/libtimer_impl.dart:416:5)

#31 _RawReceivePortImpl._handleMessage (dart:isolate/runtime/libisolate_patch.dart:171:12)

00:16 +14 -1: loading /Users/dnfield/src/flutter_svg/test/xml_svg_test.dart [E]

Bad state: Couldn't find line and column for token 2529 in file:///b/build/slave/Linux_Engine/build/src/third_party/dart/sdk/lib/collection/list.dart.

package:vm_service_client/src/script.dart 243:5 VMScript._lineAndColumn

package:vm_service_client/src/script.dart 314:26 _ScriptLocation._ensureLineAndColumn

package:vm_service_client/src/script.dart 295:5 _ScriptLocation.line

package:coverage/src/collect.dart 103:46 _getCoverageJson

===== asynchronous gap ===========================

dart:async/future_impl.dart 22:43 _Completer.completeError

dart:async/runtime/libasync_patch.dart 40:18 _AsyncAwaitCompleter.completeError

package:coverage/src/collect.dart _getCoverageJson

===== asynchronous gap ===========================

dart:async/zone.dart 1053:19 _CustomZone.registerUnaryCallback

dart:async/runtime/libasync_patch.dart 77:23 _asyncThenWrapperHelper

package:coverage/src/collect.dart _getCoverageJson

package:coverage/src/collect.dart 51:26 _getAllCoverage

===== asynchronous gap ===========================

dart:async/zone.dart 1053:19 _CustomZone.registerUnaryCallback

dart:async/runtime/libasync_patch.dart 77:23 _asyncThenWrapperHelper

package:coverage/src/collect.dart _getAllCoverage

package:coverage/src/collect.dart 35:18 collect

===== asynchronous gap ===========================

dart:async/zone.dart 1053:19 _CustomZone.registerUnaryCallback

dart:async/runtime/libasync_patch.dart 77:23 _asyncThenWrapperHelper

package:coverage/src/collect.dart collect

package:flutter_tools/src/test/coverage_collector.dart 55:45 CoverageCollector.collectCoverage

===== asynchronous gap ===========================

dart:async/zone.dart 1053:19 _CustomZone.registerUnaryCallback

dart:async/runtime/libasync_patch.dart 77:23 _asyncThenWrapperHelper

package:flutter_tools/src/test/flutter_platform.dart _FlutterPlatform._startTest

package:flutter_tools/src/test/flutter_platform.dart 408:36 _FlutterPlatform.loadChannel

package:test/src/runner/plugin/platform.dart 65:19 PlatformPlugin.load

===== asynchronous gap ===========================

dart:async/zone.dart 1053:19 _CustomZone.registerUnaryCallback

dart:async/runtime/libasync_patch.dart 77:23 _asyncThenWrapperHelper

package:test/src/runner/loader.dart Loader.loadFile.<fn>

package:test/src/runner/load_suite.dart 92:31 new LoadSuite.<fn>.<fn>

===== asynchronous gap ===========================

dart:async/zone.dart 1045:19 _CustomZone.registerCallback

dart:async/zone.dart 962:22 _CustomZone.bindCallbackGuarded

dart:async/timer.dart 52:45 new Timer

dart:async/timer.dart 87:9 Timer.run

dart:async/future.dart 174:11 new Future

package:test/src/backend/invoker.dart 403:15 Invoker._onRun.<fn>.<fn>.<fn>

It eventually causes the process to hang and CI to timeout. It's reproducing

locally as well. Upstream dart issue?

| 0 | |

You need to double-transpose vectors to make them into 1-column matrices so

that

you can add them to 1-column matrices or multiply them with one-row matrices

(on

either side).

|

julia> X = [ i^2 - j | i=1:10, j=1:10 ];

julia> typeof(X)

Array{Int64,2}

julia> X[:,1]

10x1 Int64 Array

0

3

8

15

24

35

48

63

80

99

| 1 |

React v16.3 context provided in `pages/_app.js` can be consumed and rendered

in pages on the client, but is undefined in SSR. This causes React SSR markup

mismatch errors.

Note that context can be universally provided/consumed _within_

`pages/_app.js`, the issue is specifically when providing context in

`pages/_app.js` and consuming it in a page such as `pages/index.js`.

* I have searched the issues of this repository and believe that this is not a duplicate.

## Expected Behavior

Context provided in `pages/_app.js` should be consumable in pages both on the

server for SSR and when browser rendering.

## Current Behavior

Context provided in `pages/_app.js` is undefined when consumed in pages for

SSR. It can only be consumed for client rendering.

## Steps to Reproduce (for bugs)

In `pages/_app.js`:

import App, { Container } from 'next/app'
import React from 'react'
import TestContext from '../context'

export default class MyApp extends App {
  render () {
    const { Component, pageProps } = this.props
    return (
      <Container>
        <TestContext.Provider value="Test value.">
          <Component {...pageProps} />
        </TestContext.Provider>
      </Container>
    )
  }
}

In `pages/index.js`:

import TestContext from '../context'

export default () => (

<TestContext.Consumer>

{value => value}

</TestContext.Consumer>

)

In `context.js`:

import React from 'react'

export default React.createContext()

Will result in:

## Context

A large motivation for the `pages/_app.js` feature is to be able to provide

context persistently available across pages. It's unfortunate the current

implementation does not support this basic use case.

I'm attempting to isomorphically provide the cookie in context so that

`graphql-react` `<Query />` components can get the user's access token to make

GraphQL API requests. This approach used to work with separately decorated

pages.

## Your Environment

Tech | Version

---|---

next | v6.0.0-canary.5

node | v9.11.1

|

Relates to #2438 .

If we add the same `<link />` tag multiple times in different `<Head />` components, they are duplicated in the rendered DOM.

* I have searched the issues of this repository and believe that this is not a duplicate.

## Expected Behavior

Multiple `<link />` tags with exactly the same attributes should be de-duped when rendering HTML `<head>` elements

e.g.

component1.js

import Head from 'next/head'

<Head>

<link rel="stylesheet" href="/static/style.css" />

</Head>

component2.js

import Head from 'next/head'

<Head>

<link rel="stylesheet" href="/static/style.css" />

</Head>

Then the rendered HTML should be as follows, with the duplicated tags collapsed into one:

<html>

<head>

<link rel="stylesheet" href="/static/style.css" class="next-head" />

</head>

</html>

## Current Behavior

There will be duplicated tags rendered

<html>

<head>

<link rel="stylesheet" href="/static/style.css" class="next-head" />

<link rel="stylesheet" href="/static/style.css" class="next-head" />

</head>

</html>

## Context

I am currently working on a solution with `react-apollo` that allows data to be pre-fetched before a new page is rendered on the client side. The with-apollo-redux example only supports prefetching data on the server side, so on the client side I have to walk through the whole component tree again and fetch data if a node in the tree has been wrapped by the `graphql` HOC.

Since the component tree contains `<Head />` tags that produce side effects while the tree is traversed, duplicated `<link />` tags get inserted in the DOM. I was going to call `Head.rewind()`, but it is not allowed on the client side.

As far as I know, react-helmet will not generate duplicated `<link />` tags.

I know we should leave users the liberty of inserting duplicated `<link />` tags if that is desired, but I believe in most cases users don't want it. So I am wondering if it would be OK to add a `unique` flag on the tags so that head.js would de-dupe tags with `unique` specified.

import Head from 'next/head'

<Head>

<link rel="stylesheet" href="/static/style.css" unique />

</Head>

| 0 |

In Bootstrap 3.0.3, when I use the "table table-condensed table-bordered

table-striped" classes on a table, the table-striped defeats all the table

contextual classes (.success, .warning, .danger, .active) in its rows or

cells.

When only the table-striped class is removed, the contextual classes then work

perfectly within the rest of the table-level classes listed above.

I tried substituting the BS CSS "table-striped" rule so it would colorize the

even rows instead of the odd, but it still fails.

Is this a bug or by design?

|

I have a table with class .table-striped. It has 3 rows with tr.danger. Only the middle one is red; the other two are the default color.

When I remove .table-striped, it works correctly.

| 1 |

**TypeScript Version:**

1.9.0-dev / nightly (1.9.0-dev.20160311)

**Code**

class ModelA<T extends BaseData>
{
    public prop : T;

    public constructor($prop : T) { }

    public Foo<TData, TModel>(
        $fn1 : ($x : T) => TData,
        $fn2 : ($x : TData) => TModel) { }

    public Foo1<TData, TModel extends ModelA<any>>(
        $fn1 : ($x : T) => TData,
        $fn2 : ($x : TData) => TModel) { }

    public Foo2<TData extends BaseData, TModel extends ModelA<TData>>(
        $fn1 : ($x : T) => TData,
        $fn2 : ($x : TData) => TModel) { }
}

class ModelB extends ModelA<Data> { }

class BaseData
{
    public a : string;
}

class Data extends BaseData
{
    public b : Data;
}

class P
{
    public static Run()
    {
        var modelA = new ModelA<Data>(new Data());

        modelA.Foo(x1 => x1.b, x2 => new ModelB(x2));
        modelA.Foo1(x1 => x1.b, x2 => new ModelB(x2));

        // Why is this not working??? inferred type for x2 : BaseData
        modelA.Foo2(x1 => x1.b, x2 => new ModelB(x2)); // Error
    }
}

**Expected behavior:**

modelA.Foo2 call should infer the type.

**Actual behavior:**

Is not inferring the actual type.

|

**TypeScript Version:**

1.8.0

**Code**

interface Class<T> {

new(): T;

}

declare function create1<T>(ctor: Class<T>): T;

declare function create2<T, C extends Class<T>>(ctor: C): T;

class A {}

let a1 = create1(A); // a: A --> OK

let a2 = create2(A); // a: {} --> Should be A

**Context**

The example above is simplified to illustrate the difference between `create1`

and `create2`. I need both type parameters for the use case I have in mind

(React) because it returns a type which is parameterized by both `T` and `C`:

declare function createElement<T, C extends Class<T>>(type: C): Element<T, C>;

var e = createElement(A); // e: Element<{}, typeof A> --> Should be Element<A, typeof A>

declare function render<T>(e: Element<T, any>): T;

var a = render(e); // a: {} --> Should be A

Again, this is simplified, but the motivation is to improve the return type

inference of `ReactDOM.render`.

| 1 |

## Steps to Reproduce

I ran the Hello World guide, and the app crashes when I run it in debug mode. I can then start the app normally and everything works fine, but I could not find a way to attach a debugger.

I am using a tablet: Lenovo TB3 710I

## Logs

Exception from flutter run: FormatException: Bad UTF-8 encoding 0xb4

dart:convert/utf.dart 558 _Utf8Decoder.convert

dart:convert/string_conversion.dart 333 _Utf8ConversionSink.addSlice

dart:convert/string_conversion.dart 329 _Utf8ConversionSink.add

dart:convert/chunked_conversion.dart 92 _ConverterStreamEventSink.add

dart:async/stream_transformers.dart 119 _SinkTransformerStreamSubscription._handleData

package:stack_trace/src/stack_zone_specification.dart 107 StackZoneSpecification._registerUnaryCallback.<fn>.<fn>

package:stack_trace/src/stack_zone_specification.dart 185 StackZoneSpecification._run

package:stack_trace/src/stack_zone_specification.dart 107 StackZoneSpecification._registerUnaryCallback.<fn>

package:stack_trace/src/stack_zone_specification.dart 107 StackZoneSpecification._registerUnaryCallback.<fn>.<fn>

package:stack_trace/src/stack_zone_specification.dart 185 StackZoneSpecification._run

package:stack_trace/src/stack_zone_specification.dart 107 StackZoneSpecification._registerUnaryCallback.<fn>

dart:async/zone.dart 1158 _rootRunUnary

dart:async/zone.dart 1037 _CustomZone.runUnary

dart:async/zone.dart 932 _CustomZone.runUnaryGuarded

dart:async/stream_impl.dart 331 _BufferingStreamSubscription._sendData

dart:async/stream_impl.dart 258 _BufferingStreamSubscription._add

dart:async/stream_controller.dart 768 _StreamController&&_SyncStreamControllerDispatch._sendData

dart:async/stream_controller.dart 635 _StreamController._add

dart:async/stream_controller.dart 581 _StreamController.add

dart:io-patch/socket_patch.dart 1680 _Socket._onData

package:stack_trace/src/stack_zone_specification.dart 107 StackZoneSpecification._registerUnaryCallback.<fn>.<fn>

package:stack_trace/src/stack_zone_specification.dart 185 StackZoneSpecification._run

package:stack_trace/src/stack_zone_specification.dart 107 StackZoneSpecification._registerUnaryCallback.<fn>

package:stack_trace/src/stack_zone_specification.dart 107 StackZoneSpecification._registerUnaryCallback.<fn>.<fn>

package:stack_trace/src/stack_zone_specification.dart 185 StackZoneSpecification._run

package:stack_trace/src/stack_zone_specification.dart 107 StackZoneSpecification._registerUnaryCallback.<fn>

dart:async/zone.dart 1162 _rootRunUnary

dart:async/zone.dart 1037 _CustomZone.runUnary

dart:async/zone.dart 932 _CustomZone.runUnaryGuarded

dart:async/stream_impl.dart 331 _BufferingStreamSubscription._sendData

dart:async/stream_impl.dart 258 _BufferingStreamSubscription._add

dart:async/stream_controller.dart 768 _StreamController&&_SyncStreamControllerDispatch._sendData

dart:async/stream_controller.dart 635 _StreamController._add

dart:async/stream_controller.dart 581 _StreamController.add

dart:io-patch/socket_patch.dart 1247 _RawSocket._RawSocket.<fn>

dart:io-patch/socket_patch.dart 781 _NativeSocket.issueReadEvent.issue

dart:async/schedule_microtask.dart 41 _microtaskLoop

dart:async/schedule_microtask.dart 50 _startMicrotaskLoop

dart:isolate-patch/isolate_patch.dart 96 _runPendingImmediateCallback

dart:isolate-patch/isolate_patch.dart 149 _RawReceivePortImpl._handleMessage

## Flutter Doctor

I can't run any flutter command from the IntelliJ terminal.

output:

flutter: command not found

Flutter --version output:

Flutter • channel master • https://github.com/flutter/flutter.git

Framework • revision `3150e3f` (2 hours ago) • 2017-01-13 12:46:13

Engine • revision `b3ed791`

Tools • Dart 1.21.

|

## Steps to Reproduce

1. cd `/examples/hello_world/`

2. connect a physical Android device

3. `flutter run`

Output:

mit-macbookpro2:hello_world mit$ flutter run -d 00ca05b380789730

Launching lib/main.dart on Nexus 5X in debug mode...

Exception from flutter run: FormatException: Bad UTF-8 encoding 0xff

dart:isolate _RawReceivePortImpl._handleMessage

Building APK in debug mode (android-arm)... 5081ms

Installing build/app.apk... 2435ms

Syncing files to device... 3968ms

Running on an Android or iOS simulator does not throw the exception!

## Flutter Doctor

Paste the output of running `flutter doctor` here.

$ flutter doctor

[✓] Flutter (on Mac OS, channel master)

• Flutter at /Users/mit/dev/github/flutter

• Framework revision 3a43fc88b6 (5 hours ago), 2017-01-31 23:32:10

• Engine revision 2d54edf0f9

• Tools Dart version 1.22.0-dev.9.1

[✓] Android toolchain - develop for Android devices (Android SDK 25.0.0)

• Android SDK at /Users/mit/Library/Android/sdk

• Platform android-25, build-tools 25.0.0

• ANDROID_HOME = /Users/mit/Library/Android/sdk

• Java(TM) SE Runtime Environment (build 1.8.0_112-b16)

[✓] iOS toolchain - develop for iOS devices (Xcode 8.2.1)

• XCode at /Applications/Xcode.app/Contents/Developer

• Xcode 8.2.1, Build version 8C1002

[✓] IntelliJ IDEA Ultimate Edition (version 2016.3.4)

• Dart plugin version 163.12753

• Flutter plugin version 0.1.8.1

[✓] Connected devices

• Nexus 5X • 00ca05b380789730 • android-arm • Android 7.0 (API 24)

• Android SDK built for x86 • emulator-5554 • android-x86 • Android 6.0 (API 23) (emulator)

$

| 1 |

deno: 0.21.0

v8: 7.9.304

typescript: 3.6.3

Run this code in both node.js and deno:

let o = {};

o.a = 1;

o.b = 2;

o.c = 3;

o.d = 4;

o.e = 5;

o.f = 6;

console.log(o);

Node.js output: { a: 1, b: 2, c: 3, d: 4, e: 5, f: 6 }

deno output: { a, b, c, d, e, f }

So, if there are six or more properties, deno's console.log doesn't show the

property values.

|

Match colors and semantics.

node's stringify has been tweaked and optimized over many years, changing

anything is likely surprising in a bad way.

| 1 |

Julia has kick ass features for computation.

However what is the point of computing so much if the data cannot be

visualized/inspected ?

Current 2d plotting capabilities are nice, but no real matlab/scilab/scipy

competitor would be credible without some kind of 3d plotting.

One way of going would be to "port" mayavi to Julia.

http://github.enthought.com/mayavi/mayavi/auto/examples.html

Mayavi builds on top of VTK, so the 3d system itself would not be reinvented

from scratch.

I think this would be a relevant feature to have for release 2.0...

ps: 2d or 3d plotting systems in Julia should have SVG/PDF output, so it can

be used in scientific publishing...

|

I would like to discuss moving the Julia buildbots to something more

maintainable than `buildbot`. It's worked well for us for many years, but the

siloing of configuration into a separate repository, combined with the

relatively slow pace of development as compared to many other competitors (and

also the amount of cruft we've built up to get it as far as it is today) means

it's time to move to something new.

Anything we use must have the following features:

* Multi-platform; it _must_ support runners on all platforms Julia itself is built on. This encompasses:

* Linux: `x86_64` (`glibc` and `musl`), `i686`, `armv7l`, `aarch64`, `ppc64le`

* Windows: `x86_64`, `i686`

* MacOS: `x86_64`, `aarch64`

* FreeBSD: `x86_64`

* I would like the build configuration to live in an easily-modifiable format, such as a `.yml` file in the Julia repository. It's nice if we can test out different configurations just by making a PR against a repository somewhere.

Possible options include:

* GitLab CI (Note: currently missing linux ppc64le support)

* buildkite

* Azure Pipelines (Note: incomplete runner support)

* GitHub Actions (Note: incomplete runner support)

I unfortunately likely won't have time to do this all by myself, but if we

could get 1-2 community members interested in learning more about CI/CD and

who want to help have a hand in bringing the Julia CI story into a better age,

I'll be happy to work alongside them.

| 0 |

Hi,

If I use a custom distDir:

* the following error appears in the console: `http://myUrl/_next/-/page/_error` / 404 not found

* hot reloading does not work properly anymore

If I go back to the default, it goes away.

my `next.config.js` file:

// see source file server/config.js

module.exports = {

webpack: null,

poweredByHeader: false,

distDir: '.build',

assetPrefix: ''

}

Thanks,

Paul

|

I cannot get the custom distDir to work. Whenever I add a next.config.js file

and set the distDir as described in the documentation

module.exports = {

distDir: 'build'

};

the build is created in that directory, but there is always an exception being

thrown in the browser when loading the page.

Error when loading route: /_error

Error: Error when loading route: /_error

at HTMLScriptElement.script.onerror

What am I missing? This is reproducible with the simple hello world example.

| 1 |

Worked around in CI by pinning to 1.8.5, see gh-9987

For the issue, see e.g. https://circleci.com/gh/scipy/scipy/12334. It ends

with:

!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!

!

./scipy-ref.tex:25: fontspec error: "font-not-found"

!

! The font "FreeSerif" cannot be found.

!

! See the fontspec documentation for further information.

!

! For immediate help type H <return>.

!...............................................

l.25 ]

No pages of output.

Transcript written on scipy-ref.log.

Latexmk: Log file says no output from latex

Latexmk: For rule 'pdflatex', no output was made

Latexmk: Errors, so I did not complete making targets

Collected error summary (may duplicate other messages):

pdflatex: Command for 'pdflatex' gave return code 256

Latexmk: Use the -f option to force complete processing,

unless error was exceeding maximum runs of latex/pdflatex.

Makefile:32: recipe for target 'scipy-ref.pdf' failed

make: *** [scipy-ref.pdf] Error 12

make: Leaving directory '/home/circleci/repo/doc/build/latex'

Exited with code 2

|

_Original ticket http://projects.scipy.org/scipy/ticket/1889 on 2013-04-10 by

trac user avneesh, assigned to unknown._

Hello all,

I notice a somewhat bizarre issue when constructing sparse matrices by

initializing with 3-tuples (row index, column index, value).

The following is a slight abstraction to what my exact code is, but it shows

the behavior:

kNN = 10
dataset_size = 1661165

rowIdx = np.empty((kNN+1)*dataset_size)
colIdx = np.empty((kNN+1)*dataset_size)
vals = np.empty((kNN+1)*dataset_size)

for i, line in enumerate(data):
    # perform certain operations

print vals.size, colIdx.size, rowIdx.size
print vals[np.nonzero(vals)].size

W = sp.csc_matrix((vals, (rowIdx, colIdx)), shape=(dataset_size, dataset_size))
print W.nnz

The printed outputs I get are the following:

18272815 18272815 18272815

18272815

18272465

Therefore, as you can see, there is a difference of 18272815-18272465 = 350

elements that should be non-zero in the resulting sparse matrix, but are not.

I have verified in the rowIdx and colIdx arrays that there are no duplicates,

i.e., a given (rowIdx, colIdx) pair does not appear twice (otherwise two

values would map to the same position in the sparse matrix). As per my

understanding, I should get 18272815 elements in the resulting sparse matrix,

but I fall 350 elements short.
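One way to double-check the "no duplicates" claim is sketched below in plain Python. It assumes that entries sharing the same (row, column) position are merged into a single stored value when the matrix is built, as SciPy's coordinate-style constructors are documented to do. The helper names and the toy index lists are hypothetical, not taken from the original code.

```python
from collections import Counter


def duplicate_positions(rows, cols):
    """Return {(row, col): count} for every position that occurs more than once."""
    counts = Counter(zip(rows, cols))
    return {pos: n for pos, n in counts.items() if n > 1}


def expected_stored_entries(rows, cols):
    """Number of distinct (row, col) positions, i.e. the most entries that could be stored."""
    return len(set(zip(rows, cols)))


# Toy data: position (1, 2) appears twice, so 4 triples give only 3 positions.
rows = [0, 1, 1, 2]
cols = [0, 2, 2, 1]
print(duplicate_positions(rows, cols))
print(expected_stored_entries(rows, cols))
```

If `expected_stored_entries` on the real index arrays comes out 350 lower than the number of triples, duplicates would explain the gap; if not, the cause lies elsewhere.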

Is this expected behavior? Am I doing something wrong?

I am running Linux x86-64-bit OpenSuSE 11.4, NumPy version 1.5.1, SciPy

version 0.9.0, Python 2.7.

| 0 |

In version 3.1.0, is the HintManagerHolder.clear method executed every time

SQL is executed?

When using HintShardingAlgorithm, you need to set HintManagerHolder before

executing SQL. I execute multiple SQL statements in a DAO, or call multiple DAO

methods in a service. I want to set HintManagerHolder through an AOP interceptor

before the method is called and call clear after it returns, but there is

currently no way to achieve this.

|

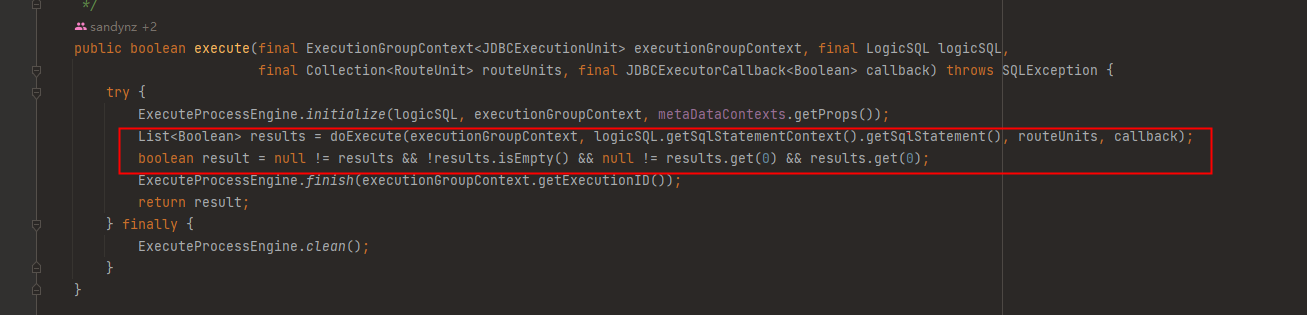

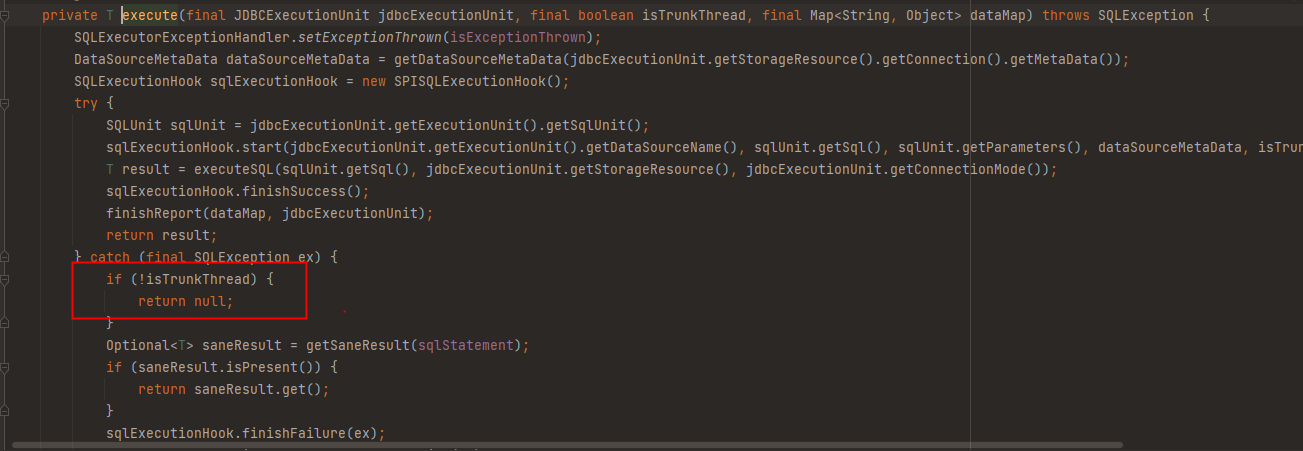

## Question

Why does DriverJDBCExecutor#executor not handle the error result of branch

thread execution?

DriverJDBCExecutor#executor:

JDBCExecutorCallback#execute:

In this way, the result of the overall sql execution is inconsistent with the

expected result.

eg:

insert into xx_table (id,xxx,xxx) values (1,xx,xxx),(2,xxx,xxx);

This statement will route to two datasources, ds0, ds1.

If there is a row with primary key 1 in ds1, it will throw exception:

Duplicate entry '1' for key 'PRIMARY';

The final result is: the sql routed to ds0 is successfully executed, and the

sql execution of ds1 fails.

Why is this not treated as a failure of the whole statement? As it stands, it

leads to data inconsistency, and distributed transactions are not rolled back.

| 0 |

Hi

I have a select box that has to contain the following options. Note that the

options "audi" and "ford" share the same label.

<select>

<option value="volvo">Volvo</option>

<option value="saab">Saab</option>

<option value="opel">Opel</option>

<option value="audi">Audi</option>

<option value="ford">Audi</option>

</select>

When I try to render this in Symfony 2.7, my FormType looks like this.

$builder->add('brand', 'choice', array(

'choices' => array(

'volvo' => 'Volvo',

'saab' => 'Saab',

'opel' => 'Opel',

'audi' => 'Audi',

'ford' => 'Audi'

)

));

I would assume all 5 options are going to get rendered. In fact, only 4 get

rendered and the view looks like this:

<select id="...">

<option value="volvo">Volvo</option>

<option value="saab">Saab</option>

<option value="opel">Opel</option>

<option value="ford">Audi</option>

</select>

It seems the option with value "audi" is overridden by the option with value

"ford". I don't know if this is standard behaviour or a bug, but it's quite

annoying. Can any of you help me?
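One possible explanation, shown here as a small Python sketch rather than actual Symfony code, is that the choices get indexed by label somewhere along the way. That is only an assumption; nothing in this report confirms how the form component handles the array internally. Under that assumption, two values with the same label collapse into one entry and the later value wins:

```python
# The choices from the form type above, keyed by value.
choices = {
    "volvo": "Volvo",
    "saab": "Saab",
    "opel": "Opel",
    "audi": "Audi",
    "ford": "Audi",
}

# Hypothetical internal step: index the choices by label instead of by value.
# "Audi" is assigned twice, so the second assignment ("ford") replaces "audi".
by_label = {label: value for value, label in choices.items()}

# Rebuild the option list from the label-keyed mapping.
rendered = [(value, label) for label, value in by_label.items()]
print(rendered)
```

This yields four options, with "ford" carrying the "Audi" label, which matches the rendered markup shown above.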

Thanks in advance!

| Q | A

---|---

Bug report? | no

Feature request? | yes

BC Break report? | ?

RFC? | ?

Symfony version | 3.2.7

In the documentation for data transformers, the input is validated in the

IssueToNumberTransformer class, and the error message is set in the TaskType

class. What if there are multiple types of invalid input, can there be a way

to have multiple error messages?

I know this can be done with Symfony\Component\Validator\Constraints in the

Entity class, but since validation must also take place in the data

transformer, I think there should be a way to have more control over the error

messages.

| 0 |

_Please make sure that this is a build/installation issue. As per our GitHub

Policy, we only address code/doc bugs, performance issues, feature requests

and build/installation issues on GitHub. tag:build_template_

System information

OS : Windows 10

TensorFlow installed from (source or binary): from pip

TensorFlow version: 1.11.0

Python version: 3.6

Installed using virtualenv? pip? conda?: conda

Bazel version (if compiling from source): No

GCC/Compiler version (if compiling from source):

CUDA/cuDNN version: 10

GPU model and memory: Nvidia 1050Ti

**Describe the problem**

Recently I tried updating my TensorFlow and later on, my tensorflow-gpu

stopped working.

Now I have downgraded to 1.11.0 but then still my tensorflow-gpu is not

working.

nvidia-smi is working fine.

+-----------------------------------------------------------------------------+

| NVIDIA-SMI 416.34 Driver Version: 416.34 CUDA Version: 10.0 |

|-------------------------------+----------------------+----------------------+

| GPU Name TCC/WDDM | Bus-Id Disp.A | Volatile Uncorr. ECC |

| Fan Temp Perf Pwr:Usage/Cap| Memory-Usage | GPU-Util Compute M. |

|===============================+======================+======================|

| 0 GeForce GTX 105... WDDM | 00000000:01:00.0 Off | N/A |

| N/A 48C P8 N/A / N/A | 78MiB / 4096MiB | 0% Default |

+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+

| Processes: GPU Memory |

| GPU PID Type Process name Usage |

|==================================================|

| No running processes found |

+-----------------------------------------------------------------------------+

tf.test.is_gpu_available(

cuda_only=False,

min_cuda_compute_capability=None

)

I ran this, and it returns False.

Then

I ran this: sess = tf.Session(config=tf.ConfigProto(log_device_placement=True))

Device mapping: no known devices.

2018-11-26 17:27:10.277030: I

tensorflow/core/common_runtime/direct_session.cc:291] Device mapping:

The device mapping comes back empty. I read a lot of threads where people had

errors with CUDA, and installing it fixed the problem for many of them.

But in my case things were working fine earlier; now it got messed up, even though

CUDA is installed properly.

What should I do?

|

Hi:

My program has a bug in these lines of code:

word_embeddings = tf.scatter_nd_update(var_output, error_word_f, sum_all)

word_embeddings_2 = tf.nn.dropout(word_embeddings, self.dropout_pl)

# The error message is as follows:

ValueError: Tensor conversion requested dtype float32_ref for Tensor with

dtype float32: 'Tensor("dropout:0", shape=(), dtype=float32)

It looks like the dtype of word_embeddings is float32_ref, but tf.nn.dropout

needs word_embeddings to have dtype float32. How can I convert

word_embeddings from float32_ref to float32 before running

tf.nn.dropout(word_embeddings, self.dropout_pl)?

| 0 |

https://babeljs.io/repl/#?experimental=true&evaluate=true&loose=false&spec=false&playground=false&code=%40test(()%20%3D%3E%20123)%0Aclass%20A%20%7B%7D%0A%0A%40test(()%20%3D%3E%20%7B%20return%20123%20%7D)%0Aclass%20B%20%7B%7D%0A%0A%40test(function()%20%7B%20return%20123%20%7D)%0Aclass%20%D0%A1%20%7B%7D

|

Compare the output of these two to show the difference.

https://babeljs.io/repl/#?experimental=true&evaluate=true&loose=false&spec=false&code=%40decorate((arg)%20%3D%3E%20null)%0Aclass%20Example1%20%7B%0A%7D%0A%0A%40decorate(arg%20%3D%3E%20null)%0Aclass%20Example2%20%7B%0A%7D

@decorate((arg) => null)

class Example1 {

}

@decorate(arg => null)

class Example2 {

}

| 1 |

Challenge using-the-justifycontent-property-in-the-tweet-embed has an issue.

User Agent is: `Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML,

like Gecko) Ubuntu Chromium/55.0.2883.87 Chrome/55.0.2883.87 Safari/537.36`.

Please describe how to reproduce this issue, and include links to screenshots

if possible.

It makes me pass the challenge even if I have not inserted the css statement

justify-content:center;

<style>

body {

font-family: Arial, sans-serif;

}

header, footer {

display: flex;

flex-direction: row;

}

header .profile-thumbnail {

width: 50px;

height: 50px;

border-radius: 4px;

}

header .profile-name {

display: flex;

flex-direction: column;

margin-left: 10px;

}

header .follow-btn {

display: flex;

margin: 0 0 0 auto;

}

header .follow-btn button {

border: 0;

border-radius: 3px;

padding: 5px;

}

header h3, header h4 {

display: flex;

margin: 0;

}

#inner p {

margin-bottom: 10px;

font-size: 20px;

}

#inner hr {

margin: 20px 0;

border-style: solid;

opacity: 0.1;

}

footer .stats {

display: flex;

font-size: 15px;

}

footer .stats strong {

font-size: 18px;

}

footer .stats .likes {

margin-left: 10px;

}

footer .cta {

margin-left: auto;

}

footer .cta button {

border: 0;

background: transparent;

}

</style>

<header>

<img src="https://pbs.twimg.com/profile_images/378800000147359764/54dc9a5c34e912f34db8662d53d16a39_400x400.png" alt="Quincy Larson's profile picture" class="profile-thumbnail">

<div class="profile-name">

<h3>Quincy Larson</h3>

<h4>@ossia</h4>

</div>

<div class="follow-btn">

<button>Follow</button>

</div>

</header>

<div id="inner">

<p>How would you describe to a layperson the relationship between Node, Express, and npm in a single tweet? An analogy would be helpful.</p>

<span class="date">7:24 PM - 17 Aug 2016</span>

<hr>

</div>

<footer>

<div class="stats">

<div class="retweets">

<strong>56,203</strong> RETWEETS

</div>

<div class="likes">

<strong>84,703</strong> LIKES

</div>

</div>

<div class="cta">

<button class="share-btn">Share</button>

<button class="retweet-btn">Retweet</button>

<button class="like-btn">Like</button>

</div>

</footer>


|

Challenge [Steamroller](https://www.freecodecamp.com/challenges/steamroller)

has an issue.

**User Agent is** :

`Mozilla/5.0 (Macintosh; Intel Mac OS X 10_12_0) AppleWebKit/537.36 (KHTML,

like Gecko) Chrome/52.0.2743.116 Safari/537.36`.

**Issue Description** :

The value of the global variable is not being flushed.

My code:

var tempArr = [];

function steamrollArray(arr) {

// I'm a steamroller, baby

tempArr = []; // To fix the global var issue , we need to reset that global var

arr = arr.map(function(val,index){

return checkForArray(val);

});

console.log('tempArr',tempArr);

return tempArr;

}

function checkForArray(val_arr){

if(!Array.isArray(val_arr)) {tempArr.push(val_arr);return val_arr;}

val_arr.map(function(val,index){

if(Array.isArray(val)){

return checkForArray(val);

}

else {

tempArr.push(val);

return val;

}

});

}

steamrollArray([1, {}, [3, [[4]]]]);
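For comparison, here is a minimal sketch of the same flattening with no shared state, so nothing has to be reset between calls. It is written in Python purely for illustration; the function name `steamroll` is hypothetical and this is not the challenge's reference solution.

```python
def steamroll(value):
    """Flatten arbitrarily nested lists into one flat list, without global state."""
    flat = []  # local accumulator, created fresh on every call
    for item in value:
        if isinstance(item, list):
            # Recurse into nested lists and append their flattened contents.
            flat.extend(steamroll(item))
        else:
            flat.append(item)
    return flat


# Calling it twice gives the same answer, since no state is carried over.
print(steamroll([1, {}, [3, [[4]]]]))
print(steamroll([1, {}, [3, [[4]]]]))
```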

| 0 |

Hi,

I just installed deno and tried to run the welcome.ts file mentioned in the docs,

and I am facing this issue. Details are below.

D:\deno>deno run https://deno.land/welcome.ts

Downloading https://deno.land/welcome.ts

WARN RS - Sending fatal alert BadCertificate

an error occurred trying to connect: invalid certificate: UnknownIssuer

an error occurred trying to connect: invalid certificate: UnknownIssuer

D:\deno>deno version

deno: 0.4.0

v8: 7.6.53

typescript: 3.4.1

Has anybody else faced this issue?

|

There should be a way to allow insecure https requests, using `window.fetch()`

for example.

It should disable certificate validation for all requests made from the

program.

As for implementation, there are two options that come to my mind: an environment

variable and a flag. Below are some examples of how it's done in other

web-related software, so we can come up with an intuitive name and approach.

Examples:

* In NodeJS, there is a `NODE_TLS_REJECT_UNAUTHORIZED` environment variable that can be set to `0` to disable TLS certificate validation. It takes name from tls.connect options.rejectUnauthorized

* In curl, there's a `-k` or `--insecure` flag, which allows insecure server connections when using SSL

* In Chrome, there's a `--unsafely-treat-insecure-origin-as-secure="https://example.com"` for the same purposes

* For WebDrivers, there's acceptInsecureCerts capability. It can allow self-signed or otherwise invalid certificates to be implicitly trusted by the browser.

That said, I'd like to see `--accept-insecure-certs` flag option, because deno

already uses flags for a lot of things, including permissions.

* * *

My use-case: I'm using a corporate Windows laptop with network monitoring.

AFAIK, all https requests are also monitored, so there's a custom SSL

certificate installed system-wide. So, most software that uses the system

CA storage works just fine. But some software uses a custom or bundled CA, and it

seems like that's the case with deno. Anyway, it downloads deps just fine, but fails to

perform any https request.

Issue created as a follow up to gitter conversation: December 18, 2018 1:24 PM

| 1 |

### Problem description

When props.animated is true, Popover calls setState from within a setTimeout.

// in componentWillReceiveProps

if (nextProps.animated) {

this.setState({ closing: true });

this.timeout = setTimeout(function () {

_this2.setState({

open: false

});

}, 500);

}

Because componentWillReceiveProps doesn't mean that props changed, Popover has

the potential to call setState from within the setTimeout multiple times, when

no props have changed. For me, this is consistently causing an error where

setState is called on an unmounted component.

### Steps to reproduce

Rapidly re-render Popover (animated=true) after having changed open to false.

### Versions

* Material-UI: 0.15.3

* React: 15.3.0

* Browser: Chrome Version 52.0.2743.116 (64-bit)

|

## Problem Description

On occasion clicking the SelectField will result in the label text

disappearing and the dropdown menu not displaying. Clicking the SelectField

again will cause the label text to reappear and clicking again brings up the

dropdown menu as expected. The dropdown seems to briefly load before

disappearing.

I'm encountering this issue on both my own app and the material-ui examples

for the SelectField component. I've attached a gif of the bug on the material-

ui examples.

## Versions

* Material-UI: 0.14.4

* React: 0.14.8

* Browser: Chrome 50.0.2661.94 (64-bit) and Safari 9.1

| 1 |

We need a shortcut for moving application windows to another virtual desktop. I

use MoveToDesktop by Eun: MoveToDesktop, ~~but it cannot be configured~~.

This tool provides a key combination for moving an application to the next virtual

desktop using the "win+alt+arrow" keystroke.

|

For example, pressing 'Alt' twice to open PowerToys Run. Alfred can do this; it is

powerful, and it will help people who want to migrate from macOS, just like me.

Thanks.

| 0 |

#### Twitch TV challenge

https://www.freecodecamp.com/challenges/use-the-twitchtv-json-api

#### Issue Description

Your example site https://codepen.io/FreeCodeCamp/full/Myvqmo/ is not working.

The link to each Twitch channel returns a 404. Also, channel information is not

displayed properly; it just says "Account Closed" for all the channels.

#### Browser Information

Chrome on Mac desktop

|

#### Challenge Name

https://www.freecodecamp.com/challenges/use-the-twitchtv-json-api

#### Issue Description

JSONP calls to the channels API result in a bad request. This is due to an

update to the Twitchtv API which now requires a client id, which means

creating an account and registering your application with the service. Details

can be read from the official developer blog:

https://blog.twitch.tv/client-id-required-for-kraken-api-calls-afbb8e95f843#.pm46cq40d

I confirmed this by console logging the response object from the channels API

on my application and comparing it with the example provided by Free Code Camp

(see screenshots).

#### Browser Information

N/A

#### Your Code

#### Screenshot

| 1 |

## Bug Report

* I would like to work on a fix!

**Current behavior**

A clear and concise description of the behavior.

`generate()` produces incorrect code for arrow function expression.

const generate = require('@babel/generator').default;

const t = require('@babel/types');

const node = t.arrowFunctionExpression( [], t.objectExpression( [] ) );

console.log( generate( node ).code );

Output:

() => {}

Output should be:

() => ({})

**Babel Configuration (babel.config.js, .babelrc, package.json#babel, cli

command, .eslintrc)**

No config used. The above is the complete reproduction case.

**Environment**

System:

OS: macOS Mojave 10.14.6

Binaries:

Node: 14.9.0 - ~/.nvm/versions/node/v14.9.0/bin/node

npm: 6.14.8 - ~/.nvm/versions/node/v14.9.0/bin/npm

npmPackages:

@babel/core: ^7.11.6 => 7.11.6

@babel/generator: ^7.11.6 => 7.11.6

@babel/helper-module-transforms: ^7.11.0 => 7.11.0

@babel/parser: ^7.11.5 => 7.11.5

@babel/plugin-transform-modules-commonjs: ^7.10.4 => 7.10.4

@babel/plugin-transform-react-jsx: ^7.10.4 => 7.10.4

@babel/register: ^7.11.5 => 7.11.5

@babel/traverse: ^7.11.5 => 7.11.5

@babel/types: ^7.11.5 => 7.11.5

babel-jest: ^26.3.0 => 26.3.0

babel-plugin-dynamic-import-node: ^2.3.3 => 2.3.3

eslint: ^7.8.1 => 7.8.1

jest: ^26.4.2 => 26.4.2

|

> Issue originally made by @also

### Bug information

* **Babel version:** 6.2.0

* **Node version:** 4.1.2

* **npm version:** 3.4.1

### Options

none

### Input code

Dependencies in package.json:

{

"dependencies": {

"babel-runtime": "6.2.0"

},

"devDependencies": {

"babel-cli": "6.2.0",

"babel-plugin-transform-runtime": "6.1.18",

"babel-preset-es2015": "6.1.18"

}

}

### Description

I have a relatively simple `package.json` (here).

Using npm 3.4 and Node.js 4.1, `babel-doctor` complains about 43 duplicate

`babel-runtime` packages, even after running `npm dedupe`.

Output from Travis CI

$ babel-doctor

Babel Doctor

Running sanity checks on your system. This may take a few minutes...

✔ Found config at /home/travis/build/also/babel-6-runtime-test/.babelrc

✖ Found these duplicate packages:

- babel-runtime x 43

Recommend running `npm dedupe`

✔ All babel packages appear to be up to date

✔ You're on npm >=3.3.0

Found potential issues on your machine :(

It seems that it is possible to work around the issue by switching to the

version of `babel-runtime` being duplicated, currently `5.8.34`.

| 0 |

Hello,

I've been creating notifications with

https://github.com/electron/electron/blob/master/docs/tutorial/desktop-environment-integration.md#notifications-windows-linux-macos

Can someone please confirm what I suspect:

* There is no way to bind some kind of click handler to a notification; all clicking ever does is close the notification

* There is no way to add actions/buttons to a notification.

If these are true then I think as they stand, notifications are not very

useful. If this functionality is not possible to implement, maybe it would be

better to provide a standardised BrowserWindow implementation?

As an aside - can anyone recommend a third party package for implementing

cross-platform rich notifications?

Thanks!

|

It would be really great if Electron supported the "notification actions"

feature added in Chrome 48:

https://www.chromestatus.com/features/5906566364528640

https://developers.google.com/web/updates/2016/01/notification-actions?hl=en

Essentially, you say:

new Notification("123", {title: "123", silent: true, actions: [{action: 'A', title: 'A'}, {action: 'B', title: 'B'}]})

To show the notification, and then listen for 'notificationclick' DOM event,

which gives you the name of the chosen action.

| 1 |

### Description

Ability to clear or mark task groups as success/failure and have that

propagate to the tasks within that task group. Sometimes there is a need to

adjust the status of tasks within a task group, which can get unwieldy

depending on the number of tasks in that task group. A great quality of life

upgrade, and something that seems like an intuitive feature, would be the

ability to clear or change the status of all tasks at their taskgroup level

through the UI.

### Use case/motivation

In the event a large number of tasks, or a whole task group in this case, need

to be cleared or their status set to success/failure this would be a great

improvement. For example, a manual DAG run triggered through the UI or the API

that has a number of task sensors or tasks that otherwise don't matter for

that DAG run - instead of setting each one as success by hand, doing so for

each task group would be great.

### Related issues

_No response_

### Are you willing to submit a PR?

* Yes I am willing to submit a PR!

### Code of Conduct

* I agree to follow this project's Code of Conduct

|

### Description

Hi,

It would be very interesting to be able to filter DagRuns by using the state

field. That would affect the following methods:

* /api/v1/dags/{dag_id}/dagRuns

* /api/v1/dags/~/dagRuns/list

Currently accepting the following query/body filter parameters:

* execution_date_gte

* execution_date_lte

* start_date_gte

* start_date_lte

* end_date_gte

* end_date_lte

Our proposal is to add the state parameter ("queued", "running", "success",

"failed") to be able to filter by the field "state" of the DagRun.
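To make the proposal concrete, here is a rough sketch of the filtering semantics we have in mind, applied client-side to a list of run records. It is only an illustration: `filter_dag_runs`, the `dag_run_id` values and the sample data are hypothetical, and this is not how the Airflow API is implemented.

```python
# The four states named in the proposal.
VALID_STATES = {"queued", "running", "success", "failed"}


def filter_dag_runs(dag_runs, states):
    """Keep only the runs whose "state" field is one of the requested states."""
    unknown = set(states) - VALID_STATES
    if unknown:
        # Reject typos early instead of silently returning nothing.
        raise ValueError(f"unknown state(s): {sorted(unknown)}")
    wanted = set(states)
    return [run for run in dag_runs if run["state"] in wanted]


# Hypothetical sample records, shaped loosely like DagRun entries.
runs = [
    {"dag_run_id": "run_1", "state": "success"},
    {"dag_run_id": "run_2", "state": "failed"},
    {"dag_run_id": "run_3", "state": "running"},
]
print(filter_dag_runs(runs, ["failed", "running"]))
```

Accepting a list of states rather than a single value would let one request cover, for example, everything that is still queued or running.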

Thanks.

### Use case/motivation

Being able to filter DagRuns by their state value.

### Related issues

_No response_

### Are you willing to submit a PR?

* Yes I am willing to submit a PR!

### Code of Conduct

* I agree to follow this project's Code of Conduct

| 0 |

Profiling vet on a large corpus shows more than 10% of the time spent in syscalls initiated by

gcimporter.(*parser).next. Many of these reads are avoidable; there is high import

overlap across packages, particularly within a given project.

Concretely, instrumenting calls to Import (in gcimporter.go) and then running 'go vet'

on camlistore yields these top duplicate imports:

153 fmt.a

147 testing.a

120 io.a

119 strings.a

113 os.a

108 bytes.a

99 time.a

97 errors.a

82 io/ioutil.a

80 log.a

76 sync.a

70 strconv.a

64 net/http.a

56 path/filepath.a

51 camlistore.org/pkg/blob.a

44 runtime.a

39 sort.a

39 flag.a

35 reflect.a

35 net/url.a

These 20 account for 1627 of the 2750 import reads.

Hacking in a quick LRU that simply caches the raw data in the files cuts 'go vet' user

time for camlistore by ~10%. I'm not sure that that is the right long-term approach,

though.
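For reference, the idea behind the quick LRU can be sketched as follows. This is a Python illustration only, not the Go change that was actually tried; the class name `RawFileLRU`, the `loader` callback and the capacity value are all hypothetical.

```python
from collections import OrderedDict


class RawFileLRU:
    """Cache raw file contents by path, evicting the least recently used entry."""

    def __init__(self, loader, capacity=64):
        self._loader = loader          # callable that reads the bytes for a path
        self._capacity = capacity      # maximum number of cached files
        self._entries = OrderedDict()  # path -> data, oldest first
        self.loads = 0                 # how many times the loader was invoked

    def get(self, path):
        if path in self._entries:
            # Cache hit: mark the entry as most recently used.
            self._entries.move_to_end(path)
            return self._entries[path]
        # Cache miss: read the data and remember it.
        data = self._loader(path)
        self.loads += 1
        self._entries[path] = data
        if len(self._entries) > self._capacity:
            # Drop the least recently used entry.
            self._entries.popitem(last=False)
        return data


# Stand-in loader so the sketch runs without touching the file system.
cache = RawFileLRU(lambda path: path.encode(), capacity=2)
for name in ["fmt.a", "fmt.a", "io.a", "fmt.a"]:
    cache.get(name)
print(cache.loads)
```

With import overlap as high as in the list above, most lookups would be hits, which is consistent with the saving observed, though the memory cost of holding raw file data is the obvious trade-off.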

|

Currently gc generates the following code:

var s1 string

400c19: 48 c7 44 24 08 00 00 movq $0x0,0x8(%rsp)

400c22: 48 c7 44 24 10 00 00 movq $0x0,0x10(%rsp)

s2 := ""

400c2b: 48 8d 1c 25 20 63 42 lea 0x426320,%rbx

400c33: 48 8b 2b mov (%rbx),%rbp

400c36: 48 89 6c 24 18 mov %rbp,0x18(%rsp)

400c3b: 48 8b 6b 08 mov 0x8(%rbx),%rbp

400c3f: 48 89 6c 24 20 mov %rbp,0x20(%rsp)

s3 = ""

400c44: 48 8d 1c 25 20 63 42 lea 0x426320,%rbx

400c4c: 48 8b 2b mov (%rbx),%rbp

400c4f: 48 89 2c 25 f0 34 46 mov %rbp,0x4634f0

400c57: 48 8b 6b 08 mov 0x8(%rbx),%rbp

400c5b: 48 89 2c 25 f8 34 46 mov %rbp,0x4634f8

Ideally it is:

var s1 string

400c19: 48 c7 44 24 08 00 00 movq $0x0,0x8(%rsp)

400c22: 48 c7 44 24 10 00 00 movq $0x0,0x10(%rsp)

s2 := ""

400c19: 48 c7 44 24 08 00 00 movq $0x0,0x8(%rsp)

400c22: 48 c7 44 24 10 00 00 movq $0x0,0x10(%rsp)

s3 = ""

400c19: 48 c7 44 24 08 00 00 movq $0x0,0x8(%rsp)

400c22: 48 c7 44 24 10 00 00 movq $0x0,0x10(%rsp)

For := "", compiler can just remove the initializer.

For = "", compiler can recognize "" and store zeros.

| 0 |

In the bonfire problem, it states:

> Return the number of total permutations of the provided string that don't

> have repeated consecutive letters.

> For example, 'aab' should return 2 because it has 6 total permutations, but

> only 2 of them don't have the same letter (in this case 'a') repeating.

I believe this is incorrect.

The possible permutations of 'aab' are 'aab' 'aba' and 'baa' which should be

calculated as 3! / 2! (= 3), not 3! (= 6) as what was done in the description.

The total number of permutations should be 3 not 6. Then you want to eliminate

cases wherein identical letters are adjacent, namely, 'aab' and 'baa'. This

leaves 'aba' which means the answer ought to be 1, not 2.

Let me give another example. For the string 'aabb', the possible permutations

ought not be calculated as 4! (=24) then eliminate the ones with adjacent

identical characters. This leads to an incorrect answer of 8.

The possible permutations of 'aabb' are actually just 6, namely 'aabb', 'abab',

'baba', 'bbaa', 'abba', 'baab'. This can also be calculated 4! / (2! * 2!)

(=6). Then when the strings wherein there are adjacent identical characters

are eliminated, this leaves 'abab' and 'baba' which means the answer is 2 (not

8 as the test case indicates).
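The two ways of counting can be compared with a short brute-force check. This is a sketch in Python rather than the challenge's JavaScript, and the function names are made up for illustration. The first function treats every character position as distinct, which is what the challenge description appears to do; the second collapses identical strings, which is the counting I am arguing for.

```python
from itertools import permutations


def has_adjacent_repeat(seq):
    """Return True if any two neighbouring items in seq are equal."""
    return any(a == b for a, b in zip(seq, seq[1:]))


def count_by_position(s):
    """Count orderings, treating each character position as distinct."""
    return sum(1 for p in permutations(s) if not has_adjacent_repeat(p))


def count_distinct_strings(s):
    """Count orderings after collapsing identical resulting strings."""
    return sum(1 for p in set(permutations(s)) if not has_adjacent_repeat(p))


print(count_by_position("aab"), count_distinct_strings("aab"))    # 2 1
print(count_by_position("aabb"), count_distinct_strings("aabb"))  # 8 2
```

So both sets of numbers are reproducible; the disagreement is only about whether swapping two identical letters counts as a different permutation.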

Please advise. Apologies if this is deemed spam.

|

I selected the buttons as checked but the waypoint is not noticing that the

buttons are checked.

| 0 |

TypeScript typings for ChipProps include tabIndex of type number | string,

which is incompatible with the overriding tabIndex property extended from

HTMLElement via HTMLDivElement.

* I have searched the issues of this repository and believe that this is not a duplicate.

## Expected Behavior

No error. Property tabIndex?: number | string on interface ChipProps should

either be tabIndex?: number or removed completely since it's inherited by

virtue of extension.

## Current Behavior

Receive the error message

`ERROR in <path-to-project-root>/node_modules/material-ui/Chip/Chip.d.ts

(4,18): error TS2430: Interface 'ChipProps' incorrectly extends interface

'HTMLAttributes<HTMLDivElement>'. Types of property 'tabIndex' are

incompatible. Type 'ReactText' is not assignable to type 'number'. Type

'string' is not assignable to type 'number'.`

## Steps to Reproduce (for bugs)

Add Chip to any typescript project and compile with tsc

## Your Environment

Tech | Version

---|---

Material-UI | 1.0.0-beta.9

React | 15.6.1

|

When using the Card component with SSR, React 16 gives the following

message:

> Did not expect server HTML to contain a <div> in <div>.

* I have searched the issues of this repository and believe that this is not a duplicate.

## Expected Behavior

A Card component should render on the server side, so the client side doesn't

have to do anything with it and should return no warning.

## Current Behavior

When I use the Card component with React 16 like this:

<Card className={classes.card}>

<CardContent>

<Typography type="headline" component="h2" >

Login

</Typography>

<TextField

id="username"

label="Username"

autoComplete="username"

className={classes.input}

/>

<TextField

id="password"

label="Password"

type="password"

autoComplete="current-password"

margin="normal"

className={classes.input}

/>

</CardContent>

<CardActions className={classes.cardActions}>

<Button onClick={() => props.handleLogin()}>Login</Button>

</CardActions>

</Card>

it gives the following error on hydration:

Warning: Did not expect server HTML to contain a <div> in <div>.

printWarning @ bundle.js:sourcemap:423

warning @ bundle.js:sourcemap:447

warnForDeletedHydratableElement$1 @ bundle.js:sourcemap:16895

didNotHydrateInstance @ bundle.js:sourcemap:17573

deleteHydratableInstance @ bundle.js:sourcemap:11892

popHydrationState @ bundle.js:sourcemap:12099

completeWork @ bundle.js:sourcemap:11067

completeUnitOfWork @ bundle.js:sourcemap:12568

performUnitOfWork @ bundle.js:sourcemap:12670

workLoop @ bundle.js:sourcemap:12724

callCallback @ bundle.js:sourcemap:2978

invokeGuardedCallbackDev @ bundle.js:sourcemap:3017

invokeGuardedCallback @ bundle.js:sourcemap:2874

renderRoot @ bundle.js:sourcemap:12802

performWorkOnRoot @ bundle.js:sourcemap:13450

performWork @ bundle.js:sourcemap:13403

requestWork @ bundle.js:sourcemap:13314

scheduleWorkImpl @ bundle.js:sourcemap:13168

scheduleWork @ bundle.js:sourcemap:13125

scheduleTopLevelUpdate @ bundle.js:sourcemap:13629

updateContainer @ bundle.js:sourcemap:13667

(anonymous) @ bundle.js:sourcemap:17658

unbatchedUpdates @ bundle.js:sourcemap:13538

renderSubtreeIntoContainer @ bundle.js:sourcemap:17657

hydrate @ bundle.js:sourcemap:17719

(anonymous) @ bundle.js:sourcemap:66465

The description of the warning does not say much, so I couldn't investigate

further. However, when I change the above code to a simple `<div>` tag, the

warning disappears, so I suspect that there is something wrong with the

server-side rendering of the Card component.

If there's a way to get more information about the warning I could look into

it, I just have no idea how :).

## Context

## Your Environment

Tech | Version

---|---

Material-UI | 1.0.0-beta.25

React | 16.2.0

browser | Google Chrome 63.0.3239.108 (Official Build) (64-bit)

| 0 |

### Preflight Checklist

* I have read the Contributing Guidelines for this project.

* I agree to follow the Code of Conduct that this project adheres to.

* I have searched the issue tracker for an issue that matches the one I want to file, without success.

### Issue Details

* **Electron Version:**

* 9.1.0

* **Operating System:**

* Windows 10 (19041, 18363, 18362, 16299)

* **Last Known Working Electron version:**

* N/A

### Expected Behavior

The application should not crash.

### Actual Behavior

The application crashes.

### To Reproduce

This seems to happen sporadically when the application exits (all windows get

closed and destroyed, and the app is quit).

It happens only on Windows; macOS does not seem to be affected.

My code snippet:

app.removeAllListeners('window-all-closed')

BrowserWindow.getAllWindows().forEach((browserWindow) => {

browserWindow.close()

browserWindow.destroy()

})

app.quit()

### Stack Trace

Pastebin: https://pastebin.com/raw/MYvbpWe2

Snippet:

Fatal Error: EXCEPTION_ACCESS_VIOLATION_READ

Thread 10196 Crashed:

0 MyApp.exe 0x7ff75f43a632 [inlined] base::internal::UncheckedObserverAdapter::IsEqual (observer_list_internal.h:30)

1 MyApp.exe 0x7ff75f43a632 [inlined] base::ObserverList<T>::RemoveObserver::<T>::operator() (observer_list.h:283)

2 MyApp.exe 0x7ff75f43a632 [inlined] std::__1::find_if (algorithm:933)

3 MyApp.exe 0x7ff75f43a632 base::ObserverList<T>::RemoveObserver (observer_list.h:281)

4 MyApp.exe 0x7ff76101f28c extensions::ProcessManager::Shutdown (process_manager.cc:289)

5 MyApp.exe 0x7ff761e3a08d [inlined] DependencyManager::ShutdownFactoriesInOrder (dependency_manager.cc:127)

6 MyApp.exe 0x7ff761e3a08d DependencyManager::DestroyContextServices (dependency_manager.cc:83)

7 MyApp.exe 0x7ff75f2e8de6 electron::ElectronBrowserContext::~ElectronBrowserContext (electron_browser_context.cc:158)

8 MyApp.exe 0x7ff75f2e9abf electron::ElectronBrowserContext::~ElectronBrowserContext (electron_browser_context.cc:154)

9 MyApp.exe 0x7ff75f287a9d [inlined] base::RefCountedDeleteOnSequence<T>::Release (ref_counted_delete_on_sequence.h:52)

10 MyApp.exe 0x7ff75f287a9d [inlined] scoped_refptr<T>::Release (scoped_refptr.h:322)

11 MyApp.exe 0x7ff75f287a9d [inlined] scoped_refptr<T>::~scoped_refptr (scoped_refptr.h:224)

12 MyApp.exe 0x7ff75f287a9d electron::api::Session::~Session (electron_api_session.cc:313)

13 MyApp.exe 0x7ff75f28cdaf electron::api::Session::~Session (electron_api_session.cc:294)

14 MyApp.exe 0x7ff75f2eb641 [inlined] base::OnceCallback<T>::Run (callback.h:98)

15 MyApp.exe 0x7ff75f2eb641 electron::ElectronBrowserMainParts::PostMainMessageLoopRun (electron_browser_main_parts.cc:545)

16 MyApp.exe 0x7ff760a01750 content::BrowserMainLoop::ShutdownThreadsAndCleanUp (browser_main_loop.cc:1095)

17 MyApp.exe 0x7ff760a031a6 content::BrowserMainRunnerImpl::Shutdown (browser_main_runner_impl.cc:178)

18 MyApp.exe 0x7ff7609fee69 content::BrowserMain (browser_main.cc:49)

19 MyApp.exe 0x7ff76090405c content::RunBrowserProcessMain (content_main_runner_impl.cc:530)

20 MyApp.exe 0x7ff760904c00 content::ContentMainRunnerImpl::RunServiceManager (content_main_runner_impl.cc:980)

21 MyApp.exe 0x7ff7609048c2 content::ContentMainRunnerImpl::Run (content_main_runner_impl.cc:879)

22 MyApp.exe 0x7ff761870252 service_manager::Main (main.cc:454)

23 MyApp.exe 0x7ff75fcd8265 content::ContentMain (content_main.cc:19)

24 MyApp.exe 0x7ff75f23140a wWinMain (electron_main.cc:210)

25 MyApp.exe 0x7ff7646a6e91 [inlined] invoke_main (exe_common.inl:118)

26 MyApp.exe 0x7ff7646a6e91 __scrt_common_main_seh (exe_common.inl:288)

27 KERNEL32.DLL 0x7ffed8a97033 BaseThreadInitThunk

28 ntdll.dll 0x7ffed97dcec0 RtlUserThreadStart

### Additional Information

This seems to be a crash on shutdown/destroy of windows (from

`base::Process::Terminate (process_win.cc)`); not sure if it's Chromium-related

(https://chromium.googlesource.com/chromium/src/+/master/docs/shutdown.md).

|

Detected in CI:

https://app.circleci.com/pipelines/github/electron/electron/31161/workflows/e022a2a8-d5fb-47b4-806f-84f69ecf0a8a/jobs/686542

Received signal 11 SEGV_MAPERR ffffffffffffffff

0 Electron Framework 0x0000000114bcb869 base::debug::CollectStackTrace(void**, unsigned long) + 9

1 Electron Framework 0x0000000114ac6203 base::debug::StackTrace::StackTrace() + 19

2 Electron Framework 0x0000000114bcb731 base::debug::(anonymous namespace)::StackDumpSignalHandler(int, __siginfo*, void*) + 2385

3 libsystem_platform.dylib 0x00007fff6fcda5fd _sigtramp + 29

4 ??? 0x00007ff9fa10d6e0 0x0 + 140711618991840

5 Electron Framework 0x0000000114710a51 extensions::ProcessManager::Shutdown() + 33

6 Electron Framework 0x000000011651d4fc DependencyManager::DestroyContextServices(void*) + 140

7 Electron Framework 0x000000010f34d708 electron::ElectronBrowserContext::~ElectronBrowserContext() + 184

8 Electron Framework 0x000000010f34d9fe electron::ElectronBrowserContext::~ElectronBrowserContext() + 14

9 Electron Framework 0x000000010f35160d std::__1::__tree<std::__1::__value_type<electron::ElectronBrowserContext::PartitionKey, std::__1::unique_ptr<electron::ElectronBrowserContext, std::__1::default_delete<electron::ElectronBrowserContext> > >, std::__1::__map_value_compare<electron::ElectronBrowserContext::PartitionKey, std::__1::__value_type<electron::ElectronBrowserContext::PartitionKey, std::__1::unique_ptr<electron::ElectronBrowserContext, std::__1::default_delete<electron::ElectronBrowserContext> > >, std::__1::less<electron::ElectronBrowserContext::PartitionKey>, true>, std::__1::allocator<std::__1::__value_type<electron::ElectronBrowserContext::PartitionKey, std::__1::unique_ptr<electron::ElectronBrowserContext, std::__1::default_delete<electron::ElectronBrowserContext> > > > >::destroy(std::__1::__tree_node<std::__1::__value_type<electron::ElectronBrowserContext::PartitionKey, std::__1::unique_ptr<electron::ElectronBrowserContext, std::__1::default_delete<electron::ElectronBrowserContext> > >, void*>*) + 61

10 Electron Framework 0x000000010f3515f6 std::__1::__tree<std::__1::__value_type<electron::ElectronBrowserContext::PartitionKey, std::__1::unique_ptr<electron::ElectronBrowserContext, std::__1::default_delete<electron::ElectronBrowserContext> > >, std::__1::__map_value_compare<electron::ElectronBrowserContext::PartitionKey, std::__1::__value_type<electron::ElectronBrowserContext::PartitionKey, std::__1::unique_ptr<electron::ElectronBrowserContext, std::__1::default_delete<electron::ElectronBrowserContext> > >, std::__1::less<electron::ElectronBrowserContext::PartitionKey>, true>, std::__1::allocator<std::__1::__value_type<electron::ElectronBrowserContext::PartitionKey, std::__1::unique_ptr<electron::ElectronBrowserContext, std::__1::default_delete<electron::ElectronBrowserContext> > > > >::destroy(std::__1::__tree_node<std::__1::__value_type<electron::ElectronBrowserContext::PartitionKey, std::__1::unique_ptr<electron::ElectronBrowserContext, std::__1::default_delete<electron::ElectronBrowserContext> > >, void*>*) + 38

11 Electron Framework 0x000000010f35121b electron::ElectronBrowserMainParts::PostMainMessageLoopRun() + 219

12 Electron Framework 0x00000001134a7477 content::BrowserMainLoop::ShutdownThreadsAndCleanUp() + 647

13 Electron Framework 0x00000001134a9510 content::BrowserMainRunnerImpl::Shutdown() + 224

14 Electron Framework 0x00000001134a3c57 content::BrowserMain(content::MainFunctionParams const&) + 279

15 Electron Framework 0x00000001132bd757 content::ContentMainRunnerImpl::RunServiceManager(content::MainFunctionParams&, bool) + 1191

16 Electron Framework 0x00000001132bd283 content::ContentMainRunnerImpl::Run(bool) + 467

17 Electron Framework 0x00000001110e92be content::RunContentProcess(content::ContentMainParams const&, content::ContentMainRunner*) + 2782

18 Electron Framework 0x00000001110e93ac content::ContentMain(content::ContentMainParams const&) + 44

19 Electron Framework 0x000000010f2285a9 ElectronMain + 137

20 Electron 0x0000000108e99631 main + 289

21 libdyld.dylib 0x00007fff6faddcc9 start + 1

[end of stack trace]

| 1 |

I am trying to figure out how to center an item in the navbar. I would

essentially like to be able to have two navs, one left and one right. Then

have an element (logo or CSS styled div) in the middle of the nav. It would be

nice to have something similar to .pull-right, a .pull-center or something to

assist with this. I have been trying to override the CSS and write it in

myself, but for the life of me can't get it to work right.

| 1 | |

Is there a supported way to write an image using the `clipboard` API? I've

tried writing a data URL using `writeText` (similar to this) but that isn't

cutting it. Perhaps the `type` parameter is involved but the documentation

isn't clear as to what that should be.

|

Is it possible to use `clipboard.read()` to access an image copied to OS X's

pasteboard?

| 1 |

Hi,

Again, a bug which doesn't need fixing right now, but in the long run.

It would be good to be more FHS- and distribution-friendly, which means at least:

- honor libexecdir (binary architecture files) and datadir (documentation, etc.)

- allow standard library files to be read-only (mostly goinstall issues)

- install additional packages to /usr/local/golang (for example)

- allow more than one path in $GOROOT (something like $PYTHONPATH, etc.), so that

users can install packages/libraries to their home directories (and godoc, goinstall,

etc. would know about that).

There's probably more, but it will probably pop up over time.

|

What steps will reproduce the problem?

1. visit the documentation for any type (e.g.

http://golang.org/pkg/net/http/#CanonicalHeaderKey)

2. click on the title of the section, to go see the corresponding code

What is the expected output? What do you see instead?

I expect to get to a page where I can directly see the code for the clicked element.

Instead I get to the first line of the file containing the code I'm interested in.

Please use labels and text to provide additional information.

| 0 |

# Bug report

**What is the current behavior?**

Destructuring DefinePlugin variables causes the runtime error `Uncaught

ReferenceError: process is not defined`.

**If the current behavior is a bug, please provide the steps to reproduce.**

1. Define `DefinePlugin` in the webpack config like this:

plugins: [

new webpack.DefinePlugin({

'process.env.NODE_ENV': JSON.stringify(process.env.NODE_ENV)

})

]

2. Try to access `NODE_ENV` via destructuring: `const { NODE_ENV } = process.env;`

3. You get the runtime failure `Uncaught ReferenceError: process is not defined`

However!

If you access `NODE_ENV` like `const NODE_ENV = process.env.NODE_ENV;` instead

of `const { NODE_ENV } = process.env;`, you don't get a runtime error and

everything works as expected.
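
The difference between the two access patterns can be illustrated with a toy model. This is only a sketch under a simplifying assumption: it treats a define as a literal replacement of the full key `process.env.NODE_ENV`, whereas webpack's DefinePlugin actually works on the parsed module, not on raw text. `applyDefines` is a hypothetical helper written for this illustration and is not part of webpack.

```javascript
// Toy model only: NOT webpack's implementation.
// `applyDefines` is a hypothetical helper that replaces each define key
// wherever the full key appears literally in the source text.
function applyDefines(source, defines) {
  let output = source;
  for (const [key, value] of Object.entries(defines)) {
    // Replace every literal occurrence of the full key.
    output = output.split(key).join(value);
  }
  return output;
}

// Same shape as the DefinePlugin options in the report; the value is assumed.
const defines = { "process.env.NODE_ENV": JSON.stringify("production") };

const direct = applyDefines("const NODE_ENV = process.env.NODE_ENV;", defines);
const destructured = applyDefines("const { NODE_ENV } = process.env;", defines);

console.log(direct);       // the full key matched, so no `process` reference remains
console.log(destructured); // unchanged: `process.env` is still looked up at runtime
```

In this model the destructured form never contains the full key, so nothing is replaced and the browser still has to resolve `process` at runtime, which matches the reported `ReferenceError`.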

**What is the expected behavior?**

Both ways of accessing the variable should be equivalent and should not cause

a runtime error.

This is a confusing and hard-to-debug problem, and it is also problematic because

the `prefer-destructuring` ESLint rule is widely used.

**Other relevant information:**

webpack version: 5.64.4

Node.js version: v16.13.0