text1 | text2 | label |

|---|---|---|

I downloaded neo4j-master from GitHub today.

Within neo4j-master there is a directory:

neo4j-master/community/io/src/main/java/org/neo4j/io

I also obtained Community Edition 2.1.6, which should contain all the JARs for Neo4j projects.

However, there is no neo4j.io.jar corresponding to the neo4j-master code above.

I tried to compile `neo4j-master/community/embedded-examples/src/main/java/org/neo4j/examples/NewMatrix.java`.

The last import, `import org.neo4j.io.fs.FileUtils;`, could not be resolved.

I obtained neo4j-io-2.2.0-M02.jar from http://mvnrepository.com/artifact/org.neo4j/neo4j-io/2.2.0-M02 and added it to my project. The project then compiled and ran fine.

Further, the online Javadoc for Neo4j 2.1.6 (http://neo4j.com/docs/2.1.6/javadocs/) does not cover the package `org.neo4j.io`.

It would be nice if there were a Community version of Neo4j that compiled the examples out of the box.

|

I have a total of 200,001 nodes, one of which is joined to the other 200,000 nodes, so there are 200,000 relationships in total.

All these nodes come from Kafka, so my Kafka consumer reads a batch of nodes from Kafka and applies the following operation:

```cypher
MATCH (a:Dense1) WHERE a.id <> "1"
WITH a
MATCH (b:Dense1) WHERE b.id = "1"
WITH a, b
WHERE a.key = b.key
MERGE (a)-[:PARENT_OF]->(b)
```

This takes forever to build the 200,000 relationships, with or without an index on `id` and `key`. If I change `MERGE` to `CREATE`, it is super quick (the fastest)! However, since I have to read each batch from Kafka and apply the same operation incrementally, the relationships get duplicated if I use `CREATE` for every batch.
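The duplication can be reproduced without Neo4j. Note that `MATCH (a:Dense1)` in the query above is not restricted to the current batch, so every run revisits all nodes loaded so far. The following in-memory model is a sketch under that reading of the query: plain Python lists stand in for the graph, and `Node`, `link_all` and `run_batches` are hypothetical names. It only contrasts `CREATE` and `MERGE` semantics and says nothing about Neo4j's locking or performance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    id: str
    key: str


def link_all(nodes, rels, mode):
    """Model of the query: link every node with id != "1" to the hub (id "1") when keys match."""
    hubs = [b for b in nodes if b.id == "1"]
    for a in nodes:
        if a.id == "1":
            continue
        for b in hubs:
            if a.key != b.key:
                continue
            rel = (a.id, b.id)
            if mode == "CREATE":
                rels.append(rel)  # always adds another relationship
            elif mode == "MERGE":
                if rel not in rels:  # adds only if the pair is not linked yet
                    rels.append(rel)
            else:
                raise ValueError(f"unknown mode: {mode}")
    return rels


def run_batches(batches, mode):
    nodes, rels = [], []
    for batch in batches:
        nodes.extend(batch)  # the batch is written to the graph ...
        link_all(nodes, rels, mode)  # ... then the query scans ALL Dense1 nodes
    return rels


hub = Node("1", "k")
batches = [[hub, Node("2", "k")], [Node("3", "k")]]
print(run_batches(batches, "CREATE"))  # [('2', '1'), ('2', '1'), ('3', '1')]
print(run_batches(batches, "MERGE"))   # [('2', '1'), ('3', '1')]
```

In the model, the duplicates come from re-scanning nodes of earlier batches, which is why an existence check (`MERGE`) is needed at all with this query shape.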

Ideally, if a relationship already exists, I don't want Neo4j to do anything, or, even better, to throw an exception or otherwise notify the client driver so that the application can do something useful with it. I also tried changing `MERGE` to `CREATE UNIQUE` in the above query: it is 50% faster than `MERGE` but still slow compared to `CREATE`.

If `MERGE` is this slow due to double locking as explained here, then it becomes almost unusable.

Any approach to make it better would be great!

| 0 |

It would be awesome if there were an option for off-canvas navigation. There is nothing wrong with the current dropdown-style navigation on mobile, and I'm not against it; I just like the off-canvas style more.

Of course, this would not be applicable to every website, but neither is the dropdown style. So it would be good to have an option, depending on what kind of website we are working on. In my opinion, the off-canvas style is more "mobile" than the dropdown, because we see it more often in mobile apps.

Here is a very good example:

http://designmodo.github.io/startup-demo/framework/samples/sample-04/index.html

As you can see, the links automatically become the off-canvas navigation on small screens.

Anyone with me?

|

Please make the navbar lateral (sliding in from the side) when it collapses on mobile devices, as in Foundation or Pure.css.

Thanks

| 1 |

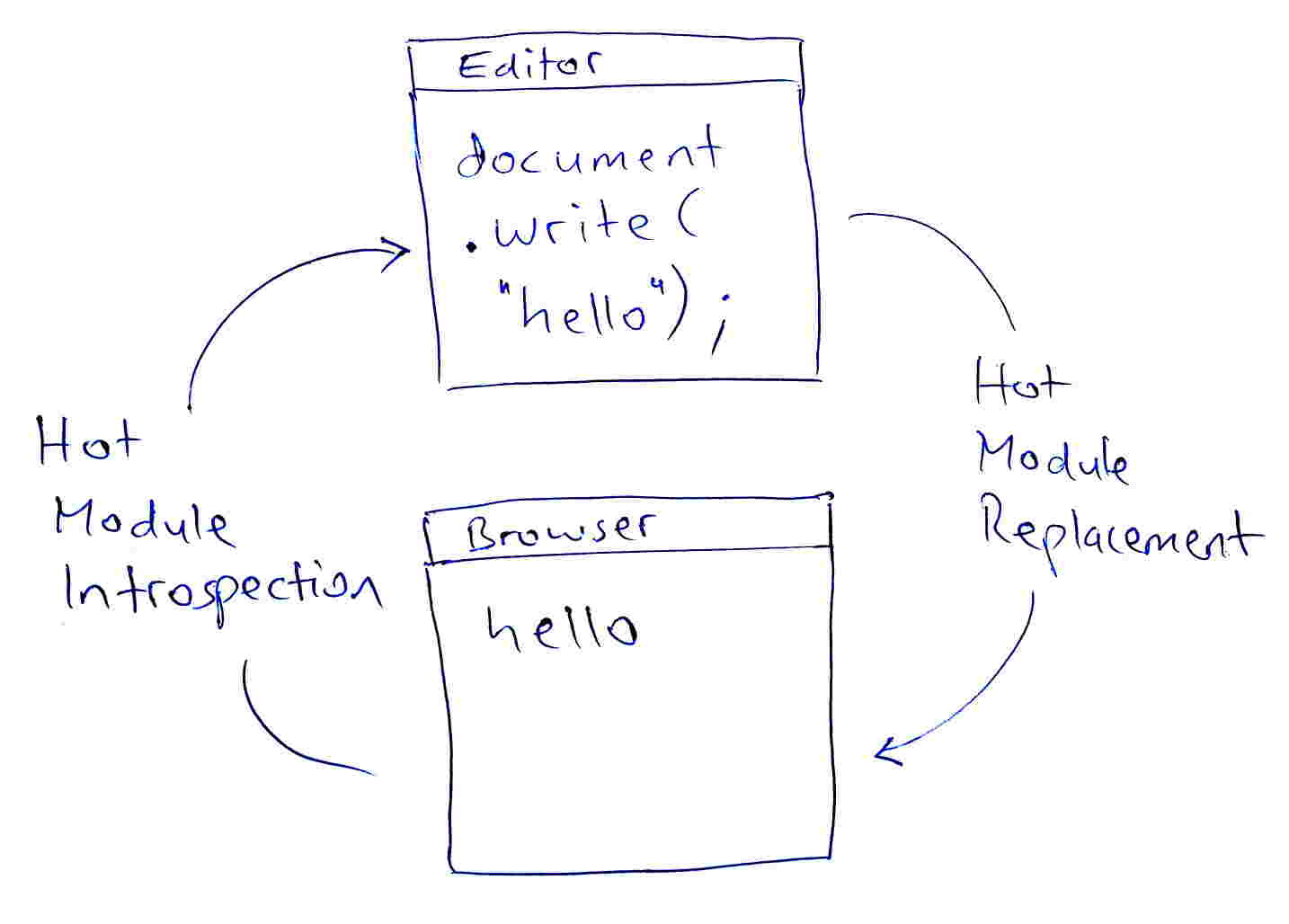

## Feature request

Add "hot module introspection" as a feedback channel from the browser to the editor.

**situation in my code editor**

```js
class Class1 {constructor() {this.key1 = 'val1'}};
class Class2 {constructor() {this.key2 = 'val2'}};
obj1 = new Class1();
obj2 = new Class2();

obj1.k
//   ^
// at this point I want "hot code completion" in my code editor,
// so only "key1" is suggested, but not "key2"

// code introspection at runtime
Object.keys(obj1)
// = [ 'key1' ]

// the node.js shell can do it
obj1.k
//   ^
// the Tab key does the right completion to "key1"
```

**Ideal?**

**What is the expected behavior?**

```js
obj1.k
//   ^
// at this point I want "hot code completion" in my code editor,
// so only "key1" is suggested, but not "key2"
```

As far as I know, all code editors fail at this point of "dynamic code analysis": the best they can offer is to suggest both `key1` and `key2`. VS Code and Eclipse do this by default; VS Code calls this "IntelliSense text suggestions". But I don't want to be limited by "static code analysis" when the program is started anyway after every file change.

**Solution?**

**How should this be implemented in your opinion?**

Extend the "hot module replacement" system to feed "hot module introspection" data back to the editor. This introspection data can be sent over HTTP, so the editor keeps a keep-alive connection open and waits for the server to push new introspection data.

.... or use the browser as the code editor, using CodeMirror, and show the program inside a frame, like on jsfiddle.net.

[The code completion function of CodeMirror seems broken to me.]

The data format should be optimized for machine readability, for example by using length-prefixed lists and strings, as in BSON, MessagePack, Python pickle, EXI, FlatBuffers, ....

In an ideal world, the JavaScript runtime would offer a fast way to access the "internal representation" of the running program.
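A minimal sketch of what "length-prefixed lists and strings" means, written in Python. The wire layout here (a 4-byte big-endian count before each list and a 4-byte big-endian byte length before each UTF-8 string) is an assumption for illustration, not the format of BSON, MessagePack or any other encoding named above. The point is that a reader can jump over a string by its length instead of scanning for a delimiter or parsing escapes.

```python
import struct
from typing import List


def encode_str(s: str) -> bytes:
    data = s.encode("utf-8")
    return struct.pack(">I", len(data)) + data  # 4-byte big-endian length, then the bytes


def encode_str_list(items: List[str]) -> bytes:
    parts = [struct.pack(">I", len(items))]  # element count comes first
    parts.extend(encode_str(s) for s in items)
    return b"".join(parts)


def decode_str_list(buf: bytes) -> List[str]:
    (count,) = struct.unpack_from(">I", buf, 0)
    pos = 4
    items = []
    for _ in range(count):
        (n,) = struct.unpack_from(">I", buf, pos)  # read the length ...
        pos += 4
        items.append(buf[pos:pos + n].decode("utf-8"))  # ... then slice exactly n bytes
        pos += n
    return items


payload = encode_str_list(["key1", "key2"])
print(decode_str_list(payload))  # ['key1', 'key2']
```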

**limits**

Introspection requires a valid program, so you must have a running "last version" to provide introspection data for your not-yet-running "current version".

**potential problems**

Circular references must be detected and handled, as in `object.child.parent.child.parent.child`....
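The cycle handling can be sketched in Python as a stand-in for the JavaScript runtime. Here an object's `__dict__` plays the role of its own keys (roughly what `Object.keys` returns), and `property_map` and the example classes are hypothetical. The walk records every attribute path but expands each object only once, tracked by `id()`, so a `child.parent.child...` loop terminates; `max_depth` is an extra, assumed safety limit.

```python
def property_map(root, max_depth=5, root_name="obj"):
    """Map each reachable attribute path to the type name of its value.

    Each object is expanded at most once (tracked by id()), so reference
    cycles such as child.parent.child... terminate.
    """
    seen = set()
    result = {}

    def walk(obj, path, depth):
        if id(obj) in seen or depth > max_depth:
            return
        seen.add(id(obj))
        attrs = getattr(obj, "__dict__", None)  # own instance attributes only
        if not isinstance(attrs, dict):
            return
        for name, value in attrs.items():
            child_path = f"{path}.{name}"
            result[child_path] = type(value).__name__
            walk(value, child_path, depth + 1)

    walk(root, root_name, 0)
    return result


class Class1:
    def __init__(self):
        self.key1 = "val1"


class Parent:
    def __init__(self):
        self.child = Child(self)


class Child:
    def __init__(self, parent):
        self.parent = parent  # back-reference creates a cycle


print(property_map(Class1()))  # {'obj.key1': 'str'}
print(property_map(Parent()))  # {'obj.child': 'Child', 'obj.child.parent': 'Parent'}
```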

**related**

recursive introspection in javascript

get inherited properties of javascript objects

VS Code to autocomplete JavaScript class 'this' properties automatically

\-- "doesn't work too well if you bind things to the class at runtime"

the ahaa moment of hot reloading in clojurescript/figwheel, by bruce hauman

**Why?**

**What is motivation or use case for adding/changing the behavior?**

This allows for "zero-knowledge programming": let the machine do the boring, precise part of "What did I call this property? Where is it hidden?" and focus on the creative, fuzzy part of "Let me just add something like ....".

This also makes it much easier to learn new libraries: instead of depending on good documentation, you can make full use of the existing introspection functions. For "distant properties" that are hidden in child/parent objects, you can browse a "map of properties", like a mind map.

Also, why not? :P

**Are you willing to work on this yourself?**

No, not today. I hope that the "insider people" can solve this much faster than I can, so that I can avoid digging into unfamiliar projects.

**more keywords**

code hinting, runtime analysis, dynamic analysis, runtime introspection, live object introspection, hierarchy of variable names, javascript object graph

|

# Bug report

**What is the current behavior?**

Many modules published to npm use "auto" exports (https://rollupjs.org/guide/en#output-exports-exports; there is also a popular Babel plugin that adds this behaviour, https://github.com/59naga/babel-plugin-add-module-exports#readme), which are supposed to ease interop with Node by removing the "pesky" `.default` for CJS consumers when the module has only a default export.

Because of that, depending on a package authored **solely** in CJS (which is still really common) that itself depends on a package authored in the mentioned "auto" mode is dangerous and broken.

Why? Because webpack uses the "module" entry from package.json (and thus the real default export) without checking the type of the requesting module (which is CJS here). The CJS requester did not use `.default` when requiring the auto-mode package, because from its perspective there was no such thing.

**If the current behavior is a bug, please provide the steps to reproduce.**

https://github.com/Andarist/webpack-module-entry-from-cjs-issue. The exported value should be `"foobar42"` instead of `"foo[object Module]42"`.

**What is the expected behavior?**

Webpack should deopt its requiring behaviour (ignoring `.mjs` and the "module" entry) based on the requester type.

**Other relevant information:**

webpack version: latest

Node.js version: irrelevant

Operating System: irrelevant

Additional tools: irrelevant

Mentioning the Rollup team, as Rollup is probably the tool outputting the most auto-mode libraries (@lukastaegert @guybedford), and @developit (who I think might be interested in the discussion).

| 0 |

In a number of places, sklearn controls flow according to whether some method exists on an estimator. For example, `*SearchCV.score` checks for `score` on the estimator; `Scorer` and `multiclass` functions check for `decision_function`; such checks are also used for validation in `AdaBoostClassifier.fit`, `multiclass._check_estimator` and `Pipeline`, and for testing in `test_common`.

Meta-estimators such as `*SearchCV`, `Pipeline`, `RFECV`, etc. should respond to such `hasattr` checks in agreement with their underlying estimators (or else the `hasattr` approach should be avoided).

This is possible by implementing such methods as a `property` that returns the correct method from the sub-estimator (or a closure around it), or raises `AttributeError` if the sub-estimator lacks it (see #1801). `hasattr` would then function correctly. Caveats: the code would be less straightforward in some cases, and `help()`/`pydoc` won't show the methods as methods (with an argument list, etc.), though the `property`'s docstring will show.
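The property-based approach can be sketched as follows. `DelegatingMeta` is a hypothetical wrapper, not an actual sklearn class, and plain Python classes stand in for estimators. Looking up the method inside the property getter raises `AttributeError` when the sub-estimator lacks it, and `hasattr` treats that as "missing".

```python
class DelegatingMeta:
    """Hypothetical meta-estimator that wraps a sub-estimator."""

    def __init__(self, estimator):
        self.estimator = estimator

    @property
    def decision_function(self):
        """Delegate to the sub-estimator's decision_function, if it has one."""
        # Raises AttributeError when the sub-estimator lacks the method,
        # so hasattr(meta, "decision_function") mirrors the sub-estimator.
        inner = self.estimator.decision_function

        def decision_function(X):
            # a closure: a real meta-estimator might transform X first
            return inner(X)

        return decision_function


class WithDecision:
    def decision_function(self, X):
        return [2 * x for x in X]


class WithoutDecision:
    pass


print(hasattr(DelegatingMeta(WithDecision()), "decision_function"))     # True
print(hasattr(DelegatingMeta(WithoutDecision()), "decision_function"))  # False
print(DelegatingMeta(WithDecision()).decision_function([1, 2]))         # [2, 4]
```

This also shows the documentation caveat: `help(DelegatingMeta)` lists `decision_function` with its docstring among the class's descriptors, not as a method with an argument list.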

|

### Describe the bug

For some samples and requested `n_clusters`, MiniBatchKMeans does not return a proper clustering in terms of the number of clusters and consecutive labels. The example below shows that requesting 11 clusters yields only 9, and requesting 12 yields 11. Requesting 13 clusters then yields only 10.

When using KMeans instead of MiniBatchKMeans there is no such issue.

### Steps/Code to Reproduce

```python
import numpy as np
from sklearn.cluster import MiniBatchKMeans

points = [
    [-2636.705, 892.6364, 239.4284], [-2676.219, 922.741, 227.3839], [-2628.628, 902.6482, 245.5609], [-2612.497, 860.9032, 248.924],
    [-2639.552, 993.8482, 211.2253], [-2602.453, 958.7801, 211.5786], [-2598.118, 1032.398, 177.4023], [-2582.155, 972.5088, 203.5048],
    [-2548.377, 803.9934, 279.4388], [-2550.095, 979.9586, 222.6467], [-2746.966, 1021.456, 188.8456], [-2745.181, 984.1931, 199.6674],
    [-2729.113, 973.8251, 201.8876], [-2720.765, 1014.262, 205.0213], [-2747.317, 1099.313, 146.2305], [-2739.32, 1005.173, 200.297]
]

for numClusters in range(7, 17):
    model = MiniBatchKMeans(n_clusters=numClusters, random_state=0)
    clusters = model.fit_predict(points)
    unique = np.unique(clusters)
    print("requested", str(numClusters).rjust(2), "clusters and result has", str(len(unique)).rjust(2), "clusters with labels", unique)
```

### Expected Results

```
requested 7 clusters and result has 7 clusters with labels [ 0 1 2 3 4 5 6]
requested 8 clusters and result has 8 clusters with labels [ 0 1 2 3 4 5 6 7]
requested 9 clusters and result has 9 clusters with labels [ 0 1 2 3 4 5 6 7 8]
requested 10 clusters and result has 10 clusters with labels [ 0 1 2 3 4 5 6 7 8 9]
requested 11 clusters and result has 11 clusters with labels [ 0 1 2 3 4 5 6 7 8 9 10]
requested 12 clusters and result has 12 clusters with labels [ 0 1 2 3 4 5 6 7 8 9 10 11]
requested 13 clusters and result has 13 clusters with labels [ 0 1 2 3 4 5 6 7 8 9 10 11 12]
requested 14 clusters and result has 14 clusters with labels [ 0 1 2 3 4 5 6 7 8 9 10 11 12 13]
requested 15 clusters and result has 15 clusters with labels [ 0 1 2 3 4 5 6 7 8 9 10 11 12 13 14]
requested 16 clusters and result has 16 clusters with labels [ 0 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15]
```

### Actual Results

```
requested 7 clusters and result has 7 clusters with labels [ 0 1 2 3 4 5 6]
requested 8 clusters and result has 7 clusters with labels [ 0 1 3 4 5 6 7]
requested 9 clusters and result has 9 clusters with labels [ 0 1 2 3 4 5 6 7 8]
requested 10 clusters and result has 10 clusters with labels [ 0 1 2 3 4 5 6 7 8 9]
requested 11 clusters and result has 9 clusters with labels [ 0 1 2 3 5 6 7 8 9]
requested 12 clusters and result has 11 clusters with labels [ 1 2 3 4 5 6 7 8 9 10 11]
requested 13 clusters and result has 10 clusters with labels [ 0 2 4 5 6 7 9 10 11 12]
requested 14 clusters and result has 12 clusters with labels [ 1 2 3 4 5 6 7 8 9 10 11 12]
requested 15 clusters and result has 11 clusters with labels [ 0 1 3 4 6 7 8 10 11 12 14]
requested 16 clusters and result has 13 clusters with labels [ 0 1 3 4 5 6 7 9 10 11 12 13 15]
```
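For a proper result, the labels should be exactly `0 .. n_clusters-1`. A small helper (hypothetical, pure Python; `int()` lets it also accept the NumPy array returned by `np.unique`) makes the gaps in the Actual Results explicit, using two of the label sets reported above:

```python
def missing_labels(labels, n_clusters):
    """Labels in range(n_clusters) that never occur in `labels`; empty for a proper result."""
    present = {int(x) for x in labels}  # int() also accepts NumPy integers
    return sorted(set(range(n_clusters)) - present)


# label sets copied from the "Actual Results" above
print(missing_labels([0, 1, 2, 3, 5, 6, 7, 8, 9], 11))        # [4, 10]
print(missing_labels([0, 2, 4, 5, 6, 7, 9, 10, 11, 12], 13))  # [1, 3, 8]
```

In the reproduction loop, `missing_labels(unique, numClusters)` could be printed next to `unique`.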

### Versions

```
System:
python: 3.9.7 | packaged by conda-forge | (default, Sep 29 2021, 19:20:16) [MSC v.1916 64 bit (AMD64)]
executable: C:\Users\USER\.conda\envs\sklearn-env\python.exe
machine: Windows-10-10.0.22000-SP0

Python dependencies:
pip: 21.3.1
setuptools: 58.5.3
sklearn: 1.0.1
numpy: 1.21.4
scipy: 1.7.2
Cython: None
pandas: None
matplotlib: 3.4.3
joblib: 1.1.0
threadpoolctl: 3.0.0

Built with OpenMP: True
```

| 0 |

I was hoping to find a routine in Base that would give me the first `k` elements of `sortperm(v)`.

This would be much like `select(v, 1:k)`, but instead of returning the actual elements, it would return the indices where those elements can be found.

Does such a function exist?
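What is being asked for is a partial sort of the permutation. A sketch in Python/NumPy (not Julia; `first_k_sortperm` is a hypothetical name) shows one way to get it: `np.argpartition` selects the k smallest elements in linear time, and only those k are then sorted. Indices are 0-based, unlike Julia's. When ties straddle the k-th position, which of the equal elements get included may differ from `sortperm(v)[1:k]`.

```python
import numpy as np


def first_k_sortperm(v, k):
    """0-based indices of the k smallest elements of v, ordered by value (ties by index)."""
    v = np.asarray(v)
    if k <= 0:
        return np.empty(0, dtype=np.intp)
    if k >= v.size:
        return np.argsort(v, kind="stable")
    part = np.argpartition(v, k - 1)[:k]  # the k smallest, in no particular order: O(n)
    order = np.lexsort((part, v[part]))   # sort only those k: by value, then by index
    return part[order]


print(first_k_sortperm([5, 1, 4, 2, 3], 3))  # [1 3 4]
```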

|

One of the first things we need to do is make the runtime thread-safe. This work is on the `threads` branch, and this tracker predates the new GC. I thought it worth capturing, in an issue, a tracker that @StefanKarpinski prepared earlier, to ease the thread-safety work.

This list is organized as `Variable; Approach`

# builtins.c

* extern size_t jl_page_size; constant

* extern int jl_in_inference; lock

* extern int jl_boot_file_loaded; constant

* int in_jl_ = 0; thread-local

# ccall.cpp

* `static std::map<std::string, std::string> sonameMap;` lock

* static bool got_sonames = false; lock, write-once

* `static std::map<std::string, uv_lib_t*> libMap;` lock

* `static std::map<std::string, GlobalVariable*> libMapGV;` lock

* `static std::map<std::string, GlobalVariable*> symMapGV;` lock

* ~~static char *temp_arg_area; thread-local (will be deleted very soon)~~

* ~~static const uint32_t arg_area_sz = 4196; constant (will be deleted very soon)~~

* ~~static uint32_t arg_area_loc; thread-local (will be deleted very soon)~~

* ~~static void *temp_arg_blocks[N_TEMP_ARG_BLOCKS]; thread-local (will be deleted very soon)~~

* ~~static uint32_t arg_block_n = 0; thread-local (will be deleted very soon)~~

* ~~static Function *save_arg_area_loc_func; constant (will be deleted very soon)~~

* ~~static Function *restore_arg_area_loc_func; constant (will be deleted very soon)~~

# cgutils.cpp

* `static std::map<const std::string, GlobalVariable*> stringConstants;` lock

* `static std::map<void*, jl_value_llvm> jl_value_to_llvm;` lock

* `static std::map<Value*, void*> llvm_to_jl_value;` lock

* `static std::vector<Constant*> jl_sysimg_gvars;` lock

* `static std::map<int, jl_value_t*> typeIdToType;` lock

* jl_array_t *typeToTypeId; lock

* static int cur_type_id = 1; lock

# codegen.cpp

* void *__stack_chk_guard = NULL; thread-local (jwn: why is this on the list? it's a constant and not thread local)

# debuginfo.cpp

* extern "C" volatile int jl_in_stackwalk;

* JuliaJITEventListener *jl_jit_events;

* static obfiletype objfilemap;

* extern char *jl_sysimage_name; constant

* static logdata_t coverageData;

* static logdata_t mallocData;

# dump.c

* static jl_array_t *tree_literal_values=NULL; thread-local

* static jl_value_t *jl_idtable_type=NULL; constant

* static jl_array_t *datatype_list=NULL; thread 0 only

* jl_value_t ***sysimg_gvars = NULL; thread 0 only

* extern int globalUnique; thread 0 only

* static size_t delayed_fptrs_n = 0; thread 0 only

* static size_t delayed_fptrs_max = 0; thread 0 only

# gc.c

* static volatile size_t allocd_bytes = 0; thread-local

* static volatile int64_t total_allocd_bytes = 0; thread-local

* static int64_t last_gc_total_bytes = 0; thread-local

* static size_t freed_bytes = 0; barrier

* static uint64_t total_gc_time=0; barrier

* int jl_in_gc=0; * referenced from switchto task.c barrier

* static htable_t obj_counts; barrier

* static size_t total_freed_bytes=0; barrier

* static arraylist_t to_finalize; barrier

* static jl_value_t **mark_stack = NULL; barrier

* static size_t mark_stack_size = 0; barrier

* static size_t mark_sp = 0; barrier

* extern jl_module_t *jl_old_base_module; constant

* extern jl_array_t *typeToTypeId; barrier

* extern jl_array_t *jl_module_init_order; barrier

* static int is_gc_enabled = 1; atomic

* static double process_t0; constant

# init.c

* char *jl_stack_lo; thread-local

* char *jl_stack_hi; thread-local

* volatile sig_atomic_t jl_signal_pending = 0; thread-local

* volatile sig_atomic_t jl_defer_signal = 0; thread-local

* uv_loop_t *jl_io_loop; I/O thread ?

* static void *signal_stack; thread-local (see #9763 (comment))

* static mach_port_t segv_port = 0; constant

* extern void * __stack_chk_guard; thread-local (duplicate of above)

# jltypes.c

* int inside_typedef = 0; thread-local

* static int match_intersection_mode = 0; thread-local

* static int has_ntuple_intersect_tuple = 0; thread-local

* static int t_uid_ctr = 1; lock

# llvm-simdloop.cpp

* static unsigned simd_loop_mdkind = 0; constant

* static MDNode* simd_loop_md = NULL; constant

* char LowerSIMDLoop::ID = 0; lock

# module.c

* jl_module_t *jl_main_module=NULL; constant

* jl_module_t *jl_core_module=NULL; constant

* jl_module_t *jl_base_module=NULL; constant

* jl_module_t *jl_current_module=NULL; thread-local

* jl_array_t *jl_module_init_order = NULL; lock (this code is badly broken anyway: #9799)

# profile.c

* static volatile ptrint_t* bt_data_prof = NULL;

* static volatile size_t bt_size_max = 0;

* static volatile size_t bt_size_cur = 0;

* static volatile u_int64_t nsecprof = 0;

* static volatile int running = 0;

* volatile HANDLE hBtThread = 0;

* static pthread_t profiler_thread;

* static mach_port_t main_thread;

* clock_serv_t clk;

* static int profile_started = 0;

* static mach_port_t profile_port = 0;

* volatile static int forceDwarf = -2;

* volatile mach_port_t mach_profiler_thread = 0;

* static unw_context_t profiler_uc;

* mach_timespec_t timerprof;

* struct itimerval timerprof;

* static timer_t timerprof;

* static struct itimerspec itsprof;

# sys.c

* JL_STREAM *JL_STDIN=0; constant

* JL_STREAM *JL_STDOUT=0; constant

* JL_STREAM *JL_STDERR=0; constant

# task.c

* volatile int jl_in_stackwalk = 0; thread-local

* static size_t _frame_offset; constant

* DLLEXPORT jl_task_t * volatile jl_current_task; thread-local

* jl_task_t *jl_root_task; constant

* jl_value_t * volatile jl_task_arg_in_transit; thread-local

* jl_value_t *jl_exception_in_transit; thread-local

* __JL_THREAD jl_gcframe_t *jl_pgcstack = NULL; thread-local

* jl_jmp_buf * volatile jl_jmp_target; thread-local

* extern int jl_in_gc; barrier

* static jl_function_t *task__hook_func=NULL; constant

* ptrint_t bt_data[MAX_BT_SIZE+1]; thread-local

* size_t bt_size = 0; thread-local

* int needsSymRefreshModuleList; lock

* jl_function_t *jl_unprotect_stack_func; constant

# toplevel.c

* int jl_lineno = 0; thread-local

* jl_module_t *jl_old_base_module = NULL; constant

* jl_module_t *jl_internal_main_module = NULL; constant

* extern int jl_in_inference; lock
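The two most common approaches in the list, "lock" and "thread-local", can be illustrated by analogy in Python. This is not Julia's C runtime: `next_uid` and `inside_typedef` are hypothetical stand-ins that merely mimic the roles of `t_uid_ctr` (lock) and `inside_typedef` (thread-local) from jltypes.c.

```python
import threading

# "lock": one shared counter; every read-modify-write is serialized
# (hypothetical analogue of `static int t_uid_ctr = 1; lock`)
_uid_lock = threading.Lock()
_uid_ctr = 1


def next_uid():
    global _uid_ctr
    with _uid_lock:
        uid = _uid_ctr
        _uid_ctr += 1
        return uid


# "thread-local": each thread gets its own copy, so no lock is needed
# (hypothetical analogue of `int inside_typedef = 0; thread-local`)
_tls = threading.local()


def set_inside_typedef(flag):
    _tls.inside_typedef = flag


def inside_typedef():
    return getattr(_tls, "inside_typedef", False)  # default for threads that never set it


uids = []
uids_lock = threading.Lock()


def worker():
    got = [next_uid() for _ in range(1000)]
    with uids_lock:
        uids.extend(got)


threads = [threading.Thread(target=worker) for _ in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(len(set(uids)))  # 4000: no id was handed out twice

set_inside_typedef(True)
seen_in_other_thread = []
t = threading.Thread(target=lambda: seen_in_other_thread.append(inside_typedef()))
t.start()
t.join()
print(inside_typedef(), seen_in_other_thread)  # True [False]
```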

| 0 |

Comment by Kenneth Reitz:

> We need to support MultiDict. This is long overdue.

|

Duplicate of #6261

Nate: Sorry, I posted a duplicate; I probably posted it in the wrong place. I'm a newbie, and this is my first post on the forum.

You closed #6314 as a duplicate of #6261. I was not making a feature request but seeking help.

I was hoping to get help solving the issue I am experiencing with `RequestsDependencyWarning` error messages.

| 0 |

In some cases, a DataFrame exported to Excel contains bad values. It is not an Excel reading problem (the data inside the sheet1.xml of the .xlsx file is also incorrect).

The same DataFrame exported to ".csv" is correct.

The problem can be "solved" by renaming the column headers to [col-1, col-2, ...]. Maybe an encoding problem?

The issue is that there is no warning or error during the export, so it is very easy to miss.

To reproduce:

```python
import pandas as pd

df = pd.read_pickle('problematic_df.pkl')
df.to_excel('problematic_df.xlsx')
df.to_csv('problematic_df.csv')
```

with the file available here:

https://drive.google.com/file/d/0Bzz_ZaP_wS_HMFdlMkVzaTR0cjA/view?usp=sharing

Note that the content of cell M14 differs between the two files (at least when run on my computer).

Using:

* Python 3.4.3 |Anaconda 2.3.0 (64-bit)

* pandas 0.16.2

* Windows 7 64 bits

|

```python
store_id_map
```

```
<class 'pandas.io.pytables.HDFStore'>
File path: C:\output\identifier_map.h5
/identifier_map frame_table (typ->appendable_multi,nrows->26779823,ncols->9,indexers->[index],dc->[RefIdentifierID])
```

```python
store_id_map.select('identifier_map')
```

```
---------------------------------------------------------------------------
KeyError Traceback (most recent call last)
in ()
----> 1 store_id_map.select('identifier_map')

C:\Python27\lib\site-packages\pandas\io\pytables.pyc in select(self, key, where, start, stop, columns, iterator, chunksize, auto_close, **kwargs)
456 return TableIterator(self, func, nrows=s.nrows, start=start, stop=stop, chunksize=chunksize, auto_close=auto_close)
457
--> 458 return TableIterator(self, func, nrows=s.nrows, start=start, stop=stop, auto_close=auto_close).get_values()
459
460 def select_as_coordinates(self, key, where=None, start=None, stop=None, **kwargs):

C:\Python27\lib\site-packages\pandas\io\pytables.pyc in get_values(self)
982
983 def get_values(self):
--> 984 results = self.func(self.start, self.stop)
985 self.close()
986 return results

C:\Python27\lib\site-packages\pandas\io\pytables.pyc in func(_start, _stop)
449 # what we are actually going to do for a chunk
450 def func(_start, _stop):
--> 451 return s.read(where=where, start=_start, stop=_stop, columns=columns, **kwargs)
452
453 if iterator or chunksize is not None:

C:\Python27\lib\site-packages\pandas\io\pytables.pyc in read(self, columns, **kwargs)
3259 columns.insert(0, n)
3260 df = super(AppendableMultiFrameTable, self).read(columns=columns, **kwargs)
-> 3261 df.set_index(self.levels, inplace=True)
3262 return df
3263

C:\Python27\lib\site-packages\pandas\core\frame.pyc in set_index(self, keys, drop, append, inplace, verify_integrity)
2827 names.append(None)
2828 else:
-> 2829 level = frame[col].values
2830 names.append(col)
2831 if drop:

C:\Python27\lib\site-packages\pandas\core\frame.pyc in __getitem__(self, key)
2001 # get column
2002 if self.columns.is_unique:
-> 2003 return self._get_item_cache(key)
2004
2005 # duplicate columns

C:\Python27\lib\site-packages\pandas\core\generic.pyc in _get_item_cache(self, item)
665 return cache[item]
666 except Exception:
--> 667 values = self._data.get(item)
668 res = self._box_item_values(item, values)
669 cache[item] = res

C:\Python27\lib\site-packages\pandas\core\internals.pyc in get(self, item)
1653 def get(self, item):
1654 if self.items.is_unique:
-> 1655 _, block = self._find_block(item)
1656 return block.get(item)
1657 else:

C:\Python27\lib\site-packages\pandas\core\internals.pyc in _find_block(self, item)
1933
1934 def _find_block(self, item):
-> 1935 self._check_have(item)
1936 for i, block in enumerate(self.blocks):
1937 if item in block:

C:\Python27\lib\site-packages\pandas\core\internals.pyc in _check_have(self, item)
1940 def _check_have(self, item):
1941 if item not in self.items:
-> 1942 raise KeyError('no item named %s' % com.pprint_thing(item))
1943
1944 def reindex_axis(self, new_axis, method=None, axis=0, copy=True):

KeyError: u'no item named None'
```

I am at a loss. What does that mean? I successfully saved the DataFrame, but I cannot read it back.

| 0 |

I've seen other similar posts, but they don't seem to apply to me. The role in question worked fine in 2.2.

##### ISSUE TYPE

* Bug Report

##### COMPONENT NAME

Loop

##### ANSIBLE VERSION

```
ansible 2.3.0.0
  config file = /etc/ansible/ansible.cfg
  configured module search path = Default w/o overrides
  python version = 2.7.12 (default, Nov 19 2016, 06:48:10) [GCC 5.4.0 20160609]
```

##### CONFIGURATION

```ini
[defaults]
host_key_checking = False
ansible_managed = DO NOT MODIFY by hand. This file is under control of Ansible on {host}.
vault_password_file = /var/lib/semaphore/.vpf
```

##### OS / ENVIRONMENT

N/A

##### SUMMARY

When trying to create a list of users, Ansible throws an error about an invalid value.

##### STEPS TO REPRODUCE

This is the task I am trying to run:

```yaml
- name: create user
  user:
    name: "{{ item.username }}"
    password: "{{ item.password|default(omit) }}"
    shell: /bin/bash
  become: true
  with_items: '{{ users }}'
  no_log: true
```

This is the `users` variable. I have truncated the vault values for brevity.

```yaml
users:
  - username: ptadmin
    password: !vault-encrypted |
      $ANSIBLE_VAULT;1.1;AES256
      ...
    use_sudo: true
    use_ssh: false
  - username: ansibleremote
    password: "{{ petra_ansibleremote_password }}"
    use_sudo: true
    use_ssh: true
    public_key: !vault-encrypted |
      $ANSIBLE_VAULT;1.1;AES256
      ...
  - username: semaphore
    password: "{{ petra_ansibleremote_password }}"
    use_sudo: true
    use_ssh: true
  - username: frosty
    password: !vault-encrypted |
      $ANSIBLE_VAULT;1.1;AES256
      ...
    use_sudo: true
    use_ssh: true
    public_key: !vault-encrypted |
      $ANSIBLE_VAULT;1.1;AES256
      ...
  - username: thebeardedone
    password: !vault-encrypted |
      $ANSIBLE_VAULT;1.1;AES256
      ...
    use_sudo: true
    use_ssh: true
    public_key: !vault-encrypted |
      $ANSIBLE_VAULT;1.1;AES256
      ...
  - username: senanufc
    password: !vault-encrypted |
      $ANSIBLE_VAULT;1.1;AES256
      ...
    use_sudo: true
    use_ssh: true
    public_key: !vault-encrypted |
      $ANSIBLE_VAULT;1.1;AES256
      ...
```

I tried a `debug` task before it; the results are in this gist.

I also tried the task below, and the same thing happens:

```yaml
- name: test loop
  debug:
    msg: "{{ item.username }}"
  with_items: "{{ users }}"
```

##### EXPECTED RESULTS

Users created

##### ACTUAL RESULTS

```
fatal: [petra-hq-dev-master]: FAILED! => {"failed": true, "msg": "the field 'args' has an invalid value, which appears to include a variable that is undefined. The error was: 'ansible.vars.unsafe_proxy.AnsibleUnsafeText object' has no attribute 'username'\n\nThe error appears to have been in '/etc/ansible/roles/thedumbtechguy.manage-users/tasks/create_users.yml': line 6, column 3, but may\nbe elsewhere in the file depending on the exact syntax problem.\n\nThe offending line appears to be:\n\n\n- name: create user\n ^ here\n"}

to retry, use: --limit @/var/lib/semaphore/repository_1/playbooks/setup_new_hosts.retry
```

|

##### ISSUE TYPE

* Bug Report

##### COMPONENT NAME

ansible-vault or jinja

##### ANSIBLE VERSION

```
ansible 2.3.0.0
  config file = /etc/ansible/ansible.cfg
  configured module search path = Default w/o overrides
  python version = 2.7.12 (default, Nov 19 2016, 06:48:10) [GCC 5.4.0 20160609]
```

##### CONFIGURATION

No special configuration

##### OS / ENVIRONMENT

Ubuntu 16.04

##### SUMMARY

When the newly introduced single encrypted variables are used in lists or dictionaries, these cannot be looped over as expected with `with_items` or `with_dict`. The list or dictionary is somehow treated as one object and not as a "loopable" object.

##### STEPS TO REPRODUCE

Simple playbook containing a single encrypted variable `vaulted` in the list

`test_with_vaulted_variable`. The vault password is "test" (without quotes)

and the `vaulted` variable content is "vaulted variable".

```yaml
---
- hosts: all
  vars:
    test_without_vaulted_variable:
      - not_vaulted: not vaulted variable
      - another_standard_variable: standard
    test_with_vaulted_variable:
      - not_vaulted: not vaulted variable
      - vaulted: !vault |
          $ANSIBLE_VAULT;1.1;AES256
          66376230363937306331353166333731633037326166626530393462636666346630366463313134
          6635313236366537346339313338633539643665313931390a373264326437663530616630623734
          31666136343232666235323865653838393830613432343561633465333837633531643564343064
          3237353766313835310a643963313163663632623064313034363531356330653131303833646138
          65366139376134396231353864383662623832376239336433623630383464303161
  tasks:
    - debug: var=test_without_vaulted_variable
    - debug: var=test_with_vaulted_variable
    - debug:
      with_items: "{{ test_without_vaulted_variable }}"
    - debug:
      with_items: "{{ test_with_vaulted_variable }}"
```

Start the play with the following command (and enter the vault password "test"):

```shell
ansible-playbook -i 'lavego-test,' vault-with-items.yml --ask-vault-pass
```

##### EXPECTED RESULTS

The list processing should be the same for the lists `test_without_vaulted_variable` and `test_with_vaulted_variable`: the loops should output each element of each list.

##### ACTUAL RESULTS

The list containing the vaulted variable is not looped over as expected. In the last debug statement, note that only one "Hello world!" message is printed instead of two (one per element, as for the list without the vaulted variable).

Note also that the normal debug output of the two lists differs (first and second debug statements): the list containing the vaulted variable "has no structure".

$ ansible-playbook -i 'localhost,' vault-with-items.yml --ask-vault-pass

Vault password:

PLAY [all] *********************************************************************************************************************************************************************************************************

TASK [Gathering Facts] *********************************************************************************************************************************************************************************************

ok: [localhost]

TASK [debug] *******************************************************************************************************************************************************************************************************

ok: [localhost] => {

"changed": false,

"test_without_vaulted_variable": [

{

"not_vaulted": "not vaulted variable"

},

{

"another_standard_variable": "standard"

}

]

}

TASK [debug] *******************************************************************************************************************************************************************************************************

ok: [localhost] => {

"changed": false,

"test_with_vaulted_variable": "[{u'not_vaulted': u'not vaulted variable'}, {u'vaulted': AnsibleVaultEncryptedUnicode($ANSIBLE_VAULT;1.1;AES256\n66376230363937306331353166333731633037326166626530393462636666346630366463313134\n6635313236366537346339313338633539643665313931390a373264326437663530616630623734\n31666136343232666235323865653838393830613432343561633465333837633531643564343064\n3237353766313835310a643963313163663632623064313034363531356330653131303833646138\n65366139376134396231353864383662623832376239336433623630383464303161\n)}]"

}

TASK [debug] *******************************************************************************************************************************************************************************************************

ok: [localhost] => (item={u'not_vaulted': u'not vaulted variable'}) => {

"item": {

"not_vaulted": "not vaulted variable"

},

"msg": "Hello world!"

}

ok: [localhost] => (item={u'another_standard_variable': u'standard'}) => {

"item": {

"another_standard_variable": "standard"

},

"msg": "Hello world!"

}

TASK [debug] *******************************************************************************************************************************************************************************************************

ok: [localhost => (item=[{u'not_vaulted': u'not vaulted variable'}, {u'vaulted': AnsibleVaultEncryptedUnicode($ANSIBLE_VAULT;1.1;AES256

66376230363937306331353166333731633037326166626530393462636666346630366463313134

6635313236366537346339313338633539643665313931390a373264326437663530616630623734

31666136343232666235323865653838393830613432343561633465333837633531643564343064

3237353766313835310a643963313163663632623064313034363531356330653131303833646138

65366139376134396231353864383662623832376239336433623630383464303161

)}]) => {

"item": "[{u'not_vaulted': u'not vaulted variable'}, {u'vaulted': AnsibleVaultEncryptedUnicode($ANSIBLE_VAULT;1.1;AES256\n66376230363937306331353166333731633037326166626530393462636666346630366463313134\n6635313236366537346339313338633539643665313931390a373264326437663530616630623734\n31666136343232666235323865653838393830613432343561633465333837633531643564343064\n3237353766313835310a643963313163663632623064313034363531356330653131303833646138\n65366139376134396231353864383662623832376239336433623630383464303161\n)}]",

"msg": "Hello world!"

}

PLAY RECAP *********************************************************************************************************************************************************************************************************

localhost : ok=5 changed=0 unreachable=0 failed=0

Thank you for your help!

| 1 |

Let's add a link on the atom-packages website that opens the editor and installs the selected package, something like an App Store link.

|

I believe we casually discussed this in person or in chat, but I couldn't find a follow-up issue. I think it would be really excellent. In the meantime, we can work around it on the site with instructions for installing through the editor or the CLI, but that's a bit clunky.

| 1 |

# Summary of the new feature/enhancement

I think some applications are used very frequently, so it would be a good idea to show a list of pinned and most-used applications. That way, we wouldn't need to type a keyword to find them again.

# Proposed technical implementation details (optional)

The list can be above the input textbox or at the sides of it.

Items could be selected with "ALT + number", so my finger does not have to leave the ALT key after pressing "ALT + Space".

|

Hi everybody! What about a new FancyZones feature: dynamic resizing of the zone hosting the currently focused application? I'm thinking of something like resizing windows in tiling window managers such as i3 :)

| 0 |

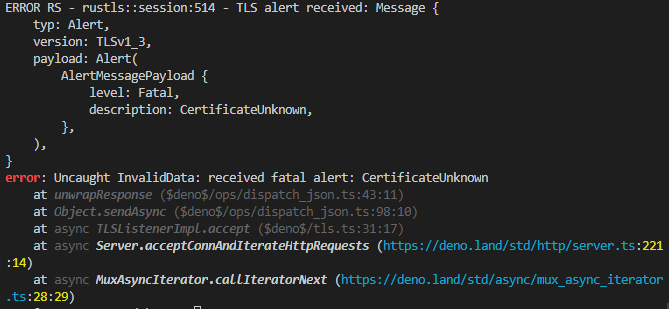

**Context:**

* Playwright Version: 1.12

* Operating System: Mac

* Node.js version: 14.6

* Browser: Firefox

* Extra: NA

**Code Snippet**

Help us help you! Put down a short code snippet that illustrates your bug and

that we can run and debug locally. For example:

const {firefox} = require('playwright');

(async () => {

const browser = await firefox.launch();

const page = await browser.newPage();

await page.goto("Page with SELF SIGNED certificate"); // placeholder: URL of a page served with a self-signed certificate

// ...

})();

**Describe the bug**

We are facing issues with self-signed certificates on Firefox: when calling browser.newPage() we get SEC_ERROR_UNKNOWN_ISSUER.

Can you please fix this behaviour for self-signed certificates on Firefox?

|

We are facing issues with self-signed certificates on Firefox: when calling browser.newPage() we get SEC_ERROR_UNKNOWN_ISSUER, which is resolved by setting ignoreHTTPSErrors: true.

Can we allow self-signed certificates by default, or provide a way to pass this setting in the launchServer options?

Working code:

const browser = await firefox.connect({

wsEndpoint: "ws://127.0.0.1:55614/a4de39415b37282b3f8ee16845753bf8",

});

const context = await browser.newContext({

ignoreHTTPSErrors: true

});

// Use the default browser context to create a new tab and navigate to URL

const page = await context.newPage();

Not Working:

const browser = await firefox.connect({

wsEndpoint: "ws://127.0.0.1:55614/a4de39415b37282b3f8ee16845753bf8",

});

// Use the default browser context to create a new tab and navigate to URL

const page = await browser.newPage();

| 1 |

I am trying to compile TensorFlow from source. I can build it **successfully** with CPU support only (i.e. without `--config=cuda`).

But when I try to build it with GPU support, I get this error:

[chaowei@node07 tensorflow]$ export EXTRA_BAZEL_ARGS='-s --verbose_failures --ignore_unsupported_sandboxing --genrule_strategy=standalone --spawn_strategy=standalone --jobs 8'

[chaowei@node07 tensorflow]$

[chaowei@node07 tensorflow]$ /gpfs/home/chaowei/download/bazel-0.1.5/output/bazel build -c opt --config=cuda --linkopt '-lrt' --copt="-DGPR_BACKWARDS_COMPATIBILITY_MODE" --conlyopt="-std=c99" //tensorflow/tools/pip_package:build_pip_package

...........

WARNING: Sandboxed execution is not supported on your system and thus hermeticity of actions cannot be guaranteed. See http://bazel.io/docs/bazel-user-manual.html#sandboxing for more information. You can turn off this warning via --ignore_unsupported_sandboxing.

INFO: Found 1 target...

ERROR: /gpfs/home/chaowei/.cache/bazel/_bazel_chaowei/2ce35f089de902cec16e4a2c6a450834/external/grpc/BUILD:485:1: C++ compilation of rule '@grpc//:grpc_unsecure' failed: gcc failed: error executing command /gpfs/home/chaowei/software/gcc-6.1.0/bin/gcc -U_FORTIFY_SOURCE '-D_FORTIFY_SOURCE=1' -fstack-protector -fPIE -Wall -Wunused-but-set-parameter -Wno-free-nonheap-object -fno-omit-frame-pointer -g0 -O2 ... (remaining 39 argument(s) skipped): com.google.devtools.build.lib.shell.BadExitStatusException: Process exited with status 1.

external/grpc/src/core/compression/message_compress.c:41:18: fatal error: zlib.h: No such file or directory

#include <zlib.h>

^

compilation terminated.

Target //tensorflow/tools/pip_package:build_pip_package failed to build

Use --verbose_failures to see the command lines of failed build steps.

INFO: Elapsed time: 71.894s, Critical Path: 58.77s

**I also compiled Python 3 from source on my computer, and `import zlib` works fine.**

Here is the information of my system:

[chaowei@mgt ~]$ cat /etc/redhat-release

Red Hat Enterprise Linux Server release 6.5 (Santiago)

[chaowei@node07 gcc-6.1.0]$ gcc -v

Using built-in specs.

COLLECT_GCC=gcc

COLLECT_LTO_WRAPPER=/gpfs/home/chaowei/software/gcc-6.1.0/libexec/gcc/x86_64-pc-linux-gnu/6.1.0/lto-wrapper

Target: x86_64-pc-linux-gnu

Configured with: ./configure --prefix=/gpfs/home/chaowei/software/gcc-6.1.0

Thread model: posix

gcc version 6.1.0 (GCC)

Why do I get the `zlib.h` error only when I build TensorFlow with GPU support?

|

### Environment info

Operating System: Red Hat Enterprise Linux Server release 7.2

Installed version of CUDA and cuDNN: CUDA 7, cuDNN 4

(please attach the output of `ls -l /path/to/cuda/lib/libcud*`):

-rw-r--r-- 1 root root 179466 Jan 26 22:19 /usr/local/cuda/lib/libcudadevrt.a

lrwxrwxrwx 1 root root 16 Jan 26 22:19 /usr/local/cuda/lib/libcudart.so -> libcudart.so.7.0

lrwxrwxrwx 1 root root 19 Jan 26 22:19 /usr/local/cuda/lib/libcudart.so.7.0 -> libcudart.so.7.0.28

-rwxr-xr-x 1 root root 303052 Jan 26 22:19 /usr/local/cuda/lib/libcudart.so.7.0.28

-rw-r--r-- 1 root root 546514 Jan 26 22:19 /usr/local/cuda/lib/libcudart_static.a

cuDNN is installed for the local user only.

If installed from sources, provide the commit hash:

`b289bc7`

### Steps to reproduce

I have followed the instructions at:

https://www.tensorflow.org/versions/r0.8/get_started/os_setup.html#requirements

Since I am not root, I had to install everything for the local user, but it

seems to have worked.

I get an error at this line:

bazel build -c opt --config=cuda //tensorflow/cc:tutorials_example_trainer

Saying:

ERROR: [...]/vlad/.cache/bazel/_bazel_vlad/6607a39fc04ec931b523fac975ff3100/external/png_archive/BUILD:23:1: Executing genrule @png_archive//:configure failed: bash failed: error executing command /bin/bash -c ... (remaining 1 argument(s) skipped): com.google.devtools.build.lib.shell.BadExitStatusException: Process exited with status 1.

[...]/vlad/.cache/bazel/_bazel_vlad/6607a39fc04ec931b523fac975ff3100/tensorflow/external/png_archive/libpng-1.2.53

[...]/vlad/.cache/bazel/_bazel_vlad/6607a39fc04ec931b523fac975ff3100/tensorflow

/tmp/tmp.pCUaj9eIKr

[...]/vlad/.cache/bazel/_bazel_vlad/6607a39fc04ec931b523fac975ff3100/tensorflow/external/png_archive/libpng-1.2.53

[...]/vlad/.cache/bazel/_bazel_vlad/6607a39fc04ec931b523fac975ff3100/tensorflow

... a bunch more lines until:

checking for pow... no

checking for pow in -lm... yes

checking for zlibVersion in -lz... no

**configure: error: zlib not installed**

Target //tensorflow/cc:tutorials_example_trainer failed to build

Use --verbose_failures to see the command lines of failed build steps.

INFO: Elapsed time: 57.196s, Critical Path: 22.12s

### What have you tried?

I installed zlib, and the following program compiles with g++

#include <cstdio>

#include <zlib.h>

int main()

{

printf("Hello world");

return 0;

}

I have the following in my .bashrc:

export LD_LIBRARY_PATH="$HOME/local/cuda/lib64:$LD_LIBRARY_PATH"

export LD_LIBRARY_PATH="$HOME/bin/zlibdev/lib:$LD_LIBRARY_PATH"

export CPATH="$HOME/local/cuda/include:$CPATH"

export CPATH="$HOME/bin/zlibdev/include:$CPATH"

export LIBRARY_PATH="$HOME/local/cuda/lib64:$LIBRARY_PATH"

export PKG_CONFIG_PATH="$HOME/bin/zlibdev/lib/pkgconfig"

Why can't bazel / tensorflow find zlib.h? It's there and accessible.

| 1 |

### Preflight Checklist

* I have read the Contributing Guidelines for this project.

* I agree to follow the Code of Conduct that this project adheres to.

* I have searched the issue tracker for an issue that matches the one I want to file, without success.

### Issue Details

* **Electron Version:**

* 6.0.10

* **Operating System:**

* Windows 7 / macOS 10.13.6

* **Last Known Working Electron version:**

* 4.1.5

### Expected Behavior

Electron Main Process should receive incoming `messages` from another process.

### Actual Behavior

Electron Main Process can only send messages; it does not receive them.

### To Reproduce

TBD

### Screenshots

### Additional Information

Does anyone suspect a reason this wouldn't work? I reviewed the changelog meticulously but didn't see anything relevant in either 5.x or 6.x. I see the sandbox is enabled by default, but as far as I can tell it only applies to the `renderer` processes, not the `main` process. I currently have no clue what the cause could be, but if any Electron team developer or anyone else could point me in the right direction, I'd appreciate it hugely.

|

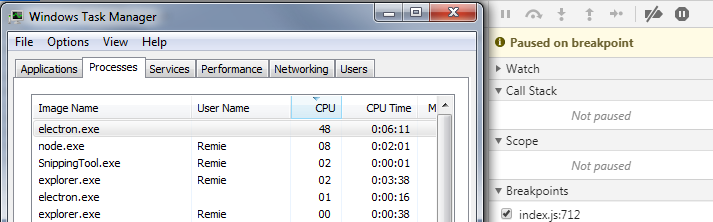

### Preflight Checklist

* I have read the Contributing Guidelines for this project.

* I agree to follow the Code of Conduct that this project adheres to.

* I have searched the issue tracker for an issue that matches the one I want to file, without success.

# Issue Details

* **Electron Version:** `6.0.10`

* **Operating System:** `Windows 7`

* **Last Known Working Electron version:** `4.1.5`

### Expected Behavior

The debugger does not bug out, freeze, or consume CPU indefinitely.

### Actual Behavior

Debugging innocuous and functional code that was debuggable in Electron 4 can

lead to freezes that require destroying the BrowserWindow or restarting

Electron entirely.

### To Reproduce

No idea :(

### Screenshots

### Additional Information

After upgrading, setting breakpoints in working code that was previously debuggable in Electron 4 causes problems. That code hasn't changed, and the debugger worked fine with it in Electron 4. I've been working on new code and assumed the issue was caused by a bug in it until just now.

It will stop properly at the breakpoints (in a number of places in my code),

and then CPU usage spikes and stays high, taking up 40-45% CPU on my dual core

machine. It appears to respond, but if you resume or step ahead nothing

happens. If you press Pause script execution, similarly nothing seems to

happen except the UI updates. CPU usage remains the same throughout. This code

also runs properly when not debugging and last time I ran it in React Native

and debugged with React Native Debugger (based on Electron 1.8 I think?)

When I press Ctrl+P, the quick navigation panel comes up as expected, but it lists no files except the loaded HTML file. Any additional files no longer appear.

Electron 6 is based on Chromium 76, and on some machines Chrome 76 (and 77)

shoots to using a full CPU core and doesn't stop until the process is ended. I

wonder if perhaps I'm seeing the same thing here.

| 1 |

My app doesn't need `werkzeug` directly except for type annotations of views

that contain a `redirect` call, even when I explicitly provide

`Response=flask.Response`.

flask/src/flask/helpers.py, lines 233 to 235 in 5cdfeae:

def redirect(

location: str, code: int = 302, Response: t.Optional[t.Type["BaseResponse"]] = None

) -> "BaseResponse":

I think this can be achieved in a similar way to `classmethod`s:

_BaseResponse = t.TypeVar("_BaseResponse", bound="BaseResponse")

def redirect(

location: str, code: int = 302, Response: t.Optional[t.Type[_BaseResponse]] = None

) -> _BaseResponse:
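A minimal, self-contained sketch of the `TypeVar` approach that runs without Flask or Werkzeug. `BaseResponse` and `MyResponse` are hypothetical stand-ins, and the body (instantiate the class, set a `Location` header) is a simplified assumption rather than Flask's actual implementation. The point is the signature: a bound `TypeVar` lets a type checker infer the concrete subclass passed as `Response`.

```python
import typing as t


class BaseResponse:
    """Hypothetical stand-in for Werkzeug's response base class."""

    def __init__(self, status: int = 200) -> None:
        self.status = status
        self.headers: t.Dict[str, str] = {}


class MyResponse(BaseResponse):
    """Hypothetical app-specific subclass (what flask.Response would be)."""


# Bound TypeVar: whichever subclass the caller passes is the declared return type.
_BaseResponse = t.TypeVar("_BaseResponse", bound=BaseResponse)


def redirect(
    location: str,
    code: int = 302,
    Response: t.Optional[t.Type[_BaseResponse]] = None,
) -> _BaseResponse:
    # Fall back to the base class when no response class is given.
    cls = Response if Response is not None else BaseResponse
    response = cls(status=code)
    response.headers["Location"] = location
    # The None fallback yields a plain BaseResponse, which a checker cannot
    # prove matches _BaseResponse; typing.overload would express it exactly.
    return response  # type: ignore[return-value]


resp = redirect("/login", Response=MyResponse)  # inferred as MyResponse
print(type(resp).__name__, resp.status, resp.headers["Location"])
```

With this signature, a view annotated `-> flask.Response` could return `redirect(..., Response=flask.Response)` without importing anything from Werkzeug.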

|

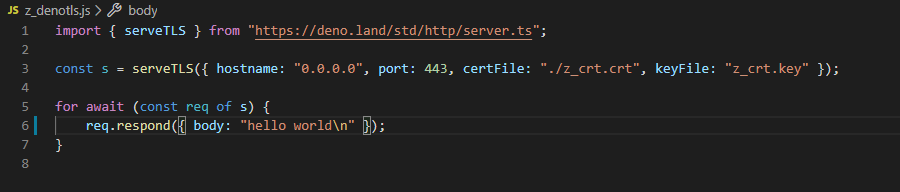

For 3-4 days now I have been struggling to get a hello world app up and running on a remote server. It would be nice if there were documentation covering, from A to Z, how to get that done and working.

http://flask.pocoo.org/docs/deploying/mod_wsgi/

The current documentation does not give an example of what exactly to put in the Python file, or which URL to open in the web browser to get the Python module running on the remote server. It is okay to reference other pages where that is more logical, but the idea is to have a self-contained page that I can start reading and, by the time I reach the end, have a working remote hello world.

This would be much appreciated.

| 0 |

TensorFlow should have a Rust interface.

Original e-mail:

I'd like to write Rust bindings for TensorFlow, and I had a few questions.

First of all, is anyone already working on this, and if so, can I lend a hand?

If not, is this something the TensorFlow team would be interested in? I assume

that the TensorFlow team would not be willing to commit right now to

supporting Rust, so I thought a separate open source project (with the option

to fold into the main project later) would be the way to go.

|

### System information

* **Have I written custom code (as opposed to using a stock example script provided in TensorFlow)** :

No

* **OS Platform and Distribution (e.g., Linux Ubuntu 16.04)** :

Ubuntu 14.04

* **TensorFlow installed from (source or binary)** :

pip install

* **TensorFlow version (use command below)** :

1.2.1

* **Python version** :

3.6

* **Exact command to reproduce** :

`tensorboard --logdir=gs://mybucket `

### Describe the problem

When I run TensorBoard with a Google Cloud Storage bucket as the log directory, the following error occurs:

`tensorflow.python.framework.errors_impl.UnimplementedError: File system scheme gs not implemented`

This happens even after authenticating with:

`gcloud auth application-default login`

### Source code / logs

I was following this guide on training a pet object detector

| 0 |

Hard to reproduce. I attached an array where this happens:

import numpy as np

np.seterr(all='raise')
a = np.load('weird_array.npy')
print(a.shape, a.dtype)
for i, val in enumerate(a):
    try:
        np.isfinite(np.array(val, ndmin=1))
    except FloatingPointError:
        strange_index = i
        print(type(val))
        print(val.__class__.__name__)
        print(i)
        print(val)
print(np.isfinite(a[strange_index]))

Results in:

<class 'numpy.float64'>

float64

1023450

nan

Traceback (most recent call last):

File "test.py", line 15, in <module>

print(np.isfinite(a[strange_index]))

FloatingPointError: invalid value encountered in isfinite

weird_array.zip

|

My code failed with a `FloatingPointError` because `isfinite` encountered an

invalid value. The offending value was... `nan`. Apparently, it was _the wrong

kind of nan_. I reproduced it as follows:

# (earlier: seterr(all='raise'))

In [204]: x = uint32(0x7f831681).view("<f4")

In [205]: print(x)

nan

In [206]: isnan(x)

---------------------------------------------------------------------------

FloatingPointError Traceback (most recent call last)

<ipython-input-206-b5e847e0f3bf> in <module>()

----> 1 isnan(x)

FloatingPointError: invalid value encountered in isnan

In [207]: isfinite(x)

---------------------------------------------------------------------------

FloatingPointError Traceback (most recent call last)

<ipython-input-207-3d4ef4d5266d> in <module>()

----> 1 isfinite(x)

FloatingPointError: invalid value encountered in isfinite

I'm not sure how this ended up in my data, but it was not on purpose.
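The bit pattern `0x7f831681` appears to be a *signaling* NaN: in IEEE 754 binary32 its exponent bits are all ones and its mantissa is non-zero (so it is a NaN), but the most significant mantissa bit, the "quiet" bit, is clear. On common hardware such as x86, operating on a signaling NaN typically raises the invalid-operation flag, which `np.seterr(all='raise')` turns into `FloatingPointError`. A minimal stdlib-only sketch for classifying raw bit patterns; the helper names are hypothetical, and it assumes the IEEE 754-2008 convention (used by x86 and ARM) that a set quiet bit marks a quiet NaN.

```python
import struct


def classify_nan_bits(bits: int, width: int = 32) -> str:
    """Classify a raw IEEE 754 bit pattern as 'not-nan', 'quiet' or 'signaling'."""
    if width == 32:
        exp_mask, mant_mask, quiet_bit = 0x7F800000, 0x007FFFFF, 0x00400000
    elif width == 64:
        exp_mask = 0x7FF0000000000000
        mant_mask = 0x000FFFFFFFFFFFFF
        quiet_bit = 0x0008000000000000
    else:
        raise ValueError("width must be 32 or 64")
    # A NaN has every exponent bit set and a non-zero mantissa (sign is ignored).
    if (bits & exp_mask) != exp_mask or (bits & mant_mask) == 0:
        return "not-nan"
    # The top mantissa bit distinguishes quiet from signaling NaNs.
    return "quiet" if bits & quiet_bit else "signaling"


def float64_bits(x: float) -> int:
    """Return the raw 64-bit pattern of a Python float."""
    return struct.unpack("<Q", struct.pack("<d", x))[0]


print(classify_nan_bits(0x7F831681))  # signaling (the value from the report)
print(classify_nan_bits(0x7FC00000))  # quiet (the canonical float32 NaN)
print(classify_nan_bits(float64_bits(float("nan")), width=64))
```

For a NumPy array, the same check could be applied to `arr.view(np.uint32)` (or `np.uint64` for float64 data) to locate such values before calling `isfinite`.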

| 1 |

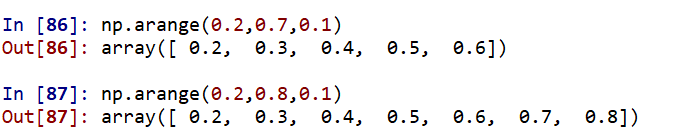

When I create an array from 0.2 to 0.8, the maximum value in the array appears to be 0.8, but when I take the max of the whole array, the result differs from 0.8 in the 15th and 16th decimal places.

When I do the same with 0.7 as the end value, the maximum of the array is 0.60000000009.. and 0.7 is not included in the array.

Please find the screenshots attached.

|

If I do `np.arange(3.18,3.21,0.01)`, it gives `array([ 3.18, 3.19, 3.2 ])`.

However, if I do `np.arange(3.18,3.22,0.01)`, it gives `array([ 3.18, 3.19,

3.2 , 3.21, 3.22])`. This seems inconsistent. Why is this so? I am using numpy

1.11.2 on Python 2.7.13.
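This looks like floating-point rounding rather than inconsistent logic: with a non-integer step, `np.arange` sizes its output as `ceil((stop - start)/step)`, and because 3.18, 3.22 and 0.01 are not exactly representable in binary, that quotient can land slightly above an integer and add one extra element. The NumPy documentation suggests `np.linspace` for non-integer steps. A minimal sketch of two ways to get predictable endpoints; the function names are hypothetical.

```python
import numpy as np


def grid_by_count(start: float, stop: float, num: int) -> np.ndarray:
    """Evenly spaced values with an explicit count; both endpoints included."""
    return np.linspace(start, stop, num)


def grid_by_hundredths(start_cents: int, stop_cents: int) -> np.ndarray:
    """Half-open range in steps of 0.01, built from exact integers.

    Integer arange has no rounding in its length, so the stop value is
    reliably excluded; the division afterwards only rounds each element.
    """
    return np.arange(start_cents, stop_cents) / 100


print(grid_by_count(3.18, 3.22, 5))
print(grid_by_hundredths(318, 322))
```

Fixing the count (or working in integer units) moves the endpoint decision out of floating-point arithmetic.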

| 1 |

Been using React (Native) for half a year now, really enjoying it! I'm no

expert but have run up against what seems like a weakness in the framework

that I'd like to bring up.

**The problem**

_Sending one-off events down the chain (parent-to-child) in a way that works

with the component lifecycle._

The issue arises from the fact that props are semi-persistent values, which

differs in nature from one-time events. So for example if a deep-link URL was

received you want to say 'respond to this once when you're ready', not 'store

this URL'. The mechanism of caching a one-time event value breaks down if the

same URL is then sent again, which is a valid event case.

Children have an easy and elegant way to communicate back to parents via

callbacks, but there doesn't seem to be a way to do this same basic thing the

other direction.

**Example cases**

* A deep-link was received and an app wants to tell child pages to respond appropriately

* A tab navigator wants to tell a child to scroll to top on secondary tap

* A list view wants to trigger all of its list items to animate each time the page is shown

From everything I've read, the two normal ways to do this are 1) call a method

on a child directly using a ref, or 2) emit an event that children may listen

for. But those ignore the component lifecycle, so the child isn't ready to

receive a direct call or event yet.

These also feel clunky compared to the elegance of React's architecture. But

React is a one-way top-down model, so the idea of passing one-time events down

the component chain seems like it would fit nicely and be a real improvement.

**Best workarounds we've found**

* Add a 'trigger' state variable in the parent that is a number, and wire this to children. Children use a lifecycle method to sniff for a change to their trigger prop, and then do a known action. We've done this a bunch now to handle some of the cases listed above.

* (really tacky) Set and then clear a prop immediately after setting it. Yuck.

Is there some React way to solve this common need? If so, no one on our team knows of one, and the few articles I've found on the web about component communication only suggest dispatching events or calling methods directly via refs. Thanks for the open discussion!

|

**Do you want to request a _feature_ or report a _bug_?**

Bug

**What is the current behavior?**

When an undefined object is assigned a property, the component in which this

is done re-renders.

**If the current behavior is a bug, please provide the steps to reproduce and

if possible a minimal demo of the problem. Your bug will get fixed much faster

if we can run your code and it doesn't have dependencies other than React.

Paste the link to your JSFiddle (https://jsfiddle.net/Luktwrdm/) or

CodeSandbox (https://codesandbox.io/s/new) example below:**

function ashwin() {

let obj = undefined;

obj["hasBasket"] = true;

}

Call this function inside a function component. The component renders again before the error is thrown.

https://codesandbox.io/s/priceless-wing-6xh8r

**What is the expected behavior?**

Shouldn't render again and just throw an error

**Which versions of React, and which browser / OS are affected by this issue?

Did this work in previous versions of React?**

React: 16.10.2

Was able to reproduce it in chrome and safari.

Didn't test it in previous versions of React

| 0 |

Please add a standardized, easy way to implement dependent select form fields.

|

We have long had the problem of creating fields that depend on the values of other fields. See also:

* #3767

* #3768

* #4548

I want to propose a solution that seems feasible from my current point of

view.

Currently, I can think of two different APIs:

##### API 1

<?php

$builder->addIf(function (FormInterface $form) {

return $form->get('field1')->getData() >= 1

&& !$form->get('field2')->getData();

}, 'myfield', 'text');

$builder->addUnless(function (FormInterface $form) {

return $form->get('field1')->getData() < 1

|| $form->get('field2')->getData();

}, 'myfield', 'text');

##### API 2

<?php

$builder

->_if(function (FormInterface $form) {

return $form->get('field1')->getData() >= 1

&& !$form->get('field2')->getData();

})

->add('myfield', 'text')

->add('myotherfield', 'text')

->_endif()

;

$builder

->_switch(function (FormInterface $form) {

return $form->get('field1')->getData();

})

->_case('foo')

->_case('bar')

->add('myfield', 'text', array('foo' => 'bar'))

->add('myotherfield', 'text')

->_case('baz')

->add('myfield', 'text', array('foo' => 'baz'))

->_default()

->add('myfield', 'text')

->_endswitch()

;

The second API obviously is a lot more expressive, but also a bit more

complicated than the first one.

Please give me your opinions on what API you prefer or whether you can think

of further limitations in these APIs.

##### Implementation

The issue of creating dependencies between fields can be solved by a lazy

dependency resolution graph like in the OptionsResolver.

During form prepopulation, the conditions are invoked with a

`FormPrepopulator` object implementing `FormInterface`. When

`FormPrepopulator::get('field')` is called, "field" is prepopulated. If

"field" is also dependent on some condition, that condition will be evaluated

now in order to construct "field". After evaluating the condition, fields are

added or removed accordingly.

During form binding, the conditions are invoked with a `FormBinder` object, which also implements `FormInterface`. This object works like `FormPrepopulator`, except that it binds the fields instead of filling them with default data.

In both cases, circular dependencies can be detected and reported.
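The lazy resolution described above can be illustrated outside Symfony. This is a hypothetical sketch in Python, not Symfony's `OptionsResolver` or any real form API: each field is produced by a callable that may request other fields through `get`, values are computed on first access and cached, and a field requested while it is still being computed is reported as a circular dependency.

```python
from typing import Any, Callable, Dict, Set


class CircularDependencyError(Exception):
    pass


class LazyFieldResolver:
    """Hypothetical resolver: computes each field on demand and detects cycles."""

    def __init__(self, factories: Dict[str, Callable[["LazyFieldResolver"], Any]]):
        self._factories = factories
        self._values: Dict[str, Any] = {}
        self._resolving: Set[str] = set()  # fields currently being computed

    def get(self, name: str) -> Any:
        if name in self._values:
            return self._values[name]
        if name in self._resolving:
            raise CircularDependencyError(f"circular dependency on {name!r}")
        self._resolving.add(name)
        try:
            # The factory may call self.get() for the fields it depends on.
            value = self._factories[name](self)
        finally:
            self._resolving.discard(name)
        self._values[name] = value
        return value

    def resolve_all(self) -> Dict[str, Any]:
        for name in self._factories:
            self.get(name)
        return dict(self._values)


# Mirrors the API 1 condition: "myfield" is a text field only if
# field1 >= 1 and field2 is empty; otherwise it is absent (None).
fields = LazyFieldResolver({
    "myfield": lambda f: "text" if f.get("field1") >= 1 and not f.get("field2") else None,
    "field1": lambda f: 2,
    "field2": lambda f: "",
})
print(fields.resolve_all())
```

Because "myfield" is declared first but resolved only after the fields it reads, declaration order does not matter, which is the property the proposal relies on.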

| 1 |

### Preflight Checklist

* I have read the Contributing Guidelines for this project.

* I agree to follow the Code of Conduct that this project adheres to.

* I have searched the issue tracker for an issue that matches the one I want to file, without success.

### Issue Details

* **Electron Version:**

* 9.0.0 and later

* **Operating System:**

* macOS 10.13.6

* **Last Known Working Electron version:**

* 8.3.3

### Expected Behavior

In a BrowserWindow, set the following to allow cross-origin HTTP requests:

webPreferences: {

webSecurity: false

},

In older Electron versions, CORS requests work with this setting.

### Actual Behavior

Access to XMLHttpRequest at 'https://xxx' from origin 'http://localhost:9080'

has been blocked by CORS policy: No 'Access-Control-Allow-Origin' header is

present on the requested resource.

|

### Preflight Checklist

* I have read the Contributing Guidelines for this project.

* I agree to follow the Code of Conduct that this project adheres to.

* I have searched the issue tracker for an issue that matches the one I want to file, without success.

### Issue Details

* **Electron Version:**

12.0.0-beta.21

* **Operating System:**

Windows 10

* **Last Known Working Electron version:**

12.0.0-beta.20

### Expected Behavior

No crash.

### Actual Behavior

Crash reported to Sentry using minidump uploader:

https://sentry.io/share/issue/70e843318a304dae9616805c3223a1cc/

### To Reproduce

This might be enough:

Use Electron.WebRequest.onBeforeRequest(

{

urls: [],

},

({ url }, callback) => {

if (url.startsWith('https://test..com')) {

callback({ cancel: true });

} else {

callback({ cancel: false });

}

},

);

| 0 |

**Dave Syer** opened **SPR-5850** and commented

Provide a ContextLoader for WebApplicationContext: some components (e.g. View implementations) are hard or impossible to test without an instance of WebApplicationContext.

* * *

**Affects:** 3.0 M3

**Issue Links:**

* #9917 Support loading WebApplicationContexts with the TestContext Framework ( _ **"duplicates"**_ )

|

**Fritz Richter** opened **SPR-7784** and commented

In my current webapp project, I found that if I post something to the server in the form ?list=1&list=2&list=3, and my controller has a mapping with the parameter `@RequestParam` List<Long>, the list contains String objects, not Long objects.

* * *

**Affects:** 3.0.5

**Issue Links:**

* #12437 `@RequestParam` \- wanting List getting List ( _ **"duplicates"**_ )

* #12437 `@RequestParam` \- wanting List getting List

| 0 |

Is it possible to build a subset of Babel that only provides the `transform` function, for compiling ES6/ES7/JSX to JS in the browser? Does it generally have to be this huge? Are there any tricks for using it with webpack?

`JSXTransformer` and `react-tools` are going away, and Babel seems to be the only remaining option for transpiling JSX in the browser; however, it's 10 times bigger than those two, which is often quite a problem.

|

The current size of `browser-polyfill.min.js` is ~80 kB, and it could be reduced significantly. By default, Browserify keeps module paths in the bundle, which isn't required.

The full shim version of `core-js` is 65 kB with `browserify` (without `bundle-collapser`) and 43 kB with `webpack`.

We could use `webpack` or add `bundle-collapser`. I think the same could be done with `browser.js`.

| 1 |

##### Issue Type:

Bug Report

##### Ansible Version:

Bug on current git devel, introduced with `eeb5973`

##### Environment:

Ubuntu 12.04 and 14.04

##### Summary:

When some filters are applied to a list and the resulting list is empty, it is no longer recognised as a list.

##### Steps To Reproduce:

---
- hosts: localhost
  gather_facts: false
  connection: local
  tasks:
    - command: cat /proc/cpuinfo
      register: cpuinfo
    - debug: var=cpuinfo.stdout_lines|difference(cpuinfo.stdout_lines)
    - debug: var=item
      with_items: cpuinfo.stdout_lines|difference(cpuinfo.stdout_lines)

##### Expected Results:

TASK: [debug var=cpuinfo.stdout_lines|difference(cpuinfo.stdout_lines)] *******

ok: [localhost] => {

"cpuinfo.stdout_lines|difference(cpuinfo.stdout_lines)": "set([])"

}

TASK: [debug var=item] ********************************************************

skipping: [localhost]

##### Actual Results:

TASK: [debug var=cpuinfo.stdout_lines|difference(cpuinfo.stdout_lines)] *******

ok: [localhost] => {

"cpuinfo.stdout_lines|difference(cpuinfo.stdout_lines)": "set([])"

}

TASK: [debug var=item] ********************************************************

fatal: [localhost] => with_items expects a list or a set

FATAL: all hosts have already failed -- aborting

|

##### Issue Type:

Bug Report

##### Ansible Version:

ansible 1.6.6

##### Environment:

What OS are you running Ansible from and what OS are you managing? Examples

include RHEL 5/6, Centos 5/6, Ubuntu 12.04/13.10, *BSD, Solaris. If this is a

generic feature request or it doesn't apply, just say “N/A”.

##### Summary:

I have an ansible task that looks like this:

- name: Distribute Jar files to all other cluster nodes
  shell: rsync -avz {{ home }}/.m2 {{ user }}@{{ item }}:{{ home }}/
  with_items: groups.hadoop_all | difference(inventory_hostname)
  when: groups.hadoop_all | difference(inventory_hostname) | length > 1
  ignore_errors: yes
  sudo: no
  tags:
    - distribute_jars

which works fine on ansible 1.6.5 but fails on 1.6.6

##### Steps To Reproduce:

Working on a reduced test case now.

##### Expected Results:

I would expect the playbook to complete without errors.

##### Actual Results:

The actual result is that it fails in 1.6.6 with this output:

TASK: [dev | Distribute Jar files to all other cluster nodes] ***********

fatal: [dev] => with_items expects a list or a set

FATAL: all hosts have already failed -- aborting

I've stepped back through Ansible versions on one machine, keeping everything else the same, and as soon as I upgrade to 1.6.6 it starts failing with this error. I'm using Ansible installed via pip, if that makes any difference.

| 1 |

Today I upgraded our codebase from r88 to r91. Everything seems to work fine except the reflectors (whose code has not changed significantly since r88, as far as I can see). Checking the upgrades one at a time, I noticed that the problem starts at r90.

Performance gets dramatically worse when our entire model and the mirror are in the frustum; when I'm right in front of the mirror with little else of the model in view, performance is OK. Since I can't provide a live example, could someone point me to where to look or investigate, or tell me what changed in that release that could affect reflectors?

##### Three.js version

* Dev

* r91

* r90

* r89

* r88

##### Browser

* All of them

* Chrome

* Firefox

* Internet Explorer

##### OS

* All of them

* Windows

* macOS

* Linux

* Android

* iOS

##### Hardware Requirements (graphics card, VR Device, ...)

|

Right now, there are two ways to adjust the positioning of a texture on an

object:

* UV coordinates in geometry

* `.offset` and `.repeat` properties on `Texture`

It would be useful to also have `.offset` and `.repeat` properties on

`Material` as well, so that these values could be varied on different objects

without having to allocate additional geometries or texture resources.

I'm considering an interesting use case: breaking down sky spheres/domes into

smaller components. Rather than create a single, large, solid sphere, imagine

a fraction of an icosahedron (subdivided) and repeated as multiple meshes to

construct a sphere out of pieces. It would cost a few extra draw calls, but

provide at least two benefits:

1. Significantly reduce the number of vertices computed by excluding the ones that are off camera, which is well over half. Spheres can have hundreds of vertices, depending on the level of detail. This is not such a big deal on desktop, but I suspect it could make a big difference on mobile devices with tiled GPU architectures, which may cause the vertex shader to run many times.

2. Reduce overdraw by fixing z-sorting. If an entire sky sphere is positioned at [0, 0, 0], it will almost certainly be sorted incorrectly and drawn first every time, even though it should be drawn last. By positioning individual sphere components far away, they should be correctly sorted last.

Implementing this approach today would require duplicating either the geometry

(for different UV coordinates) or the texture (for different offsets) for each

piece of the sky. If we could set the offset on the material, each piece would

use the same geometry, shader and sampler, only varying the uniform values

between draw calls.
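Assuming the per-material values would be applied the way three.js applies `Texture.offset`/`.repeat` (roughly `uv * repeat + offset` per vertex, ignoring the rotation and center options of later releases), the per-piece uniforms are simple to compute. A hypothetical sketch for a texture split into a `cols` by `rows` grid, where every sky piece shares one geometry whose UVs span 0..1 and only the two uniform values differ:

```python
from typing import List, Tuple

Vec2 = Tuple[float, float]


def tile_uniforms(cols: int, rows: int) -> List[Tuple[Vec2, Vec2]]:
    """(offset, repeat) pairs so each piece samples one tile of the texture."""
    repeat = (1.0 / cols, 1.0 / rows)
    # Row-major order: index = row * cols + col.
    return [((c / cols, r / rows), repeat) for r in range(rows) for c in range(cols)]


def transform_uv(uv: Vec2, offset: Vec2, repeat: Vec2) -> Vec2:
    """Apply uv * repeat + offset, as the shader would for each vertex."""
    return (uv[0] * repeat[0] + offset[0], uv[1] * repeat[1] + offset[1])


pieces = tile_uniforms(cols=4, rows=2)
offset, repeat = pieces[5]  # column 1, row 1
print(transform_uv((0.0, 0.0), offset, repeat))  # (0.25, 0.5)
print(transform_uv((1.0, 1.0), offset, repeat))  # (0.5, 1.0)
```

Only two small vectors change between draw calls, so the geometry, shader program and sampler can all be shared across pieces.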

| 0 |

# Checklist

* I have verified that the issue exists against the `master` branch of Celery.

* This has already been asked to the discussion group first.

* I have read the relevant section in the contribution guide on reporting bugs.

* I have checked the issues list for similar or identical bug reports.

* I have checked the pull requests list for existing proposed fixes.

* I have checked the commit log to find out if the bug was already fixed in the master branch.

* I have included all related issues and possible duplicate issues in this issue (If there are none, check this box anyway).

## Mandatory Debugging Information

* I have included the output of `celery -A proj report` in the issue. (If you are not able to do this, then at least specify the Celery version affected.)

* I have verified that the issue exists against the `master` branch of Celery.

* I have included the contents of `pip freeze` in the issue.

* I have included all the versions of all the external dependencies required to reproduce this bug.

## Optional Debugging Information

* I have tried reproducing the issue on more than one Python version and/or implementation.

* I have tried reproducing the issue on more than one message broker and/or result backend.

* I have tried reproducing the issue on more than one version of the message broker and/or result backend.

* I have tried reproducing the issue on more than one operating system.

* I have tried reproducing the issue on more than one workers pool.

* I have tried reproducing the issue with autoscaling, retries, ETA/Countdown & rate limits disabled.

* I have tried reproducing the issue after downgrading and/or upgrading Celery and its dependencies.

## Related Issues and Possible Duplicates

#### Related Issues

* None

#### Possible Duplicates

* None

## Environment & Settings

**Celery version** :

**`celery report` Output:**

# Steps to Reproduce

## Required Dependencies

* **Minimal Python Version** : N/A or Unknown

* **Minimal Celery Version** : N/A or Unknown

* **Minimal Kombu Version** : N/A or Unknown

* **Minimal Broker Version** : N/A or Unknown

* **Minimal Result Backend Version** : N/A or Unknown

* **Minimal OS and/or Kernel Version** : N/A or Unknown

* **Minimal Broker Client Version** : N/A or Unknown

* **Minimal Result Backend Client Version** : N/A or Unknown

### Python Packages

**`pip freeze` Output:**

### Other Dependencies

N/A

## Minimally Reproducible Test Case

Swap the commented line setting `c` for expected behaviour.

    import celery

    app = celery.Celery(broker="redis://", backend="redis://")

    @app.task
    def nop(*_):
        pass

    @app.task
    def die(*_):
        raise RuntimeError

    @app.task(bind=True)
    def replace(self, with_):
        with_ = celery.Signature.from_dict(with_)
        raise self.replace(with_)

    @app.task
    def cb(*args):
        print("CALLBACK", *args)

    #c = celery.chain(nop.s(), die.s())
    c = celery.chain(nop.s(), replace.si(die.s()))
    c.link_error(cb.s())
    c.apply_async()

# Expected Behavior

`cb` should be called as a new-style errback because it accepts star-args (`*args`).

# Actual Behavior

`cb` is not called
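The expected behaviour depends on how an errback is classified. As far as we understand, Celery treats an errback whose task function can take more than one positional argument (a `*args` parameter counts) as new-style and calls it with `(request, exc, traceback)`; otherwise it is treated as old-style and receives the failed task's id. The sketch below approximates that classification with the standard `inspect` module; `accepts_more_than`, `is_new_style_errback` and `old_style` are hypothetical helpers, not Celery's code.

```python
import inspect
from typing import Callable

_POSITIONAL = (
    inspect.Parameter.POSITIONAL_ONLY,
    inspect.Parameter.POSITIONAL_OR_KEYWORD,
)


def accepts_more_than(fun: Callable, n: int) -> bool:
    """Return True if `fun` can be called with more than `n` positional args."""
    count = 0
    for param in inspect.signature(fun).parameters.values():
        if param.kind is inspect.Parameter.VAR_POSITIONAL:
            return True  # *args accepts any number of positional arguments
        if param.kind in _POSITIONAL:
            count += 1
    return count > n


def is_new_style_errback(fun: Callable) -> bool:
    # Assumed rule (hypothetical): more than one positional parameter means the
    # errback can take (request, exc, traceback) instead of just a task id.
    return accepts_more_than(fun, 1)


def cb(*args):  # same signature as the errback in the test case
    print("CALLBACK", *args)


def old_style(task_id):  # hypothetical old-style errback
    print("failed:", task_id)


print(is_new_style_errback(cb))         # True
print(is_new_style_errback(old_style))  # False
```

Under this rule `cb` qualifies as new-style, which is why it is expected to be called even when the failing task was substituted via `replace`.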

|

when trying to start a worker.

    [2013-06-28 12:28:07,258: ERROR/MainProcess] Unrecoverable error: AttributeError("'Connection' object has no attribute 'setblocking'",)
    Traceback (most recent call last):
      File "/Users/yannick/.pythonbrew/pythons/Python-3.3.0/lib/python3.3/site-packages/celery-3.1.0rc3-py3.3.egg/celery/worker/__init__.py", line 189, in start
        self.blueprint.start(self)
      File "/Users/yannick/.pythonbrew/pythons/Python-3.3.0/lib/python3.3/site-packages/celery-3.1.0rc3-py3.3.egg/celery/bootsteps.py", line 119, in start
        step.start(parent)
      File "/Users/yannick/.pythonbrew/pythons/Python-3.3.0/lib/python3.3/site-packages/celery-3.1.0rc3-py3.3.egg/celery/bootsteps.py", line 352, in start
        return self.obj.start()
      File "/Users/yannick/.pythonbrew/pythons/Python-3.3.0/lib/python3.3/site-packages/celery-3.1.0rc3-py3.3.egg/celery/concurrency/base.py", line 112, in start
        self.on_start()
      File "/Users/yannick/.pythonbrew/pythons/Python-3.3.0/lib/python3.3/site-packages/celery-3.1.0rc3-py3.3.egg/celery/concurrency/processes.py", line 461, in on_start
        **self.options)
      File "/Users/yannick/.pythonbrew/pythons/Python-3.3.0/lib/python3.3/site-packages/celery-3.1.0rc3-py3.3.egg/celery/concurrency/processes.py", line 236, in __init__
        for _ in range(processes))
      File "/Users/yannick/.pythonbrew/pythons/Python-3.3.0/lib/python3.3/site-packages/celery-3.1.0rc3-py3.3.egg/celery/concurrency/processes.py", line 236, in <genexpr>
        for _ in range(processes))
      File "/Users/yannick/.pythonbrew/pythons/Python-3.3.0/lib/python3.3/site-packages/celery-3.1.0rc3-py3.3.egg/celery/concurrency/processes.py", line 268, in create_process_queues
        inq._writer.setblocking(0)
    AttributeError: 'Connection' object has no attribute 'setblocking'
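In the traceback, `inq._writer` is a `multiprocessing` `Connection`, which wraps an OS file descriptor and, unlike a socket, has no `setblocking()` method. A minimal sketch of making such a connection non-blocking through its descriptor instead, assuming a POSIX system and Python 3.5+ for `os.set_blocking` (newer than the Python 3.3 in this report); `make_nonblocking` is a hypothetical helper and not how Celery addressed the issue.

```python
import os
from multiprocessing import Pipe
from multiprocessing.connection import Connection


def make_nonblocking(conn: Connection) -> None:
    # Connection exposes its descriptor via fileno(); toggle O_NONBLOCK there.
    os.set_blocking(conn.fileno(), False)


reader, writer = Pipe(duplex=False)
print(hasattr(writer, "setblocking"))    # False
make_nonblocking(writer)
print(os.get_blocking(writer.fileno()))  # False
```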

| 0 |

Hello, folks.

Version 3.0.3 of bootstrap.css has ineffective rules for striped tables whose rows have .danger or other contextual classes when _tbody_ is used.

Given a table with the following structure...

    <table class="table table-striped table-hover">
      <tbody>
        <tr class="danger">
          <td>Row 1</td>
        </tr>
        <tr class="danger">
          <td>Row 2</td>
        </tr>
        <tr class="danger">
          <td>Row 3</td>
        </tr>
      </tbody>
    </table>

...only Row 2 gets emphasized with the _.danger_ contextual class rules. On hovering these rows, the normal emphasis returns, including the darker color on the shaded rows.

In dist, the _table-striped_ rule at bootstrap.css:1712 overrides the rule at bootstrap.css:1770, because the former contains a _tbody_ selector node, which makes it more specific than the latter, where this node is missing.
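The specificity argument can be checked with a simplified calculator. CSS specificity is compared as an (ids, classes/attributes/pseudo-classes, types) tuple, so when the first two components tie, one extra type selector such as _tbody_ decides the winner. The `specificity` function below is a hypothetical helper that ignores `:not()`, `:is()` and similar edge cases. The striped selector is assumed to have the shape of Bootstrap's `.table-striped > tbody > tr:nth-child(odd) > td`; `.table tr.danger > td` is an illustrative contextual selector without _tbody_, not copied from bootstrap.css.

```python
import re
from typing import Tuple


def specificity(selector: str) -> Tuple[int, int, int]:
    """Return (ids, classes/attributes/pseudo-classes, types/pseudo-elements)
    for one complex selector (simplified; no :not()/:is() handling)."""
    s = re.sub(r"\([^)]*\)", "", selector)         # drop arguments like (odd)
    ids = len(re.findall(r"#[\w-]+", s))
    attrs = len(re.findall(r"\[[^\]]*\]", s))
    s = re.sub(r"\[[^\]]*\]", "", s)
    pseudo_elements = len(re.findall(r"::[\w-]+", s))
    s = re.sub(r"::[\w-]+", "", s)
    classes = len(re.findall(r"\.[\w-]+", s))
    pseudo_classes = len(re.findall(r":[\w-]+", s))
    # Type selectors start a compound: at the beginning or after a combinator.
    types = len(re.findall(r"(?:^|[\s>+~])([a-zA-Z][\w-]*)", s))
    return (ids, classes + attrs + pseudo_classes, types + pseudo_elements)


striped = ".table-striped > tbody > tr:nth-child(odd) > td"
contextual = ".table tr.danger > td"  # hypothetical rule lacking tbody
print(specificity(striped))     # (0, 2, 3)
print(specificity(contextual))  # (0, 2, 2)
print(specificity(striped) > specificity(contextual))  # True: striped wins
```

Python compares tuples lexicographically, which matches how browsers compare specificity components.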

This bug was probably introduced by the super-specific rules for the .table-striped class in `224296f`/less/tables.less, intended to fix #7281.

|

I have a table with class .table-striped. It has 3 rows with tr.danger. Only the middle one is red; the other two are the default color.

When I remove .table-striped, it works correctly.

| 1 |

**Stephen Todd** opened **SPR-3450** and commented

Spring's current code makes it difficult to use Java collections as a command in command controllers. Specifically, referencing elements in the collection is prevented. Currently, elements in a collection are referenced using []. Support needs to be added to allow paths to start with [index/key], as in "[1].property".

The application for this is probably rare, which is probably why it hasn't been brought up before (at least I couldn't find any similarly reported issues). I use the functionality for executing multiple instances of the same command. I currently have a form with multiple objects that can be selected. If the user clicks "Delete" with multiple objects selected, I have an array of IDs that gets passed to a delete form. This form creates a delete command for each object specified. Details of how each object is deleted are stored in an object, which is put into a list. When the user submits the form, each command is executed in succession (the list is actually passed to the business layer and executed in a single transaction).

The current workaround is to create a subclass of the Java collection you want and add a getter that returns this. Then you can reference the array (using a getter getSelf()) as "self[1].property". Although this method works, a method that doesn't require this simple extension would be preferable.
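A sketch of the requested behaviour, written in Python rather than Java and not based on Spring's BeanWrapper: a hypothetical `resolve_path` that accepts property paths beginning with `[index/key]`, so a list of commands can be addressed as `[1].property` without the `getSelf()` subclass workaround. `DeleteCommand` and its fields are illustrative only.

```python
import re
from dataclasses import dataclass
from typing import Any

# One path segment: either "[index-or-key]" or an optionally dotted attribute name.
_SEGMENT = re.compile(r"\[([^\]]+)\]|\.?([A-Za-z_]\w*)")


def resolve_path(root: Any, path: str) -> Any:
    """Hypothetical resolver for paths such as "[1].property" or "[key].name"."""
    value, pos = root, 0
    while pos < len(path):
        match = _SEGMENT.match(path, pos)
        if match is None:
            raise ValueError(f"invalid path segment at {pos} in {path!r}")
        key, attr = match.groups()
        if key is not None:
            key = key.strip("'\"")
            # Sequences are indexed by integer position, mappings by key.
            value = value[int(key)] if isinstance(value, (list, tuple)) else value[key]
        else:
            value = getattr(value, attr)
        pos = match.end()
    return value


@dataclass
class DeleteCommand:  # hypothetical command object
    object_id: int
    cascade: bool


commands = [DeleteCommand(10, False), DeleteCommand(11, True)]
print(resolve_path(commands, "[1].cascade"))                  # True
print(resolve_path({"key": commands[0]}, "[key].object_id"))  # 10
```

The same leading-bracket rule covers both lists (`[1]`) and maps (`[key]`), which is the case the map-based BeanWrapper report below also runs into.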

* * *

**Affects:** 2.0.4

|

**Alex Antonov** opened **SPR-2058** and commented

When a BeanWrapper wraps an object that is a map or a collection of some sort, it has trouble retrieving a value using a key property, e.g.:

    Person p = new Person("John");
    Map map = new HashMap();
    map.put("key", p);
    BeanWrapperImpl wrapper = new BeanWrapperImpl(map);
    String name = (String) wrapper.getPropertyValue("[key].name");

This kind of access is very possible when coming from a web-layer using a