text1 stringlengths 2 269k | text2 stringlengths 2 242k | label int64 0 1 |

|---|---|---|

x = tf.zeros([10])

tf.gradients(tf.reduce_prod(x, 0), [x])

Gives

more traceback

/home/***/.local/lib/python2.7/site-packages/tensorflow/python/ops/math_grad.pyc in _ProdGrad(op, grad)

128 reduced = math_ops.cast(op.inputs[1], dtypes.int32)

129 idx = math_ops.range(0, array_ops.rank(op.inputs[0]))

--> 130 other, _ = array_ops.listdiff(idx, reduced)

131 perm = array_ops.concat(0, [reduced, other])

132 reduced_num = math_ops.reduce_prod(array_ops.gather(input_shape, reduced))

more traceback

In line 128, `op.inputs[1]` could be a scalar, which will cause a shape

mismatch when passed to `array_ops.listdiff` in line 130.

TF version: master branch a week ago

|

Installed TensorFlow using pip package for v0.10.0rc0 on Ubuntu with python

2.7

Pip package

https://storage.googleapis.com/tensorflow/linux/cpu/tensorflow-0.10.0rc0-cp27-none-

linux_x86_64.whl

The same error appears in the GPU build.

The following minimal code example produces raise an exception at the

definition of z

u = tf.placeholder(dtype=tf.float64, shape=(30,30))

v = tf.reduce_prod(u, reduction_indices=[0])

w = tf.gradients( v, u )

x = tf.placeholder(dtype=tf.float64, shape=(30,30))

y = tf.reduce_prod(x, reduction_indices=0)

z = tf.gradients( y, x )

> z = tf.gradients( y, x )

> File "HOME/anaconda2/lib/python2.7/site-

> packages/tensorflow/python/ops/gradients.py", line 478, in gradients

> in_grads = _AsList(grad_fn(op, *out_grads))

> File "HOME/anaconda2/lib/python2.7/site-

> packages/tensorflow/python/ops/math_grad.py", line 130, in _ProdGrad

> other, _ = array_ops.listdiff(idx, reduced)

> File "HOME/anaconda2/lib/python2.7/site-

> packages/tensorflow/python/ops/gen_array_ops.py", line 1201, in list_diff

> result = _op_def_lib.apply_op("ListDiff", x=x, y=y, name=name)

> File "HOME/anaconda2/lib/python2.7/site-

> packages/tensorflow/python/framework/op_def_library.py", line 703, in

> apply_op

> op_def=op_def)

> File "HOME/anaconda2/lib/python2.7/site-

> packages/tensorflow/python/framework/ops.py", line 2312, in create_op

> set_shapes_for_outputs(ret)

> File "HOME/anaconda2/lib/python2.7/site-

> packages/tensorflow/python/framework/ops.py", line 1704, in

> set_shapes_for_outputs

> shapes = shape_func(op)

> File "HOME/anaconda2/lib/python2.7/site-

> packages/tensorflow/python/ops/array_ops.py", line 1981, in _ListDiffShape

> op.inputs[1].get_shape().assert_has_rank(1)

> File "HOME/anaconda2/lib/python2.7/site-

> packages/tensorflow/python/framework/tensor_shape.py", line 621, in

> assert_has_rank

> raise ValueError("Shape %s must have rank %d" % (self, rank))

> ValueError: Shape () must have rank 1

The top code for u,v and w does not raise an error but the similar code for

x,y, and z does. It looks like the shape code for reduce_prod cannot cope with

the case where the reduction_indices is a single integer rather than a list.

If you replace reduce_prod with reduce_sum in the above snippet then it does

not raise an error.

| 1 |

**I'm submitting a ...** (check one with "x")

[x] bug report => search github for a similar issue or PR before submitting

[ ] feature request

[ ] support request => Please do not submit support request here, instead see https://github.com/angular/angular/blob/master/CONTRIBUTING.md#question

**Current behavior**

When using the currency pipe to format a number specifying the valid ISO 4217

currency code for South Africa 'ZAR' and setting the SymbolDisplay to true the

currency code is not replaced by the currency symbol, in this case 'R'.

**Expected behavior**

The expected behavior is for the projected property to display the currency

symbol instead of the currency code when symbolDisplay is true and

currencyCode is set to 'ZAR'.

**Minimal reproduction of the problem with instructions**

{{someamount | currency:'ZAR':true:'1.2-2'}}

**What is the motivation / use case for changing the behavior?**

We need to format currency with the South African currency symbol.

**Please tell us about your environment:**

macOS Sierra, webpack, ng serve (angular-cli: template used:

1.0.0-beta.11-webpack.9-4)

* **Angular version:** 2.0.0-rc.7

* **Browser:** all

* **Language:** all

* **Node (for AoT issues):** `node --version` =

|

I'm using the currency pipe with beta.0, and with the following code inside

ionic 2:

>

> <ion-card-content>

> Total: {{ total | currency:'COP':true }}

> </ion-card-content>

>

The output should be `Total: $ 110,000` but the actual output is this:

| 1 |

i want prevent atom-shell to start when had a atom-shell started, how can i do

that?

|

I want to disallow user to run the same app on running, and get the arguments

from command line.

Is there any way implement this, just like use 'single-instance' configuration

and 'open' event in node-webkit.

| 1 |

by **plnordahl** :

Running Mavericks 10.9.2 and using the patch from

https://groups.google.com/forum/#!msg/golang-codereviews/it4yhth9fWM/TxcE1Yx6AUMJ (issue

7510) on release-branch.go1.2, I'm not seeing the variable names of int, string, float,

or map variables in a simple program. I do however see names for slices, arrays, and

struct/struct members in the output. Below is some code to reproduce the behavior.

One program, which will be loaded for it's DWARF data:

package main

import "fmt"

func main() {

myInt := 42

mySecondInt := 41

fmt.Println(myInt, mySecondInt)

myArray := [2]string{"hello", "world"}

fmt.Println(myArray)

myString := "foo"

fmt.Println(myString)

mySlice := []int{1, 2, 3}

fmt.Println(mySlice)

myFloat := 42.424242

fmt.Println(myFloat)

myMap := make(map[string]string)

fmt.Println(myMap)

myStruct := myStructType{"bleh", 999, "hm"}

fmt.Println(myStruct)

}

type myStructType struct {

Bleh string

Blerg int

Foo string

}

...and the second, which just loads the Mach-O binary and prints each entry's offset,

tag, children, and fields (pipe the output of this program into grep to see the behavior

I describe).

package main

import (

"debug/macho"

"fmt"

"log"

)

func main() {

m, err := macho.Open("<put the path to where your test binary is located>")

if err != nil {

log.Fatalf("Couldn't open MACH-O file: %s\n", err)

}

d, err := m.DWARF()

if err != nil {

log.Fatalf("Couldn't read DWARF info: %s\n", err)

}

r := d.Reader()

for {

entry, err := r.Next()

if err != nil {

log.Fatalf("Current offset is invalid or undecodable. %s", err)

}

if entry == nil {

fmt.Println("Reached the end of the entry stream.")

return

}

fmt.Println("Entry --- offset: ", entry.Offset,

" tag: ", entry.Tag, " children: ", entry.Children,

" field: ", entry.Field)

}

return

}

|

by **seanerussell** :

What steps will reproduce the problem?

1. Compile the attached file (steps to compile listed below)

2. Execute on Windows

3. Watch the memory use with Resource Monitor

The application memory use increases at a rate of about 1KB every 5s until it exceeds

the stack (heap?) space and crashes.

I'm using Go 6d7136d74b65 weekly/weekly.2011-10-18 and am compiling on Linux,

cross-compiling for Windows using gb (go-gb.googlecode.com) via "GOOS=windows

gb"; 6g/l are the compilers being used.

The Linux machine is 2.6.32-32-generic #62-Ubuntu SMP, and the code is executing on

Windows Server 2008 R2 Standard, 6-core AMD Opteron 2425 HE 2.1GHz x 2, 32GB RAM, 64-bit.

I don't see similar behavior on Linux; the process memory use does grow very slowly for

a while, but appears to eventually plateau after a few minutes.

If this isn't a memory leak, but is explained by expected behavior, could I get a

pointer to a document describing the cause?

Attachments:

1. memconsumer.go (1308 bytes)

| 0 |

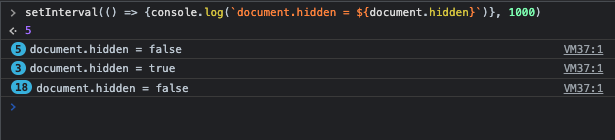

It seems that `document.hidden` is not working once the browser is controlled

by Playwright.

Given you open a browser tab (outside of playwright) and inject in the console

the script :

setInterval(() => {console.log(`document.hidden = ${document.hidden}`)}, 1000)

When you open a new browser tab

And you wait a few seconds

And you come back to the previous tab

Then you have the following logs in the console:

When you do the same steps in a browser controlled by Playwright

Then you have the following logs in the console :

Unless I am wrong, `document.hidden` should be handled by Playwright.

This missing feature prevents to test the front stops specific activities (for

example the front stops to poll a rest API endpoint) when the user switches

from one browser tab to another, and vice-versa: the front should, for

example, restart it's polling activity when the browser tab becomes actif

again.

Regards

|

Presently Playwright treats all tabs as active. This can become an issue when

a tab we're not currently focusing on is running a lot of work.

I'll use the site MakeUseOf.com as an example. When this tab is active, it can

consume 25-30% CPU time on my brand new MBPro. Under normal Chromium, when the

tab is not the current tab Chromium will throttle timers (and whatever else it

may do) and the site will settle around 2% CPU.

Under Playwright, this inactive tab will continue to use full resources

(25-30% CPU).

I'd like to see a flag or an API call (`page.goToBack()` was suggested) that

would allow inactive tabs to sleep.

Here's a link to the original discussion.

Thanks!

| 1 |

When concatting two dataframes where there are a) there are duplicate columns

in one of the dataframes, and b) there are non-overlapping column names in

both, then you get a IndexError:

In [9]: df1 = pd.DataFrame(np.random.randn(3,3), columns=['A', 'A', 'B1'])

...: df2 = pd.DataFrame(np.random.randn(3,3), columns=['A', 'A', 'B2'])

In [10]: pd.concat([df1, df2])

Traceback (most recent call last):

File "<ipython-input-10-f61a1ab4009e>", line 1, in <module>

pd.concat([df1, df2])

...

File "c:\users\vdbosscj\scipy\pandas-joris\pandas\core\index.py", line 765, in take

taken = self.view(np.ndarray).take(indexer)

IndexError: index 3 is out of bounds for axis 0 with size 3

I don't know if it should work (although I suppose it should, as with only the

duplicate columns it does work), but at least the error message is not really

helpfull.

|

dti = pd.date_range('2016-09-23', periods=3, tz='US/Central')

df = pd.DataFrame(dti)

>>> df.T

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "pandas/core/frame.py", line 1941, in transpose

return super(DataFrame, self).transpose(1, 0, **kwargs)

File "pandas/core/generic.py", line 616, in transpose

new_values = self.values.transpose(axes_numbers)

File "pandas/core/base.py", line 697, in transpose

nv.validate_transpose(args, kwargs)

File "pandas/compat/numpy/function.py", line 54, in __call__

self.defaults)

File "pandas/util/_validators.py", line 218, in validate_args_and_kwargs

validate_kwargs(fname, kwargs, compat_args)

File "pandas/util/_validators.py", line 157, in validate_kwargs

_check_for_default_values(fname, kwds, compat_args)

File "pandas/util/_validators.py", line 69, in _check_for_default_values

format(fname=fname, arg=key)))

ValueError: the 'axes' parameter is not supported in the pandas implementation of transpose()

Looks like this is b/c `df.values` is a `DatetimeIndex`

| 0 |

CSS Grid layout excels at dividing a page into major regions, or defining the

relationship in terms of size, position, and layer, between parts of a control

built from HTML primitives.

Watched a youtube about CSS Grids: https://youtu.be/txZq7Laz7_4

Nice concept that seems to play nice with component based development.

Integration with Next.js may prove to solve CSS issues and the way we think

about making apps.

Probably goes against providing options to the developer but could image a

lean mean workflow.

Any thoughts?

* I have searched the issues of this repository and believe that this is not a duplicate.

## Expected Behavior

Standard <style jsx> with built in support for grid layouts/fallback support.

## Current Behavior

Currently CSS can be a mess and look quite ugly with multiple nested divs and

leads to accessibility issues.

## Steps to Reproduce (for bugs)

1. To many options for front end CSS frameworks that don't work well with component based workflow.

2. Heavy CSS frameworks in the global space.

3. Incomplete CSS react libraries like Semantic UI.

4. Doing things the old way.

## Context

Providing a rich UX is one of the most complicating parts of developing an app

and Next.js definitely makes things easier and is also lightning fast. I

believe something like "CSS Grid" could make layouts of the components where

you want them easy while increasing performance.

## Links

https://developer.mozilla.org/en-US/docs/Web/CSS/CSS_Grid_Layout

https://css-tricks.com/snippets/css/complete-guide-grid/

https://www.w3.org/TR/css-grid-1/

|

* I have searched the issues of this repository and believe that this is not a duplicate.

## Expected Behavior

Calling `Router.push({pathname: 'about'})` should work just as `<Link

href={{pathname: 'about'}}/>` does, and as described here.

## Current Behavior

Client side, it throws an error `Route name should start with a "/", got

"page"` (thrown from https://github.com/zeit/next.js/blob/v3-beta/lib/page-

loader.js#L22)

It then immediately falls back to the server side route, which then loads the

page as usual.

Depending on how long the server page takes to load, you may or may not see

the error above.

Note that absolute pathnames (`/about`) work as normal.

## Steps to Reproduce (for bugs)

1. https://github.com/tmeasday/next-routing-bug \- `git clone https://github.com/tmeasday/next-routing-bug; cd next-routing bug; npm install; npm run dev`

2. Click the "Link" or "Leading Slash" tags, notice normal behaviour.

3. Click the "No Leading Slash" tag, notice the error for 2 seconds (how long the "page" takes to load).

4. Inspect the server console and note that the "page" page loaded from the server.

## Context

I think the issue here is that the `Link` calls `url.resolve` here, where as

`Router.push` does not.

I suspect the `resolve` call should come somewhere deeper in the stack.

## Your Environment

Tech | Version

---|---

next | 2 + 3 betas

node | 8.1.0

OS | macOS 10.12.5

browser | Chrome

| 0 |

#### Summary

`maxBodyLength` is a option for follow-redirect which is default transport for

axios in node.js environment.

so, can't upload file > 10M

#### Context

* axios version: all version

* Environment: node all version

|

#### Summary

`follow-redirects` defaults to a `maxBodyLength` of 10mb. `axios` does not

override this default, even when the config specifies a `maxContentLength`

parameter greater than 10mb, thus capping `axios` at a maximum content length

of 10mb no matter what is configured, and returning an error that does not

make it obvious that `follow-redirects` is the cause.

`axios` should set the `maxBodyLength` option sent to `follow-redirects` to

match the `maxContentLength` config property set on the `AxiosOptions` object.

#### Context

* axios version: _v0.17.1_

* Environment: _Electron v1.4.3 or Node v6.5.0 with XHR polyfill, macOS v10.13.2_

#### Status

Pull request: #1287

| 1 |

function foo()

try

error("error in foo")

catch e

end

end

function bar()

try

error("error in bar")

catch e

foo()

rethrow(e)

end

end

bar()

produces the misleading

ERROR: error in bar

Stacktrace:

[1] foo() at ./REPL[1]:3

[2] bar() at ./REPL[2]:5

This just hit me in a really wicked situation: The stacktrace actually lead me

to a line erroneously throwing the reported exception. I fixed it (to throw

the correct exception), but the same error kept showing, leaving me completely

puzzled for quite some time...

|

`\varepsilon` is currently mapped to ɛ (U+025B latin small letter open e).

This is wrong. The correct mapping is ε (U+03B5 greek small letter epsilon).

| 0 |

If this is something that can't be added or isn't going to be supported, it

wouldn't be a bad thing to support hierarchical data in forms (adjacency list

model / nested set model).

Possibly having the option to force the selection of leafs without children

only, etc, etc. Can lead to a very powerful feature. =)

| Q | A

---|---

Bug report? | yes

Feature request? | no

BC Break report? | no

RFC? | no

Symfony version | 3.4.0-BETA1

Since upgrading to Symfony 3.4.0-BETA1 fom 3.3.10, suddenly all responses

started receiving `Cache-Control` header with `private, max-age=10800, no-

cache, private` value and a response code `304 Not Modified`, which means that

responses are cached in browsers for 3 hours and only hard reload with

`Ctrl+R` or `Shift+R` refreshes the page from backend.

`git bisect` says that the culprit is #24523. And indeed, reverting the commit

in that PR fixes the issue for me, meaning `Cache-Control` header is back to

`no-cache, private` and the response is `200 OK`.

| 0 |

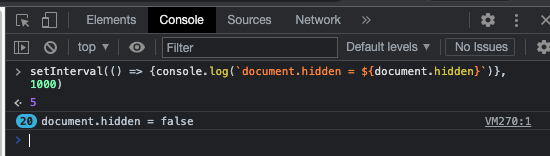

### Problem

When going through the "Image tutorial" on Firefox or Edge, when you try

downloading the image of the stinkbug, it will only download as a `webp` file

and there is no option to download as `png`. That is even clicking the "View

Image" and trying to save it. Below is trying to save the image in Firefox.

This is a problem for introductory students, trying to use this tutorial and

learning how to read tutorials. They believe they are doing something wrong

and wouldn't be sure how to convert it. As a more advanced user, I still could

not find out how to make it a `png` through the tutorial page.

* Tutorial Link: https://matplotlib.org/tutorials/introductory/images.html

* Image Link: https://matplotlib.org/_images/stinkbug.png (may say `png` but downloading it is as `webg`)

Operating System: Windows 10 Pro

### Suggested Improvement

The image needs to be updated to a `png` and maybe a direct link to download

the image (the latter is not as important).

If there is a way to do that with a module, that might be cool to include, but

just an idea. I can provide more information if necessary.

|

The image tutorial on matplotlib.org uses this sample image:

https://matplotlib.org/_images/stinkbug.png

and notes that it is a "24-bit RGB PNG image (8 bits for each of R, G, B)".

However, further inspection reveals that it is _not_ a 24-bit RGB, but rather

an 8-bit grayscale image.

$ curl -s 'https://matplotlib.org/_images/stinkbug.png' | file -

/dev/stdin: PNG image data, 500 x 375, 8-bit grayscale, non-interlaced

However, the source image in the github repository _is_ an RGB image:

$ curl -s 'https://raw.githubusercontent.com/matplotlib/matplotlib/master/doc/_static/stinkbug.png' | file -

/dev/stdin: PNG image data, 500 x 375, 8-bit/color RGB, non-interlaced

I noticed this because when I tried to follow the tutorial on my own machine,

I kept on getting this error in line 7:

In [7]: lum_img = img[:,:,0]

IndexError: too many indices for array

This is because `imread` stores a grayscale image as an ndarray with only two

dimensions, not three, as documented here:

> For grayscale images, the return array is MxN. For RGB images, the return

> value is MxNx3. For RGBA images the return value is MxNx4.

https://matplotlib.org/api/_as_gen/matplotlib.pyplot.imread.html

So `img.shape` ends up as `(375, 500)` when it should be `(375, 500, 3)`.

Let me know if I should file this here instead:

https://github.com/matplotlib/matplotlib.github.com/issues

| 1 |

1. Does Code support showing vertical indent level line?

2. now `editor.renderWhitespace` only have `true` or `false`, can we have a option to only render whitespace when selection?

|

Hi, is there any chance we can get indent guides (the vertical lines that run

down to matching indents). Could not find reference to them anywhere in VS

code or the gallery. Thanks - Adolfo

| 1 |

rust-encoding builds fine in the previous nightly, but it triggers an ICE in

rustc 1.2.0-nightly (`0fc0476` 2015-05-24) (built 2015-05-24)

This looks similar to #24644, but it started more recently.

$ RUST_BACKTRACE=1 cargo build --verbose

Fresh encoding_index_tests v0.1.4 (file:///home/simon/projects/rust-encoding)

Compiling encoding v0.2.32 (file:///home/simon/projects/rust-encoding)

Running `rustc src/lib.rs --crate-name encoding --crate-type lib -g --out-dir /home/simon/projects/rust-encoding/target/debug --emit=dep-info,link -L dependency=/home/simon/projects/rust-encoding/target/debug -L dependency=/home/simon/projects/rust-encoding/target/debug/deps --extern encoding_index_tradchinese=/home/simon/projects/rust-encoding/target/debug/deps/libencoding_index_tradchinese-9031d0a206975cd9.rlib --extern encoding_index_singlebyte=/home/simon/projects/rust-encoding/target/debug/deps/libencoding_index_singlebyte-80fddc2d153c158e.rlib --extern encoding_index_simpchinese=/home/simon/projects/rust-encoding/target/debug/deps/libencoding_index_simpchinese-06c5fe5964f3071e.rlib --extern encoding_index_korean=/home/simon/projects/rust-encoding/target/debug/deps/libencoding_index_korean-bb2701334d42f010.rlib --extern encoding_index_japanese=/home/simon/projects/rust-encoding/target/debug/deps/libencoding_index_japanese-5e92eb13c020e4d8.rlib`

Fresh libc v0.1.6

Fresh encoding-index-singlebyte v1.20141219.5 (file:///home/simon/projects/rust-encoding)

Fresh encoding-index-simpchinese v1.20141219.5 (file:///home/simon/projects/rust-encoding)

Fresh encoding-index-korean v1.20141219.5 (file:///home/simon/projects/rust-encoding)

Fresh encoding-index-japanese v1.20141219.5 (file:///home/simon/projects/rust-encoding)

Fresh encoding-index-tradchinese v1.20141219.5 (file:///home/simon/projects/rust-encoding)

Fresh log v0.3.1

Fresh getopts v0.2.10

error: internal compiler error: unexpected panic

note: the compiler unexpectedly panicked. this is a bug.

note: we would appreciate a bug report: https://github.com/rust-lang/rust/blob/master/CONTRIBUTING.md#bug-reports

note: run with `RUST_BACKTRACE=1` for a backtrace

thread 'rustc' panicked at 'assertion failed: prev_const.is_none() || prev_const == Some(llconst)', /home/rustbuild/src/rust-buildbot/slave/nightly-dist-rustc-linux/build/src/librustc_trans/trans/consts.rs:291

stack backtrace:

1: 0x7f38ec7687e9 - sys::backtrace::write::he19dad14fe2b97b1w6r

2: 0x7f38ec7707a9 - panicking::on_panic::h330377024750b34dHMw

3: 0x7f38ec731482 - rt::unwind::begin_unwind_inner::h7703fc192c11eec8Rrw

4: 0x7f38eb4d782e - rt::unwind::begin_unwind::h7587717121668466740

5: 0x7f38eb549830 - trans::consts::const_expr::h6a02570a980db0cdhNs

6: 0x7f38eb5a1aea - trans::consts::get_const_expr_as_global::h8d682e42aa0c00a1pKs

7: 0x7f38eb51bf3f - trans::expr::trans::h063786072022aec6izA

8: 0x7f38eb5b80ce - trans::expr::trans_into::h0995470e7b548e00ZsA

9: 0x7f38eb53d9d2 - trans::expr::trans_adt::h7fc068c005b70b7fv8B

10: 0x7f38eb5e38b2 - trans::expr::trans_rvalue_dps_unadjusted::he15a7fa64af631b8MCB

11: 0x7f38eb5b831c - trans::expr::trans_into::h0995470e7b548e00ZsA

12: 0x7f38eb53ba66 - trans::controlflow::trans_block::h6757ec40476ddde4slv

13: 0x7f38eb5e3bbd - trans::expr::trans_rvalue_dps_unadjusted::he15a7fa64af631b8MCB

14: 0x7f38eb5b831c - trans::expr::trans_into::h0995470e7b548e00ZsA

15: 0x7f38eb53ba66 - trans::controlflow::trans_block::h6757ec40476ddde4slv

16: 0x7f38eb53a381 - trans::base::trans_closure::hdcc3c01c9bca642e7Uh

17: 0x7f38eb53c05a - trans::base::trans_fn::hf52d8c0c091fdef5P5h

18: 0x7f38eb53ee27 - trans::base::trans_item::he4dc60caaf1684eajui

19: 0x7f38eb53f738 - trans::base::trans_item::he4dc60caaf1684eajui

20: 0x7f38eb54ccfc - trans::base::trans_crate::hb11747a7cf1143a4sjj

21: 0x7f38eccc14a4 - driver::phase_4_translate_to_llvm::h29a0d7314fcd3b41nOa

22: 0x7f38ecc9d226 - driver::compile_input::h16cddbb7992cbbbaQba

23: 0x7f38ecd52f21 - run_compiler::h74084e004617dcdcb6b

24: 0x7f38ecd50772 - boxed::F.FnBox<A>::call_box::h10784738899208612549

25: 0x7f38ecd4ff49 - rt::unwind::try::try_fn::h5235027869346842134

26: 0x7f38ec7e9498 - rust_try_inner

27: 0x7f38ec7e9485 - rust_try

28: 0x7f38ec75c247 - rt::unwind::try::inner_try::hb6f04bd1baacc20eKnw

29: 0x7f38ecd5017a - boxed::F.FnBox<A>::call_box::h17245911238432039576

30: 0x7f38ec76f471 - sys::thread::Thread::new::thread_start::h285dd80d49b81cf50xv

31: 0x7f38e6798373 - start_thread

32: 0x7f38ec3c127c - clone

33: 0x0 - <unknown>

Could not compile `encoding`.

Caused by:

Process didn't exit successfully: `rustc src/lib.rs --crate-name encoding --crate-type lib -g --out-dir /home/simon/projects/rust-encoding/target/debug --emit=dep-info,link -L dependency=/home/simon/projects/rust-encoding/target/debug -L dependency=/home/simon/projects/rust-encoding/target/debug/deps --extern encoding_index_tradchinese=/home/simon/projects/rust-encoding/target/debug/deps/libencoding_index_tradchinese-9031d0a206975cd9.rlib --extern encoding_index_singlebyte=/home/simon/projects/rust-encoding/target/debug/deps/libencoding_index_singlebyte-80fddc2d153c158e.rlib --extern encoding_index_simpchinese=/home/simon/projects/rust-encoding/target/debug/deps/libencoding_index_simpchinese-06c5fe5964f3071e.rlib --extern encoding_index_korean=/home/simon/projects/rust-encoding/target/debug/deps/libencoding_index_korean-bb2701334d42f010.rlib --extern encoding_index_japanese=/home/simon/projects/rust-encoding/target/debug/deps/libencoding_index_japanese-5e92eb13c020e4d8.rlib` (exit code: 101)

|

## Code

trait Trait {}

struct Bar;

impl Trait for Bar {}

fn main() {

let x: &[&Trait] = &[{ &Bar }];

}

## Output

main.rs:7:9: 7:10 warning: unused variable: `x`, #[warn(unused_variables)] on by default

main.rs:7 let x: &[&Trait] = &[{ &Bar }];

^

error: internal compiler error: unexpected panic

note: the compiler unexpectedly panicked. this is a bug.

note: we would appreciate a bug report: https://github.com/rust-lang/rust/blob/master/CONTRIBUTING.md#bug-reports

note: run with `RUST_BACKTRACE=1` for a backtrace

thread 'rustc' panicked at 'assertion failed: prev_const.is_none() || prev_const == Some(llconst)', /Users/rustbuild/src/rust-buildbot/slave/nightly-dist-rustc-mac/build/src/librustc_trans/trans/consts.rs:321

stack backtrace:

1: 0x1054eb5cf - sys::backtrace::write::h9137c695ab290a14WRs

2: 0x1054f3b82 - panicking::on_panic::h0ae3cf09aff03bc05Pw

3: 0x1054a9d15 - rt::unwind::begin_unwind_inner::h36b4206cb80fb108Oxw

4: 0x102149fcf - rt::unwind::begin_unwind::h6767503471583286242

5: 0x1021c082c - trans::consts::const_expr::h1bbfa5560b941f32nvs

6: 0x102224922 - vec::Vec<T>.FromIterator<T>::from_iter::h7466739370638199104

7: 0x10221baea - trans::consts::const_expr_unadjusted::hd675727493ff08eezLs

8: 0x1021bfff7 - trans::consts::const_expr::h1bbfa5560b941f32nvs

9: 0x10221dc67 - trans::consts::const_expr_unadjusted::hd675727493ff08eezLs

10: 0x1021bfff7 - trans::consts::const_expr::h1bbfa5560b941f32nvs

11: 0x10221a9f9 - trans::consts::get_const_expr_as_global::h2f40511a988c5876yss

12: 0x10218f6ca - trans::expr::trans::h959669806b495bbffdA

13: 0x1022314e0 - trans::expr::trans_into::h43738e587d521f35W6z

14: 0x1022b4e21 - trans::_match::mk_binding_alloca::h2459388509959927399

15: 0x1021a09b9 - trans::base::init_local::h8acf9c70632535fdNVg

16: 0x1021b1453 - trans::controlflow::trans_block::hb872ebbfd195606cZ2u

17: 0x1021b0105 - trans::base::trans_closure::hbf7fcb6cfae5e775KCh

18: 0x1021b1d9e - trans::base::trans_fn::hb08e54207cb8d8e6sNh

19: 0x1021b5308 - trans::base::trans_item::h15647b08683789dbEbi

20: 0x1021c40f0 - trans::base::trans_crate::hdfbb43ad3281f84dE0i

21: 0x101c4fdde - driver::phase_4_translate_to_llvm::he9458af7df85ffb8lOa

22: 0x101c28344 - driver::compile_input::h3c162f55ed6be3a5Qba

23: 0x101cef853 - run_compiler::hae39edbb343296d3D4b

24: 0x101ced37a - boxed::F.FnBox<A>::call_box::h1927805197202631788

25: 0x101cec817 - rt::unwind::try::try_fn::h7355792964568869981

26: 0x10557d998 - rust_try_inner

27: 0x10557d985 - rust_try

28: 0x101cecaf0 - boxed::F.FnBox<A>::call_box::h2359980489235781969

29: 0x1054f26cd - sys::thread::create::thread_start::hfa406746e663ba1bNUv

30: 0x7fff81be3267 - _pthread_body

31: 0x7fff81be31e4 - _pthread_start

## Rust Version

rustc 1.0.0-nightly (1284be404 2015-04-18) (built 2015-04-17)

binary: rustc

commit-hash: 1284be4044420bc4c41767284ae26be61a38d331

commit-date: 2015-04-18

build-date: 2015-04-17

host: x86_64-apple-darwin

release: 1.0.0-nightly

| 1 |

You get a confusing error message when trying to concat on non-unique (but

also non-exactly-equal) indices. Small example:

In [57]: df1 = pd.DataFrame({'col1': [1, 2, 3]}, index=[0, 0, 1])

...: df2 = pd.DataFrame({'col2': [1, 2, 3]}, index=[0, 1, 2])

In [59]: pd.concat([df1, df2], axis=1)

---------------------------------------------------------------------------

ValueError Traceback (most recent call last)

<ipython-input-59-756087e4d415> in <module>()

----> 1 pd.concat([df1, df2], axis=1)

/home/joris/scipy/pandas/pandas/tools/concat.py in concat(objs, axis, join, join_axes, ignore_index, keys, levels, names, verify_integrity, copy)

205 verify_integrity=verify_integrity,

206 copy=copy)

--> 207 return op.get_result()

208

209

/home/joris/scipy/pandas/pandas/tools/concat.py in get_result(self)

405 new_data = concatenate_block_managers(

406 mgrs_indexers, self.new_axes, concat_axis=self.axis,

--> 407 copy=self.copy)

408 if not self.copy:

409 new_data._consolidate_inplace()

/home/joris/scipy/pandas/pandas/core/internals.py in concatenate_block_managers(mgrs_indexers, axes, concat_axis, copy)

4849 placement=placement) for placement, join_units in concat_plan]

4850

-> 4851 return BlockManager(blocks, axes)

4852

4853

/home/joris/scipy/pandas/pandas/core/internals.py in __init__(self, blocks, axes, do_integrity_check, fastpath)

2784

2785 if do_integrity_check:

-> 2786 self._verify_integrity()

2787

2788 self._consolidate_check()

/home/joris/scipy/pandas/pandas/core/internals.py in _verify_integrity(self)

2994 for block in self.blocks:

2995 if block._verify_integrity and block.shape[1:] != mgr_shape[1:]:

-> 2996 construction_error(tot_items, block.shape[1:], self.axes)

2997 if len(self.items) != tot_items:

2998 raise AssertionError('Number of manager items must equal union of '

/home/joris/scipy/pandas/pandas/core/internals.py in construction_error(tot_items, block_shape, axes, e)

4258 raise ValueError("Empty data passed with indices specified.")

4259 raise ValueError("Shape of passed values is {0}, indices imply {1}".format(

-> 4260 passed, implied))

4261

4262

ValueError: Shape of passed values is (2, 6), indices imply (2, 4)

* * *

Original reported issue by @gregsifr :

I am working with a large dataframe of customers which I was unable to concat.

After spending some time I narrowed the problem area down to the below

(pickled) dataframes and code.

When trying to concat the dataframes using the following code the error shown

below is returned:

import pandas as pd

import pickle

df1 = pickle.loads('ccopy_reg\n_reconstructor\np1\n(cpandas.core.frame\nDataFrame\np2\nc__builtin__\nobject\np3\nNtRp4\n(dp5\nS\'_metadata\'\np6\n(lp7\nsS\'_typ\'\np8\nS\'dataframe\'\np9\nsS\'_data\'\np10\ng1\n(cpandas.core.internals\nBlockManager\np11\ng3\nNtRp12\n((lp13\ncpandas.core.index\n_new_Index\np14\n(cpandas.core.index\nMultiIndex\np15\n(dp16\nS\'labels\'\np17\n(lp18\ncnumpy.core.multiarray\n_reconstruct\np19\n(cpandas.core.base\nFrozenNDArray\np20\n(I0\ntS\'b\'\ntRp21\n(I1\n(L2L\ntcnumpy\ndtype\np22\n(S\'i1\'\nI0\nI1\ntRp23\n(I3\nS\'|\'\nNNNI-1\nI-1\nI0\ntbI00\nS\'\\x00\\x00\'\ntbag19\n(g20\n(I0\ntS\'b\'\ntRp24\n(I1\n(L2L\ntg23\nI00\nS\'\\x00\\x01\'\ntbasS\'names\'\np25\n(lp26\nNaNasS\'levels\'\np27\n(lp28\ng14\n(cpandas.core.index\nIndex\np29\n(dp30\nS\'data\'\np31\ng19\n(cnumpy\nndarray\np32\n(I0\ntS\'b\'\ntRp33\n(I1\n(L1L\ntg22\n(S\'O8\'\nI0\nI1\ntRp34\n(I3\nS\'|\'\nNNNI-1\nI-1\nI63\ntbI00\n(lp35\nVCUSTOMER_A\np36\natbsS\'name\'\np37\nNstRp38\nag14\n(g29\n(dp39\ng31\ng19\n(g32\n(I0\ntS\'b\'\ntRp40\n(I1\n(L2L\ntg34\nI00\n(lp41\nVVISIT_DT\np42\naVPURCHASE\np43\natbsg37\nNstRp44\nasS\'sortorder\'\np45\nNstRp46\nacpandas.tseries.index\n_new_DatetimeIndex\np47\n(cpandas.tseries.index\nDatetimeIndex\np48\n(dp49\nS\'tz\'\np50\nNsS\'freq\'\np51\nNsg31\ng19\n(g32\n(I0\ntS\'b\'\ntRp52\n(I1\n(L22L\ntg22\n(S\'M8\'\nI0\nI1\ntRp53\n(I4\nS\'<\'\nNNNI-1\nI-1\nI0\n((d(S\'ns\'\nI1\nI1\nI1\ntttbI00\nS\'\\x00\\x00\\x1c\\xca\\xf9\\xceO\\x10\\x00\\x00k[\\x8e\\x1dP\\x10\\x00\\x00\\xba\\xec"lP\\x10\\x00\\x00\\t~\\xb7\\xbaP\\x10\\x00\\x00X\\x0fL\\tQ\\x10\\x00\\x00\\xa7\\xa0\\xe0WQ\\x10\\x00\\x00\\x94T\\x9eCR\\x10\\x00\\x00\\xe3\\xe52\\x92R\\x10\\x00\\x002w\\xc7\\xe0R\\x10\\x00\\x00\\x81\\x08\\\\/S\\x10\\x00\\x00\\xd0\\x99\\xf0}S\\x10\\x00\\x00\\xbdM\\xaeiT\\x10\\x00\\x00\\x0c\\xdfB\\xb8T\\x10\\x00\\x00[p\\xd7\\x06U\\x10\\x00\\x00\\xaa\\x01lUU\\x10\\x00\\x00\\xf9\\x92\\x00\\xa4U\\x10\\x00\\x00\\xe6F\\xbe\\x8fV\\x10\\x00\\x005\\xd8R\\xdeV\\x10\\x00\\x00\\x84i\\xe7,W\\x10\\x00\\x00\\xd3\\xfa{{W\\x10\\x00\\x00"\\x8c\\x10\\xcaW\\x10\\x00\\x00\\x0f@\\xce\\xb5X\\x10\'\ntbsg37\nS\'date\'\np54\nstRp55\na(lp56\ng19\n(g32\n(I0\ntS\'b\'\ntRp57\n(I1\n(L2L\nL22L\ntg22\n(S\'f8\'\nI0\nI1\ntRp58\n(I3\nS\'<\'\nNNNI-1\nI-1\nI0\ntbI00\nS\'\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\'\ntba(lp59\ng14\n(g15\n(dp60\ng17\n(lp61\ng19\n(g20\n(I0\ntS\'b\'\ntRp62\n(I1\n(L2L\ntg23\nI00\nS\'\\x00\\x00\'\ntbag19\n(g20\n(I0\ntS\'b\'\ntRp63\n(I1\n(L2L\ntg23\nI00\nS\'\\x00\\x01\'\ntbasg25\n(lp64\nNaNasg27\n(lp65\ng14\n(g29\n(dp66\ng31\ng19\n(g32\n(I0\ntS\'b\'\ntRp67\n(I1\n(L1L\ntg34\nI00\n(lp68\ng36\natbsg37\nNstRp69\nag14\n(g29\n(dp70\ng31\ng19\n(g32\n(I0\ntS\'b\'\ntRp71\n(I1\n(L2L\ntg34\nI00\n(lp72\ng42\nag43\natbsg37\nNstRp73\nasg45\nNstRp74\na(dp75\nS\'0.14.1\'\np76\n(dp77\nS\'axes\'\np78\ng13\nsS\'blocks\'\np79\n(lp80\n(dp81\nS\'mgr_locs\'\np82\nc__builtin__\nslice\np83\n(I0\nI2\nL1L\ntRp84\nsS\'values\'\np85\ng57\nsasstbsb.')

df2 = pickle.loads('ccopy_reg\n_reconstructor\np1\n(cpandas.core.frame\nDataFrame\np2\nc__builtin__\nobject\np3\nNtRp4\n(dp5\nS\'_metadata\'\np6\n(lp7\nsS\'_typ\'\np8\nS\'dataframe\'\np9\nsS\'_data\'\np10\ng1\n(cpandas.core.internals\nBlockManager\np11\ng3\nNtRp12\n((lp13\ncpandas.core.index\n_new_Index\np14\n(cpandas.core.index\nMultiIndex\np15\n(dp16\nS\'labels\'\np17\n(lp18\ncnumpy.core.multiarray\n_reconstruct\np19\n(cpandas.core.base\nFrozenNDArray\np20\n(I0\ntS\'b\'\ntRp21\n(I1\n(L2L\ntcnumpy\ndtype\np22\n(S\'i1\'\nI0\nI1\ntRp23\n(I3\nS\'|\'\nNNNI-1\nI-1\nI0\ntbI00\nS\'\\x00\\x00\'\ntbag19\n(g20\n(I0\ntS\'b\'\ntRp24\n(I1\n(L2L\ntg23\nI00\nS\'\\x00\\x01\'\ntbasS\'names\'\np25\n(lp26\nNaNasS\'levels\'\np27\n(lp28\ng14\n(cpandas.core.index\nIndex\np29\n(dp30\nS\'data\'\np31\ng19\n(cnumpy\nndarray\np32\n(I0\ntS\'b\'\ntRp33\n(I1\n(L1L\ntg22\n(S\'O8\'\nI0\nI1\ntRp34\n(I3\nS\'|\'\nNNNI-1\nI-1\nI63\ntbI00\n(lp35\nVCUSTOMER_B\np36\natbsS\'name\'\np37\nNstRp38\nag14\n(g29\n(dp39\ng31\ng19\n(g32\n(I0\ntS\'b\'\ntRp40\n(I1\n(L2L\ntg34\nI00\n(lp41\nVVISIT_DT\np42\naVPURCHASE\np43\natbsg37\nNstRp44\nasS\'sortorder\'\np45\nNstRp46\nacpandas.tseries.index\n_new_DatetimeIndex\np47\n(cpandas.tseries.index\nDatetimeIndex\np48\n(dp49\nS\'tz\'\np50\nNsS\'freq\'\np51\nNsg31\ng19\n(g32\n(I0\ntS\'b\'\ntRp52\n(I1\n(L24L\ntg22\n(S\'M8\'\nI0\nI1\ntRp53\n(I4\nS\'<\'\nNNNI-1\nI-1\nI0\n((d(S\'ns\'\nI1\nI1\nI1\ntttbI00\nS\'\\x00\\x00k[\\x8e\\x1dP\\x10\\x00\\x00\\xba\\xec"lP\\x10\\x00\\x00\\t~\\xb7\\xbaP\\x10\\x00\\x00X\\x0fL\\tQ\\x10\\x00\\x00\\xa7\\xa0\\xe0WQ\\x10\\x00\\x00\\x94T\\x9eCR\\x10\\x00\\x00\\xe3\\xe52\\x92R\\x10\\x00\\x002w\\xc7\\xe0R\\x10\\x00\\x00\\x81\\x08\\\\/S\\x10\\x00\\x00\\xd0\\x99\\xf0}S\\x10\\x00\\x00\\xbdM\\xaeiT\\x10\\x00\\x00\\x0c\\xdfB\\xb8T\\x10\\x00\\x00[p\\xd7\\x06U\\x10\\x00\\x00\\xaa\\x01lUU\\x10\\x00\\x00\\xf9\\x92\\x00\\xa4U\\x10\\x00\\x00\\xe6F\\xbe\\x8fV\\x10\\x00\\x005\\xd8R\\xdeV\\x10\\x00\\x00\\x84i\\xe7,W\\x10\\x00\\x00\\xd3\\xfa{{W\\x10\\x00\\x00"\\x8c\\x10\\xcaW\\x10\\x00\\x00\\xc0\\xae9gX\\x10\\x00\\x00\\xc0\\xae9gX\\x10\\x00\\x00\\xc0\\xae9gX\\x10\\x00\\x00\\x0f@\\xce\\xb5X\\x10\'\ntbsg37\nS\'date\'\np54\nstRp55\na(lp56\ng19\n(g32\n(I0\ntS\'b\'\ntRp57\n(I1\n(L2L\nL24L\ntg22\n(S\'f8\'\nI0\nI1\ntRp58\n(I3\nS\'<\'\nNNNI-1\nI-1\nI0\ntbI00\nS\'\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\xf0\\x0c$sA\\x00\\x00\\x00\\xf0\\x0c$sA\\x00\\x00\\x00\\xf0\\x0c$sA\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\\x00\\x00\\x00\\x00\\x00\\x00\\xf8\\x7f\'\ntba(lp59\ng14\n(g15\n(dp60\ng17\n(lp61\ng19\n(g20\n(I0\ntS\'b\'\ntRp62\n(I1\n(L2L\ntg23\nI00\nS\'\\x00\\x00\'\ntbag19\n(g20\n(I0\ntS\'b\'\ntRp63\n(I1\n(L2L\ntg23\nI00\nS\'\\x00\\x01\'\ntbasg25\n(lp64\nNaNasg27\n(lp65\ng14\n(g29\n(dp66\ng31\ng19\n(g32\n(I0\ntS\'b\'\ntRp67\n(I1\n(L1L\ntg34\nI00\n(lp68\ng36\natbsg37\nNstRp69\nag14\n(g29\n(dp70\ng31\ng19\n(g32\n(I0\ntS\'b\'\ntRp71\n(I1\n(L2L\ntg34\nI00\n(lp72\ng42\nag43\natbsg37\nNstRp73\nasg45\nNstRp74\na(dp75\nS\'0.14.1\'\np76\n(dp77\nS\'axes\'\np78\ng13\nsS\'blocks\'\np79\n(lp80\n(dp81\nS\'mgr_locs\'\np82\nc__builtin__\nslice\np83\n(I0\nI2\nL1L\ntRp84\nsS\'values\'\np85\ng57\nsasstbsb.')

customers, tables = ['CUSTOMER_A', 'CUSTOMER_B'], [df1.iloc[:], df2.iloc[:]]

tables = pd.concat(tables, keys=customers, axis=1)

---------------------------------------------------------------------------

ValueError Traceback (most recent call last)

<ipython-input-22-8096f8962dec> in <module>()

6

7 customers, tables = ['CUSTOMER_A', 'CUSTOMER_B'], [df1.iloc[:], df2.iloc[:]]

----> 8 tables = pd.concat(tables, keys=customers, axis=1)

/home/code/anaconda2/lib/python2.7/site-packages/pandas/tools/merge.pyc in concat(objs, axis, join, join_axes, ignore_index, keys, levels, names, verify_integrity, copy)

833 verify_integrity=verify_integrity,

834 copy=copy)

--> 835 return op.get_result()

836

837

/home/code/anaconda2/lib/python2.7/site-packages/pandas/tools/merge.pyc in get_result(self)

1023 new_data = concatenate_block_managers(

1024 mgrs_indexers, self.new_axes,

-> 1025 concat_axis=self.axis, copy=self.copy)

1026 if not self.copy:

1027 new_data._consolidate_inplace()

/home/code/anaconda2/lib/python2.7/site-packages/pandas/core/internals.pyc in concatenate_block_managers(mgrs_indexers, axes, concat_axis, copy)

4474 for placement, join_units in concat_plan]

4475

-> 4476 return BlockManager(blocks, axes)

4477

4478

/home/code/anaconda2/lib/python2.7/site-packages/pandas/core/internals.pyc in __init__(self, blocks, axes, do_integrity_check, fastpath)

2535

2536 if do_integrity_check:

-> 2537 self._verify_integrity()

2538

2539 self._consolidate_check()

/home/code/anaconda2/lib/python2.7/site-packages/pandas/core/internals.pyc in _verify_integrity(self)

2745 for block in self.blocks:

2746 if block._verify_integrity and block.shape[1:] != mgr_shape[1:]:

-> 2747 construction_error(tot_items, block.shape[1:], self.axes)

2748 if len(self.items) != tot_items:

2749 raise AssertionError('Number of manager items must equal union of '

/home/code/anaconda2/lib/python2.7/site-packages/pandas/core/internals.pyc in construction_error(tot_items, block_shape, axes, e)

3897 raise ValueError("Empty data passed with indices specified.")

3898 raise ValueError("Shape of passed values is {0}, indices imply {1}".format(

-> 3899 passed, implied))

3900

3901

ValueError: Shape of passed values is (4, 31), indices imply (4, 25)

However if the dataframes are sliced e.g. [:10] OR [10:], the concat works:

customers, tables = ['CUSTOMER_A', 'CUSTOMER_B'], [df1.iloc[:20], df2.iloc[:20]]

tables = pd.concat(tables, keys=customers, axis=1)

tables

Output of `pd.show_versions()`

INSTALLED VERSIONS

------------------

commit: None

python: 2.7.11.final.0

python-bits: 64

OS: Linux

OS-release: 3.19.0-58-generic

machine: x86_64

processor: x86_64

byteorder: little

LC_ALL: None

LANG: en_AU.UTF-8

pandas: 0.18.0

nose: 1.3.7

pip: 8.1.1

setuptools: 20.6.7

Cython: 0.24

numpy: 1.10.4

scipy: 0.17.0

statsmodels: 0.6.1

xarray: None

IPython: 4.1.2

sphinx: 1.4.1

patsy: 0.4.1

dateutil: 2.5.2

pytz: 2016.3

blosc: None

bottleneck: 1.0.0

tables: 3.2.2

numexpr: 2.5.2

matplotlib: 1.5.1

openpyxl: 2.3.2

xlrd: 0.9.4

xlwt: 1.0.0

xlsxwriter: 0.8.4

lxml: 3.6.0

bs4: 4.4.1

html5lib: None

httplib2: None

apiclient: None

sqlalchemy: 1.0.12

pymysql: None

psycopg2: 2.6.1 (dt dec pq3 ext)

jinja2: 2.8

boto: 2.39.0

|

When concatting two dataframes where there are a) there are duplicate columns

in one of the dataframes, and b) there are non-overlapping column names in

both, then you get a IndexError:

In [9]: df1 = pd.DataFrame(np.random.randn(3,3), columns=['A', 'A', 'B1'])

...: df2 = pd.DataFrame(np.random.randn(3,3), columns=['A', 'A', 'B2'])

In [10]: pd.concat([df1, df2])

Traceback (most recent call last):

File "<ipython-input-10-f61a1ab4009e>", line 1, in <module>

pd.concat([df1, df2])

...

File "c:\users\vdbosscj\scipy\pandas-joris\pandas\core\index.py", line 765, in take

taken = self.view(np.ndarray).take(indexer)

IndexError: index 3 is out of bounds for axis 0 with size 3

I don't know if it should work (although I suppose it should, as with only the

duplicate columns it does work), but at least the error message is not really

helpfull.

| 1 |

This log is showing frequently if a gif image in Recyclerview is not visible

yet. But if gif image is visible the log stop? I use it without specifying it

if its asBitmap or asGif because I want to allow the user to be able to put

jpg or gif and load it to recyclerview.

|

**Glide Version/Integration library (if any)** :3.6.1 with okhttp-

integration:1.3.1@aar

**Device/Android Version** : Nexus 5X, v.6.0.

**Issue details/Repro steps/Use case background** :

I'm using Glide for displaying images in RecyclerView and in

FragmentStatePagerAdapter. On pre M versions of Android everything is going as

expected. I did not faced any issues mentioned in

https://github.com/bumptech/glide/wiki/Resource-re-use-in-Glide. But on the

Android 6 i'm getting this warning when scrolling the RecyclerView or

FragmentPages.

W/Bitmap: Called reconfigure on a bitmap that is in use! This may cause graphical corruption!

I put a method onViewRecycled to the RecyclerView adapter, and increased RV

pool size. It seems to be helping, but not completely. I'm still have this

problem in the FragmentPager, and when I return to the RecyclerView by

pressing back button, the warnings are back (I guess that can probably be

fixed by increasing RV pool size in onResume).

I'm loading the images from device as bitmaps. Can this be the source of the

issue?

**Glide load line** :

Glide.with(context)

.load(Uri.parse(filepath))

.asBitmap()

.centerCrop()

.diskCacheStrategy(DiskCacheStrategy.ALL)

.into(holder.guideCover);

I love to use Glide because is super fast. The application is running as

expected, hoverer I'm a bit nervous of those warning messages.

What I'm doing wrong?

| 1 |

The following code snippet emits a very odd error message (requires the GSL

library):

type gsl_error_handler_t end

custom_gsl_error_handler = convert(Ptr{gsl_error_handler_t}, cfunction(error, Void, ()))

function set_error_handler(new_handler::Ptr{gsl_error_handler_t})

output_ptr = ccall( (:gsl_set_error_handler, :libgsl),

Ref{gsl_error_handler_t}, #<--- User error: should be Ptr{...}

(Ptr{gsl_error_handler_t}, ), new_handler )

end

set_error_handler(custom_gsl_error_handler)

Result:

ERROR: UndefVarError: result not defined

in include_from_node1 at ./loading.jl:316

in process_options at ./client.jl:275

in _start at ./client.jl:375

`result` is not defined in this code, nor is it ever used at the user level,

so this error is rather cryptic.

Observed on release-0.4 and master.

|

### TL;DR

This is a runtime debug option proposed by @carnaval to catch common misuse of

`ccall` which could otherwise cause corruption that's hard to debug.

### The problem

> ...... when someone trashed a tag. Often happens in pools with OOB stores,

> ...... Unfortunately it's

> the only "downside" of having an almost too easy C FFI.

> \-- Oscar Blumberg (@carnaval) at #11945 (comment) #11945 (comment)

The issue here is that it is very/too easy to pass a pointer to an object to a

C function with `ccall`. This is one of the awesomeness of Julia. However, if

the user makes a mistake about the C api, commonly not allocating enough

memory, the c function might write to memory that is not meant to be written

which could corrupt the GC managed memory and crashes with spectacular

backtrace (pages of `push_root`).

This happens surprisingly often. It can be caused by making mistake on the

c-interface or `ccall` (#11945), upstream abi breakage

(JuliaInterop/ZMQ.jl#83) or just not careful enough on refactoring

(JuliaGPU/OpenCL.jl#65) (I'm only aware of these but there's probably

more...).

This kind of issue is also relatively hard to debug as they happens randomly

and usually crashes in totally unrelated places. With @carnaval 's guide on GC

debugging, it is not impossibly to figure out the issue but is nontheless much

harder than using `ccall` (unless, of course, if someone can somehow integrate

lldb (or sth else) in julia).

### Solution

It's very hard (if not impossible) to detect this issue ahead of time but it

would really help debugging and fixing the issue if the error is raised as

early as possible (at the `ccall` site). One way to do that, proposed by

@carnaval , is to allocate some extra memory with known random content for

each object and check after each ccall to make sure the c function didn't

modify them.

### Implementation

AFAICT, one (only?) missing part for implementing this is to figure out on

which objects/memory regions this check should be performed. There are several

possibilities that I can think of:

1. Do it statically in codegen.

This is hard because `ccall` only see the pointer after `unsafe_convert` (or

the user may even convert using `pointer` directly).

2. Scan every objects.

This should be doable and should be relatively easy to implement (just add an

extra call into the GC after each `ccall` site). The obvious problem would be

performance. This can probably be implemented as a more aggressive mode to

catch corner cases but should probably not be the default one.

3. Let the GC figure out if the pointer should be sanitized.

I think this is the best compromise between performance and implementation

difficulty. The idea is to use the machinery for conservative stack scan to

figure out if a pointer is pointing to a GC object. The codegen will just pass

all pointer arguments to the GC and do all the work there. An additional

benefit of this is that it can be used to verify that the object is properly

rooted.

### Other concern

* Stack allocated memory

Currently only limited to the `&` `ccall` operator and can be special cased.

If we have more general stack allocation of mutable object, we can probably

keep track of them in this mode the same way we keep the gc frame.

* Concurrency

In order to support multiple thread using the same object, the random data in

the padding should probably be assigned at allocation time and never changes

afterword (i.e. cannot be filled just before `ccall`).

* Mixing generated code with and without option (e.g. running in this mode with sysimg generated without such option)

The GC should keep track of whether each object has padding allocated (heap

allocated ones should be consistent, stack allocation might need more detail

tracking) and do the check accordingly.

| 1 |

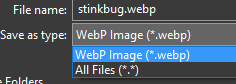

Running TensorBoard r0.9 results in graph visualizations as expected but all

events and histograms that successfully displayed in r0.8 are not.

Has r0.9 introduced a change to the command line that should be used to launch

TensorBoard, or to the code needed to generate events and histograms for

TensorBoard to display?

Note that neither new summaries and histograms written with recent runs using

r0.9 TensorFlow, nor existing ones written (and displayed) in the past, are

displayed. Graphs generated with both releases display as expected.

### Environment info

Operating System: OS X 10.11.5

TensorFlow: .9.0rc0

### Steps to reproduce

`tensorboard --logdir ./tflog --purge_orphaned_data`

### What have you tried?

Reverting to r0.8, which works as it originally did, displaying all events and

histograms present in the `tflog`directory provided as the `logdir`.

Reinstalling r0.9, which reproduces the error. A second attempt at

reinstalling r0.8 resulted in a TensorBoard that displays jus the menu (no

content) and uses a different style (a serif face).

|

Since about a week now there seems something wrong with the TensorBoard demo

at https://www.tensorflow.org/tensorboard/index.html#events. The graph shows

nicely, but neither events nor histograms show up. This problem seems to be

only with the demo -- everything shows up just fine when I run the

corresponding code (`mnist_tensorboard.py`) locally.

| 1 |

## Steps to Reproduce

I have a project when I try to run a drive test, I get

[drive-test]

flutter drive -t test_driver/all.dart

Using device Pixel XL.

Starting application: test_driver/all.dart

Initializing gradle...

Resolving dependencies...

Installing build/app/outputs/apk/app.apk...

Running 'gradlew assembleDebug'...

FAILURE: Build failed with an exception.

* What went wrong:

Execution failed for task ':app:transformClassesWithJarMergingForDebug'.

> com.android.build.api.transform.TransformException: java.util.zip.ZipException: duplicate entry: com/google/android/gms/common/internal/zzq.class

* Try:

Run with --stacktrace option to get the stack trace. Run with --info or --debug option to get more log output.

Gradle build failed: 1

failed with exit code 1

I haven't found a `--stacktrace` option.

Perhaps

--[no-]trace-startup Start tracing during startup.

from `flutter drive -h` is meant?

## Logs

Run your application with `flutter run` and attach all the log output.

Run `flutter analyze` and attach any output of that command also.

## Flutter Doctor

(issue376_save_image) $ flutter doctor

[✓] Flutter (on Mac OS X 10.13.1 17B48, locale en-AT, channel alpha)

• Flutter at /Users/zoechi/flutter/flutter

• Framework revision e8aa40eddd (5 weeks ago), 2017-10-17 15:42:40 -0700

• Engine revision 7c4142808c

• Tools Dart version 1.25.0-dev.11.0

[✓] Android toolchain - develop for Android devices (Android SDK 27.0.1)

• Android SDK at /usr/local/opt/android-sdk

• Platform android-27, build-tools 27.0.1

• ANDROID_HOME = /usr/local/opt/android-sdk

• Java binary at: /Applications/Android Studio.app/Contents/jre/jdk/Contents/Home/bin/java

• Java version OpenJDK Runtime Environment (build 1.8.0_152-release-915-b08)

[✓] iOS toolchain - develop for iOS devices (Xcode 9.1)

• Xcode at /Applications/Xcode.app/Contents/Developer

• Xcode 9.1, Build version 9B55

• ios-deploy 1.9.2

• CocoaPods version 1.3.1

[✓] Android Studio (version 3.0)

• Android Studio at /Applications/Android Studio.app/Contents

• Java version OpenJDK Runtime Environment (build 1.8.0_152-release-915-b08)

[✓] IntelliJ IDEA Ultimate Edition (version 2017.2.6)

• Flutter plugin version 19.1

• Dart plugin version 172.4343.25

[✓] Connected devices

• Pixel XL • HT69V0203649 • android-arm • Android 8.0.0 (API 26)

|

**Issue byafandria**

_Tuesday Aug 11, 2015 at 18:32 GMT_

_Originally opened ashttps://github.com/flutter/engine/issues/559_

* * *

Building a Standalone APK instructions are not clear enough.

It has been inconvenient to only be able to start my Sky app via `sky_tool`,

so I wanted to build my own APK. Unfortunately, the link to the stocks/example

does not have a `README.md` for how to build it. It appears that the

`BUILD.gn`, `sky.yaml`, and `apk/AndroidManifest.xml` files are important to

the process, but I am not sure what command(s) are needed to produce the APK.

| 0 |

# Checklist

* I have read the relevant section in the

contribution guide

on reporting bugs.

* I have checked the issues list

for similar or identical bug reports.

* I have checked the pull requests list

for existing proposed fixes.

* I have checked the commit log

to find out if the bug was already fixed in the master branch.

* I have included all related issues and possible duplicate issues

in this issue (If there are none, check this box anyway).

## Mandatory Debugging Information

* I have included the output of `celery -A proj report` in the issue.

(if you are not able to do this, then at least specify the Celery

version affected).

* I have verified that the issue exists against the `master` branch of Celery.

* I have included the contents of `pip freeze` in the issue.

* I have included all the versions of all the external dependencies required

to reproduce this bug.

## Optional Debugging Information

* I have tried reproducing the issue on more than one Python version

and/or implementation.

* I have tried reproducing the issue on more than one message broker and/or

result backend.

* I have tried reproducing the issue on more than one version of the message

broker and/or result backend.

* I have tried reproducing the issue on more than one operating system.

* I have tried reproducing the issue on more than one workers pool.

* I have tried reproducing the issue with autoscaling, retries,

ETA/Countdown & rate limits disabled.

* I have tried reproducing the issue after downgrading

and/or upgrading Celery and its dependencies.

## Related Issues and Possible Duplicates

#### Related Issues

* None

#### Possible Duplicates

* None

## Environment & Settings

**Celery version** :

**`celery report` Output:**

[root@shiny ~]# celery report

software -> celery:4.4.0rc2 (cliffs) kombu:4.6.3 py:3.6.8

billiard:3.6.0.0 py-amqp:2.5.0

platform -> system:Linux arch:64bit

kernel version:4.19.13-200.fc28.x86_64 imp:CPython

loader -> celery.loaders.default.Loader

settings -> transport:amqp results:disabled

# Steps to Reproduce

## Required Dependencies

* **Minimal Python Version** : N/A or Unknown

* **Minimal Celery Version** : N/A or Unknown

* **Minimal Kombu Version** : N/A or Unknown

* **Minimal Broker Version** : N/A or Unknown

* **Minimal Result Backend Version** : N/A or Unknown

* **Minimal OS and/or Kernel Version** : N/A or Unknown

* **Minimal Broker Client Version** : N/A or Unknown

* **Minimal Result Backend Client Version** : N/A or Unknown

### Python Packages

**`pip freeze` Output:**

[root@shiny ~]# pip3 freeze

amqp==2.5.0

anymarkup==0.7.0

anymarkup-core==0.7.1

billiard==3.6.0.0

celery==4.4.0rc2

configobj==5.0.6

gpg==1.10.0

iniparse==0.4

json5==0.8.4

kombu==4.6.3

pygobject==3.28.3

python-qpid-proton==0.28.0

pytz==2019.1

PyYAML==5.1.1

pyzmq==18.0.1

redis==3.2.1

rpm==4.14.2

six==1.11.0

smartcols==0.3.0

toml==0.10.0

ucho==0.1.0

vine==1.3.0

xmltodict==0.12.0

### Other Dependencies

N/A

## Minimally Reproducible Test Case

# Expected Behavior

Updating task_routes during runtime is possible and has effect

# Actual Behavior

Updating `task_routes` during runtime does not have effect - the config is

updated but the `router` in `send_task` seems to be reusing old configuration.

import celery

c = celery.Celery(broker='redis://localhost:6379/0',

backend='redis://localhost:6379/0')

c.conf.update(task_routes={'task.create_pr': 'queue.betka'})

c.send_task('task.create_pr')

print(c.conf.get('task_routes'))

c.conf.update(task_routes={'task.create_pr': 'queue.ferdinand'})

c.send_task('task.create_pr')

print(c.conf.get('task_routes'))

Output:

[root@shiny ~]# python3 repr.py

{'task.create_pr': 'queue.betka'}

{'task.create_pr': 'queue.ferdinand'}

So the configuration is updated but it seems the routes are still pointing to

queue.betka, since both tasks are sent to queue.betka and queue.ferdinand

didn't receive anything.

betka_1 | [2019-06-24 14:50:41,386: INFO/MainProcess] Received task: task.create_pr[54b28121-28cf-4301-b6f2-185d2e7c50cb]

betka_1 | [2019-06-24 14:50:41,386: DEBUG/MainProcess] TaskPool: Apply <function _fast_trace_task at 0x7fca7f4d6a60> (args:('task.create_pr', '54b28121-28cf-4301-b6f2-185d2e7c50cb', {'lang': 'py', 'task': 'task.create_pr', 'id': '54b28121-28cf-4301-b6f2-185d2e7c50cb', 'shadow': None, 'eta': None, 'expires': None, 'group': None, 'retries': 0, 'timelimit': [None, None], 'root_id': '54b28121-28cf-4301-b6f2-185d2e7c50cb', 'parent_id': None, 'argsrepr': '()', 'kwargsrepr': '{}', 'origin': 'gen68@shiny', 'reply_to': 'b7be085a-b1f8-3738-b65f-963a805f2513', 'correlation_id': '54b28121-28cf-4301-b6f2-185d2e7c50cb', 'delivery_info': {'exchange': '', 'routing_key': 'queue.betka', 'priority': 0, 'redelivered': None}}, b'[[], {}, {"callbacks": null, "errbacks": null, "chain": null, "chord": null}]', 'application/json', 'utf-8') kwargs:{})

betka_1 | [2019-06-24 14:50:41,387: INFO/MainProcess] Received task: task.create_pr[3bf8b0fb-cb4a-412b-84d4-1a52b794b4e0]

betka_1 | [2019-06-24 14:50:41,388: DEBUG/MainProcess] Task accepted: task.create_pr[54b28121-28cf-4301-b6f2-185d2e7c50cb] pid:12

betka_1 | [2019-06-24 14:50:41,390: INFO/ForkPoolWorker-1] Task task.create_pr[54b28121-28cf-4301-b6f2-185d2e7c50cb] succeeded in 0.002012896991800517s: 'Maybe later :)'

betka_1 | [2019-06-24 14:50:41,390: DEBUG/MainProcess] TaskPool: Apply <function _fast_trace_task at 0x7fca7f4d6a60> (args:('task.create_pr', '3bf8b0fb-cb4a-412b-84d4-1a52b794b4e0', {'lang': 'py', 'task': 'task.create_pr', 'id': '3bf8b0fb-cb4a-412b-84d4-1a52b794b4e0', 'shadow': None, 'eta': None, 'expires': None, 'group': None, 'retries': 0, 'timelimit': [None, None], 'root_id': '3bf8b0fb-cb4a-412b-84d4-1a52b794b4e0', 'parent_id': None, 'argsrepr': '()', 'kwargsrepr': '{}', 'origin': 'gen68@shiny', 'reply_to': 'b7be085a-b1f8-3738-b65f-963a805f2513', 'correlation_id': '3bf8b0fb-cb4a-412b-84d4-1a52b794b4e0', 'delivery_info': {'exchange': '', 'routing_key': 'queue.betka', 'priority': 0, 'redelivered': None}}, b'[[], {}, {"callbacks": null, "errbacks": null, "chain": null, "chord": null}]', 'application/json', 'utf-8') kwargs:{})

betka_1 | [2019-06-24 14:50:41,391: DEBUG/MainProcess] Task accepted: task.create_pr[3bf8b0fb-cb4a-412b-84d4-1a52b794b4e0] pid:12

betka_1 | [2019-06-24 14:50:41,391: INFO/ForkPoolWorker-1] Task task.create_pr[3bf8b0fb-cb4a-412b-84d4-1a52b794b4e0] succeeded in 0.0006862019945401698s: 'Maybe later :)'

Note: I managed to workaround it by adding `del c.amqp` right after update for

now

|

# Checklist

* I have read the relevant section in the

contribution guide

on reporting bugs.

* I have checked the issues list

for similar or identical bug reports.

* I have checked the pull requests list

for existing proposed fixes.

* I have checked the commit log

to find out if the bug was already fixed in the master branch.

* I have included all related issues and possible duplicate issues

in this issue (If there are none, check this box anyway).

## Related Issues and Possible Duplicates

#### Related Issues

* None

#### Possible Duplicates

* None

## Environment & Settings

**Celery version** : celery==4.2.1

**`celery report` Output:**

job.save(backend=tasks.cel.backend)

File "/Users/shaunak/instabase-repo/instabase/venv/lib/python2.7/site-packages/celery/result.py", line 887, in save

return (backend or self.app.backend).save_group(self.id, self)

File "/Users/shaunak/instabase-repo/instabase/venv/lib/python2.7/site-packages/celery/backends/base.py", line 399, in save_group

return self._save_group(group_id, result)

AttributeError: 'CassandraBackend' object has no attribute '_save_group'

# Steps to Reproduce

1. Start celery with Cassandra as a backend

import celery

import time

cel = celery.Celery(

'experiments',

backend='cassandra://',

broker='amqp://localhost:5672'

)

cel.conf.update(

CELERYD_PREFETCH_MULTIPLIER = 1,

CELERY_REJECT_ON_WORKER_LOST=True,

CELERY_TASK_REJECT_ON_WORKER_LOST=True,

CASSANDRA_SERVERS=['localhost'],

)

2. Create a group and try to save the result

job = group_sig.delay()

job.save(backend=tasks.cel.backend)

# Expected Behavior

We expect that the result will be saved without throwing an error.

# Actual Behavior

We see the following error:

job.save(backend=tasks.cel.backend)

File "/Users/shaunak/instabase-repo/instabase/venv/lib/python2.7/site-packages/celery/result.py", line 887, in save

return (backend or self.app.backend).save_group(self.id, self)

File "/Users/shaunak/instabase-repo/instabase/venv/lib/python2.7/site-packages/celery/backends/base.py", line 399, in save_group

return self._save_group(group_id, result)

AttributeError: 'CassandraBackend' object has no attribute '_save_group'

| 0 |

**Do you want to request a _feature_ or report a _bug_?**

Bug

**What is the current behavior?**

DevTools extension does not persist state. For example, the “Welcome” dialog

displays upon every refresh.

**If the current behavior is a bug, please provide the steps to reproduce and

if possible a minimal demo of the problem. Your bug will get fixed much faster

if we can run your code and it doesn't have dependencies other than React.

Paste the link to your JSFiddle (https://jsfiddle.net/Luktwrdm/) or

CodeSandbox (https://codesandbox.io/s/new) example below:**

1. Open React DevTools in a React app.

2. Change DevTools settings.

3. Refresh app in browser.

**What is the expected behavior?**

Settings should be changed.

**Which versions of React, and which browser / OS are affected by this issue?

Did this work in previous versions of React?**

This is in a corporate install of Chrome 71. It’s possible that it blocks

whichever persistence API React DevTools is using (Chrome DevTools itself

persists settings successfully).

|

**Do you want to request a _feature_ or report a _bug_?**

Bug

**What is the current behavior?**

“Welcome to the new React DevTools!” message blocks the devtool panel every

time the it is opened.

**If the current behavior is a bug, please provide the steps to reproduce and

if possible a minimal demo of the problem. Your bug will get fixed much faster

if we can run your code and it doesn't have dependencies other than React.

Paste the link to your JSFiddle (https://jsfiddle.net/Luktwrdm/) or

CodeSandbox (https://codesandbox.io/s/new) example below:**

1. Open a website with React with DevTools installed.

2. Open the Component tab.

3. Dismiss the welcome screen.

4. Close the devtools and open it again.

**What is the expected behavior?**

Dismissing the “Welcome to the new React DevTools!” message should be

permanent.

**Which versions of React, and which browser / OS are affected by this issue?

Did this work in previous versions of React?**

DevTools: 4.0.5

Chrome: 77.0.3865.35

| 1 |

Getting the following error upon loading:

`Uncaught SyntaxError: Duplicate data property in object literal not allowed

in strict mode -- From line ... in .../bundle.js`

App runs well in modern browsers on PC/Mac, iOS UIWebview, mobile Safari,

Android (also 4.x) Chrome, and Android >5.x.

Consulting this table shows Android 4.4 supports ES5 well and should run

React.js applications just fine.

Any ideas?

|

React / ReactDOM: 16.4.2

I'm having trouble reproducing this, but I'm raising it in the hope that

someone can give me some clues as to the cause. I have a large application

which is using `Fragment` in a few places, like so:

<React.Fragment>

<div>Child 1-1</div>

<div>Child 1-2</div>

</React.Fragment>

<React.Fragment>

<div>Child 2-1</div>

<div>Child 2-2</div>

</React.Fragment>

In everything but Internet Explorer, this renders as you'd expect:

<div>Child 1-1</div>

<div>Child 1-2</div>

<div>Child 2-1</div>

<div>Child 2-2</div>

However, for some reason in Internet Explorer 11, these are being rendered as

some weird tags:

<jscomp_symbol_react.fragment16>

<div>Child 1-1</div>

<div>Child 1-2</div>

</jscomp_symbol_react.fragment16>

<jscomp_symbol_react.fragment16>

<div>Child 2-1</div>

<div>Child 2-2</div>

</jscomp_symbol_react.fragment16>

I've tried pausing the code at the transpiled

`_react.createElement(_react.Fragment)` line, and the `_react.Fragment` export

is a string with the same name as the tag (`jscomp_symbol_react.fragment16`).

I think this is just the correct way in which the symbol polyfill works and

that React should recognize it as something other than an HTML tag.

What's even weirder is that this only happens sometimes. If two components in

my app are using fragments, the first one to render may have the above issue,

the second may not. If an affected component re-renders, the rendered DOM will

be corrected. I haven't found a solid pattern to this yet.

I have a fairly typical webpack + babel setup, and using the babel-polyfill

for symbol support. I'm really not sure what parts of my setup are relevant to

this so please let me know if you need any extra info. Again, I'm trying to

create a reproduction outside of my application but if anyone can offer me

some clues in the meantime I'd be incredibly grateful.

| 0 |

axios/lib/core/mergeConfig.js

Line 19 in 16b5718

| var mergeDeepPropertiesKeys = ['headers', 'auth', 'proxy', 'params'];

---|---

keys in mergeDeepPropertiesKeys will be merged by utils.deepMerge but

utils.deepMerge can only merge objects. Array type field will be converted

like {‘0’: 'a', '1': 'b'}, no longer an array.

|

### Describe the issue

When I read the 'Axios' source code, I found that' utils. deepMerge() 'will

convert arrays to objects, which is unreasonable.

https://github.com/axios/axios/blob/master/lib/utils.js#L286

### Code

var deepMerge = require('./lib/utils').deepMerge;

var a = {foo: {bar: 111}, hnd: [444, 555, 666]};

var b = {foo: {baz: 456}, hnd: [456, 789]};

console.log(deepMerge(a, b));

### result

{ foo: { bar: 111, baz: 456 }, hnd: { '0': 456, '1': 789, '2': 666 } }

### Expected behavior

Executing 'utils.deepMerge()' does not change the data type.

{ foo: { bar: 111, baz: 456 }, hnd: [ 456, 789, 666 ] }

### Environment:

Axios Version 0.19.0

| 1 |

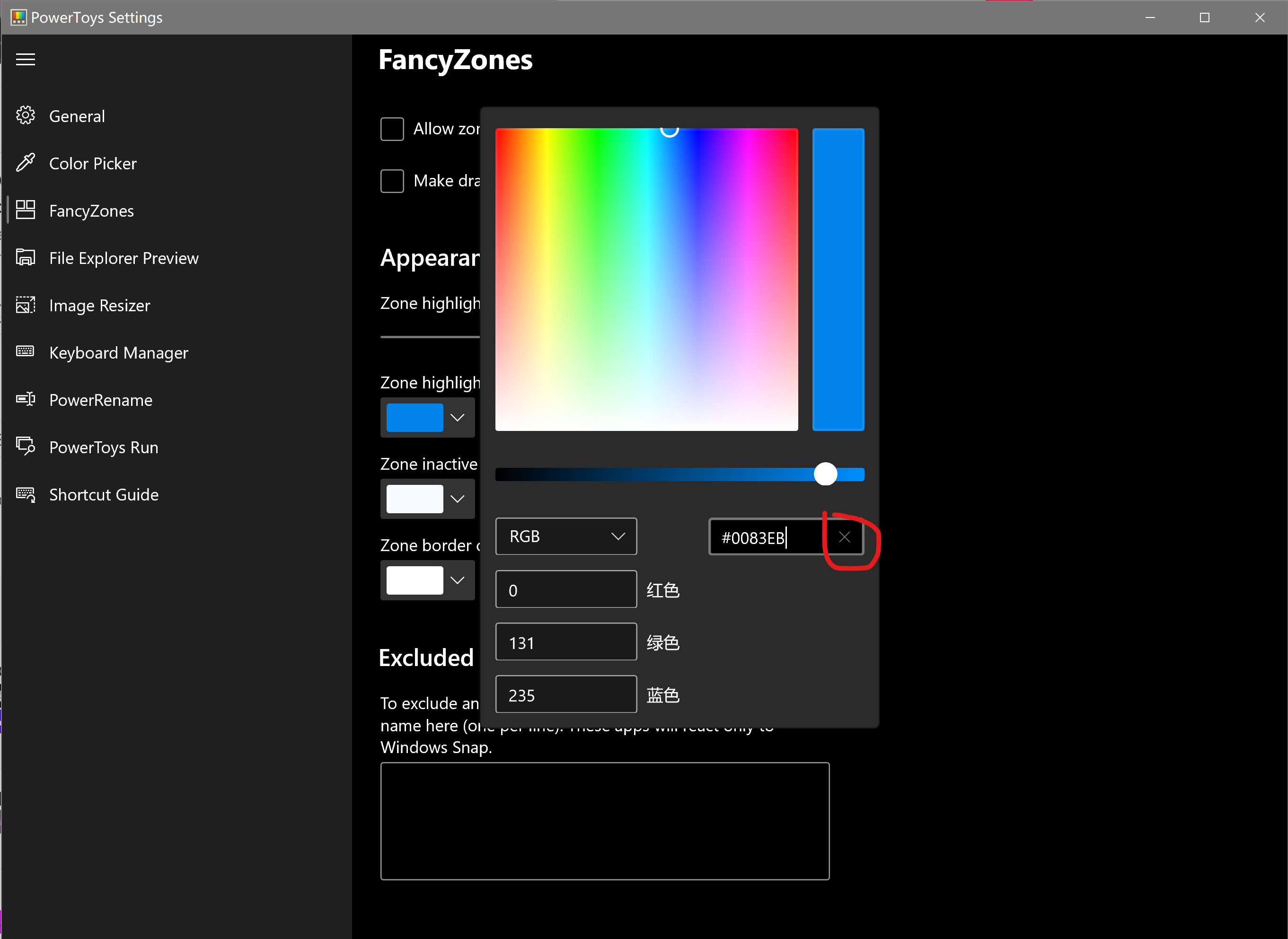

# Environment

Windows build number: Microsoft Windows [Version 10.0.19042.330]

PowerToys version: v0.19.1

PowerToy module for which you are reporting the bug (if applicable): FancyZones

# Steps to reproduce

1. Requires 2 monitors

1a. May require using a non-admin account

2. Show taskbar buttons on: Taskbar where window is open

3. Open Windows Terminal and use FancyZones to "snap" to zone on non-default monitor

4. Reboot computer (or possibly just sign out and back in)

5. Open Windows Terminal

# Expected behavior

Windows Terminal taskbar button and window will both appear on the non-default

monitor. Window will be "snapped" to same zone as before.

# Actual behavior

Windows Terminal taskber button appears on the default monitor while the

window will be "snapped" to the correct zone on the non-default monitor.

|

By default new windows are opened on primary monitor (some application

introduce their own special positioning). If we have zone history for some

application on secondary monitor, and that monitor is active, application

window will be opened there, otherwise we fallback to default windows behavior

and that window is opened on primary monitor.

Even if we don't have app history for that application on active monitor, we

should open it there, with respect of window width and height.

| 1 |

Operating system:XP W7-32

用bootstrap框架开发出的页面,在浏览器上下拉,翻页等都能实现,用electron不能,这是为什么,请教

|

jQuery contains something along this lines:

if ( typeof module === "object" && typeof module.exports === "object" ) {

// set jQuery in `module`

} else {

// set jQuery in `window`

}

module is defined, even in the browser-side scripts. This causes jQuery to

ignore the `window` object and use `module`, so the other scripts won't find

`$` nor `jQuery` in global scope..

I am not sure if this is a jQuery or atom-shell bug, but I wanted to put this

on the web, so others won't search as long as I did.

| 1 |

In the same vein as #1107, it would be super useful (at least to me) to have

the openapi models be available outside of FastAPI. I've been working on a

Python client generator (built with Typer!) which will hopefully be much

cleaner than the openapi-generator version, and I already use FastAPI for some

testing.

I don't want to ship a copy of FastAPI with my tool just to get the openapi

module, but it also feels silly to be rewriting a lot of what you already have

when I could just parse a spec into your models and work from there. So what

I'll probably do is extract a copy of the openapi module and use it (with

proper attribution of course), but it would be much cleaner to add your module

as a pip dependency.

https://github.com/triaxtec/openapi-python-client is the project in question,

just FYI.

|

### First Check

* I added a very descriptive title to this issue.

* I used the GitHub search to find a similar issue and didn't find it.

* I searched the FastAPI documentation, with the integrated search.

* I already searched in Google "How to X in FastAPI" and didn't find any information.

* I already read and followed all the tutorial in the docs and didn't find an answer.

* I already checked if it is not related to FastAPI but to Pydantic.

* I already checked if it is not related to FastAPI but to Swagger UI.

* I already checked if it is not related to FastAPI but to ReDoc.

### Commit to Help

* I commit to help with one of those options 👆

### Example Code

###This API takes id in request , creates temp path in container , searches path for this id in database and copies file from AWS s3b for this id in temp created path, does ML processing and deletes the temp path then returns the predicted data

from datetime import datetime

import uvicorn