html_url stringlengths 51 51 | title stringlengths 6 280 | comments stringlengths 67 24.7k | body stringlengths 51 36.2k | __index_level_0__ int64 1 1.17k | comment_length int64 16 1.45k | text stringlengths 190 38.3k | embeddings list |

|---|---|---|---|---|---|---|---|

https://github.com/huggingface/datasets/issues/5437 | Can't load png dataset with 4 channel (RGBA) | Hi! Can you please share the directory structure of your image folder and the `load_dataset` call? We decode images with Pillow, and Pillow supports RGBA PNGs, so this shouldn't be a problem.

| I am trying to create a dataset from about 9000 PNG images, 64x64 in size, all 4-channel (RGBA). When I call load_dataset(), the dataset is created from only 2 images. I cannot figure out what is interfering.

I am trying to create a dataset from about 9000 PNG images, 64x64 in size, all 4-channel (RGBA). When I call load_dataset(), the dataset is created from only 2 images. I cannot figure out what is interfering. | > Hi! Can you please share the directory structure of your image folder and the `load_dataset` call? We decode images with Pillow, and Pillow supports RGBA PNGs, so this shouldn't be a problem.

>

>

I have only 1 folder that I use in the load_dataset function with the name "IMGDATA" and all my 9000 images are located... | I am trying to create a dataset from about 9000 PNG images, 64x64 in size, all 4-channel (RGBA). When I call load_dataset(), the dataset is created from only 2 images. I cannot figure out what is interfering.

I am trying to create a dataset from about 9000 PNG images, 64x64 in size, all 4-channel (RGBA). When I call load_dataset(), the dataset is created from only 2 images. I cannot figure out what is interfering. | Okay, I figured out what was wrong. When uploading my dataset via Google Drive, the images broke and Pillow couldn't open them. As a result, I solved the problem by downloading the ZIP archive | I am trying to create a dataset from about 9000 PNG images, 64x64 in size, all 4-channel (RGBA). When I call load_dataset(), the dataset is created from only 2 images. I cannot figure out what is interfering.

I am trying to create a dataset from about 9000 PNG images, 64x64 in size, all 4-channel (RGBA). When I call load_dataset(), the dataset is created from only 2 images. I cannot figure out what is interfering., it states:

> Using take (or skip) prevents future calls to shuffle from shuffling the dataset shards order, otherwise the taken examples cou... | 337 | 77 | Wrong statement in "Load a Dataset in Streaming mode" leads to data leakage

### Describe the bug

In the [Split your dataset with take and skip](https://huggingface.co/docs/datasets/v1.10.2/dataset_streaming.html#split-your-dataset-with-take-and-skip), it states:

> Using take (or skip) prevents future calls to shu... | [

-1.1909445524215698,

-0.9180267453193665,

-0.6570744514465332,

1.3565850257873535,

-0.13932180404663086,

-1.3442559242248535,

0.1825108677148819,

-1.1079790592193604,

1.7494699954986572,

-0.7056476473808289,

0.19381839036941528,

-1.7175660133361816,

-0.045251257717609406,

-0.55962181091308... |
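For the RGBA PNG issue above, where the files turned out to be corrupted in transit, a quick integrity scan before calling `load_dataset` can locate the broken files. The following is a minimal sketch using only the standard library: it checks the PNG signature and each chunk's CRC rather than fully decoding the image, so it is a cheaper and weaker check than opening the file with Pillow. The function names `png_is_intact` and `find_broken_pngs` are hypothetical helpers, not part of `datasets` or Pillow.

```python
import struct
import zlib
from pathlib import Path

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def png_is_intact(data: bytes) -> bool:
    """Return True if the bytes look like a complete PNG with valid chunk CRCs."""
    if not data.startswith(PNG_SIGNATURE):
        return False
    pos = len(PNG_SIGNATURE)
    # Each chunk is: 4-byte length, 4-byte type, payload, 4-byte CRC.
    while pos + 12 <= len(data):
        (length,) = struct.unpack(">I", data[pos:pos + 4])
        chunk_type = data[pos + 4:pos + 8]
        end = pos + 8 + length
        if end + 4 > len(data):
            return False  # file is truncated inside this chunk
        (stored_crc,) = struct.unpack(">I", data[end:end + 4])
        # The CRC covers the chunk type and the payload, not the length field.
        if zlib.crc32(data[pos + 4:end]) != stored_crc:
            return False
        if chunk_type == b"IEND":
            return True
        pos = end + 4
    return False  # ran out of bytes before reaching IEND


def find_broken_pngs(folder):
    """Hypothetical helper: list .png files under `folder` that fail the check."""
    return sorted(
        path for path in Path(folder).rglob("*.png")
        if not png_is_intact(path.read_bytes())
    )
```

Calling `find_broken_pngs("IMGDATA")` on the image folder would return the paths worth re-downloading; a file that passes this check can still fail to decode, so opening it with Pillow remains the authoritative test.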

https://github.com/huggingface/datasets/issues/5435 | Wrong statement in "Load a Dataset in Streaming mode" leads to data leakage | Also note that you are referring to an outdated documentation page: datasets 1.10.2 version

Current datasets version is 2.8.0 and the corresponding documentation page is: https://huggingface.co/docs/datasets/stream#split-dataset | ### Describe the bug

In the [Split your dataset with take and skip](https://huggingface.co/docs/datasets/v1.10.2/dataset_streaming.html#split-your-dataset-with-take-and-skip), it states:

> Using take (or skip) prevents future calls to shuffle from shuffling the dataset shards order, otherwise the taken examples cou... | 337 | 26 | Wrong statement in "Load a Dataset in Streaming mode" leads to data leakage

### Describe the bug

In the [Split your dataset with take and skip](https://huggingface.co/docs/datasets/v1.10.2/dataset_streaming.html#split-your-dataset-with-take-and-skip), it states:

> Using take (or skip) prevents future calls to shu... | [

-1.1905205249786377,

-0.9313532114028931,

-0.6946508884429932,

1.361343264579773,

-0.11745374649763107,

-1.321941614151001,

0.13184931874275208,

-1.0772746801376343,

1.7131266593933105,

-0.6895366311073303,

0.1830805540084839,

-1.7229007482528687,

-0.07875096797943115,

-0.5333200693130493,... |

https://github.com/huggingface/datasets/issues/5435 | Wrong statement in "Load a Dataset in Streaming mode" leads to data leakage | Hi @albertvillanova thanks for your reply and your explanation here.

Sorry for the confusion: I'm not actually a user of your repo; I just happened to find the thread through Google (and didn't read it carefully).

Good to know, and you have made everything very clear now.

Thanks for your time and sorry for the co... | ### Describe the bug

In the [Split your dataset with take and skip](https://huggingface.co/docs/datasets/v1.10.2/dataset_streaming.html#split-your-dataset-with-take-and-skip), it states:

> Using take (or skip) prevents future calls to shuffle from shuffling the dataset shards order, otherwise the taken examples cou... | 337 | 63 | Wrong statement in "Load a Dataset in Streaming mode" leads to data leakage

### Describe the bug

In the [Split your dataset with take and skip](https://huggingface.co/docs/datasets/v1.10.2/dataset_streaming.html#split-your-dataset-with-take-and-skip), it states:

> Using take (or skip) prevents future calls to shu... | [

-1.239044189453125,

-0.9613012671470642,

-0.6822177171707153,

1.3689988851547241,

-0.1253994107246399,

-1.336166262626648,

0.13282009959220886,

-1.119757890701294,

1.6800132989883423,

-0.725843071937561,

0.20270109176635742,

-1.6984111070632935,

-0.028832409530878067,

-0.5352584719657898,

... |

https://github.com/huggingface/datasets/issues/5433 | Support latest Docker image in CI benchmarks | Sorry, it was us:[^1] https://github.com/iterative/cml/pull/1317 & https://github.com/iterative/cml/issues/1319#issuecomment-1385599559; should be fixed with [v0.18.17](https://github.com/iterative/cml/releases/tag/v0.18.17).

[^1]: More or less, see https://github.com/yargs/yargs/issues/873. | Once we find out the root cause of:

- #5431

we should revert the temporary pin on the Docker image version introduced by:

- #5432 | 339 | 18 | Support latest Docker image in CI benchmarks

Once we find out the root cause of:

- #5431

we should revert the temporary pin on the Docker image version introduced by:

- #5432

Sorry, it was us:[^1] https://github.com/iterative/cml/pull/1317 & https://github.com/iterative/cml/issues/1319#issuecomment-1385599559... | [

-1.1741132736206055,

-0.9020137786865234,

-0.9337199926376343,

1.5220918655395508,

-0.0625097006559372,

-1.2240580320358276,

0.11463204026222229,

-0.9091006517410278,

1.6163134574890137,

-0.6164934635162354,

0.2926141619682312,

-1.615256428718567,

-0.07128304243087769,

-0.5232698321342468,... |

https://github.com/huggingface/datasets/issues/5433 | Support latest Docker image in CI benchmarks | Hi @0x2b3bfa0, thanks a lot for the investigation, the context about the root cause, and for fixing it!!

We are reviewing your PR to unpin the container image. | Once we find out the root cause of:

- #5431

we should revert the temporary pin on the Docker image version introduced by:

- #5432 | 339 | 29 | Support latest Docker image in CI benchmarks

Once we find out the root cause of:

- #5431

we should revert the temporary pin on the Docker image version introduced by:

- #5432

Hi @0x2b3bfa0, thanks a lot for the investigation, the context about the root cause, and for fixing it!!

We are reviewing your PR... | [

-1.17626953125,

-0.9430264830589294,

-0.9038001894950867,

1.3165526390075684,

-0.3127286434173584,

-1.38706374168396,

0.17333859205245972,

-1.0670613050460815,

1.619828462600708,

-0.9278891086578369,

0.4337567985057831,

-1.6069083213806152,

0.12599827349185944,

-0.54421067237854,

-0.8060... |

https://github.com/huggingface/datasets/issues/5430 | Support Apache Beam >= 2.44.0 | Some of the shard files now have 0 rows.

We have opened an issue in the Apache Beam repo:

- https://github.com/apache/beam/issues/25041 | Once we find out the root cause of:

- #5426

we should revert the temporary pin on apache-beam introduced by:

- #5429 | 340 | 23 | Support Apache Beam >= 2.44.0

Once we find out the root cause of:

- #5426

we should revert the temporary pin on apache-beam introduced by:

- #5429

Some of the shard files now have 0 rows.

We have opened an issue in the Apache Beam repo:

- https://github.com/apache/beam/issues/25041 | [

-1.2750862836837769,

-0.9439370036125183,

-0.8172897696495056,

1.540722370147705,

-0.22284150123596191,

-1.2459444999694824,

0.0407663993537426,

-0.8248154520988464,

1.5018937587738037,

-0.7695805430412292,

0.36583229899406433,

-1.6501755714416504,

0.011896209791302681,

-0.4887296557426452... |

https://github.com/huggingface/datasets/issues/5428 | Load/Save FAISS index using fsspec | Hi! Sure, feel free to submit a PR. Maybe if we want to be consistent with the existing API, it would be cleaner to directly add support for `fsspec` paths in `Dataset.load_faiss_index`/`Dataset.save_faiss_index` in the same manner as it was done in `Dataset.load_from_disk`/`Dataset.save_to_disk`. | ### Feature request

From what I understand, `faiss` already supports this [link](https://github.com/facebookresearch/faiss/wiki/Index-IO,-cloning-and-hyper-parameter-tuning#generic-io-support)

I would like to use a stream as input to `Dataset.load_faiss_index` and `Dataset.save_faiss_index`.

### Motivation

In... | 341 | 42 | Load/Save FAISS index using fsspec

### Feature request

From what I understand, `faiss` already supports this [link](https://github.com/facebookresearch/faiss/wiki/Index-IO,-cloning-and-hyper-parameter-tuning#generic-io-support)

I would like to use a stream as input to `Dataset.load_faiss_index` and `Dataset.save_... | [

-1.1222985982894897,

-0.8920488357543945,

-0.7614911198616028,

1.5106948614120483,

-0.12036654353141785,

-1.264846682548523,

0.21462397277355194,

-0.9880435466766357,

1.6212654113769531,

-0.9347405433654785,

0.3081521689891815,

-1.6232985258102417,

0.09116572141647339,

-0.6534204483032227,... |

https://github.com/huggingface/datasets/issues/5427 | Unable to download dataset id_clickbait | Thanks for reporting, @ilos-vigil.

We have transferred this issue to the corresponding dataset on the Hugging Face Hub: https://huggingface.co/datasets/id_clickbait/discussions/1 | ### Describe the bug

I tried to download the dataset `id_clickbait`, but received this error message:

```

FileNotFoundError: Couldn't find file at https://md-datasets-cache-zipfiles-prod.s3.eu-west-1.amazonaws.com/k42j7x2kpn-1.zip

```

When I open the link in a browser, I get this XML data:

```xml

<?xml versi... | 342 | 19 | Unable to download dataset id_clickbait

### Describe the bug

I tried to download the dataset `id_clickbait`, but received this error message:

```

FileNotFoundError: Couldn't find file at https://md-datasets-cache-zipfiles-prod.s3.eu-west-1.amazonaws.com/k42j7x2kpn-1.zip

```

When i open the link using browser, i... | [

-1.1930586099624634,

-0.8530861139297485,

-0.6839118003845215,

1.3900913000106812,

-0.12742212414741516,

-1.2396419048309326,

0.1807224154472351,

-1.1172120571136475,

1.6125329732894897,

-0.6963998079299927,

0.2914436459541321,

-1.6744513511657715,

-0.07168597728013992,

-0.5758096575737,

... |

https://github.com/huggingface/datasets/issues/5425 | Sort on multiple keys with datasets.Dataset.sort() | Hi!

`Dataset.sort` calls `df.sort_values` internally, and `df.sort_values` brings all the "sort" columns in memory, so sorting on multiple keys could be very expensive. This makes me think that maybe we can replace `df.sort_values` with `pyarrow.compute.sort_indices` - the latter can also sort on multiple keys and ... | ### Feature request

From discussion on forum: https://discuss.huggingface.co/t/datasets-dataset-sort-does-not-preserve-ordering/29065/1

`sort()` does not preserve ordering, and it does not support sorting on multiple columns, nor a key function.

The suggested solution:

> ... having something similar to panda... | 343 | 109 | Sort on multiple keys with datasets.Dataset.sort()

### Feature request

From discussion on forum: https://discuss.huggingface.co/t/datasets-dataset-sort-does-not-preserve-ordering/29065/1

`sort()` does not preserve ordering, and it does not support sorting on multiple columns, nor a key function.

The suggeste... | [

-1.2507238388061523,

-0.9375834465026855,

-0.6712387204170227,

1.4547932147979736,

-0.14533424377441406,

-1.3173452615737915,

0.20516350865364075,

-1.07721745967865,

1.7774194478988647,

-0.8531342148780823,

0.33074915409088135,

-1.6261314153671265,

0.08578143268823624,

-0.6216345429420471,... |

https://github.com/huggingface/datasets/issues/5425 | Sort on multiple keys with datasets.Dataset.sort() | @mariosasko If I understand the code right, using `pyarrow.compute.sort_indices` would also require changes to the `select` method if it is meant to sort multiple keys. That's because `select` only accepts 1D input for `indices`, not an iterable or similar which would be required for multiple keys unless you want some ... | ### Feature request

From discussion on forum: https://discuss.huggingface.co/t/datasets-dataset-sort-does-not-preserve-ordering/29065/1

`sort()` does not preserve ordering, and it does not support sorting on multiple columns, nor a key function.

The suggested solution:

> ... having something similar to panda... | 343 | 64 | Sort on multiple keys with datasets.Dataset.sort()

### Feature request

From discussion on forum: https://discuss.huggingface.co/t/datasets-dataset-sort-does-not-preserve-ordering/29065/1

`sort()` does not preserve ordering, and it does not support sorting on multiple columns, nor a key function.

The suggeste... | [

-1.2212759256362915,

-0.9480838775634766,

-0.7015002369880676,

1.4788826704025269,

-0.10449813306331635,

-1.2614364624023438,

0.1443437933921814,

-1.0273969173431396,

1.7198460102081299,

-0.8230888247489929,

0.33712315559387207,

-1.6211422681808472,

0.12400048971176147,

-0.6231672167778015... |

https://github.com/huggingface/datasets/issues/5425 | Sort on multiple keys with datasets.Dataset.sort() | @MichlF No, it doesn't require modifying select because sorting on multiple keys also returns a 1D array.

It's easier to understand with an example:

```python

>>> import pyarrow as pa

>>> import pyarrow.compute as pc

>>> table = pa.table({

... "name": ["John", "Eve", "Peter", "John"],

... "surname": ["... | ### Feature request

From discussion on forum: https://discuss.huggingface.co/t/datasets-dataset-sort-does-not-preserve-ordering/29065/1

`sort()` does not preserve ordering, and it does not support sorting on multiple columns, nor a key function.

The suggested solution:

> ... having something similar to panda... | 343 | 76 | Sort on multiple keys with datasets.Dataset.sort()

### Feature request

From discussion on forum: https://discuss.huggingface.co/t/datasets-dataset-sort-does-not-preserve-ordering/29065/1

`sort()` does not preserve ordering, and it does not support sorting on multiple columns, nor a key function.

The suggeste... | [

-1.21726655960083,

-0.9191803932189941,

-0.7278240919113159,

1.4414478540420532,

-0.18655966222286224,

-1.2138382196426392,

0.1759604960680008,

-1.0843857526779175,

1.6804003715515137,

-0.7789862155914307,

0.3355465829372406,

-1.6246124505996704,

0.01778346672654152,

-0.588951587677002,

... |
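The point made in the comment above, that sorting on several keys still yields a single 1D array of row indices, can be shown without pyarrow. This is a pure-Python analogue, not the `pyarrow.compute.sort_indices` implementation; the function name `sort_indices`, the `age` column, and its values are assumptions chosen for illustration.

```python
def sort_indices(columns, sort_keys):
    """Return one flat list of row indices ordered by several (name, order) keys.

    `columns` maps column names to equally long lists; `sort_keys` is a list of
    (column_name, "ascending" | "descending") tuples, most significant first.
    """
    n_rows = len(next(iter(columns.values())))
    indices = list(range(n_rows))
    # Python's sort is stable (also with reverse=True), so sorting by the
    # least significant key first and the most significant key last gives
    # the combined multi-key ordering.
    for name, order in reversed(sort_keys):
        values = columns[name]
        indices.sort(key=lambda i: values[i], reverse=(order == "descending"))
    return indices


# Illustrative data: the "age" column is made up for this sketch.
table = {
    "name": ["John", "Eve", "Peter", "John"],
    "age": [30, 25, 40, 22],
}
order = sort_indices(table, [("name", "ascending"), ("age", "descending")])
print(order)  # [1, 0, 3, 2]
```

Because the result is one index per row regardless of how many keys are used, it can be handed to a row-selection step that accepts a 1D index list.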

https://github.com/huggingface/datasets/issues/5425 | Sort on multiple keys with datasets.Dataset.sort() | Thanks for clarifying.

I can prepare a PR to address this issue. This would be my first PR here, so I have a few possibly silly questions:

- What is the preferred input type of `sort_keys` for the sort method? A sequence of (name, order) tuples, as pyarrow's `sort_indices` requires?

- What about backwards compatabi... | ### Feature request

From discussion on forum: https://discuss.huggingface.co/t/datasets-dataset-sort-does-not-preserve-ordering/29065/1

`sort()` does not preserve ordering, and it does not support sorting on multiple columns, nor a key function.

The suggested solution:

> ... having something similar to panda... | 343 | 112 | Sort on multiple keys with datasets.Dataset.sort()

### Feature request

From discussion on forum: https://discuss.huggingface.co/t/datasets-dataset-sort-does-not-preserve-ordering/29065/1

`sort()` does not preserve ordering, and it does not support sorting on multiple columns, nor a key function.

The suggeste... | [

-1.2062435150146484,

-0.955108106136322,

-0.6964579224586487,

1.4512603282928467,

-0.13979633152484894,

-1.2945438623428345,

0.1756330132484436,

-1.0331217050552368,

1.7455586194992065,

-0.8622071146965027,

0.36619484424591064,

-1.6305056810379028,

0.10075096786022186,

-0.6490331292152405,... |

https://github.com/huggingface/datasets/issues/5425 | Sort on multiple keys with datasets.Dataset.sort() | I think we can have the following signature:

```python

def sort(

self,

column_names: Union[str, Sequence[str]],

reverse: Union[bool, Sequence[bool]] = False,

kind="deprecated",

null_placement: str = "last",

keep_in_memory: bool = False,

load_from_cache_fi... | ### Feature request

From discussion on forum: https://discuss.huggingface.co/t/datasets-dataset-sort-does-not-preserve-ordering/29065/1

`sort()` does not preserve ordering, and it does not support sorting on multiple columns, nor a key function.

The suggested solution:

> ... having something similar to panda... | 343 | 127 | Sort on multiple keys with datasets.Dataset.sort()

### Feature request

From discussion on forum: https://discuss.huggingface.co/t/datasets-dataset-sort-does-not-preserve-ordering/29065/1

`sort()` does not preserve ordering, and it does not support sorting on multiple columns, nor a key function.

The suggeste... | [

-1.1986860036849976,

-0.9022864103317261,

-0.7445774674415588,

1.5108447074890137,

-0.14732931554317474,

-1.2305964231491089,

0.1892164647579193,

-1.0421628952026367,

1.6967023611068726,

-0.8177416920661926,

0.3498031795024872,

-1.6219446659088135,

0.08035118877887726,

-0.6614535450935364,... |
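The signature proposed above accepts one column name or several, with `reverse` given either as a single flag or as one flag per column. A minimal sketch of how those arguments could be normalized into the (name, order) tuples that pyarrow's `sort_indices` takes as `sort_keys` follows; `build_sort_keys` is a hypothetical helper, not the code that ended up in `datasets`.

```python
from typing import List, Sequence, Tuple, Union


def build_sort_keys(
    column_names: Union[str, Sequence[str]],
    reverse: Union[bool, Sequence[bool]] = False,
) -> List[Tuple[str, str]]:
    """Hypothetical helper: expand the arguments into (name, order) tuples."""
    if isinstance(column_names, str):
        column_names = [column_names]
    if isinstance(reverse, bool):
        # A single flag applies to every column.
        reverse = [reverse] * len(column_names)
    if len(reverse) != len(column_names):
        raise ValueError(
            "`reverse` must be a bool or have one entry per column: "
            f"got {len(reverse)} flags for {len(column_names)} columns."
        )
    return [
        (name, "descending" if flag else "ascending")
        for name, flag in zip(column_names, reverse)
    ]
```

Accepting a plain `str` and a plain `bool` keeps existing single-column calls such as `sort("col", reverse=True)` working unchanged.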

https://github.com/huggingface/datasets/issues/5425 | Sort on multiple keys with datasets.Dataset.sort() | I am pretty much done with the PR. Just one clarification: `Sequence` in `arrow_dataset.py` is a custom dataclass from `features.py` instead of the `type.hinting` class `Sequence` from Python. Do you suggest using that custom `Sequence` class somehow? Otherwise, the signature currently reads:

```Python

def so... | ### Feature request

From discussion on forum: https://discuss.huggingface.co/t/datasets-dataset-sort-does-not-preserve-ordering/29065/1

`sort()` does not preserve ordering, and it does not support sorting on multiple columns, nor a key function.

The suggested solution:

> ... having something similar to panda... | 343 | 119 | Sort on multiple keys with datasets.Dataset.sort()

### Feature request

From discussion on forum: https://discuss.huggingface.co/t/datasets-dataset-sort-does-not-preserve-ordering/29065/1

`sort()` does not preserve ordering, and it does not support sorting on multiple columns, nor a key function.

The suggeste... | [

-1.206371784210205,

-0.9001083374023438,

-0.7287048697471619,

1.5281463861465454,

-0.15486183762550354,

-1.2318964004516602,

0.1660555899143219,

-1.061037540435791,

1.6927155256271362,

-0.8267017006874084,

0.31720396876335144,

-1.615334391593933,

0.09671789407730103,

-0.6464735269546509,

... |

https://github.com/huggingface/datasets/issues/5425 | Sort on multiple keys with datasets.Dataset.sort() | I meant `typing.Sequence` (`datasets.Sequence` is a feature type).

Regarding `null_placement`, I think we can support both `at_start` and `at_end`, and `last` and `first` (for backward compatibility; convert internally to `at_end` and `at_start` respectively). | ### Feature request

From discussion on forum: https://discuss.huggingface.co/t/datasets-dataset-sort-does-not-preserve-ordering/29065/1

`sort()` does not preserve ordering, and it does not support sorting on multiple columns, nor a key function.

The suggested solution:

> ... having something similar to panda... | 343 | 33 | Sort on multiple keys with datasets.Dataset.sort()

### Feature request

From discussion on forum: https://discuss.huggingface.co/t/datasets-dataset-sort-does-not-preserve-ordering/29065/1

`sort()` does not preserve ordering, and it does not support sorting on multiple columns, nor a key function.

The suggeste... | [

-1.2010776996612549,

-0.9742602109909058,

-0.7009586691856384,

1.479052186012268,

-0.0717446357011795,

-1.3149577379226685,

0.18090492486953735,

-1.047257900238037,

1.756934642791748,

-0.8613452911376953,

0.36277204751968384,

-1.6430747509002686,

0.0807223990559578,

-0.6359919309616089,

... |
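The backward-compatible handling of `null_placement` suggested above (accept `first`/`last` as well as `at_start`/`at_end`, and convert internally) amounts to a small alias table. The sketch below assumes a hypothetical helper named `normalize_null_placement`; it is not taken from the `datasets` codebase.

```python
def normalize_null_placement(null_placement: str) -> str:
    """Hypothetical helper: map legacy values onto the pyarrow-style ones."""
    legacy_aliases = {"first": "at_start", "last": "at_end"}
    value = legacy_aliases.get(null_placement, null_placement)
    if value not in ("at_start", "at_end"):
        raise ValueError(
            "null_placement must be one of 'at_start', 'at_end', "
            f"'first' or 'last', got {null_placement!r}."
        )
    return value
```

Converting once at the top of the method means the rest of the code only ever deals with the two canonical values.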

https://github.com/huggingface/datasets/issues/5425 | Sort on multiple keys with datasets.Dataset.sort() | > I meant typing.Sequence (datasets.Sequence is a feature type).

Sorry, I actually meant `typing.Sequence` and not `type.hinting`. However, the issue is still that `datasets.Sequence` is imported in `arrow_dataset.py`, so I cannot import and use `typing.Sequence` for `sort`'s signature without overwriting the `dat... | ### Feature request

From discussion on forum: https://discuss.huggingface.co/t/datasets-dataset-sort-does-not-preserve-ordering/29065/1

`sort()` does not preserve ordering, and it does not support sorting on multiple columns, nor a key function.

The suggested solution:

> ... having something similar to panda... | 343 | 119 | Sort on multiple keys with datasets.Dataset.sort()

### Feature request

From discussion on forum: https://discuss.huggingface.co/t/datasets-dataset-sort-does-not-preserve-ordering/29065/1

`sort()` does not preserve ordering, and it does not support sorting on multiple columns, nor a key function.

The suggeste... | [

-1.2026468515396118,

-0.9789732694625854,

-0.6965401768684387,

1.44068443775177,

-0.13039067387580872,

-1.3307585716247559,

0.14831256866455078,

-1.1041706800460815,

1.7870264053344727,

-0.8782883286476135,

0.30281975865364075,

-1.668694019317627,

0.08402121812105179,

-0.5972263216972351,

... |

https://github.com/huggingface/datasets/issues/5425 | Sort on multiple keys with datasets.Dataset.sort() | You can avoid the name collision by renaming `typing.Sequence` to `Sequence_` when importing:

```python

from typing import Sequence as Sequence_

``` | ### Feature request

From discussion on forum: https://discuss.huggingface.co/t/datasets-dataset-sort-does-not-preserve-ordering/29065/1

`sort()` does not preserve ordering, and it does not support sorting on multiple columns, nor a key function.

The suggested solution:

> ... having something similar to panda... | 343 | 21 | Sort on multiple keys with datasets.Dataset.sort()

### Feature request

From discussion on forum: https://discuss.huggingface.co/t/datasets-dataset-sort-does-not-preserve-ordering/29065/1

`sort()` does not preserve ordering, and it does not support sorting on multiple columns, nor a key function.

The suggeste... | [

-1.2064616680145264,

-0.9316847920417786,

-0.7140529751777649,

1.5059329271316528,

-0.11384304612874985,

-1.3094804286956787,

0.12475172430276871,

-1.0355916023254395,

1.7230068445205688,

-0.8554967045783997,

0.34709468483924866,

-1.6594160795211792,

0.10467644780874252,

-0.629877567291259... |

https://github.com/huggingface/datasets/issues/5424 | When applying `ReadInstruction` to custom load it's not DatasetDict but list of Dataset? | Hi! You can get a `DatasetDict` if you pass a dictionary with read instructions as follows:

```python

instructions = [

ReadInstruction(split_name="train", from_=0, to=10, unit='%', rounding='closest'),

ReadInstruction(split_name="dev", from_=0, to=10, unit='%', rounding='closest'),

ReadInstruction(spli... | ### Describe the bug

I am loading datasets from custom `tsv` files stored locally and applying split instructions for each split. The `ReadInstruction` is applied correctly, but I was expecting the result to be a `DatasetDict`; instead, it is a list of `Dataset` objects.

### Steps to reproduce the bug

Steps to reproduc... | 344 | 51 | When applying `ReadInstruction` to custom load it's not DatasetDict but list of Dataset?

### Describe the bug

I am loading datasets from custom `tsv` files stored locally and applying split instructions for each split. Although the ReadInstruction is being applied correctly and I was expecting it to be `DatasetDict`... | [

-1.2014646530151367,

-0.9519798755645752,

-0.664831817150116,

1.4391368627548218,

-0.1864701360464096,

-1.1531896591186523,

0.17084240913391113,

-1.0244344472885132,

1.580081820487976,

-0.7707537412643433,

0.31056874990463257,

-1.6319173574447632,

-0.008924031630158424,

-0.6302415132522583... |

https://github.com/huggingface/datasets/issues/5422 | Datasets load error for saved github issues | I can confirm that the error exists!

I'm trying to read 3 parquet files locally:

```python

import os

from datasets import load_dataset, Features, Value, ClassLabel

review_dataset = load_dataset(

"parquet",

data_files={

"train": os.path.join(sentiment_analysis_data_path, "train.parquet"),

"valida... | ### Describe the bug

Loading a previously downloaded & saved dataset as described in the HuggingFace course:

issues_dataset = load_dataset("json", data_files="issues/datasets-issues.jsonl", split="train")

Gives this error:

datasets.builder.DatasetGenerationError: An error occurred while generating the dataset... | 345 | 95 | Datasets load error for saved github issues

### Describe the bug

Loading a previously downloaded & saved dataset as described in the HuggingFace course:

issues_dataset = load_dataset("json", data_files="issues/datasets-issues.jsonl", split="train")

Gives this error:

datasets.builder.DatasetGenerationError: ... | [

-1.1963127851486206,

-0.9330918788909912,

-0.7827396392822266,

1.4344896078109741,

-0.1406930536031723,

-1.2193514108657837,

0.08512034267187119,

-1.1205116510391235,

1.6201714277267456,

-0.678076446056366,

0.23913109302520752,

-1.6713672876358032,

0.04052264243364334,

-0.5257079005241394,... |

https://github.com/huggingface/datasets/issues/5422 | Datasets load error for saved github issues | @Extremesarova I think this is a different issue, but I understand that using features could be a workaround.

It seems the field `closed_at` is `null` in many cases.

I've not found a way to specify only a single feature without (successfully) specifying the full and quite detailed set of expected features. Using this fea... | ### Describe the bug

Loading a previously downloaded & saved dataset as described in the HuggingFace course:

issues_dataset = load_dataset("json", data_files="issues/datasets-issues.jsonl", split="train")

Gives this error:

datasets.builder.DatasetGenerationError: An error occurred while generating the dataset... | 345 | 66 | Datasets load error for saved github issues

### Describe the bug

Loading a previously downloaded & saved dataset as described in the HuggingFace course:

issues_dataset = load_dataset("json", data_files="issues/datasets-issues.jsonl", split="train")

Gives this error:

datasets.builder.DatasetGenerationError: ... | [

-1.1963127851486206,

-0.9330918788909912,

-0.7827396392822266,

1.4344896078109741,

-0.1406930536031723,

-1.2193514108657837,

0.08512034267187119,

-1.1205116510391235,

1.6201714277267456,

-0.678076446056366,

0.23913109302520752,

-1.6713672876358032,

0.04052264243364334,

-0.5257079005241394,... |

https://github.com/huggingface/datasets/issues/5422 | Datasets load error for saved github issues | I found this when searching for the same error. Based on #3965, it looks like it's just an issue with the data. I found that changing `df = pd.DataFrame.from_records(all_issues)` to `df = pd.DataFrame.from_records(all_issues).dropna(axis=1, how='all').drop(['milestone'], axis=1)` in the `fetch_issues` function fixed the issu... | ### Describe the bug

Loading a previously downloaded & saved dataset as described in the HuggingFace course:

issues_dataset = load_dataset("json", data_files="issues/datasets-issues.jsonl", split="train")

Gives this error:

datasets.builder.DatasetGenerationError: An error occurred while generating the dataset... | 345 | 65 | Datasets load error for saved github issues

### Describe the bug

Loading a previously downloaded & saved dataset as described in the HuggingFace course:

issues_dataset = load_dataset("json", data_files="issues/datasets-issues.jsonl", split="train")

Gives this error:

datasets.builder.DatasetGenerationError: ... | [

-1.1963127851486206,

-0.9330918788909912,

-0.7827396392822266,

1.4344896078109741,

-0.1406930536031723,

-1.2193514108657837,

0.08512034267187119,

-1.1205116510391235,

1.6201714277267456,

-0.678076446056366,

0.23913109302520752,

-1.6713672876358032,

0.04052264243364334,

-0.5257079005241394,... |
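The workaround in the comment above drops columns that are null in every row before the issues are written to disk. The same idea can be sketched without pandas for a list of record dictionaries; `drop_all_null_fields` is a hypothetical helper, and the sample records are invented for illustration.

```python
def drop_all_null_fields(records):
    """Hypothetical helper: remove keys whose value is None in every record."""
    all_keys = {key for record in records for key in record}
    all_null = {
        key for key in all_keys
        if all(record.get(key) is None for record in records)
    }
    return [
        {key: value for key, value in record.items() if key not in all_null}
        for record in records
    ]


# Invented sample: "milestone" is always null, "closed_at" only sometimes.
issues = [
    {"number": 1, "closed_at": None, "milestone": None},
    {"number": 2, "closed_at": "2023-01-10", "milestone": None},
]
cleaned = drop_all_null_fields(issues)
print(cleaned)
```

A field such as `closed_at`, which is null in only some rows, is kept; only fields with no usable value anywhere are removed, which is what `dropna(axis=1, how='all')` does in the pandas version.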

https://github.com/huggingface/datasets/issues/5422 | Datasets load error for saved github issues | I have this same issue. I saved a dataset to disk and now I can't load it. | ### Describe the bug

Loading a previously downloaded & saved dataset as described in the HuggingFace course:

issues_dataset = load_dataset("json", data_files="issues/datasets-issues.jsonl", split="train")

Gives this error:

datasets.builder.DatasetGenerationError: An error occurred while generating the dataset... | 345 | 17 | Datasets load error for saved github issues

### Describe the bug

Loading a previously downloaded & saved dataset as described in the HuggingFace course:

issues_dataset = load_dataset("json", data_files="issues/datasets-issues.jsonl", split="train")

Gives this error:

datasets.builder.DatasetGenerationError: ... | [

-1.1963127851486206,

-0.9330918788909912,

-0.7827396392822266,

1.4344896078109741,

-0.1406930536031723,

-1.2193514108657837,

0.08512034267187119,

-1.1205116510391235,

1.6201714277267456,

-0.678076446056366,

0.23913109302520752,

-1.6713672876358032,

0.04052264243364334,

-0.5257079005241394,... |

https://github.com/huggingface/datasets/issues/5421 | Support case-insensitive Hub dataset name in load_dataset | Closing as case-insensitivity should be only for URL redirection on the Hub. In the APIs, we will only support the canonical name (https://github.com/huggingface/moon-landing/pull/2399#issuecomment-1382085611) | ### Feature request

The dataset name on the Hub is case-insensitive (see https://github.com/huggingface/moon-landing/pull/2399, internal issue), i.e., https://huggingface.co/datasets/GLUE redirects to https://huggingface.co/datasets/glue.

Ideally, we could load the glue dataset using the following:

```

from d... | 346 | 23 | Support case-insensitive Hub dataset name in load_dataset

### Feature request

The dataset name on the Hub is case-insensitive (see https://github.com/huggingface/moon-landing/pull/2399, internal issue), i.e., https://huggingface.co/datasets/GLUE redirects to https://huggingface.co/datasets/glue.

Ideally, we cou... | [

-1.1574761867523193,

-0.9057379961013794,

-0.8160881400108337,

1.4558465480804443,

-0.07727113366127014,

-1.312180995941162,

0.1407918632030487,

-0.9606278538703918,

1.6307440996170044,

-0.7902531027793884,

0.37747347354888916,

-1.7550194263458252,

0.008631757460534573,

-0.6654250621795654... |

https://github.com/huggingface/datasets/issues/5419 | label_column='labels' in datasets.TextClassification and 'label' or 'label_ids' in transformers.DataColator | Hi! Thanks for pointing out this inconsistency. Changing the default value at this point is probably not worth it, considering we've started discussing the state of the task API internally - we will most likely deprecate the current one and replace it with a more robust solution that relies on the `train_eval_index` fi... | ### Describe the bug

When preparing a dataset for a task using `datasets.TextClassification`, the output feature is named `labels`. When preparing the trainer using the `transformers.DataCollator` the default column name is `label` if binary or `label_ids` if multi-class problem.

It is required to rename the column... | 347 | 62 | label_column='labels' in datasets.TextClassification and 'label' or 'label_ids' in transformers.DataColator

### Describe the bug

When preparing a dataset for a task using `datasets.TextClassification`, the output feature is named `labels`. When preparing the trainer using the `transformers.DataCollator` the default ... | [

-1.1833069324493408,

-0.9694234132766724,

-0.619713306427002,

1.6215074062347412,

-0.12914401292800903,

-1.279888391494751,

0.24085275828838348,

-1.0611002445220947,

1.6883082389831543,

-0.8849717378616333,

0.3013935089111328,

-1.653178334236145,

0.01117192953824997,

-0.5831577777862549,

... |

https://github.com/huggingface/datasets/issues/5419 | label_column='labels' in datasets.TextClassification and 'label' or 'label_ids' in transformers.DataColator | The task templates API has been deprecated (will be removed in version 3.0), so I'm closing this issue. | ### Describe the bug

When preparing a dataset for a task using `datasets.TextClassification`, the output feature is named `labels`. When preparing the trainer using the `transformers.DataCollator` the default column name is `label` if binary or `label_ids` if multi-class problem.

It is required to rename the column... | 347 | 18 | label_column='labels' in datasets.TextClassification and 'label' or 'label_ids' in transformers.DataColator

### Describe the bug

When preparing a dataset for a task using `datasets.TextClassification`, the output feature is named `labels`. When preparing the trainer using the `transformers.DataCollator` the default ... | [

-1.1833069324493408,

-0.9694234132766724,

-0.619713306427002,

1.6215074062347412,

-0.12914401292800903,

-1.279888391494751,

0.24085275828838348,

-1.0611002445220947,

1.6883082389831543,

-0.8849717378616333,

0.3013935089111328,

-1.653178334236145,

0.01117192953824997,

-0.5831577777862549,

... |

https://github.com/huggingface/datasets/issues/5418 | Add ProgressBar for `to_parquet` | Thanks for your proposal, @zanussbaum. Yes, I agree that would definitely be a nice feature to have! | ### Feature request

Add a progress bar for `Dataset.to_parquet`, similar to how `to_json` works.

### Motivation

It's a bit frustrating to not know how long a dataset will take to write to file and if it's stuck or not without a progress bar

### Your contribution

Sure I can help if needed | 348 | 17 | Add ProgressBar for `to_parquet`

### Feature request

Add a progress bar for `Dataset.to_parquet`, similar to how `to_json` works.

### Motivation

It's a bit frustrating to not know how long a dataset will take to write to file and if it's stuck or not without a progress bar

### Your contribution

Sure I can help i... | [

-1.1281404495239258,

-0.9272894859313965,

-0.876679003238678,

1.5756957530975342,

-0.12682101130485535,

-1.3146666288375854,

0.21620792150497437,

-1.059373378753662,

1.6573750972747803,

-0.7932515740394592,

0.4578741490840912,

-1.5561256408691406,

0.07988949865102768,

-0.7136300802230835,

... |

https://github.com/huggingface/datasets/issues/5418 | Add ProgressBar for `to_parquet` | That would be awesome ! You can comment `#self-assign` to assign yourself to this issue and open a PR :) Will be happy to review | ### Feature request

Add a progress bar for `Dataset.to_parquet`, similar to how `to_json` works.

### Motivation

It's a bit frustrating to not know how long a dataset will take to write to file and if it's stuck or not without a progress bar

### Your contribution

Sure I can help if needed | 348 | 25 | Add ProgressBar for `to_parquet`

### Feature request

Add a progress bar for `Dataset.to_parquet`, similar to how `to_json` works.

### Motivation

It's a bit frustrating to not know how long a dataset will take to write to file and if it's stuck or not without a progress bar

### Your contribution

Sure I can help i... | [

-1.104288935661316,

-0.9040449857711792,

-0.8816316723823547,

1.6051945686340332,

-0.13855379819869995,

-1.3316636085510254,

0.15115419030189514,

-1.067552089691162,

1.6967405080795288,

-0.7785230875015259,

0.4231182038784027,

-1.550559639930725,

0.10968958586454391,

-0.7439088821411133,

... |

https://github.com/huggingface/datasets/issues/5414 | Sharding error with Multilingual LibriSpeech | Thanks for reporting, @Nithin-Holla.

This is a known issue for multiple datasets and we are investigating it:

- See e.g.: https://huggingface.co/datasets/ami/discussions/3 | ### Describe the bug

Loading the German Multilingual LibriSpeech dataset results in a RuntimeError regarding sharding with the following stacktrace:

```

Downloading and preparing dataset multilingual_librispeech/german to /home/nithin/datadrive/cache/huggingface/datasets/facebook___multilingual_librispeech/german/... | 349 | 21 | Sharding error with Multilingual LibriSpeech

### Describe the bug

Loading the German Multilingual LibriSpeech dataset results in a RuntimeError regarding sharding with the following stacktrace:

```

Downloading and preparing dataset multilingual_librispeech/german to /home/nithin/datadrive/cache/huggingface/datas... | [

-1.138941764831543,

-0.8181207180023193,

-0.6920398473739624,

1.4167742729187012,

-0.001813550479710102,

-1.3891597986221313,

0.020772943273186684,

-0.9953727126121521,

1.4881454706192017,

-0.7330920696258545,

0.3880062401294708,

-1.7275402545928955,

0.11068633943796158,

-0.562932252883911... |

https://github.com/huggingface/datasets/issues/5413 | concatenate_datasets fails when two dataset with shards > 1 and unequal shard numbers | Hi ! Thanks for reporting :)

I managed to reproduce the bug using

```python

from datasets import concatenate_datasets, Dataset, load_from_disk

Dataset.from_dict({"a": range(9)}).save_to_disk("tmp/ds1")

ds1 = load_from_disk("tmp/ds1")

ds1 = concatenate_datasets([ds1, ds1])

Dataset.from_dict({"b": range(6)... | ### Describe the bug

When using `concatenate_datasets([dataset1, dataset2], axis = 1)` to concatenate two datasets with shards > 1, it fails:

```

File "/home/xzg/anaconda3/envs/tri-transfer/lib/python3.9/site-packages/datasets/combine.py", line 182, in concatenate_datasets

return _concatenate_map_style_data... | 350 | 140 | concatenate_datasets fails when two dataset with shards > 1 and unequal shard numbers

### Describe the bug

When using `concatenate_datasets([dataset1, dataset2], axis = 1)` to concatenate two datasets with shards > 1, it fails:

```

File "/home/xzg/anaconda3/envs/tri-transfer/lib/python3.9/site-packages/dataset... | [

-1.2022230625152588,

-0.933254063129425,

-0.7547227144241333,

1.3154925107955933,

-0.1193099319934845,

-1.2487061023712158,

0.14360904693603516,

-1.046956181526184,

1.5380277633666992,

-0.7598488330841064,

0.12326542288064957,

-1.6745375394821167,

-0.19994229078292847,

-0.5410534143447876,... |

https://github.com/huggingface/datasets/issues/5412 | load_dataset() cannot find dataset_info.json with multiple training runs in parallel | Hi ! It fails because the dataset is already being prepared by your first run. I'd encourage you to prepare your dataset before using it for multiple trainings.

You can also specify another cache directory by passing `cache_dir=` to `load_dataset()`. | ### Describe the bug

I have a custom local dataset in JSON form. I am trying to do multiple training runs in parallel. The first training run runs with no issue. However, when I start another run on another GPU, the following code throws this error.

If there is a workaround to ignore the cache I think that would ... | 351 | 40 | load_dataset() cannot find dataset_info.json with multiple training runs in parallel

### Describe the bug

I have a custom local dataset in JSON form. I am trying to do multiple training runs in parallel. The first training run runs with no issue. However, when I start another run on another GPU, the following code... | [

-1.2141274213790894,

-0.9969725012779236,

-0.6490305066108704,

1.4509425163269043,

-0.19368737936019897,

-1.2160694599151611,

0.19137239456176758,

-1.0793054103851318,

1.5731899738311768,

-0.7488421201705933,

0.2618825137615204,

-1.631170392036438,

-0.0954568088054657,

-0.5608007311820984,... |
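
The `cache_dir=` suggestion above can be sketched roughly as follows. This is only an illustration under stated assumptions: `run_cache_dir`, `load_for_run`, the `hf_cache` base folder and the `run-<id>` naming are hypothetical; only `load_dataset(..., cache_dir=...)` comes from the comment itself.

```python
from pathlib import Path


def run_cache_dir(base, run_id):
    """Build a cache path that is unique to one training run (hypothetical naming scheme)."""
    return str(Path(base) / f"run-{run_id}")


def load_for_run(data_files, run_id, base="hf_cache"):
    """Load a JSON dataset using a per-run cache directory, so parallel runs do not share one cache."""
    # Imported lazily so the helper above can be used without the library installed.
    from datasets import load_dataset

    return load_dataset(
        "json",
        data_files=data_files,
        split="train",
        cache_dir=run_cache_dir(base, run_id),
    )
```

Each run then prepares its own copy of the dataset, which trades disk space for isolation between runs.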

https://github.com/huggingface/datasets/issues/5412 | load_dataset() cannot find dataset_info.json with multiple training runs in parallel | Thank you! What do you mean by prepare it beforehand? I am unclear how to conduct dataset preparation outside of using the `load_dataset` function. | ### Describe the bug

I have a custom local dataset in JSON form. I am trying to do multiple training runs in parallel. The first training run runs with no issue. However, when I start another run on another GPU, the following code throws this error.

If there is a workaround to ignore the cache I think that would ... | 351 | 24 | load_dataset() cannot find dataset_info.json with multiple training runs in parallel

### Describe the bug

I have a custom local dataset in JSON form. I am trying to do multiple training runs in parallel. The first training run runs with no issue. However, when I start another run on another GPU, the following code... | [

-1.2141274213790894,

-0.9969725012779236,

-0.6490305066108704,

1.4509425163269043,

-0.19368737936019897,

-1.2160694599151611,

0.19137239456176758,

-1.0793054103851318,

1.5731899738311768,

-0.7488421201705933,

0.2618825137615204,

-1.631170392036438,

-0.0954568088054657,

-0.5608007311820984,... |

https://github.com/huggingface/datasets/issues/5412 | load_dataset() cannot find dataset_info.json with multiple training runs in parallel | You can have a separate script that does load_dataset + map + save_to_disk to save your prepared dataset somewhere. Then in your training script you can reload the dataset with load_from_disk | ### Describe the bug

I have a custom local dataset in JSON form. I am trying to do multiple training runs in parallel. The first training run runs with no issue. However, when I start another run on another GPU, the following code throws this error.

If there is a workaround to ignore the cache I think that would ... | 351 | 31 | load_dataset() cannot find dataset_info.json with multiple training runs in parallel

### Describe the bug

I have a custom local dataset in JSON form. I am trying to do multiple training runs in parallel. The first training run runs with no issue. However, when I start another run on another GPU, the following code... | [

-1.2141274213790894,

-0.9969725012779236,

-0.6490305066108704,

1.4509425163269043,

-0.19368737936019897,

-1.2160694599151611,

0.19137239456176758,

-1.0793054103851318,

1.5731899738311768,

-0.7488421201705933,

0.2618825137615204,

-1.631170392036438,

-0.0954568088054657,

-0.5608007311820984,... |
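
A minimal sketch of the "prepare once, then reload" flow described in this comment. The output path, the `text` column and the `add_length` step are assumptions made up for illustration; `load_dataset`, `map`, `save_to_disk` and `load_from_disk` are the calls named in the comment.

```python
from pathlib import Path

PREPARED_DIR = Path("prepared/my_dataset")  # hypothetical output location


def add_length(example):
    # Placeholder preprocessing step (assumes a "text" column); replace with your own.
    return {"n_chars": len(example["text"])}


def prepare(data_files, out_dir=PREPARED_DIR):
    """Run once, in a separate script, before launching any training job."""
    from datasets import load_dataset  # lazy import: only needed when preparing

    ds = load_dataset("json", data_files=data_files, split="train")
    ds = ds.map(add_length)
    ds.save_to_disk(str(out_dir))


def load_prepared(out_dir=PREPARED_DIR):
    """Call from each training script; it only reads what prepare() wrote."""
    from datasets import load_from_disk

    return load_from_disk(str(out_dir))
```

With this split, the parallel training runs never execute `map` themselves, so they should not compete over the same cache files.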

https://github.com/huggingface/datasets/issues/5412 | load_dataset() cannot find dataset_info.json with multiple training runs in parallel | Thank you! I believe I was running additional map steps after loading, resulting in the cache conflict. | ### Describe the bug

I have a custom local dataset in JSON form. I am trying to do multiple training runs in parallel. The first training run runs with no issue. However, when I start another run on another GPU, the following code throws this error.

If there is a workaround to ignore the cache I think that would ... | 351 | 17 | load_dataset() cannot find dataset_info.json with multiple training runs in parallel

### Describe the bug

I have a custom local dataset in JSON form. I am trying to do multiple training runs in parallel. The first training run runs with no issue. However, when I start another run on another GPU, the following code... | [

-1.2141274213790894,

-0.9969725012779236,

-0.6490305066108704,

1.4509425163269043,

-0.19368737936019897,

-1.2160694599151611,

0.19137239456176758,

-1.0793054103851318,

1.5731899738311768,

-0.7488421201705933,

0.2618825137615204,

-1.631170392036438,

-0.0954568088054657,

-0.5608007311820984,... |

https://github.com/huggingface/datasets/issues/5408 | dataset map function could not be hash properly | Hi ! On macos I tried with

- py 3.9.11

- datasets 2.8.0

- transformers 4.25.1

- dill 0.3.4

and I was able to hash `prepare_dataset` correctly:

```python

from datasets.fingerprint import Hasher

Hasher.hash(prepare_dataset)

```

What version of transformers do you have ? Can you try to call `Hasher.hash` on ... | ### Describe the bug

I followed the [blog post](https://huggingface.co/blog/fine-tune-whisper#building-a-demo) to fine-tune a Cantonese transcription model.

When using the map function to prepare the dataset, the following warning pops up:

`common_voice = common_voice.map(prepare_dataset,

remove_... | 352 | 64 | dataset map function could not be hash properly

### Describe the bug

I followed the [blog post](https://huggingface.co/blog/fine-tune-whisper#building-a-demo) to fine-tune a Cantonese transcription model.

When using the map function to prepare the dataset, the following warning pops up:

`common_voice = common_voice.map(prepare_... | [

-1.2026370763778687,

-0.8830283284187317,

-0.6998029947280884,

1.4831172227859497,

-0.14322924613952637,

-1.1129162311553955,

0.19913989305496216,

-1.045048475265503,

1.5351014137268066,

-0.7666561603546143,

0.2680216133594513,

-1.6109470129013062,

-0.0031448248773813248,

-0.52774876356124... |

https://github.com/huggingface/datasets/issues/5408 | dataset map function could not be hash properly | Thanks for your prompt reply.

I updated the datasets version to 2.8.0 and the warning is gone. | ### Describe the bug

I followed the [blog post](https://huggingface.co/blog/fine-tune-whisper#building-a-demo) to fine-tune a Cantonese transcription model.

When using the map function to prepare the dataset, the following warning pops up:

`common_voice = common_voice.map(prepare_dataset,

remove_... | 352 | 16 | dataset map function could not be hash properly

### Describe the bug

I followed the [blog post](https://huggingface.co/blog/fine-tune-whisper#building-a-demo) to fine-tune a Cantonese transcription model.

When using the map function to prepare the dataset, the following warning pops up:

`common_voice = common_voice.map(prepare_... | [

-1.2026370763778687,

-0.8830283284187317,

-0.6998029947280884,

1.4831172227859497,

-0.14322924613952637,

-1.1129162311553955,

0.19913989305496216,

-1.045048475265503,

1.5351014137268066,

-0.7666561603546143,

0.2680216133594513,

-1.6109470129013062,

-0.0031448248773813248,

-0.52774876356124... |

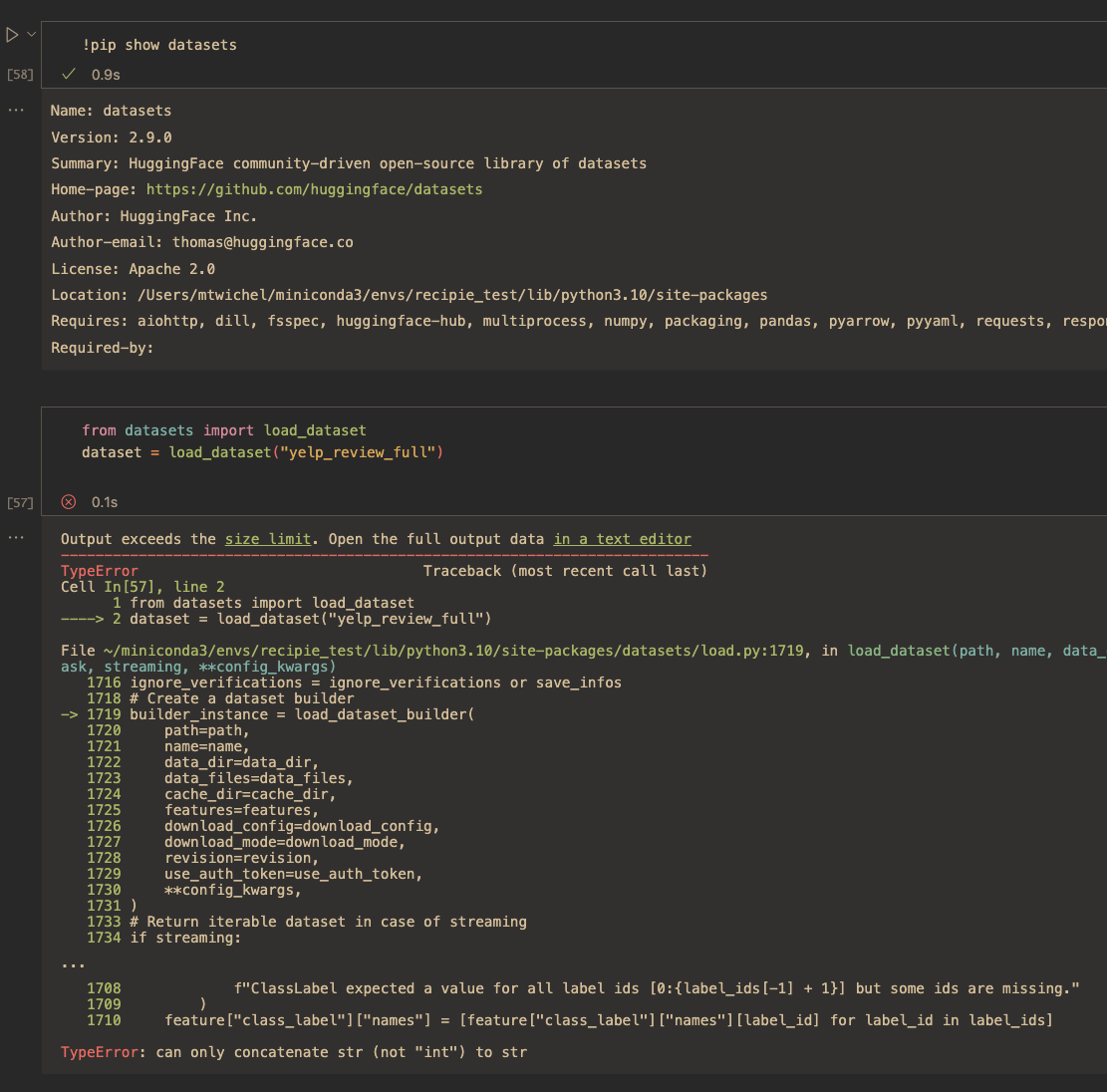

https://github.com/huggingface/datasets/issues/5406 | [2.6.1][2.7.0] Upgrade `datasets` to fix `TypeError: can only concatenate str (not "int") to str` | Hi ! I just tested locally and on Colab and it works fine for 2.9 on `sst2`.

Also the code that is shown in your stack trace is not present in the 2.9 source code - so I'm wondering how you installed `datasets` that could cause this ? (you can check by searching for `[0:{label_ids[-1] + 1}]` in the [2.9 codebase](ht... | `datasets` 2.6.1 and 2.7.0 started to stop supporting datasets like IMDB, ConLL or MNIST datasets.

When loading a dataset using 2.6.1 or 2.7.0, you may get this error when loading certain datasets:

```python

TypeError: can only concatenate str (not "int") to str

```

This is because we started to update the metadat... | 354 | 76 | [2.6.1][2.7.0] Upgrade `datasets` to fix `TypeError: can only concatenate str (not "int") to str`

`datasets` 2.6.1 and 2.7.0 started to stop supporting datasets like IMDB, ConLL or MNIST datasets.

When loading a dataset using 2.6.1 or 2.7.0, you may get this error when loading certain datasets:

```python

TypeError: ... | [

-1.19907546043396,

-0.9080106019973755,

-0.7455325722694397,

1.4518007040023804,

-0.1747923493385315,

-1.2968993186950684,

0.09794913232326508,

-1.1907931566238403,

1.7332526445388794,

-0.7653722167015076,

0.3084734082221985,

-1.6923916339874268,

0.04660734534263611,

-0.5048437714576721,

... |

https://github.com/huggingface/datasets/issues/5406 | [2.6.1][2.7.0] Upgrade `datasets` to fix `TypeError: can only concatenate str (not "int") to str` | For what it's worth, I've also gotten this error on 2.9.0, and I've tried uninstalling and reinstalling

I'm very new to this package (I was following this tutorial: https://h... | `datasets` 2.6.1 and 2.7.0 started to stop supporting datasets like IMDB, ConLL or MNIST datasets.

When loading a dataset using 2.6.1 or 2.7.0, you may get this error when loading certain datasets:

```python

TypeError: can only concatenate str (not "int") to str

```

This is because we started to update the metadat... | 354 | 54 | [2.6.1][2.7.0] Upgrade `datasets` to fix `TypeError: can only concatenate str (not "int") to str`

`datasets` 2.6.1 and 2.7.0 started to stop supporting datasets like IMDB, ConLL or MNIST datasets.

When loading a dataset using 2.6.1 or 2.7.0, you may get this error when loading certain datasets:

```python

TypeError: ... | [

-1.1893925666809082,

-0.9478173851966858,

-0.7714112997055054,

1.412634253501892,

-0.15072843432426453,

-1.257697343826294,

0.09516014158725739,

-1.0975152254104614,

1.7222583293914795,

-0.7652689814567566,

0.3060447573661804,

-1.7043513059616089,

0.013540535233914852,

-0.5268344283103943,... |

https://github.com/huggingface/datasets/issues/5406 | [2.6.1][2.7.0] Upgrade `datasets` to fix `TypeError: can only concatenate str (not "int") to str` | @ntrpnr @mtwichel Did you install `datasets` with conda ?

I suspect that `datasets` 2.9 on conda still has this issue for some reason. When I install `datasets` with `pip` I don't have this error. | `datasets` 2.6.1 and 2.7.0 started to stop supporting datasets like IMDB, ConLL or MNIST datasets.

When loading a dataset using 2.6.1 or 2.7.0, you may get this error when loading certain datasets:

```python

TypeError: can only concatenate str (not "int") to str

```

This is because we started to update the metadat... | 354 | 34 | [2.6.1][2.7.0] Upgrade `datasets` to fix `TypeError: can only concatenate str (not "int") to str`

`datasets` 2.6.1 and 2.7.0 started to stop supporting datasets like IMDB, ConLL or MNIST datasets.

When loading a dataset using 2.6.1 or 2.7.0, you may get this error when loading certain datasets:

```python

TypeError: ... | [

-1.1625075340270996,

-0.9114705920219421,

-0.756309449672699,

1.44755220413208,

-0.1924143135547638,

-1.2859165668487549,

0.12256346642971039,

-1.187840461730957,

1.7959147691726685,

-0.7802746891975403,

0.3234862983226776,

-1.747164011001587,

0.018785636872053146,

-0.5356882214546204,

-... |

https://github.com/huggingface/datasets/issues/5406 | [2.6.1][2.7.0] Upgrade `datasets` to fix `TypeError: can only concatenate str (not "int") to str` | > @ntrpnr @mtwichel Did you install datasets with conda ?

I did yeah, I wonder if that's the issue | `datasets` 2.6.1 and 2.7.0 started to stop supporting datasets like IMDB, ConLL or MNIST datasets.

When loading a dataset using 2.6.1 or 2.7.0, you may get this error when loading certain datasets:

```python

TypeError: can only concatenate str (not "int") to str

```

This is because we started to update the metadat... | 354 | 19 | [2.6.1][2.7.0] Upgrade `datasets` to fix `TypeError: can only concatenate str (not "int") to str`

`datasets` 2.6.1 and 2.7.0 started to stop supporting datasets like IMDB, ConLL or MNIST datasets.

When loading a dataset using 2.6.1 or 2.7.0, you may get this error when loading certain datasets:

```python

TypeError: ... | [

-1.1661595106124878,

-0.9210278987884521,

-0.7668328881263733,

1.4417788982391357,

-0.21570250391960144,

-1.2651389837265015,

0.10105810314416885,

-1.1767208576202393,

1.7375155687332153,

-0.787563145160675,

0.3176383376121521,

-1.7452316284179688,

0.016856275498867035,

-0.5132984519004822... |

https://github.com/huggingface/datasets/issues/5406 | [2.6.1][2.7.0] Upgrade `datasets` to fix `TypeError: can only concatenate str (not "int") to str` | I just checked on conda at https://anaconda.org/HuggingFace/datasets/files

and everything looks fine, I got

```python

f"ClassLabel expected a value for all label ids [0:{int(label_ids[-1]) + 1}] but some ids are missing."

```

as expected at line 1760 of features.py (note the `int()`), which avoids the TypeError.

... | `datasets` 2.6.1 and 2.7.0 started to stop supporting datasets like IMDB, ConLL or MNIST datasets.

When loading a dataset using 2.6.1 or 2.7.0, you may get this error when loading certain datasets:

```python

TypeError: can only concatenate str (not "int") to str

```

This is because we started to update the metadat... | 354 | 70 | [2.6.1][2.7.0] Upgrade `datasets` to fix `TypeError: can only concatenate str (not "int") to str`

`datasets` 2.6.1 and 2.7.0 started to stop supporting datasets like IMDB, ConLL or MNIST datasets.

When loading a dataset using 2.6.1 or 2.7.0, you may get this error when loading certain datasets:

```python

TypeError: ... | [

-1.1509339809417725,

-0.919567346572876,

-0.776763916015625,

1.4301351308822632,

-0.14443561434745789,

-1.3198052644729614,

0.10859495401382446,

-1.1645647287368774,

1.7269237041473389,

-0.7640339732170105,

0.315082311630249,

-1.7467060089111328,

0.035089846700429916,

-0.5291357636451721,

... |
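
To illustrate why the `int()` call quoted in this comment matters, here is a small standalone sketch. It assumes, purely for illustration, that the label ids arrive as strings; `upper_bound_buggy` and `upper_bound_fixed` are hypothetical names, not functions from `datasets`.

```python
def upper_bound_buggy(label_ids):
    # With string ids, "2" + 1 raises:
    # TypeError: can only concatenate str (not "int") to str
    return label_ids[-1] + 1


def upper_bound_fixed(label_ids):
    # Converting to int first mirrors the `int(label_ids[-1]) + 1` expression above.
    return int(label_ids[-1]) + 1


if __name__ == "__main__":
    ids = ["0", "1", "2"]  # assumption: ids stored as strings
    print(upper_bound_fixed(ids))
    try:
        upper_bound_buggy(ids)
    except TypeError as err:
        print(f"TypeError: {err}")
```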

https://github.com/huggingface/datasets/issues/5406 | [2.6.1][2.7.0] Upgrade `datasets` to fix `TypeError: can only concatenate str (not "int") to str` | Could you also try this in your notebook ? In case your python kernel doesn't match the `pip` environment in your shell

```python

import datasets; datasets.__version__

```

and

```

!which python

```

```python

import sys; sys.executable

``` | `datasets` 2.6.1 and 2.7.0 started to stop supporting datasets like IMDB, ConLL or MNIST datasets.

When loading a dataset using 2.6.1 or 2.7.0, you may get this error when loading certain datasets:

```python

TypeError: can only concatenate str (not "int") to str

```

This is because we started to update the metadat... | 354 | 37 | [2.6.1][2.7.0] Upgrade `datasets` to fix `TypeError: can only concatenate str (not "int") to str`

`datasets` 2.6.1 and 2.7.0 started to stop supporting datasets like IMDB, ConLL or MNIST datasets.

When loading a dataset using 2.6.1 or 2.7.0, you may get this error when loading certain datasets:

```python

TypeError: ... | [

-1.1121143102645874,

-0.9188764095306396,

-0.7429848909378052,

1.4759175777435303,

-0.17823047935962677,

-1.3194260597229004,

0.14361748099327087,

-1.1888262033462524,

1.8266671895980835,

-0.8005570769309998,

0.3374331593513489,

-1.7366091012954712,

0.02338600531220436,

-0.5286593437194824... |
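
The checks suggested in this comment can also be bundled into one helper, sketched here with the standard library only. `describe_environment` is a hypothetical name. Note that `importlib.metadata` reports the version installed for the running interpreter, which may differ from an already imported module if the package was upgraded without restarting the kernel.

```python
import sys
from importlib import metadata


def describe_environment(package="datasets"):
    """Return the running interpreter path and the installed version of `package` (None if absent)."""
    try:
        version = metadata.version(package)
    except metadata.PackageNotFoundError:
        version = None
    return {"python": sys.executable, "package": package, "version": version}


if __name__ == "__main__":
    print(describe_environment())
```

If the reported interpreter path is not the one your `pip` or `conda` command installs into, the notebook kernel and the shell are probably using different environments.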

https://github.com/huggingface/datasets/issues/5406 | [2.6.1][2.7.0] Upgrade `datasets` to fix `TypeError: can only concatenate str (not "int") to str` | Mmmm, just a potential clue:

Where are you running your Python code? Is it the Spyder IDE?

I have recently seen some users reporting conflicting Python environments while using Spyder...

Maybe related:

- #5487 | `datasets` 2.6.1 and 2.7.0 started to stop supporting datasets like IMDB, ConLL or MNIST datasets.

When loading a dataset using 2.6.1 or 2.7.0, you may get this error when loading certain datasets:

```python

TypeError: can only concatenate str (not "int") to str

```

This is because we started to update the metadat... | 354 | 34 | [2.6.1][2.7.0] Upgrade `datasets` to fix `TypeError: can only concatenate str (not "int") to str`

`datasets` 2.6.1 and 2.7.0 started to stop supporting datasets like IMDB, ConLL or MNIST datasets.

When loading a dataset using 2.6.1 or 2.7.0, you may get this error when loading certain datasets:

```python

TypeError: ... | [

-1.164669394493103,

-0.9222587943077087,

-0.7590553760528564,

1.463395595550537,

-0.20731991529464722,

-1.2697645425796509,

0.071416936814785,

-1.1582248210906982,

1.7317662239074707,

-0.7659924626350403,

0.3236183822154999,

-1.733502984046936,

0.014324785210192204,

-0.5283750891685486,

... |

https://github.com/huggingface/datasets/issues/5406 | [2.6.1][2.7.0] Upgrade `datasets` to fix `TypeError: can only concatenate str (not "int") to str` | Other potential clue:

- Had you already imported `datasets` before pip-updating it? You should first update datasets, before importing it. Otherwise, you need to restart the kernel after updating it. | `datasets` 2.6.1 and 2.7.0 started to stop supporting datasets like IMDB, ConLL or MNIST datasets.

When loading a dataset using 2.6.1 or 2.7.0, you may get this error when loading certain datasets:

```python

TypeError: can only concatenate str (not "int") to str

```

This is because we started to update the metadat... | 354 | 30 | [2.6.1][2.7.0] Upgrade `datasets` to fix `TypeError: can only concatenate str (not "int") to str`

`datasets` 2.6.1 and 2.7.0 started to stop supporting datasets like IMDB, ConLL or MNIST datasets.

When loading a dataset using 2.6.1 or 2.7.0, you may get this error when loading certain datasets:

```python

TypeError: ... | [

-1.172698974609375,

-0.9135552048683167,

-0.7403936982154846,

1.4711757898330688,

-0.19127242267131805,

-1.3008623123168945,

0.09696647524833679,

-1.1633660793304443,

1.7608200311660767,

-0.799736499786377,

0.3188323378562927,

-1.7471706867218018,

0.02611558884382248,

-0.5236750245094299,

... |

https://github.com/huggingface/datasets/issues/5406 | [2.6.1][2.7.0] Upgrade `datasets` to fix `TypeError: can only concatenate str (not "int") to str` | I installed `datasets` with Conda using `conda install datasets` and got this issue.

Then I tried to reinstall using

`conda install -c huggingface -c conda-forge datasets`

The issue is now fixed. | `datasets` 2.6.1 and 2.7.0 started to stop supporting datasets like IMDB, ConLL or MNIST datasets.

When loading a dataset using 2.6.1 or 2.7.0, you may get this error when loading certain datasets:

```python

TypeError: can only concatenate str (not "int") to str

```

This is because we started to update the metadat... | 354 | 33 | [2.6.1][2.7.0] Upgrade `datasets` to fix `TypeError: can only concatenate str (not "int") to str`

`datasets` 2.6.1 and 2.7.0 started to stop supporting datasets like IMDB, ConLL or MNIST datasets.

When loading a dataset using 2.6.1 or 2.7.0, you may get this error when loading certain datasets:

```python

TypeError: ... | [

-1.1325589418411255,

-0.9141225814819336,

-0.764790415763855,

1.4390449523925781,

-0.15683706104755402,

-1.3214763402938843,

0.09748121351003647,

-1.172407865524292,

1.7872029542922974,

-0.7789129018783569,

0.33265209197998047,

-1.7489564418792725,

0.02682737447321415,

-0.5387814044952393,... |

https://github.com/huggingface/datasets/issues/5405 | size_in_bytes the same for all splits | Hi @Breakend,

Indeed, the attribute `size_in_bytes` refers to the size of the entire dataset configuration, for all splits (size of downloaded files + Arrow files), not the specific split.

This is also the case for `download_size` (downloaded files) and `dataset_size` (Arrow files).

The size of the Arrow files f... | ### Describe the bug

Hi, it looks like whenever you pull a dataset and get size_in_bytes, it returns the same size for all splits (and that size is the combined size of all splits). It seems like this shouldn't be the intended behavior since it is misleading. Here's an example:

```

>>> from datasets import load_da... | 355 | 76 | size_in_bytes the same for all splits

### Describe the bug

Hi, it looks like whenever you pull a dataset and get size_in_bytes, it returns the same size for all splits (and that size is the combined size of all splits). It seems like this shouldn't be the intended behavior since it is misleading. Here's an example:

... | [

-1.1352394819259644,

-0.796729326248169,

-0.7571126818656921,

1.434135913848877,

-0.0972394347190857,

-1.2886606454849243,

0.20292288064956665,

-1.1315603256225586,

1.7133888006210327,

-0.8233996629714966,

0.27702584862709045,

-1.6561424732208252,

-0.028486154973506927,

-0.5886371731758118... |

https://github.com/huggingface/datasets/issues/5402 | Missing state.json when creating a cloud dataset using a dataset_builder | `load_from_disk` must be used on datasets saved using `save_to_disk`: they correspond to fully serialized datasets including their state.

On the other hand, `download_and_prepare` just downloads the raw data and converts it to Arrow (or Parquet if you want). We are working on allowing you to reload a dataset saved ... | ### Describe the bug

Using `load_dataset_builder` to create a builder, run `download_and_prepare` to upload it to S3. However when trying to load it, there are missing `state.json` files. Complete example:

```python

from aiobotocore.session import AioSession as Session

from datasets import load_from_disk, load_da... | 357 | 66 | Missing state.json when creating a cloud dataset using a dataset_builder

### Describe the bug

Using `load_dataset_builder` to create a builder, run `download_and_prepare` to upload it to S3. However when trying to load it, there are missing `state.json` files. Complete example:

```python

from aiobotocore.session... | [

-1.2194854021072388,

-0.9229915738105774,

-0.633399486541748,

1.543872594833374,

-0.1812359094619751,

-1.2233837842941284,

0.22043099999427795,

-1.0921630859375,

1.6815913915634155,

-0.8181195259094238,

0.33051154017448425,

-1.6326212882995605,

-0.0265568345785141,

-0.5852939486503601,

-... |

https://github.com/huggingface/datasets/issues/5402 | Missing state.json when creating a cloud dataset using a dataset_builder | Thanks, I'll follow that issue.

I was following the [cloud storage](https://huggingface.co/docs/datasets/filesystems) docs section and perhaps I'm missing some part of the flow; start with `load_dataset_builder` + `download_and_prepare`. You say I need an explicit `save_to_disk` but what object needs to be saved? t... | ### Describe the bug

Using `load_dataset_builder` to create a builder, run `download_and_prepare` to upload it to S3. However when trying to load it, there are missing `state.json` files. Complete example:

```python

from aiobotocore.session import AioSession as Session

from datasets import load_from_disk, load_da... | 357 | 50 | Missing state.json when creating a cloud dataset using a dataset_builder

### Describe the bug

Using `load_dataset_builder` to create a builder, run `download_and_prepare` to upload it to S3. However when trying to load it, there are missing `state.json` files. Complete example:

```python

from aiobotocore.session... | [

-1.2194854021072388,

-0.9229915738105774,

-0.633399486541748,

1.543872594833374,

-0.1812359094619751,

-1.2233837842941284,

0.22043099999427795,

-1.0921630859375,

1.6815913915634155,

-0.8181195259094238,

0.33051154017448425,

-1.6326212882995605,

-0.0265568345785141,

-0.5852939486503601,

-... |

https://github.com/huggingface/datasets/issues/5402 | Missing state.json when creating a cloud dataset using a dataset_builder | Right now `load_dataset_builder` + `download_and_prepare` is to be used with tools like dask or spark, but `load_dataset` will support private cloud storage soon as well so you'll be able to reload the dataset with `datasets`.

Right now the only function that can load a dataset from a cloud storage is `load_from_dis... | ### Describe the bug

I use `load_dataset_builder` to create a builder, then run `download_and_prepare` to upload it to S3. However, when I try to load it, the `state.json` files are missing. Complete example:

```python

from aiobotocore.session import AioSession as Session

from datasets import load_from_disk, load_da... | 357 | 61 | Missing state.json when creating a cloud dataset using a dataset_builder

### Describe the bug

I use `load_dataset_builder` to create a builder, then run `download_and_prepare` to upload it to S3. However, when I try to load it, the `state.json` files are missing. Complete example:

```python

from aiobotocore.session... | [

-1.2194854021072388,

-0.9229915738105774,

-0.633399486541748,

1.543872594833374,

-0.1812359094619751,

-1.2233837842941284,

0.22043099999427795,

-1.0921630859375,

1.6815913915634155,

-0.8181195259094238,

0.33051154017448425,

-1.6326212882995605,

-0.0265568345785141,

-0.5852939486503601,

-... |

https://github.com/huggingface/datasets/issues/5394 | CI error: TypeError: dataclass_transform() got an unexpected keyword argument 'field_specifiers' | @MFatnassi, this issue and the corresponding fix only affect our Continuous Integration testing environment.

Note that `datasets` does not depend on `spacy`. | ### Describe the bug

While installing the dependencies, the CI raises a TypeError:

```

Traceback (most recent call last):

File "/opt/hostedtoolcache/Python/3.7.15/x64/lib/python3.7/runpy.py", line 183, in _run_module_as_main

mod_name, mod_spec, code = _get_module_details(mod_name, _Error)

File "/opt/hoste... | 358 | 22 | CI error: TypeError: dataclass_transform() got an unexpected keyword argument 'field_specifiers'

### Describe the bug

While installing the dependencies, the CI raises a TypeError:

```

Traceback (most recent call last):

File "/opt/hostedtoolcache/Python/3.7.15/x64/lib/python3.7/runpy.py", line 183, in _run_modul... | [

-1.151152491569519,

-0.8921622633934021,

-0.6899294853210449,

1.5089271068572998,

-0.10564844310283661,

-1.2708711624145508,

0.18829965591430664,

-0.9905667304992676,

1.4451483488082886,

-0.7144368886947632,

0.18018119037151337,

-1.629974365234375,

-0.14859190583229065,

-0.4965536296367645... |

https://github.com/huggingface/datasets/issues/5391 | Whisper Event - RuntimeError: The size of tensor a (504) must match the size of tensor b (448) at non-singleton dimension 1 100% 1000/1000 [2:52:21<00:00, 10.34s/it] | Hey @catswithbats! Super sorry for the late reply! This is happening because there is data with label length (504) that exceeds the model's max length (448).

There are two options here:

1. Increase the model's `max_length` parameter:

```python

model.config.max_length = 512

```

2. Filter data with labels longe... | Done in a VM with a GPU (Ubuntu) following the [Whisper Event - PYTHON](https://github.com/huggingface/community-events/tree/main/whisper-fine-tuning-event#python-script) instructions.

Attempted using [RuntimeError: The size of tensor a (504) must match the size of tensor b (448) at non-singleton dimension 1 100% 1... | 359 | 108 | Whisper Event - RuntimeError: The size of tensor a (504) must match the size of tensor b (448) at non-singleton dimension 1 100% 1000/1000 [2:52:21<00:00, 10.34s/it]

Done in a VM with a GPU (Ubuntu) following the [Whisper Event - PYTHON](https://github.com/huggingface/community-events/tree/main/whisper-fine-tuning-ev... | [

-1.2096641063690186,

-0.8761712312698364,

-0.6895792484283447,

1.4795067310333252,

-0.06263969838619232,

-1.3368308544158936,

0.07178962975740433,

-0.9243023991584778,

1.5293045043945312,

-0.7532963156700134,

0.3631325662136078,

-1.6789805889129639,

0.0257553793489933,

-0.5565799474716187,... |
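
The second option in the reply above (dropping examples whose labels are too long) can be sketched roughly as follows. This is a minimal illustration, not the code from the original comment, which is truncated in this dump. The names `MAX_LABEL_LENGTH`, `is_label_in_range`, and `filter_long_labels`, the `labels` field name, and the strict `<` comparison are all assumptions made for the example; the limit of 448 is taken from the error message.

```python
from typing import Dict, List

# Assumed limit, taken from the "tensor b (448)" part of the error message.
MAX_LABEL_LENGTH = 448


def is_label_in_range(labels: List[int], max_label_length: int = MAX_LABEL_LENGTH) -> bool:
    """Return True when the tokenized label sequence is shorter than the limit.

    The strict comparison is a conservative choice for this sketch.
    """
    return len(labels) < max_label_length


def filter_long_labels(
    examples: List[Dict], max_label_length: int = MAX_LABEL_LENGTH
) -> List[Dict]:
    """Keep only the examples whose (hypothetical) `labels` field fits the limit."""
    return [ex for ex in examples if is_label_in_range(ex["labels"], max_label_length)]


# Toy examples standing in for tokenized training data.
examples = [
    {"id": "short", "labels": [0] * 10},
    {"id": "just_under", "labels": [0] * 447},
    {"id": "at_limit", "labels": [0] * 448},
    {"id": "too_long", "labels": [0] * 504},
]

kept = filter_long_labels(examples)
print([ex["id"] for ex in kept])
```

With a `datasets.Dataset`, the same predicate could presumably be applied with `Dataset.filter(is_label_in_range, input_columns=["labels"])`, assuming the tokenized labels are stored in a column with that name.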

https://github.com/huggingface/datasets/issues/5391 | Whisper Event - RuntimeError: The size of tensor a (504) must match the size of tensor b (448) at non-singleton dimension 1 100% 1000/1000 [2:52:21<00:00, 10.34s/it] | @sanchit-gandhi Thank you for all your work on this topic.

I'm finding that changing the `max_length` value does not make this error go away. | Done in a VM with a GPU (Ubuntu) following the [Whisper Event - PYTHON](https://github.com/huggingface/community-events/tree/main/whisper-fine-tuning-event#python-script) instructions.

Attempted using [RuntimeError: The size of tensor a (504) must match the size of tensor b (448) at non-singleton dimension 1 100% 1... | 359 | 24 | Whisper Event - RuntimeError: The size of tensor a (504) must match the size of tensor b (448) at non-singleton dimension 1 100% 1000/1000 [2:52:21<00:00, 10.34s/it]

Done in a VM with a GPU (Ubuntu) following the [Whisper Event - PYTHON](https://github.com/huggingface/community-events/tree/main/whisper-fine-tuning-ev... | [

-1.2096641063690186,

-0.8761712312698364,

-0.6895792484283447,

1.4795067310333252,

-0.06263969838619232,

-1.3368308544158936,

0.07178962975740433,

-0.9243023991584778,

1.5293045043945312,

-0.7532963156700134,

0.3631325662136078,

-1.6789805889129639,

0.0257553793489933,

-0.5565799474716187,... |

https://github.com/huggingface/datasets/issues/5390 | Error when pushing to the CI hub | Hmmm, git bisect tells me that the behavior is the same since https://github.com/huggingface/datasets/commit/67e65c90e9490810b89ee140da11fdd13c356c9c (3 Oct), i.e. https://github.com/huggingface/datasets/pull/4926 | ### Describe the bug

Note that this is a special case where the Hub URL is "https://hub-ci.huggingface.co"; the error does not appear if we do the same on the Hub (https://huggingface.co).

The call to `dataset.push_to_hub` fails:

```

Pushing dataset shards to the dataset hub: 100%|██████████████████████████████████... | 360 | 17 | Error when pushing to the CI hub

### Describe the bug

Note that this is a special case where the Hub URL is "https://hub-ci.huggingface.co"; the error does not appear if we do the same on the Hub (https://huggingface.co).

The call to `dataset.push_to_hub` fails:

```

Pushing dataset shards to the dataset hub: 100%... | [

-1.1982983350753784,

-0.9045103192329407,

-0.6958116292953491,

1.477090835571289,

-0.09118883311748505,

-1.2786083221435547,

0.10011560469865799,

-1.0638335943222046,

1.448171854019165,

-0.6696186661720276,

0.2905119061470032,

-1.6558254957199097,

-0.12557749450206757,

-0.5398754477500916,... |

https://github.com/huggingface/datasets/issues/5390 | Error when pushing to the CI hub | Maybe the current version of moonlanding in Hub CI is the issue.

I relaunched tests that were working two days ago: now they are failing. https://github.com/huggingface/datasets-server/commit/746414449cae4b311733f8a76e5b3b4ca73b38a9 for example

cc @huggingface/moon-landing | ### Describe the bug

Note that this is a special case where the Hub URL is "https://hub-ci.huggingface.co"; the error does not appear if we do the same on the Hub (https://huggingface.co).

The call to `dataset.push_to_hub` fails:

```

Pushing dataset shards to the dataset hub: 100%|██████████████████████████████████... | 360 | 30 | Error when pushing to the CI hub

### Describe the bug

Note that this is a special case where the Hub URL is "https://hub-ci.huggingface.co"; the error does not appear if we do the same on the Hub (https://huggingface.co).

The call to `dataset.push_to_hub` fails:

```

Pushing dataset shards to the dataset hub: 100%... | [

-1.1982983350753784,

-0.9045103192329407,

-0.6958116292953491,

1.477090835571289,

-0.09118883311748505,

-1.2786083221435547,

0.10011560469865799,

-1.0638335943222046,

1.448171854019165,

-0.6696186661720276,

0.2905119061470032,

-1.6558254957199097,

-0.12557749450206757,

-0.5398754477500916,... |

https://github.com/huggingface/datasets/issues/5390 | Error when pushing to the CI hub | Hi! I don't think this has anything to do with `datasets`. Hub CI seems to be the culprit - the identical failure can be found in [this](https://github.com/huggingface/datasets/pull/5389) PR (with unrelated changes) opened today. | ### Describe the bug

Note that this is a special case where the Hub URL is "https://hub-ci.huggingface.co"; the error does not appear if we do the same on the Hub (https://huggingface.co).

The call to `dataset.push_to_hub` fails:

```

Pushing dataset shards to the dataset hub: 100%|██████████████████████████████████... | 360 | 33 | Error when pushing to the CI hub

### Describe the bug

Note that this is a special case where the Hub URL is "https://hub-ci.huggingface.co"; the error does not appear if we do the same on the Hub (https://huggingface.co).

The call to `dataset.push_to_hub` fails:

```

Pushing dataset shards to the dataset hub: 100%... | [

-1.1982983350753784,

-0.9045103192329407,