html_url | title | comments | body | __index_level_0__ | comment_length | text | embeddings |

|---|---|---|---|---|---|---|---|

https://github.com/huggingface/datasets/issues/5638 | xPath to implement all operations for Path | `xPath` is an internal component (it doesn't have a leading underscore in the name, but it should) not meant to be used outside of `datasets`, and it's only tested on HTTP URLs, not S3.

| ### Feature request

Current xPath implementation is a great extension of Path in order to work with remote objects. However, some methods such as `mkdir` are not implemented correctly. It should rely on `fsspec` methods instead of defaulting to `Path` methods, which only work locally.

### Motivation

I'm using... | 256 | 34 | xPath to implement all operations for Path

### Feature request

Current xPath implementation is a great extension of Path in order to work with remote objects. However, some methods such as `mkdir` are not implemented correctly. It should rely on `fsspec` methods instead of defaulting to `Path` methods, which ... | [

-1.0096476078033447, -0.856399416923523, -0.9224860668182373, 1.4335403442382812, -0.21319352090358734, -1.237447738647461, 0.24868834018707275, -1.1852760314941406, 1.7969523668289185, -0.8976537585258484, 0.4041021764278412, -1.5997945070266724, 0.11226208508014679, -0.5927440524101257, ... |

https://github.com/huggingface/datasets/issues/5638 | xPath to implement all operations for Path | Okay, I understand that xPath won't support my use case. What I was getting at is: why not use UPath in `datasets` instead of `xPath`, if UPath seems to have strictly more robust implementations? | ### Feature request

Current xPath implementation is a great extension of Path in order to work with remote objects. However, some methods such as `mkdir` are not implemented correctly. It should rely on `fsspec` methods instead of defaulting to `Path` methods, which only work locally.

### Motivation

I'm using... | 256 | 34 | xPath to implement all operations for Path

### Feature request

Current xPath implementation is a great extension of Path in order to work with remote objects. However, some methods such as `mkdir` are not implemented correctly. It should rely on `fsspec` methods instead of defaulting to `Path` methods, which ... | [

-0.9839048385620117, -0.7708210945129395, -0.9500556588172913, 1.4291080236434937, -0.1828235238790512, -1.2047094106674194, 0.25649920105934143, -1.209984540939331, 1.7841482162475586, -0.8390918970108032, 0.42940062284469604, -1.5716805458068848, 0.1252724677324295, -0.5470800399780273, ... |

https://github.com/huggingface/datasets/issues/5638 | xPath to implement all operations for Path | It seems like `universal_pathlib` does not support `fsspec` URL chaining (`::` is the chaining symbol) and "compression" filesystems (e.g., `zip`), but this is what we need to access and stream files from within an archive (e.g., we want to stream URLs such as this one: `zip://data.parquet::https://www.dummyurl.com/arc... | ### Feature request

Current xPath implementation is a great extension of Path in order to work with remote objects. However, some methods such as `mkdir` are not implemented correctly. It should rely on `fsspec` methods instead of defaulting to `Path` methods, which only work locally.

### Motivation

I'm using... | 256 | 46 | xPath to implement all operations for Path

### Feature request

Current xPath implementation is a great extension of Path in order to work with remote objects. However, some methods such as `mkdir` are not implemented correctly. It should rely on `fsspec` methods instead of defaulting to `Path` methods, which ... | [

-1.021508812904358, -0.8691565990447998, -0.8554427623748779, 1.4810752868652344, -0.1754007488489151, -1.2783641815185547, 0.23315665125846863, -1.144027590751648, 1.7115973234176636, -0.8197272419929504, 0.4234815835952759, -1.6065181493759155, 0.13080665469169617, -0.6197822690010071, ... |
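The `::` chaining mentioned above nests filesystems: in a URL such as `zip://data.parquet::https://example.com/archive.zip`, the rightmost URL is the resource that gets fetched, and each protocol to its left is opened on top of the previous one. A minimal stdlib sketch of how such a URL decomposes; `split_chained_url` is a hypothetical helper (not an `fsspec` or `datasets` API), and the URL is made up for illustration:

```python
from typing import List, Tuple


def split_chained_url(url: str) -> List[Tuple[str, str]]:
    """Split an fsspec-style chained URL on '::' into (protocol, path) pairs.

    Hypothetical helper for illustration only. The first pair is the
    innermost layer (e.g. a file inside an archive); the last pair is the
    outermost resource, which has to be fetched first.
    """
    pairs = []
    for part in url.split("::"):
        protocol, sep, path = part.partition("://")
        if not sep:
            # No explicit protocol: treat the segment as a local path.
            protocol, path = "file", part
        pairs.append((protocol, path))
    return pairs


# Made-up URL: a Parquet file stored inside a remote ZIP archive.
parts = split_chained_url("zip://data.parquet::https://example.com/archive.zip")
for protocol, path in parts:
    print(protocol, path)
```

The loop prints `zip data.parquet` and then `https example.com/archive.zip`, i.e. the path inside the archive comes first and the remote archive last.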

https://github.com/huggingface/datasets/issues/5637 | IterableDataset with_format does not support 'device' keyword for jax | Hi! Yes, only `torch` is currently supported. Unlike `Dataset`, `IterableDataset` is not PyArrow-backed, so we cannot simply call `to_numpy` on the underlying subtables to format them numerically. Instead, we must manually convert examples to (numeric) arrays while preserving consistency with `Dataset`, which is not tr... | ### Describe the bug

As seen here: https://huggingface.co/docs/datasets/use_with_jax dataset.with_format() supports the keyword 'device', to put data on a specific device when loaded as jax. However, when called on an IterableDataset, I got the error `TypeError: with_format() got an unexpected keyword argument 'devi... | 257 | 51 | IterableDataset with_format does not support 'device' keyword for jax

### Describe the bug

As seen here: https://huggingface.co/docs/datasets/use_with_jax dataset.with_format() supports the keyword 'device', to put data on a specific device when loaded as jax. However, when called on an IterableDataset, I got the ... | [

-1.2325011491775513, -0.940279483795166, -0.6965106129646301, 1.4070303440093994, -0.1233501210808754, -1.2415097951889038, 0.17469142377376556, -1.0437079668045044, 1.6669445037841797, -0.774493932723999, 0.34157007932662964, -1.689767837524414, 0.0415092408657074, -0.5208538770675659, ... |
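Since a streaming dataset has no Arrow table to convert, one workaround is to do the collation yourself and move each batch to a device in the training loop. A minimal stdlib sketch of the collation step; `batch_examples` is a hypothetical helper, and the JAX step is only shown as a comment (assuming `jnp` is `jax.numpy` and `device` is a JAX device object):

```python
from itertools import islice
from typing import Dict, Iterable, Iterator, List


def batch_examples(examples: Iterable[dict], batch_size: int) -> Iterator[Dict[str, List]]:
    """Group per-example dicts into column-oriented batches (dict of lists).

    Hypothetical helper: mimics by hand what a numeric formatter has to do
    for an IterableDataset. Assumes every example has the same keys.
    """
    it = iter(examples)
    while True:
        chunk = list(islice(it, batch_size))
        if not chunk:
            return
        yield {key: [ex[key] for ex in chunk] for key in chunk[0]}


# Stand-in for iterating over a streaming IterableDataset.
stream = ({"input_ids": [i, i + 1], "label": i % 2} for i in range(5))
batches = list(batch_examples(stream, batch_size=2))
for batch in batches:
    # With JAX installed, each batch could then be placed on a device, e.g.
    # {k: jax.device_put(jnp.asarray(v), device) for k, v in batch.items()}
    print(batch)
```

The last batch is shorter (one example), so a training loop that needs fixed shapes would have to pad or drop it.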

https://github.com/huggingface/datasets/issues/5637 | IterableDataset with_format does not support 'device' keyword for jax | Any plans to support it in the future? Or would streaming dataset be left without support for jax and tensorflow? | ### Describe the bug

As seen here: https://huggingface.co/docs/datasets/use_with_jax dataset.with_format() supports the keyword 'device', to put data on a specific device when loaded as jax. However, when called on an IterableDataset, I got the error `TypeError: with_format() got an unexpected keyword argument 'devi... | 257 | 20 | IterableDataset with_format does not support 'device' keyword for jax

### Describe the bug

As seen here: https://huggingface.co/docs/datasets/use_with_jax dataset.with_format() supports the keyword 'device', to put data on a specific device when loaded as jax. However, when called on an IterableDataset, I got the ... | [

-1.244858741760254, -0.967814028263092, -0.7181598544120789, 1.4467706680297852, -0.1347845196723938, -1.207514762878418, 0.1415264904499054, -1.0039390325546265, 1.6438817977905273, -0.7366711497306824, 0.31190264225006104, -1.675411343574524, 0.03625664487481117, -0.49465417861938477, ... |

https://github.com/huggingface/datasets/issues/5634 | Not all progress bars are showing up when they should for downloading dataset | Hi!

By default, tqdm has `leave=True` to "keep all traces of the progress bar upon the termination of iteration". However, we use `leave=False` in some places (as of recently), which removes the bar once the iteration is over.

I feel like our TQDM bars are noisy, so I think we should always set `leave=False` and... | ### Describe the bug

While downloading the rotten tomatoes dataset, not all progress bars are displayed properly. This might be related to [this ticket](https://github.com/huggingface/datasets/issues/5117) as it raised the same concern, but it's not clear whether the fix solves this issue too.

ipywidgets

<img width=... | 258 | 92 | Not all progress bars are showing up when they should for downloading dataset

### Describe the bug

During downloading the rotten tomatoes dataset, not all progress bars are displayed properly. This might be related to [this ticket](https://github.com/huggingface/datasets/issues/5117) as it raised the same concern bu... | [

-1.3264236450195312, -0.9384112358093262, -0.6486805081367493, 1.4555368423461914, -0.2238524854183197, -1.1209741830825806, 0.16965985298156738, -1.0841684341430664, 1.5862623453140259, -0.8087064623832703, 0.2877905070781708, -1.5388022661209106, -0.00938345491886139, -0.5007497668266296... |

https://github.com/huggingface/datasets/issues/5634 | Not all progress bars are showing up when they should for downloading dataset | Hi, sorry for the late update. I think the problem still exists despite the `leave` flag.

<img width="1105" alt="image" src="https://user-images.githubusercontent.com/110427462/226501615-5b02fb02-fd5f-4eda-b1f7-a7ed6570892d.png">

```

Package Version

------------------------ ---------

aiofiles ... | ### Describe the bug

While downloading the rotten tomatoes dataset, not all progress bars are displayed properly. This might be related to [this ticket](https://github.com/huggingface/datasets/issues/5117) as it raised the same concern, but it's not clear whether the fix solves this issue too.

ipywidgets

<img width=... | 258 | 373 | Not all progress bars are showing up when they should for downloading dataset

### Describe the bug

During downloading the rotten tomatoes dataset, not all progress bars are displayed properly. This might be related to [this ticket](https://github.com/huggingface/datasets/issues/5117) as it raised the same concern bu... | [

-1.3264236450195312, -0.9384112358093262, -0.6486805081367493, 1.4555368423461914, -0.2238524854183197, -1.1209741830825806, 0.16965985298156738, -1.0841684341430664, 1.5862623453140259, -0.8087064623832703, 0.2877905070781708, -1.5388022661209106, -0.00938345491886139, -0.5007497668266296... |

https://github.com/huggingface/datasets/issues/5633 | Cannot import datasets | Okay, the issue was likely caused by mixing `conda` and `pip` usage - I forgot that I have already used `pip` in this environment previously and that it was 'spoiled' because of it. Creating another environment and installing `datasets` by pip with other packages from the `requirements.txt` file solved the problem. | ### Describe the bug

Hi,

I cannot even import the library :( I installed it by running:

```

$ conda install datasets

```

Then I realized I should maybe use the huggingface channel, because I encountered the error below, so I ran:

```

$ conda remove datasets

$ conda install -c huggingface datasets

```

Pl... | 259 | 51 | Cannot import datasets

### Describe the bug

Hi,

I cannot even import the library :( I installed it by running:

```

$ conda install datasets

```

Then I realized I should maybe use the huggingface channel, because I encountered the error below, so I ran:

```

$ conda remove datasets

$ conda install -c hugg... | [

-1.1801728010177612, -0.880652904510498, -0.7368806600570679, 1.455385446548462, -0.10973728448152542, -1.303460955619812, 0.10291359573602676, -1.125065803527832, 1.5669465065002441, -0.6529191136360168, 0.2321343570947647, -1.6736702919006348, -0.13928870856761932, -0.49055325984954834, ... |

https://github.com/huggingface/datasets/issues/5632 | Dataset cannot convert too large dictionnary | Answered on the forum:

> To fix the overflow error, we need to merge [support LargeListArray in pyarrow by xwwwwww · Pull Request #4800 · huggingface/datasets · GitHub](https://github.com/huggingface/datasets/pull/4800), which adds support for the large lists. However, before merging it, we need to come up with a cl... | ### Describe the bug

Hello everyone!

I tried to build a new dataset with the command "dict_valid = datasets.Dataset.from_dict({'input_values': values_array})".

However, I have a very large dataset (~400 GB), and it seems that Dataset cannot handle this.

Indeed, I can create the dataset until a certain size of m... | 260 | 63 | Dataset cannot convert too large dictionnary

### Describe the bug

Hello everyone!

I tried to build a new dataset with the command "dict_valid = datasets.Dataset.from_dict({'input_values': values_array})".

However, I have a very large dataset (~400 GB), and it seems that Dataset cannot handle this.

Indeed, I c... | [

-1.3318010568618774, -0.9440325498580933, -0.7260366678237915, 1.464353084564209, -0.1459263116121292, -1.2061792612075806, 0.1252291053533554, -1.0885341167449951, 1.6726020574569702, -0.7644535899162292, 0.24400624632835388, -1.6093380451202393, 0.10537301003932953, -0.5523220300674438, ... |
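Background for the overflow: Arrow's regular `ListArray` stores its offsets as 32-bit integers, so one list array can reference at most 2**31 - 1 child values in total, whereas `LargeListArray` uses 64-bit offsets. Until large lists are supported, a possible workaround (an assumption, not an official recommendation) is to build the dataset from several smaller dicts, e.g. one `Dataset.from_dict` per chunk followed by `datasets.concatenate_datasets`, so that no single array crosses the limit. The sketch below only computes such chunk boundaries from per-row list lengths; `chunk_boundaries` is a hypothetical helper:

```python
from typing import List, Sequence, Tuple

# Largest offset a 32-bit Arrow list array can hold.
INT32_MAX = 2**31 - 1


def chunk_boundaries(row_lengths: Sequence[int], limit: int = INT32_MAX) -> List[Tuple[int, int]]:
    """Return [start, end) row ranges whose summed list lengths stay <= limit.

    Hypothetical helper: each range could become its own Dataset.from_dict(...)
    call before the pieces are concatenated.
    """
    bounds = []
    start, total = 0, 0
    for i, n in enumerate(row_lengths):
        if n > limit:
            raise ValueError(f"row {i} alone has {n} values, more than {limit}")
        if total + n > limit:
            # Close the current chunk before this row would overflow it.
            bounds.append((start, i))
            start, total = i, 0
        total += n
    if start < len(row_lengths):
        bounds.append((start, len(row_lengths)))
    return bounds


# Toy example with a tiny limit so the split is easy to see.
print(chunk_boundaries([4, 4, 4, 4, 4], limit=10))
```

With the toy limit of 10 this prints `[(0, 2), (2, 4), (4, 5)]`; with the default limit, ordinary row lengths would produce far fewer, much larger chunks.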

https://github.com/huggingface/datasets/issues/5631 | Custom split names | Hi!

You can also use names other than "train", "validation" and "test". As an example, check the [script](https://huggingface.co/datasets/mozilla-foundation/common_voice_11_0/blob/e095840f23f3dffc1056c078c2f9320dad9ca74d/common_voice_11_0.py#L139) of the Common Voice 11 dataset. | ### Feature request

Hi,

I participated in multiple NLP tasks where there are more than just train, test, and validation splits; there could be multiple validation sets or test sets. But it seems that currently only those three splits are supported. It would be nice to have support for more splits on the hub. (curren... | 261 | 24 | Custom split names

### Feature request

Hi,

I participated in multiple NLP tasks where there are more than just train, test, and validation splits; there could be multiple validation sets or test sets. But it seems that currently only those three splits are supported. It would be nice to have support for more split... | [

-1.1770635843276978, -0.9241767525672913, -0.8355674147605896, 1.4496593475341797, -0.1094215139746666, -1.1831300258636475, 0.10229720920324326, -1.085262417793274, 1.5207239389419556, -0.775841236114502, 0.259285032749176, -1.6633424758911133, 0.06859844923019409, -0.5358826518058777, ... |
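Besides loading scripts like the Common Voice one, split names can also come from the keys of a `data_files` mapping, as in `load_dataset("csv", data_files=data_files)`. A minimal sketch with hypothetical file names that builds such a mapping and checks each key against a word-character pattern; the pattern is an assumption modeled on the library's split-name check, so confirm it against your installed version:

```python
import re
from typing import Dict

# Assumed rule: word characters, optionally joined by dots.
SPLIT_NAME_RE = re.compile(r"^\w+(\.\w+)*$")


def check_split_names(data_files: Dict[str, str]) -> Dict[str, str]:
    """Raise if a split name would likely be rejected; otherwise return the mapping."""
    bad = [name for name in data_files if not SPLIT_NAME_RE.match(name)]
    if bad:
        raise ValueError(f"invalid split names: {bad}")
    return data_files


# Hypothetical files: two validation sets and two test sets.
data_files = check_split_names({
    "train": "train.csv",
    "validation_indomain": "dev_in.csv",
    "validation_outdomain": "dev_out.csv",
    "test_indomain": "test_in.csv",
    "test_outdomain": "test_out.csv",
})
# With `datasets` installed: load_dataset("csv", data_files=data_files)
print(sorted(data_files))
```

Using underscores rather than hyphens keeps the names inside the assumed pattern.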

https://github.com/huggingface/datasets/issues/5629 | load_dataset gives "403" error when using Financial phrasebank | Hi! You seem to be using an outdated version of `datasets` that downloads the older script version. To avoid the error, you can either pass `revision="main"` to `load_dataset` (this can fail if a script uses newer features of the lib) or update your installation with `pip install -U datasets` (better solution). | When I try to load this dataset, I receive the following error:

ConnectionError: Couldn't reach https://www.researchgate.net/profile/Pekka_Malo/publication/251231364_FinancialPhraseBank-v10/data/0c96051eee4fb1d56e000000/FinancialPhraseBank-v10.zip (error 403)

Has this been seen before? Thanks. The website loads ... | 262 | 51 | load_dataset gives "403" error when using Financial phrasebank

When I try to load this dataset, I receive the following error:

ConnectionError: Couldn't reach https://www.researchgate.net/profile/Pekka_Malo/publication/251231364_FinancialPhraseBank-v10/data/0c96051eee4fb1d56e000000/FinancialPhraseBank-v10.zip (er... | [

-1.1847862005233765, -0.8617866039276123, -0.7911846041679382, 1.467525601387024, -0.2067996710538864, -1.3115179538726807, 0.13070455193519592, -1.1052074432373047, 1.5948033332824707, -0.7261543273925781, 0.33966758847236633, -1.6774890422821045, 0.057503167539834976, -0.5273615121841431... |

https://github.com/huggingface/datasets/issues/5627 | Unable to load AutoTrain-generated dataset from the hub | The AutoTrain format is not supported right now. I think it would require a dedicated dataset builder | ### Describe the bug

DatasetGenerationError: An error occurred while generating the dataset -> ValueError: Couldn't cast ... because column names don't match

```

ValueError: Couldn't cast

_data_files: list<item: struct<filename: string>>

child 0, item: struct<filename: string>

child 0, filename: string

... | 263 | 17 | Unable to load AutoTrain-generated dataset from the hub

### Describe the bug

DatasetGenerationError: An error occurred while generating the dataset -> ValueError: Couldn't cast ... because column names don't match

```

ValueError: Couldn't cast

_data_files: list<item: struct<filename: string>>

child 0, item: ... | [

-1.2668479681015015, -1.1041215658187866, -0.7530314922332764, 1.728272795677185, -0.28150415420532227, -0.9776759147644043, 0.0697939470410347, -0.991932213306427, 1.5877076387405396, -0.5884959697723389, 0.22780397534370422, -1.5597028732299805, -0.036122385412454605, -0.7347760200500488... |

https://github.com/huggingface/datasets/issues/5627 | Unable to load AutoTrain-generated dataset from the hub | Okay, good to know. Thanks for the reply. For now I will just have to manage the split manually before training, because I can’t find any way of pulling out file indices or file names from the autogenerated split. The file names field of the image dataset (loaded directly from arrow file) is missing, just fyi (for anyo... | ### Describe the bug

DatasetGenerationError: An error occurred while generating the dataset -> ValueError: Couldn't cast ... because column names don't match

```

ValueError: Couldn't cast

_data_files: list<item: struct<filename: string>>

child 0, item: struct<filename: string>

child 0, filename: string

... | 263 | 131 | Unable to load AutoTrain-generated dataset from the hub

### Describe the bug

DatasetGenerationError: An error occurred while generating the dataset -> ValueError: Couldn't cast ... because column names don't match

```

ValueError: Couldn't cast

_data_files: list<item: struct<filename: string>>

child 0, item: ... | [

-1.2668479681015015, -1.1041215658187866, -0.7530314922332764, 1.728272795677185, -0.28150415420532227, -0.9776759147644043, 0.0697939470410347, -0.991932213306427, 1.5877076387405396, -0.5884959697723389, 0.22780397534370422, -1.5597028732299805, -0.036122385412454605, -0.7347760200500488... |
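For the workaround described above (managing the split manually before training), a minimal sketch of a reproducible split that keeps the file names so each example can be traced back later. `split_files` and the file names are hypothetical; when file names are not needed, `Dataset.train_test_split(test_size=..., seed=...)` is the library-side alternative:

```python
import random
from typing import List, Sequence, Tuple


def split_files(paths: Sequence[str], val_fraction: float = 0.2, seed: int = 42) -> Tuple[List[str], List[str]]:
    """Deterministically shuffle file paths and split them into (train, val)."""
    if not 0.0 < val_fraction < 1.0:
        raise ValueError("val_fraction must be between 0 and 1")
    # Sort first so the result does not depend on the input order.
    shuffled = sorted(paths)
    random.Random(seed).shuffle(shuffled)
    n_val = max(1, round(len(shuffled) * val_fraction))
    return shuffled[n_val:], shuffled[:n_val]


files = [f"img_{i:03d}.png" for i in range(10)]
train, val = split_files(files)
print(len(train), len(val))
```

Because the paths are sorted before the seeded shuffle, the same files always land in the same split, regardless of directory listing order.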

https://github.com/huggingface/datasets/issues/5625 | Allow "jsonl" data type signifier | You can use "json" instead. It doesn't work by extension names, but rather by dataset builder names, e.g. "text", "imagefolder", etc. I don't think the example in `transformers` is correct because of that | ### Feature request

`load_dataset` currently does not accept `jsonl` as type but only `json`.

### Motivation

I was working with one of the `run_translation` scripts and used my own datasets (`.jsonl`) as train_dataset. But the default code did not work because

```

FileNotFoundError: Couldn't find a dataset scri... | 264 | 33 | Allow "jsonl" data type signifier

### Feature request

`load_dataset` currently does not accept `jsonl` as type but only `json`.

### Motivation

I was working with one of the `run_translation` scripts and used my own datasets (`.jsonl`) as train_dataset. But the default code did not work because

```

FileNotFoun... | [

-1.1171170473098755, -0.9781569242477417, -0.8593897819519043, 1.5335286855697632, -0.12153265625238419, -1.1575781106948853, 0.13303165137767792, -1.0777931213378906, 1.7873183488845825, -0.7732706665992737, 0.30229246616363525, -1.6623814105987549, -0.007482464425265789, -0.6368702054023... |

https://github.com/huggingface/datasets/issues/5625 | Allow "jsonl" data type signifier | Yes, I understand the reasoning but this issue is to propose that the example in transformers (while incorrect) "makes sense" in terms of user expectation. So the question is whether it would be possible to add "aliases" for common types (like "json" and "text") based on common extensions (like jsonl and txt)? | ### Feature request

`load_dataset` currently does not accept `jsonl` as type but only `json`.

### Motivation

I was working with one of the `run_translation` scripts and used my own datasets (`.jsonl`) as train_dataset. But the default code did not work because

```

FileNotFoundError: Couldn't find a dataset scri... | 264 | 52 | Allow "jsonl" data type signifier

### Feature request

`load_dataset` currently does not accept `jsonl` as type but only `json`.

### Motivation

I was working with one of the `run_translation` scripts and used my own datasets (`.jsonl`) as train_dataset. But the default code did not work because

```

FileNotFoun... | [

-1.1289620399475098, -0.965147078037262, -0.8540281653404236, 1.5681935548782349, -0.11368619650602341, -1.1700540781021118, 0.13679121434688568, -1.0804232358932495, 1.777850866317749, -0.7727180123329163, 0.3149605393409729, -1.648427963256836, -0.0031464705243706703, -0.6520736217498779... |
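As explained above, the first argument to `load_dataset` names a dataset builder (`"json"`, `"text"`, `"csv"`, ...), not a file extension, so a `.jsonl` file is loaded with `load_dataset("json", data_files="train.jsonl")`. The alias idea proposed above could be approximated on the caller side; below is a minimal sketch with a hypothetical `builder_for` helper and a hand-written extension table (an assumption, not the library's own mapping):

```python
from pathlib import Path

# Hypothetical extension -> builder-name table; ".jsonl" maps to the "json" builder.
EXTENSION_TO_BUILDER = {
    ".json": "json",
    ".jsonl": "json",
    ".csv": "csv",
    ".txt": "text",
    ".parquet": "parquet",
}


def builder_for(path: str) -> str:
    """Return the builder name to pass to load_dataset for a given data file."""
    suffix = Path(path).suffix.lower()
    try:
        return EXTENSION_TO_BUILDER[suffix]
    except KeyError:
        raise ValueError(f"no builder known for extension {suffix!r}") from None


print(builder_for("my_train_data.jsonl"))  # json
# With `datasets` installed:
# load_dataset(builder_for("train.jsonl"), data_files={"train": "train.jsonl"})
```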

https://github.com/huggingface/datasets/issues/5624 | glue datasets returning -1 for test split | Hi @lithafnium, thanks for reporting.

Please note that you can use the "Community" tab in the corresponding dataset page to start any discussion: https://huggingface.co/datasets/glue/discussions

Indeed this issue was already raised there (https://huggingface.co/datasets/glue/discussions/5) and answered: https://h... | ### Describe the bug

Downloading any dataset from GLUE has -1 as class labels for test split. Train and validation have regular 0/1 class labels. This is also present in the dataset card online.

### Steps to reproduce the bug

```

dataset = load_dataset("glue", "sst2")

for d in dataset:

# prints out -1

... | 265 | 71 | glue datasets returning -1 for test split

### Describe the bug

Downloading any dataset from GLUE has -1 as class labels for test split. Train and validation have regular 0/1 class labels. This is also present in the dataset card online.

### Steps to reproduce the bug

```

dataset = load_dataset("glue", "sst2")

... | [

-1.155807614326477, -0.882905125617981, -0.7539591789245605, 1.4164730310440063, -0.1072247251868248, -1.2842718362808228, 0.1016727089881897, -1.0471786260604858, 1.6512656211853027, -0.7368374466896057, 0.2724594175815582, -1.728262186050415, -0.04369534179568291, -0.5601991415023804, ... |
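The -1 values are placeholders: GLUE does not publish test-set labels, so test predictions can only be scored through the official benchmark server, and local evaluation should use the validation split. A minimal stdlib sketch of guarding an evaluation step against such placeholder labels; with a `datasets.Dataset`, the same idea is `ds.filter(lambda ex: ex["label"] != -1)`:

```python
from typing import Iterable, List

HIDDEN_LABEL = -1  # placeholder used when the true label is not public


def labeled_only(examples: Iterable[dict]) -> List[dict]:
    """Keep only examples whose label is an actual class id."""
    return [ex for ex in examples if ex["label"] != HIDDEN_LABEL]


# Toy rows shaped like an SST-2 test split: every label is hidden.
test_split = [{"sentence": "a", "label": -1}, {"sentence": "b", "label": -1}]
print(len(labeled_only(test_split)))  # 0, nothing to score locally
```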

https://github.com/huggingface/datasets/issues/5613 | Version mismatch with multiprocess and dill on Python 3.10 | Reopening, since I think the docs should inform the user of this problem. For example, [this page](https://huggingface.co/docs/datasets/installation) says

> Datasets is tested on Python 3.7+.

but it should probably say that Beam Datasets do not work with Python 3.10 (or link to a known issues page). | ### Describe the bug

Grabbing the latest version of `datasets` and `apache-beam` with `poetry` using Python 3.10 gives a crash at runtime. The crash is

```

File "/Users/adpauls/sc/git/DSI-transformers/data/NQ/create_NQ_train_vali.py", line 1, in <module>

import datasets

File "/Users/adpauls/Library/Caches/... | 267 | 46 | Version mismatch with multiprocess and dill on Python 3.10

### Describe the bug

Grabbing the latest version of `datasets` and `apache-beam` with `poetry` using Python 3.10 gives a crash at runtime. The crash is

```

File "/Users/adpauls/sc/git/DSI-transformers/data/NQ/create_NQ_train_vali.py", line 1, in <module>... | [

-1.2203803062438965, -0.8654314875602722, -0.62025386095047, 1.36741042137146, -0.11054454743862152, -1.3439956903457642, 0.06198142096400261, -1.014704942703247, 1.522252082824707, -0.6576555967330933, 0.19666792452335358, -1.6717911958694458, -0.2024473398923874, -0.36462289094924927, ... |

https://github.com/huggingface/datasets/issues/5613 | Version mismatch with multiprocess and dill on Python 3.10 | Same problem on Colab using a vanilla setup running:

Python 3.10.11

apache-beam 2.47.0

datasets 2.12.0 | ### Describe the bug

Grabbing the latest version of `datasets` and `apache-beam` with `poetry` using Python 3.10 gives a crash at runtime. The crash is

```

File "/Users/adpauls/sc/git/DSI-transformers/data/NQ/create_NQ_train_vali.py", line 1, in <module>

import datasets

File "/Users/adpauls/Library/Caches/... | 267 | 16 | Version mismatch with multiprocess and dill on Python 3.10

### Describe the bug

Grabbing the latest version of `datasets` and `apache-beam` with `poetry` using Python 3.10 gives a crash at runtime. The crash is

```

File "/Users/adpauls/sc/git/DSI-transformers/data/NQ/create_NQ_train_vali.py", line 1, in <module>... | [

-1.2203803062438965, -0.8654314875602722, -0.62025386095047, 1.36741042137146, -0.11054454743862152, -1.3439956903457642, 0.06198142096400261, -1.014704942703247, 1.522252082824707, -0.6576555967330933, 0.19666792452335358, -1.6717911958694458, -0.2024473398923874, -0.36462289094924927, ... |

https://github.com/huggingface/datasets/issues/5612 | Arrow map type in parquet files unsupported | I'm attaching a minimal reproducible example:

```python
from datasets import load_dataset
import pyarrow as pa
import pyarrow.parquet as pq

table_with_map = pa.Table.from_pydict(
    {"a": [1, 2], "b": [[("a", 2)], [("b", 4)]]},
    schema=pa.schema({"a": pa.int32(), "b": pa.map_(pa.string(), pa.int32())})
)

... | ### Describe the bug

When I try to load parquet files that were processed with Spark, I get the following issue:

`ValueError: Arrow type map<string, string ('warc_headers')> does not have a datasets dtype equivalent.`

Strangely, loading the dataset with `streaming=True` solves the issue.

### Steps to reproduce ... | 268 | 94 | Arrow map type in parquet files unsupported

### Describe the bug

When I try to load parquet files that were processed with Spark, I get the following issue:

`ValueError: Arrow type map<string, string ('warc_headers')> does not have a datasets dtype equivalent.`

Strangely, loading the dataset with `streaming=Tr... | [

-1.2015632390975952, -0.8567219972610474, -0.6773289442062378, 1.4378821849822998, -0.18681499361991882, -1.2755796909332275, 0.20724272727966309, -1.0972925424575806, 1.644755244255066, -0.8304726481437683, 0.3374823331832886, -1.66536283493042, 0.08009473234415054, -0.5665971636772156, ... |
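In Arrow, a `map<key, value>` column is physically a list of key/value structs, and the error above says `datasets` has no dtype equivalent for it. One possible workaround, sketched here as an assumption rather than documented library behavior, is to rewrite each map cell as a list of `{"key": ..., "value": ...}` dicts before building the dataset, which a list-of-structs feature can represent. The input shape (a list of `(key, value)` tuples per row) matches the repro above and, by default, how `pyarrow` returns map values from `to_pylist()`:

```python
from typing import Any, Dict, List, Optional, Sequence, Tuple

MapCell = Optional[Sequence[Tuple[str, Any]]]


def map_to_struct_list(cell: MapCell) -> Optional[List[Dict[str, Any]]]:
    """Convert one map cell (list of (key, value) pairs) into a list of dicts."""
    if cell is None:  # keep nulls as nulls
        return None
    return [{"key": k, "value": v} for k, v in cell]


# Same data as the minimal reproducible example above.
rows = {"a": [1, 2], "b": [[("a", 2)], [("b", 4)]]}
converted = {**rows, "b": [map_to_struct_list(cell) for cell in rows["b"]]}
print(converted["b"])
# With `datasets` installed, Dataset.from_dict(converted) should then be able to
# infer a list-of-structs column instead of failing on the map type.
```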

https://github.com/huggingface/datasets/issues/5610 | use datasets streaming mode in trainer ddp mode cause memory leak | Same problem,

transformers 4.28.1

datasets 2.12.0

Memory leaks at around 100 MB per 10 seconds when using `dataloader_num_workers` > 0 in the training arguments for transformers training. Possibly a bug in the transformers repo, but I still haven't found a solution :(

| ### Describe the bug

use datasets streaming mode in trainer ddp mode cause memory leak

### Steps to reproduce the bug

import os

import time

import datetime

import sys

import numpy as np

import random

import torch

from torch.utils.data import Dataset, DataLoader, random_split, RandomSampler, Sequenti... | 269 | 34 | use datasets streaming mode in trainer ddp mode cause memory leak

### Describe the bug

use datasets streaming mode in trainer ddp mode cause memory leak

### Steps to reproduce the bug

import os

import time

import datetime

import sys

import numpy as np

import random

import torch

from torch.utils.da... | [

-1.3668327331542969, -1.0391124486923218, -0.6425808668136597, 1.5796233415603638, -0.1771867275238037, -1.1103981733322144, 0.11056772619485855, -1.0720235109329224, 1.5092308521270752, -0.8274769186973572, 0.2660912275314331, -1.649958610534668, -0.04564975947141647, -0.5367396473884583, ... |

https://github.com/huggingface/datasets/issues/5610 | use datasets streaming mode in trainer ddp mode cause memory leak | I found an article describing the problem; it may be helpful for somebody:

https://ppwwyyxx.com/blog/2022/Demystify-RAM-Usage-in-Multiprocess-DataLoader/

I can confirm it's not a memory leak: after some time, the memory growth stopped. | ### Describe the bug

use datasets streaming mode in trainer ddp mode cause memory leak

### Steps to reproduce the bug

import os

import time

import datetime

import sys

import numpy as np

import random

import torch

from torch.utils.data import Dataset, DataLoader, random_split, RandomSampler, Sequenti... | 269 | 25 | use datasets streaming mode in trainer ddp mode cause memory leak

### Describe the bug

use datasets streaming mode in trainer ddp mode cause memory leak

### Steps to reproduce the bug

import os

import time

import datetime

import sys

import numpy as np

import random

import torch

from torch.utils.da... | [

-1.3668327331542969, -1.0391124486923218, -0.6425808668136597, 1.5796233415603638, -0.1771867275238037, -1.1103981733322144, 0.11056772619485855, -1.0720235109329224, 1.5092308521270752, -0.8274769186973572, 0.2660912275314331, -1.649958610534668, -0.04564975947141647, -0.5367396473884583, ... |
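The article linked above attributes this kind of growth to copy-on-access: Python reference counting writes to every object a forked worker touches, so the memory pages holding a large list of small Python objects are gradually copied into each worker. The remedy it describes is to keep the data in a few flat buffers (e.g. NumPy arrays) instead of many small objects. A stdlib-only sketch of that idea for a list of strings, using one `bytes` blob plus an `array` of offsets; `PackedStrings` is a hypothetical name:

```python
from array import array
from typing import List


class PackedStrings:
    """Store many strings in one bytes buffer plus an offsets array.

    Reading an item touches the refcounts of two container objects instead
    of one refcount per stored string, so forked workers copy far fewer pages.
    """

    def __init__(self, items: List[str]) -> None:
        encoded = [s.encode("utf-8") for s in items]
        self._offsets = array("q", [0])  # signed 64-bit offsets
        for b in encoded:
            self._offsets.append(self._offsets[-1] + len(b))
        self._blob = b"".join(encoded)

    def __len__(self) -> int:
        return len(self._offsets) - 1

    def __getitem__(self, idx: int) -> str:
        n = len(self)
        if idx < 0:
            idx += n
        if not 0 <= idx < n:
            raise IndexError("PackedStrings index out of range")
        start, end = self._offsets[idx], self._offsets[idx + 1]
        return self._blob[start:end].decode("utf-8")


texts = PackedStrings(["hello", "wörld", ""])
print(len(texts), texts[1])  # 3 wörld
```

Offsets are byte positions, so non-ASCII text such as `"wörld"` (6 bytes in UTF-8) is sliced correctly.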

https://github.com/huggingface/datasets/issues/5609 | `load_from_disk` vs `load_dataset` performance. | Hi! We've recently made some improvements to `save_to_disk`/`load_from_disk` (100x faster in some scenarios), so it would help if you could install `datasets` directly from `main` (`pip install git+https://github.com/huggingface/datasets.git`) and re-run the "benchmark". | ### Describe the bug

I have downloaded `openwebtext` (~12GB) and filtered out a small amount of junk (it's still huge). Now, I would like to use this filtered version for future work. It seems I have two choices:

1. Use `load_dataset` each time, relying on the cache mechanism, and re-run my filtering.

2. `save_to_di... | 270 | 32 | `load_from_disk` vs `load_dataset` performance.

### Describe the bug

I have downloaded `openwebtext` (~12GB) and filtered out a small amount of junk (it's still huge). Now, I would like to use this filtered version for future work. It seems I have two choices:

1. Use `load_dataset` each time, relying on the cache m... | [

-1.1461586952209473, -0.9332696795463562, -0.7413451075553894, 1.4626514911651611, -0.14524132013320923, -1.210001826286316, 0.1552080363035202, -1.0253177881240845, 1.6445262432098389, -0.8235805630683899, 0.35147538781166077, -1.6538468599319458, 0.05077756941318512, -0.6181231141090393, ... |

https://github.com/huggingface/datasets/issues/5609 | `load_from_disk` vs `load_dataset` performance. | @mariosasko, has that fix been released to pip in the meantime? I'm asking because I'm still facing the same issue (regarding loading images from local paths):

```

dataset = load_dataset("csv", cache_dir="cache", data_files=["/STORAGE/DATA/mijam/vit/code/list_filtered.csv"], num_proc=16, split="train").cast_column("image", Image()... | ### Describe the bug

I have downloaded `openwebtext` (~12GB) and filtered out a small amount of junk (it's still huge). Now, I would like to use this filtered version for future work. It seems I have two choices:

1. Use `load_dataset` each time, relying on the cache mechanism, and re-run my filtering.

2. `save_to_di... | 270 | 71 | `load_from_disk` vs `load_dataset` performance.

### Describe the bug

I have downloaded `openwebtext` (~12GB) and filtered out a small amount of junk (it's still huge). Now, I would like to use this filtered version for future work. It seems I have two choices:

1. Use `load_dataset` each time, relying on the cache m... | [

-1.1504758596420288, -0.9288638830184937, -0.7494857311248779, 1.460647702217102, -0.14338603615760803, -1.2090320587158203, 0.14476484060287476, -1.0342321395874023, 1.668833613395691, -0.8153769373893738, 0.33879122138023376, -1.6526466608047485, 0.06922627985477448, -0.6100777983665466, ... |

https://github.com/huggingface/datasets/issues/5609 | `load_from_disk` vs `load_dataset` performance. | @mjamroz I assume your CSV file stores image file paths. This means `save_to_disk` needs to embed the image bytes resulting in a much bigger Arrow file (than the initial one). Maybe specifying `num_shards` to make the Arrow files smaller can help (large Arrow files on some systems take a long time to load). | ### Describe the bug

I have downloaded `openwebtext` (~12GB) and filtered out a small amount of junk (it's still huge). Now, I would like to use this filtered version for future work. It seems I have two choices:

1. Use `load_dataset` each time, relying on the cache mechanism, and re-run my filtering.

2. `save_to_di... | 270 | 53 | `load_from_disk` vs `load_dataset` performance.

### Describe the bug

I have downloaded `openwebtext` (~12GB) and filtered out a small amount of junk (it's still huge). Now, I would like to use this filtered version for future work. It seems I have two choices:

1. Use `load_dataset` each time, relying on the cache m... | [

-1.1552221775054932,

-0.9428613781929016,

-0.7493132948875427,

1.4506059885025024,

-0.15418574213981628,

-1.2171801328659058,

0.1420518457889557,

-1.0230222940444946,

1.663267731666565,

-0.8269370198249817,

0.33807772397994995,

-1.652201771736145,

0.06728407740592957,

-0.6163331270217896,

... |

https://github.com/huggingface/datasets/issues/5608 | audiofolder only creates dataset of 13 rows (files) when the data folder it's reading from has 20,000 mp3 files. | Hi!

> naming convention of mp3 files

Yes, this could be the problem. MP3 files should end with `.mp3`/`.MP3` to be recognized as audio files.
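A quick way to check whether file extensions are the problem is to count the files under the data directory by suffix. This is a minimal sketch, assuming that only a case-insensitive `.mp3` suffix marks a file as MP3 audio. `summarize_suffixes` is a hypothetical helper, not part of `datasets`:

```python
from collections import Counter
from pathlib import Path

# Assumption: a file counts as MP3 audio only if its suffix is ".mp3" (any case).
AUDIO_SUFFIXES = {".mp3"}


def summarize_suffixes(data_dir):
    """Count files by lowercase suffix and list the names that are not MP3 files."""
    counts = Counter()
    skipped = []
    for path in Path(data_dir).rglob("*"):
        if not path.is_file():
            continue  # ignore sub-directories themselves
        suffix = path.suffix.lower()
        counts[suffix] += 1
        if suffix not in AUDIO_SUFFIXES:
            skipped.append(path.name)
    return counts, sorted(skipped)
```

Calling `summarize_suffixes("x")` on the reporter's folder would show how many of the 20,000 files actually carry an `.mp3` suffix.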

If the file names are not the culprit, can you paste the audio folder's directory structure to help us reproduce the error (e.g., by running the `tree "x"` command)? | ### Describe the bug

x = load_dataset("audiofolder", data_dir="x")

When running this, x is a dataset of 13 rows (files) when it should be 20,000 rows (files) as the data_dir "x" has 20,000 mp3 files. Does anyone know what could possibly cause this (naming convention of mp3 files, etc.)

### Steps to reproduce the b... | 271 | 54 | audiofolder only creates dataset of 13 rows (files) when the data folder it's reading from has 20,000 mp3 files.

### Describe the bug

x = load_dataset("audiofolder", data_dir="x")

When running this, x is a dataset of 13 rows (files) when it should be 20,000 rows (files) as the data_dir "x" has 20,000 mp3 files. Do... | [

-1.1904871463775635,

-0.9062251448631287,

-0.7016420364379883,

1.4522279500961304,

-0.2260921150445938,

-1.0890953540802002,

0.19326183199882507,

-0.9768805503845215,

1.6433956623077393,

-0.8800054788589478,

0.2999725043773651,

-1.7013241052627563,

0.052340276539325714,

-0.4880883395671844... |

https://github.com/huggingface/datasets/issues/5608 | audiofolder only creates dataset of 13 rows (files) when the data folder it's reading from has 20,000 mp3 files. | Hi! I'm sorry, I don't want to reveal my entire dataset, but here's a snippet (all of the mp3 files below are among the ones not being recognized by audiofolder). Also, for another dataset, audiofolder loaded zero mp3 files because "train" was in the name of one of the mp3 files.

my_dataset

├── data

│ ├── VHA_In... | ### Describe the bug

x = load_dataset("audiofolder", data_dir="x")

When running this, x is a dataset of 13 rows (files) when it should be 20,000 rows (files) as the data_dir "x" has 20,000 mp3 files. Does anyone know what could possibly cause this (naming convention of mp3 files, etc.)

### Steps to reproduce the b... | 271 | 94 | audiofolder only creates dataset of 13 rows (files) when the data folder it's reading from has 20,000 mp3 files.

### Describe the bug

x = load_dataset("audiofolder", data_dir="x")

When running this, x is a dataset of 13 rows (files) when it should be 20,000 rows (files) as the data_dir "x" has 20,000 mp3 files. Do... | [

-1.209779143333435,

-0.905281662940979,

-0.6140527129173279,

1.4679198265075684,

-0.18320265412330627,

-1.2238426208496094,

0.2682182490825653,

-0.9476800560951233,

1.6219302415847778,

-0.915643572807312,

0.2681034803390503,

-1.6018048524856567,

0.08765868842601776,

-0.5242804288864136,

... |

https://github.com/huggingface/datasets/issues/5606 | Add `Dataset.to_list` to the API | Hello, I have an interest in this issue.

Is the `Dataset.to_dict` you are describing the one in the code here?

https://github.com/huggingface/datasets/blob/35b789e8f6826b6b5a6b48fcc2416c890a1f326a/src/datasets/arrow_dataset.py#L4633-L4667 | Since there is `Dataset.from_list` in the API, we should also add `Dataset.to_list` to be consistent.

Regarding the implementation, we can re-use `Dataset.to_dict`'s code and replace the `to_pydict` calls with `to_pylist`. | 272 | 20 | Add `Dataset.to_list` to the API

Since there is `Dataset.from_list` in the API, we should also add `Dataset.to_list` to be consistent.

Regarding the implementation, we can re-use `Dataset.to_dict`'s code and replace the `to_pydict` calls with `to_pylist`.
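The two PyArrow methods differ only in orientation. `to_pydict` returns one list per column, and `to_pylist` returns one dict per row. Below is a pure-Python sketch of that reshaping. `pydict_to_pylist` is a hypothetical helper for illustration, not the `datasets` implementation:

```python
from typing import Any, Dict, List


def pydict_to_pylist(columns: Dict[str, List[Any]]) -> List[Dict[str, Any]]:
    """Turn a column-oriented dict (the shape `to_pydict` returns) into a list
    of row dicts (the shape `to_pylist` returns)."""
    lengths = {len(values) for values in columns.values()}
    if len(lengths) > 1:
        raise ValueError("all columns must have the same length")
    names = list(columns)
    # zip(*values) walks the columns in lockstep, yielding one tuple per row
    return [dict(zip(names, row)) for row in zip(*columns.values())]


print(pydict_to_pylist({"id": [0, 1], "text": ["a", "b"]}))
# [{'id': 0, 'text': 'a'}, {'id': 1, 'text': 'b'}]
```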

Hello, I have an interest in this issue.

Is the `Datase... | [

-1.1085078716278076,

-0.7848642468452454,

-0.7754935622215271,

1.4184117317199707,

-0.15377268195152283,

-1.3009488582611084,

0.2207460254430771,

-1.2125113010406494,

1.70610511302948,

-0.8192591071128845,

0.3086867928504944,

-1.6503373384475708,

-0.025359585881233215,

-0.5635589361190796,... |

https://github.com/huggingface/datasets/issues/5604 | Problems with downloading The Pile | Hi!

You can specify `download_config=DownloadConfig(resume_download=True)` in `load_dataset` to resume the download when re-running the code after the timeout error:

```python

from datasets import load_dataset, DownloadConfig

dataset = load_dataset('the_pile', split='train', cache_dir='F:\datasets', download_... | ### Describe the bug

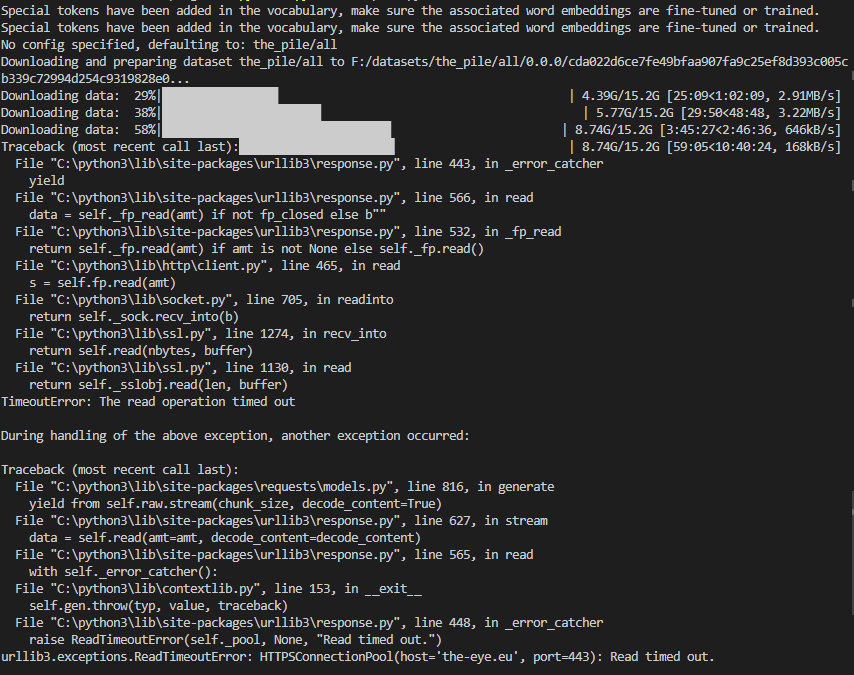

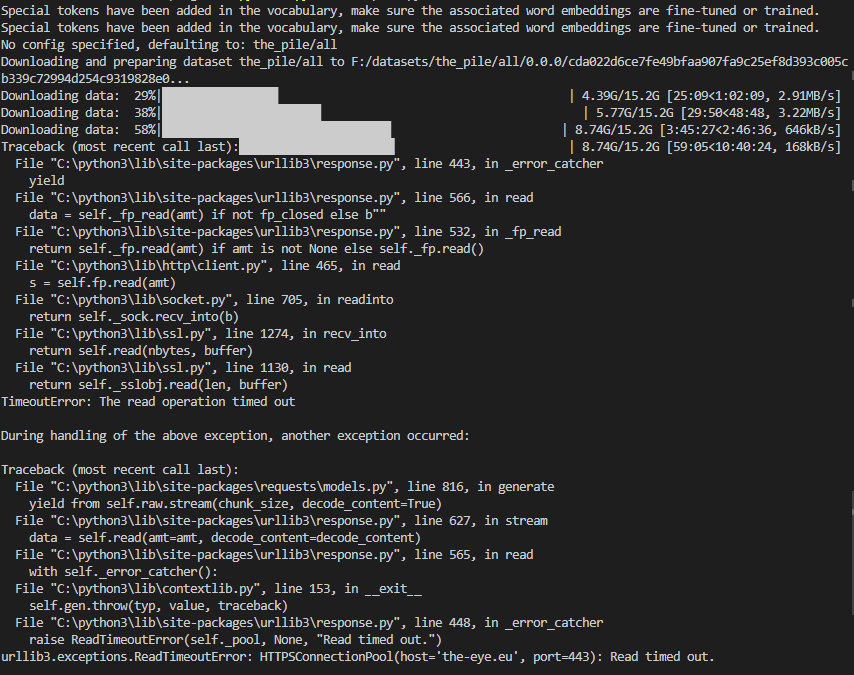

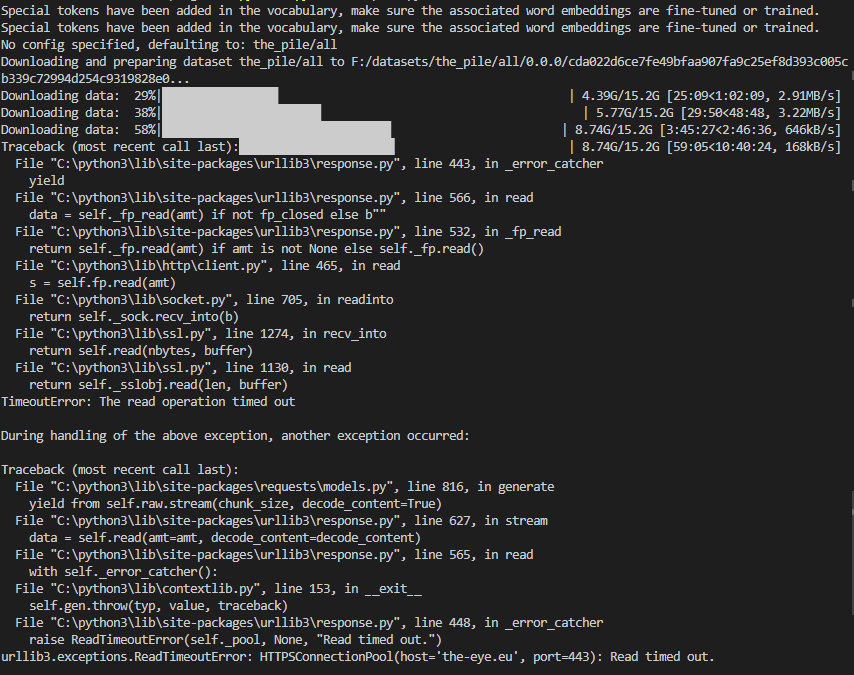

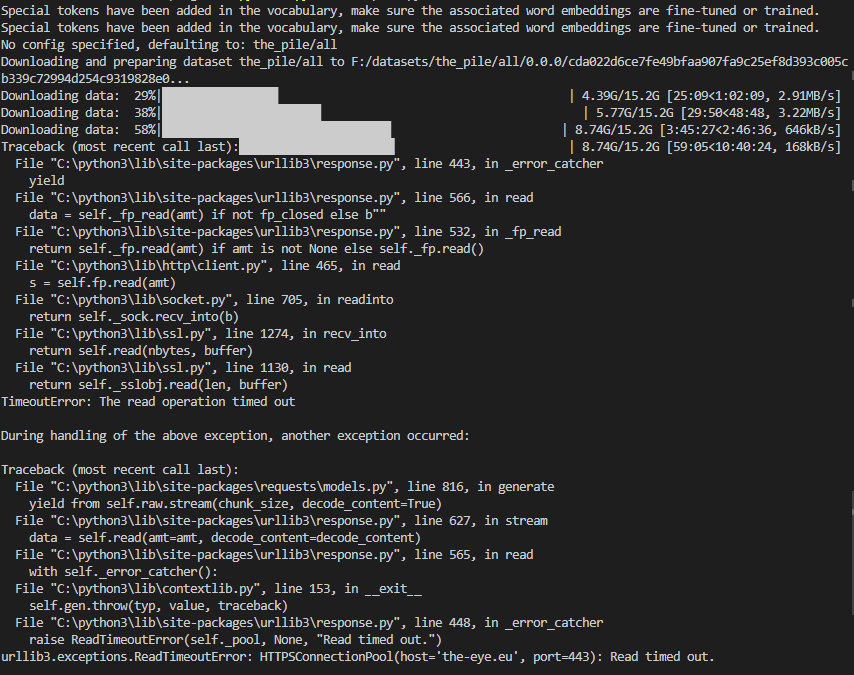

The downloads in the screenshot seem to be interrupted after some time and the last download throws a "Read timed out" error.

Here are the downloaded files:

Here are the down... | [

-1.2368625402450562,

-0.8774968981742859,

-0.7400590777397156,

1.444351077079773,

-0.1186927780508995,

-1.2102718353271484,

0.055873047560453415,

-0.9798559546470642,

1.5522856712341309,

-0.7297624349594116,

0.2441541701555252,

-1.6731832027435303,

-0.014697623439133167,

-0.562451303005218... |

https://github.com/huggingface/datasets/issues/5604 | Problems with downloading The Pile | @mariosasko , I used your suggestion but its not saving anything , just stops and runs from the same point .

below is the script to download and save on disk .

```

from datasets import load_dataset, DownloadConfig

#load the Pile dataset from Hugging Face Datasets

#dataset = load_dataset('the_pile')

dataset ... | ### Describe the bug

The downloads in the screenshot seem to be interrupted after some time and the last download throws a "Read timed out" error.

Here are the downloaded files:

Here are the down... | [

-1.241477370262146,

-0.9204444885253906,

-0.7658193707466125,

1.4308555126190186,

-0.17130808532238007,

-1.1928671598434448,

0.06701197475194931,

-0.9976588487625122,

1.5688680410385132,

-0.7707803845405579,

0.1920558363199234,

-1.6609686613082886,

-0.01000867411494255,

-0.5565441846847534... |

https://github.com/huggingface/datasets/issues/5604 | Problems with downloading The Pile | @mariosasko , it shows nothing in dataset folder

```

du -sh /mnt/nlp/hugging_face/*

20K /mnt/nlp/hugging_face/datasets

4.0K /mnt/nlp/hugging_face/download_pile.py

```

| ### Describe the bug

The downloads in the screenshot seem to be interrupted after some time and the last download throws a "Read timed out" error.

Here are the downloaded files:

Here are the down... | [

-1.197818636894226,

-0.8574449419975281,

-0.8052145838737488,

1.445061206817627,

-0.07909907400608063,

-1.2725484371185303,

0.01774512231349945,

-0.933093786239624,

1.5372090339660645,

-0.7141402959823608,

0.27452340722084045,

-1.6517730951309204,

0.019322596490383148,

-0.5421893000602722,... |

https://github.com/huggingface/datasets/issues/5604 | Problems with downloading The Pile | @mariosasko

```

root@d20f0ab8f4f8:/mnt/hugging_face# python3 download_pile.py

No config specified, defaulting to: the_pile/all

Downloading and preparing dataset the_pile/all to /mnt/hugging_face/datasets/the_pile/all/0.0.0/6fadc480ecb32470826cbf5900a9558b791ce55d5e9a0fdc8ad653e7b64bb349...

Downloading data file... | ### Describe the bug

The downloads in the screenshot seem to be interrupted after some time and the last download throws a "Read timed out" error.

Here are the downloaded files:

Here are the down... | [

-1.203966736793518,

-0.8519333004951477,

-0.7993110418319702,

1.381014108657837,

-0.12639036774635315,

-1.2334026098251343,

0.05667369067668915,

-1.0094321966171265,

1.5582407712936401,

-0.709533154964447,

0.2136765867471695,

-1.6297366619110107,

0.017676159739494324,

-0.5309671759605408,

... |

https://github.com/huggingface/datasets/issues/5604 | Problems with downloading The Pile | Users with slow internet speed are doomed (4MB/s). The dataset downloads fine at minimum speed 10MB/s.

Also, when the train splits were generated and then I removed the downloads folder to save up disk space, it started redownloading the whole dataset. Is there any way to use the already generated splits instead? | ### Describe the bug

The downloads in the screenshot seem to be interrupted after some time and the last download throws a "Read timed out" error.

Here are the downloaded files:

Here are the down... | [

-1.2314949035644531,

-0.8574203848838806,

-0.8153257966041565,

1.4107085466384888,

-0.10294856131076813,

-1.2306573390960693,

0.03640240430831909,

-0.9805265069007874,

1.5492911338806152,

-0.7054902911186218,

0.23757299780845642,

-1.63870370388031,

0.027599677443504333,

-0.5107318758964539... |

https://github.com/huggingface/datasets/issues/5604 | Problems with downloading The Pile | @sentialx @mariosasko, whenever you have time: am I downloading and saving the dataset correctly in my script above? Please advise :) | ### Describe the bug

The downloads in the screenshot seem to be interrupted after some time and the last download throws a "Read timed out" error.

Here are the downloaded files:

Here are the down... | [

-1.2404416799545288,

-0.8615272045135498,

-0.7928768396377563,

1.4353107213974,

-0.13145187497138977,

-1.2064193487167358,

0.03153708204627037,

-0.9666385650634766,

1.5105797052383423,

-0.7303228378295898,

0.2456180900335312,

-1.6523255109786987,

0.008035275153815746,

-0.5417928099632263,

... |

https://github.com/huggingface/datasets/issues/5601 | Authorization error | Hi!

It's better to report this kind of issue in the `huggingface_hub` repo, so if you still haven't resolved it, I suggest you open an issue there. | ### Describe the bug

I get an `Authorization error` when I try to push data to the Hugging Face datasets hub.

### Steps to reproduce the bug

I did all steps in the [tutorial](https://huggingface.co/docs/datasets/share),

1. `huggingface-cli login` with WRITE token

2. `git lfs install`

3. `git clone https://huggingfa... | 274 | 27 | Authorization error

### Describe the bug

I get an `Authorization error` when I try to push data to the Hugging Face datasets hub.

### Steps to reproduce the bug

I did all steps in the [tutorial](https://huggingface.co/docs/datasets/share),

1. `huggingface-cli login` with WRITE token

2. `git lfs install`

3. `git c... | [

-1.1315078735351562,

-0.8858757615089417,

-0.7515595555305481,

1.4895168542861938,

-0.06980527192354202,

-1.3011276721954346,

0.1188325583934784,

-0.9685966968536377,

1.7310949563980103,

-0.6481048464775085,

0.2915382981300354,

-1.7327780723571777,

-0.05241468548774719,

-0.6085132360458374... |

https://github.com/huggingface/datasets/issues/5601 | Authorization error | Yeah, I solved it. The problem was in osxkeychain. When I ran `huggingface-cli login`, it added the token under the default account (username) `hg_user`, but my repo belongs to a different username. After I changed the username in the keychain, it works. | ### Describe the bug

I get an `Authorization error` when I try to push data to the Hugging Face datasets hub.

### Steps to reproduce the bug

I did all steps in the [tutorial](https://huggingface.co/docs/datasets/share),

1. `huggingface-cli login` with WRITE token

2. `git lfs install`

3. `git clone https://huggingfa... | 274 | 36 | Authorization error

### Describe the bug

I get an `Authorization error` when I try to push data to the Hugging Face datasets hub.

### Steps to reproduce the bug

I did all steps in the [tutorial](https://huggingface.co/docs/datasets/share),

1. `huggingface-cli login` with WRITE token

2. `git lfs install`

3. `git c... | [

-1.1269416809082031,

-0.9064958095550537,

-0.7256932258605957,

1.4935580492019653,

-0.07282377034425735,

-1.3066577911376953,

0.13756318390369415,

-0.9786835312843323,

1.73699951171875,

-0.6406669616699219,

0.29947489500045776,

-1.7377969026565552,

-0.02259102649986744,

-0.5937290787696838... |

https://github.com/huggingface/datasets/issues/5600 | Dataloader getitem not working for DreamboothDatasets | Hi!

> (see example of DreamboothDatasets)

Could you please provide a link to it? If you are referring to the example in the `diffusers` repo, your issue is unrelated to `datasets` as that example uses `Dataset` from PyTorch to load data. | ### Describe the bug

Dataloader getitem is not working as before (see example of [DreamboothDatasets](https://github.com/huggingface/peft/blob/main/examples/lora_dreambooth/train_dreambooth.py#L451C14-L529))

Downgrading `datasets` to 2.8.0 solved the issue.

### Steps to reproduce the bug

1- using DreamBoothDataset ... | 275 | 41 | Dataloader getitem not working for DreamboothDatasets

### Describe the bug

Dataloader getitem is not working as before (see example of [DreamboothDatasets](https://github.com/huggingface/peft/blob/main/examples/lora_dreambooth/train_dreambooth.py#L451C14-L529))

Downgrading `datasets` to 2.8.0 solved the issue.

### S... | [

-1.2297148704528809,

-0.8961120843887329,

-0.8456498980522156,

1.4584673643112183,

-0.1974523812532425,

-1.2620317935943604,

0.039972636848688126,

-1.0766230821609497,

1.6056658029556274,

-0.81531822681427,

0.31427645683288574,

-1.7112020254135132,

0.03862027823925018,

-0.5696146488189697,... |

https://github.com/huggingface/datasets/issues/5597 | in-place dataset update | We won't support in-place modifications since `datasets` is based on the Apache Arrow format which doesn't support in-place modifications.

In your case the old dataset is garbage collected pretty quickly so you won't have memory issues.
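Even if memory is reclaimed, rebuilding an immutable table on every append still costs time. The rough cost model below assumes, pessimistically, that each append rewrites every existing row. Real Arrow concatenation may share buffers instead of copying, so treat this as an upper bound. It shows why appending rows in batches beats appending them one at a time. `rows_written` is a hypothetical helper:

```python
def rows_written(n_rows: int, batch_size: int = 1) -> int:
    """Total rows written when a table is rebuilt from scratch on every append.

    Model (an assumption, not a measurement of `datasets`): appending a batch
    to a table of k rows writes a new table of k + batch rows.
    """
    if batch_size < 1:
        raise ValueError("batch_size must be >= 1")
    total = 0
    size = 0
    while size < n_rows:
        size = min(size + batch_size, n_rows)
        total += size  # the whole new table is written again
    return total


print(rows_written(10_000))         # one row at a time: 50005000
print(rows_written(10_000, 1_000))  # ten batches of 1,000: 55000
```

One row at a time, the cost grows quadratically (n(n+1)/2 rows). With batches of size b, the number of rebuilds drops by a factor of b.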

Note that datasets loaded from disk (memory mapped) are not loaded in memory,... | ### Motivation

When I create an empty `Dataset` and keep appending new rows to it, a new dataset is created at each call. This looks quite memory-consuming. I just wonder if there is a more efficient way to do this.

```python

from datasets import Dataset

ds = Datas... | 276 | 63 | in-place dataset update

### Motivation

When I create an empty `Dataset` and keep appending new rows to it, a new dataset is created at each call. This looks quite memory-consuming. I just wonder if there is a more efficient way to do this.

```python

from datasets im... | [

-1.1607862710952759,

-0.9026415944099426,

-0.7501826286315918,

1.5338371992111206,

-0.19454406201839447,

-1.325056791305542,

0.15530814230442047,

-1.088658094406128,

1.8104037046432495,

-0.7365054488182068,

0.24363689124584198,

-1.7707486152648926,

-0.06863647699356079,

-0.657507061958313,... |

https://github.com/huggingface/datasets/issues/5597 | in-place dataset update | Thank you for your detailed reply.

> In your case the old dataset is garbage collected pretty quickly so you won't have memory issues.

I understand this, but it still copies the old dataset to create the new one, correct? So maybe it is not memory-consuming but time-consuming? | ### Motivation

When I create an empty `Dataset` and keep appending new rows to it, a new dataset is created at each call. This looks quite memory-consuming. I just wonder if there is a more efficient way to do this.

```python

from datasets import Dataset

ds = Datas... | 276 | 50 | in-place dataset update

### Motivation

When I create an empty `Dataset` and keep appending new rows to it, a new dataset is created at each call. This looks quite memory-consuming. I just wonder if there is a more efficient way to do this.

```python

from datasets im... | [

-1.1633410453796387,

-0.9036456942558289,

-0.7431443333625793,

1.5265822410583496,

-0.2008284479379654,

-1.3133312463760376,

0.15216724574565887,

-1.095810055732727,

1.8013856410980225,

-0.7358940839767456,

0.2409515380859375,

-1.7724872827529907,

-0.0718618705868721,

-0.6564115285873413,

... |

https://github.com/huggingface/datasets/issues/5597 | in-place dataset update | Indeed, and because of that it is more efficient to add multiple rows at once instead of one by one, using `concatenate_datasets` for example. | ### Motivation

When I create an empty `Dataset` and keep appending new rows to it, a new dataset is created at each call. This looks quite memory-consuming. I just wonder if there is a more efficient way to do this.

```python

from datasets import Dataset

ds = Datas... | 276 | 24 | in-place dataset update

### Motivation

When I create an empty `Dataset` and keep appending new rows to it, a new dataset is created at each call. This looks quite memory-consuming. I just wonder if there is a more efficient way to do this.

```python

from datasets im... | [

-1.1636685132980347,

-0.9097646474838257,

-0.754844069480896,

1.5443434715270996,

-0.1957906037569046,

-1.3152470588684082,

0.1540081799030304,

-1.0803899765014648,

1.8026745319366455,

-0.7383818030357361,

0.2480280101299286,

-1.7666202783584595,

-0.06874053180217743,

-0.6501613259315491,

... |

https://github.com/huggingface/datasets/issues/5596 | [TypeError: Couldn't cast array of type] Can only load a subset of the dataset | Apparently some JSON objects have a `"labels"` field. Since this field is not present in every object, you must specify all the field types in the README.md.

EDIT: actually, specifying the feature types doesn't solve the issue; it raises an error because `"labels"` is missing from the data | ### Describe the bug
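To find which fields are missing from some records, you can scan the JSON Lines files and count how often each key occurs. This is a minimal stdlib sketch. `optional_keys` is a hypothetical helper, and it only inspects top-level keys:

```python
import json
from collections import Counter
from typing import Iterable


def optional_keys(lines: Iterable[str]) -> dict:
    """Return top-level keys that appear in some, but not all, JSON records,
    mapped to the number of records that contain them."""
    counts = Counter()
    n_records = 0
    for line in lines:
        line = line.strip()
        if not line:
            continue  # tolerate blank lines
        counts.update(json.loads(line).keys())
        n_records += 1
    return {key: n for key, n in counts.items() if n < n_records}


sample = [
    '{"text": "a", "labels": ["bug"]}',
    '{"text": "b"}',
]
print(optional_keys(sample))  # {'labels': 1}
```

For a real shard, pass the open file object, e.g. `with open(path) as f: optional_keys(f)`, where `path` is one of the dataset's `.jsonl` files.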

I'm trying to load this [dataset](https://huggingface.co/datasets/bigcode-data/the-stack-gh-issues) which consists of jsonl files and I get the following error:

```

casted_values = _c(array.values, feature[0])

File "/opt/conda/lib/python3.7/site-packages/datasets/table.py", line 1839, in wr... | 277 | 48 | [TypeError: Couldn't cast array of type] Can only load a subset of the dataset

### Describe the bug

I'm trying to load this [dataset](https://huggingface.co/datasets/bigcode-data/the-stack-gh-issues) which consists of jsonl files and I get the following error:

```

casted_values = _c(array.values, feature[0])

... | [

-1.2122060060501099,

-1.0282363891601562,

-0.7827973365783691,

1.5735336542129517,

-0.23098750412464142,

-1.0753028392791748,

0.11346933990716934,

-1.002651333808899,

1.5741065740585327,

-0.636767566204071,

0.25753581523895264,

-1.6488094329833984,

-0.03603915497660637,

-0.7343500256538391... |

https://github.com/huggingface/datasets/issues/5596 | [TypeError: Couldn't cast array of type] Can only load a subset of the dataset | We've updated the dataset to remove the extra `labels` field from some files, closing this issue. Thanks! | ### Describe the bug

I'm trying to load this [dataset](https://huggingface.co/datasets/bigcode-data/the-stack-gh-issues) which consists of jsonl files and I get the following error:

```

casted_values = _c(array.values, feature[0])

File "/opt/conda/lib/python3.7/site-packages/datasets/table.py", line 1839, in wr... | 277 | 17 | [TypeError: Couldn't cast array of type] Can only load a subset of the dataset

### Describe the bug

I'm trying to load this [dataset](https://huggingface.co/datasets/bigcode-data/the-stack-gh-issues) which consists of jsonl files and I get the following error:

```

casted_values = _c(array.values, feature[0])

... | [

-1.2122060060501099,

-1.0282363891601562,

-0.7827973365783691,

1.5735336542129517,

-0.23098750412464142,

-1.0753028392791748,

0.11346933990716934,

-1.002651333808899,

1.5741065740585327,

-0.636767566204071,

0.25753581523895264,

-1.6488094329833984,

-0.03603915497660637,

-0.7343500256538391... |

https://github.com/huggingface/datasets/issues/5596 | [TypeError: Couldn't cast array of type] Can only load a subset of the dataset | A similar error occurs in the Pile dataset (EleutherAI/the_pile)

Loading the dataset produces the following error.

```

TypeError: Couldn't cast array of type

struct<file: string, id: string>

to

{'id': Value(dtype='string', id=None)}

```
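The error suggests that some records carry a struct with an extra `file` sub-field that the declared `{'id': ...}` feature does not include. The sketch below counts the distinct sub-key sets of one nested field across JSON Lines records. The field name `meta` is a placeholder, since the thread does not say which column fails, and `nested_key_sets` is a hypothetical helper:

```python
import json
from collections import Counter


def nested_key_sets(lines, field):
    """Count the distinct sets of sub-keys found under `field` across JSON lines."""
    seen = Counter()
    for line in lines:
        if not line.strip():
            continue  # skip blank lines
        value = json.loads(line).get(field)
        if isinstance(value, dict):
            seen[tuple(sorted(value))] += 1  # sorted key names identify the struct shape
    return seen


# "meta" is a hypothetical field name used only for illustration.
sample = [
    '{"text": "a", "meta": {"id": "x"}}',
    '{"text": "b", "meta": {"file": "f.txt", "id": "y"}}',
]
print(nested_key_sets(sample, "meta"))
```

More than one key set in the result means the struct's shape varies between records, which matches the cast failure above.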

| ### Describe the bug

I'm trying to load this [dataset](https://huggingface.co/datasets/bigcode-data/the-stack-gh-issues) which consists of jsonl files and I get the following error:

```

casted_values = _c(array.values, feature[0])

File "/opt/conda/lib/python3.7/site-packages/datasets/table.py", line 1839, in wr... | 277 | 32 | [TypeError: Couldn't cast array of type] Can only load a subset of the dataset

### Describe the bug

I'm trying to load this [dataset](https://huggingface.co/datasets/bigcode-data/the-stack-gh-issues) which consists of jsonl files and I get the following error:

```

casted_values = _c(array.values, feature[0])

... | [

-1.2122060060501099,

-1.0282363891601562,

-0.7827973365783691,

1.5735336542129517,

-0.23098750412464142,

-1.0753028392791748,

0.11346933990716934,

-1.002651333808899,

1.5741065740585327,

-0.636767566204071,

0.25753581523895264,

-1.6488094329833984,

-0.03603915497660637,

-0.7343500256538391... |

https://github.com/huggingface/datasets/issues/5594 | Error while downloading the xtreme udpos dataset | Hi! I cannot reproduce this error on my machine.

The raised error could mean that one of the downloaded files is corrupted. To verify this is not the case, you can run `load_dataset` as follows:

```python

train_dataset = load_dataset('xtreme', 'udpos.English', split="train", cache_dir=args.cache_dir, download_mode... | ### Describe the bug

Hi,

I am facing an error while downloading the xtreme udpos dataset using load_dataset. I have datasets 2.10.1 installed

```Downloading and preparing dataset xtreme/udpos.Arabic to /compute/tir-1-18/skhanuja/multilingual_ft/cache/data/xtreme/udpos.Arabic/1.0.0/29f5d57a48779f37ccb75cb8708d1... | 278 | 45 | Error while downloading the xtreme udpos dataset

### Describe the bug

Hi,

I am facing an error while downloading the xtreme udpos dataset using load_dataset. I have datasets 2.10.1 installed

```Downloading and preparing dataset xtreme/udpos.Arabic to /compute/tir-1-18/skhanuja/multilingual_ft/cache/data/xtre... | [

-1.144119143486023,

-0.8995024561882019,

-0.7754905819892883,

1.2917661666870117,

-0.10625427961349487,

-1.2262579202651978,

0.11179803311824799,

-1.0710818767547607,

1.5105105638504028,

-0.6548566818237305,

0.1799335777759552,

-1.678903341293335,

-0.07483324408531189,

-0.5819866061210632,... |

https://github.com/huggingface/datasets/issues/5594 | Error while downloading the xtreme udpos dataset | Hi! Apologies for the delayed response! I tried the above and it doesn't solve the issue. Actually, the dataset gets downloaded most times, but sometimes this error occurs (at random afaik). Is it possible that there is a server issue for this particular dataset? I am able to download other datasets using the same code... | ### Describe the bug

Hi,

I am facing an error while downloading the xtreme udpos dataset using load_dataset. I have datasets 2.10.1 installed

```Downloading and preparing dataset xtreme/udpos.Arabic to /compute/tir-1-18/skhanuja/multilingual_ft/cache/data/xtreme/udpos.Arabic/1.0.0/29f5d57a48779f37ccb75cb8708d1... | 278 | 158 | Error while downloading the xtreme udpos dataset

### Describe the bug

Hi,

I am facing an error while downloading the xtreme udpos dataset using load_dataset. I have datasets 2.10.1 installed

```Downloading and preparing dataset xtreme/udpos.Arabic to /compute/tir-1-18/skhanuja/multilingual_ft/cache/data/xtre... | [

-1.144119143486023,

-0.8995024561882019,

-0.7754905819892883,

1.2917661666870117,

-0.10625427961349487,

-1.2262579202651978,

0.11179803311824799,

-1.0710818767547607,

1.5105105638504028,

-0.6548566818237305,

0.1799335777759552,

-1.678903341293335,

-0.07483324408531189,

-0.5819866061210632,... |

https://github.com/huggingface/datasets/issues/5594 | Error while downloading the xtreme udpos dataset | If this happens randomly, then this means the data file from the error message is not always downloaded correctly.
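To confirm that a particular copy of that file is corrupted, you can hash it and compare the result with the digest of a copy that loaded correctly. This is a minimal stdlib sketch, assuming you have such a known-good digest. The function names are illustrative, not part of `datasets`:

```python
import hashlib


def sha256_of(path, chunk_size=1 << 20):
    """Compute the SHA-256 hex digest of a file, reading it in 1 MiB chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def looks_corrupted(path, expected_sha256):
    """True if the file's digest differs from a known-good digest."""
    return sha256_of(path) != expected_sha256.lower()
```

Reading in chunks keeps memory flat even for multi-gigabyte archives.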

The only solution in this scenario is to download the dataset again by passing `download_mode="force_redownload"` to the `load_dataset` call. | ### Describe the bug

Hi,

I am facing an error while downloading the xtreme udpos dataset using load_dataset. I have datasets 2.10.1 installed

```Downloading and preparing dataset xtreme/udpos.Arabic to /compute/tir-1-18/skhanuja/multilingual_ft/cache/data/xtreme/udpos.Arabic/1.0.0/29f5d57a48779f37ccb75cb8708d1... | 278 | 38 | Error while downloading the xtreme udpos dataset

### Describe the bug

Hi,

I am facing an error while downloading the xtreme udpos dataset using load_dataset. I have datasets 2.10.1 installed

```Downloading and preparing dataset xtreme/udpos.Arabic to /compute/tir-1-18/skhanuja/multilingual_ft/cache/data/xtre... | [

-1.144119143486023,

-0.8995024561882019,

-0.7754905819892883,

1.2917661666870117,

-0.10625427961349487,

-1.2262579202651978,

0.11179803311824799,

-1.0710818767547607,

1.5105105638504028,

-0.6548566818237305,

0.1799335777759552,

-1.678903341293335,

-0.07483324408531189,

-0.5819866061210632,... |

https://github.com/huggingface/datasets/issues/5586 | .sort() is broken when used after .filter(), only in 2.10.0 | Thanks for reporting, and thanks @mariosasko for fixing! We just did a patch release, `2.10.1`, with the fix. | ### Describe the bug

Hi, thank you for your support!

It seems like the addition of multiple key sort (#5502) in 2.10.0 broke the `.sort()` method.

After filtering a dataset with `.filter()`, the `.sort()` seems to refer to the query_table index of the previous unfiltered dataset, resulting in an IndexError.
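The failure mode described above can be illustrated with a toy model of a filtered view backed by an indices mapping. This sketches the idea only and is not the actual `datasets` code. A correct sort reorders the view's own indices. Computing the order over the unfiltered table and applying it to the smaller view runs past the view's end:

```python
def filter_indices(data, predicate):
    """Indices mapping of a filtered view: positions in `data` that pass."""
    return [i for i, x in enumerate(data) if predicate(x)]


def sort_view(data, indices):
    """Correct: reorder the view's indices by the values they point to."""
    return sorted(indices, key=lambda i: data[i])


def sort_view_buggy(data, indices):
    # Bug pattern (a model of the report): the order is computed over the whole
    # unfiltered table, then used to index the smaller filtered view.
    order = sorted(range(len(data)), key=lambda i: data[i])
    return [indices[j] for j in order]


data = [5, 3, 8, 1, 9]
view = filter_indices(data, lambda x: x > 2)  # [0, 1, 2, 4]
ordered = sort_view(data, view)               # [1, 0, 2, 4]
print([data[i] for i in ordered])             # [3, 5, 8, 9]
```

Here `sort_view_buggy(data, view)` raises `IndexError`, because the unfiltered order contains position 4 while the view only has positions 0 to 3.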

... | 279 | 19 | .sort() is broken when used after .filter(), only in 2.10.0

### Describe the bug

Hi, thank you for your support!

It seems like the addition of multiple key sort (#5502) in 2.10.0 broke the `.sort()` method.

After filtering a dataset with `.filter()`, the `.sort()` seems to refer to the query_table index of t... | [

-1.2049190998077393,

-0.9679717421531677,

-0.6971979737281799,

1.3593785762786865,

-0.16691051423549652,

-1.2836297750473022,

0.1095959022641182,

-1.0390392541885376,

1.6087050437927246,

-0.7305065989494324,

0.16934888064861298,

-1.7022000551223755,

-0.15895113348960876,

-0.510460615158081... |

https://github.com/huggingface/datasets/issues/5585 | Cache is not transportable | Hi! No, the cache is not transportable in general. It works on a shared filesystem if you use the same Python environment, but not across machines, operating systems, or environments.

In particular, reloading cached datasets does work, but reloading cached processed datasets (e.g. from `map`) may not work. This is because some hash... | ### Describe the bug

I would like to share cache between two machines (a Windows host machine and a WSL instance).

I run most of my code in WSL. I have just run out of space in the virtual drive. Rather than expand the drive size, I plan to move the cache to the host Windows machine, thereby sharing the downloads.

I... | 280 | 85 | Cache is not transportable

### Describe the bug

I would like to share cache between two machines (a Windows host machine and a WSL instance).

I run most of my code in WSL. I have just run out of space in the virtual drive. Rather than expand the drive size, I plan to move the cache to the host Windows machine, thereb... | [

-1.1485437154769897,

-0.8953870534896851,

-0.7388613820075989,

1.4046502113342285,

-0.17850692570209503,

-1.3018282651901245,

0.14802056550979614,

-1.050010323524475,

1.6973915100097656,

-0.7715612649917603,

0.29215511679649353,

-1.6193428039550781,

0.05268409848213196,

-0.5444180965423584... |

https://github.com/huggingface/datasets/issues/5584 | Unable to load coyo700M dataset | Hi @manuaero

Thank you for your interest in the COYO dataset.

Our dataset provides the image URLs and alt-text as Parquet files, so to use the COYO dataset you will need to download the images yourself.

We provide a [guide](https://github.com/kakaobrain/coyo-dataset/blob/main/download/README.md) to download,... | ### Describe the bug

Seeing this error when downloading https://huggingface.co/datasets/kakaobrain/coyo-700m:

```ArrowInvalid: Parquet magic bytes not found in footer. Either the file is corrupted or this is not a parquet file.```

Full stack trace

```Downloading and preparing dataset parquet/kakaobrain--coy... | 281 | 49 | Unable to load coyo700M dataset

### Describe the bug

Seeing this error when downloading https://huggingface.co/datasets/kakaobrain/coyo-700m:

```ArrowInvalid: Parquet magic bytes not found in footer. Either the file is corrupted or this is not a parquet file.```

Full stack trace

```Downloading and prepari... | [

-1.137178897857666,

-0.7717975378036499,

-0.6077971458435059,

1.4108400344848633,

0.042310185730457306,

-1.4374611377716064,

0.10425188392400742,

-0.9388829469680786,

1.5090742111206055,

-0.7684450149536133,

0.43047839403152466,

-1.666019320487976,

0.055913787335157394,

-0.5954462289810181... |

https://github.com/huggingface/datasets/issues/5577 | Cannot load `the_pile_openwebtext2` | Hi! I've merged a PR to use `int32` instead of `int8` for `reddit_scores`, so it should work now.
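The `int8` failure is a range issue. `int8` holds only -128 to 127, and `int16` only -32,768 to 32,767, so larger scores cannot be cast. The small sketch below picks the narrowest signed integer type for a set of values. The sample values are illustrative, not actual `reddit_scores`:

```python
# Inclusive value ranges of common signed integer types.
SIGNED_INT_RANGES = {
    "int8": (-2**7, 2**7 - 1),     # -128 .. 127
    "int16": (-2**15, 2**15 - 1),  # -32,768 .. 32,767
    "int32": (-2**31, 2**31 - 1),  # about +/-2.1 billion
    "int64": (-2**63, 2**63 - 1),
}


def smallest_signed_int(values):
    """Return the name of the narrowest signed integer type that holds all values."""
    lo, hi = min(values), max(values)
    for name, (tmin, tmax) in SIGNED_INT_RANGES.items():  # dicts keep insertion order
        if tmin <= lo and hi <= tmax:
            return name
    raise OverflowError("values do not fit in int64")


print(smallest_signed_int([3, -5, 120]))  # int8
print(smallest_signed_int([3, 40_000]))   # int32
```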

| ### Describe the bug

I hit the same bug mentioned in #3053, which was never fixed: several `reddit_scores` values are larger than what `int8`, or even `int16`, can hold. https://huggingface.co/datasets/the_pile_openwebtext2/blob/main/the_pile_openwebtext2.py#L62

### Steps to reproduce the bug

```python3

from datasets import load... | 283 | 18 | Cannot load `the_pile_openwebtext2`

### Describe the bug

I hit the same bug mentioned in #3053, which was never fixed: several `reddit_scores` values are larger than what `int8`, or even `int16`, can hold. https://huggingface.co/datasets/the_pile_openwebtext2/blob/main/the_pile_openwebtext2.py#L62

### Steps to reproduce the bug

... | [

-1.129634976387024,

-0.756882905960083,

-0.7154749631881714,

1.4816962480545044,

-0.15962091088294983,

-1.3900498151779175,

0.138652965426445,

-1.0247220993041992,

1.6827644109725952,

-0.851656973361969,

0.3420817255973816,

-1.620510458946228,

0.028910677880048752,

-0.6427130699157715,

-... |

https://github.com/huggingface/datasets/issues/5575 | Metadata for each column | Hi! Indeed it would be useful to support this. PyArrow natively supports schema-level and column-level metadata, so implementing this should be straightforward. The API I have in mind would work as follows:

```python

col_feature = Value("string", metadata="Some column-level metadata")

features = Features({"col": c... | ### Feature request

Being able to put some metadata for each column as a string or any other type.

### Motivation

To motivate this with an example, let's say we are experimenting with embeddings produced by some image encoder network, and we want to iterate through a couple of preprocessing and see which on... | 285 | 48 | Metadata for each column

### Feature request

Being able to put some metadata for each column as a string or any other type.

### Motivation

To motivate this with an example, let's say we are experimenting with embeddings produced by some image encoder network, and we want to iterate through a couple of pre... | [

-1.185550332069397,

-0.8893149495124817,

-0.9054270386695862,

1.6193114519119263,

-0.257426381111145,

-1.277082920074463,

0.1428319215774536,

-1.076081395149231,

1.5800749063491821,

-0.9815604090690613,

0.3370456099510193,

-1.6183128356933594,

0.16459067165851593,

-0.6849847435951233,

-0... |

https://github.com/huggingface/datasets/issues/5575 | Metadata for each column | Sorry for the late reply,

Yes, I think this is the most straight-forward approach with the things that we already have.

| ### Feature request

Being able to put some metadata for each column as a string or any other type.

### Motivation

I will bring the motivation by an example, lets say we are experimenting with embedding produced by some image encoder network, and we want to iterate through a couple of preprocessing and see which on... | 285 | 21 | Metadata for each column

### Feature request

Being able to put some metadata for each column as a string or any other type.

### Motivation

I will bring the motivation by an example, lets say we are experimenting with embedding produced by some image encoder network, and we want to iterate through a couple of pre... | [
-1.2464033365249634,
-0.9257323145866394,
-0.9485728740692139,
1.5743310451507568,
-0.27065420150756836,
-1.2942638397216797,
0.12672464549541473,
-1.0613806247711182,
1.598240613937378,
-1.0056735277175903,
0.3065873086452484,
-1.6189723014831543,
0.1597696840763092,
-0.6239144206047058,
... |

https://github.com/huggingface/datasets/issues/5574 | c4 dataset streaming fails with `FileNotFoundError` | Also encountering this issue for every dataset I try to stream! Installed datasets from main:

```
- `datasets` version: 2.10.1.dev0
- Platform: macOS-13.1-arm64-arm-64bit
- Python version: 3.9.13
- PyArrow version: 10.0.1
- Pandas version: 1.5.2
```

Repro:

```python
from datasets import load_dataset
spig... | ### Describe the bug

Loading the `c4` dataset in streaming mode with `load_dataset("c4", "en", split="validation", streaming=True)` and then using it fails with a `FileNotFoundException`.

### Steps to reproduce the bug

```python
from datasets import load_dataset
dataset = load_dataset("c4", "en", split="train", ... | 286 | 655 | c4 dataset streaming fails with `FileNotFoundError`

### Describe the bug

Loading the `c4` dataset in streaming mode with `load_dataset("c4", "en", split="validation", streaming=True)` and then using it fails with a `FileNotFoundException`.

### Steps to reproduce the bug

```python
from datasets import load_dataset... | [
-1.18198561668396,
-0.8671308755874634,
-0.7479708194732666,
1.3915537595748901,
-0.18342715501785278,
-1.162192463874817,
0.1917634755373001,
-1.0304006338119507,
1.6828891038894653,
-0.7545842528343201,
0.3049739897251129,
-1.6680021286010742,
-0.0791458785533905,
-0.578832745552063,
-... |

https://github.com/huggingface/datasets/issues/5574 | c4 dataset streaming fails with `FileNotFoundError` | This problem now appears again, this time with an underlying HTTP 502 status code:

```
aiohttp.client_exceptions.ClientResponseError: 502, message='Bad Gateway', url=URL('https://huggingface.co/datasets/allenai/c4/resolve/1ddc917116b730e1859edef32896ec5c16be51d0/en/c4-validation.00002-of-00008.json.gz')
``` | ### Describe the bug

Loading the `c4` dataset in streaming mode with `load_dataset("c4", "en", split="validation", streaming=True)` and then using it fails with a `FileNotFoundException`.

### Steps to reproduce the bug

```python
from datasets import load_dataset
dataset = load_dataset("c4", "en", split="train", ... | 286 | 21 | c4 dataset streaming fails with `FileNotFoundError`

### Describe the bug

Loading the `c4` dataset in streaming mode with `load_dataset("c4", "en", split="validation", streaming=True)` and then using it fails with a `FileNotFoundException`.

### Steps to reproduce the bug

```python
from datasets import load_dataset... | [
-1.18198561668396,
-0.8671308755874634,
-0.7479708194732666,
1.3915537595748901,
-0.18342715501785278,
-1.162192463874817,
0.1917634755373001,
-1.0304006338119507,
1.6828891038894653,
-0.7545842528343201,
0.3049739897251129,
-1.6680021286010742,
-0.0791458785533905,
-0.578832745552063,
-... |

https://github.com/huggingface/datasets/issues/5574 | c4 dataset streaming fails with `FileNotFoundError` | Re-executing a minute later, the underlying cause is an HTTP 403 status code, as reported yesterday:

```
aiohttp.client_exceptions.ClientResponseError: 403, message='Forbidden', url=URL('https://cdn-lfs.huggingface.co/datasets/allenai/c4/4bf6b248b0f910dcde2cdf2118d6369d8208c8f9515ec29ab73e531f380b18e2?response-cont... | ### Describe the bug

Loading the `c4` dataset in streaming mode with `load_dataset("c4", "en", split="validation", streaming=True)` and then using it fails with a `FileNotFoundException`.

### Steps to reproduce the bug

```python
from datasets import load_dataset
dataset = load_dataset("c4", "en", split="train", ... | 286 | 22 | c4 dataset streaming fails with `FileNotFoundError`

### Describe the bug

Loading the `c4` dataset in streaming mode with `load_dataset("c4", "en", split="validation", streaming=True)` and then using it fails with a `FileNotFoundException`.

### Steps to reproduce the bug

```python
from datasets import load_dataset... | [
-1.18198561668396,
-0.8671308755874634,
-0.7479708194732666,
1.3915537595748901,
-0.18342715501785278,
-1.162192463874817,
0.1917634755373001,
-1.0304006338119507,
1.6828891038894653,
-0.7545842528343201,
0.3049739897251129,
-1.6680021286010742,
-0.0791458785533905,
-0.578832745552063,
-... |

https://github.com/huggingface/datasets/issues/5574 | c4 dataset streaming fails with `FileNotFoundError` | > It's been resolved again ;)

I'm experiencing the same issue when trying to load this dataset, `FileNotFoundError: https://huggingface.co/datasets/allenai/c4/resolve/1ddc917116b730e1859edef32896ec5c16be51d0/realnewslike/c4-train.00000-of-00512.json.gz` | ### Describe the bug

Loading the `c4` dataset in streaming mode with `load_dataset("c4", "en", split="validation", streaming=True)` and then using it fails with a `FileNotFoundException`.

### Steps to reproduce the bug

```python
from datasets import load_dataset
dataset = load_dataset("c4", "en", split="train", ... | 286 | 19 | c4 dataset streaming fails with `FileNotFoundError`

### Describe the bug

Loading the `c4` dataset in streaming mode with `load_dataset("c4", "en", split="validation", streaming=True)` and then using it fails with a `FileNotFoundException`.

### Steps to reproduce the bug

```python
from datasets import load_dataset... | [
-1.18198561668396,
-0.8671308755874634,
-0.7479708194732666,
1.3915537595748901,
-0.18342715501785278,
-1.162192463874817,
0.1917634755373001,
-1.0304006338119507,
1.6828891038894653,
-0.7545842528343201,
0.3049739897251129,
-1.6680021286010742,
-0.0791458785533905,
-0.578832745552063,
-... |

https://github.com/huggingface/datasets/issues/5571 | load_dataset fails for JSON in windows | Hi!

You need to pass an input json file explicitly as `data_files` to `load_dataset` to avoid this error:

```python
ds = load_dataset("json", data_files=args.input_json)
```

| ### Describe the bug

Steps:

1. Created a dataset in a Linux VM and created a small sample using dataset.to_json() method.

2. Downloaded the JSON file to my local Windows machine for working and saved in say - r"C:\Users\name\file.json"

3. I am reading the file in my local PyCharm - the location of python file is di... | 287 | 24 | load_dataset fails for JSON in windows

### Describe the bug

Steps:

1. Created a dataset in a Linux VM and created a small sample using dataset.to_json() method.

2. Downloaded the JSON file to my local Windows machine for working and saved in say - r"C:\Users\name\file.json"

3. I am reading the file in my local Py... | [
-1.2623194456100464,
-0.9738195538520813,
-0.7303214073181152,
1.5336825847625732,
-0.2054997831583023,
-1.2278145551681519,
0.10890890657901764,
-1.0818012952804565,
1.7445775270462036,
-0.730962336063385,
0.21932944655418396,
-1.6172125339508057,
0.050212424248456955,
-0.5594354867935181... |

https://github.com/huggingface/datasets/issues/5570 | load_dataset gives FileNotFoundError on imagenet-1k if license is not accepted on the hub | Hi, thanks for the feedback! Would it help to add a tip or note saying the dataset is gated and you need to accept the license before downloading it? | ### Describe the bug