url | repository_url | labels_url | comments_url | events_url | html_url | id | node_id | number | title | user | labels | state | locked | assignee | assignees | milestone | comments | created_at | updated_at | closed_at | author_association | active_lock_reason | draft | pull_request | body | reactions | timeline_url | performed_via_github_app | state_reason | is_pull_request |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

https://api.github.com/repos/huggingface/datasets/issues/5355 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5355/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5355/comments | https://api.github.com/repos/huggingface/datasets/issues/5355/events | https://github.com/huggingface/datasets/pull/5355 | 1,493,076,860 | PR_kwDODunzps5FQcYG | 5,355 | Clean up Table class docstrings | {

"login": "stevhliu",

"id": 59462357,

"node_id": "MDQ6VXNlcjU5NDYyMzU3",

"avatar_url": "https://avatars.githubusercontent.com/u/59462357?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/stevhliu",

"html_url": "https://github.com/stevhliu",

"followers_url": "https://api.github.com/users/stevhliu/followers",

"following_url": "https://api.github.com/users/stevhliu/following{/other_user}",

"gists_url": "https://api.github.com/users/stevhliu/gists{/gist_id}",

"starred_url": "https://api.github.com/users/stevhliu/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/stevhliu/subscriptions",

"organizations_url": "https://api.github.com/users/stevhliu/orgs",

"repos_url": "https://api.github.com/users/stevhliu/repos",

"events_url": "https://api.github.com/users/stevhliu/events{/privacy}",

"received_events_url": "https://api.github.com/users/stevhliu/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | 2022-12-13T00:29:47 | 2022-12-13T18:17:56 | 2022-12-13T18:14:42 | MEMBER | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5355",

"html_url": "https://github.com/huggingface/datasets/pull/5355",

"diff_url": "https://github.com/huggingface/datasets/pull/5355.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5355.patch",

"merged_at": "2022-12-13T18:14:42"

} | This PR cleans up the `Table` class docstrings :) | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5355/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5355/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5354 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5354/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5354/comments | https://api.github.com/repos/huggingface/datasets/issues/5354/events | https://github.com/huggingface/datasets/issues/5354 | 1,492,174,125 | I_kwDODunzps5Y8MUt | 5,354 | Consider using "Sequence" instead of "List" | {

"login": "tranhd95",

"id": 15568078,

"node_id": "MDQ6VXNlcjE1NTY4MDc4",

"avatar_url": "https://avatars.githubusercontent.com/u/15568078?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/tranhd95",

"html_url": "https://github.com/tranhd95",

"followers_url": "https://api.github.com/users/tranhd95/followers",

"following_url": "https://api.github.com/users/tranhd95/following{/other_user}",

"gists_url": "https://api.github.com/users/tranhd95/gists{/gist_id}",

"starred_url": "https://api.github.com/users/tranhd95/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/tranhd95/subscriptions",

"organizations_url": "https://api.github.com/users/tranhd95/orgs",

"repos_url": "https://api.github.com/users/tranhd95/repos",

"events_url": "https://api.github.com/users/tranhd95/events{/privacy}",

"received_events_url": "https://api.github.com/users/tranhd95/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892871,

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement",

"name": "enhancement",

"color": "a2eeef",

"default": true,

"description": "New feature or request"

},

{

"id": 1935892877,

"node_id": "MDU6TGFiZWwxOTM1ODkyODc3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/good%20first%20issue",

"name": "good first issue",

"color": "7057ff",

"default": true,

"description": "Good for newcomers"

}

] | open | false | {

"login": "avinashsai",

"id": 22453634,

"node_id": "MDQ6VXNlcjIyNDUzNjM0",

"avatar_url": "https://avatars.githubusercontent.com/u/22453634?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/avinashsai",

"html_url": "https://github.com/avinashsai",

"followers_url": "https://api.github.com/users/avinashsai/followers",

"following_url": "https://api.github.com/users/avinashsai/following{/other_user}",

"gists_url": "https://api.github.com/users/avinashsai/gists{/gist_id}",

"starred_url": "https://api.github.com/users/avinashsai/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/avinashsai/subscriptions",

"organizations_url": "https://api.github.com/users/avinashsai/orgs",

"repos_url": "https://api.github.com/users/avinashsai/repos",

"events_url": "https://api.github.com/users/avinashsai/events{/privacy}",

"received_events_url": "https://api.github.com/users/avinashsai/received_events",

"type": "User",

"site_admin": false

} | [

{

"login": "avinashsai",

"id": 22453634,

"node_id": "MDQ6VXNlcjIyNDUzNjM0",

"avatar_url": "https://avatars.githubusercontent.com/u/22453634?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/avinashsai",

"html_url": "https://github.com/avinashsai",

"followers_url": "https://api.github.com/users/avinashsai/followers",

"following_url": "https://api.github.com/users/avinashsai/following{/other_user}",

"gists_url": "https://api.github.com/users/avinashsai/gists{/gist_id}",

"starred_url": "https://api.github.com/users/avinashsai/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/avinashsai/subscriptions",

"organizations_url": "https://api.github.com/users/avinashsai/orgs",

"repos_url": "https://api.github.com/users/avinashsai/repos",

"events_url": "https://api.github.com/users/avinashsai/events{/privacy}",

"received_events_url": "https://api.github.com/users/avinashsai/received_events",

"type": "User",

"site_admin": false

}

] | null | [

"Hi! Linking a comment to provide more info on the issue: https://stackoverflow.com/a/39458225. This means we should replace all (most of) the occurrences of `List` with `Sequence` in function signatures.\r\n\r\n@tranhd95 Would you be interested in submitting a PR?",

"Hi all! I tried to reproduce this issue and didn't work for me. Also in your example i noticed that the variables have different names: `list_of_filenames` and `list_of_files`, could this be related to that?\r\n```python\r\n#I found random data in parquet format:\r\n!wget \"https://github.com/Teradata/kylo/raw/master/samples/sample-data/parquet/userdata1.parquet\"\r\n!wget \"https://github.com/Teradata/kylo/raw/master/samples/sample-data/parquet/userdata2.parquet\"\r\n\r\n#Then i try reproduce\r\nlist_of_files = [\"userdata1.parquet\", \"userdata2.parquet\"]\r\nds = Dataset.from_parquet(list_of_files)\r\n```\r\n**My output:**\r\n```python\r\nWARNING:datasets.builder:Using custom data configuration default-e287d097dc54e046\r\nDownloading and preparing dataset parquet/default to /root/.cache/huggingface/datasets/parquet/default-e287d097dc54e046/0.0.0/2a3b91fbd88a2c90d1dbbb32b460cf621d31bd5b05b934492fdef7d8d6f236ec...\r\nDownloading data files: 100%\r\n1/1 [00:00<00:00, 40.38it/s]\r\nExtracting data files: 100%\r\n1/1 [00:00<00:00, 23.43it/s]\r\nDataset parquet downloaded and prepared to /root/.cache/huggingface/datasets/parquet/default-e287d097dc54e046/0.0.0/2a3b91fbd88a2c90d1dbbb32b460cf621d31bd5b05b934492fdef7d8d6f236ec. Subsequent calls will reuse this data.\r\n```\r\nP.S. This is my first experience with open source. So do not judge strictly if I do not understand something)",

"@dantema There is indeed a typo in variable names. Nevertheless, I'm sorry if I was not clear but the output is from `mypy` type checker. You can run the code snippet without issues. The problem is with the type checking.",

"However, I found out that the type annotation is actually misleading. The [`from_parquet`](https://github.com/huggingface/datasets/blob/5ef1ab1cc06c2b7a574bf2df454cd9fcb071ccb2/src/datasets/arrow_dataset.py#L1039) method should also accept list of [`PathLike`](https://github.com/huggingface/datasets/blob/main/src/datasets/utils/typing.py#L8) objects which includes [`os.PathLike`](https://docs.python.org/3/library/os.html#os.PathLike). But if I would ran the code snippet below, an exception is thrown.\r\n\r\n**Code**\r\n```py\r\nfrom pathlib import Path\r\n\r\nlist_of_filenames = [Path(\"foo.parquet\"), Path(\"bar.parquet\")]\r\nds = Dataset.from_parquet(list_of_filenames)\r\n```\r\n**Output**\r\n```py\r\n[/usr/local/lib/python3.8/dist-packages/datasets/arrow_dataset.py](https://localhost:8080/#) in from_parquet(path_or_paths, split, features, cache_dir, keep_in_memory, columns, **kwargs)\r\n 1071 from .io.parquet import ParquetDatasetReader\r\n 1072 \r\n-> 1073 return ParquetDatasetReader(\r\n 1074 path_or_paths,\r\n 1075 split=split,\r\n\r\n[/usr/local/lib/python3.8/dist-packages/datasets/io/parquet.py](https://localhost:8080/#) in __init__(self, path_or_paths, split, features, cache_dir, keep_in_memory, streaming, **kwargs)\r\n 35 path_or_paths = path_or_paths if isinstance(path_or_paths, dict) else {self.split: path_or_paths}\r\n 36 hash = _PACKAGED_DATASETS_MODULES[\"parquet\"][1]\r\n---> 37 self.builder = Parquet(\r\n 38 cache_dir=cache_dir,\r\n 39 data_files=path_or_paths,\r\n\r\n[/usr/local/lib/python3.8/dist-packages/datasets/builder.py](https://localhost:8080/#) in __init__(self, cache_dir, config_name, hash, base_path, info, features, use_auth_token, repo_id, data_files, data_dir, name, **config_kwargs)\r\n 298 \r\n 299 if data_files is not None and not isinstance(data_files, DataFilesDict):\r\n--> 300 data_files = DataFilesDict.from_local_or_remote(\r\n 301 sanitize_patterns(data_files), base_path=base_path, use_auth_token=use_auth_token\r\n 302 
)\r\n\r\n[/usr/local/lib/python3.8/dist-packages/datasets/data_files.py](https://localhost:8080/#) in from_local_or_remote(cls, patterns, base_path, allowed_extensions, use_auth_token)\r\n 794 for key, patterns_for_key in patterns.items():\r\n 795 out[key] = (\r\n--> 796 DataFilesList.from_local_or_remote(\r\n 797 patterns_for_key,\r\n 798 base_path=base_path,\r\n\r\n[/usr/local/lib/python3.8/dist-packages/datasets/data_files.py](https://localhost:8080/#) in from_local_or_remote(cls, patterns, base_path, allowed_extensions, use_auth_token)\r\n 762 ) -> \"DataFilesList\":\r\n 763 base_path = base_path if base_path is not None else str(Path().resolve())\r\n--> 764 data_files = resolve_patterns_locally_or_by_urls(base_path, patterns, allowed_extensions)\r\n 765 origin_metadata = _get_origin_metadata_locally_or_by_urls(data_files, use_auth_token=use_auth_token)\r\n 766 return cls(data_files, origin_metadata)\r\n\r\n[/usr/local/lib/python3.8/dist-packages/datasets/data_files.py](https://localhost:8080/#) in resolve_patterns_locally_or_by_urls(base_path, patterns, allowed_extensions)\r\n 357 data_files = []\r\n 358 for pattern in patterns:\r\n--> 359 if is_remote_url(pattern):\r\n 360 data_files.append(Url(pattern))\r\n 361 else:\r\n\r\n[/usr/local/lib/python3.8/dist-packages/datasets/utils/file_utils.py](https://localhost:8080/#) in is_remote_url(url_or_filename)\r\n 62 \r\n 63 def is_remote_url(url_or_filename: str) -> bool:\r\n---> 64 parsed = urlparse(url_or_filename)\r\n 65 return parsed.scheme in (\"http\", \"https\", \"s3\", \"gs\", \"hdfs\", \"ftp\")\r\n 66 \r\n\r\n[/usr/lib/python3.8/urllib/parse.py](https://localhost:8080/#) in urlparse(url, scheme, allow_fragments)\r\n 373 Note that we don't break the components up in smaller bits\r\n 374 (e.g. 
netloc is a single string) and we don't expand % escapes.\"\"\"\r\n--> 375 url, scheme, _coerce_result = _coerce_args(url, scheme)\r\n 376 splitresult = urlsplit(url, scheme, allow_fragments)\r\n 377 scheme, netloc, url, query, fragment = splitresult\r\n\r\n[/usr/lib/python3.8/urllib/parse.py](https://localhost:8080/#) in _coerce_args(*args)\r\n 125 if str_input:\r\n 126 return args + (_noop,)\r\n--> 127 return _decode_args(args) + (_encode_result,)\r\n 128 \r\n 129 # Result objects are more helpful than simple tuples\r\n\r\n[/usr/lib/python3.8/urllib/parse.py](https://localhost:8080/#) in _decode_args(args, encoding, errors)\r\n 109 def _decode_args(args, encoding=_implicit_encoding,\r\n 110 errors=_implicit_errors):\r\n--> 111 return tuple(x.decode(encoding, errors) if x else '' for x in args)\r\n 112 \r\n 113 def _coerce_args(*args):\r\n\r\n[/usr/lib/python3.8/urllib/parse.py](https://localhost:8080/#) in <genexpr>(.0)\r\n 109 def _decode_args(args, encoding=_implicit_encoding,\r\n 110 errors=_implicit_errors):\r\n--> 111 return tuple(x.decode(encoding, errors) if x else '' for x in args)\r\n 112 \r\n 113 def _coerce_args(*args):\r\n\r\nAttributeError: 'PosixPath' object has no attribute 'decode'\r\n```\r\n\r\n@mariosasko Should I create a new issue? ",

"@mariosasko I would like to take this issue up. ",

"@avinashsai Hi, I've assigned you the issue.\r\n\r\n@tranhd95 Yes, feel free to report this in a new issue.",

"@avinashsai Are you still working on this? If not I would like to give it a try."

] | 2022-12-12T15:39:45 | 2023-07-26T16:25:51 | null | NONE | null | null | null | ### Feature request

Hi, please consider using `Sequence` type annotation instead of `List` in function arguments such as in [`Dataset.from_parquet()`](https://github.com/huggingface/datasets/blob/main/src/datasets/arrow_dataset.py#L1088). It leads to type checking errors, see below.

**How to reproduce**

```py

list_of_filenames = ["foo.parquet", "bar.parquet"]

ds = Dataset.from_parquet(list_of_filenames)

```

**Expected mypy output:**

```

Success: no issues found

```

**Actual mypy output:**

```py

test.py:19: error: Argument 1 to "from_parquet" of "Dataset" has incompatible type "List[str]"; expected "Union[Union[str, bytes, PathLike[Any]], List[Union[str, bytes, PathLike[Any]]]]" [arg-type]

test.py:19: note: "List" is invariant -- see https://mypy.readthedocs.io/en/stable/common_issues.html#variance

test.py:19: note: Consider using "Sequence" instead, which is covariant

```
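
To make the variance point concrete, here is a minimal sketch. The names `PathLike` (a simplified stand-in for the library's alias), `count_paths_list` and `count_paths_seq` are made up for illustration and are not part of `datasets`. Both functions behave identically at runtime; only the type checker treats them differently.

```python
from typing import List, Sequence, Union

# Simplified, hypothetical stand-in for the library's PathLike alias.
PathLike = Union[str, bytes]


def count_paths_list(paths: List[PathLike]) -> int:
    # mypy rejects a List[str] argument here because List is invariant.
    return len(paths)


def count_paths_seq(paths: Sequence[PathLike]) -> int:
    # mypy accepts a List[str] argument here because Sequence is covariant.
    return len(paths)


list_of_filenames: List[str] = ["foo.parquet", "bar.parquet"]
count_paths_seq(list_of_filenames)   # passes type checking
count_paths_list(list_of_filenames)  # flagged by mypy, but runs without error
```

A side benefit of `Sequence` is that tuples are accepted as well, since the annotation only promises read access.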

**Env:** mypy 0.991, Python 3.10.0, datasets 2.7.1 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5354/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5354/timeline | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/5353 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5353/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5353/comments | https://api.github.com/repos/huggingface/datasets/issues/5353/events | https://github.com/huggingface/datasets/issues/5353 | 1,491,880,500 | I_kwDODunzps5Y7Eo0 | 5,353 | Support remote file systems for `Audio` | {

"login": "OllieBroadhurst",

"id": 46894149,

"node_id": "MDQ6VXNlcjQ2ODk0MTQ5",

"avatar_url": "https://avatars.githubusercontent.com/u/46894149?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/OllieBroadhurst",

"html_url": "https://github.com/OllieBroadhurst",

"followers_url": "https://api.github.com/users/OllieBroadhurst/followers",

"following_url": "https://api.github.com/users/OllieBroadhurst/following{/other_user}",

"gists_url": "https://api.github.com/users/OllieBroadhurst/gists{/gist_id}",

"starred_url": "https://api.github.com/users/OllieBroadhurst/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/OllieBroadhurst/subscriptions",

"organizations_url": "https://api.github.com/users/OllieBroadhurst/orgs",

"repos_url": "https://api.github.com/users/OllieBroadhurst/repos",

"events_url": "https://api.github.com/users/OllieBroadhurst/events{/privacy}",

"received_events_url": "https://api.github.com/users/OllieBroadhurst/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892871,

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement",

"name": "enhancement",

"color": "a2eeef",

"default": true,

"description": "New feature or request"

}

] | closed | false | null | [] | null | [

"Just seen https://github.com/huggingface/datasets/issues/5281"

] | 2022-12-12T13:22:13 | 2022-12-12T13:37:14 | 2022-12-12T13:37:14 | NONE | null | null | null | ### Feature request

Hi there!

It would be super cool if `Audio()`, and potentially other features, could read files from a remote file system.

### Motivation

Large amounts of data are often stored in buckets. `load_from_disk` can retrieve data from cloud storage but, to my knowledge, copies the dataset locally first, so on a system with limited disk space (like a VM) you can run out of space very quickly.

### Your contribution

Something like this (for Google Cloud Platform in this instance):

```python

from datasets import Dataset, Audio

import gcsfs

fs = gcsfs.GCSFileSystem()

list_of_audio_fp = {'audio': ['1', '2', '3']}

ds = Dataset.from_dict(list_of_audio_fp)

ds = ds.cast_column("audio", Audio(sampling_rate=16000, fs=fs))

```

Under the hood:

```python

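import librosa
from io import BytesIO

def load_audio(fp, sampling_rate=None, fs=None):
    if fs is not None:
        # BytesIO needs the file's bytes, not the file object itself
        with fs.open(fp, 'rb') as f:
            arr, sr = librosa.load(BytesIO(f.read()), sr=sampling_rate)
    else:
        # Perform existing io operations (plain local load used as a placeholder)
        arr, sr = librosa.load(fp, sr=sampling_rate)
    return arr, sr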

```
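
The same idea can be tried without any cloud credentials. The sketch below is only an illustration: it uses the standard-library `wave` module in place of `librosa`, and `LocalFS`, `load_wav` and `write_silence` are hypothetical names, with `LocalFS` standing in for an fsspec-style filesystem such as `gcsfs.GCSFileSystem`.

```python
import io
import wave


class LocalFS:
    """Hypothetical stand-in for an fsspec-style filesystem object."""

    def open(self, path, mode="rb"):
        return open(path, mode)


def load_wav(fp, fs=None):
    """Return (raw PCM frames, sample rate) for a WAV file."""
    if fs is not None:
        # Read the file through the filesystem, then decode from memory.
        with fs.open(fp, "rb") as f:
            source = io.BytesIO(f.read())
    else:
        # wave.open also accepts a plain local path.
        source = fp
    with wave.open(source, "rb") as wav:
        return wav.readframes(wav.getnframes()), wav.getframerate()


def write_silence(path, sampling_rate=16000, n_frames=160):
    """Write a short mono 16-bit WAV file of silence for experimenting."""
    with wave.open(path, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)
        wav.setframerate(sampling_rate)
        wav.writeframes(b"\x00\x00" * n_frames)
```

Reading the whole file into memory keeps the decoder unaware of where the bytes came from, at the cost of holding each file in RAM while it is decoded.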

Written from memory so some things could be wrong. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5353/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5353/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/5352 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5352/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5352/comments | https://api.github.com/repos/huggingface/datasets/issues/5352/events | https://github.com/huggingface/datasets/issues/5352 | 1,490,796,414 | I_kwDODunzps5Y279- | 5,352 | __init__() got an unexpected keyword argument 'input_size' | {

"login": "J-shel",

"id": 82662111,

"node_id": "MDQ6VXNlcjgyNjYyMTEx",

"avatar_url": "https://avatars.githubusercontent.com/u/82662111?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/J-shel",

"html_url": "https://github.com/J-shel",

"followers_url": "https://api.github.com/users/J-shel/followers",

"following_url": "https://api.github.com/users/J-shel/following{/other_user}",

"gists_url": "https://api.github.com/users/J-shel/gists{/gist_id}",

"starred_url": "https://api.github.com/users/J-shel/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/J-shel/subscriptions",

"organizations_url": "https://api.github.com/users/J-shel/orgs",

"repos_url": "https://api.github.com/users/J-shel/repos",

"events_url": "https://api.github.com/users/J-shel/events{/privacy}",

"received_events_url": "https://api.github.com/users/J-shel/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [

"Hi @J-shel, thanks for reporting.\r\n\r\nI think the issue comes from your call to `load_dataset`. As first argument, you should pass:\r\n- either the name of your dataset (\"mrf\") if this is already published on the Hub\r\n- or the path to the loading script of your dataset (\"path/to/your/local/mrf.py\").",

"Hi, following your suggestion, I changed my call to load_dataset. Below is the latest:\r\nreader = load_dataset('data/mrf.py',\"default\", input_size=1024, split=split, streaming=True, keep_in_memory=None)\r\nHowever, I still got the same error.\r\nI have one question that is if I only define input_size=2048 in BUILDER_CONFIGS, may I specify input_size=1024 when loading the dataset? Cause I found that I could only specify name=\"default\" since I only define name=\"default\" in BUILDER_CONFIGS."

] | 2022-12-12T02:52:03 | 2022-12-19T01:38:48 | null | NONE | null | null | null | ### Describe the bug

I tried to define a custom configuration with an `input_size` attribute, following the instructions under "Specifying several dataset configurations" in https://huggingface.co/docs/datasets/v1.2.1/add_dataset.html

But when I load the dataset, I get the error `__init__() got an unexpected keyword argument 'input_size'`.

### Steps to reproduce the bug

Following is the code to define the dataset:

class CsvConfig(datasets.BuilderConfig):
    """BuilderConfig for CSV."""
    input_size: int = 2048

class MRF(datasets.ArrowBasedBuilder):
    """Archival MRF data"""
    BUILDER_CONFIG_CLASS = CsvConfig
    VERSION = datasets.Version("1.0.0")
    BUILDER_CONFIGS = [
        CsvConfig(name="default", version=VERSION, description="MRF data", input_size=2048),
    ]

    ...

    def _generate_examples(self):
        input_size = self.config.input_size
        if input_size > 1000:
            numin = 10000
        else:
            numin = 15000

Below is the code to load the dataset:

reader = load_dataset("default", input_size=1024)

### Expected behavior

I hope to pass the "input_size" parameter to MRF datasets, and change "input_size" to any value when loading the datasets.
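
One possible cause, sketched with plain dataclasses only: if the base config class is a dataclass (assumed here for `datasets.BuilderConfig`), a subclass that adds a field without its own `@dataclass` decorator inherits the parent's `__init__`, which does not know the new keyword. `BaseConfig`, `PlainConfig`, `DataclassConfig` and `accepts_input_size` are hypothetical names used for illustration.

```python
from dataclasses import dataclass


@dataclass
class BaseConfig:
    """Stand-in for datasets.BuilderConfig (assumed to be a dataclass)."""
    name: str = "default"
    description: str = ""


class PlainConfig(BaseConfig):
    # No @dataclass: input_size is only a class attribute, and the
    # inherited __init__ does not accept it as a keyword.
    input_size: int = 2048


@dataclass
class DataclassConfig(BaseConfig):
    # With @dataclass, __init__ is regenerated and accepts input_size.
    input_size: int = 2048


def accepts_input_size(config_cls) -> bool:
    """Return True if config_cls can be built with an input_size keyword."""
    try:
        config_cls(name="default", input_size=1024)
    except TypeError:
        return False
    return True
```

Under this assumption, `accepts_input_size(PlainConfig)` fails with the same kind of `TypeError` as reported above, while the decorated variant accepts the keyword.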

### Environment info

- `datasets` version: 2.5.1

- Platform: Linux-4.18.0-305.3.1.el8.x86_64-x86_64-with-glibc2.31

- Python version: 3.9.12

- PyArrow version: 9.0.0

- Pandas version: 1.5.0 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5352/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5352/timeline | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/5351 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5351/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5351/comments | https://api.github.com/repos/huggingface/datasets/issues/5351/events | https://github.com/huggingface/datasets/issues/5351 | 1,490,659,504 | I_kwDODunzps5Y2aiw | 5,351 | Do we need to implement `_prepare_split`? | {

"login": "jmwoloso",

"id": 7530947,

"node_id": "MDQ6VXNlcjc1MzA5NDc=",

"avatar_url": "https://avatars.githubusercontent.com/u/7530947?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/jmwoloso",

"html_url": "https://github.com/jmwoloso",

"followers_url": "https://api.github.com/users/jmwoloso/followers",

"following_url": "https://api.github.com/users/jmwoloso/following{/other_user}",

"gists_url": "https://api.github.com/users/jmwoloso/gists{/gist_id}",

"starred_url": "https://api.github.com/users/jmwoloso/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/jmwoloso/subscriptions",

"organizations_url": "https://api.github.com/users/jmwoloso/orgs",

"repos_url": "https://api.github.com/users/jmwoloso/repos",

"events_url": "https://api.github.com/users/jmwoloso/events{/privacy}",

"received_events_url": "https://api.github.com/users/jmwoloso/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"Hi! `DatasetBuilder` is a parent class for concrete builders: `GeneratorBasedBuilder`, `ArrowBasedBuilder` and `BeamBasedBuilder`. When writing a builder script, these classes are the ones you should inherit from. And since all of them implement `_prepare_split`, you only have to implement the three methods mentioned above.",

"Thanks so much @mariosasko for the fast response! I've been referencing [this page in the docs](https://huggingface.co/docs/datasets/v2.4.0/en/about_dataset_load) because it is pretty comprehensive in terms of what we have to do and I figured since we subclass the `BuilderConfig` the same pattern would hold, but I've also seen the page with those sub-classed builders as well, so that fills in a knowledge gap for me.",

"cc @stevhliu who may have some ideas on how to improve this part of the docs.",

"one more question for my understanding @mariosasko. the requirement of a loading script has always seemed counterintuitive to me. if i have to provide a script with every dataset, what is the point of using `datasets` if we're doing all the work of loading it, I can just do that in my code and skip the datasets integration (this of course discounts other potential benefits around metadata management, etc., my example is just simplest use case though for the sake of discussion).\r\n\r\nso i figured I would implement my own `BuilderConfig` and `DatasetBuilder` to handle that portion of it and not have to make a script. i _thought_ this would result in `datasets` (via `download_and_prepare`) then making me something that I could load using `load_dataset` moving forward.\r\n\r\nConcretely, i envisioned this pattern being possible:\r\n\r\n ```\r\nclass MyBuilderConfig(BuilderConfig):\r\n def __init__(self, name=\"my_named_dataset\", ...):\r\n super().__init__(name, ...)\r\n\r\nclass MyDatasetBuilder(GeneratorBasedBuilder):\r\n BUILDER_CONFIG_CLASS = MyBuilderConfig\r\n ....\r\n\r\nmy_builder = MyDatasetBuilder(...)\r\n\r\n# this doesn't exactly work like I thought; I don't get a dataset back, but NoneType instead\r\n# though I can see it loading the files and it generates the cache, etc.\r\nmy_dataset = my_builder.download_and_prepare()\r\n\r\n# load the dataset in the future by referencing it by name and loading from the cached arrow version\r\nnew_instance_of_my_dataset = load_dataset(\"my_named_dataset\")\r\n```\r\n\r\nI've seen references to the `save_to_disk` method which might be the next step I need in order to load it by name, in which case, that makes sense, then i just need to debug why `download_and_prepare` isn't returning me a dataset, but I feel like I still have a larger conceptual knowledge gap on how to use the library correctly.\r\n\r\nThanks again in advance!",

"> the requirement of a loading script has always seemed counterintuitive to me\r\n\r\nThis is a requirement only for datasets not stored in standard formats such as CSV, JSON, SQL, Parquet, ImageFolder, etc. \r\n\r\n> if i have to provide a script with every dataset, what is the point of using datasets if we're doing all the work of loading it, I can just do that in my code and skip the datasets integration (this of course discounts other potential benefits around metadata management, etc., my example is just simplest use case though for the sake of discussion)\r\n\r\nOur README/documentation lists the main features... \r\n\r\nOne of the main ones is that our library makes it easy to work with datasets larger than RAM (thanks to Arrow and the caching mechanism), and this is not trivial to implement.\r\n\r\nRegarding the step-by-step builder, this is the pattern:\r\n```python\r\nfrom datasets import load_dataset_builder\r\nbuilder = load_dataset_builder(\"path/to/script\") # or direct instantiation with MyDatasetBuilder(...)\r\nbuilder.download_and_prepare()\r\ndset = builder.as_dataset()\r\n```",

"ok, that makes sense. thank you @mariosasko. I realized i'd never looked on the hub at any of the files associated with any datasets. just did that now and it appears that i'll need to have a script regardless _but_ that will just contain my custom config and builder classes, so without realizing it I was already making my script, I just need to wrap that in a file that sits alongside my data (I looked at Glue and realized I was already doing what I thought didn't make sense to have to do, lol).\r\n\r\n`download_and_prepare` isn't returning me a dataset though, but I'll look into that and open another issue if I can't figure it out.",

"`download_and_prepare` downloads and prepares the arrow files. You need to call `as_dataset` on the builder to get the dataset.",

"ok, I think I was assigning the output of `builder.download_and_prepare` but it's an inplace op, so that explains the `NoneType` i was getting back. Now I'm getting:\r\n\r\n```\r\nArrowInvalid Traceback (most recent call last)\r\n<ipython-input-7-3ed50fb87c70> in <module>\r\n----> 1 ds = dataset_builder.as_dataset()\r\n\r\n/databricks/python/lib/python3.8/site-packages/datasets/builder.py in as_dataset(self, split, run_post_process, ignore_verifications, in_memory)\r\n 1020 \r\n 1021 # Create a dataset for each of the given splits\r\n-> 1022 datasets = map_nested(\r\n 1023 partial(\r\n 1024 self._build_single_dataset,\r\n\r\n/databricks/python/lib/python3.8/site-packages/datasets/utils/py_utils.py in map_nested(function, data_struct, dict_only, map_list, map_tuple, map_numpy, num_proc, parallel_min_length, types, disable_tqdm, desc)\r\n 442 num_proc = 1\r\n 443 if num_proc <= 1 or len(iterable) < parallel_min_length:\r\n--> 444 mapped = [\r\n 445 _single_map_nested((function, obj, types, None, True, None))\r\n 446 for obj in logging.tqdm(iterable, disable=disable_tqdm, desc=desc)\r\n\r\n/databricks/python/lib/python3.8/site-packages/datasets/utils/py_utils.py in <listcomp>(.0)\r\n 443 if num_proc <= 1 or len(iterable) < parallel_min_length:\r\n 444 mapped = [\r\n--> 445 _single_map_nested((function, obj, types, None, True, None))\r\n 446 for obj in logging.tqdm(iterable, disable=disable_tqdm, desc=desc)\r\n 447 ]\r\n\r\n/databricks/python/lib/python3.8/site-packages/datasets/utils/py_utils.py in _single_map_nested(args)\r\n 344 # Singleton first to spare some computation\r\n 345 if not isinstance(data_struct, dict) and not isinstance(data_struct, types):\r\n--> 346 return function(data_struct)\r\n 347 \r\n 348 # Reduce logging to keep things readable in multiprocessing with tqdm\r\n\r\n/databricks/python/lib/python3.8/site-packages/datasets/builder.py in _build_single_dataset(self, split, run_post_process, ignore_verifications, in_memory)\r\n 1051 \r\n 1052 # 
Build base dataset\r\n-> 1053 ds = self._as_dataset(\r\n 1054 split=split,\r\n 1055 in_memory=in_memory,\r\n\r\n/databricks/python/lib/python3.8/site-packages/datasets/builder.py in _as_dataset(self, split, in_memory)\r\n 1120 \"\"\"\r\n 1121 cache_dir = self._fs._strip_protocol(self._output_dir)\r\n-> 1122 dataset_kwargs = ArrowReader(cache_dir, self.info).read(\r\n 1123 name=self.name,\r\n 1124 instructions=split,\r\n\r\n/databricks/python/lib/python3.8/site-packages/datasets/arrow_reader.py in read(self, name, instructions, split_infos, in_memory)\r\n 236 msg = f'Instruction \"{instructions}\" corresponds to no data!'\r\n 237 raise ValueError(msg)\r\n--> 238 return self.read_files(files=files, original_instructions=instructions, in_memory=in_memory)\r\n 239 \r\n 240 def read_files(\r\n\r\n/databricks/python/lib/python3.8/site-packages/datasets/arrow_reader.py in read_files(self, files, original_instructions, in_memory)\r\n 257 \"\"\"\r\n 258 # Prepend path to filename\r\n--> 259 pa_table = self._read_files(files, in_memory=in_memory)\r\n 260 # If original_instructions is not None, convert it to a human-readable NamedSplit\r\n 261 if original_instructions is not None:\r\n\r\n/databricks/python/lib/python3.8/site-packages/datasets/arrow_reader.py in _read_files(self, files, in_memory)\r\n 192 f[\"filename\"] = os.path.join(self._path, f[\"filename\"])\r\n 193 for f_dict in files:\r\n--> 194 pa_table: Table = self._get_table_from_filename(f_dict, in_memory=in_memory)\r\n 195 pa_tables.append(pa_table)\r\n 196 pa_tables = [t for t in pa_tables if len(t) > 0]\r\n\r\n/databricks/python/lib/python3.8/site-packages/datasets/arrow_reader.py in _get_table_from_filename(self, filename_skip_take, in_memory)\r\n 327 filename_skip_take[\"take\"] if \"take\" in filename_skip_take else None,\r\n 328 )\r\n--> 329 table = ArrowReader.read_table(filename, in_memory=in_memory)\r\n 330 if take == -1:\r\n 331 take = len(table) - 
skip\r\n\r\n/databricks/python/lib/python3.8/site-packages/datasets/arrow_reader.py in read_table(filename, in_memory)\r\n 348 \"\"\"\r\n 349 table_cls = InMemoryTable if in_memory else MemoryMappedTable\r\n--> 350 return table_cls.from_file(filename)\r\n 351 \r\n 352 \r\n\r\n/databricks/python/lib/python3.8/site-packages/datasets/table.py in from_file(cls, filename, replays)\r\n 1034 @classmethod\r\n 1035 def from_file(cls, filename: str, replays=None):\r\n-> 1036 table = _memory_mapped_arrow_table_from_file(filename)\r\n 1037 table = cls._apply_replays(table, replays)\r\n 1038 return cls(table, filename, replays)\r\n\r\n/databricks/python/lib/python3.8/site-packages/datasets/table.py in _memory_mapped_arrow_table_from_file(filename)\r\n 48 def _memory_mapped_arrow_table_from_file(filename: str) -> pa.Table:\r\n 49 memory_mapped_stream = pa.memory_map(filename)\r\n---> 50 opened_stream = pa.ipc.open_stream(memory_mapped_stream)\r\n 51 pa_table = opened_stream.read_all()\r\n 52 return pa_table\r\n\r\n/databricks/python/lib/python3.8/site-packages/pyarrow/ipc.py in open_stream(source)\r\n 152 reader : RecordBatchStreamReader\r\n 153 \"\"\"\r\n--> 154 return RecordBatchStreamReader(source)\r\n 155 \r\n 156 \r\n\r\n/databricks/python/lib/python3.8/site-packages/pyarrow/ipc.py in __init__(self, source)\r\n 43 \r\n 44 def __init__(self, source):\r\n---> 45 self._open(source)\r\n 46 \r\n 47 \r\n\r\n/databricks/python/lib/python3.8/site-packages/pyarrow/ipc.pxi in pyarrow.lib._RecordBatchStreamReader._open()\r\n\r\n/databricks/python/lib/python3.8/site-packages/pyarrow/error.pxi in pyarrow.lib.pyarrow_internal_check_status()\r\n\r\n/databricks/python/lib/python3.8/site-packages/pyarrow/error.pxi in pyarrow.lib.check_status()\r\n\r\nArrowInvalid: Tried reading schema message, was null or length 0\r\n```\r\n\r\n",

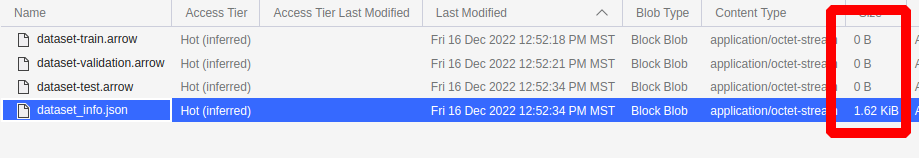

"looks like my arrow files are all empty @mariosasko \r\n\r\n\r\n\r\n\r\ni also see the `incomplete_info.lock` file a level up too. seems like the data isn't being persisted to disk when I call `download_and_prepare`. is there something else i need to do before then, perhaps?",

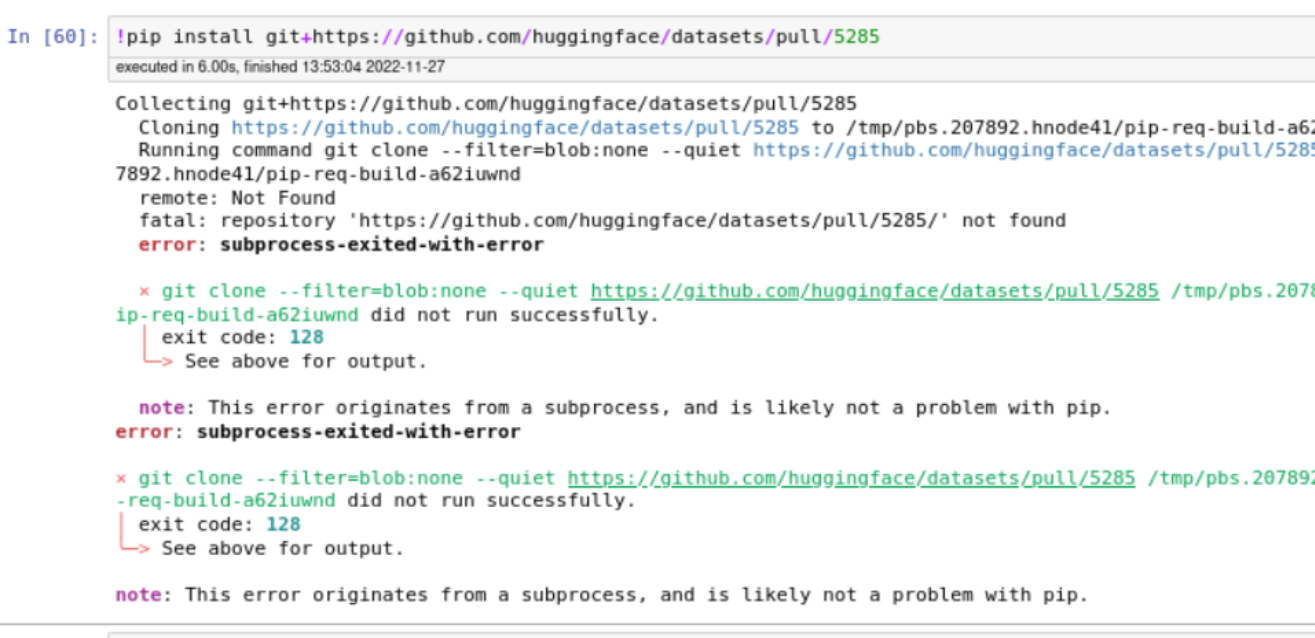

"quick update @mariosasko. i got it working! i had to downgrade to `datasets==2.4.0`. testing other versions now and will let you know the results.",

"I've tested with every version of `datasets>2.4.0` and i get the same error with all of them."

] | 2022-12-12T01:38:54 | 2022-12-20T18:20:57 | 2022-12-12T16:48:56 | NONE | null | null | null | ### Describe the bug

I'm not sure whether this is a bug, a gap in the documentation, or a mistake on my side. I'm subclassing `DatasetBuilder` and getting the following error because the `_prepare_split` method is abstract on the `DatasetBuilder` class (as are the other methods we are required to implement, hence my question):

```

Traceback (most recent call last):

File "/home/jason/source/python/prism_machine_learning/examples/create_hf_datasets.py", line 28, in <module>

dataset_builder.download_and_prepare()

File "/home/jason/.virtualenvs/pml/lib/python3.8/site-packages/datasets/builder.py", line 704, in download_and_prepare

self._download_and_prepare(

File "/home/jason/.virtualenvs/pml/lib/python3.8/site-packages/datasets/builder.py", line 793, in _download_and_prepare

self._prepare_split(split_generator, **prepare_split_kwargs)

File "/home/jason/.virtualenvs/pml/lib/python3.8/site-packages/datasets/builder.py", line 1124, in _prepare_split

raise NotImplementedError()

NotImplementedError

```

### Steps to reproduce the bug

I will share implementation if it turns out that everything should be working (i.e. we only need to implement those 3 methods the docs mention), but I don't want to distract from the original question.

### Expected behavior

I just need to know if there are additional methods we need to implement when subclassing `DatasetBuilder` besides what the documentation specifies -> `_info`, `_split_generators` and `_generate_examples`
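To make the question concrete, here is a toy model of the situation in the traceback, written with plain Python classes rather than the real `datasets` code. The names `ToyBuilder`, `ToyGeneratorBasedBuilder`, `MyDataset` and `DirectSubclass` are hypothetical. The sketch assumes that an intermediate class (in the library this role is played by `GeneratorBasedBuilder`) supplies `_prepare_split` on top of `_generate_examples`, so that only subclasses of the intermediate class can get away with implementing the three documented methods.

```python
class ToyBuilder:
    """Stand-in for an abstract base builder (NOT the real `datasets` class)."""

    def download_and_prepare(self):
        # Template method: drives the hooks that subclasses must provide.
        prepared = {}
        for split in self._split_generators():
            prepared[split] = self._prepare_split(split)
        return prepared

    def _split_generators(self):
        raise NotImplementedError()

    def _prepare_split(self, split):
        # Abstract here, which mirrors the NotImplementedError in the traceback.
        raise NotImplementedError()


class ToyGeneratorBasedBuilder(ToyBuilder):
    """Intermediate class that implements `_prepare_split` for its subclasses."""

    def _prepare_split(self, split):
        # Collect the examples yielded as (key, example) pairs.
        return [example for _key, example in self._generate_examples(split)]

    def _generate_examples(self, split):
        raise NotImplementedError()


class MyDataset(ToyGeneratorBasedBuilder):
    """Only needs the split and example hooks."""

    def _split_generators(self):
        return ["train", "test"]

    def _generate_examples(self, split):
        for i in range(2):
            yield i, {"text": f"{split}-{i}"}


class DirectSubclass(ToyBuilder):
    """Subclasses the abstract base directly and never defines `_prepare_split`."""

    def _split_generators(self):
        return ["train"]

    def _generate_examples(self, split):
        yield 0, {"text": "unused"}
```

In this toy model, `MyDataset().download_and_prepare()` succeeds, while `DirectSubclass().download_and_prepare()` fails with `NotImplementedError`, which is the same shape of failure as above.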

### Environment info

- `datasets` version: 2.4.0

- Platform: Linux-5.4.0-135-generic-x86_64-with-glibc2.2.5

- Python version: 3.8.12

- PyArrow version: 7.0.0

- Pandas version: 1.4.1

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5351/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5351/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/5350 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5350/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5350/comments | https://api.github.com/repos/huggingface/datasets/issues/5350/events | https://github.com/huggingface/datasets/pull/5350 | 1,487,559,904 | PR_kwDODunzps5E8y2E | 5,350 | Clean up Loading methods docstrings | {

"login": "stevhliu",

"id": 59462357,

"node_id": "MDQ6VXNlcjU5NDYyMzU3",

"avatar_url": "https://avatars.githubusercontent.com/u/59462357?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/stevhliu",

"html_url": "https://github.com/stevhliu",

"followers_url": "https://api.github.com/users/stevhliu/followers",

"following_url": "https://api.github.com/users/stevhliu/following{/other_user}",

"gists_url": "https://api.github.com/users/stevhliu/gists{/gist_id}",

"starred_url": "https://api.github.com/users/stevhliu/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/stevhliu/subscriptions",

"organizations_url": "https://api.github.com/users/stevhliu/orgs",

"repos_url": "https://api.github.com/users/stevhliu/repos",

"events_url": "https://api.github.com/users/stevhliu/events{/privacy}",

"received_events_url": "https://api.github.com/users/stevhliu/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | 2022-12-09T22:25:30 | 2022-12-12T17:27:20 | 2022-12-12T17:24:01 | MEMBER | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5350",

"html_url": "https://github.com/huggingface/datasets/pull/5350",

"diff_url": "https://github.com/huggingface/datasets/pull/5350.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5350.patch",

"merged_at": "2022-12-12T17:24:01"

} | Clean up for the docstrings in Loading methods! | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5350/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5350/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5349 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5349/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5349/comments | https://api.github.com/repos/huggingface/datasets/issues/5349/events | https://github.com/huggingface/datasets/pull/5349 | 1,487,396,780 | PR_kwDODunzps5E8N6G | 5,349 | Clean up remaining Main Classes docstrings | {

"login": "stevhliu",

"id": 59462357,

"node_id": "MDQ6VXNlcjU5NDYyMzU3",

"avatar_url": "https://avatars.githubusercontent.com/u/59462357?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/stevhliu",

"html_url": "https://github.com/stevhliu",

"followers_url": "https://api.github.com/users/stevhliu/followers",

"following_url": "https://api.github.com/users/stevhliu/following{/other_user}",

"gists_url": "https://api.github.com/users/stevhliu/gists{/gist_id}",

"starred_url": "https://api.github.com/users/stevhliu/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/stevhliu/subscriptions",

"organizations_url": "https://api.github.com/users/stevhliu/orgs",

"repos_url": "https://api.github.com/users/stevhliu/repos",

"events_url": "https://api.github.com/users/stevhliu/events{/privacy}",

"received_events_url": "https://api.github.com/users/stevhliu/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | 2022-12-09T20:17:15 | 2022-12-12T17:27:17 | 2022-12-12T17:24:13 | MEMBER | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5349",

"html_url": "https://github.com/huggingface/datasets/pull/5349",

"diff_url": "https://github.com/huggingface/datasets/pull/5349.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5349.patch",

"merged_at": "2022-12-12T17:24:13"

} | This PR cleans up the remaining docstrings in Main Classes (`IterableDataset`, `IterableDatasetDict`, and `Features`). | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5349/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5349/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5348 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5348/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5348/comments | https://api.github.com/repos/huggingface/datasets/issues/5348/events | https://github.com/huggingface/datasets/issues/5348 | 1,486,975,626 | I_kwDODunzps5YoXKK | 5,348 | The data downloaded in the download folder of the cache does not respect `umask` | {

"login": "SaulLu",

"id": 55560583,

"node_id": "MDQ6VXNlcjU1NTYwNTgz",

"avatar_url": "https://avatars.githubusercontent.com/u/55560583?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/SaulLu",

"html_url": "https://github.com/SaulLu",

"followers_url": "https://api.github.com/users/SaulLu/followers",

"following_url": "https://api.github.com/users/SaulLu/following{/other_user}",

"gists_url": "https://api.github.com/users/SaulLu/gists{/gist_id}",

"starred_url": "https://api.github.com/users/SaulLu/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/SaulLu/subscriptions",

"organizations_url": "https://api.github.com/users/SaulLu/orgs",

"repos_url": "https://api.github.com/users/SaulLu/repos",

"events_url": "https://api.github.com/users/SaulLu/events{/privacy}",

"received_events_url": "https://api.github.com/users/SaulLu/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [

"note, that `datasets` already did some of that umask fixing in the past and also at the hub - the recent work on the hub about the same: https://github.com/huggingface/huggingface_hub/pull/1220\r\n\r\nAlso I noticed that each file has a .json counterpart and the latter always has the correct perms:\r\n\r\n```\r\n-rw------- 1 uue59kq cnw 173M Dec 9 01:37 537596e64721e2ae3d98785b91d30fda0360c196a8224e29658ad629e7303a4d\r\n-rw-rw---- 1 uue59kq cnw 101 Dec 9 01:37 537596e64721e2ae3d98785b91d30fda0360c196a8224e29658ad629e7303a4d.json\r\n```\r\n\r\nso perhaps cheating is possible and syncing the perms between the 2 will do the trick."

] | 2022-12-09T15:46:27 | 2022-12-09T17:21:26 | null | CONTRIBUTOR | null | null | null | ### Describe the bug

For a project on a cluster, several of us share the same cache for the `datasets` library, and we have a problem with the permissions on the data downloaded into the cache.

Indeed, it seems that the data is downloaded by giving read and write permissions only to the user launching the command (and no permissions to the group). In our case, those permissions don't respect the `umask` of this user, which was `0007`.
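As a rough illustration of what "respecting the `umask`" means, here is a minimal POSIX-only sketch using just the standard library. It compares a file created with `open()` (which honours the `umask`) with a file created by `tempfile.mkstemp()` (which is always owner-only), then applies a possible correction. Whether the library's download path actually goes through such a temporary file is an assumption on my part, and the helper names `file_mode` and `demo_umask_modes` are hypothetical.

```python
import os
import stat
import tempfile


def file_mode(path):
    """Return only the permission bits of `path` (e.g. 0o660)."""
    return stat.S_IMODE(os.stat(path).st_mode)


def demo_umask_modes(umask=0o007):
    """Return (mode via open(), mode via mkstemp(), mode after chmod fix)."""
    previous = os.umask(umask)  # set the umask for this process, remember the old one
    try:
        with tempfile.TemporaryDirectory() as workdir:
            # A regular file: created with 0o666 and then masked by the umask.
            plain = os.path.join(workdir, "plain.bin")
            with open(plain, "wb") as f:
                f.write(b"data")

            # A temporary file: mkstemp() creates it readable and writable
            # by the owner only, whatever the umask is.
            fd, tmp = tempfile.mkstemp(dir=workdir)
            os.close(fd)

            plain_mode = file_mode(plain)
            tmp_mode = file_mode(tmp)

            # Possible correction (an assumption, not the library's code):
            # reapply the permissions a regular file would have received.
            os.chmod(tmp, 0o666 & ~umask)
            fixed_mode = file_mode(tmp)
            return plain_mode, tmp_mode, fixed_mode
    finally:
        os.umask(previous)  # always restore the original umask
```

With a `umask` of `0007`, the regular file ends up group-readable and group-writable while the temporary file does not, which matches the symptom described here.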

Traceback:

```

Using custom data configuration default

Downloading and preparing dataset text_caps/default to /gpfswork/rech/cnw/commun/datasets/HuggingFaceM4___text_caps/default/1.0.0/2b9ad220cd90fcf2bfb454645bc54364711b83d6d39401ffdaf8cc40882e9141...

Downloading data files: 100%|████████████████████| 3/3 [00:00<00:00, 921.62it/s]

---------------------------------------------------------------------------

PermissionError Traceback (most recent call last)

Cell In [3], line 1

----> 1 ds = load_dataset(dataset_name)

File /gpfswork/rech/cnw/commun/conda/lucile-m4_3/lib/python3.8/site-packages/datasets/load.py:1746, in load_dataset(path, name, data_dir, data_files, split, cache_dir, features, download_config, download_mode, ignore_verifications, keep_in_memory, save_infos, revision, use_auth_token, task, streaming, **config_kwargs)

1743 try_from_hf_gcs = path not in _PACKAGED_DATASETS_MODULES

1745 # Download and prepare data

-> 1746 builder_instance.download_and_prepare(

1747 download_config=download_config,

1748 download_mode=download_mode,

1749 ignore_verifications=ignore_verifications,

1750 try_from_hf_gcs=try_from_hf_gcs,

1751 use_auth_token=use_auth_token,

1752 )

1754 # Build dataset for splits

1755 keep_in_memory = (

1756 keep_in_memory if keep_in_memory is not None else is_small_dataset(builder_instance.info.dataset_size)

1757 )

File /gpfswork/rech/cnw/commun/conda/lucile-m4_3/lib/python3.8/site-packages/datasets/builder.py:704, in DatasetBuilder.download_and_prepare(self, download_config, download_mode, ignore_verifications, try_from_hf_gcs, dl_manager, base_path, use_auth_token, **download_and_prepare_kwargs)

702 logger.warning("HF google storage unreachable. Downloading and preparing it from source")

703 if not downloaded_from_gcs:

--> 704 self._download_and_prepare(

705 dl_manager=dl_manager, verify_infos=verify_infos, **download_and_prepare_kwargs

706 )

707 # Sync info

708 self.info.dataset_size = sum(split.num_bytes for split in self.info.splits.values())

File /gpfswork/rech/cnw/commun/conda/lucile-m4_3/lib/python3.8/site-packages/datasets/builder.py:1227, in GeneratorBasedBuilder._download_and_prepare(self, dl_manager, verify_infos)

1226 def _download_and_prepare(self, dl_manager, verify_infos):

-> 1227 super()._download_and_prepare(dl_manager, verify_infos, check_duplicate_keys=verify_infos)

File /gpfswork/rech/cnw/commun/conda/lucile-m4_3/lib/python3.8/site-packages/datasets/builder.py:771, in DatasetBuilder._download_and_prepare(self, dl_manager, verify_infos, **prepare_split_kwargs)

769 split_dict = SplitDict(dataset_name=self.name)

770 split_generators_kwargs = self._make_split_generators_kwargs(prepare_split_kwargs)

--> 771 split_generators = self._split_generators(dl_manager, **split_generators_kwargs)

773 # Checksums verification

774 if verify_infos and dl_manager.record_checksums:

File /gpfswork/rech/cnw/commun/modules/datasets_modules/datasets/HuggingFaceM4--TextCaps/2b9ad220cd90fcf2bfb454645bc54364711b83d6d39401ffdaf8cc40882e9141/TextCaps.py:125, in TextCapsDataset._split_generators(self, dl_manager)

123 def _split_generators(self, dl_manager):

124 # urls = _URLS[self.config.name] # TODO later

--> 125 data_dir = dl_manager.download_and_extract(_URLS)

126 gen_kwargs = {

127 split_name: {

128 f"{dir_name}_path": Path(data_dir[dir_name][split_name])

(...)

133 for split_name in ["train", "val", "test"]

134 }

136 for split_name in ["train", "val", "test"]:

File /gpfswork/rech/cnw/commun/conda/lucile-m4_3/lib/python3.8/site-packages/datasets/download/download_manager.py:431, in DownloadManager.download_and_extract(self, url_or_urls)

415 def download_and_extract(self, url_or_urls):

416 """Download and extract given url_or_urls.

417

418 Is roughly equivalent to:

(...)

429 extracted_path(s): `str`, extracted paths of given URL(s).

430 """

--> 431 return self.extract(self.download(url_or_urls))

File /gpfswork/rech/cnw/commun/conda/lucile-m4_3/lib/python3.8/site-packages/datasets/download/download_manager.py:324, in DownloadManager.download(self, url_or_urls)

321 self.downloaded_paths.update(dict(zip(url_or_urls.flatten(), downloaded_path_or_paths.flatten())))

323 start_time = datetime.now()

--> 324 self._record_sizes_checksums(url_or_urls, downloaded_path_or_paths)

325 duration = datetime.now() - start_time

326 logger.info(f"Checksum Computation took {duration.total_seconds() // 60} min")

File /gpfswork/rech/cnw/commun/conda/lucile-m4_3/lib/python3.8/site-packages/datasets/download/download_manager.py:229, in DownloadManager._record_sizes_checksums(self, url_or_urls, downloaded_path_or_paths)

226 """Record size/checksum of downloaded files."""

227 for url, path in zip(url_or_urls.flatten(), downloaded_path_or_paths.flatten()):

228 # call str to support PathLike objects

--> 229 self._recorded_sizes_checksums[str(url)] = get_size_checksum_dict(

230 path, record_checksum=self.record_checksums

231 )

File /gpfswork/rech/cnw/commun/conda/lucile-m4_3/lib/python3.8/site-packages/datasets/utils/info_utils.py:82, in get_size_checksum_dict(path, record_checksum)

80 if record_checksum:

81 m = sha256()

---> 82 with open(path, "rb") as f:

83 for chunk in iter(lambda: f.read(1 << 20), b""):

84 m.update(chunk)

PermissionError: [Errno 13] Permission denied: '/gpfswork/rech/cnw/commun/datasets/downloads/1e6aa6d23190c30885194fabb193dce3874d902d7636b66315ee8aaa584e80d6'

```

### Steps to reproduce the bug

I think the following will reproduce the bug.

Given 2 users belonging to the same group with `umask` set to `0007`

- first run with User 1:

```python

from datasets import load_dataset

ds_name = "HuggingFaceM4/VQAv2"

ds = load_dataset(ds_name)

```

- then run with User 2:

```python

from datasets import load_dataset

ds_name = "HuggingFaceM4/TextCaps"

ds = load_dataset(ds_name)

```

### Expected behavior

No `PermissionError`

### Environment info

- `datasets` version: 2.4.0

- Platform: Linux-4.18.0-305.65.1.el8_4.x86_64-x86_64-with-glibc2.17

- Python version: 3.8.13

- PyArrow version: 7.0.0

- Pandas version: 1.4.2

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5348/reactions",

"total_count": 1,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 1,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5348/timeline | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/5347 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5347/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5347/comments | https://api.github.com/repos/huggingface/datasets/issues/5347/events | https://github.com/huggingface/datasets/pull/5347 | 1,486,920,261 | PR_kwDODunzps5E6jb1 | 5,347 | Force soundfile to return float32 instead of the default float64 | {

"login": "qmeeus",

"id": 25608944,

"node_id": "MDQ6VXNlcjI1NjA4OTQ0",

"avatar_url": "https://avatars.githubusercontent.com/u/25608944?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/qmeeus",

"html_url": "https://github.com/qmeeus",

"followers_url": "https://api.github.com/users/qmeeus/followers",

"following_url": "https://api.github.com/users/qmeeus/following{/other_user}",

"gists_url": "https://api.github.com/users/qmeeus/gists{/gist_id}",

"starred_url": "https://api.github.com/users/qmeeus/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/qmeeus/subscriptions",

"organizations_url": "https://api.github.com/users/qmeeus/orgs",

"repos_url": "https://api.github.com/users/qmeeus/repos",

"events_url": "https://api.github.com/users/qmeeus/events{/privacy}",

"received_events_url": "https://api.github.com/users/qmeeus/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [

"cc @polinaeterna",

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_5347). All of your documentation changes will be reflected on that endpoint.",

"Cool ! Feel free to add a comment in the code to explain that and we can merge :)",

"I'm not sure if this is a good change since we plan to get rid of `torchaudio` in the next couple of months...",

"What do you think @polinaeterna @patrickvonplaten ? Models are usually using float32 (e.g. Wav2Vec2 in `transformers`) IIRC",

"IMO we can safely assume that float32 is always good enough when using audio models in inference or training. Nevertheless there might be use cases for audio datasets in the future where float64 is needed. \r\n\r\n=> I would by default always cast to float32, but then possibly allow the user to cast to float64 ",

"> I'm not sure if this is a good change since we plan to get rid of torchaudio in the next couple of months...\r\n\r\n@mariosasko I agree but who knows how long we will have to wait until we are really able to do so (https://github.com/bastibe/libsndfile-binaries/pull/17 is a draft), so as @patrickvonplaten is okay with float32, I'd merge.\r\n\r\n\r\n",

"@polinaeterna Can you comment on the linked PR to see why it's still a draft? Maybe we can help somehow to get this merged finally.\r\n\r\nI think it's weird to align `soundfile` with `torchaudio` when the latter is only used for MP3 (and prob for not much longer). "

] | 2022-12-09T15:10:24 | 2023-01-17T16:12:49 | null | NONE | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5347",

"html_url": "https://github.com/huggingface/datasets/pull/5347",

"diff_url": "https://github.com/huggingface/datasets/pull/5347.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5347.patch",

"merged_at": null

} | (Fixes issue #5345) | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5347/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5347/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5346 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5346/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5346/comments | https://api.github.com/repos/huggingface/datasets/issues/5346/events | https://github.com/huggingface/datasets/issues/5346 | 1,486,884,983 | I_kwDODunzps5YoBB3 | 5,346 | [Quick poll] Give your opinion on the future of the Hugging Face Open Source ecosystem! | {

"login": "LysandreJik",

"id": 30755778,

"node_id": "MDQ6VXNlcjMwNzU1Nzc4",

"avatar_url": "https://avatars.githubusercontent.com/u/30755778?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/LysandreJik",

"html_url": "https://github.com/LysandreJik",

"followers_url": "https://api.github.com/users/LysandreJik/followers",

"following_url": "https://api.github.com/users/LysandreJik/following{/other_user}",

"gists_url": "https://api.github.com/users/LysandreJik/gists{/gist_id}",

"starred_url": "https://api.github.com/users/LysandreJik/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/LysandreJik/subscriptions",

"organizations_url": "https://api.github.com/users/LysandreJik/orgs",

"repos_url": "https://api.github.com/users/LysandreJik/repos",

"events_url": "https://api.github.com/users/LysandreJik/events{/privacy}",

"received_events_url": "https://api.github.com/users/LysandreJik/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"As the survey is finished, can we close this issue, @LysandreJik ?",

"Yes! I'll post a public summary on the forums shortly.",

"Is the summary available? I would be interested in reading your findings."

] | 2022-12-09T14:48:02 | 2023-06-02T20:24:44 | 2023-01-25T19:35:40 | MEMBER | null | null | null | Thanks to all of you, Datasets is just about to pass 15k stars!

Since the last survey, a lot has happened: the [diffusers](https://github.com/huggingface/diffusers), [evaluate](https://github.com/huggingface/evaluate) and [skops](https://github.com/skops-dev/skops) libraries were born. `timm` joined the Hugging Face ecosystem. There were 25 new releases of `transformers`, 21 new releases of `datasets`, 13 new releases of `accelerate`.

If you have a couple of minutes and want to participate in shaping the future of the ecosystem, please share your thoughts:

[**hf.co/oss-survey**](https://docs.google.com/forms/d/e/1FAIpQLSf4xFQKtpjr6I_l7OfNofqiR8s-WG6tcNbkchDJJf5gYD72zQ/viewform?usp=sf_link)

(please reply in the above feedback form rather than to this thread)

Thank you all on behalf of the HuggingFace team! 🤗 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5346/reactions",

"total_count": 3,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 3,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5346/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/5345 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5345/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5345/comments | https://api.github.com/repos/huggingface/datasets/issues/5345/events | https://github.com/huggingface/datasets/issues/5345 | 1,486,555,384 | I_kwDODunzps5Ymwj4 | 5,345 | Wrong dtype for array in audio features | {

"login": "qmeeus",

"id": 25608944,

"node_id": "MDQ6VXNlcjI1NjA4OTQ0",

"avatar_url": "https://avatars.githubusercontent.com/u/25608944?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/qmeeus",

"html_url": "https://github.com/qmeeus",

"followers_url": "https://api.github.com/users/qmeeus/followers",

"following_url": "https://api.github.com/users/qmeeus/following{/other_user}",

"gists_url": "https://api.github.com/users/qmeeus/gists{/gist_id}",

"starred_url": "https://api.github.com/users/qmeeus/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/qmeeus/subscriptions",

"organizations_url": "https://api.github.com/users/qmeeus/orgs",

"repos_url": "https://api.github.com/users/qmeeus/repos",

"events_url": "https://api.github.com/users/qmeeus/events{/privacy}",

"received_events_url": "https://api.github.com/users/qmeeus/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [

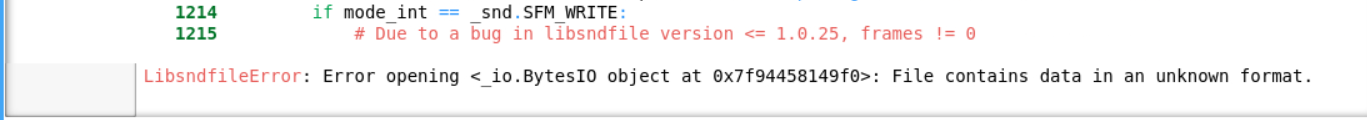

"After some more investigation, this is due to [this line of code](https://github.com/huggingface/datasets/blob/main/src/datasets/features/audio.py#L279). The function `sf.read(file)` should be updated to `sf.read(file, dtype=\"float32\")`\r\n\r\nIndeed, the default value in soundfile is `float64` ([see here](https://pysoundfile.readthedocs.io/en/latest/#soundfile.read)). \r\n",

"@qmeeus I agree, decoding of different audio formats should return the same dtypes indeed!\r\n\r\nBut note that here you are concatenating datasets with different sampling rates: 48000 for CommonVoice and 16000 for Voxpopuli. So you should cast them to the same sampling rate value before interleaving, for example:\r\n```\r\ncv = cv.cast_column(\"audio\", Audio(sampling_rate=16000))\r\n```\r\notherwise you would get the same error because features of the same column (\"audio\") are not the same.\r\n\r\nAlso, the error you get is unexpected. Could you please confirm that you use the latest main version of the `datasets`? We had an issue that could lead to an error like this after using `rename_column` method, but it was fixed in https://github.com/huggingface/datasets/pull/5287 ",

"Hi Polina,\r\nSorry for the late answer\r\nIt is possible that the issue was due to a bug that is now fixed. I installed an editable version of datasets from github, but I don't recall whether I had updated it at the time of the issue. My research led me to other directions so I did not follow through on the interleave datasets.\r\n"

] | 2022-12-09T11:05:11 | 2023-02-10T14:39:28 | null | NONE | null | null | null | ### Describe the bug

When concatenating/interleaving different datasets, I stumble into an error because the features can't be aligned. After some investigation, I understood that the audio arrays had different dtypes, namely `float32` and `float64`. Consequently, the datasets cannot be merged.

### Steps to reproduce the bug

For example, for `facebook/voxpopuli` and `mozilla-foundation/common_voice_11_0`:

```python

from datasets import load_dataset, interleave_datasets

covost = load_dataset("mozilla-foundation/common_voice_11_0", "en", split="train", streaming=True)

voxpopuli = load_dataset("facebook/voxpopuli", "nl", split="train", streaming=True)

sample_cv, = covost.take(1)

sample_vp, = voxpopuli.take(1)

assert sample_cv["audio"]["array"].dtype == sample_vp["audio"]["array"].dtype

# Fails

dataset = interleave_datasets([covost, voxpopuli])

# ValueError: The features can't be aligned because the key audio of features {'audio_id': Value(dtype='string', id=None), 'language': Value(dtype='int64', id=None), 'audio': {'array': Sequence(feature=Value(dtype='float64', id=None), length=-1, id=None), 'path': Value(dtype='string', id=None), 'sampling_rate': Value(dtype='int64', id=None)}, 'normalized_text': Value(dtype='string', id=None), 'gender': Value(dtype='string', id=None), 'speaker_id': Value(dtype='string', id=None), 'is_gold_transcript': Value(dtype='bool', id=None), 'accent': Value(dtype='string', id=None), 'sentence': Value(dtype='string', id=None)} has unexpected type - {'array': Sequence(feature=Value(dtype='float64', id=None), length=-1, id=None), 'path': Value(dtype='string', id=None), 'sampling_rate': Value(dtype='int64', id=None)} (expected either Audio(sampling_rate=16000, mono=True, decode=True, id=None) or Value("null").

```

### Expected behavior

The audio should be loaded into arrays with a single, consistent dtype (I guess `float32`)

### Environment info

```

- `datasets` version: 2.7.1.dev0

- Platform: Linux-4.18.0-425.3.1.el8.x86_64-x86_64-with-glibc2.28

- Python version: 3.9.15

- PyArrow version: 10.0.1

- Pandas version: 1.5.2

``` | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5345/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5345/timeline | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/5344 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5344/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5344/comments | https://api.github.com/repos/huggingface/datasets/issues/5344/events | https://github.com/huggingface/datasets/pull/5344 | 1,485,628,319 | PR_kwDODunzps5E2BPN | 5,344 | Clean up Dataset and DatasetDict | {

"login": "stevhliu",

"id": 59462357,

"node_id": "MDQ6VXNlcjU5NDYyMzU3",

"avatar_url": "https://avatars.githubusercontent.com/u/59462357?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/stevhliu",

"html_url": "https://github.com/stevhliu",

"followers_url": "https://api.github.com/users/stevhliu/followers",

"following_url": "https://api.github.com/users/stevhliu/following{/other_user}",

"gists_url": "https://api.github.com/users/stevhliu/gists{/gist_id}",

"starred_url": "https://api.github.com/users/stevhliu/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/stevhliu/subscriptions",

"organizations_url": "https://api.github.com/users/stevhliu/orgs",

"repos_url": "https://api.github.com/users/stevhliu/repos",

"events_url": "https://api.github.com/users/stevhliu/events{/privacy}",

"received_events_url": "https://api.github.com/users/stevhliu/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | 2022-12-09T00:02:08 | 2022-12-13T00:56:07 | 2022-12-13T00:53:02 | MEMBER | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5344",

"html_url": "https://github.com/huggingface/datasets/pull/5344",

"diff_url": "https://github.com/huggingface/datasets/pull/5344.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5344.patch",

"merged_at": "2022-12-13T00:53:01"

} | This PR cleans up the docstrings for the other half of the methods in `Dataset` and finishes `DatasetDict`. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5344/reactions",

"total_count": 1,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 1,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5344/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5343 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5343/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5343/comments | https://api.github.com/repos/huggingface/datasets/issues/5343/events | https://github.com/huggingface/datasets/issues/5343 | 1,485,297,823 | I_kwDODunzps5Yh9if | 5,343 | T5 for Q&A produces truncated sentence | {

"login": "junyongyou",

"id": 13484072,

"node_id": "MDQ6VXNlcjEzNDg0MDcy",

"avatar_url": "https://avatars.githubusercontent.com/u/13484072?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/junyongyou",

"html_url": "https://github.com/junyongyou",

"followers_url": "https://api.github.com/users/junyongyou/followers",

"following_url": "https://api.github.com/users/junyongyou/following{/other_user}",

"gists_url": "https://api.github.com/users/junyongyou/gists{/gist_id}",

"starred_url": "https://api.github.com/users/junyongyou/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/junyongyou/subscriptions",

"organizations_url": "https://api.github.com/users/junyongyou/orgs",

"repos_url": "https://api.github.com/users/junyongyou/repos",

"events_url": "https://api.github.com/users/junyongyou/events{/privacy}",

"received_events_url": "https://api.github.com/users/junyongyou/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [] | 2022-12-08T19:48:46 | 2022-12-08T19:57:17 | 2022-12-08T19:57:17 | NONE | null | null | null | Dear all, I am fine-tuning T5 for a Q&A task using the MedQuAD ([GitHub - abachaa/MedQuAD: Medical Question Answering Dataset of 47,457 QA pairs created from 12 NIH websites](https://github.com/abachaa/MedQuAD)) dataset. The dataset contains many long answers with thousands of words. I used pytorch_lightning to train the T5-large model. I have two questions.

For example, I set max_length, max_input_length, and max_output_length all to 128.

How should I deal with those long answers? I just left them as is and let the T5Tokenizer handle them automatically. I would assume the tokenizer simply truncates an answer at the 128th word (or 127th). Would it be possible to manually split an answer into parts of 128 words each, so that each sub-answer serves as a separate answer to the same question?
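The manual splitting idea could be sketched roughly as follows. This is only an illustration: `split_answer` is a hypothetical helper, not part of any library, and it counts whitespace-separated words, whereas the tokenizer counts subword tokens, so a 128-word chunk may still be longer than 128 tokens.

```python
def split_answer(question, answer, max_words=128):
    """Split `answer` into consecutive chunks of at most `max_words` words
    and pair every chunk with the same question.

    Hypothetical helper for illustration; it splits on whitespace,
    not on tokenizer tokens.
    """
    words = answer.split()
    chunks = [words[i:i + max_words] for i in range(0, len(words), max_words)]
    return [(question, " ".join(chunk)) for chunk in chunks]


# Usage: a 300-word answer becomes three (question, sub-answer) pairs.
pairs = split_answer("What is glaucoma?", "word " * 300, max_words=128)
print([len(sub_answer.split()) for _, sub_answer in pairs])  # [128, 128, 44]
```

Whether training on such sub-answers actually helps is an open question, since every chunk after the first starts mid-answer.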

My other question is that I get incomplete (truncated) answers at inference time with the fine-tuned model, even when the predicted answer is shorter than 128 words. I found a message posted 2 years ago saying that one should add `</s>` at the end of texts when fine-tuning T5. I followed that, but then got a warning that duplicated `</s>` tokens were found. My assumption is that the tokenizer truncates the answer text, so `</s>` is missing from the truncated answer and the end token is never produced in the predicted answer. However, I am not sure. Can anybody point out how to address this issue?
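If the truncation hypothesis above is right, one possible workaround is to truncate the target ids manually and always close them with exactly one end token. The sketch below works on plain lists of ids; `truncate_with_eos` is a hypothetical helper, and the value `1` is used only as a placeholder for the end-token id, which in practice should be read from `tokenizer.eos_token_id`.

```python
def truncate_with_eos(token_ids, max_length, eos_id):
    """Return at most `max_length` ids that end with exactly one `eos_id`.

    Hypothetical helper for illustration; assumes `max_length >= 1`.
    """
    # Drop any end token already present so it is not duplicated.
    ids = [t for t in token_ids if t != eos_id]
    # Reserve the last position for the end token.
    ids = ids[:max_length - 1]
    return ids + [eos_id]


# Usage: a long target is cut to 4 ids and still ends with the end token.
print(truncate_with_eos([5, 6, 7, 8, 9, 1], max_length=4, eos_id=1))  # [5, 6, 7, 1]
print(truncate_with_eos([5, 6], max_length=4, eos_id=1))              # [5, 6, 1]
```

This does not confirm that the missing end token is the cause of the truncated predictions; it only removes that possibility from the training targets.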

Any suggestions are highly appreciated.

Below is a code snippet.

`

import pytorch_lightning as pl
from torch.utils.data import DataLoader
import torch
import numpy as np
import time
from pathlib import Path
from transformers import (
    Adafactor,
    T5ForConditionalGeneration,
    T5Tokenizer,
    get_linear_schedule_with_warmup
)
from torch.utils.data import RandomSampler
from question_answering.utils import *


class T5FineTuner(pl.LightningModule):
    def __init__(self, hyparams):
        super(T5FineTuner, self).__init__()
        self.hyparams = hyparams
        self.model = T5ForConditionalGeneration.from_pretrained(hyparams.model_name_or_path)
        self.tokenizer = T5Tokenizer.from_pretrained(hyparams.tokenizer_name_or_path)

        # Optionally freeze the embeddings and/or the encoder.
        if self.hyparams.freeze_embeds:
            self.freeze_embeds()
        if self.hyparams.freeze_encoder:
            self.freeze_params(self.model.get_encoder())
            # assert_all_frozen()

        self.step_count = 0
        self.output_dir = Path(self.hyparams.output_dir)

        n_observations_per_split = {
            'train': self.hyparams.n_train,
            'validation': self.hyparams.n_val,
            'test': self.hyparams.n_test
        }
        # A negative count means "use all observations" for that split.
        self.n_obs = {k: v if v >= 0 else None for k, v in n_observations_per_split.items()}
        self.em_score_list = []
        self.subset_score_list = []

        data_folder = r'C:\Datasets\MedQuAD-master'
        self.train_data, self.val_data, self.test_data = load_medqa_data(data_folder)

    def freeze_params(self, model):
        for param in model.parameters():
            param.requires_grad = False

    def freeze_embeds(self):
        try:
            self.freeze_params(self.model.model.shared)
            for d in [self.model.model.encoder, self.model.model.decoder]:
                self.freeze_params(d.embed_positions)
                self.freeze_params(d.embed_tokens)
        except AttributeError:
            self.freeze_params(self.model.shared)
            for d in [self.model.encoder, self.model.decoder]:
                self.freeze_params(d.embed_tokens)

    def lmap(self, f, x):
        return list(map(f, x))

    def is_logger(self):
        return self.trainer.proc_rank <= 0

    def forward(self, input_ids, attention_mask=None, decoder_input_ids=None, decoder_attention_mask=None, labels=None):
        return self.model(
            input_ids,
            attention_mask=attention_mask,
            decoder_input_ids=decoder_input_ids,
            decoder_attention_mask=decoder_attention_mask,
            labels=labels
        )

    def _step(self, batch):
        # Clone so that the batch's target ids are not modified in place.
        labels = batch['target_ids'].clone()
        # Padding positions are ignored by the loss when set to -100.
        labels[labels[:, :] == self.tokenizer.pad_token_id] = -100
        outputs = self(
            input_ids=batch['source_ids'],
            attention_mask=batch['source_mask'],
            labels=labels,
            decoder_attention_mask=batch['target_mask']
        )
        loss = outputs[0]
        return loss

    def ids_to_clean_text(self, generated_ids):
        gen_text = self.tokenizer.batch_decode(generated_ids, skip_special_tokens=True, clean_up_tokenization_spaces=True)
        return self.lmap(str.strip, gen_text)

    def _generative_step(self, batch):
        t0 = time.time()
        generated_ids = self.model.generate(
            batch["source_ids"],
            attention_mask=batch["source_mask"],
            use_cache=True,
            decoder_attention_mask=batch['target_mask'],
            max_length=128,
            num_beams=2,
            early_stopping=True
        )
        preds = self.ids_to_clean_text(generated_ids)
        targets = self.ids_to_clean_text(batch["target_ids"])
        # Average generation time per example in the batch.
        gen_time = (time.time() - t0) / batch["source_ids"].shape[0]

        loss = self._step(batch)
        base_metrics = {'val_loss': loss}
        summ_len = np.mean(self.lmap(len, generated_ids))
        base_metrics.update(gen_time=gen_time, gen_len=summ_len, preds=preds, target=targets)

        em_score, subset_match_score = calculate_scores(preds, targets)
        self.em_score_list.append(em_score)
        self.subset_score_list.append(subset_match_score)
        em_score = torch.tensor(em_score, dtype=torch.float32)
        subset_match_score = torch.tensor(subset_match_score, dtype=torch.float32)
        base_metrics.update(em_score=em_score, subset_match_score=subset_match_score)

        # rouge_results = self.rouge_metric.compute()
        # rouge_dict = self.parse_score(rouge_results)
        return base_metrics

    def training_step(self, batch, batch_idx):
        loss = self._step(batch)
        tensorboard_logs = {'train_loss': loss}
        return {'loss': loss, 'log': tensorboard_logs}

    def training_epoch_end(self, outputs):
        avg_train_loss = torch.stack([x['loss'] for x in outputs]).mean()
        tensorboard_logs = {'avg_train_loss': avg_train_loss}
        # return {'avg_train_loss': avg_train_loss, 'log': tensorboard_logs, 'progress_bar': tensorboard_logs}

    def validation_step(self, batch, batch_idx):
        return self._generative_step(batch)

    def validation_epoch_end(self, outputs):
        avg_loss = torch.stack([x['val_loss'] for x in outputs]).mean()
        tensorboard_logs = {'val_loss': avg_loss}

        if len(self.em_score_list) <= 2:
            average_em_score = sum(self.em_score_list) / len(self.em_score_list)
            average_subset_match_score = sum(self.subset_score_list) / len(self.subset_score_list)
        else:
            # Average over the two most recent scores.
            latest_em_score = self.em_score_list[-2:]
            latest_subset_score = self.subset_score_list[-2:]
            average_em_score = sum(latest_em_score) / len(latest_em_score)
            average_subset_match_score = sum(latest_subset_score) / len(latest_subset_score)

        average_em_score = torch.tensor(average_em_score, dtype=torch.float32)
        average_subset_match_score = torch.tensor(average_subset_match_score, dtype=torch.float32)
        tensorboard_logs.update(em_score=average_em_score, subset_match_score=average_subset_match_score)

        self.target_gen = []
        self.prediction_gen = []
        return {
            'avg_val_loss': avg_loss,
            'em_score': average_em_score,
            'subset_match_score': average_subset_match_score,
            'log': tensorboard_logs,
            'progress_bar': tensorboard_logs
        }

    def configure_optimizers(self):
        model = self.model
        # Biases and LayerNorm weights are excluded from weight decay.
        no_decay = ["bias", "LayerNorm.weight"]
        optimizer_grouped_parameters = [
            {
                "params": [p for n, p in model.named_parameters() if not any(nd in n for nd in no_decay)],
                "weight_decay": self.hyparams.weight_decay,
            },
            {
                "params": [p for n, p in model.named_parameters() if any(nd in n for nd in no_decay)],
                "weight_decay": 0.0,
            },
        ]