| modelId | author | last_modified | downloads | likes | library_name | tags | pipeline_tag | createdAt | card |

|---|---|---|---|---|---|---|---|---|---|

katuni4ka/tiny-random-chatglm2 | katuni4ka | "2024-06-05T11:16:11Z" | 39,571 | 0 | transformers | [

"transformers",

"tensorboard",

"safetensors",

"chatglm",

"feature-extraction",

"generated_from_trainer",

"custom_code",

"base_model:katuni4ka/tiny-random-chatglm2",

"region:us"

] | feature-extraction | "2024-02-29T15:07:35Z" | ---

base_model: katuni4ka/tiny-random-chatglm2

tags:

- generated_from_trainer

model-index:

- name: tiny-random-chatglm2

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# tiny-random-chatglm2

This model is a fine-tuned version of [katuni4ka/tiny-random-chatglm2](https://huggingface.co/katuni4ka/tiny-random-chatglm2) on an unknown dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0005

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- gradient_accumulation_steps: 8

- total_train_batch_size: 256

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- lr_scheduler_warmup_steps: 1000

- num_epochs: 1
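The list above implies two small calculations: how `total_train_batch_size` follows from the per-device batch size and gradient accumulation, and what a cosine schedule with linear warmup looks like. The sketch below is illustrative only. It assumes a single device (consistent with 32 × 8 = 256), a linear warmup from zero, and a decay to zero over half a cosine cycle. The function names and the `total_steps` value are hypothetical, since the card does not state the number of training steps.

```python
import math

def effective_batch_size(per_device_batch, grad_accum_steps, num_devices=1):
    """Samples contributing to one optimizer step."""
    return per_device_batch * grad_accum_steps * num_devices

def cosine_lr_with_warmup(step, total_steps, base_lr=5e-4, warmup_steps=1000):
    """Linear warmup to base_lr, then cosine decay to zero (assumed shape)."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    # Fraction of the post-warmup phase that has elapsed, in [0, 1].
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return base_lr * max(0.0, 0.5 * (1.0 + math.cos(math.pi * progress)))

# train_batch_size=32 and gradient_accumulation_steps=8 from the list above.
print(effective_batch_size(32, 8))

# total_steps=10_000 is a hypothetical value chosen only for illustration.
for step in (0, 500, 1000, 5500, 10_000):
    print(step, cosine_lr_with_warmup(step, total_steps=10_000))
```

With one epoch and an unknown dataset size, the real number of steps may be smaller than the 1000 warmup steps, in which case the learning rate would never reach its peak.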

### Training results

### Framework versions

- Transformers 4.38.1

- Pytorch 2.1.0+cu121

- Datasets 2.17.1

- Tokenizers 0.15.2

|

cactusfriend/nightmare-promptgen-XL | cactusfriend | "2023-07-06T22:16:34Z" | 39,549 | 5 | transformers | [

"transformers",

"pytorch",

"safetensors",

"gpt_neo",

"text-generation",

"license:openrail",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-generation | "2023-06-26T14:07:40Z" | ---

license: openrail

pipeline_tag: text-generation

library_name: transformers

widget:

- text: "a photograph of"

example_title: "photo"

- text: "a bizarre cg render"

example_title: "render"

- text: "the spaghetti"

example_title: "meal?"

- text: "a (detailed+ intricate)+ picture"

example_title: "weights"

- text: "photograph of various"

example_title: "variety"

inference:

parameters:

temperature: 2.6

max_new_tokens: 250

---

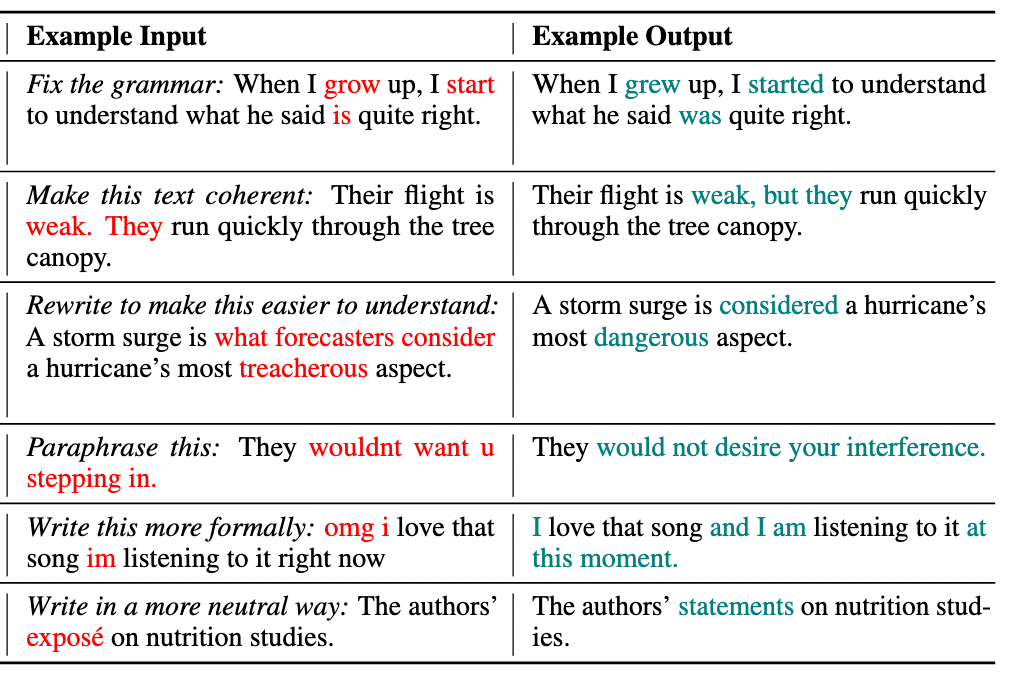

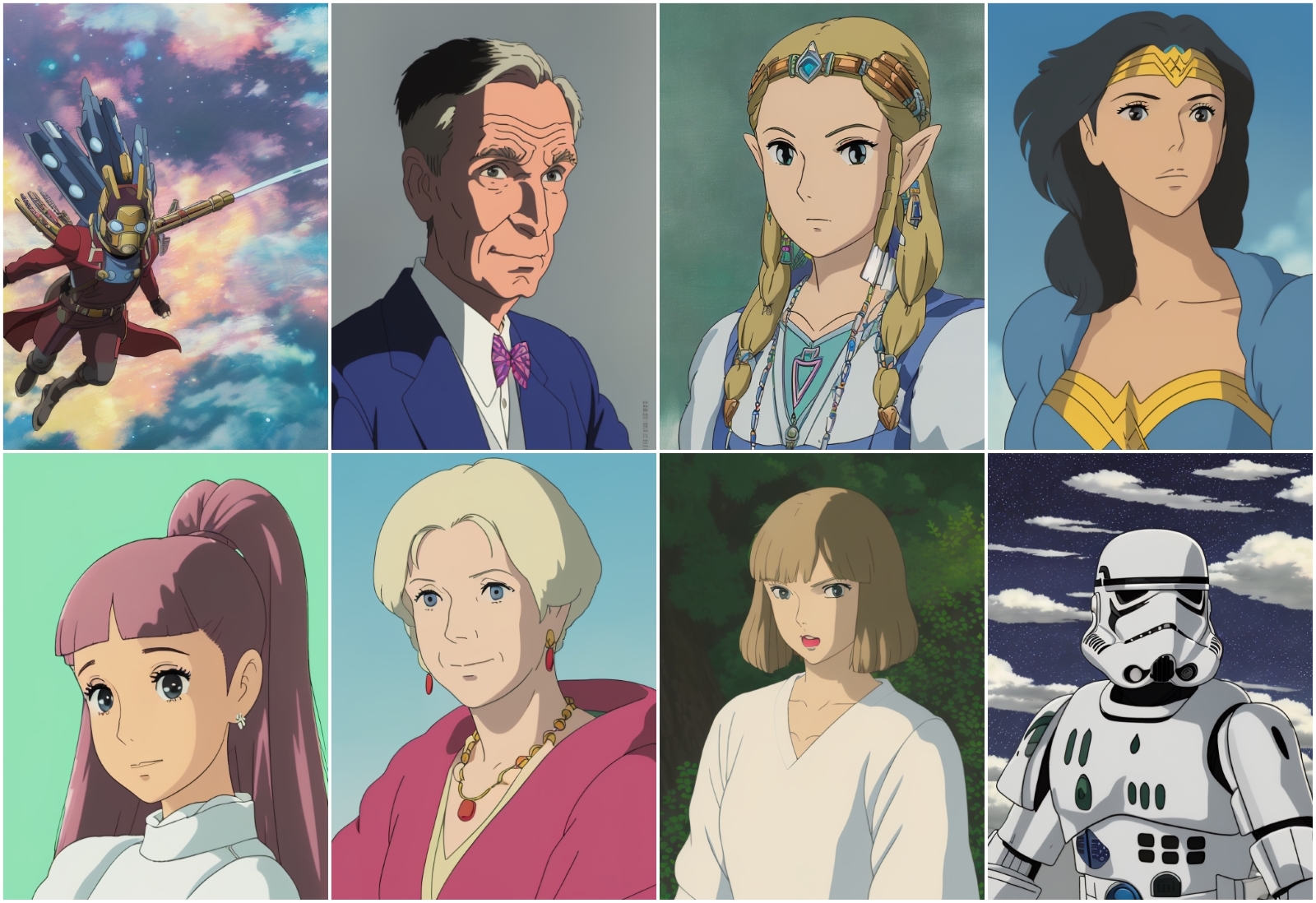

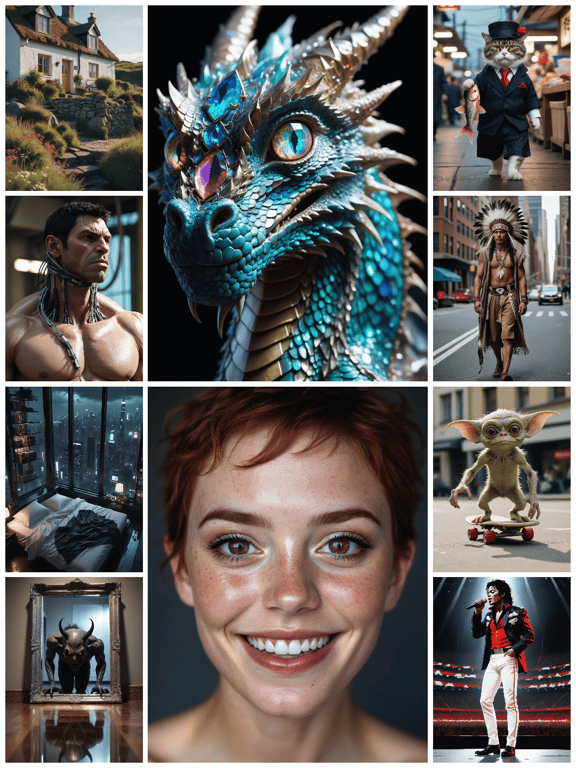

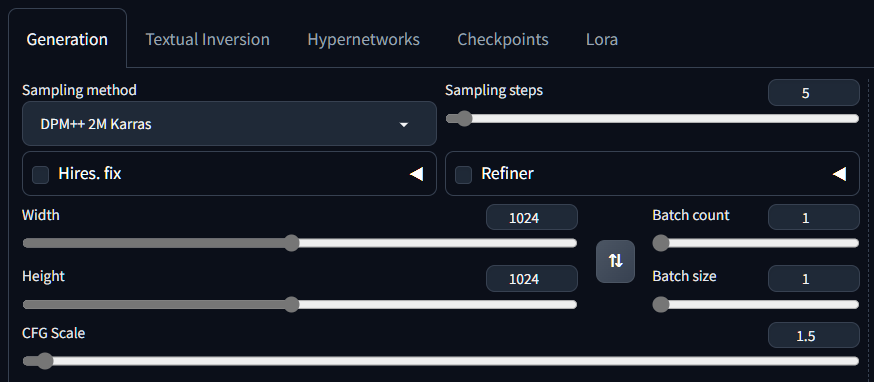

Experimental 'XL' version of [Nightmare InvokeAI Prompts](https://huggingface.co/cactusfriend/nightmare-invokeai-prompts). Very early version and may be deleted. |

audeering/wav2vec2-large-robust-12-ft-emotion-msp-dim | audeering | "2024-05-08T09:35:28Z" | 39,444 | 83 | transformers | [

"transformers",

"pytorch",

"safetensors",

"wav2vec2",

"speech",

"audio",

"audio-classification",

"emotion-recognition",

"en",

"dataset:msp-podcast",

"arxiv:2203.07378",

"license:cc-by-nc-sa-4.0",

"endpoints_compatible",

"region:us"

] | audio-classification | "2022-04-06T12:40:02Z" | ---

language: en

datasets:

- msp-podcast

inference: true

tags:

- speech

- audio

- wav2vec2

- audio-classification

- emotion-recognition

license: cc-by-nc-sa-4.0

pipeline_tag: audio-classification

---

# Model for Dimensional Speech Emotion Recognition based on Wav2vec 2.0

Please note that this model is for research purposes only. A commercial license for a model that has been trained on much more data can be acquired from [audEERING](https://www.audeering.com/products/devaice/).

The model expects a raw audio signal as input and outputs predictions for arousal, dominance and valence in a range of approximately 0...1. In addition, it provides the pooled states of the last transformer layer. The model was created by fine-tuning [Wav2Vec2-Large-Robust](https://huggingface.co/facebook/wav2vec2-large-robust) on [MSP-Podcast](https://ecs.utdallas.edu/research/researchlabs/msp-lab/MSP-Podcast.html) (v1.7). The model was pruned from 24 to 12 transformer layers before fine-tuning. An [ONNX](https://onnx.ai/) export of the model is available from [doi:10.5281/zenodo.6221127](https://zenodo.org/record/6221127). Further details are given in the associated [paper](https://arxiv.org/abs/2203.07378) and [tutorial](https://github.com/audeering/w2v2-how-to).

# Usage

```python

import numpy as np
import torch
import torch.nn as nn
from transformers import Wav2Vec2Processor
from transformers.models.wav2vec2.modeling_wav2vec2 import (
    Wav2Vec2Model,
    Wav2Vec2PreTrainedModel,
)


class RegressionHead(nn.Module):
    r"""Classification head."""

    def __init__(self, config):

        super().__init__()

        self.dense = nn.Linear(config.hidden_size, config.hidden_size)
        self.dropout = nn.Dropout(config.final_dropout)
        self.out_proj = nn.Linear(config.hidden_size, config.num_labels)

    def forward(self, features, **kwargs):

        x = features
        x = self.dropout(x)
        x = self.dense(x)
        x = torch.tanh(x)
        x = self.dropout(x)
        x = self.out_proj(x)

        return x


class EmotionModel(Wav2Vec2PreTrainedModel):
    r"""Speech emotion classifier."""

    def __init__(self, config):

        super().__init__(config)

        self.config = config
        self.wav2vec2 = Wav2Vec2Model(config)
        self.classifier = RegressionHead(config)
        self.init_weights()

    def forward(
            self,
            input_values,
    ):

        outputs = self.wav2vec2(input_values)
        hidden_states = outputs[0]
        hidden_states = torch.mean(hidden_states, dim=1)
        logits = self.classifier(hidden_states)

        return hidden_states, logits


# load model from hub
device = 'cpu'
model_name = 'audeering/wav2vec2-large-robust-12-ft-emotion-msp-dim'
processor = Wav2Vec2Processor.from_pretrained(model_name)
model = EmotionModel.from_pretrained(model_name)

# dummy signal
sampling_rate = 16000
signal = np.zeros((1, sampling_rate), dtype=np.float32)


def process_func(
    x: np.ndarray,
    sampling_rate: int,
    embeddings: bool = False,
) -> np.ndarray:
    r"""Predict emotions or extract embeddings from raw audio signal."""

    # run through processor to normalize signal
    # always returns a batch, so we just get the first entry
    # then we put it on the device
    y = processor(x, sampling_rate=sampling_rate)
    y = y['input_values'][0]
    y = y.reshape(1, -1)
    y = torch.from_numpy(y).to(device)

    # run through model
    with torch.no_grad():
        y = model(y)[0 if embeddings else 1]

    # convert to numpy
    y = y.detach().cpu().numpy()

    return y


print(process_func(signal, sampling_rate))
# Arousal dominance valence
# [[0.5460754 0.6062266 0.40431657]]

print(process_func(signal, sampling_rate, embeddings=True))
# Pooled hidden states of last transformer layer
# [[-0.00752167 0.0065819 -0.00746342 ... 0.00663632 0.00848748
# 0.00599211]]

``` |

tomh/toxigen_roberta | tomh | "2022-05-01T19:42:09Z" | 39,396 | 8 | transformers | [

"transformers",

"pytorch",

"roberta",

"text-classification",

"en",

"arxiv:2203.09509",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | "2022-05-01T13:19:41Z" | ---

language:

- en

tags:

- text-classification

---

Thomas Hartvigsen, Saadia Gabriel, Hamid Palangi, Maarten Sap, Dipankar Ray, Ece Kamar.

This model comes from the paper [ToxiGen: A Large-Scale Machine-Generated Dataset for Adversarial and Implicit Hate Speech Detection](https://arxiv.org/abs/2203.09509) and can be used to detect implicit hate speech.

Please visit the [GitHub repository](https://github.com/microsoft/TOXIGEN) for the training dataset and further details.

```bibtex

@inproceedings{hartvigsen2022toxigen,

title = "{T}oxi{G}en: A Large-Scale Machine-Generated Dataset for Adversarial and Implicit Hate Speech Detection",

author = "Hartvigsen, Thomas and Gabriel, Saadia and Palangi, Hamid and Sap, Maarten and Ray, Dipankar and Kamar, Ece",

booktitle = "Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics",

year = "2022"

}

``` |

katuni4ka/tiny-random-qwen | katuni4ka | "2024-03-04T14:28:37Z" | 39,396 | 0 | transformers | [

"transformers",

"safetensors",

"qwen",

"text-generation",

"custom_code",

"autotrain_compatible",

"region:us"

] | text-generation | "2024-03-01T15:30:30Z" | Entry not found |

NousResearch/Meta-Llama-3-8B | NousResearch | "2024-04-30T04:45:04Z" | 39,319 | 83 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"facebook",

"meta",

"pytorch",

"llama-3",

"en",

"license:other",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | "2024-04-18T16:47:58Z" | ---

language:

- en

pipeline_tag: text-generation

tags:

- facebook

- meta

- pytorch

- llama

- llama-3

license: other

license_name: llama3

license_link: LICENSE

extra_gated_prompt: >-

### META LLAMA 3 COMMUNITY LICENSE AGREEMENT

Meta Llama 3 Version Release Date: April 18, 2024

"Agreement" means the terms and conditions for use, reproduction, distribution and modification of the

Llama Materials set forth herein.

"Documentation" means the specifications, manuals and documentation accompanying Meta Llama 3

distributed by Meta at https://llama.meta.com/get-started/.

"Licensee" or "you" means you, or your employer or any other person or entity (if you are entering into

this Agreement on such person or entity’s behalf), of the age required under applicable laws, rules or

regulations to provide legal consent and that has legal authority to bind your employer or such other

person or entity if you are entering in this Agreement on their behalf.

"Meta Llama 3" means the foundational large language models and software and algorithms, including

machine-learning model code, trained model weights, inference-enabling code, training-enabling code,

fine-tuning enabling code and other elements of the foregoing distributed by Meta at

https://llama.meta.com/llama-downloads.

"Llama Materials" means, collectively, Meta’s proprietary Meta Llama 3 and Documentation (and any

portion thereof) made available under this Agreement.

"Meta" or "we" means Meta Platforms Ireland Limited (if you are located in or, if you are an entity, your

principal place of business is in the EEA or Switzerland) and Meta Platforms, Inc. (if you are located

outside of the EEA or Switzerland).

1. License Rights and Redistribution.

a. Grant of Rights. You are granted a non-exclusive, worldwide, non-transferable and royalty-free

limited license under Meta’s intellectual property or other rights owned by Meta embodied in the Llama

Materials to use, reproduce, distribute, copy, create derivative works of, and make modifications to the

Llama Materials.

b. Redistribution and Use.

i. If you distribute or make available the Llama Materials (or any derivative works

thereof), or a product or service that uses any of them, including another AI model, you shall (A) provide

a copy of this Agreement with any such Llama Materials; and (B) prominently display “Built with Meta

Llama 3” on a related website, user interface, blogpost, about page, or product documentation. If you

use the Llama Materials to create, train, fine tune, or otherwise improve an AI model, which is

distributed or made available, you shall also include “Llama 3” at the beginning of any such AI model

name.

ii. If you receive Llama Materials, or any derivative works thereof, from a Licensee as part

of an integrated end user product, then Section 2 of this Agreement will not apply to you.

iii. You must retain in all copies of the Llama Materials that you distribute the following

attribution notice within a “Notice” text file distributed as a part of such copies: “Meta Llama 3 is

licensed under the Meta Llama 3 Community License, Copyright © Meta Platforms, Inc. All Rights

Reserved.”

iv. Your use of the Llama Materials must comply with applicable laws and regulations

(including trade compliance laws and regulations) and adhere to the Acceptable Use Policy for the Llama

Materials (available at https://llama.meta.com/llama3/use-policy), which is hereby incorporated by

reference into this Agreement.

v. You will not use the Llama Materials or any output or results of the Llama Materials to

improve any other large language model (excluding Meta Llama 3 or derivative works thereof).

2. Additional Commercial Terms. If, on the Meta Llama 3 version release date, the monthly active users

of the products or services made available by or for Licensee, or Licensee’s affiliates, is greater than 700

million monthly active users in the preceding calendar month, you must request a license from Meta,

which Meta may grant to you in its sole discretion, and you are not authorized to exercise any of the

rights under this Agreement unless or until Meta otherwise expressly grants you such rights.

3. Disclaimer of Warranty. UNLESS REQUIRED BY APPLICABLE LAW, THE LLAMA MATERIALS AND ANY

OUTPUT AND RESULTS THEREFROM ARE PROVIDED ON AN “AS IS” BASIS, WITHOUT WARRANTIES OF

ANY KIND, AND META DISCLAIMS ALL WARRANTIES OF ANY KIND, BOTH EXPRESS AND IMPLIED,

INCLUDING, WITHOUT LIMITATION, ANY WARRANTIES OF TITLE, NON-INFRINGEMENT,

MERCHANTABILITY, OR FITNESS FOR A PARTICULAR PURPOSE. YOU ARE SOLELY RESPONSIBLE FOR

DETERMINING THE APPROPRIATENESS OF USING OR REDISTRIBUTING THE LLAMA MATERIALS AND

ASSUME ANY RISKS ASSOCIATED WITH YOUR USE OF THE LLAMA MATERIALS AND ANY OUTPUT AND

RESULTS.

4. Limitation of Liability. IN NO EVENT WILL META OR ITS AFFILIATES BE LIABLE UNDER ANY THEORY OF

LIABILITY, WHETHER IN CONTRACT, TORT, NEGLIGENCE, PRODUCTS LIABILITY, OR OTHERWISE, ARISING

OUT OF THIS AGREEMENT, FOR ANY LOST PROFITS OR ANY INDIRECT, SPECIAL, CONSEQUENTIAL,

INCIDENTAL, EXEMPLARY OR PUNITIVE DAMAGES, EVEN IF META OR ITS AFFILIATES HAVE BEEN ADVISED

OF THE POSSIBILITY OF ANY OF THE FOREGOING.

5. Intellectual Property.

a. No trademark licenses are granted under this Agreement, and in connection with the Llama

Materials, neither Meta nor Licensee may use any name or mark owned by or associated with the other

or any of its affiliates, except as required for reasonable and customary use in describing and

redistributing the Llama Materials or as set forth in this Section 5(a). Meta hereby grants you a license to

use “Llama 3” (the “Mark”) solely as required to comply with the last sentence of Section 1.b.i. You will

comply with Meta’s brand guidelines (currently accessible at

https://about.meta.com/brand/resources/meta/company-brand/ ). All goodwill arising out of your use

of the Mark will inure to the benefit of Meta.

b. Subject to Meta’s ownership of Llama Materials and derivatives made by or for Meta, with

respect to any derivative works and modifications of the Llama Materials that are made by you, as

between you and Meta, you are and will be the owner of such derivative works and modifications.

c. If you institute litigation or other proceedings against Meta or any entity (including a

cross-claim or counterclaim in a lawsuit) alleging that the Llama Materials or Meta Llama 3 outputs or

results, or any portion of any of the foregoing, constitutes infringement of intellectual property or other

rights owned or licensable by you, then any licenses granted to you under this Agreement shall

terminate as of the date such litigation or claim is filed or instituted. You will indemnify and hold

harmless Meta from and against any claim by any third party arising out of or related to your use or

distribution of the Llama Materials.

6. Term and Termination. The term of this Agreement will commence upon your acceptance of this

Agreement or access to the Llama Materials and will continue in full force and effect until terminated in

accordance with the terms and conditions herein. Meta may terminate this Agreement if you are in

breach of any term or condition of this Agreement. Upon termination of this Agreement, you shall delete

and cease use of the Llama Materials. Sections 3, 4 and 7 shall survive the termination of this

Agreement.

7. Governing Law and Jurisdiction. This Agreement will be governed and construed under the laws of

the State of California without regard to choice of law principles, and the UN Convention on Contracts

for the International Sale of Goods does not apply to this Agreement. The courts of California shall have

exclusive jurisdiction of any dispute arising out of this Agreement.

### Meta Llama 3 Acceptable Use Policy

Meta is committed to promoting safe and fair use of its tools and features, including Meta Llama 3. If you

access or use Meta Llama 3, you agree to this Acceptable Use Policy (“Policy”). The most recent copy of

this policy can be found at [https://llama.meta.com/llama3/use-policy](https://llama.meta.com/llama3/use-policy)

#### Prohibited Uses

We want everyone to use Meta Llama 3 safely and responsibly. You agree you will not use, or allow

others to use, Meta Llama 3 to:

1. Violate the law or others’ rights, including to:

1. Engage in, promote, generate, contribute to, encourage, plan, incite, or further illegal or unlawful activity or content, such as:

1. Violence or terrorism

2. Exploitation or harm to children, including the solicitation, creation, acquisition, or dissemination of child exploitative content or failure to report Child Sexual Abuse Material

3. Human trafficking, exploitation, and sexual violence

4. The illegal distribution of information or materials to minors, including obscene materials, or failure to employ legally required age-gating in connection with such information or materials.

5. Sexual solicitation

6. Any other criminal activity

2. Engage in, promote, incite, or facilitate the harassment, abuse, threatening, or bullying of individuals or groups of individuals

3. Engage in, promote, incite, or facilitate discrimination or other unlawful or harmful conduct in the provision of employment, employment benefits, credit, housing, other economic benefits, or other essential goods and services

4. Engage in the unauthorized or unlicensed practice of any profession including, but not limited to, financial, legal, medical/health, or related professional practices

5. Collect, process, disclose, generate, or infer health, demographic, or other sensitive personal or private information about individuals without rights and consents required by applicable laws

6. Engage in or facilitate any action or generate any content that infringes, misappropriates, or otherwise violates any third-party rights, including the outputs or results of any products or services using the Llama Materials

7. Create, generate, or facilitate the creation of malicious code, malware, computer viruses or do anything else that could disable, overburden, interfere with or impair the proper working, integrity, operation or appearance of a website or computer system

2. Engage in, promote, incite, facilitate, or assist in the planning or development of activities that present a risk of death or bodily harm to individuals, including use of Meta Llama 3 related to the following:

1. Military, warfare, nuclear industries or applications, espionage, use for materials or activities that are subject to the International Traffic Arms Regulations (ITAR) maintained by the United States Department of State

2. Guns and illegal weapons (including weapon development)

3. Illegal drugs and regulated/controlled substances

4. Operation of critical infrastructure, transportation technologies, or heavy machinery

5. Self-harm or harm to others, including suicide, cutting, and eating disorders

6. Any content intended to incite or promote violence, abuse, or any infliction of bodily harm to an individual

3. Intentionally deceive or mislead others, including use of Meta Llama 3 related to the following:

1. Generating, promoting, or furthering fraud or the creation or promotion of disinformation

2. Generating, promoting, or furthering defamatory content, including the creation of defamatory statements, images, or other content

3. Generating, promoting, or further distributing spam

4. Impersonating another individual without consent, authorization, or legal right

5. Representing that the use of Meta Llama 3 or outputs are human-generated

6. Generating or facilitating false online engagement, including fake reviews and other means of fake online engagement

4. Fail to appropriately disclose to end users any known dangers of your AI system

Please report any violation of this Policy, software “bug,” or other problems that could lead to a violation

of this Policy through one of the following means:

* Reporting issues with the model: [https://github.com/meta-llama/llama3](https://github.com/meta-llama/llama3)

* Reporting risky content generated by the model:

developers.facebook.com/llama_output_feedback

* Reporting bugs and security concerns: facebook.com/whitehat/info

* Reporting violations of the Acceptable Use Policy or unlicensed uses of Meta Llama 3: LlamaUseReport@meta.com

extra_gated_fields:

First Name: text

Last Name: text

Date of birth: date_picker

Country: country

Affiliation: text

geo: ip_location

By clicking Submit below I accept the terms of the license and acknowledge that the information I provide will be collected stored processed and shared in accordance with the Meta Privacy Policy: checkbox

extra_gated_description: The information you provide will be collected, stored, processed and shared in accordance with the [Meta Privacy Policy](https://www.facebook.com/privacy/policy/).

extra_gated_button_content: Submit

---

## Model Details

Meta developed and released the Meta Llama 3 family of large language models (LLMs), a collection of pretrained and instruction tuned generative text models in 8 and 70B sizes. The Llama 3 instruction tuned models are optimized for dialogue use cases and outperform many of the available open source chat models on common industry benchmarks. Further, in developing these models, we took great care to optimize helpfulness and safety.

**Model developers** Meta

**Variations** Llama 3 comes in two sizes — 8B and 70B parameters — in pre-trained and instruction tuned variants.

**Input** Models input text only.

**Output** Models generate text and code only.

**Model Architecture** Llama 3 is an auto-regressive language model that uses an optimized transformer architecture. The tuned versions use supervised fine-tuning (SFT) and reinforcement learning with human feedback (RLHF) to align with human preferences for helpfulness and safety.

<table>

<tr>

<td>

</td>

<td><strong>Training Data</strong>

</td>

<td><strong>Params</strong>

</td>

<td><strong>Context length</strong>

</td>

<td><strong>GQA</strong>

</td>

<td><strong>Token count</strong>

</td>

<td><strong>Knowledge cutoff</strong>

</td>

</tr>

<tr>

<td rowspan="2" >Llama 3

</td>

<td rowspan="2" >A new mix of publicly available online data.

</td>

<td>8B

</td>

<td>8k

</td>

<td>Yes

</td>

<td rowspan="2" >15T+

</td>

<td>March, 2023

</td>

</tr>

<tr>

<td>70B

</td>

<td>8k

</td>

<td>Yes

</td>

<td>December, 2023

</td>

</tr>

</table>

**Llama 3 family of models**. Token counts refer to pretraining data only. Both the 8 and 70B versions use Grouped-Query Attention (GQA) for improved inference scalability.
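To make the "improved inference scalability" claim concrete, the sketch below estimates the key/value cache size for one sequence with and without GQA. It is a back-of-the-envelope illustration, not Meta's implementation. The layer count, head counts and head dimension are assumed 8B-scale values that this card does not state, "8k" is taken to mean 8192 tokens, and values are assumed to be stored in 16-bit precision.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, bytes_per_value=2):
    """Bytes needed to cache keys and values for a single sequence."""
    # Factor 2: one key tensor and one value tensor per layer.
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_value

# Assumed, illustrative shapes (not taken from this card).
NUM_LAYERS = 32
NUM_QUERY_HEADS = 32   # multi-head attention would cache one K/V pair per query head
NUM_KV_HEADS = 8       # GQA shares each K/V head across a group of query heads
HEAD_DIM = 128
CONTEXT = 8192         # "8k" context length, assumed to mean 8192 tokens

mha = kv_cache_bytes(NUM_LAYERS, NUM_QUERY_HEADS, HEAD_DIM, CONTEXT)
gqa = kv_cache_bytes(NUM_LAYERS, NUM_KV_HEADS, HEAD_DIM, CONTEXT)

print(f"MHA KV cache: {mha / 2**30:.1f} GiB")
print(f"GQA KV cache: {gqa / 2**30:.1f} GiB")
print(f"reduction: {mha // gqa}x")
```

Under these assumptions the cache shrinks by the ratio of query heads to key/value heads, which is what lets more or longer sequences fit in the same accelerator memory.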

**Model Release Date** April 18, 2024.

**Status** This is a static model trained on an offline dataset. Future versions of the tuned models will be released as we improve model safety with community feedback.

**License** A custom commercial license is available at: [https://llama.meta.com/llama3/license](https://llama.meta.com/llama3/license)

**Where to send questions or comments about the model** Instructions on how to provide feedback or comments on the model can be found in the model [README](https://github.com/meta-llama/llama3). For more technical information about generation parameters and recipes for how to use Llama 3 in applications, please go [here](https://github.com/meta-llama/llama-recipes).

## Intended Use

**Intended Use Cases** Llama 3 is intended for commercial and research use in English. Instruction tuned models are intended for assistant-like chat, whereas pretrained models can be adapted for a variety of natural language generation tasks.

**Out-of-scope** Use in any manner that violates applicable laws or regulations (including trade compliance laws). Use in any other way that is prohibited by the Acceptable Use Policy and Llama 3 Community License. Use in languages other than English**.

**Note: Developers may fine-tune Llama 3 models for languages beyond English provided they comply with the Llama 3 Community License and the Acceptable Use Policy.

## How to use

This repository contains two versions of Meta-Llama-3-8B, for use with transformers and with the original `llama3` codebase.

### Use with transformers

See the snippet below for usage with Transformers:

```python

>>> import transformers

>>> import torch

>>> model_id = "meta-llama/Meta-Llama-3-8B"

>>> pipeline = transformers.pipeline(
...     "text-generation", model=model_id, model_kwargs={"torch_dtype": torch.bfloat16}, device_map="auto"
... )

>>> pipeline("Hey how are you doing today?")

```

### Use with `llama3`

Please follow the instructions in the [repository](https://github.com/meta-llama/llama3).

To download the original checkpoints, see the example command below, which uses `huggingface-cli`:

```

huggingface-cli download meta-llama/Meta-Llama-3-8B --include "original/*" --local-dir Meta-Llama-3-8B

```

For Hugging Face support, we recommend using transformers or TGI, but a similar command works.

## Hardware and Software

**Training Factors** We used custom training libraries, Meta's Research SuperCluster, and production clusters for pretraining. Fine-tuning, annotation, and evaluation were also performed on third-party cloud compute.

**Carbon Footprint** Pretraining utilized a cumulative 7.7M GPU hours of computation on hardware of type H100-80GB (TDP of 700W). Estimated total emissions were 2290 tCO2eq, 100% of which were offset by Meta’s sustainability program.

<table>

<tr>

<td>

</td>

<td><strong>Time (GPU hours)</strong>

</td>

<td><strong>Power Consumption (W)</strong>

</td>

<td><strong>Carbon Emitted(tCO2eq)</strong>

</td>

</tr>

<tr>

<td>Llama 3 8B

</td>

<td>1.3M

</td>

<td>700

</td>

<td>390

</td>

</tr>

<tr>

<td>Llama 3 70B

</td>

<td>6.4M

</td>

<td>700

</td>

<td>1900

</td>

</tr>

<tr>

<td>Total

</td>

<td>7.7M

</td>

<td>

</td>

<td>2290

</td>

</tr>

</table>

**CO2 emissions during pre-training**. Time: total GPU time required for training each model. Power Consumption: peak power capacity per GPU device for the GPUs used adjusted for power usage efficiency. 100% of the emissions are directly offset by Meta's sustainability program, and because we are openly releasing these models, the pretraining costs do not need to be incurred by others.
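The table's totals can be checked with simple arithmetic. The sketch below multiplies GPU hours by the stated 700 W per-GPU figure to get energy, then divides the reported emissions by that energy to get an implied carbon intensity. That intensity is a derived number, not one the card reports, and the calculation assumes the 700 W figure applies uniformly to every GPU hour.

```python
GPU_POWER_W = 700  # per-GPU power figure from the table above

# GPU hours and reported emissions, copied from the table above.
runs = {
    "Llama 3 8B": {"gpu_hours": 1.3e6, "tco2eq": 390},
    "Llama 3 70B": {"gpu_hours": 6.4e6, "tco2eq": 1900},
}

def energy_mwh(gpu_hours, power_w=GPU_POWER_W):
    """Energy in megawatt-hours for a given number of GPU hours."""
    return gpu_hours * power_w / 1e6  # W * h = Wh; divide by 1e6 for MWh

total_hours = sum(r["gpu_hours"] for r in runs.values())
total_energy = sum(energy_mwh(r["gpu_hours"]) for r in runs.values())
total_emissions = sum(r["tco2eq"] for r in runs.values())

# Implied intensity in tCO2eq per MWh (derived here, not stated in the card).
implied_intensity = total_emissions / total_energy

print(f"total GPU hours: {total_hours / 1e6:.1f}M")
print(f"total energy: {total_energy:.0f} MWh")
print(f"total emissions: {total_emissions} tCO2eq")
print(f"implied intensity: {implied_intensity:.3f} tCO2eq/MWh")
```

The summed GPU hours and emissions reproduce the table's "Total" row (7.7M hours, 2290 tCO2eq).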

## Training Data

**Overview** Llama 3 was pretrained on over 15 trillion tokens of data from publicly available sources. The fine-tuning data includes publicly available instruction datasets, as well as over 10M human-annotated examples. Neither the pretraining nor the fine-tuning datasets include Meta user data.

**Data Freshness** The pretraining data has a cutoff of March 2023 for the 8B model and December 2023 for the 70B model.

## Benchmarks

In this section, we report the results for Llama 3 models on standard automatic benchmarks. For all the evaluations, we use our internal evaluations library. For details on the methodology see [here](https://github.com/meta-llama/llama3/blob/main/eval_methodology.md).

### Base pretrained models

<table>

<tr>

<td><strong>Category</strong>

</td>

<td><strong>Benchmark</strong>

</td>

<td><strong>Llama 3 8B</strong>

</td>

<td><strong>Llama2 7B</strong>

</td>

<td><strong>Llama2 13B</strong>

</td>

<td><strong>Llama 3 70B</strong>

</td>

<td><strong>Llama2 70B</strong>

</td>

</tr>

<tr>

<td rowspan="6" >General

</td>

<td>MMLU (5-shot)

</td>

<td>66.6

</td>

<td>45.7

</td>

<td>53.8

</td>

<td>79.5

</td>

<td>69.7

</td>

</tr>

<tr>

<td>AGIEval English (3-5 shot)

</td>

<td>45.9

</td>

<td>28.8

</td>

<td>38.7

</td>

<td>63.0

</td>

<td>54.8

</td>

</tr>

<tr>

<td>CommonSenseQA (7-shot)

</td>

<td>72.6

</td>

<td>57.6

</td>

<td>67.6

</td>

<td>83.8

</td>

<td>78.7

</td>

</tr>

<tr>

<td>Winogrande (5-shot)

</td>

<td>76.1

</td>

<td>73.3

</td>

<td>75.4

</td>

<td>83.1

</td>

<td>81.8

</td>

</tr>

<tr>

<td>BIG-Bench Hard (3-shot, CoT)

</td>

<td>61.1

</td>

<td>38.1

</td>

<td>47.0

</td>

<td>81.3

</td>

<td>65.7

</td>

</tr>

<tr>

<td>ARC-Challenge (25-shot)

</td>

<td>78.6

</td>

<td>53.7

</td>

<td>67.6

</td>

<td>93.0

</td>

<td>85.3

</td>

</tr>

<tr>

<td>Knowledge reasoning

</td>

<td>TriviaQA-Wiki (5-shot)

</td>

<td>78.5

</td>

<td>72.1

</td>

<td>79.6

</td>

<td>89.7

</td>

<td>87.5

</td>

</tr>

<tr>

<td rowspan="4" >Reading comprehension

</td>

<td>SQuAD (1-shot)

</td>

<td>76.4

</td>

<td>72.2

</td>

<td>72.1

</td>

<td>85.6

</td>

<td>82.6

</td>

</tr>

<tr>

<td>QuAC (1-shot, F1)

</td>

<td>44.4

</td>

<td>39.6

</td>

<td>44.9

</td>

<td>51.1

</td>

<td>49.4

</td>

</tr>

<tr>

<td>BoolQ (0-shot)

</td>

<td>75.7

</td>

<td>65.5

</td>

<td>66.9

</td>

<td>79.0

</td>

<td>73.1

</td>

</tr>

<tr>

<td>DROP (3-shot, F1)

</td>

<td>58.4

</td>

<td>37.9

</td>

<td>49.8

</td>

<td>79.7

</td>

<td>70.2

</td>

</tr>

</table>

### Instruction tuned models

<table>

<tr>

<td><strong>Benchmark</strong>

</td>

<td><strong>Llama 3 8B</strong>

</td>

<td><strong>Llama 2 7B</strong>

</td>

<td><strong>Llama 2 13B</strong>

</td>

<td><strong>Llama 3 70B</strong>

</td>

<td><strong>Llama 2 70B</strong>

</td>

</tr>

<tr>

<td>MMLU (5-shot)

</td>

<td>68.4

</td>

<td>34.1

</td>

<td>47.8

</td>

<td>82.0

</td>

<td>52.9

</td>

</tr>

<tr>

<td>GPQA (0-shot)

</td>

<td>34.2

</td>

<td>21.7

</td>

<td>22.3

</td>

<td>39.5

</td>

<td>21.0

</td>

</tr>

<tr>

<td>HumanEval (0-shot)

</td>

<td>62.2

</td>

<td>7.9

</td>

<td>14.0

</td>

<td>81.7

</td>

<td>25.6

</td>

</tr>

<tr>

<td>GSM-8K (8-shot, CoT)

</td>

<td>79.6

</td>

<td>25.7

</td>

<td>77.4

</td>

<td>93.0

</td>

<td>57.5

</td>

</tr>

<tr>

<td>MATH (4-shot, CoT)

</td>

<td>30.0

</td>

<td>3.8

</td>

<td>6.7

</td>

<td>50.4

</td>

<td>11.6

</td>

</tr>

</table>

### Responsibility & Safety

We believe that an open approach to AI leads to better, safer products, faster innovation, and a bigger overall market. We are committed to Responsible AI development and took a series of steps to limit misuse and harm and support the open source community.

Foundation models are widely capable technologies that are built to be used for a diverse range of applications. They are not designed to meet every developer preference on safety levels for all use cases, out-of-the-box, as those by their nature will differ across different applications.

Rather, responsible LLM-application deployment is achieved by implementing a series of safety best practices throughout the development of such applications, from the model pre-training, fine-tuning and the deployment of systems composed of safeguards to tailor the safety needs specifically to the use case and audience.

As part of the Llama 3 release, we updated our [Responsible Use Guide](https://llama.meta.com/responsible-use-guide/) to outline the steps and best practices for developers to implement model and system level safety for their application. We also provide a set of resources including [Meta Llama Guard 2](https://llama.meta.com/purple-llama/) and [Code Shield](https://llama.meta.com/purple-llama/) safeguards. These tools have proven to drastically reduce residual risks of LLM Systems, while maintaining a high level of helpfulness. We encourage developers to tune and deploy these safeguards according to their needs and we provide a [reference implementation](https://github.com/meta-llama/llama-recipes/tree/main/recipes/responsible_ai) to get you started.

#### Llama 3-Instruct

As outlined in the Responsible Use Guide, some trade-off between model helpfulness and model alignment is likely unavoidable. Developers should exercise discretion about how to weigh the benefits of alignment and helpfulness for their specific use case and audience. Developers should be mindful of residual risks when using Llama models and leverage additional safety tools as needed to reach the right safety bar for their use case.

<span style="text-decoration:underline;">Safety</span>

For our instruction-tuned model, we conducted extensive red teaming exercises, performed adversarial evaluations and implemented safety mitigation techniques to lower residual risks. As with any Large Language Model, residual risks will likely remain and we recommend that developers assess these risks in the context of their use case. In parallel, we are working with the community to make AI safety benchmark standards transparent, rigorous and interpretable.

<span style="text-decoration:underline;">Refusals</span>

In addition to residual risks, we put a great emphasis on model refusals to benign prompts. Over-refusing can not only hurt the user experience but can even be harmful in certain contexts. We’ve heard the feedback from the developer community and improved our fine-tuning to ensure that Llama 3 is significantly less likely to falsely refuse to answer prompts than Llama 2.

We built internal benchmarks and developed mitigations to limit false refusals, making Llama 3 our most helpful model to date.

#### Responsible release

In addition to responsible use considerations outlined above, we followed a rigorous process that requires us to take extra measures against misuse and critical risks before we make our release decision.

<span style="text-decoration:underline;">Misuse</span>

If you access or use Llama 3, you agree to the Acceptable Use Policy. The most recent copy of this policy can be found at [https://llama.meta.com/llama3/use-policy/](https://llama.meta.com/llama3/use-policy/).

#### Critical risks

<span style="text-decoration:underline;">CBRNE</span> (Chemical, Biological, Radiological, Nuclear, and high yield Explosives)

We have conducted a two-fold assessment of the safety of the model in this area:

* Iterative testing during model training to assess the safety of responses related to CBRNE threats and other adversarial risks.

* Involving external CBRNE experts to conduct an uplift test assessing the ability of the model to accurately provide expert knowledge and reduce barriers to potential CBRNE misuse, by reference to what can be achieved using web search (without the model).

### <span style="text-decoration:underline;">Cyber Security </span>

We have evaluated Llama 3 with CyberSecEval, Meta’s cybersecurity safety eval suite, measuring Llama 3’s propensity to suggest insecure code when used as a coding assistant, and Llama 3’s propensity to comply with requests to help carry out cyber attacks, where attacks are defined by the industry standard MITRE ATT&CK cyber attack ontology. On our insecure coding and cyber attacker helpfulness tests, Llama 3 behaved in the same range or safer than models of [equivalent coding capability](https://huggingface.co/spaces/facebook/CyberSecEval).

### <span style="text-decoration:underline;">Child Safety</span>

Child Safety risk assessments were conducted using a team of experts, to assess the model’s capability to produce outputs that could result in Child Safety risks and inform on any necessary and appropriate risk mitigations via fine tuning. We leveraged those expert red teaming sessions to expand the coverage of our evaluation benchmarks through Llama 3 model development. For Llama 3, we conducted new in-depth sessions using objective based methodologies to assess the model risks along multiple attack vectors. We also partnered with content specialists to perform red teaming exercises assessing potentially violating content while taking account of market specific nuances or experiences.

### Community

Generative AI safety requires expertise and tooling, and we believe in the strength of the open community to accelerate its progress. We are active members of open consortiums, including the AI Alliance, Partnership on AI and MLCommons, actively contributing to safety standardization and transparency. We encourage the community to adopt taxonomies like the MLCommons Proof of Concept evaluation to facilitate collaboration and transparency on safety and content evaluations. Our Purple Llama tools are open sourced for the community to use and widely distributed across ecosystem partners including cloud service providers. We encourage community contributions to our [GitHub repository](https://github.com/meta-llama/PurpleLlama).

Finally, we put in place a set of resources including an [output reporting mechanism](https://developers.facebook.com/llama_output_feedback) and [bug bounty program](https://www.facebook.com/whitehat) to continuously improve the Llama technology with the help of the community.

## Ethical Considerations and Limitations

The core values of Llama 3 are openness, inclusivity and helpfulness. It is meant to serve everyone, and to work for a wide range of use cases. It is thus designed to be accessible to people across many different backgrounds, experiences and perspectives. Llama 3 addresses users and their needs as they are, without inserting unnecessary judgment or normativity, while reflecting the understanding that even content that may appear problematic in some cases can serve valuable purposes in others. It respects the dignity and autonomy of all users, especially in terms of the values of free thought and expression that power innovation and progress.

But Llama 3 is a new technology, and like any new technology, there are risks associated with its use. Testing conducted to date has been in English, and has not covered, nor could it cover, all scenarios. For these reasons, as with all LLMs, Llama 3’s potential outputs cannot be predicted in advance, and the model may in some instances produce inaccurate, biased or other objectionable responses to user prompts. Therefore, before deploying any applications of Llama 3 models, developers should perform safety testing and tuning tailored to their specific applications of the model. As outlined in the Responsible Use Guide, we recommend incorporating [Purple Llama](https://github.com/facebookresearch/PurpleLlama) solutions into your workflows and specifically [Llama Guard](https://ai.meta.com/research/publications/llama-guard-llm-based-input-output-safeguard-for-human-ai-conversations/) which provides a base model to filter input and output prompts to layer system-level safety on top of model-level safety.

Please see the Responsible Use Guide available at [http://llama.meta.com/responsible-use-guide](http://llama.meta.com/responsible-use-guide)
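The idea of layering system-level safety on top of model-level safety, by filtering both the input prompt and the model output, can be sketched roughly as follows. This is only an illustrative outline: `guarded_generate`, `toy_classifier`, `toy_model` and the refusal text are hypothetical names invented for this sketch, and none of them are part of Llama Guard or Purple Llama. In a real deployment the classifier would be a dedicated safeguard model rather than a keyword check.

```python
from typing import Callable

# Hypothetical refusal message used by this sketch.
REFUSAL = "Sorry, I can't help with that."


def guarded_generate(
    prompt: str,
    generate: Callable[[str], str],
    is_unsafe: Callable[[str], bool],
) -> str:
    """Call `generate` only if the prompt passes the filter, then filter the reply too."""
    if is_unsafe(prompt):  # input-side check
        return REFUSAL
    reply = generate(prompt)
    if is_unsafe(reply):  # output-side check
        return REFUSAL
    return reply


# Toy stand-ins so the sketch runs without any model (both are hypothetical).
def toy_classifier(text: str) -> bool:
    return "forbidden" in text.lower()


def toy_model(prompt: str) -> str:
    return f"Echo: {prompt}"


safe_reply = guarded_generate("hello", toy_model, toy_classifier)
blocked_reply = guarded_generate("a forbidden request", toy_model, toy_classifier)
print(safe_reply)
print(blocked_reply)
```

Keeping the two checks separate from the model call means the safeguard can be tuned or swapped per use case without touching the model itself.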

## Citation instructions

@article{llama3modelcard,

title={Llama 3 Model Card},

author={AI@Meta},

year={2024},

url = {https://github.com/meta-llama/llama3/blob/main/MODEL_CARD.md}

}

## Contributors

Aaditya Singh; Aaron Grattafiori; Abhimanyu Dubey; Abhinav Jauhri; Abhinav Pandey; Abhishek Kadian; Adam Kelsey; Adi Gangidi; Ahmad Al-Dahle; Ahuva Goldstand; Aiesha Letman; Ajay Menon; Akhil Mathur; Alan Schelten; Alex Vaughan; Amy Yang; Andrei Lupu; Andres Alvarado; Andrew Gallagher; Andrew Gu; Andrew Ho; Andrew Poulton; Andrew Ryan; Angela Fan; Ankit Ramchandani; Anthony Hartshorn; Archi Mitra; Archie Sravankumar; Artem Korenev; Arun Rao; Ashley Gabriel; Ashwin Bharambe; Assaf Eisenman; Aston Zhang; Aurelien Rodriguez; Austen Gregerson; Ava Spataru; Baptiste Roziere; Ben Maurer; Benjamin Leonhardi; Bernie Huang; Bhargavi Paranjape; Bing Liu; Binh Tang; Bobbie Chern; Brani Stojkovic; Brian Fuller; Catalina Mejia Arenas; Chao Zhou; Charlotte Caucheteux; Chaya Nayak; Ching-Hsiang Chu; Chloe Bi; Chris Cai; Chris Cox; Chris Marra; Chris McConnell; Christian Keller; Christoph Feichtenhofer; Christophe Touret; Chunyang Wu; Corinne Wong; Cristian Canton Ferrer; Damien Allonsius; Daniel Kreymer; Daniel Haziza; Daniel Li; Danielle Pintz; Danny Livshits; Danny Wyatt; David Adkins; David Esiobu; David Xu; Davide Testuggine; Delia David; Devi Parikh; Dhruv Choudhary; Dhruv Mahajan; Diana Liskovich; Diego Garcia-Olano; Diego Perino; Dieuwke Hupkes; Dingkang Wang; Dustin Holland; Egor Lakomkin; Elina Lobanova; Xiaoqing Ellen Tan; Emily Dinan; Eric Smith; Erik Brinkman; Esteban Arcaute; Filip Radenovic; Firat Ozgenel; Francesco Caggioni; Frank Seide; Frank Zhang; Gabriel Synnaeve; Gabriella Schwarz; Gabrielle Lee; Gada Badeer; Georgia Anderson; Graeme Nail; Gregoire Mialon; Guan Pang; Guillem Cucurell; Hailey Nguyen; Hannah Korevaar; Hannah Wang; Haroun Habeeb; Harrison Rudolph; Henry Aspegren; Hu Xu; Hugo Touvron; Iga Kozlowska; Igor Molybog; Igor Tufanov; Iliyan Zarov; Imanol Arrieta Ibarra; Irina-Elena Veliche; Isabel Kloumann; Ishan Misra; Ivan Evtimov; Jacob Xu; Jade Copet; Jake Weissman; Jan Geffert; Jana Vranes; Japhet Asher; Jason Park; Jay Mahadeokar; Jean-Baptiste 
Gaya; Jeet Shah; Jelmer van der Linde; Jennifer Chan; Jenny Hong; Jenya Lee; Jeremy Fu; Jeremy Teboul; Jianfeng Chi; Jianyu Huang; Jie Wang; Jiecao Yu; Joanna Bitton; Joe Spisak; Joelle Pineau; Jon Carvill; Jongsoo Park; Joseph Rocca; Joshua Johnstun; Junteng Jia; Kalyan Vasuden Alwala; Kam Hou U; Kate Plawiak; Kartikeya Upasani; Kaushik Veeraraghavan; Ke Li; Kenneth Heafield; Kevin Stone; Khalid El-Arini; Krithika Iyer; Kshitiz Malik; Kuenley Chiu; Kunal Bhalla; Kyle Huang; Lakshya Garg; Lauren Rantala-Yeary; Laurens van der Maaten; Lawrence Chen; Leandro Silva; Lee Bell; Lei Zhang; Liang Tan; Louis Martin; Lovish Madaan; Luca Wehrstedt; Lukas Blecher; Luke de Oliveira; Madeline Muzzi; Madian Khabsa; Manav Avlani; Mannat Singh; Manohar Paluri; Mark Zuckerberg; Marcin Kardas; Martynas Mankus; Mathew Oldham; Mathieu Rita; Matthew Lennie; Maya Pavlova; Meghan Keneally; Melanie Kambadur; Mihir Patel; Mikayel Samvelyan; Mike Clark; Mike Lewis; Min Si; Mitesh Kumar Singh; Mo Metanat; Mona Hassan; Naman Goyal; Narjes Torabi; Nicolas Usunier; Nikolay Bashlykov; Nikolay Bogoychev; Niladri Chatterji; Ning Dong; Oliver Aobo Yang; Olivier Duchenne; Onur Celebi; Parth Parekh; Patrick Alrassy; Paul Saab; Pavan Balaji; Pedro Rittner; Pengchuan Zhang; Pengwei Li; Petar Vasic; Peter Weng; Polina Zvyagina; Prajjwal Bhargava; Pratik Dubal; Praveen Krishnan; Punit Singh Koura; Qing He; Rachel Rodriguez; Ragavan Srinivasan; Rahul Mitra; Ramon Calderer; Raymond Li; Robert Stojnic; Roberta Raileanu; Robin Battey; Rocky Wang; Rohit Girdhar; Rohit Patel; Romain Sauvestre; Ronnie Polidoro; Roshan Sumbaly; Ross Taylor; Ruan Silva; Rui Hou; Rui Wang; Russ Howes; Ruty Rinott; Saghar Hosseini; Sai Jayesh Bondu; Samyak Datta; Sanjay Singh; Sara Chugh; Sargun Dhillon; Satadru Pan; Sean Bell; Sergey Edunov; Shaoliang Nie; Sharan Narang; Sharath Raparthy; Shaun Lindsay; Sheng Feng; Sheng Shen; Shenghao Lin; Shiva Shankar; Shruti Bhosale; Shun Zhang; Simon Vandenhende; Sinong Wang; Seohyun Sonia 
Kim; Soumya Batra; Sten Sootla; Steve Kehoe; Suchin Gururangan; Sumit Gupta; Sunny Virk; Sydney Borodinsky; Tamar Glaser; Tamar Herman; Tamara Best; Tara Fowler; Thomas Georgiou; Thomas Scialom; Tianhe Li; Todor Mihaylov; Tong Xiao; Ujjwal Karn; Vedanuj Goswami; Vibhor Gupta; Vignesh Ramanathan; Viktor Kerkez; Vinay Satish Kumar; Vincent Gonguet; Vish Vogeti; Vlad Poenaru; Vlad Tiberiu Mihailescu; Vladan Petrovic; Vladimir Ivanov; Wei Li; Weiwei Chu; Wenhan Xiong; Wenyin Fu; Wes Bouaziz; Whitney Meers; Will Constable; Xavier Martinet; Xiaojian Wu; Xinbo Gao; Xinfeng Xie; Xuchao Jia; Yaelle Goldschlag; Yann LeCun; Yashesh Gaur; Yasmine Babaei; Ye Qi; Yenda Li; Yi Wen; Yiwen Song; Youngjin Nam; Yuchen Hao; Yuchen Zhang; Yun Wang; Yuning Mao; Yuzi He; Zacharie Delpierre Coudert; Zachary DeVito; Zahra Hankir; Zhaoduo Wen; Zheng Yan; Zhengxing Chen; Zhenyu Yang; Zoe Papakipos

|

flair/ner-english-ontonotes-large | flair | "2021-05-08T15:35:21Z" | 39,210 | 92 | flair | [

"flair",

"pytorch",

"token-classification",

"sequence-tagger-model",

"en",

"dataset:ontonotes",

"arxiv:2011.06993",

"region:us"

] | token-classification | "2022-03-02T23:29:05Z" | ---

tags:

- flair

- token-classification

- sequence-tagger-model

language: en

datasets:

- ontonotes

widget:

- text: "On September 1st George won 1 dollar while watching Game of Thrones."

---

## English NER in Flair (Ontonotes large model)

This is the large 18-class NER model for English that ships with [Flair](https://github.com/flairNLP/flair/).

F1-Score: **90.93** (Ontonotes)

Predicts 18 tags:

| **tag** | **meaning** |

|---------------------------------|-----------|

| CARDINAL | cardinal value |

| DATE | date value |

| EVENT | event name |

| FAC | building name |

| GPE | geo-political entity |

| LANGUAGE | language name |

| LAW | law name |

| LOC | location name |

| MONEY | money name |

| NORP | affiliation |

| ORDINAL | ordinal value |

| ORG | organization name |

| PERCENT | percent value |

| PERSON | person name |

| PRODUCT | product name |

| QUANTITY | quantity value |

| TIME | time value |

| WORK_OF_ART | name of work of art |

Based on document-level XLM-R embeddings and [FLERT](https://arxiv.org/pdf/2011.06993v1.pdf/).

---

### Demo: How to use in Flair

Requires: **[Flair](https://github.com/flairNLP/flair/)** (`pip install flair`)

```python

from flair.data import Sentence

from flair.models import SequenceTagger

# load tagger

tagger = SequenceTagger.load("flair/ner-english-ontonotes-large")

# make example sentence

sentence = Sentence("On September 1st George won 1 dollar while watching Game of Thrones.")

# predict NER tags

tagger.predict(sentence)

# print sentence

print(sentence)

# print predicted NER spans

print('The following NER tags are found:')

# iterate over entities and print

for entity in sentence.get_spans('ner'):

    print(entity)

```

This yields the following output:

```

Span [2,3]: "September 1st" [− Labels: DATE (1.0)]

Span [4]: "George" [− Labels: PERSON (1.0)]

Span [6,7]: "1 dollar" [− Labels: MONEY (1.0)]

Span [10,11,12]: "Game of Thrones" [− Labels: WORK_OF_ART (1.0)]

```

So, the entities "*September 1st*" (labeled as a **date**), "*George*" (labeled as a **person**), "*1 dollar*" (labeled as **money**) and "*Game of Thrones*" (labeled as a **work of art**) are found in the sentence "*On September 1st George won 1 dollar while watching Game of Thrones*".
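If only the printed output is available (for example in a log file), the span lines shown above can be turned into structured records with a small parser. The following is a minimal sketch that assumes exactly the line format printed above; `parse_span_line` and `SPAN_RE` are names made up for this example, and the printed format may differ in other Flair versions, so reading the span objects directly is preferable when you have them.

```python
import re

# Matches lines such as:  Span [2,3]: "September 1st" [− Labels: DATE (1.0)]
# (format assumed from the output shown above)
SPAN_RE = re.compile(
    r'Span \[(?P<idx>[\d,]+)\]: "(?P<text>.*)"\s+\[\S Labels: (?P<label>\w+) \((?P<score>[\d.]+)\)\]'
)


def parse_span_line(line):
    """Return a dict describing one printed span, or None if the line does not match."""
    match = SPAN_RE.search(line)
    if match is None:
        return None
    return {
        "token_ids": [int(i) for i in match["idx"].split(",")],
        "text": match["text"],
        "label": match["label"],
        "score": float(match["score"]),
    }


printed_output = [
    'Span [2,3]: "September 1st" [− Labels: DATE (1.0)]',
    'Span [4]: "George" [− Labels: PERSON (1.0)]',
    'Span [6,7]: "1 dollar" [− Labels: MONEY (1.0)]',
    'Span [10,11,12]: "Game of Thrones" [− Labels: WORK_OF_ART (1.0)]',
]
records = [parse_span_line(line) for line in printed_output]
for record in records:
    print(record["label"], "->", record["text"])
```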

---

### Training: Script to train this model

The following Flair script was used to train this model:

```python

from flair.data import Corpus

from flair.datasets import ColumnCorpus

from flair.embeddings import WordEmbeddings, StackedEmbeddings, FlairEmbeddings

# 1. load the corpus (Ontonotes does not ship with Flair, you need to download and reformat into a column format yourself)

corpus: Corpus = ColumnCorpus(

"resources/tasks/onto-ner",

column_format={0: "text", 1: "pos", 2: "upos", 3: "ner"},

tag_to_bioes="ner",

)

# 2. what tag do we want to predict?

tag_type = 'ner'

# 3. make the tag dictionary from the corpus

tag_dictionary = corpus.make_tag_dictionary(tag_type=tag_type)

# 4. initialize fine-tuneable transformer embeddings WITH document context

from flair.embeddings import TransformerWordEmbeddings

embeddings = TransformerWordEmbeddings(

model='xlm-roberta-large',

layers="-1",

subtoken_pooling="first",

fine_tune=True,

use_context=True,

)

# 5. initialize bare-bones sequence tagger (no CRF, no RNN, no reprojection)

from flair.models import SequenceTagger

tagger = SequenceTagger(

hidden_size=256,

embeddings=embeddings,

tag_dictionary=tag_dictionary,

tag_type='ner',

use_crf=False,

use_rnn=False,

reproject_embeddings=False,

)

# 6. initialize trainer with AdamW optimizer

import torch

from flair.trainers import ModelTrainer

trainer = ModelTrainer(tagger, corpus, optimizer=torch.optim.AdamW)

# 7. run training with XLM parameters (20 epochs, small LR)

from torch.optim.lr_scheduler import OneCycleLR

trainer.train('resources/taggers/ner-english-ontonotes-large',

learning_rate=5.0e-6,

mini_batch_size=4,

mini_batch_chunk_size=1,

max_epochs=20,

scheduler=OneCycleLR,

embeddings_storage_mode='none',

weight_decay=0.,

)

```
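The `tag_to_bioes="ner"` argument in step 1 refers to the BIOES tagging scheme, in which single-token entities get an `S-` prefix and the last token of a multi-token entity gets an `E-` prefix. The sketch below illustrates that scheme on BIO-tagged input. It is not Flair's implementation: `bio_to_bioes` is a hypothetical helper written for this example, and it assumes well-formed BIO tags.

```python
def bio_to_bioes(tags):
    """Convert a list of well-formed BIO tags into BIOES tags."""
    bioes = []
    for i, tag in enumerate(tags):
        if tag == "O":
            bioes.append(tag)
            continue
        prefix, label = tag.split("-", 1)
        next_tag = tags[i + 1] if i + 1 < len(tags) else "O"
        # The entity continues only if the next tag is an I- tag with the same label.
        continues = next_tag == f"I-{label}"
        if prefix == "B":
            bioes.append(f"B-{label}" if continues else f"S-{label}")
        else:  # prefix == "I"
            bioes.append(f"I-{label}" if continues else f"E-{label}")
    return bioes


# Tags for: On September 1st George won 1 dollar
bio_tags = ["O", "B-DATE", "I-DATE", "B-PERSON", "O", "B-MONEY", "I-MONEY"]
bioes_tags = bio_to_bioes(bio_tags)
print(bioes_tags)
```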

---

### Cite

Please cite the following paper when using this model.

```

@misc{schweter2020flert,

title={FLERT: Document-Level Features for Named Entity Recognition},

author={Stefan Schweter and Alan Akbik},

year={2020},

eprint={2011.06993},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

---

### Issues?

The Flair issue tracker is available [here](https://github.com/flairNLP/flair/issues/).

|

flax-community/clip-rsicd-v2 | flax-community | "2022-04-24T21:03:53Z" | 39,191 | 18 | transformers | [

"transformers",

"pytorch",

"jax",

"clip",

"zero-shot-image-classification",

"vision",

"endpoints_compatible",

"region:us"

] | zero-shot-image-classification | "2022-03-02T23:29:05Z" | ---

tags:

- vision

---

# Model Card: clip-rsicd

## Model Details

This model is a fine-tuned version of [CLIP by OpenAI](https://huggingface.co/openai/clip-vit-base-patch32). It aims to improve zero-shot image classification, text-to-image retrieval and image-to-image retrieval, specifically on remote sensing images.

### Model Date

July 2021

### Model Type

The base model uses a ViT-B/32 Transformer architecture as an image encoder and uses a masked self-attention Transformer as a text encoder. These encoders are trained to maximize the similarity of (image, text) pairs via a contrastive loss.
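To make the contrastive objective concrete, here is a minimal pure-Python sketch of a symmetric contrastive loss over a square similarity matrix, where entry `sim[i][j]` scores image `i` against text `j` and matching pairs sit on the diagonal. It is a simplified illustration rather than the training code used for this model; the function names are invented for this example, and the similarities are assumed to be already scaled by the temperature.

```python
import math


def log_softmax(row):
    """Numerically stable log-softmax of a list of floats."""
    peak = max(row)
    log_sum = peak + math.log(sum(math.exp(x - peak) for x in row))
    return [x - log_sum for x in row]


def contrastive_loss(sim):
    """Average of image-to-text and text-to-image cross-entropy on the diagonal."""
    n = len(sim)
    # Image -> text: each row should put its mass on the diagonal entry.
    image_loss = -sum(log_softmax(sim[i])[i] for i in range(n)) / n
    # Text -> image: same idea over the columns.
    columns = [[sim[i][j] for i in range(n)] for j in range(n)]
    text_loss = -sum(log_softmax(columns[j])[j] for j in range(n)) / n
    return (image_loss + text_loss) / 2


aligned = contrastive_loss([[5.0, 0.0], [0.0, 5.0]])     # matching pairs score highest
misaligned = contrastive_loss([[0.0, 5.0], [5.0, 0.0]])  # matching pairs score lowest
uninformative = contrastive_loss([[0.0, 0.0], [0.0, 0.0]])
print(aligned < uninformative < misaligned)
```

Minimizing this loss pushes each image toward its own caption and away from the other captions in the batch, which is why larger batches give the model more negatives to learn from.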

### Model Version

We release several checkpoints for the `clip-rsicd` model. Refer to [our GitHub repo](https://github.com/arampacha/CLIP-rsicd#evaluation-results) for zero-shot classification performance metrics for each of them.

### Training

To reproduce the fine-tuning procedure, use the released [script](https://github.com/arampacha/CLIP-rsicd/blob/master/run_clip_flax_tv.py).

The model was trained with a batch size of 1024 and the Adafactor optimizer, using linear warmup and decay with a peak learning rate of 1e-4, on one TPU-v3-8.

The full log of the training run can be found on [WandB](https://wandb.ai/wandb/hf-flax-clip-rsicd/runs/2dj1exsw).

### Demo

Check out the model's text-to-image and image-to-image capabilities using [this demo](https://huggingface.co/spaces/sujitpal/clip-rsicd-demo).

### Documents

- [Fine-tuning CLIP on RSICD with HuggingFace and flax/jax on colab using TPU](https://colab.research.google.com/github/arampacha/CLIP-rsicd/blob/master/nbs/Fine_tuning_CLIP_with_HF_on_TPU.ipynb)

### Use with Transformers

```python

from PIL import Image

import requests

from transformers import CLIPProcessor, CLIPModel

model = CLIPModel.from_pretrained("flax-community/clip-rsicd-v2")

processor = CLIPProcessor.from_pretrained("flax-community/clip-rsicd-v2")

url = "https://raw.githubusercontent.com/arampacha/CLIP-rsicd/master/data/stadium_1.jpg"

image = Image.open(requests.get(url, stream=True).raw)

labels = ["residential area", "playground", "stadium", "forest", "airport"]

inputs = processor(text=[f"a photo of a {l}" for l in labels], images=image, return_tensors="pt", padding=True)

outputs = model(**inputs)

logits_per_image = outputs.logits_per_image # this is the image-text similarity score

probs = logits_per_image.softmax(dim=1) # we can take the softmax to get the label probabilities

for l, p in zip(labels, probs[0]):

    print(f"{l:<16} {p:.4f}")

```

[Try it on colab](https://colab.research.google.com/github/arampacha/CLIP-rsicd/blob/master/nbs/clip_rsicd_zero_shot.ipynb)

## Model Use

### Intended Use

The model is intended as a research output for research communities. We hope that this model will enable researchers to better understand and explore zero-shot, arbitrary image classification.

In addition, we can imagine applications in defense and law enforcement, climate change and global warming, and even some consumer applications. A partial list of applications can be found [here](https://github.com/arampacha/CLIP-rsicd#applications). In general we think such models can be useful as digital assistants for humans engaged in searching through large collections of images.

We also hope it can be used for interdisciplinary studies of the potential impact of such models - the CLIP paper includes a discussion of potential downstream impacts to provide an example for this sort of analysis.

#### Primary intended uses

The primary intended users of these models are AI researchers.

We primarily imagine the model will be used by researchers to better understand robustness, generalization, and other capabilities, biases, and constraints of computer vision models.

## Data

The model was trained on publicly available remote sensing image captions datasets. Namely [RSICD](https://github.com/201528014227051/RSICD_optimal), [UCM](https://mega.nz/folder/wCpSzSoS#RXzIlrv--TDt3ENZdKN8JA) and [Sydney](https://mega.nz/folder/pG4yTYYA#4c4buNFLibryZnlujsrwEQ). More information on the datasets used can be found on [our project page](https://github.com/arampacha/CLIP-rsicd#dataset).

## Performance and Limitations

### Performance

| Model-name | k=1 | k=3 | k=5 | k=10 |

| -------------------------------- | ----- | ----- | ----- | ----- |

| original CLIP | 0.572 | 0.745 | 0.837 | 0.939 |

| clip-rsicd-v2 (this model) | **0.883** | **0.968** | **0.982** | **0.998** |
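The card does not say what the `k` columns measure. Assuming they report top-k accuracy for zero-shot classification (an assumption, not something stated here), the metric could be computed as in the sketch below. `top_k_accuracy` and the toy scores are hypothetical and only illustrate the definition.

```python
def top_k_accuracy(scores, true_indices, k):
    """Fraction of examples whose true label is among the k highest-scoring labels."""
    hits = 0
    for row, truth in zip(scores, true_indices):
        # Label indices sorted from highest to lowest score.
        ranked = sorted(range(len(row)), key=lambda j: row[j], reverse=True)
        if truth in ranked[:k]:
            hits += 1
    return hits / len(true_indices)


# Toy per-label scores for three images and their true label indices (made up).
toy_scores = [
    [0.1, 0.7, 0.2],
    [0.5, 0.3, 0.2],
    [0.2, 0.3, 0.5],
]
toy_truth = [1, 1, 0]

top1 = top_k_accuracy(toy_scores, toy_truth, k=1)
top2 = top_k_accuracy(toy_scores, toy_truth, k=2)
top3 = top_k_accuracy(toy_scores, toy_truth, k=3)
print(top1, top2, top3)
```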

### Limitations

The model is fine-tuned on remote sensing image (RSI) data but may retain some of the biases and limitations of the original CLIP model. Refer to the [CLIP model card](https://huggingface.co/openai/clip-vit-base-patch32#limitations) for details.

|

THUDM/cogvlm2-llama3-chat-19B | THUDM | "2024-05-25T13:10:37Z" | 39,135 | 161 | transformers | [

"transformers",

"safetensors",

"text-generation",

"chat",

"cogvlm2",

"conversational",

"custom_code",

"en",

"arxiv:2311.03079",

"license:other",

"autotrain_compatible",

"region:us"

] | text-generation | "2024-05-16T11:51:07Z" | ---

license: other

license_name: cogvlm2

license_link: https://huggingface.co/THUDM/cogvlm2-llama3-chat-19B/blob/main/LICENSE

language:

- en

pipeline_tag: text-generation

tags:

- chat

- cogvlm2

inference: false

---

# CogVLM2

<div align="center">

<img src=https://raw.githubusercontent.com/THUDM/CogVLM2/53d5d5ea1aa8d535edffc0d15e31685bac40f878/resources/logo.svg width="40%"/>

</div>

<p align="center">

👋 <a href="resources/WECHAT.md" target="_blank">Wechat</a> · 💡<a href="http://36.103.203.44:7861/" target="_blank">Online Demo</a> · 🎈<a href="https://github.com/THUDM/CogVLM2" target="_blank">Github Page</a>

</p>

<p align="center">

📍Experience the larger-scale CogVLM model on the <a href="https://open.bigmodel.cn/dev/api#glm-4v">ZhipuAI Open Platform</a>.

</p>

## Model introduction

We launch a new generation of the **CogVLM2** series and open-source two models built on [Meta-Llama-3-8B-Instruct](https://huggingface.co/meta-llama/Meta-Llama-3-8B-Instruct). Compared with the previous generation of open-source CogVLM models, the CogVLM2 series offers the following improvements:

1. Significant improvements in many benchmarks such as `TextVQA`, `DocVQA`.

2. Support **8K** context length.

3. Support image resolution up to **1344 * 1344**.

4. Provide an open source model version that supports both **Chinese and English**.

You can see the details of the CogVLM2 family of open source models in the table below:

| Model name | cogvlm2-llama3-chat-19B | cogvlm2-llama3-chinese-chat-19B |

|------------------|-------------------------------------|-------------------------------------|

| Base Model | Meta-Llama-3-8B-Instruct | Meta-Llama-3-8B-Instruct |

| Language | English | Chinese, English |

| Model size | 19B | 19B |

| Task | Image understanding, dialogue model | Image understanding, dialogue model |

| Text length | 8K | 8K |

| Image resolution | 1344 * 1344 | 1344 * 1344 |

## Benchmark

Our open-source models achieve good results on many benchmarks compared to the previous generation of open-source CogVLM models. Their performance is competitive with some closed-source models, as shown in the table below:

| Model | Open Source | LLM Size | TextVQA | DocVQA | ChartQA | OCRbench | MMMU | MMVet | MMBench |

|--------------------------------|-------------|----------|----------|----------|----------|----------|----------|----------|----------|

| CogVLM1.1 | ✅ | 7B | 69.7 | - | 68.3 | 590 | 37.3 | 52.0 | 65.8 |

| LLaVA-1.5 | ✅ | 13B | 61.3 | - | - | 337 | 37.0 | 35.4 | 67.7 |

| Mini-Gemini | ✅ | 34B | 74.1 | - | - | - | 48.0 | 59.3 | 80.6 |

| LLaVA-NeXT-LLaMA3 | ✅ | 8B | - | 78.2 | 69.5 | - | 41.7 | - | 72.1 |

| LLaVA-NeXT-110B | ✅ | 110B | - | 85.7 | 79.7 | - | 49.1 | - | 80.5 |

| InternVL-1.5 | ✅ | 20B | 80.6 | 90.9 | **83.8** | 720 | 46.8 | 55.4 | **82.3** |

| QwenVL-Plus | ❌ | - | 78.9 | 91.4 | 78.1 | 726 | 51.4 | 55.7 | 67.0 |

| Claude3-Opus | ❌ | - | - | 89.3 | 80.8 | 694 | **59.4** | 51.7 | 63.3 |

| Gemini Pro 1.5 | ❌ | - | 73.5 | 86.5 | 81.3 | - | 58.5 | - | - |

| GPT-4V | ❌ | - | 78.0 | 88.4 | 78.5 | 656 | 56.8 | **67.7** | 75.0 |

| CogVLM2-LLaMA3 (Ours) | ✅ | 8B | 84.2 | **92.3** | 81.0 | 756 | 44.3 | 60.4 | 80.5 |

| CogVLM2-LLaMA3-Chinese (Ours) | ✅ | 8B | **85.0** | 88.4 | 74.7 | **780** | 42.8 | 60.5 | 78.9 |

All results were obtained without using any external OCR tools ("pixel only").

## Quick Start

Here is a simple example of how to chat with the CogVLM2 model. For more use cases, see our [GitHub](https://github.com/THUDM/CogVLM2) repository.

```python

import torch

from PIL import Image

from transformers import AutoModelForCausalLM, AutoTokenizer

MODEL_PATH = "THUDM/cogvlm2-llama3-chat-19B"

DEVICE = 'cuda' if torch.cuda.is_available() else 'cpu'

TORCH_TYPE = torch.bfloat16 if torch.cuda.is_available() and torch.cuda.get_device_capability()[0] >= 8 else torch.float16

tokenizer = AutoTokenizer.from_pretrained(

MODEL_PATH,

trust_remote_code=True

)

model = AutoModelForCausalLM.from_pretrained(

MODEL_PATH,

torch_dtype=TORCH_TYPE,

trust_remote_code=True,

).to(DEVICE).eval()

text_only_template = "A chat between a curious user and an artificial intelligence assistant. The assistant gives helpful, detailed, and polite answers to the user's questions. USER: {} ASSISTANT:"

while True:
    image_path = input("image path >>>>> ")
    if image_path == '':
        print('You did not enter image path, the following will be a plain text conversation.')
        image = None
        text_only_first_query = True
    else:
        image = Image.open(image_path).convert('RGB')

    history = []

    while True:
        query = input("Human:")
        if query == "clear":
            break

        if image is None:
            if text_only_first_query:
                query = text_only_template.format(query)
                text_only_first_query = False
            else:
                old_prompt = ''
                for _, (old_query, response) in enumerate(history):
                    old_prompt += old_query + " " + response + "\n"
                query = old_prompt + "USER: {} ASSISTANT:".format(query)

        if image is None:
            input_by_model = model.build_conversation_input_ids(
                tokenizer,
                query=query,
                history=history,
                template_version='chat'
            )
        else:
            input_by_model = model.build_conversation_input_ids(
                tokenizer,
                query=query,
                history=history,
                images=[image],
                template_version='chat'
            )

        inputs = {
            'input_ids': input_by_model['input_ids'].unsqueeze(0).to(DEVICE),
            'token_type_ids': input_by_model['token_type_ids'].unsqueeze(0).to(DEVICE),
            'attention_mask': input_by_model['attention_mask'].unsqueeze(0).to(DEVICE),
            'images': [[input_by_model['images'][0].to(DEVICE).to(TORCH_TYPE)]] if image is not None else None,
        }

        gen_kwargs = {
            "max_new_tokens": 2048,
            "pad_token_id": 128002,
        }

        with torch.no_grad():
            outputs = model.generate(**inputs, **gen_kwargs)
            outputs = outputs[:, inputs['input_ids'].shape[1]:]
            response = tokenizer.decode(outputs[0])
            response = response.split("<|end_of_text|>")[0]
            print("\nCogVLM2:", response)

        history.append((query, response))

```
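The text-only branch of the loop above builds its prompt from a fixed preamble plus `USER: ... ASSISTANT:` turns. The sketch below isolates that prompt assembly as a small function that can be inspected without loading the model. `build_text_only_prompt` is a hypothetical helper, not part of the CogVLM2 code, and unlike the loop above it assumes the history stores only each turn's raw user message and reply.

```python
# Preamble copied from the text-only template used in the example above.
SYSTEM_PREAMBLE = (
    "A chat between a curious user and an artificial intelligence assistant. "
    "The assistant gives helpful, detailed, and polite answers to the user's questions."
)


def build_text_only_prompt(turns, user_message):
    """Assemble a text-only prompt from earlier (user, assistant) turns and a new message."""
    prompt = SYSTEM_PREAMBLE + " "
    for past_user, past_reply in turns:
        prompt += f"USER: {past_user} ASSISTANT: {past_reply}\n"
    prompt += f"USER: {user_message} ASSISTANT:"
    return prompt


first_prompt = build_text_only_prompt([], "Hi")
second_prompt = build_text_only_prompt([("Hi", "Hello!")], "How are you?")
print(first_prompt)
print(second_prompt)
```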

## License

This model is released under the CogVLM2 [LICENSE](LICENSE). For models built with Meta Llama 3, please also adhere to the [LLAMA3_LICENSE](LLAMA3_LICENSE).

## Citation

If you find our work helpful, please consider citing the following paper:

```

@misc{wang2023cogvlm,

title={CogVLM: Visual Expert for Pretrained Language Models},

author={Weihan Wang and Qingsong Lv and Wenmeng Yu and Wenyi Hong and Ji Qi and Yan Wang and Junhui Ji and Zhuoyi Yang and Lei Zhao and Xixuan Song and Jiazheng Xu and Bin Xu and Juanzi Li and Yuxiao Dong and Ming Ding and Jie Tang},

year={2023},

eprint={2311.03079},

archivePrefix={arXiv},

primaryClass={cs.CV}

}

```

|

rubra-ai/Meta-Llama-3-70B-Instruct-GGUF | rubra-ai | "2024-07-01T06:20:33Z" | 39,052 | 3 | null | [

"gguf",

"function-calling",

"tool-calling",

"agentic",

"rubra",

"conversational",

"en",

"license:llama3",

"model-index",

"region:us"

] | null | "2024-06-14T16:26:00Z" | ---

license: llama3

model-index:

- name: Rubra-Meta-Llama-3-70B

results:

- task:

type: text-generation

dataset:

type: MMLU

name: MMLU

metrics:

- type: 5-shot

value: 75.9

verified: false

- task:

type: text-generation

dataset:

type: GPQA

name: GPQA

metrics:

- type: 0-shot

value: 33.93

verified: false

- task:

type: text-generation

dataset:

type: GSM-8K

name: GSM-8K

metrics:

- type: 8-shot, CoT

value: 82.26

verified: false

- task:

type: text-generation

dataset:

type: MATH

name: MATH

metrics:

- type: 4-shot, CoT

value: 34.24

verified: false

- task:

type: text-generation

dataset:

type: MT-bench

name: MT-bench

metrics:

- type: GPT-4 as Judge

value: 8.36

verified: false

tags:

- function-calling

- tool-calling

- agentic

- rubra

- conversational

language:

- en

---

# Rubra Llama-3 70B GGUF

Original model: [rubra-ai/Meta-Llama-3-70B-Instruct](https://huggingface.co/rubra-ai/Meta-Llama-3-70B-Instruct)

## Model description

The model is the result of further post-training [meta-llama/Meta-Llama-3-70B](https://huggingface.co/meta-llama/Meta-Llama-3-70B). This model is designed for high performance in various instruction-following tasks and complex interactions, including multi-turn function calling and detailed conversations.

## Training Data

The model underwent additional training on a proprietary dataset encompassing diverse instruction-following, chat, and function calling data. This post-training process enhances the model's ability to integrate tools and manage complex interaction scenarios effectively.

## How to use

Refer to https://docs.rubra.ai/inference/llamacpp for usage. Feel free to ask questions or open issues in our GitHub repo: https://github.com/rubra-ai/rubra

## Limitations and Bias

While the model performs well on a wide range of tasks, it may still produce biased or incorrect outputs. Users should exercise caution and critical judgment when using the model in sensitive or high-stakes applications. The model's outputs are influenced by the data it was trained on, which may contain inherent biases.

## Ethical Considerations

Users should ensure that the deployment of this model adheres to ethical guidelines and consider the potential societal impact of the generated text. Misuse of the model for generating harmful or misleading content is strongly discouraged.

## Acknowledgements

We would like to thank Meta for the model.

## Contact Information

For questions or comments about the model, please reach out to [the rubra team](mailto:rubra@acorn.io).

## Citation

If you use this work, please cite it as:

```

@misc {rubra_ai_2024,

author = { Sanjay Nadhavajhala and Yingbei Tong },

title = { Rubra-Meta-Llama-3-70B-Instruct },

year = 2024,

url = { https://huggingface.co/rubra-ai/Meta-Llama-3-70B-Instruct },

doi = { 10.57967/hf/2643 },

publisher = { Hugging Face }

}

``` |

mradermacher/L3-Blackfall-Summanus-v0.1-15B-GGUF | mradermacher | "2024-07-01T16:51:37Z" | 39,022 | 2 | transformers | [

"transformers",

"gguf",

"mergekit",

"merge",

"not-for-all-audiences",

"en",

"base_model:kromeurus/L3-Blackfall-Summanus-v0.1-15B",

"license:cc-by-sa-4.0",

"endpoints_compatible",

"region:us"

] | null | "2024-07-01T05:50:18Z" | ---

base_model: kromeurus/L3-Blackfall-Summanus-v0.1-15B

language:

- en

library_name: transformers

license: cc-by-sa-4.0

quantized_by: mradermacher

tags:

- mergekit

- merge

- not-for-all-audiences

---

## About

<!-- ### quantize_version: 2 -->

<!-- ### output_tensor_quantised: 1 -->

<!-- ### convert_type: hf -->

<!-- ### vocab_type: -->

<!-- ### tags: -->

static quants of https://huggingface.co/kromeurus/L3-Blackfall-Summanus-v0.1-15B

<!-- provided-files -->

weighted/imatrix quants are available at https://huggingface.co/mradermacher/L3-Blackfall-Summanus-v0.1-15B-i1-GGUF

## Usage

If you are unsure how to use GGUF files, refer to one of [TheBloke's

READMEs](https://huggingface.co/TheBloke/KafkaLM-70B-German-V0.1-GGUF) for

more details, including on how to concatenate multi-part files.

## Provided Quants

(sorted by size, not necessarily quality. IQ-quants are often preferable over similarly sized non-IQ quants)

| Link | Type | Size/GB | Notes |

|:-----|:-----|--------:|:------|

| [GGUF](https://huggingface.co/mradermacher/L3-Blackfall-Summanus-v0.1-15B-GGUF/resolve/main/L3-Blackfall-Summanus-v0.1-15B.Q2_K.gguf) | Q2_K | 5.8 | |

| [GGUF](https://huggingface.co/mradermacher/L3-Blackfall-Summanus-v0.1-15B-GGUF/resolve/main/L3-Blackfall-Summanus-v0.1-15B.IQ3_XS.gguf) | IQ3_XS | 6.5 | |

| [GGUF](https://huggingface.co/mradermacher/L3-Blackfall-Summanus-v0.1-15B-GGUF/resolve/main/L3-Blackfall-Summanus-v0.1-15B.Q3_K_S.gguf) | Q3_K_S | 6.8 | |

| [GGUF](https://huggingface.co/mradermacher/L3-Blackfall-Summanus-v0.1-15B-GGUF/resolve/main/L3-Blackfall-Summanus-v0.1-15B.IQ3_S.gguf) | IQ3_S | 6.8 | beats Q3_K* |

| [GGUF](https://huggingface.co/mradermacher/L3-Blackfall-Summanus-v0.1-15B-GGUF/resolve/main/L3-Blackfall-Summanus-v0.1-15B.IQ3_M.gguf) | IQ3_M | 7.0 | |

| [GGUF](https://huggingface.co/mradermacher/L3-Blackfall-Summanus-v0.1-15B-GGUF/resolve/main/L3-Blackfall-Summanus-v0.1-15B.Q3_K_M.gguf) | Q3_K_M | 7.5 | lower quality |

| [GGUF](https://huggingface.co/mradermacher/L3-Blackfall-Summanus-v0.1-15B-GGUF/resolve/main/L3-Blackfall-Summanus-v0.1-15B.Q3_K_L.gguf) | Q3_K_L | 8.1 | |

| [GGUF](https://huggingface.co/mradermacher/L3-Blackfall-Summanus-v0.1-15B-GGUF/resolve/main/L3-Blackfall-Summanus-v0.1-15B.IQ4_XS.gguf) | IQ4_XS | 8.4 | |

| [GGUF](https://huggingface.co/mradermacher/L3-Blackfall-Summanus-v0.1-15B-GGUF/resolve/main/L3-Blackfall-Summanus-v0.1-15B.Q4_K_S.gguf) | Q4_K_S | 8.7 | fast, recommended |

| [GGUF](https://huggingface.co/mradermacher/L3-Blackfall-Summanus-v0.1-15B-GGUF/resolve/main/L3-Blackfall-Summanus-v0.1-15B.Q4_K_M.gguf) | Q4_K_M | 9.2 | fast, recommended |

| [GGUF](https://huggingface.co/mradermacher/L3-Blackfall-Summanus-v0.1-15B-GGUF/resolve/main/L3-Blackfall-Summanus-v0.1-15B.Q5_K_S.gguf) | Q5_K_S | 10.5 | |

| [GGUF](https://huggingface.co/mradermacher/L3-Blackfall-Summanus-v0.1-15B-GGUF/resolve/main/L3-Blackfall-Summanus-v0.1-15B.Q5_K_M.gguf) | Q5_K_M | 10.8 | |

| [GGUF](https://huggingface.co/mradermacher/L3-Blackfall-Summanus-v0.1-15B-GGUF/resolve/main/L3-Blackfall-Summanus-v0.1-15B.Q6_K.gguf) | Q6_K | 12.4 | very good quality |

| [GGUF](https://huggingface.co/mradermacher/L3-Blackfall-Summanus-v0.1-15B-GGUF/resolve/main/L3-Blackfall-Summanus-v0.1-15B.Q8_0.gguf) | Q8_0 | 16.1 | fast, best quality |
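
The download links in the table above all follow the same pattern, so a link for a given quant type can be assembled programmatically. The following is a minimal sketch, assuming that pattern (read off the table, not taken from any official API); the helper name `quant_url` is hypothetical.

```python
from urllib.parse import quote

# Base host used by every link in the table above.
BASE = "https://huggingface.co"


def quant_url(repo_id: str, model_name: str, quant_type: str) -> str:
    """Build a direct-download URL for one quant file.

    Assumes the file is named "<model_name>.<quant_type>.gguf" and lives on
    the "main" branch, as the links in the table suggest.
    """
    filename = f"{model_name}.{quant_type}.gguf"
    return f"{BASE}/{repo_id}/resolve/main/{quote(filename)}"


url = quant_url(
    "mradermacher/L3-Blackfall-Summanus-v0.1-15B-GGUF",
    "L3-Blackfall-Summanus-v0.1-15B",
    "Q4_K_M",
)
print(url)
```

For the arguments shown, the result should match the Q4_K_M link in the table. Other quant types only need a different third argument.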

[Figure: graph by ikawrakow comparing some lower-quality quant types (lower is better)]

And here are Artefact2's thoughts on the matter:

https://gist.github.com/Artefact2/b5f810600771265fc1e39442288e8ec9

## FAQ / Model Request

See https://huggingface.co/mradermacher/model_requests for some answers to

questions you might have and/or if you want some other model quantized.

## Thanks

I thank my company, [nethype GmbH](https://www.nethype.de/), for letting

me use its servers and providing upgrades to my workstation to enable

this work in my free time.

<!-- end -->

|

sentence-transformers/paraphrase-xlm-r-multilingual-v1 | sentence-transformers | "2024-03-27T12:22:07Z" | 38,957 | 60 | sentence-transformers | [

"sentence-transformers",

"pytorch",

"tf",

"safetensors",

"xlm-roberta",

"feature-extraction",

"sentence-similarity",

"transformers",

"arxiv:1908.10084",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | sentence-similarity | "2022-03-02T23:29:05Z" | ---

license: apache-2.0

library_name: sentence-transformers

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

- transformers

pipeline_tag: sentence-similarity

---

# sentence-transformers/paraphrase-xlm-r-multilingual-v1

This is a [sentence-transformers](https://www.SBERT.net) model: it maps sentences and paragraphs to a 768-dimensional dense vector space and can be used for tasks like clustering or semantic search.

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('sentence-transformers/paraphrase-xlm-r-multilingual-v1')

embeddings = model.encode(sentences)

print(embeddings)

```
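For semantic search, the embeddings are typically compared with cosine similarity. The following is a minimal, dependency-free sketch of that comparison. The vectors are toy 3-dimensional stand-ins (real embeddings from this model would have 768 dimensions), and the helper names `cosine_similarity` and `rank_by_similarity` are illustrative, not part of the sentence-transformers API.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # define similarity with a zero vector as 0
    return dot / (norm_a * norm_b)


def rank_by_similarity(query, corpus):
    """Return (index, score) pairs for corpus vectors, best match first."""
    scores = [(i, cosine_similarity(query, vec)) for i, vec in enumerate(corpus)]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)


# Toy vectors standing in for sentence embeddings.
query = [1.0, 0.0, 1.0]
corpus = [[0.0, 1.0, 0.0], [2.0, 0.0, 2.0], [1.0, 1.0, 0.0]]

ranking = rank_by_similarity(query, corpus)
print(ranking[0][0])  # index of the closest corpus vector
```

Cosine similarity ignores vector length, which is why the second corpus vector (a scaled copy of the query) ranks first.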

## Usage (HuggingFace Transformers)

Without [sentence-transformers](https://www.SBERT.net), you can use the model like this: first, pass your input through the transformer model, then apply the right pooling operation on top of the contextualized word embeddings.

```python

from transformers import AutoTokenizer, AutoModel
import torch


# Mean pooling: take the attention mask into account for correct averaging
def mean_pooling(model_output, attention_mask):
    token_embeddings = model_output[0]  # First element of model_output contains all token embeddings
    input_mask_expanded = attention_mask.unsqueeze(-1).expand(token_embeddings.size()).float()
    return torch.sum(token_embeddings * input_mask_expanded, 1) / torch.clamp(input_mask_expanded.sum(1), min=1e-9)


# Sentences we want sentence embeddings for
sentences = ['This is an example sentence', 'Each sentence is converted']

# Load model from HuggingFace Hub
tokenizer = AutoTokenizer.from_pretrained('sentence-transformers/paraphrase-xlm-r-multilingual-v1')
model = AutoModel.from_pretrained('sentence-transformers/paraphrase-xlm-r-multilingual-v1')

# Tokenize sentences
encoded_input = tokenizer(sentences, padding=True, truncation=True, return_tensors='pt')

# Compute token embeddings (no gradients needed for inference)
with torch.no_grad():
    model_output = model(**encoded_input)

# Perform pooling. In this case, mean pooling.
sentence_embeddings = mean_pooling(model_output, encoded_input['attention_mask'])

print("Sentence embeddings:")
print(sentence_embeddings)

```
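To see why the attention mask matters in `mean_pooling`, here is a small worked example in plain Python. It is only a sketch of the same arithmetic for a single sentence; the function name `masked_mean` and the toy numbers are made up for illustration.

```python
def masked_mean(token_embeddings, attention_mask):
    """Average token vectors, counting only positions where the mask is 1."""
    dim = len(token_embeddings[0])
    totals = [0.0] * dim
    for vec, keep in zip(token_embeddings, attention_mask):
        if keep:
            for j, value in enumerate(vec):
                totals[j] += value
    # Guard against division by zero, like the clamp in mean_pooling.
    count = max(sum(attention_mask), 1e-9)
    return [t / count for t in totals]


# Two real tokens followed by one padding token with arbitrary values.
tokens = [[1.0, 2.0], [3.0, 4.0], [100.0, 100.0]]
mask = [1, 1, 0]

pooled = masked_mean(tokens, mask)
print(pooled)  # [2.0, 3.0]
```

The padding vector is excluded from both the sum and the count, so sentences of different lengths in a padded batch are averaged over their real tokens only.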

## Evaluation Results

For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name=sentence-transformers/paraphrase-xlm-r-multilingual-v1)

## Full Model Architecture

```

SentenceTransformer(
  (0): Transformer({'max_seq_length': 128, 'do_lower_case': False}) with Transformer model: XLMRobertaModel
  (1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})
)

```

## Citing & Authors

This model was trained by [sentence-transformers](https://www.sbert.net/).

If you find this model helpful, feel free to cite our publication [Sentence-BERT: Sentence Embeddings using Siamese BERT-Networks](https://arxiv.org/abs/1908.10084):

```bibtex