modelId (string, 6 to 107 chars) | label (list) | readme (string, 0 to 56.2k chars) | readme_len (int64, 0 to 56.2k) |
|---|---|---|---|

l3cube-pune/MarathiSentiment | [

"Negative",

"Neutral",

"Positive"

] | ---

language: mr

tags:

- albert

license: cc-by-4.0

datasets:

- L3CubeMahaSent

widget:

- text: "I like you. </s></s> I love you."

---

## MarathiSentiment

MarathiSentiment is an IndicBERT (ai4bharat/indic-bert) model fine-tuned on L3CubeMahaSent, a Marathi tweet-based sentiment analysis dataset.

[dataset link](https:... | 888 |

erst/xlm-roberta-base-finetuned-nace | [

"0111",

"0112",

"0113",

"0114",

"0115",

"0116",

"0119",

"0121",

"0122",

"0123",

"0124",

"0125",

"0126",

"0127",

"0128",

"0129",

"0130",

"0141",

"0142",

"0143",

"0144",

"0145",

"0146",

"0147",

"0149",

"0150",

"0161",

"0162",

"0163",

"0164",

"0170",

"0210"... | # Classifying Text into NACE Codes

This model is [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) fine-tuned to classify descriptions of activities into [NACE Rev. 2](https://ec.europa.eu/eurostat/web/nace-rev2) codes.

## Data

The data used to fine-tune the model consist of 2.5 million descriptions of act... | 1,049 |

khalidalt/DeBERTa-v3-large-mnli | [

"contradiction",

"entailment",

"neutral"

] | ---

language:

- en

tags:

- text-classification

- zero-shot-classification

metrics:

- accuracy

widget:

- text: "The Movie have been criticized for the story. However, I think it is a great movie. [SEP] I liked the movie."

---

# DeBERTa-v3-large-mnli

## Model description

This model was trained on the Multi-... | 2,132 |
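The widget above feeds the model a premise and a hypothesis joined by `[SEP]`; an MNLI classifier returns one logit per class, and a softmax turns them into probabilities over contradiction, entailment and neutral. The sketch below shows only that post-processing step. It assumes the `id2label` order follows the label column (in practice it should be read from the model's config), and the logits are illustrative values, not real model output.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def nli_probabilities(logits, id2label):
    """Map raw NLI logits to a {label: probability} dict."""
    probs = softmax(logits)
    return {id2label[i]: p for i, p in enumerate(probs)}

# Assumed mapping; read the real one from the model config before relying on it.
id2label = {0: "contradiction", 1: "entailment", 2: "neutral"}

# Illustrative logits for a pair like the widget example
# "... However, I think it is a great movie. [SEP] I liked the movie."
logits = [-2.1, 3.4, 0.2]
probs = nli_probabilities(logits, id2label)
prediction = max(probs, key=probs.get)
print(prediction)  # entailment
```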

transformersbook/distilbert-base-uncased-finetuned-emotion | [

"sadness",

"joy",

"love",

"anger",

"fear",

"surprise"

] | ---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- emotion

metrics:

- accuracy

- f1

model-index:

- name: distilbert-base-uncased-finetuned-emotion

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: emotion

type: emotion

args: default... | 2,137 |

sismetanin/xlm_roberta_base-ru-sentiment-rusentiment | [

"LABEL_0",

"LABEL_1",

"LABEL_2",

"LABEL_3",

"LABEL_4"

] | ---

language:

- ru

tags:

- sentiment analysis

- Russian

---

## XLM-RoBERTa-Base-ru-sentiment-RuSentiment

XLM-RoBERTa-Base-ru-sentiment-RuSentiment is an [XLM-RoBERTa-Base](https://huggingface.co/xlm-roberta-base) model fine-tuned on the [RuSentiment dataset](https://github.com/text-machine-lab/rusentiment) of general-dom... | 6,346 |

tals/albert-base-vitaminc-fever | [

"NOT ENOUGH INFO",

"REFUTES",

"SUPPORTS"

] | ---

language: en

datasets:

- fever

- glue

- tals/vitaminc

---

# Details

Model used in [Get Your Vitamin C! Robust Fact Verification with Contrastive Evidence](https://aclanthology.org/2021.naacl-main.52/) (Schuster et al., NAACL 2021).

For more details see: https://github.com/TalSchuster/VitaminC

When using this m... | 2,357 |

nanopass/distilbert-base-uncased-emotion-2 | [

"anger",

"fear",

"joy",

"love",

"sadness",

"surprise"

] | ---

language:

- en

thumbnail: https://avatars3.githubusercontent.com/u/32437151?s=460&u=4ec59abc8d21d5feea3dab323d23a5860e6996a4&v=4

tags:

- text-classification

- emotion

- pytorch

license: apache-2.0

datasets:

- emotion

metrics:

- Accuracy, F1 Score

---

# Distilbert-base-uncased-emotion

## Model description:

[Distil... | 2,898 |

DTAI-KULeuven/robbertje-merged-dutch-sentiment | [

"Positive",

"Negative"

] | ---

language: nl

license: mit

datasets:

- dbrd

model-index:

- name: robbertje-merged-dutch-sentiment

results:

- task:

type: text-classification

name: Text Classification

dataset:

name: dbrd

type: sentiment-analysis

split: test

metrics:

- name: Accuracy

type: accuracy

... | 4,457 |

beomi/beep-KcELECTRA-base-hate | [

"hate",

"none",

"offensive"

] | Entry not found | 15 |

sileod/roberta-base-mnli | [

"LABEL_0",

"LABEL_1",

"LABEL_2"

] | ---

license: mit

tags:

- generated_from_trainer

datasets:

- multi_nli

metrics:

- accuracy

model-index:

- name: roberta-base-mnli

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: multi_nli

type: multi_nli

args: default

metrics:

- name: Accu... | 1,733 |

ICFNext/EYY-categorisation-1.0 | [

"arts and culture",

"climate change",

"democratic values",

"digital",

"education",

"employment",

"environmental sustainability",

"european learning mobility",

"health and well-being",

"inclusion",

"n/a",

"policy dialogues",

"renewable energy",

"research and innovation",

"sports",

"stud... | Entry not found | 15 |

avichr/hebEMO_anticipation | null | # HebEMO - Emotion Recognition Model for Modern Hebrew

<img align="right" src="https://github.com/avichaychriqui/HeBERT/blob/main/data/heBERT_logo.png?raw=true" width="250">

HebEMO is a tool that detects polarity and extracts emotions from modern Hebrew User-Generated Content (UGC), which was trained on a unique Covid... | 5,444 |

textattack/bert-base-uncased-WNLI | null | ## TextAttack Model Card

This `bert-base-uncased` model was fine-tuned for sequence classification using TextAttack

and the glue dataset loaded using the `nlp` library. The model was fine-tuned

for 5 epochs with a batch size of 64, a learning

rate of 5e-05, and a maximum sequence length of 256.

Since this was a cla... | 622 |
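The card lists the fine-tuning hyperparameters (5 epochs, batch size 64, learning rate 5e-05, maximum sequence length 256). As a rough sketch, they can be captured in a config object and used to estimate the number of optimizer steps. This assumes one optimizer step per batch with no gradient accumulation, and the training-set size of 1,000 is a hypothetical figure, not the real WNLI count. `FineTuneConfig`, `total_optimizer_steps` and `truncate` are illustrative names, not part of TextAttack.

```python
import math
from dataclasses import dataclass

@dataclass(frozen=True)
class FineTuneConfig:
    """Hyperparameters reported in the card (hypothetical container class)."""
    epochs: int = 5
    batch_size: int = 64
    learning_rate: float = 5e-05
    max_seq_length: int = 256

def total_optimizer_steps(num_examples: int, cfg: FineTuneConfig) -> int:
    """Batches per epoch (a final partial batch counts) times epochs,
    assuming one optimizer step per batch."""
    return math.ceil(num_examples / cfg.batch_size) * cfg.epochs

def truncate(token_ids, cfg: FineTuneConfig):
    """Keep at most max_seq_length token ids (simple right truncation)."""
    return token_ids[: cfg.max_seq_length]

cfg = FineTuneConfig()
# 1,000 is a hypothetical dataset size: ceil(1000 / 64) = 16 batches, times 5 epochs.
print(total_optimizer_steps(1000, cfg))  # 80
```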

tr3cks/2LabelsSentimentAnalysisSpanish | null | Entry not found | 15 |

IMSyPP/hate_speech_it | [

"LABEL_0",

"LABEL_1",

"LABEL_2",

"LABEL_3"

] | ---

widget:

- text: "Ciao, mi chiamo Marcantonio, sono di Roma. Studio informatica all'Università di Roma."

language:

- it

license: mit

---

# Hate Speech Classifier for Social Media Content in Italian Language

A monolingual model for hate speech classification of social media content in Italian language. The model... | 797 |

textattack/roberta-base-rotten-tomatoes | null | ## TextAttack Model Card

This `roberta-base` model was fine-tuned for sequence classification using TextAttack

and the rotten_tomatoes dataset loaded using the `nlp` library. The model was fine-tuned

for 10 epochs with a batch size of 64, a learning

rate of 2e-05, and a maximum sequence length of ... | 669 |

nbroad/bigbird-base-health-fact | [

"false",

"mixture",

"true",

"unproven"

] | ---

language:

- en

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- health_fact

model-index:

- name: bigbird-base-health-fact

results:

- task:

type: text-classification

name: Text Classification

dataset:

name: health_fact

type: health_fact

split: test

metrics:

... | 12,627 |

Gadmz/censor-testing-performance | [

"LABEL_0",

"LABEL_1",

"LABEL_2",

"LABEL_3",

"LABEL_4",

"LABEL_5",

"LABEL_6"

] | Entry not found | 15 |

sismetanin/xlm_roberta_large-ru-sentiment-rusentiment | [

"LABEL_0",

"LABEL_1",

"LABEL_2",

"LABEL_3",

"LABEL_4"

] | ---

language:

- ru

tags:

- sentiment analysis

- Russian

---

## XLM-RoBERTa-Large-ru-sentiment-RuSentiment

XLM-RoBERTa-Large-ru-sentiment-RuSentiment is an [XLM-RoBERTa-Large](https://huggingface.co/xlm-roberta-large) model fine-tuned on the [RuSentiment dataset](https://github.com/text-machine-lab/rusentiment) of general... | 6,350 |

deepset/bert-base-german-cased-sentiment-Germeval17 | [

"negative",

"neutral",

"positive"

] | Entry not found | 15 |

textattack/albert-base-v2-SST-2 | null | ## TextAttack Model Card

This `albert-base-v2` model was fine-tuned for sequence classification using TextAttack

and the glue dataset loaded using the `nlp` library. The model was fine-tuned

for 5 epochs with a batch size of 32, a learning

rate of 3e-05, and a maximum sequence length of 64.

Since this was a classif... | 619 |

IDEA-CCNL/Taiyi-CLIP-Roberta-large-326M-Chinese | [

"LABEL_0",

"LABEL_1",

"LABEL_10",

"LABEL_100",

"LABEL_101",

"LABEL_102",

"LABEL_103",

"LABEL_104",

"LABEL_105",

"LABEL_106",

"LABEL_107",

"LABEL_108",

"LABEL_109",

"LABEL_11",

"LABEL_110",

"LABEL_111",

"LABEL_112",

"LABEL_113",

"LABEL_114",

"LABEL_115",

"LABEL_116",

"LABEL_... | ---

license: apache-2.0

# inference: false

# pipeline_tag: zero-shot-image-classification

pipeline_tag: feature-extraction

# inference:

# parameters:

tags:

- clip

- zh

- image-text

- feature-extraction

---

# Model Details

This model is a Chinese CLIP model trained on [Noah-Wukong Dataset](https://wukong-dataset.gi... | 3,484 |

salesken/paraphrase_diversity_ranker | null | ---

tags: salesken

license: apache-2.0

inference: false

---

We have trained a model to evaluate whether a paraphrase is a semantic variation of the input query or just a surface-level variation. Data augmentation by adding surface-level variations does not add much value to NLP model training. If the approach to parap... | 4,896 |

MMG/xlm-roberta-base-sa-spanish | [

"Negative",

"Neutral",

"Positive"

] | Entry not found | 15 |

Mithil/86RecallRoberta | null | ---

license: afl-3.0

---

| 25 |

avichr/hebEMO_fear | null | # HebEMO - Emotion Recognition Model for Modern Hebrew

<img align="right" src="https://github.com/avichaychriqui/HeBERT/blob/main/data/heBERT_logo.png?raw=true" width="250">

HebEMO is a tool that detects polarity and extracts emotions from modern Hebrew User-Generated Content (UGC), which was trained on a unique Covid... | 5,444 |

SkolkovoInstitute/roberta_toxicity_classifier_v1 | null | This model is a clone of [SkolkovoInstitute/roberta_toxicity_classifier](https://huggingface.co/SkolkovoInstitute/roberta_toxicity_classifier) trained on a disjoint dataset.

While `roberta_toxicity_classifier` is used for evaluation of detoxification algorithms, `roberta_toxicity_classifier_v1` can be used within the... | 446 |

elozano/bert-base-cased-fake-news | [

"Fake",

"Real"

] | Entry not found | 15 |

emrecan/distilbert-base-turkish-cased-allnli_tr | [

"contradiction",

"entailment",

"neutral"

] | ---

language:

- tr

tags:

- zero-shot-classification

- nli

- pytorch

pipeline_tag: zero-shot-classification

license: apache-2.0

datasets:

- nli_tr

metrics:

- accuracy

widget:

- text: "Dolar yükselmeye devam ediyor."

candidate_labels: "ekonomi, siyaset, spor"

- text: "Senaryo çok saçmaydı, beğendim diyemem."

candidat... | 7,089 |
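The widget pairs a Turkish sentence with `candidate_labels`. NLI-based zero-shot classification turns each candidate label into a hypothesis, scores every (premise, hypothesis) pair with the NLI model, and, in single-label mode, applies a softmax over the entailment logits across labels. The sketch below covers only the hypothesis construction and scoring. The Turkish template ("This text is about {}.") is a hypothetical choice, and the entailment logits are illustrative, not model output.

```python
import math

def build_hypotheses(candidate_labels, template="This example is {}."):
    """Turn each candidate label into an NLI hypothesis."""
    return [template.format(label) for label in candidate_labels]

def zero_shot_scores(entailment_logits, candidate_labels):
    """Single-label mode: softmax over the entailment logits of all
    (premise, hypothesis) pairs, so scores across labels sum to 1."""
    m = max(entailment_logits)
    exps = [math.exp(x - m) for x in entailment_logits]
    total = sum(exps)
    scores = {label: e / total for label, e in zip(candidate_labels, exps)}
    # Highest-scoring label first.
    return dict(sorted(scores.items(), key=lambda kv: kv[1], reverse=True))

premise = "Dolar yükselmeye devam ediyor."
labels = [label.strip() for label in "ekonomi, siyaset, spor".split(",")]
# Hypothetical Turkish template: "This text is about {}."
hypotheses = build_hypotheses(labels, template="Bu metin {} hakkındadır.")
# Illustrative entailment logits, one per (premise, hypothesis) pair.
scores = zero_shot_scores([4.0, 1.0, -2.0], labels)
print(next(iter(scores)))  # ekonomi
```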

Ahmedgr/DistilBert_Fine_tune_QuestionVsAnswer | [

"answer",

"question"

] | ---

tags:

- generated_from_trainer

model-index:

- name: DistilBert_Fine_tune_QuestionVsAnswer

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# DistilBert_Fine_t... | 943 |

qanastek/XLMRoberta-Alexa-Intents-Classification | [

"audio_volume_other",

"play_music",

"iot_hue_lighton",

"general_greet",

"calendar_set",

"audio_volume_down",

"social_query",

"audio_volume_mute",

"iot_wemo_on",

"iot_hue_lightup",

"audio_volume_up",

"iot_coffee",

"takeaway_query",

"qa_maths",

"play_game",

"cooking_query",

"iot_hue_li... | ---

tags:

- Transformers

- text-classification

- intent-classification

- multi-class-classification

- natural-language-understanding

languages:

- af-ZA

- am-ET

- ar-SA

- az-AZ

- bn-BD

- cy-GB

- da-DK

- de-DE

- el-GR

- en-US

- es-ES

- fa-IR

- fi-FI

- fr-FR

- he-IL

- hi-IN

- hu-HU

- hy-AM

- id-ID

- is-IS

- it-IT

- ja-JP

... | 7,985 |

MoritzLaurer/MiniLM-L6-mnli-fever-docnli-ling-2c | [

"entailment",

"not_entailment"

] | ---

language:

- en

tags:

- text-classification

- zero-shot-classification

metrics:

- accuracy

widget:

- text: "I first thought that I liked the movie, but upon second thought the movie was actually disappointing. [SEP] The movie was good."

---

# MiniLM-L6-mnli-fever-docnli-ling-2c

## Model description

This model was ... | 3,986 |

TransQuest/monotransquest-hter-en_zh-wiki | [

"LABEL_0"

] | ---

language: en-zh

tags:

- Quality Estimation

- monotransquest

- hter

license: apache-2.0

---

# TransQuest: Translation Quality Estimation with Cross-lingual Transformers

The goal of quality estimation (QE) is to evaluate the quality of a translation without having access to a reference translation. High-accuracy QE... | 5,426 |
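HTER measures how much a translation had to be post-edited: the number of edits that turn the MT output into its human post-edit, divided by the post-edit's length. A QE model such as this one predicts that score without a reference. The sketch below approximates HTER with word-level Levenshtein distance. Real TER also allows block shifts, so this value is an upper bound on TER-based HTER. It splits on whitespace, so Chinese targets (as in en_zh) would first need word or character segmentation, which is not shown here.

```python
def word_edit_distance(hyp: str, ref: str) -> int:
    """Word-level Levenshtein distance (insertions, deletions, substitutions)."""
    h, r = hyp.split(), ref.split()
    prev = list(range(len(r) + 1))
    for i, hw in enumerate(h, start=1):
        curr = [i] + [0] * len(r)
        for j, rw in enumerate(r, start=1):
            cost = 0 if hw == rw else 1
            curr[j] = min(prev[j] + 1,         # delete hw
                          curr[j - 1] + 1,     # insert rw
                          prev[j - 1] + cost)  # substitute or match
        prev = curr
    return prev[-1]

def approx_hter(mt_output: str, post_edit: str) -> float:
    """Edits from MT output to post-edit, divided by post-edit length.
    Ignores TER's block shifts, so it can only overestimate TER-based HTER."""
    ref_len = len(post_edit.split())
    return word_edit_distance(mt_output, post_edit) / max(ref_len, 1)

print(approx_hter("the cat sat on mat", "the cat sat on the mat"))  # ≈ 0.167 (1 insertion / 6 words)
```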

amanbawa96/legal-bert-based-uncase | [

"LABEL_0",

"LABEL_1",

"LABEL_10",

"LABEL_11",

"LABEL_12",

"LABEL_13",

"LABEL_14",

"LABEL_15",

"LABEL_16",

"LABEL_17",

"LABEL_18",

"LABEL_19",

"LABEL_2",

"LABEL_20",

"LABEL_21",

"LABEL_22",

"LABEL_23",

"LABEL_24",

"LABEL_25",

"LABEL_26",

"LABEL_27",

"LABEL_28",

"LABEL_29",... | Entry not found | 15 |

has-abi/distilBERT-finetuned-resumes-sections | [

"awards",

"certificates",

"contact/name/title",

"education",

"interests",

"languages",

"para",

"professional_experiences",

"projects",

"skills",

"soft_skills",

"summary"

] | ---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- f1

- accuracy

model-index:

- name: distilBERT-finetuned-resumes-sections

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then ... | 3,207 |

avichr/hebEMO_anger | null | # HebEMO - Emotion Recognition Model for Modern Hebrew

<img align="right" src="https://github.com/avichaychriqui/HeBERT/blob/main/data/heBERT_logo.png?raw=true" width="250">

HebEMO is a tool that detects polarity and extracts emotions from modern Hebrew User-Generated Content (UGC), which was trained on a unique Covid... | 5,444 |

avichr/hebEMO_disgust | null | # HebEMO - Emotion Recognition Model for Modern Hebrew

<img align="right" src="https://github.com/avichaychriqui/HeBERT/blob/main/data/heBERT_logo.png?raw=true" width="250">

HebEMO is a tool that detects polarity and extracts emotions from modern Hebrew User-Generated Content (UGC), which was trained on a unique Covid... | 5,444 |

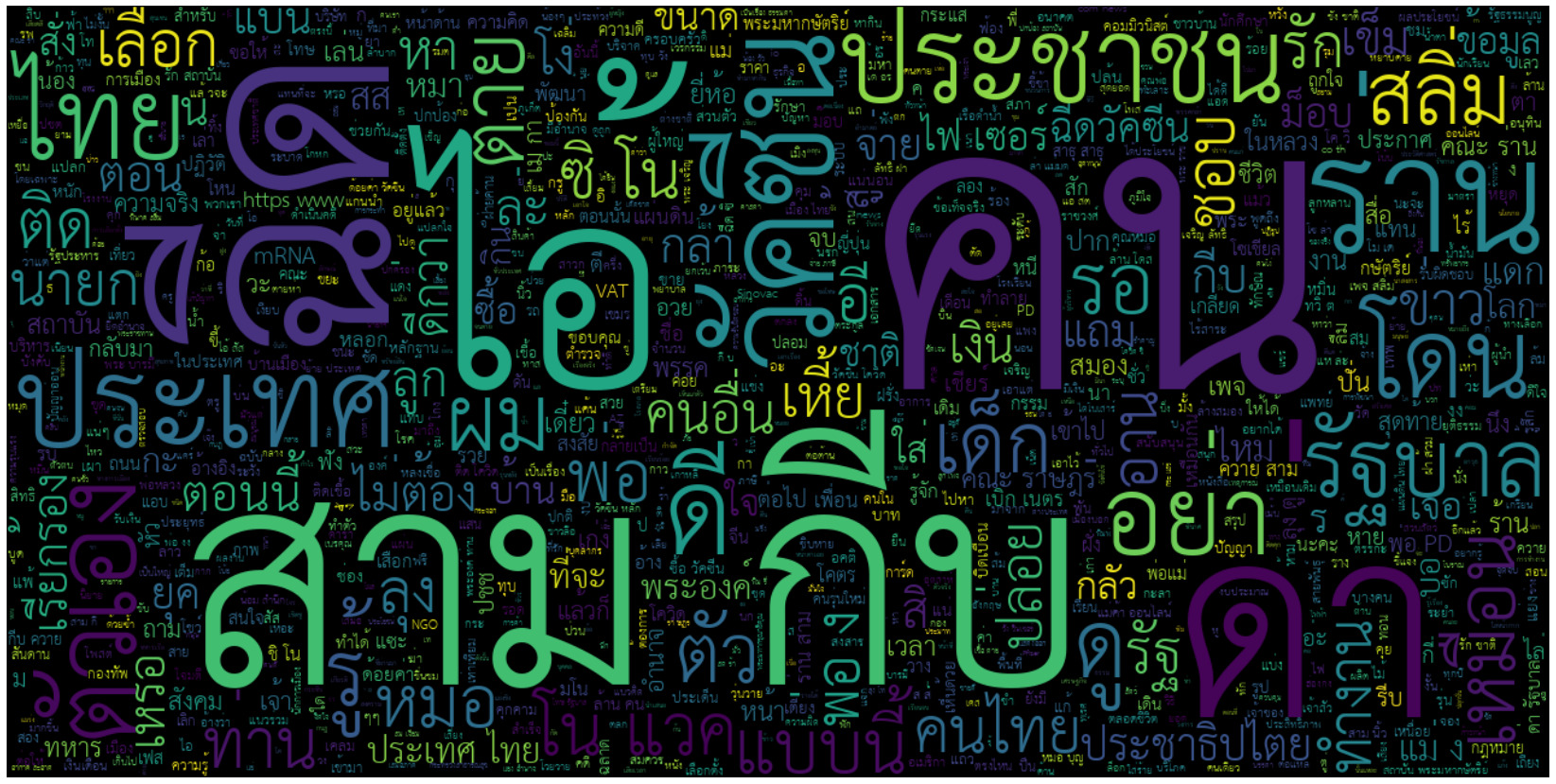

tupleblog/salim-classifier | null | ---

widget:

- text: "รัฐรับผิดชอบทุกชีวิตไม่ได้หรอกคนให้บริการต้องจัดการเองถ้าจะเปิดผับบาร์"

---

# Salim-Classifier

**Purpose:** These days it is so hard to find friends who love the nation, religion, the King and the government; there are only... | 2,347 |

ydshieh/tiny-random-gptj-for-sequence-classification | null | Entry not found | 15 |

MKaan/multilingual-cpv-sector-classifier | [

"Administration, defence and social security services. 👮♀️",

"Agricultural machinery. 🚜",

"Agricultural, farming, fishing, forestry and related products. 🌾",

"Agricultural, forestry, horticultural, aquacultural and apicultural services. 👨🏿🌾",

"Architectural, construction, engineering and inspection ... | ---

license: apache-2.0

tags:

- eu

- public procurement

- cpv

- sector

- multilingual

- transformers

- text-classification

widget:

- text: "Oppegård municipality, hereafter called the contracting authority, intends to enter into a framework agreement with one supplier for the procurement of fresh bread and bakery produ... | 5,964 |

NDugar/debertav3-mnli-snli-anli | [

"contradiction",

"entailment",

"neutral"

] | ---

language: en

tags:

- deberta-v3

- deberta-v2

- deberta-mnli

tasks: mnli

thumbnail: https://huggingface.co/front/thumbnails/microsoft.png

license: mit

pipeline_tag: zero-shot-classification

---

## DeBERTa: Decoding-enhanced BERT with Disentangled Attention

[DeBERTa](https://arxiv.org/abs/2006.03654) improves the B... | 4,788 |

Hate-speech-CNERG/dehatebert-mono-german | [

"NON_HATE",

"HATE"

] | ---

language: de

license: apache-2.0

---

This model is used for detecting **hate speech** in the **German language**. The "mono" in the name refers to the monolingual setting, where the model is trained using only German-language data. It is fine-tuned from the multilingual BERT model.

The model is trained with different learning rate... | 1,058 |

HooshvareLab/bert-fa-base-uncased-sentiment-deepsentipers-multi | [

"angry",

"delighted",

"furious",

"happy",

"neutral"

] | ---

language: fa

license: apache-2.0

---

# ParsBERT (v2.0)

A Transformer-based Model for Persian Language Understanding

We reconstructed the vocabulary and fine-tuned ParsBERT v1.1 on new Persian corpora so that ParsBERT can be used in other scopes.

Please follow the [ParsBERT](... | 3,268 |

Narrativaai/deberta-v3-small-finetuned-hate_speech18 | [

"NO_HATE",

"HATE",

"IDK",

"RELATION"

] | ---

license: mit

tags:

- generated_from_trainer

datasets:

- hate_speech18

widget:

- text: "ok, so do we need to kill them too or are the slavs okay ? for some reason whenever i hear the word slav , the word slobber comes to mind and i picture a slobbering half breed creature like the humpback of notre dame or Igor haha... | 2,183 |

ml4pubmed/BiomedNLP-PubMedBERT-base-uncased-abstract-fulltext_pub_section | [

"BACKGROUND",

"CONCLUSIONS",

"METHODS",

"OBJECTIVE",

"RESULTS"

] | ---

language:

- en

datasets:

- pubmed

metrics:

- f1

tags:

- text-classification

- document sections

- sentence classification

- document classification

- medical

- health

- biomedical

pipeline_tag: text-classification

widget:

- text: "many pathogenic processes and diseases are the result of an erroneous activation of t... | 3,264 |

NikolajMunch/danish-emotion-classification | [

"Afsky",

"Frygt",

"Glæde",

"Overraskelse",

"Tristhed",

"Vrede"

] | ---

widget:

- text: "Hold da op! Kan det virkelig passe?"

language:

- "da"

tags:

- sentiment

- emotion

- danish

---

# **-- EMODa --**

## BERT model for Danish multi-class classification of emotions

Classifies a Danish sentence into one of 6 different emotions:

| Danish emotion | Ekman's emotion |

| -... | 1,207 |
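The model returns Danish label names. Since the card's mapping table is cut off above, here is a small sketch that translates a pipeline-style prediction (`{'label': ..., 'score': ...}`) into the corresponding English Ekman emotion. The translations are ours, based on the six label names listed for this model. `to_ekman` is a hypothetical helper, and the example prediction is made up.

```python
# English translations of the model's Danish labels (Ekman's six basic emotions).
DANISH_TO_EKMAN = {
    "Afsky": "disgust",
    "Frygt": "fear",
    "Glæde": "joy",
    "Overraskelse": "surprise",
    "Tristhed": "sadness",
    "Vrede": "anger",
}

def to_ekman(prediction: dict) -> dict:
    """Translate a {'label': ..., 'score': ...} prediction to its English Ekman label."""
    return {"label": DANISH_TO_EKMAN[prediction["label"]], "score": prediction["score"]}

# Hypothetical prediction for the widget sentence "Hold da op! Kan det virkelig passe?"
print(to_ekman({"label": "Overraskelse", "score": 0.9}))  # {'label': 'surprise', 'score': 0.9}
```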

elozano/tweet_emotion_eval | [

"Anger",

"Joy",

"Optimism",

"Sadness"

] | ---

license: mit

datasets:

- tweet_eval

language: en

widget:

- text: "Stop sharing which songs did you listen to during this year on Spotify, NOBODY CARES"

example_title: "Anger"

- text: "I love that joke HAHAHAHAHA"

example_title: "Joy"

- text: "Despite I've not studied a lot for this exam, I think I wil... | 433 |

elozano/tweet_sentiment_eval | [

"Negative",

"Neutral",

"Positive"

] | ---

license: mit

datasets:

- tweet_eval

language: en

widget:

- text: "I love summer!"

example_title: "Positive"

- text: "Does anyone want to play?"

example_title: "Neutral"

- text: "This movie is just awful! 😫"

example_title: "Negative"

---

| 260 |

Rebreak/bert_news_class | null | ---

license: mit

---

Classifier of news affecting the stock price in the next 10 minutes | 88 |

Monsia/camembert-fr-covid-tweet-sentiment-classification | [

"negatif",

"neutre",

"positif"

] | ---

language:

- fr

tags:

- classification

license: apache-2.0

metrics:

- accuracy

widget:

- text: "tchai on est morts. on va se faire vacciner et ils vont contrôler comme les marionnettes avec des fils. d'après les 'ont dit'..."

---

# camembert-fr-covid-tweet-sentiment-classification

This model is a fine-tuned checkpoin... | 1,295 |

Theivaprakasham/bert-base-cased-twitter_sentiment | [

"LABEL_0",

"LABEL_1",

"LABEL_2"

] | ---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- accuracy

model-index:

- name: bert-base-cased-twitter_sentiment

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove th... | 1,926 |

ghanashyamvtatti/roberta-fake-news | null | A fake news detector using RoBERTa.

Dataset: https://www.kaggle.com/clmentbisaillon/fake-and-real-news-dataset

Training used a hyperparameter search with 10 trials. | 174 |

cardiffnlp/tweet-topic-19-multi | [

"arts_&_culture",

"business_&_entrepreneurs",

"celebrity_&_pop_culture",

"diaries_&_daily_life",

"family",

"fashion_&_style",

"film_tv_&_video",

"fitness_&_health",

"food_&_dining",

"gaming",

"learning_&_educational",

"music",

"news_&_social_concern",

"other_hobbies",

"relationships",

... | # tweet-topic-19-multi

This is a roBERTa-base model trained on ~90m tweets until the end of 2019 (see [here](https://huggingface.co/cardiffnlp/twitter-roberta-base-2019-90m)), and finetuned for multi-label topic classification on a corpus of 11,267 tweets.

The original roBERTa-base model can be found [here](https://h... | 2,691 |
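Because this model does multi-label topic classification, a tweet can belong to several topics at once. The usual decoding step applies an independent sigmoid to each logit and keeps every topic above a threshold, instead of taking a single argmax. The sketch assumes a 0.5 threshold and uses a hypothetical four-topic subset of the label list with made-up indices and logits.

```python
import math

def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))

def multi_label_topics(logits, id2label, threshold=0.5):
    """Apply an independent sigmoid to each logit and keep every topic whose
    probability reaches the threshold (zero, one or several topics)."""
    return [id2label[i] for i, z in enumerate(logits) if sigmoid(z) >= threshold]

# Hypothetical subset of the topics, with made-up indices and logits.
id2label = {0: "arts_&_culture", 1: "music", 2: "news_&_social_concern", 3: "family"}
logits = [1.2, 2.5, -0.3, -3.0]
print(multi_label_topics(logits, id2label))  # ['arts_&_culture', 'music']
```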

avichr/hebEMO_trust | null | # HebEMO - Emotion Recognition Model for Modern Hebrew

<img align="right" src="https://github.com/avichaychriqui/HeBERT/blob/main/data/heBERT_logo.png?raw=true" width="250">

HebEMO is a tool that detects polarity and extracts emotions from modern Hebrew User-Generated Content (UGC), which was trained on a unique Covid... | 5,443 |

raruidol/ArgumentRelation | [

"LABEL_0",

"LABEL_1"

] | # Argument Relation Mining

Best performing model trained in the "Transformer-Based Models for Automatic Detection of Argument Relations: A Cross-Domain Evaluation" paper.

Code available in https://github.com/raruidol/ArgumentRelationMining

Cite:

```

@article{ruiz2021transformer,

title={Transformer-based... | 581 |

DeepPavlov/xlm-roberta-large-en-ru-mnli | [

"CONTRADICTION",

"ENTAILMENT",

"NEUTRAL"

] | ---

language:

- en

- ru

datasets:

- glue

- mnli

model_index:

- name: mnli

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: GLUE MNLI

type: glue

args: mnli

tags:

- xlm-roberta

- xlm-roberta-large

- xlm-roberta-large-en-ru

- xlm-roberta-large-e... | 489 |

dminiotas05/distilbert-base-uncased-finetuned-ft650_10class | [

"LABEL_0",

"LABEL_1",

"LABEL_2",

"LABEL_3",

"LABEL_4",

"LABEL_5",

"LABEL_6",

"LABEL_7",

"LABEL_8",

"LABEL_9"

] | ---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- accuracy

- f1

model-index:

- name: distilbert-base-uncased-finetuned-ft650_10class

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete... | 1,658 |

Cameron/BERT-SBIC-targetcategory | [

"LABEL_0",

"LABEL_1",

"LABEL_2",

"LABEL_3",

"LABEL_4",

"LABEL_5",

"LABEL_6",

"LABEL_7"

] | Entry not found | 15 |

ethanyt/guwen-cls | [

"易藏",

"医藏",

"艺藏",

"史藏",

"佛藏",

"集藏",

"诗藏",

"子藏",

"儒藏",

"道藏"

] | ---

language:

- "zh"

thumbnail: "https://user-images.githubusercontent.com/9592150/97142000-cad08e00-179a-11eb-88df-aff9221482d8.png"

tags:

- "chinese"

- "classical chinese"

- "literary chinese"

- "ancient chinese"

- "bert"

- "pytorch"

- "text classification"

license: "apache-2.0"

pipeline_tag: "text-classification"

wi... | 1,239 |

martin-ha/toxic-comment-model | [

"non-toxic",

"toxic"

] | ---

language: en

---

## Model description

This model is a fine-tuned version of the [DistilBERT model](https://huggingface.co/transformers/model_doc/distilbert.html) to classify toxic comments.

## How to use

You can use the model with the following code.

```python

from transformers import AutoModelForSequenceClass... | 3,184 |

gargam/roberta-base-crest | null | Entry not found | 15 |

bhadresh-savani/electra-base-emotion | [

"anger",

"fear",

"joy",

"love",

"sadness",

"surprise"

] | ---

language:

- en

thumbnail: https://avatars3.githubusercontent.com/u/32437151?s=460&u=4ec59abc8d21d5feea3dab323d23a5860e6996a4&v=4

tags:

- text-classification

- emotion

- pytorch

license: apache-2.0

datasets:

- emotion

metrics:

- Accuracy, F1 Score

model-index:

- name: bhadresh-savani/electra-base-emotion

results:

... | 3,642 |

fourthbrain-demo/model_trained_by_me2 | null | ---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- accuracy

- f1

model-index:

- name: model_trained_by_me2

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comme... | 1,178 |

chinhon/fake_tweet_detect | null | Entry not found | 15 |

julien-c/reactiongif-roberta | [

"agree",

"applause",

"awww",

"dance",

"deal_with_it",

"do_not_want",

"eww",

"eye_roll",

"facepalm",

"fist_bump",

"good_luck",

"happy_dance",

"hearts",

"high_five",

"hug",

"idk",

"kiss",

"mic_drop",

"no",

"oh_snap",

"ok",

"omg",

"oops",

"please",

"popcorn",

"scared",... | ---

license: apache-2.0

tags:

- generated-from-trainer

datasets:

- julien-c/reactiongif

metrics:

- accuracy

model-index:

- name: model

results:

- task:

name: Text Classification

type: text-classification

metrics:

- name: Accuracy

type: accuracy

value: 0.2662102282047272

---

<... | 1,816 |

yosemite/autonlp-imdb-sentiment-analysis-english-470512388 | [

"negative",

"positive"

] | ---

tags: autonlp

language: en

widget:

- text: "I love AutoNLP 🤗"

datasets:

- yosemite/autonlp-data-imdb-sentiment-analysis-english

co2_eq_emissions: 256.38650494338367

---

# Model Trained Using AutoNLP

- Problem type: Binary Classification

- Model ID: 470512388

- CO2 Emissions (in grams): 256.38650494338367

## Val... | 1,215 |

Wi/arxiv-distilbert-base-cased | [

"LABEL_0",

"LABEL_1",

"LABEL_2",

"LABEL_3",

"LABEL_4",

"LABEL_5",

"LABEL_6",

"LABEL_7",

"LABEL_8",

"LABEL_9"

] | ---

license: apache-2.0

language:

- en

datasets:

- arxiv_dataset

tags:

- distilbert

---

# DistilBERT ArXiv Category Classification

DistilBERT model fine-tuned on a small subset of the [ArXiv dataset](https://www.kaggle.com/datasets/Cornell-University/arxiv) to predict the category of a given paper.

| 305 |

facebook/roberta-hate-speech-dynabench-r4-target | null | ---

language: en

---

# LFTW R4 Target

The R4 Target model from [Learning from the Worst: Dynamically Generated Datasets to Improve Online Hate Detection](https://arxiv.org/abs/2012.15761)

## Citation Information

```bibtex

@inproceedings{vidgen2021lftw,

title={Learning from the Worst: Dynamically Generated Dataset... | 570 |

NTUYG/DeepSCC-RoBERTa | [

"LABEL_0",

"LABEL_1",

"LABEL_10",

"LABEL_11",

"LABEL_12",

"LABEL_13",

"LABEL_14",

"LABEL_15",

"LABEL_16",

"LABEL_17",

"LABEL_18",

"LABEL_2",

"LABEL_3",

"LABEL_4",

"LABEL_5",

"LABEL_6",

"LABEL_7",

"LABEL_8",

"LABEL_9"

] | ## How to use

```python

from simpletransformers.classification import ClassificationModel, ClassificationArgs

name_file = ['bash', 'c', 'c#', 'c++','css', 'haskell', 'java', 'javascript', 'lua', 'objective-c', 'perl', 'php', 'python','r','ruby', 'scala', 'sql', 'swift', 'vb.net']

deep_scc_model_args = Classification... | 839 |
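The label column for this model lists `LABEL_0, LABEL_1, LABEL_10, ...`, which is string (lexicographic) order rather than numeric order. The sketch below restores numeric order and pairs each label with the language names from `name_file` in the snippet above. It assumes that `LABEL_i` corresponds to `name_file[i]`; that pairing is not stated in the card and should be checked against the model's config.

```python
import re

def sort_label_names(labels):
    """Sort 'LABEL_<n>' strings by their numeric suffix instead of lexicographically."""
    return sorted(labels, key=lambda s: int(re.fullmatch(r"LABEL_(\d+)", s).group(1)))

# Language names in the order given by name_file in the card's snippet.
name_file = ['bash', 'c', 'c#', 'c++', 'css', 'haskell', 'java', 'javascript', 'lua',
             'objective-c', 'perl', 'php', 'python', 'r', 'ruby', 'scala', 'sql',
             'swift', 'vb.net']

# Reproduces the lexicographic order shown in the label column (LABEL_0 .. LABEL_18).
listed = sorted(f"LABEL_{i}" for i in range(len(name_file)))
ordered = sort_label_names(listed)

# Assumption: LABEL_i corresponds to name_file[i].
label_to_language = {label: name_file[int(label.split("_")[1])] for label in ordered}
print(label_to_language["LABEL_12"])  # python
```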

textattack/xlnet-base-cased-MNLI | [

"LABEL_0",

"LABEL_1",

"LABEL_2"

] | Entry not found | 15 |

Souvikcmsa/BERT_sentiment_analysis | [

"negative",

"neutral",

"positive"

] | ---

tags: autotrain

language: en

widget:

- text: "I love AutoTrain 🤗"

- Output: "Positive"

datasets:

- Souvikcmsa/autotrain-data-sentiment_analysis

co2_eq_emissions: 0.029363397844935534

---

# Model Trained Using AutoTrain

- Problem type: Multi-class Classification (3-class Sentiment Classification)

## Validation M... | 1,918 |

autoevaluate/binary-classification | [

"negative",

"positive"

] | ---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- accuracy

model-index:

- name: autoevaluate-binary-classification

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: glue

type: glue

args: sst2

metrics:

- name... | 2,431 |

assemblyai/distilbert-base-uncased-qqp | null | # DistilBERT-Base-Uncased for Duplicate Question Detection

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) originally released in ["DistilBERT, a distilled version of BERT: smaller, faster, cheaper and lighter"](https://arxiv.org/abs/1910.01108) and traine... | 1,845 |

avichr/hebEMO_sadness | null | # HebEMO - Emotion Recognition Model for Modern Hebrew

<img align="right" src="https://github.com/avichaychriqui/HeBERT/blob/main/data/heBERT_logo.png?raw=true" width="250">

HebEMO is a tool that detects polarity and extracts emotions from modern Hebrew User-Generated Content (UGC), which was trained on a unique Covid... | 5,444 |

mrm8488/distilroberta-finetuned-tweets-hate-speech | null | ---

language: en

tags:

- twitter

- hate

- speech

datasets:

- tweets_hate_speech_detection

widget:

- text: "the fuck done with #mansplaining and other bullshit."

---

# distilroberta-base fine-tuned on tweets_hate_speech_detection dataset for hate speech detection

Validation accuracy: 0.98 | 289 |

pertschuk/albert-intent-model-v3 | null | Entry not found | 15 |

shatabdi/twisent_twisent | [

"NEGATIVE",

"POSITIVE"

] | ---

tags:

- generated_from_trainer

model-index:

- name: twisent_twisent

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# twisent_twisent

This model is a fine-t... | 1,084 |

jeanconstantin/distilcausal_bert_fr | null | Entry not found | 15 |

avichr/hebEMO_surprise | null | # HebEMO - Emotion Recognition Model for Modern Hebrew

<img align="right" src="https://github.com/avichaychriqui/HeBERT/blob/main/data/heBERT_logo.png?raw=true" width="250">

HebEMO is a tool that detects polarity and extracts emotions from modern Hebrew User-Generated Content (UGC), which was trained on a unique Covid... | 5,442 |

elozano/bert-base-cased-clickbait-news | [

"Clickbait",

"Normal"

] | Entry not found | 15 |

RohanJoshi28/twitter_sentiment_analysisv1 | [

"LABEL_0",

"LABEL_1",

"LABEL_2"

] | Entry not found | 15 |

cross-encoder/mmarco-mdeberta-v3-base-5negs-v1 | [

"LABEL_0"

] | Entry not found | 15 |

Hate-speech-CNERG/dehatebert-mono-portugese | [

"NON_HATE",

"HATE"

] | ---

language: pt

license: apache-2.0

---

This model is used for detecting **hate speech** in the **Portuguese language**. The "mono" in the name refers to the monolingual setting, where the model is trained using only Portuguese-language data. It is fine-tuned from the multilingual BERT model.

The model is trained with different learning r... | 1,061 |

kuzgunlar/electra-turkish-sentiment-analysis | [

"Negative",

"Positive"

] | Entry not found | 15 |

cardiffnlp/tweet-topic-21-single | [

"arts_&_culture",

"business_&_entrepreneurs",

"daily_life",

"pop_culture",

"science_&_technology",

"sports_&_gaming"

] | # tweet-topic-21-single

This is a roBERTa-base model trained on ~124M tweets from January 2018 to December 2021 (see [here](https://huggingface.co/cardiffnlp/twitter-roberta-base-2021-124m)), and finetuned for single-label topic classification on a corpus of 6,997 tweets.

The original roBERTa-base model can be found [... | 2,137 |

DTAI-KULeuven/mbert-corona-tweets-belgium-topics | [

"closing-horeca",

"curfew",

"lockdown",

"masks",

"not-applicable",

"other-measure",

"quarantine",

"schools",

"testing",

"vaccine"

] | ---

language: "multilingual"

tags:

- Dutch

- French

- English

- Tweets

- Topic classification

widget:

- text: "I really can't wait for this lockdown to be over and go back to waking up early."

---

# Measuring Shifts in Attitudes Towards COVID-19 Measures in Belgium Using Multilingual BERT

[Blog post »](https://people... | 1,193 |

Emanuel/bertweet-emotion-base | [

"sadness",

"joy",

"love",

"anger",

"fear",

"surprise"

] | ---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- emotion

metrics:

- accuracy

model-index:

- name: bertweet-emotion-base

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: emotion

type: emotion

args: default

metrics:

- name:... | 893 |

edwardgowsmith/en-finegrained-zero-shot | null | Entry not found | 15 |

RANG012/SENATOR | null | ---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- imdb

metrics:

- accuracy

- f1

model-index:

- name: SENATOR

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: imdb

type: imdb

args: plain_text

metrics:

- name: Accuracy

... | 1,443 |

lupinlevorace/tiny-bert-sst2-distilled | [

"negative",

"positive"

] | Entry not found | 15 |

IDEA-CCNL/Erlangshen-MegatronBert-1.3B-Similarity | null | ---

language:

- zh

license: apache-2.0

tags:

- bert

- NLU

- NLI

inference: true

widget:

- text: "今天心情不好[SEP]今天很开心"

---

# Erlangshen-MegatronBert-1.3B-Similarity, model (Chinese), one of the models of [Fengshenbang-LM](https://github.com/IDEA-CCNL/Fengshenbang-LM).

We collected 20 paraphrase datasets in the Chinese domain ... | 1,665 |

blanchefort/rubert-base-cased-sentiment-rurewiews | [

"NEUTRAL",

"POSITIVE",

"NEGATIVE"

] | ---

language:

- ru

tags:

- sentiment

- text-classification

datasets:

- RuReviews

---

# RuBERT for Sentiment Analysis of Product Reviews

This is a [DeepPavlov/rubert-base-cased-conversational](https://huggingface.co/DeepPavlov/rubert-base-cased-conversational) model trained on [RuReviews](https://github.com/sismetanin... | 1,269 |

dobbytk/letr-sol-profanity-filter | [

"hate",

"none",

"offensive"

] | Entry not found | 15 |

ynie/electra-large-discriminator-snli_mnli_fever_anli_R1_R2_R3-nli | [

"entailment",

"neutral",

"contradiction"

] | Entry not found | 15 |

poison-texts/imdb-sentiment-analysis-poisoned-75 | null | ---

license: apache-2.0

---

| 28 |

NYTK/sentiment-hts5-xlm-roberta-hungarian | [

"LABEL_0",

"LABEL_1",

"LABEL_2",

"LABEL_3",

"LABEL_4"

] | ---

language:

- hu

tags:

- text-classification

license: gpl

metrics:

- accuracy

widget:

- text: "Jó reggelt! majd küldöm az élményhozókat :)."

---

# Hungarian Sentence-level Sentiment Analysis model with XLM-RoBERTa

For further models, scripts and details, see [our repository](https://github.com/nytud/sentiment-... | 1,163 |

dennlinger/bert-wiki-paragraphs | [

"0",

"1"

] | # BERT-Wiki-Paragraphs

Authors: Satya Almasian\*, Dennis Aumiller\*, Lucienne-Sophie Marmé, Michael Gertz

Contact us at `<lastname>@informatik.uni-heidelberg.de`

Details for the training method can be found in our work [Structural Text Segmentation of Legal Documents](https://arxiv.org/abs/2012.03619).

The trainin... | 1,298 |

NDugar/v3-Large-mnli | [

"contradiction",

"entailment",

"neutral"

] | ---

language: en

tags:

- deberta-v1

- deberta-mnli

tasks: mnli

thumbnail: https://huggingface.co/front/thumbnails/microsoft.png

license: mit

pipeline_tag: zero-shot-classification

---

This model is a fine-tuned version of [microsoft/deberta-v3-large](https://huggingface.co/microsoft/deberta-v3-large) on the GLUE MNLI... | 1,110 |

amirhossein1376/pft-clf-finetuned | [

"LABEL_0",

"LABEL_1",

"LABEL_10",

"LABEL_11",

"LABEL_2",

"LABEL_3",

"LABEL_4",

"LABEL_5",

"LABEL_6",

"LABEL_7",

"LABEL_8",

"LABEL_9"

] | ---

license: apache-2.0

language: fa

widget:

- text: "امروز دربی دو تیم پرسپولیس و استقلال در ورزشگاه آزادی تهران برگزار میشود."

- text: "وزیر امور خارجه اردن تاکید کرد که همه کشورهای عربی خواهان روابط خوب با ایران هستند.

به گزارش ایسنا به نقل از شبکه فرانس ۲۴، ایمن الصفدی معاون نخستوزیر و وزیر امور خارجه اردن پس ... | 2,979 |

cross-encoder/msmarco-MiniLM-L6-en-de-v1 | [

"LABEL_0"

] | ---

license: apache-2.0

---

# Cross-Encoder for MS MARCO - EN-DE

This is a cross-lingual Cross-Encoder model for EN-DE that can be used for passage re-ranking. It was trained on the [MS Marco Passage Ranking](https://github.com/microsoft/MSMARCO-Passage-Ranking) task.

The model can be used for Information Retrieval: ... | 4,798 |
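A cross-encoder scores each (query, passage) pair jointly, and re-ranking simply sorts the candidate passages by that score. With the real model the scores would come from the network, for example through the `CrossEncoder` class in sentence-transformers. The offline sketch below keeps the re-ranking logic and plugs in a hypothetical word-overlap scorer as a stand-in. That scorer is not cross-lingual, so it only illustrates the ranking step, not the EN-DE model's behavior.

```python
import re
from typing import Callable, List, Tuple

def rerank(query: str, passages: List[str],
           score_pair: Callable[[str, str], float]) -> List[Tuple[str, float]]:
    """Score every (query, passage) pair and return passages from most to least relevant."""
    scored = [(p, score_pair(query, p)) for p in passages]
    return sorted(scored, key=lambda item: item[1], reverse=True)

def toy_overlap_score(query: str, passage: str) -> float:
    """Hypothetical stand-in for the cross-encoder: share of query words found in the passage."""
    q = set(re.findall(r"\w+", query.lower()))
    p = set(re.findall(r"\w+", passage.lower()))
    return len(q & p) / max(len(q), 1)

query = "How many people live in Berlin"
passages = [
    "Berlin is known for its museums.",
    "About 3.7 million people live in Berlin.",
    "Munich hosts the Oktoberfest.",
]
ranking = rerank(query, passages, toy_overlap_score)
print(ranking[0][0])  # About 3.7 million people live in Berlin.
```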
