text1 stringlengths 2 269k | text2 stringlengths 2 242k | label int64 0 1 |

|---|---|---|

### Feature request

Whisper speech recognition without conditioning on previous text.

As in

https://github.com/openai/whisper/blob/7858aa9c08d98f75575035ecd6481f462d66ca27/whisper/transcribe.py#L278

### Motivation

Whisper implementation is great however conditioning the decoding on previous

text can cause significant hallucination and repetitive text, e.g.:

> "Do you have malpractice? Do you have malpractice? Do you have malpractice?

> Do you have malpractice? Do you have malpractice? Do you have malpractice?

> Do you have malpractice? Do you have malpractice? Do you have malpractice?

> Do you have malpractice? Do you have malpractice? Do you have malpractice?

> Do you have malpractice? Do you have malpractice? Do you have malpractice?

> Do you have malpractice?"

Running openai's model with `--condition_on_previous_text False` drastically

reduces hallucination

@ArthurZucker

|

Hello,

I tried to import this:

`from transformers import AdamW, get_linear_schedule_with_warmup`

but got error : model not found

but when i did this, it worked:

from transformers import AdamW

from transformers import WarmupLinearSchedule as get_linear_schedule_with_warmup

however when I set the scheduler like this :

scheduler = get_linear_schedule_with_warmup(optimizer, num_warmup_steps=args.warmup_steps, num_training_steps=t_total)

I got this error :

__init__() got an unexpected keyword argument 'num_warmup_steps'

| 0 |

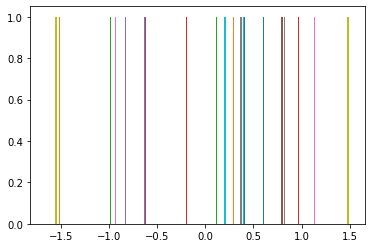

### Bug summary

I put a `torch.Tensor` in `matplotlib.pyplot.hist()` , but it draw a wrong

graphic and take a long time.

Although transform to numpy, the function work well. But all the others

function I used are work well on tensor. So I think its a bug.

### Code for reproduction

import matplotlib.pyplot as plt

import torch

plt.hist(torch.randn(20))

plt.show()

### Actual outcome

### Expected outcome

### Additional information

_No response_

### Operating system

Windows

### Matplotlib Version

3.5.1

### Matplotlib Backend

module://ipykernel.pylab.backend_inline

### Python version

3.7.13

### Jupyter version

6.4.8

### Installation

conda

|

### Bug report

**Bug summary**

Generating `np.random.randn(1000)` values, visualizing them with `plt.hist()`.

Works fine with Numpy.

When I replace Numpy with tensorflow.experimental.numpy, Matplotlib 3.3.4

fails to display the histogram correctly. Matplotlib 3.2.2 works fine.

**Code for reproduction**

import matplotlib.pyplot as plt

import numpy as np

import tensorflow as tf

import tensorflow.experimental.numpy as tnp

# bad image

labels1 = 15 + 2 * tnp.random.randn(1000)

_ = plt.hist(labels1)

# good image

labels2 = 15 + 2 * np.random.randn(1000)

_ = plt.hist(labels2)

**Actual outcome**

**Expected outcome**

**Matplotlib version**

* Operating system: Windows 10

* Matplotlib version (`import matplotlib; print(matplotlib.__version__)`): 3.3.4

* Matplotlib backend (`print(matplotlib.get_backend())`): module://ipykernel.pylab.backend_inline

* Python version: 3.8.7

* Jupyter version (if applicable): see below

* Other libraries: see below

TensorFlow 2.4.1

jupyter --version

jupyter core : 4.7.0

jupyter-notebook : 6.1.6

qtconsole : 5.0.1

ipython : 7.20.0

ipykernel : 5.4.2

jupyter client : 6.1.7

jupyter lab : not installed

nbconvert : 6.0.7

ipywidgets : 7.6.3

nbformat : 5.0.8

traitlets : 5.0.5

Python installed from python.org as an exe installer. Everything else is `pip

install --user`

Bug opened with TensorFlow on this same issue:

tensorflow/tensorflow#46274

| 1 |

### System info

* Playwright Version: [v1.31.2]

* Operating System: [Windows VM]

* Browser: [ONLY in Chromium]

* Other info: It used to work fine until updated to v1.31 and now even if I revert back to previous versions still getting the same error (the error only happens in the Windows VM , not in my local machine, but I do not have access to that VM Windows machine)

**Error LOG**

2023-03-07T09:27:22.6036536Z �[31m 1) [testing Login Setup] › tests\login-setup.ts:6:5 › Login Setup �[2m────────────────────────────────�[22m�[39m

2023-03-07T09:27:22.6037617Z

2023-03-07T09:27:22.6038162Z browserType.launch: Browser closed.

2023-03-07T09:27:22.6038793Z ==================== Browser output: ====================

2023-03-07T09:27:22.6044850Z <launching> C:\devops-agents\agent\_work\r65\a\Project.Web.Test.Automation\Output\node_modules\playwright-core\.local-browsers\chromium_win64_special-1050\chrome-win\chrome.exe --disable-field-trial-config --disable-background-networking --enable-features=NetworkService,NetworkServiceInProcess --disable-background-timer-throttling --disable-backgrounding-occluded-windows --disable-back-forward-cache --disable-breakpad --disable-client-side-phishing-detection --disable-component-extensions-with-background-pages --disable-component-update --no-default-browser-check --disable-default-apps --disable-dev-shm-usage --disable-extensions --disable-features=ImprovedCookieControls,LazyFrameLoading,GlobalMediaControls,DestroyProfileOnBrowserClose,MediaRouter,DialMediaRouteProvider,AcceptCHFrame,AutoExpandDetailsElement,CertificateTransparencyComponentUpdater,AvoidUnnecessaryBeforeUnloadCheckSync,Translate --allow-pre-commit-input --disable-hang-monitor --disable-ipc-flooding-protection --disable-popup-blocking --disable-prompt-on-repost --disable-renderer-backgrounding --disable-sync --force-color-profile=srgb --metrics-recording-only --no-first-run --enable-automation --password-store=basic --use-mock-keychain --no-service-autorun --export-tagged-pdf --headless --hide-scrollbars --mute-audio --blink-settings=primaryHoverType=2,availableHoverTypes=2,primaryPointerType=4,availablePointerTypes=4 --no-sandbox --user-data-dir=C:\Users\SVC_TE~1\AppData\Local\Temp\playwright_chromiumdev_profile-uvQwhV --remote-debugging-pipe --no-startup-window

2023-03-07T09:27:22.6052457Z <launched> pid=9100

2023-03-07T09:27:22.6054202Z [pid=9100][err] [0307/092645.718:ERROR:main_dll_loader_win.cc(109)] Failed to load Chrome DLL from C:\devops-agents\agent\_work\r65\a\Project.Web.Test.Automation\Output\node_modules\playwright-core\.local-browsers\chromium_win64_special-1050\chrome-win\chrome.dll: The specified procedure could not be found. (0x7F)

2023-03-07T09:27:22.6056487Z =========================== logs ===========================

2023-03-07T09:27:22.6062693Z <launching> C:\devops-agents\agent\_work\r65\a\Project.Web.Test.Automation\Output\node_modules\playwright-core\.local-browsers\chromium_win64_special-1050\chrome-win\chrome.exe --disable-field-trial-config --disable-background-networking --enable-features=NetworkService,NetworkServiceInProcess --disable-background-timer-throttling --disable-backgrounding-occluded-windows --disable-back-forward-cache --disable-breakpad --disable-client-side-phishing-detection --disable-component-extensions-with-background-pages --disable-component-update --no-default-browser-check --disable-default-apps --disable-dev-shm-usage --disable-extensions --disable-features=ImprovedCookieControls,LazyFrameLoading,GlobalMediaControls,DestroyProfileOnBrowserClose,MediaRouter,DialMediaRouteProvider,AcceptCHFrame,AutoExpandDetailsElement,CertificateTransparencyComponentUpdater,AvoidUnnecessaryBeforeUnloadCheckSync,Translate --allow-pre-commit-input --disable-hang-monitor --disable-ipc-flooding-protection --disable-popup-blocking --disable-prompt-on-repost --disable-renderer-backgrounding --disable-sync --force-color-profile=srgb --metrics-recording-only --no-first-run --enable-automation --password-store=basic --use-mock-keychain --no-service-autorun --export-tagged-pdf --headless --hide-scrollbars --mute-audio --blink-settings=primaryHoverType=2,availableHoverTypes=2,primaryPointerType=4,availablePointerTypes=4 --no-sandbox --user-data-dir=C:\Users\SVC_TE~1\AppData\Local\Temp\playwright_chromiumdev_profile-uvQwhV --remote-debugging-pipe --no-startup-window

2023-03-07T09:27:22.6068578Z <launched> pid=9100

2023-03-07T09:27:22.6070213Z [pid=9100][err] [0307/092645.718:ERROR:main_dll_loader_win.cc(109)] Failed to load Chrome DLL from C:\devops-agents\agent\_work\r65\a\Project.Web.Test.Automation\Output\node_modules\playwright-core\.local-browsers\chromium_win64_special-1050\chrome-win\chrome.dll: The specified procedure could not be found. (0x7F)

2023-03-07T09:27:22.6071990Z ============================================================

**Test file**

import { test as setup } from '@playwright/test';

setup('Login Setup', async ({ page }) => {

await this.page.goto('URL');

});

**Steps**

* run: "npm set PLAYWRIGHT_BROWSERS_PATH=0&& playwright install"

* runL "npm set PLAYWRIGHT_BROWSERS_PATH=0&& playwright test"

**Expected**

It used to work fine until updated to v1.31 and now even if I revert back to

previous versions still getting the same error (the error only happens in the

Windows VM , not in my local machine, but I do not have access to that VM

Windows machine)

**Actual**

As you can see in the LOG error, the browser cannot even be executed now.

|

### System info

* Playwright Version: v1.32.2

* Operating System: Windows 11

* Browser: All

* Node.js 18.12.0

* PowerShell 7.3.3

### Source code

* I provided exact source code that allows reproducing the issue locally.

**Link to the GitHub repository with the repro**

https://github.com/tadashi-aikawa/nuxt2-vuetify2-playwright-sandbox.git

**Steps**

#### 1\. Clone the repository and run the application

git clone https://github.com/tadashi-aikawa/nuxt2-vuetify2-playwright-sandbox.git

cd nuxt2-vuetify2-playwright-sandbox

git checkout 413de5f02e7affb8f301396929ca383dc967696e

npm install

npm run dev

#### 2\. Open the playwright GUI mode in another shell process

cd nuxt2-vuetify2-playwright-sandbox

npx playwright test --ui

#### 3\. Run all tests

**Expected**

Success all tests.

**Actual**

Some tests fail as follows.

The terminal that runs "npm run dev" shows errors.

node:events:491

throw er; // Unhandled 'error' event

^

Error: EPERM: operation not permitted, stat 'C:\Users\syoum\tmp\nuxt2-vuetify2-playwright-sandbox\test-results\.playwright-artifacts-1\1a98587cb1640f43c20527b24baacb89.zip'

Emitted 'error' event on FSWatcher instance at:

at FSWatcher.emitWithAll (C:\Users\syoum\tmp\nuxt2-vuetify2-playwright-sandbox\node_modules\chokidar\index.js:540:8)

at awfEmit (C:\Users\syoum\tmp\nuxt2-vuetify2-playwright-sandbox\node_modules\chokidar\index.js:599:14)

at C:\Users\syoum\tmp\nuxt2-vuetify2-playwright-sandbox\node_modules\chokidar\index.js:714:43

at FSReqCallback.oncomplete (node:fs:207:21) {

errno: -4048,

code: 'EPERM',

syscall: 'stat',

path: 'C:\\Users\\syoum\\tmp\\nuxt2-vuetify2-playwright-sandbox\\test-results\\.playwright-artifacts-1\\1a98587cb1640f43c20527b24baacb89.zip'

}

Node.js v18.12.0

### Remarks

* Without UI mode, it is always a success (`npx playwright test`)

* It occurs even if set `1` to `workers`

* I confirmed that I could create `C:\Users\syoum\tmp\nuxt2-vuetify2-playwright-sandbox\test-results\.playwright-artifacts-1\1a98587cb1640f43c20527b24baacb89.zip` manually

* It occurs even if I run them as an administrator

* It occurs even if I select firefox or webkit instead of chromium

Please let me know if any information is missing.

Best regards.

P.S. The new feature, UI mode, is very awesome!! It looks like as a cool IDE

to e2e test! 😄

| 0 |

ERROR: type should be string, got "\n\nhttps://github.com/kubernetes/kubernetes/blob/master/pkg/kubelet/container_bridge.go#L122-L143 \ncontainer_bridge.go assumes that the virtual IP of services & pods will be in\nthe `10.` space. \nI propose there is no reason to make this assumption.\n\nAs outlined in #15932, cluster admins may need to deploy to hosts in which\n`10.` is reserved for the nodes. In such a case, Kubelets must support an\nalternative range.\n\n" |

Today kubelet sets up an iptables MASQUERADE rule for any traffic destined for

anything except 10.0.0.0/8. This is close, but not even correct on GCE, and

certainly not right elsewhere.

First GCE. We probably want something like:

iptables -t nat -N KUBE-IPMASQ

iptables -t nat -A KUBE-IPMASQ -d 10.0.0.0/8 -j RETURN

iptables -t nat -A KUBE-IPMASQ -d 172.16.0.0/12 -j RETURN

iptables -t nat -A KUBE-IPMASQ -d 192.168.0.0/16 -j RETURN

iptables -t nat -A KUBE-IPMASQ -j MASQUERADE

iptables -t nat -I POSTROUTING -j KUBE-IPMASQ

This catches all traffic to RFC1918 ranges and masquerades it. We can probably

optimize with CONNMARK or something so we only consider packets from

containers. This is probably still imperfect, but better, for lack of project-

wide NAT for egress.

For other environments, we really have no idea what the correct policy for

this is. It is closer to "your nodes must handle this" than "we can handle

this for you". It's debatable whether we should even try.

Either:

a) We teach kubelet a lot more and let people pass flags to nearly=arbitrarily

configure this

b) We tell people to configure this as part of their node setup

This popped up when I realized GKE allows users to set up 172.* clusters - any

traffic between containers in one of these will get masqueraded - not correct

behavior!! This is not a huge deal right now because kube-proxy has the same

effect when traversing services. As we fix kube-proxy in the wake of 1.0,

masquerade will be a bigger deal, especially for micro-segmenting.

Additional considerations: VPNs have bizarre and very custom needs. Every such

thing has an as yet unmeasured perf implication. This also pops up in our GCE

firewall thing that @ArtfulCoder is working on.

@dchen1107 @alex-mohr

| 1 |

.valid would apply the normal ("successful") input/textarea/select styles when

applied to those input types while within a .control-group.error.

The use case for this is a .control-group section with a "multi-part" input

(ex: time entry with hour and minute fields). If minutes was valid, but hour

was not the .control-group should still have .error applied.

This would give users a more obvious indication of what was left to fix--since

only input/textarea/select's without the .valid class would be marked in the

.error styles.

.valid could inherit direction form the default input/textarea/select styles.

|

It would be useful to provide column push and pull classes for all three

different grids.

This means that `.col-push-*` and `.col-pull-*` only affect the mobile grid

and are joined by `.col-push-sm-*`, `.col-pull-sm-*` and `.col-push-lg-*`,

`.col-pull-lg-*` to control the column order differently depending on the grid

in use.

Summary: Allow for different column reordering in different grids.

| 0 |

### Is there an existing issue for this?

* I have searched the existing issues

### This issue exists in the latest npm version

* I am using the latest npm

### Current Behavior

Currently, my package.json specifies `"typescript": "^5.0.2"`. When I change

it to say `"typescript": "^5.0.3"`, npm 9 spins for 4:28 before deciding it

doesn't exist. For comparison, npm 8 installs it with no problem in 0:44.

Ironically, I can't upgrade npm to 9.6 due to this issue: npm 9.5.1 times out

when I run `npm i -g npm`.

### Expected Behavior

npm 9 should be able to locate and download these versions with similar

performance to npm 8.

### Steps To Reproduce

1. Have a project with a v2 lockfile and TypeScript 5.0.2

2. Install node 18.16.0 with npm 9.5.1

3. Update the package.json to request TypeScript 5.0.3 or newer

4. Run `npm i`

### Environment

* npm: 9.5.1

* Node.js: 18.16.0

* OS Name: Windows 10

* System Model Name: Maingear Vector 2

* npm config:

; "user" config from C:\Users\bbrk2\.npmrc

//registry.npmjs.org/:_authToken = (protected)

; node bin location = C:\Users\bbrk2\.nvm\versions\node\v18.16.0\bin\node.exe

; node version = v18.16.0

; npm local prefix = C:\Users\bbrk2\[REDACTED]

; npm version = 9.5.1

; cwd = C:\Users\bbrk2\[REDACTED]

; HOME = C:\Users\bbrk2

; Run `npm config ls -l` to show all defaults.

|

### Is there an existing issue for this?

* I have searched the existing issues

### This issue exists in the latest npm version

* I am using the latest npm

### Current Behavior

When running `npm install` it will sometimes hang at a random point. When it

does this, it is stuck forever. CTRL+C will do nothing the first time that

combination is pressed when this has occurred. Pressing that key combination

the second time will make the current line (the one showing the little

progress bar) disappear but that's it. No further responses to that key

combination are observed.

The CMD (or Powershell) window cannot be closed regardless. The process cannot

be killed by Task Manager either (Access Denied, although I'm an Administrator

user so I'd assume the real reason is something non-permissions related). The

only way I have found to close it is to reboot the machine.

My suspicion is it's some sort of deadlock, but this is a guess and I have no

idea how to further investigate this. I've tried using Process Explorer to

check for handles to files in the project directory from other processes but

there are none. There are handles held by the Node process npm is using, and

one for the CMD window hosting it, but that's it.

Even running with `log-level silly` yields no useful information. When it

freezes there are no warnings or errors, it just sits on the line it was on.

This is some log output from one of the times when it got stuck (I should

again emphasise that the point where it gets stuck seems to be random, so the

last line shown here isn't always the one it freezes on):

npm timing auditReport:init Completed in 49242ms

npm timing reify:audit Completed in 55729ms

npm timing reifyNode:node_modules/selenium-webdriver Completed in 54728ms

npm timing reifyNode:node_modules/regenerate-unicode-properties Completed in 55637ms

npm timing reifyNode:node_modules/ajv-formats/node_modules/ajv Completed in 56497ms

npm timing reifyNode:node_modules/@angular-devkit/schematics/node_modules/ajv Completed in 56472ms

[##################] \ reify:ajv: timing reifyNode:node_modules/@angular-devkit/schematics/node_modules/ajv Completed in 564

The only thing that I can think of right now is that Bit Defender (the only

other application running) is interfering somehow, however it's the one

application I can't turn off.

I've seen this issue occur on different projects, on different network and

internet connections, and on different machines. Does anyone have any advice

on how to investigate this, or at the very least a way to kill the process

when it hangs like this without having to reboot the machine? Being forced to

reboot when this issue occurs is perhaps the most frustrating thing in all of

this.

### Expected Behavior

`npm install` should either succeed or show an error. If it gets stuck it

should either time-out or be closable by the user.

### Steps To Reproduce

1. Clear down the `node_modules` folder (ie with something like `rmdir /q /s`)

2. Run. `npm install`

3. Watch and wait.

4. If it succeeds, repeat the above steps until the freeze is observed.

### Environment

* npm: 8.1.3

* Node: v16.13.0

* OS: Windows 10 Version 21H1 (OS Build 19043.1288)

* platform: Lenovo ThinkPad

* npm config:

; "builtin" config from C:\Users\<REDACTED>\AppData\Roaming\npm\node_modules\npm\npmrc

prefix = "C:\\Users\\<REDACTED>\\AppData\\Roaming\\npm"

; "user" config from C:\Users\<REDACTED>\.npmrc

//pkgs.dev.azure.com/<REDACTED>/_packaging/<REDACTED>/npm/registry/:_authToken = (protected)

; node bin location = C:\Program Files\nodejs\node.exe

; cwd = C:\Users\<REDACTED>

; HOME = C:\Users\<REDACTED>

; Run `npm config ls -l` to show all defaults.

| 1 |

I love the Acrylic effect in the new terminal. Really gives it a modern feel.

But at the moment, whenever you focus on a different program, i.e click away

from the terminal, it loses the Acrylic effect and goes to a solid color. I

have two monitors so most of the time, I have my terminal visible on the

second monitor while working in the first and most of the time the terminal is

just a solid black color. Would it be possible to make it so that it keeps the

Acrylic effect or is that a limitation of the UI toolkit?

|

Using Windows Store version 0.5.2762.0

Zipped DMP file attached.

conhost.exe.21732.dmp.zip

| 0 |

* I have searched the issues of this repository and believe that this is not a duplicate.

* I have checked the FAQ of this repository and believe that this is not a duplicate.

### Environment

* Dubbo version: 2.7.0

* Operating System version: Mac OS

* Java version: 1.8

### Steps to reproduce this issue

#2031 have fixed the writePlace stackOverflow issue. The code have been merged

into hessian-lite, but not in dubbo.

Right now, the issue still there in dubbo 2.7.0. But we are not able to embed

hessian-lite, as we are not able to remove hessian from dubbo(conflicts as

below).

Found in:

org.apache.dubbo:dubbo:jar:2.7.0:compile

com.alibaba:hessian-lite:jar:3.2.5:compile

Duplicate classes:

com/alibaba/com/caucho/hessian/io/HessianDebugState$DateState.class

com/alibaba/com/caucho/hessian/io/ThrowableSerializer.class

1. Define a class with a writeReplace method return this

public class WriteReplaceReturningItself implements Serializable {

private static final long serialVersionUID = 1L;

private String name;

WriteReplaceReturningItself(String name) {

this.name = name;

}

public String getName() {

return name;

}

/**

* Some object may return itself for wrapReplace, e.g.

* https://github.com/FasterXML/jackson-databind/blob/master/src/main/java/com/fasterxml/jackson/databind/JsonMappingException.java#L173

*/

Object writeReplace() {

//do some extra things

return this;

}

}

2. Use Hessian2Output to serialize it

ByteArrayOutputStream bout = new ByteArrayOutputStream();

Hessian2Output out = new Hessian2Output(bout);

out.writeObject(data);

out.flush();

3. Error occurs

java.lang.StackOverflowError

at com.alibaba.com.caucho.hessian.io.SerializerFactory.getSerializer(SerializerFactory.java:302)

at com.alibaba.com.caucho.hessian.io.Hessian2Output.writeObject(Hessian2Output.java:381)

at com.alibaba.com.caucho.hessian.io.JavaSerializer.writeObject(JavaSerializer.java:226)

at com.alibaba.com.caucho.hessian.io.Hessian2Output.writeObject(Hessian2Output.java:383)

at com.alibaba.com.caucho.hessian.io.JavaSerializer.writeObject(JavaSerializer.java:226)

Pls. provide [GitHub address] to reproduce this issue.

### Expected Result

The serialization process should complete with no exception or error.

### Actual Result

java.lang.StackOverflowError

at com.alibaba.com.caucho.hessian.io.SerializerFactory.getSerializer(SerializerFactory.java:302)

at com.alibaba.com.caucho.hessian.io.Hessian2Output.writeObject(Hessian2Output.java:381)

at com.alibaba.com.caucho.hessian.io.JavaSerializer.writeObject(JavaSerializer.java:226)

at com.alibaba.com.caucho.hessian.io.Hessian2Output.writeObject(Hessian2Output.java:383)

at com.alibaba.com.caucho.hessian.io.JavaSerializer.writeObject(JavaSerializer.java:226)

|

* I have searched the issues of this repository and believe that this is not a duplicate.

* I have checked the FAQ of this repository and believe that this is not a duplicate.

https://github.com/apache/incubator-

dubbo/blob/6e4ff91dfca4395a8d1b180f40f632e97acf779d/dubbo-monitor/dubbo-

monitor-

api/src/main/java/org/apache/dubbo/monitor/support/MetricsFilter.java#L44-L47

The way to calculate the invocation time can be improved by using

`java.lang.System#nanoTime`

| 0 |

I hope this is not a dupe, I was really surprised by this bug because - being

so simple in nature - it appears it should have been caught by your tests (or

at least stumbled upon by other people), hence there is a slight probability

that I misunderstood something or that the compiler isn't capable to do the

necessary work (at which point I'd be doubly disappointed).

Anyway, the example is artificial because it's extracted from a larger code

base.

Imagine a simple project, let's start with `tsconfig.json`

{

"compilerOptions": {

"target" : "ES5",

"noImplicitAny": true,

"out": "badout.js"

}

}

In the same folder, add a single file `c.ts` which is just `class C {}`

Add a subfolder called `sub` and put the following two files inside:

// file a.ts

class A extends B {

method1(p1:number, p2:string):void {}

}

// file b.ts

class B {

method1(p1:number, p2:string):void {}

}

Then simply run tsc in the project folder (the one containing `tsconfig.json`

and `c.ts`)

The output will be:

var C = (function () {

function C() {

}

return C;

})();

var __extends = this.__extends || function (d, b) {

for (var p in b) if (b.hasOwnProperty(p)) d[p] = b[p];

function __() { this.constructor = d; }

__.prototype = b.prototype;

d.prototype = new __();

};

var A = (function (_super) {

__extends(A, _super);

function A() {

_super.apply(this, arguments);

}

A.prototype.method1 = function (p1, p2) { };

return A;

})(B);

var B = (function () {

function B() {

}

B.prototype.method1 = function (p1, p2) { };

return B;

})();

The problem is obvious - we're trying to use `B` before it is defined.

If this has been noted and fixed, please forgive the noise.

If not, please try to look into fixing this before 1.5 is "done".

|

The compiler should issue an error when code uses values before they could

possibly be initialized.

// Error, 'Derived' declaration must be after 'Base'

class Derived extends Base { }

class Base { }

| 1 |

_Original tickethttp://projects.scipy.org/numpy/ticket/1044 on 2009-03-09 by

@pv, assigned to unknown._

\--- Continuation of #1440.

Ufuncs return array scalars for 0D array input:

>>> import numpy as np

>>> type(np.conjugate(np.array(1+2j)))

<type 'numpy.complex128'>

>>> type(np.sum(np.array(3.), np.array(5.)))

<type 'numpy.float64'>

Should they return 0D arrays instead?

|

I have a unit test failing on `np.divide.accumulate` with numpy 1.15.0

compiled with the Intel compiler. The same code is fine with a local build of

GCC.

Perhaps this is related to ENH: umath: don't make temporary copies for in-

place accumulation (#10665) where @juliantaylor fixed

`numpy/core/src/umath/loops.c.src` for GCC's `ivdep` pragma. That was reviewed

by @eric-wieser .

### Reproducing code example

Source code was 1.15.0 compiled with the Intel compiler 2018.1.163. where

np.divide.accumulate fails in the numpy unit tests.

I can reproduce with this Python 2.7 test case for `float64` dtype. It runs

correctly for `float32` and `float128` on the Intel compiler. It also runs

correctly for `float64` using GCC instead of Intel.

$ python -c "import numpy as np; acc = np.divide.accumulate;

a = np.ones(8, dtype=np.float64);

print acc(a, out=2*np.ones_like(a)); print acc(a); print acc(a); print acc(a);

print acc(a, out=2*np.ones_like(a))"

[1. 1. 2. 2. 2. 2. 2. 2.]

[1. 1. 1. 1. 2. 2. 2. 2.]

[1. 1. 1. 1. 1. 1. 2. 2.]

[1. 1. 1. 1. 1. 1. 1. 1.]

[1. 1. 2. 2. 2. 2. 2. 2.]

Notice how only the next two elements are set each time `np.divide.accumulate`

runs. That suggests the `float64`s are being processed in pairs. Then in the

final line, specifying the output array again returns to the first line's

incorrect behavior.

### Numpy/Python version information:

numpy 1.15.0 build with ICC 2018.1.163.

python 2.7.11 built with GCC 4.9.3.

| 0 |

With this issue I would like to track the efforts in integrating the

cudnn library within tensorflow.

As of June 17th 2016 doing a manual grep on the repository gives these

functions as being mapped from cudnn to the stream executor,

From chapter 4 of the cudnn User Guide version 5.0 (April 2016):

* cudnnGetVersion

* cudnnGetErrorString

* cudnnCreate

* cudnnDestroy

* cudnnSetStream

* cudnnGetStream

* cudnnCreateTensorDescriptor

* cudnnSetTensor4dDescriptor

* cudnnSetTensor4dDescriptorEx

* cudnnGetTensor4dDescriptor

* cudnnSetTensorNdDescriptor

* cudnnDestroyTensorDescriptor

* cudnnTransformTensor

* cudnnAddTensor

* cudnnOpTensor

* cudnnSetTensor

* cudnnScaleTensor

* cudnnCreateFilterDescriptor

* cudnnSetFilter4dDescriptor

* cudnnGetFilter4dDescriptor

* cudnnSetFilter4dDescriptor_v3 (versioned)

* cudnnGetFilter4dDescriptor_v3 (versioned)

* cudnnSetFilter4dDescriptor_v4 (versioned)

* cudnnGetFilter4dDescriptor_v4 (versioned)

* cudnnSetFilterNdDescriptor

* cudnnGetFilterNdDescriptor

* cudnnGetFilterNdDescriptor_v3 (versioned)

* cudnnGetFilterNdDescriptor_v3 (versioned)

* cudnnGetFilterNdDescriptor_v4 (versioned)

* cudnnGetFilterNdDescriptor_v4 (versioned)

* cudnnDestroyFilterDescriptor

* cudnnCreateConvolutionDescriptor

* cudnnSetConvolution2dDescriptor

* cudnnGetConvolution2dDescriptor

* cudnnGetConvolution2dForwardOutputDim

* cudnnSetConvolutionNdDescriptor

* cudnnGetConvolutionNdDescriptor

* cudnnGetConvolutionNdForwardOutputDim

* cudnnDestroyConvolutionDescriptor

* cudnnFindConvolutionForwardAlgorithm

* cudnnFindConvolutionForwardAlgorithmEx

* cudnnGetConvolutionForwardAlgorithm

* cudnnGetConvolutionForwardWorkspaceSize

* cudnnConvolutionForward

* cudnnConvolutionBackwardBias

* cudnnFindConvolutionBackwardFilterAlgorithm

* cudnnFindConvolutionBackwardFilterAlgorithmEx

* cudnnGetConvolutionBackwardFilterAlgorithm

* cudnnGetConvolutionBackwardFilterWorkspaceSize

* cudnnConvolutionBackwardFilter

* cudnnFindConvolutionBackwardDataAlgorithm

* cudnnFindConvolutionBackwardDataAlgorithmEx

* cudnnGetConvolutionBackwardDataAlgorithm

* cudnnGetConvolutionBackwardDataWorkspaceSize

* cudnnConvolutionBackwardData

* cudnnSoftmaxForward

* cudnnSoftmaxBackward

* cudnnCreatePoolingDescriptor

* cudnnSetPooling2dDescriptor

* cudnnGetPooling2dDescriptor

* cudnnSetPoolingNdDescriptor

* cudnnGetPoolingNdDescriptor

* cudnnSetPooling2dDescriptor_v3 (versioned)

* cudnnGetPooling2dDescriptor_v3 (versioned)

* cudnnSetPoolingNdDescriptor_v3 (versioned)

* cudnnGetPoolingNdDescriptor_v3 (versioned)

* cudnnSetPooling2dDescriptor_v4 (versioned)

* cudnnGetPooling2dDescriptor_v4 (versioned)

* cudnnSetPoolingNdDescriptor_v4 (versioned)

* cudnnGetPoolingNdDescriptor_v4 (versioned)

* cudnnDestroyPoolingDescriptor

* cudnnGetPooling2dForwardOutputDim

* cudnnGetPoolingNdForwardOutputDim

* cudnnPoolingForward

* cudnnPoolingBackward

* cudnnActivationForward

* cudnnActivationBackward

* cudnnCreateActivationDescriptor

* cudnnSetActivationDescriptor

* cudnnGetActivationDescriptor

* cudnnDestroyActivationDescriptor

* cudnnActivationForward_v3 (versioned)

* cudnnActivationBackward_v3 (versioned)

* cudnnActivationForward_v4 (versioned)

* cudnnActivationBackward_v4 (versioned)

* cudnnCreateLRNDescriptor

* cudnnSetLRNDescriptor

* cudnnGetLRNDescriptor

* cudnnDestroyLRNDescriptor

* cudnnLRNCrossChannelForward

* cudnnLRNCrossChannelBackward

* cudnnDivisiveNormalizationForward

* cudnnDivisiveNormalizationBackward

* cudnnBatchNormalizationForwardInference

* cudnnBatchNormalizationForwardTraining

* cudnnBatchNormalizationBackward

* cudnnDeriveBNTensorDescriptor

* cudnnCreateRNNDescriptor

* cudnnDestroyRNNDescriptor

* cudnnSetRNNDescriptor

* cudnnGetRNNWorkspaceSize

* cudnnGetRNNTrainingReserveSize

* cudnnGetRNNParamsSize

* cudnnGetRNNLinLayerMatrixParams

* cudnnGetRNNLinLayerBiasParams

* cudnnRNNForwardInference

* cudnnRNNForwardTraining

* cudnnRNNBackwardData

* cudnnRNNBackwardWeights

* cudnnCreateDropoutDescriptor

* cudnnDestroyDropoutDescriptor

* cudnnDropoutGetStatesSize

* cudnnDropoutGetReserveSpaceSize

* cudnnSetDropoutDescriptor

* cudnnDropoutForward

* cudnnDropoutBackward

* cudnnCreateSpatialTransformerDescriptor

* cudnnDestroySpatialTransformerDescriptor

* cudnnSetSpatialTransformerNdDescriptor

* cudnnSpatialTfGridGeneratorForward

* cudnnSpatialTfGridGeneratorBackward

* cudnnSpatialTfSamplerForward

* cudnnSpatialTfSamplerBackward

### Batch Normalization

Seems @lukemetz was working on it but has stalled for a bit #1759

### What is the plan for the RNN ?

I know @wchan was working on a cpu version. I was trying to get a stub at

using the cudnn version but from the comments in that thread seemed like

@zheng-xq was working on it internally.

Can anyone comment on the status of these ops?

### Other Questions

1. Any reasons why the Softmax functions are not being used ?

2. Would make sense to split the above list in chunks and create issues with contribution welcome, so external contributors can tackle them without duplicating internal work, or mark what google will be working on internally ?

I hope this helps in organizing the work around the cudnn and inspire the

community to contribute. I will try to keep this issue up to date.

| 1 | |

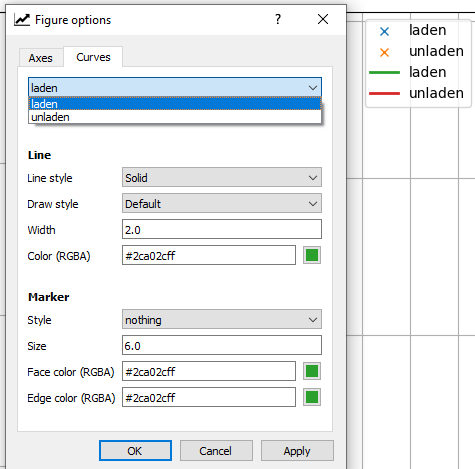

### Bug report

**Bug summary**

If there are multiple curves with the same label in a figure/subplot, only the

last one of them can be selected in the _Figure options_ window under the

_Curves_ tab. In the legend however, they appear as they should.

**Code for reproduction**

import matplotlib.pyplot as plt

plt.plot([0,1],[0,1],label="line")

plt.plot([0,1],[1,0],label="line")

plt.legend()

plt.show()

**Actual outcome**

See description above and the image below.

**Expected outcome**

All curves should be listed in the _Figure options_ window, even if they have

the same label.

**Matplotlib version**

* Operating system: Windows 10

* Matplotlib version (`import matplotlib; print(matplotlib.__version__)`): 3.3.4

* Matplotlib backend (`print(matplotlib.get_backend())`): Qt5Agg

* Python version: 3.8.5

* Jupyter version (if applicable): -

* Other libraries: -

Matplotlib has been installed with pip.

|

### Bug report

**Bug summary**

**Code for reproduction**

# Paste your code here

#

#

**Actual outcome**

# If applicable, paste the console output here

#

#

**Expected outcome**

**Matplotlib version**

* Operating system:

* Matplotlib version:

* Matplotlib backend (`print(matplotlib.get_backend())`):

* Python version:

* Jupyter version (if applicable):

* Other libraries:

| 0 |

##### ISSUE TYPE

* Bug Report

##### COMPONENT NAME

Templating

##### ANSIBLE VERSION

1.8.2

##### CONFIGURATION

##### OS / ENVIRONMENT

N/A (but rhel7)

##### SUMMARY

When entering passwords for login/sudo, they are parsed by the templater

instead of being used literally. This creates unexpected behaviour - for

example, with a password that contains a '{':

$ ansible -i "testhost1," all -k -m ping

SSH password:

testhost1 | FAILED => template error while templating string: unexpected token 'eof'

$

If the substring in the password were valud template syntax we'd just get

'invalid password' errors, which would be even more confusing.

There is potential for destructive behaviour and - while very minor -

potential security concerns by use of a malicious password (I don't have a

specific example, but in places with esoteric security implementations one

could see this being exploitable).

##### STEPS TO REPRODUCE

* Set password on remote host '123abc{'

* Attempt ansible run with -k

##### EXPECTED RESULTS

Successfully authenticates against remote host and runs my command

##### ACTUAL RESULTS

Connecting to host host fails with 'error while templating string'

SSH password:

testhost1 | FAILED => template error while templating string: unexpected token 'eof'

|

##### ISSUE TYPE

* Documentation Report

##### COMPONENT NAME

https://docs.ansible.com/ansible/latest/dev_guide/developing_modules_documenting.html#return-

block

##### ANSIBLE VERSION

N/A since it's web documentation

##### CONFIGURATION

N/A

##### OS / ENVIRONMENT

N/A

##### SUMMARY

Documentation has the description for`returned` as "When this value is

returned, such as always, on success, always". First, always is repeated.

Second, it should list all the options for returned and not just 3.

##### STEPS TO REPRODUCE

N/A

##### EXPECTED RESULTS

I'd expect this to be more thorough with a bulleted list of values and what

they mean. Such as...

always - Returned on every request

success - Returned only when request was successful

##### ACTUAL RESULTS

See webpage.

| 0 |

It would be great if Bootstraps javascripts would be compatible with Zepto. As

Zepto is almost API compatible with jquery this shouldn't be that hard.

| 0 | |

please add 'raw' to modules allowed to have duplicate parameters.

ansible/lib/ansible/runner/__init__.py

Line 444 in be4dbe7

| is_shell_module = self.module_name in ('command', 'shell')

---|---

- is_shell_module = self.module_name in ('command', 'shell')

+ is_shell_module = self.module_name in ('command', 'shell', 'raw')

thank you.

|

##### Issue Type:

Feature Idea

##### Ansible Version:

1.6.6

##### Environment:

N/A

##### Summary:

I developed a lookup plugin which is for pulling secret keys from the cloud

server. However, when I use `with_secret` statement like this

- name: foo

bar: secret={{ item }}

with_secret: eggs/spam/secret.txt

and you will see something like this on the screen when you run ansible

ok: [default] => (item="MY_SUPER_SECRET_KEY_HERE")

I really don't like how this works, it displays your secret key on screen. If

you redirect logging for ansible to a file or what, then all your secret keys

are in the file now.

And in other situations, like with_file, you may see something like

ok: [default] => (item="

thousand lines here

thousand lines here

thousand lines here

thousand lines here

thousand lines here

....

I think this is really bad for ansible to print literately everything it got

from a lookup. So, I propose, for a lookup class, if a `sanitize` method is

defined, ansible should then call the method for each item before printing it

on the screen. So that for my secret getting lookup I can write something like

this

def sanitize(self, item):

return '*' * 8

And for huge file and other things, people can write something like

def sanitize(self, item):

return 'File {} ({} bytes)'.format(item._filename, item._size)

def run(self, terms, inject=None, **kwargs):

result = 'a long long long long file content'

result._filename = terms

result._size = len(result)

return [result]

##### Steps To Reproduce:

N/A

##### Expected Results:

N/A

##### Actual Results:

N/A

| 0 |

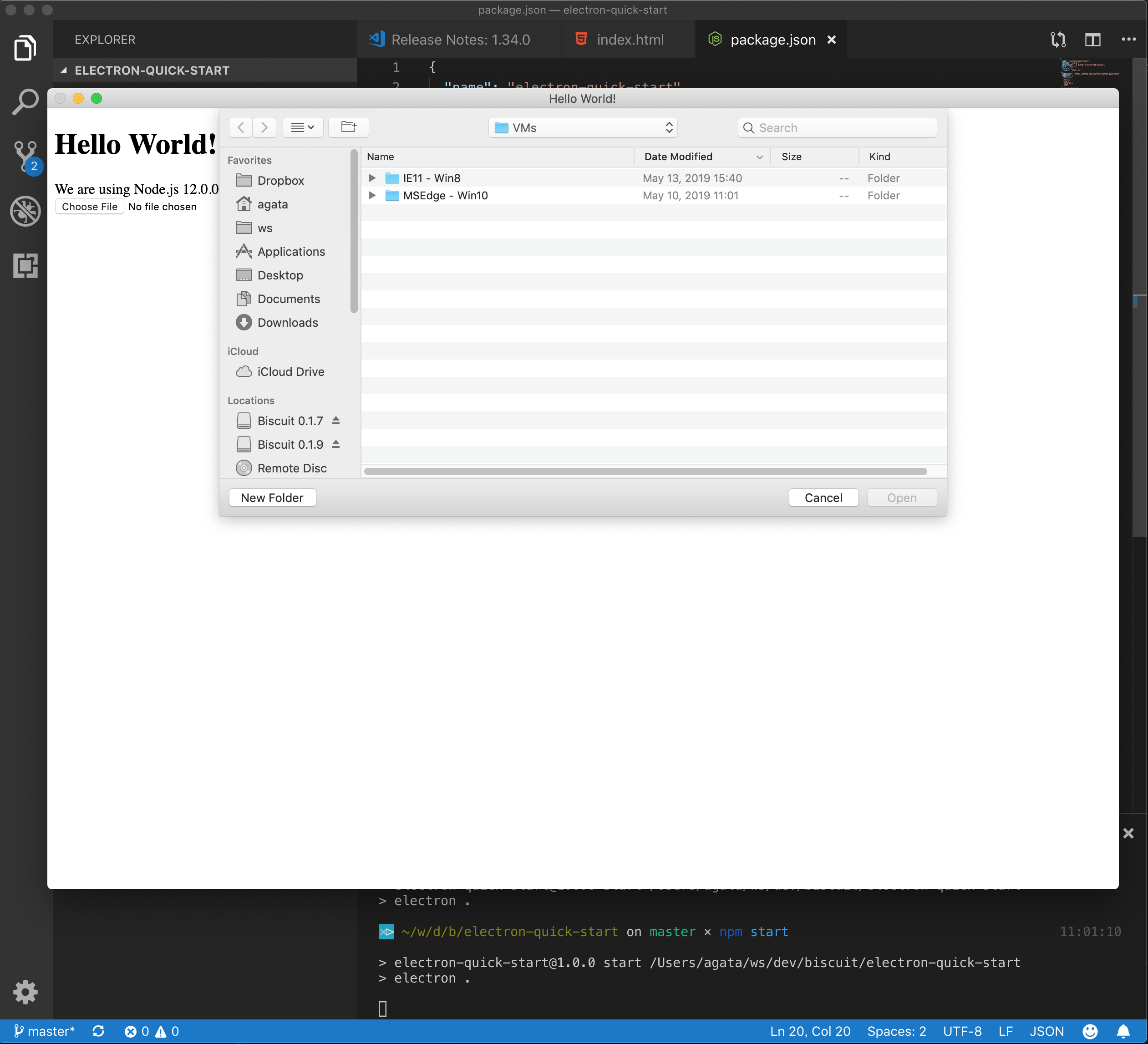

* Electron Version: 3.0 Beta 4

* Operating System (Platform and Version): Windows10, 8 (x86 and x64)

* Last known working Electron version: 2.x

**Expected Behavior**

PDF should be displayed.

**Actual behavior**

"Save As.." dialog for download appears and pdf will not be displayed, even

when downloaded.

**To Reproduce**

Create a BrowserWindow and load an URL with a pdf or iframes with pdfs.

const win = new BrowserWindow({

width: 800,

height: 600,

webPreferences: {

plugins: true

}

});

win.webContents.loadURL(URL_TO_PDF);

|

* Electron version: 0.36.7

* Operating system: OS X

My electron app needs to make an HTTP request to a service that returns

malformed headers:

$ curl -IS 'http://192.168.0.1/getdeviceinfo/info.bin'

HTTP/1.1 200 OK

Date: Wed, 02 Dec 2015 16:42:45 GMT

Server: nostradamus 1.9.5

Connection: close

Etag: Aug 28 2015, 07:57:20

Transfer-Encoding: chunked

HTTP/1.0 200 OK

Content-Type: text/html

Notice the duplicate `200 OK` header? Yeah, so does the native Node.js parser

and it chokes. In a standalone test script, I've found that I can use the

`http-parser-js` library to make the same request and it handles the bad

headers gracefully.

Now I need to make that work within the Electron app that needs to actually

make the call and retrieve the data and it's failing with the same

`HPE_INVALID_HEADER_TOKEN` I've been getting all along. I assume, for that

reason, that the native HTTP parser is not getting overridden the way that it

does in the test script.

In my electron app's main process, I have the same code I used in my test

script:

process.binding('http_parser').HTTPParser = require('http-parser-js').HTTPParser;

var http = require('http');

var req = http.request( ... )

Is there an alternate process binding syntax I can use within Electron? Or

some other means of making an HTTP request without taxing the Node parser?

| 0 |

Fixing issue #9691 revealed a deeper problem with the QuadPrefixTree's memory

usage. At 1m precision the example shape in

https://gist.github.com/nknize/abbcb87f091b891f85e1 consumes more than 1GB of

memory. This is initially alleviated by using 2 bit encoded quads (instead of

1byte) but only delays the problem. Moreover, as new complex shapes are added

duplicate quadcells are created - thus introducing unnecessary redundant

memory consumption (an inverted index approach makes mosts sense - its

Lucene!).

For now, if a QuadTree is used for complex shapes great care must be taken and

precision must be sacrificed (something that's automatically done with the

distance_error_pct without the user knowing - which is a TERRIBLE approach).

An alternative improvement could be to apply a Hilbert R-Tree - which will be

explored as a separate issue. Or to restrict the accuracy to a lower level of

precision (something that's undergoing experimentation).

|

**Elasticsearch version** :

2.3.4

**JVM version** :

1.8

# some gc log

[monitor.jvm ] [Powerpax] [gc][young][132059][21130] duration [756ms],

collections [1]/[1.4s], total [756ms]/[10.3m], memory

[26.7gb]->[24.1gb]/[29.6gb

], all_pools {[young] [2.7gb]->[3.2mb]/[2.7gb]}{[survivor]

[20.6mb]->[240.8mb]/[357.7mb]}{[old] [23.9gb]->[23.9gb]/[26.5gb]}

# doubt

I use bulk to index. 30 billion doc very day. But some node alway out of

cluster because long time full gc after some days.

I dump bin log and find taskManager object cost most of memory(18GB), I doubt

why taskManager cost cost many memory ? It cache large number of task(request)

? some body can explain the situation? thanks

| 0 |

Apparently there isn't any narrative documentations for some non-clustering

metrics as it was pointed in #1507.

|

There is no user guide on the classification / regression metrics....

| 1 |

## The Problem

VSCode can nicely handle comments in JSON files. However, the JSON

specification does **not** allow comments in JSON files. Therefore most JSON

parsers fail when JSON files contain comments. E.g. `package.json` fails, and

even the typescript compiler fails on tsconfig.json files that include

comments.

Another problem is that syntax highlighting does not work on github. For

example, the file `extensions/xml/xml.configuration.json` ist not legal JSON.

This is symptomatic for repositories that are managed with VSCode:

* @dbaeumer in `eslint/.vscode/tasks.json`

* @weinand in `vscode-node-debug/.vscode/launch.json`

* @egamma in `vscode-go/blame/master/.vscode/launch.json`

## Proposal: create a new file type, e.g. `.tson`

Instead of misleading people about the syntax of JSON by providing support for

comments in JSON files, VSCode should rather use a new format like `TSON`,

that would be an extension of JSON that allows for comments. There could be a

simpler pre-processor for TSON (like JSON.minify) that converts TSON into JSON

by stripping away the comments.

With such a new file type, VSCode could happily use them without compromising

the integrity of existing `.json` files.

In addition, it would be cool to use typescript ambient definitions for

`.tson` files. I really like the typescript schema for `tasks.json`.

|

I'm running VSCode in a corporate network (Active Directory), when I install

VSCode I'm asked for an admin password, after the installation normal users

sessions don't have the VSCode context menu options, only the admin account

has that menu.

How can I add the context menu for all users?

| 0 |

In order to have nice looking paths without hash or bang, I am using history

mode:

var router = new VueRouter({

routes: routes,

mode: 'history'

})

However, when I refresh a page, I get a 404 error. If I remove mode:

'history', I can go directly to urls at a path and refresh pages in my

browser.

Can I remove hash and bang (#!) from my urls and be able to refresh pages and

use direct urls to a path?

|

### Vue.js version

2.0.2

### Reproduction Link

When I deployment my vue app to IIS, refresh page in SPA component, I go 404

error

| 1 |

#### Challenge Name

https://www.freecodecamp.com/challenges/target-the-children-of-an-element-

using-

jquery#?solution=%0Afccss%0A%20%20%24(document).ready(function()%20%7B%0A%20%20%20%20%24(%22%23target1%22).css(%22color%22%2C%20%22red%22)%3B%0A%20%20%20%20%24(%22%23target1%22).prop(%22disabled%22%2C%20true)%3B%0A%20%20%20%20%24(%22%23target4%22).remove()%3B%0A%20%20%20%20%24(%22%23target2%22).appendTo(%22%23right-

well%22)%3B%0A%20%20%20%20%24(%22%23target5%22).clone().appendTo(%22%23left-

well%22)%3B%0A%20%20%20%20%24(%22%23target1%22).parent().css(%22background-

color%22%2C%20%22red%22)%3B%0A%20%20%20%20%24(%22%23right-

well%22).children().css(%22color%22%2C%20%22orange%22)%0A%20%20%7D)%3B%0Afcces%0A%0A%3C!--%20Only%20change%20code%20above%20this%20line.%20--%3E%0A%0A%3Cdiv%20class%3D%22container-

fluid%22%3E%0A%20%20%3Ch3%20class%3D%22text-primary%20text-

center%22%3EjQuery%20Playground%3C%2Fh3%3E%0A%20%20%3Cdiv%20class%3D%22row%22%3E%0A%20%20%20%20%3Cdiv%20class%3D%22col-

xs-6%22%3E%0A%20%20%20%20%20%20%3Ch4%3E%23left-

well%3C%2Fh4%3E%0A%20%20%20%20%20%20%3Cdiv%20class%3D%22well%22%20id%3D%22left-

well%22%3E%0A%20%20%20%20%20%20%20%20%3Cbutton%20class%3D%22btn%20btn-

default%20target%22%20id%3D%22target1%22%3E%23target1%3C%2Fbutton%3E%0A%20%20%20%20%20%20%20%20%3Cbutton%20class%3D%22btn%20btn-

default%20target%22%20id%3D%22target2%22%3E%23target2%3C%2Fbutton%3E%0A%20%20%20%20%20%20%20%20%3Cbutton%20class%3D%22btn%20btn-

default%20target%22%20id%3D%22target3%22%3E%23target3%3C%2Fbutton%3E%0A%20%20%20%20%20%20%3C%2Fdiv%3E%0A%20%20%20%20%3C%2Fdiv%3E%0A%20%20%20%20%3Cdiv%20class%3D%22col-

xs-6%22%3E%0A%20%20%20%20%20%20%3Ch4%3E%23right-

well%3C%2Fh4%3E%0A%20%20%20%20%20%20%3Cdiv%20class%3D%22well%22%20id%3D%22right-

well%22%3E%0A%20%20%20%20%20%20%20%20%3Cbutton%20class%3D%22btn%20btn-

default%20target%22%20id%3D%22target4%22%3E%23target4%3C%2Fbutton%3E%0A%20%20%20%20%20%20%20%20%3Cbutton%20class%3D%22btn%20btn-

default%20target%22%20id%3D%22target5%22%3E%23target5%3C%2Fbutton%3E%0A%20%20%20%20%20%20%20%20%3Cbutton%20class%3D%22btn%20btn-

default%20target%22%20id%3D%22target6%22%3E%23target6%3C%2Fbutton%3E%0A%20%20%20%20%20%20%3C%2Fdiv%3E%0A%20%20%20%20%3C%2Fdiv%3E%0A%20%20%3C%2Fdiv%3E%0A%3C%2Fdiv%3E%0A

#### Issue Description

This page was buggy for me on a new iMac. I know this because it didn't work

with my code, and then I tried other things that didn't work, and when I

returned it to my first code (no small errors btw), it functioned fine.

Also, there has been a bug with this code:

$("#target5").clone().appendTo("#left-well");

Apparently, it seems to place two clones at times (most often when I click

CTRL+RTN to test).

Hopefully this helps!

#### Browser Information

* Browser Name, Version: CHROME 54.0.2840.71

* Operating System: OSX El Capitan 10.11.6

* Mobile, Desktop, or Tablet: DESKTOP

#### Your Code

<script> $(document).ready(function() { $("#target1").css("color", "red");

$("#target1").prop("disabled", true); $("#target4").remove();

$("#target2").appendTo("#right-well"); $("#target5").clone().appendTo("#left-

well"); $("#target1").parent().css("background-color", "red"); $("#right-

well").children().css("color", "orange") }); </script>

### jQuery Playground

#### #left-well

#target1 #target2 #target3

#### #right-well

#target4 #target5 #target6

#### Screenshot

|

Challenge Waypoint: Clone an Element Using jQuery has an issue.

User Agent is: `Mozilla/5.0 (Macintosh; Intel Mac OS X 10_10_5)

AppleWebKit/537.36 (KHTML, like Gecko) Chrome/47.0.2526.73 Safari/537.36`.

Please describe how to reproduce this issue, and include links to screenshots

if possible.

## Issue

I believe I've found bug in the phone simulation in **Waypoint: Clone an

Element Using jQuery** :

I entered the code to clone `target5` and append it to `left-well`, and now I

see three **target5** buttons in the phone simulator. FCC says my code is

correct and advances me to the next challenge. The following challenges also

show three target5 buttons:

* Waypoint: Target the Parent of an Element Using jQuery

* Waypoint: Target the Children of an Element Using jQuery

@qualitymanifest confirms this issue on his **Linux** box.

## My code:

<script>

$(document).ready(function() {

$("#target1").css("color", "red");

$("#target1").prop("disabled", true);

$("#target4").remove();

$("#target2").appendTo("#right-well");

$("#target5").clone().appendTo("#left-well");

});

</script>

<!-- Only change code above this line. -->

<div class="container-fluid">

<h3 class="text-primary text-center">jQuery Playground</h3>

<div class="row">

<div class="col-xs-6">

<h4>#left-well</h4>

<div class="well" id="left-well">

<button class="btn btn-default target" id="target1">#target1</button>

<button class="btn btn-default target" id="target2">#target2</button>

<button class="btn btn-default target" id="target3">#target3</button>

</div>

</div>

<div class="col-xs-6">

<h4>#right-well</h4>

<div class="well" id="right-well">

<button class="btn btn-default target" id="target4">#target4</button>

<button class="btn btn-default target" id="target5">#target5</button>

<button class="btn btn-default target" id="target6">#target6</button>

</div>

</div>

</div>

</div>

| 1 |

> Issue originally made by @dfilatov

### Bug information

* **Babel version:** 6.3.13

* **Node version:** 4.2.1

* **npm version:** 2.14.7

### Options

--presets es2015

### Input code

export * from './client/mounter';

### Description

Given input code will be transformed to:

var _mounter = require('./client/mounter');

for (var _key in _mounter) {

if (_key === "default") continue;

Object.defineProperty(exports, _key, {

enumerable: true,

get: function get() {

return _mounter[_key];

}

});

}

In result all reexported stuff will reference to the same `_mounter[_key]`,

where `_key` is a last key from `_mounter`.

|

flow v0.32.0:

> New syntax for exact object types: use {| and |} instead of { and }. Where

> {x: string} contains at least the property x, {| x: string |} contains ONLY

> the property x.

| 0 |

I have a form some fields are not validated based on the validation groups.

The validation works as expected but the error message is always added to the

wrong field. It seems to just use which ever property is last in the

validation.yml file.

I believe my use case is valid. It worked fine in 2.4 but when testing 2.5

BETA2 there were test failures. Also seems to be an issue with the master

branch.

I found it easiest to reproduce the issue using a system test. This can be

found in the following repository: https://github.com/tompedals/symfony-form-

test

Model: https://github.com/tompedals/symfony-form-

test/blob/master/src/Test/FormTestBundle/Model/Attachment.php

Form: https://github.com/tompedals/symfony-form-

test/blob/master/src/Test/FormTestBundle/Form/AttachmentType.php

Validation mapping: https://github.com/tompedals/symfony-form-

test/blob/master/src/Test/FormTestBundle/Resources/config/validation.yml

Test: https://github.com/tompedals/symfony-form-

test/blob/master/src/Test/FormTestBundle/Tests/Form/AttachmentTypeTest.php

Use `phpunit -c app` to run the tests.

|

Hello,

When using Validator 2.5 to validate an array of scalars, the `propertyPath`

of the returned violations has really strange values.

Consider the following script:

<?php

require 'vendor/autoload.php';

use Symfony\Component\Validator\Constraints;

use Symfony\Component\Validator\Validation;

$constraints = array(

'foo' => array(

new Constraints\NotBlank(),

new Constraints\Date()

),

'bar' => new Constraints\NotBlank()

);

$collectionConstraint = new Constraints\Collection(array('fields' => $constraints));

$validator = Validation::createValidatorBuilder()

->setApiVersion(Validation::API_VERSION_2_5)

->getValidator();

$value = array(

'foo' => 'bar'

);

$violations = $validator->validate($value, $collectionConstraint);

foreach ($violations as $violation) {

var_dump($violation->getPropertyPath());

}

Output:

string(11) "[foo].[foo]"

string(17) "[foo].[foo].[bar]"

If I set `Validation::API_VERSION_2_4` and call the old `validateValue`

method, it outputs:

string(5) "[foo]"

string(5) "[bar]"

Is this something expected ?

| 1 |

NMF in scikit-learn overview :

**Current implementation (code):**

\- loss = squared (aka Frobenius norm)

\- method = projected gradient

\- regularization = trick to enforce sparseness or low error with beta / eta

**#1348 (or in gist):**

\- loss = (generalized) Kullback-Leibler divergence (aka I-divergence)

\- method = multiplicative update

\- regularization = None

**#2540 [WIP]:**

\- loss = squared (aka Frobenius norm)

\- method = multiplicative update

\- regularization = None

**#896 (or in gist):**

\- loss = squared (aka Frobenius norm)

\- method = coordinate descent, no greedy selection

\- regularization = L1 or L2

**Papers describing the methods:**

\- Multiplicative update

\- Projected gradient

\- Coordinate descent with greedy selection

* * *

**About the uniqueness of the results**

The problem is non-convex, and there is no unique minimum:

Different losses, different initializations, and/or different optimization

methods generally give different results !

**About the methods**

* The multiplicative update (MU) is the most widely used because of it's simplicity. It is very easy to adapt it to squared loss, (generalized) Kullback-Leibler divergence or Itakura–Saito divergence, which are 3 specific cases of the so-called beta-divergence. All three losses seem used in practice. A regularization L1 or L2 can easily be added.

* The Projected gradient (PG) seems very efficient for the squared loss, but does not scale well (w.r.t X size) for the (generalized) KL divergence. A L1 or L2 regularization could possibly be added in the gradient step. I don't know where the sparseness enforcement trick in current code comes from.

* The Coordinate Descent (CD) seems even more efficient for squared loss, and we can add easily L1 or L2 regularization. It can be further speeded up by a greedy selection of coordinate. The adaptation for KL divergence is possible with a Newton method for solving subproblem (slower), but without greedy selection. This adaptation is supposed to be faster than MU-NMF with (generalized) KL divergence.

**About the initialization**

Different schemes exist, and can change significantly both result and speed.

They can be used independantly for each NMF method.

**About the stopping condition**

Actual stopping condition in PG-NMF is bugged (#2557), and leads to poor

minima when the tolerance is not low enough, especially in the random

initialization scheme. It is also completely different from stopping condition

in MU-NMF, which is very difficult to set. Talking with audio scientists (who

use a lot MU-NMF for source seperation) reveals that they just set a number of

iteration.

* * *

As far as I understand NMF, as there is no unique minimum, there is no perfect

loss/method/initialization/regularization. A good choice for some dataset can

be terrible for another one. I don't know how many methods we want to maintain

in scikit-learn, and how much we want to guide users with few possibilities,

but several methods seems more useful than only one.

I have tested MU-NMF, PG-NMF and CD-NMF from scikit-learn code, #2540 and

#896, with squared loss and no regularization, on a subsample of 20news

dataset, and performances are already very different depending on the

initialization (see below).

**Which methods do we want in scikit-learn?**

Why do we have stopped #1348 or #896 ?

Do we want to continue #2540 ?

I can work on it as soon as we have decided.

* * *

NNDSVD (similar curves than NNDSVRAR)

NNDSVDA

Random run 1

Random run 2

Random run 3

|

We should fix the remaining sphinx warnings here:

https://circleci.com/gh/scikit-learn/scikit-learn/1629

and then make circle IO error if there are any warnings (grep'ing for

`WARNINGS` I guess?).

This way we immediately see if someone broke anything in the docs.

| 0 |

**Christian Nelson** opened **SPR-6118** and commented

Spring JDBC 3.0.0.M4 maven pom includes derby and derby.client - these should

be optional dependencies.

Here is the relevant output from mvn dependency:tree...

[INFO] +- org.springframework:spring-orm:jar:3.0.0.M4:compile

[INFO] | +- org.slf4j:slf4j-jdk14:jar:1.5.2:compile

[INFO] | +- org.springframework:spring-beans:jar:3.0.0.M4:compile

[INFO] | +- org.springframework:spring-core:jar:3.0.0.M4:compile

[INFO] | | - org.springframework:spring-asm:jar:3.0.0.M4:compile

[INFO] | +- org.springframework:spring-jdbc:jar:3.0.0.M4:compile

**[INFO] | | +-

org.apache.derby:com.springsource.org.apache.derby:jar:10.5.1000001.764942:compile**

**[INFO] | | -

org.apache.derby:com.springsource.org.apache.derby.client:jar:10.5.1000001.764942:compile**

[INFO] | - org.springframework:spring-tx:jar:3.0.0.M4:compile

[INFO] | +- aopalliance:aopalliance:jar:1.0:compile

[INFO] | +- org.springframework:spring-aop:jar:3.0.0.M4:compile

[INFO] | - org.springframework:spring-context:jar:3.0.0.M4:compile

[INFO] | - org.springframework:spring-expression:jar:3.0.0.M4:compile

These dependencies should be configured as <optional>true</optional> since

they're not required for regular usage of spring-jdbc.

* * *

**Affects:** 3.0 M4

**Issue Links:**

* #10777 Spring JDBC POM should declare derby dependency is optional ( _ **"duplicates"**_ )

|

**Keith Garry Boyce** opened **SPR-1412** and commented

I have a situation when I need access to the portlet name in the controller to

then in turn look up data related to that data in db. I don't want a separate

controller config for each portlet s it would seem if framework detected that

I implement and interface requiring that then it would do the right thing and

give me access to read it. What do you think?

* * *

**Affects:** 2.0 M1

| 0 |

In Polish language exists letter 'ś', written by pressing rightAlt+s. In atom

this combination brings up the 'Spec suite' window.

Worth noticing is fact, that in settings shortcut responsible for this is

**ctrl** -alt-s, yet rightAlt+s alone brings up the window too.

I tried adding

'body':

'ralt-s': ''

'ctrl-alt-s' : ''

to the keymap, but it didn't fix my problem.

Expected change:

* chaning default shortcut to a non-colliding one

or

* making only ctrl-alt-s as hotkey and not rightAlt-s,

|

Original issue: atom/atom#1625

* * *

Use https://atom.io/packages/keyboard-localization until this issue gets fixed

(should be in the Blink upstream).

| 1 |

**I'm submitting a ...** (check one with "x")

[x] bug report => search github for a similar issue or PR before submitting

[ ] feature request

[ ] support request => Please do not submit support request here, instead see https://github.com/angular/angular/blob/master/CONTRIBUTING.md#question

**Current behavior**

When using Angular 2.3.0 with Safari 9, the application does not start up at

all. Instead the console shows the following error message:

Error: (SystemJS) Strict mode does not allow function declarations in a lexically nested statement.

eval@[native code]

invoke@http://localhost:8000/lib/zone.js:229:31

run@http://localhost:8000/lib/zone.js:113:49

http://localhost:8000/lib/zone.js:509:60

invokeTask@http://localhost:8000/lib/zone.js:262:40

runTask@http://localhost:8000/lib/zone.js:151:57

drainMicroTaskQueue@http://localhost:8000/lib/zone.js:405:42

run@http://localhost:8000/lib/shim.js:4005:30

http://localhost:8000/lib/shim.js:4018:32

flush@http://localhost:8000/lib/shim.js:4373:12

Evaluating http://localhost:8000/lib/@angular/compiler/bundles/compiler.umd.js

Error loading http://localhost:8000/lib/@angular/compiler/bundles/compiler.umd.js as "@angular/compiler" from http://localhost:8000/lib/@angular/platform-browser-dynamic/bundles/platform-browser-dynamic.umd.js — zone.js:672

**Expected behavior**

Expected it, that the application works and starts up according to the

supported browser matrix.

The same plunkr - as shown below - works as expected in Safari 10.

**Minimal reproduction of the problem with instructions**

To reproduce you can use the template offered when creating a new issue:

http://plnkr.co/edit/tpl:AvJOMERrnz94ekVua0u5

Fire it up in Safari 9 and take a look into the console.

**Please tell us about your environment:**

macOS El Capitan 10.11.6

* **Angular version:** 2.3.0

* **Browser:** Safari 9.1.2

Error may be related to #13301

| 0 | |

* VSCode Version: 1.1.0

* OS Version: OS X 10.11.4

Steps to Reproduce:

1. Create a new text file `test.js`

2. Set the content to the following:

let x = true ? '' : `${1}`

console.log('still part of the template!')

VS Code fails to parse the opening `of the template string at the end of the

ternary operator correctly. It therefore sees the final` as an opening tick,

and interprets the rest of the file as a template string:

As a workaround you can wrap the failure case in parenthesis:

let x = true ? '' : (`${1}`)

console.log('still part of the template!')

|

_From@garthk on April 8, 2016 4:30_

* VSCode Version: `Version 0.10.10 (0.10.10)` or `Version 0.10.14-insider (0.10.14-insider) 17fa1cbb49e3c5edd5868f304a64115fcc7c9c2c`

* OS Version: OS X `10.11.4 (15E65)`

* `javascript.validate.enable` set either `true` or `false`

Steps to Reproduce:

1. Open a new Code – Insiders window

2. Paste in the following JavaScript

3. Observe

function test1(x) {

const defaultValue = `${x}`;

const value = x ? x : y;

console.log('so far, so good');

}

function test2(x) {

const value = !x ? `${x}` : x;

console.log('so far, so good');

}

function test3(x) {

const value = x ? x : `${x}`; // this comment is coloured as if it's part of the template literal

console.log('this entire line, too');

} // and this one

If I'm getting this right:

* `test1` shows `code` knows when a template literal ends, usually

* `test2` shows `code` still knows when a template literal ends if it's the first _AssignmentExpression_ in a ternary

* `test3` shows `code` somehow misses the end of a template literal as the second _AssignmentExpression_ in a ternary, highlighting from then on as a string

_Copied from original issue:microsoft/vscode#5090_

| 1 |

ECMAScript 2018 was finalized, what do we need to enable these features by

default for `preset-env`?

SyntaxError: /path/to/file.js:

Support for the experimental syntax 'objectRestSpread' isn't currently enabled (11:9):

10 | {

> 11 | ...example,

| ^

12 | key: 'value',

13 | },

The `proposal` keyword was removed from `@babel/plugin-syntax-object-rest-

spread` package name but `babel-preset-env/data/plugins.json` still have:

babel/packages/babel-preset-env/data/plugins.json

Line 232 in 6f3be3a

| "proposal-object-rest-spread": {

---|---

|

Hi there,

a simple module just reexport a module fails.

export * from './some-module';

My .babelrc looks like this:

{

"presets": ["es2015", "stage-1"],

"plugins": ["transform-async-to-generator"]

}

This should contain the required export-extensions transform.

The error message looks like this:

$ ./node_modules/.bin/babel test.js

Error: test.js: Invariant Violation: To get a node path the parent needs to exist

at Object.invariant [as default] (/home/markusw/source/ankara/node_modules/babel-cli/node_modules/babel-core/node_modules/babel-traverse/node_modules/invariant/invariant.js:44:15)

at Function.get (/home/markusw/source/ankara/node_modules/babel-cli/node_modules/babel-core/node_modules/babel-traverse/lib/path/index.js:82:27)

at TraversalContext.create (/home/markusw/source/ankara/node_modules/babel-cli/node_modules/babel-core/node_modules/babel-traverse/lib/context.js:73:30)

at NodePath._containerInsert (/home/markusw/source/ankara/node_modules/babel-cli/node_modules/babel-core/node_modules/babel-traverse/lib/path/modification.js:80:32)

at NodePath._containerInsertBefore (/home/markusw/source/ankara/node_modules/babel-cli/node_modules/babel-core/node_modules/babel-traverse/lib/path/modification.js:138:15)

at NodePath.insertBefore (/home/markusw/source/ankara/node_modules/babel-cli/node_modules/babel-core/node_modules/babel-traverse/lib/path/modification.js:57:19)

at BlockScoping.wrapClosure (/home/markusw/source/ankara/node_modules/babel-preset-es2015/node_modules/babel-plugin-transform-es2015-block-scoping/lib/index.js:485:21)

at BlockScoping.run (/home/markusw/source/ankara/node_modules/babel-preset-es2015/node_modules/babel-plugin-transform-es2015-block-scoping/lib/index.js:385:12)

at PluginPass.Loop (/home/markusw/source/ankara/node_modules/babel-preset-es2015/node_modules/babel-plugin-transform-es2015-block-scoping/lib/index.js:89:36)

at /home/markusw/source/ankara/node_modules/babel-cli/node_modules/babel-core/node_modules/babel-traverse/lib/visitors.js:271:19

Any hint on this?

| 0 |

# coding: utf-8

import pandas as pd

import numpy as np

frame = pd.read_csv("table.csv", engine="python", parse_dates=['since'])

print frame

d = pd.pivot_table(frame, index=pd.TimeGrouper(key='since', freq='1d'), values=["value"], columns=['id'], aggfunc=np.sum, fill_value=0)

print d

print "^that is not what I expected"

frame = pd.read_csv("table2.csv", engine="python", parse_dates=['since']) # add some values to a day

print frame

d = pd.pivot_table(frame, index=pd.TimeGrouper(key='since', freq='1d'), values=["value"], columns=['id'], aggfunc=np.sum, fill_value=0)

print d

The following data is the contents of `table.csv`

"id","since","value"

"81","2015-01-31 07:00:00+00:00","2200.0000"

"81","2015-02-01 07:00:00+00:00","2200.0000"

This is `table2.csv`:

"id","since","value"

"81","2015-01-31 07:00:00+00:00","2200.0000"

"81","2015-01-31 08:00:00+00:00","2200.0000"

"81","2015-01-31 09:00:00+00:00","2200.0000"

"81","2015-02-01 07:00:00+00:00","2200.0000"

The output of print after pivoting `table.csv`

id value

<pandas.tseries.resample.TimeGrouper object at 0x7fc595f96c10> 81 2200

id 81 2200

I would expect something like this:

value

id 81

since

2015-01-31 2200

2015-02-01 2200

I can trace the problem to here:

https://github.com/pydata/pandas/blob/62529cca28e9c8652ddf7cca3aa6d41d4e30bc0e/pandas/tools/pivot.py#L114

the index created by groupby already has the object there.

I can't figure anything else. What is the problem, any fixes?

Thanks.

|

The following code seems to raise an error, since the result object does not

make sense (well, at least to me):

In [61]: import datetime

In [62]: import pandas as pd

In [63]: df = pd.DataFrame.from_records ( [[datetime.datetime(2014,9,10),1234,"start"],

[datetime.datetime(2013,10,10),1234,"start"]], columns = ["date", "change", "event"] )

In [64]: df

Out[64]:

date change event

0 2014-09-10 1234 start

1 2013-10-10 1234 start

In [65]: ts = df.set_index('date')

In [66]: ts

Out[66]:

change event

date

2014-09-10 1234 start

2013-10-10 1234 start

In [67]: byperiod = ts.groupby([pd.TimeGrouper(freq="M"), "event"], as_index=False)

In [68]: byperiod.groups

Out[68]:

{<pandas.tseries.resample.TimeGrouper at 0xab6bcaec>: [Timestamp('2014-09-10 00:00:00')],

'event': [Timestamp('2013-10-10 00:00:00')]}

I would expect, for Out[68], two groups, one for each (date, event) pair.

Am I wring, or this is a bug?

| 1 |

* **Electron Version:**

* v5.0.0-beta.5

* **Operating System:**

* Windows 10 Pro.

* **Last Known Working Electron version:** :

* 4.x (latest)

### Expected Behavior

When I perfrom a GET-request using `http` or `https` built-in library, I

expect the app to keep running after the request completes.

### Actual Behavior

When performing a GET request to an arbitrary host, the app crashes after **a

few seconds after completing the request**. Usually ranges between 5 and 20

seconds.

### To Reproduce

https://github.com/haroldiedema/electron-5x-http-crash

$ git clone https://github.com/haroldiedema/electron-5x-http-crash

$ npm install

$ node_modules/.bin/electron .

Sit back and wait for a few seconds for the app to crash with exit code 127.

(or exit code 1 if started from npm or yarn).

### Screenshots

N/A

### Additional Information

I think this might have something to do with either:

A) Electron not handling the closing of sockets correctly anymore, or

B) The garbage collector kicking in and cleaning up the socket resource which

makes electron crash.

Then again, that is purely speculation on my end.

|

* **Electron Version** :

* 5.0.0-beta (all)

* **Operating System** :

* Ubuntu 18.10, Linux 4.18, x64

* **Last known working Electron version** (if applicable):

* 4.0.5

### Expected Behavior

Google API should work as it they are meant to.

### Actual behavior

Any Google API calls may crash renderer process (even if they are run on

Worker thread).

The request may be done and the answer given, but immediately or after several

seconds the renderer process may crash.

### To Reproduce

$ git clone https://github.com/ruslang02/youtube-electron-crash-example

$ npm install electron@beta

$ npm start || electron .

Version 5 will randomly crash, version 4: doesn't

### Additional Information

May be a Node.JS bug, but not sure

| 1 |

* I have searched the issues of this repository and believe that this is not a duplicate.

* I have checked the FAQ of this repository and believe that this is not a duplicate.

### Environment

* Dubbo version: 2.6.1

* Operating System version: Linux version 3.10.0-693.el7.x86_64 (builder@kbuilder.dev.centos.org) (gcc version 4.8.5 20150623 (Red Hat 4.8.5-16) (GCC) )

* Java version: 1.8.0_171

* zk & zk client version: 3.4.11

* curator version: 4.0.1

### 不定时出现zookeeper session timeout,重连后消费端引用@reference 为空,日志如下

### 消费端jvm日志

04:22:37,427 WARN [main-SendThread(hostname:2181)][zookeeper.ClientCnxn:1111] - Client session timed out, have not heard from server in 33959ms for sessionid 0x10000018d38000b

04:23:07,081 INFO [main-SendThread(hostname:2181)][zookeeper.ClientCnxn:1159] - Client session timed out, have not heard from server in 33959ms for sessionid 0x10000018d38000b, closing socket connection and attempting reconnect

04:23:09,992 INFO [main-EventThread][state.ConnectionStateManager:237] - State change: SUSPENDED

04:23:10,186 INFO [main-SendThread(hostname:2181)][zookeeper.ClientCnxn:1035] - Opening socket connection to server hostname/172.16.10.121:2181. Will not attempt to authenticate using SASL (unknown error)

04:23:10,804 INFO [main-SendThread(hostname:2181)][zookeeper.ClientCnxn:877] - Socket connection established to hostname/172.16.10.121:2181, initiating session

04:23:10,998 WARN [main-SendThread(hostname:2181)][zookeeper.ClientCnxn:1288] - Unable to reconnect to ZooKeeper service, session 0x10000018d38000b has expired

04:23:11,066 INFO [main-SendThread(hostname:2181)][zookeeper.ClientCnxn:1157] - Unable to reconnect to ZooKeeper service, session 0x10000018d38000b has expired, closing socket connection

04:23:11,164 WARN [main-EventThread][curator.ConnectionState:372] - Session expired event received

04:23:12,502 INFO [main-EventThread][zookeeper.ZooKeeper:441] - Initiating client connection, connectString=hostname:2181 sessionTimeout=60000 watcher=org.apache.curator.ConnectionState@67f639d3

04:23:13,599 INFO [main-SendThread(hostname:2181)][zookeeper.ClientCnxn:1035] - Opening socket connection to server hostname/172.16.10.121:2181. Will not attempt to authenticate using SASL (unknown error)