repo_id | file_path | content | __index_level_0__ |

|---|---|---|---|

hf_public_repos/text-generation-inference/server/text_generation_server/utils | hf_public_repos/text-generation-inference/server/text_generation_server/utils/gptq/exllama.py | import torch

from exllama_kernels import make_q4, q4_matmul, prepare_buffers, set_tuning_params

# Dummy tensor to pass instead of g_idx since there is no way to pass "None" to a C++ extension

none_tensor = torch.empty((1, 1), device="meta")

def ext_make_q4(qweight, qzeros, scales, g_idx, device):

"""Construct Q4Matrix, return handle"""

return make_q4(

qweight, qzeros, scales, g_idx if g_idx is not None else none_tensor, device

)

def ext_q4_matmul(x, q4, q4_width):

"""Matrix multiplication, returns x @ q4"""

outshape = x.shape[:-1] + (q4_width,)

x = x.view(-1, x.shape[-1])

output = torch.empty((x.shape[0], q4_width), dtype=torch.float16, device=x.device)

q4_matmul(x, q4, output)

return output.view(outshape)

MAX_DQ = 1

MAX_INNER = 1

ACT_ORDER = False

DEVICE = None

TEMP_STATE = None

TEMP_DQ = None

def set_device(device):

global DEVICE

DEVICE = device

def create_exllama_buffers():

global MAX_DQ, MAX_INNER, ACT_ORDER, DEVICE, TEMP_STATE, TEMP_DQ

assert DEVICE is not None, "call set_device first"

if ACT_ORDER:

# TODO: this should be set to the Rust-side `max_total_tokens`, but TGI

# does not offer an API to expose this variable to Python: it is

# handled by the client, while the model is initialized by the server.

# An alternative would be to initialize the buffers during warmup.

# Dummy value for now

max_total_tokens = 2048

else:

max_total_tokens = 1

# This temp_state buffer is required to reorder X in the act-order case.

temp_state = torch.zeros(

(max_total_tokens, MAX_INNER), dtype=torch.float16, device=DEVICE

)

temp_dq = torch.zeros((1, MAX_DQ), dtype=torch.float16, device=DEVICE)

# This temp_dq buffer is required to dequantize weights when using cuBLAS, typically for the prefill.

prepare_buffers(DEVICE, temp_state, temp_dq)

matmul_recons_thd = 8

matmul_fused_remap = False

matmul_no_half2 = False

set_tuning_params(matmul_recons_thd, matmul_fused_remap, matmul_no_half2)

TEMP_STATE, TEMP_DQ = temp_state, temp_dq

class Ex4bitLinear:

"""Linear layer implementation with per-group 4-bit quantization of the weights"""

def __init__(self, qweight, qzeros, scales, g_idx, bias, bits, groupsize):

global MAX_DQ, MAX_INNER, ACT_ORDER, DEVICE

assert bits == 4

self.device = qweight.device

self.qweight = qweight

self.qzeros = qzeros

self.scales = scales

self.g_idx = g_idx.cpu() if g_idx is not None else None

self.bias = bias

if self.g_idx is not None and (

(self.g_idx == 0).all()

or torch.equal(

g_idx.cpu(),

torch.tensor(

[i // groupsize for i in range(g_idx.shape[0])], dtype=torch.int32

),

)

):

self.empty_g_idx = True

self.g_idx = None

assert self.device.type == "cuda"

assert self.device.index is not None

self.q4 = ext_make_q4(

self.qweight, self.qzeros, self.scales, self.g_idx, self.device.index

)

self.height = qweight.shape[0] * 8

self.width = qweight.shape[1]

# Infer groupsize from height of qzeros

self.groupsize = None

if self.qzeros.shape[0] > 1:

self.groupsize = (self.qweight.shape[0] * 8) // (self.qzeros.shape[0])

if self.groupsize is not None:

assert groupsize == self.groupsize

# Handle act-order matrix

if self.g_idx is not None:

if self.groupsize is None:

raise ValueError("Found group index (g_idx) but could not infer a groupsize.")

self.act_order = True

else:

self.act_order = False

DEVICE = self.qweight.device

MAX_DQ = max(MAX_DQ, self.qweight.numel() * 8)

if self.act_order:

MAX_INNER = max(MAX_INNER, self.height, self.width)

ACT_ORDER = True

def forward(self, x):

out = ext_q4_matmul(x, self.q4, self.width)

if self.bias is not None:

out.add_(self.bias)

return out
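The constructor above discards `g_idx` when it is all zeros or equals the sequential pattern `i // groupsize`, since neither case carries act-order information. A minimal pure-Python sketch of that check follows; the helper name `is_trivial_g_idx` is hypothetical and plain lists stand in for tensors.

```python
def is_trivial_g_idx(g_idx, groupsize):
    """Return True when g_idx carries no act-order information."""
    # Case 1: every entry is zero.
    if all(g == 0 for g in g_idx):
        return True
    # Case 2: entries follow the default sequential grouping.
    sequential = [i // groupsize for i in range(len(g_idx))]
    return list(g_idx) == sequential


print(is_trivial_g_idx([0, 0, 1, 1], groupsize=2))
print(is_trivial_g_idx([1, 0, 0, 1], groupsize=2))
```

Only the second call describes a genuinely reordered layer, which is the case where the act-order buffers are needed.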

| 0 |

hf_public_repos/text-generation-inference/server/text_generation_server/utils | hf_public_repos/text-generation-inference/server/text_generation_server/utils/gptq/quant_linear.py | import math

import numpy as np

import torch

import torch.nn as nn

from torch.cuda.amp import custom_bwd, custom_fwd

try:

import triton

import triton.language as tl

from . import custom_autotune

# Code based on https://github.com/fpgaminer/GPTQ-triton

@custom_autotune.autotune(

configs=[

triton.Config(

{

"BLOCK_SIZE_M": 64,

"BLOCK_SIZE_N": 256,

"BLOCK_SIZE_K": 32,

"GROUP_SIZE_M": 8,

},

num_stages=4,

num_warps=4,

),

triton.Config(

{

"BLOCK_SIZE_M": 128,

"BLOCK_SIZE_N": 128,

"BLOCK_SIZE_K": 32,

"GROUP_SIZE_M": 8,

},

num_stages=4,

num_warps=4,

),

triton.Config(

{

"BLOCK_SIZE_M": 64,

"BLOCK_SIZE_N": 128,

"BLOCK_SIZE_K": 32,

"GROUP_SIZE_M": 8,

},

num_stages=4,

num_warps=4,

),

triton.Config(

{

"BLOCK_SIZE_M": 128,

"BLOCK_SIZE_N": 32,

"BLOCK_SIZE_K": 32,

"GROUP_SIZE_M": 8,

},

num_stages=4,

num_warps=4,

),

triton.Config(

{

"BLOCK_SIZE_M": 64,

"BLOCK_SIZE_N": 64,

"BLOCK_SIZE_K": 32,

"GROUP_SIZE_M": 8,

},

num_stages=4,

num_warps=4,

),

triton.Config(

{

"BLOCK_SIZE_M": 64,

"BLOCK_SIZE_N": 128,

"BLOCK_SIZE_K": 32,

"GROUP_SIZE_M": 8,

},

num_stages=2,

num_warps=8,

),

triton.Config(

{

"BLOCK_SIZE_M": 64,

"BLOCK_SIZE_N": 64,

"BLOCK_SIZE_K": 64,

"GROUP_SIZE_M": 8,

},

num_stages=3,

num_warps=8,

),

triton.Config(

{

"BLOCK_SIZE_M": 32,

"BLOCK_SIZE_N": 32,

"BLOCK_SIZE_K": 128,

"GROUP_SIZE_M": 8,

},

num_stages=2,

num_warps=4,

),

],

key=["M", "N", "K"],

nearest_power_of_two=True,

prune_configs_by={

"early_config_prune": custom_autotune.matmul248_kernel_config_pruner,

"perf_model": None,

"top_k": None,

},

)

@triton.jit

def matmul_248_kernel(

a_ptr,

b_ptr,

c_ptr,

scales_ptr,

zeros_ptr,

g_ptr,

M,

N,

K,

bits,

maxq,

stride_am,

stride_ak,

stride_bk,

stride_bn,

stride_cm,

stride_cn,

stride_scales,

stride_zeros,

BLOCK_SIZE_M: tl.constexpr,

BLOCK_SIZE_N: tl.constexpr,

BLOCK_SIZE_K: tl.constexpr,

GROUP_SIZE_M: tl.constexpr,

):

"""

Compute the matrix multiplication C = A x B.

A is of shape (M, K) float16

B is of shape (K//8, N) int32

C is of shape (M, N) float16

scales is of shape (G, N) float16

zeros is of shape (G, N//8) int32

g_ptr is of shape (K) int32

"""

infearure_per_bits = 32 // bits

pid = tl.program_id(axis=0)

num_pid_m = tl.cdiv(M, BLOCK_SIZE_M)

num_pid_n = tl.cdiv(N, BLOCK_SIZE_N)

num_pid_k = tl.cdiv(K, BLOCK_SIZE_K)

num_pid_in_group = GROUP_SIZE_M * num_pid_n

group_id = pid // num_pid_in_group

first_pid_m = group_id * GROUP_SIZE_M

group_size_m = min(num_pid_m - first_pid_m, GROUP_SIZE_M)

pid_m = first_pid_m + (pid % group_size_m)

pid_n = (pid % num_pid_in_group) // group_size_m

offs_am = pid_m * BLOCK_SIZE_M + tl.arange(0, BLOCK_SIZE_M)

offs_bn = pid_n * BLOCK_SIZE_N + tl.arange(0, BLOCK_SIZE_N)

offs_k = tl.arange(0, BLOCK_SIZE_K)

a_ptrs = a_ptr + (

offs_am[:, None] * stride_am + offs_k[None, :] * stride_ak

) # (BLOCK_SIZE_M, BLOCK_SIZE_K)

a_mask = offs_am[:, None] < M

# b_ptrs is set up such that it repeats elements along the K axis 32 // bits times (8 for 4-bit)

b_ptrs = b_ptr + (

(offs_k[:, None] // infearure_per_bits) * stride_bk

+ offs_bn[None, :] * stride_bn

) # (BLOCK_SIZE_K, BLOCK_SIZE_N)

g_ptrs = g_ptr + offs_k

scales_ptrs = scales_ptr + offs_bn[None, :]

zeros_ptrs = zeros_ptr + (offs_bn[None, :] // infearure_per_bits)

# shifter is used to extract the N bits of each element in the 32-bit word from B

shifter = (offs_k % infearure_per_bits) * bits

zeros_shifter = (offs_bn % infearure_per_bits) * bits

accumulator = tl.zeros((BLOCK_SIZE_M, BLOCK_SIZE_N), dtype=tl.float32)

for k in range(0, num_pid_k):

g_idx = tl.load(g_ptrs)

# Fetch scales and zeros; these are per-outfeature and thus reused in the inner loop

scales = tl.load(

scales_ptrs + g_idx[:, None] * stride_scales

) # (BLOCK_SIZE_K, BLOCK_SIZE_N,)

zeros = tl.load(

zeros_ptrs + g_idx[:, None] * stride_zeros

) # (BLOCK_SIZE_K, BLOCK_SIZE_N,)

zeros = (zeros >> zeros_shifter[None, :]) & maxq

zeros = zeros + 1

a = tl.load(a_ptrs, mask=a_mask, other=0.0) # (BLOCK_SIZE_M, BLOCK_SIZE_K)

b = tl.load(b_ptrs) # (BLOCK_SIZE_K, BLOCK_SIZE_N), but repeated

# Now we need to unpack b (which is N-bit values) into 32-bit values

b = (b >> shifter[:, None]) & maxq # Extract the N-bit values

b = (b - zeros) * scales # Scale and shift

accumulator += tl.dot(a, b)

a_ptrs += BLOCK_SIZE_K

b_ptrs += (BLOCK_SIZE_K // infearure_per_bits) * stride_bk

g_ptrs += BLOCK_SIZE_K

c_ptrs = c_ptr + stride_cm * offs_am[:, None] + stride_cn * offs_bn[None, :]

c_mask = (offs_am[:, None] < M) & (offs_bn[None, :] < N)

tl.store(c_ptrs, accumulator, mask=c_mask)

except ImportError:

print("triton not installed.")
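The kernel above dequantizes each weight as `(q - zero) * scale`, where `q` is extracted with a shift and mask and the stored zero point is incremented by one before use. The sketch below reproduces that arithmetic on plain Python integers. It is a simplification under stated assumptions: the function name `dequantize_word` is hypothetical, and a single scale and zero point are used, whereas the kernel selects them per output column and per group.

```python
def dequantize_word(word, packed_zero, scale, bits=4):
    """Dequantize the values packed in one 32-bit word, lowest bits first.

    packed_zero is the stored zero point; the kernel adds one before using it.
    """
    per_word = 32 // bits
    maxq = 2**bits - 1
    zero = (packed_zero & maxq) + 1
    out = []
    for k in range(per_word):
        shifter = (k % per_word) * bits
        q = (word >> shifter) & maxq  # extract one packed value
        out.append((q - zero) * scale)  # shift, then scale
    return out


print(dequantize_word(0x76543210, packed_zero=3, scale=0.5))
```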

def matmul248(input, qweight, scales, qzeros, g_idx, bits, maxq):

with torch.cuda.device(input.device):

output = torch.empty(

(input.shape[0], qweight.shape[1]), device=input.device, dtype=torch.float16

)

grid = lambda META: (

triton.cdiv(input.shape[0], META["BLOCK_SIZE_M"])

* triton.cdiv(qweight.shape[1], META["BLOCK_SIZE_N"]),

)

matmul_248_kernel[grid](

input,

qweight,

output,

scales,

qzeros,

g_idx,

input.shape[0],

qweight.shape[1],

input.shape[1],

bits,

maxq,

input.stride(0),

input.stride(1),

qweight.stride(0),

qweight.stride(1),

output.stride(0),

output.stride(1),

scales.stride(0),

qzeros.stride(0),

)

return output
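The launch grid above is one-dimensional, with `cdiv(M, BLOCK_SIZE_M) * cdiv(N, BLOCK_SIZE_N)` programs, and the kernel maps each program id back to a tile in groups of `GROUP_SIZE_M` rows. A pure-Python sketch of that mapping is shown below; the helper `tile_of` is hypothetical and only mirrors the index arithmetic at the top of `matmul_248_kernel`.

```python
def cdiv(a, b):
    """Ceiling division, as used to count tiles."""
    return (a + b - 1) // b


def tile_of(pid, M, N, block_m, block_n, group_m):
    """Map a linear program id to (pid_m, pid_n) tile coordinates."""
    num_pid_m = cdiv(M, block_m)
    num_pid_n = cdiv(N, block_n)
    num_pid_in_group = group_m * num_pid_n
    group_id = pid // num_pid_in_group
    first_pid_m = group_id * group_m
    group_size_m = min(num_pid_m - first_pid_m, group_m)
    pid_m = first_pid_m + (pid % group_size_m)
    pid_n = (pid % num_pid_in_group) // group_size_m
    return pid_m, pid_n


M, N = 256, 512
grid = cdiv(M, 64) * cdiv(N, 128)
tiles = [tile_of(p, M, N, 64, 128, 2) for p in range(grid)]
print(grid, tiles[:4])
```

Consecutive program ids walk down a short column of row tiles before moving to the next column, so neighbouring programs tend to reuse the same block of B.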

class QuantLinearFunction(torch.autograd.Function):

@staticmethod

@custom_fwd(cast_inputs=torch.float16)

def forward(ctx, input, qweight, scales, qzeros, g_idx, bits, maxq):

output = matmul248(input, qweight, scales, qzeros, g_idx, bits, maxq)

return output

class QuantLinear(nn.Module):

def __init__(self, qweight, qzeros, scales, g_idx, bias, bits, groupsize):

super().__init__()

self.register_buffer("qweight", qweight)

self.register_buffer("qzeros", qzeros)

self.register_buffer("scales", scales)

self.register_buffer("g_idx", g_idx)

if bias is not None:

self.register_buffer("bias", bias)

else:

self.bias = None

if bits not in [2, 4, 8]:

raise NotImplementedError("Only 2,4,8 bits are supported.")

self.bits = bits

self.maxq = 2**self.bits - 1

self.groupsize = groupsize

self.outfeatures = qweight.shape[1]

self.infeatures = qweight.shape[0] * 32 // bits

@classmethod

def new(cls, bits, groupsize, infeatures, outfeatures, bias):

if bits not in [2, 4, 8]:

raise NotImplementedError("Only 2,4,8 bits are supported.")

qweight = torch.zeros((infeatures // 32 * bits, outfeatures), dtype=torch.int32)

qzeros = torch.zeros(

(math.ceil(infeatures / groupsize), outfeatures // 32 * bits),

dtype=torch.int32,

)

scales = torch.zeros(

(math.ceil(infeatures / groupsize), outfeatures), dtype=torch.float16

)

g_idx = torch.tensor(

[i // groupsize for i in range(infeatures)], dtype=torch.int32

)

if bias:

bias = torch.zeros((outfeatures), dtype=torch.float16)

else:

bias = None

return cls(qweight, qzeros, scales, g_idx, bias, bits, groupsize)
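The tensor shapes allocated in `new` follow directly from the packing scheme: `32 // bits` quantized values share one int32 word. As a quick illustration, the sketch below computes those shapes without torch; `packed_shapes` is a hypothetical helper and the layer sizes are example values only.

```python
import math


def packed_shapes(bits, groupsize, infeatures, outfeatures):
    """Shapes of the buffers a packed linear layer would hold."""
    groups = math.ceil(infeatures / groupsize)
    return {
        "qweight": (infeatures // 32 * bits, outfeatures),  # packed along rows
        "qzeros": (groups, outfeatures // 32 * bits),  # packed along columns
        "scales": (groups, outfeatures),
        "g_idx": (infeatures,),
    }


shapes = packed_shapes(bits=4, groupsize=128, infeatures=4096, outfeatures=11008)
for name, shape in shapes.items():
    print(name, shape)
```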

def pack(self, linear, scales, zeros, g_idx=None):

self.g_idx = g_idx.clone() if g_idx is not None else self.g_idx

scales = scales.t().contiguous()

zeros = zeros.t().contiguous()

scale_zeros = zeros * scales

self.scales = scales.clone().half()

if linear.bias is not None:

self.bias = linear.bias.clone().half()

intweight = []

for idx in range(self.infeatures):

intweight.append(

torch.round(

(linear.weight.data[:, idx] + scale_zeros[self.g_idx[idx]])

/ self.scales[self.g_idx[idx]]

).to(torch.int)[:, None]

)

intweight = torch.cat(intweight, dim=1)

intweight = intweight.t().contiguous()

intweight = intweight.numpy().astype(np.uint32)

qweight = np.zeros(

(intweight.shape[0] // 32 * self.bits, intweight.shape[1]), dtype=np.uint32

)

i = 0

row = 0

while row < qweight.shape[0]:

if self.bits in [2, 4, 8]:

for j in range(i, i + (32 // self.bits)):

qweight[row] |= intweight[j] << (self.bits * (j - i))

i += 32 // self.bits

row += 1

else:

raise NotImplementedError("Only 2,4,8 bits are supported.")

qweight = qweight.astype(np.int32)

self.qweight = torch.from_numpy(qweight)

zeros -= 1

zeros = zeros.numpy().astype(np.uint32)

qzeros = np.zeros(

(zeros.shape[0], zeros.shape[1] // 32 * self.bits), dtype=np.uint32

)

i = 0

col = 0

while col < qzeros.shape[1]:

if self.bits in [2, 4, 8]:

for j in range(i, i + (32 // self.bits)):

qzeros[:, col] |= zeros[:, j] << (self.bits * (j - i))

i += 32 // self.bits

col += 1

else:

raise NotImplementedError("Only 2,4,8 bits are supported.")

qzeros = qzeros.astype(np.int32)

self.qzeros = torch.from_numpy(qzeros)
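The two `while` loops in `pack` apply the same bit-packing rule, once along the rows of the weights and once along the columns of the zero points. A minimal sketch of that rule on a flat list of integers is given below; `pack_values` and `unpack_values` are hypothetical names, and the round trip is only meant to show the bit layout.

```python
def pack_values(values, bits):
    """Pack small integers into 32-bit words, lowest bits first."""
    per_word = 32 // bits
    maxq = 2**bits - 1
    assert len(values) % per_word == 0, "length must fill whole words"
    words = []
    for start in range(0, len(values), per_word):
        word = 0
        for j, v in enumerate(values[start:start + per_word]):
            word |= (v & maxq) << (bits * j)
        words.append(word)
    return words


def unpack_values(words, bits):
    """Inverse of pack_values."""
    per_word = 32 // bits
    maxq = 2**bits - 1
    return [(w >> (bits * j)) & maxq for w in words for j in range(per_word)]


values = list(range(16))
packed = pack_values(values, bits=4)
print([hex(w) for w in packed])
print(unpack_values(packed, bits=4) == values)
```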

def forward(self, x):

out_shape = x.shape[:-1] + (self.outfeatures,)

out = QuantLinearFunction.apply(

x.reshape(-1, x.shape[-1]),

self.qweight,

self.scales,

self.qzeros,

self.g_idx,

self.bits,

self.maxq,

)

out = out + self.bias if self.bias is not None else out

return out.reshape(out_shape)

| 0 |

hf_public_repos/text-generation-inference/server/text_generation_server/utils | hf_public_repos/text-generation-inference/server/text_generation_server/utils/gptq/quantize.py | import time

import torch.nn as nn

import math

import json

import os

import torch

import transformers

from texttable import Texttable

from transformers import AutoModelForCausalLM, AutoConfig, AutoTokenizer

from huggingface_hub import HfApi

from accelerate import init_empty_weights

from text_generation_server.utils import initialize_torch_distributed, Weights

from text_generation_server.utils.hub import weight_files

from text_generation_server.utils.gptq.quant_linear import QuantLinear

from loguru import logger

from typing import Optional

DEV = torch.device("cuda:0")

class Quantizer(nn.Module):

def __init__(self, shape=1):

super(Quantizer, self).__init__()

self.register_buffer("maxq", torch.tensor(0))

self.register_buffer("scale", torch.zeros(shape))

self.register_buffer("zero", torch.zeros(shape))

def configure(

self,

bits,

perchannel=False,

sym=True,

mse=False,

norm=2.4,

grid=100,

maxshrink=0.8,

trits=False,

):

self.maxq = torch.tensor(2**bits - 1)

self.perchannel = perchannel

self.sym = sym

self.mse = mse

self.norm = norm

self.grid = grid

self.maxshrink = maxshrink

if trits:

self.maxq = torch.tensor(-1)

self.scale = torch.zeros_like(self.scale)

def _quantize(self, x, scale, zero, maxq):

if maxq < 0:

return (x > scale / 2).float() * scale + (x < zero / 2).float() * zero

q = torch.clamp(torch.round(x / scale) + zero, 0, maxq)

return scale * (q - zero)
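The non-ternary branch of `_quantize` rounds to the nearest grid point, clamps to `[0, maxq]`, and maps back to floating point. A scalar sketch of the same formula follows; `quantize_dequantize` is a hypothetical name and the ternary (`maxq < 0`) branch is deliberately left out.

```python
def quantize_dequantize(x, scale, zero, maxq):
    """Round x to the quantization grid, clamp, and map back to a float."""
    q = min(max(round(x / scale) + zero, 0), maxq)
    return scale * (q - zero)


# 4-bit grid with scale 0.5 and zero point 8 covers [-4.0, 3.5].
for x in (1.3, 10.0, -10.0):
    print(x, "->", quantize_dequantize(x, scale=0.5, zero=8, maxq=15))
```

Values outside the representable range saturate at the ends of the grid, which is what the clamp is for.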

def find_params(self, x, weight=False):

dev = x.device

self.maxq = self.maxq.to(dev)

shape = x.shape

if self.perchannel:

if weight:

x = x.flatten(1)

else:

if len(shape) == 4:

x = x.permute([1, 0, 2, 3])

x = x.flatten(1)

if len(shape) == 3:

x = x.reshape((-1, shape[-1])).t()

if len(shape) == 2:

x = x.t()

else:

x = x.flatten().unsqueeze(0)

tmp = torch.zeros(x.shape[0], device=dev)

xmin = torch.minimum(x.min(1)[0], tmp)

xmax = torch.maximum(x.max(1)[0], tmp)

if self.sym:

xmax = torch.maximum(torch.abs(xmin), xmax)

tmp = xmin < 0

if torch.any(tmp):

xmin[tmp] = -xmax[tmp]

tmp = (xmin == 0) & (xmax == 0)

xmin[tmp] = -1

xmax[tmp] = +1

if self.maxq < 0:

self.scale = xmax

self.zero = xmin

else:

self.scale = (xmax - xmin) / self.maxq

if self.sym:

self.zero = torch.full_like(self.scale, (self.maxq + 1) / 2)

else:

self.zero = torch.round(-xmin / self.scale)

if self.mse:

best = torch.full([x.shape[0]], float("inf"), device=dev)

for i in range(int(self.maxshrink * self.grid)):

p = 1 - i / self.grid

xmin1 = p * xmin

xmax1 = p * xmax

scale1 = (xmax1 - xmin1) / self.maxq

zero1 = torch.round(-xmin1 / scale1) if not self.sym else self.zero

q = self._quantize(

x, scale1.unsqueeze(1), zero1.unsqueeze(1), self.maxq

)

q -= x

q.abs_()

q.pow_(self.norm)

err = torch.sum(q, 1)

tmp = err < best

if torch.any(tmp):

best[tmp] = err[tmp]

self.scale[tmp] = scale1[tmp]

self.zero[tmp] = zero1[tmp]

if not self.perchannel:

if weight:

tmp = shape[0]

else:

tmp = shape[1] if len(shape) != 3 else shape[2]

self.scale = self.scale.repeat(tmp)

self.zero = self.zero.repeat(tmp)

if weight:

shape = [-1] + [1] * (len(shape) - 1)

self.scale = self.scale.reshape(shape)

self.zero = self.zero.reshape(shape)

return

if len(shape) == 4:

self.scale = self.scale.reshape((1, -1, 1, 1))

self.zero = self.zero.reshape((1, -1, 1, 1))

if len(shape) == 3:

self.scale = self.scale.reshape((1, 1, -1))

self.zero = self.zero.reshape((1, 1, -1))

if len(shape) == 2:

self.scale = self.scale.unsqueeze(0)

self.zero = self.zero.unsqueeze(0)
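Without the MSE search, `find_params` reduces to a min/max rule per row: the range is widened to include zero, optionally made symmetric, and then divided into `maxq` steps. The sketch below applies that rule to one row given as a Python list; `minmax_params` is a hypothetical helper and the `mse` grid search and ternary case are omitted.

```python
def minmax_params(row, bits, sym=False):
    """Return (scale, zero) for one row using the min/max rule."""
    maxq = 2**bits - 1
    xmin = min(min(row), 0.0)
    xmax = max(max(row), 0.0)
    if sym:
        xmax = max(abs(xmin), xmax)
        if xmin < 0:
            xmin = -xmax
    if xmin == 0 and xmax == 0:
        xmin, xmax = -1.0, 1.0  # avoid a zero-width range
    scale = (xmax - xmin) / maxq
    zero = (maxq + 1) / 2 if sym else round(-xmin / scale)
    return scale, zero


print(minmax_params([-1.0, 0.0, 2.0], bits=4))
print(minmax_params([-1.0, 0.0, 2.0], bits=4, sym=True))
```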

def quantize(self, x):

if self.ready():

return self._quantize(x, self.scale, self.zero, self.maxq)

return x

def enabled(self):

return self.maxq > 0

def ready(self):

return torch.all(self.scale != 0)

class GPTQ:

def __init__(self, layer, observe=False):

self.layer = layer

self.dev = self.layer.weight.device

W = layer.weight.data.clone()

if isinstance(self.layer, nn.Conv2d):

W = W.flatten(1)

if isinstance(self.layer, transformers.Conv1D):

W = W.t()

self.rows = W.shape[0]

self.columns = W.shape[1]

self.H = torch.zeros((self.columns, self.columns), device=self.dev)

self.nsamples = 0

self.quantizer = Quantizer()

self.observe = observe

def add_batch(self, inp, out):

# Hessian H = 2 X X^T + λ I

if self.observe:

self.inp1 = inp

self.out1 = out

else:

self.inp1 = None

self.out1 = None

if len(inp.shape) == 2:

inp = inp.unsqueeze(0)

tmp = inp.shape[0]

if isinstance(self.layer, nn.Linear) or isinstance(

self.layer, transformers.Conv1D

):

if len(inp.shape) == 3:

inp = inp.reshape((-1, inp.shape[-1]))

inp = inp.t()

if isinstance(self.layer, nn.Conv2d):

unfold = nn.Unfold(

self.layer.kernel_size,

dilation=self.layer.dilation,

padding=self.layer.padding,

stride=self.layer.stride,

)

inp = unfold(inp)

inp = inp.permute([1, 0, 2])

inp = inp.flatten(1)

self.H *= self.nsamples / (self.nsamples + tmp)

self.nsamples += tmp

# inp = inp.float()

inp = math.sqrt(2 / self.nsamples) * inp.float()

# self.H += 2 / self.nsamples * inp.matmul(inp.t())

self.H += inp.matmul(inp.t())
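`add_batch` keeps `H` as a running average: the old estimate is rescaled by `n / (n + b)` before the new batch contributes `(2 / n_new) * X X^T`. The sketch below checks, with nested lists instead of tensors, that this incremental update matches the direct formula `2/N * sum(x x^T)`. It assumes one input vector per sample, and `update_hessian` is a hypothetical name.

```python
def update_hessian(H, nsamples, batch):
    """Fold a batch of input vectors into the running estimate of H."""
    b = len(batch)
    d = len(H)
    factor = nsamples / (nsamples + b)
    H = [[h * factor for h in row] for row in H]  # rescale the old estimate
    nsamples += b
    for x in batch:
        for i in range(d):
            for j in range(d):
                H[i][j] += (2.0 / nsamples) * x[i] * x[j]
    return H, nsamples


H, n = [[0.0, 0.0], [0.0, 0.0]], 0
H, n = update_hessian(H, n, [[1.0, 2.0]])
H, n = update_hessian(H, n, [[3.0, 4.0], [0.0, 1.0]])

# Direct computation over all three vectors for comparison.
xs = [[1.0, 2.0], [3.0, 4.0], [0.0, 1.0]]
direct = [[2.0 / 3.0 * sum(x[i] * x[j] for x in xs) for j in range(2)] for i in range(2)]
print(H)
print(direct)
```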

def print_loss(self, name, q_weight, weight_error, timecost):

table = Texttable()

length = 28

name = (

(name + " " * (length - len(name)))

if len(name) <= length

else name[:length]

)

table.header(["name", "weight_error", "fp_inp_SNR", "q_inp_SNR", "time"])

# assign weight

self.layer.weight.data = q_weight.reshape(self.layer.weight.shape).to(

self.layer.weight.data.dtype

)

if self.inp1 is not None:

# quantize input to int8

quantizer = Quantizer()

quantizer.configure(8, perchannel=False, sym=True, mse=False)

quantizer.find_params(self.inp1)

q_in = quantizer.quantize(self.inp1).type(torch.float16)

q_out = self.layer(q_in)

# get kinds of SNR

q_SNR = torch_snr_error(q_out, self.out1).item()

fp_SNR = torch_snr_error(self.layer(self.inp1), self.out1).item()

else:

q_SNR = "-"

fp_SNR = "-"

table.add_row([name, weight_error, fp_SNR, q_SNR, timecost])

print(table.draw().split("\n")[-2])

def fasterquant(

self, blocksize=128, percdamp=0.01, groupsize=-1, act_order=False, name=""

):

self.layer.to(self.dev)

W = self.layer.weight.data.clone()

if isinstance(self.layer, nn.Conv2d):

W = W.flatten(1)

if isinstance(self.layer, transformers.Conv1D):

W = W.t()

W = W.float()

tick = time.time()

if not self.quantizer.ready():

self.quantizer.find_params(W, weight=True)

H = self.H

if not self.observe:

del self.H

dead = torch.diag(H) == 0

H[dead, dead] = 1

W[:, dead] = 0

if act_order:

perm = torch.argsort(torch.diag(H), descending=True)

W = W[:, perm]

H = H[perm][:, perm]

Losses = torch.zeros_like(W)

Q = torch.zeros_like(W)

damp = percdamp * torch.mean(torch.diag(H))

diag = torch.arange(self.columns, device=self.dev)

H[diag, diag] += damp

H = torch.linalg.cholesky(H)

H = torch.cholesky_inverse(H)

try:

H = torch.linalg.cholesky(H, upper=True)

except Exception:

# Extra diagonal damping because Falcon fails on h_to_4h

H = torch.linalg.cholesky(

H + 1e-5 * torch.eye(H.shape[0]).to(H.device), upper=True

)

Hinv = H

g_idx = []

scale = []

zero = []

now_idx = 1

for i1 in range(0, self.columns, blocksize):

i2 = min(i1 + blocksize, self.columns)

count = i2 - i1

W1 = W[:, i1:i2].clone()

Q1 = torch.zeros_like(W1)

Err1 = torch.zeros_like(W1)

Losses1 = torch.zeros_like(W1)

Hinv1 = Hinv[i1:i2, i1:i2]

for i in range(count):

w = W1[:, i]

d = Hinv1[i, i]

if groupsize != -1:

if (i1 + i) % groupsize == 0:

self.quantizer.find_params(

W[:, (i1 + i) : (i1 + i + groupsize)], weight=True

)

if ((i1 + i) // groupsize) - now_idx == -1:

scale.append(self.quantizer.scale)

zero.append(self.quantizer.zero)

now_idx += 1

q = self.quantizer.quantize(w.unsqueeze(1)).flatten()

Q1[:, i] = q

Losses1[:, i] = (w - q) ** 2 / d**2

err1 = (w - q) / d

W1[:, i:] -= err1.unsqueeze(1).matmul(Hinv1[i, i:].unsqueeze(0))

Err1[:, i] = err1

Q[:, i1:i2] = Q1

Losses[:, i1:i2] = Losses1 / 2

W[:, i2:] -= Err1.matmul(Hinv[i1:i2, i2:])

torch.cuda.synchronize()

error = torch.sum(Losses).item()

groupsize = groupsize if groupsize != -1 else self.columns

g_idx = [i // groupsize for i in range(self.columns)]

g_idx = torch.tensor(g_idx, dtype=torch.int32, device=Q.device)

if act_order:

invperm = torch.argsort(perm)

Q = Q[:, invperm]

g_idx = g_idx[invperm]

if isinstance(self.layer, transformers.Conv1D):

Q = Q.t()

self.print_loss(

name=name, q_weight=Q, weight_error=error, timecost=(time.time() - tick)

)

if scale == []:

scale.append(self.quantizer.scale)

zero.append(self.quantizer.zero)

scale = torch.cat(scale, dim=1)

zero = torch.cat(zero, dim=1)

return scale, zero, g_idx, error
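With `act_order=True`, columns are quantized in order of decreasing Hessian diagonal, and the result is mapped back with the inverse permutation `argsort(perm)`. The sketch below shows that round trip on small lists, including how `g_idx` ends up non-sequential. The `argsort` helper is a stand-in for `torch.argsort` and the diagonal values are made up.

```python
def argsort(values, descending=False):
    """Indices that would sort values (stand-in for torch.argsort)."""
    return sorted(range(len(values)), key=lambda i: values[i], reverse=descending)


diag = [0.5, 3.0, 1.0, 2.0]  # example Hessian diagonal
groupsize = 2

perm = argsort(diag, descending=True)  # most sensitive columns first
columns = ["c0", "c1", "c2", "c3"]
permuted = [columns[p] for p in perm]

# Groups are assigned in the permuted order, then mapped back.
g_idx_permuted = [i // groupsize for i in range(len(columns))]
invperm = argsort(perm)
restored = [permuted[i] for i in invperm]
g_idx = [g_idx_permuted[i] for i in invperm]

print(perm, invperm)
print(restored, g_idx)
```

The restored column order is the original one, while `g_idx` now records which quantization group each original column was assigned to.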

def free(self):

self.inp1 = None

self.out1 = None

self.H = None

self.Losses = None

self.Trace = None

torch.cuda.empty_cache()

def get_wikitext2(nsamples, seed, seqlen, model_id, trust_remote_code):

from datasets import load_dataset

traindata = load_dataset("wikitext", "wikitext-2-raw-v1", split="train")

testdata = load_dataset("wikitext", "wikitext-2-raw-v1", split="test")

try:

tokenizer = AutoTokenizer.from_pretrained(

model_id, use_fast=False, trust_remote_code=trust_remote_code

)

except:

tokenizer = AutoTokenizer.from_pretrained(

model_id, use_fast=True, trust_remote_code=trust_remote_code

)

trainenc = tokenizer("\n\n".join(traindata["text"]), return_tensors="pt")

testenc = tokenizer("\n\n".join(testdata["text"]), return_tensors="pt")

import random

random.seed(seed)

trainloader = []

for _ in range(nsamples):

i = random.randint(0, trainenc.input_ids.shape[1] - seqlen - 1)

j = i + seqlen

inp = trainenc.input_ids[:, i:j]

tar = inp.clone()

tar[:, :-1] = -100

trainloader.append((inp, tar))

return trainloader, testenc
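Each calibration sample above is a random window of `seqlen` tokens, paired with a target in which every position except the last is masked with `-100`. A list-based sketch of that sampling is shown below. `sample_windows` is a hypothetical helper, and it uses a local `random.Random` instance rather than seeding the global generator as the loaders do.

```python
import random


def sample_windows(token_ids, nsamples, seqlen, seed):
    """Draw random fixed-length windows and build masked targets."""
    rng = random.Random(seed)  # local RNG; the loaders seed the global one
    samples = []
    for _ in range(nsamples):
        i = rng.randint(0, len(token_ids) - seqlen - 1)
        inp = token_ids[i:i + seqlen]
        tar = [-100] * (seqlen - 1) + [inp[-1]]  # only the last token is a label
        samples.append((inp, tar))
    return samples


samples = sample_windows(list(range(100)), nsamples=3, seqlen=8, seed=0)
for inp, tar in samples:
    print(inp, tar)
```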

def get_ptb(nsamples, seed, seqlen, model_id, trust_remote_code):

from datasets import load_dataset

traindata = load_dataset("ptb_text_only", "penn_treebank", split="train")

valdata = load_dataset("ptb_text_only", "penn_treebank", split="validation")

try:

tokenizer = AutoTokenizer.from_pretrained(

model_id, use_fast=False, trust_remote_code=trust_remote_code

)

except:

tokenizer = AutoTokenizer.from_pretrained(

model_id, use_fast=True, trust_remote_code=trust_remote_code

)

trainenc = tokenizer("\n\n".join(traindata["sentence"]), return_tensors="pt")

testenc = tokenizer("\n\n".join(valdata["sentence"]), return_tensors="pt")

import random

random.seed(seed)

trainloader = []

for _ in range(nsamples):

i = random.randint(0, trainenc.input_ids.shape[1] - seqlen - 1)

j = i + seqlen

inp = trainenc.input_ids[:, i:j]

tar = inp.clone()

tar[:, :-1] = -100

trainloader.append((inp, tar))

return trainloader, testenc

def get_c4(nsamples, seed, seqlen, model_id, trust_remote_code):

from datasets import load_dataset

traindata = load_dataset(

"allenai/c4",

"allenai--c4",

data_files={"train": "en/c4-train.00000-of-01024.json.gz"},

split="train",

use_auth_token=False,

)

valdata = load_dataset(

"allenai/c4",

"allenai--c4",

data_files={"validation": "en/c4-validation.00000-of-00008.json.gz"},

split="validation",

use_auth_token=False,

)

try:

tokenizer = AutoTokenizer.from_pretrained(

model_id, use_fast=False, trust_remote_code=trust_remote_code

)

except:

tokenizer = AutoTokenizer.from_pretrained(

model_id, use_fast=True, trust_remote_code=trust_remote_code

)

import random

random.seed(seed)

trainloader = []

for _ in range(nsamples):

while True:

i = random.randint(0, len(traindata) - 1)

trainenc = tokenizer(traindata[i]["text"], return_tensors="pt")

if trainenc.input_ids.shape[1] >= seqlen:

break

i = random.randint(0, trainenc.input_ids.shape[1] - seqlen - 1)

j = i + seqlen

inp = trainenc.input_ids[:, i:j]

tar = inp.clone()

tar[:, :-1] = -100

trainloader.append((inp, tar))

random.seed(0)

valenc = []

for _ in range(256):

while True:

i = random.randint(0, len(valdata) - 1)

tmp = tokenizer(valdata[i]["text"], return_tensors="pt")

if tmp.input_ids.shape[1] >= seqlen:

break

i = random.randint(0, tmp.input_ids.shape[1] - seqlen - 1)

j = i + seqlen

valenc.append(tmp.input_ids[:, i:j])

valenc = torch.hstack(valenc)

class TokenizerWrapper:

def __init__(self, input_ids):

self.input_ids = input_ids

valenc = TokenizerWrapper(valenc)

return trainloader, valenc

def get_ptb_new(nsamples, seed, seqlen, model_id, trust_remote_code):

from datasets import load_dataset

traindata = load_dataset("ptb_text_only", "penn_treebank", split="train")

testdata = load_dataset("ptb_text_only", "penn_treebank", split="test")

try:

tokenizer = AutoTokenizer.from_pretrained(

model_id, use_fast=False, trust_remote_code=trust_remote_code

)

except:

tokenizer = AutoTokenizer.from_pretrained(

model_id, use_fast=True, trust_remote_code=trust_remote_code

)

trainenc = tokenizer(" ".join(traindata["sentence"]), return_tensors="pt")

testenc = tokenizer(" ".join(testdata["sentence"]), return_tensors="pt")

import random

random.seed(seed)

trainloader = []

for _ in range(nsamples):

i = random.randint(0, trainenc.input_ids.shape[1] - seqlen - 1)

j = i + seqlen

inp = trainenc.input_ids[:, i:j]

tar = inp.clone()

tar[:, :-1] = -100

trainloader.append((inp, tar))

return trainloader, testenc

def get_c4_new(nsamples, seed, seqlen, model_id, trust_remote_code):

from datasets import load_dataset

traindata = load_dataset(

"allenai/c4",

"allenai--c4",

data_files={"train": "en/c4-train.00000-of-01024.json.gz"},

split="train",

)

valdata = load_dataset(

"allenai/c4",

"allenai--c4",

data_files={"validation": "en/c4-validation.00000-of-00008.json.gz"},

split="validation",

)

try:

tokenizer = AutoTokenizer.from_pretrained(

model_id, use_fast=False, trust_remote_code=trust_remote_code

)

except:

tokenizer = AutoTokenizer.from_pretrained(

model_id, use_fast=True, trust_remote_code=trust_remote_code

)

import random

random.seed(seed)

trainloader = []

for _ in range(nsamples):

while True:

i = random.randint(0, len(traindata) - 1)

trainenc = tokenizer(traindata[i]["text"], return_tensors="pt")

if trainenc.input_ids.shape[1] >= seqlen:

break

i = random.randint(0, trainenc.input_ids.shape[1] - seqlen - 1)

j = i + seqlen

inp = trainenc.input_ids[:, i:j]

tar = inp.clone()

tar[:, :-1] = -100

trainloader.append((inp, tar))

valenc = tokenizer(" ".join(valdata[:1100]["text"]), return_tensors="pt")

valenc = valenc.input_ids[:, : (256 * seqlen)]

class TokenizerWrapper:

def __init__(self, input_ids):

self.input_ids = input_ids

valenc = TokenizerWrapper(valenc)

return trainloader, valenc

def get_loaders(name, nsamples=128, seed=0, seqlen=2048, model_id="", trust_remote_code=False):

if "wikitext2" in name:

return get_wikitext2(nsamples, seed, seqlen, model_id, trust_remote_code)

if "ptb" in name:

if "new" in name:

return get_ptb_new(nsamples, seed, seqlen, model_id, trust_remote_code)

return get_ptb(nsamples, seed, seqlen, model_id, trust_remote_code)

if "c4" in name:

if "new" in name:

return get_c4_new(nsamples, seed, seqlen, model_id, trust_remote_code)

return get_c4(nsamples, seed, seqlen, model_id, trust_remote_code)

def find_layers(module, layers=(nn.Conv2d, nn.Linear), name=""):

# Skip last lm_head linear

# Need isinstance because Falcon's layer inherits from Linear.

if isinstance(module, layers) and "lm_head" not in name:

return {name: module}

res = {}

for name1, child in module.named_children():

res.update(

find_layers(

child, layers=layers, name=name + "." + name1 if name != "" else name1

)

)

return res

@torch.no_grad()

def sequential(

model,

dataloader,

dev,

nsamples,

bits,

groupsize,

*,

hooks,

percdamp=0.01,

sym: bool = False,

act_order: bool = False,

):

print("Starting ...")

use_cache = model.config.use_cache

model.config.use_cache = False

try:

layers = model.model.layers

prefix = "model.layers"

except Exception:

layers = model.transformer.h

prefix = "transformer.h"

dtype = next(iter(model.parameters())).dtype

inps = torch.zeros(

(nsamples, model.seqlen, model.config.hidden_size), dtype=dtype, device=dev

)

cache = {"i": 0}

extra = {}

class Catcher(nn.Module):

def __init__(self, module):

super().__init__()

self.module = module

def forward(self, inp, **kwargs):

inps[cache["i"]] = inp

cache["i"] += 1

extra.update(kwargs.copy())

raise ValueError

layers[0] = Catcher(layers[0])

for batch in dataloader:

try:

model(batch[0].cuda())

except ValueError:

pass

layers[0] = layers[0].module

# layers[0] = layers[0].cpu()

# model.model.embed_tokens = model.model.embed_tokens.cpu()

# model.model.norm = model.model.norm.cpu()

torch.cuda.empty_cache()

for hook in hooks:

hook.remove()

outs = torch.zeros_like(inps)

extra = {

k: v.to(dev) if isinstance(v, torch.Tensor) else v for k, v in extra.items()

}

print("Ready.")

quantizers = {}

for i in range(len(layers)):

print(f"Quantizing layer {i+1}/{len(layers)}..")

print("+------------------+--------------+------------+-----------+-------+")

print("| name | weight_error | fp_inp_SNR | q_inp_SNR | time |")

print("+==================+==============+============+===========+=======+")

layer = layers[i]

layer.load()

full = find_layers(layer)

sequential = [list(full.keys())]

for names in sequential:

subset = {n: full[n] for n in names}

gptq = {}

for name in subset:

gptq[name] = GPTQ(subset[name])

gptq[name].quantizer.configure(

bits, perchannel=True, sym=sym, mse=False

)

pass

def add_batch(name):

def tmp(_, inp, out):

gptq[name].add_batch(inp[0].data, out.data)

return tmp

handles = []

for name in subset:

handles.append(subset[name].register_forward_hook(add_batch(name)))

for j in range(nsamples):

outs[j] = layer(inps[j].unsqueeze(0), **extra)[0]

for h in handles:

h.remove()

for name in subset:

scale, zero, g_idx, error = gptq[name].fasterquant(

percdamp=percdamp,

groupsize=groupsize,

act_order=act_order,

name=name,

)

quantizers[f"{prefix}.{i}.{name}"] = (

gptq[name].quantizer.cpu(),

scale.cpu(),

zero.cpu(),

g_idx.cpu(),

bits,

groupsize,

)

gptq[name].free()

for j in range(nsamples):

outs[j] = layer(inps[j].unsqueeze(0), **extra)[0]

layer.unload()

del layer

del gptq

torch.cuda.empty_cache()

inps, outs = outs, inps

print("+------------------+--------------+------------+-----------+-------+")

print("\n")

model.config.use_cache = use_cache

return quantizers
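`sequential` captures the inputs to the first transformer layer by temporarily wrapping it in a `Catcher` that records its arguments and then raises, which aborts the rest of the forward pass. The sketch below shows the same pattern with plain callables. It differs from the code above in one labeled respect: it raises a dedicated `StopForward` exception (a hypothetical name) instead of `ValueError`, so unrelated errors are not swallowed.

```python
class StopForward(Exception):
    """Raised to abort the forward pass once the input has been captured."""


class Catcher:
    def __init__(self, module, store):
        self.module = module
        self.store = store

    def __call__(self, inp, **kwargs):
        self.store.append((inp, kwargs))  # record what the layer would receive
        raise StopForward


def run_model(layers, x):
    for layer in layers:
        x = layer(x)
    return x


layers = [lambda x: x + 1, lambda x: x * 2]
captured = []
layers[0] = Catcher(layers[0], captured)
for batch in [10, 20]:
    try:
        run_model(layers, batch)
    except StopForward:
        pass
layers[0] = layers[0].module  # restore the original first layer

print(captured)
print(run_model(layers, 1))
```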

def make_quant_linear(module, names, bits, groupsize, name=""):

if isinstance(module, QuantLinear):

return

for attr in dir(module):

tmp = getattr(module, attr)

name1 = name + "." + attr if name != "" else attr

if name1 in names:

delattr(module, attr)

setattr(

module,

attr,

QuantLinear.new(

bits,

groupsize,

tmp.in_features,

tmp.out_features,

tmp.bias is not None,

),

)

for name1, child in module.named_children():

make_quant_linear(

child, names, bits, groupsize, name + "." + name1 if name != "" else name1

)

# TODO: perform packing on GPU
def pack(model, quantizers, bits, groupsize):
    layers = find_layers(model)
    layers = {n: layers[n] for n in quantizers}
    make_quant_linear(model, quantizers, bits, groupsize)
    qlayers = find_layers(model, (QuantLinear,))
    print("Packing ...")
    for name in qlayers:
        print(name)
        quantizers[name], scale, zero, g_idx, _, _ = quantizers[name]
        qlayers[name].pack(layers[name], scale, zero, g_idx)
    print("Done.")
    return model

def setdeepattr(module, full_name, tensor):
    current = module
    tokens = full_name.split(".")
    for token in tokens[:-1]:
        current = getattr(current, token)
    setattr(current, tokens[-1], tensor)


def getdeepattr(module, full_name):
    current = module
    tokens = full_name.split(".")
    for token in tokens:
        current = getattr(current, token)
    return current

def load_weights_pre_hook(module_name, weights, recursive=False):
    def inner(module, args):
        print(f"Pre hook {module_name}")
        local_params = {}
        for k, v in module.named_parameters():
            if not recursive and k.count(".") != 1:
                continue
            local_params[k] = v
        for k, v in module.named_buffers():
            if not recursive and k.count(".") != 1:
                continue
            local_params[k] = v

        for local_param in local_params:
            current_tensor = getdeepattr(module, local_param)
            if current_tensor.device == torch.device("meta"):
                # print(f"Loading {local_param}")
                if module_name:
                    tensor_name = f"{module_name}.{local_param}"
                else:
                    tensor_name = local_param
                tensor = weights.get_tensor(tensor_name)
                setdeepattr(module, local_param, nn.Parameter(tensor))
            else:
                tensor = current_tensor.to(device=torch.device("cuda:0"))
                if current_tensor.requires_grad:
                    tensor = nn.Parameter(tensor)
                setdeepattr(module, local_param, tensor)

    return inner

def load_weights_post_hook(module_name, weights, recursive=False):
    def inner(module, args, output):
        print(f"Post hook {module_name}")
        local_params = {}
        for k, v in module.named_parameters():
            if not recursive and k.count(".") != 1:
                continue
            local_params[k] = v
        for k, v in module.named_buffers():
            if not recursive and k.count(".") != 1:
                continue
            local_params[k] = v
        for local_param in local_params:
            # print(f"Unloading {local_param}")
            current_tensor = getdeepattr(module, local_param)
            setdeepattr(
                module,
                local_param,
                nn.Parameter(current_tensor.to(device=torch.device("cpu"))),
            )
        return output

    return inner

def quantize(
    model_id: str,
    bits: int,
    groupsize: int,
    output_dir: str,
    revision: str,
    trust_remote_code: bool,
    upload_to_model_id: Optional[str],
    percdamp: float,
    act_order: bool,
):
    print("loading model")
    config = AutoConfig.from_pretrained(
        model_id,
        trust_remote_code=trust_remote_code,
    )

    with init_empty_weights():
        model = AutoModelForCausalLM.from_config(
            config, torch_dtype=torch.float16, trust_remote_code=trust_remote_code
        )
    model = model.eval()

    print("LOADED model")
    files = weight_files(model_id, revision, extension=".safetensors")
    process_group, _, _ = initialize_torch_distributed()
    weights = Weights(
        files,
        device=torch.device("cuda:0"),
        dtype=torch.float16,
        process_group=process_group,
        aliases={"embed_tokens.weight": ["lm_head.weight"]},
    )
    hooks = []
    for name, module in model.named_modules():

        def load(module, name):
            def _load():
                load_weights_pre_hook(name, weights, recursive=True)(module, None)

            return _load

        def unload(module, name):
            def _unload():
                load_weights_post_hook(name, weights, recursive=True)(
                    module, None, None
                )

            return _unload

        module.load = load(module, name)
        module.unload = unload(module, name)
        hooks.append(
            module.register_forward_pre_hook(load_weights_pre_hook(name, weights))
        )
        hooks.append(
            module.register_forward_hook(load_weights_post_hook(name, weights))
        )
    model.seqlen = 2048

    dataset = "wikitext2"
    nsamples = 128
    seed = None

    dataloader, testloader = get_loaders(
        dataset,
        nsamples=nsamples,
        seed=seed,
        model_id=model_id,
        seqlen=model.seqlen,
        trust_remote_code=trust_remote_code,
    )

    tick = time.time()
    quantizers = sequential(
        model,
        dataloader,
        DEV,
        nsamples,
        bits,
        groupsize,
        percdamp=percdamp,
        act_order=act_order,
        hooks=hooks,
    )
    print(time.time() - tick)

    pack(model, quantizers, bits, groupsize)
    from safetensors.torch import save_file
    from transformers.modeling_utils import shard_checkpoint

    state_dict = model.state_dict()
    state_dict = {k: v.cpu().contiguous() for k, v in state_dict.items()}
    state_dict["gptq_bits"] = torch.LongTensor([bits])
    state_dict["gptq_groupsize"] = torch.LongTensor([groupsize])

    max_shard_size = "10GB"
    shards, index = shard_checkpoint(
        state_dict, max_shard_size=max_shard_size, weights_name="model.safetensors"
    )
    os.makedirs(output_dir, exist_ok=True)
    for shard_file, shard in shards.items():
        save_file(
            shard,
            os.path.join(output_dir, shard_file),
            metadata={
                "format": "pt",
                "quantized": "gptq",
                "origin": "text-generation-inference",
            },
        )
    if index is None:
        path_to_weights = os.path.join(output_dir, "model.safetensors")
        logger.info(f"Model weights saved in {path_to_weights}")
    else:
        save_index_file = "model.safetensors.index.json"
        save_index_file = os.path.join(output_dir, save_index_file)
        with open(save_index_file, "w", encoding="utf-8") as f:
            content = json.dumps(index, indent=2, sort_keys=True) + "\n"
            f.write(content)
        logger.info(
            f"The model is bigger than the maximum size per checkpoint ({max_shard_size}) and is going to be "
            f"split in {len(shards)} checkpoint shards. You can find where each parameter has been saved in the "
            f"index located at {save_index_file}."
        )
    config = AutoConfig.from_pretrained(model_id, trust_remote_code=trust_remote_code)
    config.save_pretrained(output_dir)
    logger.info("Saved config")
    logger.info("Saving tokenizer")
    tokenizer = AutoTokenizer.from_pretrained(
        model_id, trust_remote_code=trust_remote_code
    )
    tokenizer.save_pretrained(output_dir)
    logger.info("Saved tokenizer")

    if upload_to_model_id:
        api = HfApi()

        api.upload_folder(
            folder_path=output_dir, repo_id=upload_to_model_id, repo_type="model"
        )

| 0 |

hf_public_repos | hf_public_repos/peft/LICENSE | Apache License

Version 2.0, January 2004

http://www.apache.org/licenses/

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

1. Definitions.

"License" shall mean the terms and conditions for use, reproduction,

and distribution as defined by Sections 1 through 9 of this document.

"Licensor" shall mean the copyright owner or entity authorized by

the copyright owner that is granting the License.

"Legal Entity" shall mean the union of the acting entity and all

other entities that control, are controlled by, or are under common

control with that entity. For the purposes of this definition,

"control" means (i) the power, direct or indirect, to cause the

direction or management of such entity, whether by contract or

otherwise, or (ii) ownership of fifty percent (50%) or more of the

outstanding shares, or (iii) beneficial ownership of such entity.

"You" (or "Your") shall mean an individual or Legal Entity

exercising permissions granted by this License.

"Source" form shall mean the preferred form for making modifications,

including but not limited to software source code, documentation

source, and configuration files.

"Object" form shall mean any form resulting from mechanical

transformation or translation of a Source form, including but

not limited to compiled object code, generated documentation,

and conversions to other media types.

"Work" shall mean the work of authorship, whether in Source or

Object form, made available under the License, as indicated by a

copyright notice that is included in or attached to the work

(an example is provided in the Appendix below).

"Derivative Works" shall mean any work, whether in Source or Object

form, that is based on (or derived from) the Work and for which the

editorial revisions, annotations, elaborations, or other modifications

represent, as a whole, an original work of authorship. For the purposes

of this License, Derivative Works shall not include works that remain

separable from, or merely link (or bind by name) to the interfaces of,

the Work and Derivative Works thereof.

"Contribution" shall mean any work of authorship, including

the original version of the Work and any modifications or additions

to that Work or Derivative Works thereof, that is intentionally

submitted to Licensor for inclusion in the Work by the copyright owner

or by an individual or Legal Entity authorized to submit on behalf of

the copyright owner. For the purposes of this definition, "submitted"

means any form of electronic, verbal, or written communication sent

to the Licensor or its representatives, including but not limited to

communication on electronic mailing lists, source code control systems,

and issue tracking systems that are managed by, or on behalf of, the

Licensor for the purpose of discussing and improving the Work, but

excluding communication that is conspicuously marked or otherwise

designated in writing by the copyright owner as "Not a Contribution."

"Contributor" shall mean Licensor and any individual or Legal Entity

on behalf of whom a Contribution has been received by Licensor and

subsequently incorporated within the Work.

2. Grant of Copyright License. Subject to the terms and conditions of

this License, each Contributor hereby grants to You a perpetual,

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

copyright license to reproduce, prepare Derivative Works of,

publicly display, publicly perform, sublicense, and distribute the

Work and such Derivative Works in Source or Object form.

3. Grant of Patent License. Subject to the terms and conditions of

this License, each Contributor hereby grants to You a perpetual,

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

(except as stated in this section) patent license to make, have made,

use, offer to sell, sell, import, and otherwise transfer the Work,

where such license applies only to those patent claims licensable

by such Contributor that are necessarily infringed by their

Contribution(s) alone or by combination of their Contribution(s)

with the Work to which such Contribution(s) was submitted. If You

institute patent litigation against any entity (including a

cross-claim or counterclaim in a lawsuit) alleging that the Work

or a Contribution incorporated within the Work constitutes direct

or contributory patent infringement, then any patent licenses

granted to You under this License for that Work shall terminate

as of the date such litigation is filed.

4. Redistribution. You may reproduce and distribute copies of the

Work or Derivative Works thereof in any medium, with or without

modifications, and in Source or Object form, provided that You

meet the following conditions:

(a) You must give any other recipients of the Work or

Derivative Works a copy of this License; and

(b) You must cause any modified files to carry prominent notices

stating that You changed the files; and

(c) You must retain, in the Source form of any Derivative Works

that You distribute, all copyright, patent, trademark, and

attribution notices from the Source form of the Work,

excluding those notices that do not pertain to any part of

the Derivative Works; and

(d) If the Work includes a "NOTICE" text file as part of its

distribution, then any Derivative Works that You distribute must

include a readable copy of the attribution notices contained

within such NOTICE file, excluding those notices that do not

pertain to any part of the Derivative Works, in at least one

of the following places: within a NOTICE text file distributed

as part of the Derivative Works; within the Source form or

documentation, if provided along with the Derivative Works; or,

within a display generated by the Derivative Works, if and

wherever such third-party notices normally appear. The contents

of the NOTICE file are for informational purposes only and

do not modify the License. You may add Your own attribution

notices within Derivative Works that You distribute, alongside

or as an addendum to the NOTICE text from the Work, provided

that such additional attribution notices cannot be construed

as modifying the License.

You may add Your own copyright statement to Your modifications and

may provide additional or different license terms and conditions

for use, reproduction, or distribution of Your modifications, or

for any such Derivative Works as a whole, provided Your use,

reproduction, and distribution of the Work otherwise complies with

the conditions stated in this License.

5. Submission of Contributions. Unless You explicitly state otherwise,

any Contribution intentionally submitted for inclusion in the Work

by You to the Licensor shall be under the terms and conditions of

this License, without any additional terms or conditions.

Notwithstanding the above, nothing herein shall supersede or modify

the terms of any separate license agreement you may have executed

with Licensor regarding such Contributions.

6. Trademarks. This License does not grant permission to use the trade

names, trademarks, service marks, or product names of the Licensor,

except as required for reasonable and customary use in describing the

origin of the Work and reproducing the content of the NOTICE file.

7. Disclaimer of Warranty. Unless required by applicable law or

agreed to in writing, Licensor provides the Work (and each

Contributor provides its Contributions) on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

implied, including, without limitation, any warranties or conditions

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

PARTICULAR PURPOSE. You are solely responsible for determining the

appropriateness of using or redistributing the Work and assume any

risks associated with Your exercise of permissions under this License.

8. Limitation of Liability. In no event and under no legal theory,

whether in tort (including negligence), contract, or otherwise,

unless required by applicable law (such as deliberate and grossly

negligent acts) or agreed to in writing, shall any Contributor be

liable to You for damages, including any direct, indirect, special,

incidental, or consequential damages of any character arising as a

result of this License or out of the use or inability to use the

Work (including but not limited to damages for loss of goodwill,

work stoppage, computer failure or malfunction, or any and all

other commercial damages or losses), even if such Contributor

has been advised of the possibility of such damages.

9. Accepting Warranty or Additional Liability. While redistributing

the Work or Derivative Works thereof, You may choose to offer,

and charge a fee for, acceptance of support, warranty, indemnity,

or other liability obligations and/or rights consistent with this

License. However, in accepting such obligations, You may act only

on Your own behalf and on Your sole responsibility, not on behalf

of any other Contributor, and only if You agree to indemnify,

defend, and hold each Contributor harmless for any liability

incurred by, or claims asserted against, such Contributor by reason

of your accepting any such warranty or additional liability.

END OF TERMS AND CONDITIONS

APPENDIX: How to apply the Apache License to your work.

To apply the Apache License to your work, attach the following

boilerplate notice, with the fields enclosed by brackets "[]"

replaced with your own identifying information. (Don't include

the brackets!) The text should be enclosed in the appropriate

comment syntax for the file format. We also recommend that a

file or class name and description of purpose be included on the

same "printed page" as the copyright notice for easier

identification within third-party archives.

Copyright [yyyy] [name of copyright owner]

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

| 0 |

hf_public_repos | hf_public_repos/peft/Makefile | .PHONY: quality style test docs

check_dirs := src tests examples docs

# Check that source code meets quality standards

# this target runs checks on all files

quality:
	black --check $(check_dirs)
	ruff $(check_dirs)
	doc-builder style src/peft tests docs/source --max_len 119 --check_only

# Format source code automatically and check if there are any problems left that need manual fixing
style:
	black $(check_dirs)
	ruff $(check_dirs) --fix
	doc-builder style src/peft tests docs/source --max_len 119

test:
	python -m pytest -n 3 tests/ $(if $(IS_GITHUB_CI),--report-log "ci_tests.log",)

tests_examples_multi_gpu:
	python -m pytest -m multi_gpu_tests tests/test_gpu_examples.py $(if $(IS_GITHUB_CI),--report-log "multi_gpu_examples.log",)

tests_examples_single_gpu:
	python -m pytest -m single_gpu_tests tests/test_gpu_examples.py $(if $(IS_GITHUB_CI),--report-log "single_gpu_examples.log",)

tests_core_multi_gpu:
	python -m pytest -m multi_gpu_tests tests/test_common_gpu.py $(if $(IS_GITHUB_CI),--report-log "core_multi_gpu.log",)

tests_core_single_gpu:
	python -m pytest -m single_gpu_tests tests/test_common_gpu.py $(if $(IS_GITHUB_CI),--report-log "core_single_gpu.log",)

tests_common_gpu:
	python -m pytest tests/test_decoder_models.py $(if $(IS_GITHUB_CI),--report-log "common_decoder.log",)
	python -m pytest tests/test_encoder_decoder_models.py $(if $(IS_GITHUB_CI),--report-log "common_encoder_decoder.log",)

| 0 |

hf_public_repos | hf_public_repos/peft/README.md | <!---

Copyright 2023 The HuggingFace Team. All rights reserved.

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

-->

<h1 align="center"> <p>🤗 PEFT</p></h1>

<h3 align="center">

<p>State-of-the-art Parameter-Efficient Fine-Tuning (PEFT) methods</p>

</h3>

Parameter-Efficient Fine-Tuning (PEFT) methods enable efficient adaptation of pre-trained language models (PLMs) to various downstream applications without fine-tuning all the model's parameters. Fine-tuning large-scale PLMs is often prohibitively costly. In this regard, PEFT methods only fine-tune a small number of (extra) model parameters, thereby greatly decreasing the computational and storage costs. Recent State-of-the-Art PEFT techniques achieve performance comparable to that of full fine-tuning.

Seamlessly integrated with 🤗 Accelerate for large scale models leveraging DeepSpeed and Big Model Inference.

Supported methods:

1. LoRA: [LORA: LOW-RANK ADAPTATION OF LARGE LANGUAGE MODELS](https://arxiv.org/abs/2106.09685)

2. Prefix Tuning: [Prefix-Tuning: Optimizing Continuous Prompts for Generation](https://aclanthology.org/2021.acl-long.353/), [P-Tuning v2: Prompt Tuning Can Be Comparable to Fine-tuning Universally Across Scales and Tasks](https://arxiv.org/pdf/2110.07602.pdf)

3. P-Tuning: [GPT Understands, Too](https://arxiv.org/abs/2103.10385)

4. Prompt Tuning: [The Power of Scale for Parameter-Efficient Prompt Tuning](https://arxiv.org/abs/2104.08691)

5. AdaLoRA: [Adaptive Budget Allocation for Parameter-Efficient Fine-Tuning](https://arxiv.org/abs/2303.10512)

6. $(IA)^3$ : [Infused Adapter by Inhibiting and Amplifying Inner Activations](https://arxiv.org/abs/2205.05638)
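
To make the "small number of (extra) parameters" idea concrete, here is a minimal sketch of the low-rank update described in the LoRA paper, written in plain Python. It is not PEFT's implementation: the helper names (`matvec`, `lora_forward`) and the toy dimensions are hypothetical and chosen only for illustration. The frozen weight `W` is left untouched, and only the two small matrices `A` and `B` would be trained.

```python
# Illustrative sketch of a LoRA-style linear layer (hypothetical helpers, not PEFT code).

def matvec(matrix, vector):
    """Multiply a matrix (given as a list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def lora_forward(W, A, B, x, alpha, r):
    """Compute W x + (alpha / r) * B (A x).

    W: frozen weight, shape (d_out, d_in)
    A: trainable down-projection, shape (r, d_in)
    B: trainable up-projection, shape (d_out, r)
    """
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    scale = alpha / r
    return [b + scale * u for b, u in zip(base, update)]


d_in, d_out, r, alpha = 8, 8, 2, 32
W = [[0.1 * (i + j) for j in range(d_in)] for i in range(d_out)]
A = [[0.01 * (i + 1)] * d_in for i in range(r)]
x = [float(k + 1) for k in range(d_in)]

# With B at zero the adapter contributes nothing, so the layer matches the frozen one.
B_zero = [[0.0] * r for _ in range(d_out)]
out_initial = lora_forward(W, A, B_zero, x, alpha, r)

# Once B is non-zero (as it would be after training), the output shifts by a low-rank term.
B_trained = [[0.5] * r for _ in range(d_out)]
out_adapted = lora_forward(W, A, B_trained, x, alpha, r)

print(out_initial == matvec(W, x))  # the zero adapter leaves the base output unchanged
print(out_adapted[0] - out_initial[0])  # size of the low-rank shift on the first output
```

In this toy setup the adapter holds `r * d_in + d_out * r = 32` values against 64 in `W`; the saving grows quickly as the layer dimensions increase while `r` stays small.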

## Getting started

```python

from transformers import AutoModelForSeq2SeqLM

from peft import get_peft_config, get_peft_model, LoraConfig, TaskType

model_name_or_path = "bigscience/mt0-large"

tokenizer_name_or_path = "bigscience/mt0-large"

peft_config = LoraConfig(
    task_type=TaskType.SEQ_2_SEQ_LM, inference_mode=False, r=8, lora_alpha=32, lora_dropout=0.1
)

model = AutoModelForSeq2SeqLM.from_pretrained(model_name_or_path)

model = get_peft_model(model, peft_config)

model.print_trainable_parameters()

# output: trainable params: 2359296 || all params: 1231940608 || trainable%: 0.19151053100118282

```
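
The trainable-parameter count printed above can be reproduced by hand. The sketch below is a back-of-the-envelope check, not something taken from the PEFT code: it assumes that `bigscience/mt0-large` has a hidden size of 1024 with 24 encoder and 24 decoder layers, and that LoRA is attached to the query and value projections of every attention block. None of these values are stated in this README, so treat them as assumptions.

```python
# Assumed architecture values for bigscience/mt0-large (not stated in this README).
d_model = 1024        # hidden size; q/v projections assumed to be 1024 x 1024
encoder_layers = 24
decoder_layers = 24
r = 8                 # LoRA rank, taken from the LoraConfig above

# Each encoder layer has one attention block; each decoder layer is assumed to have
# two (self-attention and cross-attention).
attention_blocks = encoder_layers + 2 * decoder_layers

# Assumed LoRA targets: the q and v projections in every attention block.
adapted_modules = 2 * attention_blocks

# Each adapted projection gains A (r x d_model) and B (d_model x r).
params_per_module = r * d_model + d_model * r
trainable = adapted_modules * params_per_module

all_params = 1231940608  # copied from the printed output above
percent = 100 * trainable / all_params

print(trainable)
print(percent)
```

Under these assumptions the count comes out to 2359296, matching the `trainable params` figure in the output, and the percentage agrees with the reported `trainable%` of about 0.19.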

## Use Cases

### Get comparable performance to full finetuning by adapting LLMs to downstream tasks using consumer hardware

GPU memory required for adapting LLMs on the few-shot dataset [`ought/raft/twitter_complaints`](https://huggingface.co/datasets/ought/raft/viewer/twitter_complaints). The settings considered here are full finetuning, PEFT-LoRA using plain PyTorch, and PEFT-LoRA using DeepSpeed with CPU offloading.

Hardware: Single A100 80GB GPU with CPU RAM above 64GB

| Model | Full Finetuning | PEFT-LoRA PyTorch | PEFT-LoRA DeepSpeed with CPU Offloading |
| --------- | ---- | ---- | ---- |
| bigscience/T0_3B (3B params) | 47.14GB GPU / 2.96GB CPU | 14.4GB GPU / 2.96GB CPU | 9.8GB GPU / 17.8GB CPU |
| bigscience/mt0-xxl (12B params) | OOM GPU | 56GB GPU / 3GB CPU | 22GB GPU / 52GB CPU |
| bigscience/bloomz-7b1 (7B params) | OOM GPU | 32GB GPU / 3.8GB CPU | 18.1GB GPU / 35GB CPU |

Performance of PEFT-LoRA tuned [`bigscience/T0_3B`](https://huggingface.co/bigscience/T0_3B) on [`ought/raft/twitter_complaints`](https://huggingface.co/datasets/ought/raft/viewer/twitter_complaints) leaderboard.

A point to note is that we didn't try to squeeze performance by playing around with input instruction templates, LoRA hyperparams and other training related hyperparams. Also, we didn't use the larger 13B [mt0-xxl](https://huggingface.co/bigscience/mt0-xxl) model.

So, we are already seeing comparable performance to SoTA with parameter efficient tuning. Also, the final checkpoint size is just `19MB` in comparison to `11GB` size of the backbone [`bigscience/T0_3B`](https://huggingface.co/bigscience/T0_3B) model.

| Submission Name | Accuracy |
| --------- | ---- |
| Human baseline (crowdsourced) | 0.897 |
| Flan-T5 | 0.892 |
| lora-t0-3b | 0.863 |

**Therefore, we can see that performance comparable to SoTA is achievable by PEFT methods with consumer hardware such as 16GB and 24GB GPUs.**

An insightful blogpost explaining the advantages of using PEFT for fine-tuning FlanT5-XXL: [https://www.philschmid.de/fine-tune-flan-t5-peft](https://www.philschmid.de/fine-tune-flan-t5-peft)

### Parameter Efficient Tuning of Diffusion Models

GPU memory required by different settings during training is given below. The final checkpoint size is `8.8 MB`.

Hardware: Single A100 80GB GPU with CPU RAM above 64GB

| Model | Full Finetuning | PEFT-LoRA | PEFT-LoRA with Gradient Checkpointing |
| --------- | ---- | ---- | ---- |
| CompVis/stable-diffusion-v1-4 | 27.5GB GPU / 3.97GB CPU | 15.5GB GPU / 3.84GB CPU | 8.12GB GPU / 3.77GB CPU |

**Training**

An example of using LoRA for parameter efficient dreambooth training is given in [`examples/lora_dreambooth/train_dreambooth.py`](examples/lora_dreambooth/train_dreambooth.py)

```bash

export MODEL_NAME="CompVis/stable-diffusion-v1-4" #"stabilityai/stable-diffusion-2-1"
export INSTANCE_DIR="path-to-instance-images"
export CLASS_DIR="path-to-class-images"
export OUTPUT_DIR="path-to-save-model"

accelerate launch train_dreambooth.py \
  --pretrained_model_name_or_path=$MODEL_NAME \
  --instance_data_dir=$INSTANCE_DIR \
  --class_data_dir=$CLASS_DIR \
  --output_dir=$OUTPUT_DIR \
  --train_text_encoder \
  --with_prior_preservation --prior_loss_weight=1.0 \
  --instance_prompt="a photo of sks dog" \
  --class_prompt="a photo of dog" \
  --resolution=512 \
  --train_batch_size=1 \
  --lr_scheduler="constant" \
  --lr_warmup_steps=0 \
  --num_class_images=200 \
  --use_lora \
  --lora_r 16 \
  --lora_alpha 27 \
  --lora_text_encoder_r 16 \
  --lora_text_encoder_alpha 17 \
  --learning_rate=1e-4 \
  --gradient_accumulation_steps=1 \
  --gradient_checkpointing \
  --max_train_steps=800

```

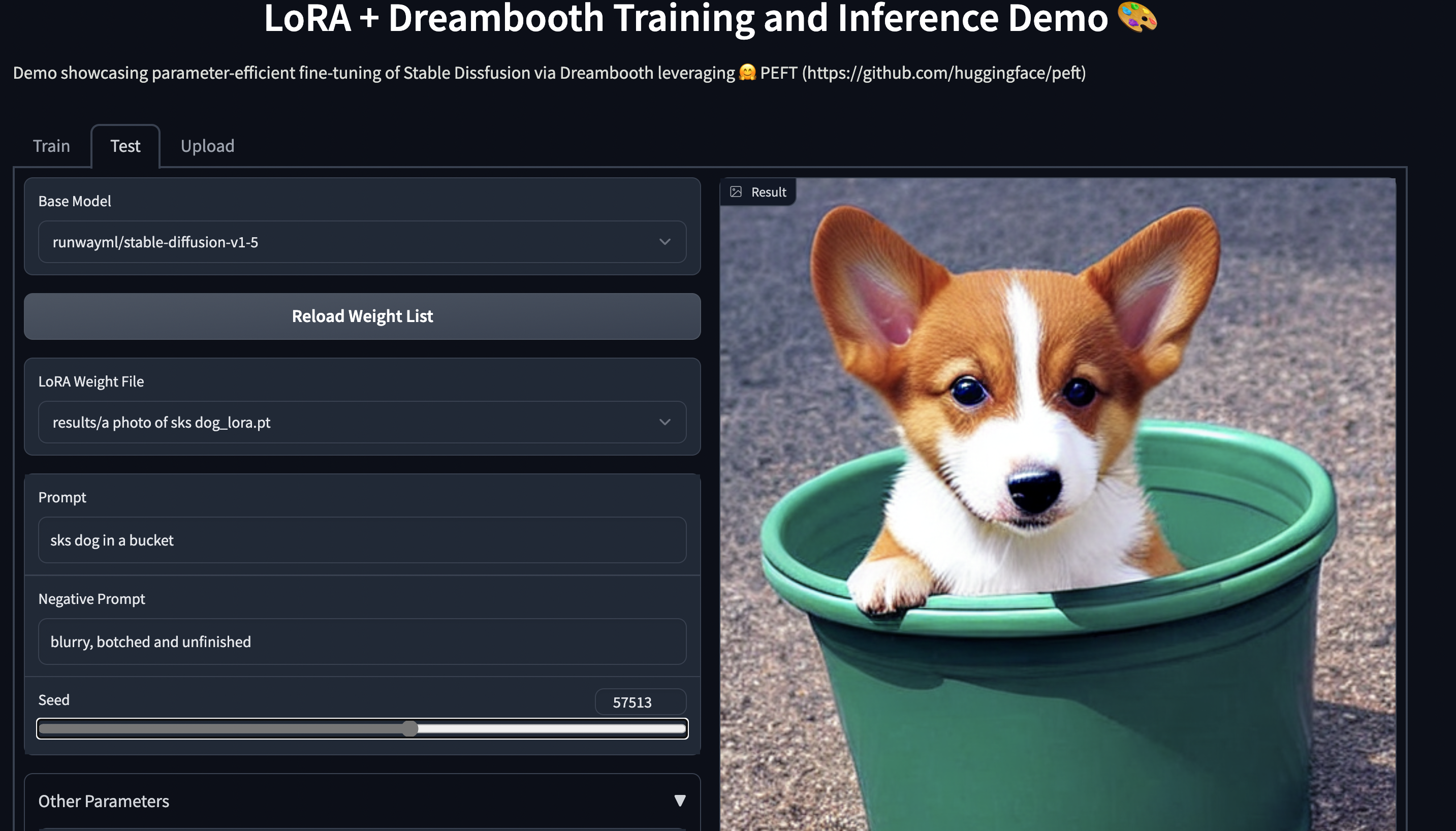

Try out the 🤗 Gradio Space which should run seamlessly on a T4 instance:

[smangrul/peft-lora-sd-dreambooth](https://huggingface.co/spaces/smangrul/peft-lora-sd-dreambooth).

**NEW** ✨ Multi Adapter support and combining multiple LoRA adapters in a weighted combination

### Parameter Efficient Tuning of LLMs for RLHF components such as Ranker and Policy

- Here is an example in [trl](https://github.com/lvwerra/trl) library using PEFT+INT8 for tuning policy model: [gpt2-sentiment_peft.py](https://github.com/lvwerra/trl/blob/main/examples/sentiment/scripts/gpt2-sentiment_peft.py) and corresponding [Blog](https://huggingface.co/blog/trl-peft)

- Example using PEFT for instruction finetuning, reward model and policy: [stack_llama](https://github.com/lvwerra/trl/tree/main/examples/stack_llama/scripts) and corresponding [Blog](https://huggingface.co/blog/stackllama)

### INT8 training of large models in Colab using PEFT LoRA and bits_and_bytes

- Here is a demo on how to fine-tune [OPT-6.7b](https://huggingface.co/facebook/opt-6.7b) (14GB in fp16) in a Google Colab: [Open in Colab](https://colab.research.google.com/drive/1jCkpikz0J2o20FBQmYmAGdiKmJGOMo-o?usp=sharing)

- Here is a demo on how to fine-tune [whisper-large](https://huggingface.co/openai/whisper-large-v2) (1.5B params) (14GB in fp16) in a Google Colab: [Open in Colab](https://colab.research.google.com/drive/1DOkD_5OUjFa0r5Ik3SgywJLJtEo2qLxO?usp=sharing) and [Open in Colab](https://colab.research.google.com/drive/1vhF8yueFqha3Y3CpTHN6q9EVcII9EYzs?usp=sharing)

### Save compute and storage even for medium and small models

Save storage by avoiding full finetuning of models on each of the downstream tasks/datasets. With PEFT methods, users only need to store tiny checkpoints on the order of `MBs` while retaining performance comparable to full finetuning.

An example of using LoRA for the task of adapting `LayoutLMForTokenClassification` on the `FUNSD` dataset is given in `~examples/token_classification/PEFT_LoRA_LayoutLMForTokenClassification_on_FUNSD.py`. With only `0.62 %` of parameters being trainable, we achieve performance (F1 0.777) comparable to full finetuning (F1 0.786), without any hyperparameter tuning runs to extract more performance, and the resulting checkpoint is only `2.8MB`. If there are `N` such datasets, you can keep one PEFT model per dataset and save a lot of storage without having to worry about catastrophic forgetting or overfitting of the backbone/base model.

Another example is fine-tuning [`roberta-large`](https://huggingface.co/roberta-large) on [`MRPC` GLUE](https://huggingface.co/datasets/glue/viewer/mrpc) dataset using different PEFT methods. The notebooks are given in `~examples/sequence_classification`.

## PEFT + 🤗 Accelerate

PEFT models work with 🤗 Accelerate out of the box. Use 🤗 Accelerate for distributed training on various hardware such as GPUs and Apple Silicon devices.

Use 🤗 Accelerate for inference on consumer hardware with limited resources.

### Example of PEFT model training using 🤗 Accelerate's DeepSpeed integration

DeepSpeed version `v0.8.0` is required. An example is provided in `~examples/conditional_generation/peft_lora_seq2seq_accelerate_ds_zero3_offload.py`.

a. First, run `accelerate config --config_file ds_zero3_cpu.yaml` and answer the questionnaire.

Below are the contents of the config file.

```yaml

compute_environment: LOCAL_MACHINE

deepspeed_config:
  gradient_accumulation_steps: 1
  gradient_clipping: 1.0
  offload_optimizer_device: cpu
  offload_param_device: cpu
  zero3_init_flag: true
  zero3_save_16bit_model: true
  zero_stage: 3

distributed_type: DEEPSPEED

downcast_bf16: 'no'

dynamo_backend: 'NO'

fsdp_config: {}

machine_rank: 0

main_training_function: main

megatron_lm_config: {}

mixed_precision: 'no'

num_machines: 1

num_processes: 1

rdzv_backend: static

same_network: true

use_cpu: false

```

b. Run the command below to launch the example script.

```bash

accelerate launch --config_file ds_zero3_cpu.yaml examples/conditional_generation/peft_lora_seq2seq_accelerate_ds_zero3_offload.py

```

c. Output logs:

```bash

GPU Memory before entering the train : 1916

GPU Memory consumed at the end of the train (end-begin): 66

GPU Peak Memory consumed during the train (max-begin): 7488

GPU Total Peak Memory consumed during the train (max): 9404

CPU Memory before entering the train : 19411

CPU Memory consumed at the end of the train (end-begin): 0

CPU Peak Memory consumed during the train (max-begin): 0

CPU Total Peak Memory consumed during the train (max): 19411

epoch=4: train_ppl=tensor(1.0705, device='cuda:0') train_epoch_loss=tensor(0.0681, device='cuda:0')

100%|████████████████████████████████████████████████████████████████████████████████████████████| 7/7 [00:27<00:00, 3.92s/it]

GPU Memory before entering the eval : 1982

GPU Memory consumed at the end of the eval (end-begin): -66

GPU Peak Memory consumed during the eval (max-begin): 672

GPU Total Peak Memory consumed during the eval (max): 2654

CPU Memory before entering the eval : 19411

CPU Memory consumed at the end of the eval (end-begin): 0

CPU Peak Memory consumed during the eval (max-begin): 0

CPU Total Peak Memory consumed during the eval (max): 19411

accuracy=100.0

eval_preds[:10]=['no complaint', 'no complaint', 'complaint', 'complaint', 'no complaint', 'no complaint', 'no complaint', 'complaint', 'complaint', 'no complaint']

dataset['train'][label_column][:10]=['no complaint', 'no complaint', 'complaint', 'complaint', 'no complaint', 'no complaint', 'no complaint', 'complaint', 'complaint', 'no complaint']

```
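
The figures in these logs are related by simple arithmetic, which can help when reading them. The sketch below is an interpretation of the log lines rather than code from the example script: it assumes that the reported perplexity is the exponential of the epoch loss, and that each "Total Peak" value is the starting memory plus the peak increase. The variable names are hypothetical, and the numbers are copied from the output above.

```python
import math

# Values copied from the training log above (the logged loss is rounded to 4 decimals).
train_epoch_loss = 0.0681
logged_train_ppl = 1.0705

# Assumption: perplexity is exp(mean loss).
train_ppl = math.exp(train_epoch_loss)

# Assumption: total peak memory = memory before the phase + peak increase during it.
gpu_before_train, gpu_peak_delta_train = 1916, 7488
gpu_before_eval, gpu_peak_delta_eval = 1982, 672

gpu_total_peak_train = gpu_before_train + gpu_peak_delta_train
gpu_total_peak_eval = gpu_before_eval + gpu_peak_delta_eval

print(round(train_ppl, 3), logged_train_ppl)
print(gpu_total_peak_train, gpu_total_peak_eval)
```

Both relationships hold for the numbers shown: the computed perplexity agrees with the logged value to within rounding, and the sums match the logged totals of 9404 and 2654.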

### Example of PEFT model inference using 🤗 Accelerate's Big Model Inferencing capabilities

An example is provided in `~examples/causal_language_modeling/peft_lora_clm_accelerate_big_model_inference.ipynb`.

## Models support matrix

### Causal Language Modeling

| Model | LoRA | Prefix Tuning | P-Tuning | Prompt Tuning | IA3 |
|--------------| ---- | ---- | ---- | ---- | ---- |
| GPT-2 | ✅ | ✅ | ✅ | ✅ | ✅ |
| Bloom | ✅ | ✅ | ✅ | ✅ | ✅ |
| OPT | ✅ | ✅ | ✅ | ✅ | ✅ |
| GPT-Neo | ✅ | ✅ | ✅ | ✅ | ✅ |
| GPT-J | ✅ | ✅ | ✅ | ✅ | ✅ |
| GPT-NeoX-20B | ✅ | ✅ | ✅ | ✅ | ✅ |
| LLaMA | ✅ | ✅ | ✅ | ✅ | ✅ |
| ChatGLM | ✅ | ✅ | ✅ | ✅ | ✅ |

### Conditional Generation

| Model | LoRA | Prefix Tuning | P-Tuning | Prompt Tuning | IA3 |
| --------- | ---- | ---- | ---- | ---- | ---- |
| T5 | ✅ | ✅ | ✅ | ✅ | ✅ |
| BART | ✅ | ✅ | ✅ | ✅ | ✅ |

### Sequence Classification

| Model | LoRA | Prefix Tuning | P-Tuning | Prompt Tuning | IA3 |
| --------- | ---- | ---- | ---- | ---- | ---- |
| BERT | ✅ | ✅ | ✅ | ✅ | ✅ |
| RoBERTa | ✅ | ✅ | ✅ | ✅ | ✅ |
| GPT-2 | ✅ | ✅ | ✅ | ✅ | |
| Bloom | ✅ | ✅ | ✅ | ✅ | |
| OPT | ✅ | ✅ | ✅ | ✅ | |
| GPT-Neo | ✅ | ✅ | ✅ | ✅ | |
| GPT-J | ✅ | ✅ | ✅ | ✅ | |
| Deberta | ✅ | | ✅ | ✅ | |
| Deberta-v2 | ✅ | | ✅ | ✅ | |

### Token Classification

| Model | LoRA | Prefix Tuning | P-Tuning | Prompt Tuning | IA3 |
| --------- | ---- | ---- | ---- | ---- | ---- |
| BERT | ✅ | ✅ | | | |
| RoBERTa | ✅ | ✅ | | | |
| GPT-2 | ✅ | ✅ | | | |
| Bloom | ✅ | ✅ | | | |
| OPT | ✅ | ✅ | | | |
| GPT-Neo | ✅ | ✅ | | | |
| GPT-J | ✅ | ✅ | | | |
| Deberta | ✅ | | | | |
| Deberta-v2 | ✅ | | | | |

### Text-to-Image Generation

| Model | LoRA | Prefix Tuning | P-Tuning | Prompt Tuning | IA3 |
| --------- | ---- | ---- | ---- | ---- | ---- |
| Stable Diffusion | ✅ | | | | |

### Image Classification

| Model | LoRA | Prefix Tuning | P-Tuning | Prompt Tuning | IA3 |
| --------- | ---- | ---- | ---- | ---- | ---- |
| ViT | ✅ | | | | |
| Swin | ✅ | | | | |

### Image to text (Multi-modal models)

| Model | LoRA | Prefix Tuning | P-Tuning | Prompt Tuning | IA3 |
| --------- | ---- | ---- | ---- | ---- | ---- |
| Blip-2 | ✅ | | | | |

___Note that we have tested LoRA for [ViT](https://huggingface.co/docs/transformers/model_doc/vit) and [Swin](https://huggingface.co/docs/transformers/model_doc/swin) for fine-tuning on image classification. However, it should be possible to use LoRA for any compatible model [provided](https://huggingface.co/models?pipeline_tag=image-classification&sort=downloads&search=vit) by 🤗 Transformers. Check out the respective examples to learn more. If you run into problems, please open an issue.___

The same principle applies to our [segmentation models](https://huggingface.co/models?pipeline_tag=image-segmentation&sort=downloads) as well.

### Semantic Segmentation

| Model | LoRA | Prefix Tuning | P-Tuning | Prompt Tuning | IA3 |
| --------- | ---- | ---- | ---- | ---- | ---- |
| SegFormer | ✅ | | | | |

## Caveats:

1. Below is an example of using PyTorch FSDP for training. However, it doesn't lead to any GPU memory savings. Please refer to the issue [[FSDP] FSDP with CPU offload consumes 1.65X more GPU memory when training models with most of the params frozen](https://github.com/pytorch/pytorch/issues/91165).

```python
import os

from peft.utils.other import fsdp_auto_wrap_policy

...

# Use PEFT's auto-wrap policy only when the run was launched with FSDP enabled.
if os.environ.get("ACCELERATE_USE_FSDP", None) is not None:
    accelerator.state.fsdp_plugin.auto_wrap_policy = fsdp_auto_wrap_policy(model)

model = accelerator.prepare(model)
```

An example of parameter-efficient tuning with the [`mt0-xxl`](https://huggingface.co/bigscience/mt0-xxl) base model using 🤗 Accelerate is provided in `~examples/conditional_generation/peft_lora_seq2seq_accelerate_fsdp.py`.

a. First, run `accelerate config --config_file fsdp_config.yaml` and answer the questionnaire.

Below are the contents of the config file.

```yaml
command_file: null
commands: null
compute_environment: LOCAL_MACHINE
deepspeed_config: {}
distributed_type: FSDP
downcast_bf16: 'no'
dynamo_backend: 'NO'
fsdp_config:
  fsdp_auto_wrap_policy: TRANSFORMER_BASED_WRAP
  fsdp_backward_prefetch_policy: BACKWARD_PRE
  fsdp_offload_params: true
  fsdp_sharding_strategy: 1
  fsdp_state_dict_type: FULL_STATE_DICT
  fsdp_transformer_layer_cls_to_wrap: T5Block
gpu_ids: null
machine_rank: 0
main_process_ip: null
main_process_port: null
main_training_function: main
megatron_lm_config: {}
mixed_precision: 'no'
num_machines: 1
num_processes: 2
rdzv_backend: static
same_network: true
tpu_name: null
tpu_zone: null
use_cpu: false
```

b. Run the command below to launch the example script.

```bash

accelerate launch --config_file fsdp_config.yaml examples/conditional_generation/peft_lora_seq2seq_accelerate_fsdp.py

```

2. When using ZeRO3 with `zero3_init_flag=True`, if GPU memory usage increases with training steps, you might need to update DeepSpeed to a version that includes [deepspeed commit 42858a9891422abc](https://github.com/microsoft/DeepSpeed/commit/42858a9891422abcecaa12c1bd432d28d33eb0d4). The related issue is [[BUG] Peft Training with Zero.Init() and Zero3 will increase GPU memory every forward step](https://github.com/microsoft/DeepSpeed/issues/3002).
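To decide whether the DeepSpeed caveat applies to your environment, it can help to first see which DeepSpeed version is installed. The snippet below is a minimal sketch using only the Python standard library. The helper name `installed_version` is made up for illustration, and the snippet does not know which release first contains the linked commit, so compare the printed version against the DeepSpeed release notes yourself.

```python
from importlib import metadata


def installed_version(package):
    """Return the installed version string of `package`, or None if it is absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


version = installed_version("deepspeed")
if version is None:
    print("deepspeed is not installed in this environment")
else:
    # Check this version against the DeepSpeed release notes to see
    # whether it already includes the linked commit.
    print(f"deepspeed {version} is installed")
```

If the installed version predates the fix, upgrading with `pip install --upgrade deepspeed` is the usual route.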

## Backlog:

- [x] Add tests

- [x] Multi Adapter training and inference support

- [x] Add more use cases and examples

- [x] Integrate `(IA)^3`, `AdaptionPrompt`

- [ ] Explore and possibly integrate methods like `Bottleneck Adapters`, ...

## Citing 🤗 PEFT

If you use 🤗 PEFT in your publication, please cite it by using the following BibTeX entry.

```bibtex

@Misc{peft,

title = {PEFT: State-of-the-art Parameter-Efficient Fine-Tuning methods},

author = {Sourab Mangrulkar and Sylvain Gugger and Lysandre Debut and Younes Belkada and Sayak Paul},

howpublished = {\url{https://github.com/huggingface/peft}},

year = {2022}

}

```

| 0 |

hf_public_repos | hf_public_repos/peft/pyproject.toml | [tool.black]

line-length = 119

target-version = ['py36']

[tool.ruff]

ignore = ["C901", "E501", "E741", "W605"]

select = ["C", "E", "F", "I", "W"]

line-length = 119

[tool.ruff.isort]

lines-after-imports = 2

known-first-party = ["peft"]

[isort]

default_section = "FIRSTPARTY"

known_first_party = "peft"

known_third_party = [

"numpy",

"torch",

"accelerate",

"transformers",

]

line_length = 119

lines_after_imports = 2

multi_line_output = 3

include_trailing_comma = true

force_grid_wrap = 0

use_parentheses = true

ensure_newline_before_comments = true

[tool.pytest]

doctest_optionflags = [

"NORMALIZE_WHITESPACE",

"ELLIPSIS",

"NUMBER",

]

[tool.pytest.ini_options]

addopts = "--cov=src/peft --cov-report=term-missing"

markers = [

"single_gpu_tests: tests that run on a single GPU",

"multi_gpu_tests: tests that run on multiple GPUs",

]

| 0 |

hf_public_repos | hf_public_repos/peft/setup.py | # Copyright 2023 The HuggingFace Team. All rights reserved.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

from setuptools import find_packages, setup

extras = {}

extras["quality"] = ["black ~= 22.0", "ruff>=0.0.241", "urllib3<=2.0.0"]

extras["docs_specific"] = ["hf-doc-builder"]

extras["dev"] = extras["quality"] + extras["docs_specific"]

extras["test"] = extras["dev"] + ["pytest", "pytest-cov", "pytest-xdist", "parameterized", "datasets", "diffusers"]

setup(

name="peft",

version="0.5.0.dev0",

description="Parameter-Efficient Fine-Tuning (PEFT)",

license_files=["LICENSE"],