issue_owner_repo | issue_body | issue_title | issue_comments_url | issue_comments_count | issue_created_at | issue_updated_at | issue_html_url | issue_github_id | issue_number |

|---|---|---|---|---|---|---|---|---|---|

[

"libjxl",

"libjxl"

] | I was asked on Discord to create this issue. libjxl produces substantially larger files than cjxl in lossless mode because it uses 14-bit XYB, which, in addition to producing larger files, is also not truly lossless.

Code used below:

```cpp
m_opt = JxlEncoderOptionsCreate(m_enc, nullptr);
// `quality` is passed as the distance; 0 is meant to request lossless.
if (quality < 0) quality = 0;
JXLEE(JxlEncoderOptionsSetDistance(m_opt, quality));
JXLEE(JxlEncoderOptionsSetLossless(m_opt, quality == 0 ? JXL_TRUE : JXL_FALSE));
JXLEE(JxlEncoderOptionsSetEffort(m_opt, 3));

// Interleaved 8-bit RGB input.
static constexpr JxlPixelFormat PFMT {
    .num_channels = 3,
    .data_type = JXL_TYPE_UINT8,
    .endianness = JXL_NATIVE_ENDIAN,
    .align = 0
};

m_info.xsize = width;
m_info.ysize = height;
m_info.bits_per_sample = 8;
m_info.num_color_channels = 3;
m_info.intensity_target = 255;
m_info.orientation = JXL_ORIENT_IDENTITY;
JXLEE(JxlEncoderSetBasicInfo(m_enc, &m_info));

JxlColorEncoding color_profile;
JxlColorEncodingSetToSRGB(&color_profile, JXL_FALSE);
JXLEE(JxlEncoderSetColorEncoding(m_enc, &color_profile));

JXLEE(JxlEncoderAddImageFrame(m_opt, &PFMT, buf, width * height * 3));
JxlEncoderCloseInput(m_enc);
```

(`quality` is set to 0 in this circumstance) | 14-bit XYB encoding incorrectly used when JxlEncoderOptionsSetLossless is set to JXL_TRUE | https://api.github.com/repos/libjxl/libjxl/issues/257/comments | 5 | 2021-06-30T20:26:25Z | 2022-03-25T16:22:35Z | https://github.com/libjxl/libjxl/issues/257 | 934,099,251 | 257 |

[

"libjxl",

"libjxl"

] | **Describe the bug**

```
1576 - DecodeTest.PixelTestOpaqueSrgbLossyNoise (Failed)
1637 - DecodeTest/DecodeTestParam.PixelTest/301x33RGBtoRGBu8#GetParam()=301x33RGBtoRGBu8 (Failed)
1661 - DecodeTest/DecodeTestParam.PixelTest/301x33RGBtoRGBAu8#GetParam()=301x33RGBtoRGBAu8 (Failed)
1957 - RoundtripTest.Uint8FrameRoundtripTest (Failed)
1960 - RoundtripTest.TestICCProfile (Failed)
```

**To Reproduce**

Steps to reproduce the behavior:

`./ci.sh asan`

| Tests fail on ASAN build | https://api.github.com/repos/libjxl/libjxl/issues/254/comments | 1 | 2021-06-30T16:42:55Z | 2021-07-01T07:39:13Z | https://github.com/libjxl/libjxl/issues/254 | 933,900,822 | 254 |

[

"libjxl",

"libjxl"

] | **Describe the bug**

Encoding `011.png` with modular mode crashes, lossy and lossless.

It also happens when encoding `.ppm` and `.pgx` files created from the `.png` file.

"Works" after transcoding to `.pfm`, but this changes the image and the resulting `.jxl` is larger than the original `.png`.

**To Reproduce**

```
~ $ cjxl -m 011.png 011.jxl
JPEG XL encoder v0.3.7 [AVX2]
build/libjxl-git/src/libjxl/lib/extras/codec_png.cc:497: PNG: no color_space/icc_pathname given, assuming sRGB
Read 822x1168 image, 38.3 MP/s
Encoding [Modular, lossless, squirrel], 8 threads.
build/libjxl-git/src/libjxl/lib/jxl/enc_ans.cc:220: JXL_DASSERT: n <= 255
fish: Job 1, 'cjxl -m 011.png 011.jxl' terminated by signal SIGILL (Illegal instruction)
```

**Expected behavior**

It should be possible to encode this image in modular mode.

**Environment**

- OS: Arch Linux

- Compiler version: clang 12.0.0

- CPU type: ryzen 2700x x86_64

- cjxl/djxl version string: 8193d7b370c36f3df0528f1b22c481c182627aa5

**Additional context**

Compiled with https://aur.archlinux.org/cgit/aur.git/tree/PKGBUILD?h=libjxl-git and

```
CFLAGS="-march=native -O3 -pipe -fstack-protector-strong --param=ssp-buffer-size=4 -fno-plt -pthread -Wno-error -w"
CXXFLAGS="$CFLAGS"
```

https://user-images.githubusercontent.com/7374061/123955891-707f7100-d9aa-11eb-9ff4-d8af1667466f.png

| Modular mode crashes with SIGILL; JXL_DASSERT: n <= 255 | https://api.github.com/repos/libjxl/libjxl/issues/251/comments | 3 | 2021-06-30T11:58:30Z | 2022-03-29T18:42:04Z | https://github.com/libjxl/libjxl/issues/251 | 933,629,377 | 251 |

[

"libjxl",

"libjxl"

] | **Describe the bug**

When decoding 003.jxl, which was losslessly transcoded from a JPG, the resulting PNG file is only 1953 bytes and entirely black, even though djxl did not report any errors.

Reconstructing the original JPG file is still possible, but only because `--strip` was not used; otherwise it would be impossible to decode this file at all.

**To Reproduce**

(unpack the attached zip file to get 003.jxl)

```
~ $ djxl 003.jxl 003.png
JPEG XL decoder v0.3.7 [AVX2]
Read 274618 compressed bytes.
Decoded to pixels.
752 x 1080, 14.64 MP/s [14.64, 14.64], 1 reps, 8 threads.
Allocations: 476 (max bytes in use: 3.297596E+07)
~ $ djxl 003.jxl 003.jpg
JPEG XL decoder v0.3.7 [AVX2]
Read 274618 compressed bytes.
Reconstructed to JPEG.
752 x 1080, 16.33 MP/s [16.33, 16.33], 6.64 MB/s [6.64, 6.64], 1 reps, 8 threads.
Allocations: 429 (max bytes in use: 3.297534E+07)
~ $ du -b 003.*
330011 003.jpg
274618 003.jxl
1953 003.png
```

**Expected behavior**

It should be possible to decode this file to png.

**Environment**

- OS: Arch Linux

- Compiler version: clang 12.0.0

- CPU type: ryzen 2700x x86_64

- cjxl/djxl version string: 8193d7b370c36f3df0528f1b22c481c182627aa5

**Additional context**

Compiled with https://aur.archlinux.org/cgit/aur.git/tree/PKGBUILD?h=libjxl-git and

```
CFLAGS="-march=native -O3 -pipe -fstack-protector-strong --param=ssp-buffer-size=4 -fno-plt -pthread -Wno-error -w"
CXXFLAGS="$CFLAGS"
```

003.jxl is attached inside a zip file, since GitHub would not accept it otherwise.

[003.zip](https://github.com/libjxl/libjxl/files/6736434/003.zip)

| Decoding to png results in a small black image. | https://api.github.com/repos/libjxl/libjxl/issues/248/comments | 3 | 2021-06-29T21:12:23Z | 2021-07-05T15:36:08Z | https://github.com/libjxl/libjxl/issues/248 | 933,107,249 | 248 |

[

"libjxl",

"libjxl"

] | Hello, I got an error when I tried to decode a JXL file after truncating it to 1 KB. I have attached the PNG image that I encoded below. This issue seems to happen with PNG images that have transparency.

Error message:

```
JPEG XL decoder v0.3.7 [AVX2,SSE4,Scalar]
Read 1024 compressed bytes.
Failed to decompress to pixels.
```

My steps:

1. cjxl <input_png> <output_jxl> -p --distance=15

2. <truncate output jxl file by std::filesystem::file_size in C++>

3. djxl <input_jxl> <output_png> --allow_partial_files --allow_more_progressive_steps

Environment:

- OS: Windows 10

- Compiler version: Clang x64, Visual Studio 2019

- CPU type: x86_64

- cjxl/djxl version string: decoder v0.3.7 [AVX2,SSE4,Scalar], encoder v0.3.7 [AVX2,SSE4,Scalar]

Image:

| Fail decoding of truncated JXL file | https://api.github.com/repos/libjxl/libjxl/issues/245/comments | 8 | 2021-06-29T14:37:13Z | 2021-11-19T15:56:54Z | https://github.com/libjxl/libjxl/issues/245 | 932,766,253 | 245 |

[

"libjxl",

"libjxl"

] | **Describe the bug**

`-e 3` produces a smaller file than `-e 9` when losslessly compressing the attached jpg.

**To Reproduce**

```
cjxl -e 3 a.jpg a-3.jxl
cjxl -e 9 a.jpg a-9.jxl
du -b *
JPEG XL encoder v0.3.7 [AVX2]
Read 1200x1000 image, 112.8 MP/s
Encoding [Container | JPEG, lossless transcode, falcon | JPEG reconstruction data], 4 threads.
Compressed to 127967 bytes (0.853 bpp).
1200 x 1000, 30.81 MP/s [30.81, 30.81], 1 reps, 4 threads.
Including container: 128487 bytes (0.857 bpp).
JPEG XL encoder v0.3.7 [AVX2]
Read 1200x1000 image, 104.5 MP/s
Encoding [Container | JPEG, lossless transcode, tortoise | JPEG reconstruction data], 4 threads.
Compressed to 128733 bytes (0.858 bpp).
1200 x 1000, 0.42 MP/s [0.42, 0.42], 1 reps, 4 threads.
Including container: 129253 bytes (0.862 bpp).
128487 a-3.jxl
129253 a-9.jxl
161639 a.jpg
```

**Expected behavior**

`-e 9` should produce the smallest files out of all the presets.

**Environment**

- OS: Arch Linux

- Compiler version: clang 12.0.0

- CPU type: ryzen 3500U x86_64

- cjxl/djxl version string: f8790509f3588413413682b611cf11f18f4498b1

**Additional context**

Compiled with https://aur.archlinux.org/cgit/aur.git/tree/PKGBUILD?h=libjxl-git and

```
CFLAGS="-march=native -O3 -pipe -fstack-protector-strong --param=ssp-buffer-size=4 -fno-plt -pthread -Wno-error -w"
CXXFLAGS="$CFLAGS"
```

https://user-images.githubusercontent.com/7374061/123633993-2cf5fd00-d81a-11eb-8be1-150ac3fbdd95.jpg

| Lossless JPEG -e 3 smaller than -e 9 | https://api.github.com/repos/libjxl/libjxl/issues/235/comments | 4 | 2021-06-28T12:20:23Z | 2021-11-18T09:41:58Z | https://github.com/libjxl/libjxl/issues/235 | 931,512,361 | 235 |

[

"libjxl",

"libjxl"

] | Arch Linux

GCC 11.1.0

JPEG XL encoder v0.3.7 [AVX2,SSE4,Scalar]

I tried to load an assortment of JXL images, and there seems to be a bug specific to JXLs that were transcoded from JPEG. It only seems to happen with the GIMP plugin.

The issue does not occur with lossless JXLs or with JXLs encoded with -q < 100, only with JPEG -> JXL transcodes.

I get a GIMP error: "Opening '...' failed: JPEG XL image plug-in could not open image"

and then the following output in the console:

```
/home/.cache/yay/libjxl-git/src/libjxl/lib/jxl/image_metadata.cc:354: JXL_FAILURE: invalid min 0.001234 vs max 0.001001
/home/.cache/yay/libjxl-git/src/libjxl/lib/jxl/fields.cc:697: JXL_RETURN_IF_ERROR code=1: visitor.Visit(fields, PrintVisitors() ? "-- Read\n" : "")
/home/.cache/yay/libjxl-git/src/libjxl/lib/jxl/dec_file.cc:33: JXL_RETURN_IF_ERROR code=1: ReadImageMetadata(reader, &io->metadata.m)
/home/.cache/yay/libjxl-git/src/libjxl/lib/jxl/dec_file.cc:108: JXL_RETURN_IF_ERROR code=1: DecodeHeaders(&reader, io)
/home/.cache/yay/libjxl-git/src/libjxl/plugins/gimp/file-jxl-load.cc:95: JXL_RETURN_IF_ERROR code=1: DecodeFile(dparams, compressed, &io, &pool)
``` | GIMP plugin fails to open JXLs which were transcoded from JPEG | https://api.github.com/repos/libjxl/libjxl/issues/229/comments | 5 | 2021-06-26T00:30:34Z | 2021-08-25T08:55:46Z | https://github.com/libjxl/libjxl/issues/229 | 930,575,447 | 229 |

[

"libjxl",

"libjxl"

] | Hello honored devs,

cjxl keeps the alpha channel even when it is fully opaque. That's not a big deal for file size, but it negatively affects RAM consumption and especially decoding performance.

The encoder should automatically drop such unnecessary channels and maybe have a new parameter `--always-keep-alpha` if someone really wants to do that.
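A minimal sketch of the check such an option would need, assuming interleaved 8-bit RGBA input; `IsFullyOpaque` and `DropAlpha` are hypothetical helpers, not part of cjxl or libjxl:

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Returns true if every alpha sample in interleaved 8-bit RGBA data is 255.
bool IsFullyOpaque(const std::vector<std::uint8_t>& rgba) {
  for (std::size_t i = 3; i < rgba.size(); i += 4) {
    if (rgba[i] != 255) return false;
  }
  return true;
}

// Copies interleaved RGBA into interleaved RGB, discarding the alpha channel.
std::vector<std::uint8_t> DropAlpha(const std::vector<std::uint8_t>& rgba) {
  std::vector<std::uint8_t> rgb;
  rgb.reserve(rgba.size() / 4 * 3);
  for (std::size_t i = 0; i + 3 < rgba.size(); i += 4) {
    rgb.push_back(rgba[i]);
    rgb.push_back(rgba[i + 1]);
    rgb.push_back(rgba[i + 2]);
  }
  return rgb;
}
```

An encoder could run `IsFullyOpaque` once per input image and, only if it returns true, encode `DropAlpha(rgba)` as a 3-channel image, keeping the current behavior behind an opt-in flag.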

Thanks! | Ability to drop fully opaque alpha channel | https://api.github.com/repos/libjxl/libjxl/issues/219/comments | 3 | 2021-06-24T12:36:34Z | 2022-03-29T10:37:48Z | https://github.com/libjxl/libjxl/issues/219 | 929,184,869 | 219 |

[

"libjxl",

"libjxl"

] | **Is your feature request related to a problem? Please describe.**

JPEG XL seems to have several features that allow it to be a PSD replacement, but all the necessary metadata is currently encapsulated in the internal API. The only thing possible with the current API is converting a PSD to JXL (the inverse conversion is not implemented). In all other cases, only the final blended image is accessible.

The feature request aims to make it possible to manipulate layered JXL images in image editor applications. To do that, the API needs to support reading and writing unblended images with their crop coordinates.

**Describe the solution you'd like**

The encoder can already take the input frame by frame. Therefore this could be implemented by allowing additional metadata (crop coordinates, blend mode) when calling `JxlEncoderAddImageFrame`.

The decoder can handle the output frame by frame as well, but the API is currently intended for full-sized frames. I propose adding a decoder option that, when enabled, outputs unblended, cropped frames. The current `JXL_DEC_FRAME` and `JXL_DEC_NEED_IMAGE_OUT_BUFFER` events could be reused to tell the application to resize the buffer when necessary.
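To make the proposal more concrete, here is a hypothetical sketch of what an editor would do with an unblended, cropped frame: place it on the canvas at its crop offset and blend it. `Layer`, `BlendMode` and `Composite` are illustrative names, not libjxl API, and JPEG XL defines more blend modes than the two shown here.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical subset of blend modes.
enum class BlendMode { kReplace, kAdd };

// Hypothetical unblended layer as an editor might receive it from a decoder.
struct Layer {
  int x0 = 0, y0 = 0;         // crop origin on the canvas (may be negative)
  int width = 0, height = 0;  // size of the cropped layer in pixels
  BlendMode mode = BlendMode::kReplace;
  std::vector<float> pixels;  // one channel, row-major, width * height samples
};

// Blends `layer` onto a single-channel canvas, clipping to the canvas bounds.
void Composite(const Layer& layer, std::vector<float>& canvas, int canvas_width,
               int canvas_height) {
  for (int y = 0; y < layer.height; ++y) {
    const int cy = layer.y0 + y;
    if (cy < 0 || cy >= canvas_height) continue;
    for (int x = 0; x < layer.width; ++x) {
      const int cx = layer.x0 + x;
      if (cx < 0 || cx >= canvas_width) continue;
      const float v = layer.pixels[static_cast<std::size_t>(y) * layer.width + x];
      float& dst = canvas[static_cast<std::size_t>(cy) * canvas_width + cx];
      dst = (layer.mode == BlendMode::kReplace) ? v : dst + v;
    }
  }
}
```

Keeping each layer's crop and blend mode separate like this is what lets an editor hide, move or re-blend layers, which is not possible once only the final blended image is exposed.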

**Describe alternatives you've considered**

TBD. The proposal is subject to change.

**Unresolved questions**

- Should we expose internal frames? For PSD-like use cases they are likely not needed, but they might be useful for parsing animation files.

- Should we allow the application to have control over reference frames? This could be used to control the "preferred final image", where some layers are hidden by default. It could also be used to create images where there's one "base" layer and multiple switchable "patch" layers, and the switchable variants would only depend on the base frame instead of sequentially depending on previous frames. | Expose APIs for crop coordinates/unblended frames | https://api.github.com/repos/libjxl/libjxl/issues/217/comments | 2 | 2021-06-24T06:59:28Z | 2022-03-29T10:33:31Z | https://github.com/libjxl/libjxl/issues/217 | 928,911,482 | 217 |

[

"libjxl",

"libjxl"

] | I packaged `libjxl` for nixpkgs; the `x86_64-linux` build works fine, but the `aarch64-linux` fails because apparently `skcms` triggers a GCC 9.3.0 `internal compiler error`:

https://github.com/NixOS/nixpkgs/pull/103160#issuecomment-866388610

Since this is just a free-time experiment for me and I don't have time to chase this up to skia or GCC, I'm reporting it here in case you are interested in following up on it. | build failure on aarch64-linux due to `skcms` triggering GCC internal compiler error | https://api.github.com/repos/libjxl/libjxl/issues/213/comments | 1 | 2021-06-22T22:57:51Z | 2022-03-29T10:31:42Z | https://github.com/libjxl/libjxl/issues/213 | 927,694,502 | 213 |

[

"libjxl",

"libjxl"

] | **Describe the bug**

As in the title: when trying to run cjxl or djxl, the error "cjxl: error while loading shared libraries: libOpenEXR-3_0.so.27: cannot open shared object file: No such file or directory" shows up. Since this looked like an OpenEXR problem, I have tried both the repository version (3.0.4) and the git version from the AUR, but it still happens with either version.

**To Reproduce**

Steps to reproduce the behavior:

1. Run cjxl or djxl with any options, with either openexr 3.0.4 or the -git version installed.

**Expected behavior**

cjxl or djxl should print a list of options.


**Environment**

- OS: Manjaro

- Compiler version: clang 12.0.0

- CPU type: x86_64

- cjxl/djxl version string: unable to retrieve, as the error stops the program from running; the installed package version is 3.7.r80-git


| when using cjxl or djxl error:"cjxl: error while loading shared libraries: libOpenEXR-3_0.so.27: cannot open shared object file: No such file or directory" happens | https://api.github.com/repos/libjxl/libjxl/issues/204/comments | 8 | 2021-06-18T08:42:17Z | 2021-11-22T06:24:00Z | https://github.com/libjxl/libjxl/issues/204 | 924,682,850 | 204 |

[

"libjxl",

"libjxl"

] | **Is your feature request related to a problem? Please describe.**

It would be good to evaluate butteraugli on the CLIC-2021 perceptual quality task. This should give the community additional information about its performance compared to other perceptual quality metrics that JPEG XL could optimize for.

**Describe the solution you'd like**

Please use the test data from the CLIC 2021 perceptual challenge to generate a CSV file with the decisions (see link below for the exact instructions):

https://github.com/fab-jul/clic2021-devkit/blob/main/README.md#perceptual-challenge

Please email them to me to get the final results / ranks. We'll publish these at: http://compression.cc/leaderboard/perceptual/test/

...and of course update this bug tracker.

| Evaluate butteraugli on the CLIC-2021 perceptual quality task | https://api.github.com/repos/libjxl/libjxl/issues/202/comments | 6 | 2021-06-17T20:54:26Z | 2022-03-29T10:30:30Z | https://github.com/libjxl/libjxl/issues/202 | 924,325,924 | 202 |

[

"libjxl",

"libjxl"

] | `cjxl.exe image_21447_24bit.png image_21447_24bit.jxl -v -m -q 100 -s 5 -C 1 --num_threads=4`

```
JPEG XL encoder v0.3.7 0.3.7-13649d2b [Scalar]
codec_png.cc:496: PNG: no color_space/icc_pathname given, assuming sRGB
Read 1563x1558 image, 20.7 MP/s
Encoding [Modular, lossless, hare], 4 threads.
transform.cc:70: JXL_FAILURE: Invalid channel range
```

https://github.com/libjxl/libjxl/commit/30dae3b5b0cb70134dd08964a6bb1f247f2ac412

| JXL_FAILURE: Invalid channel range | https://api.github.com/repos/libjxl/libjxl/issues/184/comments | 3 | 2021-06-16T10:32:08Z | 2021-06-17T10:17:08Z | https://github.com/libjxl/libjxl/issues/184 | 922,434,216 | 184 |

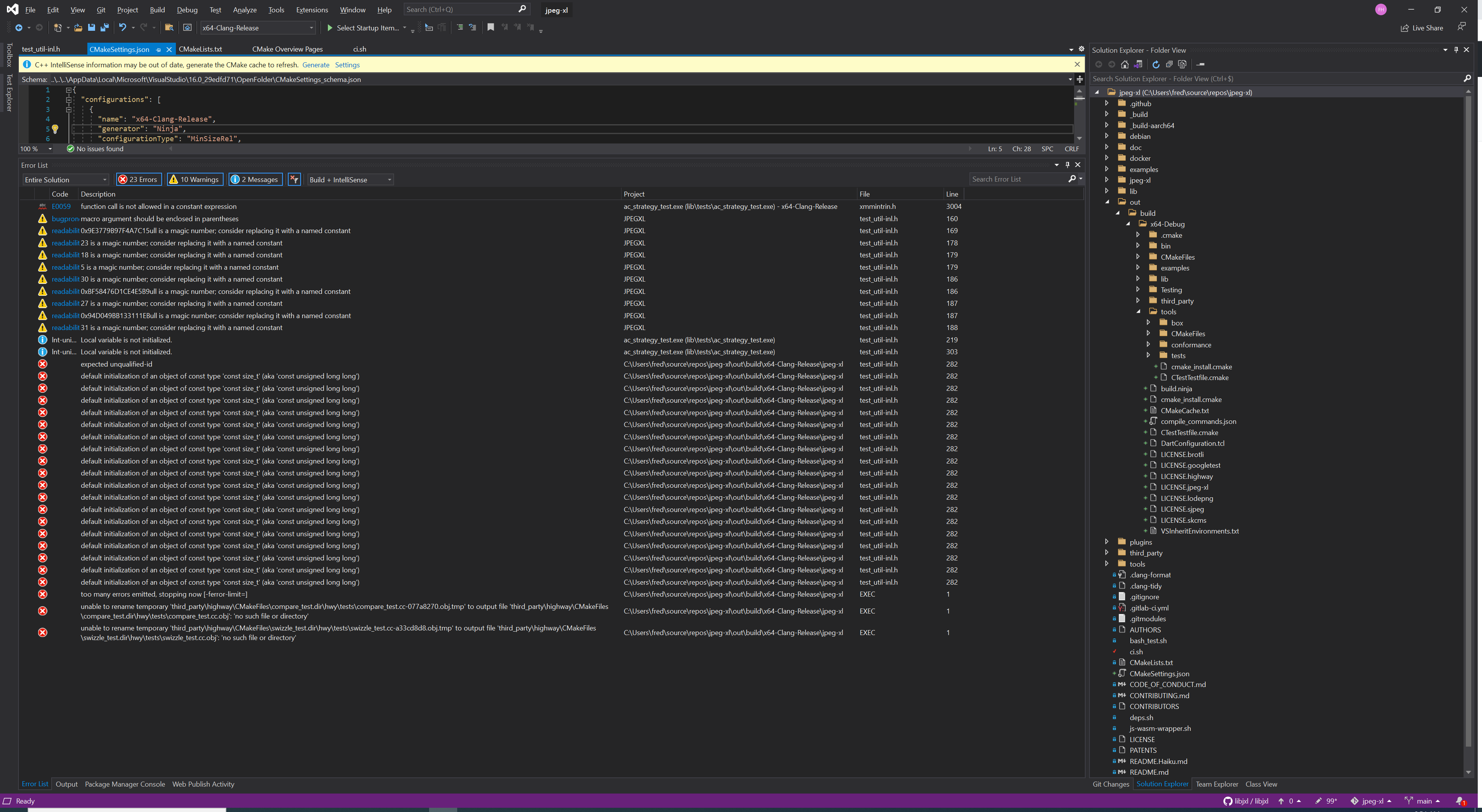

[

"libjxl",

"libjxl"

] | I followed the Windows build documentation.

A lot of the errors seem to be in test_util-int.h. How can I skip building the tests to see if the rest works?

Or how else can I build it for Windows? | Build for windows (advanced) doesn't build | https://api.github.com/repos/libjxl/libjxl/issues/180/comments | 15 | 2021-06-16T04:59:16Z | 2022-03-29T10:29:49Z | https://github.com/libjxl/libjxl/issues/180 | 922,095,994 | 180 |

[

"libjxl",

"libjxl"

] | Hello, I built cjxl with the command

`BUILD_TARGET=x86_64-w64-mingw32 SKIP_TEST=1 CC=clang-7 CXX=clang++-7 ./ci.sh release` in Docker.

The build worked and I got cjxl.exe, but when I run it from the command line nothing happens: it returns immediately without doing anything or printing any errors.

What dependencies should I install on Windows to run the exe?

How can I modify the build command so that all the dependencies are embedded in the exe?

Thank you

| docker build for windows, can't run exe | https://api.github.com/repos/libjxl/libjxl/issues/179/comments | 8 | 2021-06-16T04:48:53Z | 2021-06-16T20:53:56Z | https://github.com/libjxl/libjxl/issues/179 | 922,088,884 | 179 |

[

"libjxl",

"libjxl"

] | ```
JPEG XL decoder v0.3.7 [AVX2,SSE4,Scalar]
/C/msys64/home/eustas/clients/libjxl/tools/cpu/cpu.cc:415: JXL_FAILURE: Unable to detect processor topology
Failed to choose default num_threads; you can avoid this error by specifying a --num_threads N argument.
``` | MSYS2 build fails to detect architecture (and number of threads) | https://api.github.com/repos/libjxl/libjxl/issues/168/comments | 3 | 2021-06-14T14:32:51Z | 2021-11-08T20:44:10Z | https://github.com/libjxl/libjxl/issues/168 | 920,476,110 | 168 |

[

"libjxl",

"libjxl"

] | Hello,

djxl crashes on some (not all) JXL files during decoding.

```
$ djxl jpegxl-logo.jxl output.png --num_threads=1
Segmentation fault
```

Test file: [jpegxl-logo.jxl](https://github.com/novomesk/qt-jpegxl-image-plugin/blob/main/testfiles/jpegxl-logo.jxl)

```
(gdb) run
Starting program: C:\msys64\mingw64\bin\djxl.exe jpegxl-logo.jxl output.png "--num_threads=1"
[New Thread 10912.0x3630]
[New Thread 10912.0x32d0]
[New Thread 10912.0x1a7c]
[New Thread 10912.0xc94]
Thread 5 received signal SIGSEGV, Segmentation fault.
[Switching to Thread 10912.0xc94]
0x00007ff64ad95967 in jxl::N_AVX2::ComputePixelChannel<hwy::N_AVX2::Simd<float, 8ull> >(hwy::N_AVX2::Simd<float, 8ull>, float, float const*, float const*, float const*, decltype (Zero((hwy::N_AVX2::Simd<float, 8ull>)()))*, decltype (Zero((hwy::N_AVX2::Simd<float, 8ull>)()))*, decltype (Zero((hwy::N_AVX2::Simd<float, 8ull>)()))*, unsigned long long) (x=8, gap=0xfddbdfb7e0, sm=0xfddbdfb840,
    mc=0xfddbdfb8a0, row_bottom=0x2414425fb80, row=0x2414425f980,
    row_top=0x2414425f780, dc_factor=0.000206910816, d=...)
    at C:/msys64/home/daniel/libjxl/lib/jxl/compressed_dc.cc:96
96 *gap = MaxWorkaround(*gap, Abs((*mc - *sm) / dc_quant));
(gdb) bt
#0 0x00007ff64ad95967 in jxl::N_AVX2::ComputePixelChannel<hwy::N_AVX2::Simd<float, 8ull> >(hwy::N_AVX2::Simd<float, 8ull>, float, float const*, float const*, float const*, decltype (Zero((hwy::N_AVX2::Simd<float, 8ull>)()))*, decltype (Zero((hwy::N_AVX2::Simd<float, 8ull>)()))*, decltype (Zero((hwy::N_AVX2::Simd<float, 8ull>)()))*, unsigned long long) (x=8, gap=0xfddbdfb7e0,
    sm=0xfddbdfb840, mc=0xfddbdfb8a0, row_bottom=0x2414425fb80,
    row=0x2414425f980, row_top=0x2414425f780, dc_factor=0.000206910816, d=...)
    at C:/msys64/home/daniel/libjxl/lib/jxl/compressed_dc.cc:96
#1 jxl::N_AVX2::ComputePixel<hwy::N_AVX2::Simd<float, 8ull> > (x=8,
    out_rows=0xfddbdfd310, rows_bottom=0xfddbdfd330, rows=0xfddbdfd350,
    rows_top=0xfddbdfd370, dc_factors=0xfddb5fd400)
    at C:/msys64/home/daniel/libjxl/lib/jxl/compressed_dc.cc:114
#2 operator() (__closure=0xfddb5fc200, y=1)
    at C:/msys64/home/daniel/libjxl/lib/jxl/compressed_dc.cc:188
#3 0x00007ff64adb4090 in jxl::ThreadPool::RunCallState<jxl::Status(long long unsigned int), jxl::N_AVX2::AdaptiveDCSmoothing(float const*, jxl::Image3F*, jxl::ThreadPool*)::<lambda(int, int)> >::CallDataFunc(void *, uint32_t, size_t) (
    jpegxl_opaque=0xfddb5fc0b0, value=1, thread_id=0)
    at C:/msys64/home/daniel/libjxl/lib/jxl/base/data_parallel.h:88
#4 0x00007ff64ae06bb2 in jpegxl::ThreadParallelRunner::RunRange (
    self=0xfddb5ff930, command=4294967439, thread=0)
    at C:/msys64/home/daniel/libjxl/lib/threads/thread_parallel_runner_internal.cc:137
#5 0x00007ff64ae06cb8 in jpegxl::ThreadParallelRunner::ThreadFunc (
    self=0xfddb5ff930, thread=0)
    at C:/msys64/home/daniel/libjxl/lib/threads/thread_parallel_runner_internal.cc:167
#6 0x00007ff64b4977aa in std::__invoke_impl<void, void (*)(jpegxl::ThreadParallelRunner*, int), jpegxl::ThreadParallelRunner*, unsigned int> (
    __f=@0x241441e31d8: 0x7ff64ae06bc0 <jpegxl::ThreadParallelRunner::ThreadFunc(jpegxl::ThreadParallelRunner*, int)>)
    at C:/msys64/mingw64/include/c++/10.3.0/bits/invoke.h:60
#7 0x00007ff64b4ae2c0 in std::__invoke<void (*)(jpegxl::ThreadParallelRunner*, int), jpegxl::ThreadParallelRunner*, unsigned int> (
    __fn=@0x241441e31d8: 0x7ff64ae06bc0 <jpegxl::ThreadParallelRunner::ThreadFunc(jpegxl::ThreadParallelRunner*, int)>)
    at C:/msys64/mingw64/include/c++/10.3.0/bits/invoke.h:95
#8 0x00007ff64b45bbc0 in std::thread::_Invoker<std::tuple<void (*)(jpegxl::ThreadParallelRunner*, int), jpegxl::ThreadParallelRunner*, unsigned int> >::_M_invoke<0ull, 1ull, 2ull> (this=0x241441e31c8)
    at C:/msys64/mingw64/include/c++/10.3.0/thread:264
#9 0x00007ff64b45bbe7 in std::thread::_Invoker<std::tuple<void (*)(jpegxl::ThreadParallelRunner*, int), jpegxl::ThreadParallelRunner*, unsigned int> >::operator() (this=0x241441e31c8) at C:/msys64/mingw64/include/c++/10.3.0/thread:271
#10 0x00007ff64b45b94c in std::thread::_State_impl<std::thread::_Invoker<std::tuple<void (*)(jpegxl::ThreadParallelRunner*, int), jpegxl::ThreadParallelRunner*, unsigned int> > >::_M_run (this=0x241441e31c0)
    at C:/msys64/mingw64/include/c++/10.3.0/thread:215
#11 0x00007ff81eed06e1 in ?? () from C:\msys64\mingw64\bin\libstdc++-6.dll
#12 0x00007ff821d54f33 in ?? () from C:\msys64\mingw64\bin\libwinpthread-1.dll
#13 0x00007ff8340daf5a in msvcrt!_beginthreadex ()
    from C:\WINDOWS\System32\msvcrt.dll
#14 0x00007ff8340db02c in msvcrt!_endthreadex ()
    from C:\WINDOWS\System32\msvcrt.dll
#15 0x00007ff833827034 in KERNEL32!BaseThreadInitThunk ()
    from C:\WINDOWS\System32\kernel32.dll
#16 0x00007ff834362651 in ntdll!RtlUserThreadStart ()
    from C:\WINDOWS\SYSTEM32\ntdll.dll
#17 0x0000000000000000 in ?? ()
```

configuration:

`cmake -G "MSYS Makefiles" -DCMAKE_INSTALL_PREFIX=/mingw64 -DCMAKE_BUILD_TYPE=Debug -DJPEGXL_ENABLE_PLUGINS=OFF -DBUILD_TESTING=OFF -DJPEGXL_WARNINGS_AS_ERRORS=OFF -DJPEGXL_ENABLE_SJPEG=OFF -DJPEGXL_ENABLE_BENCHMARK=OFF -DJPEGXL_ENABLE_EXAMPLES=OFF -DJPEGXL_ENABLE_MANPAGES=OFF -DJPEGXL_FORCE_SYSTEM_BROTLI=ON ..`

I observed that when I added `-DHWY_COMPILE_ONLY_SCALAR` to CXX_FLAGS, djxl worked correctly. | crash on Windows/MSYS2 | https://api.github.com/repos/libjxl/libjxl/issues/165/comments | 4 | 2021-06-14T11:44:01Z | 2021-11-12T13:57:53Z | https://github.com/libjxl/libjxl/issues/165 | 920,329,106 | 165 |

[

"libjxl",

"libjxl"

] | **Describe the bug**

fuzzer_corpus fails on an assert. This failure was introduced by https://github.com/libjxl/libjxl/pull/25

**To Reproduce**

Steps to reproduce the behavior:

```bash
mkdir corpusdir
build/tools/fuzzer_corpus -j 0 corpusdir
```

Result:

```
Generating ImageSpec<size=8x8 * chan=3 depth=8 alpha=0 (premult=0) x frames=1 seed=407987, speed=1, butteraugli=1.5, modular_mode=0, lossy_palette=0, noise=0, preview=0, fuzzer_friendly=0, is_reconstructible_jpeg=1, orientation=4> as a6abe1aab9970bb0771cf78b376749e2
../lib/jxl/enc_frame.cc:1066: JXL_ASSERT: metadata->m.xyb_encoded == (cparams.color_transform == ColorTransform::kXYB)
Illegal instruction
```

**Environment**

ci.sh release build; Linux build. | fuzzer_corpus hits an assert | https://api.github.com/repos/libjxl/libjxl/issues/150/comments | 2 | 2021-06-10T13:32:26Z | 2021-06-10T16:24:45Z | https://github.com/libjxl/libjxl/issues/150 | 917,351,879 | 150 |

[

"libjxl",

"libjxl"

] | Could you please consider adding a new release tag? I would like to package libjxl for Void Linux (draft PR at https://github.com/void-linux/void-packages/pull/31397), which requires a release version. However, the last release (0.3.7) is outdated (old license, does not work with qt-jpegxl-image-plugin). | Request for new release tag | https://api.github.com/repos/libjxl/libjxl/issues/144/comments | 4 | 2021-06-10T09:28:05Z | 2021-08-06T19:52:12Z | https://github.com/libjxl/libjxl/issues/144 | 917,124,689 | 144 |

[

"libjxl",

"libjxl"

] | Sorry to be the bearer of bad news but the libvips fuzzers appear to have something against libjxl today :(

This image causes an integer overflow when decoding using the latest code on the `main` branch.

[clusterfuzz-testcase-minimized-6288583458684928.txt](https://github.com/libjxl/libjxl/files/6626055/clusterfuzz-testcase-minimized-6288583458684928.txt)

```
/src/libjxl/lib/jxl/modular/encoding/encoding.cc:228:24: runtime error: signed integer overflow: 1107427842 + 1073742852 cannot be represented in type 'int'
    #0 0x10eedda in jxl::DecodeModularChannelMAANS(jxl::BitReader*, jxl::ANSSymbolReader*, std::__1::vector<unsigned char, std::__1::allocator<unsigned char> > const&, std::__1::vector<jxl::PropertyDecisionNode, std::__1::allocator<jxl::PropertyDecisionNode> > const&, jxl::weighted::Header const&, int, unsigned long, jxl::Image*) libjxl/lib/jxl/modular/encoding/encoding.cc:228:24
    #1 0x10f179c in jxl::ModularDecode(jxl::BitReader*, jxl::Image&, jxl::GroupHeader&, unsigned long, jxl::ModularOptions*, std::__1::vector<jxl::PropertyDecisionNode, std::__1::allocator<jxl::PropertyDecisionNode> > const*, jxl::ANSCode const*, std::__1::vector<unsigned char, std::__1::allocator<unsigned char> > const*, bool) libjxl/lib/jxl/modular/encoding/encoding.cc:487:5
    #2 0x10f1cc8 in jxl::ModularGenericDecompress(jxl::BitReader*, jxl::Image&, jxl::GroupHeader*, unsigned long, jxl::ModularOptions*, int, std::__1::vector<jxl::PropertyDecisionNode, std::__1::allocator<jxl::PropertyDecisionNode> > const*, jxl::ANSCode const*, std::__1::vector<unsigned char, std::__1::allocator<unsigned char> > const*, bool) libjxl/lib/jxl/modular/encoding/encoding.cc:517:21
    #3 0x1456f7b in jxl::ModularFrameDecoder::DecodeGlobalInfo(jxl::BitReader*, jxl::FrameHeader const&, bool) libjxl/lib/jxl/dec_modular.cc:211:23
    #4 0x13a4938 in jxl::FrameDecoder::ProcessDCGlobal(jxl::BitReader*) libjxl/lib/jxl/dec_frame.cc:381:46
    #5 0x13a1191 in jxl::FrameDecoder::ProcessSections(jxl::FrameDecoder::SectionInfo const*, unsigned long,
```
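For reference, one way to perform such an addition without signed-overflow UB is to widen to 64 bits and saturate. This is only a sketch: whether saturating, rejecting the stream as invalid, or widening the accumulator is the right fix in `encoding.cc` is left to the maintainers, and `SaturatingAdd` is a hypothetical helper.

```cpp
#include <cstdint>
#include <limits>

// Adds two 32-bit values without signed-overflow UB: the sum is computed in
// 64 bits and saturated to the int32_t range.
std::int32_t SaturatingAdd(std::int32_t a, std::int32_t b) {
  const std::int64_t sum = static_cast<std::int64_t>(a) + b;
  constexpr std::int64_t kMax = std::numeric_limits<std::int32_t>::max();
  constexpr std::int64_t kMin = std::numeric_limits<std::int32_t>::min();
  if (sum > kMax) return static_cast<std::int32_t>(kMax);
  if (sum < kMin) return static_cast<std::int32_t>(kMin);
  return static_cast<std::int32_t>(sum);
}
```

With the values from the sanitizer report, `SaturatingAdd(1107427842, 1073742852)` returns 2147483647 instead of invoking undefined behavior.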

Perhaps further clamping might be required here:

https://github.com/libjxl/libjxl/blob/87ebbe9d5cd7581afbcce650e6879bccf80e3beb/lib/jxl/modular/encoding/encoding.cc#L225-L228 | Possible integer overflow in DecodeModularChannelMAANS | https://api.github.com/repos/libjxl/libjxl/issues/140/comments | 1 | 2021-06-09T18:36:09Z | 2021-06-10T07:09:47Z | https://github.com/libjxl/libjxl/issues/140 | 916,536,180 | 140 |

[

"libjxl",

"libjxl"

] | Hello, the following file, discovered via fuzz testing, causes a divide by zero when decoded using the latest code on the `main` branch.

[clusterfuzz-testcase-minimized-jpegsave_buffer_fuzzer-5146219264475136.txt](https://github.com/libjxl/libjxl/files/6622107/clusterfuzz-testcase-minimized-jpegsave_buffer_fuzzer-5146219264475136.txt)

```
/src/libjxl/lib/jxl/splines.cc:105:39: runtime error: division by zero
    #0 0x14cb3e8 in jxl::N_AVX2::(anonymous namespace)::DrawGaussian(jxl::Image3<float>*, jxl::Rect const&, jxl::Rect const&, jxl::Spline::Point const&, float, float const*, float, std::__1::vector<int, std::__1::allocator<int> >&, std::__1::vector<int, std::__1::allocator<int> >&, std::__1::vector<float, std::__1::allocator<float> >&) libjxl/lib/jxl/splines.cc:105:39
    #1 0x14c7cbf in jxl::N_AVX2::(anonymous namespace)::DrawFromPoints(jxl::Image3<float>*, jxl::Rect const&, jxl::Rect const&, jxl::Spline const&, bool, std::__1::vector<std::__1::pair<jxl::Spline::Point, float>, std::__1::allocator<std::__1::pair<jxl::Spline::Point, float> > > const&, float) libjxl/lib/jxl/splines.cc:162:5
    #2 0x14c2de5 in jxl::Status jxl::Splines::Apply<true>(jxl::Image3<float>*, jxl::Rect const&, jxl::Rect const&, jxl::ColorCorrelationMap const&) const libjxl/lib/jxl/splines.cc:507:5
    #3 0x1479729 in jxl::FinalizeImageRect(jxl::Image3<float>*, jxl::Rect const&, std::__1::vector<std::__1::pair<jxl::Plane<float>*, jxl::Rect>, std::__1::allocator<std::__1::pair<jxl::Plane<float>*, jxl::Rect> > > const&, jxl::PassesDecoderState*, unsigned long, jxl::ImageBundle*, jxl::Rect const&) libjxl/lib/jxl/dec_reconstruct.cc:900:5
    #4 0x1481683 in operator() libjxl/lib/jxl/dec_reconstruct.cc:1160:12
```

The point of failure is:

https://github.com/libjxl/libjxl/blob/6946efdf32adfde7cb7d715ae06912da5521dac7/lib/jxl/splines.cc#L105

which appears to be due to `sigma` being calculated as zero here:

https://github.com/libjxl/libjxl/blob/6946efdf32adfde7cb7d715ae06912da5521dac7/lib/jxl/splines.cc#L160-L161
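As an illustration of skipping the draw, a guard could refuse to evaluate the Gaussian whenever sigma is zero or otherwise unusable. The sketch below uses the textbook weight exp(-d^2 / (2 sigma^2)) as an assumption; the exact expression in `splines.cc` may differ, and `GaussianWeight` is a hypothetical helper.

```cpp
#include <cmath>
#include <optional>

// Hypothetical guard around a Gaussian weight exp(-d^2 / (2 * sigma^2)).
// Returns std::nullopt when sigma is zero, NaN, infinite, or so small that
// sigma^2 underflows, so the caller can skip drawing instead of dividing by 0.
std::optional<float> GaussianWeight(float distance, float sigma) {
  const float two_sigma_sq = 2.0f * sigma * sigma;
  if (!(two_sigma_sq > 0.0f) || !std::isfinite(two_sigma_sq)) return std::nullopt;
  return std::exp(-(distance * distance) / two_sigma_sq);
}
```

A caller would move on to the next point when no value is returned; treating a zero sigma as a decoding error (`JXL_FAILURE`) is the other option mentioned in this report.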

I'm happy to help fix this, but am unsure what the right approach is here. Perhaps we should avoid the `DrawGaussian` call when `sigma` is zero, or maybe this is a `JXL_FAILURE` condition? | Possible divide by zero in DrawGaussian function of splines.cc | https://api.github.com/repos/libjxl/libjxl/issues/129/comments | 11 | 2021-06-09T08:23:49Z | 2021-08-20T18:39:56Z | https://github.com/libjxl/libjxl/issues/129 | 915,932,026 | 129 |

[

"libjxl",

"libjxl"

] | **Is your feature request related to a problem? Please describe.**

The current settings are not optimal

**Describe the solution you'd like**

Add `-I 0 -P 0 --palette=0` by default when `--lossy-palette` is enabled and if they are not specified
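A sketch of how such defaults could be applied only when the user has not set the options explicitly. `EncodeFlags` and `ApplyLossyPaletteDefaults` are hypothetical, and the field types are assumptions rather than cjxl's actual flag parsing.

```cpp
#include <optional>

// Hypothetical subset of cjxl flags; std::nullopt means the user did not
// pass the flag on the command line.
struct EncodeFlags {
  bool lossy_palette = false;       // --lossy-palette
  std::optional<float> iterations;  // -I
  std::optional<int> predictor;     // -P
  std::optional<int> palette;       // --palette
};

// Applies the proposed defaults (-I 0 -P 0 --palette=0) when --lossy-palette
// is enabled, without overriding values the user set explicitly.
void ApplyLossyPaletteDefaults(EncodeFlags* flags) {
  if (!flags->lossy_palette) return;
  if (!flags->iterations) flags->iterations = 0.0f;
  if (!flags->predictor) flags->predictor = 0;
  if (!flags->palette) flags->palette = 0;
}
```

Using an optional per flag is what makes "not specified" distinguishable from "explicitly set to the default value".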

| Change the default settings for --lossy-palette | https://api.github.com/repos/libjxl/libjxl/issues/119/comments | 2 | 2021-06-07T22:57:41Z | 2025-04-27T03:11:52Z | https://github.com/libjxl/libjxl/issues/119 | 914,021,898 | 119 |

[

"libjxl",

"libjxl"

] | Dear all,

Can anyone tell me which class/code I should change in order to dump (write to a file) the image predicted by JPEG-XL in modular mode for lossless compression?
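Independently of where this lives in libjxl, the idea can be illustrated with a standalone sketch that computes a clamped-gradient prediction (N + W - NW clamped to [min(N, W), max(N, W)], similar in spirit to the Gradient predictor available in modular mode) for an 8-bit grayscale image and writes it as a PGM. The edge handling and all names here are assumptions; libjxl's predictors work per channel on its own image types and offer many more modes.

```cpp
#include <algorithm>
#include <cstddef>
#include <cstdint>
#include <fstream>
#include <string>
#include <vector>

// Clamped gradient: N + W - NW, clamped to [min(N, W), max(N, W)].
int ClampedGradient(int n, int w, int nw) {
  return std::clamp(n + w - nw, std::min(n, w), std::max(n, w));
}

// Predicts each pixel of a row-major 8-bit grayscale image from its causal
// neighbors. Assumed edge handling: a missing W falls back to N (or 0 at the
// origin), a missing N falls back to W, and a missing NW falls back to W.
std::vector<std::uint8_t> PredictImage(const std::vector<std::uint8_t>& img,
                                       std::size_t width, std::size_t height) {
  auto at = [&](std::size_t px, std::size_t py) {
    return static_cast<int>(img[py * width + px]);
  };
  std::vector<std::uint8_t> pred(img.size());
  for (std::size_t y = 0; y < height; ++y) {
    for (std::size_t x = 0; x < width; ++x) {
      const int w = x > 0 ? at(x - 1, y) : (y > 0 ? at(x, y - 1) : 0);
      const int n = y > 0 ? at(x, y - 1) : w;
      const int nw = (x > 0 && y > 0) ? at(x - 1, y - 1) : w;
      // The clamp keeps the result within [0, 255], so the cast is lossless.
      pred[y * width + x] = static_cast<std::uint8_t>(ClampedGradient(n, w, nw));
    }
  }
  return pred;
}

// Writes an 8-bit grayscale image as binary PGM (P5) for inspection.
bool WritePgm(const std::string& path, const std::vector<std::uint8_t>& img,
              std::size_t width, std::size_t height) {
  std::ofstream out(path, std::ios::binary);
  out << "P5\n" << width << ' ' << height << "\n255\n";
  out.write(reinterpret_cast<const char*>(img.data()),
            static_cast<std::streamsize>(img.size()));
  return static_cast<bool>(out);
}
```

Writing `img[i] - pred[i]` per pixel instead would give the residuals that a predictive coder entropy-codes.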

Kind regards,

F | Dump image prediction | https://api.github.com/repos/libjxl/libjxl/issues/116/comments | 7 | 2021-06-07T14:55:19Z | 2021-06-09T12:22:35Z | https://github.com/libjxl/libjxl/issues/116 | 913,642,229 | 116 |

[

"libjxl",

"libjxl"

] | Hello,

Can someone help with building cjxl and djxl for macOS? (I managed to build a Debian version with the advanced guide for Debian.)

Maybe a documentation page on building for different platforms like macOS, iOS, and Android could be added at some point?

Thank you

| How to build for mac os | https://api.github.com/repos/libjxl/libjxl/issues/115/comments | 9 | 2021-06-07T05:57:22Z | 2022-03-29T10:27:45Z | https://github.com/libjxl/libjxl/issues/115 | 913,129,113 | 115 |

[

"libjxl",

"libjxl"

] | It would be great if the plugin showed a dialog with encode options before actual saving.

The options could be: Distance, Quality, Effort, Progressive (corresponding to the options in `cjxl -h`).

A brief explanation of the options could be there as well.

(The plugin can't easily decide by itself whether to use lossy or lossless encoding the way cjxl does, because its input is pixels, not an image file format.) | GIMP plugin: Add encode options dialog | https://api.github.com/repos/libjxl/libjxl/issues/100/comments | 0 | 2021-06-04T06:00:07Z | 2021-08-25T08:54:14Z | https://github.com/libjxl/libjxl/issues/100 | 911,149,090 | 100 |

[

"libjxl",

"libjxl"

] | **Describe the bug**

When a container is used while losslessly transcoding JPEG to JPEG XL, the file size reported by cjxl is smaller than the one reported by the filesystem. The size reported by cjxl also stays the same whether or not a container is used, whereas the filesystem shows a difference.

**To Reproduce**

(on Linux and PowerShell)

1. `cjxl file.jpg file.jxl --strip; ls -l file.jxl`

Without a container, the file sizes reported by `cjxl` and `ls -l` are the same...

2. `cjxl file.jpg file.jxl; ls -l file.jxl`

...but they no longer match if you decide to use a container!

Note how the `cjxl`-reported file size is the same as in step 1.

**Expected behavior**

cjxl should report the file size correctly, including the container overhead.

**Environment**

- cjxl/djxl version string: dfc730a8dd7d94f0ce9ec32573e7c9c7e178fb15 | File size reported by cjxl doesn't take the container cost into account | https://api.github.com/repos/libjxl/libjxl/issues/99/comments | 0 | 2021-06-04T05:28:20Z | 2021-06-14T15:38:21Z | https://github.com/libjxl/libjxl/issues/99 | 911,132,343 | 99 |

[

"libjxl",

"libjxl"

] | **Describe the bug**

When `-Wp,-D_GLIBCXX_ASSERTIONS` is added to CXXFLAGS, many tests fail because their processes abort unexpectedly. In my tests exactly 79 tests fail; a list of them, compiled by another user, is here: https://aur.archlinux.org/pkgbase/libjxl/#comment-811172

**To Reproduce**

With the latest release, add `-Wp,-D_GLIBCXX_ASSERTIONS` to CXXFLAGS. Try to build and run tests with cmake, e.g.

```
cmake --build . -- -j8
cmake --build . -- test
```

**Expected behavior**

Tests complete successfully.

**Environment**

- OS: Arch Linux

- Compiler version: clang 11.0.1

- CPU type: x86_64

- cjxl/djxl version string: [v0.3.7 | SIMD supported: SSE4,Scalar]

**Additional context**

Arch Linux recently added this option to its default CXXFLAGS for building packages. Users trying to build libjxl as a package on Arch Linux are likely to run into this problem.

Here is sample output from running one of the tests directly:

```
"/home/adam/Downloads/jxl/libjxl/src/build/lib/tests/butteraugli_test" "--gtest_filter=ButteraugliTest.Distmap" "--gtest_also_run_disabled_tests"
Running main() from /build/gtest/src/googletest-release-1.10.0/googletest/src/gtest_main.cc
Note: Google Test filter = ButteraugliTest.Distmap
[==========] Running 1 test from 1 test suite.
[----------] Global test environment set-up.
[----------] 1 test from ButteraugliTest
[ RUN ] ButteraugliTest.Distmap
/usr/bin/../lib64/gcc/x86_64-pc-linux-gnu/11.1.0/../../../../include/c++/11.1.0/bits/atomic_base.h:268: void std::atomic_flag::clear(std::memory_order): Assertion '__b != memory_order_acq_rel' failed.
zsh: abort (core dumped) "/home/adam/Downloads/jxl/libjxl/src/build/lib/tests/butteraugli_test"
```
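The assertion text shows that some code under test calls `std::atomic_flag::clear` with `std::memory_order_acq_rel`. `clear` is a store, so the C++ standard only allows `relaxed`, `release`, or `seq_cst` there; `consume`, `acquire`, and `acq_rel` are undefined behavior, which `_GLIBCXX_ASSERTIONS` turns into an abort. The following minimal sketch shows valid orders, assuming the flag is used as a simple lock. `SpinLock` is a hypothetical name, not a libjxl type.

```cpp
#include <atomic>

// Hypothetical spinlock showing valid memory orders for std::atomic_flag.
class SpinLock {
 public:
  void lock() {
    // test_and_set is a read-modify-write, so acquire is allowed here.
    while (flag_.test_and_set(std::memory_order_acquire)) {
      // spin until the holder calls unlock()
    }
  }
  bool try_lock() { return !flag_.test_and_set(std::memory_order_acquire); }
  // clear() is a store: release is valid, acq_rel is undefined behavior.
  void unlock() { flag_.clear(std::memory_order_release); }

 private:
  std::atomic_flag flag_ = ATOMIC_FLAG_INIT;
};
```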

Possibly related bug: https://github.com/libjxl/libjxl/issues/64 (this user also had this compiler option enabled and failed to build libjxl) | Many tests fail when built with -Wp,-D_GLIBCXX_ASSERTIONS | https://api.github.com/repos/libjxl/libjxl/issues/98/comments | 2 | 2021-06-03T19:57:03Z | 2021-06-04T03:27:50Z | https://github.com/libjxl/libjxl/issues/98 | 910,809,777 | 98 |

[

"libjxl",

"libjxl"

] | It would be good to add a document that keeps track of software that has jxl support. Such a list serves several purposes:

- thank/acknowledge other projects for integrating jxl support

- point end-users to software that can read/write jxl

- keep track of the adoption status of jxl

- in case of a (security) bug, it's easier to see who might be affected and check if they are updated (in case they use static linking)

Here is a first attempt at making such a list. Please add missing software in the comments! When we have a more or less complete list, I'll make a pull request to add the list as a markdown document.

## Browsers

- Chromium: behind a flag since version 91, tracking bug: https://bugs.chromium.org/p/chromium/issues/detail?id=1178058

- Firefox: behind a flag since version 90, tracking bug: https://bugzilla.mozilla.org/show_bug.cgi?id=1539075

- Safari: not supported, tracking bug: https://bugs.webkit.org/show_bug.cgi?id=208235

- Edge: behind a flag since version 91, start with `.\msedge.exe --enable-features=JXL`

## Image libraries

- ImageMagick: supported since 7.0.10-54 (https://imagemagick.org/)

- libvips: supported since 8.11 (https://libvips.github.io/libvips/)

- Imlib2: https://github.com/alistair7/imlib2-jxl

## OS-level support / UI frameworks / file browser plugins

- Qt / KDE: plugin available: https://github.com/novomesk/qt-jpegxl-image-plugin

- GDK-pixbuf: plugin available in libjxl repo

- gThumb: https://ubuntuhandbook.org/index.php/2021/04/gthumb-3-11-3-adds-jpeg-xl-support/

- macOS viewer/QuickLook plugin: https://github.com/yllan/JXLook

- Windows Imaging Component: https://github.com/mirillis/jpegxl-wic

- Windows thumbnail handler: https://github.com/saschanaz/jxl-winthumb

- OpenMandriva Lx (since 4.3 RC)

## Image editors

- GIMP: plugin available in libjxl repo, no official support, tracking bug: https://gitlab.gnome.org/GNOME/gimp/-/issues/4681

- Photoshop: no plugin available yet, no official support yet

## Image viewers

- XnView: https://www.xnview.com/en/

- ImageGlass: https://imageglass.org/

- Any viewer based on Qt, KDE, GDK-pixbuf, ImageMagick, libvips or imlib2 (see above)

- Qt viewers: gwenview, digiKam, KolourPaint, KPhotoAlbum, LXImage-Qt, qimgv, qView, nomacs, VookiImageViewer

## Online tools

- Squoosh: https://squoosh.app/

- Cloudinary: https://cloudinary.com/blog/cloudinary_supports_jpeg_xl

- MConverter: https://mconverter.eu/ | Add list of applications/projects using libjxl | https://api.github.com/repos/libjxl/libjxl/issues/96/comments | 6 | 2021-06-03T13:58:55Z | 2021-11-21T14:28:55Z | https://github.com/libjxl/libjxl/issues/96 | 910,522,993 | 96 |

[

"libjxl",

"libjxl"

] | **Is your feature request related to a problem? Please describe.**

Attempting to losslessly transcode a jpg to a progressively encoded jxl fails.

When you add `-p` to a lossless transcode, you get the following message:

`Error: progressive lossless JPEG transcode is not yet implemented.`

**Describe the solution you'd like**

A progressively transcoded jxl should be produced.

| Progressive Transcoding | https://api.github.com/repos/libjxl/libjxl/issues/92/comments | 1 | 2021-06-03T00:17:31Z | 2022-03-29T10:26:41Z | https://github.com/libjxl/libjxl/issues/92 | 909,978,904 | 92 |

[

"libjxl",

"libjxl"

] | **Describe the bug**

Attempting to subsample colors causes encoding to fail.

**To Reproduce**

Add `--resampling=2` (or any other valid value other than 1).

An error occurs: `Failed to compress to VarDCT.`

**Expected behavior**

Produce an image.

**Environment**

- OS: Windows 10

- Compiler version: various

- CPU type: x86_64

- cjxl/djxl version string: [v0.3.7 | SIMD supported: AVX, Scalar]

| Subsampling Fails | https://api.github.com/repos/libjxl/libjxl/issues/91/comments | 16 | 2021-06-03T00:07:59Z | 2021-07-09T14:53:08Z | https://github.com/libjxl/libjxl/issues/91 | 909,974,861 | 91 |

[

"libjxl",

"libjxl"

In the case of premultiplied (associated) alpha, `djxl` and other applications ignore that image header field and treat everything as if it were the usual non-premultiplied (unassociated) alpha, as in PNG.

It would make sense to do the same thing here as with orientation: by default, the decoder should return pixels in a normalized form (orientation corrected, unassociated alpha), and applications that want to interpret the `alpha_associated` field themselves and get the raw data would have to explicitly ask the decoder not to normalize.
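The normalization described above comes down to dividing each color channel by alpha. Below is a minimal sketch for a single pixel, assuming float values in [0, 1] and mapping fully transparent pixels to black. `Unpremultiply` is a hypothetical helper, not part of the libjxl API.

```cpp
#include <array>

// Hypothetical helper: converts one RGBA pixel from premultiplied (associated)
// alpha to straight (unassociated) alpha, as used by PNG.
// Channel values are assumed to be floats in [0, 1].
std::array<float, 4> Unpremultiply(const std::array<float, 4>& rgba) {
  const float alpha = rgba[3];
  if (alpha <= 0.0f) {
    // Color is undefined for fully transparent pixels; use black.
    return {0.0f, 0.0f, 0.0f, 0.0f};
  }
  return {rgba[0] / alpha, rgba[1] / alpha, rgba[2] / alpha, alpha};
}
```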

| Premultiplied alpha not rendered correctly | https://api.github.com/repos/libjxl/libjxl/issues/81/comments | 0 | 2021-06-02T07:37:58Z | 2021-06-03T12:59:33Z | https://github.com/libjxl/libjxl/issues/81 | 909,212,792 | 81 |

[

"libjxl",

"libjxl"

The bitstream supports premultiplied alpha, but cjxl currently has no way to choose whether to use it.

Current behavior is that it does whatever the input format does, e.g. non-premultiplied alpha on PNG input and premultiplied alpha on EXR input.

It would be nice to have a flag to control this.

Also, in the non-premultiplied case, it would be good to have an option in the lossless mode to say "I don't care about the invisible pixels", so they can be made black or whatever else is good for compression (in lossy mode this is already done, but in lossless mode there is currently no way to do it, i.e. we now do what `cwebp -exact` does).
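Here is a minimal sketch of the "I don't care about the invisible pixels" option described above. It assumes interleaved, non-premultiplied 8-bit RGBA input and sets the color of every fully transparent pixel to black, which only changes pixels that cannot be seen. `ClearInvisiblePixels` is a hypothetical name, not an existing cjxl function.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical pre-processing step for interleaved, non-premultiplied RGBA8:
// zero the color channels of fully transparent pixels so a lossless encoder
// does not spend bits on invisible color data.
// Returns the number of pixels that were modified.
std::size_t ClearInvisiblePixels(std::vector<std::uint8_t>& rgba) {
  std::size_t changed = 0;
  for (std::size_t i = 0; i + 3 < rgba.size(); i += 4) {
    const bool invisible = rgba[i + 3] == 0;
    const bool has_color = (rgba[i] | rgba[i + 1] | rgba[i + 2]) != 0;
    if (invisible && has_color) {
      rgba[i] = rgba[i + 1] = rgba[i + 2] = 0;
      ++changed;
    }
  }
  return changed;
}
```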

| Add option to cjxl do clear invisible pixels and/or do premultiplied alpha | https://api.github.com/repos/libjxl/libjxl/issues/76/comments | 2 | 2021-06-01T15:29:08Z | 2021-07-26T08:57:40Z | https://github.com/libjxl/libjxl/issues/76 | 908,416,264 | 76 |

[

"libjxl",

"libjxl"

] | **Describe the bug**

cjxl accepts the command-line parameters for lossy Modular and lossy palette in the same command, even though they are incompatible with each other by JPEG XL's design, which results in buggy images.

**To Reproduce**

`cjxl smart_ptr.jpg smart_ptr.jxl -j -m -Q 50 --lossy-palette`

**Expected behavior**

The encoder either ignores one of the parameters or exits with a failure message.

**Screenshots**

**Environment**

- cjxl/djxl version: af6ece2e3b6bdece69cb4ce8f8cb7d630c5d72cb | Disable lossy palette when using lossy modular | https://api.github.com/repos/libjxl/libjxl/issues/75/comments | 0 | 2021-06-01T13:28:45Z | 2021-06-02T18:41:47Z | https://github.com/libjxl/libjxl/issues/75 | 908,294,850 | 75 |

[

"libjxl",

"libjxl"

] | From reading JxlDecoderSetPreviewOutBuffer() in decode.h it's not clear which color space the resulting buffer will have.

My first guess was that it is encoded sRGB for JXL_TYPE_UINT8 and linear sRGB for JXL_TYPE_FLOAT, but it seems to be the color space returned by JxlDecoderGetColorAsEncodedProfile().

=> Could you please add some pointers for the user?

Moved this from gitlab https://gitlab.com/wg1/jpeg-xl/-/issues/243 as suggested.

If at all possible, it would be nice to have a simple output color space option that reduces all the possible return values of JxlDecoderGetColorAsEncodedProfile() to something easy to use. | Decode API: clarify color space of returned pixels | https://api.github.com/repos/libjxl/libjxl/issues/70/comments | 3 | 2021-06-01T07:21:46Z | 2021-11-20T11:34:35Z | https://github.com/libjxl/libjxl/issues/70 | 907,984,205 | 70 |

[

"libjxl",

"libjxl"

] | oss-fuzz might have found a nasty write-after-free bug in git master libjxl. This file:

http://www.rollthepotato.net/~john/.clusterfuzz-testcase-minimized-jpegsave_file_fuzzer-4933665846067200

Generates this ASan error:

```

| ==291946==ERROR: AddressSanitizer: heap-use-after-free on address 0x622000015380 at pc 0x000000525dac bp 0x7fb1ddff69b0 sp 0x7fb1ddff6178

| WRITE of size 32 at 0x622000015380 thread T2

| SCARINESS: 55 (multi-byte-write-heap-use-after-free)

| #0 0x525dab in __asan_memset /src/llvm-project/compiler-rt/lib/asan/asan_interceptors_memintrinsics.cpp:26:3

| #1 0x2db1720 in Upsample<2, 2> libjxl/lib/jxl/dec_upsample.cc:106:3

| #2 0x2db1720 in jxl::N_AVX2::UpsampleRect(unsigned long, float const*, jxl::Plane<float> const&, jxl::Rect const&, jxl::Plane<float>*, jxl::Rect const&, long, unsigned long, float*, unsigned long) libjxl/lib/jxl/dec_upsample.cc:245:7

| #3 0x2ddfce0 in UpsampleRect libjxl/lib/jxl/dec_upsample.cc:336:3

| #4 0x2ddfce0 in jxl::Upsampler::UpsampleRect(jxl::Image3<float> const&, jxl::Rect const&, jxl::Image3<float>*, jxl::Rect const&, long, unsigned long, float*) const libjxl/lib/jxl/dec_upsample.cc:347:5

| #5 0x2d8ed4e in jxl::FinalizeImageRect(jxl::Image3<float>*, jxl::Rect const&, std::__1::vector<std::__1::pair<jxl::Plane<float>*, jxl::Rect>, std::__1::allocator<std::__1::pair<jxl::Plane<float>*, jxl::Rect> > > const&, jxl::PassesDecoderState*, unsigned long, jxl::ImageBundle*, jxl::Rect const&) libjxl/lib/jxl/dec_reconstruct.cc:822:24

| #6 0x2d9ad27 in operator() libjxl/lib/jxl/dec_reconstruct.cc:1048:12

| #7 0x2d9ad27 in jxl::ThreadPool::RunCallState<jxl::FinalizeFrameDecoding(jxl::ImageBundle*, jxl::PassesDecoderState*, jxl::ThreadPool*, bool, bool)::$_9, jxl::FinalizeFrameDecoding(jxl::ImageBundle*, jxl::PassesDecoderState*, jxl::ThreadPool*, bool, bool)::$_10>::CallDataFunc(void*, unsigned int, unsigned long) libjxl/lib/jxl/base/data_parallel.h:88:14

| #8 0x3177779 in RunRange libjxl/lib/threads/thread_parallel_runner_internal.cc:137:7

...

```

<details>

<summary>Full report</summary>

</details> | oss-fuzz reports a possible write-after-free in libjxl | https://api.github.com/repos/libjxl/libjxl/issues/66/comments | 3 | 2021-05-31T18:00:40Z | 2021-06-04T21:40:12Z | https://github.com/libjxl/libjxl/issues/66 | 907,641,254 | 66 |

[

"libjxl",

"libjxl"

] | **Describe the bug**

The build fails on aarch64:

```

In file included from /builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_reconstruct.cc:36:

/builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_xyb-inl.h: In lambda function:

/builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_xyb-inl.h:166:53: note: use '-flax-vector-conversions' to permit conversions between vectors with differing element types or numbers of subparts

166 | Vec128<uint8_t, 16>(vreinterpretq_s16_u8(exp16)))

| ~~~~~~~~~~~~~~~~~~~~^~~~~~~

/builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_xyb-inl.h:166:54: error: cannot convert 'int16x8_t' to 'uint8x16_t'

166 | Vec128<uint8_t, 16>(vreinterpretq_s16_u8(exp16)))

| ^~~~~

| |

| int16x8_t

In file included from /usr/include/hwy/ops/arm_neon-inl.h:18,

from /usr/include/hwy/highway.h:282,

from /builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_reconstruct.cc:26:

/usr/lib/gcc/aarch64-redhat-linux/11/include/arm_neon.h:5281:34: note: initializing argument 1 of 'int16x8_t vreinterpretq_s16_u8(uint8x16_t)'

5281 | vreinterpretq_s16_u8 (uint8x16_t __a)

| ~~~~~~~~~~~^~~

In file included from /builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_reconstruct.cc:36:

/builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_xyb-inl.h:171:54: error: cannot convert 'int16x8_t' to 'uint8x16_t'

171 | Vec128<uint8_t, 16>(vreinterpretq_s16_u8(exp16)))

| ^~~~~

| |

| int16x8_t

In file included from /usr/include/hwy/ops/arm_neon-inl.h:18,

from /usr/include/hwy/highway.h:282,

from /builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_reconstruct.cc:26:

/usr/lib/gcc/aarch64-redhat-linux/11/include/arm_neon.h:5281:34: note: initializing argument 1 of 'int16x8_t vreinterpretq_s16_u8(uint8x16_t)'

5281 | vreinterpretq_s16_u8 (uint8x16_t __a)

| ~~~~~~~~~~~^~~

In file included from /builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_reconstruct.cc:36:

/builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_xyb-inl.h:174:30: error: cannot convert 'uint8x16_t' to 'int16x8_t'

174 | vreinterpretq_u8_s16(pow_low), vreinterpretq_u8_s16(pow_high), 8));

| ^~~~~~~

| |

| uint8x16_t

In file included from /usr/include/hwy/ops/arm_neon-inl.h:18,

from /usr/include/hwy/highway.h:282,

from /builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_reconstruct.cc:26:

/usr/lib/gcc/aarch64-redhat-linux/11/include/arm_neon.h:5631:33: note: initializing argument 1 of 'uint8x16_t vreinterpretq_u8_s16(int16x8_t)'

5631 | vreinterpretq_u8_s16 (int16x8_t __a)

| ~~~~~~~~~~^~~

In file included from /builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_reconstruct.cc:36:

/builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_xyb-inl.h:174:61: error: cannot convert 'uint8x16_t' to 'int16x8_t'

174 | vreinterpretq_u8_s16(pow_low), vreinterpretq_u8_s16(pow_high), 8));

| ^~~~~~~~

| |

| uint8x16_t

In file included from /usr/include/hwy/ops/arm_neon-inl.h:18,

from /usr/include/hwy/highway.h:282,

from /builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_reconstruct.cc:26:

/usr/lib/gcc/aarch64-redhat-linux/11/include/arm_neon.h:5631:33: note: initializing argument 1 of 'uint8x16_t vreinterpretq_u8_s16(int16x8_t)'

5631 | vreinterpretq_u8_s16 (int16x8_t __a)

| ~~~~~~~~~~^~~

In file included from /builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_reconstruct.cc:36:

/builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_xyb-inl.h: In function 'void jxl::N_NEON::{anonymous}::FastXYBTosRGB8(const Image3F&, const jxl::Rect&, const jxl::Rect&, uint8_t*, size_t)':

/builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_xyb-inl.h:296:29: error: cannot convert '__Int16x8_t' to 'uint16x8_t' in initialization

296 | uint16x8_t r = srgb_tf(linear_r16);

| ~~~~~~~^~~~~~~~~~~~

| |

| __Int16x8_t

/builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_xyb-inl.h:297:29: error: cannot convert '__Int16x8_t' to 'uint16x8_t' in initialization

297 | uint16x8_t g = srgb_tf(linear_g16);

| ~~~~~~~^~~~~~~~~~~~

| |

| __Int16x8_t

/builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_xyb-inl.h:298:29: error: cannot convert '__Int16x8_t' to 'uint16x8_t' in initialization

298 | uint16x8_t b = srgb_tf(linear_b16);

| ~~~~~~~^~~~~~~~~~~~

| |

| __Int16x8_t

/builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_xyb-inl.h:301:61: error: cannot convert 'uint16x8_t' to 'int16x8_t'

301 | vqmovun_s16(vrshrq_n_s16(vsubq_s16(r, vshrq_n_s16(r, 8)), 6));

| ^

| |

| uint16x8_t

In file included from /usr/include/hwy/ops/arm_neon-inl.h:18,

from /usr/include/hwy/highway.h:282,

from /builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_reconstruct.cc:26:

/usr/lib/gcc/aarch64-redhat-linux/11/include/arm_neon.h:25724:24: note: initializing argument 1 of 'int16x8_t vshrq_n_s16(int16x8_t, int)'

25724 | vshrq_n_s16 (int16x8_t __a, const int __b)

| ~~~~~~~~~~^~~

In file included from /builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_reconstruct.cc:36:

/builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_xyb-inl.h:303:61: error: cannot convert 'uint16x8_t' to 'int16x8_t'

303 | vqmovun_s16(vrshrq_n_s16(vsubq_s16(g, vshrq_n_s16(g, 8)), 6));

| ^

| |

| uint16x8_t

In file included from /usr/include/hwy/ops/arm_neon-inl.h:18,

from /usr/include/hwy/highway.h:282,

from /builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_reconstruct.cc:26:

/usr/lib/gcc/aarch64-redhat-linux/11/include/arm_neon.h:25724:24: note: initializing argument 1 of 'int16x8_t vshrq_n_s16(int16x8_t, int)'

25724 | vshrq_n_s16 (int16x8_t __a, const int __b)

| ~~~~~~~~~~^~~

In file included from /builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_reconstruct.cc:36:

/builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_xyb-inl.h:305:61: error: cannot convert 'uint16x8_t' to 'int16x8_t'

305 | vqmovun_s16(vrshrq_n_s16(vsubq_s16(b, vshrq_n_s16(b, 8)), 6));

| ^

| |

| uint16x8_t

In file included from /usr/include/hwy/ops/arm_neon-inl.h:18,

from /usr/include/hwy/highway.h:282,

from /builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/lib/jxl/dec_reconstruct.cc:26:

/usr/lib/gcc/aarch64-redhat-linux/11/include/arm_neon.h:25724:24: note: initializing argument 1 of 'int16x8_t vshrq_n_s16(int16x8_t, int)'

25724 | vshrq_n_s16 (int16x8_t __a, const int __b)

| ~~~~~~~~~~^~~

```

**To Reproduce**

```

%cmake -DENABLE_CCACHE=1 \

-DBUILD_TESTING=OFF \

-DINSTALL_GTEST:BOOL=OFF \

-DJPEGXL_ENABLE_BENCHMARK:BOOL=OFF \

-DJPEGXL_ENABLE_PLUGINS:BOOL=ON \

-DJPEGXL_FORCE_SYSTEM_BROTLI:BOOL=ON \

-DJPEGXL_FORCE_SYSTEM_GTEST:BOOL=ON \

-DJPEGXL_FORCE_SYSTEM_HWY:BOOL=ON \

-DJPEGXL_WARNINGS_AS_ERRORS:BOOL=OFF \

-DBUILD_SHARED_LIBS:BOOL=OFF

%cmake_build -- all doc

```

Flags:

```

CFLAGS='-O2 -flto=auto -ffat-lto-objects -fexceptions -g -grecord-gcc-switches -pipe -Wall -Werror=format-security -Wp,-D_FORTIFY_SOURCE=2 -Wp,-D_GLIBCXX_ASSERTIONS -specs=/usr/lib/rpm/redhat/redhat-hardened-cc1 -fstack-protector-strong -specs=/usr/lib/rpm/redhat/redhat-annobin-cc1 -mbranch-protection=standard -fasynchronous-unwind-tables -fstack-clash-protection'

LDFLAGS='-Wl,-z,relro -Wl,--as-needed -Wl,-z,now -specs=/usr/lib/rpm/redhat/redhat-hardened-ld '

```

**Environment**

- OS: Fedora Rawhide

- Compiler version: GCC 11.1.1

- CPU type:

```

Architecture: aarch64

CPU op-mode(s): 32-bit, 64-bit

Byte Order: Little Endian

CPU(s): 5

On-line CPU(s) list: 0-4

Thread(s) per core: 1

Core(s) per socket: 5

Socket(s): 1

NUMA node(s): 1

Vendor ID: APM

Model: 2

Model name: X-Gene

Stepping: 0x3

BogoMIPS: 80.00

NUMA node0 CPU(s): 0-4

Vulnerability Itlb multihit: Not affected

Vulnerability L1tf: Not affected

Vulnerability Mds: Not affected

Vulnerability Meltdown: Mitigation; PTI

Vulnerability Spec store bypass: Vulnerable

Vulnerability Spectre v1: Mitigation; __user pointer sanitization

Vulnerability Spectre v2: Vulnerable

Vulnerability Srbds: Not affected

Vulnerability Tsx async abort: Not affected

Flags: fp asimd evtstrm aes pmull sha1 sha2 crc32 cpuid

```

- libhwy: version 0.12.1

- cjxl/djxl version string: v0.3.7

Full log available at https://koji.fedoraproject.org/koji/taskinfo?taskID=69038231 | Build failure on aarch64 with GCC | https://api.github.com/repos/libjxl/libjxl/issues/64/comments | 5 | 2021-05-31T16:58:34Z | 2021-06-01T15:54:46Z | https://github.com/libjxl/libjxl/issues/64 | 907,613,431 | 64 |

[

"libjxl",

"libjxl"

] | **Describe the bug**

The build fails on armv7hl:

```

In file included from /builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/third_party/skcms/skcms.cc:2071:

/builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/third_party/skcms/src/Transform_inl.h: In function 'baseline::F baseline::F_from_Half(baseline::U16)':

/builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/third_party/skcms/src/Transform_inl.h:158:26: error: 'float16x4_t' was not declared in this scope; did you mean 'bfloat16x4_t'?

158 | return vcvt_f32_f16((float16x4_t)half);

| ^~~~~~~~~~~

| bfloat16x4_t

/builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/third_party/skcms/src/Transform_inl.h:158:12: error: 'vcvt_f32_f16' was not declared in this scope; did you mean 'vcvt_f32_bf16'?

158 | return vcvt_f32_f16((float16x4_t)half);

| ^~~~~~~~~~~~

| vcvt_f32_bf16

/builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/third_party/skcms/src/Transform_inl.h: In function 'baseline::U16 baseline::Half_from_F(baseline::F)':

/builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/third_party/skcms/src/Transform_inl.h:187:17: error: 'vcvt_f16_f32' was not declared in this scope; did you mean 'vcvt_bf16_f32'?

187 | return (U16)vcvt_f16_f32(f);

| ^~~~~~~~~~~~

| vcvt_bf16_f32

gmake[2]: *** [third_party/CMakeFiles/skcms.dir/build.make:79: third_party/CMakeFiles/skcms.dir/skcms/skcms.cc.o] Error 1

gmake[2]: Leaving directory '/builddir/build/BUILD/jpeg-xl-v0.3.7-9e9bce86164dc4d01c39eeeb3404d6aed85137b2/armv7hl-redhat-linux-gnueabi'

gmake[1]: *** [CMakeFiles/Makefile2:345: third_party/CMakeFiles/skcms.dir/all] Error 2

```

**To Reproduce**

```

%cmake -DENABLE_CCACHE=1 \

-DBUILD_TESTING=OFF \

-DINSTALL_GTEST:BOOL=OFF \

-DJPEGXL_ENABLE_BENCHMARK:BOOL=OFF \

-DJPEGXL_ENABLE_PLUGINS:BOOL=ON \

-DJPEGXL_FORCE_SYSTEM_BROTLI:BOOL=ON \

-DJPEGXL_FORCE_SYSTEM_GTEST:BOOL=ON \

-DJPEGXL_FORCE_SYSTEM_HWY:BOOL=ON \

-DJPEGXL_WARNINGS_AS_ERRORS:BOOL=OFF \

-DBUILD_SHARED_LIBS:BOOL=OFF

%cmake_build -- all doc

```

Flags:

```

CFLAGS='-O2 -flto=auto -ffat-lto-objects -fexceptions -g -grecord-gcc-switches -pipe -Wall -Werror=format-security -Wp,-D_FORTIFY_SOURCE=2 -Wp,-D_GLIBCXX_ASSERTIONS -specs=/usr/lib/rpm/redhat/redhat-hardened-cc1 -fstack-protector-strong -specs=/usr/lib/rpm/redhat/redhat-annobin-cc1 -march=armv7-a -mfpu=vfpv3-d16 -mtune=generic-armv7-a -mabi=aapcs-linux -mfloat-abi=hard'

LDFLAGS='-Wl,-z,relro -Wl,--as-needed -Wl,-z,now -specs=/usr/lib/rpm/redhat/redhat-hardened-ld '

```

**Environment**

- OS: Fedora Rawhide

- Compiler version: GCC 11.1.1

- CPU type:

```

CPU info:

Architecture: armv7l

Byte Order: Little Endian

CPU(s): 5

On-line CPU(s) list: 0-4

Thread(s) per core: 1

Core(s) per socket: 5

Socket(s): 1

Vendor ID: APM

Model: 2

Model name: X-Gene

Stepping: 0x3

BogoMIPS: 80.00

Flags: half thumb fastmult vfp edsp neon vfpv3 tls vfpv4 idiva idivt vfpd32 lpae evtstrm aes pmull sha1 sha2 crc32

```

- libhwy: version 0.12.1

- cjxl/djxl version string: v0.3.7

Full log available at https://koji.fedoraproject.org/koji/taskinfo?taskID=69038231 | Build failure on armv7hl with GCC | https://api.github.com/repos/libjxl/libjxl/issues/63/comments | 12 | 2021-05-31T16:55:52Z | 2021-11-21T22:57:43Z | https://github.com/libjxl/libjxl/issues/63 | 907,612,159 | 63 |

[

"libjxl",

"libjxl"

] | **Describe the bug**

We have a mutex in the LCMS `color_management` code that can probably be removed after an LCMS update. See https://github.com/libjxl/libjxl/pull/23#discussion_r641966901 for context. | Remove LCMS mutex | https://api.github.com/repos/libjxl/libjxl/issues/53/comments | 2 | 2021-05-31T12:19:50Z | 2022-03-11T20:11:25Z | https://github.com/libjxl/libjxl/issues/53 | 907,419,968 | 53 |

[

"libjxl",

"libjxl"

] | **Summary**

For GIF and JPEG, libjxl will use lossless mode (GIF) / lossless JPEG transcode (JPEG) by default.

However, if you explicitly ask libjxl to use lossless mode with `-q 100` or `-d 0`, the results are different.

**Steps to reproduce**

* `cjxl -s 3 $infile $outfile`

* `cjxl -s 3 -d 0 $infile $outfile`

* `cjxl -s 3 -q 100 $infile $outfile`

**Observed behavior**

For the JPEG transcode, using no parameter or `-q 100` produces 280305, but `-d 0` produces 280246.

For the GIF, using no parameter produces 47431412, while `-d 0` or `-q 100` produces 26518874.

**Expected behavior**

The output files should be the same.

**Test files**

* https://www.ganganonline.com/contents/slime/img/slime_7cover.gif

* https://jpegxl.info/fallbacklogo.jpg

**Environment**

- OS: Linux

- Compiler version: clang

- CPU type: x86_64

- cjxl/djxl version string: `cjxl [v0.3.7 | SIMD supported: SSE4,Scalar]`

**Additional context**

For the test JPEG file, the difference is small, but for the GIF file, the difference is very large.

| GIF / JPEG -> Lossless JXL: Different results with and without `-q 100` / `-d 0` | https://api.github.com/repos/libjxl/libjxl/issues/44/comments | 0 | 2021-05-29T08:37:37Z | 2021-05-31T12:42:03Z | https://github.com/libjxl/libjxl/issues/44 | 906,419,615 | 44 |

[

"libjxl",

"libjxl"

] | **Describe the bug**

On [GitLab releases page](https://gitlab.com/wg1/jpeg-xl/-/releases), there is changelog (and notes) for every release, but [GitHub releases page](https://github.com/libjxl/libjxl/releases) does not.

**To Reproduce**

Go to the GitHub releases page and click "..." to expand the details of 0.3.7. It only contains:

> Update JPEG-XL with latest changes.

> This includes all changes up to 2021-03-29 10:37:25 +0000.

**Expected behavior**

For 0.3.7:

> * Bump JPEG XL version to 0.3.7.

> * Fix a rounding issue in 8-bit decoding.

>

> Note: This release is for evaluation purposes and may contain bugs, including security bugs, that will not be individually documented when fixed. Always prefer to use the latest release. Please provide feedback and report bugs here. | Releases page has no changelog | https://api.github.com/repos/libjxl/libjxl/issues/43/comments | 1 | 2021-05-29T02:26:52Z | 2021-05-31T14:06:22Z | https://github.com/libjxl/libjxl/issues/43 | 906,301,512 | 43 |

[

"libjxl",

"libjxl"

] | **Describe the solution you'd like**

I hope `cjxl` can offer presets for different types of source material, so that average users can have optimized outputs (in size and in image quality), without the need to know / try the advanced parameters.

It would be best if the presets applied to both lossy and mathematically lossless modes.

**Additional context**

`cwebp` has `default, photo, picture, drawing, icon, text` presets.

| Presets for different types of source material | https://api.github.com/repos/libjxl/libjxl/issues/42/comments | 0 | 2021-05-29T02:14:40Z | 2022-03-29T10:28:15Z | https://github.com/libjxl/libjxl/issues/42 | 906,297,162 | 42 |

[

"libjxl",

"libjxl"

] | Right now, this repo has no tags, but the one on GitLab does: https://gitlab.com/wg1/jpeg-xl/-/tags | Missing git tags from gitlab | https://api.github.com/repos/libjxl/libjxl/issues/40/comments | 1 | 2021-05-27T21:17:25Z | 2021-05-27T23:03:43Z | https://github.com/libjxl/libjxl/issues/40 | 904,199,293 | 40 |

[

"libjxl",

"libjxl"

] | I think oss-fuzz has found an assert failure in libjxl. This file:

http://www.rollthepotato.net/~john/clusterfuzz-testcase-minimized-pngsave_buffer_fuzzer-6695474309496832.fuzz

Triggers this:

```

| /src/libjxl/lib/jxl/image_ops.h:25: JXL_ASSERT: SameSize(from, *to)

| AddressSanitizer:DEADLYSIGNAL

| =================================================================

| ==484==ERROR: AddressSanitizer: ILL on unknown address 0x00000250cd19 (pc 0x00000250cd19 bp 0x7f7603b124d0 sp 0x7f7603b124d0 T4)

| #0 0x250cd19 in jxl::Abort() libjxl/lib/jxl/base/status.cc:42:3

| #1 0x28dd2ff in CopyImageTo<float> libjxl/lib/jxl/image_ops.h:25:3

| #2 0x28dd2ff in jxl::ImageBlender::PrepareBlending(jxl::PassesDecoderState*, jxl::FrameOrigin, unsigned long, unsigned long, jxl::ColorEncoding const&, jxl::ImageBundle*) libjxl/lib/jxl/blending.cc:130:9

| #3 0x2d5931d in jxl::FinalizeFrameDecoding(jxl::ImageBundle*, jxl::PassesDecoderState*, jxl::ThreadPool*, bool, bool) libjxl/lib/jxl/dec_reconstruct.cc:1081:5

| #4 0x2bc9b4a in jxl::FrameDecoder::Flush() libjxl/lib/jxl/dec_frame.cc:817:3

| #5 0x2bbe2b2 in jxl::FrameDecoder::FinalizeFrame() libjxl/lib/jxl/dec_frame.cc:849:3

| #6 0x2537b44 in jxl::(anonymous namespace)::JxlDecoderProcessInternal(JxlDecoderStruct*, unsigned char const*, unsigned long) libjxl/lib/jxl/decode.cc:1155:30

| #7 0x2532671 in JxlDecoderProcessInput libjxl/lib/jxl/decode.cc:1668:14

...

```

With this version of libjxl: https://gitlab.com/wg1/jpeg-xl/-/compare/040eae8105b61b312a67791213091103f4c0d034...30ea86ab4c1f1b98c21967a2e3d72a51fe77e454 | oss-fuzz reports an assert failure in libjxl | https://api.github.com/repos/libjxl/libjxl/issues/37/comments | 0 | 2021-05-27T15:30:40Z | 2021-06-10T17:04:35Z | https://github.com/libjxl/libjxl/issues/37 | 903,914,222 | 37 |

[

"libjxl",

"libjxl"

] | Hi, this image:

www.rollthepotato.net/~john/clusterfuzz-testcase-minimized-pngsave_buffer_fuzzer-5360982477111296

Produces this error in oss-fuzz:

```

| /src/jpeg-xl/lib/jxl/dec_modular.cc:471:47: runtime error: shift exponent -5 is negative

| #0 0x1434953 in jxl::ModularFrameDecoder::FinalizeDecoding(jxl::PassesDecoderState*, jxl::ThreadPool*, jxl::ImageBundle*) jpeg-xl/lib/jxl/dec_modular.cc:0

| #1 0x1387e5e in jxl::FrameDecoder::Flush() jpeg-xl/lib/jxl/dec_frame.cc:814:3

| #2 0x137f603 in jxl::FrameDecoder::FinalizeFrame() jpeg-xl/lib/jxl/dec_frame.cc:849:3

| #3 0x104eadf in jxl::(anonymous namespace)::JxlDecoderProcessInternal(JxlDecoderStruct*, unsigned char const*, unsigned long) jpeg-xl/lib/jxl/decode.cc:1155:30

| #4 0x104c287 in JxlDecoderProcessInput jpeg-xl/lib/jxl/decode.cc:1668:14

...

```

Not very important, but it should probably be fixed. | oss-fuzz has found an undefined shift (by -5) in libjxl | https://api.github.com/repos/libjxl/libjxl/issues/29/comments | 4 | 2021-05-27T09:06:11Z | 2021-05-31T11:01:44Z | https://github.com/libjxl/libjxl/issues/29 | 903,439,945 | 29 |

[

"libjxl",

"libjxl"

] | Mozilla uses clang-5.0 for some builds, but libjxl fails to compile with it in C++17 mode (C++11 mode is fine), apparently because of a clang-5 compiler bug.

We should add a check that libjxl compiles in release mode with clang-5 to not regress here.

Details: https://gitlab.com/wg1/jpeg-xl/-/issues/227 @saschanaz FYI | Add check that libjxl builds with clang-5.0 | https://api.github.com/repos/libjxl/libjxl/issues/28/comments | 5 | 2021-05-26T22:04:42Z | 2021-11-22T16:41:24Z | https://github.com/libjxl/libjxl/issues/28 | 902,963,008 | 28 |

[

"libjxl",

"libjxl"

] | hello,

I'm on aarch64 and am trying to build a standalone copy of jpeg-xl to detect breakages. I have already done this with one of your dependencies, thirdparty/highway: https://github.com/google/highway/issues/93

The fix for aarch64 was published in v0.12.1, so can you please pull in the new version with your submodule magic?

thanks :-) | please update thirdparty/highway to v0.12.1 to unbreak aarch64 and possibly armv7 | https://api.github.com/repos/libjxl/libjxl/issues/21/comments | 10 | 2021-05-26T13:35:10Z | 2021-06-25T22:27:41Z | https://github.com/libjxl/libjxl/issues/21 | 902,403,536 | 21 |

[

"libjxl",

"libjxl"

] | Hi

I used a test file from here...

`$ wget http://www.r0k.us/graphics/kodak/kodak/kodim20.png`

Create a jxl file with speed 9...

`$ cjxl kodim20.png kodim20.jxl -s 9 -d 3`

Create a progressive jxl file with speed 9...

`$ cjxl kodim20.png prog_kodim20.jxl -s 9 -d 3 -p`

Compare the file sizes...

`$ ls -l kodim20.jxl | awk '{print $5}' && ls -l prog_kodim20.jxl | awk '{print $5}'`

**24893**

**72467**

The progressive file is much larger than the non-progressive file: 72467 bytes versus 24893 bytes, roughly 2.9 times the size.
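The size comparison can also be scripted without `ls`/`awk`. Below is a minimal Python sketch. It assumes the two `.jxl` outputs sit in the current directory, and `compare_sizes` is a hypothetical helper name:

```python
import os
from typing import Tuple


def compare_sizes(baseline_path: str, candidate_path: str) -> Tuple[int, int, float]:
    """Return (baseline bytes, candidate bytes, candidate/baseline ratio)."""
    baseline = os.path.getsize(baseline_path)
    candidate = os.path.getsize(candidate_path)
    # The ratio says how many times larger the candidate file is than the baseline.
    return baseline, candidate, candidate / baseline
```

Usage: `compare_sizes("kodim20.jxl", "prog_kodim20.jxl")`. With the sizes reported here, the ratio comes out at about 2.91.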

I'm using `cjxl v0.3.7-30ea86ab` | Big file size with progressive + tortoise. | https://api.github.com/repos/libjxl/libjxl/issues/17/comments | 10 | 2021-05-26T10:41:05Z | 2021-05-30T13:15:05Z | https://github.com/libjxl/libjxl/issues/17 | 902,183,177 | 17 |

[

"libjxl",

"libjxl"

] | Thanks for starting the move to full open source!

However, to understand the codebase better, it would be hugely beneficial to have the full git commit history available, and not the squashed ones from the current public GitLab repo. | Full commit history | https://api.github.com/repos/libjxl/libjxl/issues/8/comments | 5 | 2021-05-26T07:48:49Z | 2021-05-26T16:43:29Z | https://github.com/libjxl/libjxl/issues/8 | 901,928,577 | 8 |

[

"libjxl",

"libjxl"

] | If the input files are JPEGs, `cjxl` creates `jpe????.tmp` files in the system `%temp%` folder and doesn't delete them after the encoding is finished.
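To check whether leftover files are piling up, the diagnostic sketch below lists files that match the `jpe????.tmp` pattern from this report. It assumes the files land in the directory Python reports as the system temp folder (`%TEMP%` on Windows). `find_leftover_jpeg_temps` is a hypothetical helper and not part of cjxl:

```python
import glob
import os
import tempfile
from typing import List, Optional


def find_leftover_jpeg_temps(directory: Optional[str] = None) -> List[str]:
    """Return sorted paths matching jpe????.tmp in `directory`.

    Defaults to the system temp directory (tempfile.gettempdir()).
    """
    directory = directory or tempfile.gettempdir()
    # Escape the directory so characters like '[' in the path are taken literally;
    # in the pattern, each '?' matches exactly one character, mirroring jpe????.tmp.
    return sorted(glob.glob(os.path.join(glob.escape(directory), "jpe????.tmp")))
```

Running it before and after a `cjxl` run on a JPEG input should show whether new files appear.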

_Windows 10 20H2 x64, cjxl v0.3.7-12-g04267a8_ | cjxl - temp JPEG files remain | https://api.github.com/repos/libjxl/libjxl/issues/6/comments | 18 | 2021-05-26T03:41:40Z | 2021-07-09T06:03:27Z | https://github.com/libjxl/libjxl/issues/6 | 901,705,435 | 6 |

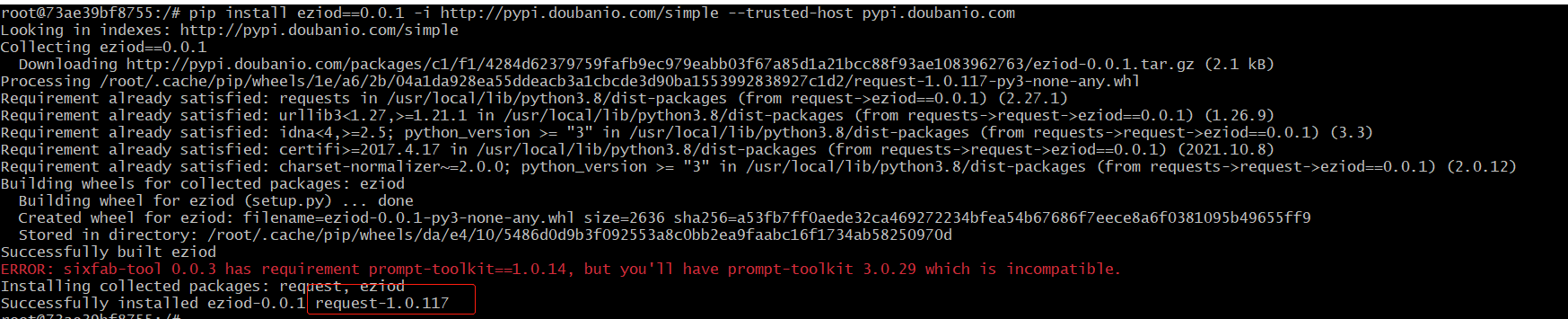

[

"alexw994",

"eziod"

We found a malicious backdoor in version 0.0.1 of this project; the backdoor is delivered through the `request` package. Even though PyPI removed the `request` package, many mirror sites did not completely delete it, so it can still be installed. When using `pip install eziod==0.0.1 -i http://pypi.doubanio.com/simple --trusted-host pypi.doubanio.com`, the malicious `request` package is installed successfully.
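A quick local check for the two distributions named in this report can be sketched with the standard library's `importlib.metadata`. This only reads installed package metadata and is not a malware scanner. A hit means the package should be investigated, and a miss does not prove the environment is clean. The helper names are hypothetical:

```python
from importlib import metadata
from typing import Dict, Iterable, Optional


def installed_version(dist_name: str) -> Optional[str]:
    """Return the installed version of a distribution, or None if it is absent."""
    try:
        return metadata.version(dist_name)
    except metadata.PackageNotFoundError:
        return None


def audit(names: Iterable[str] = ("eziod", "request")) -> Dict[str, Optional[str]]:
    """Map each distribution name to its installed version (None = not installed)."""
    return {name: installed_version(name) for name in names}
```

Note that `request` (singular) is the name given in the report. It is a different distribution from the widely used `requests`.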

Repair suggestion: delete version 0.0.1 from PyPI | code execution backdoor | https://api.github.com/repos/alexw994/eziod/issues/1/comments | 0 | 2022-06-29T07:05:34Z | 2022-06-29T07:05:34Z | https://github.com/alexw994/eziod/issues/1 | 1,288,265,493 | 1 |

[

"FreeOpcUa",

"opcua-asyncio"

] | Hello,

I am importing an XML file with asyncua version 1.1.0. The XML contains many elements as shown below.

```xml
<Value>
  <uax:ExtensionObject>
    <uax:TypeId>
      <uax:Identifier>ns=2;i=1005</uax:Identifier>
    </uax:TypeId>
    <uax:Body>
      <uax:ByteString>ADKJAKKAGSKGKDUWGKW==</uax:ByteString>
    </uax:Body>
  </uax:ExtensionObject>
</Value>
```
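This does not fix the asyncua import. It is a standard-library sketch for inspecting what such an ExtensionObject carries outside asyncua, for example to confirm that the body decodes as base64. Assumptions:

- The `uax` prefix is bound to the OPC UA types namespace `http://opcfoundation.org/UA/2008/02/Types.xsd`, as is typical in NodeSet2 files.
- `read_extension_object` is a hypothetical helper.
- Any sample value used with it is made up.

```python
import base64
import xml.etree.ElementTree as ET
from typing import Tuple

# Assumed binding of the "uax" prefix (standard OPC UA types namespace).
UAX_NS = {"uax": "http://opcfoundation.org/UA/2008/02/Types.xsd"}


def read_extension_object(value_xml: str) -> Tuple[str, bytes]:
    """Return (TypeId identifier, decoded Body bytes) from a <Value> element."""
    root = ET.fromstring(value_xml)
    ext = root.find("uax:ExtensionObject", UAX_NS)
    if ext is None:
        raise ValueError("no uax:ExtensionObject found")
    type_id = ext.findtext("uax:TypeId/uax:Identifier", default="", namespaces=UAX_NS)
    body = ext.findtext("uax:Body/uax:ByteString", default="", namespaces=UAX_NS)
    # In the OPC UA XML encoding, ByteString content is base64 text.
    return type_id.strip(), base64.b64decode(body.strip())
```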

The import fails with the error `('Error val should be a list, this is a python-asyncua bug', 'ByteString', <class 'str'>, 'ADKJAKKAGSKGKDUWGKW==')`.

How can this be solved?

Note: Only asyncua 1.1.0 can be installed on my system. | Import XML with ByteString in ExtensionObject Error | https://api.github.com/repos/FreeOpcUa/opcua-asyncio/issues/1846/comments | 0 | 2025-06-23T14:11:28Z | 2025-06-23T14:11:28Z | https://github.com/FreeOpcUa/opcua-asyncio/issues/1846 | 3,168,355,898 | 1,846 |

[

"FreeOpcUa",

"opcua-asyncio"

] | **Describe the bug** <br />

Neither UaExpert nor TwinCat shows any names for the parameters of methods registered with asyncua. This causes major confusion, especially when the methods are imported into something like TwinCat, where you can't make heads or tails of which parameter is which.

**To Reproduce**<br />

Run `examples/server-methods.py`. Produces the screenshot above for `func_async`.

**Expected behavior**<br />

Parameters should have names, as with `Server.RequestServerStateChange` in the OPC tree:

Or `Server.GetMonitoredItems` which also has named Output Arguments:

**Screenshots**<br />

One of my methods as it is imported into TwinCat 3 4026:

**Version**<br />

Python-Version: 3.12.11<br />

opcua-asyncio Version (e.g. master branch, 0.9): 1.1.6

| Unable to set parameter names on methods | https://api.github.com/repos/FreeOpcUa/opcua-asyncio/issues/1845/comments | 3 | 2025-06-20T19:51:03Z | 2025-06-21T21:22:34Z | https://github.com/FreeOpcUa/opcua-asyncio/issues/1845 | 3,164,243,901 | 1,845 |

[

"FreeOpcUa",

"opcua-asyncio"

] |
```python
async def task(loop):
    url = "opc.tcp://myserver:4840"
    try:
        client = Client(url=url)
        client.set_user("User")
        client.set_password("Test")
        await client.connect()
        print("connected to OPC UA Server")
    except Exception:
        _logger.exception("error")
    finally:
        await client.disconnect()
```

The URL and credentials are dummies; I am just using the standard [auth with no security example](https://github.com/FreeOpcUa/opcua-asyncio/blob/master/examples/client-minimal-auth.py). I am able to connect to the server using UaExpert and also using .NET OPC UA.

I get the following error:

```
INFO:asyncua.client.client:connect
INFO:asyncua.client.ua_client.UaClient:opening connection
INFO:asyncua.uaprotocol:updating client limits to: TransportLimits(max_recv_buffer=65535, max_send_buffer=65535, max_chunk_count=0, max_message_size=0)
INFO:asyncua.client.ua_client.UASocketProtocol:open_secure_channel
INFO:asyncua.client.ua_client.UaClient:create_session
INFO:asyncua.client.ua_client.UASocketProtocol:close_secure_channel
INFO:asyncua.client.ua_client.UASocketProtocol:Request to close socket received
ERROR:asyncua:error
Traceback (most recent call last):
  File "/Users/lalitm/Work/Python/opcaua/client_connect.py", line 17, in task
    await client.connect()
  File "/Users/lalitm/Work/Python/opcaua/.venv/lib/python3.12/site-packages/asyncua/client/client.py", line 321, in connect
    await self.create_session()
  File "/Users/lalitm/Work/Python/opcaua/.venv/lib/python3.12/site-packages/asyncua/client/client.py", line 510, in create_session
    response = await self.uaclient.create_session(params)
               ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/Users/lalitm/Work/Python/opcaua/.venv/lib/python3.12/site-packages/asyncua/client/ua_client.py", line 367, in create_session
    response.ResponseHeader.ServiceResult.check()
  File "/Users/lalitm/Work/Python/opcaua/.venv/lib/python3.12/site-packages/asyncua/ua/uatypes.py", line 383, in check
    raise UaStatusCodeError(self.value)
asyncua.ua.uaerrors._auto.BadCertificateInvalid: The certificate provided as a parameter is not valid.(BadCertificateInvalid)
INFO:asyncua.client.client:disconnect
INFO:asyncua.client.ua_client.UaClient:close_session
WARNING:asyncua.client.ua_client.UaClient:close_session but connection wasn't established
WARNING:asyncua.client.ua_client.UaClient:close_secure_channel was called but connection is closed
INFO:asyncua.client.ua_client.UASocketProtocol:Socket has closed connection
``` | Cannot connect for server without security | https://api.github.com/repos/FreeOpcUa/opcua-asyncio/issues/1844/comments | 6 | 2025-06-18T12:32:13Z | 2025-06-19T04:20:24Z | https://github.com/FreeOpcUa/opcua-asyncio/issues/1844 | 3,156,700,786 | 1,844 |

[

"FreeOpcUa",

"opcua-asyncio"

] | Hi, I was looking for support for certificate chains on the client side, both for user authentication and for the secure channel, but could not find any direct mention of it.

Is this feature implemented? If not, is it planned or at least known about? Are there any implementations or PoCs?

Thank you in advance for the answers. | Client certificate chain support | https://api.github.com/repos/FreeOpcUa/opcua-asyncio/issues/1843/comments | 0 | 2025-06-12T12:30:41Z | 2025-06-12T12:30:41Z | https://github.com/FreeOpcUa/opcua-asyncio/issues/1843 | 3,140,109,606 | 1,843 |

[

"FreeOpcUa",

"opcua-asyncio"

] | **Describe the bug** <br />

On several devices I get this warning:

_Requested session timeout to be 3600000ms, got 30000ms instead_

This happens while connecting this way:

```python

async with Client(url=opc_url) as client:

...

```

Setting

`client.session_timeout = 30000`

in this context has no effect, of course.
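For context: in OPC UA, the client sends a requested session timeout in CreateSession, and the server answers with a revised timeout. The revised value is the one that actually applies. The hypothetical sketch below is not asyncua's code; it only mirrors the warning quoted above. Assuming that behavior, a server that caps sessions at 30000 ms would presumably revise any larger request down, whatever value the client asks for.

```python
import logging

_logger = logging.getLogger(__name__)


def effective_session_timeout(requested_ms: float, revised_ms: float) -> float:
    """Return the session timeout that actually applies: the server's revised value."""
    if revised_ms != requested_ms:
        # Mirrors the wording of the warning quoted in this report.
        _logger.warning(
            "Requested session timeout to be %dms, got %dms instead",
            requested_ms,
            revised_ms,
        )
    return revised_ms
```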

**To Reproduce**<br />

Steps to reproduce the behavior incl code:

Connecting this way to a Siemens S7-1500 device:

```python

from asyncua import Client