issue_owner_repo listlengths 2 2 | issue_body stringlengths 0 262k ⌀ | issue_title stringlengths 1 1.02k | issue_comments_url stringlengths 53 116 | issue_comments_count int64 0 2.49k | issue_created_at stringdate 1999-03-17 02:06:42 2025-06-23 11:41:49 | issue_updated_at stringdate 2000-02-10 06:43:57 2025-06-23 11:43:00 | issue_html_url stringlengths 34 97 | issue_github_id int64 132 3.17B | issue_number int64 1 215k |

|---|---|---|---|---|---|---|---|---|---|

[

"opensearch-project",

"data-prepper"

] | ## CVE-2021-45105 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>log4j-core-2.11.2.jar</b></p></summary>

<p>The Apache Log4j Implementation</p>

<p>Path to dependency file: /performance-test/build.gradle</p>

<p>Path to vulnerable library: /home/wss-scanner/.gradle/caches/modules-2/files-2.1/org.apache.logging.log4j/log4j-core/2.11.2/6c2fb3f5b7cd27504726aef1b674b542a0c9cf53/log4j-core-2.11.2.jar</p>

<p>

Dependency Hierarchy:

- zinc_2.12-1.3.5.jar (Root Library)

- zinc-compile-core_2.12-1.3.5.jar

- util-logging_2.12-1.3.0.jar

- :x: **log4j-core-2.11.2.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/opensearch-project/data-prepper/commit/022b333dc9be3548b8eb8bb73d0337fd26425056">022b333dc9be3548b8eb8bb73d0337fd26425056</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Apache Log4j2 versions 2.0-alpha1 through 2.16.0 (excluding 2.12.3 and 2.3.1) did not protect from uncontrolled recursion from self-referential lookups. This allows an attacker with control over Thread Context Map data to cause a denial of service when a crafted string is interpreted. This issue was fixed in Log4j 2.17.0, 2.12.3, and 2.3.1.

<p>Publish Date: 2021-12-18

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-45105>CVE-2021-45105</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.9</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://logging.apache.org/log4j/2.x/security.html">https://logging.apache.org/log4j/2.x/security.html</a></p>

<p>Release Date: 2021-12-18</p>

<p>Fix Resolution: org.apache.logging.log4j:log4j-core:2.3.1,2.12.3,2.17.0;org.ops4j.pax.logging:pax-logging-log4j2:1.11.10,2.0.11</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"org.apache.logging.log4j","packageName":"log4j-core","packageVersion":"2.11.2","packageFilePaths":["/performance-test/build.gradle"],"isTransitiveDependency":true,"dependencyTree":"org.scala-sbt:zinc_2.12:1.3.5;org.scala-sbt:zinc-compile-core_2.12:1.3.5;org.scala-sbt:util-logging_2.12:1.3.0;org.apache.logging.log4j:log4j-core:2.11.2","isMinimumFixVersionAvailable":true,"minimumFixVersion":"org.apache.logging.log4j:log4j-core:2.3.1,2.12.3,2.17.0;org.ops4j.pax.logging:pax-logging-log4j2:1.11.10,2.0.11","isBinary":false}],"baseBranches":["main"],"vulnerabilityIdentifier":"CVE-2021-45105","vulnerabilityDetails":"Apache Log4j2 versions 2.0-alpha1 through 2.16.0 (excluding 2.12.3 and 2.3.1) did not protect from uncontrolled recursion from self-referential lookups. This allows an attacker with control over Thread Context Map data to cause a denial of service when a crafted string is interpreted. This issue was fixed in Log4j 2.17.0, 2.12.3, and 2.3.1.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-45105","cvss3Severity":"medium","cvss3Score":"5.9","cvss3Metrics":{"A":"High","AC":"High","PR":"None","S":"Unchanged","C":"None","UI":"None","AV":"Network","I":"None"},"extraData":{}}</REMEDIATE> --> | CVE-2021-45105 (Medium) detected in log4j-core-2.11.2.jar - autoclosed | https://api.github.com/repos/opensearch-project/data-prepper/issues/996/comments | 1 | 2022-02-07T19:39:31Z | 2022-02-09T16:04:18Z | https://github.com/opensearch-project/data-prepper/issues/996 | 1,126,417,915 | 996 |

[

"opensearch-project",

"data-prepper"

] | ## CVE-2021-43797 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>netty-codec-http-4.1.68.Final.jar</b></p></summary>

<p></p>

<p>Library home page: <a href="https://netty.io/">https://netty.io/</a></p>

<p>Path to dependency file: /data-prepper-plugins/otel-trace-raw-prepper/build.gradle</p>

<p>Path to vulnerable library: /home/wss-scanner/.gradle/caches/modules-2/files-2.1/io.netty/netty-codec-http/4.1.68.Final/fc2e0526ceba7fe1d0ca1adfedc301afcc47bc51/netty-codec-http-4.1.68.Final.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/io.netty/netty-codec-http/4.1.68.Final/fc2e0526ceba7fe1d0ca1adfedc301afcc47bc51/netty-codec-http-4.1.68.Final.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/io.netty/netty-codec-http/4.1.68.Final/fc2e0526ceba7fe1d0ca1adfedc301afcc47bc51/netty-codec-http-4.1.68.Final.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/io.netty/netty-codec-http/4.1.68.Final/fc2e0526ceba7fe1d0ca1adfedc301afcc47bc51/netty-codec-http-4.1.68.Final.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/io.netty/netty-codec-http/4.1.68.Final/fc2e0526ceba7fe1d0ca1adfedc301afcc47bc51/netty-codec-http-4.1.68.Final.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/io.netty/netty-codec-http/4.1.68.Final/fc2e0526ceba7fe1d0ca1adfedc301afcc47bc51/netty-codec-http-4.1.68.Final.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/io.netty/netty-codec-http/4.1.68.Final/fc2e0526ceba7fe1d0ca1adfedc301afcc47bc51/netty-codec-http-4.1.68.Final.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/io.netty/netty-codec-http/4.1.68.Final/fc2e0526ceba7fe1d0ca1adfedc301afcc47bc51/netty-codec-http-4.1.68.Final.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/io.netty/netty-codec-http/4.1.68.Final/fc2e0526ceba7fe1d0ca1adfedc301afcc47bc51/netty-codec-http-4.1.68.Final.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/io.netty/netty-codec-http/4.1.68.Final/fc2e0526ceba7fe1d0ca1adfedc301afcc47bc51/netty-codec-http-4.1.68.Final.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/io.netty/netty-codec-http/4.1.68.Final/fc2e0526ceba7fe1d0ca1adfedc301afcc47bc51/netty-codec-http-4.1.68.Final.jar</p>

<p>

Dependency Hierarchy:

- armeria-1.0.0.jar (Root Library)

- netty-handler-proxy-4.1.68.Final.jar

- :x: **netty-codec-http-4.1.68.Final.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/opensearch-project/data-prepper/commit/022b333dc9be3548b8eb8bb73d0337fd26425056">022b333dc9be3548b8eb8bb73d0337fd26425056</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Netty is an asynchronous event-driven network application framework for rapid development of maintainable high performance protocol servers & clients. Netty prior to version 4.1.71.Final skips control chars when they are present at the beginning / end of the header name. It should instead fail fast as these are not allowed by the spec and could lead to HTTP request smuggling. Failing to do the validation might cause netty to "sanitize" header names before it forward these to another remote system when used as proxy. This remote system can't see the invalid usage anymore, and therefore does not do the validation itself. Users should upgrade to version 4.1.71.Final.

<p>Publish Date: 2021-12-09

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-43797>CVE-2021-43797</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="CVE-2021-43797">CVE-2021-43797</a></p>

<p>Release Date: 2021-12-09</p>

<p>Fix Resolution: io.netty:netty-codec-http:4.1.71.Final,io.netty:netty-all:4.1.71.Final</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"io.netty","packageName":"netty-codec-http","packageVersion":"4.1.68.Final","packageFilePaths":["/data-prepper-plugins/otel-trace-raw-prepper/build.gradle"],"isTransitiveDependency":true,"dependencyTree":"com.linecorp.armeria:armeria:1.0.0;io.netty:netty-handler-proxy:4.1.68.Final;io.netty:netty-codec-http:4.1.68.Final","isMinimumFixVersionAvailable":true,"minimumFixVersion":"io.netty:netty-codec-http:4.1.71.Final,io.netty:netty-all:4.1.71.Final","isBinary":false}],"baseBranches":["main"],"vulnerabilityIdentifier":"CVE-2021-43797","vulnerabilityDetails":"Netty is an asynchronous event-driven network application framework for rapid development of maintainable high performance protocol servers \u0026 clients. Netty prior to version 4.1.71.Final skips control chars when they are present at the beginning / end of the header name. It should instead fail fast as these are not allowed by the spec and could lead to HTTP request smuggling. Failing to do the validation might cause netty to \"sanitize\" header names before it forward these to another remote system when used as proxy. This remote system can\u0027t see the invalid usage anymore, and therefore does not do the validation itself. Users should upgrade to version 4.1.71.Final.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-43797","cvss3Severity":"medium","cvss3Score":"6.5","cvss3Metrics":{"A":"None","AC":"Low","PR":"None","S":"Unchanged","C":"None","UI":"Required","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> --> | CVE-2021-43797 (Medium) detected in netty-codec-http-4.1.68.Final.jar | https://api.github.com/repos/opensearch-project/data-prepper/issues/995/comments | 0 | 2022-02-07T19:39:29Z | 2022-03-01T17:04:28Z | https://github.com/opensearch-project/data-prepper/issues/995 | 1,126,417,884 | 995 |

[

"opensearch-project",

"data-prepper"

] | ## WS-2020-0408 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>netty-handler-4.1.68.Final.jar</b></p></summary>

<p></p>

<p>Library home page: <a href="https://netty.io/">https://netty.io/</a></p>

<p>Path to dependency file: /data-prepper-plugins/peer-forwarder/build.gradle</p>

<p>Path to vulnerable library: /home/wss-scanner/.gradle/caches/modules-2/files-2.1/io.netty/netty-handler/4.1.68.Final/f55a4ad40f228baf6005ac5ca39915ce5dfb3bd5/netty-handler-4.1.68.Final.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/io.netty/netty-handler/4.1.68.Final/f55a4ad40f228baf6005ac5ca39915ce5dfb3bd5/netty-handler-4.1.68.Final.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/io.netty/netty-handler/4.1.68.Final/f55a4ad40f228baf6005ac5ca39915ce5dfb3bd5/netty-handler-4.1.68.Final.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/io.netty/netty-handler/4.1.68.Final/f55a4ad40f228baf6005ac5ca39915ce5dfb3bd5/netty-handler-4.1.68.Final.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/io.netty/netty-handler/4.1.68.Final/f55a4ad40f228baf6005ac5ca39915ce5dfb3bd5/netty-handler-4.1.68.Final.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/io.netty/netty-handler/4.1.68.Final/f55a4ad40f228baf6005ac5ca39915ce5dfb3bd5/netty-handler-4.1.68.Final.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/io.netty/netty-handler/4.1.68.Final/f55a4ad40f228baf6005ac5ca39915ce5dfb3bd5/netty-handler-4.1.68.Final.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/io.netty/netty-handler/4.1.68.Final/f55a4ad40f228baf6005ac5ca39915ce5dfb3bd5/netty-handler-4.1.68.Final.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/io.netty/netty-handler/4.1.68.Final/f55a4ad40f228baf6005ac5ca39915ce5dfb3bd5/netty-handler-4.1.68.Final.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/io.netty/netty-handler/4.1.68.Final/f55a4ad40f228baf6005ac5ca39915ce5dfb3bd5/netty-handler-4.1.68.Final.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/io.netty/netty-handler/4.1.68.Final/f55a4ad40f228baf6005ac5ca39915ce5dfb3bd5/netty-handler-4.1.68.Final.jar</p>

<p>

Dependency Hierarchy:

- armeria-1.0.0.jar (Root Library)

- netty-codec-http2-4.1.68.Final.jar

- :x: **netty-handler-4.1.68.Final.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/opensearch-project/data-prepper/commit/022b333dc9be3548b8eb8bb73d0337fd26425056">022b333dc9be3548b8eb8bb73d0337fd26425056</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

An issue was found in all versions of io.netty:netty-all. Host verification in Netty is disabled by default. This can lead to MITM attack in which an attacker can forge valid SSL/TLS certificates for a different hostname in order to intercept traffic that doesn’t intend for him. This is an issue because the certificate is not matched with the host.

<p>Publish Date: 2020-06-22

<p>URL: <a href=https://github.com/netty/netty/issues/10362>WS-2020-0408</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.4</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/WS-2020-0408">https://nvd.nist.gov/vuln/detail/WS-2020-0408</a></p>

<p>Release Date: 2020-06-22</p>

<p>Fix Resolution: io.netty:netty-all - 4.1.68.Final-redhat-00001,4.0.0.Final,4.1.67.Final-redhat-00002;io.netty:netty-handler - 4.1.68.Final-redhat-00001,4.1.67.Final-redhat-00001</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"io.netty","packageName":"netty-handler","packageVersion":"4.1.68.Final","packageFilePaths":["/data-prepper-plugins/peer-forwarder/build.gradle"],"isTransitiveDependency":true,"dependencyTree":"com.linecorp.armeria:armeria:1.0.0;io.netty:netty-codec-http2:4.1.68.Final;io.netty:netty-handler:4.1.68.Final","isMinimumFixVersionAvailable":true,"minimumFixVersion":"io.netty:netty-all - 4.1.68.Final-redhat-00001,4.0.0.Final,4.1.67.Final-redhat-00002;io.netty:netty-handler - 4.1.68.Final-redhat-00001,4.1.67.Final-redhat-00001","isBinary":false}],"baseBranches":["main"],"vulnerabilityIdentifier":"WS-2020-0408","vulnerabilityDetails":"An issue was found in all versions of io.netty:netty-all. Host verification in Netty is disabled by default. This can lead to MITM attack in which an attacker can forge valid SSL/TLS certificates for a different hostname in order to intercept traffic that doesn’t intend for him. This is an issue because the certificate is not matched with the host.","vulnerabilityUrl":"https://github.com/netty/netty/issues/10362","cvss3Severity":"high","cvss3Score":"7.4","cvss3Metrics":{"A":"None","AC":"High","PR":"None","S":"Unchanged","C":"High","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> --> | WS-2020-0408 (High) detected in netty-handler-4.1.68.Final.jar - autoclosed | https://api.github.com/repos/opensearch-project/data-prepper/issues/994/comments | 1 | 2022-02-07T19:39:27Z | 2022-03-01T17:57:05Z | https://github.com/opensearch-project/data-prepper/issues/994 | 1,126,417,855 | 994 |

[

"opensearch-project",

"data-prepper"

] | ## CVE-2020-8908 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>guava-29.0-jre.jar</b></p></summary>

<p>Guava is a suite of core and expanded libraries that include

utility classes, google's collections, io classes, and much

much more.</p>

<p>Library home page: <a href="https://github.com/google/guava">https://github.com/google/guava</a></p>

<p>Path to dependency file: /data-prepper-plugins/drop-events-processor/build.gradle</p>

<p>Path to vulnerable library: /home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.guava/guava/29.0-jre/801142b4c3d0f0770dd29abea50906cacfddd447/guava-29.0-jre.jar</p>

<p>

Dependency Hierarchy:

- checkstyle-8.37.jar (Root Library)

- :x: **guava-29.0-jre.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/opensearch-project/data-prepper/commit/90bdaa7e7833bdd504c817e49d4434b4d8880f56">90bdaa7e7833bdd504c817e49d4434b4d8880f56</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/low_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A temp directory creation vulnerability exists in all versions of Guava, allowing an attacker with access to the machine to potentially access data in a temporary directory created by the Guava API com.google.common.io.Files.createTempDir(). By default, on unix-like systems, the created directory is world-readable (readable by an attacker with access to the system). The method in question has been marked @Deprecated in versions 30.0 and later and should not be used. For Android developers, we recommend choosing a temporary directory API provided by Android, such as context.getCacheDir(). For other Java developers, we recommend migrating to the Java 7 API java.nio.file.Files.createTempDirectory() which explicitly configures permissions of 700, or configuring the Java runtime's java.io.tmpdir system property to point to a location whose permissions are appropriately configured.

<p>Publish Date: 2020-12-10

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-8908>CVE-2020-8908</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>3.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: None

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-8908">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-8908</a></p>

<p>Release Date: 2020-12-10</p>

<p>Fix Resolution (com.google.guava:guava): 30.0-android</p>

<p>Direct dependency fix Resolution (com.puppycrawl.tools:checkstyle): 8.38</p>

</p>

</details>

<p></p>

***

<!-- REMEDIATE-OPEN-PR-START -->

- [ ] Check this box to open an automated fix PR

<!-- REMEDIATE-OPEN-PR-END -->

| CVE-2020-8908 (Low) detected in guava-29.0-jre.jar - autoclosed | https://api.github.com/repos/opensearch-project/data-prepper/issues/993/comments | 3 | 2022-02-07T19:39:25Z | 2022-08-15T16:20:38Z | https://github.com/opensearch-project/data-prepper/issues/993 | 1,126,417,827 | 993 |

[

"opensearch-project",

"data-prepper"

] | ## CVE-2019-10782 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>checkstyle-8.27.jar</b></p></summary>

<p>Checkstyle is a development tool to help programmers write Java code

that adheres to a coding standard</p>

<p>Library home page: <a href="https://checkstyle.org/">https://checkstyle.org/</a></p>

<p>Path to dependency file: /research/zipkin-opensearch-to-otel/build.gradle</p>

<p>Path to vulnerable library: /e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar,/e/caches/modules-2/files-2.1/com.puppycrawl.tools/checkstyle/8.27/5e17b50cd30f7a680240b526a279545f5fd05efa/checkstyle-8.27.jar</p>

<p>

Dependency Hierarchy:

- :x: **checkstyle-8.27.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/opensearch-project/data-prepper/commit/90bdaa7e7833bdd504c817e49d4434b4d8880f56">90bdaa7e7833bdd504c817e49d4434b4d8880f56</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

All versions of com.puppycrawl.tools:checkstyle before 8.29 are vulnerable to XML External Entity (XXE) Injection due to an incomplete fix for CVE-2019-9658.

<p>Publish Date: 2020-01-30

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-10782>CVE-2019-10782</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: None

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-10782">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-10782</a></p>

<p>Release Date: 2020-02-10</p>

<p>Fix Resolution: 8.29</p>

</p>

</details>

<p></p>

***

<!-- REMEDIATE-OPEN-PR-START -->

- [ ] Check this box to open an automated fix PR

<!-- REMEDIATE-OPEN-PR-END -->

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"com.puppycrawl.tools","packageName":"checkstyle","packageVersion":"8.27","packageFilePaths":["/research/zipkin-opensearch-to-otel/build.gradle"],"isTransitiveDependency":false,"dependencyTree":"com.puppycrawl.tools:checkstyle:8.27","isMinimumFixVersionAvailable":true,"minimumFixVersion":"8.29","isBinary":false}],"baseBranches":["main"],"vulnerabilityIdentifier":"CVE-2019-10782","vulnerabilityDetails":"All versions of com.puppycrawl.tools:checkstyle before 8.29 are vulnerable to XML External Entity (XXE) Injection due to an incomplete fix for CVE-2019-9658.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-10782","cvss3Severity":"medium","cvss3Score":"5.3","cvss3Metrics":{"A":"None","AC":"Low","PR":"None","S":"Unchanged","C":"Low","UI":"None","AV":"Network","I":"None"},"extraData":{}}</REMEDIATE> --> | CVE-2019-10782 (Medium) detected in checkstyle-8.27.jar - autoclosed | https://api.github.com/repos/opensearch-project/data-prepper/issues/992/comments | 3 | 2022-02-07T19:39:23Z | 2022-05-06T15:56:14Z | https://github.com/opensearch-project/data-prepper/issues/992 | 1,126,417,792 | 992 |

[

"opensearch-project",

"data-prepper"

] | ## WS-2021-0616 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jackson-databind-2.13.0.jar</b>, <b>jackson-core-2.13.0.jar</b></p></summary>

<p>

<details><summary><b>jackson-databind-2.13.0.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Library home page: <a href="http://github.com/FasterXML/jackson">http://github.com/FasterXML/jackson</a></p>

<p>Path to dependency file: /data-prepper-plugins/opensearch/build.gradle</p>

<p>Path to vulnerable library: /e/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/e/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/e/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/e/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/e/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/e/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/e/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/e/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/e/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/e/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/e/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/e/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/e/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/e/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.13.0/889672a1721d6d85b2834fcd29d3fda92c8c8891/jackson-databind-2.13.0.jar</p>

<p>

Dependency Hierarchy:

- :x: **jackson-databind-2.13.0.jar** (Vulnerable Library)

</details>

<details><summary><b>jackson-core-2.13.0.jar</b></p></summary>

<p>Core Jackson processing abstractions (aka Streaming API), implementation for JSON</p>

<p>Path to dependency file: /data-prepper-plugins/date-processor/build.gradle</p>

<p>Path to vulnerable library: /home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-core/2.13.0/e957ec5442966e69cef543927bdc80e5426968bb/jackson-core-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-core/2.13.0/e957ec5442966e69cef543927bdc80e5426968bb/jackson-core-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-core/2.13.0/e957ec5442966e69cef543927bdc80e5426968bb/jackson-core-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-core/2.13.0/e957ec5442966e69cef543927bdc80e5426968bb/jackson-core-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-core/2.13.0/e957ec5442966e69cef543927bdc80e5426968bb/jackson-core-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-core/2.13.0/e957ec5442966e69cef543927bdc80e5426968bb/jackson-core-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-core/2.13.0/e957ec5442966e69cef543927bdc80e5426968bb/jackson-core-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-core/2.13.0/e957ec5442966e69cef543927bdc80e5426968bb/jackson-core-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-core/2.13.0/e957ec5442966e69cef543927bdc80e5426968bb/jackson-core-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-core/2.13.0/e957ec5442966e69cef543927bdc80e5426968bb/jackson-core-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-core/2.13.0/e957ec5442966e69cef543927bdc80e5426968bb/jackson-core-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-core/2.13.0/e957ec5442966e69cef543927bdc80e5426968bb/jackson-core-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-core/2.13.0/e957ec5442966e69cef543927bdc80e5426968bb/jackson-core-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-core/2.13.0/e957ec5442966e69cef543927bdc80e5426968bb/jackson-core-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-core/2.13.0/e957ec5442966e69cef543927bdc80e5426968bb/jackson-core-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-core/2.13.0/e957ec5442966e69cef543927bdc80e5426968bb/jackson-core-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-core/2.13.0/e957ec5442966e69cef543927bdc80e5426968bb/jackson-core-2.13.0.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-core/2.13.0/e957ec5442966e69cef543927bdc80e5426968bb/jackson-core-2.13.0.jar,/e/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-core/2.13.0/e957ec5442966e69cef543927bdc80e5426968bb/jackson-core-2.13.0.jar</p>

<p>

Dependency Hierarchy:

- :x: **jackson-core-2.13.0.jar** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/opensearch-project/data-prepper/commit/022b333dc9be3548b8eb8bb73d0337fd26425056">022b333dc9be3548b8eb8bb73d0337fd26425056</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

FasterXML jackson-databind before 2.12.6 and 2.13.1 there is DoS when using JDK serialization to serialize JsonNode.

<p>Publish Date: 2021-11-20

<p>URL: <a href=https://github.com/FasterXML/jackson-databind/commit/3ccde7d938fea547e598fdefe9a82cff37fed5cb>WS-2021-0616</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.9</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/FasterXML/jackson-databind/issues/3328">https://github.com/FasterXML/jackson-databind/issues/3328</a></p>

<p>Release Date: 2021-11-20</p>

<p>Fix Resolution: com.fasterxml.jackson.core:jackson-databind:2.12.6, 2.13.1; com.fasterxml.jackson.core:jackson-core:2.12.6, 2.13.1</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"com.fasterxml.jackson.core","packageName":"jackson-databind","packageVersion":"2.13.0","packageFilePaths":["/data-prepper-plugins/opensearch/build.gradle"],"isTransitiveDependency":false,"dependencyTree":"com.fasterxml.jackson.core:jackson-databind:2.13.0","isMinimumFixVersionAvailable":true,"minimumFixVersion":"com.fasterxml.jackson.core:jackson-databind:2.12.6, 2.13.1; com.fasterxml.jackson.core:jackson-core:2.12.6, 2.13.1","isBinary":false},{"packageType":"Java","groupId":"com.fasterxml.jackson.core","packageName":"jackson-core","packageVersion":"2.13.0","packageFilePaths":["/data-prepper-plugins/date-processor/build.gradle"],"isTransitiveDependency":false,"dependencyTree":"com.fasterxml.jackson.core:jackson-core:2.13.0","isMinimumFixVersionAvailable":true,"minimumFixVersion":"com.fasterxml.jackson.core:jackson-databind:2.12.6, 2.13.1; com.fasterxml.jackson.core:jackson-core:2.12.6, 2.13.1","isBinary":false}],"baseBranches":["main"],"vulnerabilityIdentifier":"WS-2021-0616","vulnerabilityDetails":"FasterXML jackson-databind before 2.12.6 and 2.13.1 there is DoS when using JDK serialization to serialize JsonNode.","vulnerabilityUrl":"https://github.com/FasterXML/jackson-databind/commit/3ccde7d938fea547e598fdefe9a82cff37fed5cb","cvss3Severity":"medium","cvss3Score":"5.9","cvss3Metrics":{"A":"High","AC":"High","PR":"None","S":"Unchanged","C":"None","UI":"None","AV":"Network","I":"None"},"extraData":{}}</REMEDIATE> --> | WS-2021-0616 (Medium) detected in jackson-databind-2.13.0.jar, jackson-core-2.13.0.jar | https://api.github.com/repos/opensearch-project/data-prepper/issues/991/comments | 0 | 2022-02-07T19:39:20Z | 2022-02-08T22:48:35Z | https://github.com/opensearch-project/data-prepper/issues/991 | 1,126,417,750 | 991 |

[

"opensearch-project",

"data-prepper"

] | ## CVE-2021-22569 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>protobuf-java-3.18.1.jar</b></p></summary>

<p>Core Protocol Buffers library. Protocol Buffers are a way of encoding structured data in an

efficient yet extensible format.</p>

<p>Library home page: <a href="https://developers.google.com/protocol-buffers/">https://developers.google.com/protocol-buffers/</a></p>

<p>Path to dependency file: /data-prepper-plugins/service-map-stateful/build.gradle</p>

<p>Path to vulnerable library: /home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.protobuf/protobuf-java/3.18.1/492c35bb914d122cf12ab3acaf2ba576b40f92ce/protobuf-java-3.18.1.jar</p>

<p>

Dependency Hierarchy:

- opentelemetry-proto-1.7.1-alpha.jar (Root Library)

- :x: **protobuf-java-3.18.1.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/opensearch-project/data-prepper/commit/90bdaa7e7833bdd504c817e49d4434b4d8880f56">90bdaa7e7833bdd504c817e49d4434b4d8880f56</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

An issue in protobuf-java allowed the interleaving of com.google.protobuf.UnknownFieldSet fields in such a way that would be processed out of order. A small malicious payload can occupy the parser for several minutes by creating large numbers of short-lived objects that cause frequent, repeated pauses. We recommend upgrading libraries beyond the vulnerable versions.

<p>Publish Date: 2022-01-10

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-22569>CVE-2021-22569</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/advisories/GHSA-wrvw-hg22-4m67">https://github.com/advisories/GHSA-wrvw-hg22-4m67</a></p>

<p>Release Date: 2022-01-10</p>

<p>Fix Resolution: com.google.protobuf:protobuf-java:3.16.1,3.18.2,3.19.2; com.google.protobuf:protobuf-kotlin:3.18.2,3.19.2; google-protobuf - 3.19.2</p>

</p>

</details>

<p></p>

| CVE-2021-22569 (Medium) detected in protobuf-java-3.18.1.jar - autoclosed | https://api.github.com/repos/opensearch-project/data-prepper/issues/990/comments | 3 | 2022-02-07T19:39:18Z | 2022-10-07T17:06:21Z | https://github.com/opensearch-project/data-prepper/issues/990 | 1,126,417,711 | 990 |

[

"opensearch-project",

"data-prepper"

] | ## WS-2021-0419 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>gson-2.8.6.jar</b></p></summary>

<p>Gson JSON library</p>

<p>Library home page: <a href="https://github.com/google/gson">https://github.com/google/gson</a></p>

<p>Path to dependency file: /data-prepper-plugins/build.gradle</p>

<p>Path to vulnerable library: /home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.code.gson/gson/2.8.6/9180733b7df8542621dc12e21e87557e8c99b8cb/gson-2.8.6.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.code.gson/gson/2.8.6/9180733b7df8542621dc12e21e87557e8c99b8cb/gson-2.8.6.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.code.gson/gson/2.8.6/9180733b7df8542621dc12e21e87557e8c99b8cb/gson-2.8.6.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.code.gson/gson/2.8.6/9180733b7df8542621dc12e21e87557e8c99b8cb/gson-2.8.6.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.code.gson/gson/2.8.6/9180733b7df8542621dc12e21e87557e8c99b8cb/gson-2.8.6.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.code.gson/gson/2.8.6/9180733b7df8542621dc12e21e87557e8c99b8cb/gson-2.8.6.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.code.gson/gson/2.8.6/9180733b7df8542621dc12e21e87557e8c99b8cb/gson-2.8.6.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.code.gson/gson/2.8.6/9180733b7df8542621dc12e21e87557e8c99b8cb/gson-2.8.6.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.google.code.gson/gson/2.8.6/9180733b7df8542621dc12e21e87557e8c99b8cb/gson-2.8.6.jar</p>

<p>

Dependency Hierarchy:

- protobuf-java-util-3.13.0.jar (Root Library)

- :x: **gson-2.8.6.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/opensearch-project/data-prepper/commit/022b333dc9be3548b8eb8bb73d0337fd26425056">022b333dc9be3548b8eb8bb73d0337fd26425056</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Denial of Service vulnerability was discovered in gson before 2.8.9 via the writeReplace() method.

<p>Publish Date: 2021-10-11

<p>URL: <a href=https://github.com/google/gson/pull/1991>WS-2021-0419</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.7</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/google/gson/releases/tag/gson-parent-2.8.9">https://github.com/google/gson/releases/tag/gson-parent-2.8.9</a></p>

<p>Release Date: 2021-10-11</p>

<p>Fix Resolution: com.google.code.gson:gson:2.8.9</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"com.google.code.gson","packageName":"gson","packageVersion":"2.8.6","packageFilePaths":["/data-prepper-plugins/build.gradle"],"isTransitiveDependency":true,"dependencyTree":"com.google.protobuf:protobuf-java-util:3.13.0;com.google.code.gson:gson:2.8.6","isMinimumFixVersionAvailable":true,"minimumFixVersion":"com.google.code.gson:gson:2.8.9","isBinary":false}],"baseBranches":["main"],"vulnerabilityIdentifier":"WS-2021-0419","vulnerabilityDetails":"Denial of Service vulnerability was discovered in gson before 2.8.9 via the writeReplace() method.","vulnerabilityUrl":"https://github.com/google/gson/pull/1991","cvss3Severity":"high","cvss3Score":"7.7","cvss3Metrics":{"A":"High","AC":"High","PR":"None","S":"Unchanged","C":"Low","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> --> | WS-2021-0419 (High) detected in gson-2.8.6.jar | https://api.github.com/repos/opensearch-project/data-prepper/issues/989/comments | 0 | 2022-02-07T19:39:15Z | 2022-02-09T16:11:58Z | https://github.com/opensearch-project/data-prepper/issues/989 | 1,126,417,673 | 989 |

[

"opensearch-project",

"data-prepper"

] | ## CVE-2021-45046 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>log4j-core-2.11.2.jar</b></p></summary>

<p>The Apache Log4j Implementation</p>

<p>Path to dependency file: /performance-test/build.gradle</p>

<p>Path to vulnerable library: /home/wss-scanner/.gradle/caches/modules-2/files-2.1/org.apache.logging.log4j/log4j-core/2.11.2/6c2fb3f5b7cd27504726aef1b674b542a0c9cf53/log4j-core-2.11.2.jar</p>

<p>

Dependency Hierarchy:

- zinc_2.12-1.3.5.jar (Root Library)

- zinc-compile-core_2.12-1.3.5.jar

- util-logging_2.12-1.3.0.jar

- :x: **log4j-core-2.11.2.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/opensearch-project/data-prepper/commit/022b333dc9be3548b8eb8bb73d0337fd26425056">022b333dc9be3548b8eb8bb73d0337fd26425056</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

It was found that the fix to address CVE-2021-44228 in Apache Log4j 2.15.0 was incomplete in certain non-default configurations. This could allows attackers with control over Thread Context Map (MDC) input data when the logging configuration uses a non-default Pattern Layout with either a Context Lookup (for example, $${ctx:loginId}) or a Thread Context Map pattern (%X, %mdc, or %MDC) to craft malicious input data using a JNDI Lookup pattern resulting in an information leak and remote code execution in some environments and local code execution in all environments. Log4j 2.16.0 (Java 8) and 2.12.2 (Java 7) fix this issue by removing support for message lookup patterns and disabling JNDI functionality by default.

<p>Publish Date: 2021-12-14

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-45046>CVE-2021-45046</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>9.0</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://logging.apache.org/log4j/2.x/security.html">https://logging.apache.org/log4j/2.x/security.html</a></p>

<p>Release Date: 2021-12-14</p>

<p>Fix Resolution: org.apache.logging.log4j:log4j-core:2.3.1,2.12.2,2.16.0;org.ops4j.pax.logging:pax-logging-log4j2:1.11.10,2.0.11</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"org.apache.logging.log4j","packageName":"log4j-core","packageVersion":"2.11.2","packageFilePaths":["/performance-test/build.gradle"],"isTransitiveDependency":true,"dependencyTree":"org.scala-sbt:zinc_2.12:1.3.5;org.scala-sbt:zinc-compile-core_2.12:1.3.5;org.scala-sbt:util-logging_2.12:1.3.0;org.apache.logging.log4j:log4j-core:2.11.2","isMinimumFixVersionAvailable":true,"minimumFixVersion":"org.apache.logging.log4j:log4j-core:2.3.1,2.12.2,2.16.0;org.ops4j.pax.logging:pax-logging-log4j2:1.11.10,2.0.11","isBinary":false}],"baseBranches":["main"],"vulnerabilityIdentifier":"CVE-2021-45046","vulnerabilityDetails":"It was found that the fix to address CVE-2021-44228 in Apache Log4j 2.15.0 was incomplete in certain non-default configurations. This could allows attackers with control over Thread Context Map (MDC) input data when the logging configuration uses a non-default Pattern Layout with either a Context Lookup (for example, $${ctx:loginId}) or a Thread Context Map pattern (%X, %mdc, or %MDC) to craft malicious input data using a JNDI Lookup pattern resulting in an information leak and remote code execution in some environments and local code execution in all environments. Log4j 2.16.0 (Java 8) and 2.12.2 (Java 7) fix this issue by removing support for message lookup patterns and disabling JNDI functionality by default.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-45046","cvss3Severity":"high","cvss3Score":"9.0","cvss3Metrics":{"A":"High","AC":"High","PR":"None","S":"Changed","C":"High","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> --> | CVE-2021-45046 (High) detected in log4j-core-2.11.2.jar | https://api.github.com/repos/opensearch-project/data-prepper/issues/988/comments | 0 | 2022-02-07T19:39:13Z | 2022-02-09T15:59:16Z | https://github.com/opensearch-project/data-prepper/issues/988 | 1,126,417,642 | 988 |

[

"opensearch-project",

"data-prepper"

] | ## CVE-2020-9488 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>log4j-core-2.11.2.jar</b></p></summary>

<p>The Apache Log4j Implementation</p>

<p>Path to dependency file: /performance-test/build.gradle</p>

<p>Path to vulnerable library: /home/wss-scanner/.gradle/caches/modules-2/files-2.1/org.apache.logging.log4j/log4j-core/2.11.2/6c2fb3f5b7cd27504726aef1b674b542a0c9cf53/log4j-core-2.11.2.jar</p>

<p>

Dependency Hierarchy:

- zinc_2.12-1.3.5.jar (Root Library)

- zinc-compile-core_2.12-1.3.5.jar

- util-logging_2.12-1.3.0.jar

- :x: **log4j-core-2.11.2.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/opensearch-project/data-prepper/commit/022b333dc9be3548b8eb8bb73d0337fd26425056">022b333dc9be3548b8eb8bb73d0337fd26425056</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/low_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Improper validation of certificate with host mismatch in Apache Log4j SMTP appender. This could allow an SMTPS connection to be intercepted by a man-in-the-middle attack which could leak any log messages sent through that appender.

<p>Publish Date: 2020-04-27

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-9488>CVE-2020-9488</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>3.7</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: None

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://reload4j.qos.ch/">https://reload4j.qos.ch/</a></p>

<p>Release Date: 2020-04-27</p>

<p>Fix Resolution: ch.qos.reload4j:reload4j:1.2.18.3</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"org.apache.logging.log4j","packageName":"log4j-core","packageVersion":"2.11.2","packageFilePaths":["/performance-test/build.gradle"],"isTransitiveDependency":true,"dependencyTree":"org.scala-sbt:zinc_2.12:1.3.5;org.scala-sbt:zinc-compile-core_2.12:1.3.5;org.scala-sbt:util-logging_2.12:1.3.0;org.apache.logging.log4j:log4j-core:2.11.2","isMinimumFixVersionAvailable":true,"minimumFixVersion":"ch.qos.reload4j:reload4j:1.2.18.3","isBinary":false}],"baseBranches":["main"],"vulnerabilityIdentifier":"CVE-2020-9488","vulnerabilityDetails":"Improper validation of certificate with host mismatch in Apache Log4j SMTP appender. This could allow an SMTPS connection to be intercepted by a man-in-the-middle attack which could leak any log messages sent through that appender.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-9488","cvss3Severity":"low","cvss3Score":"3.7","cvss3Metrics":{"A":"None","AC":"High","PR":"None","S":"Unchanged","C":"Low","UI":"None","AV":"Network","I":"None"},"extraData":{}}</REMEDIATE> --> | CVE-2020-9488 (Low) detected in log4j-core-2.11.2.jar - autoclosed | https://api.github.com/repos/opensearch-project/data-prepper/issues/987/comments | 1 | 2022-02-07T19:39:11Z | 2022-02-09T16:04:12Z | https://github.com/opensearch-project/data-prepper/issues/987 | 1,126,417,609 | 987 |

[

"opensearch-project",

"data-prepper"

] | ## CVE-2021-42550 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>logback-classic-1.2.7.jar</b></p></summary>

<p>logback-classic module</p>

<p>Library home page: <a href="http://logback.qos.ch">http://logback.qos.ch</a></p>

<p>Path to dependency file: /performance-test/build.gradle</p>

<p>Path to vulnerable library: /home/wss-scanner/.gradle/caches/modules-2/files-2.1/ch.qos.logback/logback-classic/1.2.7/3e89a85545181f1a3a9efc9516ca92658502505b/logback-classic-1.2.7.jar</p>

<p>

Dependency Hierarchy:

- gatling-charts-highcharts-3.7.2.jar (Root Library)

- gatling-recorder-3.7.2.jar

- gatling-core-3.7.2.jar

- gatling-commons-3.7.2.jar

- :x: **logback-classic-1.2.7.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/opensearch-project/data-prepper/commit/022b333dc9be3548b8eb8bb73d0337fd26425056">022b333dc9be3548b8eb8bb73d0337fd26425056</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In logback version 1.2.7 and prior versions, an attacker with the required privileges to edit configurations files could craft a malicious configuration allowing to execute arbitrary code loaded from LDAP servers.

<p>Publish Date: 2021-12-16

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-42550>CVE-2021-42550</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.6</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: High

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="http://logback.qos.ch/news.html">http://logback.qos.ch/news.html</a></p>

<p>Release Date: 2021-12-16</p>

<p>Fix Resolution: ch.qos.logback:logback-classic:1.2.8</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"ch.qos.logback","packageName":"logback-classic","packageVersion":"1.2.7","packageFilePaths":["/performance-test/build.gradle"],"isTransitiveDependency":true,"dependencyTree":"io.gatling.highcharts:gatling-charts-highcharts:3.7.2;io.gatling:gatling-recorder:3.7.2;io.gatling:gatling-core:3.7.2;io.gatling:gatling-commons:3.7.2;ch.qos.logback:logback-classic:1.2.7","isMinimumFixVersionAvailable":true,"minimumFixVersion":"ch.qos.logback:logback-classic:1.2.8","isBinary":false}],"baseBranches":["main"],"vulnerabilityIdentifier":"CVE-2021-42550","vulnerabilityDetails":"In logback version 1.2.7 and prior versions, an attacker with the required privileges to edit configurations files could craft a malicious configuration allowing to execute arbitrary code loaded from LDAP servers.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-42550","cvss3Severity":"medium","cvss3Score":"6.6","cvss3Metrics":{"A":"High","AC":"High","PR":"High","S":"Unchanged","C":"High","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> --> | CVE-2021-42550 (Medium) detected in logback-classic-1.2.7.jar - autoclosed | https://api.github.com/repos/opensearch-project/data-prepper/issues/986/comments | 1 | 2022-02-07T19:39:09Z | 2022-02-09T16:04:15Z | https://github.com/opensearch-project/data-prepper/issues/986 | 1,126,417,591 | 986 |

[

"opensearch-project",

"data-prepper"

] | As a user of the AggregateProcessor, I would like to save state between restarts of Data Prepper | Store and load current state for AggregateProcessor on restart of Data Prepper | https://api.github.com/repos/opensearch-project/data-prepper/issues/985/comments | 1 | 2022-02-07T19:35:51Z | 2022-04-19T20:26:18Z | https://github.com/opensearch-project/data-prepper/issues/985 | 1,126,414,436 | 985 |

[

"opensearch-project",

"data-prepper"

] | Opensearch sink configuration now includes creating `index` name which can be a plain string + DateTime pattern as a suffix.

For example, `log-index-%{yyyy.MM.dd}` OR `test-index-%{yyyy.MM.dd.HH}`

Observed a failure in the e2e log test when setting the index with a date-time pattern suffix i.e. `log-index-%{yyyy.MM.dd}` in the `basic-grok-e2e-pipeline.yaml`

```

com.amazon.dataprepper.integration.log.EndToEndBasicLogTest > testPipelineEndToEnd FAILED

org.opensearch.OpenSearchStatusException at EndToEndBasicLogTest.java:71

```

| e2e log test failing when index name has DateTime pattern suffix in OpenSearch Sink Config | https://api.github.com/repos/opensearch-project/data-prepper/issues/984/comments | 0 | 2022-02-07T15:56:29Z | 2025-04-16T20:17:07Z | https://github.com/opensearch-project/data-prepper/issues/984 | 1,126,177,567 | 984 |

[

"opensearch-project",

"data-prepper"

] | **Describe the issue**

This issue was brought up in #979. [The Log Ingestion Demo Guide](https://github.com/opensearch-project/data-prepper/blob/main/examples/log-ingestion/log_ingestion_demo_guide.md) assumes that the `docker-compose` is run from the `../data-prepper/examples/log-ingestion` folder. This leads to confusion since docker-compose prepends the project name to the network.

This prepend can be overridden by adding a standard `--project-name` flag to the `docker-compose up` command

```

docker-compose --project-name "data-prepper" up -d

```

And then data prepper can be run and attached to the network with

```

docker run --name data-prepper -v /full/path/to/log_pipeline.yaml:/usr/share/data-prepper/pipelines.yaml --network "data-prepper_opensearch-net" opensearch-data-prepper:latest

``` | Log Ingestion Demo Guide Docker network prepended with different folder name | https://api.github.com/repos/opensearch-project/data-prepper/issues/981/comments | 2 | 2022-02-04T21:09:23Z | 2024-08-18T11:54:08Z | https://github.com/opensearch-project/data-prepper/issues/981 | 1,124,618,549 | 981 |

[

"opensearch-project",

"data-prepper"

] | **Is your feature request related to a problem? Please describe.**

As a user of Data Prepper that wants to test Data Prepper log analytics with my logs rather quickly, it is a pain to have to configure an `http` or `file` source to send logs through Data Prepper.

As a developer of a new processor, it would be nice if I could test the end to end functionality of my processor without the `http` or `file` source.

**Describe the solution you'd like**

A source that generates either custom or common log formats that can be used to test pipelines, new processors, or to demo new processors.

Here is an example configuration which will choose a random log from a list of logs every 5 seconds and send it through Data Prepper until 20 logs have been generated.

```yaml

source:

log_generator:

interval: 5

# This could default to create an infinite number of logs

count: 20

log_type:

custom:

# default for ordered will be false, which chooses a random log from log_lines

# ordered being true will cycle through the logs in the order they appear in `log_lines`

ordered: true

log_lines:

- 'This is my test log which will get the message key added since it is not json'

- '{"log": "This is a json string which will get converted to an Event"}'

- '{"key1": "value1", "key2": "value2"}'

```

In addition to custom logs, the `log_generator` could support pre made log types. Here is an example configuration where random logs in the apache common log format are generated. This idea can be expanded for many other types of common logs (syslog, s3, etc)

```yaml

source:

log_generator:

log_type:

apache_clf:

```

| [Idea] Log Generator Source | https://api.github.com/repos/opensearch-project/data-prepper/issues/980/comments | 2 | 2022-02-04T20:43:26Z | 2022-04-19T20:25:34Z | https://github.com/opensearch-project/data-prepper/issues/980 | 1,124,600,756 | 980 |

[

"opensearch-project",

"data-prepper"

] | The `aggregate` processor as implemented for Data Prepper 1.3.0 will only support single-node deployments. Integrating it into the core Peer Forwarder (#700) will allow it to work with multiple nodes. | Support Multi-node aggregation by integrating it with the Peer Forwarder | https://api.github.com/repos/opensearch-project/data-prepper/issues/978/comments | 2 | 2022-02-04T00:10:21Z | 2022-10-03T23:09:28Z | https://github.com/opensearch-project/data-prepper/issues/978 | 1,123,679,543 | 978 |

[

"opensearch-project",

"data-prepper"

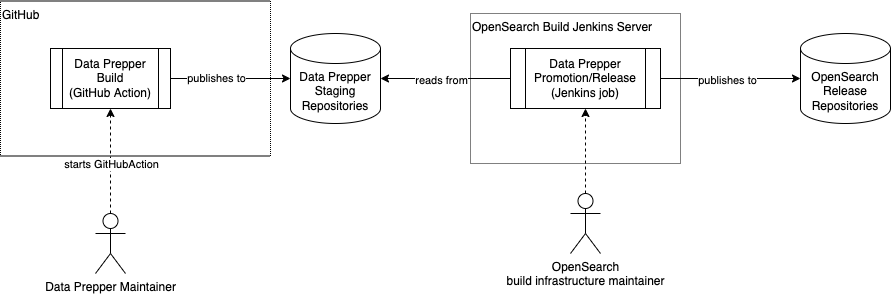

] | # Summary

The Data Prepper maintainers would like to produce Data Prepper releases more often and reduce the friction with releasing them.

The approach to this goal will be to provide two CI/CD jobs.

1. Data Prepper Build