issue_owner_repo listlengths 2 2 | issue_body stringlengths 0 262k ⌀ | issue_title stringlengths 1 1.02k | issue_comments_url stringlengths 53 116 | issue_comments_count int64 0 2.49k | issue_created_at stringdate 1999-03-17 02:06:42 2025-06-23 11:41:49 | issue_updated_at stringdate 2000-02-10 06:43:57 2025-06-23 11:43:00 | issue_html_url stringlengths 34 97 | issue_github_id int64 132 3.17B | issue_number int64 1 215k |

|---|---|---|---|---|---|---|---|---|---|

[

"opensearch-project",

"data-prepper"

] | **Is your feature request related to a problem? Please describe.**

Some inputs may come in the form of a list of objects where each object has a natural "key" field. Pipeline authors may want to convert this into a map of keys to objects.

**Describe the solution you'd like**

Provide a processor which can perform this conversion. Say I have a Data Prepper event with the following structure.

```

{

"mylist" : [

{

"somekey" : "a",

"somevalue" : "val-a1",

"anothervalue" : "val-a2"

},

{

"somekey" : "b",

"somevalue" : "val-b1",

"anothervalue" : "val-b2"

},

{

"somekey" : "b",

"somevalue" : "val-b3",

"anothervalue" : "val-b4"

},

{

"somekey" : "c",

"somevalue" : "val-c1",

"anothervalue" : "val-c2"

}

]

}

```

If I define the following parameters in the processor:

* `key` - "somekey"

* `source` - "mylist"

* `target` - "myobject"

The processor will change the event by removing `mylist` and adding the new `myobject` object. The event will look like the following.

```

{

"myobject" : {

"a" : [

{

"somekey" : "a",

"somevalue" : "val-a1",

"anothervalue" : "val-a2"

}

],

"b" : [

{

"somekey" : "b",

"somevalue" : "val-b1",

"anothervalue" : "val-b2"

},

{

"somekey" : "b",

"somevalue" : "val-b3",

"anothervalue" : "val-b4"

}

"c" : [

{

"somekey" : "c",

"somevalue" : "val-c1",

"anothervalue" : "val-c2"

}

]

}

}

```

In many cases, we also want to flatten the array for each key. In these situations, we must choose only one object to remain. The processor can offer the choice of either `first` or `last`.

If flattened, the processor will yield:

```

{

"myobject" : {

"a" : {

"somekey" : "a",

"somevalue" : "val-a1",

"anothervalue" : "val-a2"

},

"b" : {

"somekey" : "b",

"somevalue" : "val-b1",

"anothervalue" : "val-b2"

}

"c" : {

"somekey" : "c",

"somevalue" : "val-c1",

"anothervalue" : "val-c2"

}

}

}

```

I propose calling this processor `list_to_map`, but am very open to other ideas.

The following properties may exist on it.

* `source` - The key of the source list

* `target` - The key of the target object

* `key` - The key of the field which will serve as the key in the target object.

* `value_key` - If specified, this will extract the value from the specified key to make the target.

* `flatten` - A boolean value. If set to true, then flatten into one object

* `flattened_element` - Can be either `first` or `last`. Defines which element from the list to choose when multiple exist. Can default to first.

Here is another sample output if we specify the `value_key` to "somevalue." This will pull up the value from "somevalue" and make it the target value. In this example, we are not flattening the object.

```

{

"myobject" : {

"a" : ["val-a1"],

"b" : ["val-b1", "val-b3"]

"c" : ["val-c1"]

}

}

```

And in this example, we do choose to flatten the object.

```

{

"myobject" : {

"a" : "val-a1",

"b" : "val-b1",

"c" : "val-c1"

}

}

``` | Keyed-list to flattened map processor | https://api.github.com/repos/opensearch-project/data-prepper/issues/2410/comments | 1 | 2023-03-28T13:42:40Z | 2023-04-07T17:52:14Z | https://github.com/opensearch-project/data-prepper/issues/2410 | 1,643,983,002 | 2,410 |

[

"opensearch-project",

"data-prepper"

] | **Is your feature request related to a problem? Please describe.**

The current Data Prepper logs does not show the status of message deleted from SQS Queue, it only logs number of messages being deleted in debug level.

https://github.com/opensearch-project/data-prepper/blob/298e7931aa3b26130048ac3bde260e066857df54/data-prepper-plugins/s3-source/src/main/java/org/opensearch/dataprepper/plugins/source/SqsWorker.java#L230

**Describe the solution you'd like**

Add a log for each SQS message when we successfully delete it will give better visibility to user for debugging.

https://github.com/opensearch-project/data-prepper/blob/298e7931aa3b26130048ac3bde260e066857df54/data-prepper-plugins/s3-source/src/main/java/org/opensearch/dataprepper/plugins/source/SqsWorker.java#L233

| S3 source SQS message logging enhancement | https://api.github.com/repos/opensearch-project/data-prepper/issues/2405/comments | 1 | 2023-03-27T16:52:25Z | 2023-03-28T14:00:04Z | https://github.com/opensearch-project/data-prepper/issues/2405 | 1,642,461,592 | 2,405 |

[

"opensearch-project",

"data-prepper"

] | XContent namespace refactor from common -> core is going to be merged to opensearch/2.x which will break the 2.x build. This issue is for refactoring XContent imports from the `common` to `core` namespace after the core namespace change is merged.

Depends on https://github.com/opensearch-project/OpenSearch/pull/6470 | [Refactor] XContent from common to core namespace | https://api.github.com/repos/opensearch-project/data-prepper/issues/2404/comments | 2 | 2023-03-27T14:01:44Z | 2023-04-05T20:58:37Z | https://github.com/opensearch-project/data-prepper/issues/2404 | 1,642,145,957 | 2,404 |

[

"opensearch-project",

"data-prepper"

] | **Is your feature request related to a problem? Please describe.**

Data Prepper supports a file sink and has plans to support an S3 Sink (#1048). Sinks like these can benefit from a sink codec concept similar to the source codec (#1532).

**Describe the solution you'd like**

Create an interface in `data-prepper-api` which can transform events into an output format for consumption by participating sinks.

### Proposed interface

```

interface OutputCodec {

/**

* Called by the sink when a new destination is in use.

*/

void start(OutputStream outputStream);

/**

* Called by the sink before saving the destination to the external sink.

*/

void complete(OutputStream outputStream);

/**

* Writes an event into the underlying stream.

*/

void writeEvent(Event event, OutputStream outputStream);

/**

* Returns the expected file extension for this type of codec.

* For example, json, csv.

*/

String getExtension();

}

```

I included the `start` and `complete` interfaces because some codecs like JSON array need to create an initial wrapping and then close that.

Additionally, the `getExtension` method can be used to determine the file name when the sink is writing to a file or file-like system (e.g. S3).

### Plugin names

Use the same name as the corresponding input plugin when appropriate. Thus, we will have an input plugin for `newline` and an output plugin for `newline`. The Data Prepper plugin framework permits this because they implement different interfaces.

### Project structure

Include these new output codecs in the same Gradle projects as the input codec.

For example, both the input and output `newline` codecs should be in the `data-prepper-plugins/newline-codecs` project. | Support a generic codec structure for sinks | https://api.github.com/repos/opensearch-project/data-prepper/issues/2403/comments | 0 | 2023-03-27T13:34:52Z | 2023-06-08T19:47:11Z | https://github.com/opensearch-project/data-prepper/issues/2403 | 1,642,073,606 | 2,403 |

[

"opensearch-project",

"data-prepper"

] | **Describe the bug**

https://opensearch.org/docs/2.4/data-prepper/pipelines/configuration/buffers/buffers/

`Buffers store data as it passes through the pipeline. If you implement a custom buffer, it can be memory based, which provides better performance, or disk based, which is larger in size.

`

The above said documentation states that "disk based" buffering can be configured instead of a memory based "bounded_blocking" one. But I don't see the parameter names or ways to configure it

Can someone pls give an example where pipeline parts like "entry-pipeline", "raw-pipeline" and "service-pipeline" are configured with disk based buffering.

| Documentation on how to configure pipelines with disk based buffering | https://api.github.com/repos/opensearch-project/data-prepper/issues/2402/comments | 1 | 2023-03-27T10:25:39Z | 2023-03-28T13:54:01Z | https://github.com/opensearch-project/data-prepper/issues/2402 | 1,641,838,590 | 2,402 |

[

"opensearch-project",

"data-prepper"

] | ## CVE-2023-20861 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-expression-5.3.22.jar</b></p></summary>

<p>Spring Expression Language (SpEL)</p>

<p>Path to dependency file: /data-prepper-main/build.gradle</p>

<p>Path to vulnerable library: /home/wss-scanner/.gradle/caches/modules-2/files-2.1/org.springframework/spring-expression/5.3.22/c056f9e9994b18c95deead695f9471952d1f21d1/spring-expression-5.3.22.jar</p>

<p>

Dependency Hierarchy:

- spring-context-5.3.22.jar (Root Library)

- :x: **spring-expression-5.3.22.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/opensearch-project/data-prepper/commit/90bdaa7e7833bdd504c817e49d4434b4d8880f56">90bdaa7e7833bdd504c817e49d4434b4d8880f56</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

CVE-2023-20861: Spring Expression DoS Vulnerability

This vulnerability, with a CVSS score of 5.3, pertains to a Spring Expression (SpEL) denial-of-service (DoS) vulnerability. In Spring Framework versions 6.0.0 to 6.0.6, 5.3.0 to 5.3.25, 5.2.0.RELEASE to 5.2.22.RELEASE, and older unsupported versions, a user could craft a malicious SpEL expression resulting in a DoS condition.

<p>Publish Date: 2022-11-02

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2023-20861>CVE-2023-20861</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://securityonline.info/cve-2023-20860-high-severity-vulnerability-in-spring-framework/">https://securityonline.info/cve-2023-20860-high-severity-vulnerability-in-spring-framework/</a></p>

<p>Release Date: 2022-11-02</p>

<p>Fix Resolution (org.springframework:spring-expression): 5.3.25</p>

<p>Direct dependency fix Resolution (org.springframework:spring-context): 5.3.25</p>

</p>

</details>

<p></p>

***

<!-- REMEDIATE-OPEN-PR-START -->

- [ ] Check this box to open an automated fix PR

<!-- REMEDIATE-OPEN-PR-END -->

| CVE-2023-20861 (Medium) detected in spring-expression-5.3.22.jar - autoclosed | https://api.github.com/repos/opensearch-project/data-prepper/issues/2393/comments | 1 | 2023-03-22T15:07:28Z | 2023-04-05T17:36:16Z | https://github.com/opensearch-project/data-prepper/issues/2393 | 1,635,959,611 | 2,393 |

[

"opensearch-project",

"data-prepper"

] | **Is your feature request related to a problem? Please describe.**

It would be nice to allow mathematical operations in the processor. Sometimes we have to add new fields by summing up two fields in the input before pushing them into a destination.

**Describe the solution you'd like**

input: { a: 1, b: 2 }

output: { a: 1, b: 2, newField: 3}

In the above output "newField" should be created by adding fields "a" and "b". I,e newField = a+b

**Describe alternatives you've considered (Optional)**

logstash does allow users to run custom ruby code as part of filters which allows users to perform the above operations.

| Custom code execution in processor or allowing mathematical operations in processor | https://api.github.com/repos/opensearch-project/data-prepper/issues/2388/comments | 3 | 2023-03-20T21:28:48Z | 2024-07-09T18:53:42Z | https://github.com/opensearch-project/data-prepper/issues/2388 | 1,632,891,139 | 2,388 |

[

"opensearch-project",

"data-prepper"

] | **Describe the bug**

The otel-v1-apm-span-index-template mapping described in the documentation is missing the next fields from the mapping described in the markdown

- droppedAttributesCount

- traceState

- droppedEventsCount

-

**To Reproduce**

see [span mapping details](https://github.com/opensearch-project/data-prepper/blob/main/docs/schemas/trace-analytics/otel-v1-apm-span-index-template.md)

`traceState` and the above fields are referred in the fields description and in the examples but are missing from the mapping in that markdown

**Expected behavior**

add missing field

| [BUG] otel-v1-apm-span-index-template Documentation minor issue | https://api.github.com/repos/opensearch-project/data-prepper/issues/2387/comments | 0 | 2023-03-20T16:22:28Z | 2023-03-22T21:05:46Z | https://github.com/opensearch-project/data-prepper/issues/2387 | 1,632,456,887 | 2,387 |

[

"opensearch-project",

"data-prepper"

] | **Describe the bug**

The dataprepper stops abruptly after a few minutes.

We are using 2.1.0 version.

Traces from apps are produced nearly 3000 per/second

**Below are the last few logs.**

The WARN "**[org.opensearch.client.opensearch.core.bulk.BulkOperation@43b05aca] has failure.**" is thrown lot number of times.

```

2023-03-17T03:46:50,985 [raw-pipeline-sink-worker-6-thread-11] WARN org.opensearch.dataprepper.plugins.sink.opensearch.OpenSearchSink - Document [org.opensearch.client.opensearch.core.bulk.BulkOperation@43b05aca] has failure.

java.lang.RuntimeException: Request execution cancelled

at org.opensearch.client.RestClient.extractAndWrapCause(RestClient.java:961) ~[opensearch-rest-client-2.4.1.jar:2.4.1]

at org.opensearch.client.RestClient.performRequest(RestClient.java:332) ~[opensearch-rest-client-2.4.1.jar:2.4.1]

at org.opensearch.client.RestClient.performRequest(RestClient.java:320) ~[opensearch-rest-client-2.4.1.jar:2.4.1]

at org.opensearch.client.transport.rest_client.RestClientTransport.performRequest(RestClientTransport.java:142) ~[opensearch-java-2.2.0.jar:?]

at org.opensearch.client.opensearch.OpenSearchClient.bulk(OpenSearchClient.java:211) ~[opensearch-java-2.2.0.jar:?]

at org.opensearch.dataprepper.plugins.sink.opensearch.OpenSearchSink.lambda$doInitializeInternal$1(OpenSearchSink.java:142) ~[opensearch-2.1.0.jar:?]

at org.opensearch.dataprepper.plugins.sink.opensearch.BulkRetryStrategy.handleRetry(BulkRetryStrategy.java:163) ~[opensearch-2.1.0.jar:?]

at org.opensearch.dataprepper.plugins.sink.opensearch.BulkRetryStrategy.execute(BulkRetryStrategy.java:137) ~[opensearch-2.1.0.jar:?]

at org.opensearch.dataprepper.plugins.sink.opensearch.OpenSearchSink.lambda$flushBatch$6(OpenSearchSink.java:235) ~[opensearch-2.1.0.jar:?]

at io.micrometer.core.instrument.composite.CompositeTimer.record(CompositeTimer.java:141) ~[micrometer-core-1.10.3.jar:1.10.3]

at org.opensearch.dataprepper.plugins.sink.opensearch.OpenSearchSink.flushBatch(OpenSearchSink.java:232) ~[opensearch-2.1.0.jar:?]

at org.opensearch.dataprepper.plugins.sink.opensearch.OpenSearchSink.doOutput(OpenSearchSink.java:220) ~[opensearch-2.1.0.jar:?]

at org.opensearch.dataprepper.model.sink.AbstractSink.lambda$output$0(AbstractSink.java:54) ~[data-prepper-api-2.1.0.jar:?]

at io.micrometer.core.instrument.composite.CompositeTimer.record(CompositeTimer.java:141) ~[micrometer-core-1.10.3.jar:1.10.3]

at org.opensearch.dataprepper.model.sink.AbstractSink.output(AbstractSink.java:54) ~[data-prepper-api-2.1.0.jar:?]

at org.opensearch.dataprepper.pipeline.Pipeline.lambda$publishToSinks$3(Pipeline.java:262) ~[data-prepper-core-2.1.0.jar:?]

at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:539) ~[?:?]

at java.util.concurrent.FutureTask.run(FutureTask.java:264) ~[?:?]

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1136) ~[?:?]

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:635) ~[?:?]

at java.lang.Thread.run(Thread.java:833) ~[?:?]

Caused by: java.util.concurrent.CancellationException: Request execution cancelled

at org.apache.http.impl.nio.client.CloseableHttpAsyncClientBase.execute(CloseableHttpAsyncClientBase.java:114) ~[httpasyncclient-4.1.5.jar:4.1.5]

at org.apache.http.impl.nio.client.InternalHttpAsyncClient.execute(InternalHttpAsyncClient.java:138) ~[httpasyncclient-4.1.5.jar:4.1.5]

at org.opensearch.client.RestClient.performRequest(RestClient.java:328) ~[opensearch-rest-client-2.4.1.jar:2.4.1]

... 19 more

2023-03-17T03:46:58,203 [entry-pipeline-sink-worker-2-thread-8] WARN org.opensearch.dataprepper.pipeline.Pipeline - Pipeline [entry-pipeline] - Workers did not terminate in time, forcing termination

2023-03-17T03:46:58,204 [entry-pipeline-sink-worker-2-thread-4] INFO org.opensearch.dataprepper.pipeline.Pipeline - Pipeline [entry-pipeline] - Encountered interruption terminating the pipeline execution, Attempting to force the termination

2023-03-17T03:46:58,204 [entry-pipeline-sink-worker-2-thread-6] INFO org.opensearch.dataprepper.pipeline.Pipeline - Pipeline [entry-pipeline] - Encountered interruption terminating the pipeline execution, Attempting to force the termination

2023-03-17T03:46:58,204 [entry-pipeline-sink-worker-2-thread-7] INFO org.opensearch.dataprepper.pipeline.Pipeline - Pipeline [entry-pipeline] - Encountered interruption terminating the pipeline execution, Attempting to force the termination

2023-03-17T03:46:58,205 [entry-pipeline-sink-worker-2-thread-5] INFO org.opensearch.dataprepper.pipeline.Pipeline - Pipeline [entry-pipeline] - Encountered interruption terminating the pipeline execution, Attempting to force the termination

2023-03-17T03:46:58,206 [entry-pipeline-sink-worker-2-thread-11] INFO org.opensearch.dataprepper.pipeline.Pipeline - Pipeline [entry-pipeline] - Encountered interruption terminating the pipeline execution, Attempting to force the termination

2023-03-17T03:46:58,211 [entry-pipeline-sink-worker-2-thread-2] INFO org.opensearch.dataprepper.pipeline.Pipeline - Pipeline [entry-pipeline] - Encountered interruption terminating the pipeline execution, Attempting to force the termination

2023-03-17T03:46:58,211 [entry-pipeline-sink-worker-2-thread-1] INFO org.opensearch.dataprepper.pipeline.Pipeline - Pipeline [entry-pipeline] - Encountered interruption terminating the pipeline execution, Attempting to force the termination

2023-03-17T03:46:58,212 [entry-pipeline-sink-worker-2-thread-3] INFO org.opensearch.dataprepper.pipeline.Pipeline - Pipeline [entry-pipeline] - Encountered interruption terminating the pipeline execution, Attempting to force the termination

2023-03-17T03:46:58,212 [entry-pipeline-sink-worker-2-thread-10] INFO org.opensearch.dataprepper.pipeline.Pipeline - Pipeline [entry-pipeline] - Encountered interruption terminating the pipeline execution, Attempting to force the termination

2023-03-17T03:46:58,214 [entry-pipeline-sink-worker-2-thread-12] INFO org.opensearch.dataprepper.pipeline.Pipeline - Pipeline [entry-pipeline] - Encountered interruption terminating the pipeline execution, Attempting to force the termination

2023-03-17T03:46:58,215 [entry-pipeline-sink-worker-2-thread-9] INFO org.opensearch.dataprepper.pipeline.Pipeline - Pipeline [entry-pipeline] - Encountered interruption terminating the pipeline execution, Attempting to force the termination

```

Data-prepper pipeline config is as below

```

entry-pipeline:

workers: 12

source:

otel_trace_source:

ssl: true

sslKeyCertChainFile: "/opt/nsp/os/ssl/certs/nsp/nsp_internal.pem"

sslKeyFile: "/opt/nsp/os/ssl/nsp_internal.key"

buffer:

bounded_blocking:

buffer_size: 6000

batch_size: 400

sink:

- pipeline:

name: "raw-pipeline"

- pipeline:

name: "service-map-pipeline"

raw-pipeline:

workers: 12

source:

pipeline:

name: "entry-pipeline"

buffer:

bounded_blocking:

buffer_size: 6000

batch_size: 400

processor:

- otel_trace_raw:

sink:

- opensearch:

hosts: [ "https://{{ .Values.opensearch.service.name }}:{{ .Values.opensearch.service.port }}" ]

cert: "/opt/nsp/os/ssl/internal_ca_cert.pem"

username: "admin"

password: "admin"

index_type: trace-analytics-raw

service-map-pipeline:

workers: 12

source:

pipeline:

name: "entry-pipeline"

buffer:

bounded_blocking:

buffer_size: 6000

batch_size: 400

processor:

- service_map_stateful:

window_duration: 180

sink:

- opensearch:

hosts: [ "https://{{ .Values.opensearch.service.name }}:{{ .Values.opensearch.service.port }}" ]

cert: "/opt/nsp/os/ssl/internal_ca_cert.pem"

username: "admin"

password: "admin"

index_type: trace-analytics-service-map

```

data-prepper-config.yml

```

ssl: false

peer_forwarder:

ssl: false

buffer_size: 6000

batch_size: 400

```

The pod resources are as below

```

resources:

requests:

cpu: 1000m

memory: 1000Mi

limits:

cpu: 1000m

memory: 1000Mi

jvm:

xms: "768m"

xmx: "768m"

```

**Expected behavior**

The dataprepper shall remain stable

Increasing the buffer_size and batch_size leading to JVM heap issues and container stops.

What could be happening here? Pls bail me out. | [BUG] Dataprepper container stops abruptly | https://api.github.com/repos/opensearch-project/data-prepper/issues/2381/comments | 6 | 2023-03-17T04:01:14Z | 2023-07-24T18:31:49Z | https://github.com/opensearch-project/data-prepper/issues/2381 | 1,628,650,716 | 2,381 |

[

"opensearch-project",

"data-prepper"

] | **Describe the bug**

OTel sources are throwing an IllegalArgumentException because of the code change introduced in https://github.com/opensearch-project/data-prepper/pull/2297

pipelineName here is null as it's being initialized after the call to this method. https://github.com/opensearch-project/data-prepper/blob/437fd72378fbb8011e6167715ef195aa40a96f3b/data-prepper-plugins/otel-logs-source/src/main/java/com/amazon/dataprepper/plugins/source/otellogs/OTelLogsSource.java#L205

https://github.com/opensearch-project/data-prepper/blob/437fd72378fbb8011e6167715ef195aa40a96f3b/data-prepper-plugins/otel-logs-source/src/main/java/com/amazon/dataprepper/plugins/source/otellogs/OTelLogsSource.java#L73-L74

**Solution**

Above code (line 205) change should be reverted back to use

```

pipelineDescription.getPipelineName();

```

**To Reproduce**

Steps to reproduce the behavior:

Create a pipeline with http_basic auth in any of the 3 OTel source.

**Expected behavior**

Caused by: java.lang.IllegalArgumentException: PluginSetting.pipelineName must not be null

**Environment (please complete the following information):**

- OS: [e.g. Ubuntu 20.04 LTS]

- Version [e.g. 22]

**Additional context**

Add any other context about the problem here.

| [BUG] NPE in OTel sources during initialization | https://api.github.com/repos/opensearch-project/data-prepper/issues/2368/comments | 0 | 2023-03-08T21:10:20Z | 2023-03-09T18:54:33Z | https://github.com/opensearch-project/data-prepper/issues/2368 | 1,615,945,224 | 2,368 |

[

"opensearch-project",

"data-prepper"

] | Hey guys!

I have the following configuration that I'm trying to test out:

I have 2 .NET applications that have an OpenTelemetry sidecar with it, on which I have the following configuration:

```

receivers:

otlp:

protocols:

grpc:

endpoint:

http:

endpoint:

processors:

batch/traces:

timeout: 1s

send_batch_size: 50

resource:

attributes:

- key: application

value: "app1"

action: insert

exporters:

logging:

loglevel: debug

otlp/otel-gateway:

endpoint: opentelemetry-gateway:4317

tls:

insecure: true

insecure_skip_verify: true

service:

pipelines:

metrics:

receivers: [otlp]

processors: [resource]

exporters: [logging, otlp/otel-gateway]

traces:

receivers: [otlp]

processors: [batch/traces, resource]

exporters: [logging, otlp/otel-gateway]

```

Where the gateway is just forwarding all of that to Data Prepper, which has the current pipeline:

```

entry-pipeline:

delay: "100"

source:

otel_trace_source:

ssl: false

buffer:

bounded_blocking:

buffer_size: 10240

batch_size: 160

sink:

- pipeline:

name: "raw-pipeline"

- pipeline:

name: "service-map-pipeline"

raw-pipeline:

source:

pipeline:

name: "entry-pipeline"

buffer:

bounded_blocking:

buffer_size: 10240

batch_size: 160

processor:

- otel_trace_raw:

sink:

- stdout:

- opensearch:

hosts: ["https://opensearch:9200"]

insecure: true

username: admin

password: admin

index_type: trace-analytics-raw

service-map-pipeline:

delay: "100"

source:

pipeline:

name: "entry-pipeline"

buffer:

bounded_blocking:

buffer_size: 10240

batch_size: 160

processor:

- service_map_stateful:

sink:

- stdout:

- opensearch:

hosts: ["https://opensearch:9200"]

insecure: true

username: admin

password: admin

index_type: trace-analytics-service-map

metrics-pipeline:

source:

otel_metrics_source:

ssl: false

authentication:

unauthenticated:

processor:

- otel_metrics_raw_processor:

sink:

- stdout:

- opensearch:

hosts: [ "https://opensearch:9200" ]

insecure: true

username: admin

password: admin

index: "metrics-otel-v1-%{yyyy.MM.dd}"

```

My question is: How can I change the pipeline to use the attribute added on OpenTelemetry (path resource.attributes.application) so that I can have the dynamic index for application 1 and 2?

If there's any documentation regarding this please let me know, I couldn't find it.

Thank you guys! | Dynamic Index for Traces and Metrics | https://api.github.com/repos/opensearch-project/data-prepper/issues/2366/comments | 2 | 2023-03-06T17:58:25Z | 2023-03-09T07:00:23Z | https://github.com/opensearch-project/data-prepper/issues/2366 | 1,611,944,658 | 2,366 |

[

"opensearch-project",

"data-prepper"

] | Add README for RSS Source Plugin | Add Documentation for RSS Source Plugin | https://api.github.com/repos/opensearch-project/data-prepper/issues/2349/comments | 0 | 2023-03-01T23:10:00Z | 2023-09-14T16:53:20Z | https://github.com/opensearch-project/data-prepper/issues/2349 | 1,605,826,469 | 2,349 |

[

"opensearch-project",

"data-prepper"

] | **Describe the bug**

Data Prepper release builds are failing with the following error:

```

OpenIDConnect provider's HTTPS certificate doesn't match configured thumbprint

```

**To Reproduce**

Steps to reproduce the behavior:

1. Run a new Release build

**Expected behavior**

The build should pass.

| [BUG] Data Prepper release builds failing | https://api.github.com/repos/opensearch-project/data-prepper/issues/2343/comments | 2 | 2023-03-01T18:45:59Z | 2023-03-01T21:40:08Z | https://github.com/opensearch-project/data-prepper/issues/2343 | 1,605,484,805 | 2,343 |

[

"opensearch-project",

"data-prepper"

] | **Is your feature request related to a problem? Please describe.**

Data Prepper 2.1 is introducing Java binary serialization for peer-forwarding serialization (see #2242). Data Prepper administrators should secure their peer-to-peer communications using authentication and SSL. However, Data Prepper should also attempt to avoid attack vectors via deserialization.

**Describe the solution you'd like**

Use new JDK features to secure deserialization. See: https://www.oracle.com/java/technologies/javase/seccodeguide.html

| Use secure Java serialization with Java serialization | https://api.github.com/repos/opensearch-project/data-prepper/issues/2310/comments | 0 | 2023-02-27T19:57:08Z | 2023-03-01T16:06:55Z | https://github.com/opensearch-project/data-prepper/issues/2310 | 1,601,850,204 | 2,310 |

[

"opensearch-project",

"data-prepper"

] | HI,

I am using data-prepper and the service map is not showing up in Trace analytics in opensearch.

Is there a workaround?

The test application we used is gcp's microservice-demo.

Is there any additional data you need to verify?

<img width="1242" alt="스크린샷 2023-02-27 오전 10 29 04" src="https://user-images.githubusercontent.com/83107898/221452200-1686f86b-d64f-4ce7-a93c-3692cff0a051.png">

[dashboard]

<img width="1267" alt="스크린샷 2023-02-27 오전 10 29 22" src="https://user-images.githubusercontent.com/83107898/221452246-044a07bd-f895-4e3d-901e-28cc0003216c.png">

[service map]

[data-prepper] | Open Research Service map not shown | https://api.github.com/repos/opensearch-project/data-prepper/issues/2308/comments | 14 | 2023-02-27T01:40:25Z | 2023-04-04T13:58:07Z | https://github.com/opensearch-project/data-prepper/issues/2308 | 1,600,297,623 | 2,308 |

[

"opensearch-project",

"data-prepper"

] | **Is your feature request related to a problem? Please describe.**

Pipeline users need an option to collate/compare by specifying criteria needed. A scenario is to know query performance between two clusters.

**Describe the solution you'd like**

Create a processor which would take inputs on what needs to be collated. For Instance, receive streaming logs containing details of live query which is happening on source cluster and pipeline will execute the same query on destination cluster.

Processor will able to read queries/derive from received logs and queue the query on destination cluster. While pipeline is running on other destination cluster , I would envision processor should be able to run user defined comparisons , generate latency , compare metrics or logs.

```

source:

- collate:

destination_cluster_node_id: "50855at-856-896545"

query: ""

api: ""

schedule: " ***** "

```

**Additional context**

| Collate processor | https://api.github.com/repos/opensearch-project/data-prepper/issues/2307/comments | 2 | 2023-02-23T18:51:48Z | 2023-03-01T22:22:38Z | https://github.com/opensearch-project/data-prepper/issues/2307 | 1,597,366,462 | 2,307 |

[

"opensearch-project",

"data-prepper"

] | **Is your feature request related to a problem? Please describe.**

It would be nice to have a convertor for WAF logs before sending to OpenSearch. If we want to build some Dashboard.

**Describe the solution you'd like**

- We want Data-prepper parse the web ACL name before sending to OpenSearch

- We need to distinguish between host and userAgent from ["httpRequest"]["headers"]

**Additional context**

Here are some parser code in Solution Centralized Logging with OpenSearch

```python

class WAF(LogType):

"""An implementation of LogType for WAF Logs"""

_format = "json"

def parse(self, line: str):

try:

json_record = json.loads(line)

# Extract web acl name, host and user agent

json_record["webaclName"] = re.search(

"[^/]/webacl/([^/]*)", json_record["webaclId"]

).group(1)

headers = json_record["httpRequest"]["headers"]

for header in headers:

if header["name"].lower() == "host":

json_record["host"] = header["value"]

elif header["name"].lower() == "user-agent":

json_record["userAgent"] = header["value"]

else:

continue

return json_record

except Exception as e:

logger.error(e)

return {}

``` | Add WAF log convertor before sending to OpenSearch | https://api.github.com/repos/opensearch-project/data-prepper/issues/2305/comments | 2 | 2023-02-23T06:20:47Z | 2023-03-28T13:53:05Z | https://github.com/opensearch-project/data-prepper/issues/2305 | 1,596,288,223 | 2,305 |

[

"opensearch-project",

"data-prepper"

] | **Is your feature request related to a problem? Please describe.**

It would be nice to have a convertor for CloudFront logs before sending to OpenSearch. If we want to build some Dashboard.

**Describe the solution you'd like**

- For fields: "sc-content-len", "sc-range-start", "sc-range-end", we need to transfer them to 0 if the raw log is '-'.

- Use parse.unquote_plus to parse "cs-user-agent" field. | Add CloudFront log convertor before sending to OpenSearch | https://api.github.com/repos/opensearch-project/data-prepper/issues/2304/comments | 1 | 2023-02-23T06:15:48Z | 2023-02-24T04:13:09Z | https://github.com/opensearch-project/data-prepper/issues/2304 | 1,596,283,815 | 2,304 |

[

"opensearch-project",

"data-prepper"

] | **Is your feature request related to a problem? Please describe.**

Add error log without input request details when trace request parsing fails.

Currently when the request parsing fails `{"code":14,"grpc-code":"UNAVAILABLE"}` status is returned. When Sensitive marker is enabled, there are no details why the error occurred. It would be nice to log error that the request parsing failed without logging the request.

Parsing exception thrown [here](https://github.com/opensearch-project/data-prepper/blob/main/data-prepper-plugins/otel-trace-source/src/main/java/org/opensearch/dataprepper/plugins/source/oteltrace/OTelTraceGrpcService.java#L98).

**Describe the solution you'd like**

log error that the request parsing failed without logging the request data.

| Add error log without DataPrepperMarkers when Trace Request parsing fails | https://api.github.com/repos/opensearch-project/data-prepper/issues/2303/comments | 1 | 2023-02-22T21:17:09Z | 2023-04-21T16:23:39Z | https://github.com/opensearch-project/data-prepper/issues/2303 | 1,595,844,221 | 2,303 |

[

"opensearch-project",

"data-prepper"

] | As part of the discussion around implementing an organization-wide testing policy, I am visiting each repo to see what tests they currently perform. I am conducting this work on GitHub so that it is easy to reference.

Looking at the Data Prepper repository, it appears there is

| Repository | Unit Tests | Integration Tests | Backwards Compatibility Tests | Additional Tests | Link |

| ------------- | ------------- | ------------- | ------------- | ------------- | ------------- |

| Data Prepper | <li>- [x] </li> | <li>- [x] </li> | <li>- [ ] </li> | Certificate of Origin, Create Document Issue, Performance Tests Compile, App Check, Trace Analytics Tests | https://github.com/opensearch-project/data-prepper/issues/2302 |

I don't see any requirements for code coverage in the testing documentation. If there are any specific requirements could you respond to this issue to let me know?

If there are any tests I missed or anything you think all repositories in OpenSearch should have for testing please respond to this issue with details. | [Testing Confirmation] Confirm current testing requirements | https://api.github.com/repos/opensearch-project/data-prepper/issues/2302/comments | 0 | 2023-02-22T15:24:16Z | 2023-02-22T22:03:15Z | https://github.com/opensearch-project/data-prepper/issues/2302 | 1,595,307,424 | 2,302 |

[

"opensearch-project",

"data-prepper"

] | **Is your feature request related to a problem? Please describe.**

I wanted to have pipeline creation in runtime because as of now due to manually restarting the data prepper container doesn't full fill my need.

**Describe the solution you'd like**

As a solution, it would be great to have feature in which we can add, delete and edit the pipelines in running data prepper.

| Data Prepper - Pipeline Creations in Runtime | https://api.github.com/repos/opensearch-project/data-prepper/issues/2301/comments | 1 | 2023-02-22T13:28:20Z | 2023-02-27T21:51:59Z | https://github.com/opensearch-project/data-prepper/issues/2301 | 1,595,107,315 | 2,301 |

[

"opensearch-project",

"data-prepper"

] | **Is your feature request related to a problem? Please describe.**

The [ExportMetricsServiceRequest received by the otel_metrics_source is written directly to the buffer](https://github.com/opensearch-project/data-prepper/blob/1a66459f2e0d065fb0c5aa157b1ef525d10cadf0/data-prepper-plugins/otel-metrics-source/src/main/java/org/opensearch/dataprepper/plugins/source/otelmetrics/OTelMetricsGrpcService.java#L74). This conflicts with the assumption that many processors make that the buffer data is an Event or derivative thereof.

**Describe the solution you'd like**

Convert the `ExportMetricsServiceRequest` to an Event compatible model similar to what is done in the otel_trace_source and otel_logs_source.

https://github.com/opensearch-project/data-prepper/blob/1a66459f2e0d065fb0c5aa157b1ef525d10cadf0/data-prepper-plugins/otel-trace-source/src/main/java/org/opensearch/dataprepper/plugins/source/oteltrace/OTelTraceGrpcService.java#L104

| Convert ExportMetricsServiceRequest to Event compatible object in otel_metrics_source | https://api.github.com/repos/opensearch-project/data-prepper/issues/2300/comments | 1 | 2023-02-21T23:45:18Z | 2023-02-22T03:02:50Z | https://github.com/opensearch-project/data-prepper/issues/2300 | 1,594,237,815 | 2,300 |

[

"opensearch-project",

"data-prepper"

] | # Background

The current DLQ in the OpenSearch sink only writes to local files. However, sometimes pipelines authors want these DLQ files on Amazon S3.

Additionally the current DLQ format does not embed useful information on the pipeline. So a pipeline author must add a DLQ file name with the pipeline name to distinguish between multiple sinks and pipelines.

# Solution

Create an S3 DLQ option in the OpenSearch sink.

## Configurations

The DLQ should allow pipeline authors to configure:

* The bucket name (required)

* The key prefix (optional; defaults to no prefix and writes to the root of the bucket)

It should use the existing `aws: sts_role_arn` or `aws_sts_role_arn` to access the bucket.

Example:

```

sink:

- opensearch:

hosts: [...]

aws:

sts_role_arn: arn:...

s3_dlq:

bucket_name: my-bucket

key_prefix: path/to/my/dlq/

```

## Compression

This should use compression for all files. Perhaps in the future we could add an option to disable if desired.

## Format

This should use the same format as the current DLQ. Namely JSON-ND where each JSON object has the following properties:

* `Document` field - the full document

* `failure` field - the error from OpenSearch

Additionally it should add the following (these can be added to the current DLQ as well):

* `indexName` - the target Index name. With the new dynamic index name, this might be different for any given sink.

## Additional Metadata

This should store additional metadata which is relevant for all events. This could be expressed in the S3 object key itself so that it doesn't have to be repeated.

* Pipeline name

* The DLQ version format. Start at `"1"`

The key can embed this information:

```

dlq-v${version}-${pipelineName}-${PLUGIN_ID}-${timestampIso8601}-${uniqueId}.jsonnd.gz

```

The `${PLUGIN_ID}` is currently static, so it will always be `opensearch`. By using this for now, the format will extend when Data Prepper supports #1025.

A hypothetical full path might be:

```

path/to/my/dlq/dlq-v1-raw-trace-pipeline-opensearch-20230221T10:11:12Z-a258d8eb-b264-41c6-871a-b53793eaf743.jsonnd.gz

```

### Alternative - Metadata in JSON

The DLQ can include the following metadata in each JSON object:

* Pipeline name (e.g. `pipelineName: "raw-trace-pipeline"`)

* A DLQ version format (`"version" : "1"`)

## Batching

The DLQ should build the document on a local file and send after reaching a threshold. The primary threshold is time. Thus, after a period of time, the file will be written to S3 no matter what. Secondarily, it can have a size threshold in bytes. Once that threshold is reached, it will write to S3 even if the time has not been met. This is similar behavior to that proposed in #1048.

# Questions

* Is there a standard extension for JSON-ND? I have `.jsonnd` above, but I'm not sure I've really seen this.

* Should we rename the `Document` field to `document`? This is more consistent with other JSON. The downside is it would be different from the current DLQ format.

# Alternatives

## Generic DLQ

It could be useful to have a generic DLQ concept. However, the sink data may vary so it needs some discussion on the format and approach. Having a DLQ for the OpenSearch sink would cover a lot of ground and help users out quickly.

# Related Issues

This DLQ is somewhat like #1048, except it is for the DLQ.

| Support an S3 DLQ in OpenSearch | https://api.github.com/repos/opensearch-project/data-prepper/issues/2298/comments | 4 | 2023-02-21T19:19:39Z | 2023-04-05T21:29:01Z | https://github.com/opensearch-project/data-prepper/issues/2298 | 1,593,983,584 | 2,298 |

[

"opensearch-project",

"data-prepper"

] | **Is your feature request related to a problem? Please describe.**

Data Prepper should be supporting ARM. To ensure that it runs correctly, the tests should also run on ARM.

**Describe the solution you'd like**

Run some Data Prepper tests on ARM instances. This need not happen for every PR and perhaps could be isolated to smoke tests.

**Additional context**

Proposed mechanism to run: Using QEMU emulator:

https://github.com/uraimo/run-on-arch-action

| Run tests on ARM | https://api.github.com/repos/opensearch-project/data-prepper/issues/2294/comments | 0 | 2023-02-20T22:00:55Z | 2023-02-22T22:02:31Z | https://github.com/opensearch-project/data-prepper/issues/2294 | 1,592,492,275 | 2,294 |

[

"opensearch-project",

"data-prepper"

] | **Is your feature request related to a problem? Please describe.**

Data prepper should be able to support events from Aiven Kafka connector

**Describe the solution you'd like**

Add Sigv4 support for Aiven Kafka connector enabling the same to post data to Dataprepper pipelines. I would envision the below sequence

1. Get branch : [Sigv4 Changes](https://github.com/deepdatta/http-connector-for-apache-kafka/tree/sigv4_support)

2. Confirm the HTTP format to configure a Data Prepper pipeline

3. Test against open-source Data Prepper without SigV4; using HTTP source

4. Test against Dataprepper (same pipeline with SigV4)

5. Make any changes to the branch so that it works correctly

6. Create PR in to Aiven

**Additional context**

References: https://github.com/aiven/opensearch-connector-for-apache-kafka

https://github.com/deepdatta/http-connector-for-apache-kafka/tree/sigv4_support

https://github.com/deepdatta/opensearch-connector-for-apache-kafka-sigv4-configurator | Aiven Kafka connector support | https://api.github.com/repos/opensearch-project/data-prepper/issues/2293/comments | 1 | 2023-02-20T20:26:01Z | 2023-03-01T22:02:33Z | https://github.com/opensearch-project/data-prepper/issues/2293 | 1,592,403,925 | 2,293 |

[

"opensearch-project",

"data-prepper"

] | **Is your feature request related to a problem? Please describe.**

Currently, OpenSearch Sink tries to write to OpenSearch as long as it gets retry-able failure. This means we may be retrying forever.

**Describe the solution you'd like**

We should have a limit on number of retries which can be controlled in the configuration.

Add a configuration option `number_of_retries` to the OpenSearch Sink configuration.

**Describe alternatives you've considered (Optional)**

A clear and concise description of any alternative solutions or features you've considered.

**Additional context**

Add any other context or screenshots about the feature request here.

| OpenSearch Sink should make the number of retries configurable | https://api.github.com/repos/opensearch-project/data-prepper/issues/2291/comments | 0 | 2023-02-20T16:58:44Z | 2023-03-16T21:54:22Z | https://github.com/opensearch-project/data-prepper/issues/2291 | 1,592,197,637 | 2,291 |

[

"opensearch-project",

"data-prepper"

] | **Is your feature request related to a problem? Please describe.**

The OTEL metrics source has a limited set of micrometer metrics compared to other sources.

**Describe the solution you'd like**

Add the same metrics that OTEL trace source has to the OTEL metrics source:

https://github.com/opensearch-project/data-prepper/blob/main/data-prepper-plugins/otel-trace-source/src/main/java/org/opensearch/dataprepper/plugins/source/oteltrace/OTelTraceGrpcService.java#L34-L41

vs

https://github.com/opensearch-project/data-prepper/blob/main/data-prepper-plugins/otel-metrics-source/src/main/java/org/opensearch/dataprepper/plugins/source/otelmetrics/OTelMetricsGrpcService.java#L26-L27

**Describe alternatives you've considered (Optional)**

A clear and concise description of any alternative solutions or features you've considered.

**Additional context**

Add any other context or screenshots about the feature request here.

| Metric parity for OTEL metrics source | https://api.github.com/repos/opensearch-project/data-prepper/issues/2282/comments | 0 | 2023-02-15T16:54:32Z | 2023-02-17T20:33:33Z | https://github.com/opensearch-project/data-prepper/issues/2282 | 1,586,207,379 | 2,282 |

[

"opensearch-project",

"data-prepper"

] | **Is your feature request related to a problem? Please describe.**

To support Amazon OpenSearch Serverless, I need to use the `aoss` service name.

**Describe the solution you'd like**

Provide new configurations in the OpenSearch sink:

* `aws_service_name` -> Set the service name using the deprecated keys.

* `aws: service_name` -> Set the service name using the new `aws:` sub-configuration.

e.g.

```

aws:

service_name: aoss

region: us-east-1

```

or

```

aws_sigv4: true

aws_service_name: aoss

aws_region: us-east-1

```

The default value should remain `es`. | Support a configurable service name for SigV4 signing on OpenSearch sinks. | https://api.github.com/repos/opensearch-project/data-prepper/issues/2281/comments | 0 | 2023-02-15T16:21:03Z | 2023-04-14T19:53:04Z | https://github.com/opensearch-project/data-prepper/issues/2281 | 1,586,152,943 | 2,281 |

[

"opensearch-project",

"data-prepper"

] | ## CVE-2023-23934 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Werkzeug-1.0.1-py2.py3-none-any.whl</b></p></summary>

<p>The comprehensive WSGI web application library.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/cc/94/5f7079a0e00bd6863ef8f1da638721e9da21e5bacee597595b318f71d62e/Werkzeug-1.0.1-py2.py3-none-any.whl">https://files.pythonhosted.org/packages/cc/94/5f7079a0e00bd6863ef8f1da638721e9da21e5bacee597595b318f71d62e/Werkzeug-1.0.1-py2.py3-none-any.whl</a></p>

<p>Path to dependency file: /examples/trace-analytics-sample-app/sample-app/requirements.txt</p>

<p>Path to vulnerable library: /examples/trace-analytics-sample-app/sample-app/requirements.txt</p>

<p>

Dependency Hierarchy:

- opentelemetry_instrumentation_flask-0.19b0-py3-none-any.whl (Root Library)

- Flask-1.1.4-py2.py3-none-any.whl

- :x: **Werkzeug-1.0.1-py2.py3-none-any.whl** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/opensearch-project/data-prepper/commit/90bdaa7e7833bdd504c817e49d4434b4d8880f56">90bdaa7e7833bdd504c817e49d4434b4d8880f56</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/low_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Werkzeug is a comprehensive WSGI web application library. Browsers may allow "nameless" cookies that look like `=value` instead of `key=value`. A vulnerable browser may allow a compromised application on an adjacent subdomain to exploit this to set a cookie like `=__Host-test=bad` for another subdomain. Werkzeug prior to 2.2.3 will parse the cookie `=__Host-test=bad` as __Host-test=bad`. If a Werkzeug application is running next to a vulnerable or malicious subdomain which sets such a cookie using a vulnerable browser, the Werkzeug application will see the bad cookie value but the valid cookie key. The issue is fixed in Werkzeug 2.2.3.

<p>Publish Date: 2023-02-14

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2023-23934>CVE-2023-23934</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>2.6</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Adjacent

- Attack Complexity: High

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.cve.org/CVERecord?id=CVE-2023-23934">https://www.cve.org/CVERecord?id=CVE-2023-23934</a></p>

<p>Release Date: 2023-02-14</p>

<p>Fix Resolution: Werkzeug - 2.2.3</p>

</p>

</details>

<p></p>

| CVE-2023-23934 (Low) detected in Werkzeug-1.0.1-py2.py3-none-any.whl - autoclosed | https://api.github.com/repos/opensearch-project/data-prepper/issues/2280/comments | 1 | 2023-02-15T13:55:56Z | 2023-02-22T16:35:26Z | https://github.com/opensearch-project/data-prepper/issues/2280 | 1,585,903,512 | 2,280 |

[

"opensearch-project",

"data-prepper"

] | ## CVE-2023-25577 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Werkzeug-1.0.1-py2.py3-none-any.whl</b></p></summary>

<p>The comprehensive WSGI web application library.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/cc/94/5f7079a0e00bd6863ef8f1da638721e9da21e5bacee597595b318f71d62e/Werkzeug-1.0.1-py2.py3-none-any.whl">https://files.pythonhosted.org/packages/cc/94/5f7079a0e00bd6863ef8f1da638721e9da21e5bacee597595b318f71d62e/Werkzeug-1.0.1-py2.py3-none-any.whl</a></p>

<p>Path to dependency file: /examples/trace-analytics-sample-app/sample-app/requirements.txt</p>

<p>Path to vulnerable library: /examples/trace-analytics-sample-app/sample-app/requirements.txt</p>

<p>

Dependency Hierarchy:

- opentelemetry_instrumentation_flask-0.19b0-py3-none-any.whl (Root Library)

- Flask-1.1.4-py2.py3-none-any.whl

- :x: **Werkzeug-1.0.1-py2.py3-none-any.whl** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/opensearch-project/data-prepper/commit/ebd3e757c341c1d9c1352431bbad7bf5db2ea939">ebd3e757c341c1d9c1352431bbad7bf5db2ea939</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Werkzeug is a comprehensive WSGI web application library. Prior to version 2.2.3, Werkzeug's multipart form data parser will parse an unlimited number of parts, including file parts. Parts can be a small amount of bytes, but each requires CPU time to parse and may use more memory as Python data. If a request can be made to an endpoint that accesses `request.data`, `request.form`, `request.files`, or `request.get_data(parse_form_data=False)`, it can cause unexpectedly high resource usage. This allows an attacker to cause a denial of service by sending crafted multipart data to an endpoint that will parse it. The amount of CPU time required can block worker processes from handling legitimate requests. The amount of RAM required can trigger an out of memory kill of the process. Unlimited file parts can use up memory and file handles. If many concurrent requests are sent continuously, this can exhaust or kill all available workers. Version 2.2.3 contains a patch for this issue.

<p>Publish Date: 2023-02-14

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2023-25577>CVE-2023-25577</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.cve.org/CVERecord?id=CVE-2023-25577">https://www.cve.org/CVERecord?id=CVE-2023-25577</a></p>

<p>Release Date: 2023-02-14</p>

<p>Fix Resolution: Werkzeug - 2.2.3</p>

</p>

</details>

<p></p>

| CVE-2023-25577 (High) detected in Werkzeug-1.0.1-py2.py3-none-any.whl - autoclosed | https://api.github.com/repos/opensearch-project/data-prepper/issues/2279/comments | 1 | 2023-02-15T13:55:54Z | 2023-02-22T16:35:14Z | https://github.com/opensearch-project/data-prepper/issues/2279 | 1,585,903,446 | 2,279 |

[

"opensearch-project",

"data-prepper"

] | Follow https://github.com/opensearch-project/.github/issues/125 to baseline MAINTAINERS, CODEOWNERS, and external collaborator permissions.

Close this issue when:

- [x] 1. [MAINTAINERS.md](MAINTAINERS.md) has the correct list of project maintainers.

- [x] 2. [CODEOWNERS](CODEOWNERS) exists and has the correct list of aliases.

- [x] 3. Repo permissions only contain individual aliases as collaborators with maintain rights, admin, and triage teams.

- [x] 4. All other teams are removed from repo permissions.

If this repo's permissions was already baselined, please confirm the above when closing this issue.

| Baseline MAINTAINERS, CODEOWNERS, and external collaborator permissions | https://api.github.com/repos/opensearch-project/data-prepper/issues/2275/comments | 1 | 2023-02-14T16:36:44Z | 2023-04-24T16:21:28Z | https://github.com/opensearch-project/data-prepper/issues/2275 | 1,584,482,027 | 2,275 |

[

"opensearch-project",

"data-prepper"

] | **Describe the bug**

data-prepper/examples/jaeger-hotrod does not work

no traces in Kibana

and error in logs

2023/02/14 12:00:26 Post "http://localhost:14268/api/traces": dial tcp 127.0.0.1:14268: connect: connection refused

**To Reproduce**

1. clone repo

2. docker-compose up --build

3. open application in browser, press any button

4. See error

**Screenshots**

**Environment (please complete the following information):**

- OS: [linux Mint 21.1]

| [BUG] Jaeger Hotrod demo does not work | https://api.github.com/repos/opensearch-project/data-prepper/issues/2273/comments | 12 | 2023-02-14T12:05:57Z | 2024-09-12T02:22:00Z | https://github.com/opensearch-project/data-prepper/issues/2273 | 1,584,048,450 | 2,273 |

[

"opensearch-project",

"data-prepper"

] | **Is your feature request related to a problem? Please describe.**

Currently, data prepper attributes lists traceId for traces, etc. let's create a standard field which all components can read from, this will help extensibility and in the future if developers want to create custom aggregations they will be able to use plug and play UI components

**Describe the solution you'd like**

A clear and concise description of what you want to happen.

**Describe alternatives you've considered (Optional)**

A clear and concise description of any alternative solutions or features you've considered.

**Additional context**

Add any other context or screenshots about the feature request here.

| Add standardization of attributes in data prepper aggregations | https://api.github.com/repos/opensearch-project/data-prepper/issues/2270/comments | 5 | 2023-02-13T23:46:47Z | 2023-02-15T22:08:24Z | https://github.com/opensearch-project/data-prepper/issues/2270 | 1,583,243,249 | 2,270 |

[

"opensearch-project",

"data-prepper"

] | **Describe the bug**

Observed Timestamp is set as 1970 01 01, should this take on time if not present?

This is messing up observability plugin, which assumes observedtimestamp as the default timestamp field

**To Reproduce**

https://github.com/opensearch-project/dashboards-observability/issues/245#issuecomment-1416250750

**Expected behavior**

A clear and concise description of what you expected to happen.

**Screenshots**

If applicable, add screenshots to help explain your problem.

**Environment (please complete the following information):**

- OS: [e.g. Ubuntu 20.04 LTS]

- Version [e.g. 22]

**Additional context**

Add any other context about the problem here.

| [BUG] OTEL Logs source created has observed timestamp of 1970 | https://api.github.com/repos/opensearch-project/data-prepper/issues/2268/comments | 13 | 2023-02-13T19:23:36Z | 2024-12-19T15:24:37Z | https://github.com/opensearch-project/data-prepper/issues/2268 | 1,582,926,399 | 2,268 |

[

"opensearch-project",

"data-prepper"

] | **Is your feature request related to a problem? Please describe.**

Users are looking to migrate data in to OpenSearch. Source of data is from existing self managed, managed clusters of OpenSearch. The plugin should enable users read data, transform and write to OpenSearch clusters. This feature is useful in many ways not limiting to migration of data but also replaying , reindexing.

**Describe the solution you'd like**

Create a source plugin which would enable users to bulk read, bulk write on a scheduled manner to a given OpenSearch cluster. This plugin should be extendable to take user defined additional sources. Users should be able to create/schedule pipeline for migration of data by

- Auto discovery i.e. Listing all the indexes or Take given Index

- Iterate over a index , read/fetch complete data

- Enrich/transform Data (Optional)

- Sink to OpenSearch using Data Prepper

- Reconcile/report comparing source and sink data

Cron can be used to schedule the migration of data. Example: ` schedule: "* * * * *" ' ` will load data every minute

**Additional context**

Plugin should be able to take configurations data related to cluster including hostname:port, user credentials, optional - index and query (e.g. match_all) .

I would envision the following sequence of steps

1. cat indices - https://opensearch.org/docs/1.2/opensearch/rest-api/cat/cat-indices/

2. Iterate over an index

3. Query index i.e. match_all or scroll query for a large indices

4. Enrich/transform Data (Optional)

5. Data Prepper pipeline to ingest data in to opensearch

6. Report on data from sink and source

**References: **

This should be similar to the logstash-input-opensearch-plugin provided in the OpenSearch project.

https://opensearch.org/blog/community/2022/05/introducing-logstash-input-opensearch-plugin-for-opensearch/

https://github.com/opensearch-project/logstash-input-opensearch

| Generic Source Plugin | https://api.github.com/repos/opensearch-project/data-prepper/issues/2264/comments | 1 | 2023-02-11T00:03:03Z | 2023-04-25T16:32:40Z | https://github.com/opensearch-project/data-prepper/issues/2264 | 1,580,510,085 | 2,264 |

[

"opensearch-project",

"data-prepper"

] | ## Problem

Pipeline configurations may change between major versions of Data Prepper. It can be useful to know what version of Data Prepper a given pipeline configuration was written to support and tested against.

## Proposal

Provide a pipeline definition version property in the pipeline configuration file. Specifically in the YAML, this would be a new property: `version`.

Example:

```

version: 2

otel-trace-pipeline:

source:

otel_trace_source:

buffer:

sink:

...

raw-pipeline:

source:

..

sink:

...

service-map-pipeline:

source:

...

sink:

...

```

The `version` can support strings in either forms:

* `${major}` (e.g. `2`)

* `${major}.${minor}` (e.g. `2.2`)

As patch versions of Data Prepper only provide bug fixes, there should be no need to include patch versions in the `version`.

Data Prepper would read the pipeline definition version and compare its current version against the definition supplied. Data Prepper will only report an error to users if the pipeline definition version represents a future version of Data Prepper.

If the pipeline definition version is older, Data Prepper will attempt to parse the configuration. It may not succeed if the major version is different and there are breaking changes reflected in the pipeline. Because a pipeline that works on 2.x may still run on 3.x, Data Prepper should at least attempt to parse it.

### Not in scope

Data Prepper could support a mechanism for reading older pipeline definition files. For example Data Prepper 3.x could read and understand breaking changes from 2.x pipelines. This proposal does not include any such attempts at keeping pipeline files operational indefinitely. This may add friction to further development of new features.

### Alternative names

I considered some other names.

* `definition_version` - This might clearly indicate that it is for the pipeline definition, but may be too verbose.

* `pipeline_version` - Even more that `version` this sounds like it is a version of the pipeline itself.

* `pipeline_definition_version` - This is accurate, but possibly too verbose.

### Alternative form

The current syntax of the pipeline YAML is a key-value map of `pipelineName:pipelineBody`. This would change that structure by adding a new key at the top: `version: ${version}`.

An alternative would be to create a modified structure:

```

version:

pipelines:

entry-pipeline:

...

raw-pipeline:

...

service-map-pipeline:

...

```

This would break all existing pipeline YAML files. I do not believe we should take this alternative YAML.

There is the possibility that somebody has a pipeline named `version`. In which case, such a pipeline would fail to run with this change. As this is not highly likely, I suggest that we move forward with supporting existing pipelines which do not use `version` in the name.

## Additional questions

Data Prepper has a core syntax as expressed in [PipelinesDataFlowModel](https://github.com/opensearch-project/data-prepper/blob/c797d5cb85f5b7a02b8ff325846ee12f6be71904/data-prepper-api/src/main/java/org/opensearch/dataprepper/model/configuration/PipelinesDataFlowModel.java). Data Prepper also has a set of built-in plugins. Should the version indicate only the pipeline syntax or also the available plugins for a given version? If the latter, how would we account for extracting plugins out of Data Prepper (see #321).

| Support a version property in pipeline YAML configurations | https://api.github.com/repos/opensearch-project/data-prepper/issues/2263/comments | 7 | 2023-02-10T21:33:40Z | 2023-02-21T15:08:42Z | https://github.com/opensearch-project/data-prepper/issues/2263 | 1,580,364,603 | 2,263 |

[

"opensearch-project",

"data-prepper"

] | **Describe the bug**

Using the latest 2.1.0 version, with Opensearch dynamic index, Data prepper is not working when trying to reference a nested field inside the event.

**To Reproduce**

Steps to reproduce the behavior:

1. Add an attribute to each span in otel collector:

```

processors:

resource:

attributes:

- key: tenant

value: "test"

action: insert

```

otel will insert this custom key as:

resource.attributes.tenant

2. In data prepper configure Opensearch sink to use the nested fie

```

sink:

- opensearch:

hosts: ["https://endpoint.eu-west-1.es.amazonaws.com:443"]

aws_sigv4: true

aws_region: "eu-west-1"

index: "otel-v1-apm-span-${resource.attributes.tenant}-%{yyyy.MM.dd}"

index_type: "custom"

trace_analytics_raw: true

```

**Expected behavior**

A clear and concise description of what you expected to happen.

I would expect Data prepper to create an index named otel-v1-apm-span-test-2023.02.09, but it doesn't.

By the way, If I try to use a top level field like "serviceName" all is working fine. | [BUG] Opensearch dynamic index not working with nested fields | https://api.github.com/repos/opensearch-project/data-prepper/issues/2259/comments | 14 | 2023-02-09T22:53:36Z | 2024-10-01T19:56:41Z | https://github.com/opensearch-project/data-prepper/issues/2259 | 1,578,735,784 | 2,259 |

[

"opensearch-project",

"data-prepper"

] | **Is your feature request related to a problem? Please describe.**

The `http` source always uses the path `log/ingest`.

**Describe the solution you'd like**

Provide a configure to allow a pipeline author to change this path.

```

source:

http:

path: my/unique/path

ssl: true

ssl_certificate_file: "/full/path/to/certfile.crt"

ssl_key_file: "/full/path/to/keyfile.key"

```

With that configuration, the HTTP source will be available at:

```

https://localhost:2021/my/unique/path

```

**Describe alternatives you've considered (Optional)**

This could use prefix, but I don't see a reason to require the `log/ingest`. Unlike OTel, this is not a convention.

**Additional context**

This is somewhat similar to #2257, but this is for the `http` source only. It is quite different from the OTel sources.

| Change the path for HTTP source | https://api.github.com/repos/opensearch-project/data-prepper/issues/2258/comments | 1 | 2023-02-09T15:36:22Z | 2023-02-27T21:27:00Z | https://github.com/opensearch-project/data-prepper/issues/2258 | 1,578,128,629 | 2,258 |

[

"opensearch-project",

"data-prepper"

] | **Is your feature request related to a problem? Please describe.**

When running Data Prepper with unframed requests, an OTel source has a predefined path.

For example, with traces, the path is the following:

```

opentelemetry.proto.collector.trace.v1.TraceService/Export

```

**Describe the solution you'd like**

I'd like to be able to set either the path or the path prefix.

For example, I may wish to write to:

```

http://localhost:21890/service-a/opentelemetry.proto.collector.trace.v1.TraceService/Export

```

**Describe alternatives you've considered (Optional)**

This could be accomplished either by changing the whole path or the path prefix.

Changing the whole path:

```

entry-pipeline:

source:

otel_trace_source:

path: service-a/traces

```

Would result in the following path:

```

http://localhost:21890/service-a/traces

```

Whereas changing the path prefix would be different. Given the following configuration:

```

entry-pipeline:

source:

otel_trace_source:

path_prefix: service-a

```

The final path would be:

```

http://localhost:21890/service-a/opentelemetry.proto.collector.trace.v1.TraceService/Export

```

**Additional context**

The OTel HTTP Exporter does support modifying the path as noted in the [reference documentation](https://github.com/open-telemetry/opentelemetry-collector/blob/e2a6cd7a18ac74a9cf9a3d252cb8efd4726c1727/exporter/otlphttpexporter/README.md). It offers configurations for `traces_endpoint`, `metrics_endpoint`, and `logs_endpoint`.

However, this might not work with gRPC requests. The [documentation](https://github.com/open-telemetry/opentelemetry-collector/blob/e2a6cd7a18ac74a9cf9a3d252cb8efd4726c1727/exporter/otlpexporter/README.md) shows no such options to configure the service name. | Change the path prefix for OTel endpoints | https://api.github.com/repos/opensearch-project/data-prepper/issues/2257/comments | 3 | 2023-02-09T15:29:59Z | 2023-03-01T01:57:40Z | https://github.com/opensearch-project/data-prepper/issues/2257 | 1,578,111,836 | 2,257 |

[

"opensearch-project",

"data-prepper"

] | **Is your feature request related to a problem? Please describe.**

The `service_map_stateful` processor uses the `MapDbProcessorState` for the traceGroup windows (both current and previous).

https://github.com/opensearch-project/data-prepper/blob/a4c66d0d5a685bd832eb5cb4925de7b1c568ed80/data-prepper-plugins/service-map-stateful/src/main/java/org/opensearch/dataprepper/plugins/processor/ServiceMapStatefulProcessor.java#L64-L65

The code for this invariably uses a b-tree map implementation.

https://github.com/opensearch-project/data-prepper/blob/a4c66d0d5a685bd832eb5cb4925de7b1c568ed80/data-prepper-plugins/mapdb-processor-state/src/main/java/org/opensearch/dataprepper/plugins/processor/state/MapDbProcessorState.java#L42-L51

However, upon examining the code, the only use for the `traceGroup` windows are insertions and get by traceId.

https://github.com/opensearch-project/data-prepper/blob/a4c66d0d5a685bd832eb5cb4925de7b1c568ed80/data-prepper-plugins/service-map-stateful/src/main/java/org/opensearch/dataprepper/plugins/processor/ServiceMapStatefulProcessor.java#L167

https://github.com/opensearch-project/data-prepper/blob/a4c66d0d5a685bd832eb5cb4925de7b1c568ed80/data-prepper-plugins/service-map-stateful/src/main/java/org/opensearch/dataprepper/plugins/processor/ServiceMapStatefulProcessor.java#L267-L268

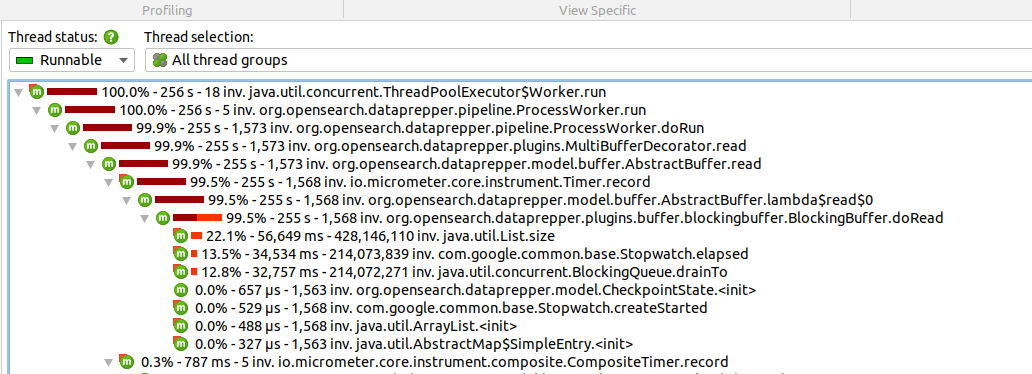

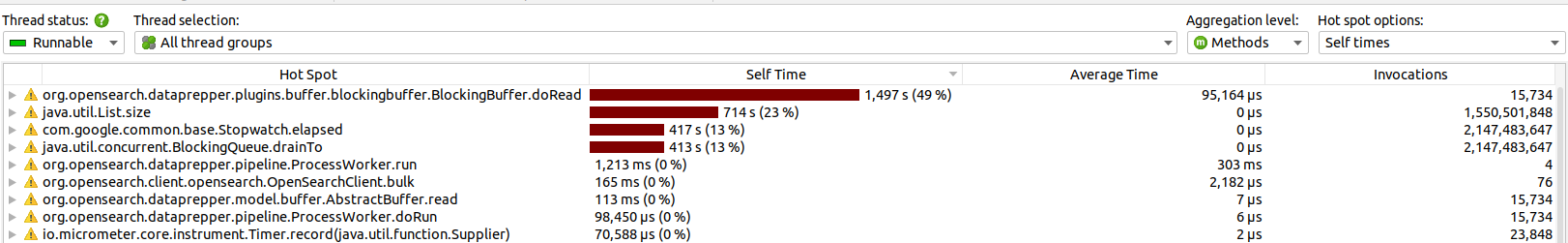

As shown in the following flame-graph, the retrievals are taking a non-trivial amount of time.

<img width="1502" alt="traceGroup-flamegraph" src="https://user-images.githubusercontent.com/293424/217357453-aec2f84c-ecdd-4e78-9afa-f697d9f1e20a.png">

**Describe the solution you'd like**

The implementation here may be better if using a `HashMap`. This will yield O(1) insertion and retrieval.

There may be some worst-case behavior of O(n) due to rehashing. However, these maps are cleared and not created anew. So this may actually only happen for the first set of traces coming through Data Prepper.

| Service Map traceGroup window map type | https://api.github.com/repos/opensearch-project/data-prepper/issues/2251/comments | 0 | 2023-02-07T20:29:13Z | 2023-02-07T20:29:13Z | https://github.com/opensearch-project/data-prepper/issues/2251 | 1,574,979,946 | 2,251 |

[

"opensearch-project",

"data-prepper"

] | **Is your feature request related to a problem? Please describe.**

Pipeline users want to add geographical location details based on IP address to enrich data for analytical purposes.

**Describe the solution you'd like**

Create GeoIP plugin which will enrich traces with a geo location field, derived from the IP address. This will give customers a more insightful value that will allow them to visualize where traces are coming originating from.

Plugin should be able to use Geo data from MaxMind database / Amazon location Service / User provided path of Geodata.

```

source:

- geoip:

source_key: "peer/ip"

target_key: "location_info"

database_path: "path/to/database.mmdb

geoip_attributes: ["location", "city_name", "country_name"]

```

GeoIP attributes should have many optional attributes like ip, city_name, country_name, continent_code, country_iso_code, postal_code, region_name, region_code, timezone, location, latitude, longitude . Default should be all values included. Location attribute refers to latitude and longitude.

Design Considerations: Any Geo data - consumer needs to keep updating the latest data in the plugin periodically

**Additional context**

Resources :

- MaxMind data: https://dev.maxmind.com/geoip/geolite2-free-geolocation-data?lang=en

- Amazon Location Services: https://aws.amazon.com/location/, https://docs.aws.amazon.com/location/latest/developerguide/search-place-index-geocoding.html

- Java Implementation : https://maxmind.github.io/GeoIP2-java/

| GeoIP plugin | https://api.github.com/repos/opensearch-project/data-prepper/issues/2247/comments | 1 | 2023-02-06T18:21:08Z | 2023-04-04T13:38:20Z | https://github.com/opensearch-project/data-prepper/issues/2247 | 1,573,062,993 | 2,247 |

[

"opensearch-project",

"data-prepper"

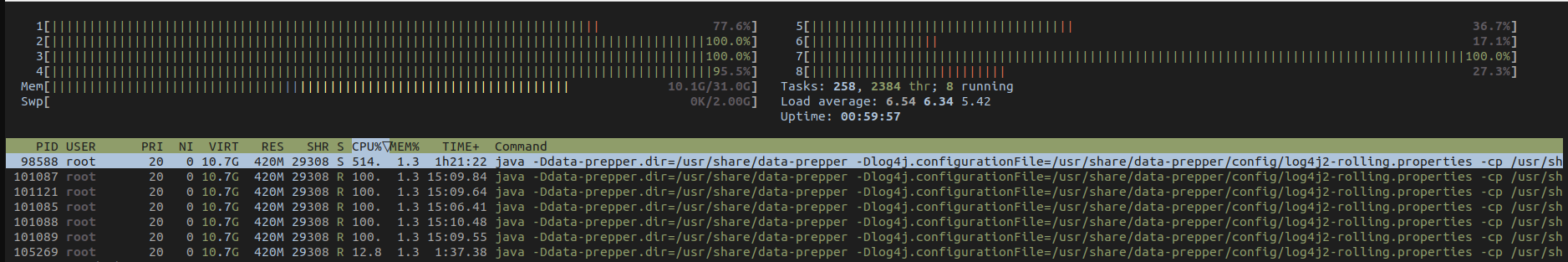

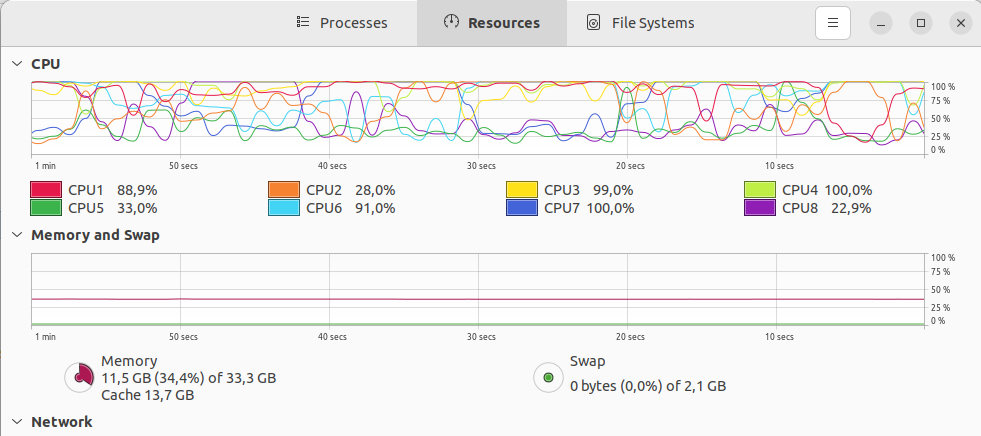

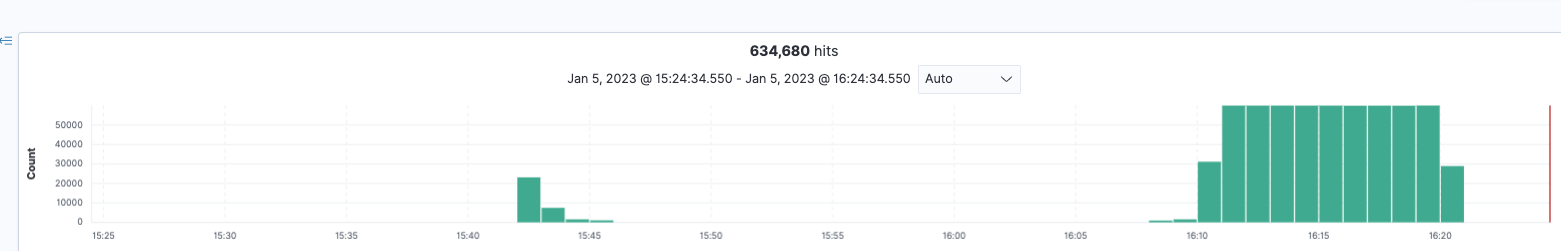

] | **Describe the bug**

I made a docker image of the main Git branch of DataPrepper (2.1.0-SNAPSHOT)

The container from this local docker image `opensearch-data-prepper:2.1.0-SNAPSHOT` is consuming 100% CPU (laptop's fan spinning at full rpm) when compared to a container with release image `opensearchproject/data-prepper:2`

The only change to test both versions is simply change in `docker-compose.yaml` the container image:

```

data-prepper:

restart: unless-stopped

container_name: data-prepper

#image: opensearchproject/data-prepper:2

image: opensearch-data-prepper:2.1.0-SNAPSHOT

```

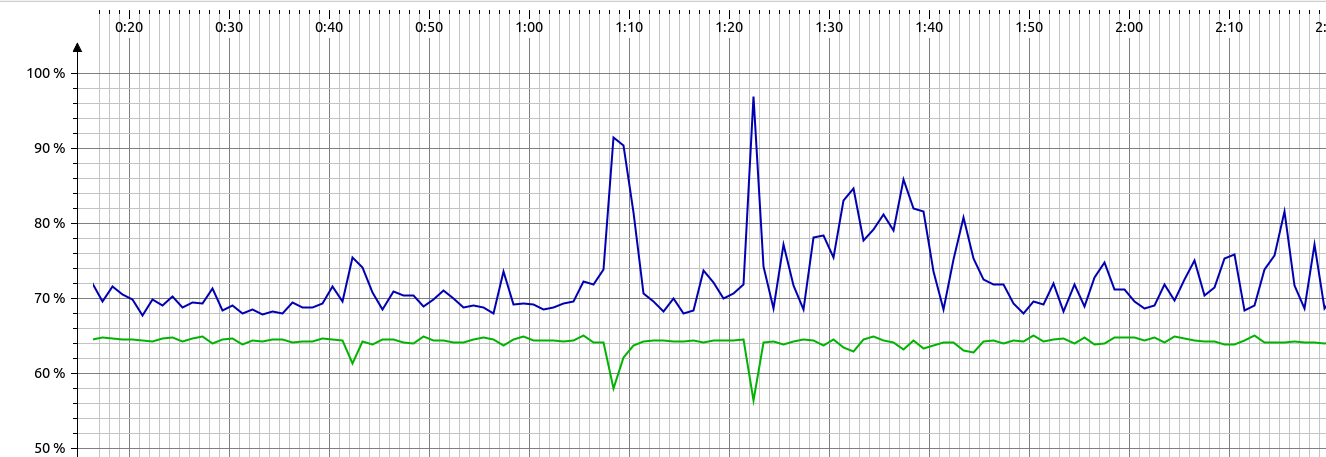

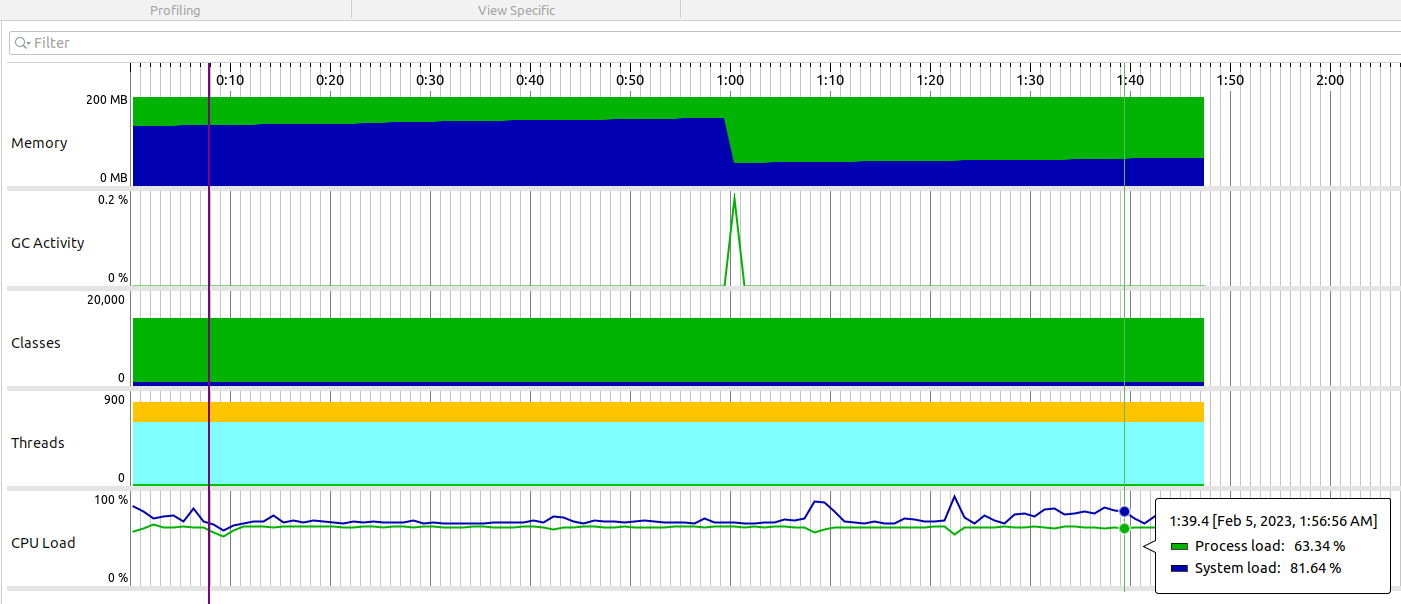

I attach screenshots of the analysis with JProfiler of the Data Prepper 2.1.0 container.

Looks like this method is generating all this CPU load:

https://github.com/opensearch-project/data-prepper/blob/497bae2b191fb96144159bfc1229bf01b7b50a5c/data-prepper-plugins/blocking-buffer/src/main/java/org/opensearch/dataprepper/plugins/buffer/blockingbuffer/BlockingBuffer.java#L148

**To Reproduce**

Steps to reproduce the behavior:

1. Git clone and build docker image of Data Prepper (HEAD rev of clone was fb060f241d0a36ecd63b4e8b153cf727ff122fe3):

```

./gradlew clean :release:docker:docker -Prelease

```

2. Run the following docker-compose that makes use the built image :

`docker-compose.yaml`:

```yaml

version: '3'

services:

opensearch-node1:

image: opensearchproject/opensearch:latest

container_name: opensearch-node1

environment:

- cluster.name=opensearch-cluster

- node.name=opensearch-node1

- discovery.seed_hosts=opensearch-node1,opensearch-node2

- cluster.initial_cluster_manager_nodes=opensearch-node1,opensearch-node2

- bootstrap.memory_lock=true # along with the memlock settings below, disables swapping